
Evolution of Critical Infrastructure Protection Theory – A “Right-Brained” Approach: Deterrence, Networks, Resilience • Eric Taquechel, Ted Lewis 1

Classic Risk Equation • R=TVC • Threat – Deterrence/Game Theory – Cognitive Biases • Vulnerability – Transfer Networks • Consequence – Network Resilience 2

“Three Attack Paradigms” • Direct attacks against CIKR – Intent: disable/destroy that CIKR – Original approach of many CIKR risk models • Direct attacks against CIKR systems – Intent: cause cascading failures throughout a system of CIKR – Resilience (cascading failure modeling) • Exploitation of CIKR systems – Intent: inflict damage on a “downstream” CIKR in a system by exploiting “upstream” CIKR – Transfer networks (WMD, other illicit materials) 3

Framework for Evolution • Given these paradigms, and existing literature… • How might we treat T, V, and C differently? 4

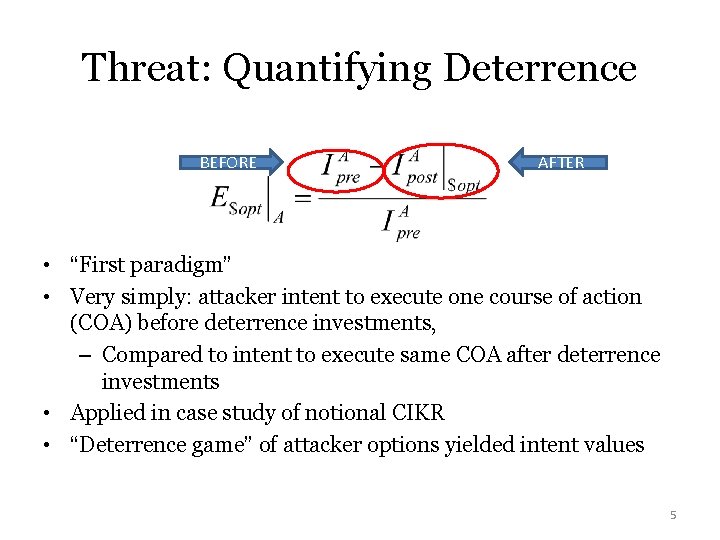

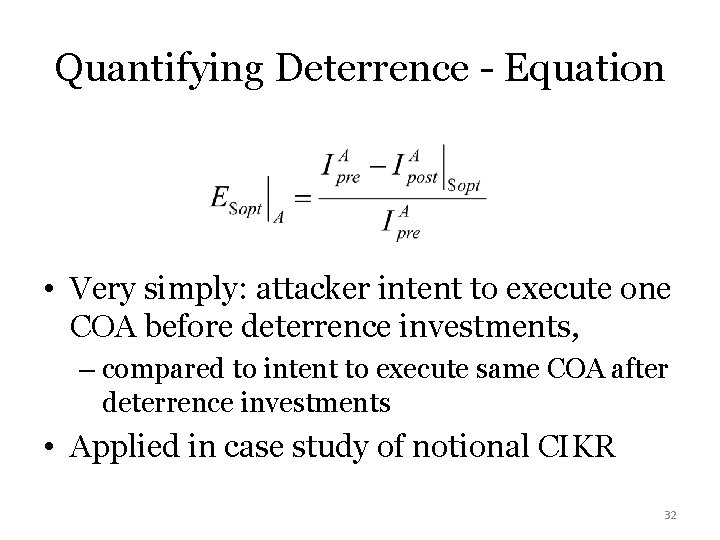

Threat: Quantifying Deterrence (Before vs. After) • “First paradigm” • Very simply: attacker intent to execute one course of action (COA) before deterrence investments – compared to intent to execute the same COA after deterrence investments • Applied in a case study of notional CIKR • A “deterrence game” of attacker options yielded intent values 5

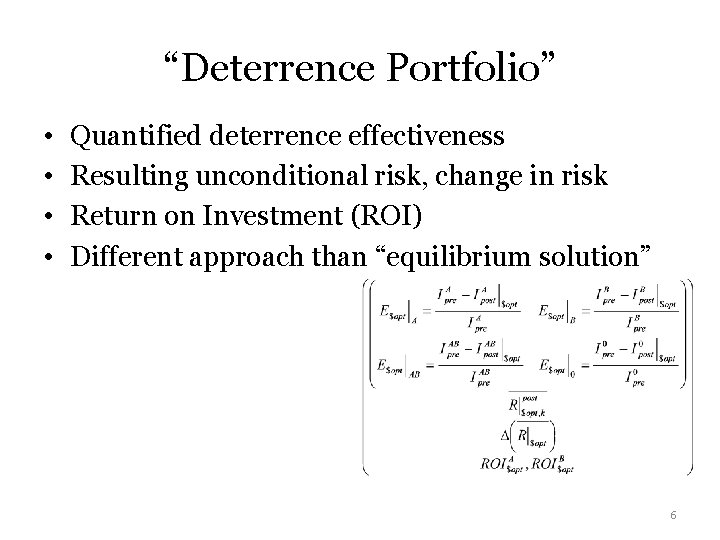

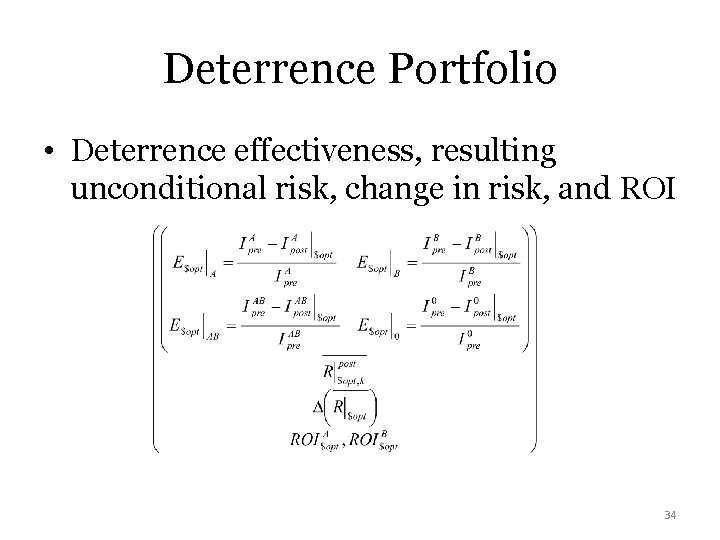

“Deterrence Portfolio” • Quantified deterrence effectiveness • Resulting unconditional risk, change in risk • Return on Investment (ROI) • Different approach from an “equilibrium solution” 6

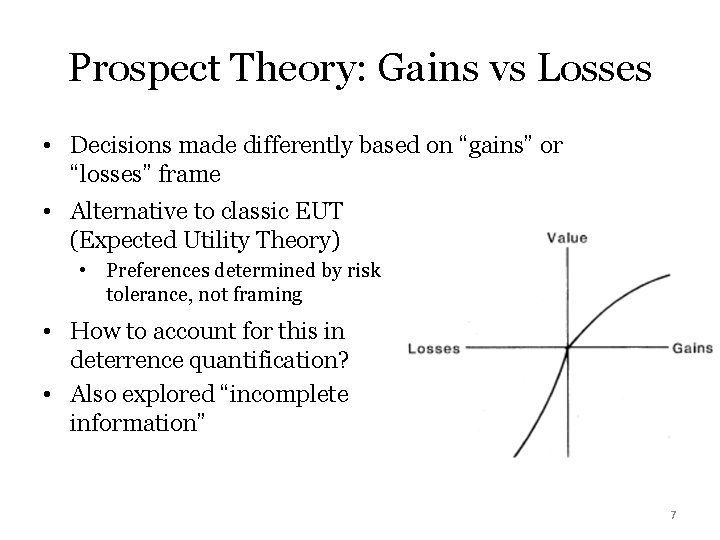

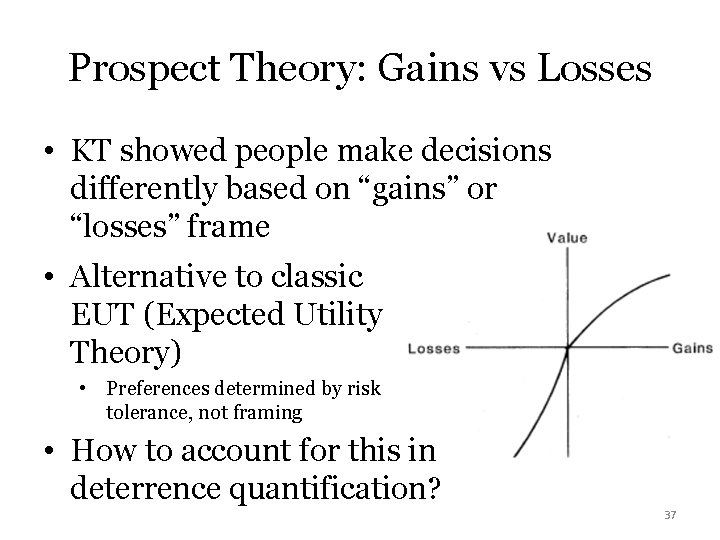

Prospect Theory: Gains vs Losses • Decisions are made differently depending on a “gains” or “losses” frame • Alternative to classic EUT (Expected Utility Theory) – Under EUT, preferences are determined by risk tolerance, not framing • How to account for this in deterrence quantification? • Also explored “incomplete information” 7

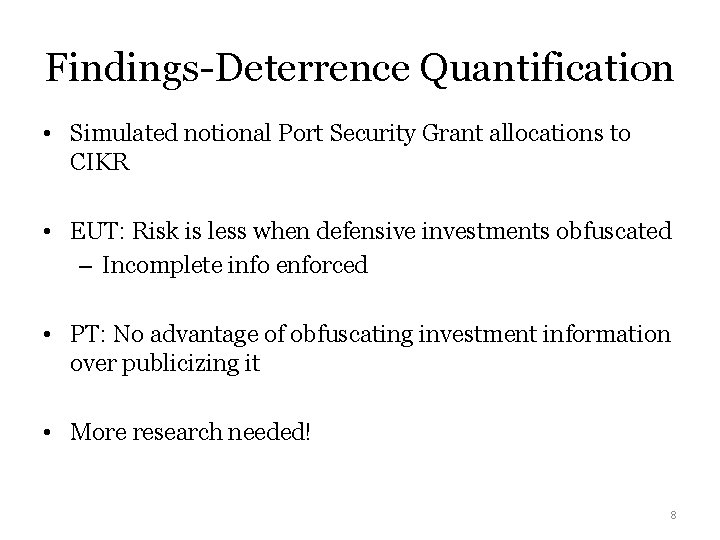

Findings: Deterrence Quantification • Simulated notional Port Security Grant allocations to CIKR • EUT: Risk is lower when defensive investments are obfuscated – Incomplete information enforced • PT: No advantage to obfuscating investment information over publicizing it • More research needed! 8

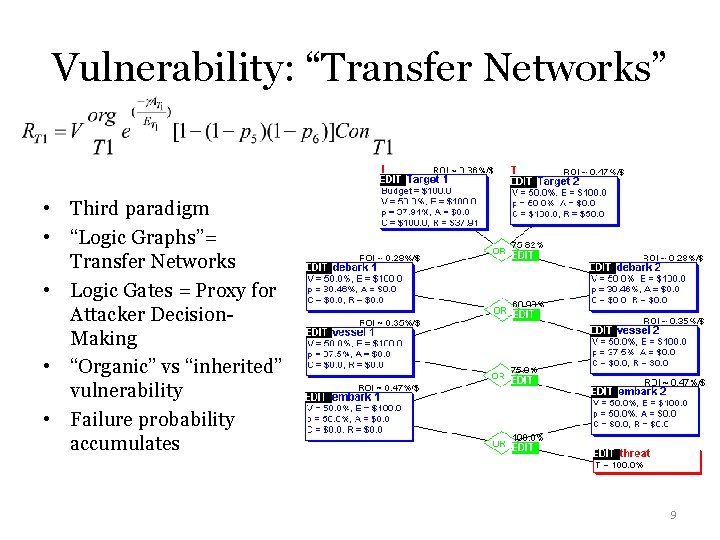

Vulnerability: “Transfer Networks” • Third paradigm • “Logic Graphs” = Transfer Networks • Logic Gates = Proxy for Attacker Decision Making • “Organic” vs “inherited” vulnerability • Failure probability accumulates 9
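The gate logic can be sketched as ordinary fault-tree algebra. This is a minimal sketch, assuming the events feeding a gate are independent (an assumption not stated on the slide): an OR gate models an attacker who needs to exploit any one upstream node, so failure probability accumulates, while an AND gate models an attacker who must exploit all of them. The function names are hypothetical.

```python
from math import prod
from typing import Iterable


def or_gate(probs: Iterable[float]) -> float:
    """P(at least one input fails), via De Morgan's law: 1 - prod(1 - p).

    Assumes the input events are independent.
    """
    return 1.0 - prod(1.0 - p for p in probs)


def and_gate(probs: Iterable[float]) -> float:
    """P(all inputs fail), assuming independence."""
    return prod(probs)


if __name__ == "__main__":
    upstream = [0.5, 0.5]  # hypothetical exploitation probabilities
    print(or_gate(upstream), and_gate(upstream))
```

For the same inputs the OR gate always yields at least the AND gate's probability, which is why OR-heavy topologies are the riskier configurations later in the deck.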

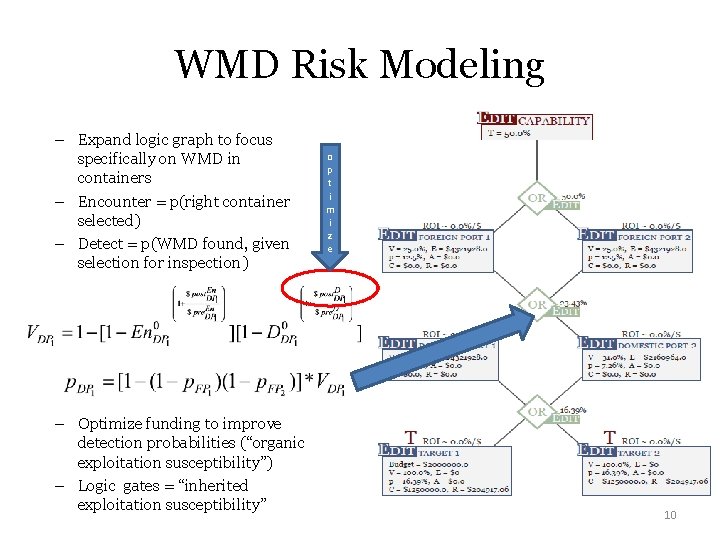

WMD Risk Modeling • Expand logic graph to focus specifically on WMD in containers – Encounter = p(right container selected) – Detect = p(WMD found, given selection for inspection) – Optimize funding to improve detection probabilities (“organic exploitation susceptibility”) – Logic gates = “inherited exploitation susceptibility” 10

Findings: WMD Transfer Network Modeling • If intel is confident both US ports would be exploited… – Foreign port exploitation preferences had no bearing on allocations to US ports – Focus intel analysis efforts? – Focus law enforcement surge efforts? • Support for “calibrating” new WMD technology – Performance vs costs to operate/maintain 11

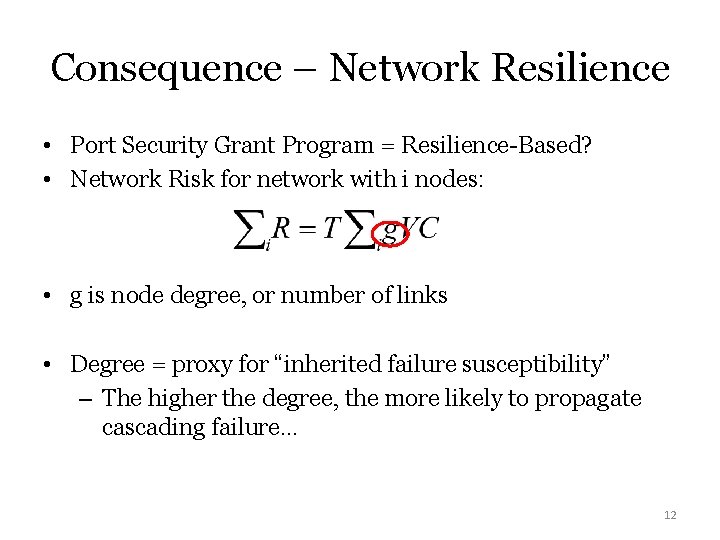

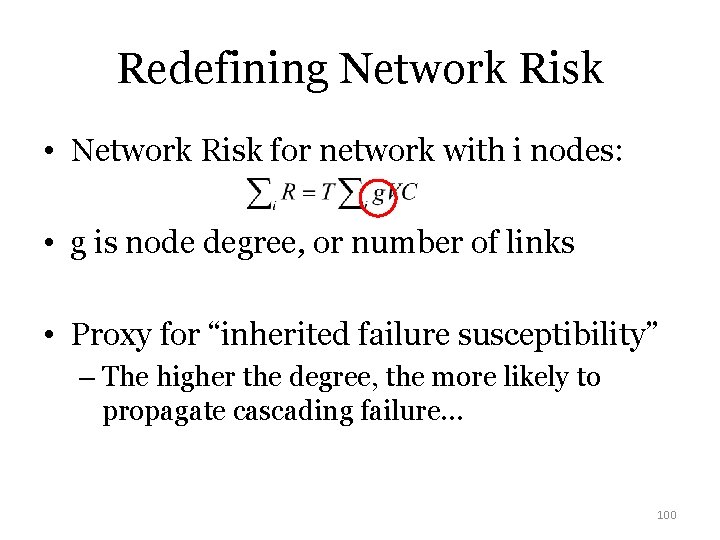

Consequence – Network Resilience • Port Security Grant Program = Resilience-Based? • Network Risk for network with i nodes: • g is node degree, or number of links • Degree = proxy for “inherited failure susceptibility” – The higher the degree, the more likely to propagate cascading failure… 12

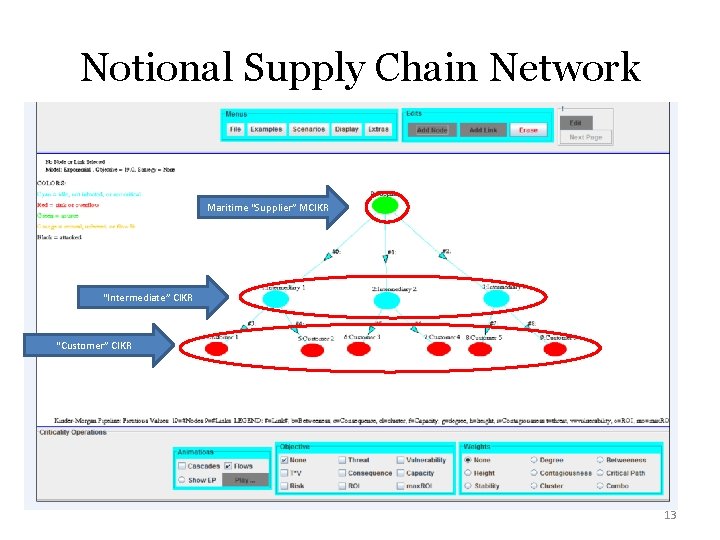

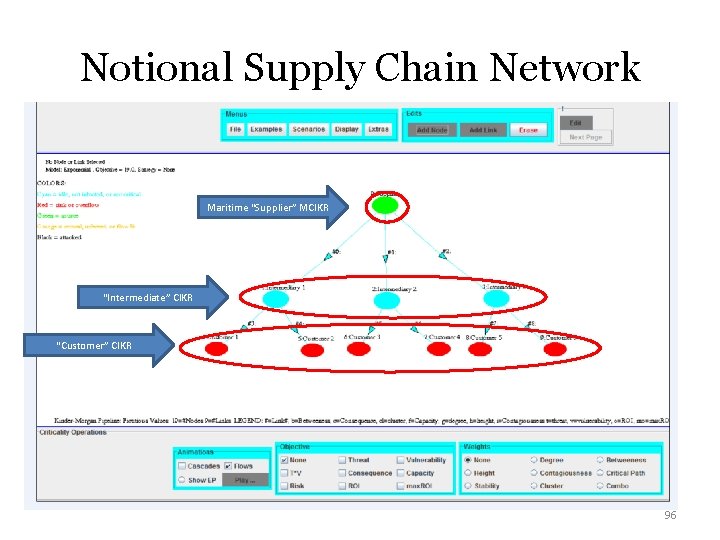

Notional Supply Chain Network • Maritime “Supplier” MCIKR → “Intermediate” CIKR → “Customer” CIKR 13

Findings: PSGP “Network Resilience Modeling” • Showed “optimal” PSGP resilience investments to improve MCIKR rebuilding probability – Post-attack, not pre-attack • Implications for supply chain network resilience, port resilience 14

Future of CIKR Risk Theory = ? • T, V, C 15

Thank you! 16

EXTRA SLIDES “If I had more time, I would have written a shorter letter.” 17

DHS Risk Lexicon • Updated 2010 • How has risk theory evolved since then? • Theory used to support CIKR protection strategy and education 18

Classic Risk Equation • R=TVC • Threat – Deterrence? – Game Theory? – Cognitive Biases? • Vulnerability – Networks? • Consequence – Resilience? – Exceedence Probability? – “Antifragility”? 19

“Three Attack Paradigms” • Direct attacks against CIKR – Intent: disable/destroy that CIKR – Original approach of many CIKR risk models • Direct attacks against CIKR systems – Intent: cause cascading perturbations throughout a system of CIKR – Resilience (cascade or failure flow modeling) • Exploitation of CIKR systems – Intent: inflict damage on a different CIKR “downstream” in a system – Transfer networks (WMD, other illicit materials) 20

Framework for “Evolution” • Given these paradigms, and given previous work… • How can we treat T, V, and C differently? 21

THREAT R=TVC • “Paradigm 1” • Deterrence • Game Theory • Cognitive Biases 22

Quote “Deterrence theory is applicable to many of the 21st century threats the US will face… but how theory is put into practice, or “operationalized”, needs to be advanced” – Chilton and Weaver, 2009 23

Threat: Input or Output? • Probabilistic risk analysis (PRA) community: T is an input to R = TVC • Operations research (OR) community: T = f(V, C)… so (V, C) → T, an output • Why not both? – Entering argument: T = f(intent, capability) 24

Compromise • CIKR risk = function of both tactical and strategic intelligence • $\text{Intent}_{\text{strategic}} = f(\text{intel community estimates})$ • $\text{Intent}_{\text{tactical}} = f(V, C)_{\text{target}}$ – “Adaptive adversaries can observe our defenses” • Therefore, $R_{\text{tactical}} = \text{Intent}_{\text{tactical}} \times \text{Capability} \times V \times C$ 25
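The tactical risk product above can be sketched directly. This is a minimal sketch, assuming intent, capability, and vulnerability are probabilities in [0, 1] and consequence is a non-negative loss (for example, $M); the function name and the validation rules are hypothetical additions, not part of the slide.

```python
def tactical_risk(intent: float, capability: float, vulnerability: float,
                  consequence: float) -> float:
    """R_tactical = Intent_tactical * Capability * V * C."""
    # Hypothetical input checks: the first three factors are treated as probabilities.
    for name, p in (("intent", intent), ("capability", capability),
                    ("vulnerability", vulnerability)):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"{name} must be a probability in [0, 1], got {p}")
    if consequence < 0:
        raise ValueError("consequence must be non-negative")
    return intent * capability * vulnerability * consequence


if __name__ == "__main__":
    # Notional values only: consequence in $M.
    print(tactical_risk(0.4, 0.5, 0.6, 100.0))
```

Dropping the intent factor gives the “conditional risk” used later in the deck; multiplying it back in gives unconditional risk.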

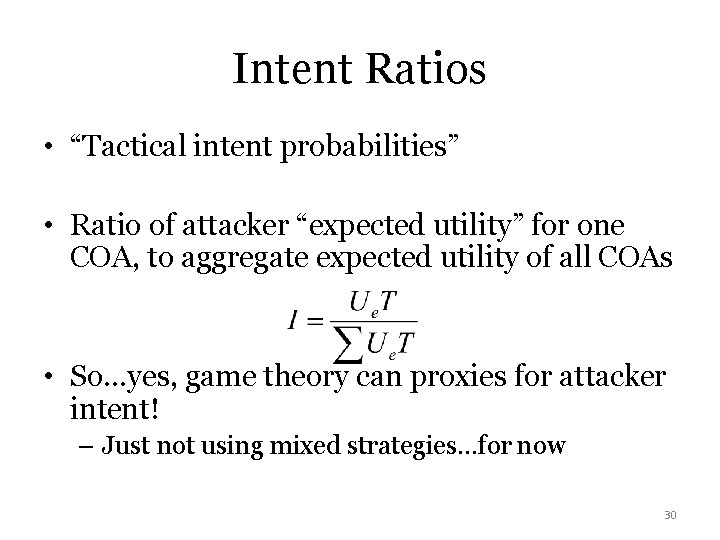

Quantifying Deterrence • If we believe $\text{Intent}_{\text{tactical}} = f(V, C)_{\text{target}}$… • Can game theory yield proxies for attacker intent? 26

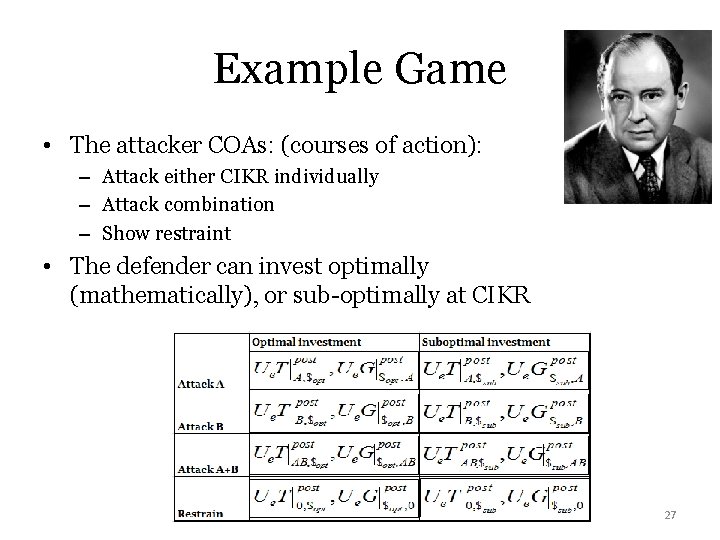

Example Game • The attacker COAs (courses of action): – Attack either CIKR individually – Attack combination – Show restraint • The defender can invest optimally (mathematically) or sub-optimally at CIKR 27

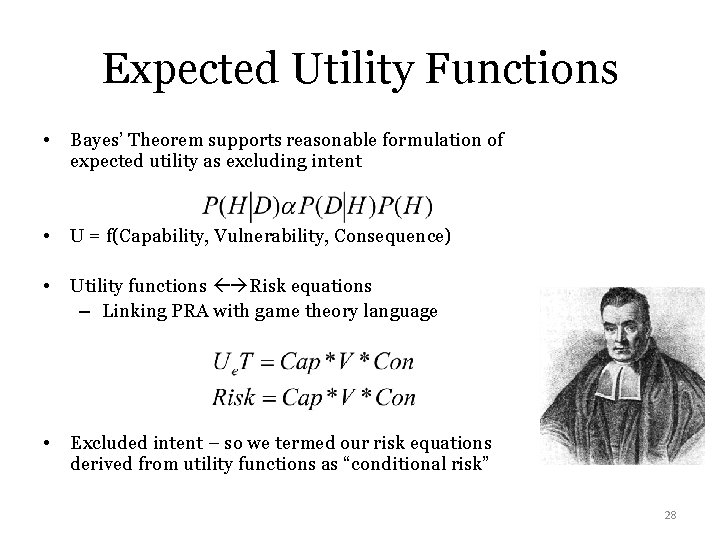

Expected Utility Functions • Bayes’ Theorem supports a reasonable formulation of expected utility that excludes intent • U = f(Capability, Vulnerability, Consequence) • Utility functions → risk equations – Linking PRA with game theory language • Intent excluded – so we termed the risk equations derived from utility functions “conditional risk” 28

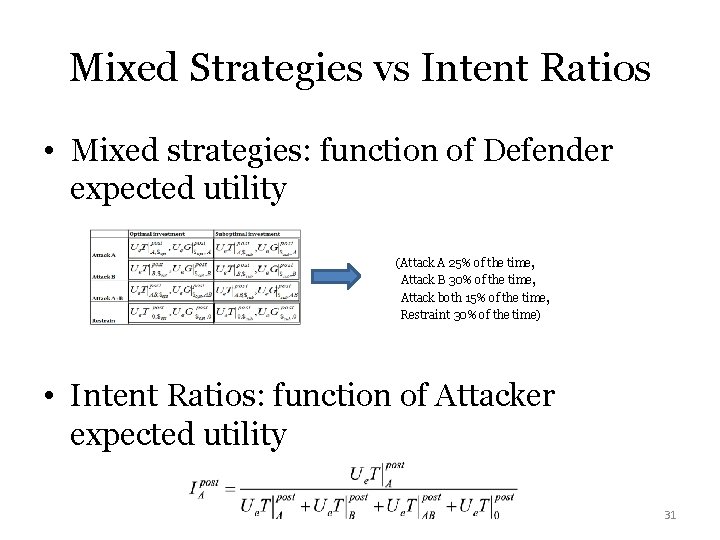

Pure vs Mixed Strategies • Pure vs mixed strategy Nash equilibrium outcome – Pure: attacker intent for 1 COA = 100% – Mixed: attacker executes COA 1 x% of the time, COA 2 y% of the time – A mixed strategy reflects what one player should do to make the opponent indifferent between their choices • Mixed strategy disregarded 29
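The indifference condition can be illustrated for a 2×2 game. This is a minimal sketch, assuming a hypothetical defender payoff matrix `d[i][j]` (defender action i, attacker COA j); it solves only the attacker's side of the mixed equilibrium, i.e., the attacker mix that leaves the defender indifferent. Note that the result depends only on the defender's payoffs.

```python
from typing import List


def attacker_mix_for_defender_indifference(d: List[List[float]]) -> float:
    """Return q = P(attacker plays COA index 0) that makes the defender indifferent.

    d[i][j] is the defender's payoff when the defender plays action i and the
    attacker plays COA j, for i, j in {0, 1}. Indifference requires
    q*d[0][0] + (1-q)*d[0][1] == q*d[1][0] + (1-q)*d[1][1].
    """
    denom = d[0][0] - d[0][1] - d[1][0] + d[1][1]
    if denom == 0:
        raise ValueError("no unique indifference point")
    q = (d[1][1] - d[0][1]) / denom
    if not 0.0 <= q <= 1.0:
        raise ValueError(f"no interior mixed strategy (q = {q})")
    return q


if __name__ == "__main__":
    # Hypothetical losses: rows = defend A / defend B, columns = attack A / attack B.
    print(attacker_mix_for_defender_indifference([[-1.0, -4.0], [-3.0, -1.0]]))
```

Because the attacker's mix is driven by the defender's payoffs rather than the attacker's own, it is a poor proxy for attacker intent, which is one reason to prefer intent ratios.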

Intent Ratios • “Tactical intent probabilities” • Ratio of attacker “expected utility” for one COA to the aggregate expected utility of all COAs • So…yes, game theory can yield proxies for attacker intent! – Just not using mixed strategies…for now 30
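The intent ratio is each COA's share of the attacker's aggregate expected utility. This is a minimal sketch, assuming expected utilities are non-negative; the COA labels and values are hypothetical.

```python
from typing import Dict


def intent_ratios(expected_utility: Dict[str, float]) -> Dict[str, float]:
    """Tactical intent proxy: EU of one COA divided by the sum of EU over all COAs."""
    if any(u < 0 for u in expected_utility.values()):
        raise ValueError("expected utilities must be non-negative")
    total = sum(expected_utility.values())
    if total == 0:
        raise ValueError("aggregate expected utility is zero")
    return {coa: u / total for coa, u in expected_utility.items()}


if __name__ == "__main__":
    # Hypothetical attacker expected utilities for the example game's COAs.
    eu = {"attack A": 30.0, "attack B": 50.0, "attack both": 20.0, "restraint": 0.0}
    print(intent_ratios(eu))
```

Unlike a pure-strategy equilibrium (100% intent on one COA), the ratios spread intent across every option in proportion to its attractiveness.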

Mixed Strategies vs Intent Ratios • Mixed strategies: function of Defender expected utility (Attack A 25% of the time, Attack B 30% of the time, Attack both 15% of the time, Restraint 30% of the time) • Intent Ratios: function of Attacker expected utility 31

Quantifying Deterrence - Equation • Very simply: attacker intent to execute one COA before deterrence investments, – compared to intent to execute same COA after deterrence investments • Applied in case study of notional CIKR 32

Deterrence Portfolios • This answered the question circulating at the time: – “How do you quantify deterrence?” • The larger the difference in pre- and post-investment risk, the more we “quantified deterrence” • More importantly – risk now accounted for tactical intelligence – “Deterrence Portfolios” – metrics showing risk accounting for this nuance 33

Deterrence Portfolio • Deterrence effectiveness, resulting unconditional risk, change in risk, and ROI 34
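The four portfolio metrics can be sketched as follows. The deck's exact deterrence equation appears only as an image, so this sketch assumes (hypothetically) that deterrence effectiveness is the relative drop in intent for a COA, unconditional risk is intent × conditional risk, and ROI is risk reduced per dollar invested. All names and values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Portfolio:
    deterrence: float   # assumed: (intent_pre - intent_post) / intent_pre
    risk_pre: float     # unconditional risk before investment
    risk_post: float    # unconditional risk after investment
    risk_change: float  # risk_pre - risk_post
    roi: float          # risk reduced per dollar invested


def deterrence_portfolio(intent_pre: float, cond_risk_pre: float,
                         intent_post: float, cond_risk_post: float,
                         investment: float) -> Portfolio:
    if intent_pre <= 0 or investment <= 0:
        raise ValueError("intent_pre and investment must be positive")
    # Unconditional risk = tactical intent x conditional risk.
    risk_pre = intent_pre * cond_risk_pre
    risk_post = intent_post * cond_risk_post
    change = risk_pre - risk_post
    return Portfolio(
        deterrence=(intent_pre - intent_post) / intent_pre,
        risk_pre=risk_pre,
        risk_post=risk_post,
        risk_change=change,
        roi=change / investment,
    )


if __name__ == "__main__":
    print(deterrence_portfolio(0.5, 100.0, 0.3, 80.0, 10.0))
```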

Next Step – “Cognitive Biases” • Original Approach - assumed attackers evaluated options the same regardless of “framing” • Kahneman and Tversky (KT) showed consistent evaluation of options doesn’t always occur • So…implications for measuring deterrence and risk profiles? 35

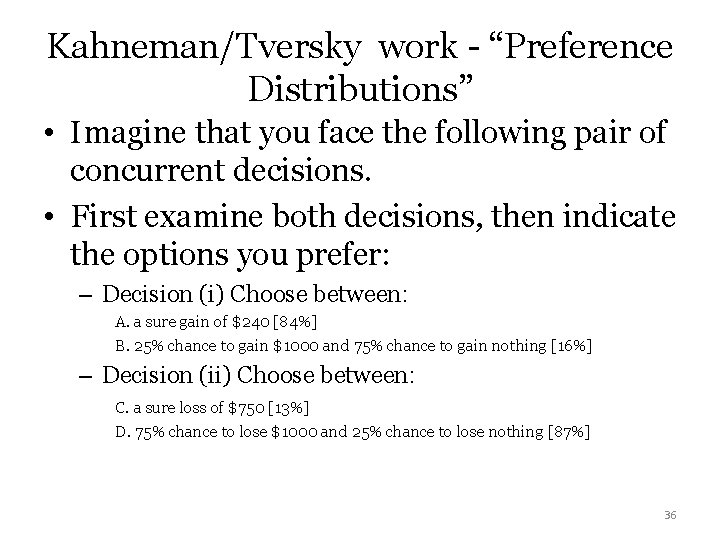

Kahneman/Tversky work - “Preference Distributions” • Imagine that you face the following pair of concurrent decisions. • First examine both decisions, then indicate the options you prefer: – Decision (i) Choose between: A. a sure gain of $240 [84%] B. 25% chance to gain $1000 and 75% chance to gain nothing [16%] – Decision (ii) Choose between: C. a sure loss of $750 [13%] D. 75% chance to lose $1000 and 25% chance to lose nothing [87%] 36
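A worked check of the options' expected values (our arithmetic, not from the slides) shows why the bracketed response shares cut against risk-neutral choice. 84% preferred A although B has the higher expected value (risk aversion for gains), and 87% preferred D although C and D have the same expected value (risk seeking for losses):

```latex
\begin{aligned}
EV(A) &= 240, & EV(B) &= 0.25(1000) + 0.75(0) = 250,\\
EV(C) &= -750, & EV(D) &= 0.75(-1000) + 0.25(0) = -750.
\end{aligned}
```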

Prospect Theory: Gains vs Losses • KT showed people make decisions differently depending on a “gains” or “losses” frame • Alternative to classic EUT (Expected Utility Theory) – Under EUT, preferences are determined by risk tolerance, not framing • How to account for this in deterrence quantification? 37

Certainty and Reflection Effects • KT's results showed that people favor sure gains over larger gains with a probabilistic element – “Certainty effect” – bias toward sure gains – The marginal utility of money decreases the more you have! • People prefer probabilistic but larger losses to smaller but sure losses – “Reflection effect” – the “certainty effect” is reversed – People take larger risks to avoid losses than they do to achieve equivalent gains 38
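Both effects can be reproduced with the prospect theory value function. This is a minimal sketch using the median parameters reported by Tversky and Kahneman (1992) (α = β = 0.88, λ = 2.25). As a simplification it uses raw probabilities as decision weights and omits the probability weighting function, so only the curvature of the value function drives the result. Prospect labels A–D follow the preceding decision pair.

```python
from typing import List, Tuple

# Median parameter estimates from Tversky and Kahneman (1992).
ALPHA = 0.88   # curvature for gains
BETA = 0.88    # curvature for losses
LAMBDA = 2.25  # loss aversion

Prospect = List[Tuple[float, float]]  # (probability, outcome in $)


def value(x: float) -> float:
    """Concave for gains; convex and steeper for losses."""
    return x ** ALPHA if x >= 0 else -LAMBDA * (-x) ** BETA


def prospect_value(prospect: Prospect) -> float:
    # Simplification: raw probabilities stand in for decision weights.
    return sum(p * value(x) for p, x in prospect)


def expected_value(prospect: Prospect) -> float:
    return sum(p * x for p, x in prospect)


A: Prospect = [(1.0, 240.0)]
B: Prospect = [(0.25, 1000.0), (0.75, 0.0)]
C: Prospect = [(1.0, -750.0)]
D: Prospect = [(0.75, -1000.0), (0.25, 0.0)]

if __name__ == "__main__":
    for name, pr in (("A", A), ("B", B), ("C", C), ("D", D)):
        print(name, round(expected_value(pr), 1), round(prospect_value(pr), 1))
```

The sure gain A outscores B despite B's higher expected value (certainty effect), and the gamble D outscores the sure loss C (reflection effect).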

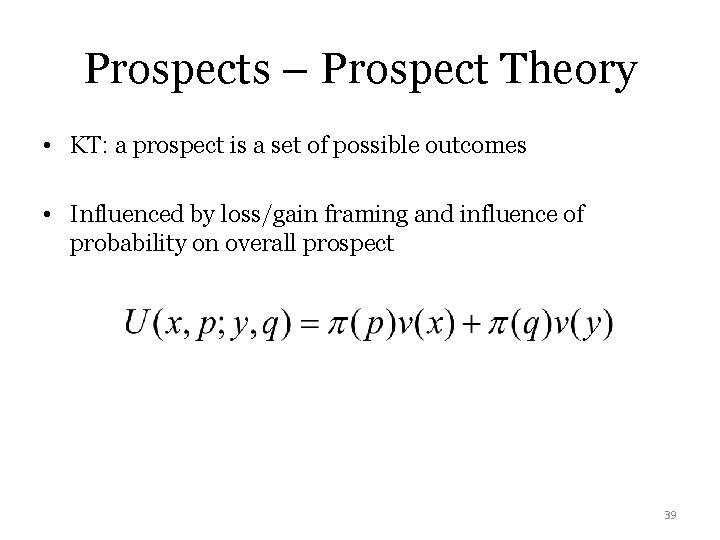

Prospects – Prospect Theory • KT: a prospect is a set of possible outcomes and their probabilities • Its evaluation is influenced by loss/gain framing and by the influence of probability on the overall prospect 39

Prospects – Other Interpretations • We proposed the concept of an “ordinary prospect” – Aggregation of outcomes, regardless of EUT or PT – Not explicitly tied to game theoretic outcomes • “Equilibrium Prospect” • “Aggregate Prospect” – hedges against equilibrium COA selection • Either equilibrium or aggregate prospects could be used to form “intent ratios” 40

Incomplete Information • Frequent topic in game theory literature • What if attackers make assumptions about our CIKR defenses? • “Organizational Obfuscation Bias” = proxies for estimates of CIKR vulnerability 41

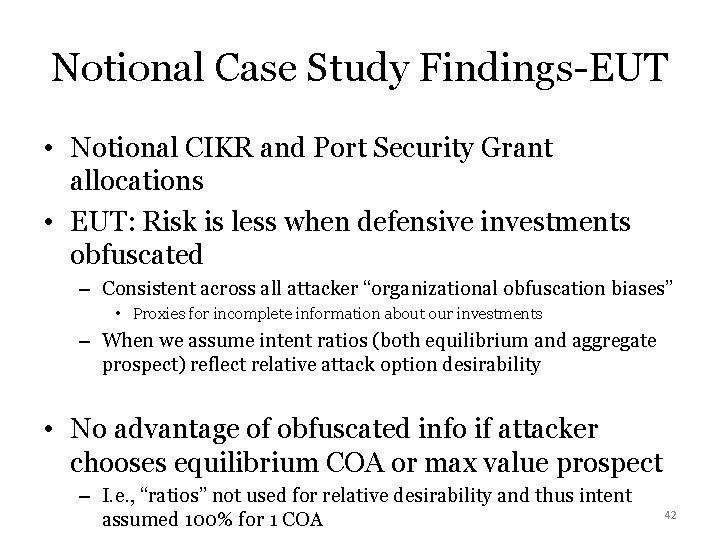

Notional Case Study Findings: EUT • Notional CIKR and Port Security Grant allocations • EUT: Risk is lower when defensive investments are obfuscated – Consistent across all attacker “organizational obfuscation biases” • Proxies for incomplete information about our investments – When we assume intent ratios (both equilibrium and aggregate prospect) reflect relative attack option desirability • No advantage of obfuscated info if the attacker chooses the equilibrium COA or max value prospect – i.e., “ratios” not used for relative desirability, and thus intent assumed 100% for 1 COA 42

Applicability of PT to our approach • Kahneman and Tversky's “preference distributions” … good for attacker intent ratio proxies? • Not elicited in “game theoretic competition” context • Other literature review – utility functions directly incorporated KT insights – However, made assumptions inapplicable to our research 43

Notional Case Study Findings-PT • We assumed “attacker reference point” = maximum death and economic consequence – Destruction of both targets – Complete info • Ruled out attacker COAs per application of certainty and reflection effects • Predicted attacker would prefer a different COA than under EUT assumptions • No advantage of obfuscating investment information over publicizing it 44

So what? • Results may have implications for CIKR defense strategy • Deterrence quantification could yield different results – EUT or PT? • Do we publicize or obfuscate our defensive investments? • Is this decision robust to assumptions about “framing” of options from attacker’s perspective? • Lots of options for future research 45

TRANSITION! T V C 46

VULNERABILITY R=TVC • “Paradigm 3” • Layered Defense Networks • WMD Deterrence and Interdiction 47

Quote “Maritime security now involves risks that must be met with a layered approach that identifies and interdicts the threat as far as possible from the U.S. borders…. a potential worst case scenario is the risk that a weapon of mass destruction is concealed in a container… …a successful strategy will use risk management to align capabilities with threats to achieve the optimal response and protect the nation.” – 2005 Maritime Commerce Security Plan 48

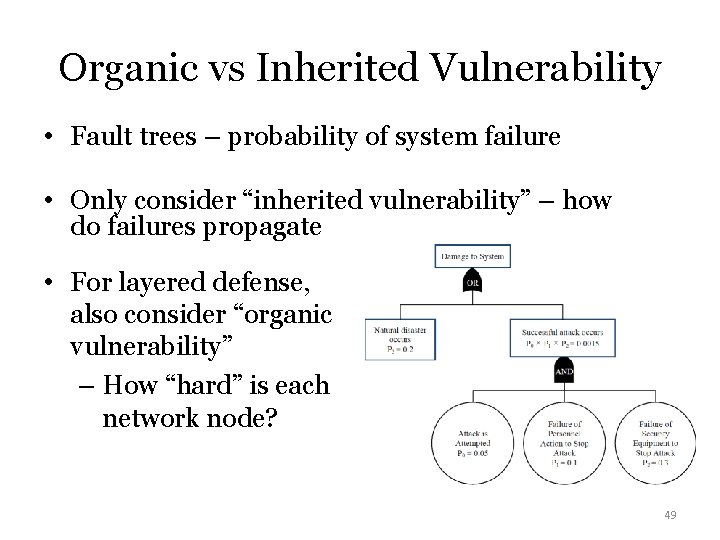

Organic vs Inherited Vulnerability • Fault trees – probability of system failure – Only consider “inherited vulnerability”: how failures propagate • For layered defense, also consider “organic vulnerability” – How “hard” is each network node? 49

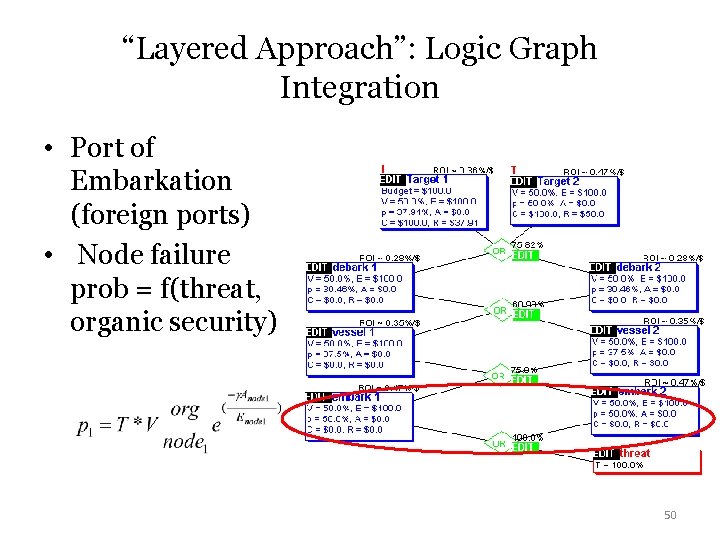

“Layered Approach”: Logic Graph Integration • Port of Embarkation (foreign ports) • Node failure prob = f(threat, organic security) 50

Logic Gates = Topology? • Traditional logic gates – fault propagation – Physical failure of engineering components • New use of logic gates – Proxy for attacker decision making – Quantification of attacker tactics? – Different type of “network topology”? 51

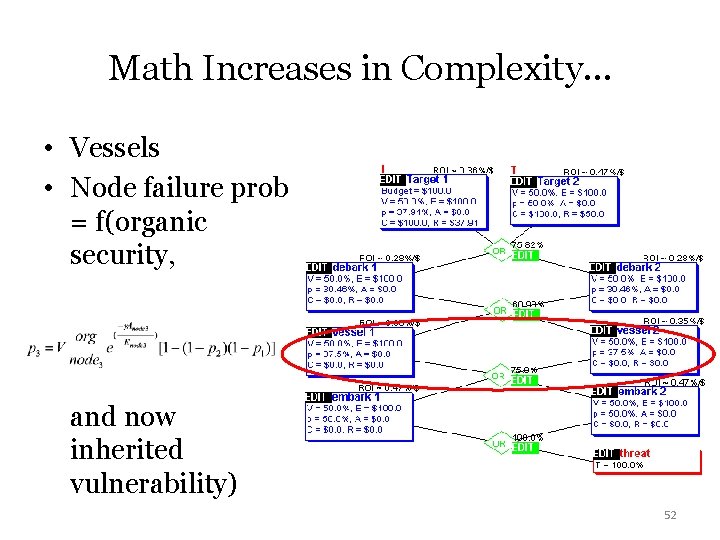

Math Increases in Complexity… • Vessels • Node failure prob = f(organic security, and now inherited vulnerability) 52

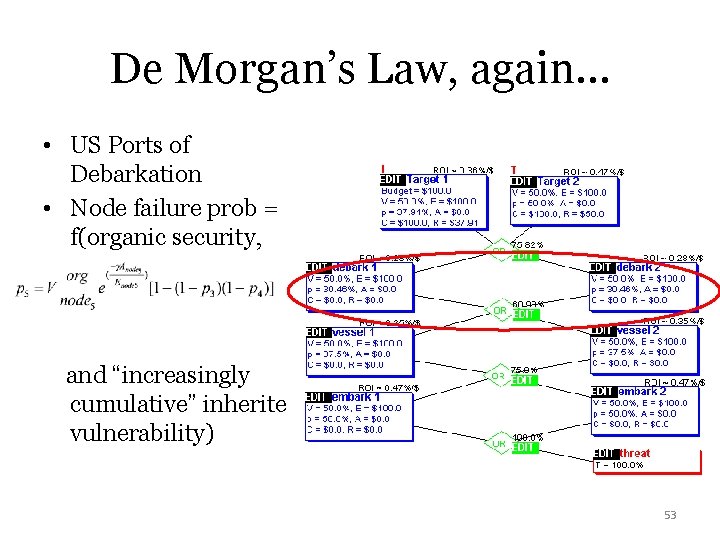

De Morgan’s Law, again… • US Ports of Debarkation • Node failure prob = f(organic security, and “increasingly cumulative” inherited vulnerability) 53

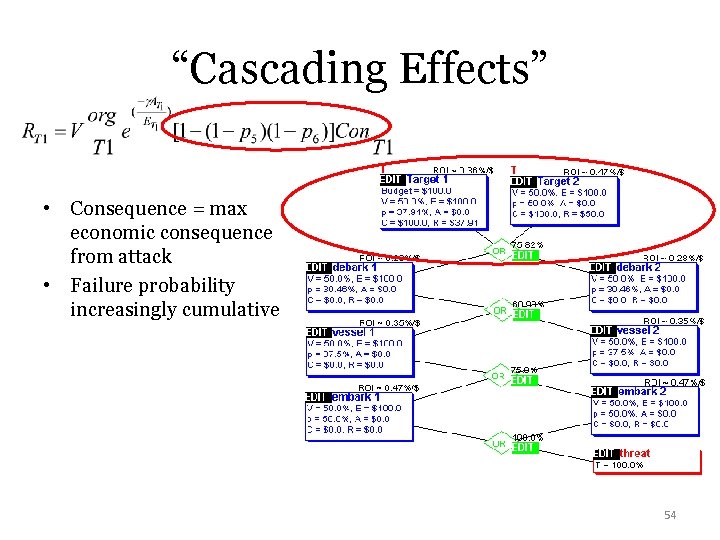

“Cascading Effects” • Consequence = max economic consequence from attack • Failure probability increasingly cumulative 54
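The cumulative failure probability along the layers (foreign ports → vessel → US port → target) can be sketched as follows. The deck's node equations appear only as images, so this sketch assumes (hypothetically) that a node fails when the threat reaches it through its OR gate (inherited) and then defeats its own security (organic), that foreign-port failure is threat × organic vulnerability, and that the target has no organic barrier, consistent with the later assumption that target vulnerability to WMD is 100%. All values are notional.

```python
from math import prod
from typing import Iterable, List


def or_gate(probs: Iterable[float]) -> float:
    # Any one upstream node suffices (De Morgan's law, independence assumed).
    return 1.0 - prod(1.0 - p for p in probs)


def node_failure(organic: float, upstream: Iterable[float]) -> float:
    # Hypothetical rule: inherited failure (reached via OR gate) x organic failure.
    return organic * or_gate(upstream)


def chain_risk(foreign: List[float], vessel_organic: float,
               port_organic: float, consequence: float) -> float:
    vessel = node_failure(vessel_organic, foreign)   # inherits from either foreign port
    port = node_failure(port_organic, [vessel])      # inherits from the vessel
    # Target: vulnerability assumed 100%, so it only inherits failure.
    return port * consequence


if __name__ == "__main__":
    foreign_ports = [0.5 * 0.4, 0.5 * 0.6]  # threat x organic vulnerability
    print(chain_risk(foreign_ports, 0.5, 0.5, 1000.0))
```

Each layer multiplies in another organic factor while its OR gate accumulates the upstream failure paths, which is what the slide calls “increasingly cumulative.”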

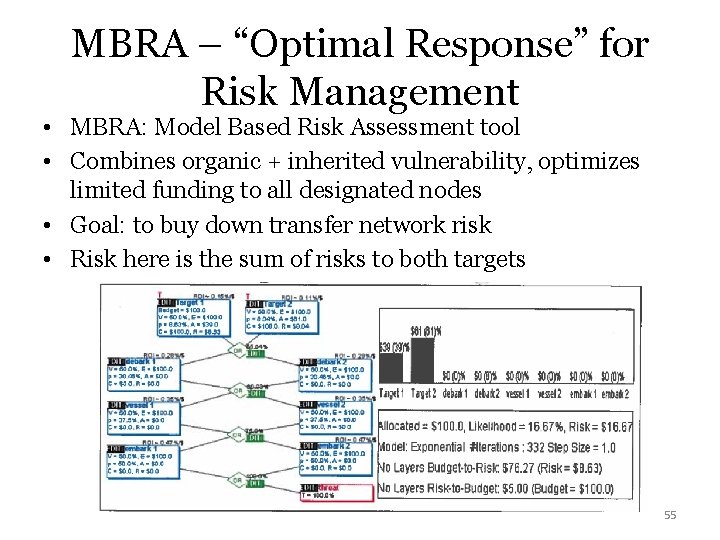

MBRA – “Optimal Response” for Risk Management • MBRA: Model Based Risk Assessment tool • Combines organic + inherited vulnerability, optimizes limited funding to all designated nodes • Goal: to buy down transfer network risk • Risk here is the sum of risks to both targets 55

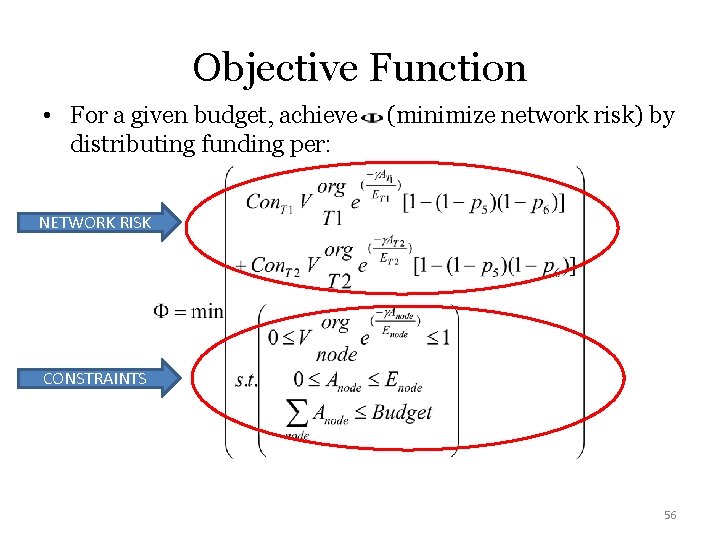

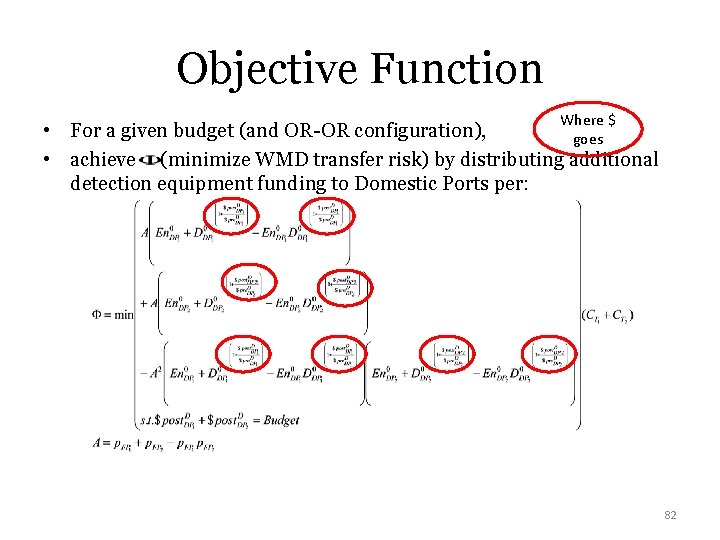

Objective Function • For a given budget, minimize network risk by distributing funding per: – Network risk – Constraints 56
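A budget-constrained risk minimization of this kind can be sketched with greedy marginal allocation. This is not MBRA's internal algorithm. It swaps in a standard technique under two assumptions: a hypothetical exponential vulnerability buy-down, v(x) = v0·exp(−rate·x), for each node, and network risk as the plain sum of node risks. Because each risk curve is convex and decreasing, giving each budget increment to the node with the largest risk reduction is optimal at the chosen step size. The `roi` helper mirrors the per-node ROI mentioned on the next slide.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    name: str
    threat: float       # probability of attack
    vuln0: float        # vulnerability with no added investment
    consequence: float  # e.g., $M
    rate: float         # hypothetical buy-down rate per $ invested

    def risk(self, dollars: float) -> float:
        # Assumed exponential buy-down: v(x) = v0 * exp(-rate * x).
        return self.threat * self.vuln0 * math.exp(-self.rate * dollars) * self.consequence


def allocate(nodes: List[Node], budget: float, step: float = 1.0) -> Dict[str, float]:
    """Greedy marginal allocation: each increment goes to the node whose risk drops most."""
    alloc = {n.name: 0.0 for n in nodes}
    for _ in range(int(round(budget / step))):
        best = max(nodes, key=lambda n: n.risk(alloc[n.name]) - n.risk(alloc[n.name] + step))
        alloc[best.name] += step
    return alloc


def network_risk(nodes: List[Node], alloc: Dict[str, float]) -> float:
    return sum(n.risk(alloc[n.name]) for n in nodes)


def roi(node: Node, dollars: float) -> float:
    # Risk reduced per dollar invested at this node (0 if nothing invested).
    return (node.risk(0.0) - node.risk(dollars)) / dollars if dollars > 0 else 0.0


if __name__ == "__main__":
    nodes = [Node("A", 0.5, 0.8, 100.0, 0.1), Node("B", 0.5, 0.8, 10.0, 0.1)]
    alloc = allocate(nodes, 10.0)
    print(alloc, network_risk(nodes, alloc))
```

Manually moving dollars away from the greedy allocation can only raise network risk under these assumptions, which matches the next slide's caution about re-allocation.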

Notional Results • MBRA allows “manual” re-allocation – Once the equilibrium “optimal” allocation is achieved • Can redistribute money, but network risk will likely increase • Also shows ROI – network risk reduced per investment at each node – Proxy for which nodes present the highest “potential for risk reduction” in the network? 57

Next Step – WMD Modeling “Maritime security now involves risks that must be met with a layered approach that identifies and interdicts the threat as far as possible from the U.S. borders…. a potential worst case scenario is the risk that a weapon of mass destruction is concealed in a container… …a successful strategy will use risk management to align capabilities with threats to achieve the optimal response and protect the nation.” – 2005 Maritime Commerce Security Plan 58

Current State (at time of research) • Lots of work on modeling technology effectiveness • GNDA – Global Nuclear Detection Architecture – “Layered defense” for detecting illicit material • Emphasis on deterrence in addition to detect/interdict • Existing risk and interdiction models did certain specific things 59

Future State – Integration • We asked: “Can we propose a modeling solution to integrate: WMD detection effectiveness, layered defense networks, risk analysis, deterrence, and estimates of optimal investment solutions?” 60

Methodology • Use general layered defense model from previous work • Increase granularity on modeling probabilistic node risk as f(WMD detection technology investment) • Account for foreign port exploitation susceptibility – And separate domestic port encounter and detection probabilities 61

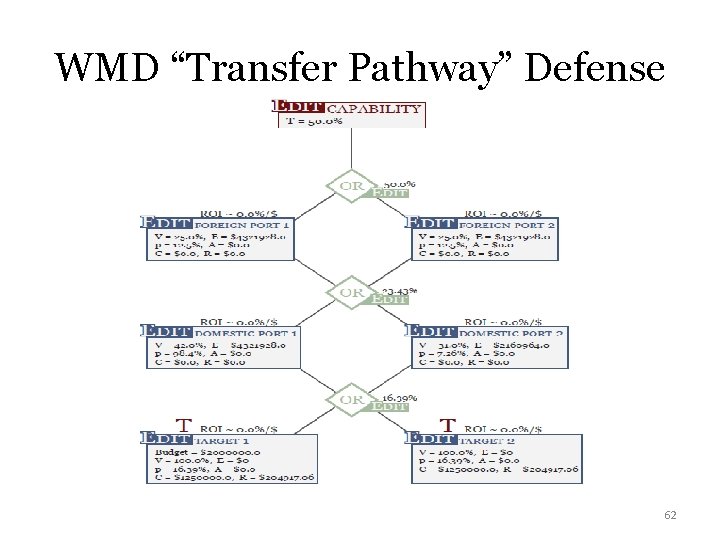

WMD “Transfer Pathway” Defense 62

More Detail • Modeling organic node vulnerability as “Exploitation Susceptibility” – Inland city assumed target, not US port of entry – CIKR are “exploited”, a specific form of vulnerability • Optimization shows what “optimal” costs of WMD detection technology at US ports could be 63

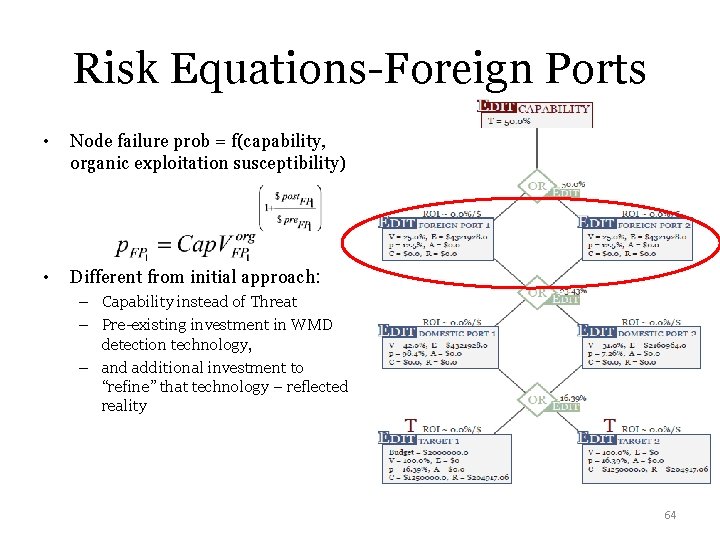

Risk Equations: Foreign Ports • Node failure prob = f(capability, organic exploitation susceptibility) • Different from initial approach: – Capability instead of Threat – Pre-existing investment in WMD detection technology, plus additional investment to “refine” that technology, reflected reality 64

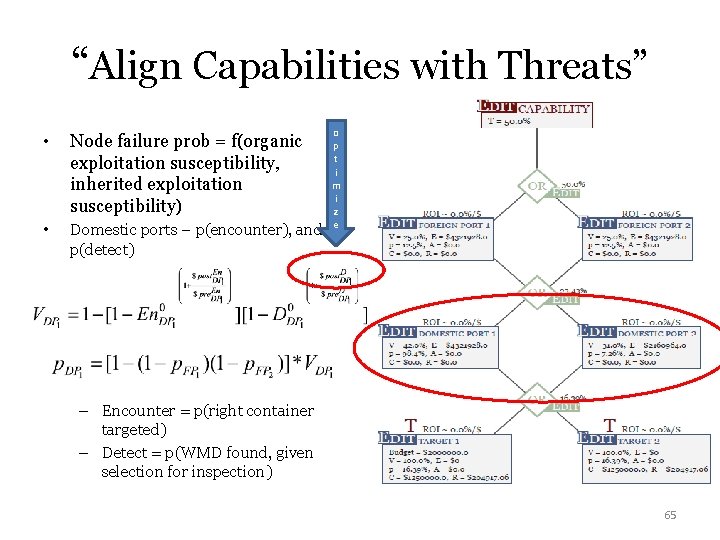

“Align Capabilities with Threats” • Node failure prob = f(organic exploitation susceptibility, inherited exploitation susceptibility) • Domestic ports – optimize p(encounter) and p(detect) – Encounter = p(right container targeted) – Detect = p(WMD found, given selection for inspection) 65
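The split between encounter and detect probabilities can be sketched as follows. This sketch assumes that a domestic port's organic exploitation susceptibility is the probability a WMD container is not interdicted, 1 − p(encounter)·p(detect). It also assumes a hypothetical detection response curve in which the residual miss probability decays exponentially with additional technology investment; the deck's actual curve is shown only as an image. With zero added investment the curve returns the “existing” detection probability, which matches the pre-deterrence case.

```python
import math


def detect_probability(existing: float, added_dollars: float, rate: float) -> float:
    """Hypothetical response curve: residual miss probability decays with added $."""
    return 1.0 - (1.0 - existing) * math.exp(-rate * added_dollars)


def exploitation_susceptibility(p_encounter: float, p_detect: float) -> float:
    """P(WMD container passes the port) = 1 - P(encounter) * P(detect | encounter)."""
    return 1.0 - p_encounter * p_detect


if __name__ == "__main__":
    pre = exploitation_susceptibility(0.5, detect_probability(0.8, 0.0, 0.1))
    post = exploitation_susceptibility(0.5, detect_probability(0.8, 10.0, 0.1))
    print(pre, post)
```

Susceptibility can never fall below 1 − p(encounter) through detection spending alone, so targeting (encounter) and technology (detect) have to be improved together.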

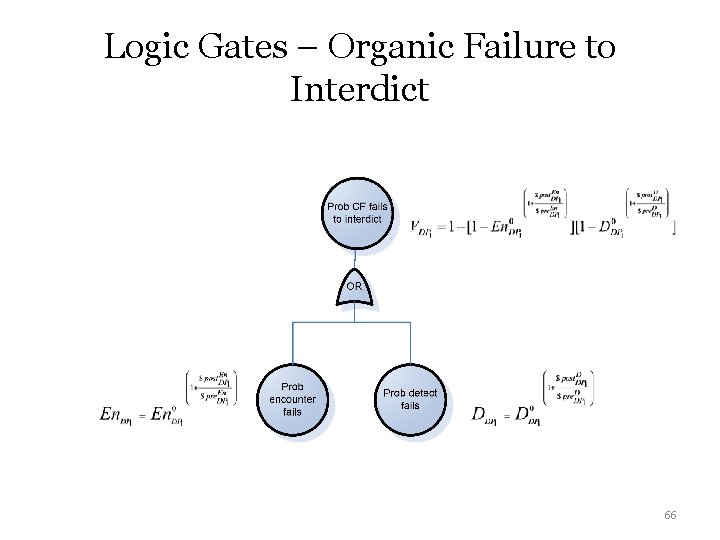

Logic Gates – Organic Failure to Interdict 66

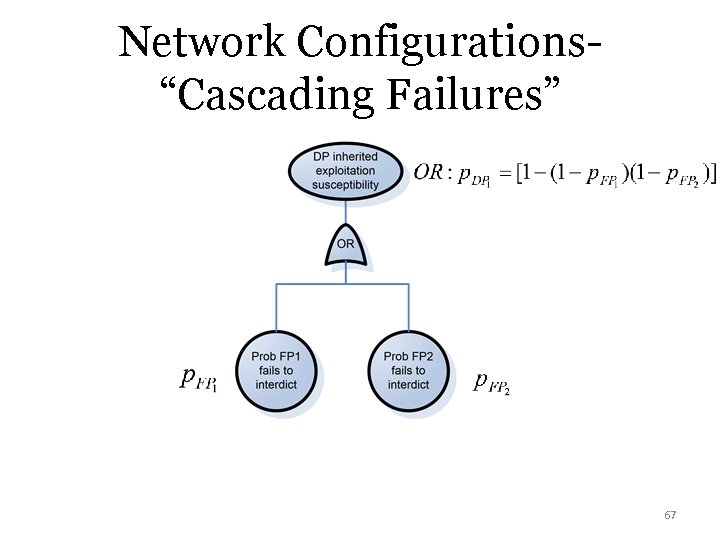

Network Configurations: “Cascading Failures” 67

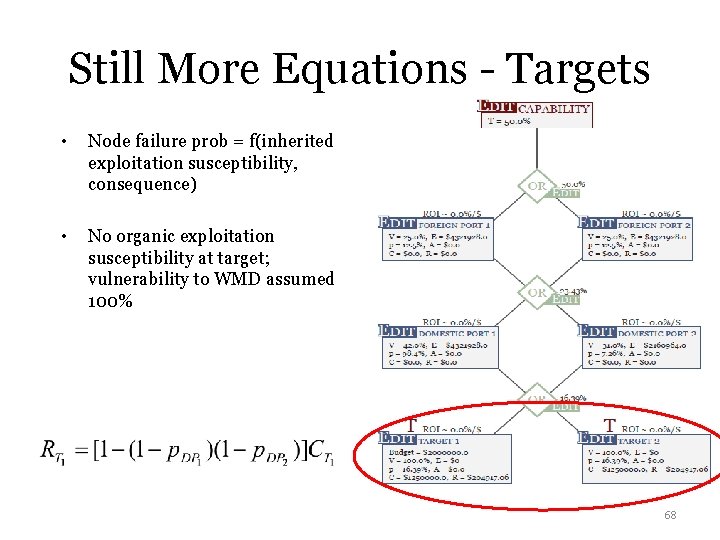

Still More Equations - Targets • Node failure prob = f(inherited exploitation susceptibility, consequence) • No organic exploitation susceptibility at target; vulnerability to WMD assumed 100% 68

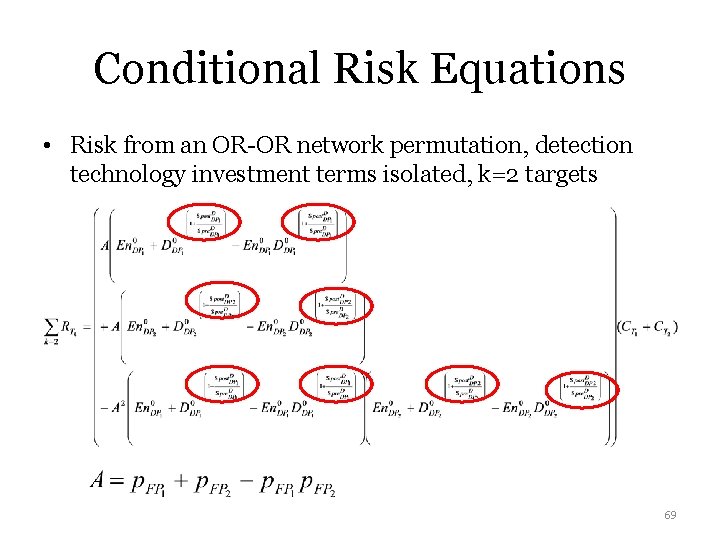

Conditional Risk Equations • Risk from an OR-OR network permutation, detection technology investment terms isolated, k=2 targets 69

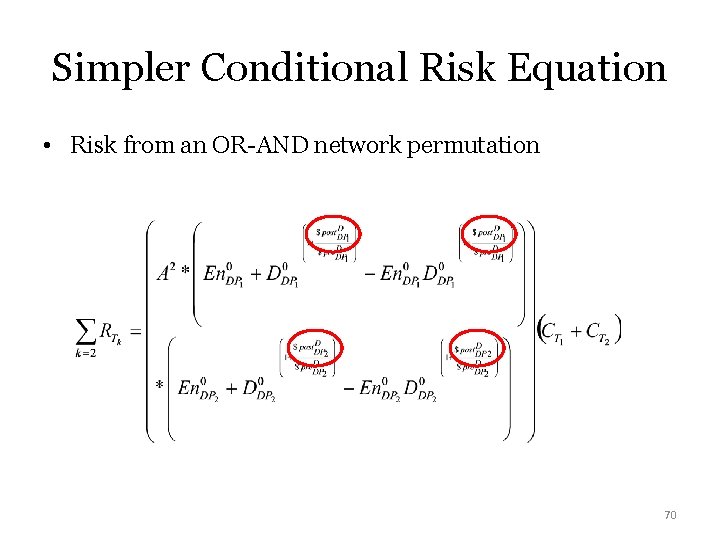

Simpler Conditional Risk Equation • Risk from an OR-AND network permutation 70

Additional Conditional Risk Eqs. • We create these for: – AND-OR – AND-AND 71

Pathway vs “Network Configuration” • Pathway = exploit specific domestic ports • “Network configurations” – distribute funds amongst ports within a “layer” – So consolidate pathways for optimization – Don’t specify individual pathways – If only one domestic port would be exploited, no need for optimization! Just put all resources there – Game shows individual pathways for attacker COAs, but defender COAs reflect optimization amongst network configurations 72

Next steps • Convert risk equations to expected utility – Pre-deterrence, post-deterrence • Facilitates game theory analysis – measuring deterrence • Game pits different WMD technology investment options (Defender) – vs different pathway exploitation options (Attacker) – Doesn’t use equilibrium results per se • Quantify deterrence, determine attacker intent, estimate unconditional risk 73

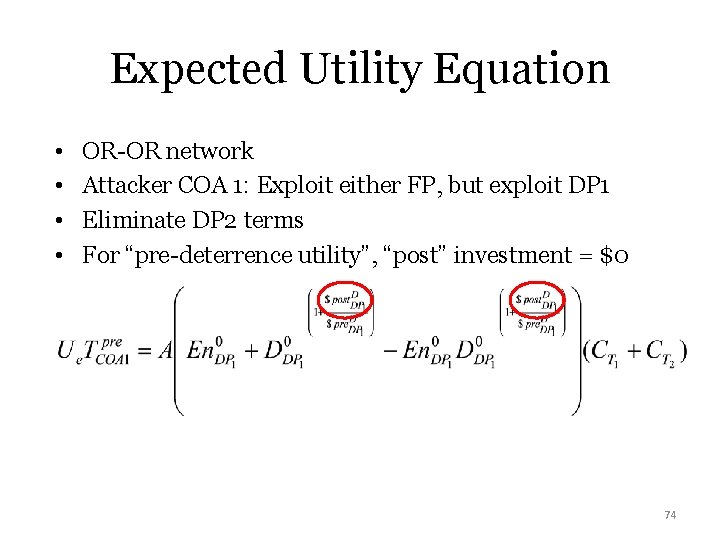

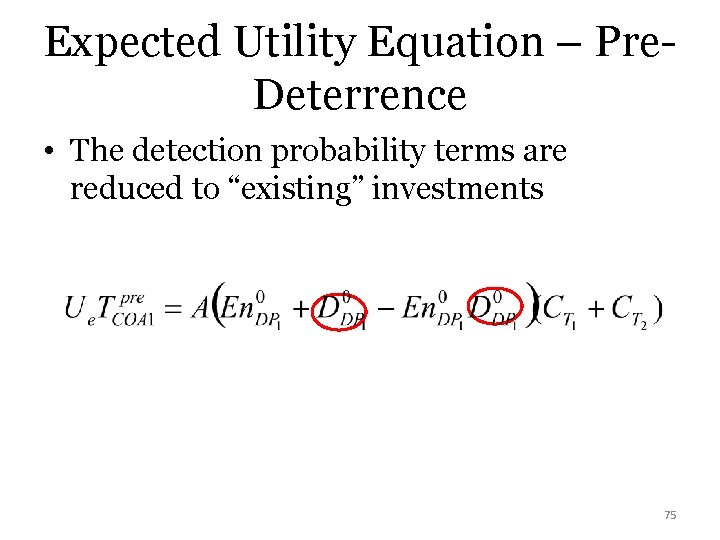

Expected Utility Equation • OR-OR network • Attacker COA 1: Exploit either FP, but exploit DP 1 • Eliminate DP 2 terms • For “pre-deterrence utility”, “post” investment = $0 74

Expected Utility Equation – Pre-Deterrence • The detection probability terms are reduced to “existing” investments 75

Remaining Expected Utilities • Additional expected utility functions for – OR-OR, but exploit DP 2 instead – AND-OR, exploit DP 1 – AND-OR, exploit DP 2 – OR-AND, exploit both DP – AND-AND, exploit both DP 76

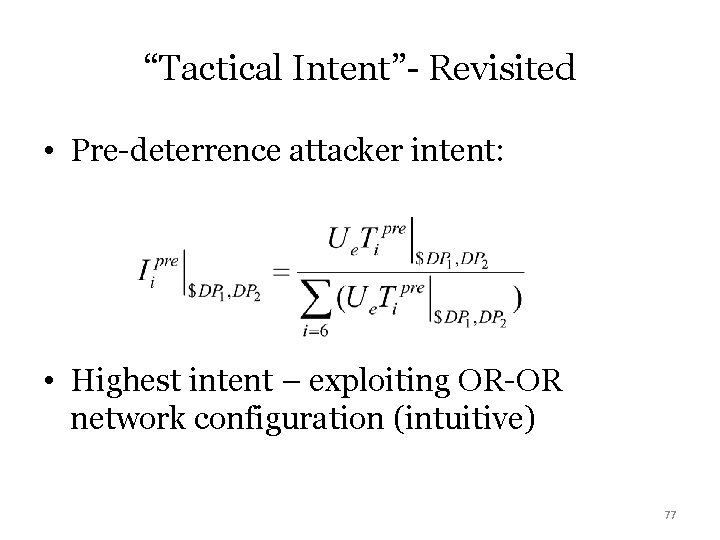

“Tactical Intent”- Revisited • Pre-deterrence attacker intent: • Highest intent – exploiting OR-OR network configuration (intuitive) 77

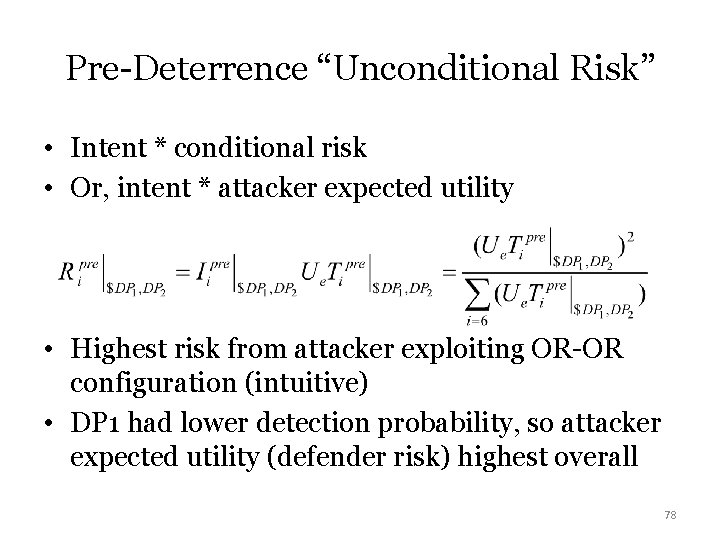

Pre-Deterrence “Unconditional Risk” • Intent * conditional risk • Or, intent * attacker expected utility • Highest risk from attacker exploiting OR-OR configuration (intuitive) • DP 1 had lower detection probability, so attacker expected utility (defender risk) highest overall 78

“Averaging Risk” • Better approach to hedge against attacker choosing “best option”? • We summed risk from all attacker COAs, divided by 6 COAs 79
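The averaging step can be sketched as follows, following the slides' definitions: per-COA unconditional risk = intent ratio × conditional risk, with conditional risk taken as the attacker's expected utility, then summed across COAs and divided by the number of COAs (6 here). The COA labels follow the expected utility slides; the numeric utilities are hypothetical.

```python
from typing import Dict


def unconditional_risks(expected_utility: Dict[str, float]) -> Dict[str, float]:
    """Intent ratio x conditional risk per COA (conditional risk = attacker EU)."""
    total = sum(expected_utility.values())
    if total <= 0:
        raise ValueError("aggregate expected utility must be positive")
    return {coa: (u / total) * u for coa, u in expected_utility.items()}


def averaged_risk(expected_utility: Dict[str, float]) -> float:
    """Sum of unconditional risk over all COAs, divided by the number of COAs."""
    risks = unconditional_risks(expected_utility)
    return sum(risks.values()) / len(risks)


if __name__ == "__main__":
    # Hypothetical attacker expected utilities for the six COAs.
    eu = {
        "OR-OR, DP 1": 60.0, "OR-OR, DP 2": 40.0,
        "AND-OR, DP 1": 30.0, "AND-OR, DP 2": 20.0,
        "OR-AND, both DP": 10.0, "AND-AND, both DP": 5.0,
    }
    print(averaged_risk(eu))
```

Averaging hedges against betting everything on the single “best” attacker option, at the cost of diluting the risk attributed to it.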

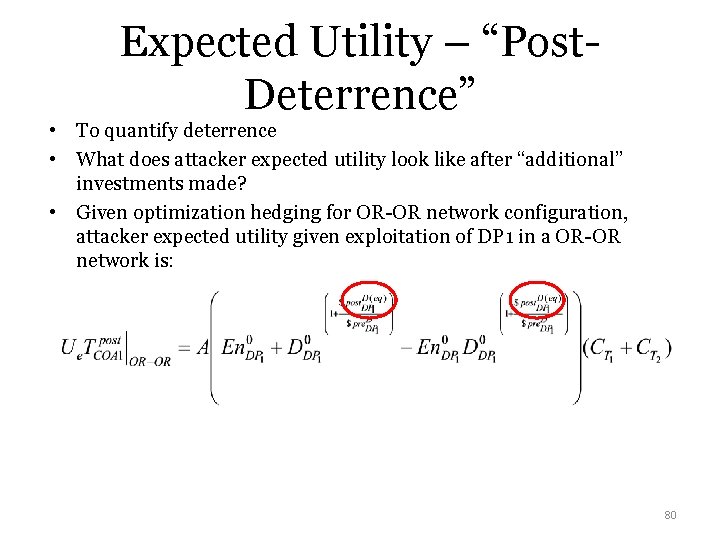

Expected Utility – “Post-Deterrence” • To quantify deterrence • What does attacker expected utility look like after “additional” investments are made? • Given optimization hedging for the OR-OR network configuration, attacker expected utility given exploitation of DP 1 in an OR-OR network is: 80

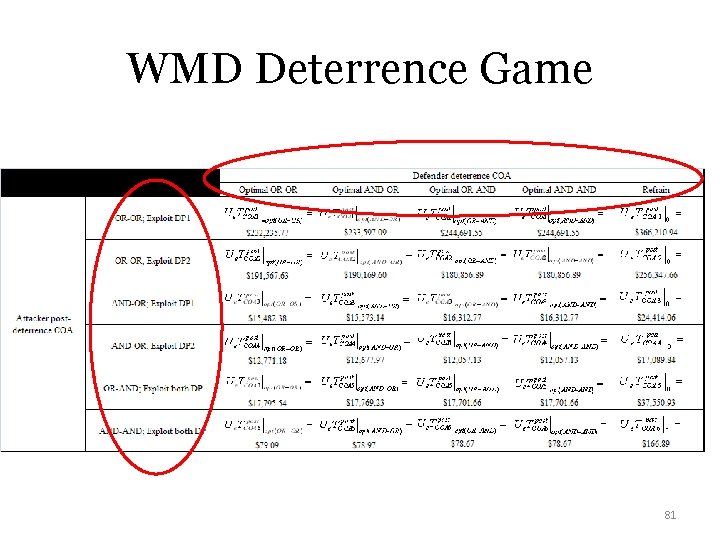

WMD Deterrence Game 81

Objective Function: Where the $ Goes • For a given budget (and OR-OR configuration), achieve (minimize WMD transfer risk) by distributing additional detection equipment funding to Domestic Ports per: 82

Notable Findings: Expected Utility from Deterrence Game • Highest attacker expected utility as f(defender investment): – Exploit OR-OR network, DP 1, defender refrains from investment • Lowest attacker expected utility as f(defender investment): – Exploit AND-AND network, defender makes optimal investment • So far, intuitive! • But we want risk, and averaged at that… 83

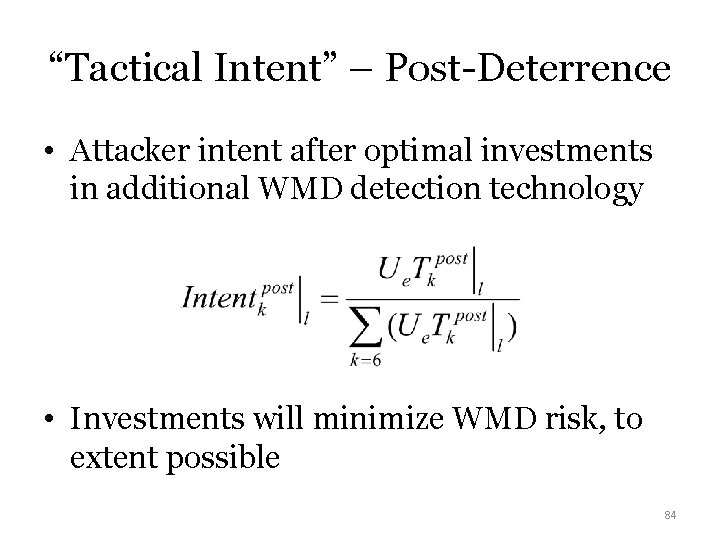

“Tactical Intent” – Post-Deterrence • Attacker intent after optimal investments in additional WMD detection technology • Investments will minimize WMD risk, to extent possible 84

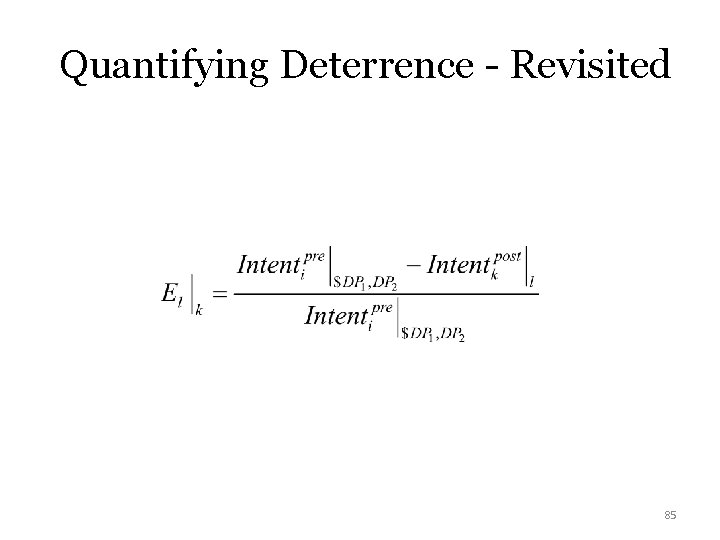

Quantifying Deterrence - Revisited 85

Notable Findings: Deterrence Quantification • When one DP is organically less susceptible to exploitation (“stronger”) than the other, • AND…the attacker wants to exploit only 1 FP, – Attacker incentivized to exploit the stronger DP after optimization for the OR-OR configuration… – Attacker deterred from exploiting the weaker target • But if the attacker wants to exploit BOTH FP, – Still deterred from DP 1 (weaker) exploitation by OR-OR configuration investment 86

Post-Deterrence Unconditional Risk • Again, applying tactical intent to post-deterrence conditional risk • We average risk across all attacker COAs, for a given defender investment strategy 87

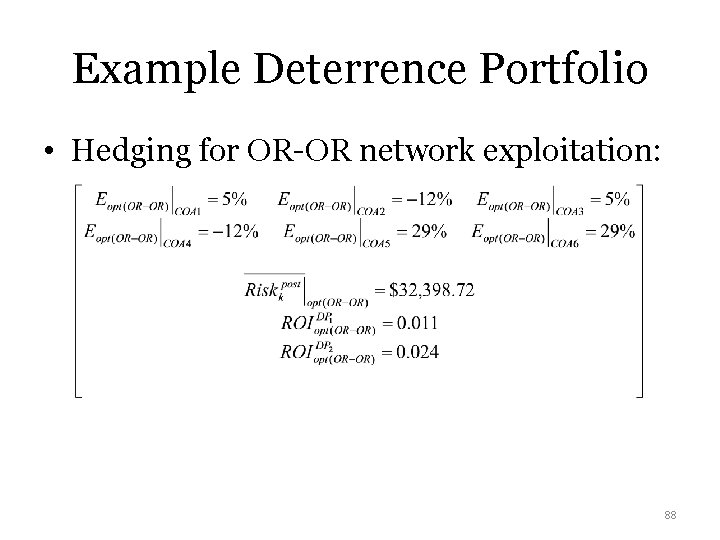

Example Deterrence Portfolio • Hedging for OR-OR network exploitation: 88

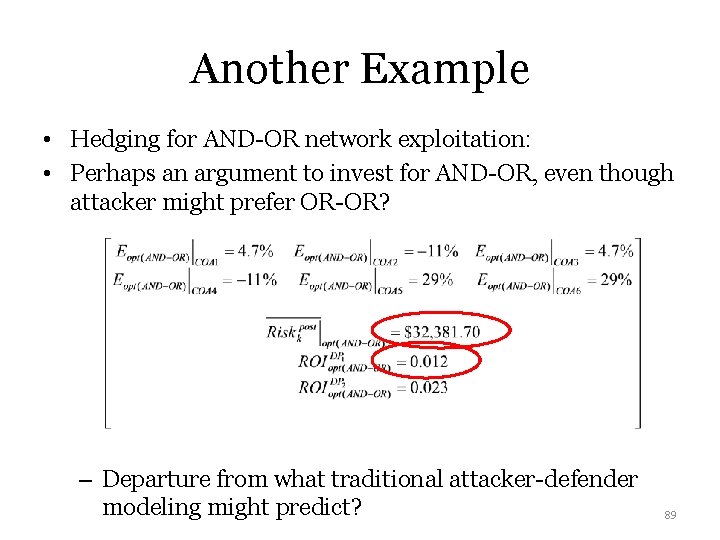

Another Example • Hedging for AND-OR network exploitation: • Perhaps an argument to invest for AND-OR, even though attacker might prefer OR-OR? – Departure from what traditional attacker-defender modeling might predict? 89

Notable Findings: Overall • If intel is confident both US ports would be exploited… – Foreign port exploitation preferences had no bearing on model’s results – Focus intel analysis efforts? – Focus surge efforts? • Support for “calibrating” new WMD technology – performance vs costs to operate/maintain 90

So what? • WMD threat – high consequence event warranting analysis • Vulnerability in “paradigm 3” requires a network approach • Integrate network science, deterrence, optimization techniques, and WMD detection technology effectiveness – Implications for intelligence collections, foreign port security evaluation – Implications for technology engineers – calibrating performance against costs • Again, many possible directions for future research 91

TRANSITION! T V C 92

CONSEQUENCE R=TVC • “Paradigm 2” • Resilience • Self-Organized Criticality • Exceedence Probability • Antifragility? 93

Quote Resilience: ability of systems, infrastructures, government, business, communities, and individuals to resist, tolerate, absorb, recover from, prepare for, or adapt to an adverse occurrence that causes harm, destruction, or loss 94

Port Security Grant Program • FEMA program allocating money to protect ports • Ports are the “start” of supply chain networks in continental US • Could the grant program be resilience-based, instead of protection-based? 95

Notional Supply Chain Network • Maritime “Supplier” MCIKR → “Intermediate” CIKR → “Customer” CIKR 96

Proposed Definitions of Resilience • For ports – given a “macro-distribution” amongst ports: – Potential of a port to minimize risk, or restore port performance to a pre-disruption level, if any of the port MCIKR are attacked • For supply chain networks – given a “micro-distribution” of funding amongst MCIKR within a single port: – Potential of the supply chain network that “begins” at that MCIKR to restore network performance to a pre-disruption level 97

Network Resilience - Types • Resilience can be: – Organic – with existing resources to rebuild damaged MCIKR – Enhanced – with additional PSGP allocations 98

What does “potential” mean? • Resilience calculations after optimization of limited PSGP allocations are a proxy for network “potential” to restore – If high “enhanced” resilience given a simulated allocation of rebuilding funding to MCIKR, high potential to restore productivity – If low “enhanced” resilience, lower potential to restore 99

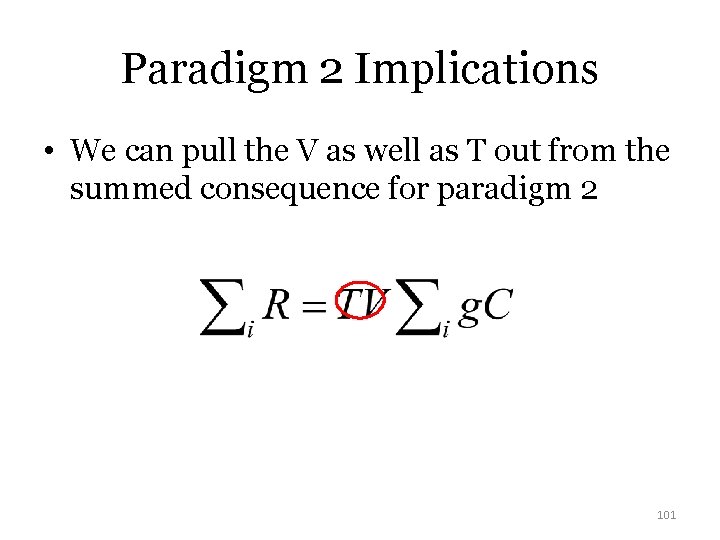

Redefining Network Risk • Network Risk for network with i nodes: • g is node degree, or number of links • Proxy for “inherited failure susceptibility” – The higher the degree, the more likely to propagate cascading failure… 100
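The degree weighting can be sketched as follows. The slide's equation is shown only as an image, so this sketch assumes one common form in which each node's t·v·c term is multiplied by its normalized degree g_i = degree_i / max degree. Under that form, highly linked nodes, which are more likely to propagate a cascading failure, dominate network risk. The node names, links, and values describe a hypothetical four-node supply chain.

```python
from typing import Dict, List, Tuple


def network_risk(nodes: Dict[str, Tuple[float, float, float]],
                 links: List[Tuple[str, str]]) -> float:
    """Sum over nodes of g_i * t_i * v_i * c_i, with g_i = degree_i / max degree (assumed form)."""
    degree = {name: 0 for name in nodes}
    for a, b in links:  # undirected links
        degree[a] += 1
        degree[b] += 1
    max_degree = max(degree.values())
    if max_degree == 0:
        raise ValueError("network has no links")
    return sum((degree[n] / max_degree) * t * v * c for n, (t, v, c) in nodes.items())


if __name__ == "__main__":
    # Hypothetical (threat, vulnerability, consequence) per node.
    nodes = {
        "supplier": (0.5, 0.5, 100.0),
        "intermediate1": (0.5, 0.5, 50.0),
        "intermediate2": (0.5, 0.5, 50.0),
        "customer": (0.5, 0.5, 20.0),
    }
    links = [("supplier", "intermediate1"), ("supplier", "intermediate2"),
             ("intermediate1", "customer")]
    print(network_risk(nodes, links))
```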

Paradigm 2 Implications • We can pull the V as well as T out from the summed consequence for paradigm 2 101

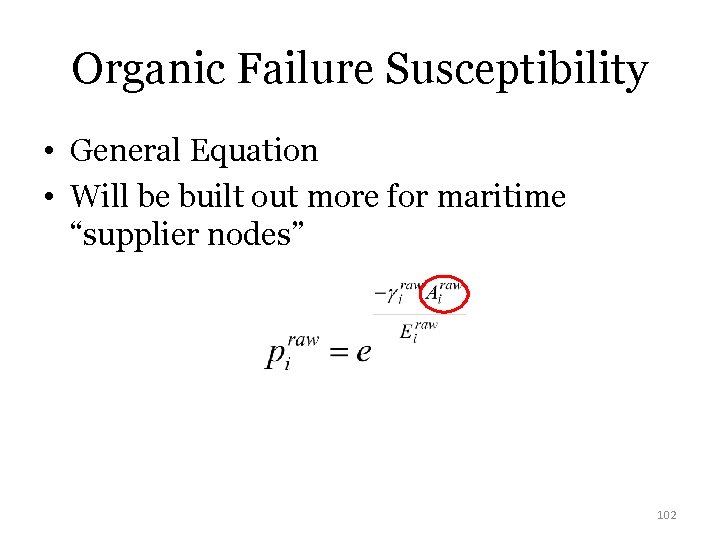

Organic Failure Susceptibility • General Equation • Will be built out more for maritime “supplier nodes” 102

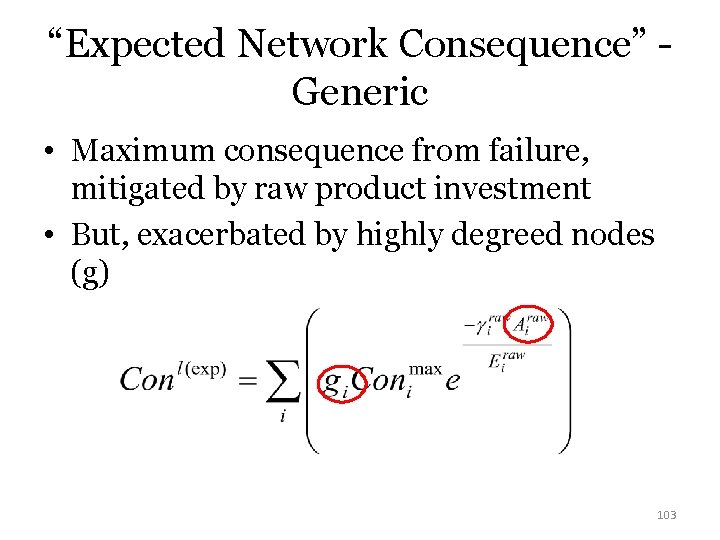

“Expected Network Consequence” Generic • Maximum consequence from failure, mitigated by raw product investment • But, exacerbated by highly degreed nodes (g) 103

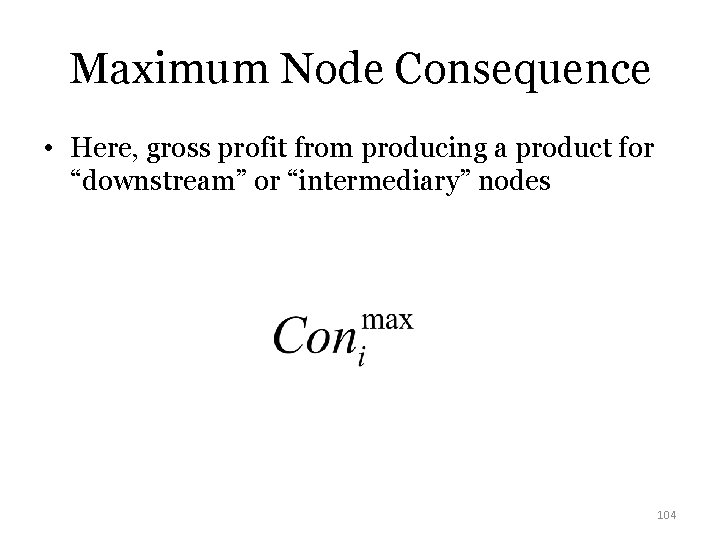

Maximum Node Consequence • Here, gross profit from producing a product for “downstream” or “intermediary” nodes 104

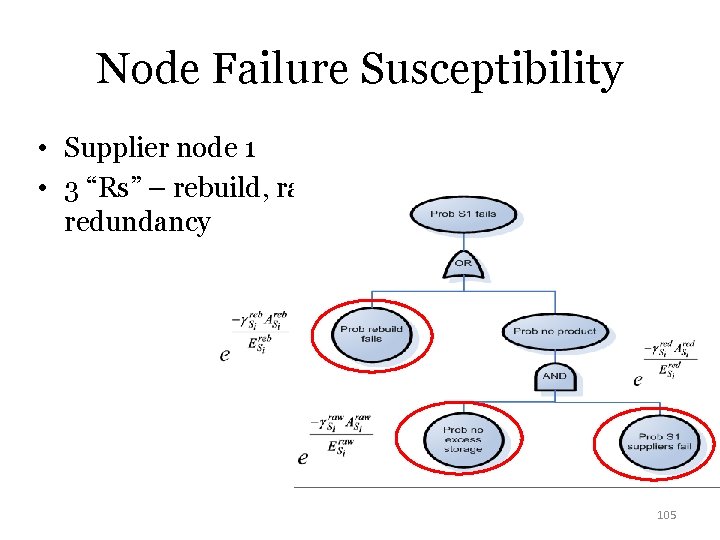

Node Failure Susceptibility • Supplier node 1 • 3 “Rs” – rebuild, raw, redundancy 105

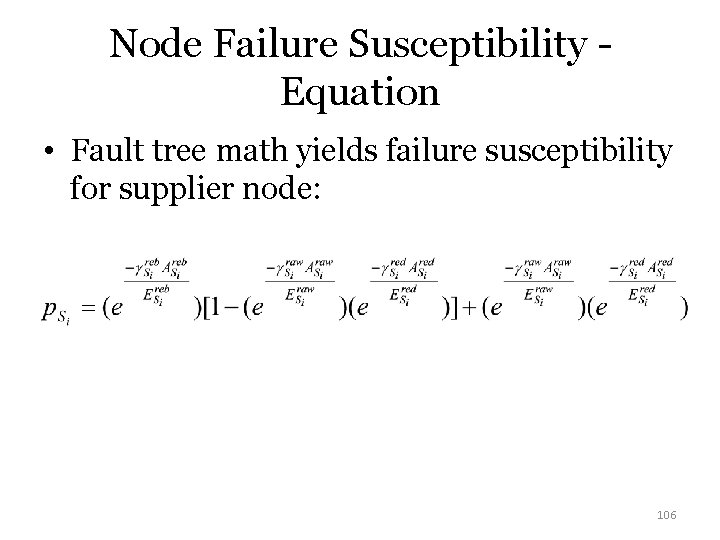

Node Failure Susceptibility Equation • Fault tree math yields failure susceptibility for supplier node: 106

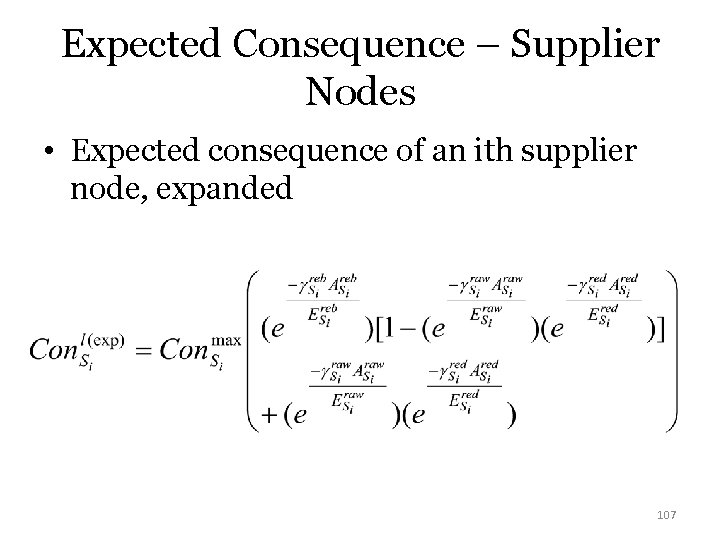

Expected Consequence – Supplier Nodes • Expected consequence of an ith supplier node, expanded 107

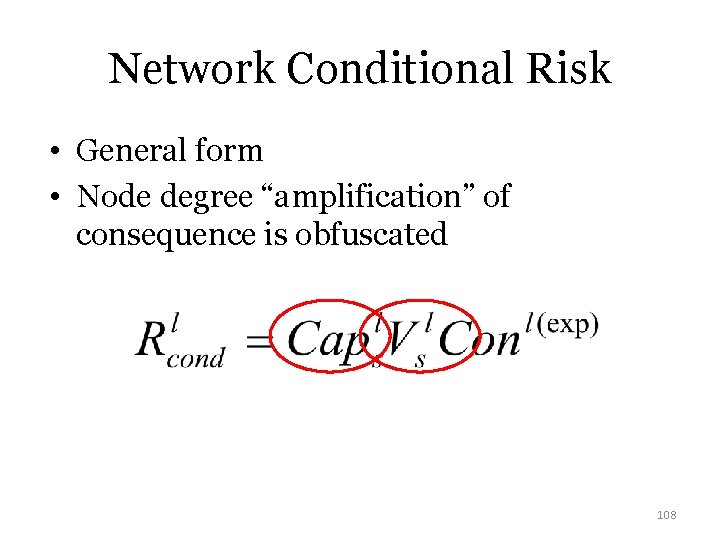

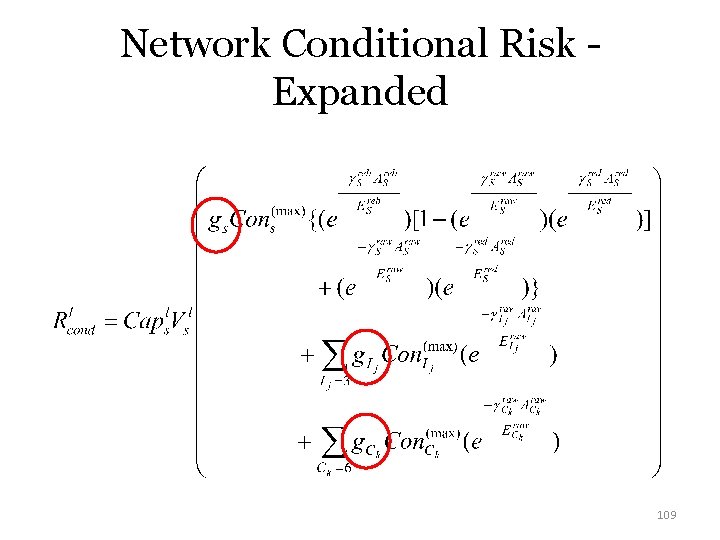

Network Conditional Risk • General form • Node degree “amplification” of consequence is obfuscated 108

Network Conditional Risk Expanded 109

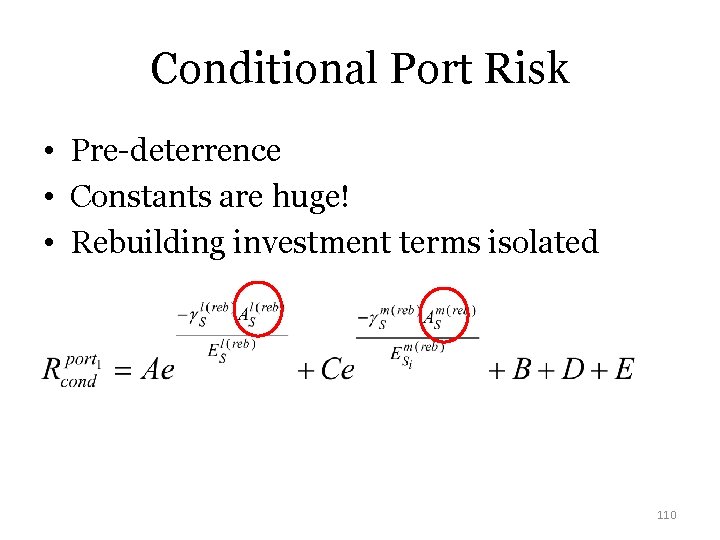

Conditional Port Risk • Pre-deterrence • Constants are huge! • Rebuilding investment terms isolated 110

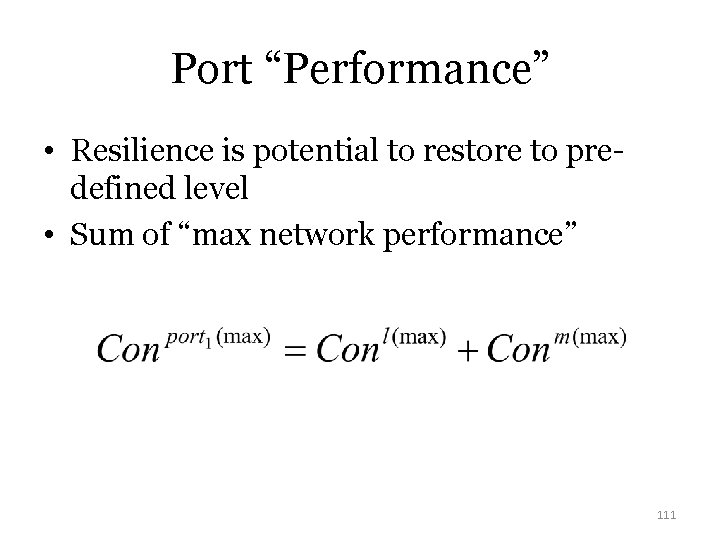

Port “Performance” • Resilience is potential to restore to predefined level • Sum of “max network performance” 111

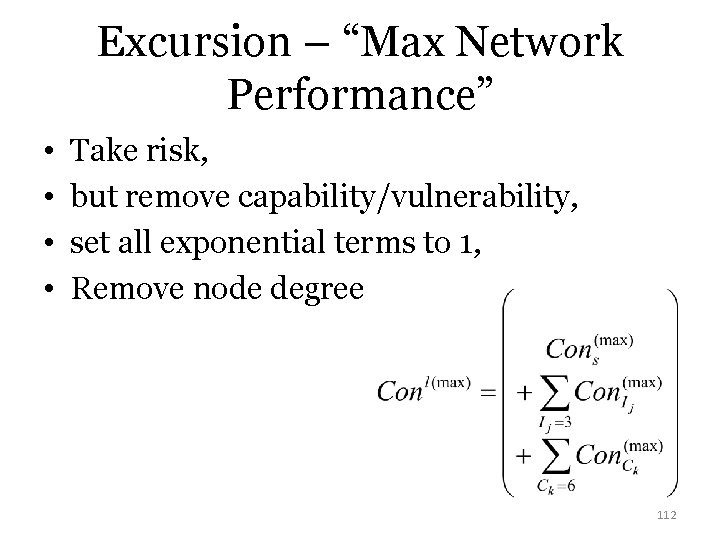

Excursion – “Max Network Performance” • Take risk, but remove capability/vulnerability • Set all exponential terms to 1 • Remove node degree 112

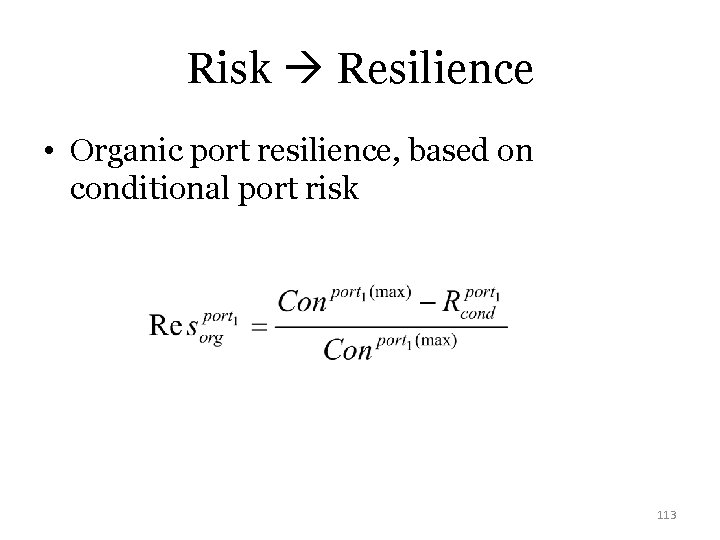

Risk Resilience • Organic port resilience, based on conditional port risk 113

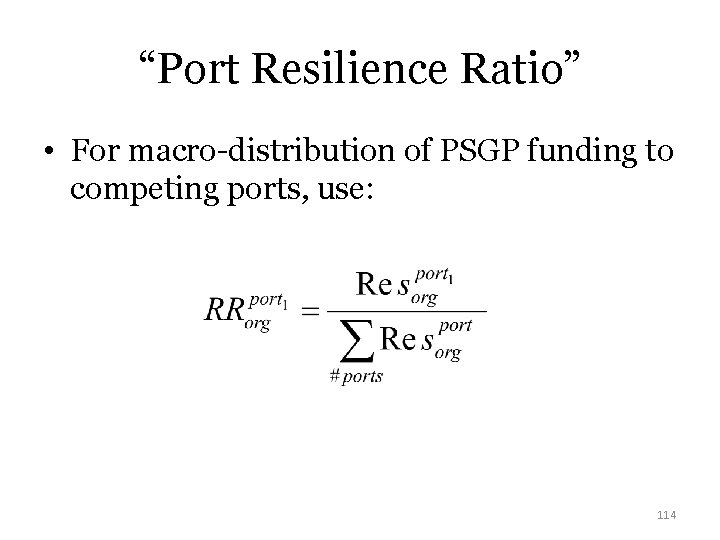

“Port Resilience Ratio” • For macro-distribution of PSGP funding to competing ports, use: 114

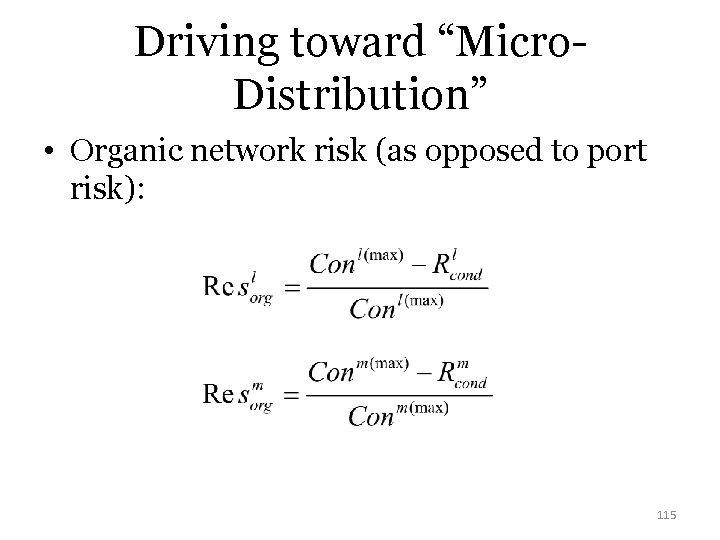

Driving toward “Micro-Distribution” • Organic network risk (as opposed to port risk): 115

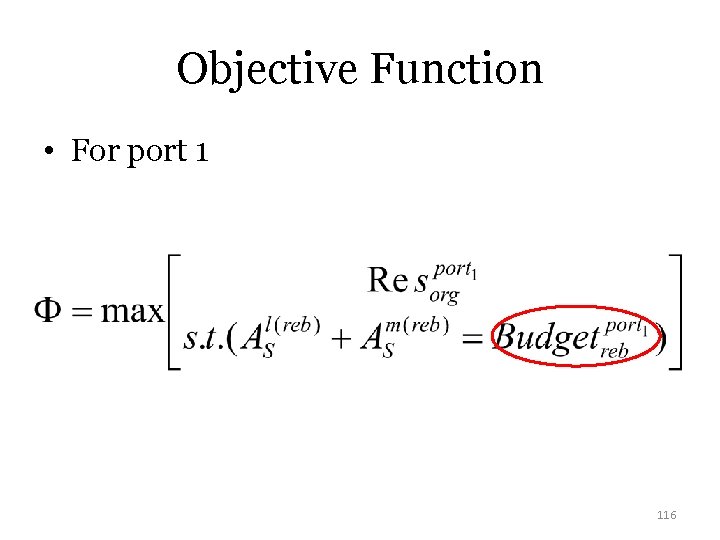

Objective Function • For port 1 116

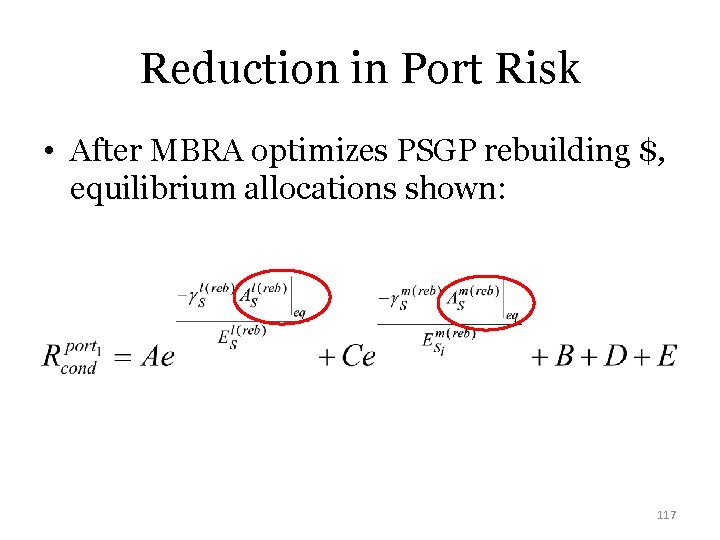

Reduction in Port Risk • After MBRA optimizes PSGP rebuilding $, equilibrium allocations shown: 117

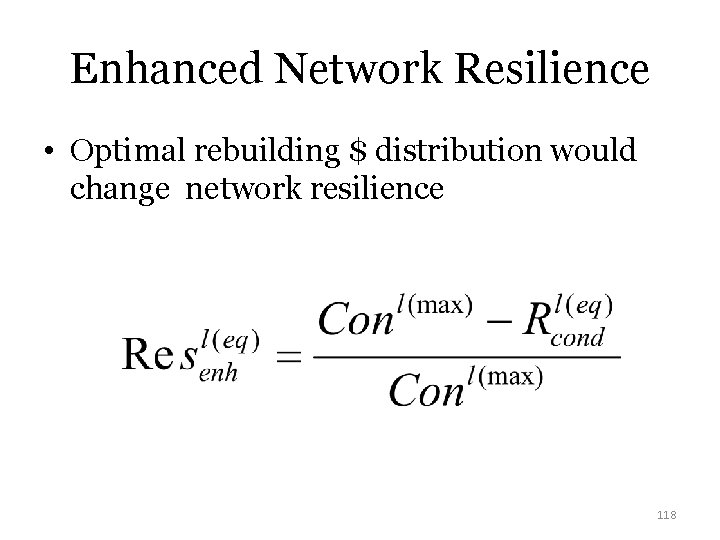

Enhanced Network Resilience • Optimal rebuilding $ distribution would change network resilience 118

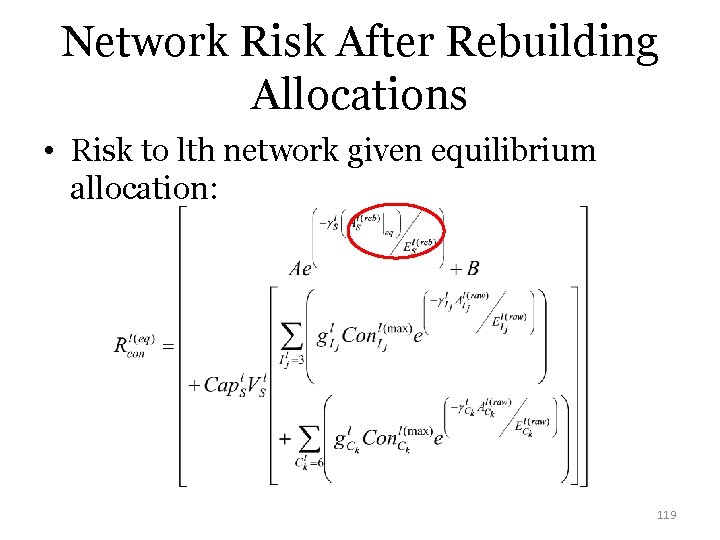

Network Risk After Rebuilding Allocations • Risk to lth network given equilibrium allocation: 119

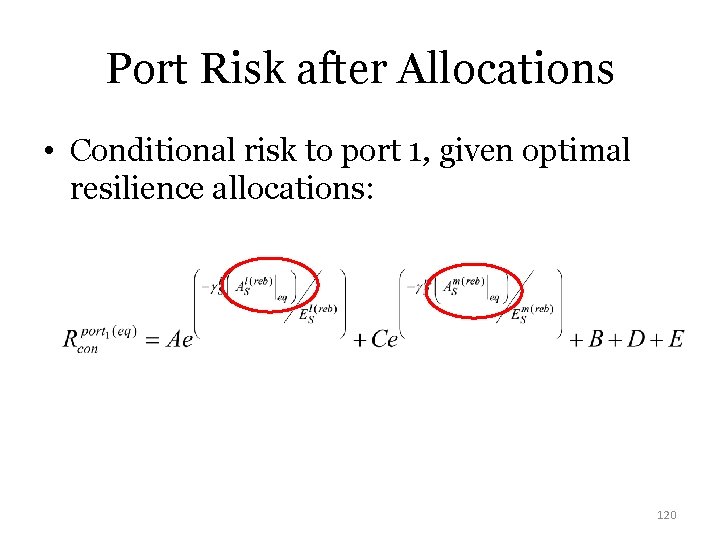

Port Risk after Allocations • Conditional risk to port 1, given optimal resilience allocations: 120

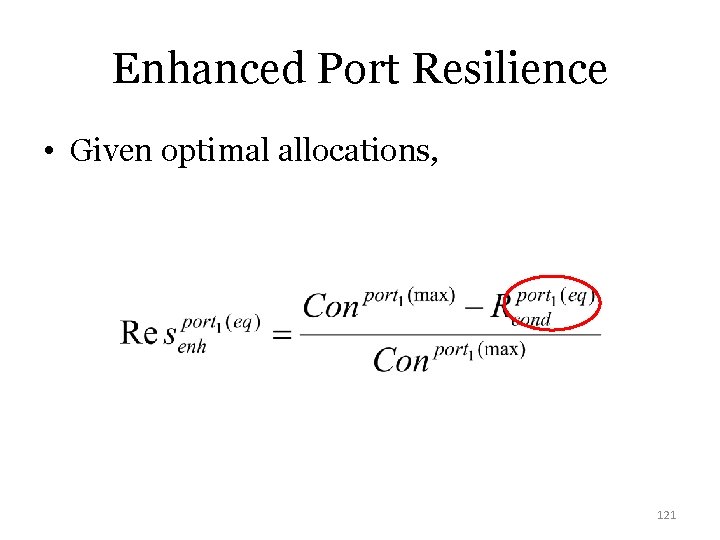

Enhanced Port Resilience • Given optimal allocations, 121

Other Considerations • Suboptimal Allocation – After MBRA reached equilibrium allocation – Decision maker trade off • Conditional vs Unconditional risk – Incorporates Intelligent Adversary Concerns – Can resilience investments “deter” attacks on supply chains? 122

Findings • “Synthesized risk, resilience, network science, performance constraints, optimization, and quantification of deterrence into a unified modeling/simulation approach to potentially support a paradigm shift in an existing DHS program. ” 123

TRANSITION! T V C 124

Self-Organized Criticality • “Catastrophic failure potential of a tightly coupled system prone to cascading failures” • SOC is an emergent property – systems optimize for efficiency, but less resilience may result • Network topology – “scale free” 125

Exceedence Probability (EP) • Instead of p(something occurring), why not p(something exceeding a threshold)? • q is a “resilience exponent” • Proxy for SOC of a network? 126
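A power-law exceedence probability and a simple way to estimate q from loss data can be sketched as follows. This is a minimal sketch, assuming EP(x) = (x / x_min)^(−q) for x ≥ x_min, which is the usual power-law form. It estimates q by least squares on the log-log plot of empirical EP, one of several possible estimators (a maximum-likelihood fit is another); the function names are hypothetical. A larger q means EP falls off faster, so extreme events are rarer.

```python
import math
from typing import Sequence


def exceedence_probability(x: float, q: float, x_min: float = 1.0) -> float:
    """Power-law EP(x) = (x / x_min) ** -q, valid for x >= x_min."""
    if x < x_min:
        raise ValueError("x must be >= x_min")
    return (x / x_min) ** (-q)


def fit_resilience_exponent(samples: Sequence[float]) -> float:
    """Estimate q as minus the least-squares slope of log EP versus log x.

    The i-th largest of n positive samples (1-indexed) gets empirical EP i / n;
    ties are not handled specially in this sketch.
    """
    xs = sorted(samples, reverse=True)
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two samples")
    pts = [(math.log(x), math.log((i + 1) / n)) for i, x in enumerate(xs)]
    mean_x = sum(a for a, _ in pts) / n
    mean_y = sum(b for _, b in pts) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in pts)
    sxx = sum((a - mean_x) ** 2 for a, _ in pts)
    return -sxy / sxx


if __name__ == "__main__":
    # Synthetic consequences placed exactly on a power law with q = 1.5.
    n, q = 200, 1.5
    data = [(n / i) ** (1.0 / q) for i in range(1, n + 1)]
    print(fit_resilience_exponent(data))
```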

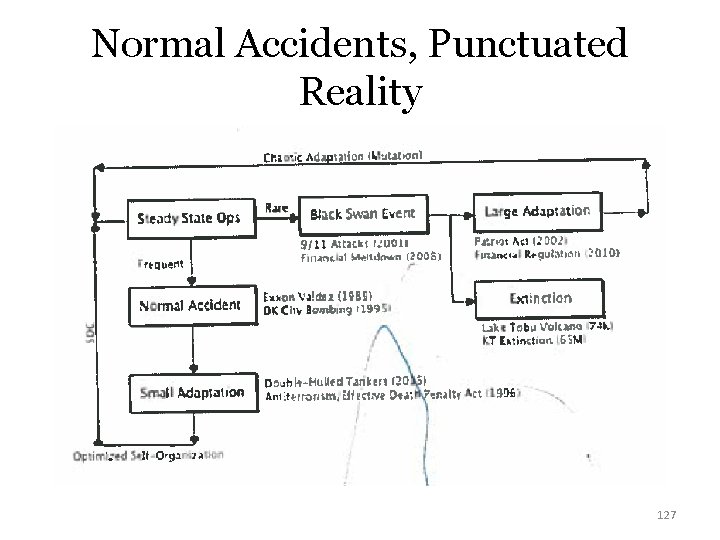

Normal Accidents, Punctuated Reality 127

Antifragility • N. N. Taleb - things that benefit from perturbations • Is not “resilience” • Applicability to CIKR? – “Organic-mechanical dichotomy” 128

“Shoulders of Giants” • Our work built on the many excellent contributions of other authors across various disciplines. • We could not have done this without their accomplishments! 129
