EVENT DRIVEN CUBE MONITORING David Prime David Elliott

EVENT DRIVEN CUBE MONITORING. David Prime & David Elliott SQLBits 6

WHO WE ARE. Who we are – David Prime – Betfair Research – David Elliott – Information Management & Analytics Architect Betfair – – – Launched June 2000 around an exchange betting platform You can bet that an outcome will happen (back) or that it won't happen (lay). You can choose the odds at which you want to play. You can bet whilst the game is in play. You can play on a range of products and games other than sports wagering What this means in terms of data – Bets: >5 million bets daily – Latency: 99. 9% bets processed <1 sec – More trades than all of the European stock exchanges combined

OUR OBJECTIVES. Background – – – Early look at Stream. Insight in Deep-dive Architectural direction: EDSOA Real Time requirements: Anti-Fraud, Legislation, Exposure Monitoring BI / OI Analytics API / Continuous ETL Cube Monitoring – A good use-case and an opportunity to assess using SI with the rest of the BI stack – Provide light-weight aggregated usage information for the business Real-Time – Alerts: name and shame greedy users, discover broken code – Aggregate session data – Using time windows to run complex monitoring scenarios

COMPLEX EVENT PROCESSING. Betfair is awash with events Your online business probably is too

COMPLEX EVENT PROCESSING.

STREAMINSIGHT. What is Stream. Insight? New Integrated Fast Improving

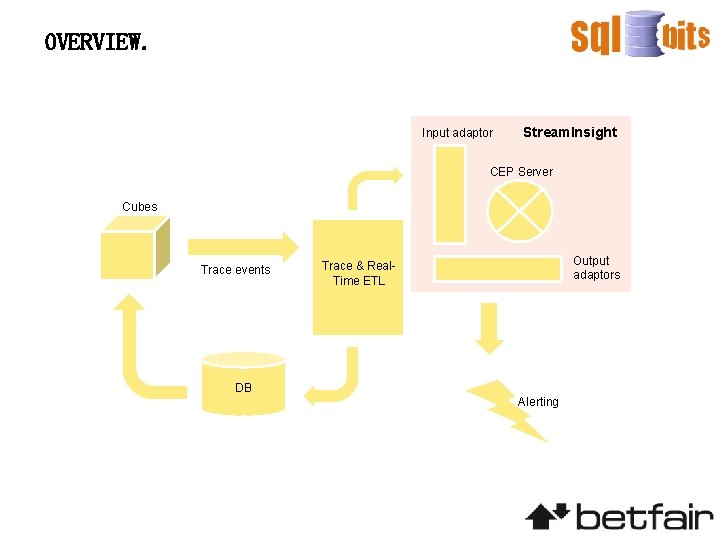

OVERVIEW. Input adaptor Stream. Insight CEP Server Cubes Trace events DB Output adaptors Trace & Real. Time ETL Alerting

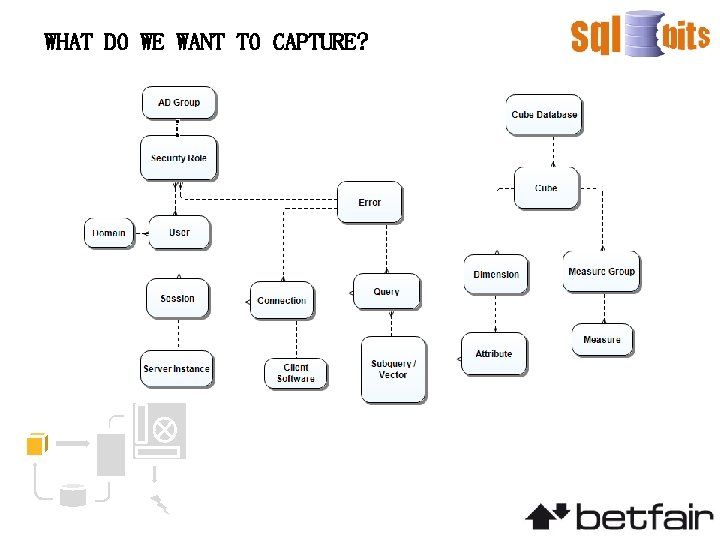

WHAT DO WE WANT TO CAPTURE?

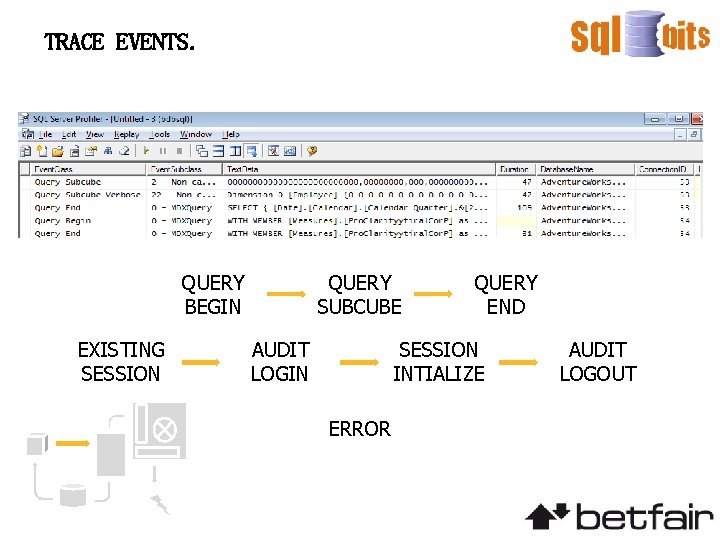

TRACE EVENTS. QUERY BEGIN EXISTING SESSION QUERY SUBCUBE AUDIT LOGIN QUERY END SESSION INTIALIZE ERROR AUDIT LOGOUT

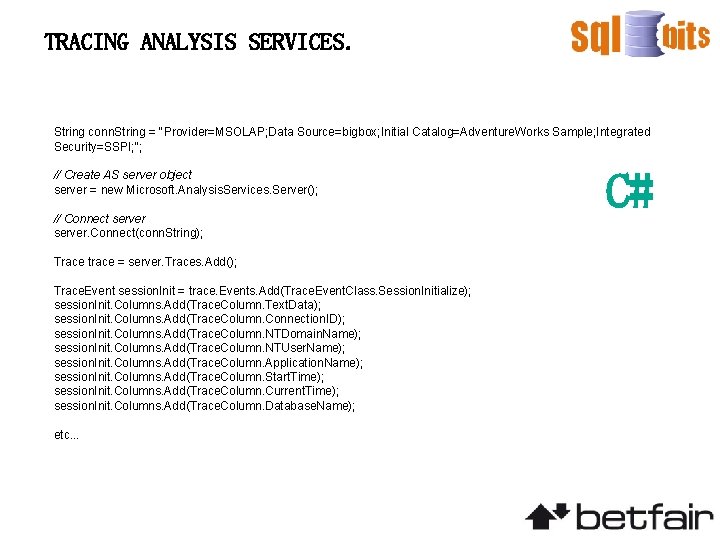

TRACING ANALYSIS SERVICES. String conn. String = "Provider=MSOLAP; Data Source=bigbox; Initial Catalog=Adventure. Works Sample; Integrated Security=SSPI; "; // Create AS server object server = new Microsoft. Analysis. Services. Server(); // Connect server. Connect(conn. String); Trace trace = server. Traces. Add(); Trace. Event session. Init = trace. Events. Add(Trace. Event. Class. Session. Initialize); session. Init. Columns. Add(Trace. Column. Text. Data); session. Init. Columns. Add(Trace. Column. Connection. ID); session. Init. Columns. Add(Trace. Column. NTDomain. Name); session. Init. Columns. Add(Trace. Column. NTUser. Name); session. Init. Columns. Add(Trace. Column. Application. Name); session. Init. Columns. Add(Trace. Column. Start. Time); session. Init. Columns. Add(Trace. Column. Current. Time); session. Init. Columns. Add(Trace. Column. Database. Name); etc. . . C#

TRACING ANALYSIS SERVICES. trace. Update(); //engage the traces Trace. Event. Handler on. Trace. Event = new Trace. Event. Handler(On. Trace. Event); Trace. Stopped. Event. Handler on. Trace. Stopped = new Trace. Stopped. Event. Handler(On. Trace. Stopped); trace. On. Event += new Trace. Event. Handler(On. Trace. Event); trace. Stopped += new Trace. Stopped. Event. Handler(On. Trace. Stopped); trace. Start(); C#

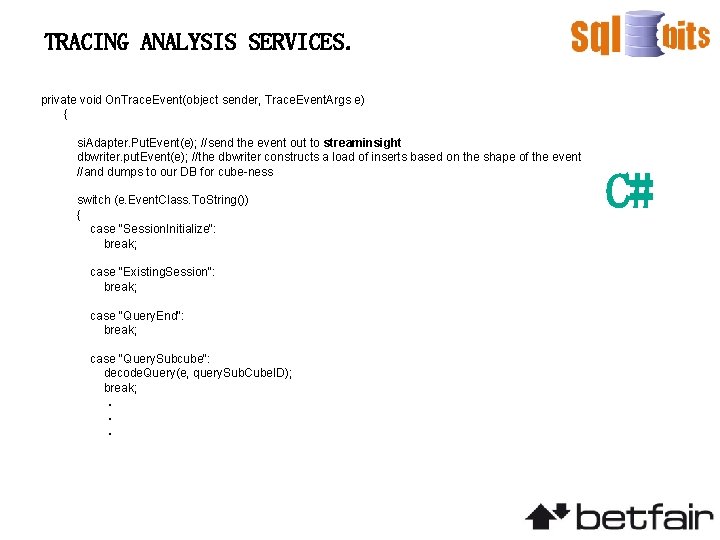

TRACING ANALYSIS SERVICES. private void On. Trace. Event(object sender, Trace. Event. Args e) { si. Adapter. Put. Event(e); //send the event out to streaminsight dbwriter. put. Event(e); //the dbwriter constructs a load of inserts based on the shape of the event //and dumps to our DB for cube-ness switch (e. Event. Class. To. String()) { case "Session. Initialize": break; case "Existing. Session": break; case "Query. End": break; case "Query. Subcube": decode. Query(e, query. Sub. Cube. ID); break; . . . C#

NOW WHERE?

STREAMINSIGHT. //filters out the events we want Cep. Stream<Query. Summ> query. Summ = from e in producer. Alter. Event. Duration(e => Time. Span. From. Minutes(1)) where e. event. Class == "Query. End" select new Query. Summ { user. Name = e. user. Name, all. Time = e. duration, cpu. Time = e. cpu. Time, start. Time = e. start. Time, end. Time = e. end. Time }; //detects slow queries so we can go and moan at the user Cep. Stream<Slow. Alert> slow. Producer = from e in query. Summ where e. all. Time. Milliseconds > 1000 select new Slow. Alert { user. Name = e. user. Name, all. Time = e. all. Time, cpu. Time = e. cpu. Time, start. Time = e. start. Time, end. Time = e. end. Time }; LINQ

STREAMINSIGHT. //filters out the events we want Cep. Stream<Query. Summ> query. Summ = from e in producer. Alter. Event. Duration(e => Time. Span. From. Minutes(1)) where e. event. Class == "Query. End" select new Query. Summ { user. Name = e. user. Name, all. Time = e. duration, cpu. Time = e. cpu. Time, start. Time = e. start. Time, end. Time = e. end. Time }; //detects slow queries so we can go and moan at the user Cep. Stream<Slow. Alert> slow. Producer = from e in query. Summ where e. all. Time. Milliseconds > 1000 select new Slow. Alert { user. Name = e. user. Name, all. Time = e. all. Time, cpu. Time = e. cpu. Time, start. Time = e. start. Time, end. Time = e. end. Time }; LINQ

AND THEN? Output adaptors are nice • Nagios • Splunk • Homebrew • MORE CUBES : )

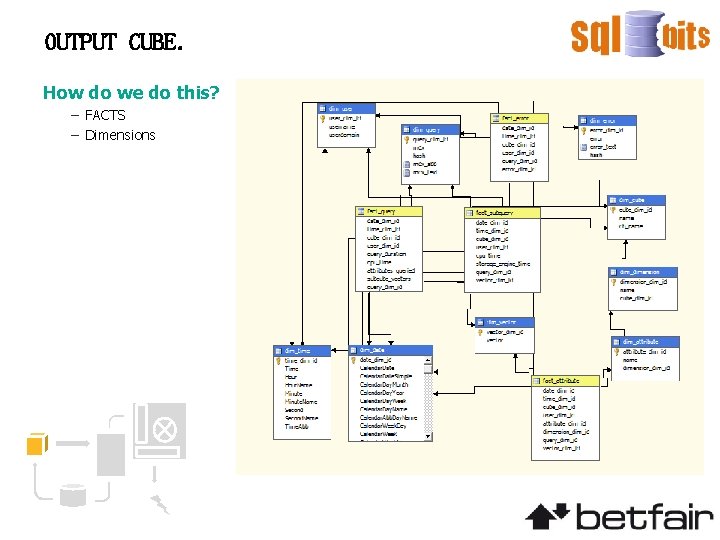

OUTPUT CUBE. How do we do this? – FACTS – Dimensions

DEMO.

NEXT STEPS: SECURITY. Security Monitoring / Auditing – Alerting on suspicious querying activity / disallowed querying 4 Alerting 4 Reporting 4 Analysis – Provide an audit trail of querying on sensitive attributes – Regulatory Reporting – Dynamic Security

NEXT STEPS: PERFORMANCE. Performance Recommendations – Provide data to enable assessment of ‘hot’ areas within the cubes 4 Alerting 4 Reporting 4 Analysis – Feed into third party monitoring tools – Identify heavy users – Identify poorly performing queries for tuning – Automatic aggregation generation

EXTENDING THE FRAMEWORK.

QUESTIONS.

- Slides: 22