
- Slides: 36

Evaluation

Practical Evaluation Michael Quinn Patton

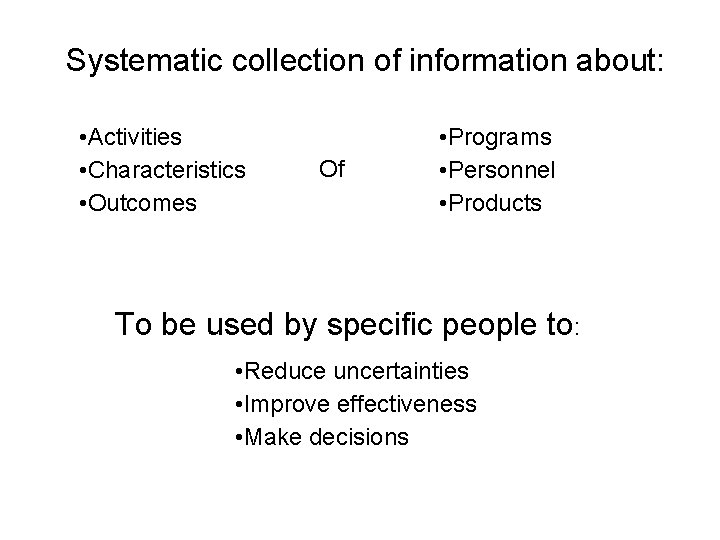

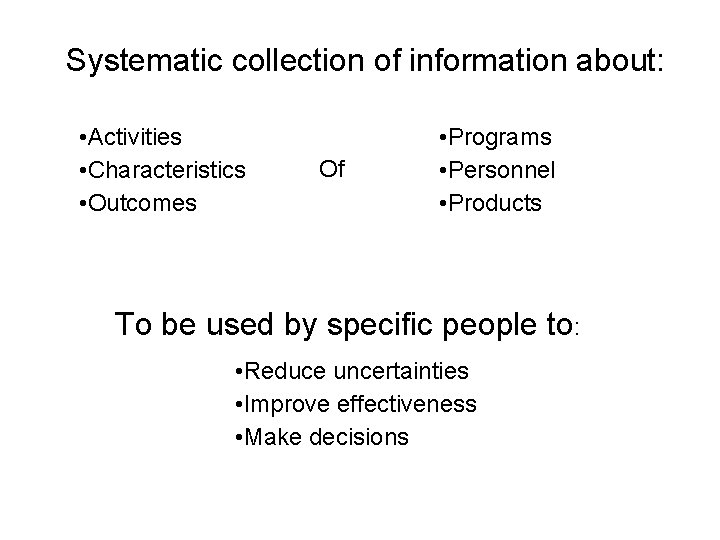

Systematic collection of information about:
- Activities
- Characteristics
- Outcomes

Of:
- Programs
- Personnel
- Products

To be used by specific people to:
- Reduce uncertainties
- Improve effectiveness
- Make decisions

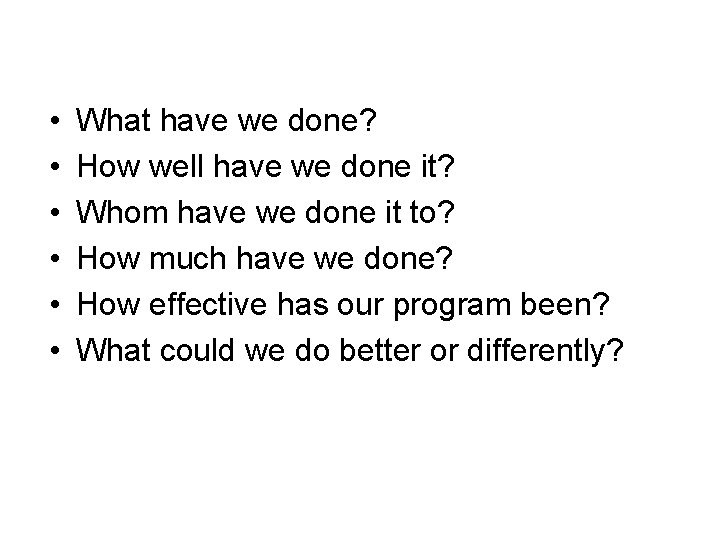

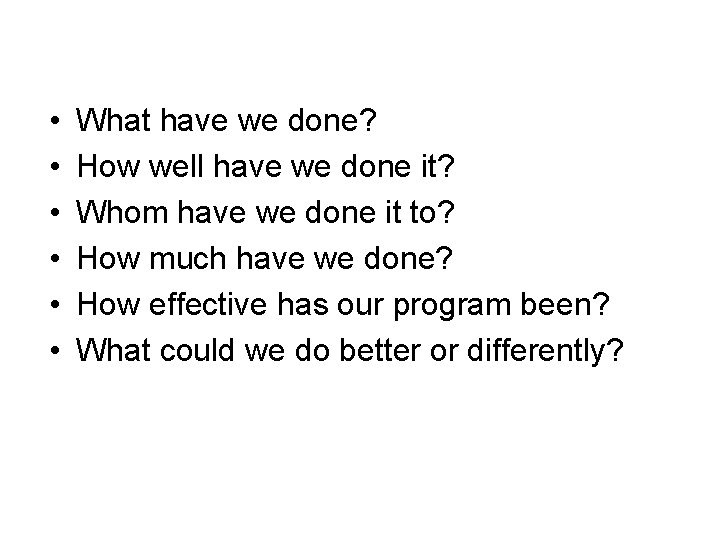

- What have we done?
- How well have we done it?
- Whom have we done it to?
- How much have we done?
- How effective has our program been?
- What could we do better or differently?

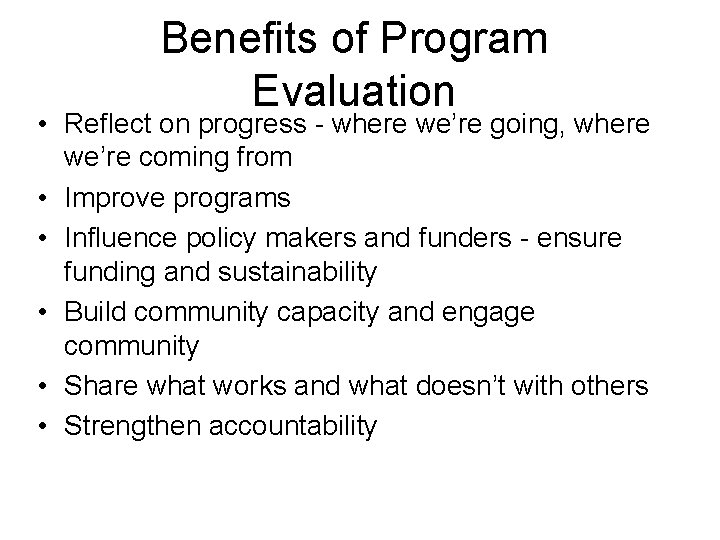

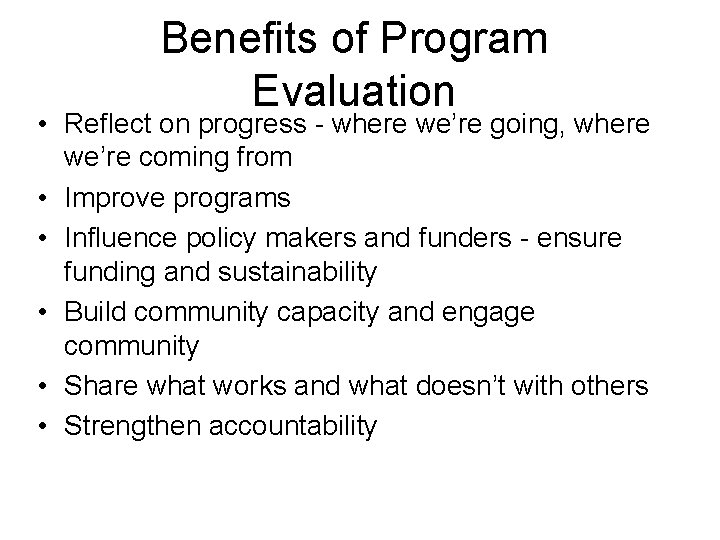

Benefits of Program Evaluation
- Reflect on progress: where we’re going, where we’re coming from
- Improve programs
- Influence policy makers and funders: ensure funding and sustainability
- Build community capacity and engage community
- Share what works and what doesn’t with others
- Strengthen accountability

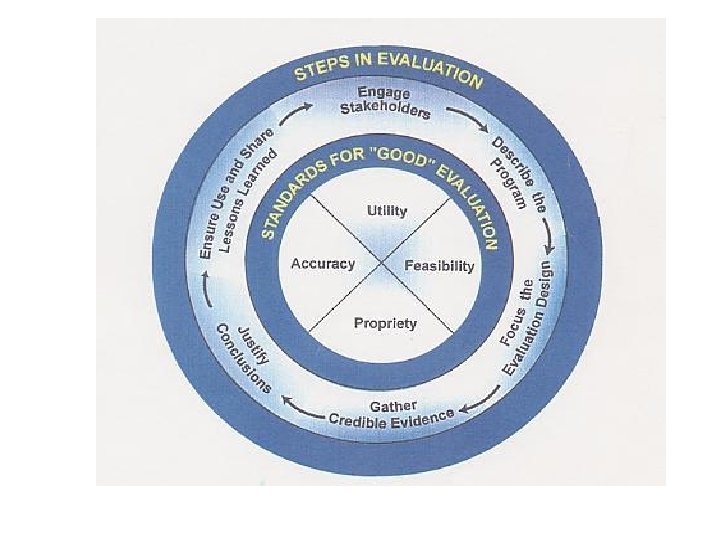

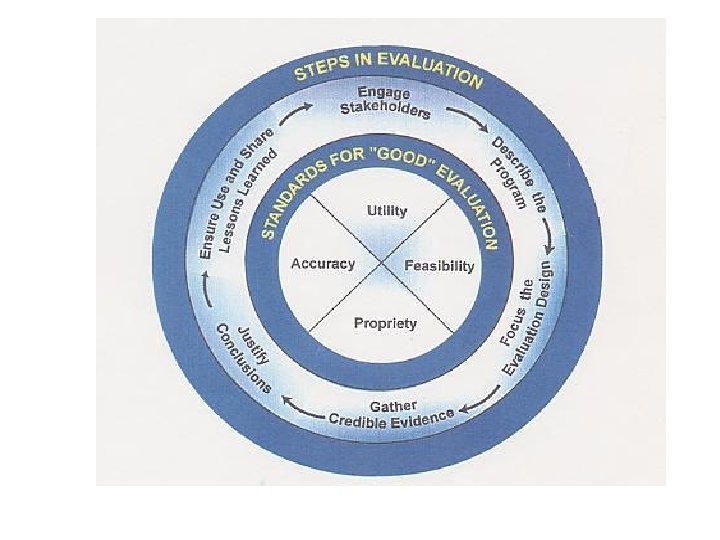

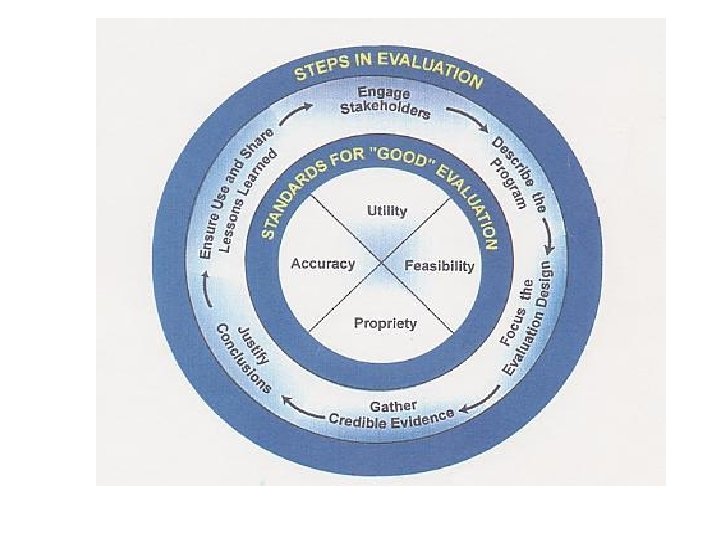

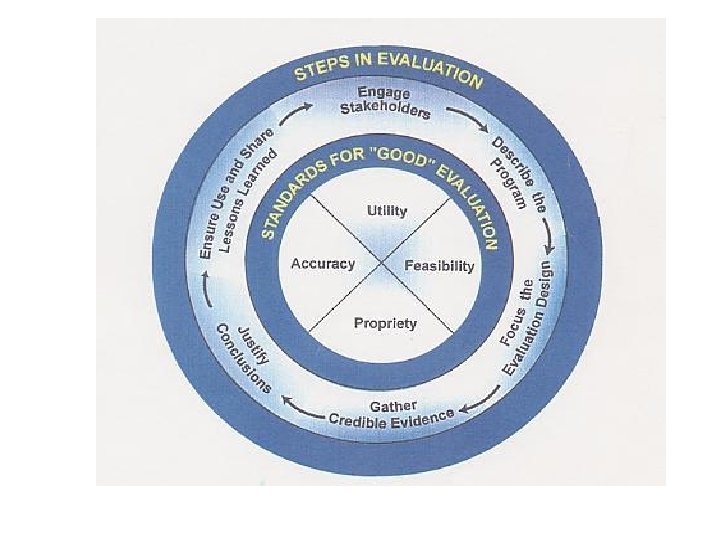

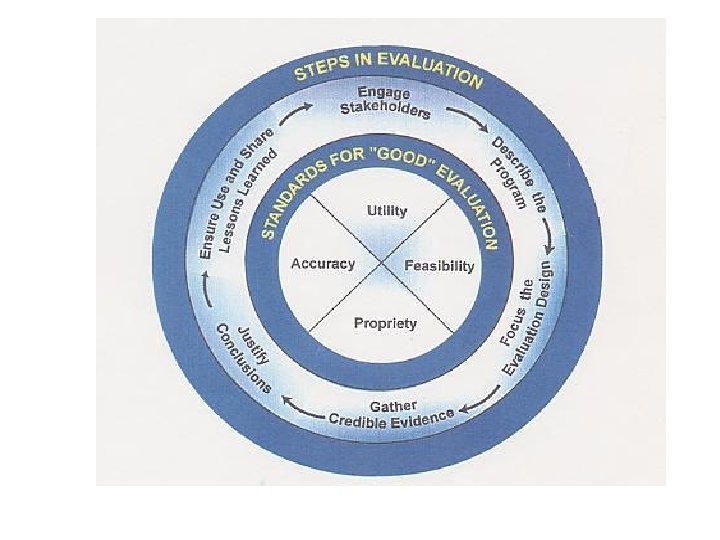

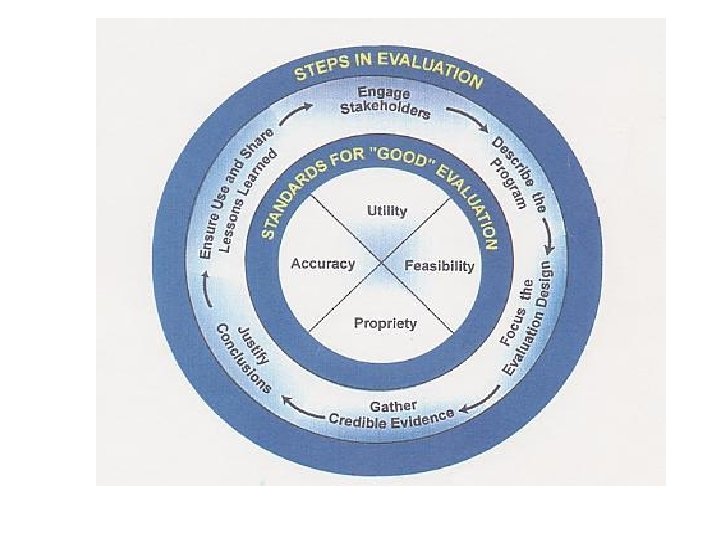

Four Standards:
- Useful
- Feasible
- Proper
- Accurate

Joint Committee on Standards for Educational Evaluation, 1994

Useful
- Will results be used to improve practice or allocate resources better?
- Will the evaluation answer stakeholders’ questions?

Feasible
- Does the political environment support this evaluation?
- Do you have personnel, time, and monetary resources to do it in house?
- Do you have resources to contract with outside consultants?
- If you can’t evaluate all parts of the program, what parts can you evaluate?

Proper
- Is your approach fair and ethical?
- Can you keep individual responses confidential?

Accurate
- Are you using appropriate data collection methods?
- If you are using more than one interviewer, have they all been trained?
- Have survey questions been tested for reliability and validity?
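One common way to check the internal-consistency reliability of a set of survey questions is Cronbach's alpha. The slides do not name a specific reliability statistic, so the choice of alpha here is an assumption, and the function name and sample responses below are purely illustrative. A minimal sketch:

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents' item scores.

    `responses` is a list of rows, one row per respondent, each row
    holding that respondent's score on every item (hypothetical data).
    """
    n_items = len(responses[0])
    if n_items < 2 or len(responses) < 2:
        raise ValueError("need at least 2 items and 2 respondents")

    # Sample variance of each item (column) across respondents.
    item_variances = [
        variance([row[i] for row in responses]) for i in range(n_items)
    ]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")

    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Illustrative responses only: 4 respondents, 3 items on a 1-5 scale.
sample = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 4, 5],
    [3, 3, 3],
]
alpha = cronbach_alpha(sample)
```

Values closer to 1 suggest the items move together. Alpha addresses only one aspect of reliability and says nothing about validity, which has to be examined separately.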

Step 1: Engage Stakeholders

Those involved in program operations
- administrators
- managers
- staff
- contractors
- sponsors
- collaborators
- coalition partners
- funding officials

Those served or affected by the program
- clients
- family members
- neighborhood organizations
- academic institutions
- elected officials
- advocacy groups
- professional organizations
- skeptics
- opponents

Primary intended users of the evaluation
- Those in a position to do or decide something regarding the program.
- In practice, usually a subset of all stakeholders already listed.

Step 2: Describe the Program

- Mission
- Need
- Logic model components
  - inputs
  - outcomes
- Objectives
  - outcome
  - process
- Context
  - setting
  - history
  - environmental influences

Step 3: Focus the Design

Goals of Focusing
- The evaluation assesses the issues of greatest concern to stakeholders, and at the same time
- The evaluation uses time and resources as efficiently as possible

Questions to be answered to focus the evaluation:
- What questions will be answered? (i.e., what is the real purpose? What outcomes will be addressed?)
- What process will be followed?
- What methods will be used to collect, analyze, and interpret the data?
- Who will perform the activities?
- How will the results be disseminated?

Step 4: Gather Credible Evidence

Data must be credible to the evaluation audience
- Data gathering methods are reliable and valid
- Data analysis is done by credible personnel
- “Triangulation”: applying different kinds of data to answer the question

Indicators
- Translate general program concepts into specific measures
- Samples of indicators
  - participation rates
  - client satisfaction
  - changes in behavior or community norms
  - health status
  - quality of life
  - expenditures
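To make "translate general program concepts into specific measures" concrete, the sketch below turns two of the sample indicators (participation rate and client satisfaction) into simple proportions. The class, field names, and counts are hypothetical and chosen only for illustration. A real evaluation would define numerators and denominators with its stakeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProgramCounts:
    """Raw counts from program records (all fields are hypothetical)."""
    eligible: int             # people eligible for the program
    enrolled: int             # people who actually took part
    survey_responses: int     # completed satisfaction surveys
    satisfied_responses: int  # surveys reporting satisfaction


def rate(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None if the denominator is 0."""
    if denominator == 0:
        return None
    return numerator / denominator


def summarize(counts: ProgramCounts) -> dict:
    """Translate raw counts into two example indicators."""
    return {
        "participation_rate": rate(counts.enrolled, counts.eligible),
        "client_satisfaction": rate(
            counts.satisfied_responses, counts.survey_responses
        ),
    }


# Illustrative numbers only, not data from any real program.
indicators = summarize(ProgramCounts(
    eligible=200, enrolled=50, survey_responses=40, satisfied_responses=30
))
```

Returning `None` for an empty denominator keeps "no data" distinct from a rate of zero, which matters when the indicators are reported to stakeholders.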

Data Sources
- Routine statistical reports
  - census
  - vital stats
  - NHANES
- Program reports
  - log sheets
  - service utilization
  - personnel time sheets
- Special surveys

Sources of Data
- People
  - participants
  - staff
  - key informants
  - representatives of advocacy groups
- Documents
  - meeting minutes
  - media reports
  - surveillance summaries
- Direct observation

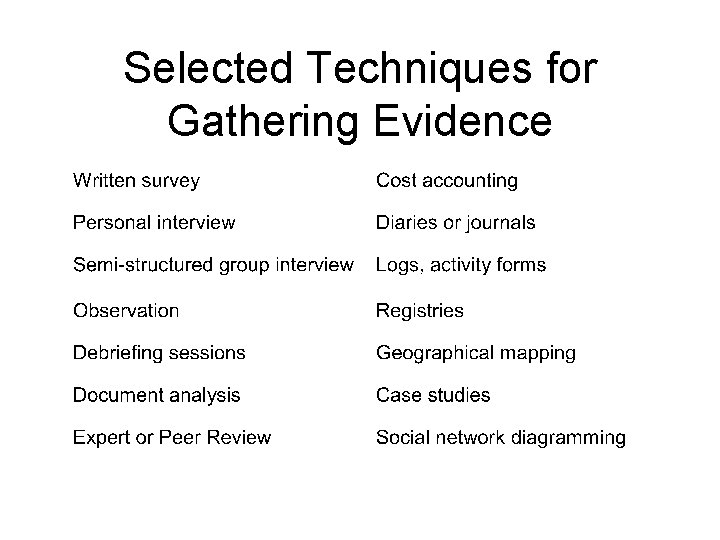

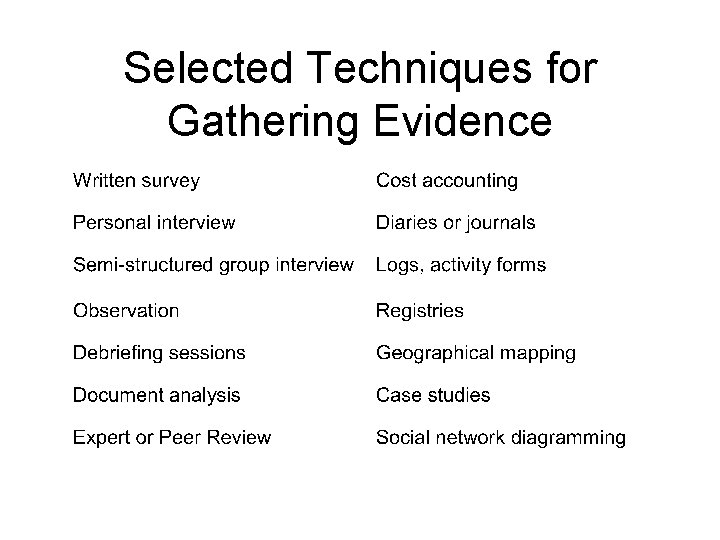

Selected Techniques for Gathering Evidence

Step 5: Justify Conclusions

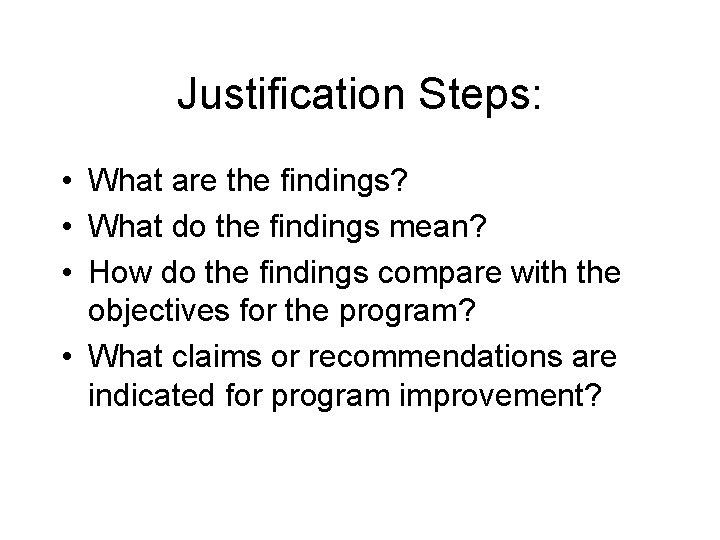

Justification Steps:
- What are the findings?
- What do the findings mean?
- How do the findings compare with the objectives for the program?
- What claims or recommendations are indicated for program improvement?

Step 6: Ensure Use and Share Lessons Learned

“Evaluations that are not used or inadequately disseminated are simply not worth doing.”

“The likelihood that the evaluation findings will be used increases through deliberate planning, preparation, and follow-up.”

Practical Evaluation of Public Health Programs, Public Health Training Programs

Activities to Promote Use and Dissemination:
- Designing the evaluation from the start to achieve intended uses
- Preparing stakeholders for eventual use by discussing how different findings will affect program planning
- Scheduling follow-up meetings with primary intended users
- Disseminating results using targeted communication strategies

Group Work
- Describe the ideal stakeholder group for your project evaluation.
- What questions will be answered? (Include the questions inherent in your objectives.)
- What data will be collected, analyzed, and interpreted?
  - How will this get done, and by whom?
- How will you disseminate your findings?