Evaluation of InfiniBand for CBM experiment

Sergey Linev, GSI, Darmstadt, Germany

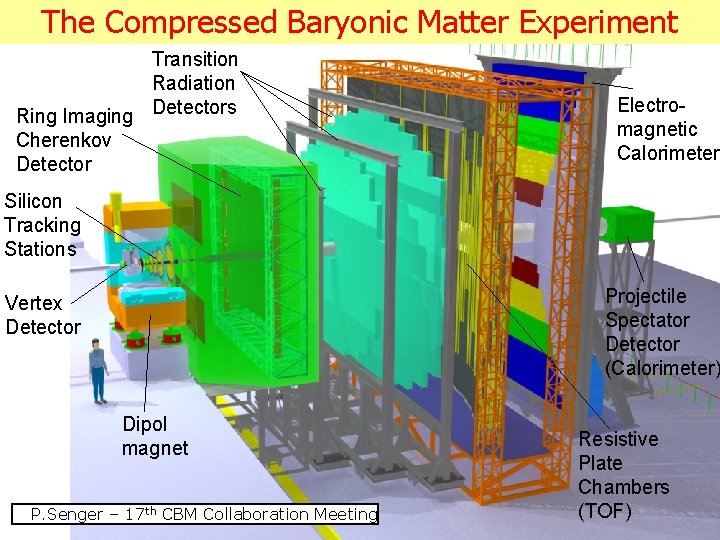

The Compressed Baryonic Matter Experiment

Detector overview (figure credit: P. Senger, 17th CBM Collaboration Meeting):
- Dipole magnet
- Vertex Detector
- Silicon Tracking Stations
- Ring Imaging Cherenkov Detector
- Transition Radiation Detectors
- Resistive Plate Chambers (TOF)
- Electromagnetic Calorimeter
- Projectile Spectator Detector (Calorimeter)

15.01.2013, S. Linev, Evaluation of InfiniBand for CBM experiment

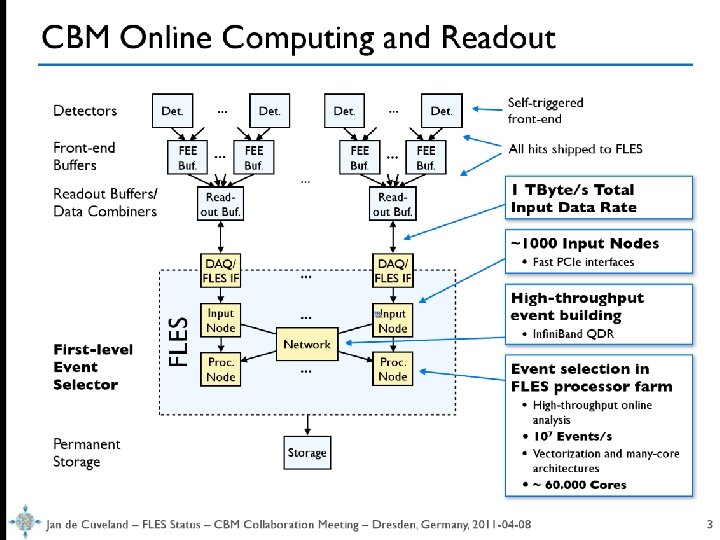

DABC

http://dabc.gsi.de

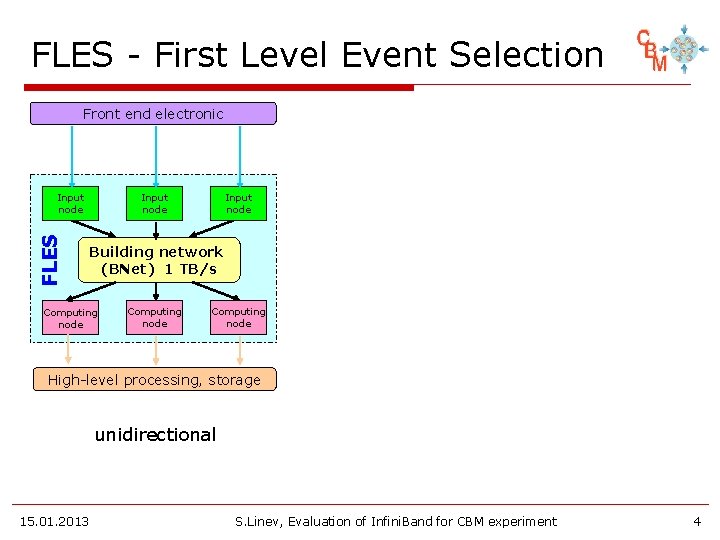

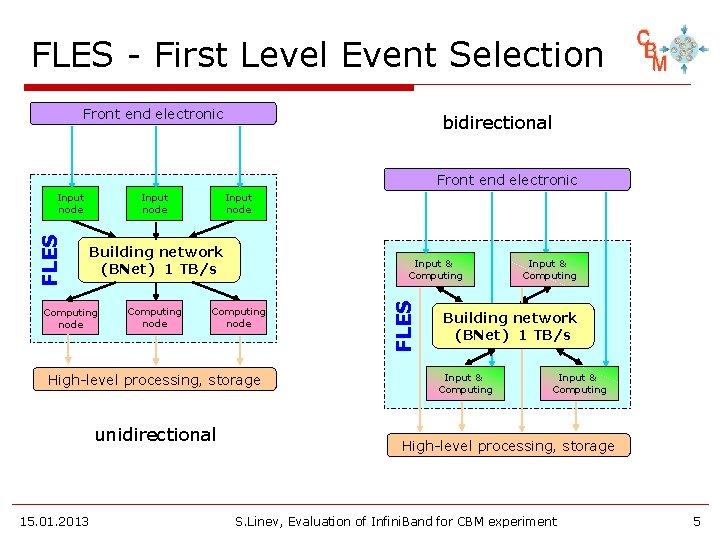

FLES - First Level Event Selection

Diagram (unidirectional): front-end electronics -> FLES input nodes -> building network (BNet), 1 TB/s -> computing nodes -> high-level processing, storage

FLES - First Level Event Selection

Diagram, two variants side by side:
- unidirectional: front-end electronics -> input nodes -> building network (BNet), 1 TB/s -> computing nodes -> high-level processing, storage
- bidirectional: front-end electronics -> combined input & computing nodes, interconnected by the building network (BNet), 1 TB/s -> high-level processing, storage

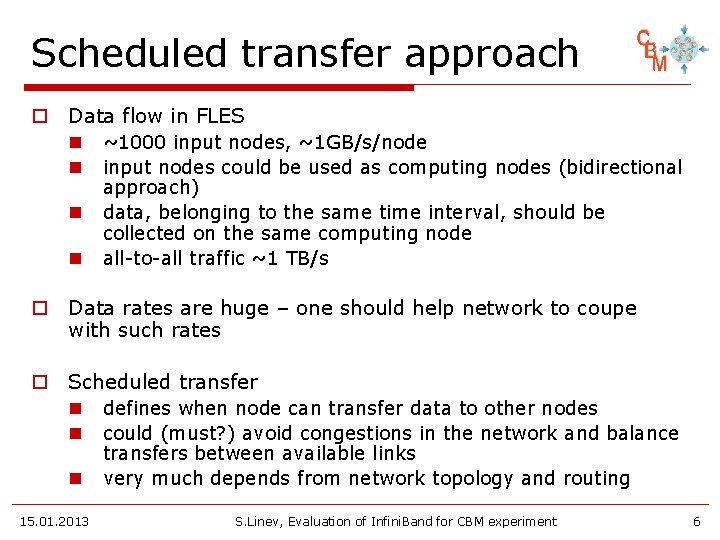

Scheduled transfer approach

- Data flow in FLES
  - ~1000 input nodes, ~1 GB/s/node
  - input nodes could also be used as computing nodes (bidirectional approach)
  - data belonging to the same time interval should be collected on the same computing node
  - all-to-all traffic of ~1 TB/s
- Data rates are huge: one should help the network to cope with such rates
- Scheduled transfer
  - defines when a node can transfer data to other nodes
  - could (must?) avoid congestion in the network and balance transfers between available links
  - depends very much on network topology and routing
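The scheduling idea can be illustrated with a minimal round-robin sketch in Python. This is not the schedule used in the tests; the function name `round_robin_schedule` and the shift-by-slot rule are assumptions chosen for illustration. In each time slot every node sends to exactly one peer and receives from exactly one peer, which avoids congestion on the receiving side but ignores topology and routing.

```python
def round_robin_schedule(num_nodes):
    """Return a list of time slots; each slot is a list of (sender, receiver) pairs.

    In the slot with shift s (1 <= s < num_nodes) node i sends to node
    (i + s) % num_nodes, so every node sends once and receives once per slot.
    """
    schedule = []
    for shift in range(1, num_nodes):
        slot = [(src, (src + shift) % num_nodes) for src in range(num_nodes)]
        schedule.append(slot)
    return schedule


if __name__ == "__main__":
    # Print the three slots of a 4-node all-to-all cycle.
    for slot_index, slot in enumerate(round_robin_schedule(4)):
        print(slot_index, slot)
```

A full all-to-all cycle needs `num_nodes - 1` slots, so a fabric with about 1000 nodes would cycle through 999 slots.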

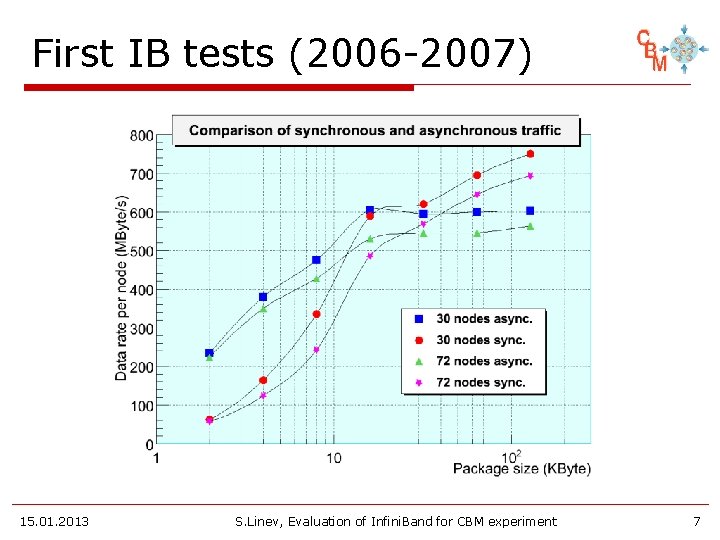

First IB tests (2006-2007)

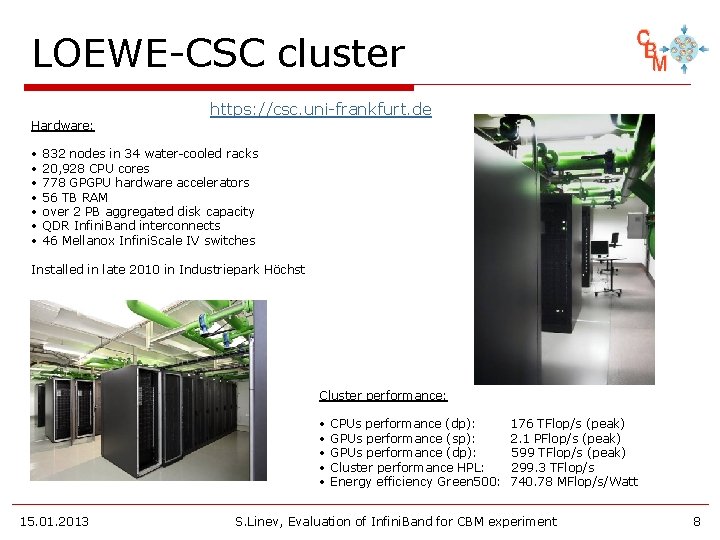

LOEWE-CSC cluster

https://csc.uni-frankfurt.de

Hardware:
- 832 nodes in 34 water-cooled racks
- 20,928 CPU cores
- 778 GPGPU hardware accelerators
- 56 TB RAM
- over 2 PB aggregated disk capacity
- QDR InfiniBand interconnects
- 46 Mellanox InfiniScale IV switches

Installed in late 2010 in Industriepark Höchst

Cluster performance:
- CPUs performance (dp): 176 TFlop/s (peak)
- GPUs performance (sp): 2.1 PFlop/s (peak)
- GPUs performance (dp): 599 TFlop/s (peak)
- Cluster performance HPL: 299.3 TFlop/s
- Energy efficiency Green500: 740.78 MFlop/s/Watt

First results on LOEWE

- Use OFED verbs for the test application
- Point-to-point:
  - one-to-one: 2.75×10^9 B/s
  - one-to-many: 2.88×10^9 B/s
  - many-to-one: 3.18×10^9 B/s
- All-to-all scheduled transfer:
  - avoids congestion on receiving nodes
  - about 2.1×10^9 B/s/node
  - scales well up to 20 nodes
  - BUT performance degrades as the number of nodes increases
- Same problem as before
  - should one take network topology into account?
  - LOEWE-CSC cluster uses ½ fat tree topology

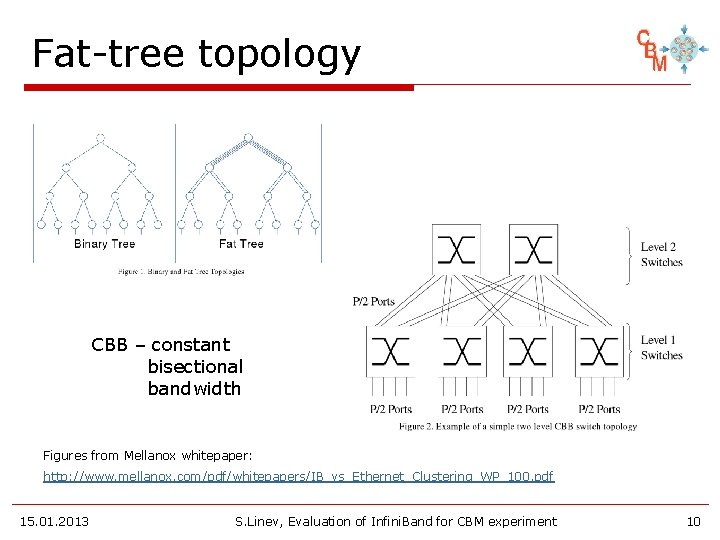

Fat-tree topology

CBB: constant bisectional bandwidth

Figures from Mellanox whitepaper: http://www.mellanox.com/pdf/whitepapers/IB_vs_Ethernet_Clustering_WP_100.pdf

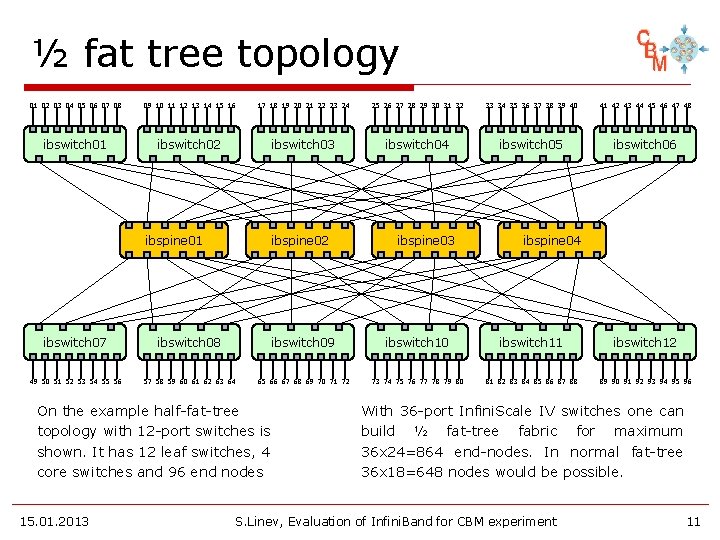

½ fat tree topology

Diagram: end nodes 01-96 attached to leaf switches ibswitch01-ibswitch12, which connect to core switches ibspine01-ibspine04.

The example shows a half-fat-tree topology built from 12-port switches. It has 12 leaf switches, 4 core switches and 96 end nodes.

With 36-port InfiniScale IV switches one can build a ½ fat-tree fabric for at most 36 x 24 = 864 end nodes. In a normal fat tree, 36 x 18 = 648 nodes would be possible.
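The node counts quoted for the two-level fabrics follow from simple port arithmetic, sketched below in Python. The function name and the model are assumptions for illustration: every leaf switch uses some ports for end nodes and the rest as uplinks, with one link to each core switch, and each core switch port serves one leaf.

```python
def two_level_capacity(ports_per_switch, down_ports_per_leaf):
    """Size of a two-level leaf/spine fabric built from identical switches.

    Assumed model: each leaf has `down_ports_per_leaf` ports for end nodes and
    uses its remaining ports as uplinks, one to each spine. A spine port serves
    one leaf, so there can be at most `ports_per_switch` leaves.
    """
    if not 0 < down_ports_per_leaf < ports_per_switch:
        raise ValueError("need at least one down port and one uplink per leaf")
    uplinks = ports_per_switch - down_ports_per_leaf
    return {
        "leaf_switches": ports_per_switch,
        "spine_switches": uplinks,
        "end_nodes": ports_per_switch * down_ports_per_leaf,
        # Ratio of node-facing ports to uplinks on a leaf: 1.0 for a full
        # fat tree, 2.0 for the 1/2 fat tree.
        "oversubscription": down_ports_per_leaf / uplinks,
    }


if __name__ == "__main__":
    print(two_level_capacity(12, 8))    # the 12-port example from the slide
    print(two_level_capacity(36, 24))   # 1/2 fat tree with 36-port switches
    print(two_level_capacity(36, 18))   # full fat tree with 36-port switches
```

With these assumptions the 12-port example gives 12 leaf switches, 4 core switches and 96 end nodes, matching the diagram.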

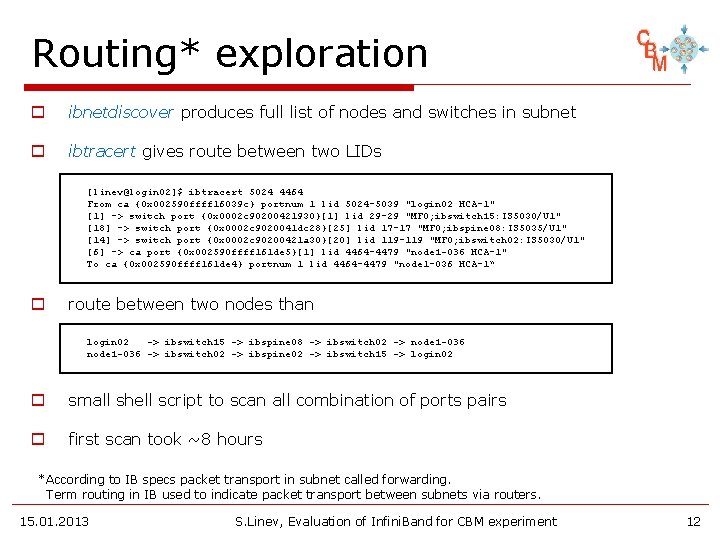

Routing* exploration

- ibnetdiscover produces the full list of nodes and switches in the subnet
- ibtracert gives the route between two LIDs

    [linev@login02]$ ibtracert 5024 4464
    From ca {0x002590ffff16039c} portnum 1 lid 5024-5039 "login02 HCA-1"
    [1] -> switch port {0x0002c90200421930}[1] lid 29-29 "MF0;ibswitch15:IS5030/U1"
    [18] -> switch port {0x0002c9020041dc28}[25] lid 17-17 "MF0;ibspine08:IS5035/U1"
    [14] -> switch port {0x0002c90200421a30}[20] lid 119-119 "MF0;ibswitch02:IS5030/U1"
    [6] -> ca port {0x002590ffff161de5}[1] lid 4464-4479 "node1-036 HCA-1"
    To ca {0x002590ffff161de4} portnum 1 lid 4464-4479 "node1-036 HCA-1"

- the route between the two nodes, there and back, is then login02 -> ibswitch15 -> ibspine08 -> ibswitch02 -> node1-036 -> ibswitch02 -> ibspine02 -> ibswitch15 -> login02
- a small shell script scans all combinations of port pairs
- the first scan took ~8 hours

*According to the IB specs, packet transport within a subnet is called forwarding. The term routing is used in IB for packet transport between subnets via routers.
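To build a routing table from such traces, each ibtracert output has to be parsed into a list of hops. The Python sketch below shows one way to do that; it is not the shell script mentioned on the slide. The sample text is reconstructed from the slide, the regular expression is an assumption based on that sample only, and `parse_hops` and `spines_on_route` are hypothetical helper names.

```python
import re

# Sample reconstructed from the slide; real ibtracert output may differ in detail.
SAMPLE = '''From ca {0x002590ffff16039c} portnum 1 lid 5024-5039 "login02 HCA-1"
[1] -> switch port {0x0002c90200421930}[1] lid 29-29 "MF0;ibswitch15:IS5030/U1"
[18] -> switch port {0x0002c9020041dc28}[25] lid 17-17 "MF0;ibspine08:IS5035/U1"
[14] -> switch port {0x0002c90200421a30}[20] lid 119-119 "MF0;ibswitch02:IS5030/U1"
[6] -> ca port {0x002590ffff161de5}[1] lid 4464-4479 "node1-036 HCA-1"
To ca {0x002590ffff161de4} portnum 1 lid 4464-4479 "node1-036 HCA-1"
'''

# Assumed reading of a hop line: [exit port of previous device] -> device type,
# GUID, [entry port on this device], LID range, node description.
HOP_RE = re.compile(
    r'^\[(\d+)\] -> (switch|ca) port \{(0x[0-9a-fA-F]+)\}\[(\d+)\] '
    r'lid (\d+)-(\d+) "([^"]*)"$'
)


def parse_hops(text):
    """Return one dict per hop line; 'From'/'To' lines are ignored."""
    hops = []
    for line in text.splitlines():
        match = HOP_RE.match(line.strip())
        if match:
            out_port, kind, guid, in_port, lid_lo, _lid_hi, name = match.groups()
            hops.append({
                "out_port": int(out_port),
                "kind": kind,
                "guid": guid,
                "in_port": int(in_port),
                "lid": int(lid_lo),
                "name": name,
            })
    return hops


def spines_on_route(hops):
    """Names of the core switches on a route (assumes 'ibspine' in the name)."""
    return [hop["name"] for hop in hops if "ibspine" in hop["name"]]


if __name__ == "__main__":
    print(spines_on_route(parse_hops(SAMPLE)))
```

Collecting the spine name for every source/destination pair is enough to see how routes are distributed over the core switches.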

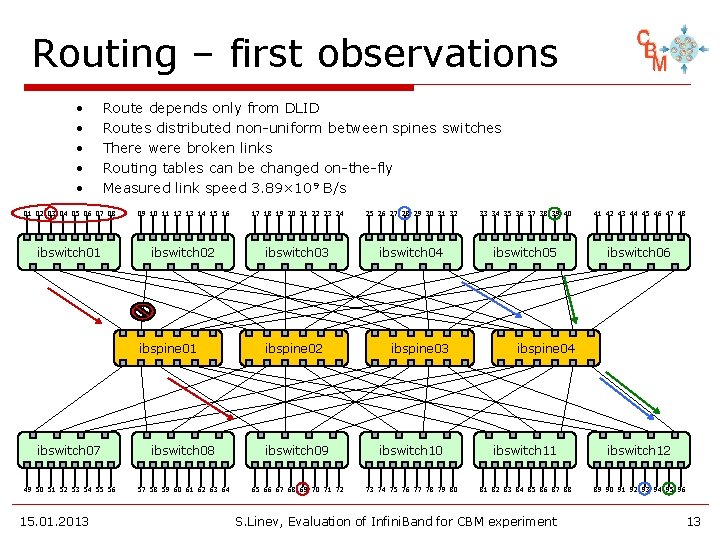

Routing: first observations

- Route depends only on the DLID
- Routes are distributed non-uniformly between spine switches
- There were broken links
- Routing tables can be changed on-the-fly
- Measured link speed: 3.89×10^9 B/s

Diagram: the ½ fat tree example with end nodes 01-96, leaf switches ibswitch01-ibswitch12 and core switches ibspine01-ibspine04.

Subnet manager

- Runs on one of the core switches
  - can be configured on any host PC
- Tasks (not all) of the subnet manager are:
  - discover nodes in the net
  - assign LIDs (Local IDentifiers) to the ports
  - set routing tables for the switches
- According to the IB specs, the route between two ports is defined by source (SLID) and destination (DLID) identifiers
- Open questions: is there an easy way to get
  - fixed LID assignment?
  - fixed (regular) routing tables?

Routing: properties

- Using the obtained routing tables, one can estimate the number of congestions for different kinds of traffic
- In a ½ fat tree, congestion means that more than 2 transfers go via the same link
- For simple round-robin transfer
  - 1.8 transfers/link on average
  - but up to 6 transfers per link at maximum
  - at all times more than 10% of transfers are affected by congestion
- One could try to optimize the schedule
  - take routing tables into account to avoid congestion
- Main problem
  - there are many physical paths between two nodes
  - but only a single path is available for a node1 -> node2 transfer
  - no real optimization is possible
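The congestion estimate described here amounts to counting how many simultaneous transfers share each link. The Python sketch below illustrates that counting under stated assumptions: each route is given as the list of switch names it passes, links are directed, and a link counts as congested when it carries more than `limit` transfers (2 for the ½ fat tree). The function names and the toy routes are hypothetical.

```python
from collections import Counter


def link_loads(routes):
    """Count transfers per directed switch-to-switch link.

    `routes` is a list of paths; each path is the sequence of switch names
    a transfer passes, for example ["ibswitch01", "ibspine01", "ibswitch02"].
    """
    loads = Counter()
    for path in routes:
        for link in zip(path, path[1:]):
            loads[link] += 1
    return loads


def congestion_stats(routes, limit=2):
    """Mean and maximum link load, and the fraction of transfers that use
    at least one link carrying more than `limit` transfers."""
    loads = link_loads(routes)
    congested = {link for link, count in loads.items() if count > limit}
    affected = sum(
        1 for path in routes
        if any(link in congested for link in zip(path, path[1:]))
    )
    return {
        "mean_load": sum(loads.values()) / len(loads),
        "max_load": max(loads.values()),
        "congested_fraction": affected / len(routes),
    }


if __name__ == "__main__":
    # Toy time slot: three transfers share the uplink ibswitch01 -> ibspine01.
    slot = [
        ["ibswitch01", "ibspine01", "ibswitch02"],
        ["ibswitch01", "ibspine01", "ibswitch03"],
        ["ibswitch01", "ibspine01", "ibswitch04"],
        ["ibswitch02", "ibspine02", "ibswitch01"],
    ]
    print(congestion_stats(slot))
```

Running this for every time slot of a schedule, with routes taken from the scanned routing tables, would yield figures of the kind quoted on the slide.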

Multiple LIDs

- Problems with a single LID
  - there is always only one route between two nodes
  - no possibility to optimize transfers by routing between nodes via different spines ourselves
- Solution: LMC (LID Mask Control)
  - when LMC=4, the lower 4 bits of the host LID are reserved for routing
  - the Subnet Manager can assign up to 16 routes to that node
  - does not always work smoothly
- Problem: scanning all these routes
  - 8 h x 16 = ~5 days
- Solution
  - modified version of the ibtracert program with caching of intermediate tables and excluding scans of similar routes
  - reduces scanning time to about 4 minutes
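The LMC arithmetic can be checked with a few lines of Python. The helper name `lids_for_port` is hypothetical; the only facts used are that LMC reserves the lowest LMC bits of the LID, so a port owns 2^LMC consecutive LIDs starting at a base LID whose reserved bits are zero.

```python
def lids_for_port(base_lid, lmc):
    """Return the range of LIDs owned by a port with the given base LID and LMC."""
    count = 1 << lmc                      # 2**lmc addresses per port
    if base_lid % count != 0:
        raise ValueError("base LID must have its lowest LMC bits set to zero")
    return range(base_lid, base_lid + count)


if __name__ == "__main__":
    lids = lids_for_port(5024, 4)
    # Matches the "lid 5024-5039" range that ibtracert reports for login02.
    print(lids[0], lids[-1], len(lids))

    # Naive scan time: the 8-hour single-LID scan repeated for 16 LIDs.
    print(8 * 16 / 24, "days")
```

The second number printed, about 5.3 days, is the "~5 days" estimate from the slide.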

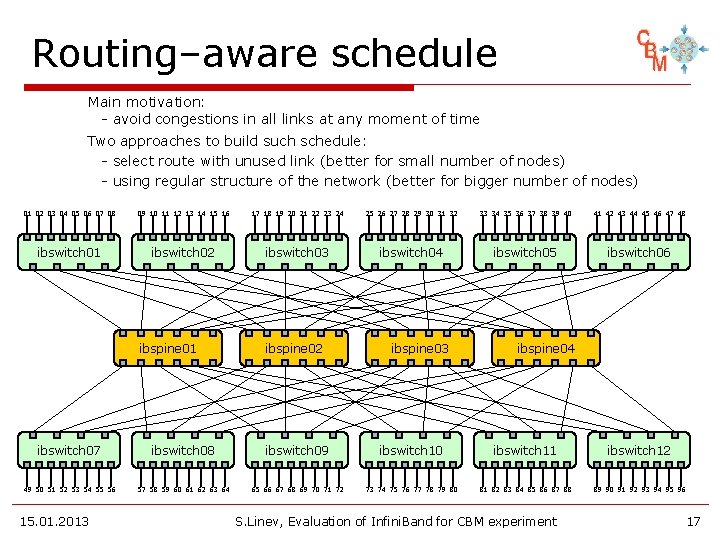

Routing-aware schedule

Main motivation:
- avoid congestion in all links at any moment of time

Two approaches to build such a schedule:
- select a route with unused links (better for a small number of nodes)
- use the regular structure of the network (better for a larger number of nodes)

Diagram: the ½ fat tree example with end nodes 01-96, leaf switches ibswitch01-ibswitch12 and core switches ibspine01-ibspine04.
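The first approach, selecting a route with unused links, can be sketched as a greedy choice among the alternative routes that multiple LIDs make available. The Python below is an illustration under assumptions, not the scheduler used in ib-test: `pick_routes`, the per-link `limit` and the toy candidate routes are all hypothetical.

```python
from collections import Counter


def pick_routes(transfers, candidate_routes, limit=1):
    """Greedy route choice for the transfers of one time slot.

    `candidate_routes[t]` lists the alternative paths (sequences of switch
    names) for transfer `t`. The first path whose links all carry fewer than
    `limit` transfers is taken; a transfer with no free path maps to None and
    would have to move to another time slot.
    """
    used = Counter()
    chosen = {}
    for transfer in transfers:
        for route in candidate_routes[transfer]:
            links = list(zip(route, route[1:]))
            if all(used[link] < limit for link in links):
                for link in links:
                    used[link] += 1
                chosen[transfer] = route
                break
        else:
            chosen[transfer] = None
    return chosen


if __name__ == "__main__":
    # Toy example: three transfers leave leaf swA, which has two uplinks.
    candidates = {
        ("n1", "n2"): [["swA", "sp1", "swB"], ["swA", "sp2", "swB"]],
        ("n3", "n4"): [["swA", "sp1", "swC"], ["swA", "sp2", "swC"]],
        ("n5", "n6"): [["swA", "sp1", "swD"], ["swA", "sp2", "swD"]],
    }
    for transfer, route in pick_routes(list(candidates), candidates).items():
        print(transfer, route)
```

A greedy search like this has to inspect every transfer and every candidate route, which is one plausible reason why the slide recommends it only for a small number of nodes.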

ib-test application

- implemented with dabc2 (beta quality)
- dabc2
  - multithreaded application environment
  - classes for working with OFED verbs
  - UDP-based command channel between nodes
  - configuration and startup of multi-node applications
  - used as DAQ framework in many CBM beam tests
- ib-test
  - master-slave architecture
  - all actions are driven by the master node
  - all-to-all connectivity
  - time synchronization with the master
  - scheduled transfers with a specified rate
  - transfer statistics

https://subversion.gsi.de/dabc/trunk/applications/ib-test

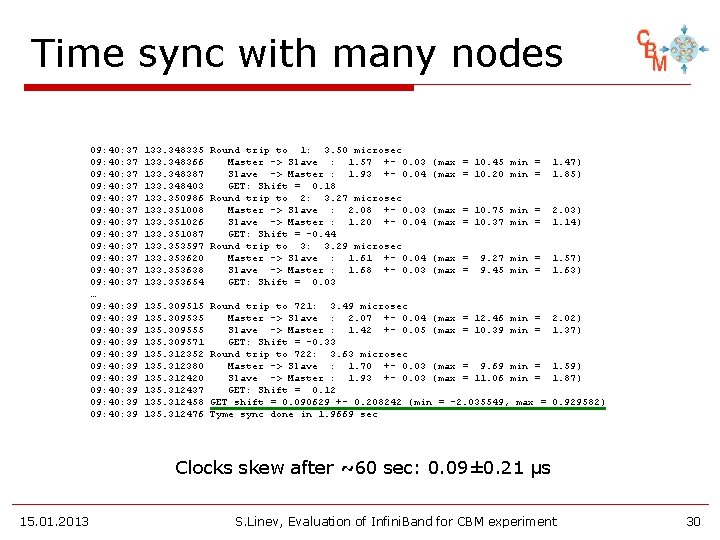

Time synchronization

- Scheduled transfer means submitting send/receive operations at predefined times
- With 2×10^9 B/s and 1 MB buffers one requires a time precision of several µs
- One can use a small IB round-trip packet, which should have very small latency
- On the LOEWE-CSC cluster such a round-trip packet takes about 3.5 µs. Measuring time on both nodes, one can calculate the time shift and compensate for it
- Excluding 30% of max. variation due to system activity, one can achieve a precision below 1 µs
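The shift calculation from a round-trip packet can be sketched with the usual two-way time transfer formula. This is a generic illustration in Python, not the ib-test code; the function names are hypothetical and the estimate assumes equal latency in both directions. The second helper shows why a precision of several µs is needed: a 1 MB buffer (taken here as 10^6 bytes) at 2×10^9 B/s occupies the link for about 500 µs.

```python
def clock_offset(t1_master, t2_slave, t3_slave, t4_master):
    """Estimate the slave clock offset relative to the master from one round trip.

    t1: master sends (master clock)    t2: slave receives (slave clock)
    t3: slave replies (slave clock)    t4: master receives (master clock)
    Assumes the same latency in both directions; all times in microseconds.
    """
    offset = ((t2_slave - t1_master) + (t3_slave - t4_master)) / 2.0
    round_trip = (t4_master - t1_master) - (t3_slave - t2_slave)
    return offset, round_trip


def buffer_time_us(buffer_bytes, rate_bytes_per_s):
    """Time in microseconds needed to send one buffer at the given rate."""
    return buffer_bytes / rate_bytes_per_s * 1e6


if __name__ == "__main__":
    # Made-up timestamps: slave clock runs 5 us ahead, 1.75 us latency each way.
    print(clock_offset(100.0, 106.75, 107.0, 103.75))
    print(buffer_time_us(1e6, 2e9))
```

If the two directions have different latencies, half of the difference appears as an error in the estimated offset, which is why the measurement is repeated and outliers are cut.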

all-to-all IB performance

- In May 2011, before a planned cluster shutdown, I got about 4 hours for IB performance tests
- 774 nodes were selected for the tests based on CPU load
- Different transfer rates were tested
  - 1.5×10^9 B/s/node: 0.8% packets skipped
  - 1.6×10^9 B/s/node: 4.4% packets skipped
  - 0.5×10^9 B/s/node: with skip disabled
- This means at maximum: 1.25×10^12 B/s
- Main problem here: skipped transfers and how one could avoid them

Skipped transfers

- Already in the first tests on 150-200 nodes I encountered a problem: some transfers were not completed in reasonable time (~100 ms)
- Simple guess: there was other traffic
  - the first tests were performed in parallel with other jobs
- Very probably, physical-layer errors and many retransmissions also cause this problem
- To cope with this situation, a simple skip was implemented: when several transfers to the same node are hanging, the following transfers are just skipped
- Would it be better to use unreliable transport here?
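The skip rule can be pictured as a per-destination counter of transfers that are still pending. The Python sketch below is an illustration only: the class name `SkipPolicy` and the threshold `max_pending` are hypothetical, since the slide says "several" without giving a number.

```python
from collections import Counter


class SkipPolicy:
    """Skip new transfers to a destination that already has too many pending ones."""

    def __init__(self, max_pending=3):
        # max_pending is an assumed threshold, not a value from the slides.
        self.max_pending = max_pending
        self.pending = Counter()
        self.skipped = 0

    def try_submit(self, dest):
        """Return True if the transfer may be submitted, False if it is skipped."""
        if self.pending[dest] >= self.max_pending:
            self.skipped += 1
            return False
        self.pending[dest] += 1
        return True

    def complete(self, dest):
        """Record that one pending transfer to `dest` has completed."""
        if self.pending[dest] > 0:
            self.pending[dest] -= 1


if __name__ == "__main__":
    policy = SkipPolicy(max_pending=2)
    for _ in range(4):
        print(policy.try_submit(dest=7))
    print("skipped:", policy.skipped)
```

Skipping keeps one slow destination from stalling the schedule for all other nodes, at the price of lost data for the skipped time slices.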

IB + GPU

- InfiniBand is just a transport
  - one needs to deliver data to a computing entity for data analysis and selection
- All LOEWE-CSC nodes are equipped with GPUs
  - use the GPU as data sink for transfers
  - use the GPU also as source of data
- GPU -> host -> IB -> host -> GPU
- With a small setup of 4 nodes
  - 1.1 GB/s/node for the all-to-all traffic pattern

Outlook and plans

- MPI performance
- Use of unreliable connections (UC)
- RDMA to GPU memory
  - GPU -> IB -> GPU?
  - NVIDIA GPUDirect?
- Multicast performance/reliability
- Subnet manager control

Conclusion

- One must take the IB fabric topology into account
- Only with scheduling can one achieve 70-80% of the available bandwidth
- Nowadays IB fulfills the CBM requirements
- A lot of work needs to be done before the real system will run

Wishlist for Mellanox

- By-time execution
  - perform an operation not immediately but at a specified time
- Operation cancellation
  - how could one remove a submitted operation from the queues?
- No LMC for switch ports
  - waste of very limited address space
- RDMA-completion signaling for the slave side
- How is the 36-port switch built inside?

Thank you!

BACKUP SLIDES

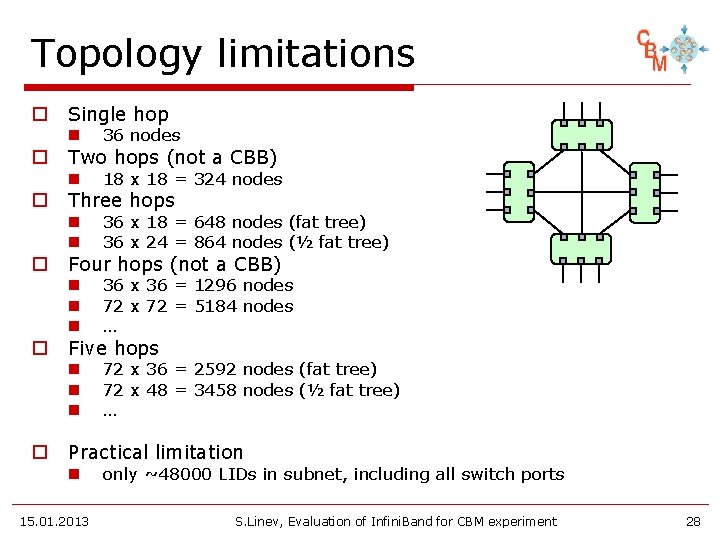

Topology limitations

- Single hop
  - 36 nodes
- Two hops (not a CBB)
  - 18 x 18 = 324 nodes
- Three hops
  - 36 x 18 = 648 nodes (fat tree)
  - 36 x 24 = 864 nodes (½ fat tree)
- Four hops (not a CBB)
  - 36 x 36 = 1296 nodes
  - 72 x 72 = 5184 nodes
  - …
- Five hops
  - 72 x 36 = 2592 nodes (fat tree)
  - 72 x 48 = 3456 nodes (½ fat tree)
  - …
- Practical limitation
  - only ~48000 LIDs in a subnet, including all switch ports

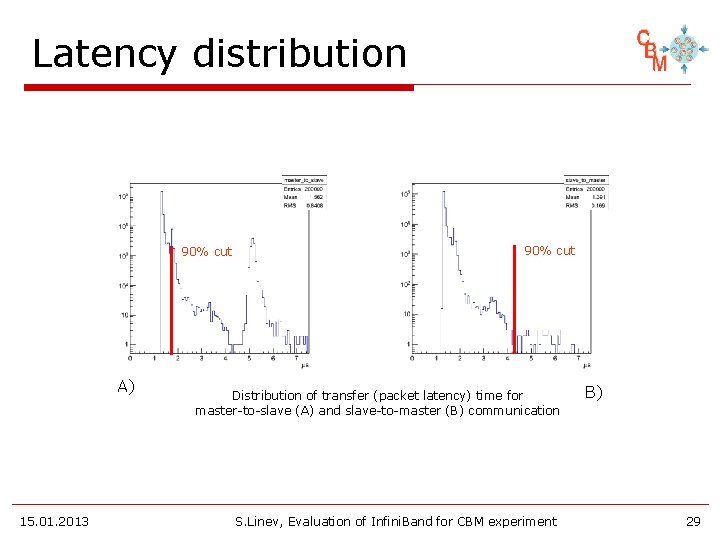

Latency distribution

Figure: distribution of transfer time (packet latency) for master-to-slave (A) and slave-to-master (B) communication; a 90% cut is marked in both panels.

Time sync with many nodes

Log excerpt (wall-clock time 09:40:37 to 09:40:39):

    133.348335 Round trip to 1: 3.50 microsec
    133.348366    Master -> Slave : 1.57 +- 0.03 (max = 10.45 min = 1.47)
    133.348387    Slave -> Master : 1.93 +- 0.04 (max = 10.20 min = 1.85)
    133.348403    GET: Shift = 0.18
    133.350986 Round trip to 2: 3.27 microsec
    133.351008    Master -> Slave : 2.08 +- 0.03 (max = 10.75 min = 2.03)
    133.351026    Slave -> Master : 1.20 +- 0.04 (max = 10.37 min = 1.14)
    133.351087    GET: Shift = -0.44
    133.353597 Round trip to 3: 3.29 microsec
    133.353620    Master -> Slave : 1.61 +- 0.04 (max = 9.27 min = 1.57)
    133.353638    Slave -> Master : 1.68 +- 0.03 (max = 9.45 min = 1.63)
    133.353654    GET: Shift = 0.03
    …
    135.309515 Round trip to 721: 3.49 microsec
    135.309535    Master -> Slave : 2.07 +- 0.04 (max = 12.46 min = 2.02)
    135.309555    Slave -> Master : 1.42 +- 0.05 (max = 10.39 min = 1.37)
    135.309571    GET: Shift = -0.33
    135.312352 Round trip to 722: 3.63 microsec
    135.312380    Master -> Slave : 1.70 +- 0.03 (max = 9.69 min = 1.59)
    135.312420    Slave -> Master : 1.93 +- 0.03 (max = 11.06 min = 1.87)
    135.312437    GET: Shift = 0.12
    135.312458 GET shift = 0.090629 +- 0.208242 (min = -2.035549, max = 0.929582)
    135.312476 Tyme sync done in 1.9669 sec

Clocks skew after ~60 sec: 0.09 ± 0.21 µs
