Evaluating your EQUIP Initiative Helen King

Objectives • To enable teams to develop a shared understanding of the purpose, use and stakeholders for evaluation; • To provide teams with a range of examples of approaches to evaluation; • To develop a platform on which teams might build their evaluation strategy.

Developing a Shared Understanding 2 minutes: Individually, write on post-its (one idea per post-it): • What is evaluation (for)? • Who is evaluation for?

What is evaluation? Evaluation is the purposeful gathering, analysis and discussion of evidence from relevant sources about the quality, worth and impact of provision, development or policy. (Murray Saunders)

What is evaluation for? • Evaluation for accountability, e.g. measuring results or efficiency; identifying links between initiative objectives and behaviour change or development • Evaluation for development, e.g. providing evaluative help to strengthen institutions or projects • Evaluation for knowledge, e.g. obtaining a deeper understanding in some specific area or policy field

When to evaluate • Formative and summative: when the cook tastes the soup, it is formative evaluation; when the dinner guest tastes the soup, it is summative evaluation. • Formative evaluation for dissemination.

Who is evaluation for? Stakeholders • Students • Academic staff • Senior Management • Your EQUIP team • Others...

Developing a Shared Understanding • What is evaluation (for)? • Who is evaluation for? • 10 minutes: as a team, sort the ideas, prioritise and identify what is most important / meaningful to your team

Evaluation can help to... • Make sense of what is happening • Bridge projects to their wider contexts • Identify outcomes and impact • Identify by-products and serendipitous outcomes

Approaches to Evaluation • What is it that you wish to evaluate? • Your outcomes: – Product – Process (internal and external to the EQUIP team) • What data do you need to collect? And from whom? – Stakeholders – Evaluation methods • Quantitative • Qualitative

Prioritising / Focusing your Approach to Evaluation • Good Evaluation Method (GEM: Grant 2010) – Modest: knows what it’s trying to do and is focused – Ethical: attends to issues of consent, anonymity and power – Economical: right size for purpose, does not collect data that won’t be used • Uses of evaluation: will it – Inform academic practice? – Feed in to policy? – Feed back in to your EQUIP initiative? – Be filed away / not used? – Other?

Models of Evaluation • RUFDATA • Appreciative Inquiry • Academy Framework

RUFDATA • RUFDATA is an acronym for the procedural decisions that would shape evaluation activity in a dispersed office. The letters stand for the following elements: • Reasons and purposes • Uses • Focus • Data and evidence • Audience • Timing • Agency

• What are our Reasons and Purposes for evaluation? – These could be planning, managing, learning, developing, accountability • What will be the Uses of our evaluation? – They might be providing and learning from embodiments of good practice, staff development, strategic planning, PR, provision of data for management control • What will be the Foci for our evaluations? – These include the range of activities, aspects and emphases to be evaluated; they should connect to the priority areas for evaluation

• What will be our Data and Evidence for our evaluations? – numerical, qualitative, observational, case accounts • Who will be the Audience for our evaluations? – Community of practice, commissioners, yourselves • What will be the Timing for our evaluations? – When should evaluation take place, coincidence with decision-making cycles, life cycle of projects • Who should be the Agency conducting the evaluations? – Yourselves, external evaluators, combination

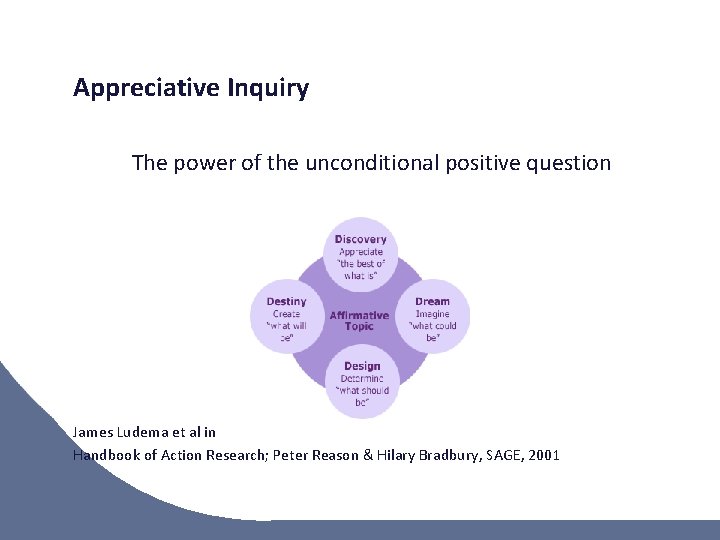

Appreciative Inquiry • "The power of the unconditional positive question", James Ludema et al., in Handbook of Action Research, Peter Reason & Hilary Bradbury (eds), SAGE, 2001

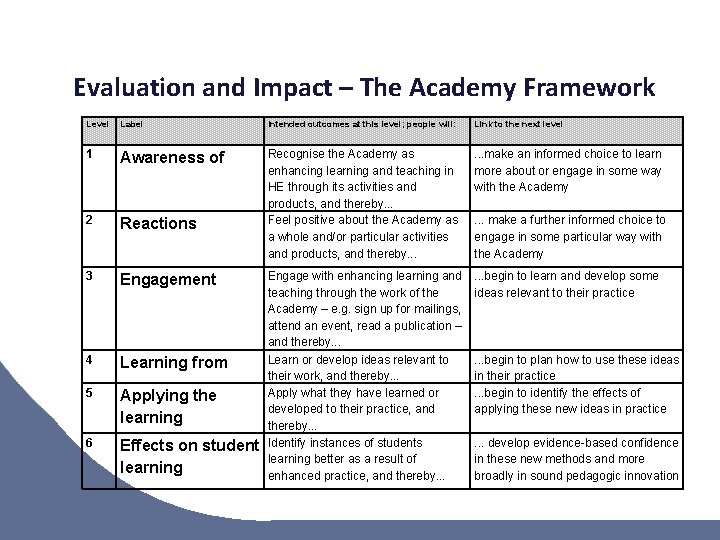

Evaluation and Impact – The Academy Framework

| Level | Label | Intended outcomes at this level; people will: | Link to the next level |
| --- | --- | --- | --- |
| 1 | Awareness of | Recognise the Academy as enhancing learning and teaching in HE through its activities and products, and thereby... | ...make an informed choice to learn more about or engage in some way with the Academy |
| 2 | Reactions | Feel positive about the Academy as a whole and/or particular activities and products, and thereby... | ...make a further informed choice to engage in some particular way with the Academy |
| 3 | Engagement | Engage with enhancing learning and teaching through the work of the Academy (e.g. sign up for mailings, attend an event, read a publication), and thereby... | ...begin to learn and develop some ideas relevant to their practice |
| 4 | Learning from | Learn or develop ideas relevant to their work, and thereby... | ...begin to plan how to use these ideas in their practice |
| 5 | Applying the learning | Apply what they have learned or developed to their practice, and thereby... | ...begin to identify the effects of applying these new ideas in practice |
| 6 | Effects on student learning | Identify instances of students learning better as a result of enhanced practice, and thereby... | ...develop evidence-based confidence in these new methods and more broadly in sound pedagogic innovation |

…and so • What impact will the evaluation have? (How) will it be used? • Formative and summative • Involving / informing key stakeholders (including yourselves) • Capturing those unintended consequences

University of Sheffield, Evaluation Resources: • http://www.sheffield.ac.uk/lets-evaluate/general/methodscollection/questions.html

This session helped our team to develop a shared understanding of the purpose, use and stakeholders for evaluation • Agree • Disagree • Neutral