Evaluating Top-k Queries over Web-Accessible Databases. Nicolas Bruno, Luis Gravano, Amélie Marian. Columbia University. 2/27/2002

“Top-k” Queries. Natural in many scenarios. Example: NYC restaurant recommendation service. Goal: find the best restaurants for a user: close to the address “2290 Broadway”, price around $25, good rating. Query: specification of flexible preferences. Answer: best k objects for the distance function.

Attributes Often Handled by External Sources. MapQuest returns the distance between two addresses. NYTimes Review gives the price range of a restaurant. Zagat gives a food rating to the restaurant.

“Top-k” Query Processing Challenges. Attributes are handled by external sources (e.g., MapQuest distance). External sources exhibit a variety of interfaces (e.g., NYTimes Review, Zagat). Existing algorithms do not handle all types of interfaces.

Processing Top-k Queries over Web-Accessible Data Sources. Data and query model. Algorithms for sources with different interfaces. Our new algorithm: Upper. Experimental results.

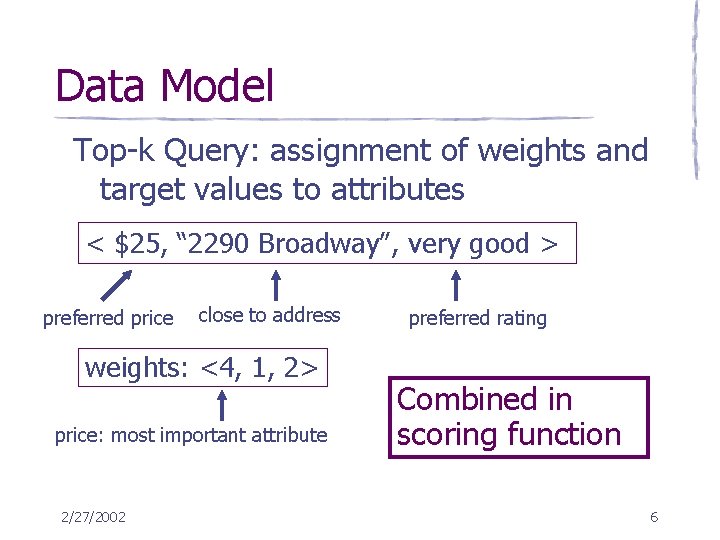

Data Model. Top-k query: assignment of weights and target values to attributes. Target values: < $25, “2290 Broadway”, very good > (preferred price, close to address, preferred rating). Weights: <4, 1, 2> (price: most important attribute). Combined in scoring function.
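The slide only says that weights and attribute scores are "combined in scoring function". The worked examples later in the deck use a weighted average, so the sketch below assumes that form. The function name `weighted_score` and the attribute scores in the example are illustrative assumptions, and how a target value such as $25 is turned into a score in [0, 1] is not specified here.

```python
from typing import Sequence


def weighted_score(scores: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted average of per-attribute scores (each assumed to lie in [0, 1])."""
    if len(scores) != len(weights):
        raise ValueError("need exactly one weight per attribute score")
    return sum(w * s for w, s in zip(weights, scores)) / sum(weights)


# Weights <4, 1, 2> come from the slide (price, address, rating).
# The attribute scores below are made-up values for illustration only.
example = weighted_score([0.5, 1.0, 0.25], [4, 1, 2])
```

Dividing by the sum of the weights keeps the combined score in [0, 1], which is what lets later slides compare it against upper bounds and thresholds on the same scale.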

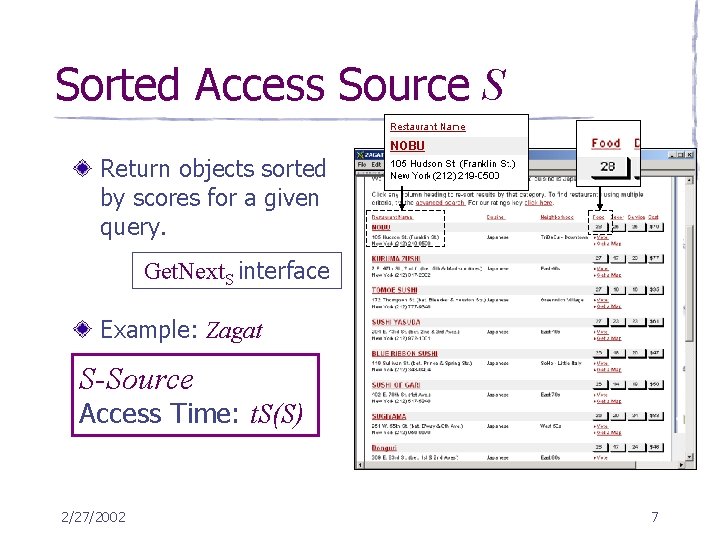

Sorted Access Source S. Returns objects sorted by scores for a given query. GetNext_S interface. Example: Zagat. S-Source access time: tS(S).

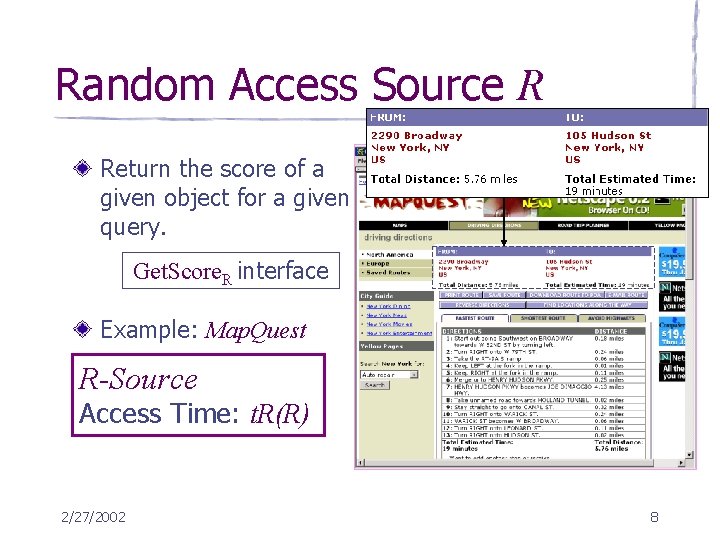

Random Access Source R. Returns the score of a given object for a given query. GetScore_R interface. Example: MapQuest. R-Source access time: tR(R).
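The two access interfaces can be mimicked with small in-memory stand-ins. This is a minimal sketch, not the authors' implementation: the class names, the `elapsed` counter, and the idea of precomputing scores for one fixed query (so the query argument q is dropped) are all assumptions made for illustration.

```python
from typing import Dict, Iterator, Optional, Tuple


class SortedSource:
    """S-Source stand-in: get_next() yields (object, score) in descending score order."""

    def __init__(self, scores: Dict[str, float], access_time: float = 1.0) -> None:
        ordered = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
        self._stream: Iterator[Tuple[str, float]] = iter(ordered)
        self.access_time = access_time  # plays the role of tS(S)
        self.elapsed = 0.0              # total time charged to this source

    def get_next(self) -> Optional[Tuple[str, float]]:
        self.elapsed += self.access_time
        return next(self._stream, None)  # None once the source is exhausted


class RandomSource:
    """R-Source stand-in: get_score(obj) returns the score of one given object."""

    def __init__(self, scores: Dict[str, float], access_time: float = 1.0) -> None:
        self._scores = dict(scores)
        self.access_time = access_time  # plays the role of tR(R)
        self.elapsed = 0.0

    def get_score(self, obj: str) -> float:
        self.elapsed += self.access_time
        return self._scores[obj]


# Illustrative data only: one sorted source and one random-access source.
zagat = SortedSource({"o1": 0.9, "o2": 0.8, "o3": 0.45})
mapquest = RandomSource({"o1": 0.1, "o2": 0.7, "o3": 0.6}, access_time=2.0)
first = zagat.get_next()
probe = mapquest.get_score("o2")
```

The key asymmetry is that a sorted source decides which object comes next, while a random-access source can only answer about an object the caller already knows.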

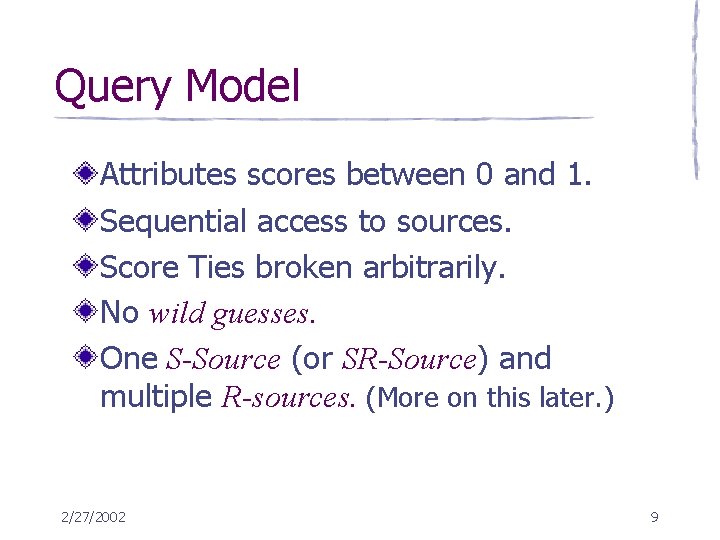

Query Model. Attribute scores between 0 and 1. Sequential access to sources. Score ties broken arbitrarily. No wild guesses. One S-Source (or SR-Source) and multiple R-Sources. (More on this later.)

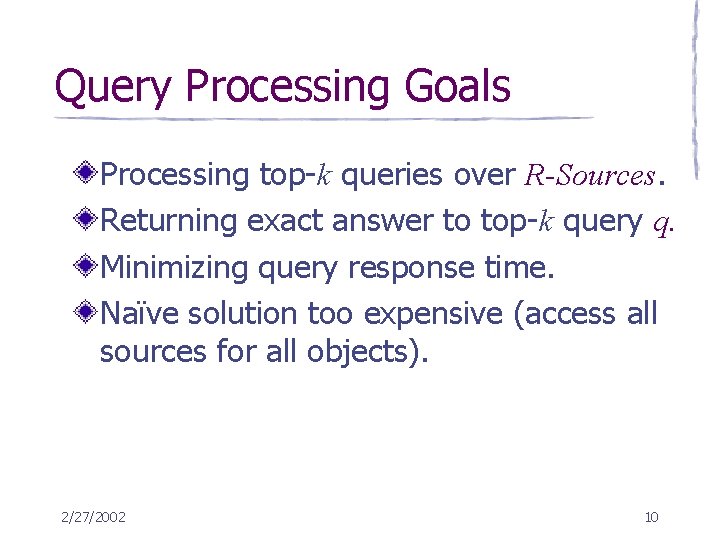

Query Processing Goals. Processing top-k queries over R-Sources. Returning the exact answer to top-k query q. Minimizing query response time. Naïve solution too expensive (access all sources for all objects).

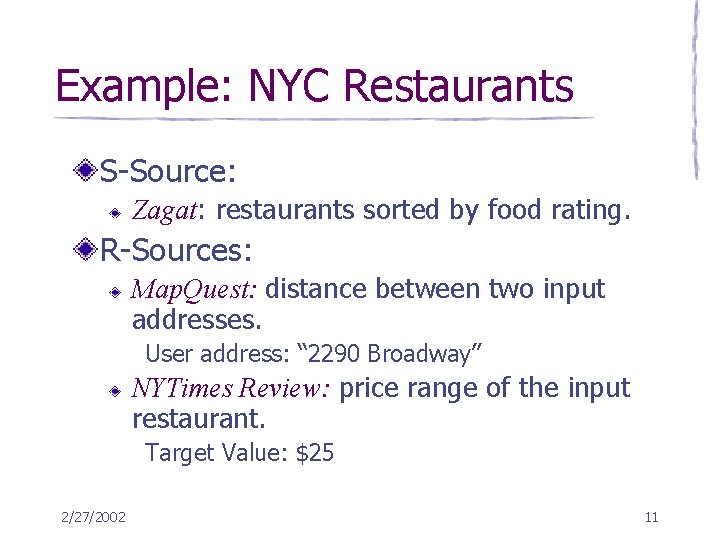

Example: NYC Restaurants. S-Source: Zagat: restaurants sorted by food rating. R-Sources: MapQuest: distance between two input addresses (user address: “2290 Broadway”). NYTimes Review: price range of the input restaurant (target value: $25).

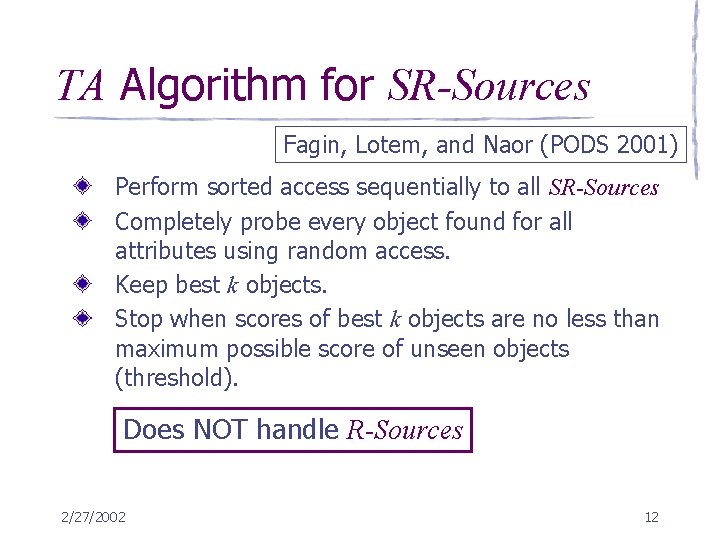

TA Algorithm for SR-Sources. Fagin, Lotem, and Naor (PODS 2001). Perform sorted access sequentially to all SR-Sources. Completely probe every object found for all attributes using random access. Keep best k objects. Stop when scores of best k objects are no less than the maximum possible score of unseen objects (threshold). Does NOT handle R-Sources.

Our Adaptation of TA Algorithm for R-Sources: TA-Adapt. Perform sorted access to S-Source S. Probe every R-Source Ri for each newly found object. Keep best k objects. Stop when scores of best k objects are no less than the maximum possible score of unseen objects (threshold).

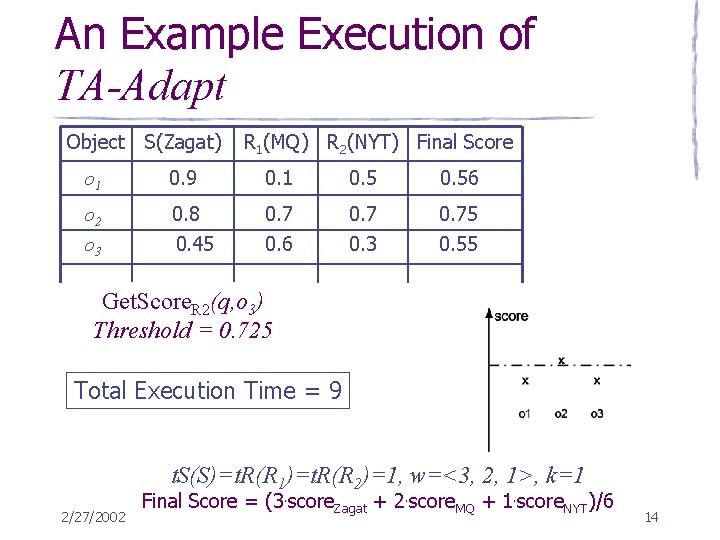

An Example Execution of TA-Adapt.

| Object | S(Zagat) | R1(MQ) | R2(NYT) | Final Score |
|--------|----------|--------|---------|-------------|
| o1     | 0.9      | 0.1    |         | 0.56        |
| o2     | 0.8      | 0.7    | 0.7     | 0.75        |
| o3     | 0.45     | 0.6    | 0.3     | 0.55        |

Accesses: GetNext_S(q), GetScore_R1(q, o), GetScore_R2(q, o) for each of o1, o2, o3. Threshold: 1, then 0.95, 0.9, 0.725 after each sorted access. Total execution time = 9. tS(S) = tR(R1) = tR(R2) = 1, w = <3, 2, 1>, k = 1. Final Score = (3·score_Zagat + 2·score_MQ + 1·score_NYT)/6.
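The TA-Adapt steps can be simulated on the example's parameters (w = <3, 2, 1>, k = 1, unit access times). This is a minimal sketch rather than the authors' code: the function name `ta_adapt` is hypothetical, sources are plain dictionaries, and o1's NYT score is not legible in the example, so 0.5 is used as an assumed placeholder.

```python
from typing import Dict, List, Sequence, Tuple


def ta_adapt(sorted_scores: Dict[str, float],
             random_scores: Sequence[Dict[str, float]],
             weights: Sequence[float],
             k: int = 1) -> Tuple[List[Tuple[float, str]], int]:
    """Return (best k as (score, object) pairs, number of unit-cost accesses)."""
    total_w = sum(weights)
    stream = sorted(sorted_scores.items(), key=lambda kv: kv[1], reverse=True)
    best: List[Tuple[float, str]] = []
    accesses = 0
    for obj, s_score in stream:
        accesses += 1                      # one sorted access (GetNext)
        attrs = [s_score]
        for source in random_scores:       # probe every R-Source for the new object
            attrs.append(source[obj])
            accesses += 1                  # one random access (GetScore)
        final = sum(w * a for w, a in zip(weights, attrs)) / total_w
        best.append((final, obj))
        best.sort(reverse=True)
        del best[k:]                       # keep only the best k objects
        # Unseen objects score at most s_score in S and at most 1 elsewhere.
        threshold = (weights[0] * s_score + sum(weights[1:])) / total_w
        if len(best) == k and best[-1][0] >= threshold:
            break
    return best, accesses


zagat = {"o1": 0.9, "o2": 0.8, "o3": 0.45}
mapquest = {"o1": 0.1, "o2": 0.7, "o3": 0.6}
nyt = {"o1": 0.5, "o2": 0.7, "o3": 0.3}   # o1's value is an assumption
top, cost = ta_adapt(zagat, [mapquest, nyt], weights=[3, 2, 1], k=1)
```

Under these assumptions the loop only stops after the third sorted access, when the threshold drops to 0.725, so every object is fully probed: three sorted accesses plus six random accesses.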

Improvements over TA-Adapt. Add a shortcut test after each random-access probe (TA-Opt). Exploit techniques for processing selections with expensive predicates (TA-EP). Reorder accesses to R-Sources: best weight/time ratio.
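Reordering R-Sources by weight/time ratio can be sketched as a single sort. The tuple layout, the function name, and the access times below are assumptions chosen for illustration; only the ordering criterion comes from the slide.

```python
from typing import List, Tuple

Source = Tuple[str, float, float]  # (name, weight, access time); layout is an assumption


def order_by_weight_time_ratio(sources: List[Source]) -> List[Source]:
    """Probe first the sources that contribute the most weight per unit of time."""
    return sorted(sources, key=lambda s: s[1] / s[2], reverse=True)


# Hypothetical access times: MapQuest weighs more but is assumed to be slower.
r_sources = [("MapQuest", 2.0, 5.0), ("NYTimes Review", 1.0, 1.0)]
ordered = order_by_weight_time_ratio(r_sources)
```

With these made-up times, NYTimes Review (ratio 1.0) is probed before MapQuest (ratio 0.4) even though its weight is lower.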

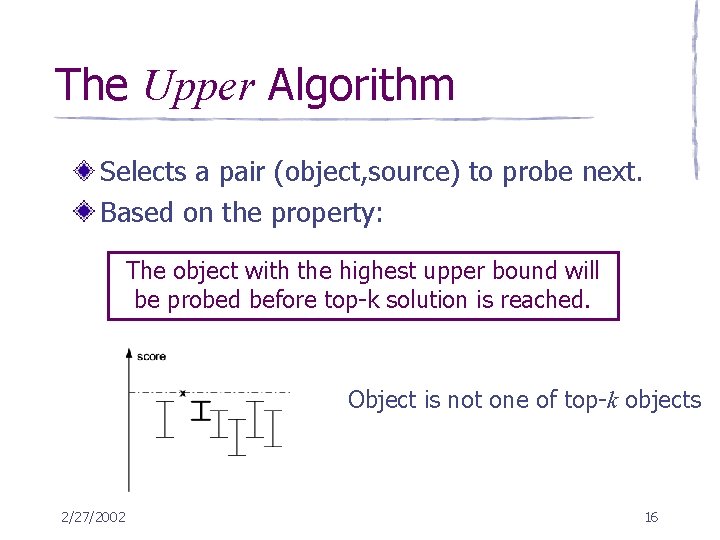

The Upper Algorithm. Selects a pair (object, source) to probe next. Based on the property: the object with the highest upper bound will be probed before the top-k solution is reached. Two cases: the object is one of the top-k objects, or the object is not one of the top-k objects.

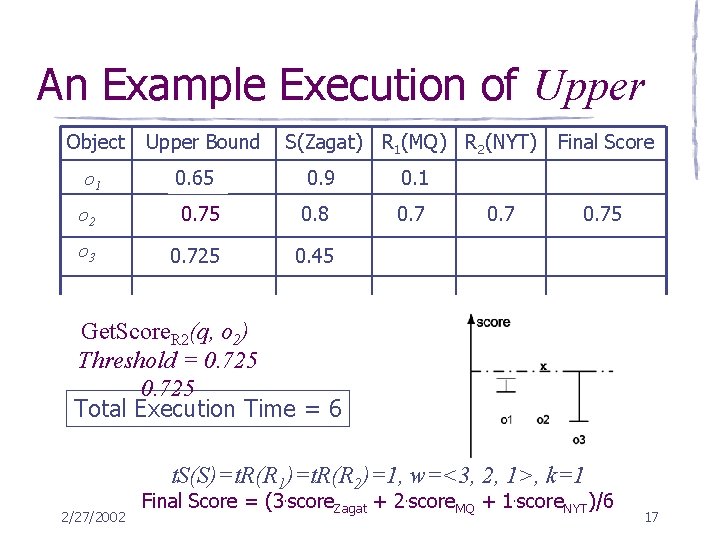

An Example Execution of Upper.

| Object | Upper Bound           | S(Zagat) | R1(MQ) | R2(NYT) | Final Score |
|--------|-----------------------|----------|--------|---------|-------------|
| o1     | 0.65                  | 0.9      | 0.1    |         |             |
| o2     | 0.75 (after 0.9, 0.8) | 0.8      | 0.7    | 0.7     | 0.75        |
| o3     | 0.725                 | 0.45     |        |         |             |

Accesses: GetNext_S(q) three times, GetScore_R1(q, o1), GetScore_R1(q, o2), GetScore_R2(q, o2). Threshold: 1, then 0.95, 0.9, 0.725. Total execution time = 6. tS(S) = tR(R1) = tR(R2) = 1, w = <3, 2, 1>, k = 1. Final Score = (3·score_Zagat + 2·score_MQ + 1·score_NYT)/6.

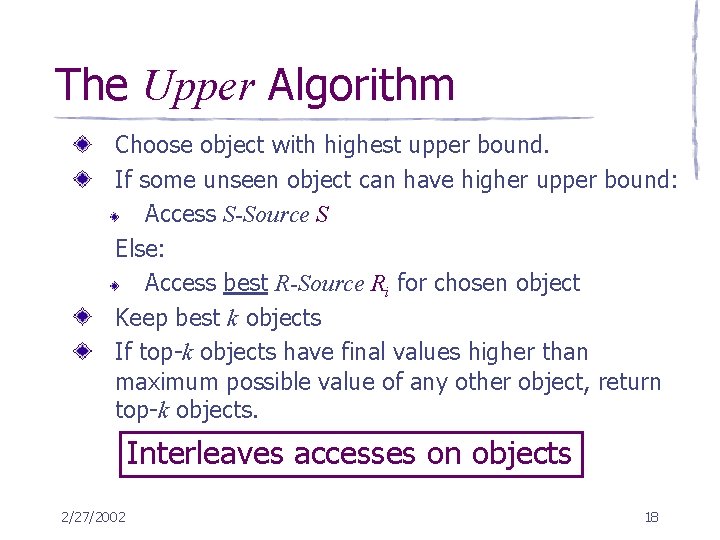

The Upper Algorithm. Choose the object with the highest upper bound. If some unseen object can have a higher upper bound: access S-Source S. Else: access the best R-Source Ri for the chosen object. Keep best k objects. If the top-k objects have final values higher than the maximum possible value of any other object, return the top-k objects. Interleaves accesses on objects.
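The loop described above can be sketched as follows, using the parameters of the example execution (w = <3, 2, 1>, k = 1, unit access times). This is a simplified illustration, not the authors' implementation: the function name `upper` is hypothetical, sources are plain dictionaries, and "best R-Source" is reduced to "first unprobed R-Source in the given order" instead of the expected-value selection the slides mention. Scores that this run never probes (o1's NYT score, o3's R-Source scores) are placeholders.

```python
from typing import Dict, List, Optional, Sequence, Tuple


def upper(sorted_scores: Dict[str, float],
          random_scores: Sequence[Dict[str, float]],
          weights: Sequence[float],
          k: int = 1) -> Tuple[List[Tuple[str, float]], int]:
    """Return (top-k as (object, score) pairs, number of unit-cost accesses)."""
    total_w = sum(weights)
    stream = iter(sorted(sorted_scores.items(), key=lambda kv: kv[1], reverse=True))
    seen: Dict[str, List[Optional[float]]] = {}   # None marks an unprobed attribute
    last_s, exhausted, accesses = 1.0, False, 0

    def upper_bound(attrs: Sequence[Optional[float]]) -> float:
        # Unknown attribute scores are optimistically assumed to be 1.
        return sum(w * (1.0 if a is None else a)
                   for w, a in zip(weights, attrs)) / total_w

    while True:
        # Best score any unseen object could still reach.
        threshold = -1.0 if exhausted else upper_bound([last_s] + [None] * len(random_scores))
        ranked = sorted(seen, key=lambda o: upper_bound(seen[o]), reverse=True)
        top = ranked[:k]
        done = all(None not in seen[o] for o in top)
        if done and (exhausted or (len(top) == k
                                   and upper_bound(seen[top[-1]]) >= threshold)):
            return [(o, upper_bound(seen[o])) for o in top], accesses
        pending = [o for o in ranked if None in seen[o]]
        if not exhausted and (not pending or threshold > upper_bound(seen[pending[0]])):
            accesses += 1                          # sorted access to S
            nxt = next(stream, None)
            if nxt is None:
                exhausted = True
            else:
                seen[nxt[0]] = [nxt[1]] + [None] * len(random_scores)
                last_s = nxt[1]
        else:
            obj = pending[0]                       # highest upper bound, not yet final
            i = seen[obj].index(None)              # simplification: first unprobed source
            seen[obj][i] = random_scores[i - 1][obj]
            accesses += 1                          # random access to an R-Source


zagat = {"o1": 0.9, "o2": 0.8, "o3": 0.45}
mapquest = {"o1": 0.1, "o2": 0.7, "o3": 0.6}   # o3's value is never probed in this run
nyt = {"o1": 0.5, "o2": 0.7, "o3": 0.3}        # o1 and o3 values are never probed
answer, cost = upper(zagat, [mapquest, nyt], weights=[3, 2, 1], k=1)
```

Under these assumptions the run makes three sorted accesses and three random accesses (R1 for o1, then R1 and R2 for o2), because o1 and o3 are discarded by their upper bounds without being fully probed.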

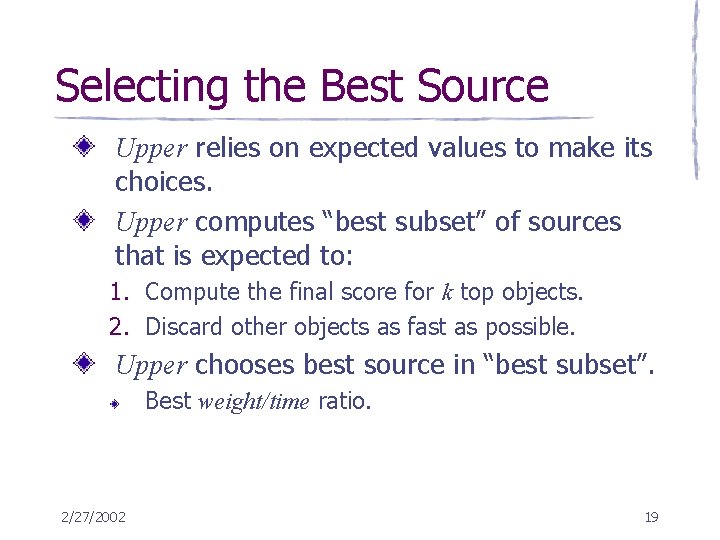

Selecting the Best Source. Upper relies on expected values to make its choices. Upper computes the “best subset” of sources that is expected to: 1. Compute the final score for the k top objects. 2. Discard other objects as fast as possible. Upper chooses the best source in the “best subset”: best weight/time ratio.

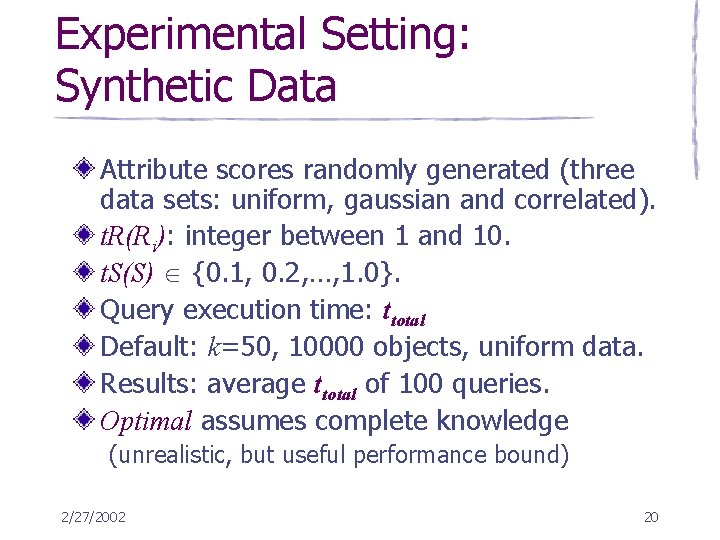

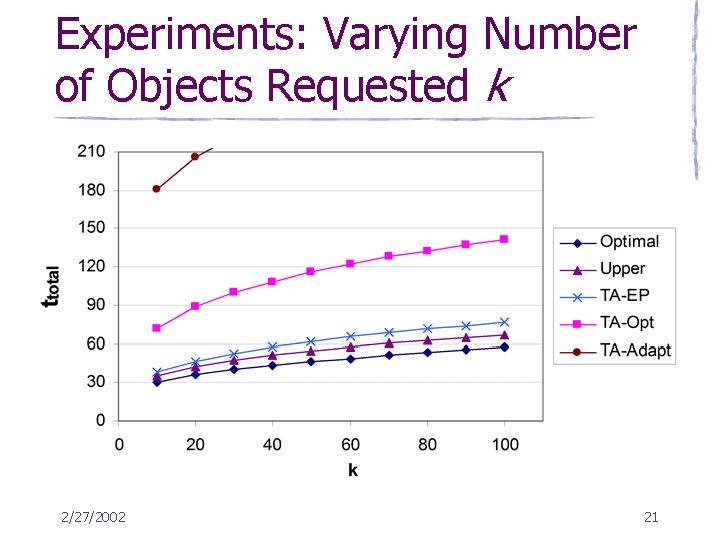

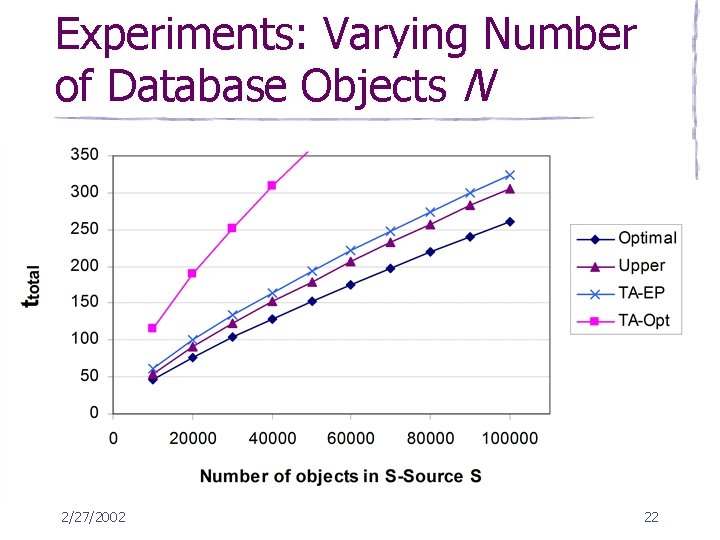

Experimental Setting: Synthetic Data. Attribute scores randomly generated (three data sets: uniform, Gaussian, and correlated). tR(Ri): integer between 1 and 10. tS(S) ∈ {0.1, 0.2, …, 1.0}. Query execution time: t_total. Default: k = 50, 10000 objects, uniform data. Results: average t_total of 100 queries. Optimal assumes complete knowledge (unrealistic, but a useful performance bound).

Experiments: Varying Number of Objects Requested k

Experiments: Varying Number of Database Objects N

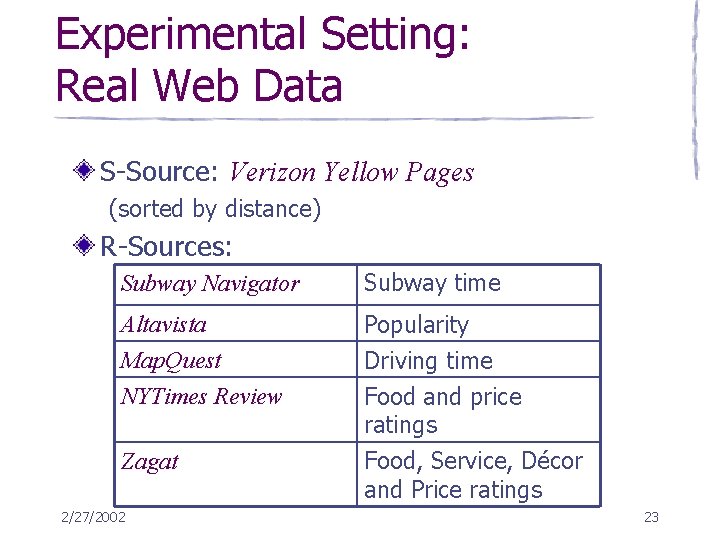

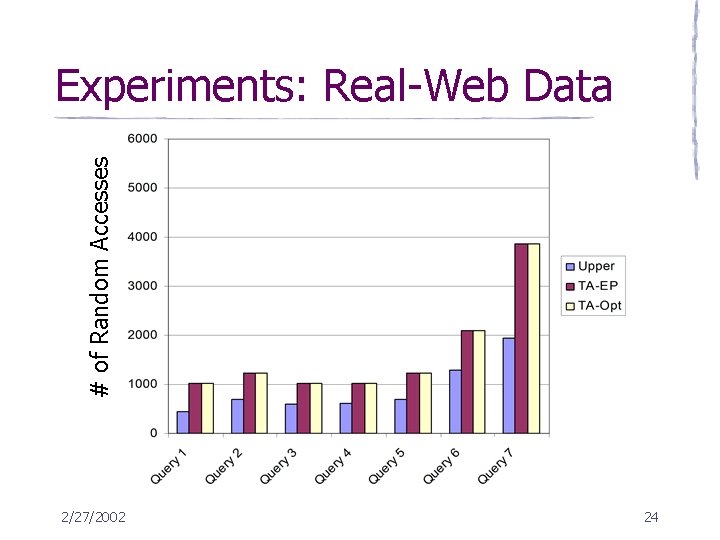

Experimental Setting: Real Web Data. S-Source: Verizon Yellow Pages (sorted by distance). R-Sources: Subway Navigator (subway time), Altavista (popularity), MapQuest (driving time), NYTimes Review (food and price ratings), Zagat (food, service, décor, and price ratings).

Experiments: Real-Web Data (# of Random Accesses)

Evaluation Conclusions. TA-EP and TA-Opt much faster than TA-Adapt. Upper significantly better than all versions of TA. Upper close to optimal. Real data experiments: Upper faster than TA adaptations.

Conclusion. Introduced first algorithm for top-k processing over R-Sources. Adapted TA to this scenario. Presented new algorithms: Upper and Pick (see paper). Evaluated our new algorithms with both real and synthetic data. Upper close to optimal.

Current and Future Work. Relaxation of the source model: current source model limited; any number of R-Sources and SR-Sources; Upper has good results even with only SR-Sources. Parallelism: define a query model for parallel access to sources; adapt our algorithms to this model. Approximate queries.

References.
Top-k queries: S. Chaudhuri and L. Gravano. Evaluating Top-k Selection Queries. VLDB 1999.
TA algorithm: R. Fagin, A. Lotem, and M. Naor. Optimal Aggregation Algorithms for Middleware. PODS 2001.
Variations of TA: S. Nepal and V. Ramakrishna. Query Processing Issues on Image (Multimedia) Databases. ICDE 1999. U. Güntzer, W.-T. Balke, and W. Kießling. Optimizing Multi-Feature Queries for Image Databases. VLDB 2000.
Expensive predicates: J. M. Hellerstein and M. Stonebraker. Predicate Migration: Optimizing Queries with Expensive Predicates. SIGMOD 1993.

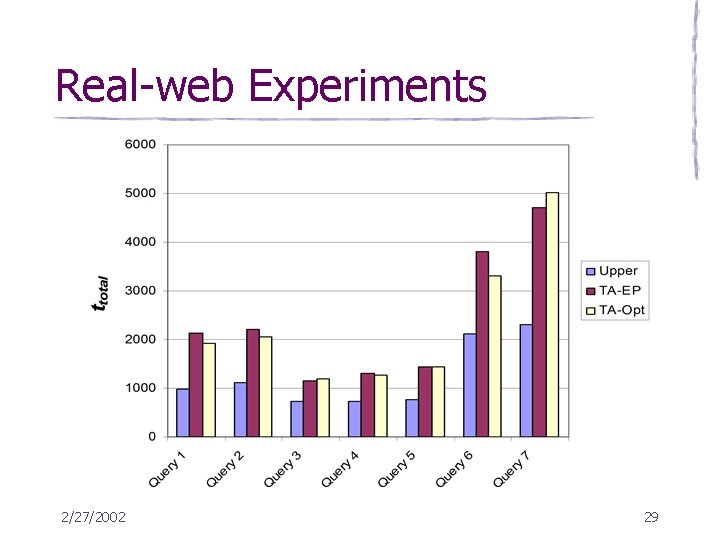

Real-Web Experiments

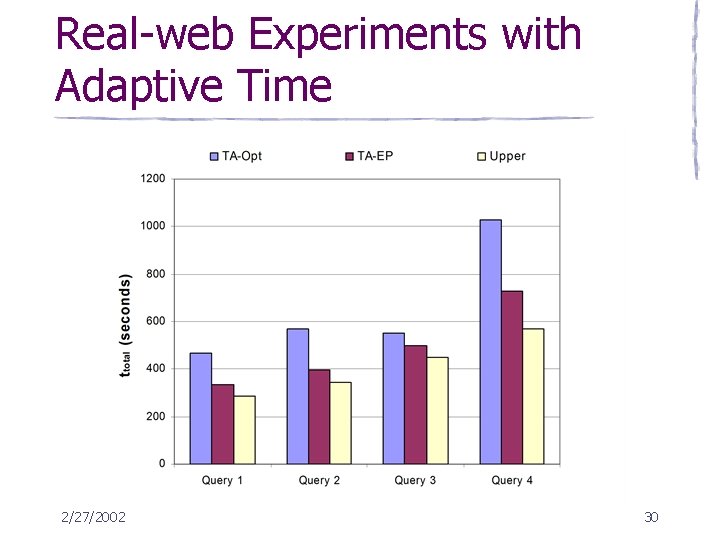

Real-Web Experiments with Adaptive Time

Relaxing the Source Model (TA-EP, Upper)

Upcoming Journal Paper. Variations of Upper. Select best source. Data structures. Complexity analysis. Relaxing source model. Adaptation of our algorithms. New algorithms. Variations of data and query model to handle real web data.

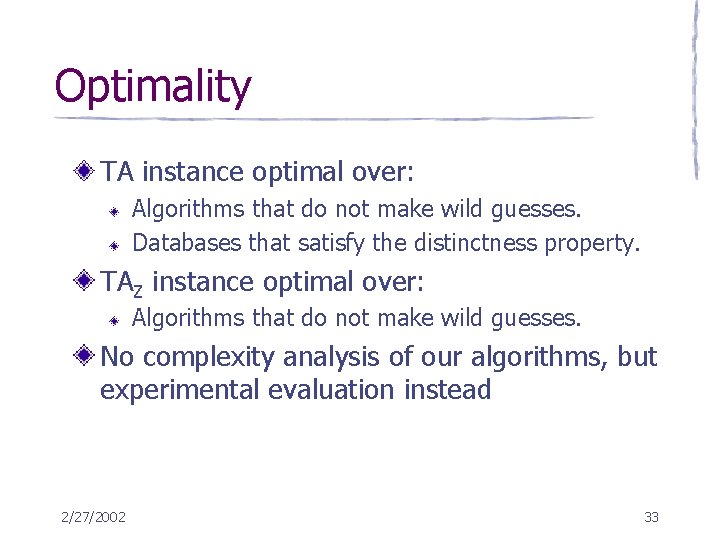

Optimality. TA is instance optimal over: algorithms that do not make wild guesses; databases that satisfy the distinctness property. TAZ is instance optimal over: algorithms that do not make wild guesses. No complexity analysis of our algorithms, but experimental evaluation instead.
