Evaluating the Validity of English Language Proficiency Assessments


Evaluating the Validity of English Language Proficiency Assessments (EVEA): A Consortium Approach
National Conference on Student Assessment
Detroit, Michigan
June 22, 2010, 8:30-10:00 am

• Overview of the Project – Ellen Forte
• The Role of the Research Partner – Alison Bailey
• The State Perspective – Joe Willhoft
• Questions

Overview of the Project
• Project Partners, Tasks, and Timelines
• The Argument-Based Approach to Validity Evaluation
• ELPA Common Interpretive Argument
• Moving Forward

The Evaluating the Validity of English Language Proficiency Assessments (EVEA) project:
• is funded by a federal Enhanced Assessment Grant
• spans October 2009 through March 2011
• brings together a consortium of five states with a team of researchers and a panel of experts to develop a coherent validity argument for English language proficiency (ELP) assessments
• will build a common interpretive argument and design a set of studies and instruments to support and test the claims within the argument
• builds on previous federally funded projects (NHEAIEAG, NAAC-GSEG), which focused on technical documentation and validity arguments for AA-AAS

Partners
Five states
• Washington (lead)
• Oregon
• Montana
• Idaho
• Indiana
Five organizations
• edCount, LLC
• National Center for the Improvement of Educational Assessment
• University of California Los Angeles
• Synergy Enterprises, Inc.
• Pacific Institute for Research and Evaluation

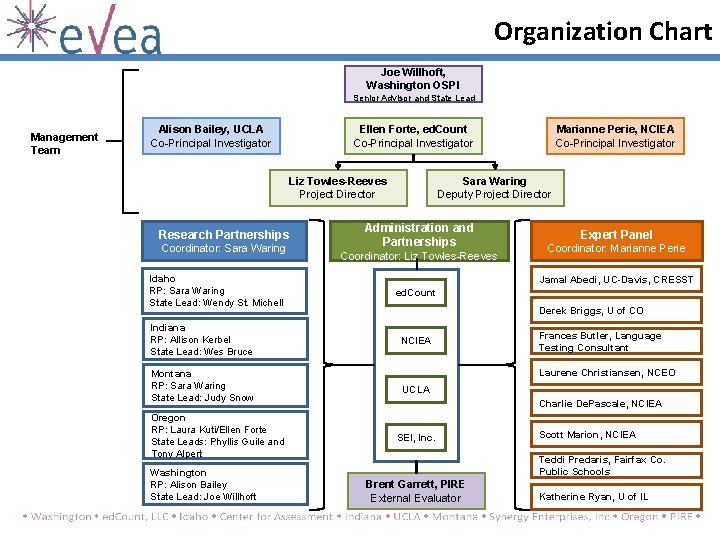

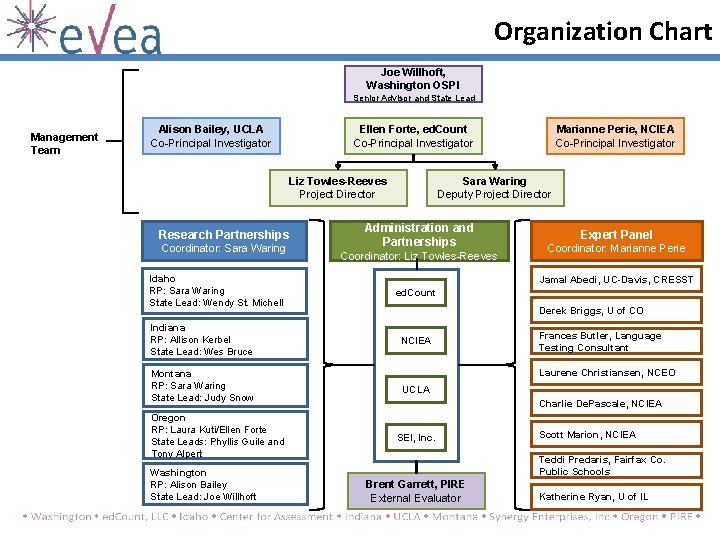

Organization Chart
• Senior Advisor and State Lead: Joe Willhoft, Washington OSPI
• Management Team
  – Alison Bailey, UCLA, Co-Principal Investigator
  – Ellen Forte, edCount, Co-Principal Investigator
  – Marianne Perie, NCIEA, Co-Principal Investigator
  – Liz Towles-Reeves, Project Director
  – Sara Waring, Deputy Project Director
• Research Partnerships (Coordinator: Sara Waring)
  – Idaho RP: Sara Waring; State Lead: Wendy St. Michell
  – Indiana RP: Allison Kerbel; State Lead: Wes Bruce
  – Montana RP: Sara Waring; State Lead: Judy Snow
  – Oregon RP: Laura Kuti/Ellen Forte; State Leads: Phyllis Guile and Tony Alpert
  – Washington RP: Alison Bailey; State Lead: Joe Willhoft
• Administration and Partnerships (Coordinator: Liz Towles-Reeves)
  – edCount
  – NCIEA
  – UCLA
  – SEI, Inc.
  – Brent Garrett, PIRE, External Evaluator
• Expert Panel (Coordinator: Marianne Perie)
  – Jamal Abedi, UC-Davis, CRESST
  – Derek Briggs, U of CO
  – Frances Butler, Language Testing Consultant
  – Laurene Christiansen, NCEO
  – Charlie DePascale, NCIEA
  – Scott Marion, NCIEA
  – Teddi Predaris, Fairfax Co. Public Schools
  – Katherine Ryan, U of IL

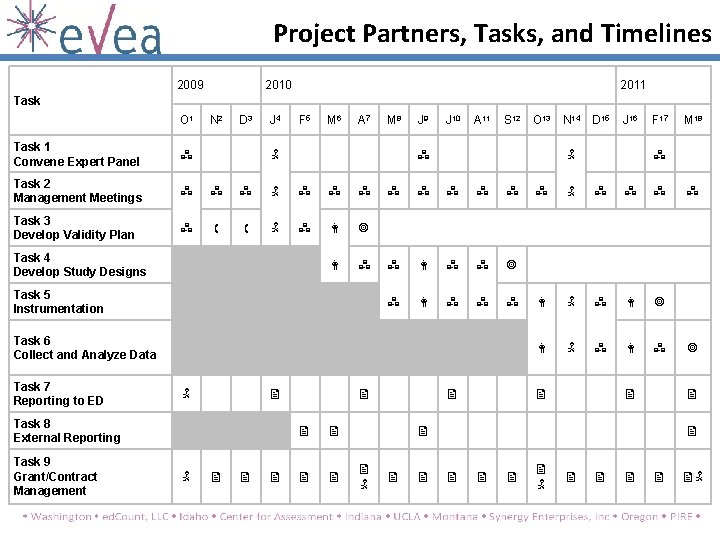

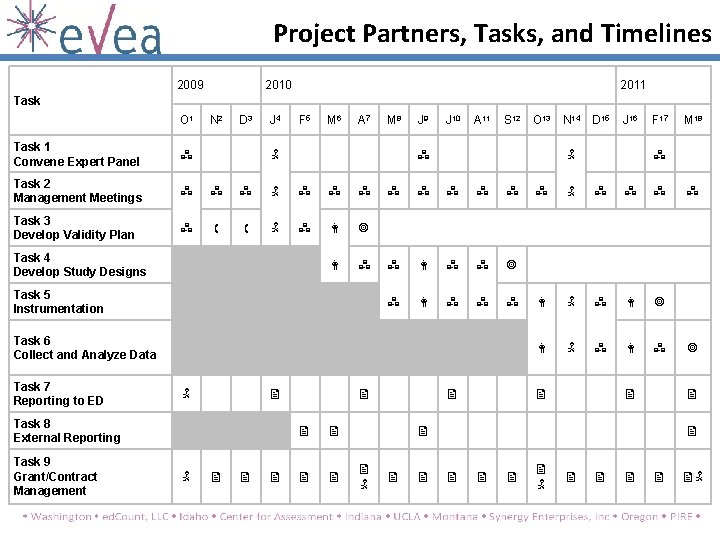

Project Partners, Tasks, and Timelines
[Timeline chart: months 1 (October 2009) through 18 (March 2011); the schedule bars for each task are not recoverable]
• Task 1 Convene Expert Panel
• Task 2 Management Meetings
• Task 3 Develop Validity Plan
• Task 4 Develop Study Designs
• Task 5 Instrumentation
• Task 6 Collect and Analyze Data
• Task 7 Reporting to ED
• Task 8 External Reporting
• Task 9 Grant/Contract Management

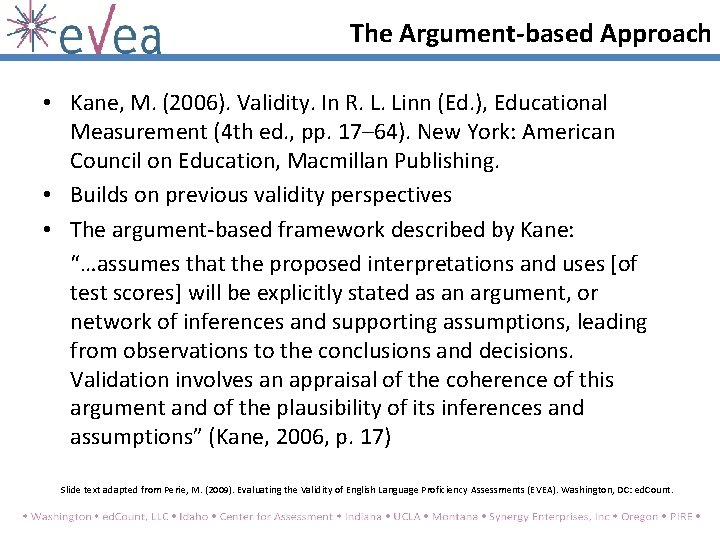

The Argument-based Approach
• Kane, M. (2006). Validity. In R. L. Linn (Ed.), Educational Measurement (4th ed., pp. 17–64). New York: American Council on Education, Macmillan Publishing.
• Builds on previous validity perspectives
• The argument-based framework described by Kane: “…assumes that the proposed interpretations and uses [of test scores] will be explicitly stated as an argument, or network of inferences and supporting assumptions, leading from observations to the conclusions and decisions. Validation involves an appraisal of the coherence of this argument and of the plausibility of its inferences and assumptions” (Kane, 2006, p. 17)
Slide text adapted from Perie, M. (2009). Evaluating the Validity of English Language Proficiency Assessments (EVEA). Washington, DC: edCount.

The Argument-based Framework
• Arguments
  – An interpretive argument specifies the proposed interpretations and uses of test results by laying out the network of inferences and assumptions leading from the observed performances to the conclusions and decisions based on the performances.
  – The validity argument provides an evaluation of the interpretive argument (Kane, 2006).
Slide text adapted from Perie, M. (2009). Evaluating the Validity of English Language Proficiency Assessments (EVEA). Washington, DC: edCount.

The Argument-based Framework
• Interpretive Argument
  – Illustration of theory that underlies test score interpretation and use
  – Identifies claims about the test and the assumptions underlying those claims
  – Allows for the prioritization of evidence needs for claims and assumptions
  – Used as a logic model; for example:
    • IF the assessment adequately represents the construct…
    • IF teachers administer the instrument correctly…
    • IF the tasks are scored accurately…
    • … THEN we can make claims about a student’s knowledge of that construct based on the student’s score
Slide text adapted from Perie, M. (2009). Evaluating the Validity of English Language Proficiency Assessments (EVEA). Washington, DC: edCount.
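
The IF/THEN logic model on this slide can be sketched in code. This is a minimal illustration and not part of the EVEA materials: the `Premise` class and the `claim_is_warranted` function are hypothetical names, and reducing each premise to "supported or not" is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class Premise:
    """One IF-condition in the logic model (hypothetical structure)."""
    statement: str
    supported: bool  # assumption: evidence is reduced to a yes/no judgment


def claim_is_warranted(premises):
    """The THEN-claim holds only if every IF-premise is supported."""
    return all(p.supported for p in premises)


# Premise wording is taken from the slide; the True/False values are invented.
premises = [
    Premise("The assessment adequately represents the construct", True),
    Premise("Teachers administer the instrument correctly", True),
    Premise("The tasks are scored accurately", False),
]

warranted = claim_is_warranted(premises)
unsupported = [p.statement for p in premises if not p.supported]
print(warranted)    # False, because one premise lacks support
print(unsupported)  # the premises that still need evidence
```

Listing the unsupported premises is one way to see where evidence needs could be prioritized, since a single unsupported premise is enough to block the claim.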

The Argument-based Framework
• The outcome: tested claims from the interpretive argument
  – Claims that survive the evaluation are included in the final validity argument.
  – Claims can be deleted or modified and additional claims can be added based on findings in the final validity argument.
• The validity argument includes evidence.
Slide text adapted from Perie, M. (2009). Evaluating the Validity of English Language Proficiency Assessments (EVEA). Washington, DC: edCount.
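
The outcome described on this slide, where surviving claims enter the validity argument together with their evidence, might look like the following sketch. The function name `build_validity_argument`, the rule "a claim survives if it has at least one finding", and the sample evidence are all illustrative assumptions, not the project's actual criteria or data.

```python
def build_validity_argument(claims, evidence):
    """Split claims into those retained (with evidence) and those to revisit.

    `evidence` maps a claim to a list of findings. The survival rule used
    here (any finding at all) is an assumption made for illustration.
    """
    retained = {c: evidence[c] for c in claims if evidence.get(c)}
    to_revisit = [c for c in claims if not evidence.get(c)]
    return retained, to_revisit


# Claim wording is abbreviated from the deck; the finding is hypothetical.
claims = [
    "The ELP assessment is administered as intended",
    "ELP assessment scores accurately reflect students' English language proficiency",
]
evidence = {
    "The ELP assessment is administered as intended": [
        "hypothetical administration audit",
    ],
}

retained, to_revisit = build_validity_argument(claims, evidence)
print(list(retained))  # claims carried into the validity argument
print(to_revisit)      # claims to modify, delete, or study further
```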

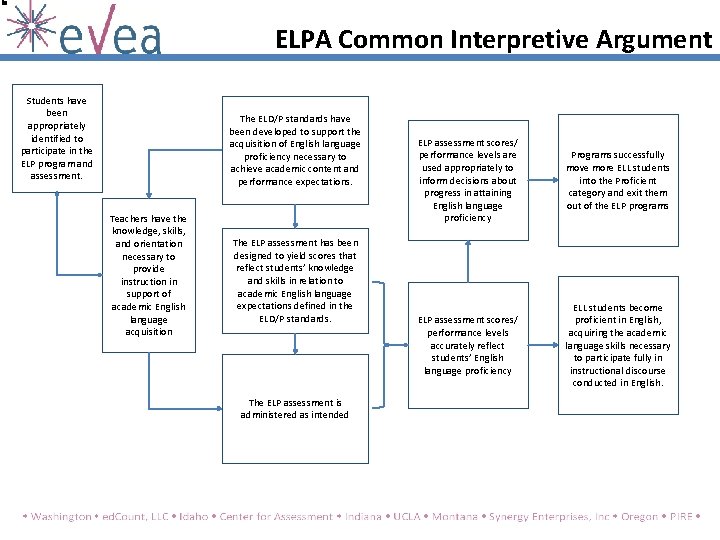

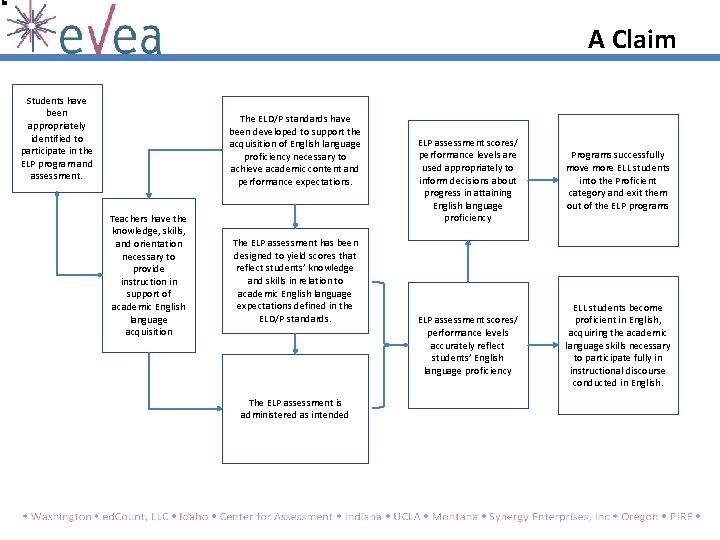

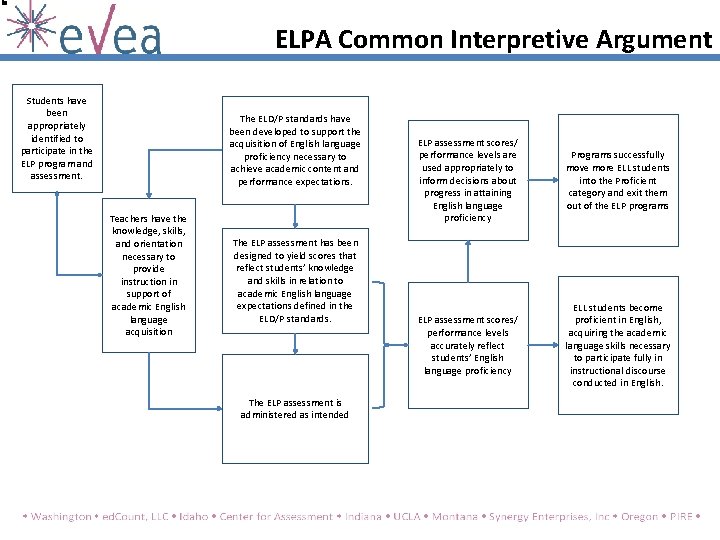

ELPA Common Interpretive Argument
• Students have been appropriately identified to participate in the ELP program and assessment.
• The ELD/P standards have been developed to support the acquisition of English language proficiency necessary to achieve academic content and performance expectations.
• Teachers have the knowledge, skills, and orientation necessary to provide instruction in support of academic English language acquisition.
• The ELP assessment has been designed to yield scores that reflect students’ knowledge and skills in relation to academic English language expectations defined in the ELD/P standards.
• The ELP assessment is administered as intended.
• ELP assessment scores/performance levels are used appropriately to inform decisions about progress in attaining English language proficiency.
• Programs successfully move more ELL students into the Proficient category and exit them out of the ELP programs.
• ELP assessment scores/performance levels accurately reflect students’ English language proficiency.
• ELL students become proficient in English, acquiring the academic language skills necessary to participate fully in instructional discourse conducted in English.

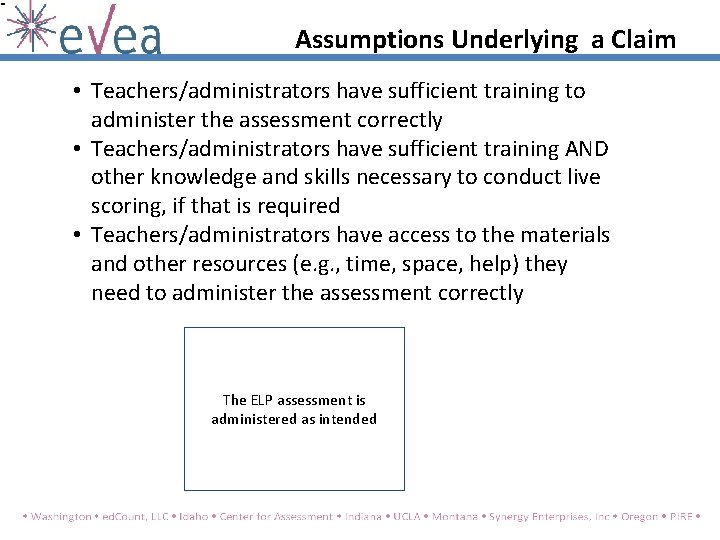

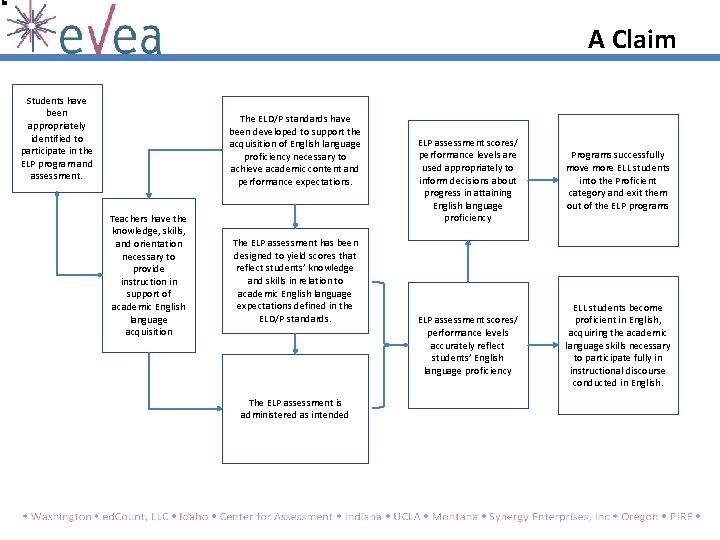

A Claim
[Diagram: the ELPA Common Interpretive Argument, repeated from the preceding slide]

Assumptions Underlying a Claim
Claim: The ELP assessment is administered as intended
• Teachers/administrators have sufficient training to administer the assessment correctly
• Teachers/administrators have sufficient training AND other knowledge and skills necessary to conduct live scoring, if that is required
• Teachers/administrators have access to the materials and other resources (e.g., time, space, help) they need to administer the assessment correctly

Moving Forward
• Common Interpretive Argument to Individual State Interpretive Arguments
• Individual State Validity Evaluation Plans
  – Prioritization of Claims and Assumptions
  – Research Studies Designed to Test the Claims and Assumptions
• Targeting common issues to develop methodologies for investigation through pilot studies

Moving Forward
• Dissemination of products
  – Common Interpretive Argument (CIA)
  – Study Methodologies (piloted)
  – Instrumentation (piloted)
  – Generic Foundations Document
  – Home Language Survey White Paper
  – Table of Contents for an ELPA Technical Manual
Everything will be freely available to the public at www.eveaproject.com

The Research Partner Perspective

The State Perspective

Benefits for States
EVEA’s benefits for partner states
• Each state in EVEA uses a different ELP test
  – Opportunity to develop cross-state analyses
  – Mutual learning opportunities: distribution of common issues
  – Learn from other states’ approaches to common issues

Benefits for States
EVEA’s benefits for partner states
• Support provided by the Research Partner
  – Experienced and knowledgeable research partners provide assistance with state-specific research and analysis issues for each partner state
  – Opportunity to collaborate with an external expert on development of a validity framework

Benefits for States
EVEA’s benefits for partner states
• Development of a state-specific validity framework
  – Hands-on experience with elaboration of a validity framework
  – Expanding sophistication of state-level assessment staff with the argument-based approach to validity
  – Ability to work in an assessment context with a standard set of operational criteria and a manageable theory of action

Contact Information
Ellen Forte, Ph.D.
President, edCount, LLC
5335 Wisconsin Avenue, NW, Suite 440
Washington, DC 20015
eforte@edCount.com
202/895-1502

Alison Bailey, Ph.D.
Faculty Associate Researcher (CRESST) and Associate Professor, UCLA Department of Education
CRESST/UCLA
abailey@gseis.ucla.edu
310/825-1731

Contact Information
Joe Willhoft, Ph.D.
Assistant Superintendent for Assessment and Student Information
Washington State Office of Superintendent of Public Instruction
600 Washington St., SE
Olympia, WA 98501
Joe.Willhoft@k12.wa.us
360/725-6334

Project Website: www.eveaproject.com