Evaluating Diagnostic Tests

Payam Kabiri, MD, PhD. Clinical Epidemiologist, Tehran University of Medical Sciences

Seven questions to evaluate the utility of a diagnostic test

- Can the test be reliably performed?
- Was the test evaluated on an appropriate population?
- Was an appropriate gold standard used?
- Was an appropriate cut-off value chosen to optimize sensitivity and specificity?
- What are the positive and negative likelihood ratios?
- How well does the test perform in specific populations?
- What is the balance between cost of the disease and cost of the test?

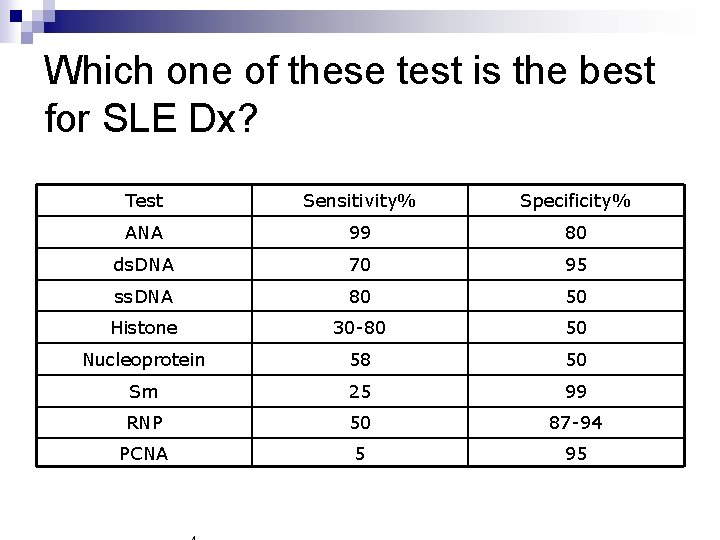

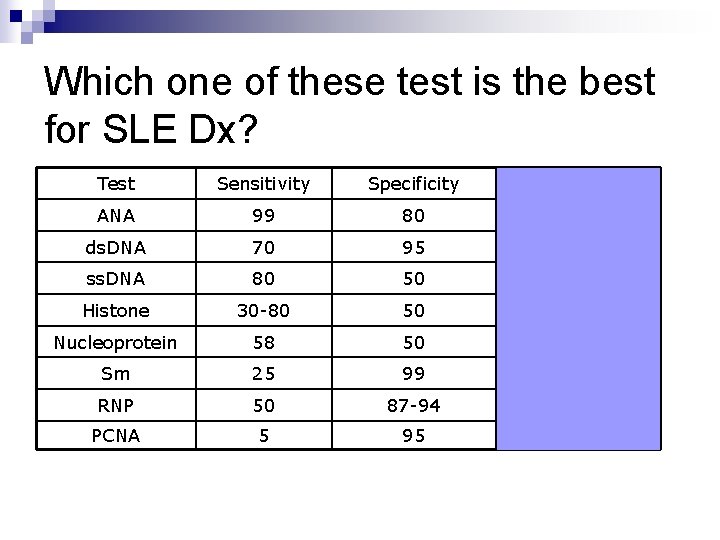

Which one of these tests is the best for SLE Dx?

| Test | Sensitivity (%) | Specificity (%) |
|---|---|---|
| ANA | 99 | 80 |
| dsDNA | 70 | 95 |
| ssDNA | 80 | 50 |
| Histone | 30-80 | 50 |
| Nucleoprotein | 58 | 50 |
| Sm | 25 | 99 |
| RNP | 50 | 87-94 |
| PCNA | 5 | 95 |

Diagnostic Test Characteristics

- Sensitivity
- Specificity
- Predictive Value
- Likelihood Ratio

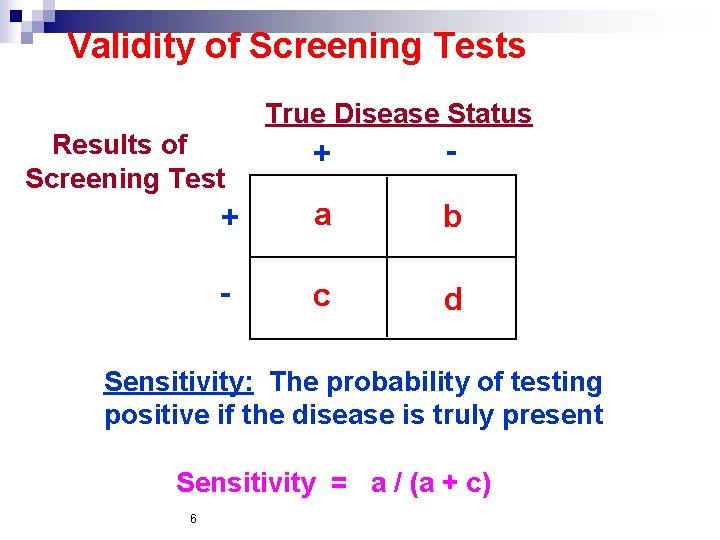

Validity of Screening Tests

| Results of screening test | True disease status: + | True disease status: - |
|---|---|---|
| + | a | b |
| - | c | d |

Sensitivity: the probability of testing positive if the disease is truly present.

Sensitivity = a / (a + c)

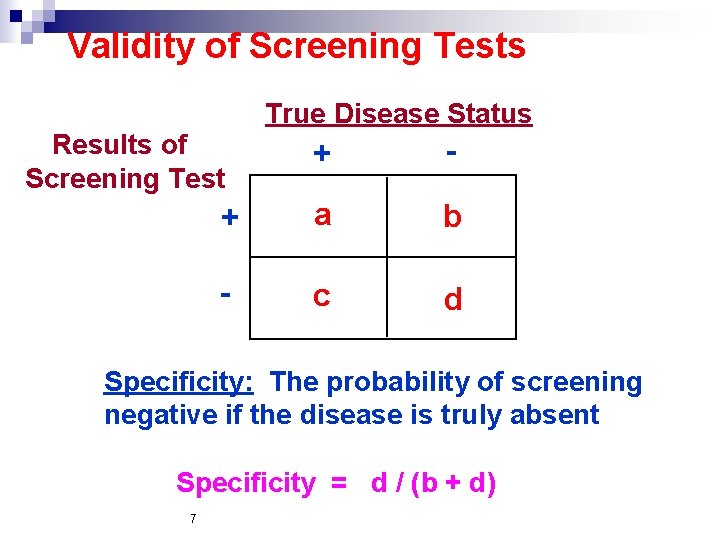

Validity of Screening Tests

| Results of screening test | True disease status: + | True disease status: - |
|---|---|---|
| + | a | b |
| - | c | d |

Specificity: the probability of screening negative if the disease is truly absent.

Specificity = d / (b + d)

Two-by-two tables can also be used for calculating the false positive and false negative rates.

- The false positive rate = false positives / (false positives + true negatives). It is also equal to 1 - specificity.
- The false negative rate = false negatives / (false negatives + true positives). It is also equal to 1 - sensitivity.
- An ideal test maximizes both sensitivity and specificity, thereby minimizing the false positive and false negative rates.

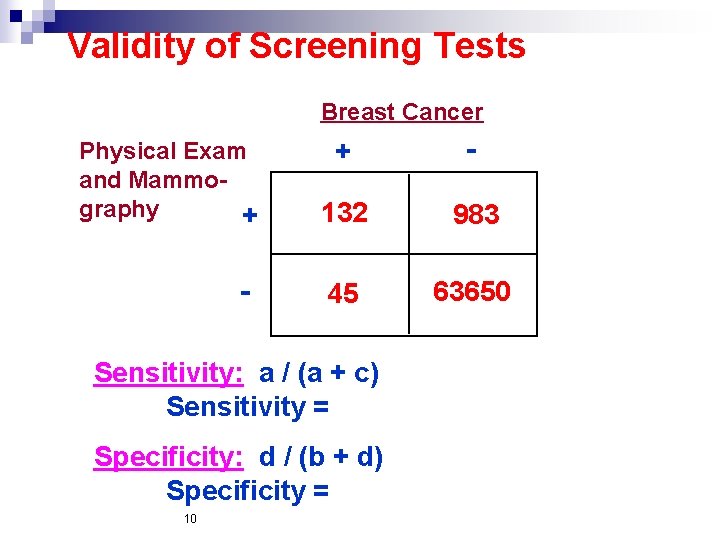

Validity of Screening Tests

| Physical exam and mammography | Breast cancer: + | Breast cancer: - |
|---|---|---|
| + | 132 | 983 |
| - | 45 | 63650 |

Sensitivity: a / (a + c)

Sensitivity = 132 / (132 + 45) = 74.6%

Specificity: d / (b + d)

Specificity = 63650 / (983 + 63650) = 98.5%
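The breast cancer screening arithmetic can be reproduced with a small sketch. The function names below are illustrative, not taken from the slides; the cell labels a, b, c, d follow the two-by-two table used in this deck.

```python
def sensitivity_specificity(a, b, c, d):
    """Return (sensitivity, specificity) from two-by-two counts.

    a = true positives, b = false positives,
    c = false negatives, d = true negatives.
    """
    sensitivity = a / (a + c)
    specificity = d / (b + d)
    return sensitivity, specificity


def error_rates(a, b, c, d):
    """Return (false positive rate, false negative rate)."""
    false_positive_rate = b / (b + d)  # equals 1 - specificity
    false_negative_rate = c / (a + c)  # equals 1 - sensitivity
    return false_positive_rate, false_negative_rate


# Counts from the physical exam and mammography table.
sens, spec = sensitivity_specificity(132, 983, 45, 63650)
fpr, fnr = error_rates(132, 983, 45, 63650)
print(f"Sensitivity = {sens:.1%}")  # 74.6%
print(f"Specificity = {spec:.1%}")  # 98.5%
```

The same two functions apply to any two-by-two table in the deck, provided the cells are entered in the a, b, c, d order shown above.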

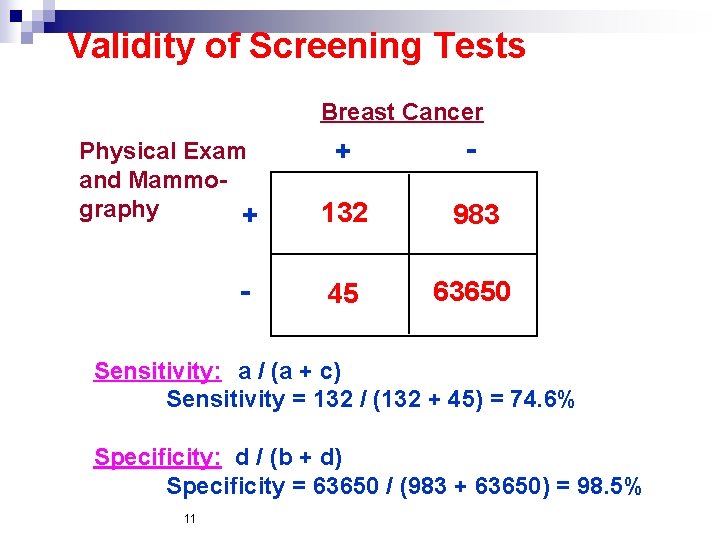

2 × 2 table (figure): disease status (+/-) against test result (+/-), with sensitivity and positive predictive value marked.

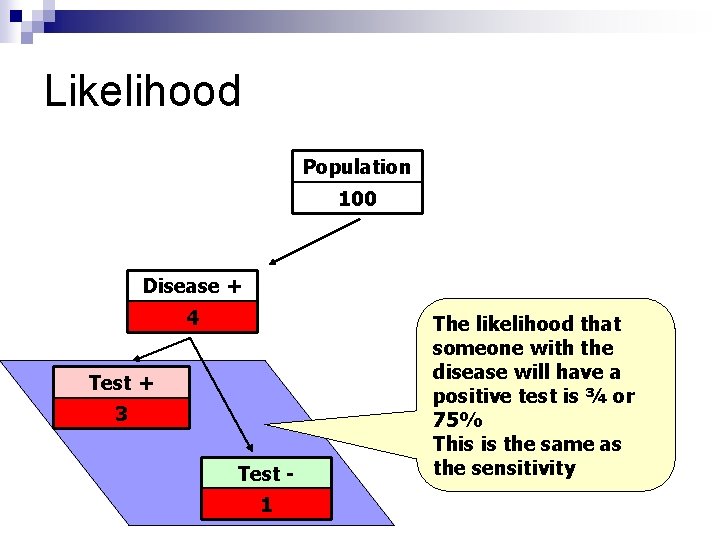

Natural Frequencies Tree Population 100

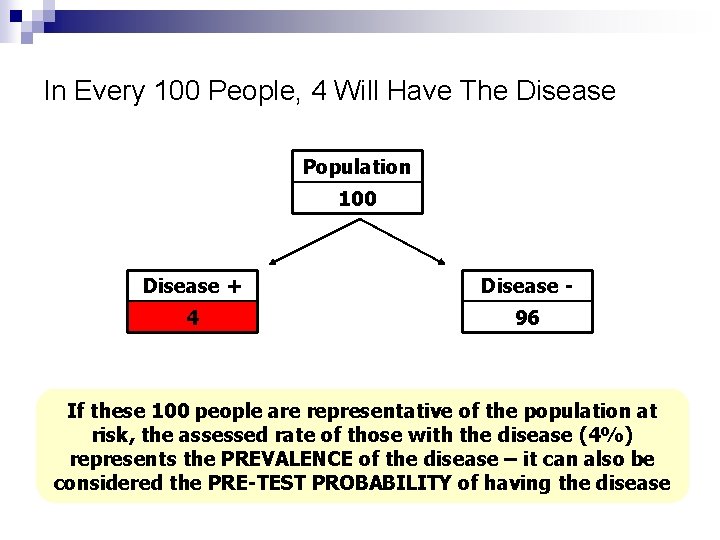

In every 100 people, 4 will have the disease

Population 100: Disease + 4; Disease - 96

If these 100 people are representative of the population at risk, the assessed rate of those with the disease (4%) represents the PREVALENCE of the disease. It can also be considered the PRE-TEST PROBABILITY of having the disease.

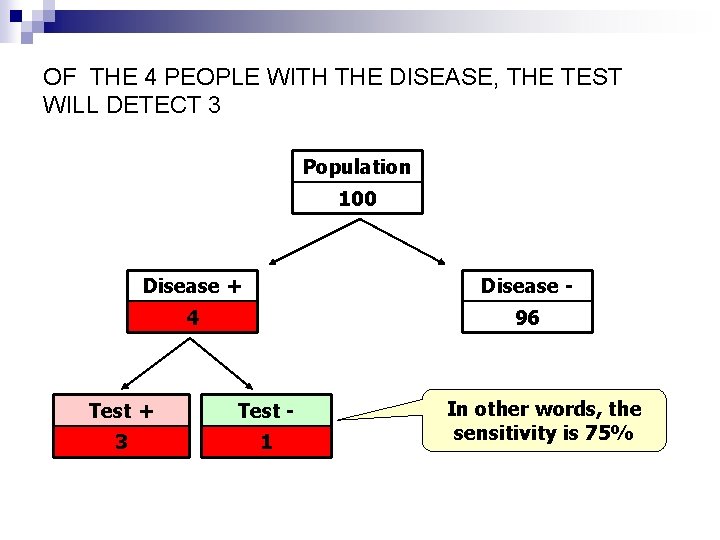

Of the 4 people with the disease, the test will detect 3

Population 100: Disease + 4 (Test + 3, Test - 1); Disease - 96

In other words, the sensitivity is 75%.

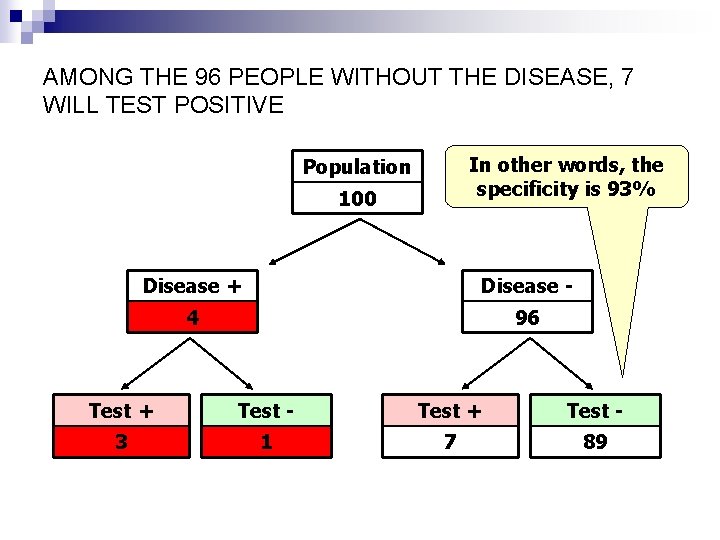

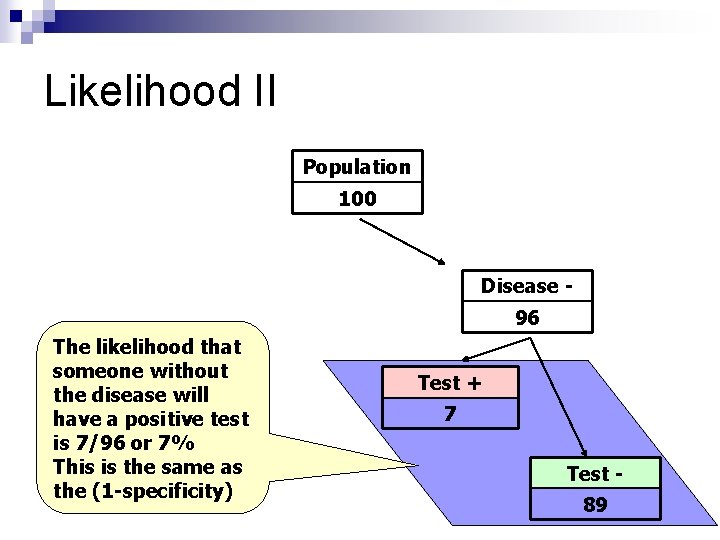

Among the 96 people without the disease, 7 will test positive

Population 100: Disease + 4 (Test + 3, Test - 1); Disease - 96 (Test + 7, Test - 89)

In other words, the specificity is 93%.

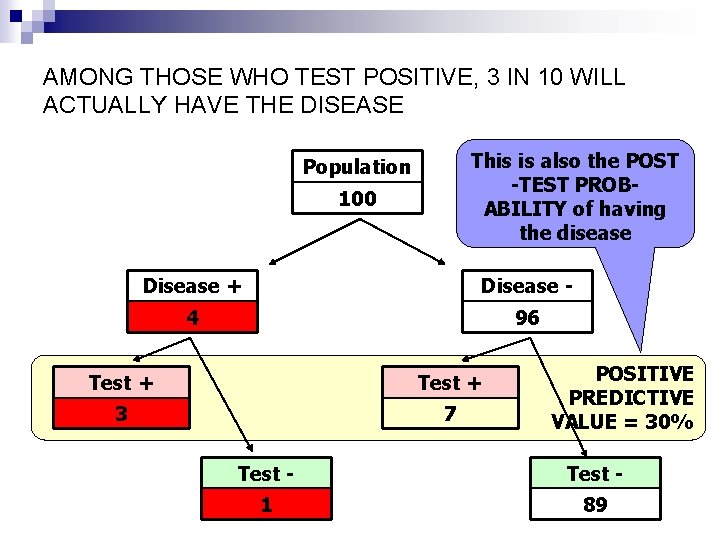

Among those who test positive, 3 in 10 will actually have the disease

| | Disease + (4) | Disease - (96) |
|---|---|---|
| Test + | 3 | 7 |
| Test - | 1 | 89 |

POSITIVE PREDICTIVE VALUE = 30%

This is also the POST-TEST PROBABILITY of having the disease.

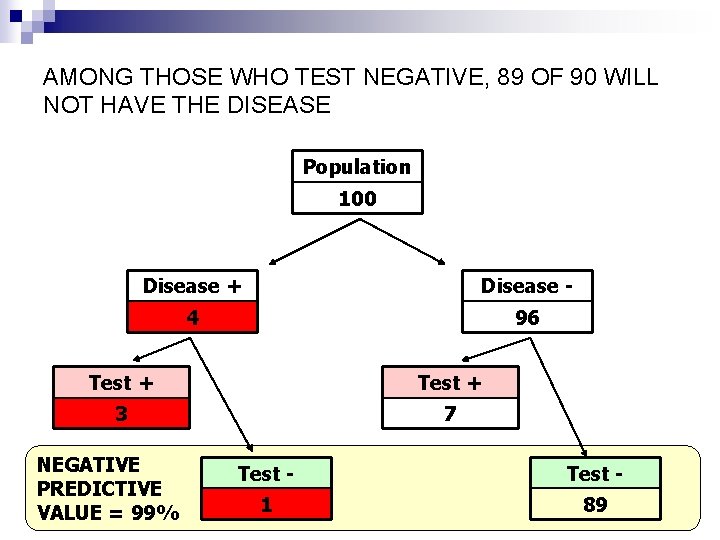

Among those who test negative, 89 of 90 will not have the disease

| | Disease + (4) | Disease - (96) |
|---|---|---|
| Test + | 3 | 7 |
| Test - | 1 | 89 |

NEGATIVE PREDICTIVE VALUE = 99%

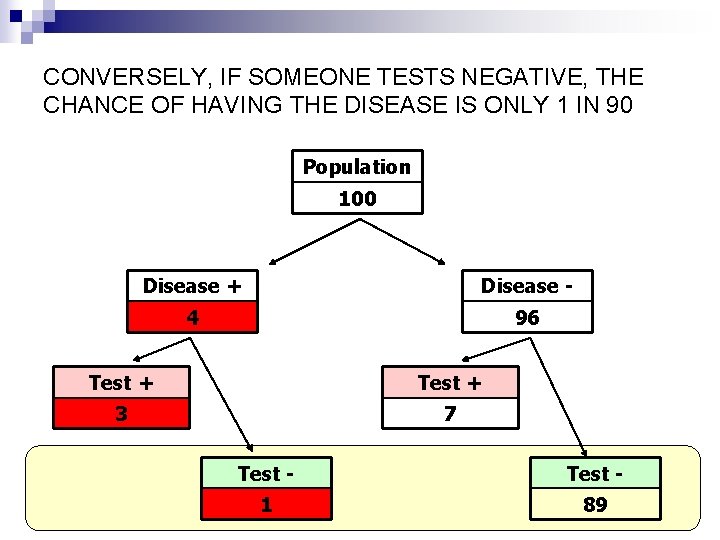

Conversely, if someone tests negative, the chance of having the disease is only 1 in 90

| | Disease + (4) | Disease - (96) |
|---|---|---|
| Test + | 3 | 7 |
| Test - | 1 | 89 |
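The natural frequencies tree can be rebuilt in a few lines. This is a minimal sketch, assuming counts are rounded to whole people as on the slides; `natural_frequency_tree` is a hypothetical helper name.

```python
def natural_frequency_tree(population, prevalence, sensitivity, specificity):
    """Split a population into the four cells of the tree, as whole people."""
    diseased = round(population * prevalence)
    healthy = population - diseased
    true_pos = round(diseased * sensitivity)
    false_neg = diseased - true_pos
    true_neg = round(healthy * specificity)
    false_pos = healthy - true_neg
    return {
        "true_pos": true_pos,
        "false_neg": false_neg,
        "false_pos": false_pos,
        "true_neg": true_neg,
        # Predictive values are read across the test-result rows.
        "ppv": true_pos / (true_pos + false_pos),
        "npv": true_neg / (true_neg + false_neg),
    }


# 100 people, 4% prevalence, 75% sensitivity, 93% specificity.
tree = natural_frequency_tree(100, 0.04, 0.75, 0.93)
print(tree["true_pos"], tree["false_pos"], tree["false_neg"], tree["true_neg"])
print(f"PPV = {tree['ppv']:.0%}, NPV = {tree['npv']:.0%}")
```

Working in whole people rather than probabilities is the point of the natural frequencies presentation: 3 of the 10 positives have the disease, and 89 of the 90 negatives do not.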

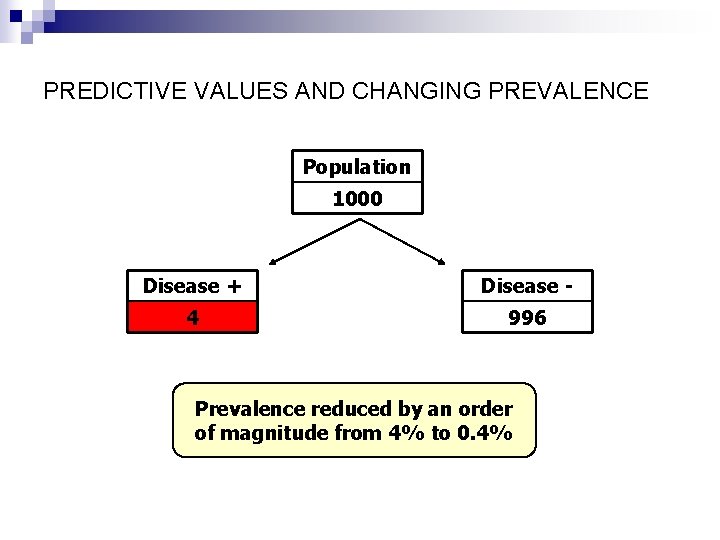

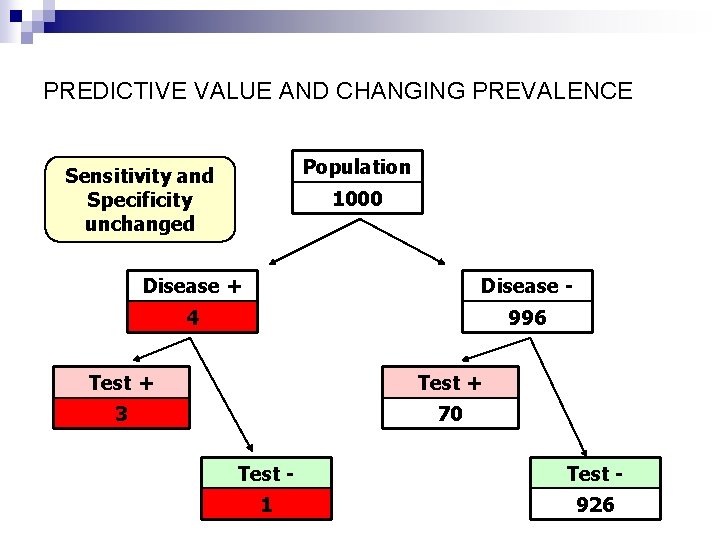

Predictive values and changing prevalence

Population 1000: Disease + 4; Disease - 996

Prevalence reduced by an order of magnitude, from 4% to 0.4%.

Predictive value and changing prevalence

Sensitivity and specificity unchanged.

Population 1000: Disease + 4 (Test + 3, Test - 1); Disease - 996 (Test + 70, Test - 926)

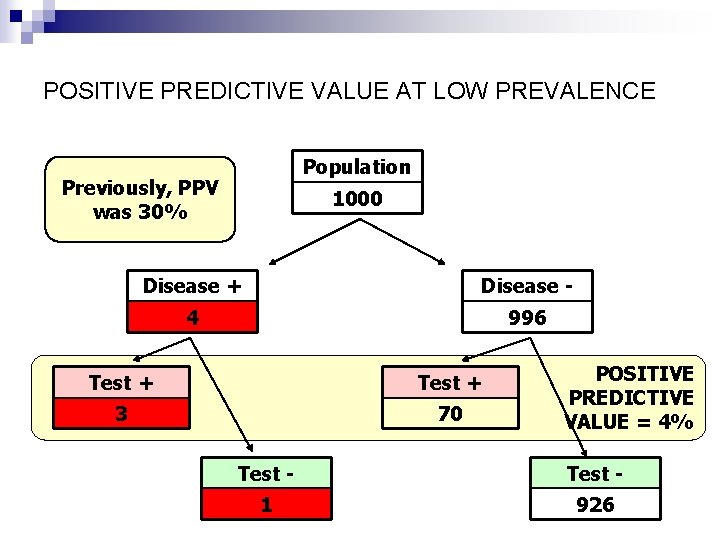

Positive predictive value at low prevalence

| | Disease + (4) | Disease - (996) |
|---|---|---|
| Test + | 3 | 70 |
| Test - | 1 | 926 |

POSITIVE PREDICTIVE VALUE = 4%

Previously, PPV was 30%.

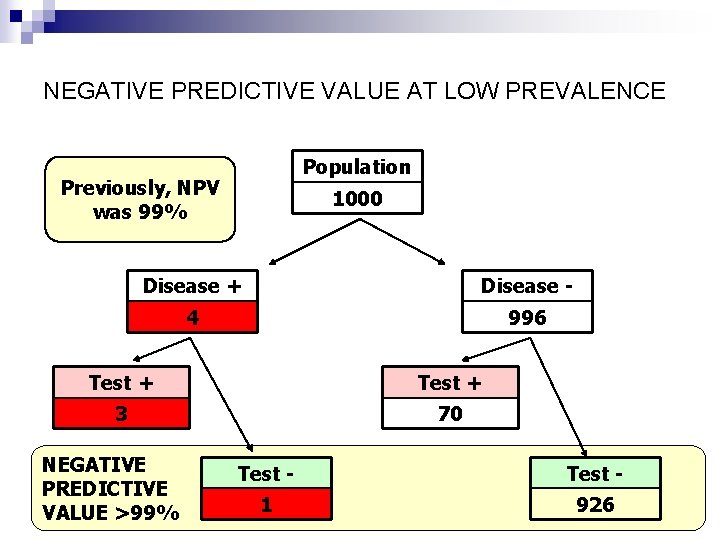

Negative predictive value at low prevalence

| | Disease + (4) | Disease - (996) |
|---|---|---|
| Test + | 3 | 70 |
| Test - | 1 | 926 |

NEGATIVE PREDICTIVE VALUE > 99%

Previously, NPV was 99%.

Prediction of Low Prevalence Events

- Even highly specific tests, when applied to low prevalence events, yield a high number of false positive results.
- Because of this, under such circumstances, the Positive Predictive Value of a test is low.
- However, this has much less influence on the Negative Predictive Value.
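To see how strongly prevalence drives the positive predictive value, the calculation can be swept over several prevalences while sensitivity and specificity stay fixed. This is a sketch under the assumption that sensitivity and specificity really are constant across settings; the function name and the third prevalence (40%) are illustrative.

```python
def predictive_values(prevalence, sensitivity, specificity):
    """Return (PPV, NPV) for a given prevalence, sensitivity and specificity."""
    true_pos = prevalence * sensitivity
    false_pos = (1 - prevalence) * (1 - specificity)
    false_neg = prevalence * (1 - sensitivity)
    true_neg = (1 - prevalence) * specificity
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Same test (75% sensitivity, 93% specificity) at three prevalences.
for prevalence in (0.004, 0.04, 0.4):
    ppv, npv = predictive_values(prevalence, 0.75, 0.93)
    print(f"prevalence {prevalence:>5.1%}: PPV {ppv:.1%}, NPV {npv:.1%}")
```

Because this version uses exact proportions rather than whole people, its PPV at 4% prevalence comes out slightly above the 30% obtained from the rounded counts in the tree.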

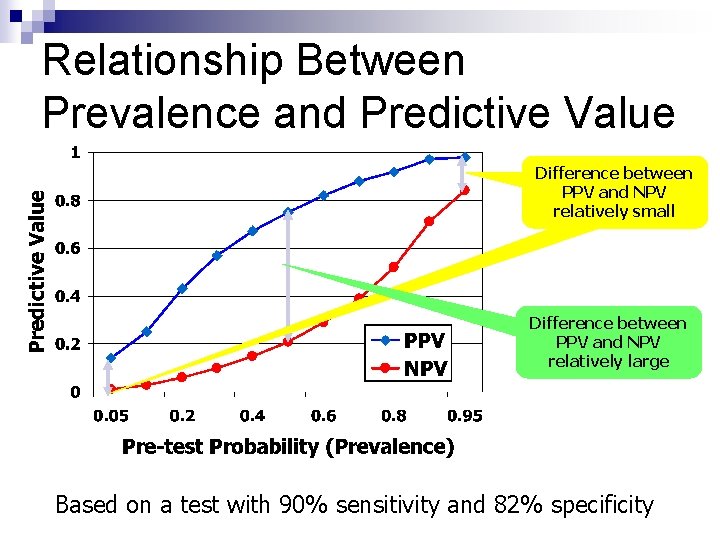

Relationship Between Prevalence and Predictive Value (figure): in one part of the prevalence range the difference between PPV and NPV is relatively small, in the other it is relatively large. Based on a test with 90% sensitivity and 82% specificity.

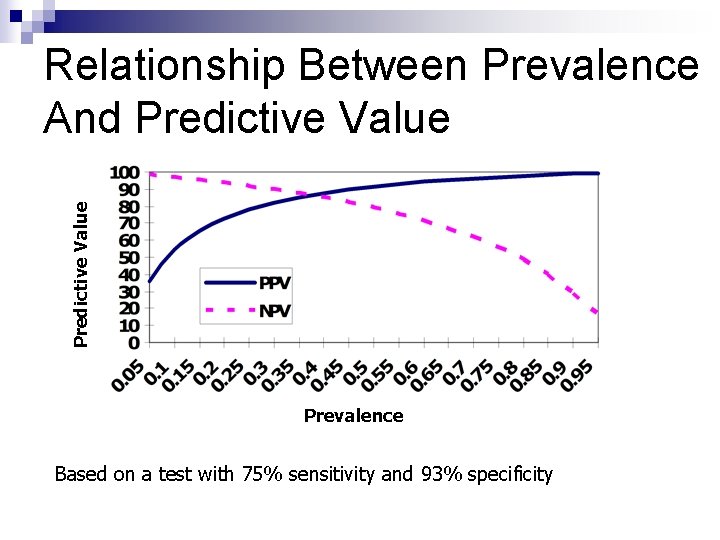

Relationship Between Prevalence and Predictive Value (figure): predictive value plotted against prevalence. Based on a test with 75% sensitivity and 93% specificity.

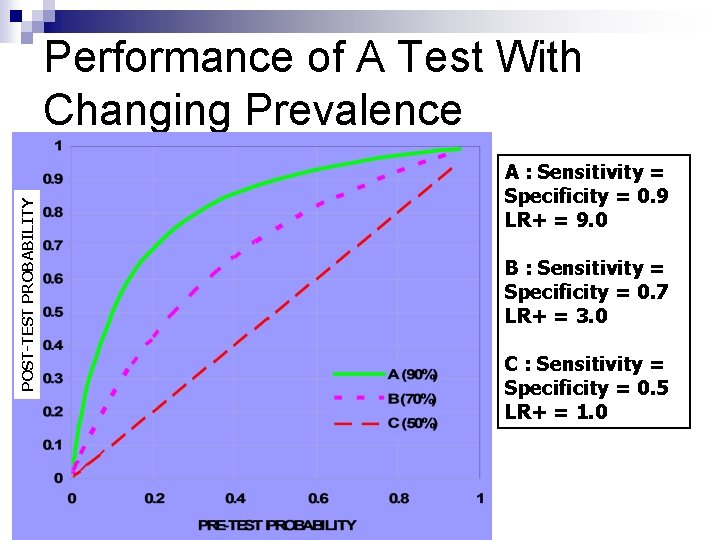

Performance of a Test With Changing Prevalence (figure): POST-TEST PROBABILITY for three tests.

- A: Sensitivity = Specificity = 0.9, LR+ = 9.0
- B: Sensitivity = Specificity = 0.7, LR+ ≈ 2.3
- C: Sensitivity = Specificity = 0.5, LR+ = 1.0

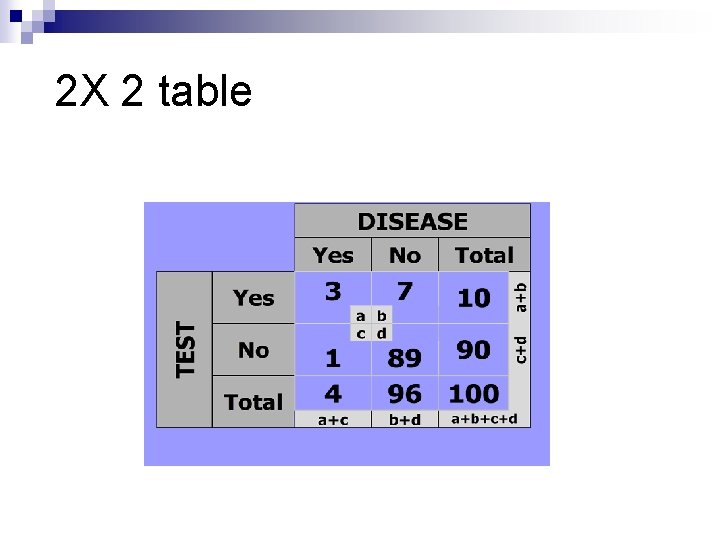

2 X 2 table

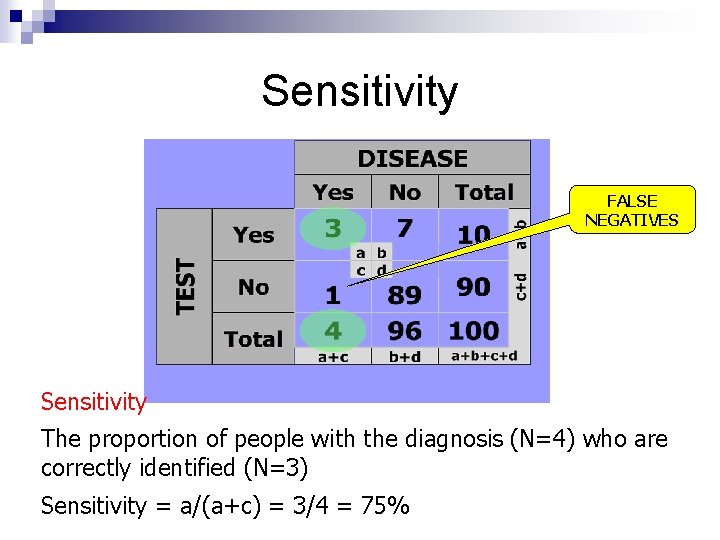

Sensitivity

The proportion of people with the diagnosis (N = 4) who are correctly identified (N = 3). Those who are missed are the FALSE NEGATIVES.

Sensitivity = a / (a + c) = 3/4 = 75%

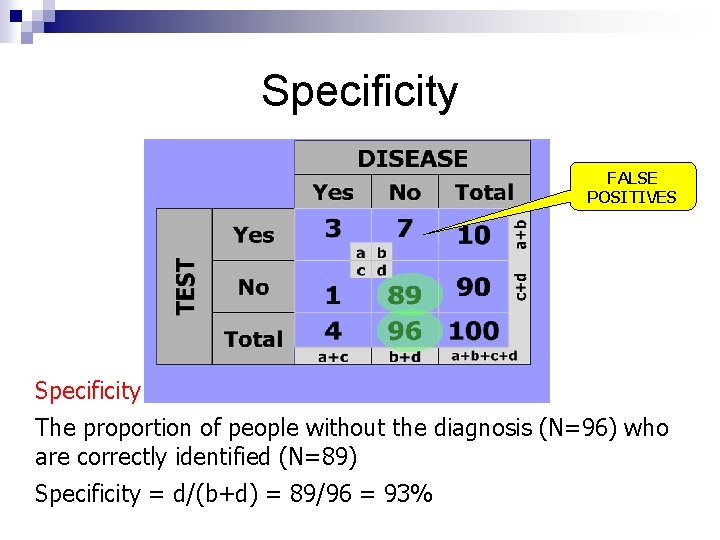

Specificity

The proportion of people without the diagnosis (N = 96) who are correctly identified (N = 89). Those who are wrongly flagged are the FALSE POSITIVES.

Specificity = d / (b + d) = 89/96 = 93%

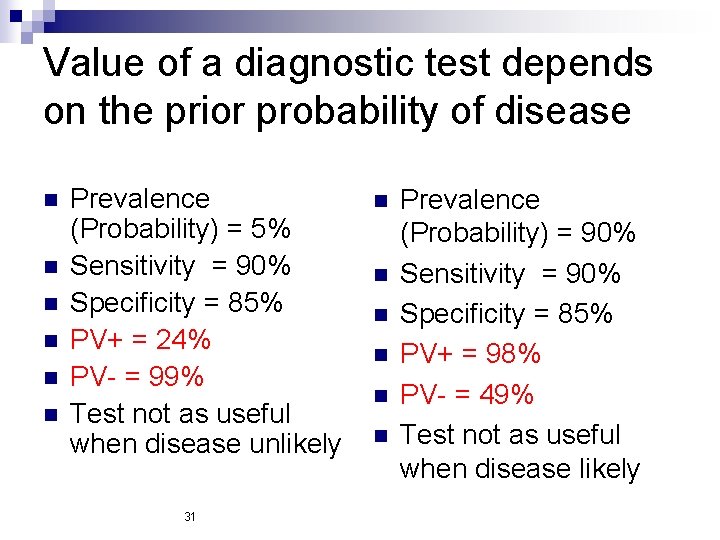

Value of a diagnostic test depends on the prior probability of disease

| | Disease unlikely | Disease likely |
|---|---|---|
| Prevalence (probability) | 5% | 90% |
| Sensitivity | 90% | 90% |
| Specificity | 85% | 85% |
| PV+ | 24% | 98% |
| PV- | 99% | 49% |

- Test not as useful when disease unlikely
- Test not as useful when disease likely

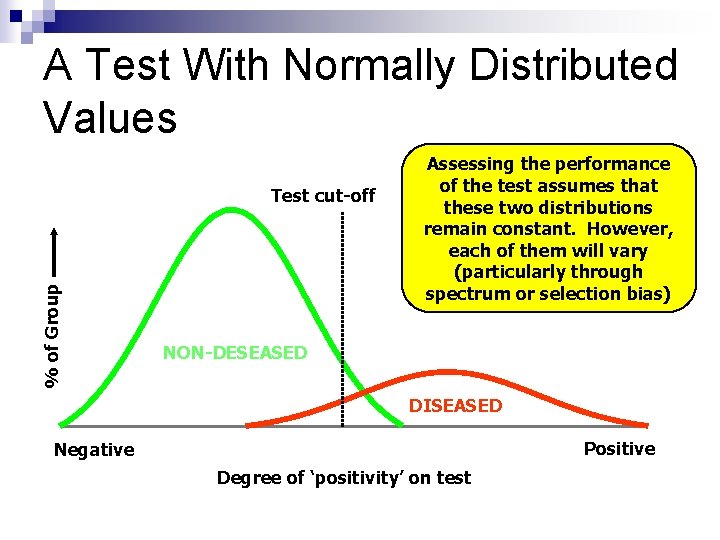

A Test With Normally Distributed Values

Figure: two overlapping distributions, NON-DISEASED and DISEASED, plotted as % of group against degree of 'positivity' on the test, with a test cut-off separating negative from positive results.

Assessing the performance of the test assumes that these two distributions remain constant. However, each of them will vary (particularly through spectrum or selection bias).

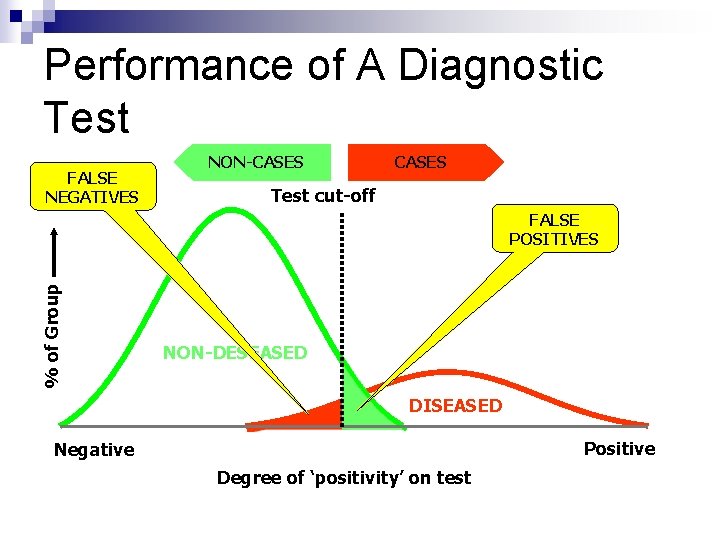

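Performance of a Diagnostic Test

Figure: two overlapping distributions, NON-DISEASED and DISEASED, plotted as % of group against degree of 'positivity' on the test, with the test cut-off marked. Diseased cases on the negative side of the cut-off are FALSE NEGATIVES; non-diseased cases on the positive side are FALSE POSITIVES.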

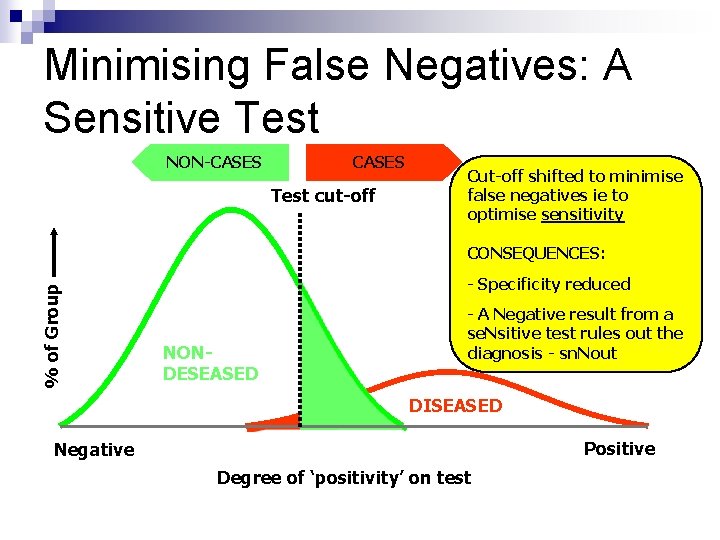

Minimising False Negatives: A Sensitive Test

Cut-off shifted to minimise false negatives, i.e. to optimise sensitivity.

CONSEQUENCES:

- Specificity reduced
- A Negative result from a seNsitive test rules out the diagnosis: SnNout

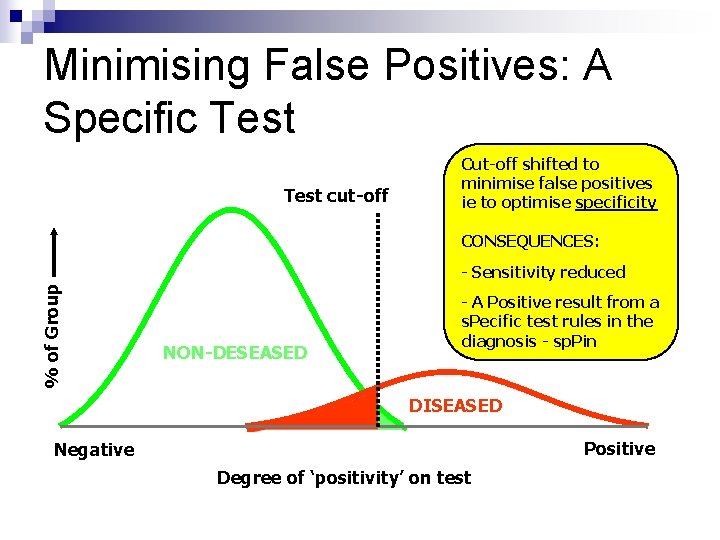

Minimising False Positives: A Specific Test

Cut-off shifted to minimise false positives, i.e. to optimise specificity.

CONSEQUENCES:

- Sensitivity reduced
- A Positive result from a sPecific test rules in the diagnosis: SpPin
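The trade-off produced by moving the cut-off can be illustrated with two normal distributions. The means and standard deviations below are invented for illustration only, and results above the cut-off are assumed to count as positive.

```python
from statistics import NormalDist

# Hypothetical test-value distributions (not taken from the slides).
non_diseased = NormalDist(mu=50, sigma=10)
diseased = NormalDist(mu=70, sigma=10)


def performance_at_cutoff(cutoff):
    """Return (sensitivity, specificity) when values above `cutoff` are positive."""
    sensitivity = 1 - diseased.cdf(cutoff)   # diseased cases above the cut-off
    specificity = non_diseased.cdf(cutoff)   # non-diseased cases below it
    return sensitivity, specificity


# Lower cut-off: more sensitive, less specific. Higher cut-off: the reverse.
for cutoff in (50, 60, 70):
    sens, spec = performance_at_cutoff(cutoff)
    print(f"cut-off {cutoff}: sensitivity {sens:.1%}, specificity {spec:.1%}")
```

Shifting the cut-off never improves both measures at once for fixed distributions, which is why the choice depends on whether a false negative or a false positive is the costlier error.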

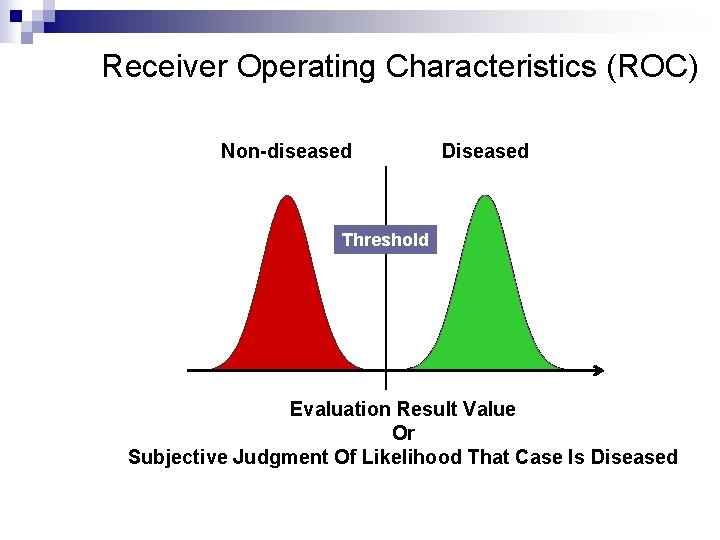

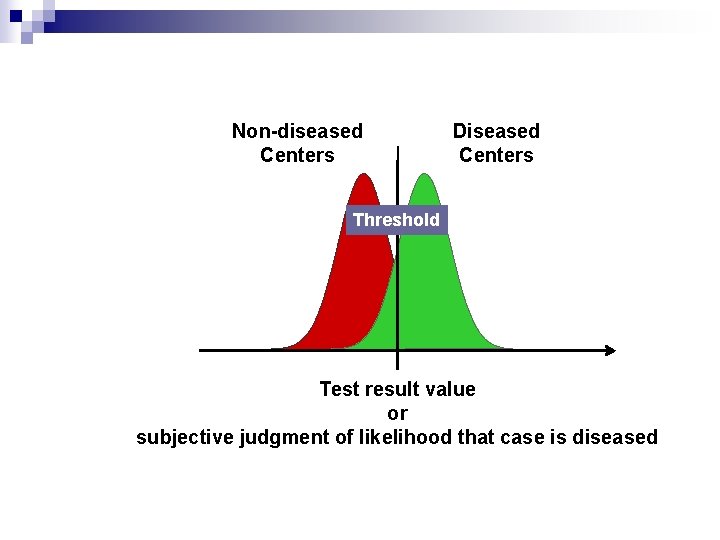

Receiver Operating Characteristics (ROC)

Figure: distributions of non-diseased and diseased cases separated by a threshold. Horizontal axis: test result value, or subjective judgment of likelihood that the case is diseased.

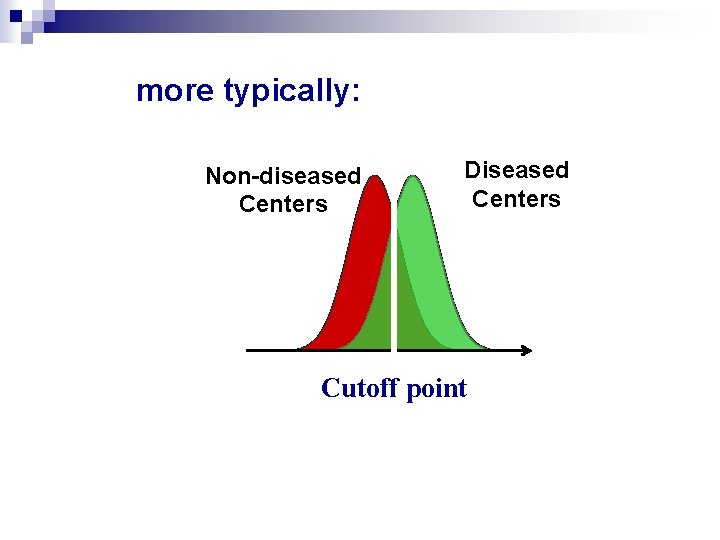

More typically (figure): overlapping distributions of non-diseased and diseased cases, with the cutoff point marked.

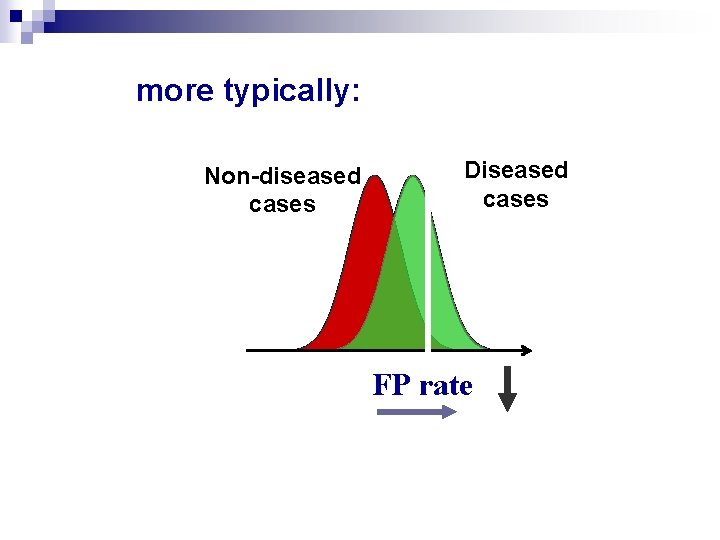

More typically (figure): overlapping distributions of non-diseased and diseased cases, with the FP rate marked.

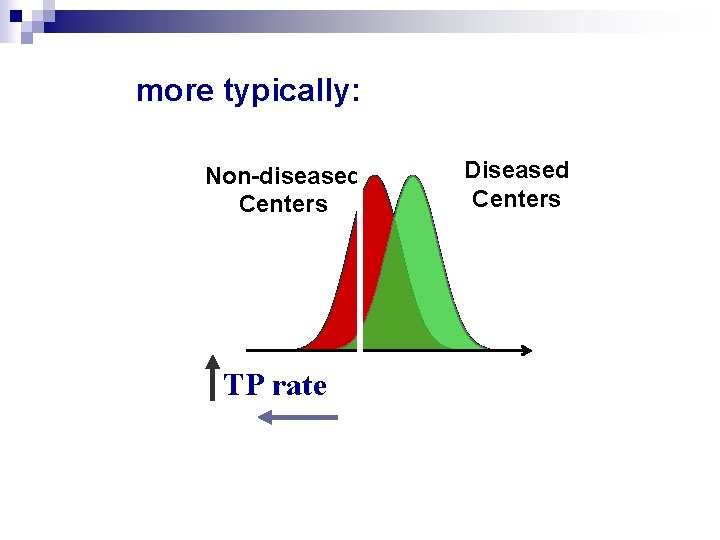

More typically (figure): overlapping distributions of non-diseased and diseased cases, with the TP rate marked.

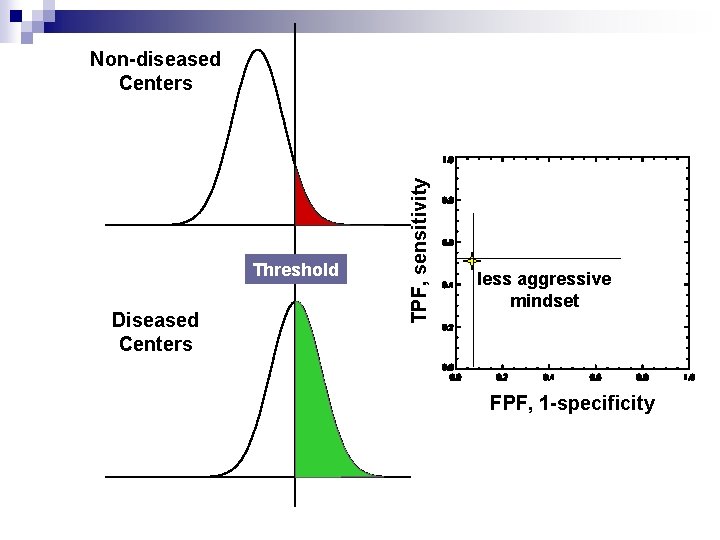

Figure: threshold for a less aggressive mindset, shown on the non-diseased and diseased distributions and as one point on the ROC plot. Axes: TPF (sensitivity) against FPF (1 - specificity).

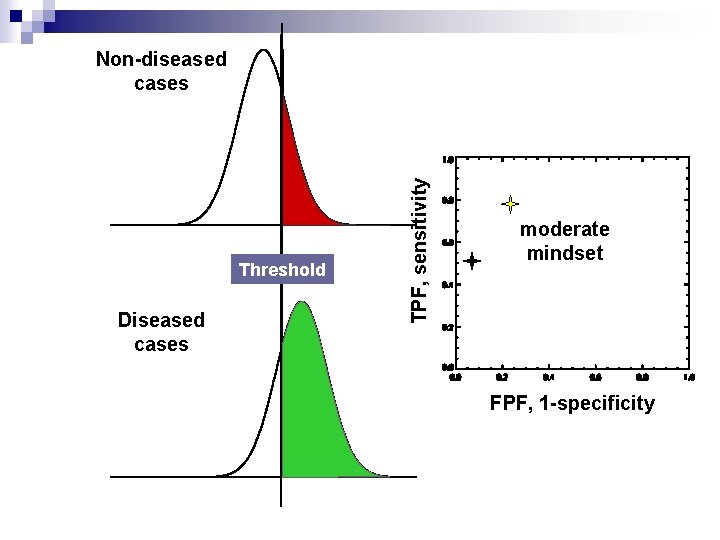

Figure: threshold for a moderate mindset, shown on the non-diseased and diseased distributions and as one point on the ROC plot. Axes: TPF (sensitivity) against FPF (1 - specificity).

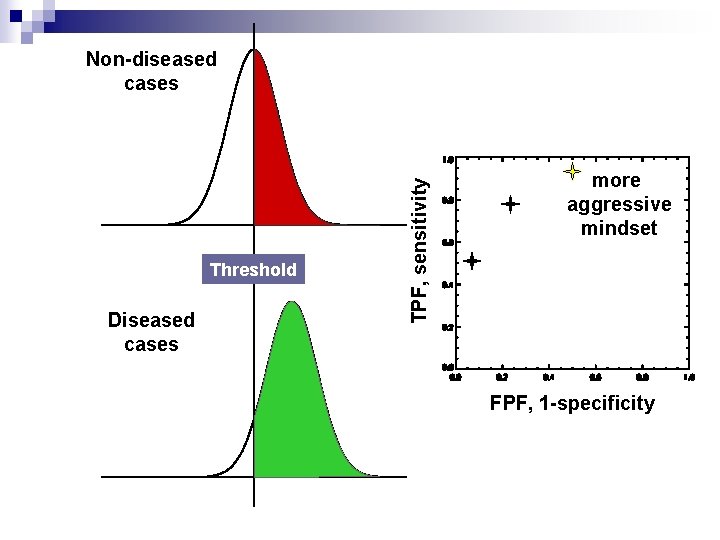

Figure: threshold for a more aggressive mindset, shown on the non-diseased and diseased distributions and as one point on the ROC plot. Axes: TPF (sensitivity) against FPF (1 - specificity).

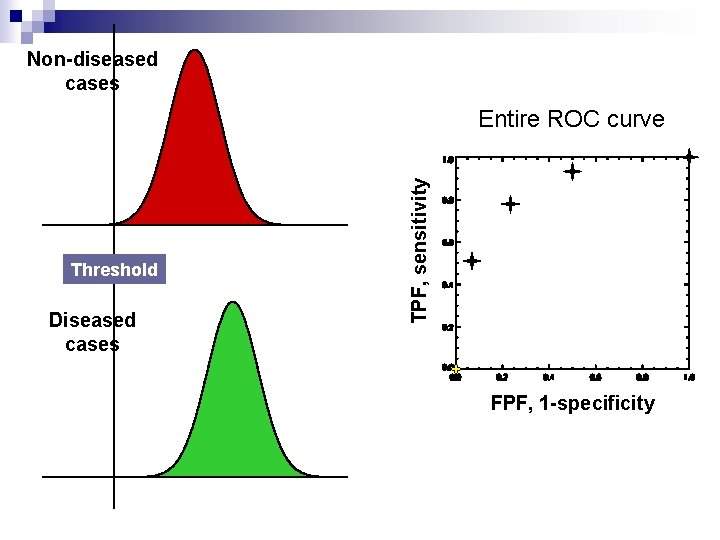

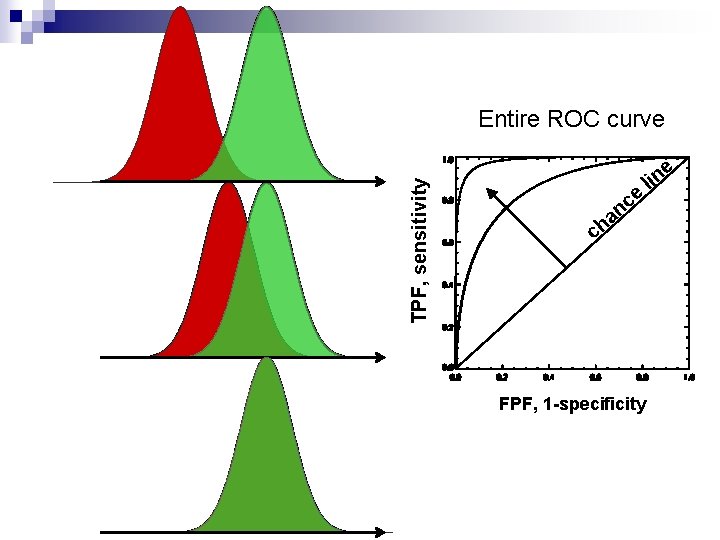

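Figure: sweeping the threshold across the non-diseased and diseased distributions traces the entire ROC curve. Axes: TPF (sensitivity) against FPF (1 - specificity).

Figure: the entire ROC curve with the chance line. Axes: TPF (sensitivity) against FPF (1 - specificity).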
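An ROC curve can be traced by sweeping the threshold over every observed test value and recording the (FPF, TPF) pair at each step. The sketch below assumes higher scores mean "more likely diseased" and uses made-up scores; `roc_points` and `area_under_curve` are hypothetical helper names, and the area is a simple trapezoid estimate.

```python
def roc_points(scores_diseased, scores_non_diseased):
    """Return (FPF, TPF) points, from the strictest threshold to the laxest."""
    thresholds = sorted(set(scores_diseased) | set(scores_non_diseased), reverse=True)
    points = [(0.0, 0.0)]  # threshold above every score: nobody tests positive
    for threshold in thresholds:
        tpf = sum(s >= threshold for s in scores_diseased) / len(scores_diseased)
        fpf = sum(s >= threshold for s in scores_non_diseased) / len(scores_non_diseased)
        points.append((fpf, tpf))
    return points


def area_under_curve(points):
    """Trapezoid-rule area under the ROC points."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Illustrative scores only.
diseased_scores = [6, 7, 8, 9]
non_diseased_scores = [3, 4, 5, 7]
curve = roc_points(diseased_scores, non_diseased_scores)
print(curve)
print(f"AUC = {area_under_curve(curve):.3f}")
```

An area of 0.5 corresponds to the chance line, and an area of 1.0 to a test whose two distributions do not overlap at all.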

Check this out: http://www.anaesthetist.com/mnm/stats/roc/Findex.htm

Likelihood Ratios

Pre-test & post-test probability

- The pre-test probability of disease can be compared with the estimated post-test probability of disease, using the information provided by a diagnostic test.
- The difference between the pre-test probability and the post-test probability is an effective way to analyze the efficiency of a diagnostic method.

- The likelihood ratio tells you how much a positive or negative result changes the likelihood that a patient has the disease.
- The likelihood ratio incorporates both the sensitivity and specificity of the test and provides a direct estimate of how much a test result will change the odds of having a disease.

- The likelihood ratio for a positive result (LR+) tells you how much the odds of the disease increase when a test is positive.
- The likelihood ratio for a negative result (LR-) tells you how much the odds of the disease decrease when a test is negative.

Positive & Negative Likelihood Ratios

- We can judge diagnostic tests by their positive and negative likelihood ratios.
- Like sensitivity and specificity, they are independent of disease prevalence.

Likelihood Ratios (Odds)

- The probability of a test result in those with the disease divided by the probability of the result in those without the disease.
- How many times more (or less) likely a test result is to be found in the diseased compared with the non-diseased.

Positive Likelihood Ratios

- This ratio divides the probability that a diseased patient will test positive by the probability that a healthy patient will test positive.
- The positive likelihood ratio LR+ = sensitivity / (1 - specificity)

False Positive Rate

- The false positive rate = false positives / (false positives + true negatives). It is also equal to 1 - specificity.
- The false negative rate = false negatives / (false negatives + true positives). It is also equal to 1 - sensitivity.

Positive Likelihood Ratios

- It can also be written as the true positive rate / false positive rate.
- Thus, the higher the positive likelihood ratio, the better the test (a perfect test has a positive likelihood ratio equal to infinity).

Negative Likelihood Ratio

- This ratio divides the probability that a diseased patient will test negative by the probability that a healthy patient will test negative.
- The negative likelihood ratio LR- = (1 - sensitivity) / specificity

False Negative Rate

- The false negative rate = false negatives / (false negatives + true positives).
- It is also equal to 1 - sensitivity.

Negative Likelihood Ratio

- It can also be written as the false negative rate / true negative rate.
- Therefore, the lower the negative likelihood ratio, the better the test (a perfect test has a negative likelihood ratio of zero).
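Both ratios follow directly from sensitivity and specificity, as this short sketch shows. The function name is illustrative, and returning infinity when the denominator is zero is a convention chosen here to match the "perfect test" remarks.

```python
import math


def likelihood_ratios(sensitivity, specificity):
    """Return (LR+, LR-) from sensitivity and specificity given as fractions."""
    # LR+ = true positive rate / false positive rate
    lr_pos = math.inf if specificity == 1 else sensitivity / (1 - specificity)
    # LR- = false negative rate / true negative rate
    lr_neg = math.inf if specificity == 0 else (1 - sensitivity) / specificity
    return lr_pos, lr_neg


# The test from the natural frequencies tree: 75% sensitive, 93% specific.
lr_pos, lr_neg = likelihood_ratios(0.75, 0.93)
print(f"LR+ = {lr_pos:.1f}, LR- = {lr_neg:.2f}")

# A perfect test: LR+ is infinite and LR- is zero.
print(likelihood_ratios(1, 1))
```

A test with sensitivity and specificity both at 50% returns 1.0 for both ratios, meaning the result leaves the odds of disease unchanged.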

Positive & Negative Likelihood Ratios

- Although likelihood ratios are independent of disease prevalence, their direct validity is only within the original study population.

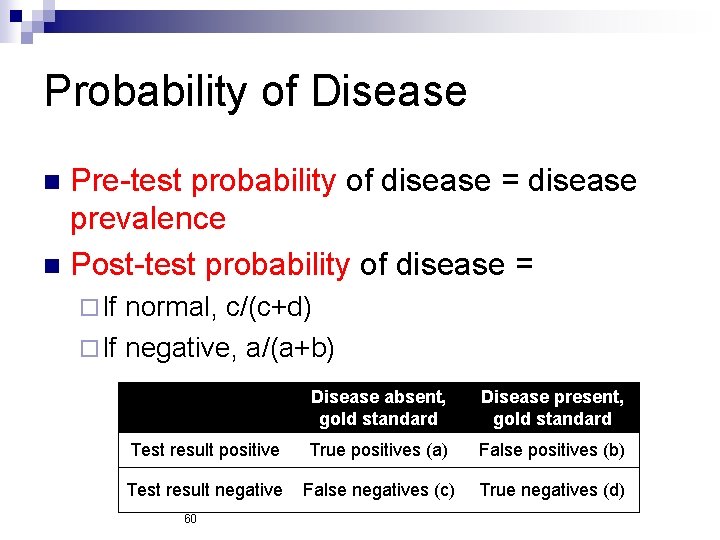

Probability of Disease

- Pre-test probability of disease = disease prevalence
- Post-test probability of disease:
  - If the test is positive, a / (a + b)
  - If the test is negative, c / (c + d)

| | Disease present, gold standard | Disease absent, gold standard |
|---|---|---|
| Test result positive | True positives (a) | False positives (b) |
| Test result negative | False negatives (c) | True negatives (d) |

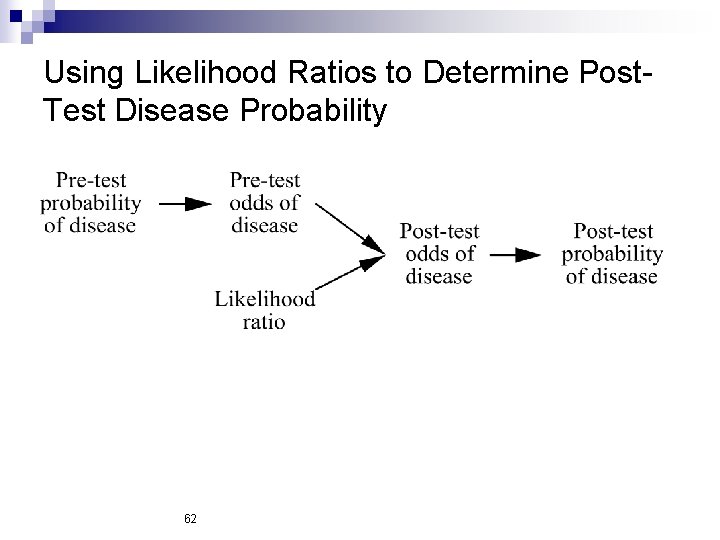

Bayes' Theorem

Post-test odds = likelihood ratio × pre-test odds
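The odds form of Bayes' theorem needs one conversion on the way in (probability to odds) and one on the way out (odds to probability). A minimal sketch, with illustrative function names:

```python
def probability_to_odds(probability):
    return probability / (1 - probability)


def odds_to_probability(odds):
    return odds / (1 + odds)


def post_test_probability(pre_test_probability, likelihood_ratio):
    """Post-test odds = likelihood ratio x pre-test odds, returned as a probability."""
    post_test_odds = likelihood_ratio * probability_to_odds(pre_test_probability)
    return odds_to_probability(post_test_odds)


# Pre-test probability 4%, test with 75% sensitivity and 93% specificity.
lr_positive = 0.75 / (1 - 0.93)
print(f"{post_test_probability(0.04, lr_positive):.1%}")
```

With these inputs the result lands close to the 30% positive predictive value read off the natural frequencies tree, which is the same quantity computed from counts.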

Using Likelihood Ratios to Determine Post-Test Disease Probability

Pre-test & post-test probability

- "Post-test probability" depends on the accuracy of the diagnostic test and the pre-test probability of disease.
- A test result cannot be interpreted without some knowledge of the pre-test probability.

Where does "pre-test probability" come from?

- Clinical experience
- Epidemiological data
- "Clinical decision rules"
- Guess

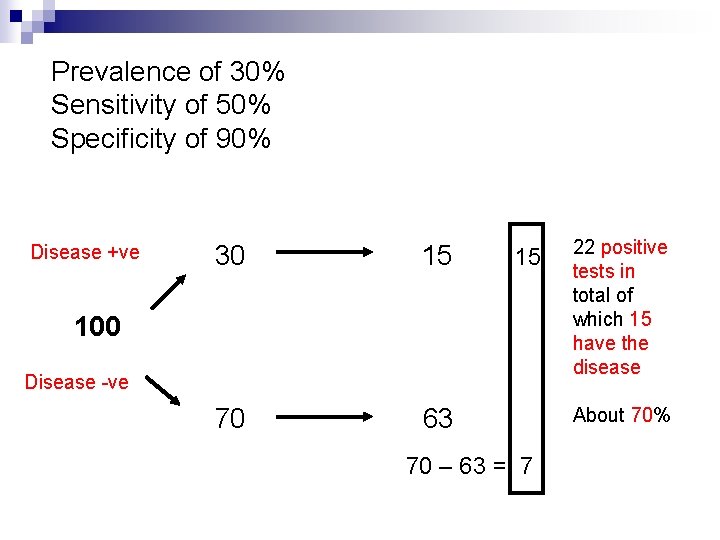

What is the likelihood that this patient has the disease?

- A disease with a prevalence of 30% must be diagnosed.
- There is a test for this disease.
- It has a sensitivity of 50% and a specificity of 90%.

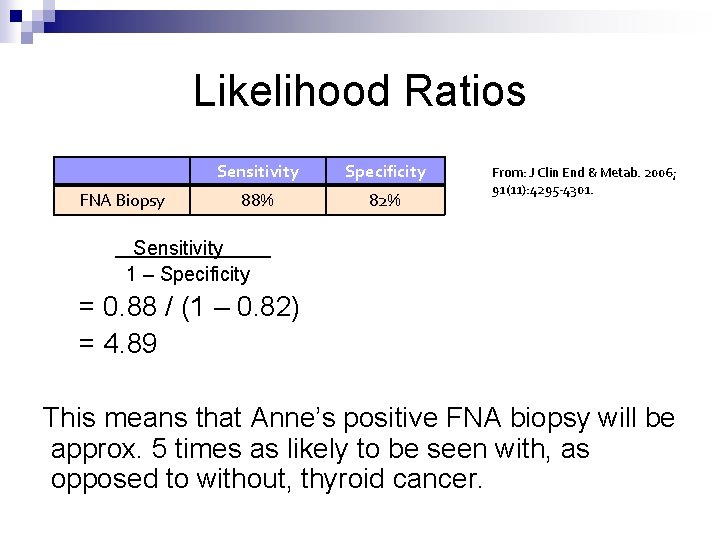

Likelihood Ratios

FNA biopsy: sensitivity 88%, specificity 82%. From: J Clin End & Metab. 2006; 91(11): 4295-4301.

LR+ = sensitivity / (1 - specificity) = 0.88 / (1 - 0.82) = 4.89

This means that Anne's positive FNA biopsy will be approx. 5 times as likely to be seen with, as opposed to without, thyroid cancer.

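Prevalence of 30%, sensitivity of 50%, specificity of 90%

- Of 100 people, 30 have the disease, and 15 of them test positive.
- Of the 70 without the disease, 63 test negative, so 70 - 63 = 7 test positive.
- There are 22 positive tests in total, of which 15 have the disease: about 70% (15/22 ≈ 68%).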

Likelihood

Population 100: Disease + 4 (Test + 3, Test - 1)

The likelihood that someone with the disease will have a positive test is 3/4, or 75%. This is the same as the sensitivity.

Likelihood II

Population 100: Disease - 96 (Test + 7, Test - 89)

The likelihood that someone without the disease will have a positive test is 7/96, or 7%. This is the same as (1 - specificity).

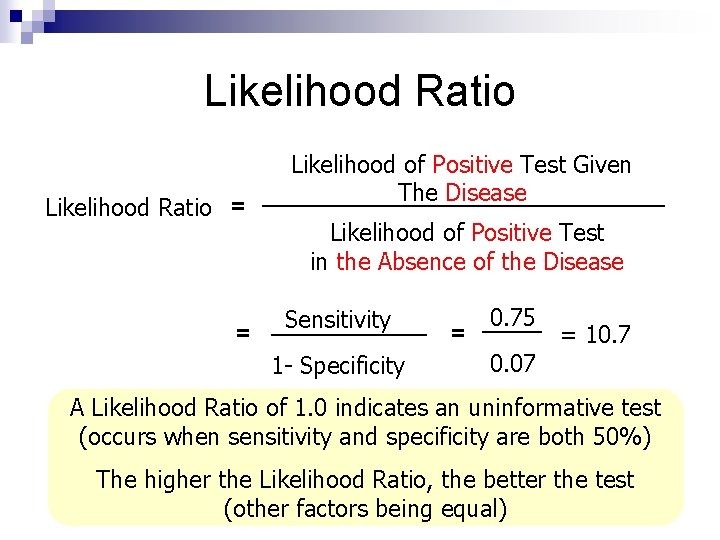

$$\text{Likelihood ratio} = \frac{\text{likelihood of a positive test given the disease}}{\text{likelihood of a positive test in the absence of the disease}} = \frac{\text{sensitivity}}{1 - \text{specificity}} = \frac{0.75}{0.07} \approx 10.7$$

(With the unrounded 7/96 in the denominator, the ratio is about 10.3.)

- A likelihood ratio of 1.0 indicates an uninformative test (this occurs, for example, when sensitivity and specificity are both 50%).
- The higher the likelihood ratio, the better the test (other factors being equal).

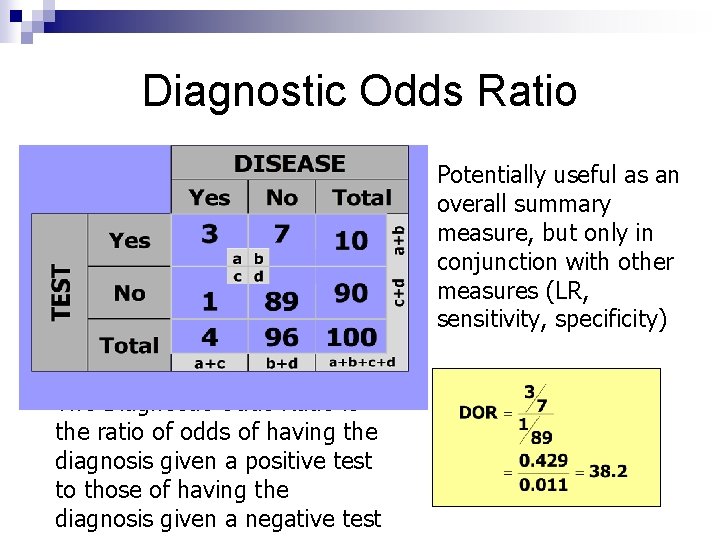

Diagnostic Odds Ratio

- Potentially useful as an overall summary measure, but only in conjunction with other measures (LR, sensitivity, specificity).
- The Diagnostic Odds Ratio is the ratio of the odds of having the diagnosis given a positive test to the odds of having the diagnosis given a negative test.
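In terms of the two-by-two cells, the definition above works out to (a/b) / (c/d) = ad / bc, which is also LR+ divided by LR-. The sketch below assumes no cell is zero and uses an illustrative function name.

```python
def diagnostic_odds_ratio(a, b, c, d):
    """Odds of disease given a positive test (a/b) over odds given a negative test (c/d).

    a = true positives, b = false positives,
    c = false negatives, d = true negatives. Assumes b and c are non-zero.
    """
    return (a * d) / (b * c)


# Counts from the natural frequencies tree: 3, 7, 1, 89.
dor = diagnostic_odds_ratio(3, 7, 1, 89)
print(f"DOR = {dor:.1f}")

# The same value obtained as LR+ / LR- from those counts.
lr_pos = (3 / 4) / (7 / 96)
lr_neg = (1 / 4) / (89 / 96)
print(f"LR+ / LR- = {lr_pos / lr_neg:.1f}")
```

A single number like this hides whether the test is better at ruling in or ruling out, which is why the slide recommends reading it alongside the other measures.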

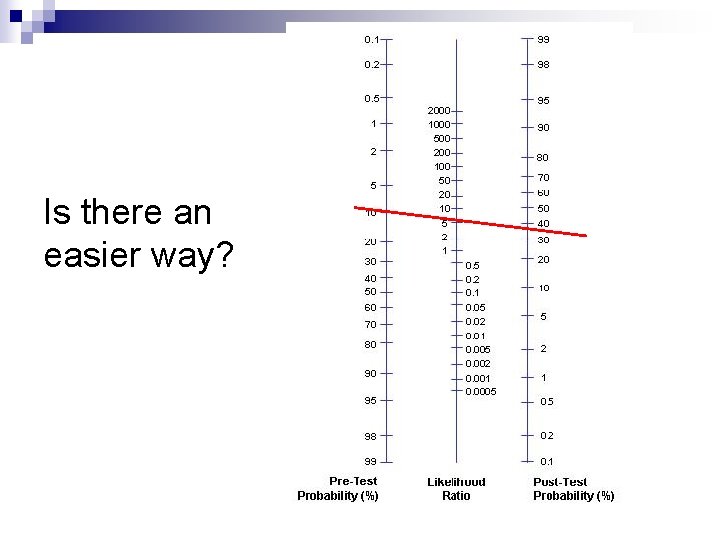

Is there an easier way?

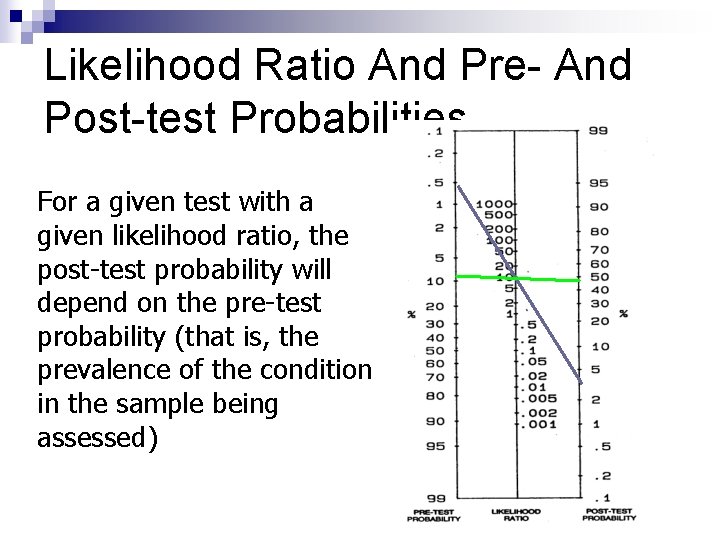

Likelihood Ratio and Pre- and Post-test Probabilities

For a given test with a given likelihood ratio, the post-test probability will depend on the pre-test probability (that is, the prevalence of the condition in the sample being assessed).

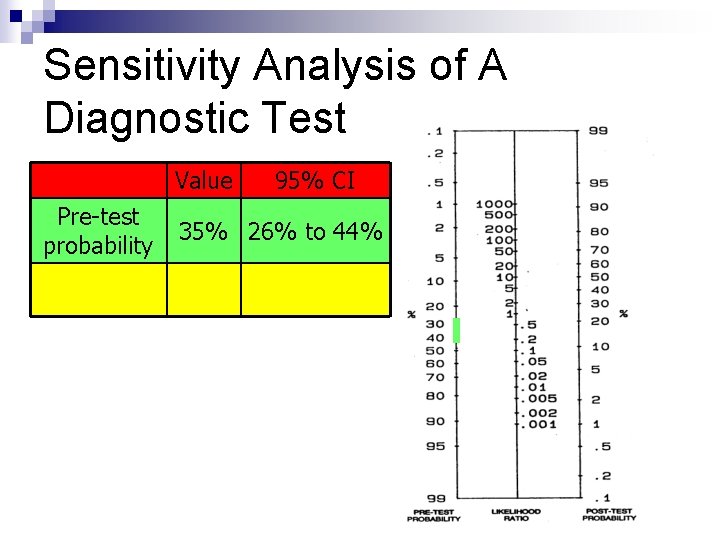


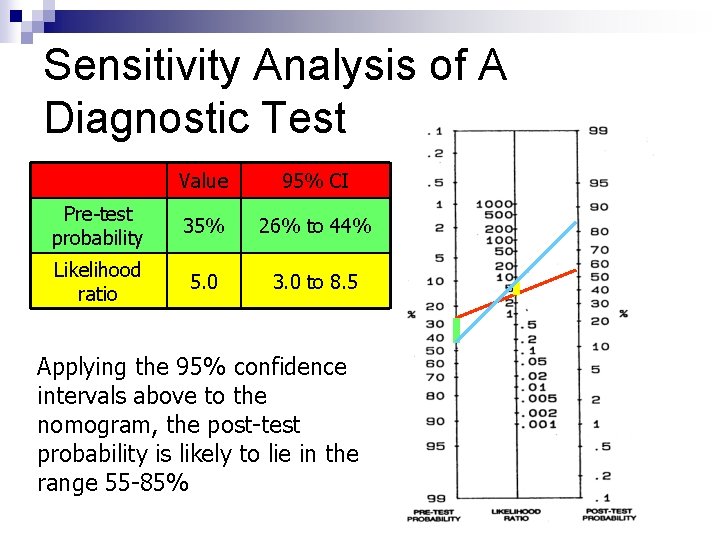

Sensitivity Analysis of a Diagnostic Test

| | Value | 95% CI |
|---|---|---|
| Pre-test probability | 35% | 26% to 44% |
| Likelihood ratio | 5.0 | 3.0 to 8.5 |

Applying the 95% confidence intervals above to the nomogram, the post-test probability is likely to lie in the range 55-85%.
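The nomogram reading can be cross-checked numerically by pushing the ends of both confidence intervals through the odds form of Bayes' theorem. This is a rough sketch that simply pairs the two lower bounds and the two upper bounds; it is not a formal interval calculation, and the function name is illustrative.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Convert to odds, multiply by the likelihood ratio, convert back."""
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = likelihood_ratio * pre_test_odds
    return post_test_odds / (1 + post_test_odds)


# Point estimates and 95% CI bounds from the table above.
point = post_test_probability(0.35, 5.0)
lowest = post_test_probability(0.26, 3.0)   # both lower bounds
highest = post_test_probability(0.44, 8.5)  # both upper bounds

print(f"point estimate: {point:.0%}")
print(f"range: {lowest:.0%} to {highest:.0%}")
```

Pairing the extremes gives a range of roughly 51% to 87%, in the same neighbourhood as the 55-85% read from the nomogram.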

Applying a Diagnostic Test in Different Settings

- The Positive Predictive Value of a test will vary (according to the prevalence of the condition in the chosen setting).
- Sensitivity and Specificity are usually considered properties of the test rather than the setting, and are therefore usually considered to remain constant.
- However, sensitivity and specificity are likely to be influenced by complexity of differential diagnoses and a multitude of other factors (cf. spectrum bias).

Likelihood Ratios (Odds)

- This is an alternative way of describing the performance of a diagnostic test. It is similar to sensitivity and specificity, and can be used to calculate the probability of disease after a positive or negative test (predictive value). Its advantage is that it can be used at multiple levels of test results.

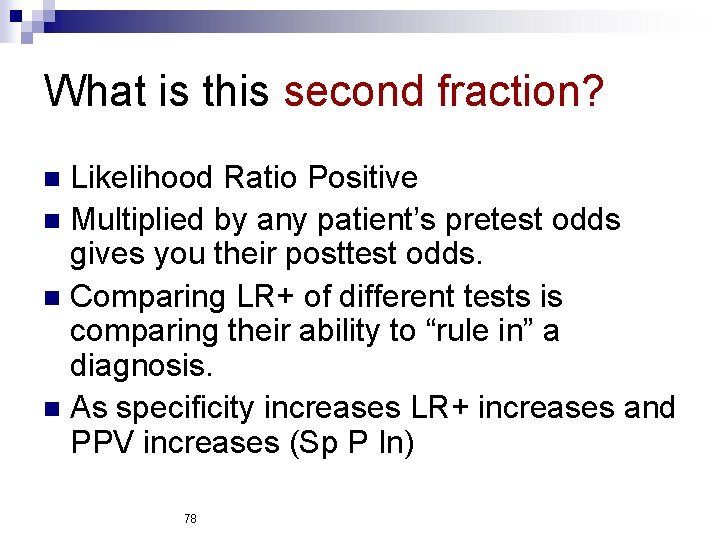

What is this second fraction?

- It is the positive likelihood ratio.
- Multiplied by any patient's pretest odds, it gives you their posttest odds.
- Comparing the LR+ of different tests is comparing their ability to "rule in" a diagnosis.
- As specificity increases, LR+ increases and PPV increases (SpPin).

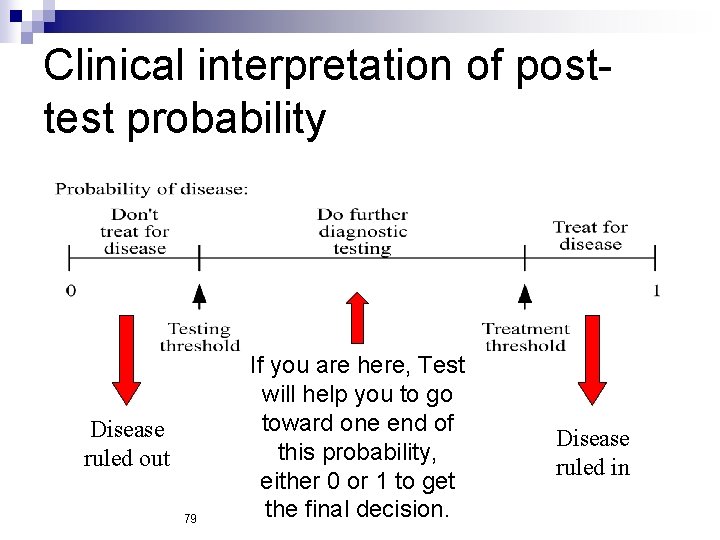

Clinical interpretation of posttest probability

Figure: a probability scale running from 0 (disease ruled out) to 1 (disease ruled in). If you are somewhere in between, the test will help you move toward one end of this scale, either 0 or 1, to reach the final decision.

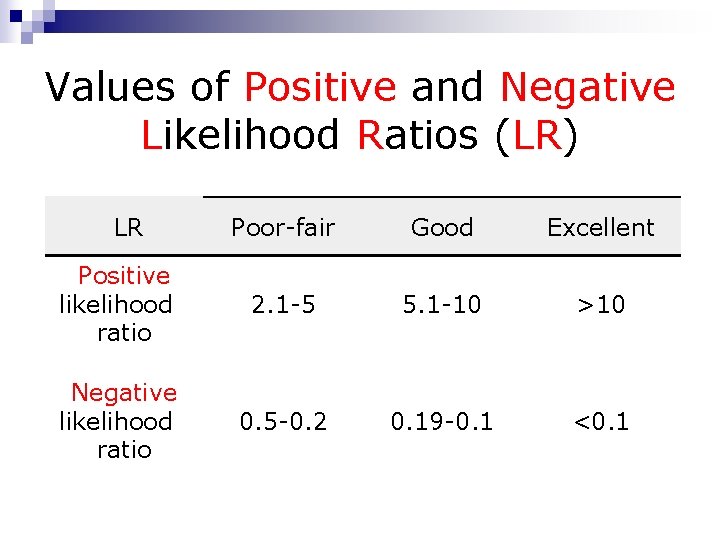

Values of Positive and Negative Likelihood Ratios (LR)

| LR | Poor-fair | Good | Excellent |
|---|---|---|---|
| Positive likelihood ratio | 2.1-5 | 5.1-10 | >10 |
| Negative likelihood ratio | 0.5-0.2 | 0.19-0.1 | <0.1 |

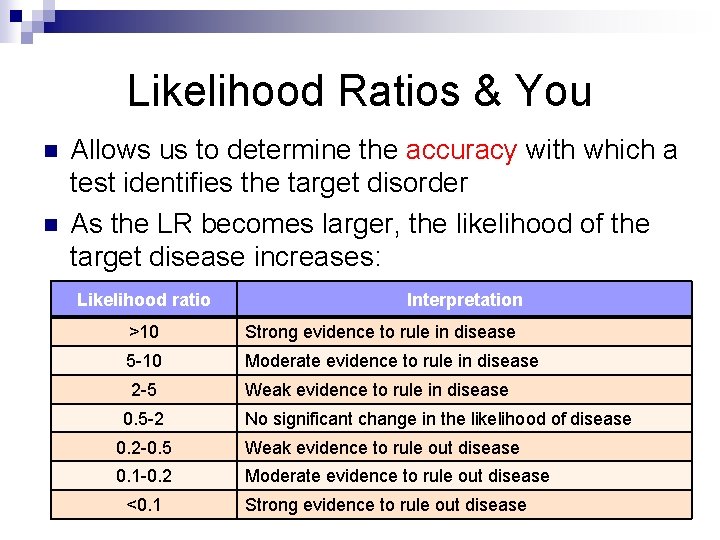

Likelihood Ratios & You

- Allows us to determine the accuracy with which a test identifies the target disorder.
- As the LR becomes larger, the likelihood of the target disease increases:

| Likelihood ratio | Interpretation |
|---|---|
| >10 | Strong evidence to rule in disease |
| 5-10 | Moderate evidence to rule in disease |
| 2-5 | Weak evidence to rule in disease |
| 0.5-2 | No significant change in the likelihood of disease |
| 0.2-0.5 | Weak evidence to rule out disease |
| 0.1-0.2 | Moderate evidence to rule out disease |
| <0.1 | Strong evidence to rule out disease |
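The interpretation table translates into a simple lookup. Because adjacent bands share their end points (5 appears in both "5-10" and "2-5"), the sketch below assumes each shared boundary belongs to the band with the stronger evidence; that tie-breaking rule is an assumption made here, not something the table specifies.

```python
def interpret_likelihood_ratio(lr):
    """Map a likelihood ratio to the interpretation bands in the table above."""
    if lr > 10:
        return "Strong evidence to rule in disease"
    if lr >= 5:
        return "Moderate evidence to rule in disease"
    if lr >= 2:
        return "Weak evidence to rule in disease"
    if lr > 0.5:
        return "No significant change in the likelihood of disease"
    if lr > 0.2:
        return "Weak evidence to rule out disease"
    if lr >= 0.1:
        return "Moderate evidence to rule out disease"
    return "Strong evidence to rule out disease"


for lr in (14, 4.95, 1.0, 0.27, 0.05):
    print(lr, "->", interpret_likelihood_ratio(lr))
```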

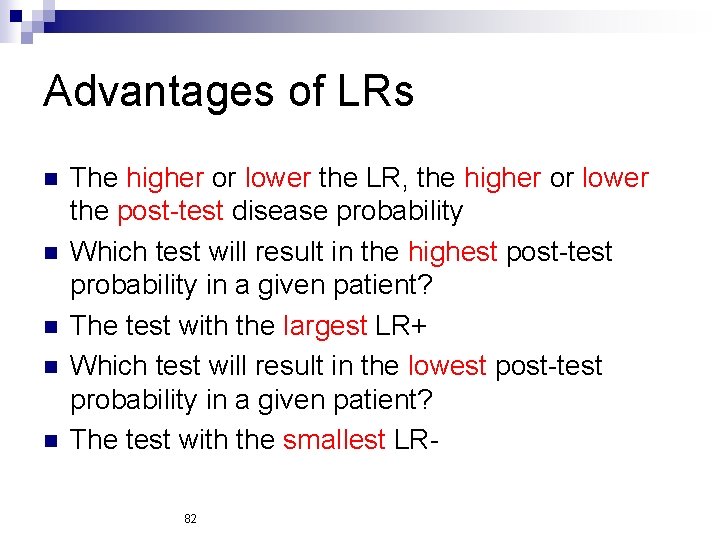

Advantages of LRs

- The higher or lower the LR, the higher or lower the post-test disease probability.
- Which test will result in the highest post-test probability in a given patient? The test with the largest LR+.
- Which test will result in the lowest post-test probability in a given patient? The test with the smallest LR-.

Advantages of LRs

- Clear separation of test characteristics from disease probability.

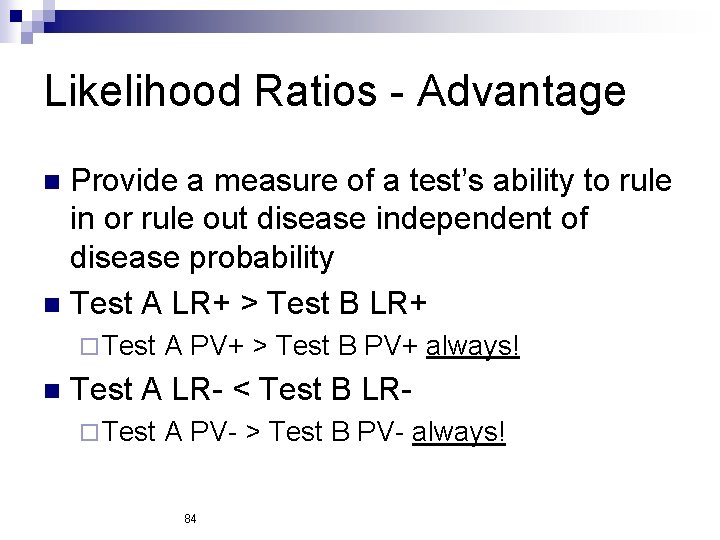

Likelihood Ratios - Advantage

- Provide a measure of a test's ability to rule in or rule out disease independent of disease probability.
- Test A LR+ > Test B LR+
  - Test A PV+ > Test B PV+ always (at the same pre-test probability)!
- Test A LR- < Test B LR-
  - Test A PV- > Test B PV- always (at the same pre-test probability)!

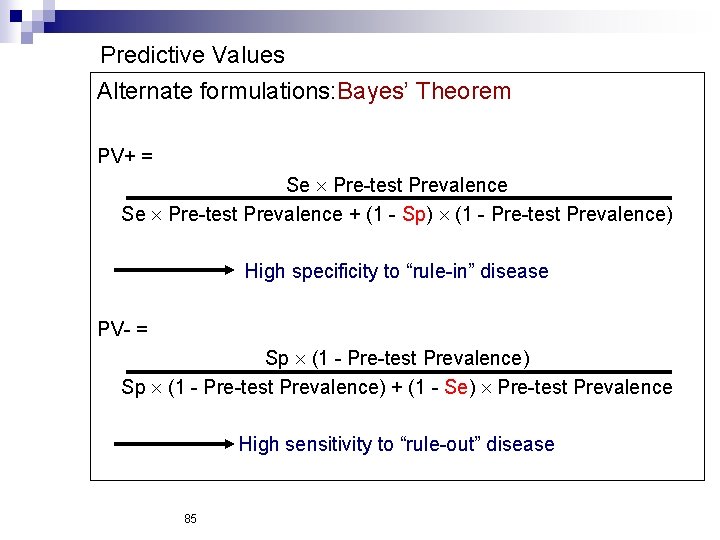

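Predictive Values

Alternate formulations: Bayes' Theorem

$$PV^{+} = \frac{Se \times \text{Prev}}{Se \times \text{Prev} + (1 - Sp) \times (1 - \text{Prev})}$$

High specificity to "rule in" disease.

$$PV^{-} = \frac{Sp \times (1 - \text{Prev})}{Sp \times (1 - \text{Prev}) + (1 - Se) \times \text{Prev}}$$

High sensitivity to "rule out" disease.

Here Se is sensitivity, Sp is specificity, and Prev is the pre-test prevalence.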

Clinical Interpretation: Predictive Values

If predictive value is more useful, why is it not reported?

Should they report it?

- Only if everyone is tested.
- And even then.
- You need sensitivity and specificity from the literature. Add YOUR OWN pretest probability.

So how do you figure pretest probability?

- Start with disease prevalence.
- Refine to local population.
- Refine to population you serve.
- Refine according to patient's presentation.
- Add in results of history and exam (clinical suspicion).
- Also consider your own threshold for testing.

Pretest Probability: Clinical Significance

- Expected test result means more than unexpected.
- Same clinical findings have different meaning in different settings (e.g. scheduled versus unscheduled visit). Heart sound, tender area.
- Neurosurgeon.
- Lupus nephritis.

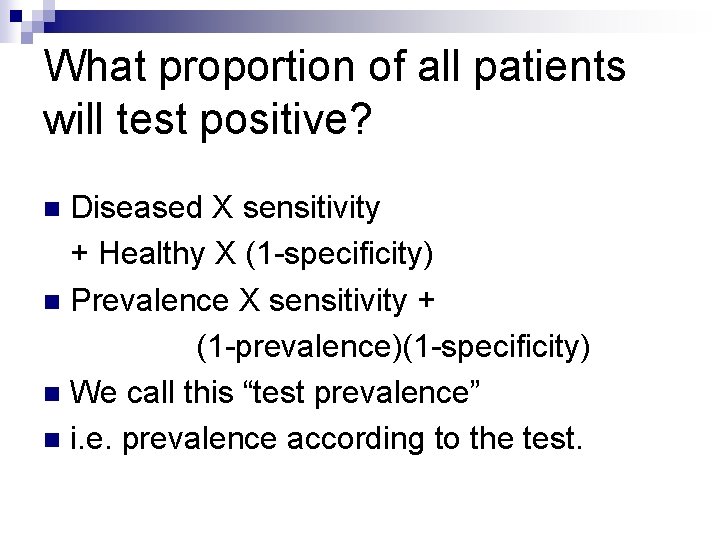

What proportion of all patients will test positive?

- Diseased × sensitivity + Healthy × (1 - specificity)
- Prevalence × sensitivity + (1 - prevalence) × (1 - specificity)
- We call this "test prevalence",
- i.e. prevalence according to the test.

Some Examples

- Diabetes mellitus (type 2)
- Check this out:

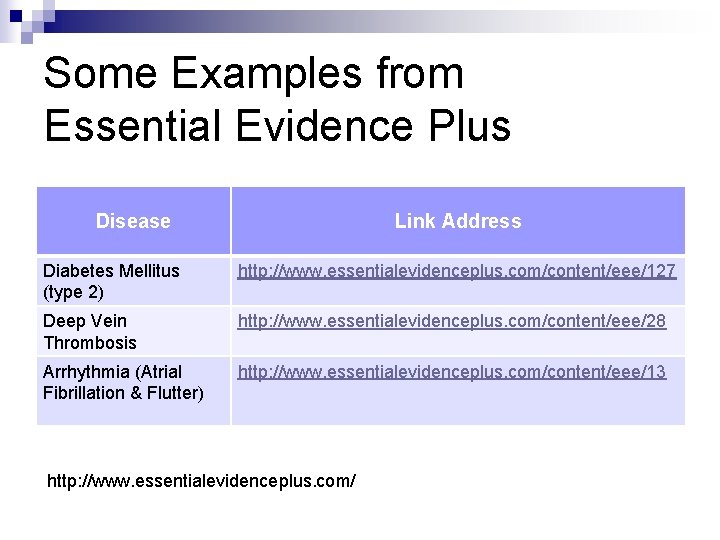

Some Examples from Essential Evidence Plus

| Disease | Link address |
|---|---|
| Diabetes Mellitus (type 2) | http://www.essentialevidenceplus.com/content/eee/127 |
| Deep Vein Thrombosis | http://www.essentialevidenceplus.com/content/eee/28 |
| Arrhythmia (Atrial Fibrillation & Flutter) | http://www.essentialevidenceplus.com/content/eee/13 |

http://www.essentialevidenceplus.com/

Which one of these tests is the best for SLE Dx?

| Test | Sensitivity (%) | Specificity (%) | LR(+) |
|---|---|---|---|
| ANA | 99 | 80 | 4.95 |
| dsDNA | 70 | 95 | 14 |
| ssDNA | 80 | 50 | 1.6 |
| Histone | 30-80 | 50 | 1.1 |
| Nucleoprotein | 58 | 50 | 1.16 |
| Sm | 25 | 99 | 25 |
| RNP | 50 | 87-94 | 3.8-8.3 |
| PCNA | 5 | 95 | 1 |
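The LR(+) column can be recomputed from the sensitivity and specificity columns and used to rank the tests for ruling in SLE. In this sketch each value is stored as a (low, high) pair so that the ranges given for Histone and RNP are carried through; the dictionary layout and function name are illustrative choices.

```python
# (sensitivity range, specificity range) in percent, from the table above.
sle_tests = {
    "ANA": ((99, 99), (80, 80)),
    "dsDNA": ((70, 70), (95, 95)),
    "ssDNA": ((80, 80), (50, 50)),
    "Histone": ((30, 80), (50, 50)),
    "Nucleoprotein": ((58, 58), (50, 50)),
    "Sm": ((25, 25), (99, 99)),
    "RNP": ((50, 50), (87, 94)),
    "PCNA": ((5, 5), (95, 95)),
}


def lr_positive_range(sensitivity_range, specificity_range):
    """Return (lowest, highest) LR+ = sensitivity / (100 - specificity)."""
    (sens_low, sens_high), (spec_low, spec_high) = sensitivity_range, specificity_range
    return sens_low / (100 - spec_low), sens_high / (100 - spec_high)


lr_ranges = {name: lr_positive_range(*values) for name, values in sle_tests.items()}

# Rank by the low end of the LR+ range, best rule-in test first.
ranking = sorted(lr_ranges, key=lambda name: lr_ranges[name][0], reverse=True)
for name in ranking:
    low, high = lr_ranges[name]
    print(f"{name:14s} LR+ {low:.2f}" + (f" to {high:.2f}" if high != low else ""))
```

Carrying the full Histone sensitivity range through gives an LR+ of 0.6 to 1.6, whereas the table lists the single value 1.1. Sm and dsDNA come out on top for ruling in the diagnosis, while ANA, despite its 99% sensitivity, has only a modest LR+.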

Was it clear enough?

