Equating

Margaret Wu, University of Melbourne

Why is equating needed?

• Confounding between ability and test difficulty.
• When a person performs well on a test, we do not know whether it is because the person is very able, or because the test is easy.

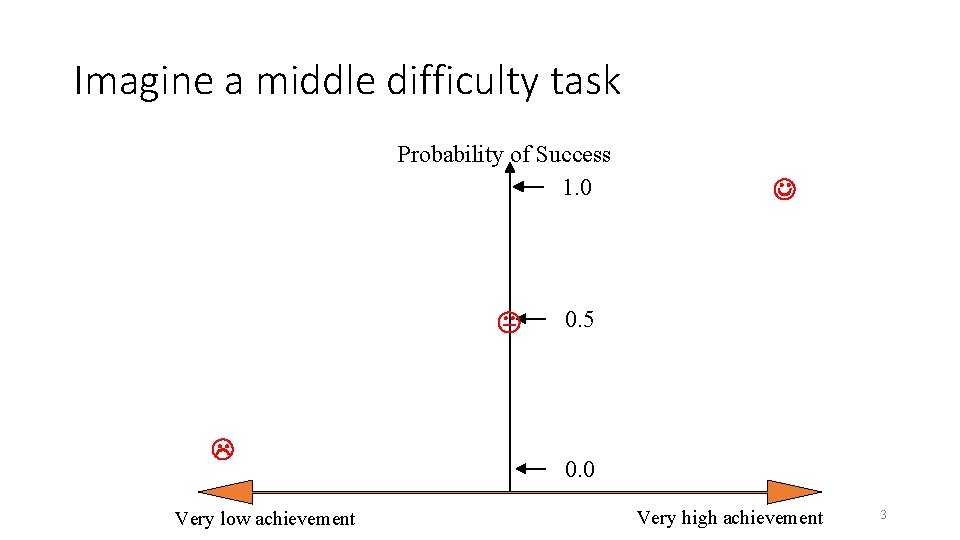

Imagine a middle difficulty task (figure: probability of success, from 0.0 to 1.0, for students ranging from very low achievement to very high achievement)

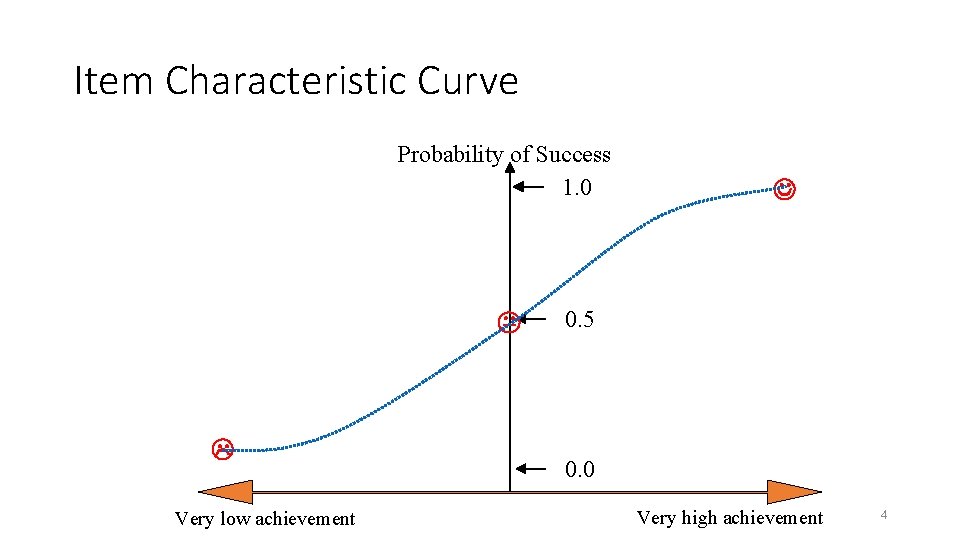

Item Characteristic Curve (figure: probability of success, from 0.0 to 1.0, plotted against achievement, from very low to very high)

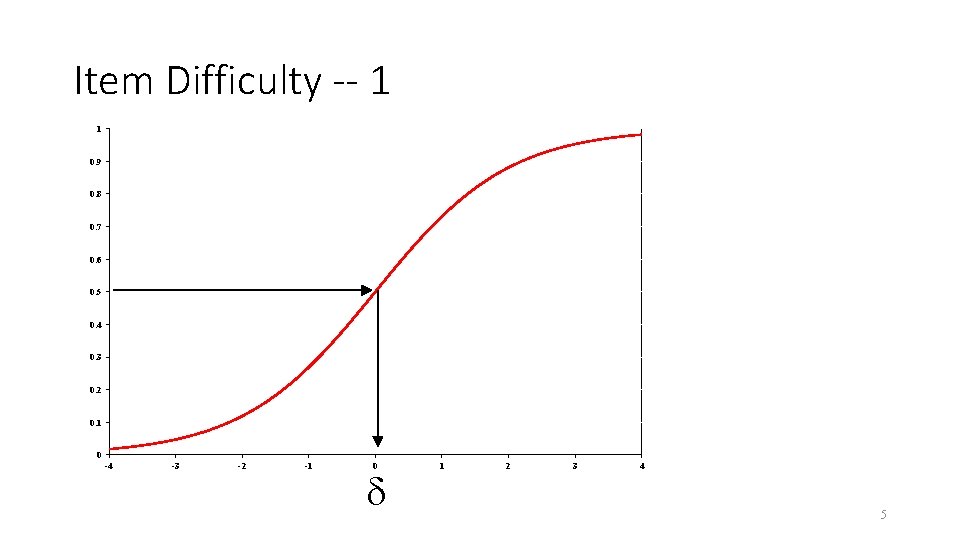

Item Difficulty -- 1 (figure: curve with vertical axis from 0 to 1 and horizontal axis from -4 to 5)

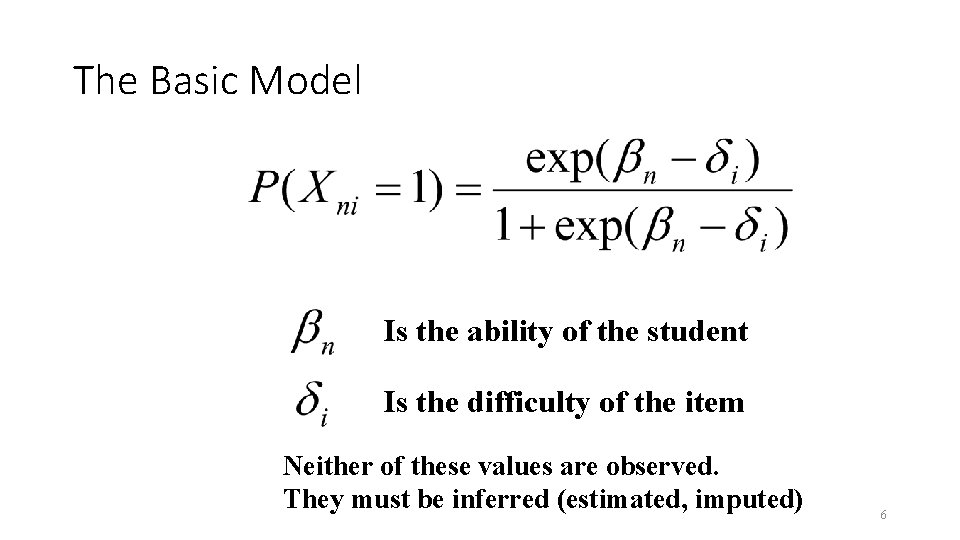

The Basic Model

• One parameter of the model is the ability of the student.
• The other parameter is the difficulty of the item.
• Neither of these values is observed. They must be inferred (estimated, imputed).

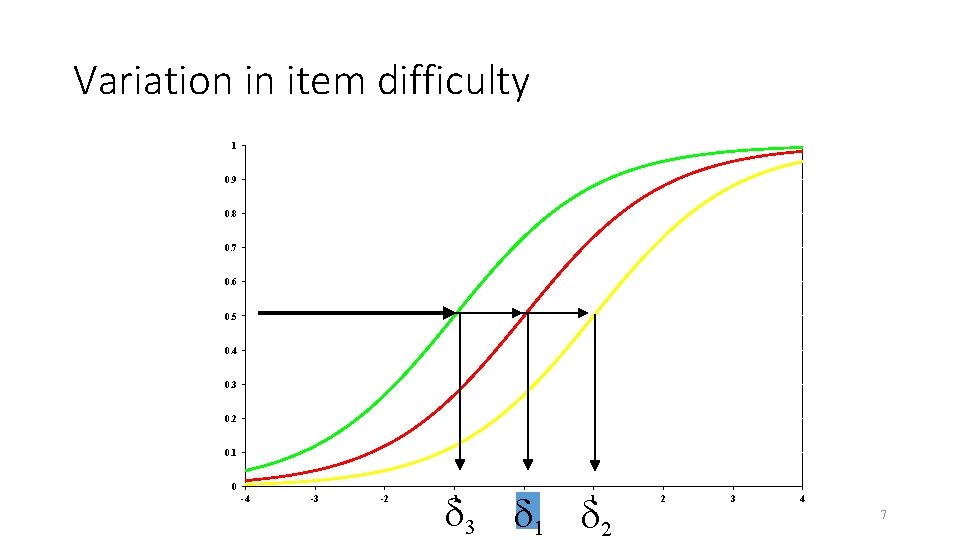
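The model equation survives only as an image, so the following is a minimal sketch that assumes the basic model is the Rasch (one-parameter logistic) model, which is consistent with the logit scale used elsewhere in these slides. The function name and the argument names `ability` and `difficulty` are illustrative, not taken from the slides.

```python
import math


def rasch_probability(ability: float, difficulty: float) -> float:
    """Probability of success, assuming a Rasch model on the logit scale."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


# A student whose ability equals the item difficulty succeeds half the time.
p_equal = rasch_probability(0.0, 0.0)

# The same student facing an easier item (-1 logit) and a harder item (+1 logit).
p_easy = rasch_probability(0.0, -1.0)
p_hard = rasch_probability(0.0, 1.0)

print(p_equal, round(p_easy, 3), round(p_hard, 3))
```

Only the difference between ability and difficulty enters the formula. This is why a high score alone cannot separate an able student from an easy test.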

Variation in item difficulty (figure: three curves labelled 1, 2 and 3; vertical axis from 0 to 1, horizontal axis from -4 to 4)

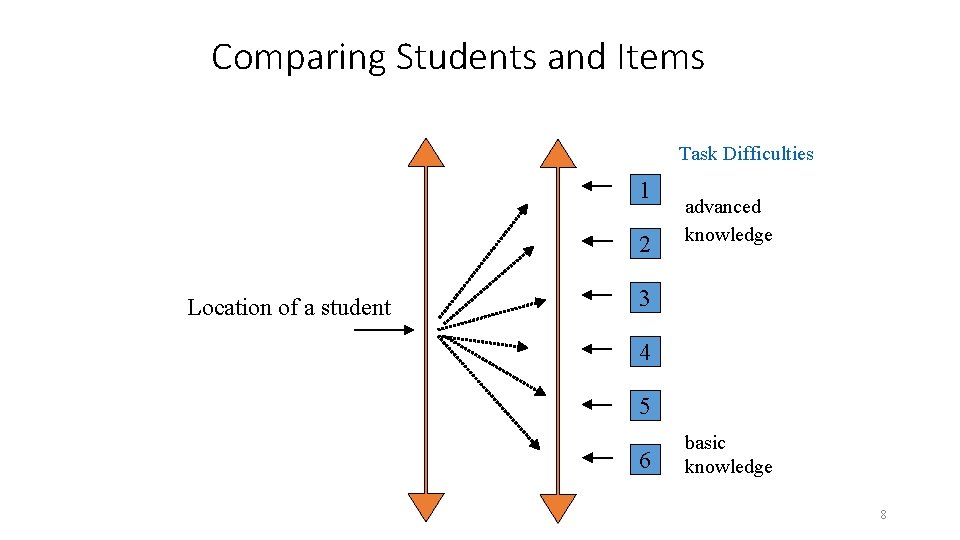

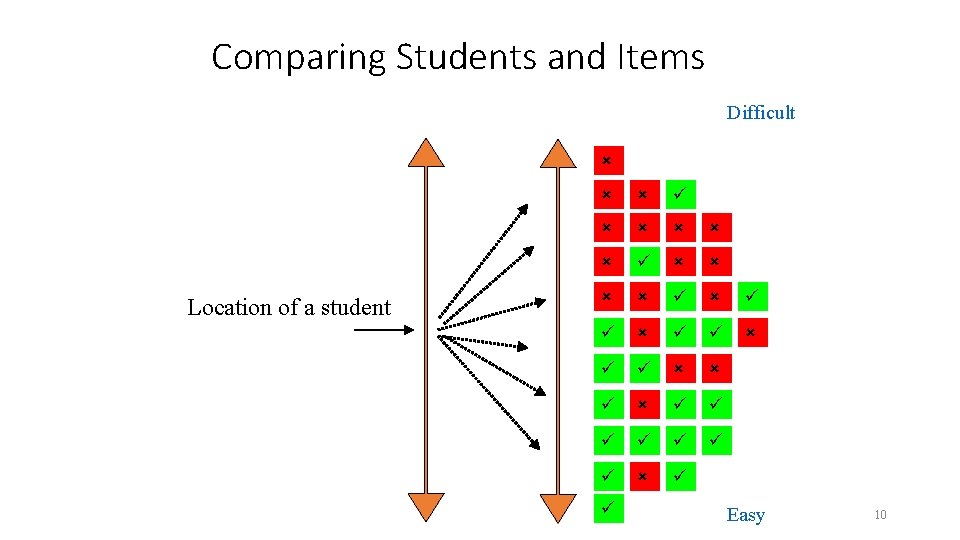

Comparing Students and Items (figure: task difficulties 1 to 6 placed on a scale running between basic knowledge and advanced knowledge, with the location of a student marked)

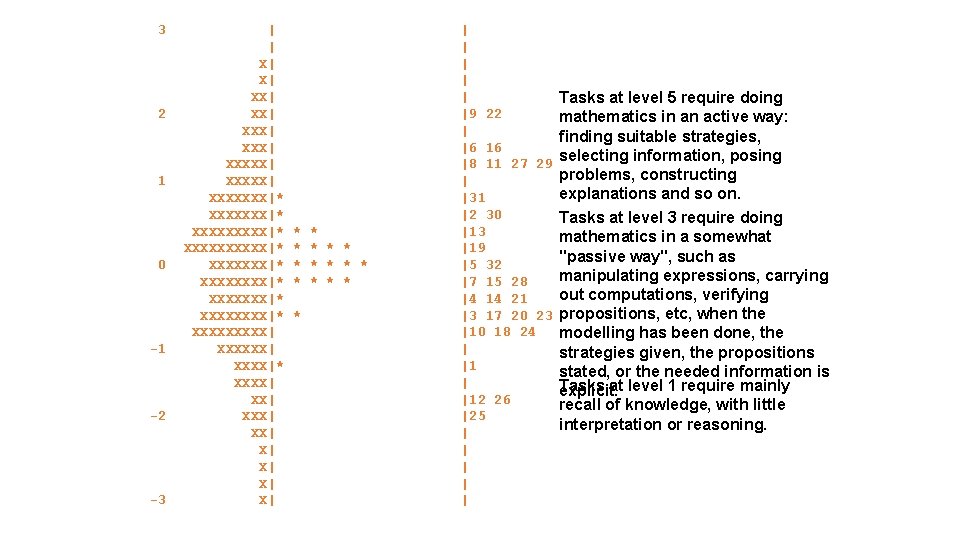

(figure: item-person map on a scale from 3 to -3, with the distribution of students shown as X's on the left and items 1 to 32 placed by difficulty on the right)

Tasks at level 5 require doing mathematics in an active way: finding suitable strategies, selecting information, posing problems, constructing explanations and so on.

Tasks at level 3 require doing mathematics in a somewhat "passive way", such as manipulating expressions, carrying out computations, verifying propositions, etc., when the modelling has been done, the strategies given, the propositions stated, or the needed information is explicit.

Tasks at level 1 require mainly recall of knowledge, with little interpretation or reasoning.

Comparing Students and Items (figure: location of a student on a scale running from Easy to Difficult)

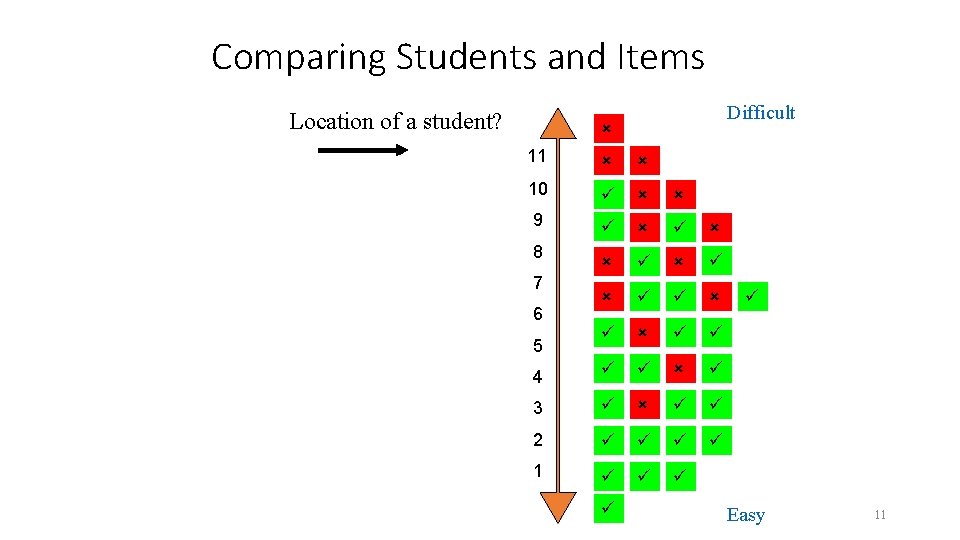

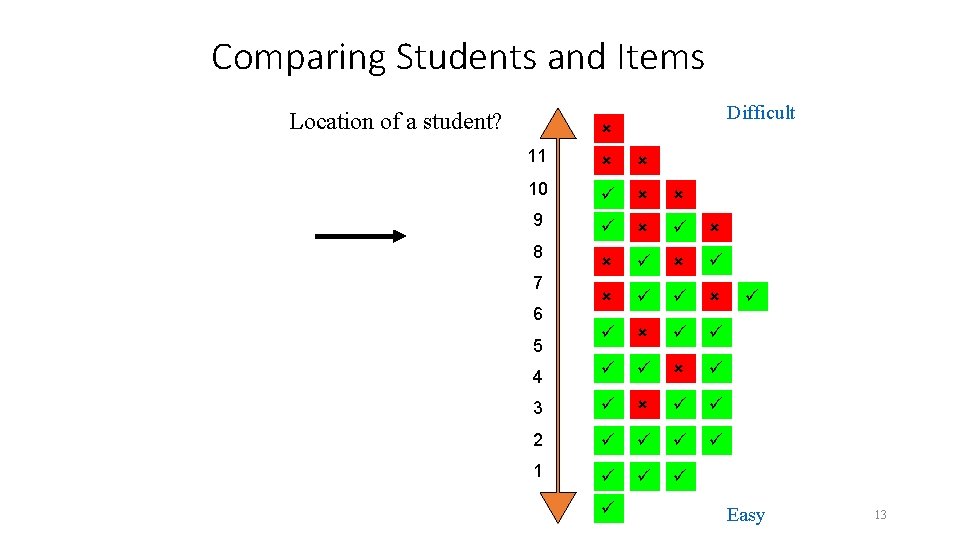

Comparing Students and Items (figure: items 1 to 11 placed on a scale running from Easy to Difficult; where is the location of a student?)

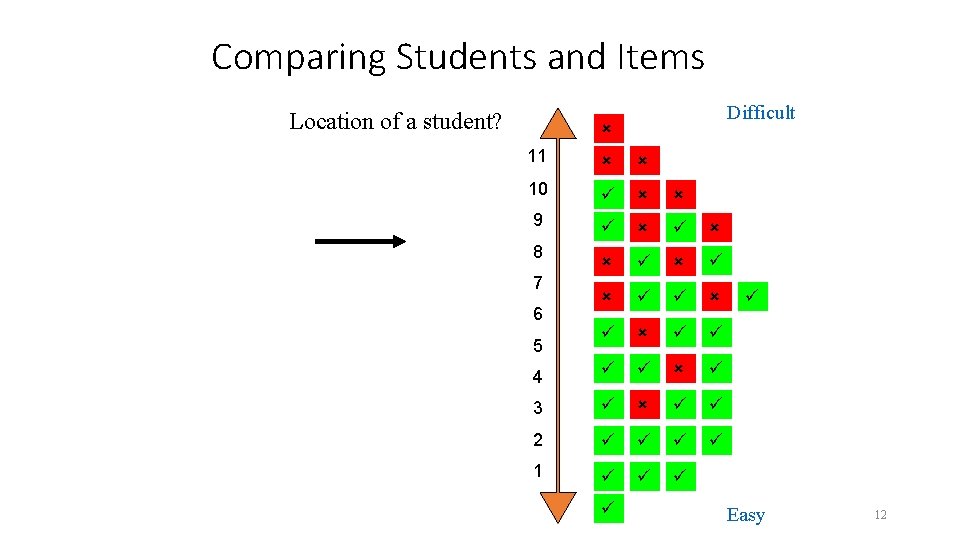

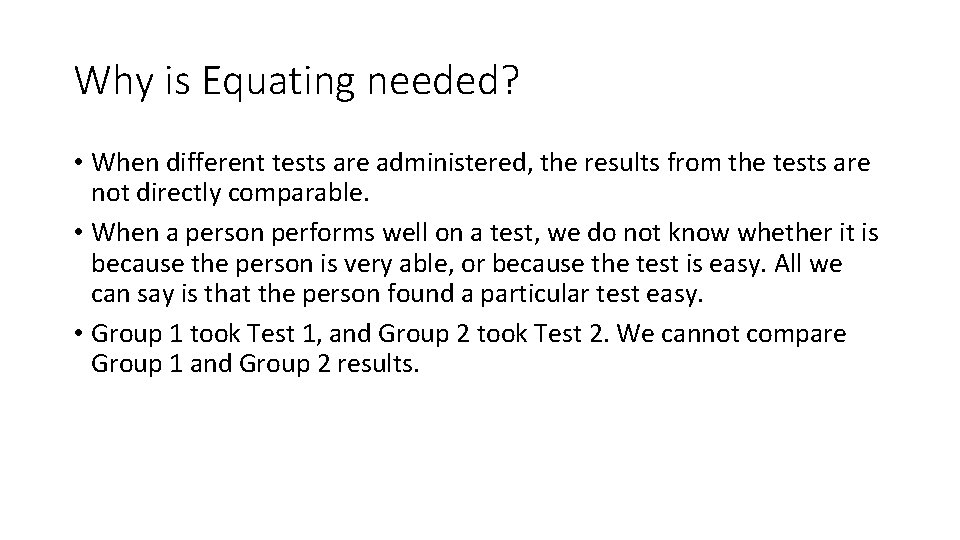

Why is Equating needed?

• When different tests are administered, the results from the tests are not directly comparable.
• When a person performs well on a test, we do not know whether it is because the person is very able, or because the test is easy. All we can say is that the person found a particular test easy.
• Group 1 took Test 1, and Group 2 took Test 2. We cannot compare Group 1 and Group 2 results.

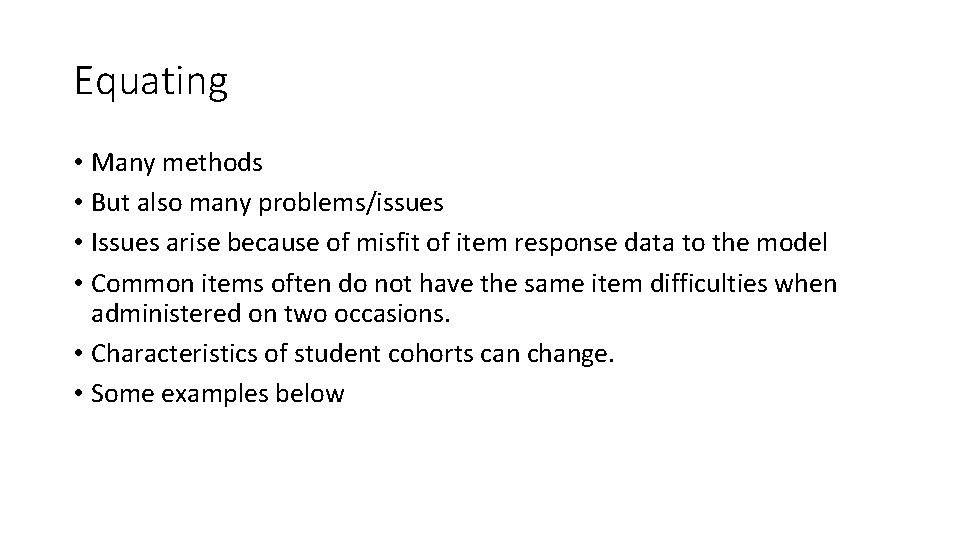

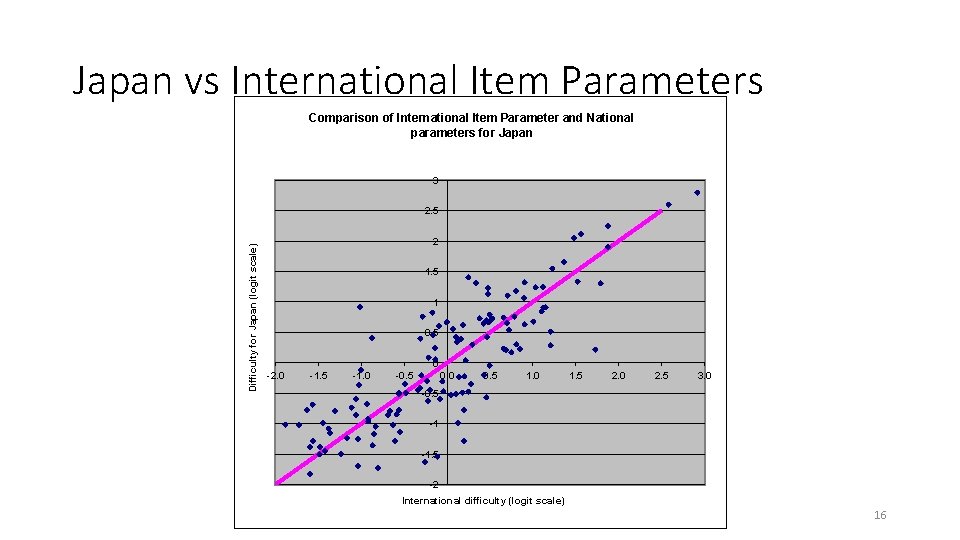

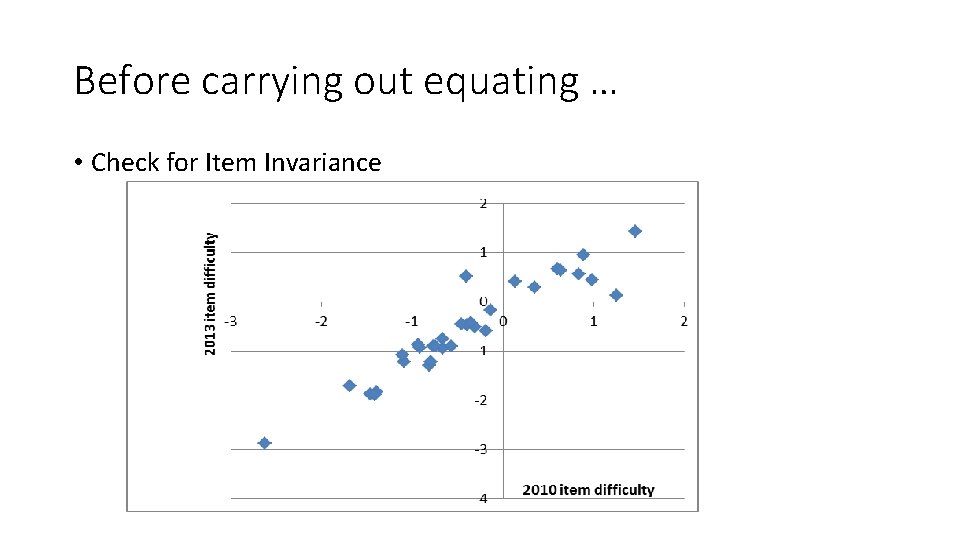

Equating

• Many methods
• But also many problems/issues
• Issues arise because of misfit of item response data to the model
• Common items often do not have the same item difficulties when administered on two occasions.
• Characteristics of student cohorts can change.
• Some examples below

Japan vs International Item Parameters

Comparison of international item parameters and national parameters for Japan (figure: scatter plot of difficulty for Japan against international difficulty, both on the logit scale)

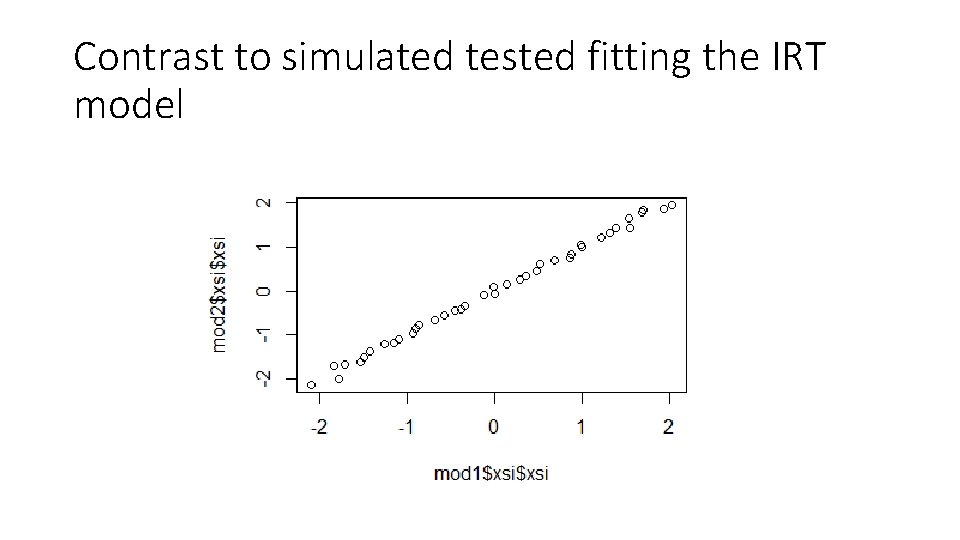

Contrast with simulated test data fitting the IRT model

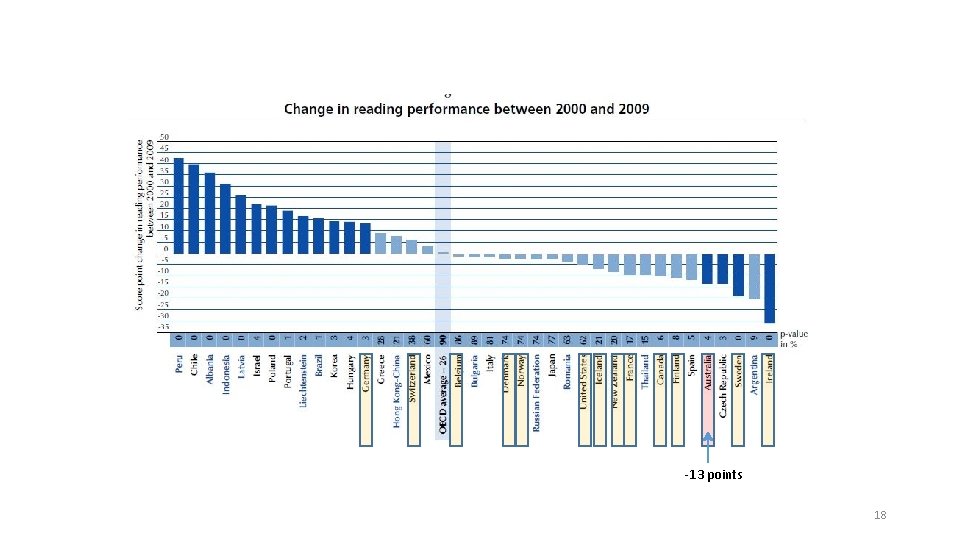

-13 points

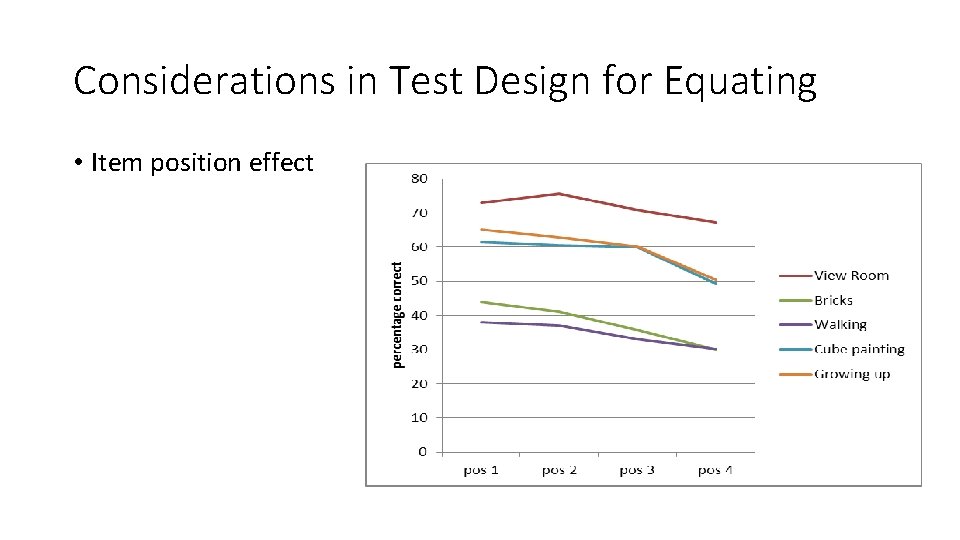

Considerations in Test Design for Equating

• Item position effect

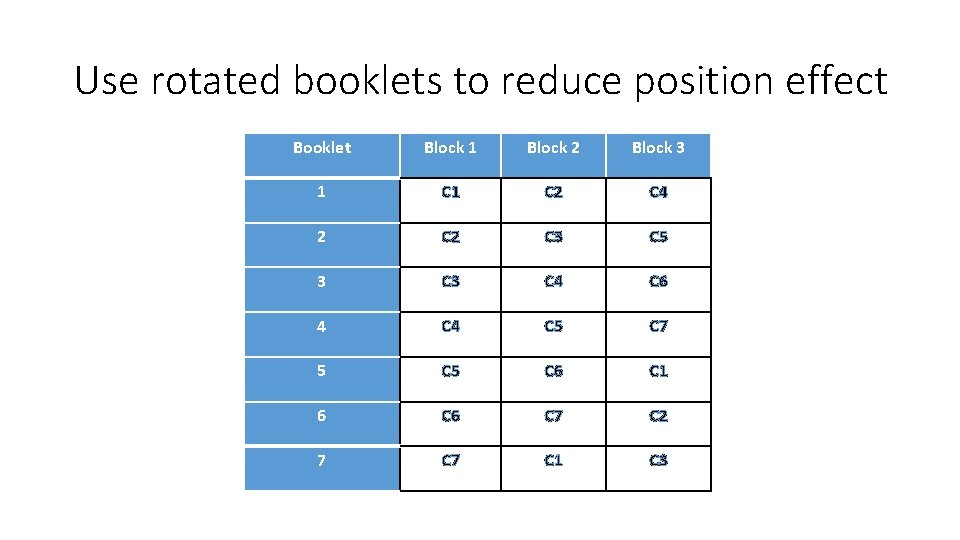

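Use rotated booklets to reduce position effect

| Booklet | Block 1 | Block 2 | Block 3 |
|---|---|---|---|
| 1 | C1 | C2 | C4 |
| 2 | C2 | C3 | C5 |
| 3 | C3 | C4 | C6 |
| 4 | C4 | C5 | C7 |
| 5 | C5 | C6 | C1 |
| 6 | C6 | C7 | C2 |
| 7 | C7 | C1 | C3 |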
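The rotation can be generated rather than typed by hand. The sketch below assumes the booklet table follows a cyclic pattern in which booklet n holds clusters Cn, Cn+1 and Cn+3 (wrapping around after C7). This is a reading of the flattened table, not something the slides state, and the function name and `offsets` parameter are illustrative.

```python
def rotated_booklets(n_clusters: int = 7, offsets=(0, 1, 3)):
    """Build a cyclic booklet design: booklet n gets clusters n, n+1, n+3 (mod n_clusters)."""
    booklets = []
    for b in range(n_clusters):
        booklets.append([f"C{(b + off) % n_clusters + 1}" for off in offsets])
    return booklets


design = rotated_booklets()
for number, blocks in enumerate(design, start=1):
    print(number, blocks)

# Balance check: every cluster appears exactly once in each block position,
# so no cluster is always seen first or always seen last.
all_clusters = sorted(f"C{i}" for i in range(1, 8))
balanced = all(
    sorted(blocks[position] for blocks in design) == all_clusters
    for position in range(3)
)
print("balanced across positions:", balanced)
```

Because each cluster is seen equally often in the first, second and third position, position effects average out when item difficulties are estimated across booklets.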

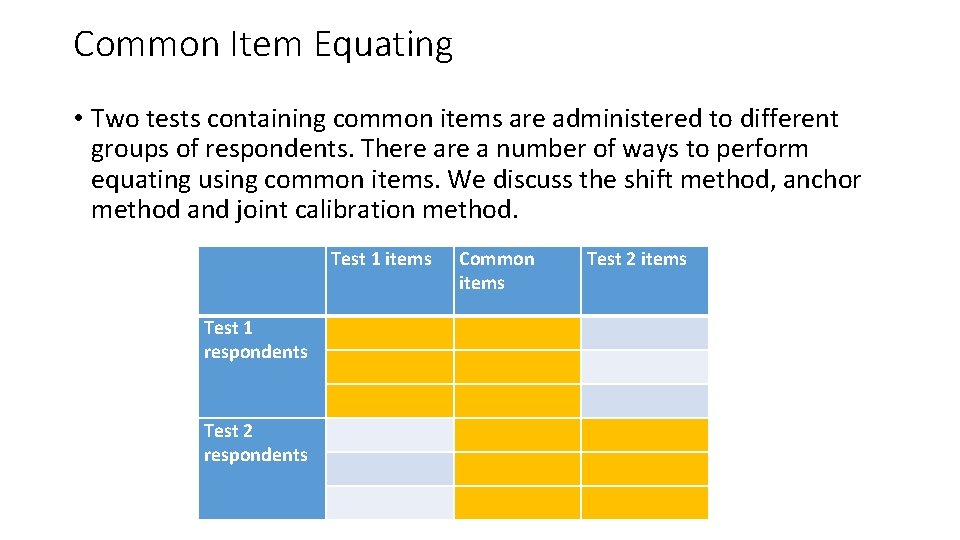

Common Item Equating

• Two tests containing common items are administered to different groups of respondents.
• There are a number of ways to perform equating using common items. We discuss the shift method, the anchor method and the joint calibration method.

(figure: Test 1 respondents take Test 1 items and the common items; Test 2 respondents take the common items and Test 2 items)

Before carrying out equating …

• Check for Item Invariance

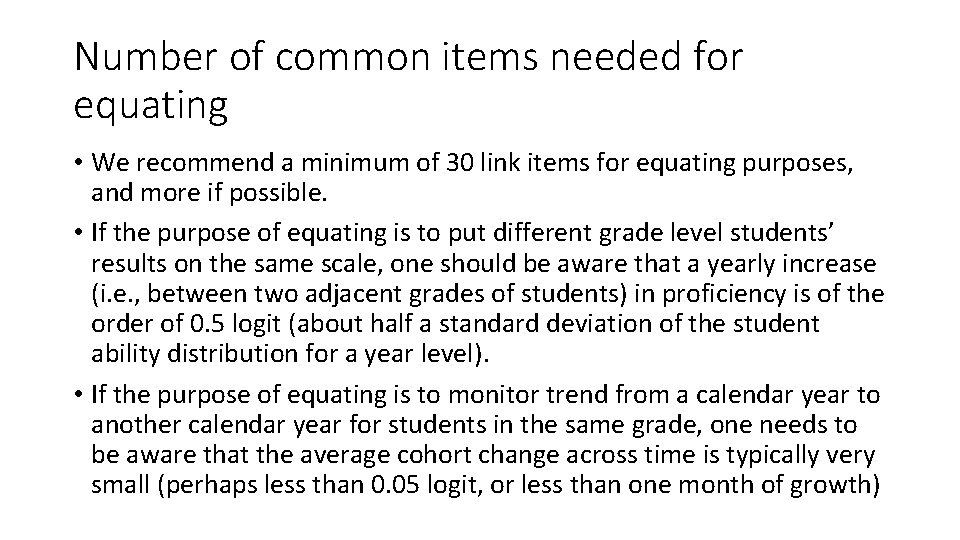
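One simple way to screen common items for invariance is to compare their difficulties from the two separate calibrations after centring each set on its own mean, and to flag items that move by more than some tolerance. This is a sketch of that idea only. The 0.5 logit threshold, the function name and the item difficulties are hypothetical, and the check shown in the slide's figure may differ.

```python
from statistics import mean


def flag_non_invariant(b_test1, b_test2, threshold=0.5):
    """Return indices of common items whose centred difficulties differ by more than threshold."""
    m1, m2 = mean(b_test1), mean(b_test2)
    flagged = []
    for index, (b1, b2) in enumerate(zip(b_test1, b_test2)):
        # Centring removes the overall scale shift, leaving item-specific drift.
        if abs((b1 - m1) - (b2 - m2)) > threshold:
            flagged.append(index)
    return flagged


# Hypothetical difficulties (in logits) of five common items in two calibrations.
common_test1 = [-1.2, -0.4, 0.0, 0.5, 1.1]
common_test2 = [-0.9, -0.1, 0.3, 1.6, 0.6]

flagged = flag_non_invariant(common_test1, common_test2)
print(flagged)
```

Flagged items are candidates for review or for removal from the link set. With few common items, removing any of them increases the equating error, so the tolerance is a judgment call.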

Number of common items needed for equating

• We recommend a minimum of 30 link items for equating purposes, and more if possible.
• If the purpose of equating is to put different grade level students’ results on the same scale, one should be aware that a yearly increase (i.e., between two adjacent grades of students) in proficiency is of the order of 0.5 logit (about half a standard deviation of the student ability distribution for a year level).
• If the purpose of equating is to monitor trend from one calendar year to another for students in the same grade, one needs to be aware that the average cohort change across time is typically very small (perhaps less than 0.05 logit, or less than one month of growth).

Factors influencing item difficulty

• Curriculum change
• Exposure to an item
• Opportunity to learn
• Item position in a test – fatigue effect
• In international comparative studies, translation may also have an impact on item difficulty

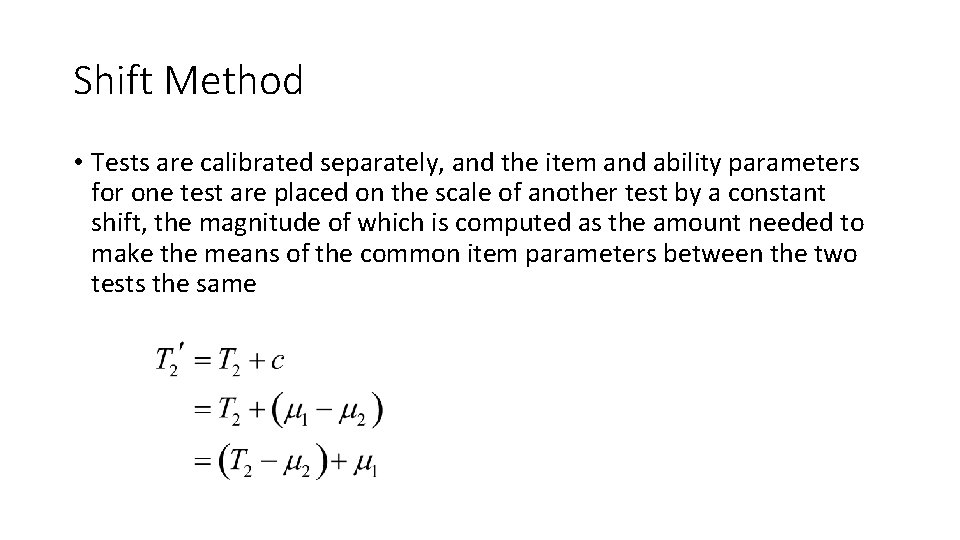

Shift Method

• Tests are calibrated separately, and the item and ability parameters for one test are placed on the scale of another test by a constant shift. The magnitude of the shift is the amount needed to make the means of the common item parameters the same in the two tests.

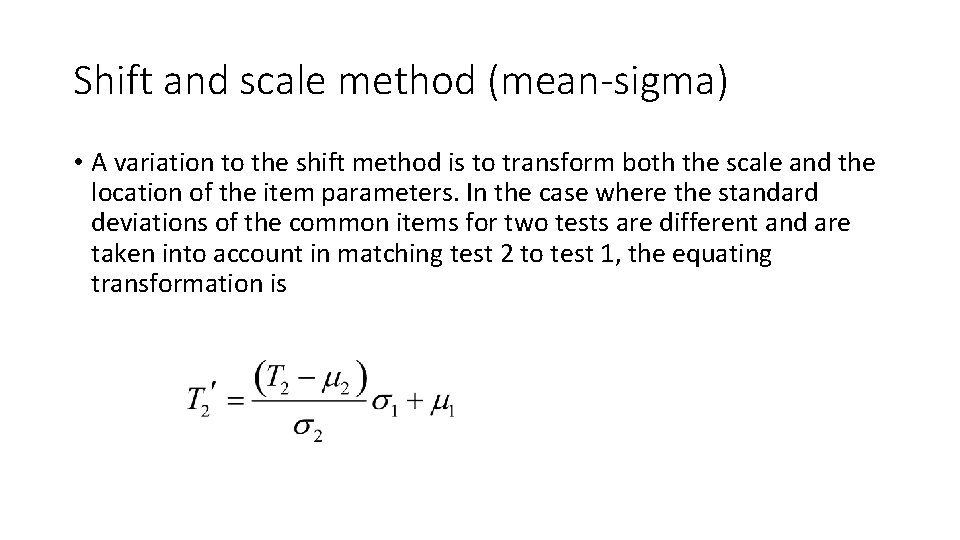
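A minimal sketch of the shift method, assuming the two tests have already been calibrated separately by IRT software. The difficulty and ability values are made up for illustration, and the function name is not from the slides.

```python
from statistics import mean


def shift_constant(common_b_ref, common_b_new):
    """Constant that makes the common-item mean on the new scale equal the reference mean."""
    return mean(common_b_ref) - mean(common_b_new)


# Hypothetical difficulties of the same four common items in each calibration.
common_test1 = [-0.8, -0.2, 0.3, 0.9]   # on the Test 1 scale (reference)
common_test2 = [-1.3, -0.6, -0.3, 0.6]  # on the Test 2 scale

shift = shift_constant(common_test1, common_test2)

# Every Test 2 item parameter and ability estimate receives the same shift.
test2_item_difficulties = [-1.3, -0.6, -0.3, 0.6, 1.2]
test2_abilities = [-0.5, 0.1, 0.7]
test2_items_on_test1_scale = [b + shift for b in test2_item_difficulties]
test2_abilities_on_test1_scale = [t + shift for t in test2_abilities]

print(round(shift, 3))
```

After the shift, the common items have the same mean on both scales, but individual common items can still differ between the two calibrations.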

Shift and scale method (mean-sigma)

• A variation on the shift method is to transform both the scale and the location of the item parameters. When the standard deviations of the common items differ between the two tests and are taken into account in matching Test 2 to Test 1, the equating transformation is:

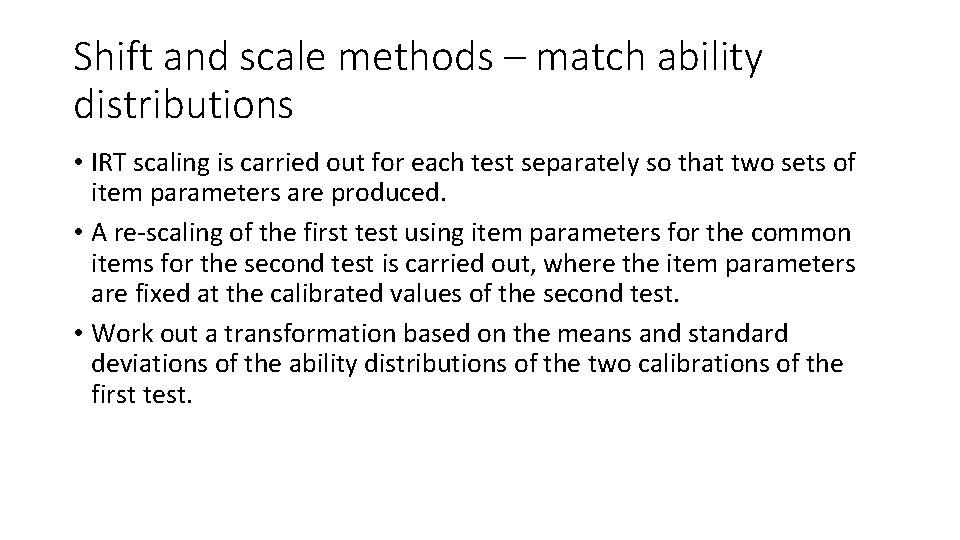
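The transformation itself is shown only in the figure. The sketch below assumes it has the usual mean-sigma form: a slope equal to the ratio of the common-item standard deviations, and an intercept that aligns the common-item means. The function name and the data are illustrative.

```python
from statistics import mean, stdev


def mean_sigma(common_b_test1, common_b_test2):
    """Slope and intercept that map Test 2 values onto the Test 1 scale."""
    slope = stdev(common_b_test1) / stdev(common_b_test2)
    intercept = mean(common_b_test1) - slope * mean(common_b_test2)
    return slope, intercept


# Hypothetical common-item difficulties from the two separate calibrations.
common_test1 = [-1.0, 0.0, 1.0, 2.0]
common_test2 = [-1.0, -0.5, 0.0, 0.5]

slope, intercept = mean_sigma(common_test1, common_test2)

# Apply the same linear transformation to any Test 2 difficulty or ability.
transformed = [slope * b + intercept for b in common_test2]
print(slope, intercept, transformed)
```

After the transformation, the Test 2 common items have the same mean and the same standard deviation as their Test 1 counterparts. The shift method is the special case in which the slope is fixed at 1.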

Shift and scale methods – match ability distributions

• IRT scaling is carried out for each test separately so that two sets of item parameters are produced.
• A re-scaling of the first test is carried out using the item parameters of the common items from the second test, with those item parameters fixed at the calibrated values of the second test.
• Work out a transformation based on the means and standard deviations of the ability distributions of the two calibrations of the first test.

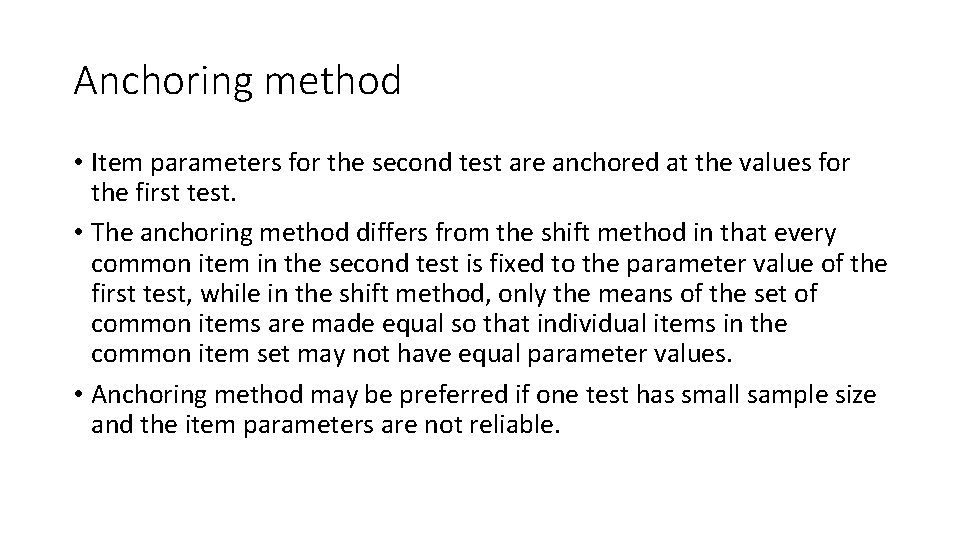

Anchoring method

• Item parameters for the second test are anchored at the values for the first test.
• The anchoring method differs from the shift method in that every common item in the second test is fixed to its parameter value from the first test. In the shift method, only the means of the set of common items are made equal, so individual items in the common item set may not have equal parameter values.
• The anchoring method may be preferred if one test has a small sample size and its item parameters are not reliable.

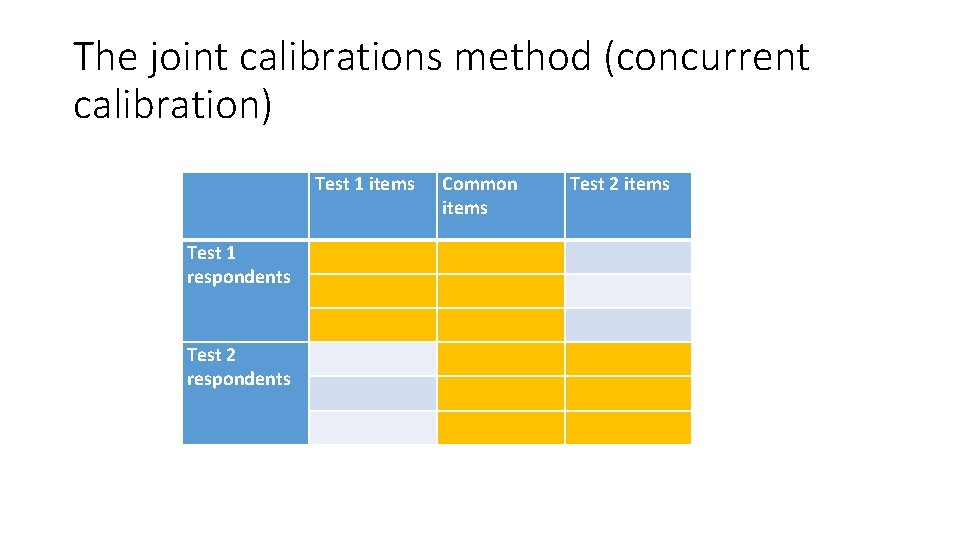

The joint calibration method (concurrent calibration)

(figure: Test 1 respondents take Test 1 items and the common items; Test 2 respondents take the common items and Test 2 items; all responses are calibrated together)

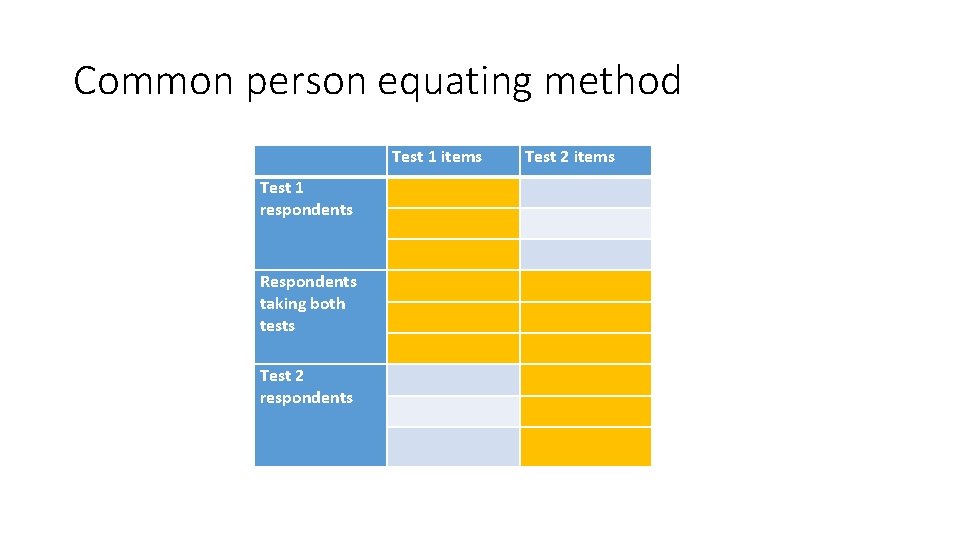
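For a joint calibration, the two response data sets are stacked into a single matrix in which items a respondent did not take are treated as missing. The sketch below shows only this data layout. The item names and responses are hypothetical, and the calibration would still be run in IRT software that handles missing-by-design data.

```python
def stack_for_joint_calibration(test1_rows, test1_items, test2_rows, test2_items):
    """Combine two scored response sets into one matrix; None marks items not administered."""
    all_items = list(test1_items) + [i for i in test2_items if i not in test1_items]
    combined = []
    for rows, items in ((test1_rows, test1_items), (test2_rows, test2_items)):
        for row in rows:
            scored = dict(zip(items, row))
            combined.append([scored.get(item) for item in all_items])
    return all_items, combined


# Hypothetical layout: L1 and L2 are the common (link) items.
test1_items = ["A1", "A2", "L1", "L2"]
test2_items = ["L1", "L2", "B1", "B2"]
test1_rows = [[1, 0, 1, 1], [0, 0, 1, 0]]
test2_rows = [[0, 1, 1, 0]]

all_items, combined = stack_for_joint_calibration(
    test1_rows, test1_items, test2_rows, test2_items
)
print(all_items)
for row in combined:
    print(row)
```

The common items are the only columns with responses from both groups, which is what places all items and respondents on one scale.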

Common person equating method

(figure: Test 1 respondents take Test 1 items; Test 2 respondents take Test 2 items; a group of respondents takes both tests)

Horizontal and Vertical equating

• The terms horizontal and vertical equating have been used to refer to equating tests aimed at the same target level of students (horizontal) and at different target levels of students (vertical).
• Vertical equating is more challenging because of item placements in the tests, and also because of differences in opportunities to learn.

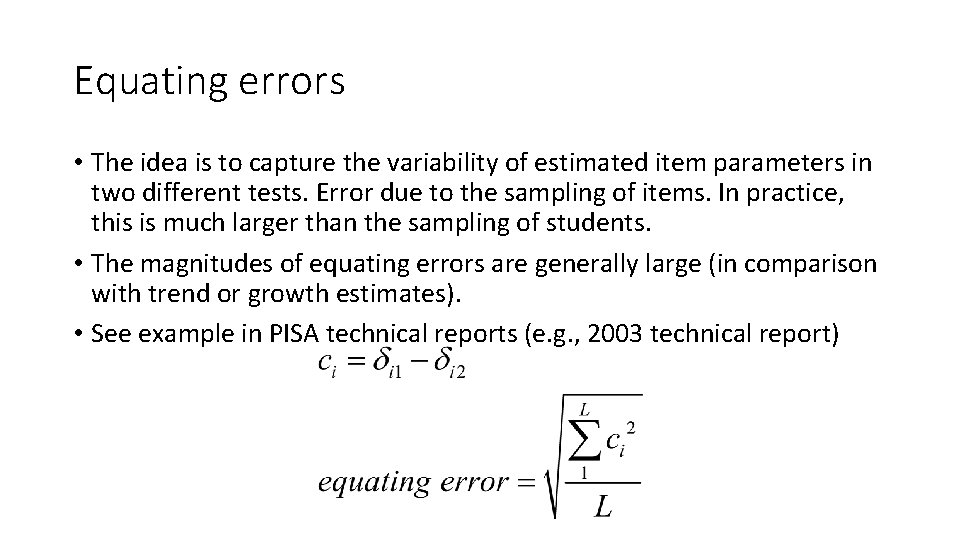

Equating errors

• The idea is to capture the variability of estimated item parameters in two different tests. This is error due to the sampling of items. In practice, it is much larger than the error due to the sampling of students.
• The magnitudes of equating errors are generally large (in comparison with trend or growth estimates).
• See the example in the PISA technical reports (e.g., the 2003 technical report).

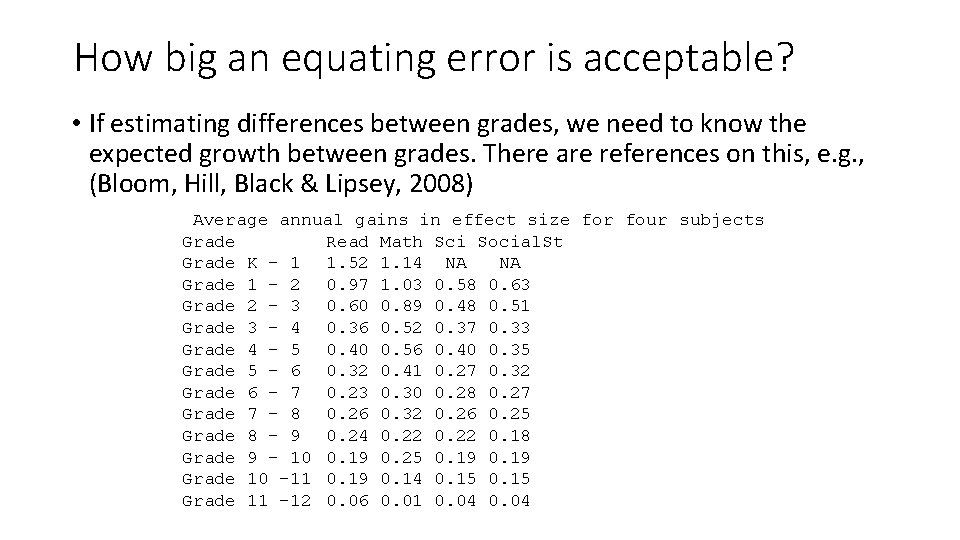
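A rough sketch of how such an error can be quantified, assuming it is summarised as the standard error of the mean difference between the common-item difficulties from the two calibrations. This is a common simplification and may not match the exact formula used in any particular technical report. The data and function names are illustrative.

```python
from math import sqrt
from statistics import stdev


def equating_error(common_b_test1, common_b_test2):
    """Standard error of the mean difference in common-item difficulty."""
    diffs = [b1 - b2 for b1, b2 in zip(common_b_test1, common_b_test2)]
    return stdev(diffs) / sqrt(len(diffs))


def error_for_link_size(sd_of_differences, n_items):
    """Equating error implied by a given spread of differences and number of link items."""
    return sd_of_differences / sqrt(n_items)


# Hypothetical difficulties (logits) of four common items in two calibrations.
test1 = [0.1, -0.3, 0.4, 0.0]
test2 = [0.3, -0.4, 0.1, 0.0]
error = equating_error(test1, test2)
print(round(error, 3))

# With the same spread of differences, more link items give a smaller error.
for n_items in (10, 30, 40):
    print(n_items, round(error_for_link_size(0.2, n_items), 3))
```

Under this assumption, the error depends on the absolute number of common items, not on their share of the test. With a hypothetical spread of 0.2 logit, even 30 link items leave an error of the same order as a cohort change of 0.05 logit.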

How big an equating error is acceptable?

• If estimating differences between grades, we need to know the expected growth between grades. There are references on this, e.g., (Bloom, Hill, Black & Lipsey, 2008).

Average annual gains in effect size for four subjects

| Grade | Read | Math | Sci | Social St |
|---|---|---|---|---|
| Grade K – 1 | 1.52 | 1.14 | NA | NA |
| Grade 1 – 2 | 0.97 | 1.03 | 0.58 | 0.63 |
| Grade 2 – 3 | 0.60 | 0.89 | 0.48 | 0.51 |
| Grade 3 – 4 | 0.36 | 0.52 | 0.37 | 0.33 |
| Grade 4 – 5 | 0.40 | 0.56 | 0.40 | 0.35 |
| Grade 5 – 6 | 0.32 | 0.41 | 0.27 | 0.32 |
| Grade 6 – 7 | 0.23 | 0.30 | 0.28 | 0.27 |
| Grade 7 – 8 | 0.26 | 0.32 | 0.26 | 0.25 |
| Grade 8 – 9 | 0.24 | 0.22 | 0.18 | |
| Grade 9 – 10 | 0.19 | 0.25 | 0.19 | |
| Grade 10 – 11 | 0.19 | 0.14 | 0.15 | |
| Grade 11 – 12 | 0.06 | 0.01 | 0.04 | |

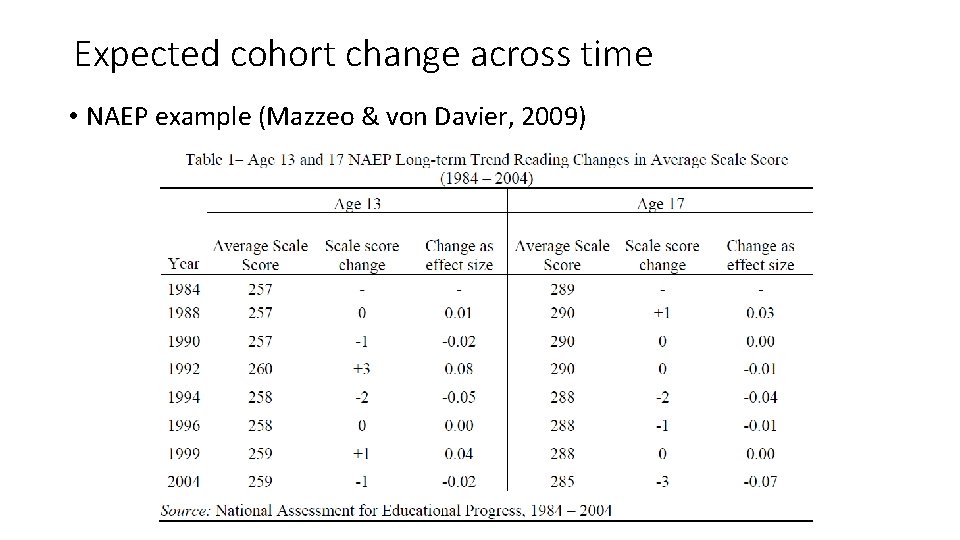

Expected cohort change across time

• NAEP example (Mazzeo & von Davier, 2009)

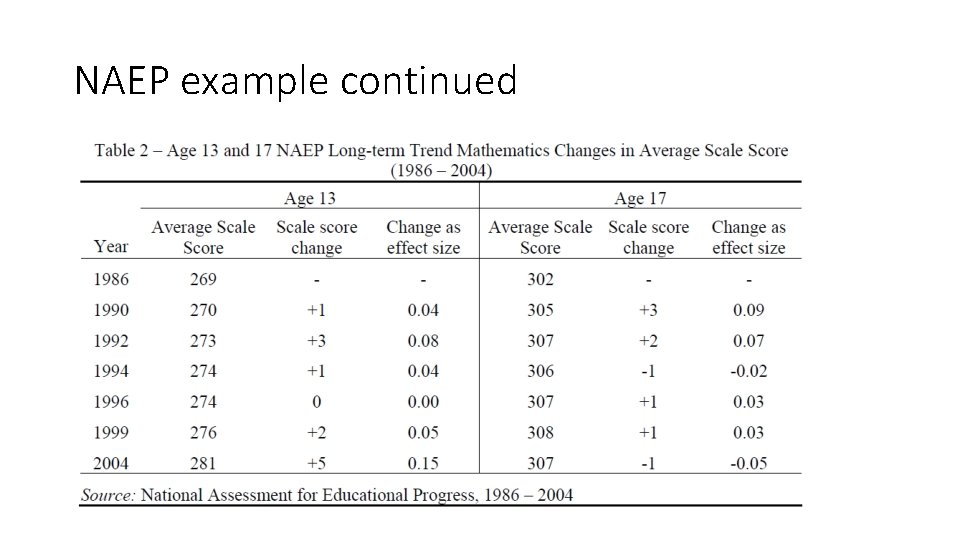

NAEP example continued

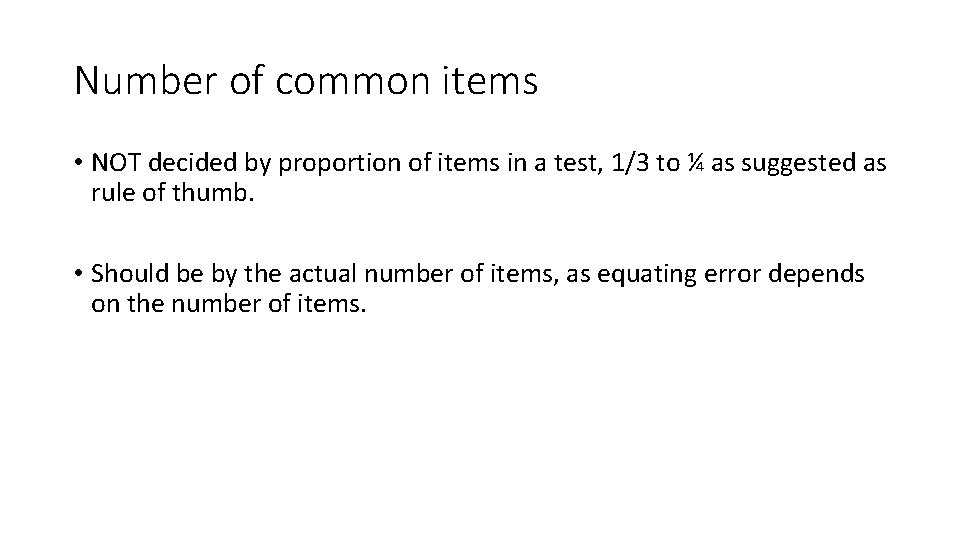

Number of common items

• The number should NOT be decided by the proportion of items in a test (1/4 to 1/3 is often suggested as a rule of thumb).
• It should be decided by the actual number of items, because equating error depends on the number of items.

Challenges in test equating

• Real-life challenges of keeping test items invariant
• Curriculum changes
• Item position effect
• Fatigue
• Sequencing of items
• Balanced test design is essential
• Computer adaptive tests can help reduce item position effect.

Further Exploration

• Equating methods: moments methods and characteristic curve methods
• Moments methods:
  • Mean-sigma (compute the common items' mean and standard deviation)
  • Mean-mean (compute the mean of the difficulty parameters and the mean of the discrimination parameters)
• Characteristic curve methods:
  • Matching item characteristic curves (Haebara)
  • Matching test characteristic curves (Stocking-Lord)
• R package equateIRT
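To make the characteristic curve idea concrete, the sketch below writes out a Stocking-Lord style criterion: the squared difference between the two test characteristic curves of the common items, summed over a grid of ability values. It assumes a two-parameter logistic model and a linear transformation with slope `A` and intercept `B`. The item parameters, grid and function names are illustrative, and a real analysis would minimise this criterion with a numerical optimiser or use a package such as equateIRT.

```python
import math


def icc(theta, a, b):
    """Item characteristic curve under a 2PL model (a = discrimination, b = difficulty)."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def tcc(theta, items):
    """Test characteristic curve: expected score on the given items at ability theta."""
    return sum(icc(theta, a, b) for a, b in items)


def stocking_lord_loss(A, B, items_test1, items_test2, grid):
    """Squared TCC difference after moving Test 2 parameters to the Test 1 scale."""
    transformed = [(a / A, A * b + B) for a, b in items_test2]
    return sum((tcc(t, items_test1) - tcc(t, transformed)) ** 2 for t in grid)


# Hypothetical common items on the Test 1 scale as (a, b) pairs.
items_test1 = [(1.0, -0.5), (1.2, 0.0), (0.8, 1.0)]

# Build Test 2 parameters that differ from Test 1 only by a known linear transformation.
true_A, true_B = 1.25, 0.4
items_test2 = [(a * true_A, (b - true_B) / true_A) for a, b in items_test1]

grid = [x / 4 for x in range(-16, 17)]  # ability values from -4 to 4
loss_true = stocking_lord_loss(true_A, true_B, items_test1, items_test2, grid)
loss_identity = stocking_lord_loss(1.0, 0.0, items_test1, items_test2, grid)
print(loss_true, loss_identity)
```

The criterion is essentially zero at the true transformation and larger when no transformation is applied. The Haebara method uses the same idea but sums squared differences item by item rather than on the whole-test curve.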
