EPS Diagnostic Tools

Renate Hagedorn, European Centre for Medium-Range Weather Forecasts (ECMWF), Reading, 18 May 2004

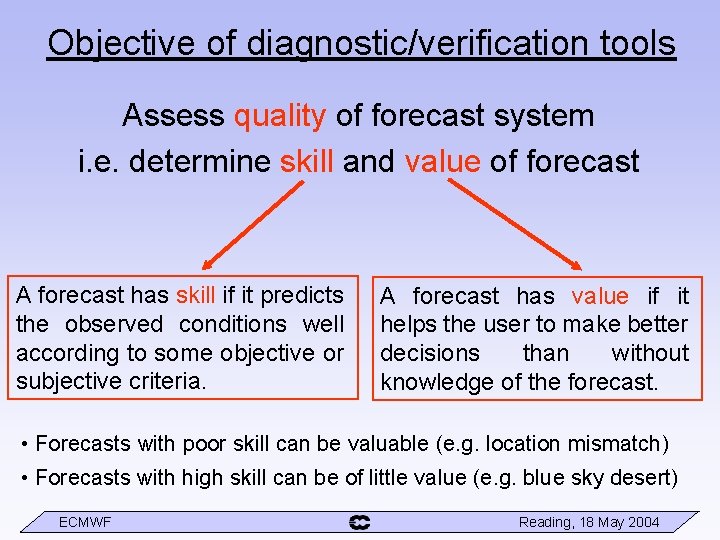

Objective of diagnostic/verification tools
Assess the quality of a forecast system, i.e. determine the skill and value of its forecasts.
A forecast has skill if it predicts the observed conditions well according to some objective or subjective criteria.
A forecast has value if it helps the user to make better decisions than without knowledge of the forecast.
• Forecasts with poor skill can be valuable (e.g. location mismatch)
• Forecasts with high skill can be of little value (e.g. blue sky desert)

Ensemble Prediction System
• 1 control run + 50 perturbed runs (T255L40): an added dimension of ensemble members, f(x, y, z, t, e)
• How do we deal with this added dimension when interpreting, verifying and diagnosing EPS output?

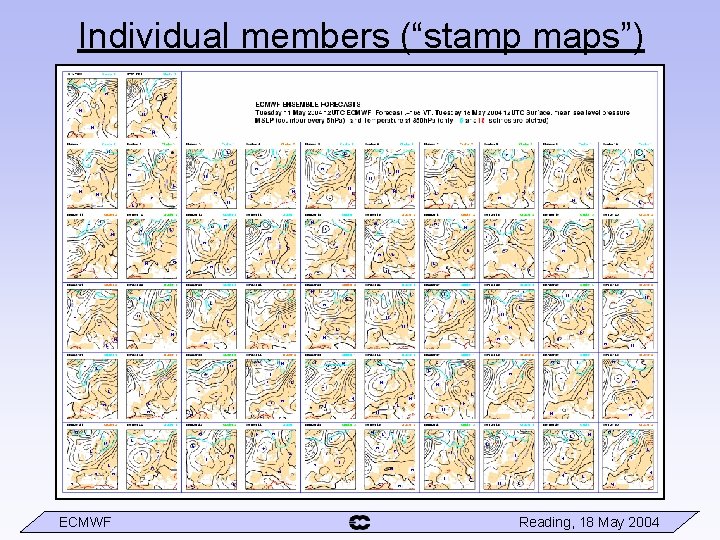

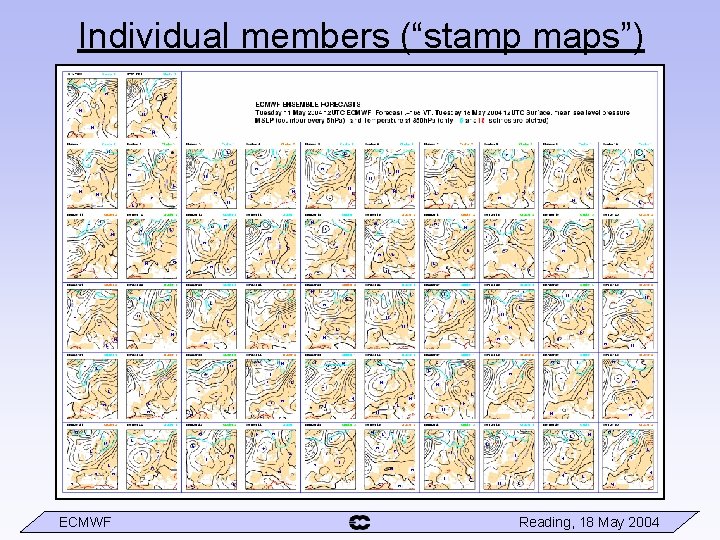

Individual members (“stamp maps”)

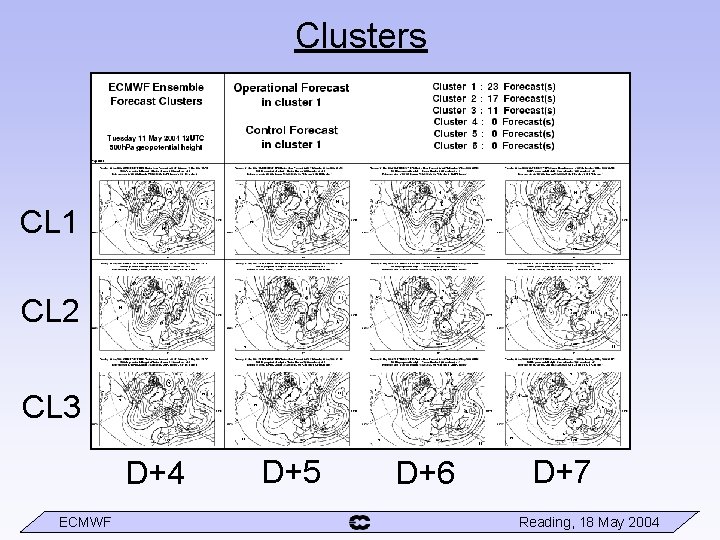

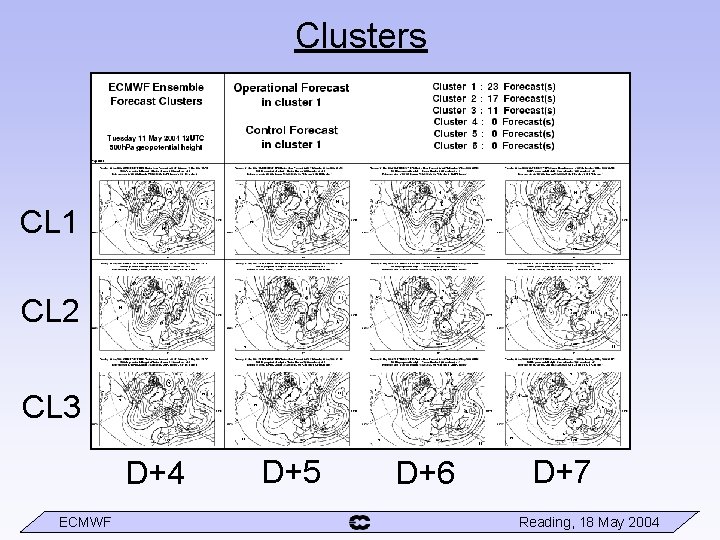

Clusters
[Figure: clusters CL 1, CL 2 and CL 3 at D+4, D+5, D+6 and D+7]

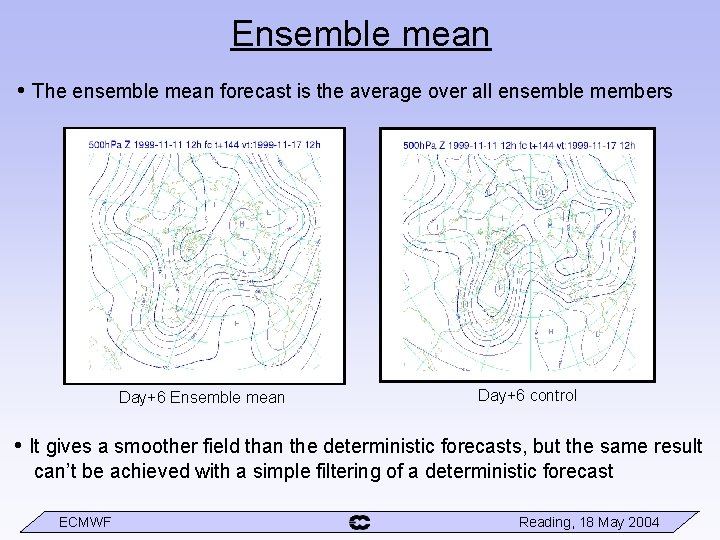

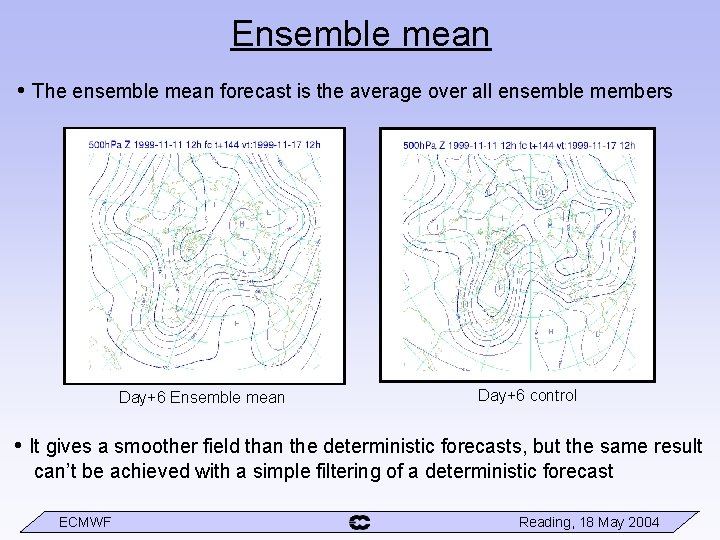

Ensemble mean
• The ensemble mean (EM) forecast is the average over all ensemble members
[Figure panels: Day+6 ensemble mean; Day+6 control]
• It gives a smoother field than the deterministic forecasts, but the same result cannot be achieved by simply filtering a deterministic forecast

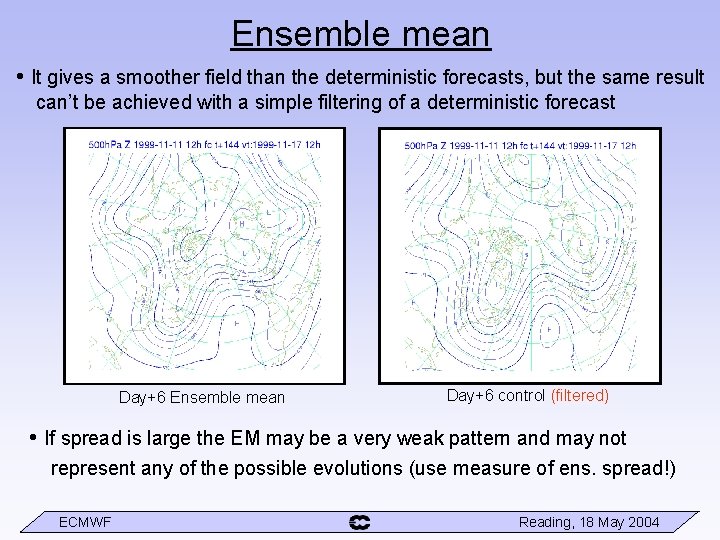

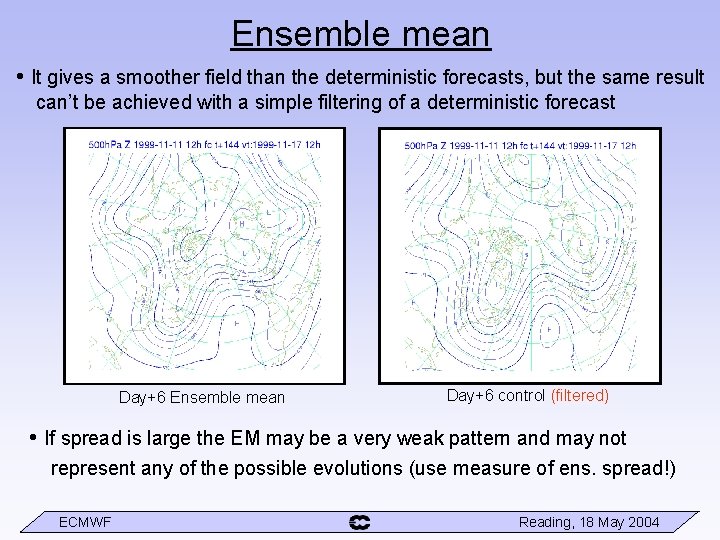

Ensemble mean
• It gives a smoother field than the deterministic forecasts, but the same result cannot be achieved by simply filtering a deterministic forecast
[Figure panels: Day+6 ensemble mean; Day+6 control (filtered)]
• If the spread is large, the EM may be a very weak pattern and may not represent any of the possible evolutions (use a measure of ensemble spread!)
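A minimal sketch of the ensemble mean and a spread measure, assuming the members are held in a NumPy array with the ensemble dimension e on axis 0, and using the standard deviation across members as the spread (one common choice; the slides do not say which spread measure is meant). The function name `ensemble_mean_and_spread` and the toy values are hypothetical.

```python
import numpy as np


def ensemble_mean_and_spread(members):
    """members: array of shape (n_members, ...); axis 0 is the ensemble
    dimension e of f(x, y, z, t, e). Returns (ensemble mean, spread)."""
    members = np.asarray(members, dtype=float)
    mean = members.mean(axis=0)            # ensemble mean (EM)
    spread = members.std(axis=0, ddof=0)   # standard deviation across members
    return mean, spread


# Two grid points, four members each (toy numbers).
# Point 1: all members agree. Point 2: two clusters at -10 and +10.
ens = np.array([[5.0, -10.0],
                [5.0, -10.0],
                [5.0,  10.0],
                [5.0,  10.0]])
em, sd = ensemble_mean_and_spread(ens)
print(em, sd)
```

At the second grid point the EM (0) lies between the two clusters and matches neither of them; only the large spread reveals that the mean is not a likely evolution.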

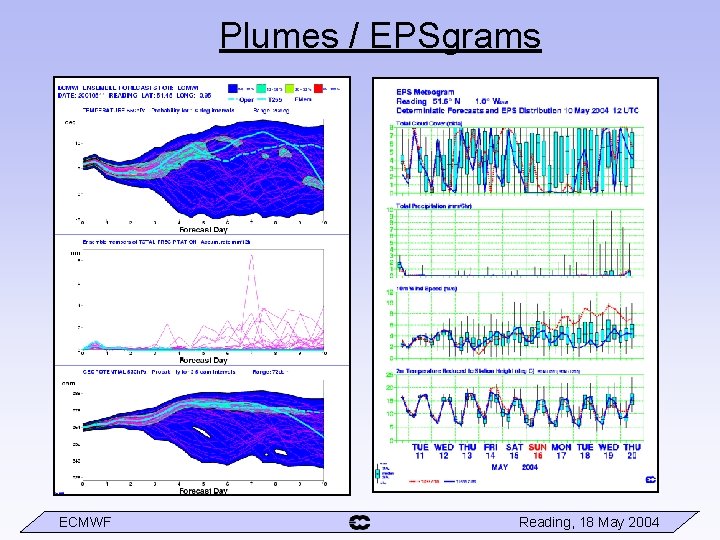

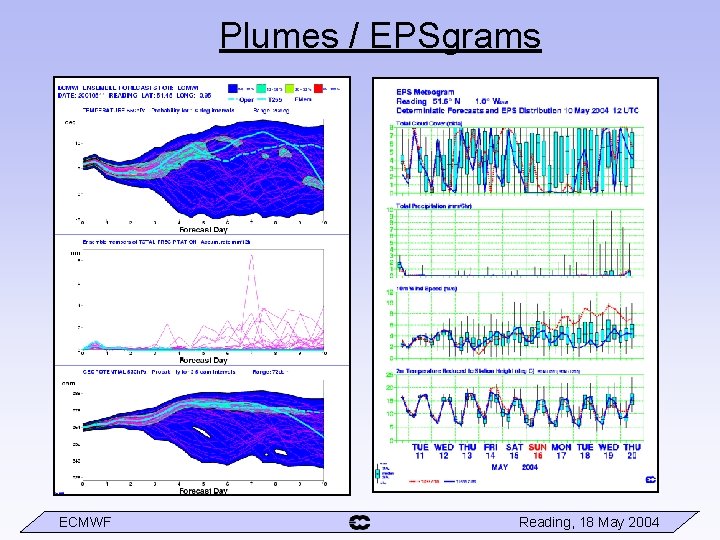

Plumes / EPSgrams

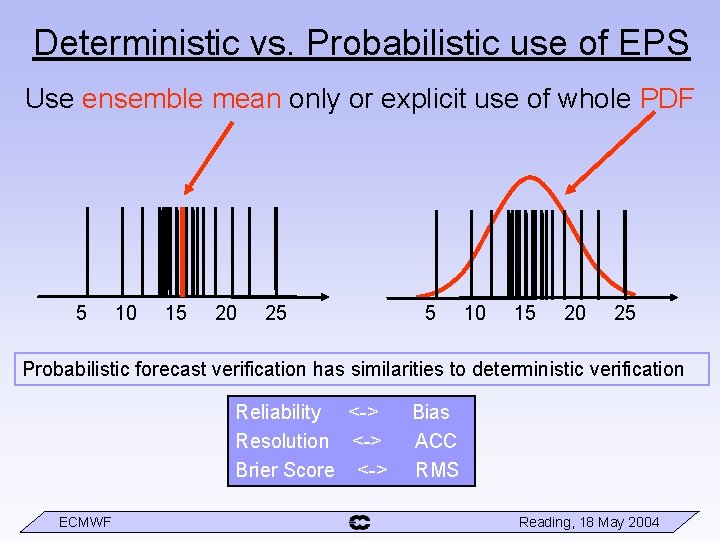

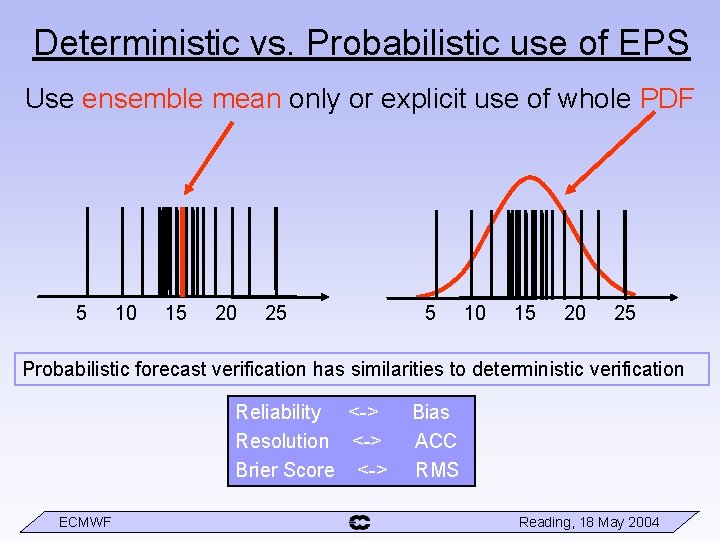

Deterministic vs. probabilistic use of the EPS
• Use the ensemble mean only, or make explicit use of the whole PDF
• Probabilistic forecast verification has similarities to deterministic verification:
  Reliability <-> Bias
  Resolution <-> ACC
  Brier Score <-> RMS

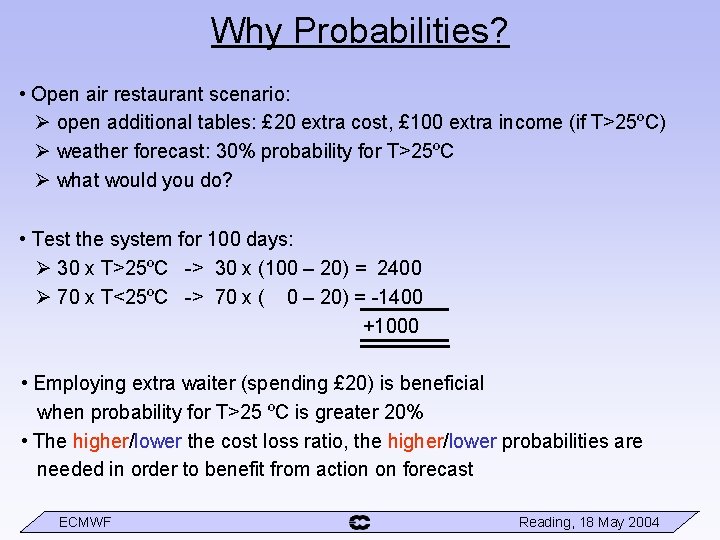

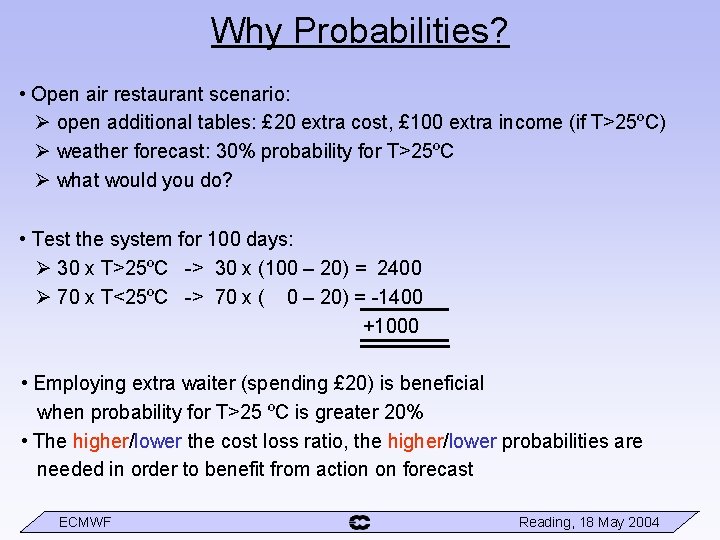

Why probabilities?
• Open-air restaurant scenario: opening additional tables costs £20 extra and brings £100 extra income (if T > 25 °C). The weather forecast gives a 30% probability for T > 25 °C. What would you do?
• Test the system for 100 days:
  30 days with T > 25 °C: 30 × (100 − 20) = 2400
  70 days with T < 25 °C: 70 × (0 − 20) = −1400
  Total: +1000
• Employing an extra waiter (spending £20) is beneficial when the probability for T > 25 °C is greater than 20%
• The higher/lower the cost-loss ratio, the higher/lower the probabilities needed to benefit from acting on the forecast
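The restaurant arithmetic can be checked in a few lines. This sketch assumes, as in the scenario, a fixed £20 cost paid on every day the tables are opened and £100 extra income only on days with T > 25 °C; `total_profit` and `worth_acting` are hypothetical helper names.

```python
def total_profit(n_days, n_warm, cost=20.0, income=100.0):
    """Profit from opening extra tables on all n_days days,
    when T > 25 C occurs on n_warm of them."""
    n_cool = n_days - n_warm
    return n_warm * (income - cost) + n_cool * (0.0 - cost)


def worth_acting(prob, cost=20.0, income=100.0):
    """Expected daily profit prob * income - cost is positive
    only when prob exceeds the cost-loss ratio cost / income."""
    return prob * income - cost > 0


print(total_profit(100, 30))     # 30 warm days out of 100
print(20.0 / 100.0)              # break-even probability (cost-loss ratio)
print(worth_acting(0.3), worth_acting(0.15))
```

The break-even probability is simply C/L = 20/100, which is why the user's cost-loss ratio sets the probability threshold for acting.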

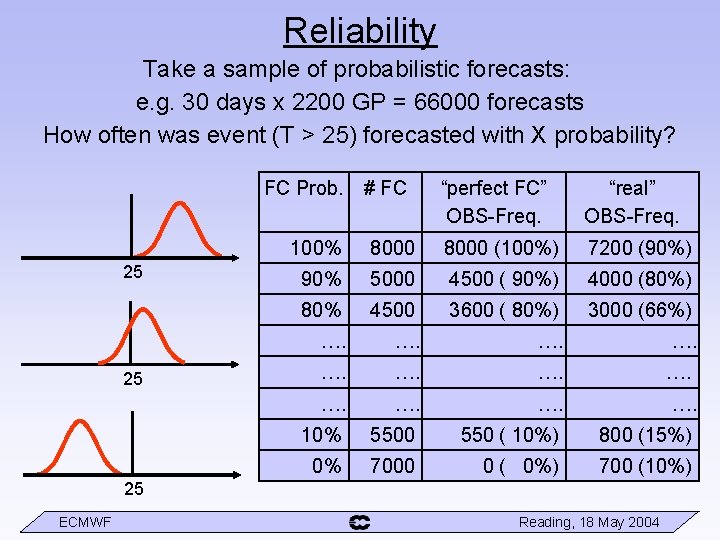

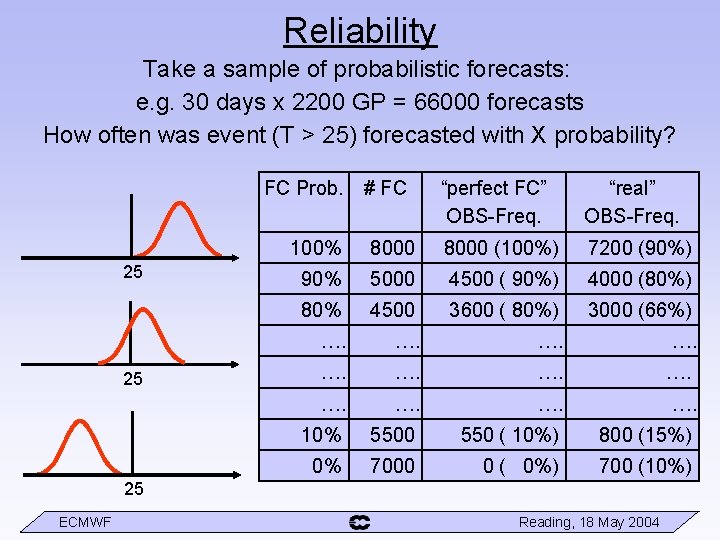

Reliability
Take a sample of probabilistic forecasts: e.g. 30 days × 2200 grid points (GP) = 66000 forecasts. How often was the event (T > 25) forecast with probability X?

| FC Prob. | # FC | “perfect FC” OBS-Freq. | “real” OBS-Freq. |
|---|---|---|---|
| 100% | 8000 | 8000 (100%) | 7200 (90%) |
| 90% | 5000 | 4500 (90%) | 4000 (80%) |
| 80% | 4500 | 3600 (80%) | 3000 (66%) |
| …. | …. | …. | …. |
| 10% | 5500 | 550 (10%) | 800 (15%) |
| 0% | 7000 | 0 (0%) | 700 (10%) |

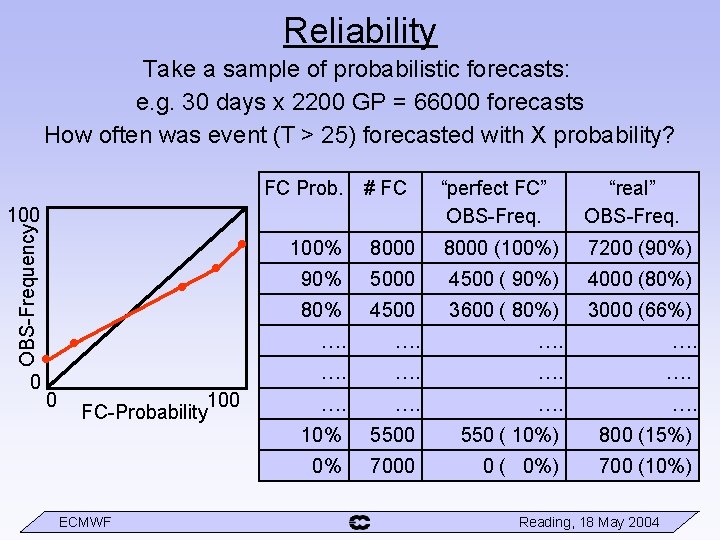

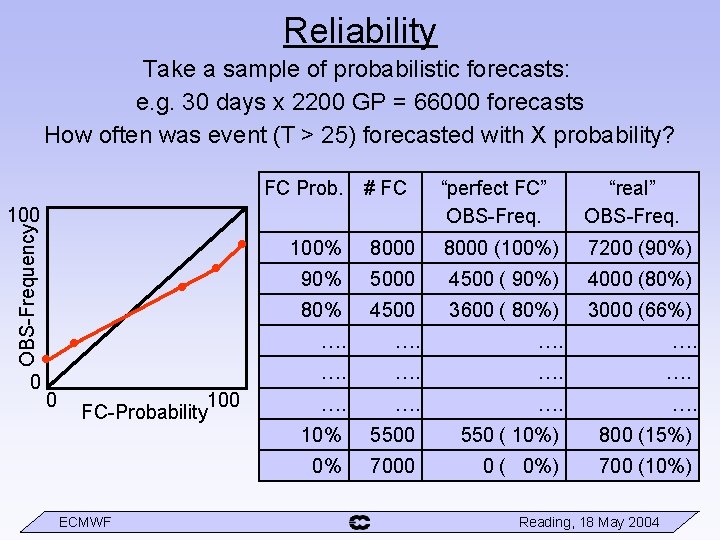

Reliability (continued)
The same sample, plotted as observed frequency against forecast probability.
[Figure: OBS-Frequency (%) against FC-Probability (%), both axes 0–100]
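A sketch of how the points of a reliability diagram can be computed, assuming the forecast probabilities already take only a few discrete bin values (as with the 0%, 10%, …, 100% rows of the table). `reliability_table` is a hypothetical name; the example rebuilds the 90% and 10% rows of the “real” column (4000 of 5000 and 800 of 5500 events observed).

```python
import numpy as np


def reliability_table(probs, obs, bins):
    """Return (n_i, o_i) for each probability bin value f_i in `bins`.

    probs: forecast probabilities, assumed to take only the values in `bins`
    obs:   0/1 outcomes (1 = event observed)
    o_i is the observed relative frequency in bin i (NaN if the bin is empty)."""
    probs = np.asarray(probs, dtype=float)
    obs = np.asarray(obs, dtype=float)
    counts, freqs = [], []
    for f in bins:
        in_bin = np.isclose(probs, f)
        n = int(in_bin.sum())
        counts.append(n)
        freqs.append(obs[in_bin].mean() if n > 0 else np.nan)
    return np.array(counts), np.array(freqs)


# 5000 forecasts of 90% (4000 events) and 5500 forecasts of 10% (800 events).
probs = np.repeat([0.9, 0.1], [5000, 5500])
obs = np.concatenate([np.repeat([1, 0], [4000, 1000]),
                      np.repeat([1, 0], [800, 4700])])
n_i, o_i = reliability_table(probs, obs, bins=[0.1, 0.9])
print(n_i, o_i)
```

Plotting `o_i` against the bin values gives the reliability curve; points on the diagonal correspond to the “perfect FC” column.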

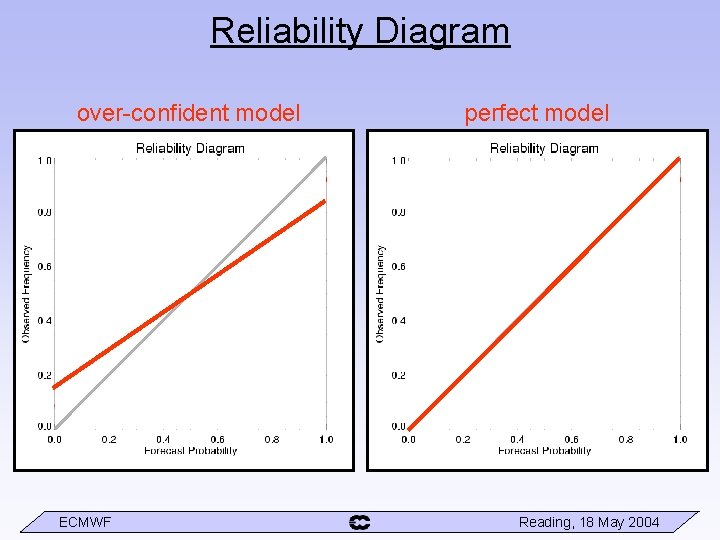

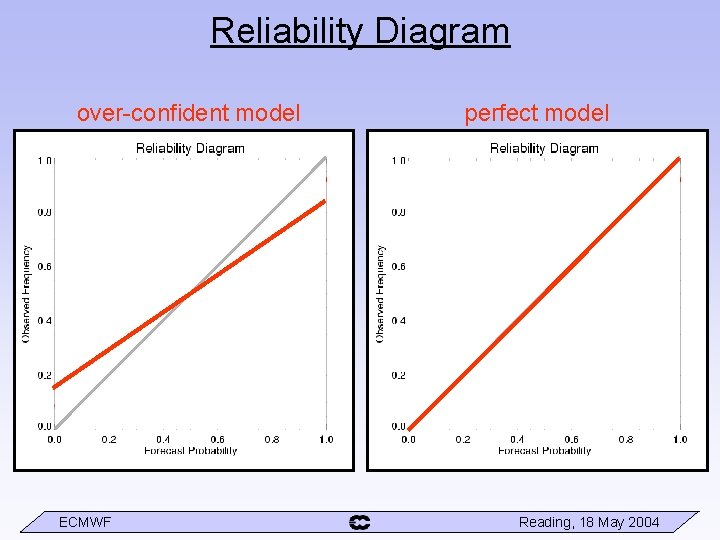

Reliability diagram: over-confident model vs. perfect model

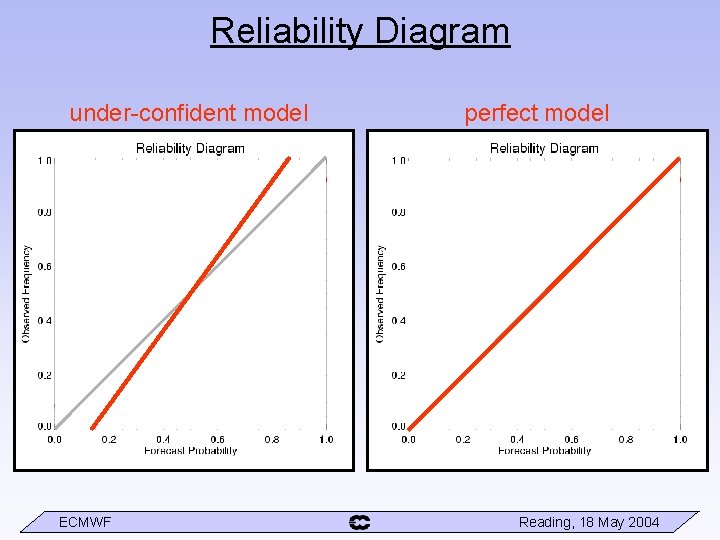

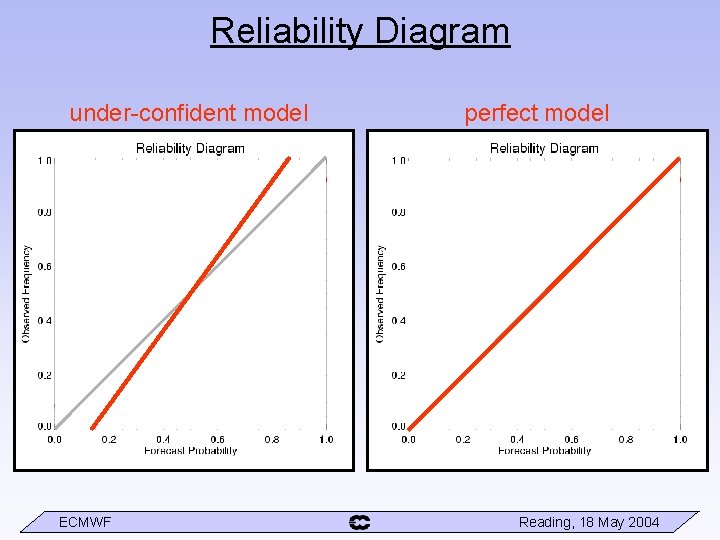

Reliability diagram: under-confident model vs. perfect model

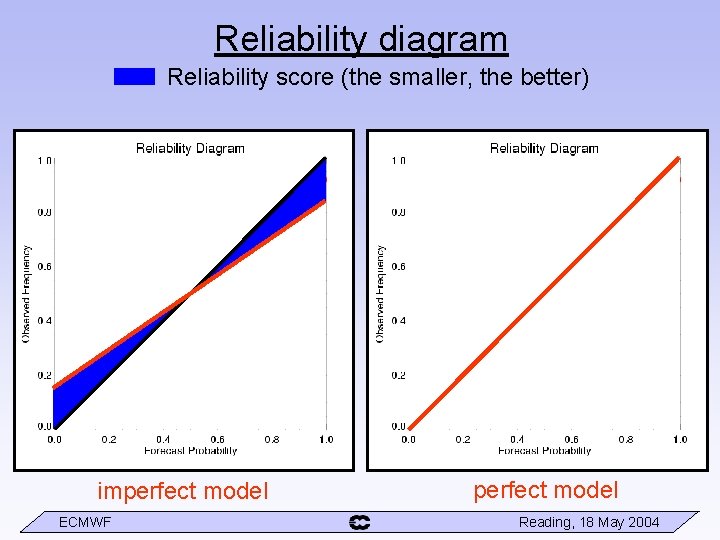

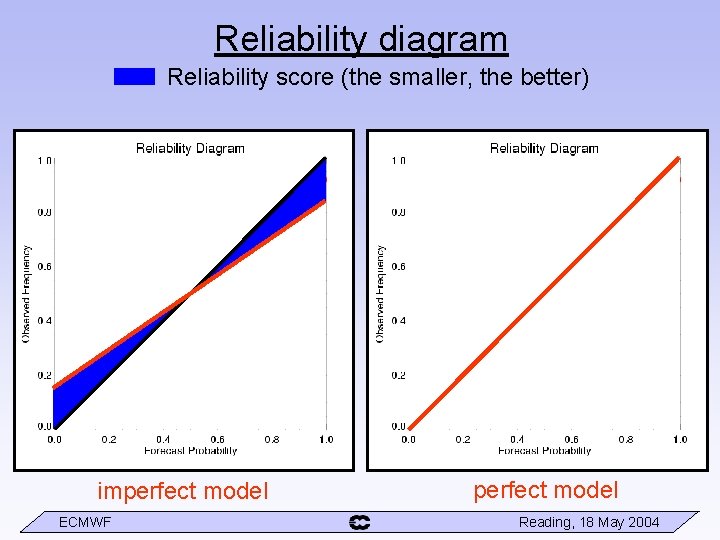

Reliability diagram
• Reliability score (the smaller, the better)
[Figure panels: imperfect model; perfect model]

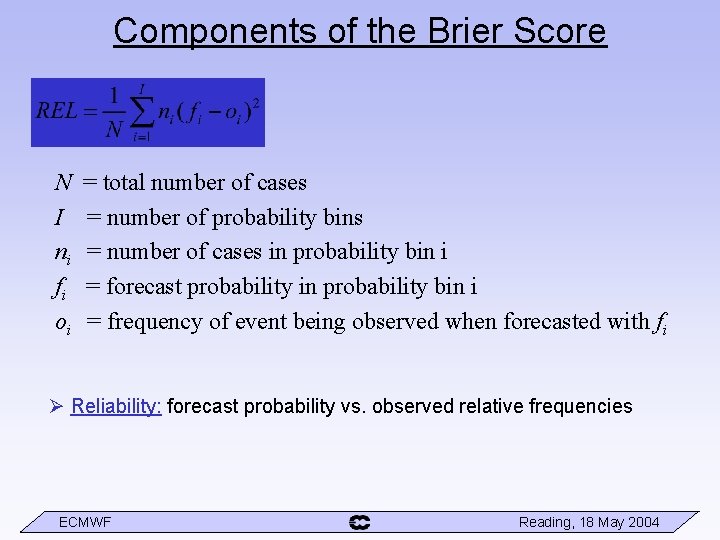

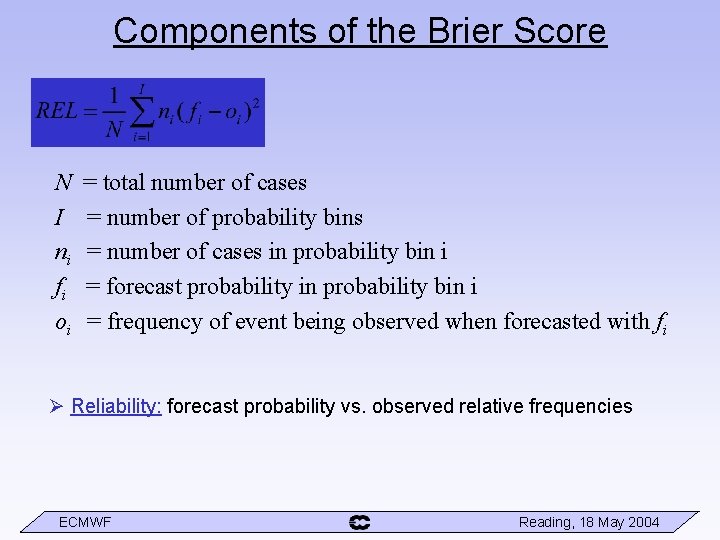

Components of the Brier Score
• $N$ = total number of cases
• $I$ = number of probability bins
• $n_i$ = number of cases in probability bin $i$
• $f_i$ = forecast probability in probability bin $i$
• $o_i$ = frequency of the event being observed when forecast with $f_i$
Reliability: forecast probability vs. observed relative frequencies

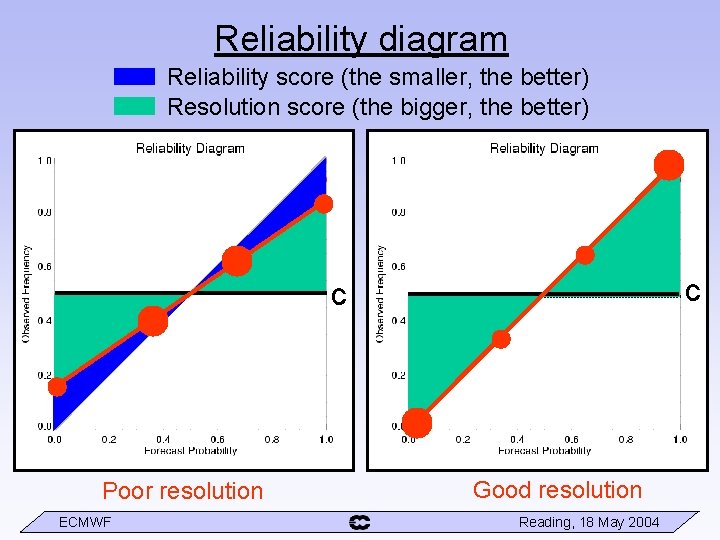

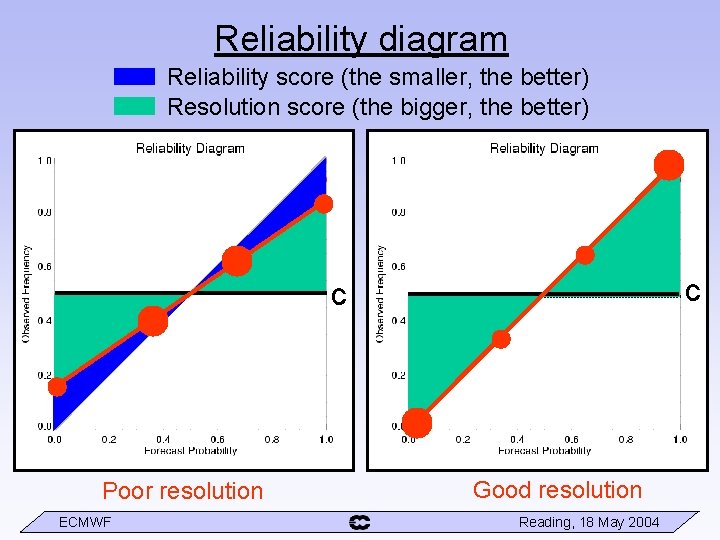

Reliability diagram
• Reliability score (the smaller, the better)
• Resolution score (the bigger, the better)
[Figure panels: poor resolution; good resolution (the sample climatological frequency c is marked on both)]

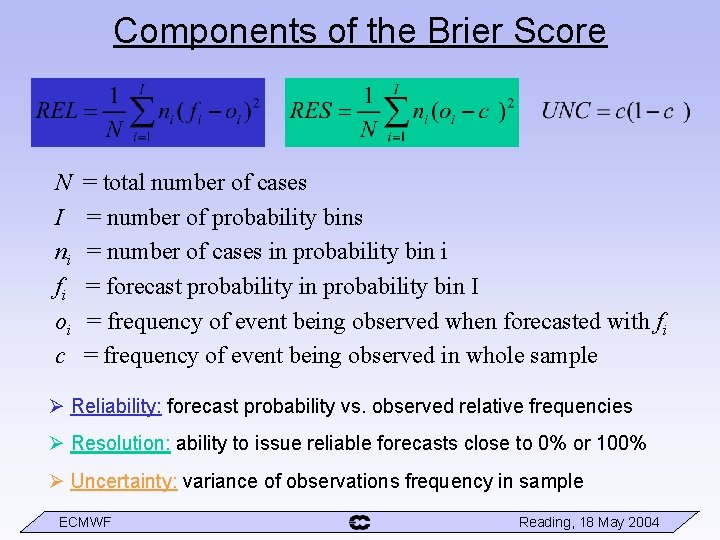

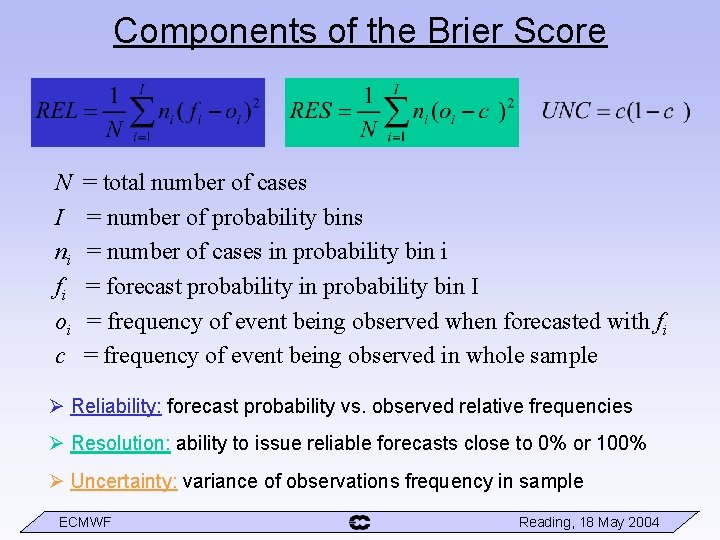

Components of the Brier Score
• $N$ = total number of cases
• $I$ = number of probability bins
• $n_i$ = number of cases in probability bin $i$
• $f_i$ = forecast probability in probability bin $i$
• $o_i$ = frequency of the event being observed when forecast with $f_i$
• $c$ = frequency of the event being observed in the whole sample
Reliability: forecast probability vs. observed relative frequencies
Resolution: ability to issue reliable forecasts close to 0% or 100%
Uncertainty: variance of the observations in the sample, $c(1-c)$
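With the symbols defined above, the standard decomposition of the Brier score reads $BS = \frac{1}{N}\sum_{i=1}^{I} n_i (f_i - o_i)^2 - \frac{1}{N}\sum_{i=1}^{I} n_i (o_i - c)^2 + c(1-c)$, i.e. Reliability − Resolution + Uncertainty. The sketch below computes the three terms, assuming one bin per distinct forecast value so that the identity holds exactly; `brier_decomposition` and the toy data are hypothetical.

```python
import numpy as np


def brier_decomposition(probs, obs):
    """Return (BS, REL, RES, UNC), binning by distinct forecast values,
    so that BS == REL - RES + UNC up to rounding."""
    probs = np.asarray(probs, dtype=float)
    obs = np.asarray(obs, dtype=float)
    N = probs.size
    c = obs.mean()                        # sample climatological frequency
    bs = np.mean((probs - obs) ** 2)
    rel = res = 0.0
    for f_i in np.unique(probs):          # bins i = 1..I
        in_bin = probs == f_i
        n_i = in_bin.sum()
        o_i = obs[in_bin].mean()          # observed frequency in bin i
        rel += n_i * (f_i - o_i) ** 2
        res += n_i * (o_i - c) ** 2
    return bs, rel / N, res / N, c * (1.0 - c)


bs, rel, res, unc = brier_decomposition([0.1, 0.1, 0.9, 0.9, 0.9],
                                        [0, 1, 1, 1, 0])
print(bs, rel, res, unc, rel - res + unc)
```

If probabilities are grouped into coarser bins than their distinct values, the identity is only approximate, which is worth keeping in mind when comparing published reliability and resolution values.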

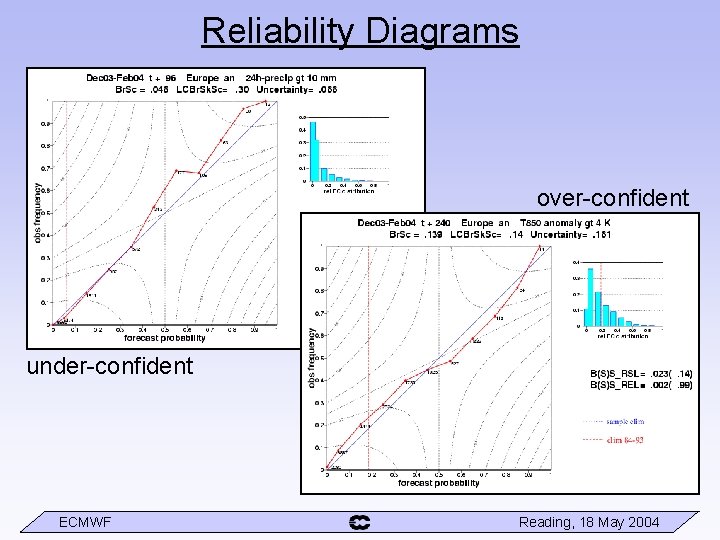

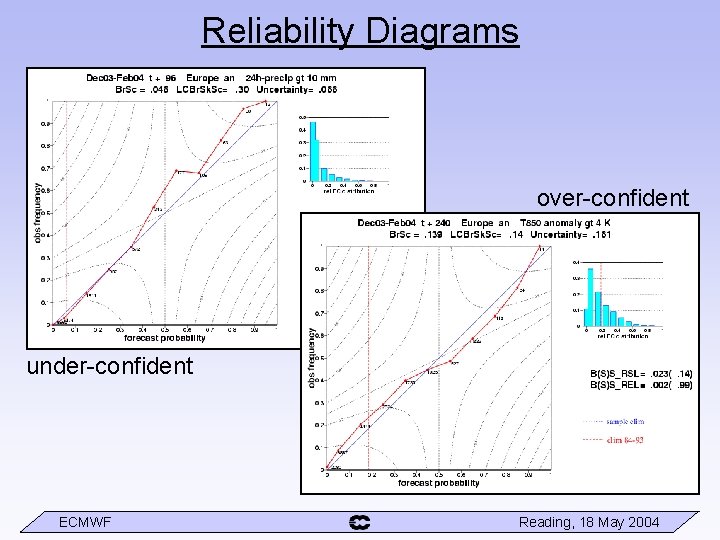

Reliability diagrams: over-confident vs. under-confident

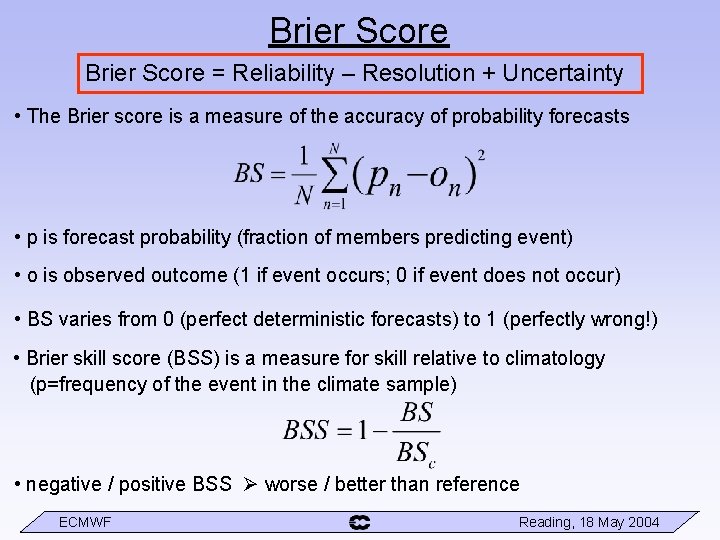

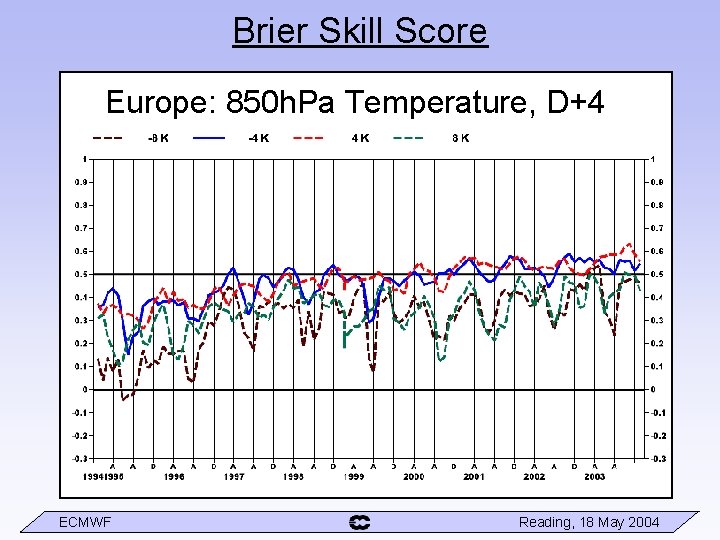

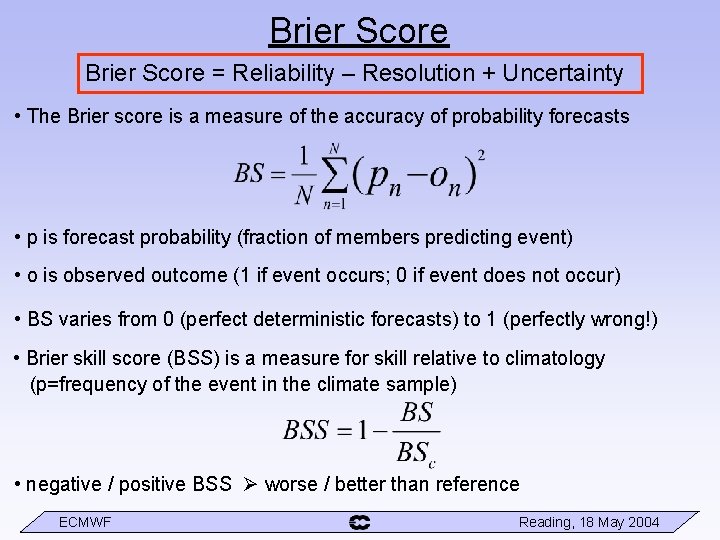

Brier Score = Reliability − Resolution + Uncertainty
• The Brier score is a measure of the accuracy of probability forecasts: $BS = \frac{1}{N}\sum_{k=1}^{N}(p_k - o_k)^2$
• p is the forecast probability (fraction of members predicting the event)
• o is the observed outcome (1 if the event occurs; 0 if it does not)
• BS varies from 0 (perfect deterministic forecasts) to 1 (perfectly wrong!)
• The Brier skill score, $BSS = 1 - BS/BS_{clim}$, is a measure of skill relative to climatology (for $BS_{clim}$, p = frequency of the event in the climate sample)
• Negative / positive BSS: worse / better than the reference
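A minimal sketch of BS and BSS, assuming the climatological reference is the constant event frequency of the verifying sample itself (a long-term climate sample could be used instead). The function names and the member counts below are hypothetical; a 50-member set is used only to keep the fractions round.

```python
import numpy as np


def brier_score(p, o):
    """Mean squared difference between forecast probability p and outcome o (0/1)."""
    p = np.asarray(p, dtype=float)
    o = np.asarray(o, dtype=float)
    return np.mean((p - o) ** 2)


def brier_skill_score(p, o):
    """BSS = 1 - BS / BS_clim, with the sample event frequency as the
    constant reference probability (assumption, see lead-in)."""
    o = np.asarray(o, dtype=float)
    bs_clim = brier_score(np.full(o.shape, o.mean()), o)
    return 1.0 - brier_score(p, o) / bs_clim


# p = fraction of members predicting the event, for four cases.
members_predicting = np.array([45, 5, 40, 10])
p = members_predicting / 50.0
o = np.array([1, 0, 1, 0])
print(brier_score(p, o), brier_skill_score(p, o))
```

With the sample climatology as reference, $BS_{clim}$ equals the uncertainty term $c(1-c)$ of the decomposition.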

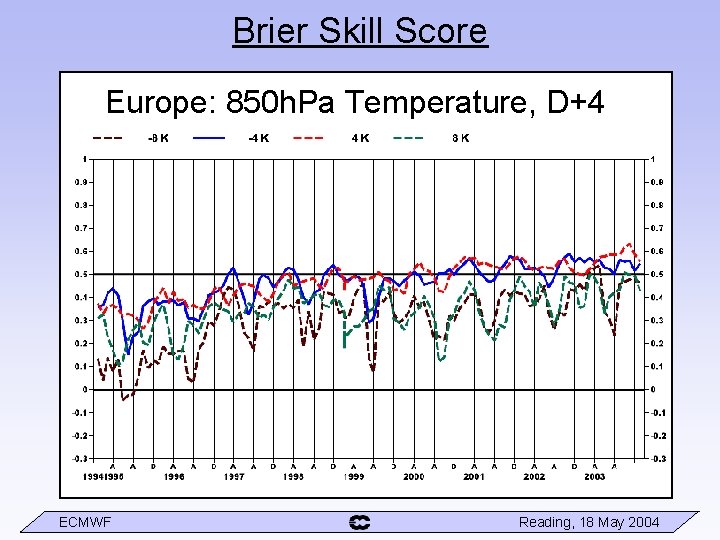

Brier Skill Score, Europe: 850 hPa temperature, D+4

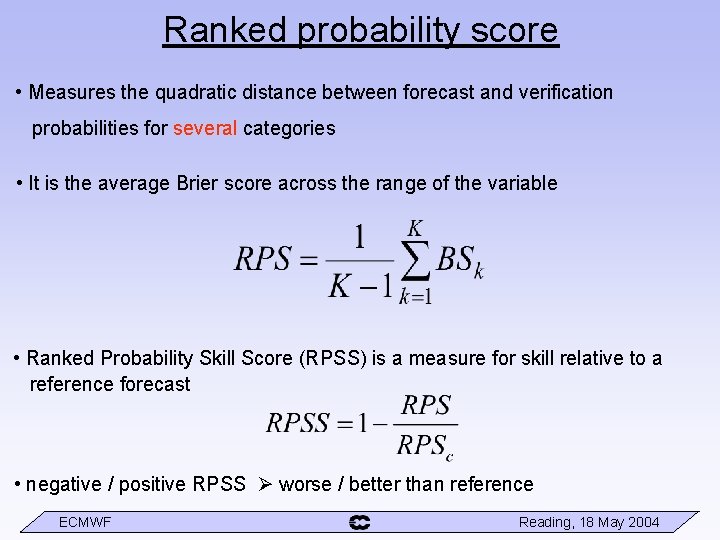

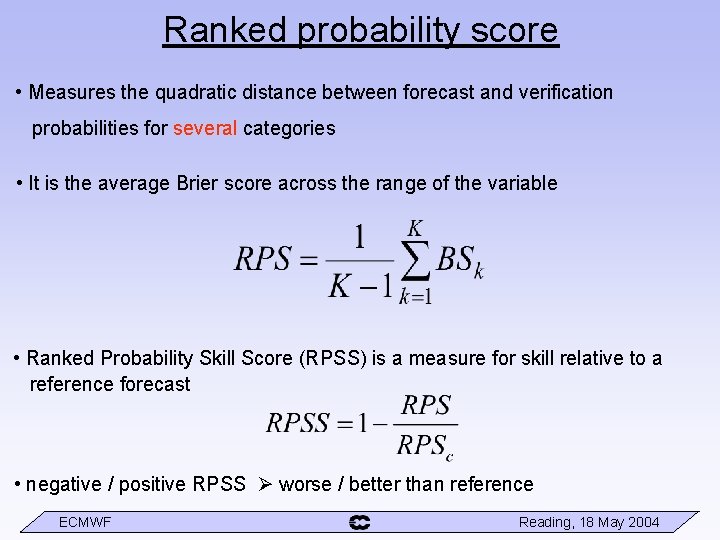

Ranked probability score
• Measures the quadratic distance between forecast and verification probabilities for several categories
• It is the average Brier score across the range of the variable (i.e. over the cumulative threshold events)
• The Ranked Probability Skill Score (RPSS) is a measure of skill relative to a reference forecast
• Negative / positive RPSS: worse / better than the reference

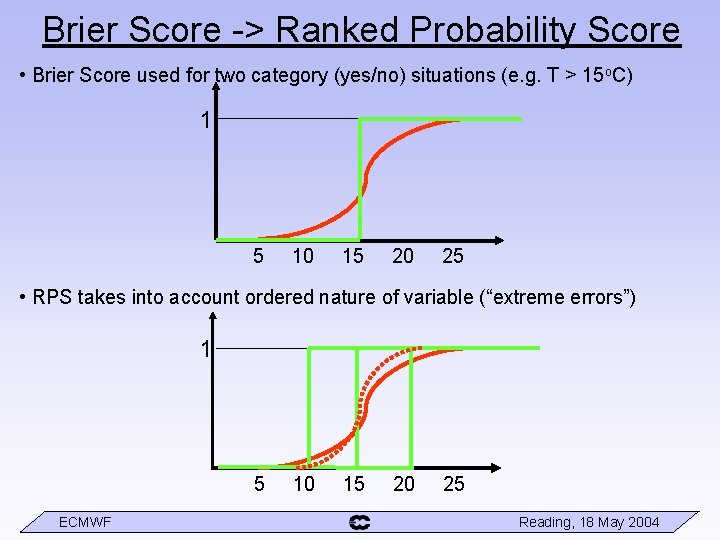

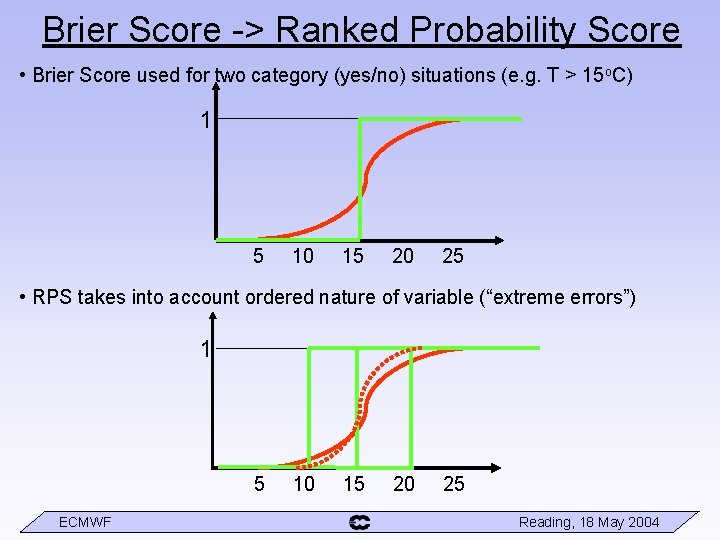

Brier Score -> Ranked Probability Score
• The Brier Score is used for two-category (yes/no) situations (e.g. T > 15 °C)
• The RPS takes into account the ordered nature of the variable (“extreme errors”)
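A sketch of the RPS for a single forecast over M ordered categories, using cumulative probabilities. It assumes the common normalisation by (M − 1), which makes the RPS the average Brier score of the M − 1 cumulative threshold events; other normalisations exist. `rps` and `rpss` are hypothetical names, and a sample score would be the mean over many forecasts.

```python
import numpy as np


def rps(forecast_probs, observed_category):
    """Ranked probability score for one forecast (requires M >= 2).

    forecast_probs:    probabilities for M ordered categories (summing to 1)
    observed_category: index 0..M-1 of the category that occurred"""
    p = np.asarray(forecast_probs, dtype=float)
    m = p.size
    obs = np.zeros(m)
    obs[observed_category] = 1.0
    cum_p = np.cumsum(p)      # forecast cumulative distribution
    cum_o = np.cumsum(obs)    # observed cumulative distribution (step function)
    return np.sum((cum_p - cum_o) ** 2) / (m - 1)


def rpss(rps_forecast, rps_reference):
    """Skill relative to a reference forecast: < 0 worse, > 0 better."""
    return 1.0 - rps_forecast / rps_reference


# Observed: category 0. A forecast one category off is penalised less
# than one two categories off, although a Brier score for the event
# "category 0" would treat both as equally wrong.
print(rps([0, 1, 0], 0), rps([0, 0, 1], 0))
```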

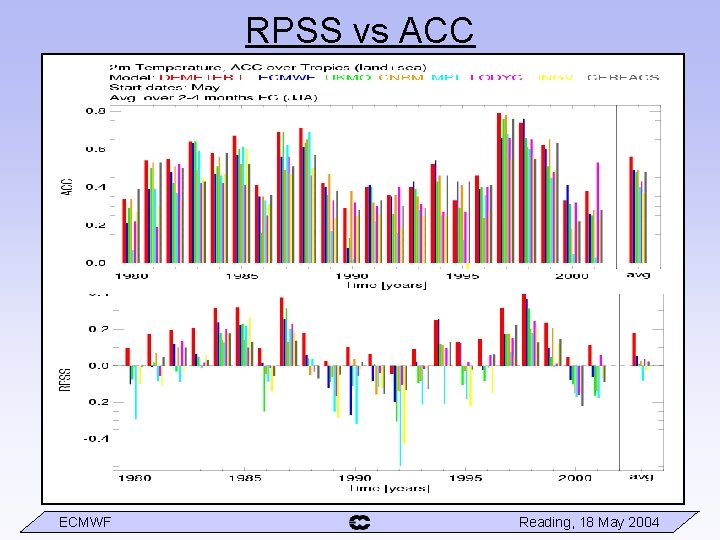

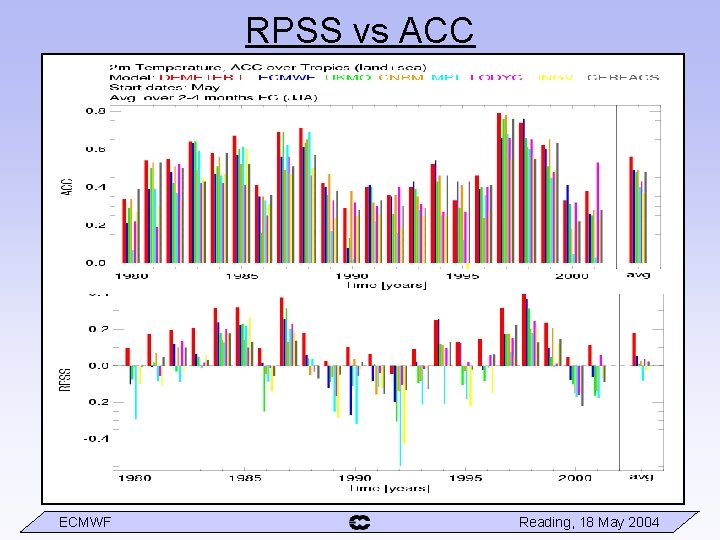

RPSS vs. ACC

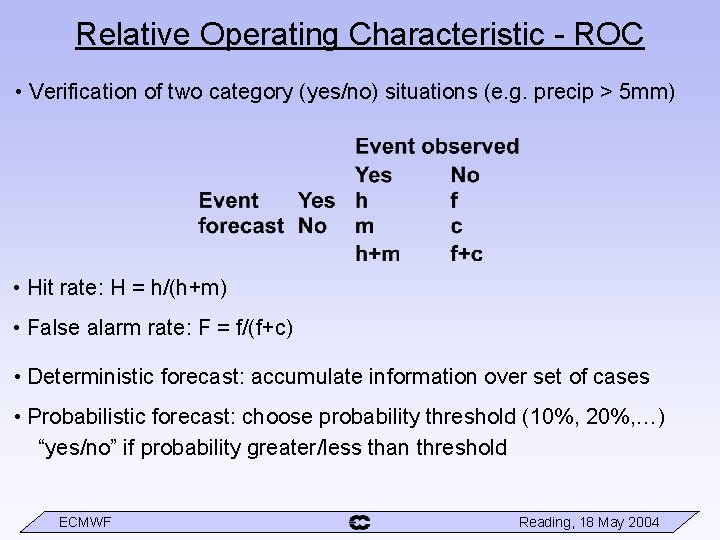

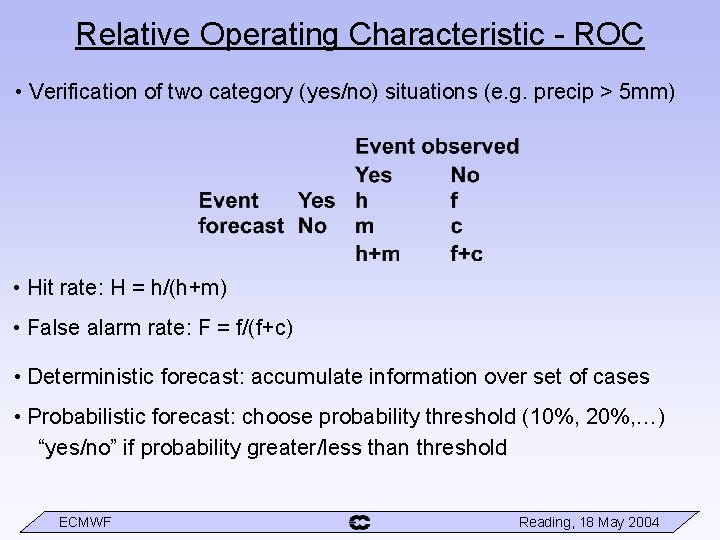

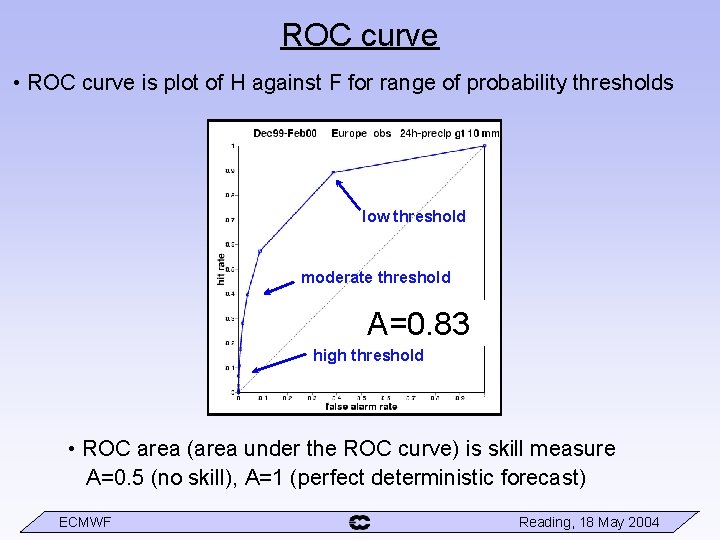

Relative Operating Characteristic (ROC)
• Verification of two-category (yes/no) situations (e.g. precip > 5 mm)
• Hit rate: H = h/(h+m) (h = hits, m = misses)
• False alarm rate: F = f/(f+c) (f = false alarms, c = correct rejections)
• Deterministic forecast: accumulate information over a set of cases
• Probabilistic forecast: choose a probability threshold (10%, 20%, …) and forecast “yes”/“no” if the probability is greater/less than the threshold
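A sketch of turning probability forecasts into yes/no forecasts at one threshold and computing H and F from the resulting 2×2 contingency table. It assumes a probability exactly equal to the threshold counts as “no”; `hit_and_false_alarm_rate` and the data are hypothetical, and the sample must contain both events and non-events.

```python
import numpy as np


def hit_and_false_alarm_rate(probs, obs, threshold):
    """Forecast 'yes' where prob > threshold; return (H, F)."""
    probs = np.asarray(probs, dtype=float)
    obs = np.asarray(obs, dtype=bool)
    yes = probs > threshold
    h = np.sum(yes & obs)      # hits
    m = np.sum(~yes & obs)     # misses
    f = np.sum(yes & ~obs)     # false alarms
    c = np.sum(~yes & ~obs)    # correct rejections
    return h / (h + m), f / (f + c)


probs = [0.9, 0.6, 0.3, 0.1, 0.7, 0.2]
obs = [1, 1, 1, 0, 0, 0]
H, F = hit_and_false_alarm_rate(probs, obs, 0.5)
print(H, F)
```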

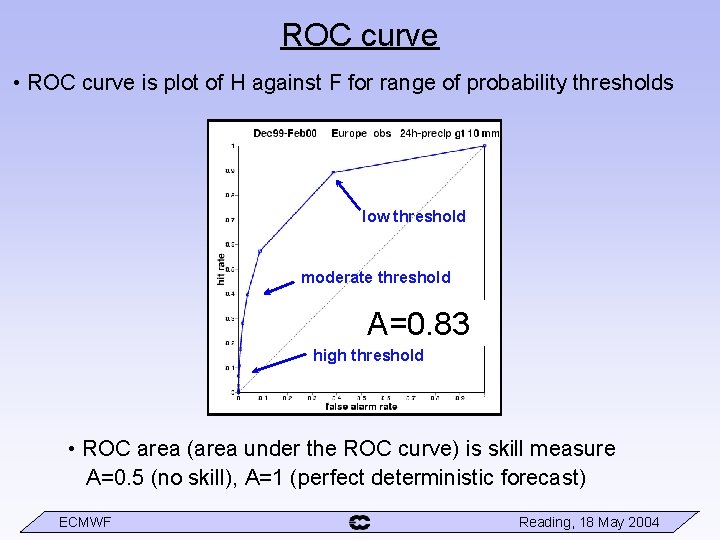

ROC curve
• The ROC curve is a plot of H against F for a range of probability thresholds
[Figure: ROC curve with points for low, moderate and high thresholds; A = 0.83]
• ROC area (area under the ROC curve) is a skill measure: A = 0.5 (no skill), A = 1 (perfect deterministic forecast)
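A sketch of building ROC points from a set of probability thresholds and estimating the ROC area with the trapezoidal rule, after adding the end points (0, 0) and (1, 1). Trapezoidal integration is one common choice (fitted-curve methods also exist); the threshold list and data are hypothetical.

```python
import numpy as np


def roc_points(probs, obs, thresholds):
    """Hit rate H and false alarm rate F for each threshold ('yes' if prob > t)."""
    probs = np.asarray(probs, dtype=float)
    obs = np.asarray(obs, dtype=bool)
    H, F = [], []
    for t in thresholds:
        yes = probs > t
        H.append(np.sum(yes & obs) / np.sum(obs))
        F.append(np.sum(yes & ~obs) / np.sum(~obs))
    return np.array(H), np.array(F)


def roc_area(H, F):
    """Trapezoidal area under the curve through (0,0), the (F, H) points and (1,1)."""
    f = np.concatenate(([0.0], np.asarray(F, dtype=float), [1.0]))
    h = np.concatenate(([0.0], np.asarray(H, dtype=float), [1.0]))
    order = np.lexsort((h, f))            # sort by F, then by H
    f, h = f[order], h[order]
    return float(np.sum((f[1:] - f[:-1]) * (h[1:] + h[:-1]) / 2.0))


probs = [0.9, 0.6, 0.3, 0.1, 0.7, 0.2]
obs = [1, 1, 1, 0, 0, 0]
H, F = roc_points(probs, obs, thresholds=[0.8, 0.5, 0.25, 0.15])
print(roc_area(H, F))
```

A single perfect point (F = 0, H = 1) gives A = 1, and points on the diagonal H = F give A = 0.5, matching the limits quoted above.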

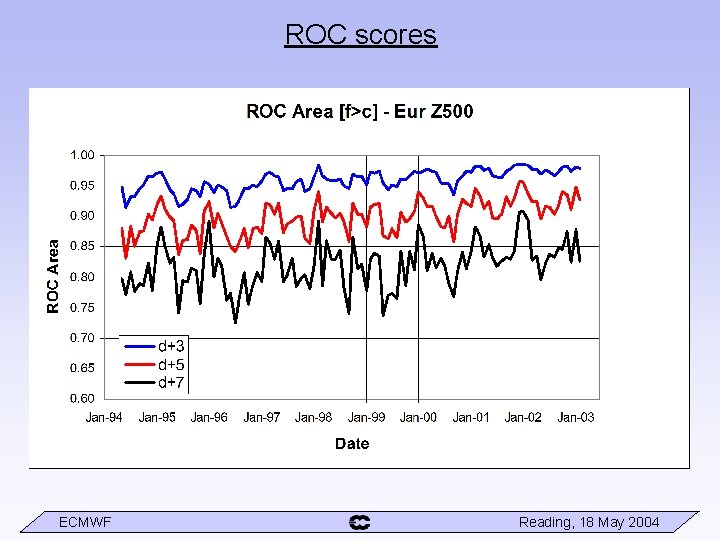

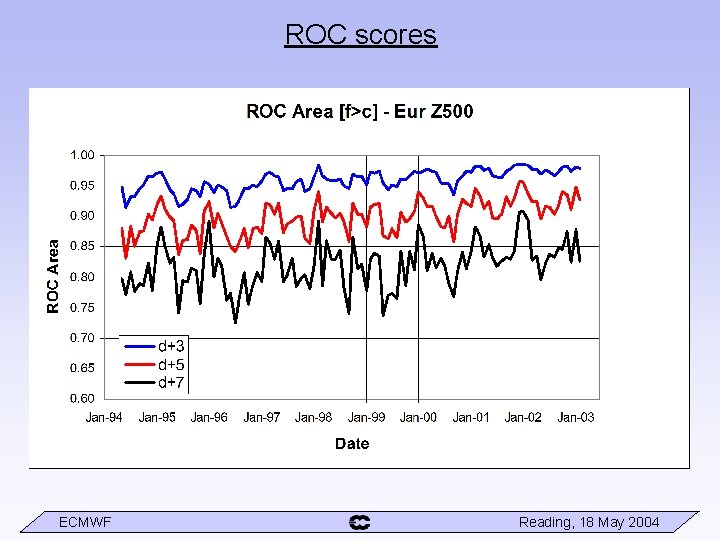

ROC scores

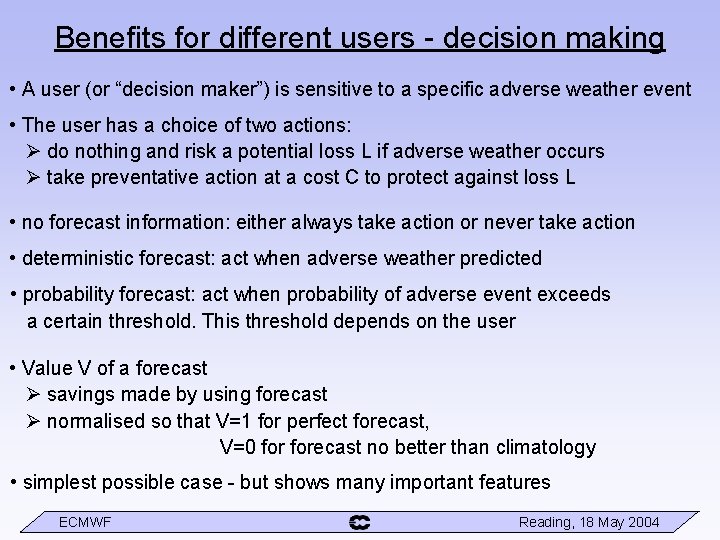

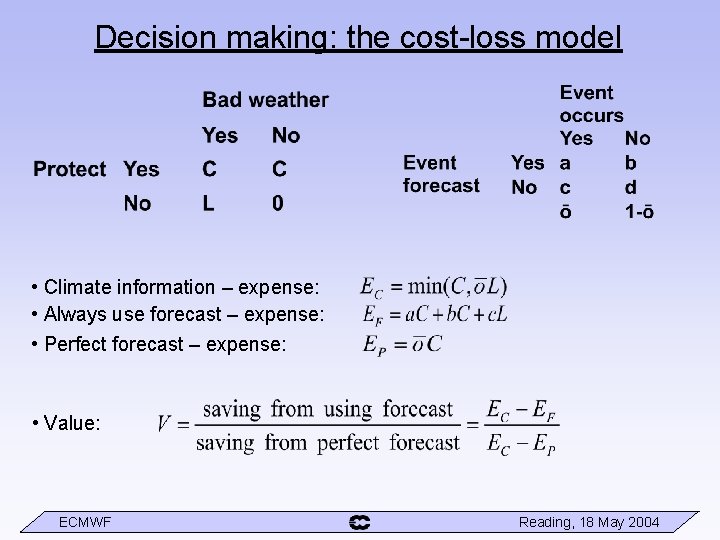

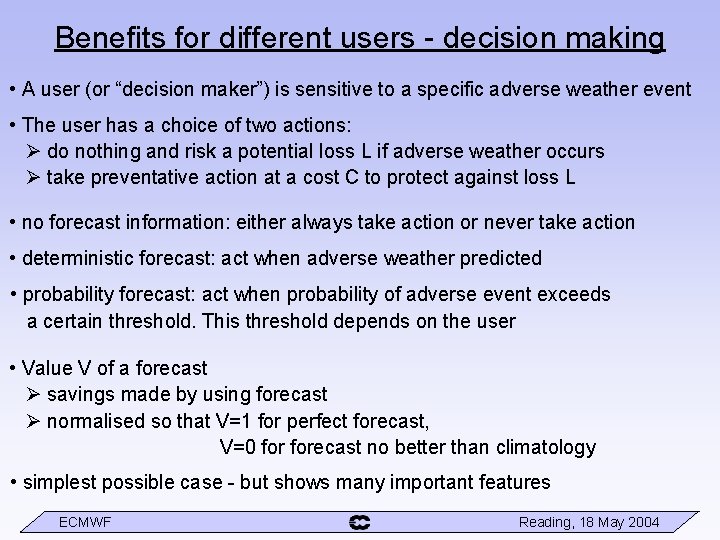

Benefits for different users: decision making
• A user (or “decision maker”) is sensitive to a specific adverse weather event
• The user has a choice of two actions:
  – do nothing and risk a potential loss L if adverse weather occurs
  – take preventative action at a cost C to protect against the loss L
• No forecast information: either always take action or never take action
• Deterministic forecast: act when adverse weather is predicted
• Probability forecast: act when the probability of the adverse event exceeds a certain threshold; this threshold depends on the user
• Value V of a forecast: the savings made by using the forecast, normalised so that V = 1 for a perfect forecast and V = 0 for a forecast no better than climatology
• This is the simplest possible case, but it shows many important features

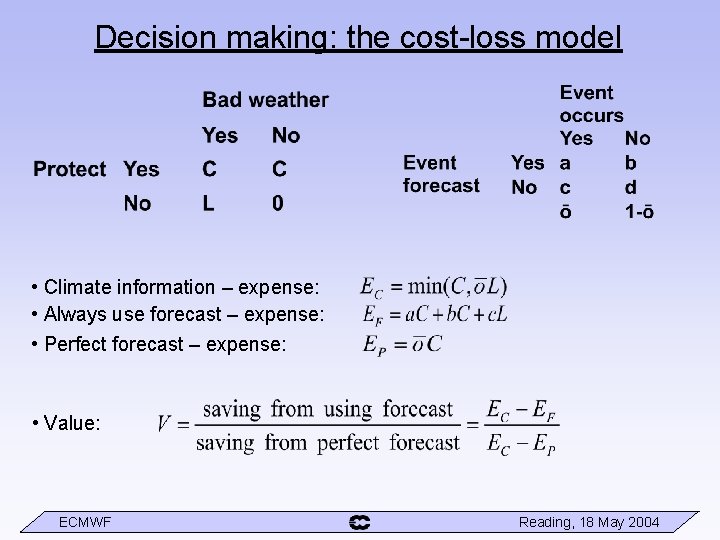

Decision making: the cost-loss model
• Climate information – expense:
• Always use forecast – expense:
• Perfect forecast – expense:
• Value:
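The expense expressions for this slide did not survive extraction. Below is a sketch of the standard per-case mean expenses in the cost-loss model, in the form used by Richardson (2000) and assumed here. $\bar{o}$ is the climatological frequency of the adverse event, H and F are the hit and false alarm rates, and “always use forecast” is read as acting whenever the forecast says “yes”. Hits and false alarms cost C, misses cost L, and correct rejections cost nothing.

```latex
\begin{aligned}
E_{\text{climate}}  &= \min(C,\ \bar{o}L) \\
E_{\text{forecast}} &= H\bar{o}\,C + F(1-\bar{o})\,C + (1-H)\,\bar{o}\,L \\
E_{\text{perfect}}  &= \bar{o}\,C \\
V &= \frac{E_{\text{climate}} - E_{\text{forecast}}}{E_{\text{climate}} - E_{\text{perfect}}}
   = \frac{\min(\alpha,\bar{o}) - F\alpha(1-\bar{o}) + H\bar{o}(1-\alpha) - \bar{o}}
          {\min(\alpha,\bar{o}) - \bar{o}\,\alpha},
   \qquad \alpha = C/L
\end{aligned}
```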

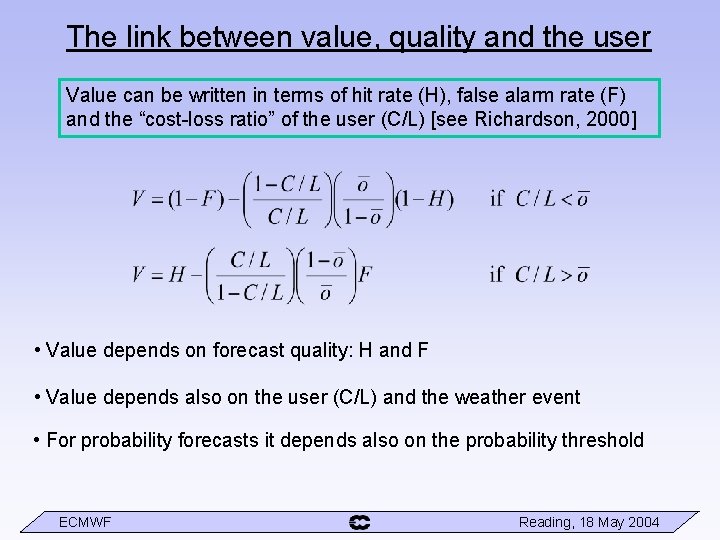

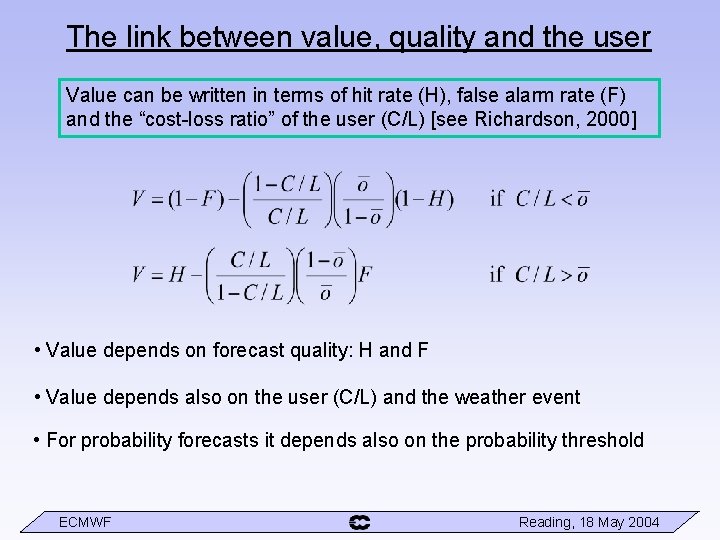

The link between value, quality and the user
Value can be written in terms of the hit rate (H), the false alarm rate (F) and the “cost-loss ratio” of the user (C/L) [see Richardson, 2000]
• Value depends on forecast quality: H and F
• Value also depends on the user (C/L) and on the weather event
• For probability forecasts, it also depends on the probability threshold
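A sketch of relative economic value as a function of H, F and the cost-loss ratio α = C/L, assuming the standard cost-loss expenses (hits and false alarms cost C, misses cost L) and a climatological event frequency `obar`; the formula is only defined for 0 < α < 1 and 0 < obar < 1. For a probability forecast, each threshold gives one (H, F) pair and the user is assumed to pick the one with the highest value. Function names and numbers are hypothetical.

```python
def relative_value(H, F, alpha, obar):
    """Relative value V: 1 for a perfect forecast, 0 for no better than
    climatology. Expenses are per case, in units of the loss L."""
    e_clim = min(alpha, obar)                                     # always or never act
    e_fc = F * alpha * (1 - obar) - H * obar * (1 - alpha) + obar  # act on forecast
    e_perf = obar * alpha                                         # act only when event occurs
    return (e_clim - e_fc) / (e_clim - e_perf)


def best_value(hf_pairs, alpha, obar):
    """Highest value over the (H, F) pairs of the different probability thresholds."""
    return max(relative_value(H, F, alpha, obar) for H, F in hf_pairs)


pairs = [(0.9, 0.3), (0.8, 0.1), (0.5, 0.02)]   # hypothetical thresholds
for alpha in (0.1, 0.2, 0.5):
    print(alpha, best_value(pairs, alpha, obar=0.3))
```

Evaluating `best_value` over a range of α traces the value curve: the same forecast quality (H, F) is worth different amounts to users with different cost-loss ratios.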

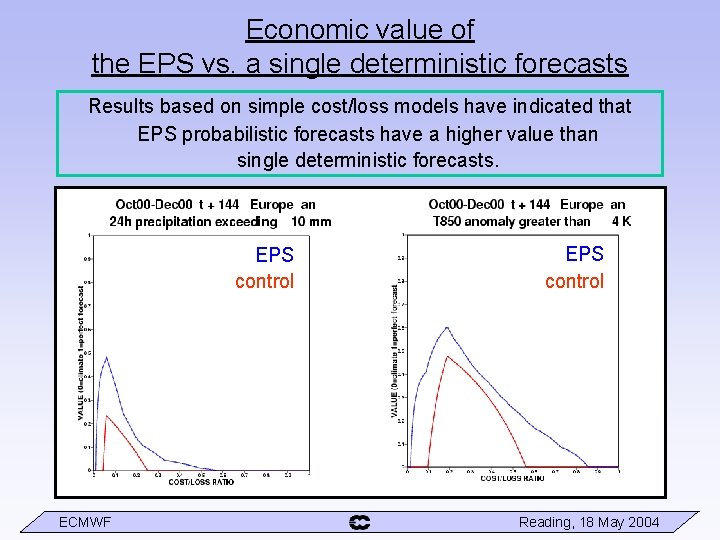

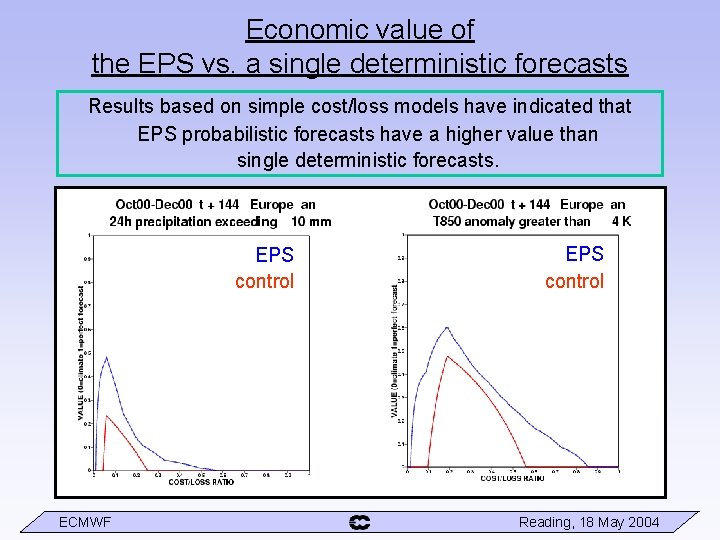

Economic value of the EPS vs. a single deterministic forecast
Results based on simple cost-loss models have indicated that EPS probabilistic forecasts have a higher value than single deterministic forecasts.
[Figure panels: EPS vs. control; EPS vs. control]

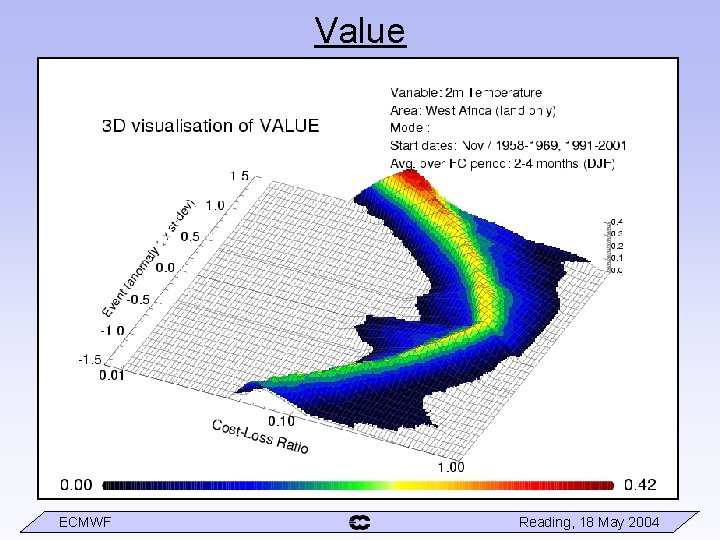

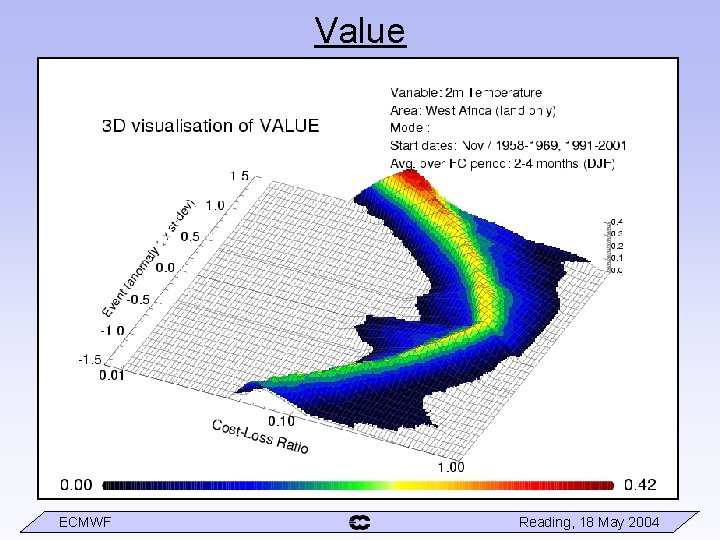

Value

Summary
• Different ways of incorporating the added dimension of the EPS (EM vs. PDF)
• The ensemble mean is the best deterministic forecast; the EM should be used together with a measure of spread
• Verification of probability forecasts:
  – different scores measure different aspects of forecast performance: Reliability / Resolution, Brier Score (BSS), RPS (RPSS), ROC, …
  – perception of the usefulness of the ensemble may vary with the score used
  – it is important to understand the behaviour of different scores and choose appropriately
• Potential economic value:
  – decision making is user dependent
  – the cost-loss model is a simple illustration, but it shows many useful features

References and further reading
• Wilks, D. S., 1995: Statistical Methods in the Atmospheric Sciences. Academic Press, 464 pp.
• Katz, R. W., and A. H. Murphy, 1997: Economic Value of Weather and Climate Forecasts. Cambridge University Press, 222 pp.
• Jolliffe, I. T., and D. B. Stephenson, 2003: Forecast Verification: A Practitioner’s Guide in Atmospheric Science. Wiley, 240 pp.
• Richardson, D. S., 2000: Skill and relative economic value of the ECMWF Ensemble Prediction System. Q. J. R. Meteorol. Soc., 126, 649–668.
• Richardson, D. S., 2001: Measures of skill and value of ensemble prediction systems, their interrelationship and the effect of ensemble size. Q. J. R. Meteorol. Soc., 127, 2473–2489.
• ECMWF Newsletter for updates on EPS performance

Questions?