Environmental Data Analysis with MatLab Lecture 22: Hypothesis Testing

Housekeeping: Last homework assigned today, due next Monday. Factor Analysis Worksheet available today.

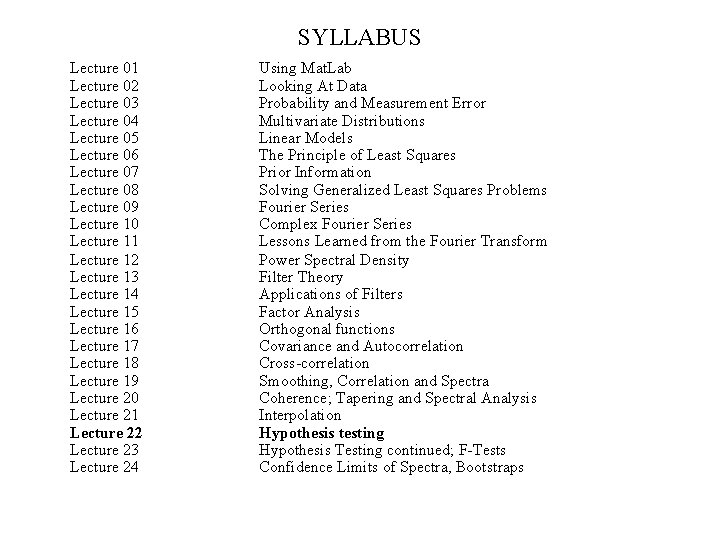

SYLLABUS
Lecture 01 Using MatLab
Lecture 02 Looking At Data
Lecture 03 Probability and Measurement Error
Lecture 04 Multivariate Distributions
Lecture 05 Linear Models
Lecture 06 The Principle of Least Squares
Lecture 07 Prior Information
Lecture 08 Solving Generalized Least Squares Problems
Lecture 09 Fourier Series
Lecture 10 Complex Fourier Series
Lecture 11 Lessons Learned from the Fourier Transform
Lecture 12 Power Spectral Density
Lecture 13 Filter Theory
Lecture 14 Applications of Filters
Lecture 15 Factor Analysis
Lecture 16 Orthogonal functions
Lecture 17 Covariance and Autocorrelation
Lecture 18 Cross-correlation
Lecture 19 Smoothing, Correlation and Spectra
Lecture 20 Coherence; Tapering and Spectral Analysis
Lecture 21 Interpolation
Lecture 22 Hypothesis testing
Lecture 23 Hypothesis Testing continued; F-Tests
Lecture 24 Confidence Limits of Spectra, Bootstraps

purpose of the lecture: to introduce Hypothesis Testing, the process of determining the statistical significance of results

Part 1 motivation: random variation as a spurious source of patterns

[Figure: plot of d versus x] looks pretty linear

![slide image: MatLab script for random plots](http://slidetodoc.com/presentation_image_h2/11d443a988c31ac74b2a2ebfc0c62fcc/image-8.jpg)

actually, it's just a bunch of random numbers!

    figure(1);
    for i = [1:100]
        clf;
        axis( [1, 8, -5, 5] );
        hold on;
        t = [2:7]';                          % six x values
        d = random('normal', 0, 1, 6, 1);    % six Normal random numbers
        plot( t, d, 'k-', 'LineWidth', 2 );
        plot( t, d, 'ko', 'LineWidth', 2 );
        [x, y] = ginput(1);                  % wait for a mouse click
        if( x<1 )                            % a click left of x=1 stops the loop
            break;
        end
    end

the script makes plot after plot, and lets you stop when you see one you like

the linearity was due to random variation!

4 more random plots [Figure: four panels, each d versus x]

scenario: test of a drug
Group A: given drug at start of illness
Group B: given placebo at start of illness

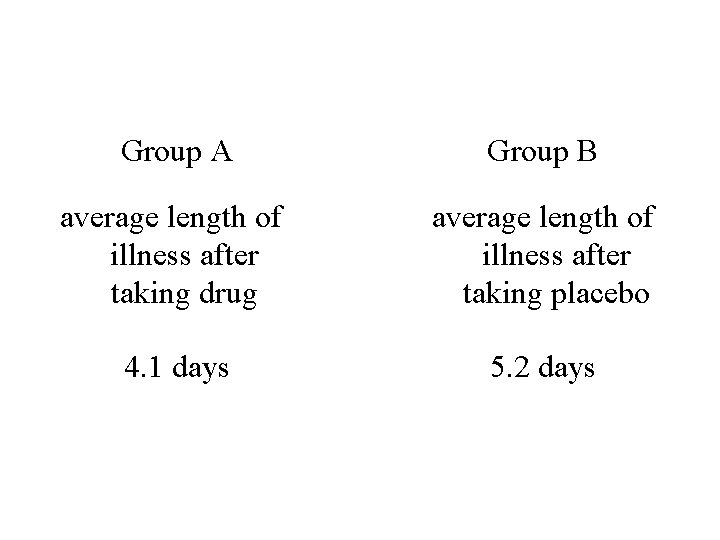

Group A: average length of illness after taking drug, 4.1 days
Group B: average length of illness after taking placebo, 5.2 days

the logic: people's immune systems differ; some naturally get better faster than others. perhaps the drug test just happened, by random chance, to have naturally faster people in Group A?

How much confidence should you have that the difference between 4.1 and 5.2 is not due to random variation?

67% 90% 95% 99%

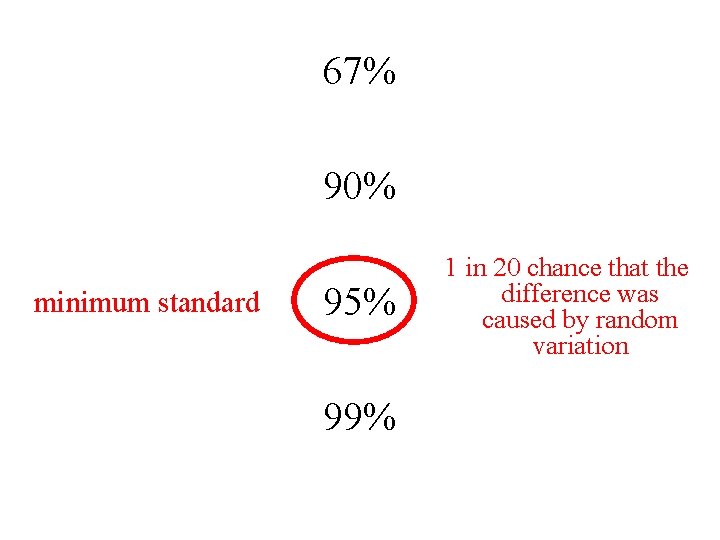

67% 90% 95% (minimum standard) 99%
at 95%: 1 in 20 chance that the difference was caused by random variation

the goal of this lecture is to develop techniques for quantifying the probability that a result is not due to random variation

Part 2 the distribution of the total error

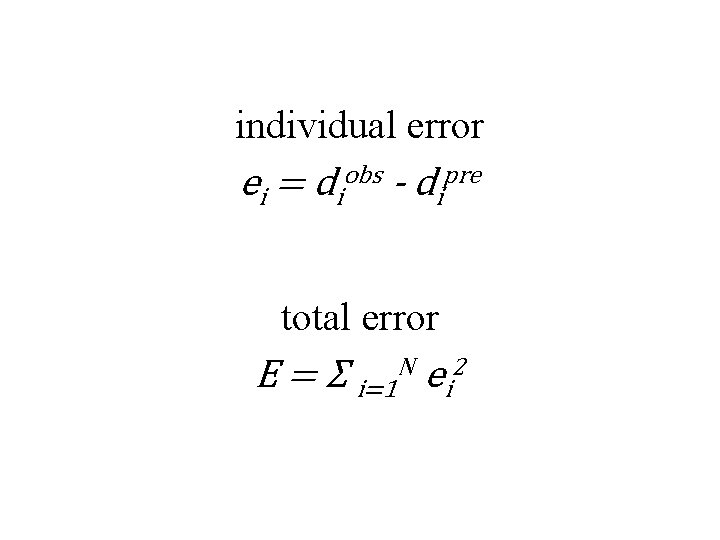

individual error $e_i = d_i^{obs} - d_i^{pre}$
total error $E = \sum_{i=1}^{N} e_i^2$

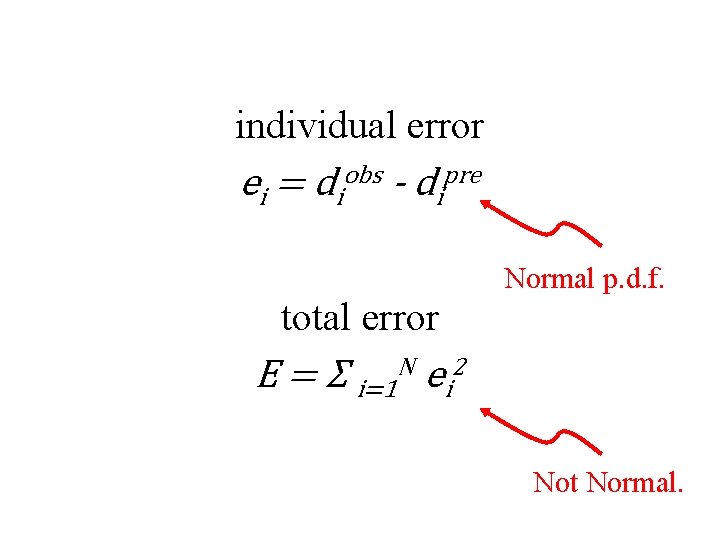

individual error $e_i = d_i^{obs} - d_i^{pre}$: Normal p.d.f.
total error $E = \sum_{i=1}^{N} e_i^2$: Not Normal.

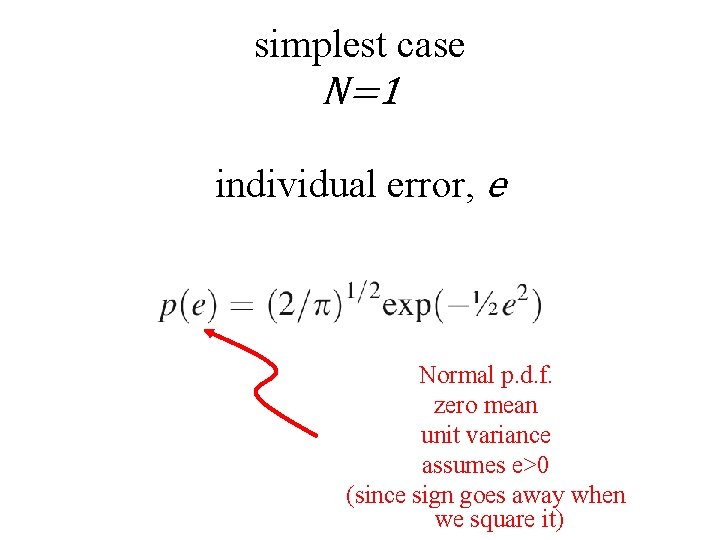

simplest case N=1: individual error, e, has a Normal p.d.f. with zero mean and unit variance. the formula assumes e>0 (since the sign goes away when we square it)

![slide image: total error for N=1](http://slidetodoc.com/presentation_image_h2/11d443a988c31ac74b2a2ebfc0c62fcc/image-22.jpg)

total error, $E = e^2$
$p(E) = p[e(E)]\,|de/dE|$
$e = E^{1/2}$ so $de/dE = \tfrac{1}{2} E^{-1/2}$
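Carrying the substitution through gives the p.d.f. of E explicitly. This is a sketch under one assumption: that p(e) is the one-sided Normal p.d.f., normalized to unit area on e>0, which is what "assumes e>0" implies. The slide's own formula is an image and is not reproduced here.

```latex
\begin{aligned}
p(e) &= \frac{2}{\sqrt{2\pi}} \exp\!\left(-\tfrac{1}{2} e^{2}\right), \qquad e > 0 \\
p(E) &= p[e(E)] \left| \frac{de}{dE} \right|
      = \frac{2}{\sqrt{2\pi}} \exp\!\left(-\tfrac{1}{2} E\right) \cdot \tfrac{1}{2} E^{-1/2}
      = \frac{1}{\sqrt{2\pi E}} \exp\!\left(-\tfrac{1}{2} E\right)
\end{aligned}
```

The factor $E^{-1/2}$ grows without bound as E approaches 0, which is why the probability piles up near zero.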

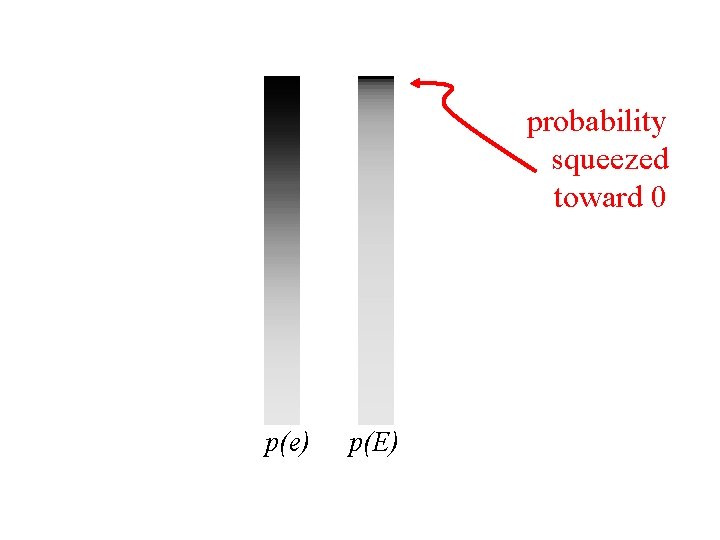

probability squeezed toward 0 [Figure: p(e) versus e and p(E) versus E]

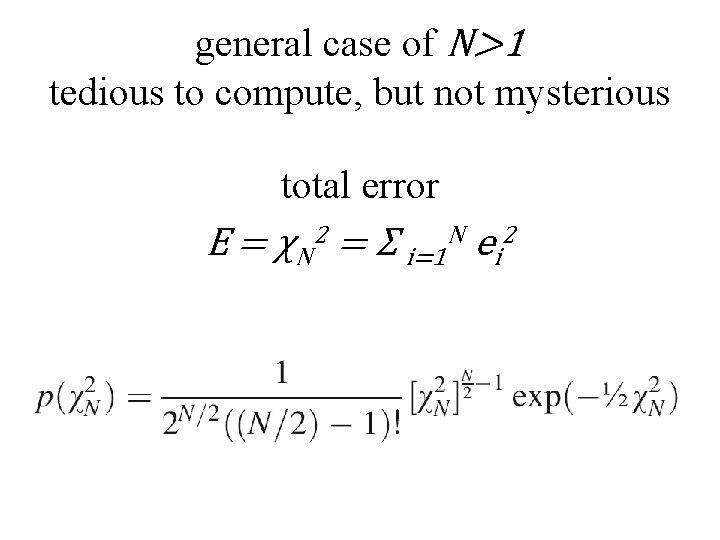

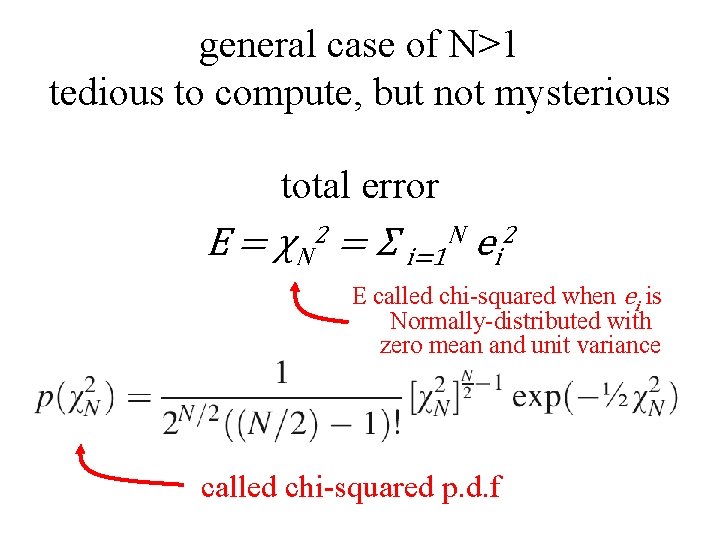

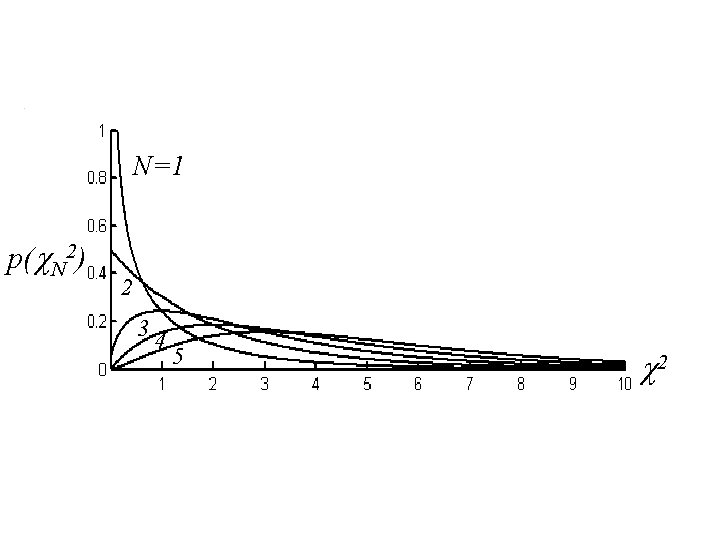

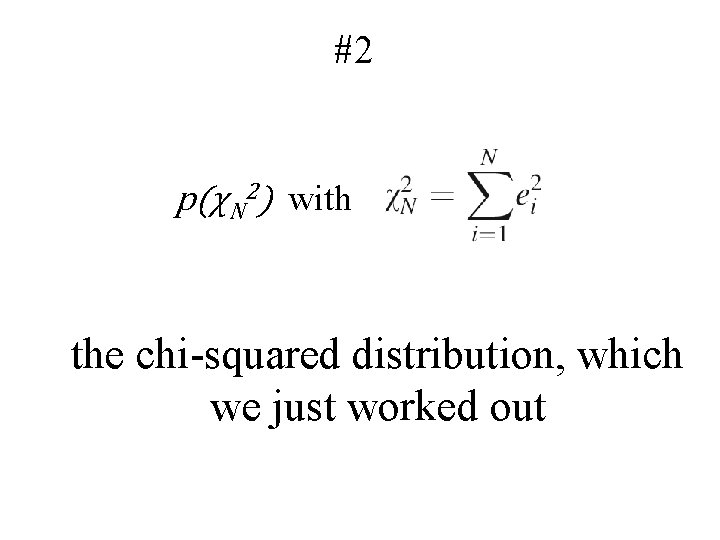

general case of N>1: tedious to compute, but not mysterious
total error $E = \chi_N^2 = \sum_{i=1}^{N} e_i^2$
E is called chi-squared when $e_i$ is Normally-distributed with zero mean and unit variance; its p.d.f. is called the chi-squared p.d.f.

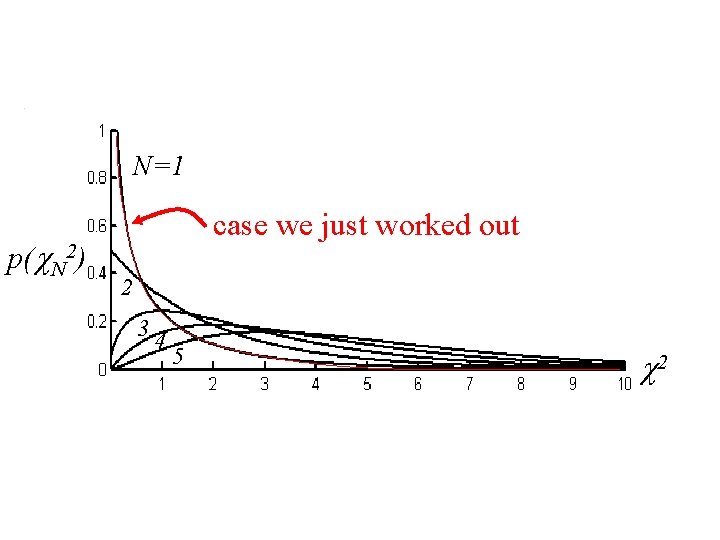

[Figure: $p(\chi_N^2)$ versus $\chi^2$, with curves for N = 1, 2, 3, 4, 5; the N=1 curve is the case we just worked out]

Chi-Squared p.d.f.: N is called "the degrees of freedom"; mean N; variance 2N

In MatLab
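The slide's MatLab command is an image and is not reproduced here. As a minimal sketch, the chi-squared p.d.f. can be evaluated from its standard formula using only base MatLab functions; with the Statistics and Machine Learning Toolbox, `chi2pdf(X2,N)` returns the same curve. The choices N=5, the axis `X2` and the number of realizations `M` are illustrative assumptions.

```matlab
% Chi-squared p.d.f. with N degrees of freedom, using only base MatLab.
N = 5;                          % degrees of freedom (illustrative choice)
X2 = (0.01:0.01:60)';           % chi-squared axis, starting just above 0
pX2 = X2.^(N/2-1) .* exp(-X2/2) / (2^(N/2) * gamma(N/2));

% numerical checks of area, mean and variance by integration
area = trapz(X2, pX2);
mu   = trapz(X2, X2 .* pX2);
v    = trapz(X2, (X2-mu).^2 .* pX2);
fprintf('p.d.f.:     area %.3f  mean %.3f  variance %.3f\n', area, mu, v);

% Monte Carlo check: sum the squares of N zero-mean, unit-variance errors
M = 100000;                     % number of realizations (illustrative)
E = sum(randn(N, M).^2, 1);     % total error for each realization
fprintf('simulation: mean %.3f  variance %.3f\n', mean(E), var(E));
```

Both the integrated p.d.f. and the simulated total error should give a mean near N and a variance near 2N.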

Four Important Distributions used in hypothesis testing

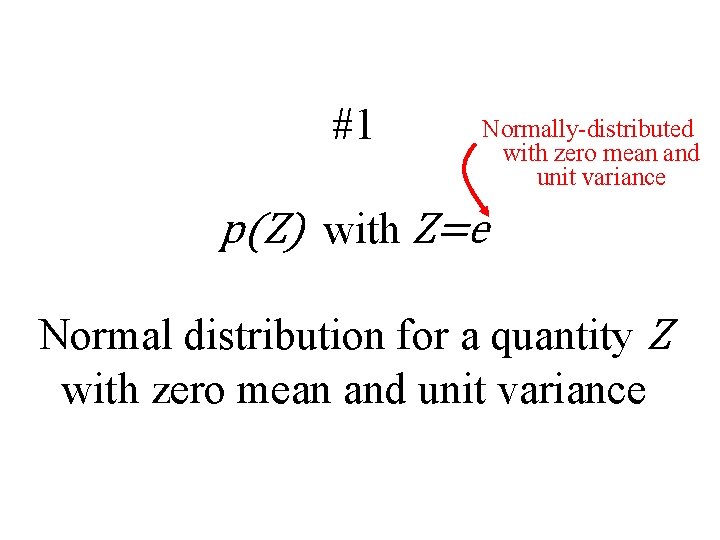

#1 p(Z) with Z=e: the Normal distribution for a quantity Z with zero mean and unit variance

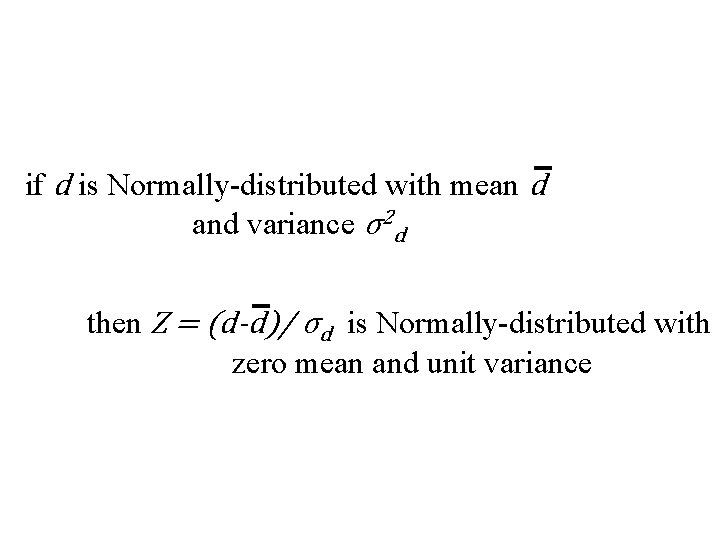

if d is Normally-distributed with mean $\bar{d}$ and variance $\sigma_d^2$, then $Z = (d - \bar{d})/\sigma_d$ is Normally-distributed with zero mean and unit variance
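A quick numerical check of this standardization, as a sketch: the mean `dbar`, standard deviation `sigmad` and sample count `M` below are hypothetical values chosen only for illustration.

```matlab
% Standardizing a Normal variable: Z = (d - dbar) / sigmad
dbar   = 100;                     % hypothetical mean
sigmad = 2;                       % hypothetical standard deviation
M      = 100000;                  % number of synthetic samples

d = dbar + sigmad * randn(M, 1);  % synthetic Normal data
Z = (d - dbar) / sigmad;          % standardized variable

fprintf('mean of Z %.3f  variance of Z %.3f\n', mean(Z), var(Z));
```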

#2 $p(\chi_N^2)$: the chi-squared distribution, which we just worked out

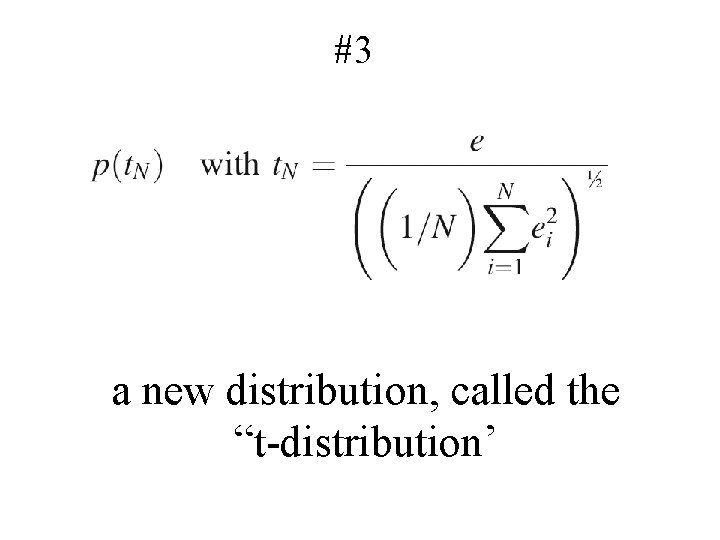

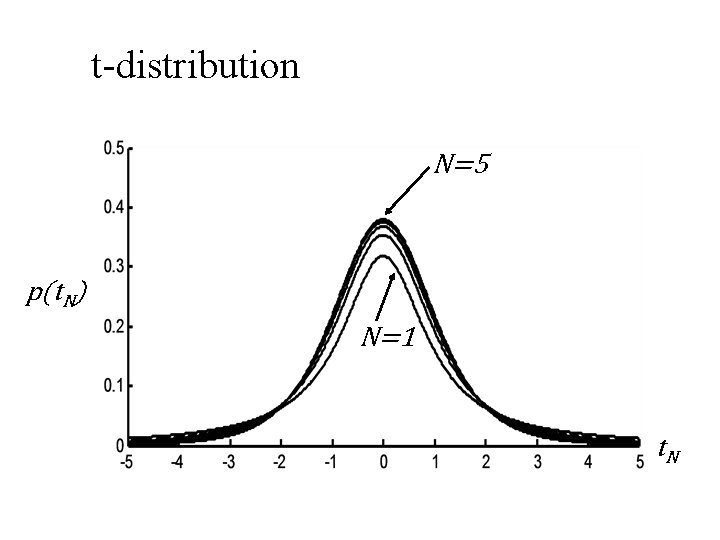

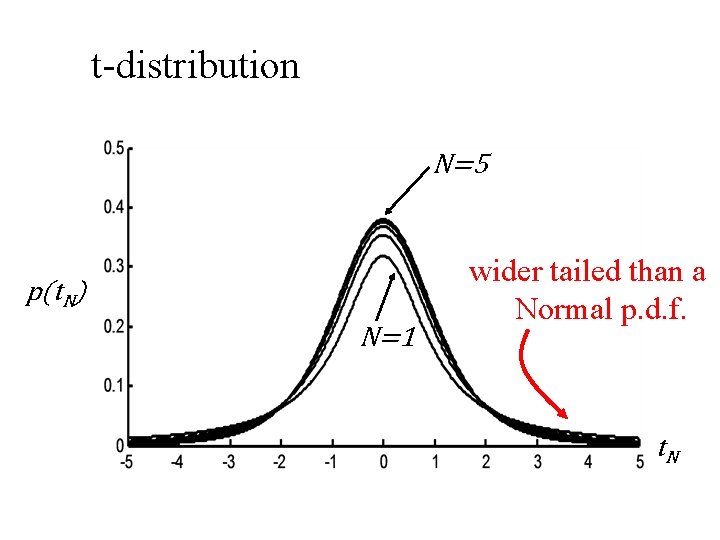

#3 a new distribution, called the "t-distribution"

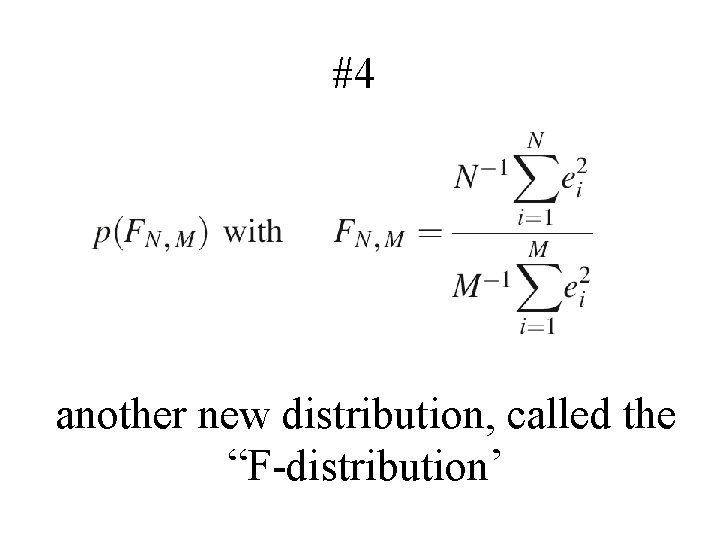

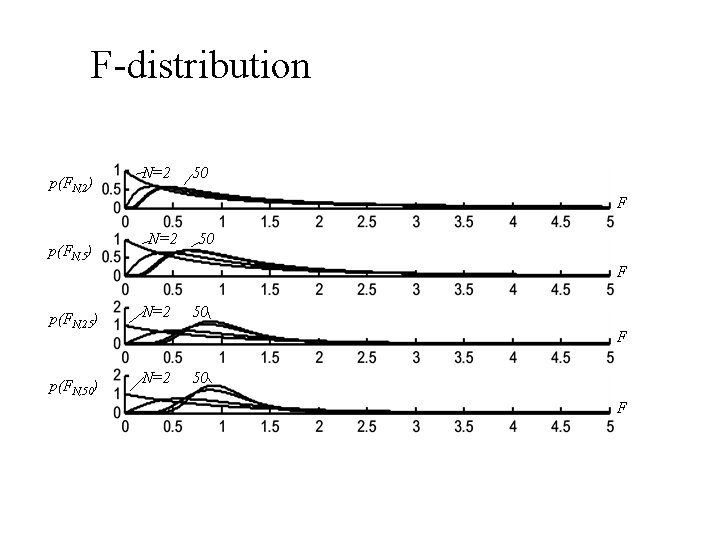

#4 another new distribution, called the "F-distribution"
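The slide formulas for these two distributions are images and are not reproduced here. For reference, the standard textbook definitions are sketched below in the lecture's notation; the symbols $e$, $e_i$ and $e_j$ are assumed to be independent, Normally-distributed errors with zero mean and unit variance.

```latex
\begin{aligned}
t_N &= \frac{e}{\sqrt{N^{-1} \sum_{i=1}^{N} e_i^{2}}}
     = \frac{Z}{\sqrt{\chi_N^{2} / N}} \\
F_{N,M} &= \frac{N^{-1} \sum_{i=1}^{N} e_i^{2}}{M^{-1} \sum_{j=1}^{M} e_j^{2}}
         = \frac{\chi_N^{2} / N}{\chi_M^{2} / M}
\end{aligned}
```

So t compares a single error to the root-mean-square of N others, and F compares two mean-squared errors.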

t-distribution [Figure: $p(t_N)$ versus $t_N$, with curves labeled N=1 and N=5]: wider tailed than a Normal p.d.f.
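The wider tails can be seen in a small simulation. This sketch assumes the standard definition of the statistic, a unit-variance Normal error divided by the root-mean-square of N other such errors; N=5, the threshold of 3 and the number of realizations `M` are illustrative choices.

```matlab
% Compare the tails of the t-distribution with those of a Normal p.d.f.
N = 5;                               % degrees of freedom (illustrative)
M = 200000;                          % number of realizations (illustrative)

e    = randn(1, M);                  % numerator: unit-variance Normal error
chi2 = sum(randn(N, M).^2, 1);       % chi-squared with N degrees of freedom
t    = e ./ sqrt(chi2 / N);          % t-distributed variable

Z = randn(1, M);                     % Normal variable for comparison

fracT = mean(abs(t) > 3);            % fraction of |t| values beyond 3
fracZ = mean(abs(Z) > 3);            % fraction of |Z| values beyond 3
fprintf('fraction beyond 3:  t %.4f   Normal %.4f\n', fracT, fracZ);
```

Values beyond 3 should turn up several times more often for t than for the Normal variable.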

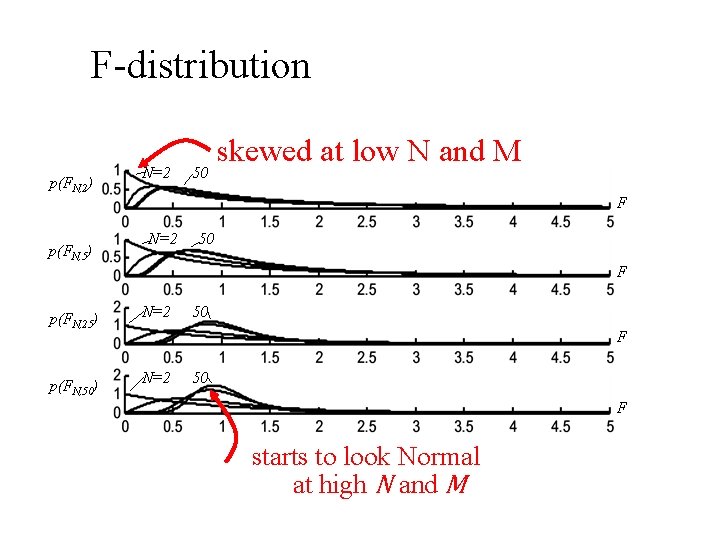

F-distribution [Figure: four panels, $p(F_{N,2})$, $p(F_{N,5})$, $p(F_{N,25})$ and $p(F_{N,50})$ versus F, each with curves for N = 2 to 50]: skewed at low N and M; starts to look Normal at high N and M

Part 4 Hypothesis Testing

Step 1. State a Null Hypothesis: some variation of "the result is due to random variation", e.g. the means of Group A and Group B are different only because of random variation

Step 2. Focus on a quantity (called a "statistic") that is unlikely to be large when the Null Hypothesis is true, e.g. the size of the difference in the means, $\Delta m = |\mathrm{mean}_A - \mathrm{mean}_B|$, is unlikely to be large if the Null Hypothesis is true

Step 3. Determine the value of the statistic for your problem, e.g. $\Delta m = |\mathrm{mean}_A - \mathrm{mean}_B| = |4.1 - 5.2| = 1.1$

Step 4. Calculate the probability that the observed value or greater would occur if the Null Hypothesis were true: $P(\Delta m \ge 1.1) = ?$

Step 5. Reject the Null Hypothesis if such large values occur less than 5% of the time. rejecting the Null Hypothesis means that your result is unlikely to be due to random variation
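The steps above can be sketched as a Monte Carlo simulation of the drug scenario. The lecture gives only the two means, so the group size `n` and the person-to-person standard deviation `sigma` below are hypothetical values invented for illustration; the resulting probability depends entirely on them and says nothing about any real trial.

```matlab
% Monte Carlo version of the hypothesis-testing steps, base MatLab only.
n     = 10;      % HYPOTHETICAL number of people per group
sigma = 1.5;     % HYPOTHETICAL std. dev. of illness length, in days
dmobs = 1.1;     % observed statistic: |4.1 - 5.2| days
M     = 100000;  % number of simulated trials

% Null Hypothesis: both groups come from the same distribution.
% Only differences matter, so the common mean is set to zero.
A = sigma * randn(n, M);             % simulated Group A, one trial per column
B = sigma * randn(n, M);             % simulated Group B

dm = abs(mean(A, 1) - mean(B, 1));   % statistic for every simulated trial
P  = mean(dm >= dmobs);              % fraction at least as large as observed

fprintf('P(dm >= %.1f) = %.3f\n', dmobs, P);
if P < 0.05
    disp('Null Hypothesis can be rejected at 95% confidence');
else
    disp('Null Hypothesis cannot be rejected at 95% confidence');
end
```

Changing `n` or `sigma` changes the answer, which is the point: the same 1.1-day difference can be significant in a large, consistent group and insignificant in a small, variable one.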

Part 5 An example: test of a particle size measuring device

manufacturer's specs: machine is perfectly calibrated; particle diameters scatter about true value; measurement error is $\sigma_d^2 = 1\ \mathrm{nm}^2$

your test of the machine: purchase a batch of 25 test particles, each exactly 100 nm in diameter; measure and tabulate their diameters; repeat with another batch a few weeks later

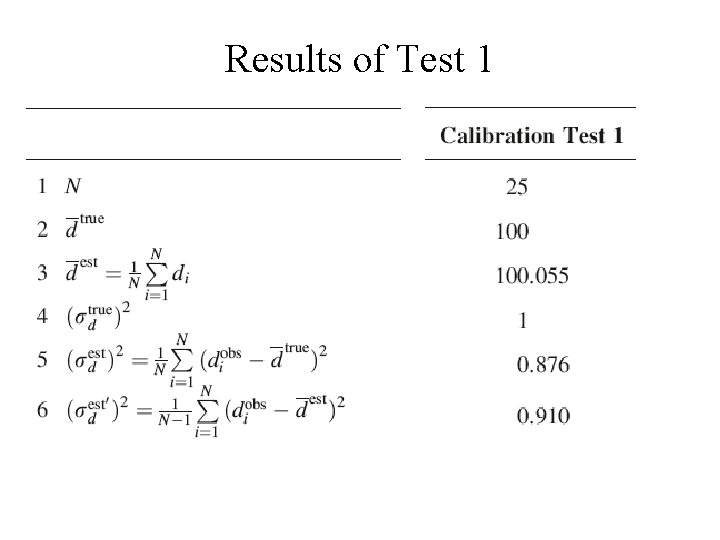

Results of Test 1

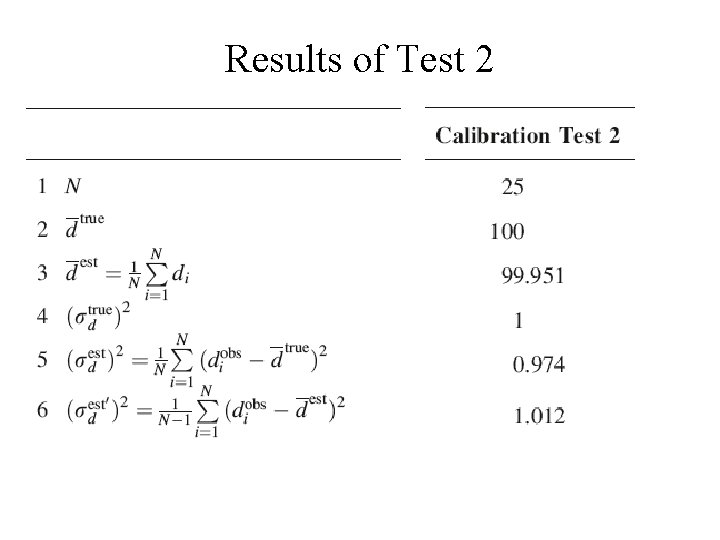

Results of Test 2

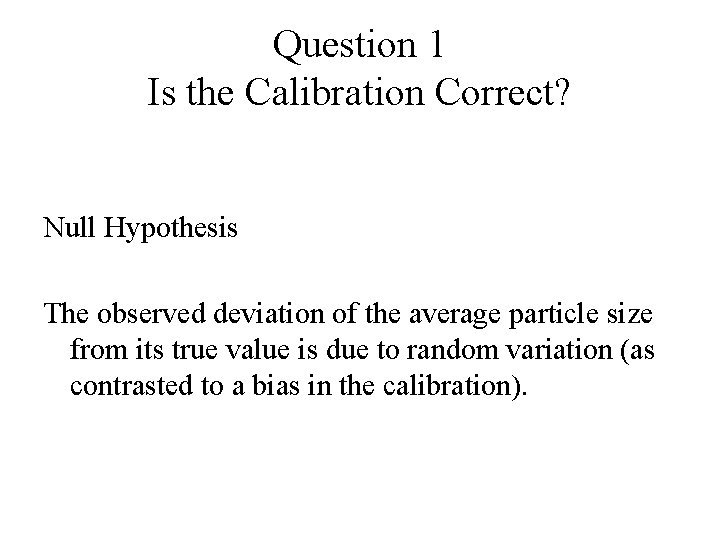

Question 1: Is the Calibration Correct?
Null Hypothesis: The observed deviation of the average particle size from its true value is due to random variation (as contrasted to a bias in the calibration).

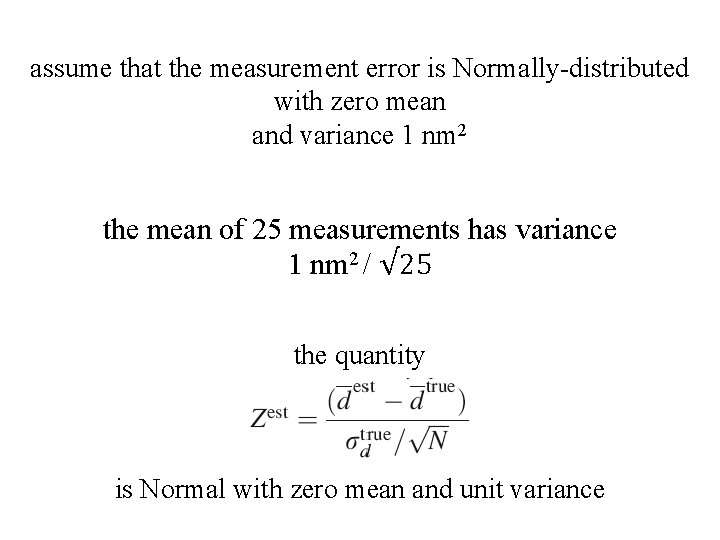

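assume that the measurement error is Normally-distributed with zero mean and variance $1\ \mathrm{nm}^2$. then the mean of 25 measurements has variance $1\ \mathrm{nm}^2 / 25$ (standard deviation $1\ \mathrm{nm} / \sqrt{25}$), and the quantity $Z^{est} = (d^{est} - d^{true}) / (\sigma_d / \sqrt{N})$ is Normal with zero mean and unit variance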
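The scatter of a 25-measurement mean can be checked by simulation. This sketch uses only the stated spec (unit-variance Normal error, N=25); the number of simulated batches `M` is an illustrative choice. The standard deviation of the mean of N independent measurements is $\sigma_d/\sqrt{N}$, here 1/5 = 0.2 nm, so its variance is 1/25 = 0.04 nm².

```matlab
% Scatter of the mean of N measurements with unit-variance Normal error
N      = 25;                     % measurements per batch
sigmad = 1;                      % measurement error, nm
M      = 100000;                 % number of simulated batches (illustrative)

err  = sigmad * randn(N, M);     % measurement errors, one batch per column
merr = mean(err, 1);             % error of each batch mean

fprintf('std of the mean:      %.4f nm (theory %.4f)\n', ...
        std(merr), sigmad / sqrt(N));
fprintf('variance of the mean: %.4f nm^2 (theory %.4f)\n', ...
        var(merr), sigmad^2 / N);
```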

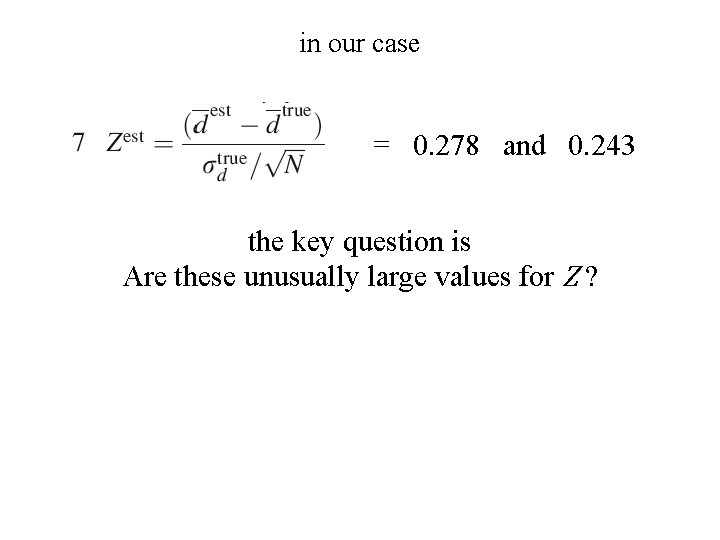

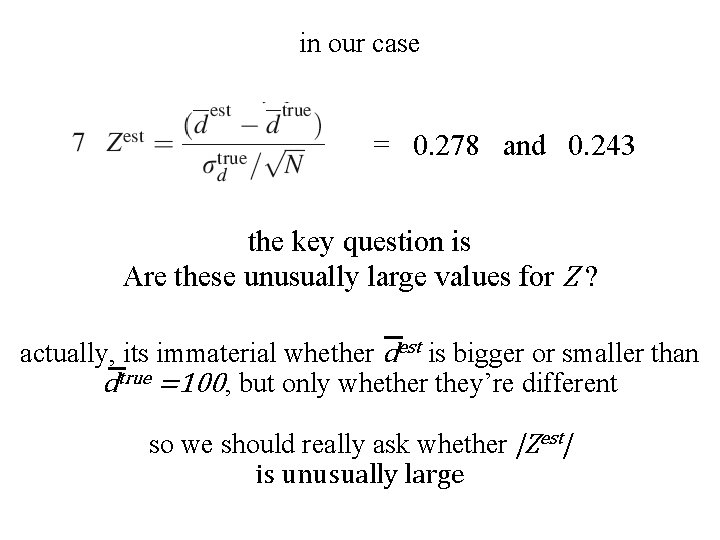

in our case, $Z^{est}$ = 0.278 and 0.243. the key question is: Are these unusually large values for Z? actually, it is immaterial whether $d^{est}$ is bigger or smaller than $d^{true} = 100$; what matters is only whether they're different, so we should really ask whether $|Z^{est}|$ is unusually large

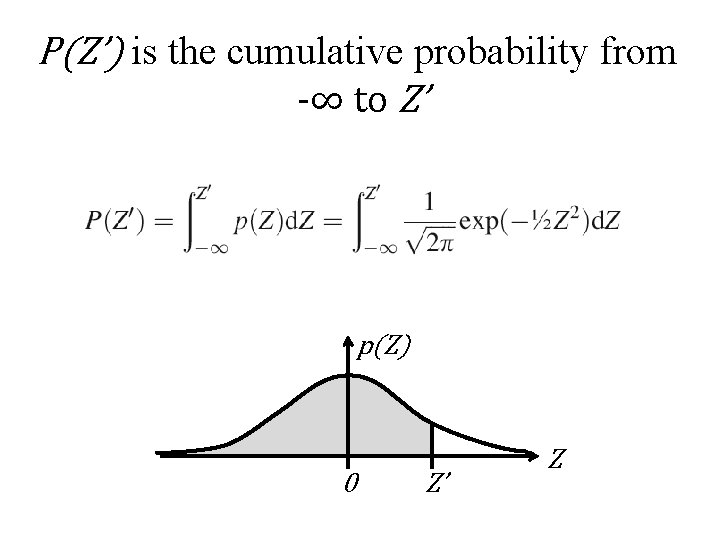

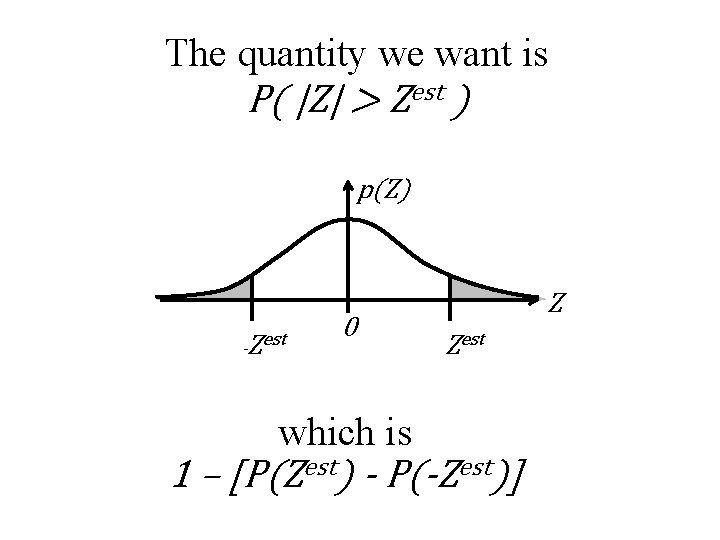

P(Z') is the cumulative probability from -∞ to Z' [Figure: p(Z) versus Z, with Z' marked]

The quantity we want is $P(|Z| > Z^{est})$, which is $1 - [P(Z^{est}) - P(-Z^{est})]$ [Figure: p(Z) versus Z, with $-Z^{est}$ and $Z^{est}$ marked]

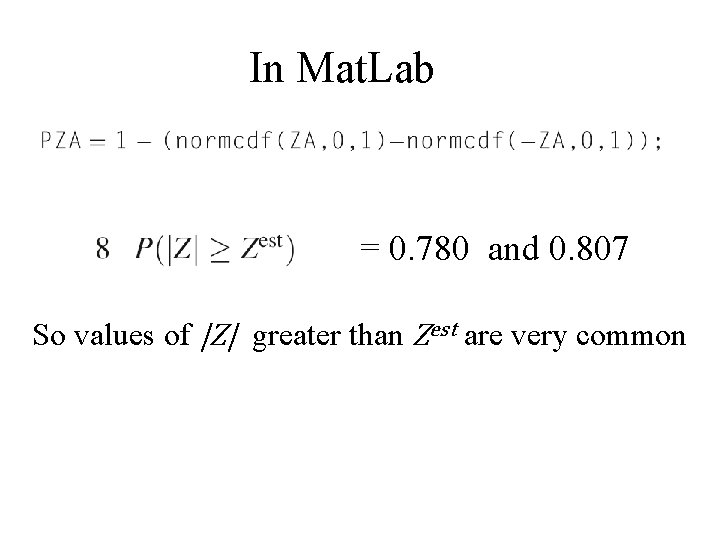

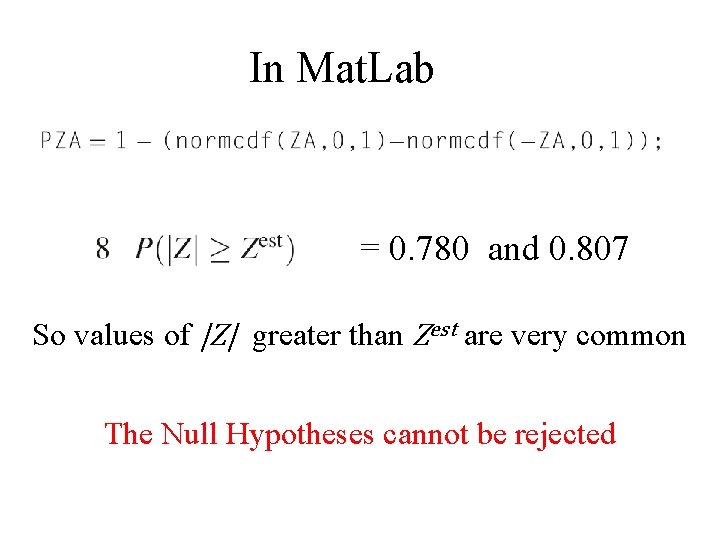

In MatLab, $P(|Z| > Z^{est})$ = 0.780 and 0.807. So values of |Z| greater than $Z^{est}$ are very common. The Null Hypotheses cannot be rejected
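The slide's MatLab command is an image and is not reproduced here. A minimal sketch of the same calculation, $1 - [P(Z^{est}) - P(-Z^{est})]$, is given below. It builds the cumulative Normal probability from the base MatLab function `erfc`; with the Statistics and Machine Learning Toolbox, `normcdf(Z,0,1)` could be used instead. The helper name `Pcum` is an assumption of this sketch.

```matlab
% Probability that |Z| exceeds the observed values, base MatLab only.
Zest = [0.278, 0.243];                    % values quoted in the lecture

% cumulative probability of a zero-mean, unit-variance Normal, -inf to Z
Pcum = @(Z) 0.5 * erfc(-Z / sqrt(2));

P = 1 - (Pcum(Zest) - Pcum(-Zest));       % P( |Z| > Zest )
fprintf('P(|Z| > Zest) = %.3f and %.3f\n', P);
```

Because the quoted values of Zest are rounded to three digits, the result can differ in the last digit from the 0.780 and 0.807 on the slide.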

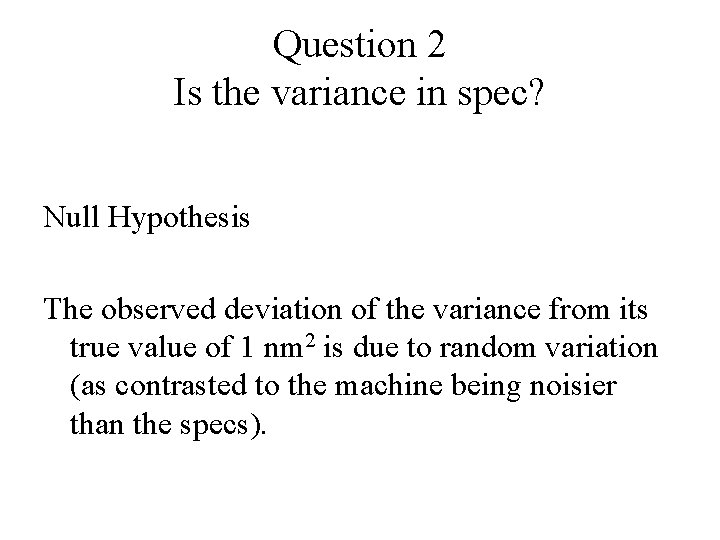

Question 2: Is the variance in spec?
Null Hypothesis: The observed deviation of the variance from its true value of $1\ \mathrm{nm}^2$ is due to random variation (as contrasted to the machine being noisier than the specs).

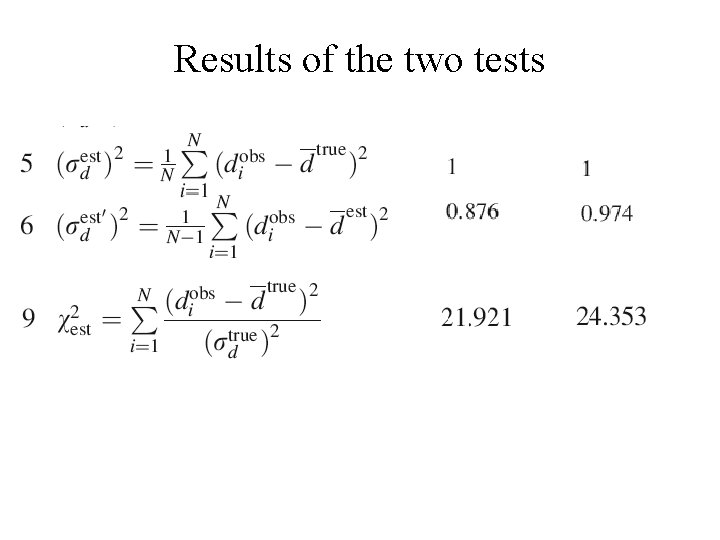

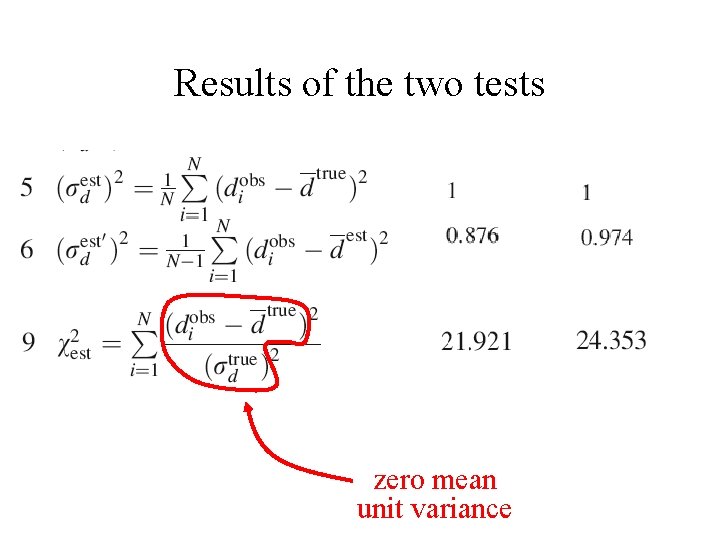

Results of the two tests: zero mean, unit variance

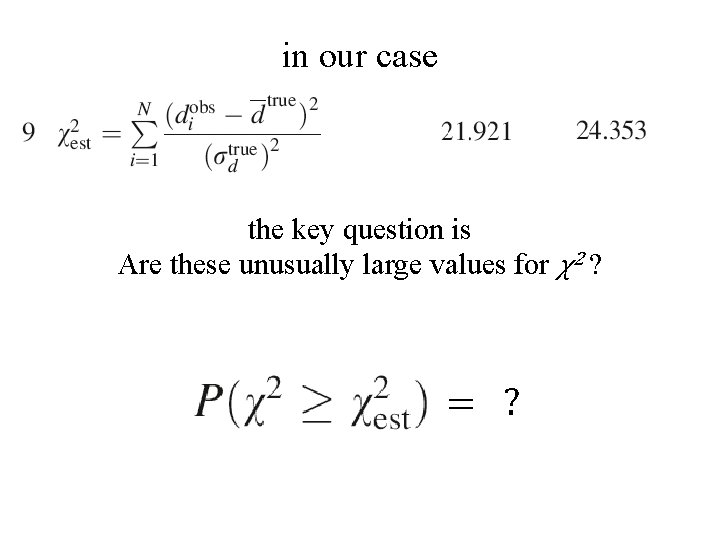

in our case, the key question is: Are these unusually large values for $\chi^2$? $P(\chi^2 \ge \chi^2_{est}) = ?$

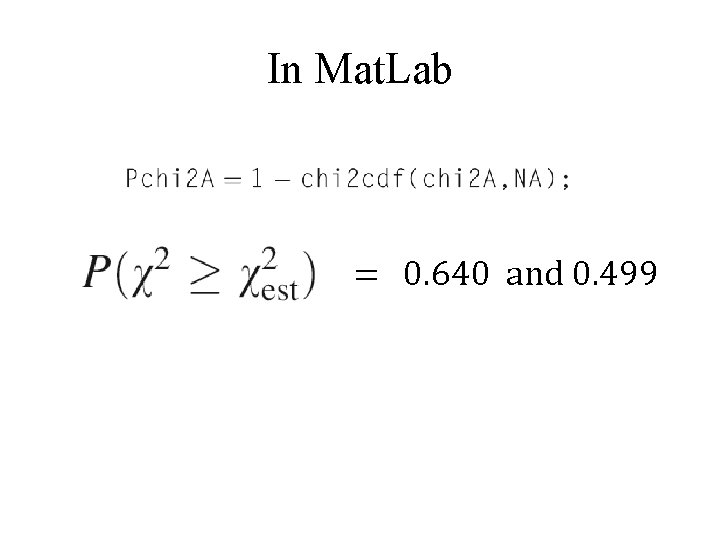

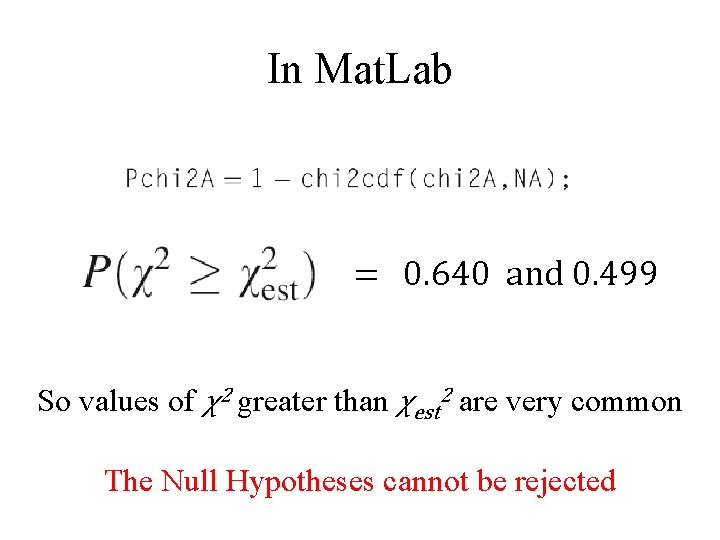

In MatLab, $P(\chi^2 \ge \chi^2_{est})$ = 0.640 and 0.499. So values of $\chi^2$ greater than $\chi^2_{est}$ are very common. The Null Hypotheses cannot be rejected
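The slide's MatLab command and the measured diameters are images and are not reproduced here, so the sketch below runs the same kind of test on synthetic data. It assumes the statistic is the sum of squared deviations from the known true diameter, scaled by the spec variance, so that it has N = 25 degrees of freedom. The upper-tail probability is computed with the base MatLab function `gammainc`; with the Statistics and Machine Learning Toolbox, `1 - chi2cdf(chi2est, N)` gives the same number.

```matlab
% Chi-squared test of the variance on SYNTHETIC data, base MatLab only.
N       = 25;      % number of test particles
dtrue   = 100;     % true diameter, nm
sigmad2 = 1;       % variance claimed in the specs, nm^2

% SYNTHETIC measurements that obey the specs (not the lecture's data)
d = dtrue + sqrt(sigmad2) * randn(N, 1);

% statistic: sum of squared, normalized deviations from the true value
chi2est = sum((d - dtrue).^2) / sigmad2;

% upper-tail probability P( chi2 >= chi2est ) for N degrees of freedom
P = gammainc(chi2est / 2, N / 2, 'upper');

fprintf('chi2est = %.2f,  P(chi2 >= chi2est) = %.3f\n', chi2est, P);
if P < 0.05
    disp('Null Hypothesis can be rejected at 95% confidence');
else
    disp('Null Hypothesis cannot be rejected at 95% confidence');
end
```

Because the synthetic data are drawn to match the specs, most runs give a P well above 0.05, though about 1 run in 20 will fall below it by chance.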

we will continue this scenario in the next lecture
