Environmental Data Analysis with MatLab, 2nd Edition

Lecture 14: Applications of Filters

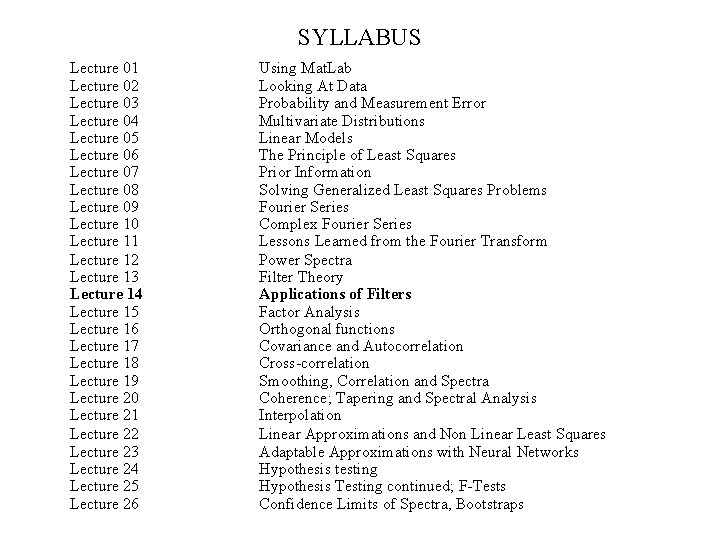

SYLLABUS

- Lecture 01: Using MatLab
- Lecture 02: Looking At Data
- Lecture 03: Probability and Measurement Error
- Lecture 04: Multivariate Distributions
- Lecture 05: Linear Models
- Lecture 06: The Principle of Least Squares
- Lecture 07: Prior Information
- Lecture 08: Solving Generalized Least Squares Problems
- Lecture 09: Fourier Series
- Lecture 10: Complex Fourier Series
- Lecture 11: Lessons Learned from the Fourier Transform
- Lecture 12: Power Spectra
- Lecture 13: Filter Theory
- Lecture 14: Applications of Filters
- Lecture 15: Factor Analysis
- Lecture 16: Orthogonal functions
- Lecture 17: Covariance and Autocorrelation
- Lecture 18: Cross-correlation
- Lecture 19: Smoothing, Correlation and Spectra
- Lecture 20: Coherence; Tapering and Spectral Analysis
- Lecture 21: Interpolation
- Lecture 22: Linear Approximations and Non Linear Least Squares
- Lecture 23: Adaptable Approximations with Neural Networks
- Lecture 24: Hypothesis testing
- Lecture 25: Hypothesis Testing continued; F-Tests
- Lecture 26: Confidence Limits of Spectra, Bootstraps

Goals of the lecture: further develop the idea of the linear filter and its applications.

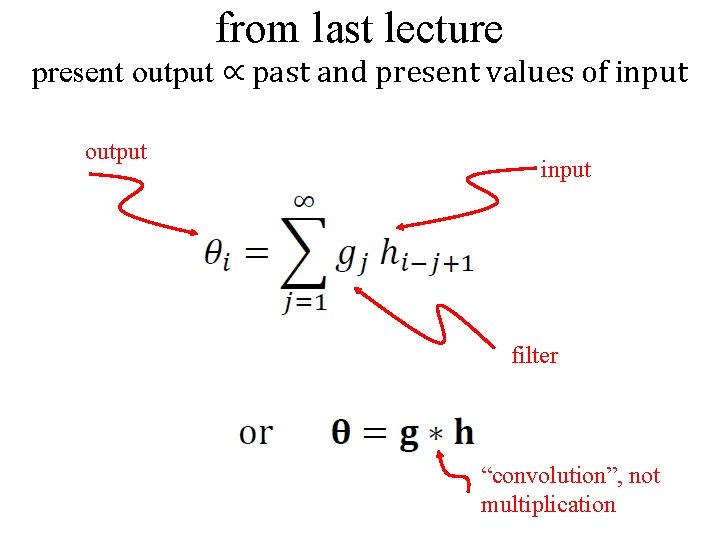

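from last lecture: the present output depends linearly on (∝) past and present values of the input. Writing the output as θ, the filter as g, and the input as h, $\theta = g * h$, that is, $\theta_i = \sum_{j=1}^{i} g_j h_{i-j+1}$ (up to a constant factor, such as the sampling interval Δt, depending on the convention). This is “convolution”, not multiplication.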

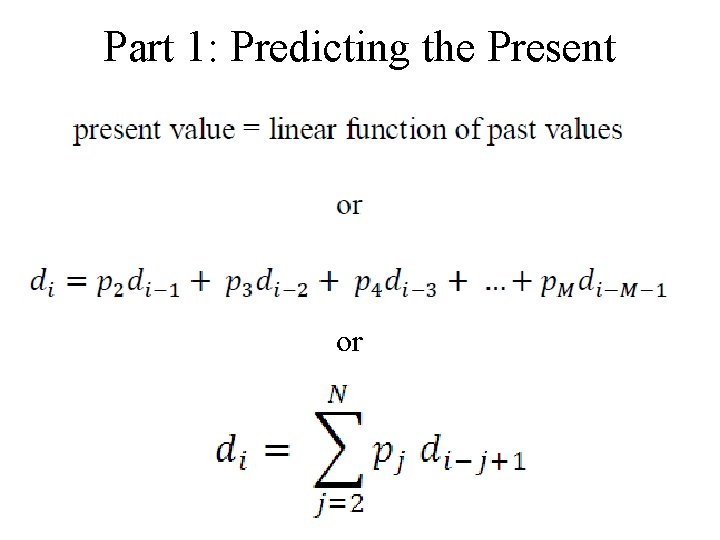

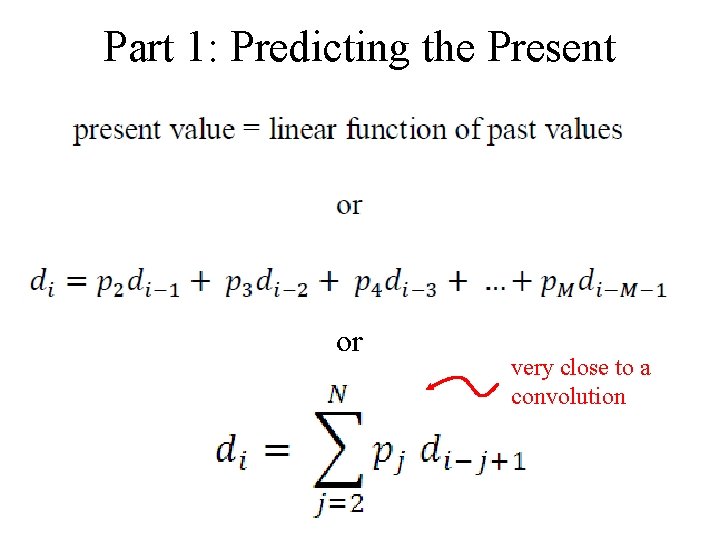

Part 1: Predicting the Present

Predicting the present value of a time series from its past values leads to an equation that is very close to a convolution.

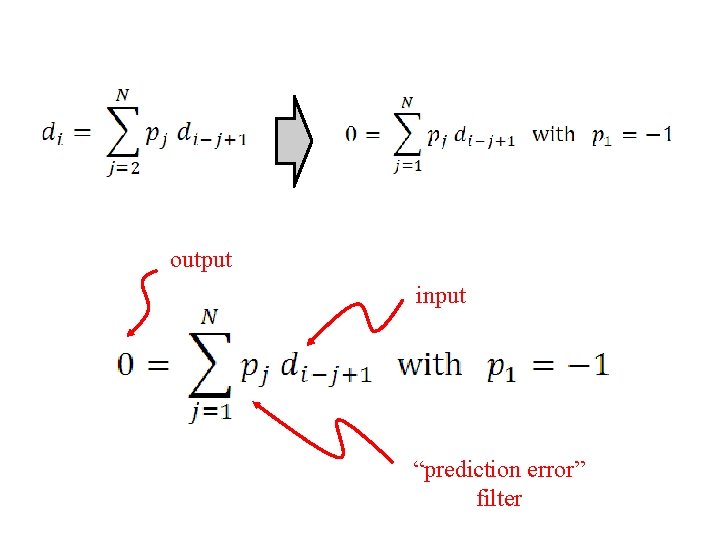

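Written as a convolution, $p * d = 0$: the input is the data, d, the filter, p, is the “prediction error” filter, and the output is (ideally) zero.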

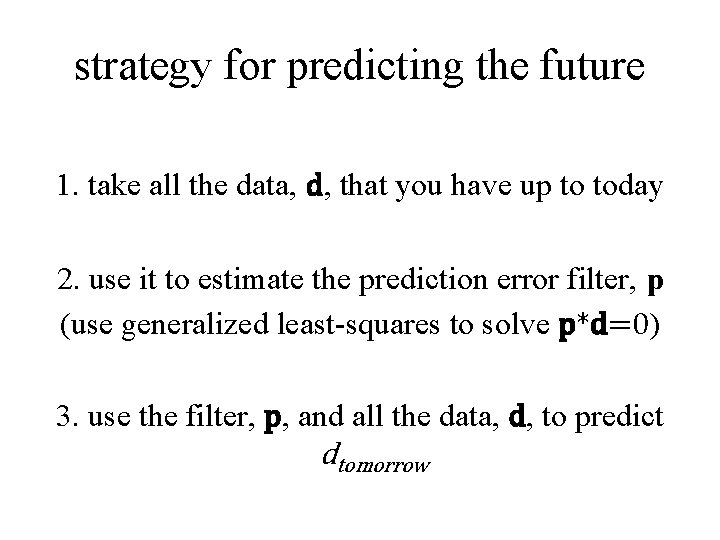

strategy for predicting the future:
1. Take all the data, d, that you have up to today.
2. Use it to estimate the prediction error filter, p (use generalized least squares to solve $p * d = 0$).
3. Use the filter, p, and all the data, d, to predict $d_{tomorrow}$.
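A minimal sketch of steps 1 to 3 in plain Python, assuming the convention that the first filter coefficient is fixed at $p_1 = -1$, so that $p * d = 0$ says "the present sample equals a weighted sum of past samples". Fixing $p_1$ exactly and fitting the remaining coefficients by ordinary least squares (normal equations) stands in here for the generalized least-squares solution used in the lecture; the function names `solve`, `prediction_error_filter`, and `predict_next` are hypothetical.

```python
def solve(A, b):
    """Solve the square system A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]   # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):                      # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def prediction_error_filter(d, n):
    """Step 2: least-squares prediction error filter p of length n, with p[0] = -1 fixed.

    Each row says d[i] ~= p[1] d[i-1] + ... + p[n-1] d[i-n+1] (past values only)."""
    rows = [[d[i - k] for k in range(1, n)] for i in range(n - 1, len(d))]
    rhs = [d[i] for i in range(n - 1, len(d))]
    m = n - 1
    GtG = [[sum(row[a] * row[b] for row in rows) for b in range(m)] for a in range(m)]
    Gtd = [sum(row[a] * y for row, y in zip(rows, rhs)) for a in range(m)]
    return [-1.0] + solve(GtG, Gtd)


def predict_next(d, p):
    """Step 3: choose the next sample so that its prediction error is zero."""
    return sum(p[k] * d[-k] for k in range(1, len(p)))
```

For a series generated exactly by $d_i = 1.5 d_{i-1} - 0.7 d_{i-2}$, `prediction_error_filter(d, 3)` recovers approximately `[-1.0, 1.5, -0.7]`; real data (such as a hydrograph) will not be fit exactly, which is what makes the prediction error interesting.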

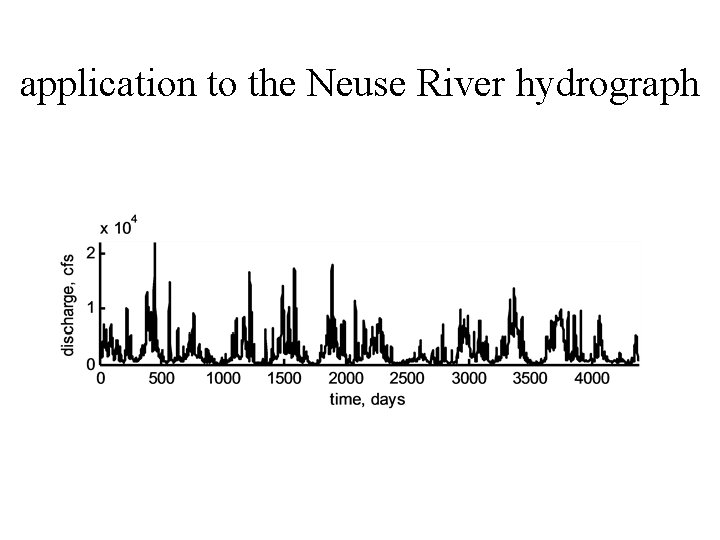

application to the Neuse River hydrograph

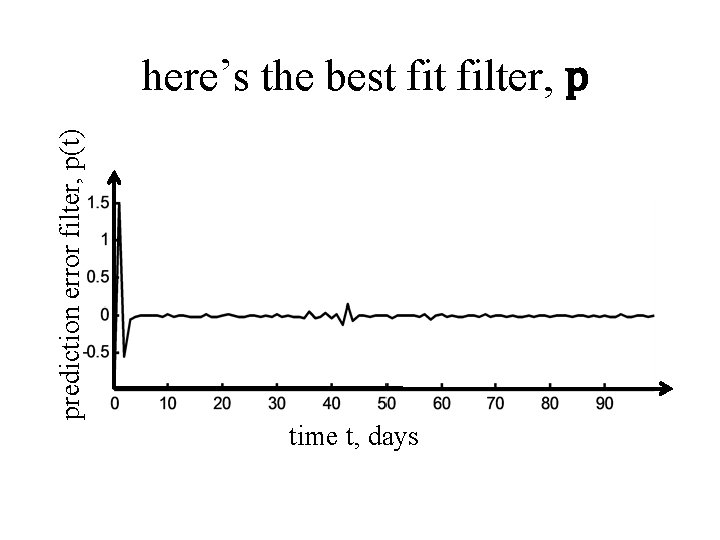

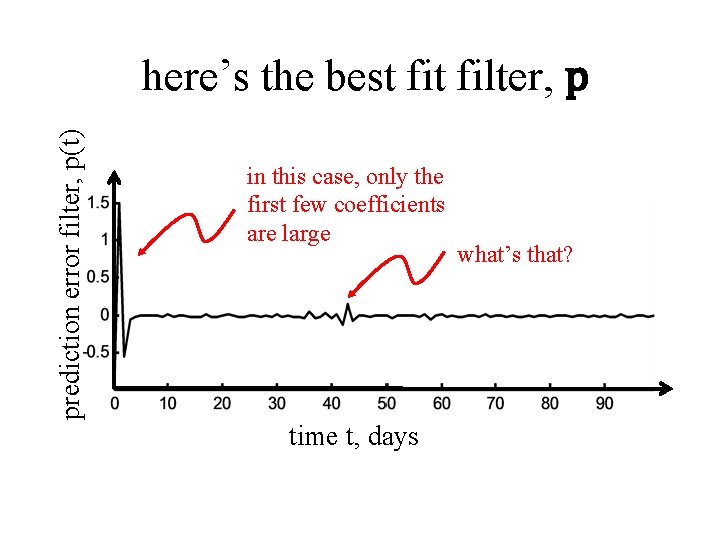

Figure: prediction error filter, p(t), versus time t (days). Here’s the best filter, p; in this case, only the first few coefficients are large (what’s that?).

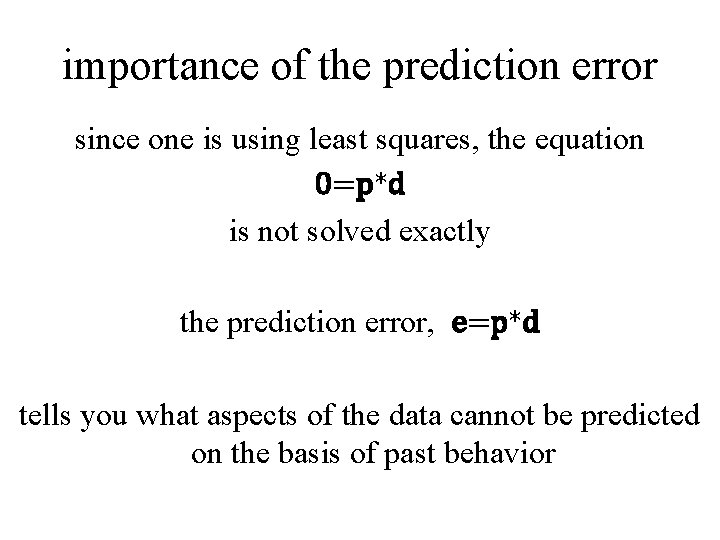

importance of the prediction error: since one is using least squares, the equation $0 = p * d$ is not solved exactly. The prediction error, $e = p * d$, tells you what aspects of the data cannot be predicted on the basis of past behavior.
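To see why the prediction error is informative, here is a small sketch with a hypothetical series that obeys $d_i = 1.5 d_{i-1} - 0.7 d_{i-2}$ except for one unpredictable "kick" at i = 15. With the filter $p = [-1, 1.5, -0.7]^T$ (assuming the convention $p_1 = -1$), the error $e = p * d$ is essentially zero wherever the past predicts the present, and spikes exactly where it does not. The helpers `prediction_error` and `simulate` are illustrative names, not part of the lecture.

```python
def prediction_error(p, d):
    """e = p * d, kept only where the whole filter overlaps the data (i >= len(p) - 1)."""
    n = len(p)
    return [sum(p[k] * d[i - k] for k in range(n)) for i in range(n - 1, len(d))]


def simulate(n, kicks):
    """Hypothetical series d_i = 1.5 d_{i-1} - 0.7 d_{i-2} + kick_i (kicks: {index: size})."""
    d = [1.0, 0.5]
    for i in range(2, n):
        d.append(1.5 * d[i - 1] - 0.7 * d[i - 2] + kicks.get(i, 0.0))
    return d


p = [-1.0, 1.5, -0.7]          # prediction error filter, p_1 = -1
d = simulate(30, {15: 2.0})     # one unpredictable event at i = 15
e = prediction_error(p, d)      # e[0] corresponds to i = 2
spike_at = max(range(len(e)), key=lambda j: abs(e[j])) + len(p) - 1
print(spike_at)                 # → 15, where the kick was applied
```

In the river example the "kicks" are rainfall events, which is consistent with the spikes in e(t) lining up with increases in discharge.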

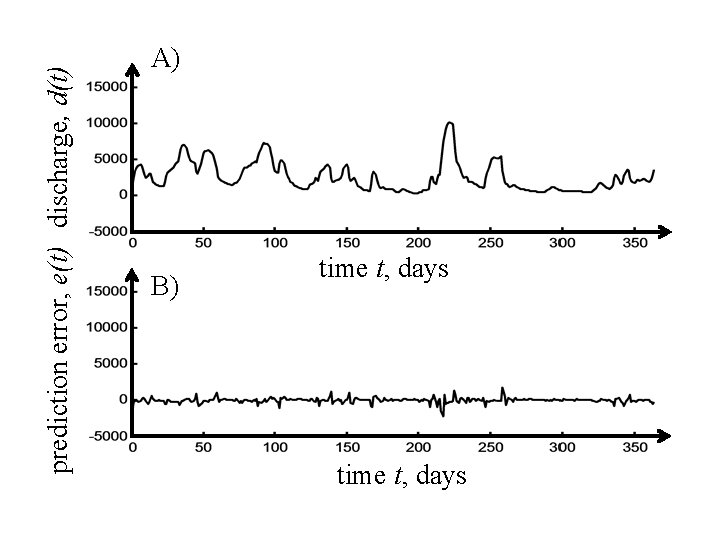

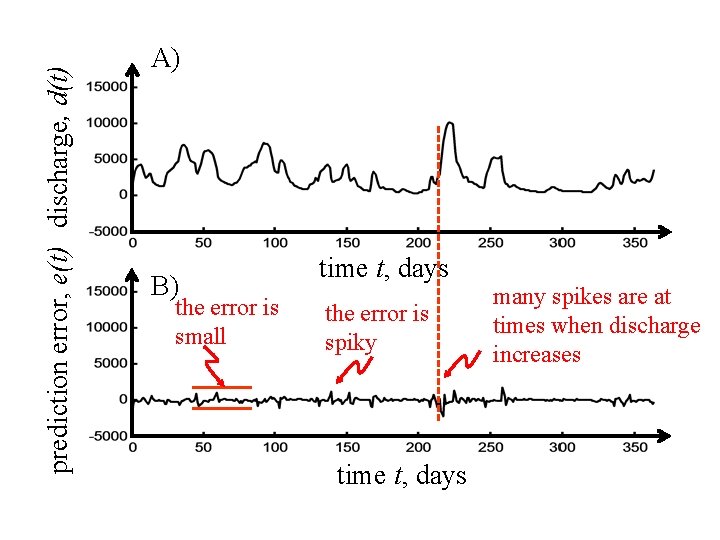

Figure, panels A) and B): discharge, d(t), and prediction error, e(t), versus time t (days). The error is small, but it is spiky: many spikes are at times when the discharge increases.

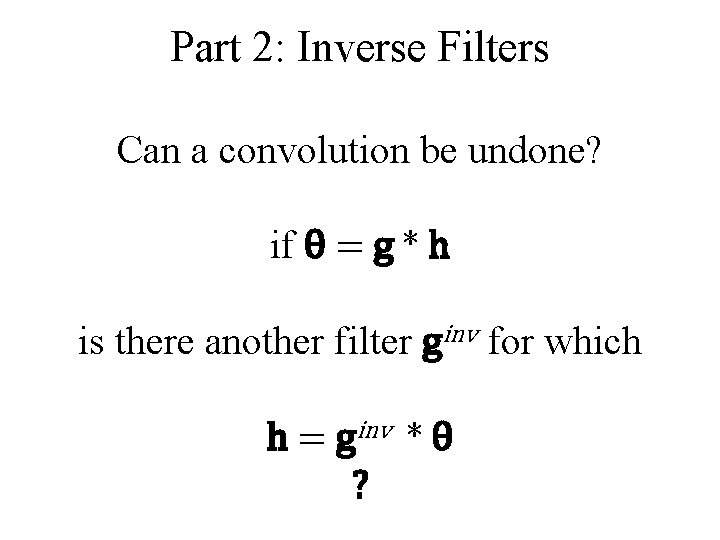

Part 2: Inverse Filters

Can a convolution be undone? If $\theta = g * h$, is there another filter, $g^{inv}$, for which $h = g^{inv} * \theta$?

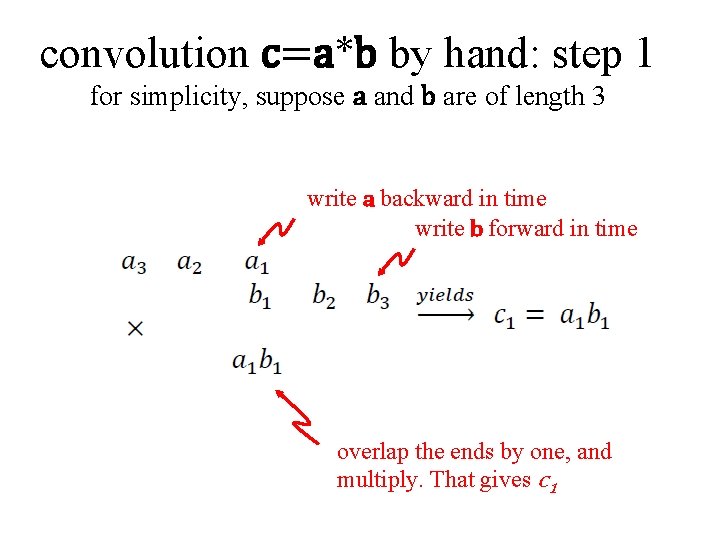

convolution c = a * b by hand, step 1: for simplicity, suppose a and b are of length 3. Write a backward in time and b forward in time, overlap the ends by one, and multiply. That gives $c_1$.

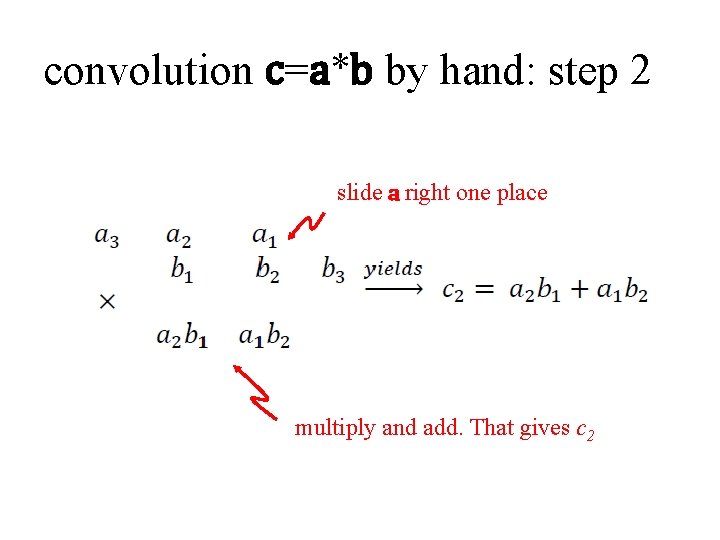

convolution c = a * b by hand, step 2: slide a right one place, multiply, and add. That gives $c_2$.

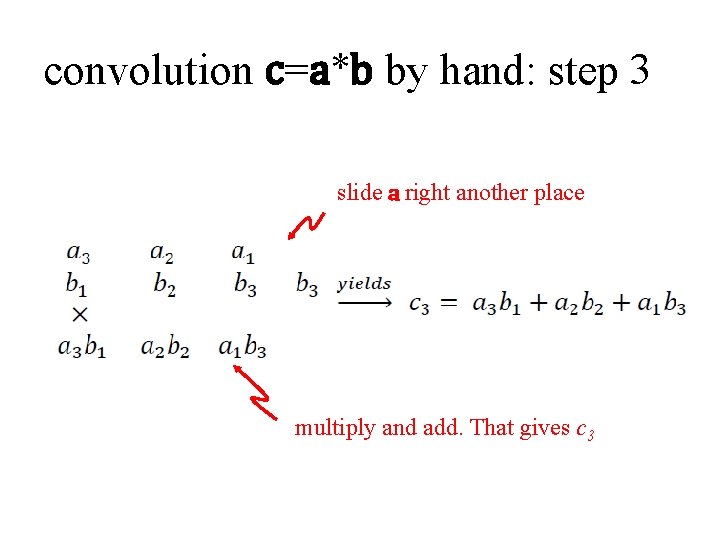

convolution c = a * b by hand, step 3: slide a right another place, multiply, and add. That gives $c_3$.

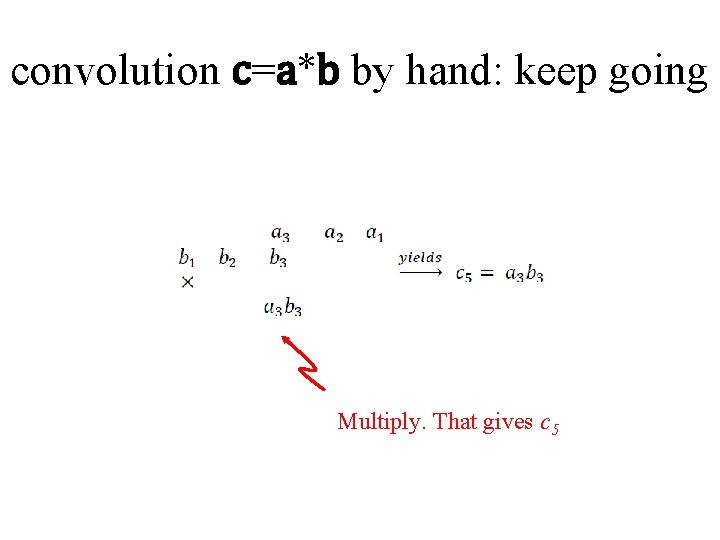

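convolution c = a * b by hand: keep going. At the last step only one pair of elements overlaps, so just multiply. That gives $c_5$, since two length-3 filters produce a length-5 result.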
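The by-hand procedure translates directly into a double loop. This is a minimal sketch (the name `convolve` is our own; in MatLab one would simply call `conv`): for each shift k, it multiplies the overlapping elements of the reversed a and of b and adds them up.

```python
def convolve(a, b):
    """Full convolution c = a * b, computed the 'by hand' way:
    reverse a, slide it along b, and at each shift multiply the
    overlapping elements and add them up."""
    n, m = len(a), len(b)
    c = []
    for k in range(n + m - 1):          # one output per shift
        total = 0.0
        for i in range(n):              # element i of a ...
            j = k - i                   # ... overlaps element j of b
            if 0 <= j < m:
                total += a[i] * b[j]
        c.append(total)
    return c


print(convolve([1, 2, 3], [4, 5, 6]))   # → [4.0, 13.0, 28.0, 27.0, 18.0]
```

Index k = 0 here is $c_1$ on the slides: only $a_1 b_1$ overlaps. The middle output, $c_3$, has the full three-term overlap.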

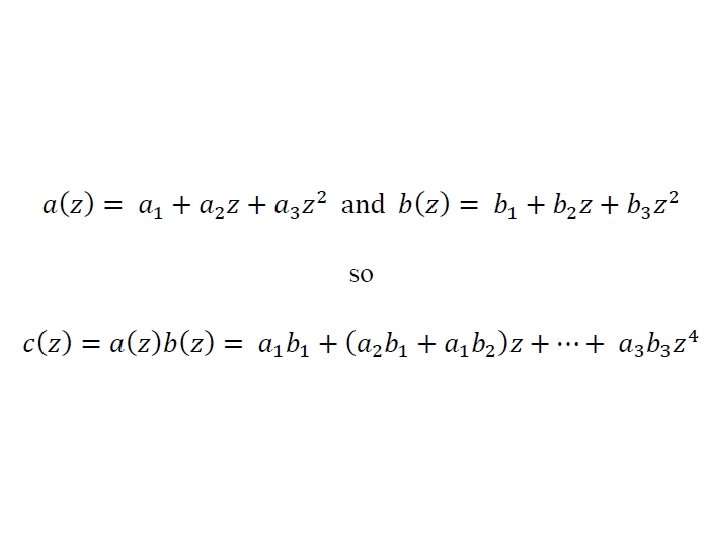

an important observation: this is the same pattern that we obtain when we multiply polynomials

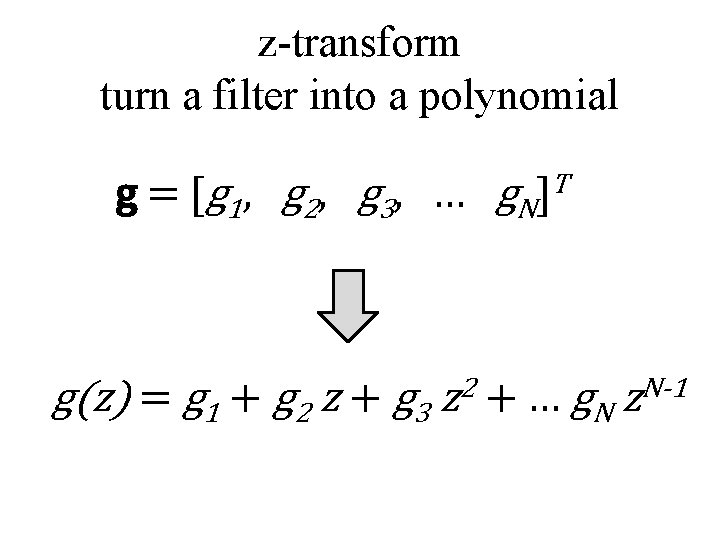

z-transform: turn a filter into a polynomial

$g = [g_1, g_2, g_3, \ldots, g_N]^T \quad\rightarrow\quad g(z) = g_1 + g_2 z + g_3 z^2 + \cdots + g_N z^{N-1}$
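A short check of the observation that convolving two filters multiplies their z-transforms. This sketch (hypothetical helper names) evaluates $g(z)$ with Horner's rule and compares $(a * b)(z)$ with $a(z)\,b(z)$ at a few arbitrary points, including a complex one.

```python
def z_transform(g, z):
    """Evaluate g(z) = g_1 + g_2 z + ... + g_N z^(N-1) by Horner's rule (g[0] is g_1)."""
    value = 0
    for coeff in reversed(g):
        value = value * z + coeff
    return value


def convolve(a, b):
    """c = a * b; the product a_i z^i * b_j z^j lands on the power z^(i+j)."""
    c = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


a, b = [1, 2, 3], [4, 5, 6]
c = convolve(a, b)
for z in (2, -1, 0.5, 1j):
    # z-transform of a convolution equals the product of the z-transforms
    assert abs(z_transform(c, z) - z_transform(a, z) * z_transform(b, z)) < 1e-9
print(c)   # → [4, 13, 28, 27, 18]
```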

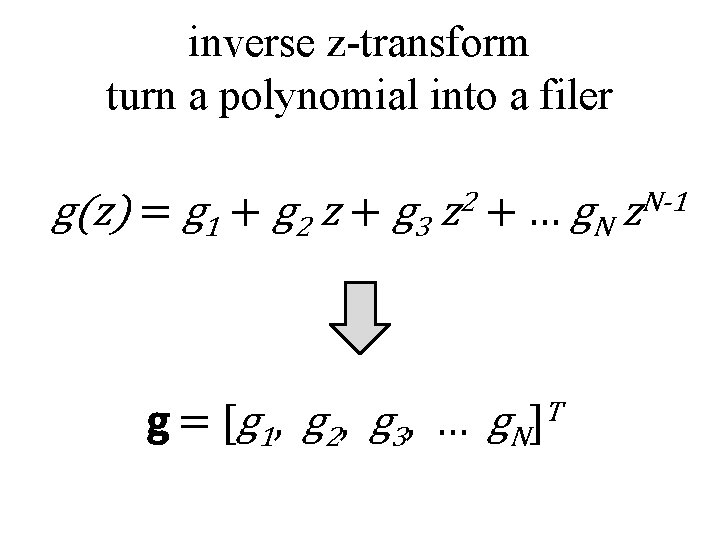

inverse z-transform: turn a polynomial into a filter

$g(z) = g_1 + g_2 z + g_3 z^2 + \cdots + g_N z^{N-1} \quad\rightarrow\quad g = [g_1, g_2, g_3, \ldots, g_N]^T$

why would we want to do this? because we know a lot about polynomials

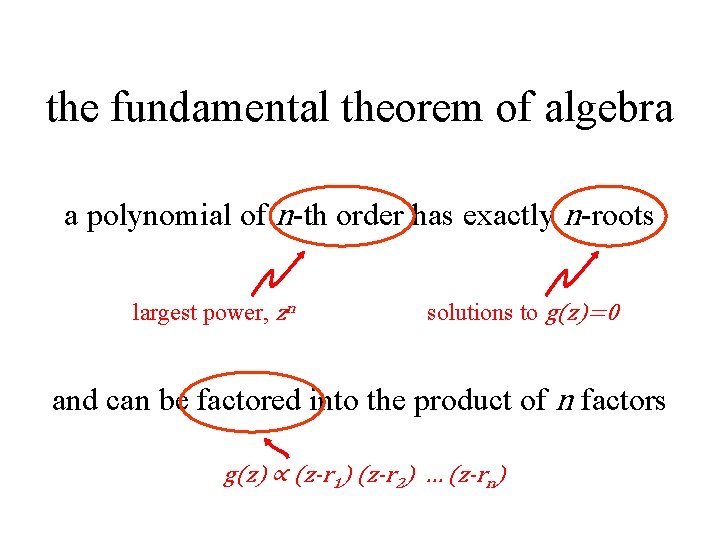

the fundamental theorem of algebra: a polynomial of n-th order (largest power $z^n$) has exactly n roots, the solutions to $g(z) = 0$ (counting repeated roots and allowing complex ones), and thus can be factored into the product of n factors: $g(z) \propto (z - r_1)(z - r_2) \cdots (z - r_n)$

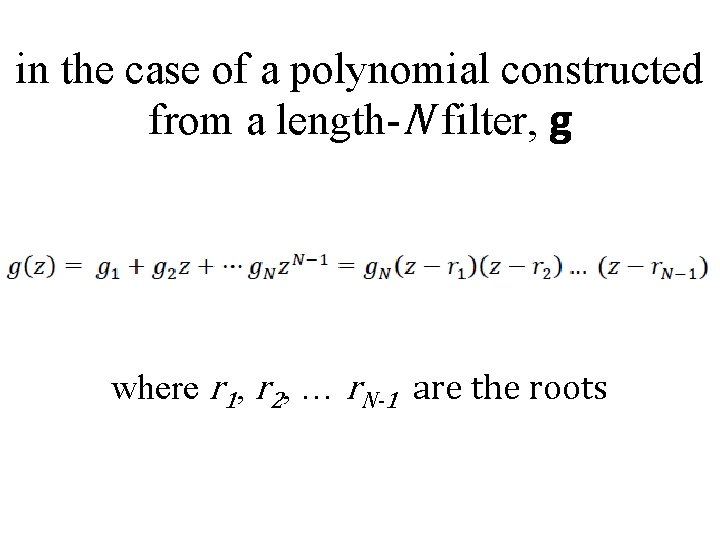

in the case of a polynomial constructed from a length-N filter, g:

$g(z) = g_N (z - r_1)(z - r_2) \cdots (z - r_{N-1})$

where $r_1, r_2, \ldots, r_{N-1}$ are the roots

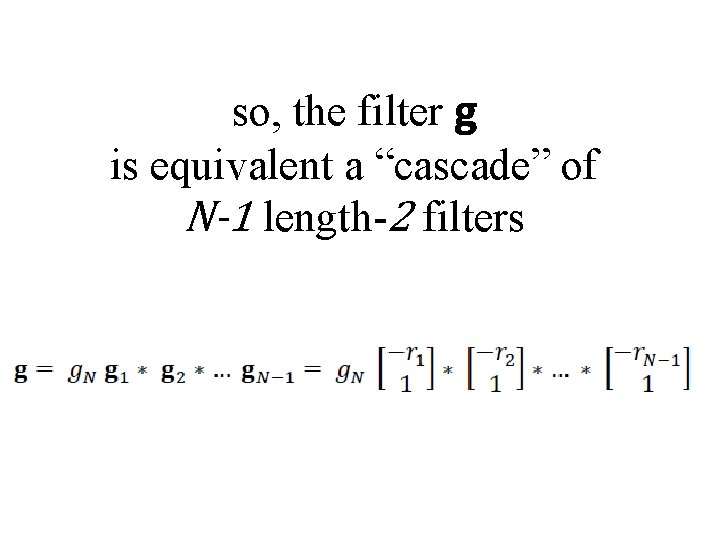

so, the filter g is equivalent to a “cascade” of N-1 length-2 filters, $[-r_i, 1]^T$, together with the overall factor $g_N$
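Going the other way, this sketch (hypothetical helper names) rebuilds a filter by convolving the overall factor $g_N$ with the length-2 filters $[-r_i, 1]^T$, using roots we choose ourselves, which confirms the cascade picture.

```python
def convolve(a, b):
    c = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


def cascade(roots, g_N=1):
    """Filter whose z-transform is g_N (z - r_1)(z - r_2)...: a cascade of [-r_i, 1] filters."""
    g = [g_N]
    for r in roots:
        g = convolve(g, [-r, 1])   # the length-2 filter [-r_i, 1] has z-transform z - r_i
    return g


print(cascade([2, -3]))   # → [-6, 1, 1], i.e. g(z) = -6 + z + z^2 = (z - 2)(z + 3)
```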

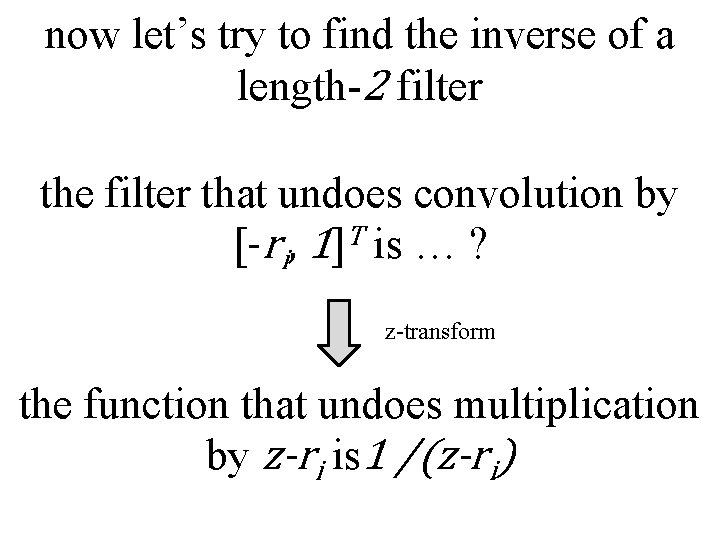

now let’s try to find the inverse of a length-2 filter: the filter that undoes convolution by $[-r_i, 1]^T$ is … ? Take the z-transform: the function that undoes multiplication by $(z - r_i)$ is $1/(z - r_i)$.

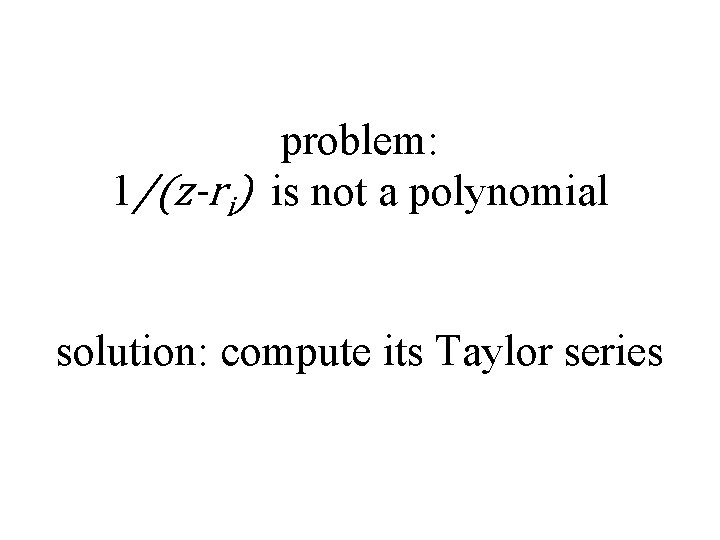

problem: $1/(z - r_i)$ is not a polynomial. Solution: compute its Taylor series.

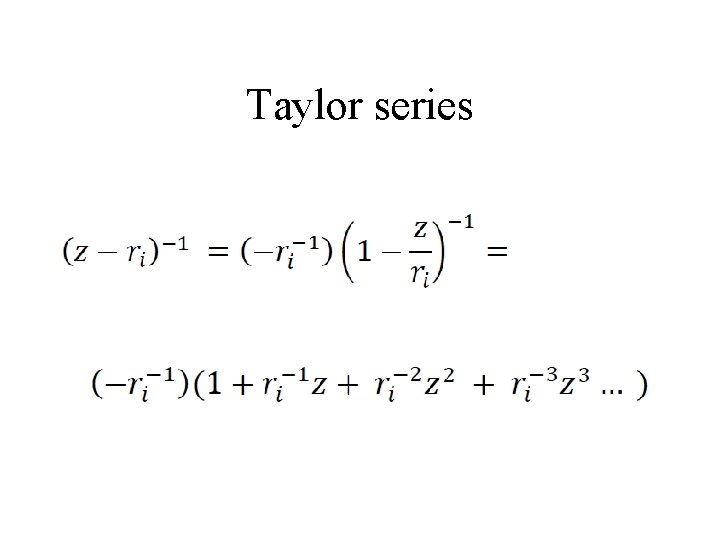

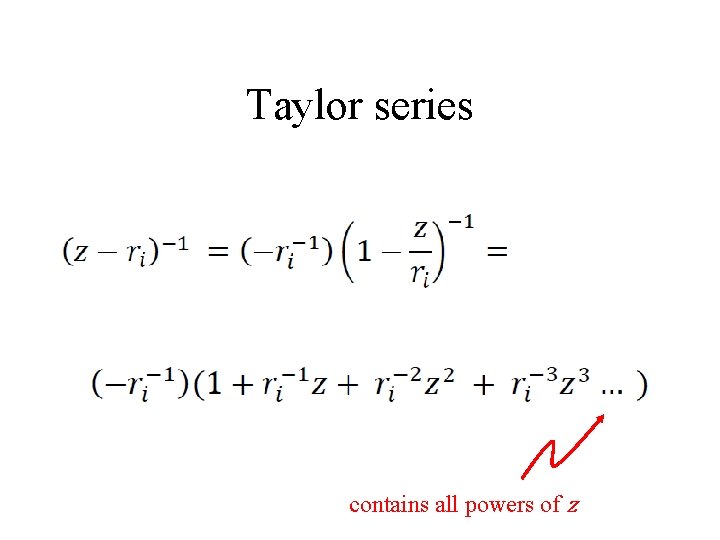

Taylor series: it contains all powers of z
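Written out, one standard form of this Taylor (geometric) series about z = 0, valid for $|z| < |r_i|$, is:

$$
\frac{1}{z - r_i} = -\frac{1}{r_i}\,\frac{1}{1 - z/r_i} = -\frac{1}{r_i}\sum_{k=0}^{\infty}\left(\frac{z}{r_i}\right)^{k} = -\frac{1}{r_i} - \frac{z}{r_i^{2}} - \frac{z^{2}}{r_i^{3}} - \cdots
$$

so, by the inverse z-transform, $g^{inv} = -[\,r_i^{-1},\ r_i^{-2},\ r_i^{-3},\ \ldots\,]^T$. As a check, multiplying the series by $(z - r_i)$ gives exactly 1, because every power $z^k$ with $k \ge 1$ cancels.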

![the inverse of a length-2 filter is an indefinitely long filter](http://slidetodoc.com/presentation_image_h2/b7a7d9713841c8c9390a82a5f8d0ddc6/image-33.jpg)

so the filter that undoes convolution by $[-r_i, 1]^T$ is an indefinitely long filter
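A numerical sketch of the same idea, with hypothetical helper names: it builds the first `n_terms` coefficients of the inverse of $[-r, 1]^T$ from the series $-r^{-(k+1)}$, then convolves to show that the result is a spike plus a small truncation error at the far end.

```python
def inverse_length2(r, n_terms):
    """First n_terms coefficients of the inverse of the length-2 filter [-r, 1].

    Inverse z-transform of 1/(z - r) = -(1/r) * sum_k (z/r)^k: coefficient k is -r^-(k+1)."""
    return [-(1.0 / r) ** (k + 1) for k in range(n_terms)]


def convolve(a, b):
    c = [0.0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


ginv = inverse_length2(2.0, 20)        # root r = 2 lies outside the unit circle
spike = convolve([-2.0, 1.0], ginv)    # spike[0] is 1; only the last entry, -2**-20, is nonzero
print(spike[0], max(abs(x) for x in spike[1:]))
```

With r = 0.5 instead, the coefficients grow like $2^{k+1}$, so truncating the series never yields a usable filter.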

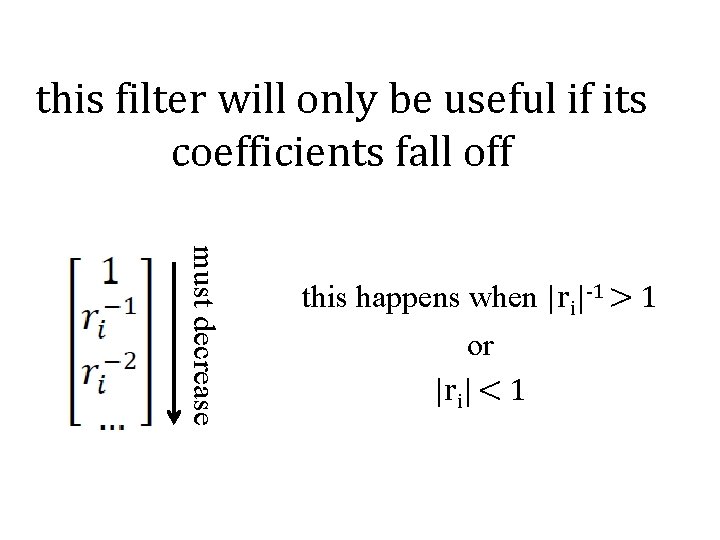

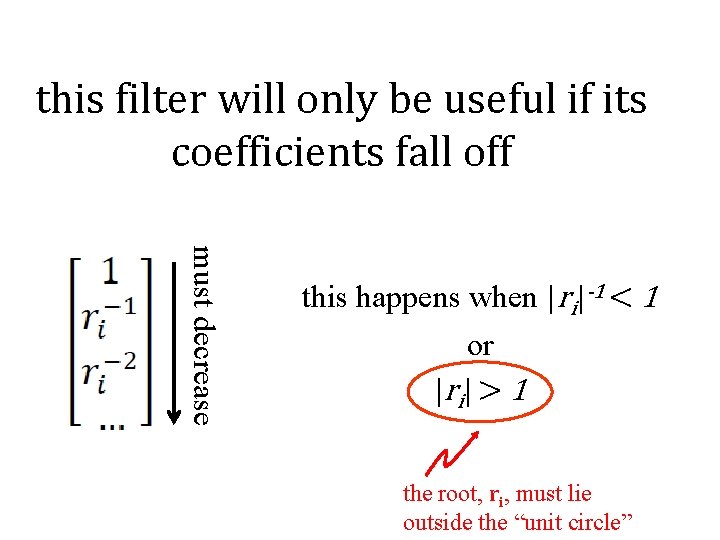

this filter will only be useful if its coefficients fall off (decrease in magnitude). This happens when $|r_i|^{-1} < 1$, or $|r_i| > 1$: the root, $r_i$, must lie outside the “unit circle”.

the inverse filter for a length-N filter g:
1. Find the roots of $g(z)$.
2. Check that the roots are outside the unit circle.
3. Construct the inverse filter associated with each root.
4. Convolve them all together (and divide by the leading coefficient, $g_N$).
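The four steps can be sketched end to end in dependency-free Python. One swap-in to note: root finding here uses the Durand-Kerner iteration instead of MatLab's `roots`, purely to keep the sketch self-contained; `poly_roots` and `inverse_filter` are hypothetical names, and the result is truncated to `n_terms` coefficients.

```python
def convolve(a, b):
    c = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


def poly_roots(g, iterations=500):
    """Step 1: roots of g(z) = g[0] + g[1] z + ... + g[-1] z^(N-1) by Durand-Kerner iteration."""
    monic = [c / g[-1] for c in g]                 # divide through by the leading coefficient g_N
    n = len(g) - 1

    def value(z):                                   # Horner evaluation of the monic polynomial
        total = 0j
        for c in reversed(monic):
            total = total * z + c
        return total

    roots = [(0.4 + 0.9j) ** k for k in range(n)]   # distinct, non-real starting guesses
    for _ in range(iterations):
        updated = []
        for i, ri in enumerate(roots):
            denom = 1 + 0j
            for j, rj in enumerate(roots):
                if j != i:
                    denom *= ri - rj
            updated.append(ri - value(ri) / denom)
        roots = updated
    return roots


def inverse_filter(g, n_terms):
    """Steps 1-4: truncated inverse of filter g (first n_terms coefficients)."""
    roots = poly_roots(g)
    if any(abs(r) <= 1 for r in roots):             # step 2: every root must lie outside |z| = 1
        raise ValueError("a root lies on or inside the unit circle")
    ginv = [1.0 / g[-1]]                            # g(z) = g_N (z - r_1)...(z - r_{N-1})
    for r in roots:                                 # step 3: inverse of each length-2 factor
        factor_inv = [-(1 / r) ** (k + 1) for k in range(n_terms)]
        ginv = convolve(ginv, factor_inv)[:n_terms]  # step 4: convolve them together
    return [c.real for c in ginv]                   # conjugate roots pair up; result is real
```

For example, convolving `[-6.0, 1.0, 1.0]` (roots 2 and -3) with `inverse_filter([-6.0, 1.0, 1.0], 40)` gives, to rounding error, 1 followed by zeros through element 40. Truncating after each convolution is safe because coefficient k of a product depends only on coefficients up to k of each factor.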

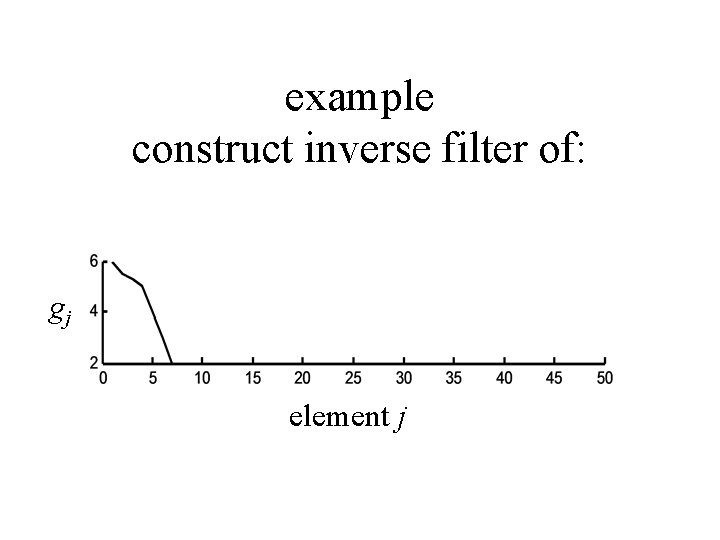

example: construct the inverse filter of g (figure: $g_j$ versus element j)

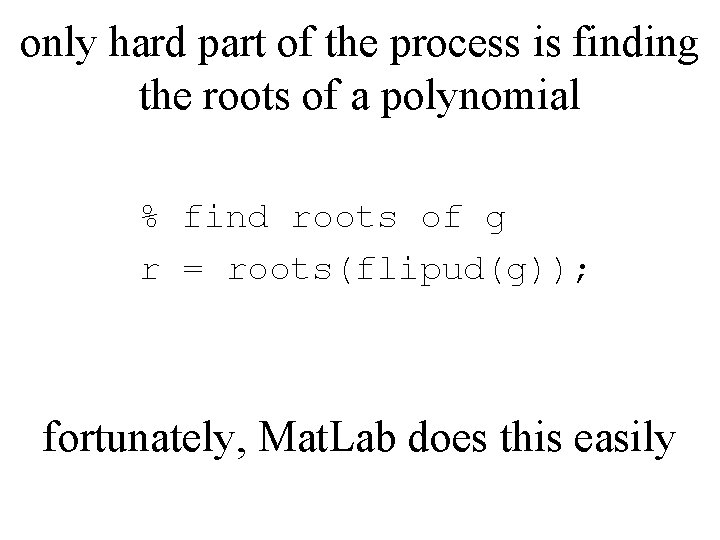

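the only hard part of the process is finding the roots of a polynomial; fortunately, MatLab does this easily:

```matlab
% find roots of g (column vector g(1) + g(2) z + ...; roots wants the highest power first, hence flipud)
r = roots(flipud(g));
```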

![g, its inverse ginv, and ginv*g](http://slidetodoc.com/presentation_image_h2/b7a7d9713841c8c9390a82a5f8d0ddc6/image-39.jpg)

![g is a short time series, ginv is a long time series, and ginv*g is a spike](http://slidetodoc.com/presentation_image_h2/b7a7d9713841c8c9390a82a5f8d0ddc6/image-40.jpg)

Figure: the filter, $g_j$ (a short time series), its inverse, $g^{inv}_j$ (a long time series), and $[g^{inv} * g]_j$ (a spike), each versus element j.

Part 3: Recursive Filters

a way to approximate a long filter with two short ones

in the standard filtering formula we compute the outputs $\theta_1, \theta_2, \theta_3, \ldots$ in sequence, but without using our knowledge of $\theta_1$ when we compute $\theta_2$, or of $\theta_2$ when we compute $\theta_3$, and so on

that’s wasted information

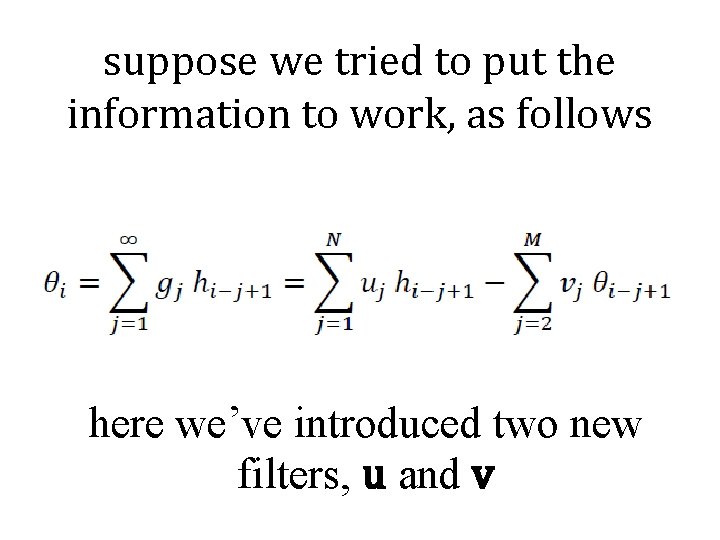

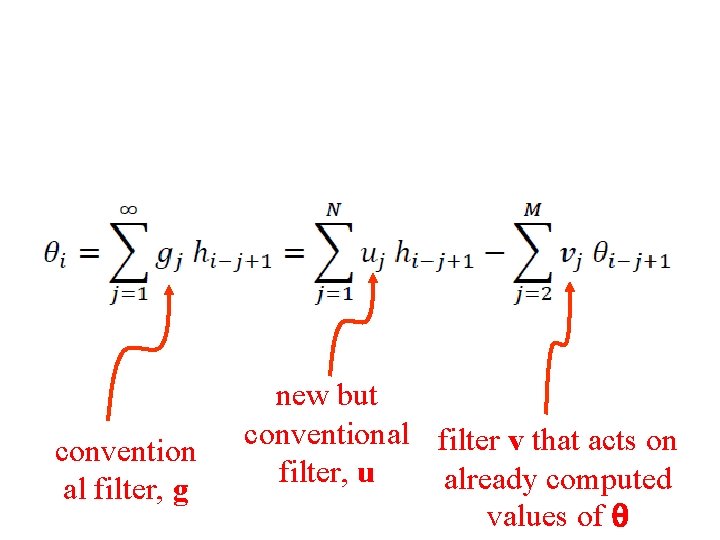

suppose we tried to put the information to work, as follows:

$v * \theta = u * h$

here we’ve introduced two new filters, u and v, in place of the conventional filter, g: u is a new but conventional filter that acts on the input, h, and v is a filter that acts on the already-computed values of θ.

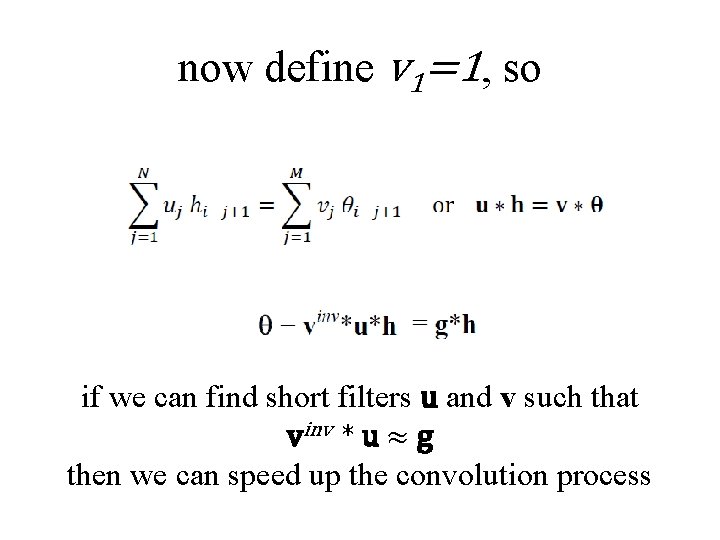

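now define $v_1 = 1$, so that each new output can be computed from the input and from already-computed outputs: $\theta_i = \sum_j u_j h_{i-j+1} - \sum_{j \ge 2} v_j \theta_{i-j+1}$. Since $\theta = v^{inv} * u * h$, if we can find short filters u and v such that $v^{inv} * u \approx g$, then we can speed up the convolution process.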
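A minimal sketch of the recursion, assuming the normalization $v_1 = 1$ (the function name `recursive_filter` is hypothetical; MatLab's built-in `filter` function implements the same kind of recursion). Each output is computed from the input through u and from the outputs already computed through v, so earlier values of θ are reused rather than wasted.

```python
def recursive_filter(u, v, h):
    """Solve v * theta = u * h for theta, one sample at a time (requires v[0] == 1):

    theta_i = sum_j u_j h_{i-j} - sum_{j>=1} v_j theta_{i-j}   (0-based indices)."""
    if v[0] != 1:
        raise ValueError("this sketch assumes the normalization v_1 = 1")
    theta = []
    for i in range(len(h)):
        acc = sum(u[j] * h[i - j] for j in range(len(u)) if i - j >= 0)
        acc -= sum(v[j] * theta[i - j] for j in range(1, len(v)) if i - j >= 0)
        theta.append(acc)                 # reused by later steps: no wasted information
    return theta


impulse = [1.0, 0.0, 0.0, 0.0, 0.0]
print(recursive_filter([1.0], [1.0, -0.5], impulse))   # → [1.0, 0.5, 0.25, 0.125, 0.0625]
```

The impulse response is $v^{inv} * u$: two filters of total length three reproduce an indefinitely long, geometrically decaying filter.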

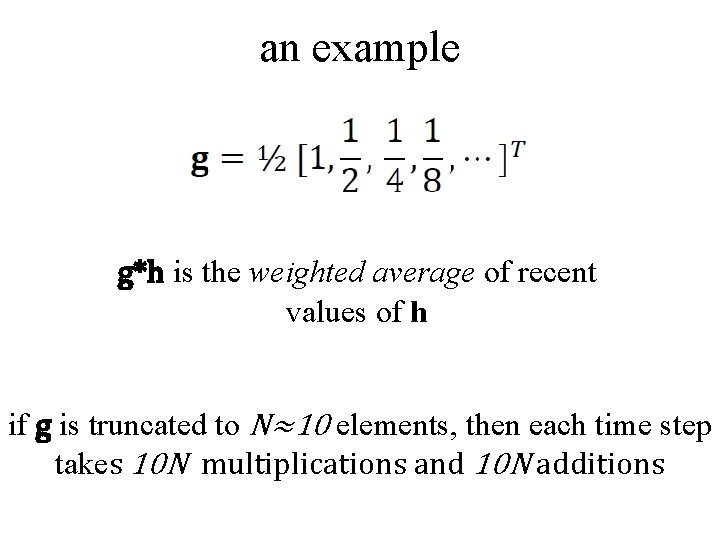

an example: $g * h$ is the weighted average of recent values of h. If g is truncated to N ≈ 10 elements, then each time step takes N ≈ 10 multiplications and N ≈ 10 additions.

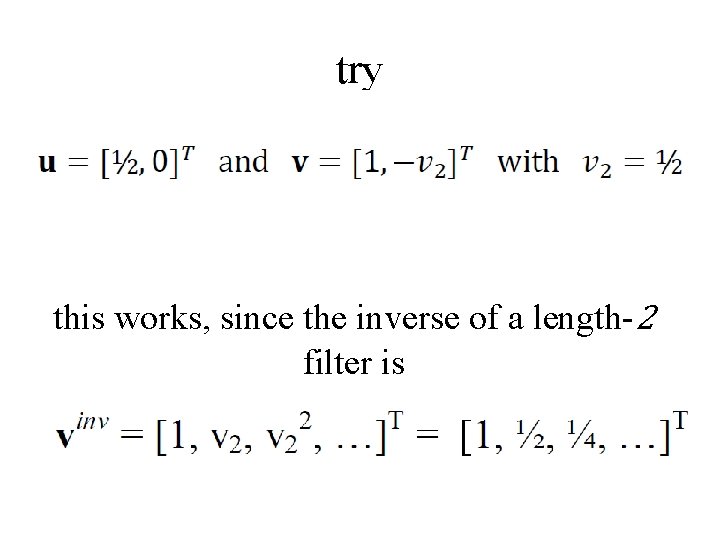

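try this; it works, since the inverse of a length-2 filter is an indefinitely long filter whose coefficients fall off geometrically (when its root lies outside the unit circle)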

the convolution then becomes a recursion, which requires only one addition and one multiplication per time step, a savings of a factor of about ten
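A sketch comparing the two approaches, under an assumption that the slide's exact weights are not reproduced here: the weights of g decay geometrically, $g_j = \xi^{j-1}$ with $0 < \xi < 1$ (an unnormalized weighted average). Then $u = [1]^T$ and $v = [1, -\xi]^T$ give $v^{inv} * u = g$, and the recursion is $\theta_i = h_i + \xi\,\theta_{i-1}$. The names `direct_filter` and `recursive_smoother` are hypothetical.

```python
def direct_filter(g, h):
    """Conventional filter theta_i = sum_j g_j h_{i-j}: len(g) multiply-adds per time step."""
    return [sum(g[j] * h[i - j] for j in range(len(g)) if i - j >= 0) for i in range(len(h))]


def recursive_smoother(xi, h):
    """Recursive filter with u = [1], v = [1, -xi]: theta_i = h_i + xi * theta_{i-1}.

    One multiplication and one addition per time step."""
    theta, previous = [], 0.0
    for h_i in h:
        previous = h_i + xi * previous
        theta.append(previous)
    return theta


xi = 0.5
g = [xi ** j for j in range(60)]       # long filter: weights decay geometrically
h = [1.0, -2.0, 0.5, 3.0, 0.0, 1.5] * 10
slow = direct_filter(g, h)              # up to 60 multiply-adds per step
fast = recursive_smoother(xi, h)        # 1 multiply and 1 add per step
print(max(abs(s - f) for s, f in zip(slow, fast)))   # tiny: rounding error only
```

Multiplying each output by $(1 - \xi)$ would turn the weighted sum into a true weighted average without changing the operation count much.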

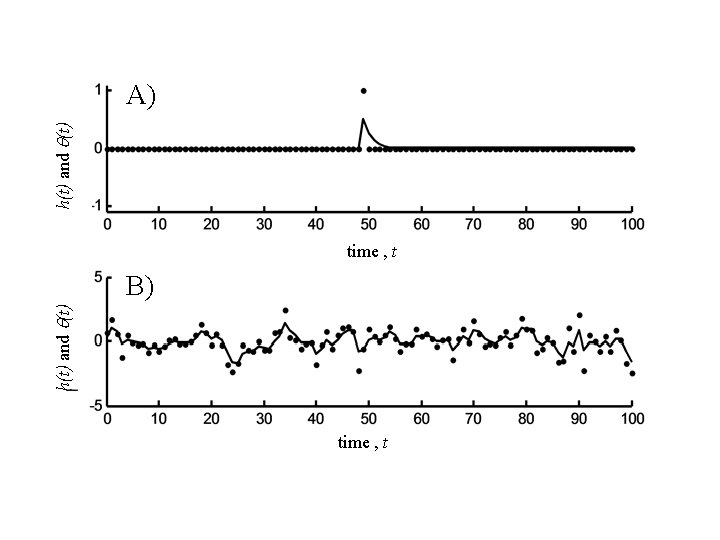

Figure, panels A) and B): h(t) and q(t) versus time, t.
