
Ensemble Contextual Bandits for Personalized Recommendation
Liang Tang, Yexi Jiang, Lei Li, Tao Li
Florida International University
6/16/2021, ACM RecSys 2014

Cold Start Problem for Learning-Based Recommendation
• Issue: there is not enough appropriate data.
  – Historical user log data is biased.
  – User interest may change over time.
  – New items (or users) are added.
• Approach: exploitation and exploration
  – Contextual multi-armed bandit algorithm.
  – The contextual information consists of item features and user features.

Contextual Bandit Algorithm with Personalized Recommendation
• Contextual Bandit
  – Let a1, …, am be a set of arms.
  – Given a context xt, the model decides which arm to pull.
  – After each pull, you receive a random reward, which is determined by the pulled arm and xt.
  – Goal: maximize the total received reward.
• Online Recommendation
  – Arm: item
  – Context: user feature
  – Reward: click
  – Pull: recommend
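The interaction loop on this slide can be sketched in a few lines of Python. This is only an illustration, not the authors' code: `RandomPolicy`, `run_bandit`, the `is_returning_user` feature, and the simulated click probabilities are all hypothetical names and values chosen for the example.

```python
import random


class RandomPolicy:
    """Hypothetical placeholder policy: ignores the context, picks an arm uniformly."""

    def __init__(self, n_arms, rng):
        self.n_arms = n_arms
        self.rng = rng

    def select(self, context):
        return self.rng.randrange(self.n_arms)

    def update(self, context, arm, reward):
        pass  # a learning policy would update its model here


def run_bandit(policy, contexts, reward_fn):
    """Run one pass over the contexts and return the total received reward."""
    total = 0
    for x in contexts:
        arm = policy.select(x)        # pull = recommend an item
        r = reward_fn(x, arm)         # reward = click (1) or no click (0)
        policy.update(x, arm, r)
        total += r
    return total


rng = random.Random(0)


def simulated_click(x, arm):
    # Assumed toy environment: click probability depends on the arm and the context.
    p = 0.1 + 0.2 * arm * x["is_returning_user"]
    return 1 if rng.random() < p else 0


contexts = [{"is_returning_user": rng.randrange(2)} for _ in range(1000)]
total_clicks = run_bandit(RandomPolicy(3, rng), contexts, simulated_click)
```

Any policy exposing `select` and `update` can be dropped into `run_bandit`, which is the property the ensemble methods later in the deck rely on.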

Problem Statement
• Problem setting: we have many different recommendation models (or policies):
  – Different CTR prediction algorithms.
  – Different exploration-exploitation algorithms.
  – Different parameter choices.
• No data is available for model validation.
• Problem statement: how can we build an ensemble model that is close to the best model in the cold-start situation?

How to Ensemble?
• Classifier ensemble methods do not work in this setting.
  – The recommendation decision is NOT purely based on the predicted CTR.
• Each individual model only tells us:
  – Which item to recommend.

Ensemble Method
• Our method:
  – Allocate recommendation chances to individual models.
• Problem:
  – Better models should have more chances.
  – We do not know which one is good or bad in advance.
  – Ideal solution: allocate all chances to the best one.

Current Practice: Online Evaluation (or A/B testing)
Let π1, π2 … πm be the individual models.
1. Deploy π1, π2 … πm into the online system at the same time.
2. Dispatch a small percentage of user traffic to each model.
3. After a period, choose the model with the best CTR as the production model.

Current Practice: Online Evaluation (or A/B testing), continued
• Drawback: if we have too many models, this will hurt the performance of the online system.
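A small simulation can make the drawback concrete. The sketch below assumes every model receives the same number of visits during the test; `ab_test` and the true CTR values are hypothetical and exist only for illustration.

```python
import random


def ab_test(true_ctrs, visits_per_model, rng):
    """Give every model the same number of visits, then pick the best observed CTR."""
    observed = []
    for ctr in true_ctrs:
        clicks = sum(1 for _ in range(visits_per_model) if rng.random() < ctr)
        observed.append(clicks / visits_per_model)
    best = max(range(len(true_ctrs)), key=lambda i: observed[i])
    return best, observed


rng = random.Random(42)
true_ctrs = [0.02, 0.03, 0.05, 0.01]  # assumed values; unknown in practice
best, observed = ab_test(true_ctrs, visits_per_model=5000, rng=rng)

# With equal traffic, the expected CTR during the test period is the plain
# average over all models, so every weak model added to the test lowers it.
ctr_during_test = sum(true_ctrs) / len(true_ctrs)
```

Under these assumed numbers the system averages a CTR of 0.0275 while the test runs, well below the 0.05 of the best model.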

Our Idea 1 (HyperTS)
• The CTR of model πi is an unknown random variable, Ri.
• Goal: maximize the CTR of our ensemble model.
  – Maximize $\frac{1}{N}\sum_{t=1}^{N} r_t$, where rt is a random number drawn from Rs(t) and s(t) is one of 1, 2, …, m.
  – For each t = 1, …, N, we decide s(t).
• Solution:
  – Bernoulli Thompson Sampling (flat prior: Beta(1, 1)).
  – π1, π2 … πm are the bandit arms.
  – No tricky parameters.
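A minimal sketch of Bernoulli Thompson Sampling over models, assuming a Beta(1, 1) prior per model as stated on the slide. The class layout, the method names, and the simulated true CTRs are hypothetical. In the simulation each model's recommendation is abstracted into a single click draw, which is a simplification rather than the authors' implementation.

```python
import random


class HyperTS:
    """Bernoulli Thompson Sampling over m models with a Beta(1, 1) prior each."""

    def __init__(self, n_models, rng=None):
        self.alpha = [1.0] * n_models  # 1 + observed clicks
        self.beta = [1.0] * n_models   # 1 + observed non-clicks
        self.rng = rng if rng is not None else random.Random()

    def select_model(self):
        # Draw one CTR sample per model from its posterior, pick the largest.
        samples = [self.rng.betavariate(a, b)
                   for a, b in zip(self.alpha, self.beta)]
        return max(range(len(samples)), key=samples.__getitem__)

    def update(self, k, reward):
        # Only the model that made the recommendation is updated.
        if reward:
            self.alpha[k] += 1
        else:
            self.beta[k] += 1

    def estimated_ctr(self, k):
        return self.alpha[k] / (self.alpha[k] + self.beta[k])


rng = random.Random(0)
true_ctrs = [0.02, 0.05, 0.10]  # assumed CTRs of three hypothetical models
ts = HyperTS(len(true_ctrs), rng)
pulls = [0] * len(true_ctrs)
for _ in range(5000):
    k = ts.select_model()
    reward = 1 if rng.random() < true_ctrs[k] else 0
    ts.update(k, reward)
    pulls[k] += 1
```

The only state kept per model is a pair of counts, which matches the "no tricky parameters" remark: there is no exploration rate or temperature to tune.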

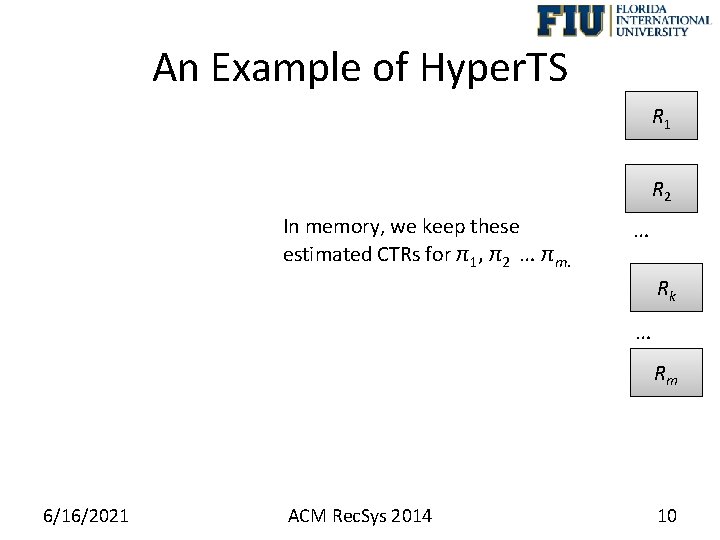

An Example of HyperTS
• In memory, we keep the estimated CTRs R1, R2, …, Rk, …, Rm for π1, π2 … πm.

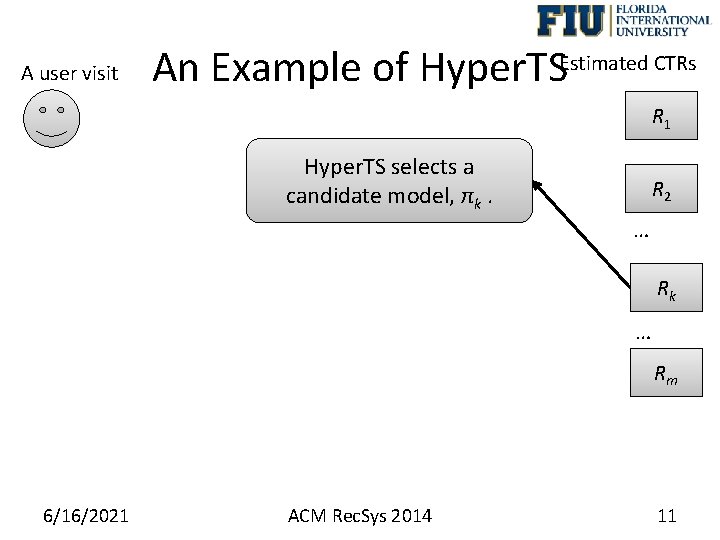

An Example of HyperTS (continued)
• A user visits.
• HyperTS selects a candidate model, πk.

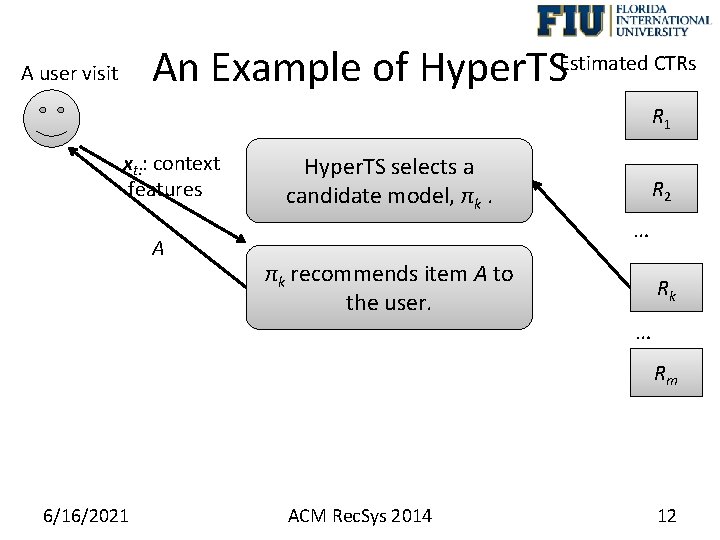

An Example of HyperTS (continued)
• xt: the context features of the user visit.
• πk recommends item A to the user.

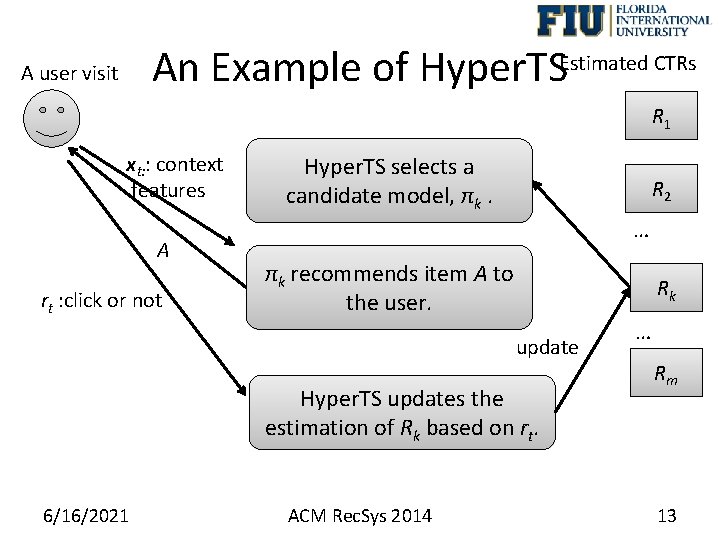

An Example of HyperTS (continued)
• rt: click or not.
• HyperTS updates the estimation of Rk based on rt.

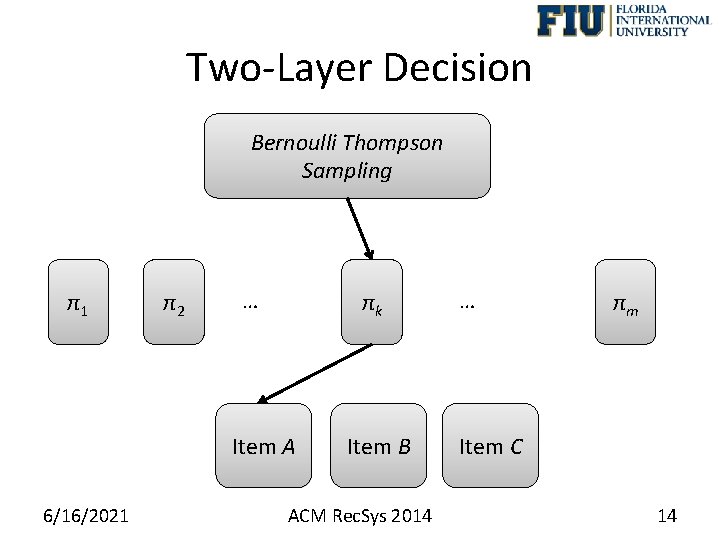

Two-Layer Decision
[Diagram: Bernoulli Thompson Sampling sits on top of the models π1, π2, …, πk, …, πm, which in turn sit on top of the items (Item A, Item B, Item C).]

Our Idea 2 (HyperTSFB)
• Limitation of the previous idea:
  – For each recommendation, the user feedback is used by only one individual model (e.g., πk).
• Motivation:
  – Can we update all of R1, R2, …, Rm with every user feedback? (Share every user feedback with every individual model.)

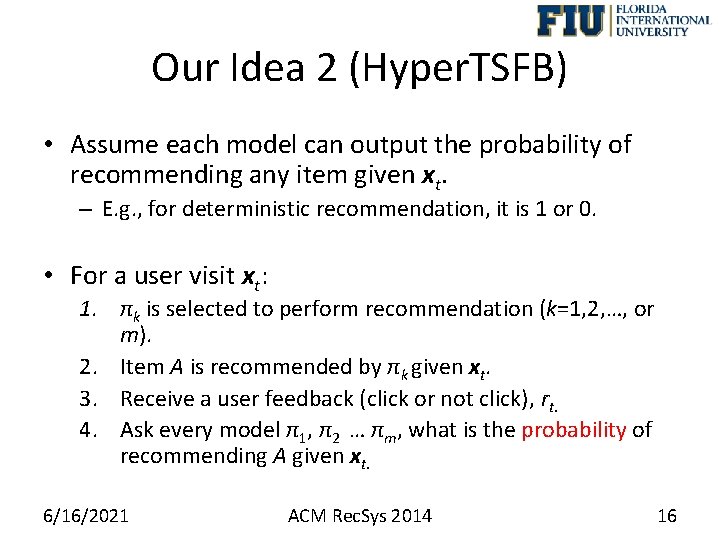

Our Idea 2 (HyperTSFB)
• Assume each model can output the probability of recommending any item given xt.
  – E.g., for deterministic recommendation, it is 1 or 0.
• For a user visit xt:
  1. πk is selected to perform the recommendation (k = 1, 2, …, or m).
  2. Item A is recommended by πk given xt.
  3. Receive the user feedback (click or no click), rt.
  4. Ask every model π1, π2 … πm for its probability of recommending A given xt.

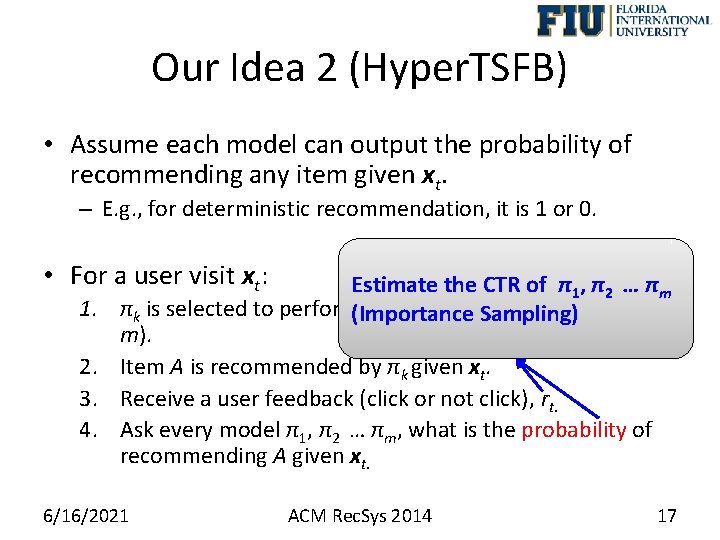

Our Idea 2 (HyperTSFB), continued
• Estimate the CTR of π1, π2 … πm (importance sampling).
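The slides do not spell out the estimator, so the following is only one plausible reading: a standard inverse propensity (importance sampling) estimate in which every feedback rt contributes to every model, weighted by how likely that model was to recommend the shown item. The function name `ips_ctr_estimates`, the log layout, and `behavior_prob` (the probability with which the deployed system showed the item) are assumptions made for this sketch, not details taken from the authors.

```python
def ips_ctr_estimates(log):
    """Estimate each model's CTR from shared feedback via inverse propensity weighting.

    Each log entry is a tuple (reward, behavior_prob, model_probs):
      reward        -- 1 for a click, 0 otherwise
      behavior_prob -- probability that the deployed system showed the item
      model_probs   -- for each model, its probability of recommending that item
    """
    n_models = len(log[0][2])
    totals = [0.0] * n_models
    for reward, behavior_prob, model_probs in log:
        for i, p in enumerate(model_probs):
            # Reweight the observed reward by how much more (or less) likely
            # model i was to show this item than the deployed system.
            totals[i] += reward * p / behavior_prob
    return [t / len(log) for t in totals]


# Toy log: two items shown uniformly at random (behavior_prob = 0.5) and two
# deterministic models; model 0 always recommends item A, model 1 item B.
log = [
    (1, 0.5, [1, 0]),  # item A shown, clicked
    (0, 0.5, [1, 0]),  # item A shown, not clicked
    (0, 0.5, [0, 1]),  # item B shown, not clicked
    (0, 0.5, [0, 1]),  # item B shown, not clicked
]
estimates = ips_ctr_estimates(log)  # [0.5, 0.0]
```

In the toy log, item A is clicked on half of its impressions and item B never, so the estimates match what each deterministic model would have achieved on its own, even though neither model chose the items.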

Experimental Setup
• Experimental data
  – Yahoo! Today News data logs (randomly displayed).
  – KDD Cup 2012 Online Advertising data set.
• Evaluation methods
  – Yahoo! Today News: replay (see Lihong Li et al.'s WSDM 2011 paper).
  – KDD Cup 2012 data: simulation by a logistic regression model.
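For readers unfamiliar with replay evaluation, here is a rough sketch of the general idea as commonly described: when items were logged under uniformly random display, an event is kept only if the policy under evaluation would have shown the same item, and all other events are skipped. The names `replay_evaluate` and `AlwaysArm` and the five toy events are hypothetical, and the exact procedure used in the experiments may differ.

```python
def replay_evaluate(policy, logged_events):
    """Replay logged events; count only events where the policy agrees with the log."""
    matched = 0
    clicks = 0
    for context, shown_arm, reward in logged_events:
        if policy.select(context) == shown_arm:
            # The policy only learns from events it would have generated itself.
            policy.update(context, shown_arm, reward)
            matched += 1
            clicks += reward
    ctr = clicks / matched if matched else 0.0
    return ctr, matched


class AlwaysArm:
    """Hypothetical trivial policy that always recommends the same arm."""

    def __init__(self, arm):
        self.arm = arm

    def select(self, context):
        return self.arm

    def update(self, context, arm, reward):
        pass


# Toy log of (context, shown arm, reward) triples; contexts are unused here.
events = [(None, 0, 1), (None, 1, 0), (None, 0, 0), (None, 1, 1), (None, 0, 1)]
ctr, matched = replay_evaluate(AlwaysArm(0), events)
```

Here three of the five events match arm 0 and two of those were clicks, so the replayed CTR is 2/3.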

Comparative Methods
• CTR prediction algorithm
  – Logistic regression
• Exploitation-exploration algorithms
  – Random, ε-greedy, LinUCB, Softmax, Epoch-greedy, Thompson sampling
• HyperTS and HyperTSFB

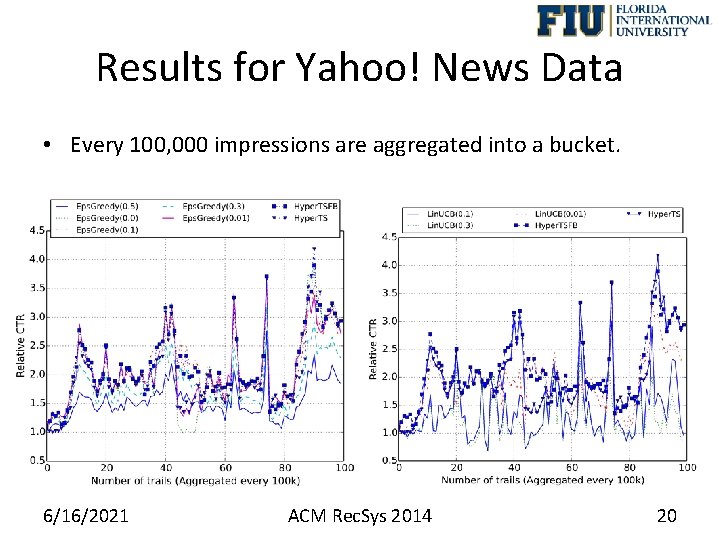

Results for Yahoo! News Data
• Every 100,000 impressions are aggregated into a bucket.
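The bucketing can be sketched as follows, assuming the per-impression rewards are available as a list of 0/1 values. `bucket_ctrs` is a hypothetical helper, and whether a trailing partial bucket was kept in the experiments is not stated on the slide; this sketch keeps it.

```python
def bucket_ctrs(rewards, bucket_size=100_000):
    """Split the reward sequence into consecutive buckets and return each bucket's CTR."""
    ctrs = []
    for start in range(0, len(rewards), bucket_size):
        bucket = rewards[start:start + bucket_size]
        ctrs.append(sum(bucket) / len(bucket))
    return ctrs


# Tiny example with a bucket size of 4 instead of 100,000.
example = bucket_ctrs([1, 0, 0, 0, 1, 1, 0, 0, 1], bucket_size=4)
# example == [0.25, 0.5, 1.0]; the last bucket holds a single impression
```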

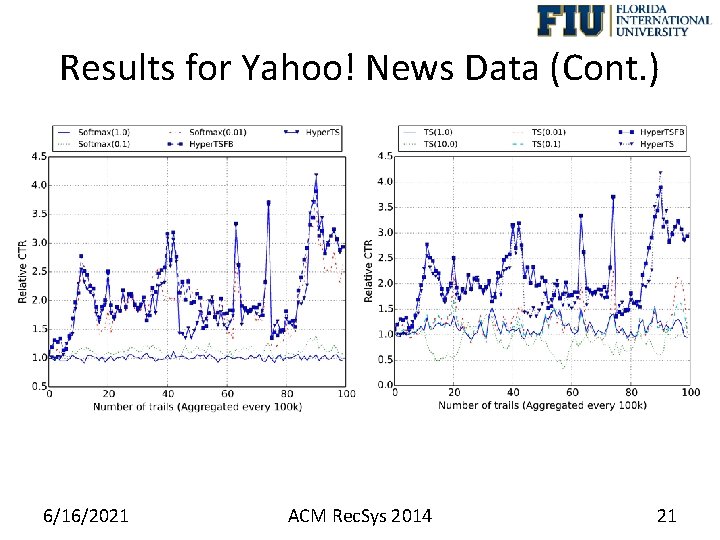

Results for Yahoo! News Data (Cont.)

Conclusions for Experimental Results
1. The performance of the baseline exploitation-exploration algorithms is very sensitive to the parameter setting.
  – In a cold-start situation, there is not enough data to tune the parameters.
2. HyperTS and HyperTSFB can be close to the optimal baseline algorithm (with no guarantee of being better than the optimal one), even though some bad individual models are included.
3. For contextual Thompson sampling, the performance depends on the choice of prior distribution for the logistic regression.
  – For online Bayesian learning, the posterior distribution approximation is not accurate (the past data cannot be stored).

Questions & Thank You
• Thank you!
• Questions?
