Enhancing the subband modulation spectra of speech features

Enhancing the sub-band modulation spectra of speech features via nonnegative matrix factorization for robust speech recognition

Hao-teng Fan, Yi-chang Tsai and Jeih-weih Hung

Presenter: 張庭豪

Outline
• Introduction
• Proposed Method
• Experimental Setup
• Experimental Results and Discussions
• Concluding Remarks and Future Works

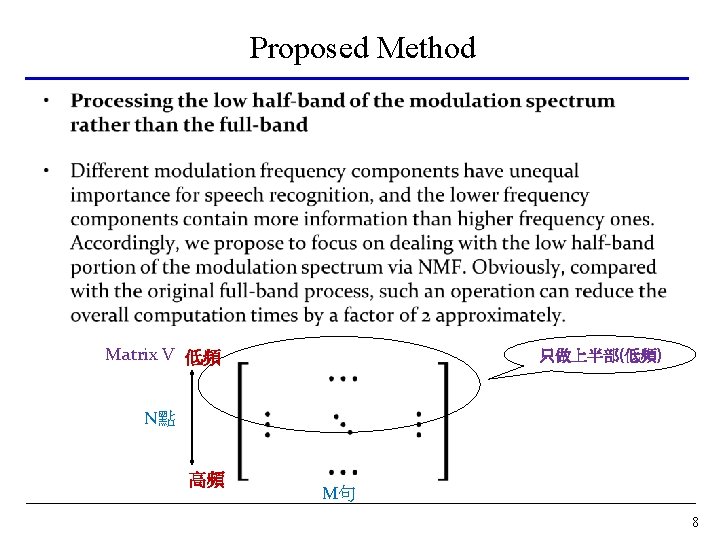

Introduction
• Because NMF does not provide a closed-form solution, the basis spectra and the new modulation spectrum are obtained iteratively, which often incurs relatively high computational complexity.
• Most of the useful linguistic information is encapsulated in the low modulation frequency components, approximately within a low sub-band.
• We propose to improve the computational efficiency of NMF in two ways. The first is to use orthogonal projection in place of the iterative procedure. The second is to process only the lower half-band of the modulation spectrum rather than the entire band.
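The two speed-ups named in the last bullet can be illustrated with a short NumPy sketch. It is not the authors' implementation. The function name `project_low_band` is hypothetical, the basis matrix `W_low` is random stand-in data, and the spectrum is assumed to be ordered from low to high modulation frequency. Clipping negative values to zero is an added assumption so that the result remains a valid magnitude spectrum.

```python
import numpy as np

def project_low_band(mag, W_low):
    """Replace the lower band of a magnitude modulation spectrum with its
    orthogonal projection onto the column space of W_low.

    mag   : (N,) magnitude spectrum, low frequencies first (assumption)
    W_low : (n_low, r) basis spectra covering only the lower band
    """
    n_low = W_low.shape[0]
    low = mag[:n_low]
    # Least-squares encoding: a single linear solve, no iterative update.
    h, *_ = np.linalg.lstsq(W_low, low, rcond=None)
    # Assumption: clip negatives, since a projection can leave the
    # nonnegative cone while a magnitude spectrum cannot.
    new_low = np.maximum(W_low @ h, 0.0)
    out = mag.copy()
    out[:n_low] = new_low          # the upper band is left untouched
    return out

rng = np.random.default_rng(0)
N, r = 256, 10                     # values taken from the experimental setup
W_low = rng.random((N // 2, r))    # stand-in basis for the lower half-band
mag = rng.random(N)                # stand-in magnitude modulation spectrum
enhanced = project_low_band(mag, W_low)
```

Working on half of the bins halves the size of every matrix product, and the least-squares solve replaces the fixed number of iterations that the original NMF encoding needs.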

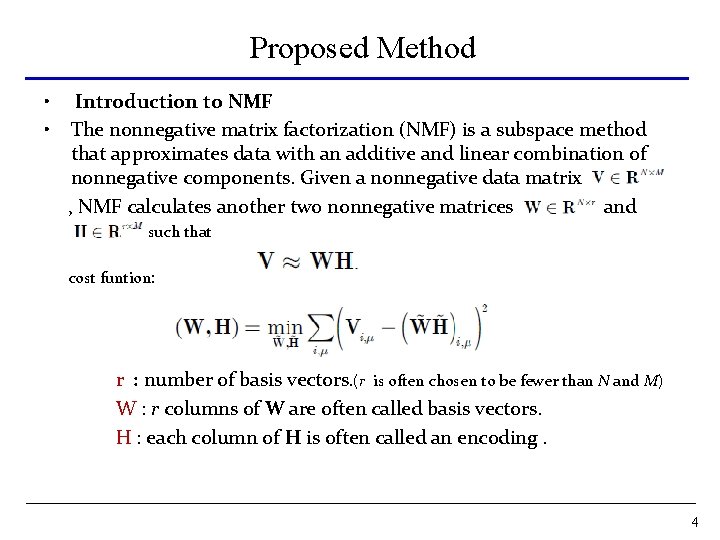

Proposed Method
• Introduction to NMF
• Nonnegative matrix factorization (NMF) is a subspace method that approximates data with an additive, linear combination of nonnegative components.
• Given a nonnegative N × M data matrix, NMF calculates two nonnegative matrices W and H whose product approximates the data matrix, by minimizing a cost function that measures the approximation error.
• r: the number of basis vectors (r is often chosen to be smaller than both N and M).
• W: the r columns of W are often called basis vectors.
• H: each column of H is often called an encoding.
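As a rough illustration of the factorization described above, the following sketch factors a toy nonnegative matrix `V` (N × M) into `W` (N × r) and `H` (r × M). The cost function on the slide is not preserved in this transcript, so the sketch assumes the squared Euclidean distance between `V` and `W @ H` and uses the well-known Lee and Seung multiplicative updates. The name `V`, the function `nmf`, and all numeric values are illustrative assumptions.

```python
import numpy as np

def nmf(V, r, n_iter=200, eps=1e-12, seed=0):
    """Factor a nonnegative matrix V (N x M) as W (N x r) @ H (r x M).

    Assumption: squared Euclidean cost with multiplicative updates,
    which keep W and H nonnegative at every step.
    """
    rng = np.random.default_rng(seed)
    N, M = V.shape
    W = rng.random((N, r)) + eps       # random nonnegative start
    H = rng.random((r, M)) + eps
    for _ in range(n_iter):
        H *= (W.T @ V) / (W.T @ W @ H + eps)   # update the encodings
        W *= (V @ H.T) / (W @ H @ H.T + eps)   # update the basis vectors
    return W, H

rng = np.random.default_rng(1)
V = rng.random((64, 3)) @ rng.random((3, 40))  # toy nonnegative data, rank 3
W, H = nmf(V, r=3)
print(np.linalg.norm(V - W @ H))               # remaining approximation error
```

Each column of `W` plays the role of a basis vector and each column of `H` is the encoding of the corresponding column of `V`.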

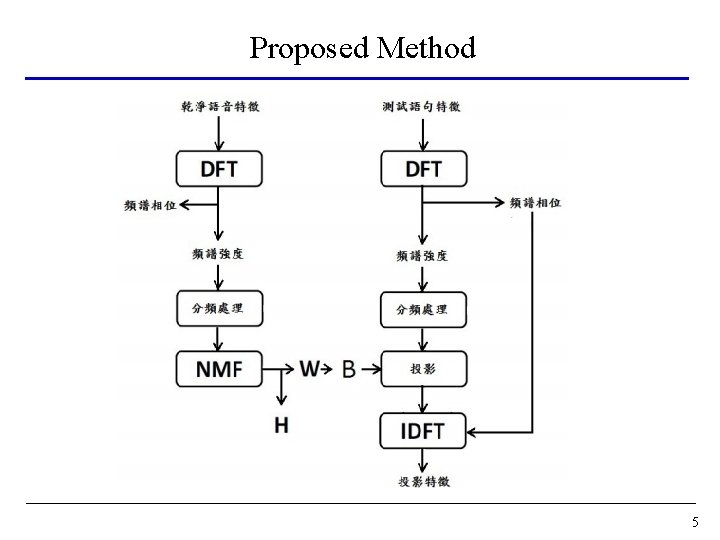

Proposed Method

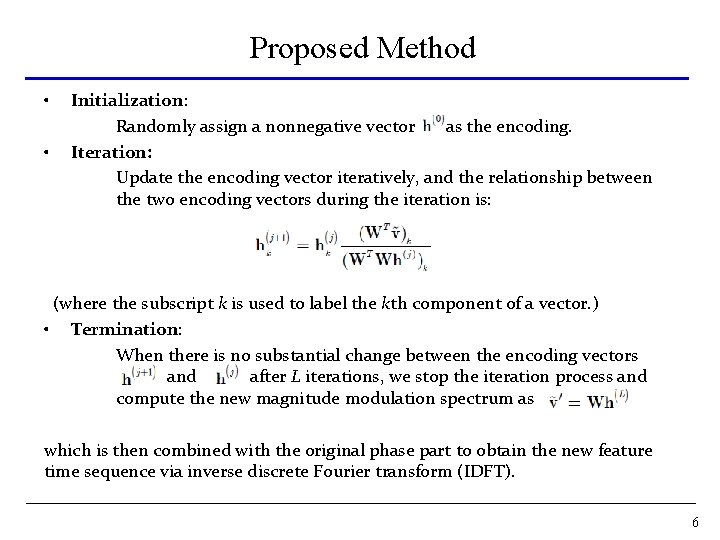

Proposed Method
• Initialization: Randomly assign a nonnegative vector as the encoding.
• Iteration: Update the encoding vector iteratively. Each update computes the next encoding vector from the current one, component by component (the subscript k labels the kth component of a vector).
• Termination: When the encoding vector shows no substantial change between successive iterations, after L iterations, we stop the iteration and compute the new magnitude modulation spectrum from the final encoding vector. The new magnitude spectrum is then combined with the original phase to obtain the new feature time sequence via the inverse discrete Fourier transform (IDFT).
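The three steps above can be sketched as follows. The update rule on the slide is not preserved in this transcript, so the sketch assumes the multiplicative rule for the squared Euclidean cost with the basis matrix `W` held fixed. The function names `encode` and `enhance_sequence` are hypothetical, and the spectrum is assumed to be the one-sided DFT of a real feature sequence.

```python
import numpy as np

def encode(v, W, L=100, eps=1e-12, seed=0):
    """Find a nonnegative encoding h such that W @ h approximates v."""
    rng = np.random.default_rng(seed)
    h = rng.random(W.shape[1]) + eps       # initialization: random nonnegative vector
    WtV = W.T @ v
    WtW = W.T @ W
    for _ in range(L):                     # iteration: fixed number of updates
        h = h * WtV / (WtW @ h + eps)      # each component h_k is rescaled
    return h

def enhance_sequence(x, W, L=100):
    """Enhance one feature time sequence x in the modulation domain."""
    n_fft = 2 * (W.shape[0] - 1)           # W has n_fft // 2 + 1 rows (assumption)
    if len(x) > n_fft:
        raise ValueError("sequence is longer than the DFT length")
    X = np.fft.rfft(x, n=n_fft)            # zero-padded one-sided DFT
    mag, phase = np.abs(X), np.angle(X)
    new_mag = W @ encode(mag, W, L)        # termination: new magnitude spectrum
    # Combine the new magnitude with the original phase, then invert.
    y = np.fft.irfft(new_mag * np.exp(1j * phase), n=n_fft)
    return y[: len(x)]                     # drop the zero-padded tail

rng = np.random.default_rng(0)
W = rng.random((129, 10))                  # stand-in basis spectra, r = 10
x = rng.standard_normal(200)               # stand-in feature time sequence
y = enhance_sequence(x, W)
```

In practice `W` would be learned from the modulation spectra of clean training features rather than drawn at random.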

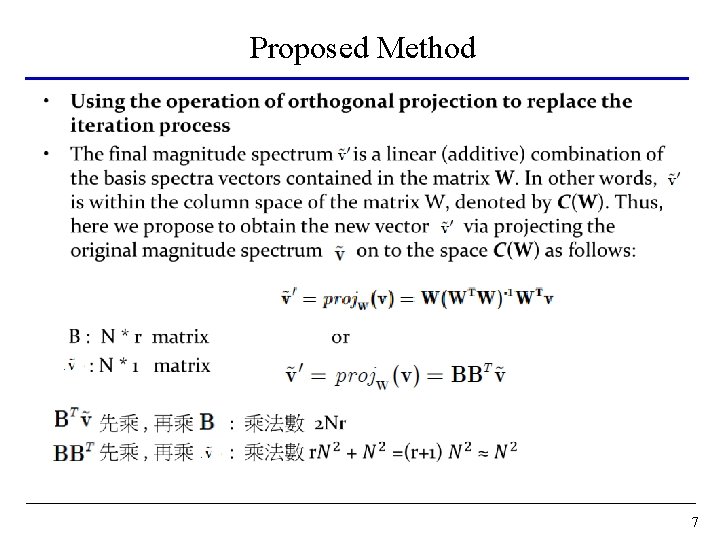

Proposed Method

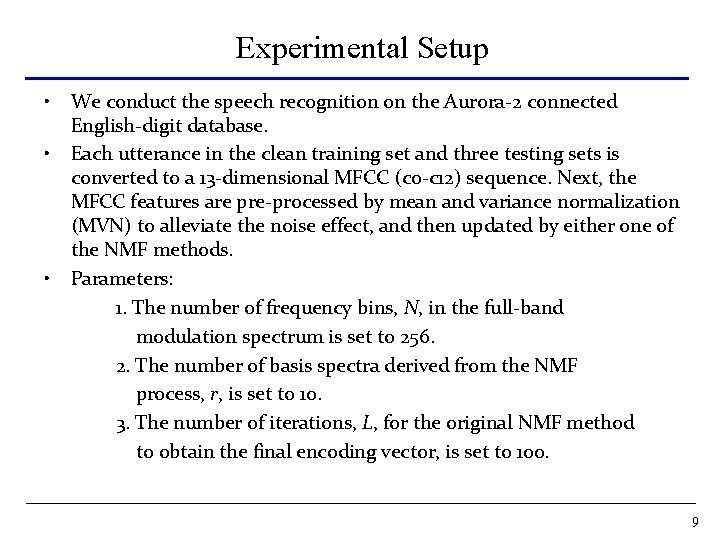

Experimental Setup
• We conduct speech recognition experiments on the Aurora-2 connected English-digit database.
• Each utterance in the clean training set and the three test sets is converted to a 13-dimensional MFCC (c0-c12) sequence.
• Next, the MFCC features are pre-processed by mean and variance normalization (MVN) to alleviate the effect of noise, and then updated by one of the NMF methods.
• Parameters:
1. The number of frequency bins, N, in the full-band modulation spectrum is set to 256.
2. The number of basis spectra derived from the NMF process, r, is set to 10.
3. The number of iterations, L, for the original NMF method to obtain the final encoding vector is set to 100.
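The pre-processing chain can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the MFCC matrix is random stand-in data, MVN is applied per utterance and per dimension, and the 256 frequency bins are assumed to come from a 256-point DFT of each feature time sequence. The function names `mvn` and `modulation_spectrum` are hypothetical.

```python
import numpy as np

def mvn(features):
    """Mean and variance normalization of a (frames, dims) feature matrix."""
    mean = features.mean(axis=0)
    std = features.std(axis=0)
    return (features - mean) / np.maximum(std, 1e-12)

def modulation_spectrum(sequence, n_fft=256):
    """Magnitude and phase of the DFT of one feature time sequence.

    Note: np.fft.fft zero-pads shorter sequences and truncates longer
    ones, so this sketch assumes at most n_fft frames.
    """
    X = np.fft.fft(sequence, n=n_fft)
    return np.abs(X), np.angle(X)

rng = np.random.default_rng(0)
mfcc = rng.standard_normal((180, 13)) * 3.0 + 5.0   # stand-in for c0-c12
normed = mvn(mfcc)
mag, phase = modulation_spectrum(normed[:, 0])      # spectrum of the c0 sequence
```

The magnitude part is what an NMF-based method would update, while the phase part is kept for the inverse transform.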

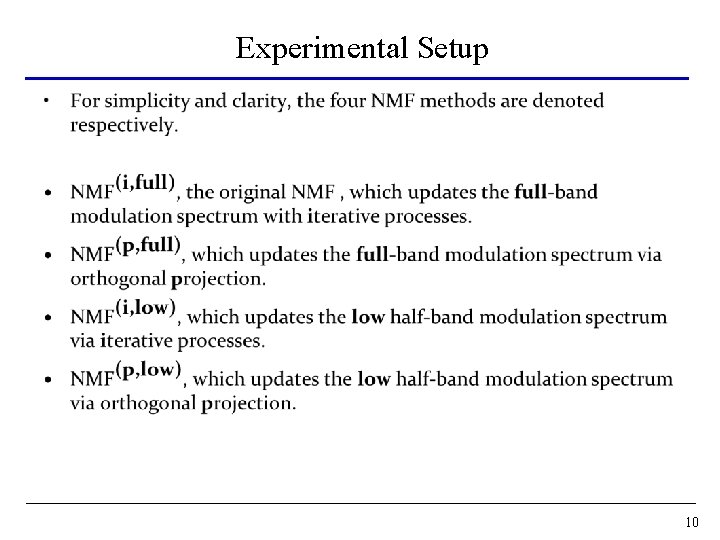

Experimental Setup

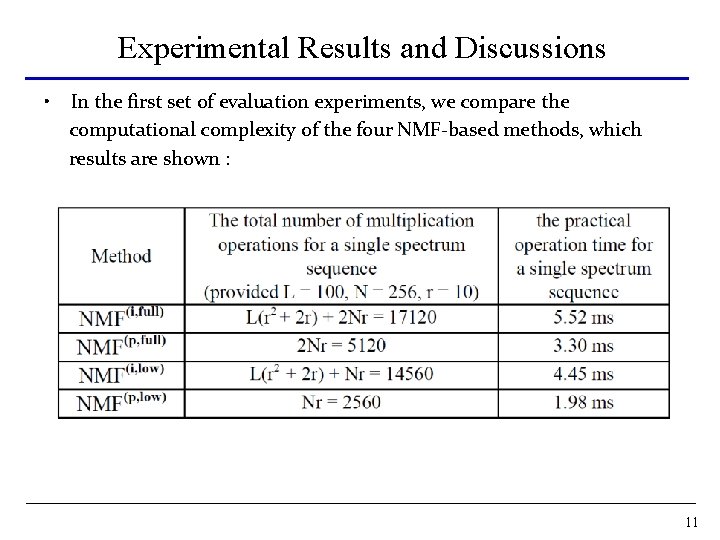

Experimental Results and Discussions
• In the first set of evaluation experiments, we compare the computational complexity of the four NMF-based methods. The results are shown below:

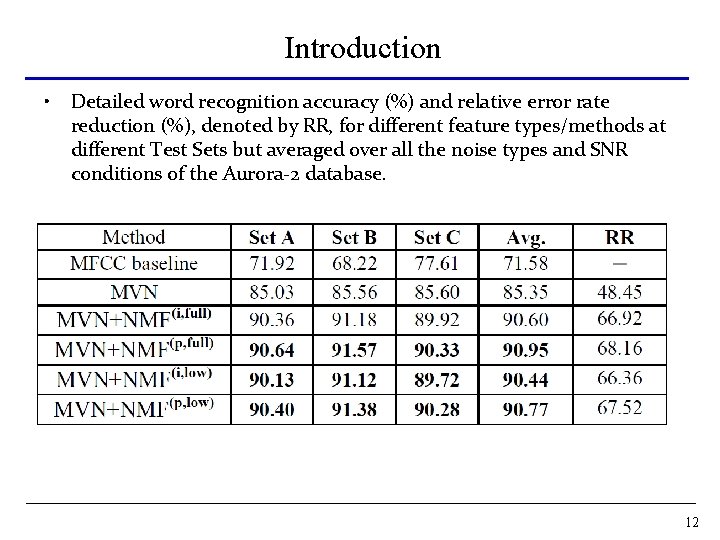

Experimental Results and Discussions
• Detailed word recognition accuracy (%) and relative error rate reduction (%), denoted by RR, for different feature types and methods on each Test Set, averaged over all noise types and SNR conditions of the Aurora-2 database.

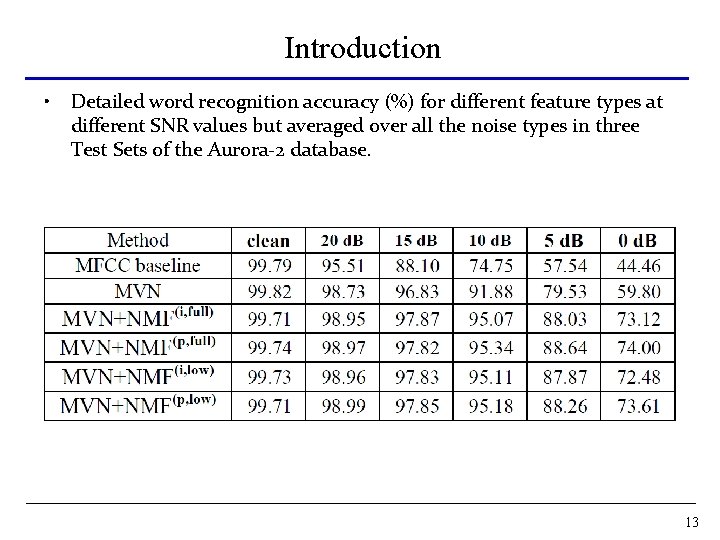
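For readers unfamiliar with the RR measure, the helper below shows how a relative error rate reduction is usually computed from two accuracies, taking the error rate as 100 minus the accuracy. The function name is hypothetical and the numbers are made up for illustration; they are not results from the paper, and the slides do not state which baseline the authors use.

```python
def relative_error_reduction(acc_baseline, acc_method):
    """Relative error rate reduction (%) of a method over a baseline.

    Both arguments are word accuracies in percent.
    """
    err_base = 100.0 - acc_baseline
    err_method = 100.0 - acc_method
    if err_base <= 0:
        raise ValueError("baseline has no errors to reduce")
    return 100.0 * (err_base - err_method) / err_base

# Illustrative values only: accuracy rises from 80% to 85%,
# so the error rate falls from 20% to 15%.
print(relative_error_reduction(80.0, 85.0))  # 25.0
```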

Experimental Results and Discussions
• Detailed word recognition accuracy (%) for different feature types at different SNR values, averaged over all noise types in the three Test Sets of the Aurora-2 database.

Concluding Remarks and Future Works
• In this paper, we present two procedures that refine the NMF approach for enhancing the modulation spectra of speech features for noise robustness.
• Experimental results reveal that, compared with the original NMF, the new scheme reduces the computational complexity while maintaining very similar recognition performance.
• In the future, we plan to reduce the bandwidth of the low sub-band, or to update the different sub-bands separately in NMF processing, to investigate whether higher computational efficiency and/or better recognition accuracy can be achieved.
