EndUser Debugging of Machine Learning Systems WengKeen Wong

End-User Debugging of Machine Learning Systems Weng-Keen Wong Oregon State University School of Electrical Engineering and Computer Science http: //www. eecs. oregonstate. edu/~wong

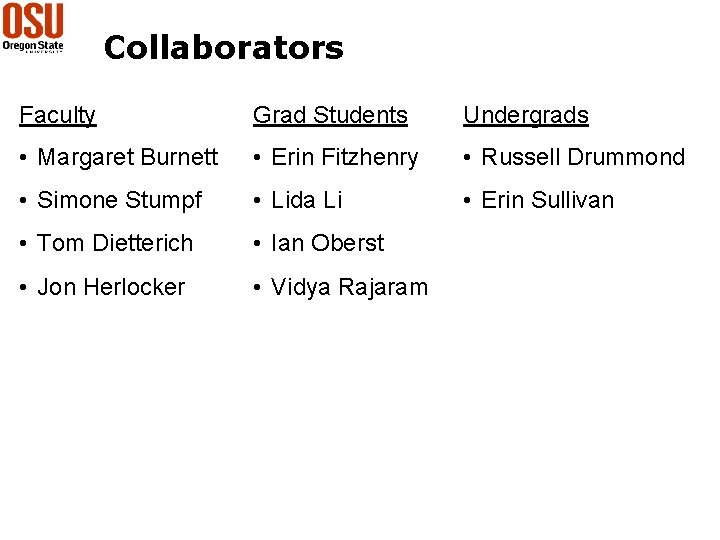

Collaborators Faculty Grad Students Undergrads • Margaret Burnett • Erin Fitzhenry • Russell Drummond • Simone Stumpf • Lida Li • Erin Sullivan • Tom Dietterich • Ian Oberst • Jon Herlocker • Vidya Rajaram

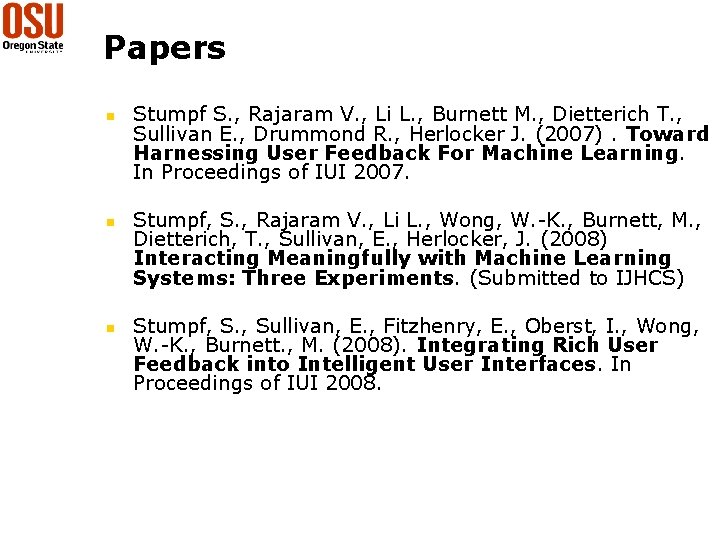

Papers n n n Stumpf S. , Rajaram V. , Li L. , Burnett M. , Dietterich T. , Sullivan E. , Drummond R. , Herlocker J. (2007). Toward Harnessing User Feedback For Machine Learning. In Proceedings of IUI 2007. Stumpf, S. , Rajaram V. , Li L. , Wong, W. -K. , Burnett, M. , Dietterich, T. , Sullivan, E. , Herlocker, J. (2008) Interacting Meaningfully with Machine Learning Systems: Three Experiments. (Submitted to IJHCS) Stumpf, S. , Sullivan, E. , Fitzhenry, E. , Oberst, I. , Wong, W. -K. , Burnett. , M. (2008). Integrating Rich User Feedback into Intelligent User Interfaces. In Proceedings of IUI 2008.

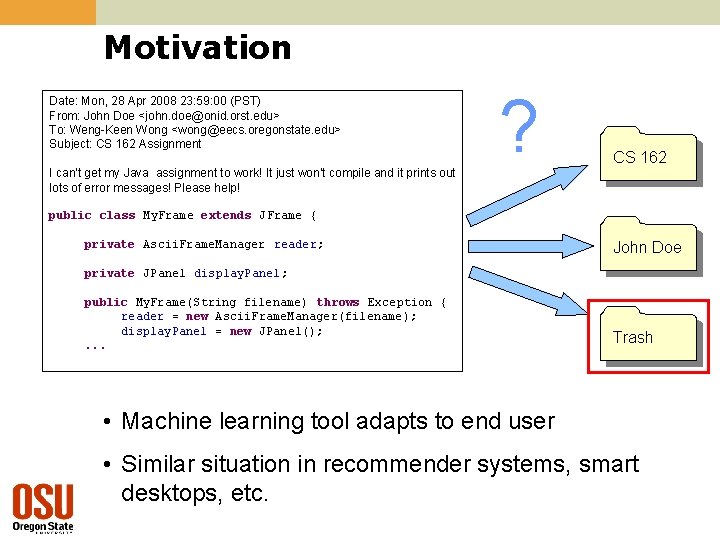

Motivation Date: Mon, 28 Apr 2008 23: 59: 00 (PST) From: John Doe <john. doe@onid. orst. edu> To: Weng-Keen Wong <wong@eecs. oregonstate. edu> Subject: CS 162 Assignment I can’t get my Java assignment to work! It just won’t compile and it prints out lots of error messages! Please help! ? CS 162 public class My. Frame extends JFrame { private Ascii. Frame. Manager reader; John Doe private JPanel display. Panel; public My. Frame(String filename) throws Exception { reader = new Ascii. Frame. Manager(filename); display. Panel = new JPanel(); . . . Trash • Machine learning tool adapts to end user • Similar situation in recommender systems, smart desktops, etc.

Motivation Date: Mon, 28 Apr 2008 23: 51: 00 (PST) From: Bella Bose <bose@eecs. oregonstate. edu> To: Weng-Keen Wong <wong@eecs. oregonstate. edu> Subject: Teaching Assignments I’ve compiled the teaching preferences for all the faculty. Here are the teaching assignments for next year: Trash Fall Quarter CS 160 (Computer Science Orientation) – Paulson CS 161 (Introduction to Programming I) – Chris Wallace CS 162 (Introduction to Programming II) – Weng-Keen Wong. . . • Machine Learning systems are great when they work correctly, aggravating when they don’t • The end user is the only person at the computer • Can we let end users correct machine learning systems?

Motivation n Learn to correct behavior quickly n n n Sparse data on start Concept drift Rich end-user knowledge n n Effects of user feedback on accuracy? Effects on users? 6

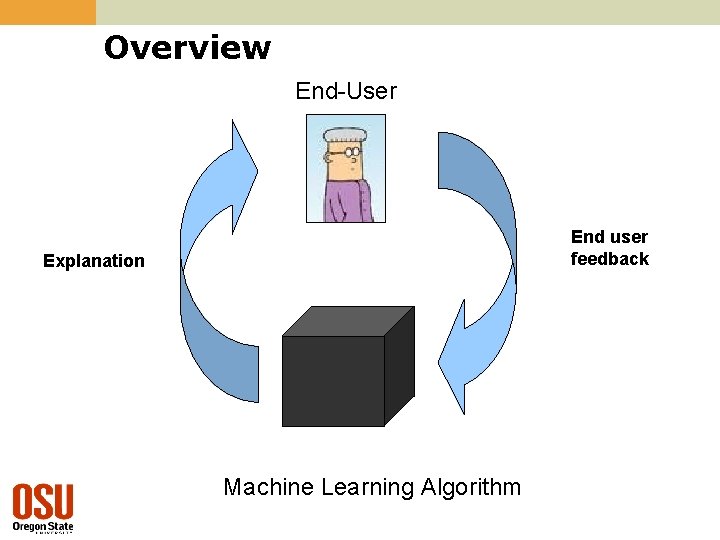

Overview End-User End user feedback Explanation Machine Learning Algorithm

Related Work Explanation • Expert Systems (Swartout 83, Wick and Thompson 92) • TREPAN (Craven and Shavlik 95) • Description Logics (Mc. Guinness 96) • Bayesian networks (La. Cave and Diez 00) • Additive classifiers (Poulin et al. 06) • Others (Crawford et al. 02, Herlocker et al. 00) End user interaction • Active Learning (Cohn et al. 96, many others) • Constraints (Altendorf et al. 05, Huang and Mitchell 06) • Ranks (Radlinski and Joachims 05) • Feature Selection (Raghavan et al. 06) • Crayons (Fails and Olsen 03) • Programming by Demonstration (Cypher 93, Lau and Weld 99, Lieberman 01)

Outline 1. What types of explanations do end users understand? What types of corrective feedback could end users provide? (IUI 2007) 2. How do we incorporate this feedback into a ML algorithm? (IJHCS 2008) 3. What happens when we put this together? (IUI 2008) 9

What Types of Explanations do End Users Understand? n n Thinkaloud study with 13 participants Classify Enron emails Explanation systems: rule-based, keyword-based, similarity-based Findings: n n Rule-based best but not a clear winner Evidence indicates multiple explanation paradigms needed

What types of corrective feedback could end users provide? Suggested corrective feedback in response to explanations: 1. Adjust importance of word 2. Add/remove word from consideration 3. Parse / extract text in a different way 4. Word combinations 5. Relationships between messages/people

Outline 1. What types of explanations do end users understand? What types of corrective feedback could end users provide? (IUI 2007) 2. How do we incorporate this feedback into a ML algorithm? (IJHCS 2008) 3. What happens when we put this together? (IUI 2008) 12

Incorporating Feedback into ML Algorithms Two approaches: n Constraint-based n User co-training

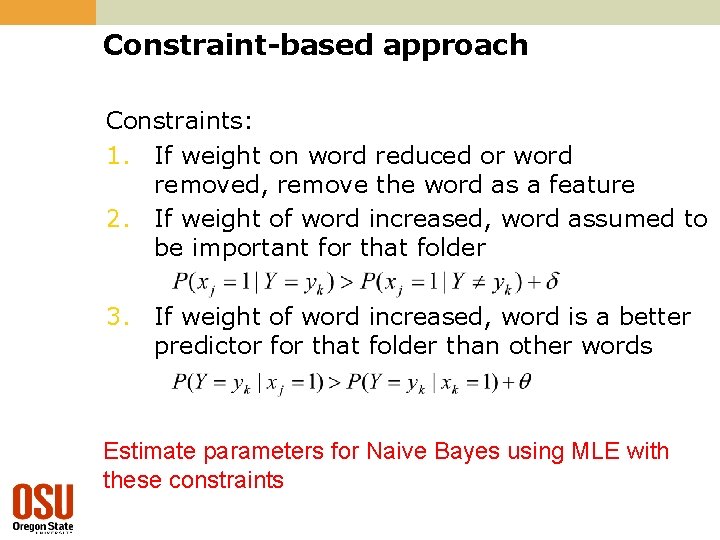

Constraint-based approach Constraints: 1. If weight on word reduced or word removed, remove the word as a feature 2. If weight of word increased, word assumed to be important for that folder 3. If weight of word increased, word is a better predictor for that folder than other words Estimate parameters for Naive Bayes using MLE with these constraints

Standard Co-training Create classifiers C 1 and C 2 based on the two independent feature sets. Repeat i times Add most confidently classified messages by any classifier to training data Rebuild C 1 and C 2 with the new training data

User Co-training CUSER = “Classifier” based on user feedback CML = Machine learning algorithm For each “session” of user feedback Add most confidently classified messages by CUSER to training data Rebuild CML with the new training data

User Co-training CUSER = “Classifier” based on user feedback CML = Machine learning algorithm For each “session” of user feedback Add most confidently classified messages by CUSER to training data Rebuild CML with the new training data We’ll expand the inner loop on the next slide

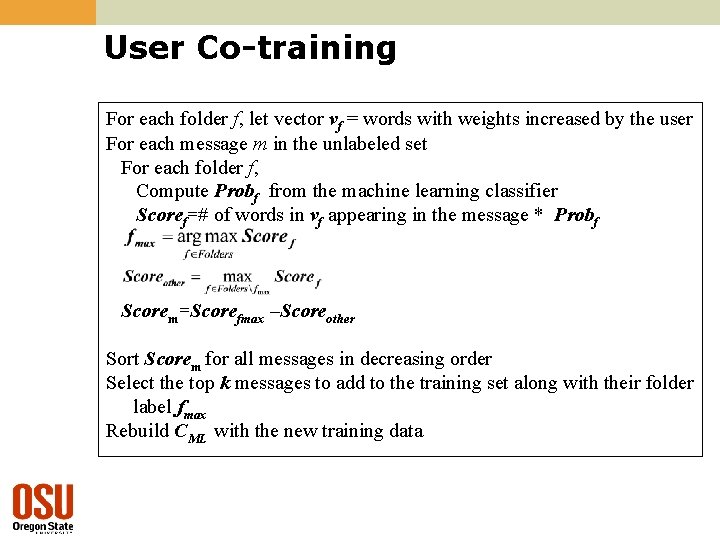

User Co-training For each folder f, let vector vf = words with weights increased by the user For each message m in the unlabeled set For each folder f, Compute Probf from the machine learning classifier Scoref=# of words in vf appearing in the message * Probf Scorem=Scorefmax –Scoreother Sort Scorem for all messages in decreasing order Select the top k messages to add to the training set along with their folder label fmax Rebuild CML with the new training data

Constraint-based vs User co-training n Constraint-based n n n Difficult to set “hardness” of constraint Constraints often already satisfied End-user can over-constrain the learning algorithm Slow User co-training n n Requires unlabeled emails in inbox Better accuracy than constraint-based

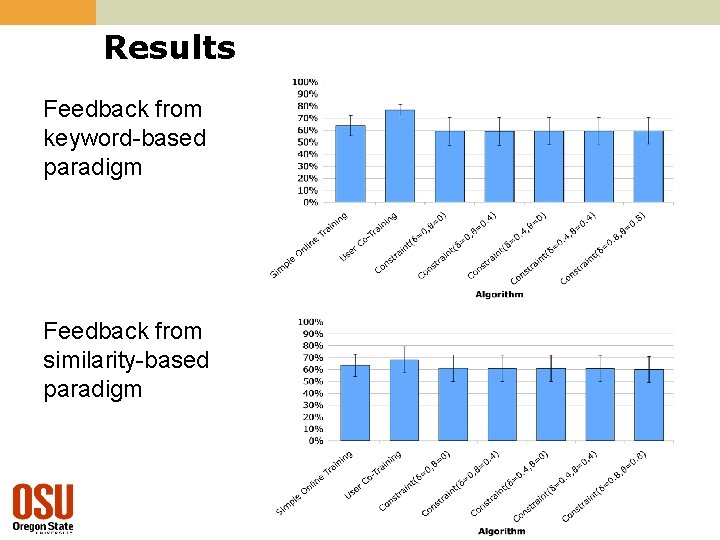

Results Feedback from keyword-based paradigm Feedback from similarity-based paradigm

Outline 1. What types of explanations work for end users? What types of corrective feedback could end users provide? (IUI 2007) 2. How do we incorporate this feedback into a ML algorithm? (IJHCS 2008) 3. What happens when we put this together? (IUI 2008) 21

Experiment: Email program 22

Experiment: Procedure n Intelligent email system to classify emails into folders n n 43 English-speaking, non-CS students Background questionnaire Tutorial (email program and folders) Experiment task on feedback set n n n Correct folders. Add, remove, change weight on keywords. 30 interaction logs Post-session questionnaire 23

Experiment: Data n Enron data set n n 9 folders 50 training messages n n 50 feedback messages n n n 10 each for 5 folders with folder labels For use in experiment Same for each participant 1051 test messages n For evaluation after experiment 24

Experiment: Classification algorithm n “User co-training” n n Two classifiers: User, Naïve Bayes Slight modification on user classifier n Scoref=sum of weights in vf appearing in the message n Weights can be modified interactively by user 25

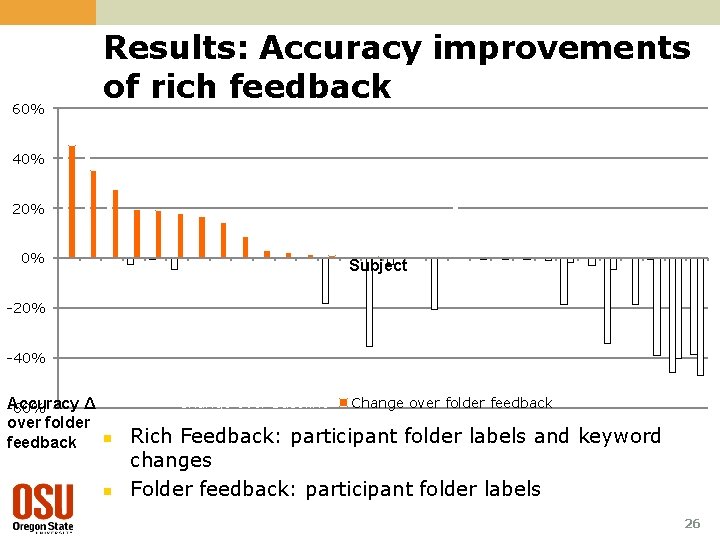

60% Results: Accuracy improvements of rich feedback 40% 20% 0% Subject -20% -40% Change over baseline Accuracy Δ -60% over folder feedback n n Change over folder feedback Rich Feedback: participant folder labels and keyword changes Folder feedback: participant folder labels 26

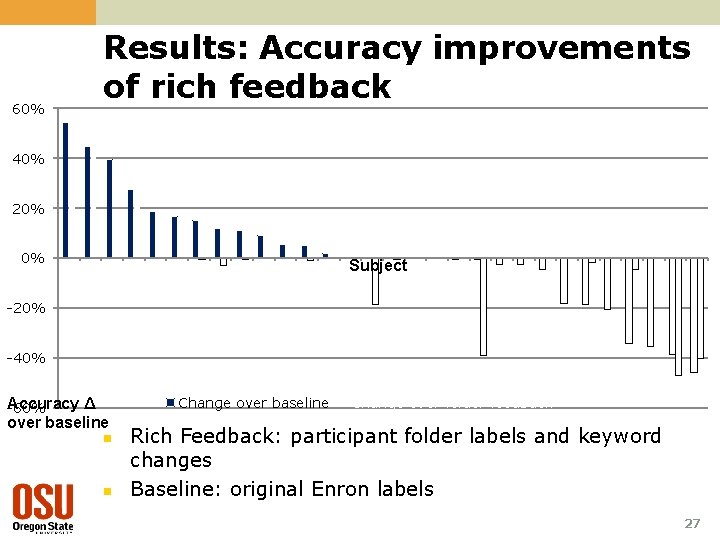

60% Results: Accuracy improvements of rich feedback 40% 20% 0% Subject -20% -40% Change over baseline Accuracy Δ -60% over baseline n n Change over folder feedback Rich Feedback: participant folder labels and keyword changes Baseline: original Enron labels 27

Results: Accuracy summary n n n 60% of participants saw accuracy improvements, some very substantial Some dramatic decreases More time between filing emails or more folder assignments → higher accuracy 29

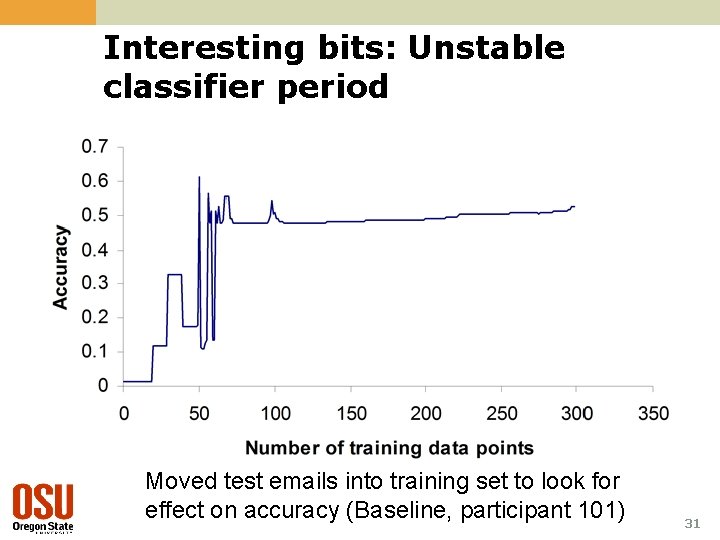

Interesting bits 1. 2. Need to communicate the effects of the user’s corrective feedback Unstable classifier period n n n With sparse training data, a single new training example can dramatically change the classifier’s decision boundaries Wild fluctuations in classifier’s predictions frustrate end users Causes “wall of red”

Interesting bits: Unstable classifier period Moved test emails into training set to look for effect on accuracy (Baseline, participant 101) 31

Interesting bits 3. 4. “Unlearning” important, especially to correct undesirable changes Gender differences n Females took longer to complete n Females added twice as many keywords n Comment more on unlearning

Interesting directions for HCI Gender differences More directed debugging Other forms of feedback Communicating effects of corrective feedback 1. 2. 3. 4. n Users need to detect the system is listening to their feedback Explanations 5. n n Form Fidelity

Interesting directions for Machine Learning 1. 2. 3. 4. Algorithms for learning from corrective feedback Modeling reliability of user feedback Explanations Incorporating new features

Future work n ML Whyline (with Andy Ko) 35

For more information wong@eecs. oregonstate. edu www. eecs. oregonstate. edu/~wong 36

- Slides: 35