Enabling Data Intensive Science with Tactical Storage Systems

Prof. Douglas Thain, University of Notre Dame, http://www.cse.nd.edu/~dthain

The Cooperative Computing Lab
Our model of computer science research:
– Understand how users with complex, large-scale applications need to interact with computing systems.
– Design novel computing systems that can be applied by many different users == basic CS research.
– Deploy code in real systems with real users, suffer real bugs, and learn real lessons == applied CS.
Application Areas:
– Astronomy, Bioinformatics, Biometrics, Molecular Dynamics, Physics, Game Theory, ...
External Support: NSF, IBM, Sun
http://www.cse.nd.edu/~ccl

Two Talks in One Paper at Supercomputing Applications of Tactical

Abstract Users of distributed systems encounter many practical barriers between their jobs and the data they wish to access. Problem: Users have access to many resources (disks), but are stuck with the abstractions (cluster NFS) provided by administrators. Solution: Tactical Storage Systems allow any user to create, reconfigure, and tear down abstractions without bugging the administrator.

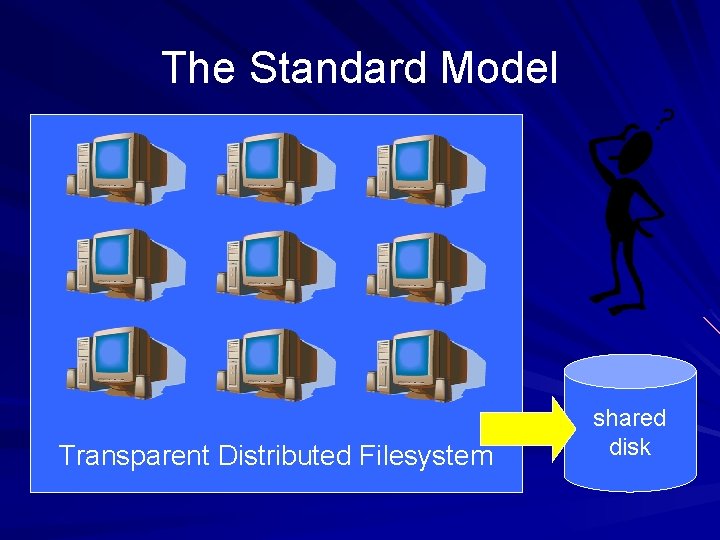

The Standard Model Transparent Distributed Filesystem shared disk

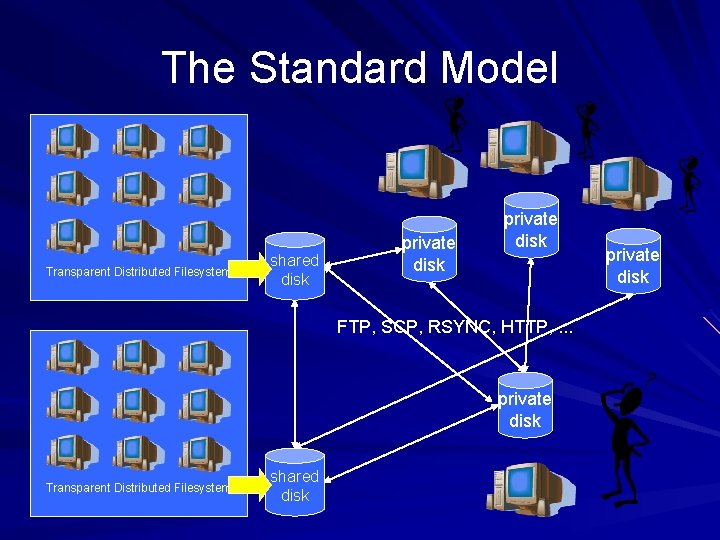

The Standard Model
Diagram: two clusters, each with a transparent distributed filesystem over a shared disk plus private disks. Data moves between them, and to other private disks, only by FTP, SCP, RSYNC, HTTP, ...

Problems with the Standard Model
Users encounter partitions in the WAN.
– Easy to access data inside cluster, hard outside.
– Must use different mechanisms on different links.
– Difficult to combine resources together.
Different access modes for different purposes.
– File transfer: preparing system for intended use.
– File system: access to data for running jobs.
Resources go unused.
– Disks on each node of a cluster.
– Unorganized resources in a department/lab.
A global file system can’t satisfy everyone!

What if...
Users could easily access any storage?
I could borrow an unused disk for NFS?
An entire cluster could be used as storage?
Multiple clusters could be combined?
I could reconfigure structures without root?
– (Or bugging the administrator daily.)
Solution: Tactical Storage System (TSS)

Outline
Problems with the Standard Model
Tactical Storage Systems
– File Servers, Catalogs, Abstractions, Adapters
Applications:
– Remote Database Access for BaBar Code
– Remote Dynamic Linking for CDF Code
– Logical Data Access for Bioinformatics Code
– Expandable Database for MD Simulation
Improving the OS for Grid Computing

Tactical Storage Systems (TSS)
A TSS allows any node to serve as a file server or as a file system client.
All components can be deployed without special privileges, but with security.
Users can build up complex structures.
– Filesystems, databases, caches, ...
Two Independent Concepts:
– Resources: the raw storage to be used.
– Abstractions: the organization of storage.

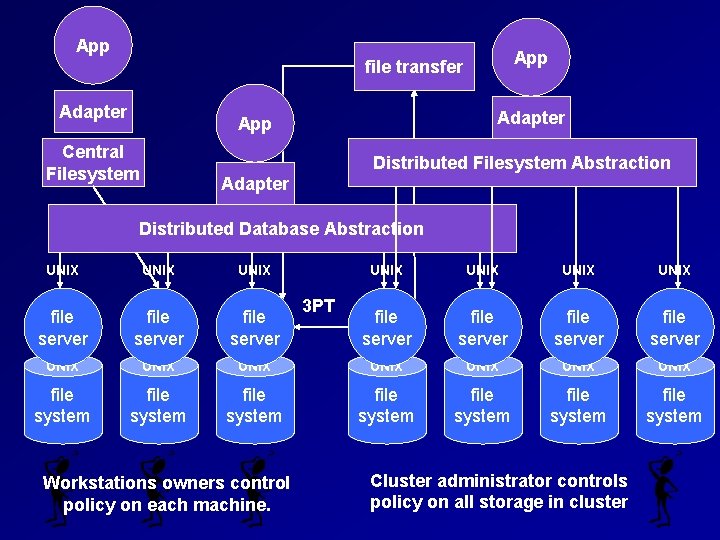

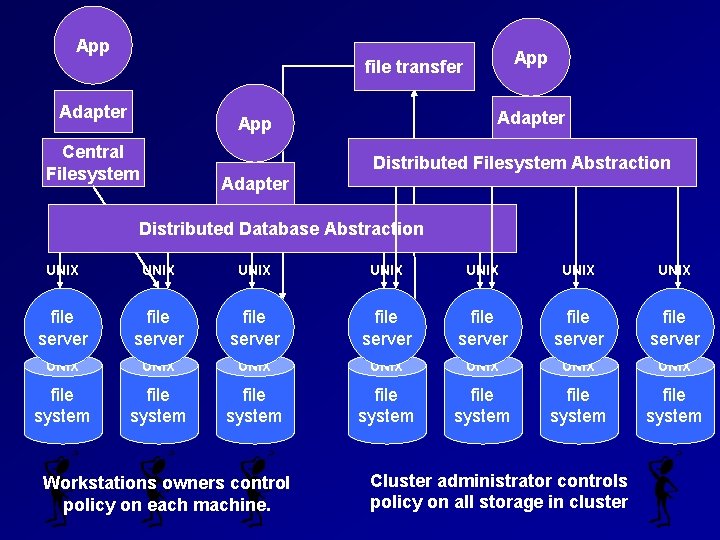

Diagram: applications use adapters to reach a central filesystem, a distributed filesystem abstraction, a distributed database abstraction, or plain file transfer. The abstractions are built on UNIX file servers, each exporting a UNIX file system.
Workstation owners control policy on each machine.
Cluster administrator controls policy on all storage in cluster.

Components of a TSS: 1 – File Servers 2 – Catalogs 3 – Abstractions 4 – Adapters

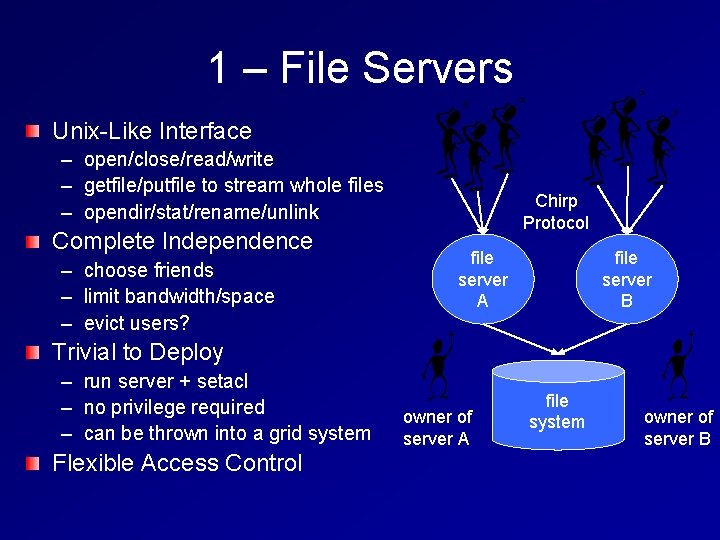

1 – File Servers
Unix-Like Interface
– open/close/read/write
– getfile/putfile to stream whole files
– opendir/stat/rename/unlink
Complete Independence
– choose friends
– limit bandwidth/space
– evict users?
Trivial to Deploy
– run server + setacl
– no privilege required
– can be thrown into a grid system
Flexible Access Control
Diagram: file server A and file server B each export a file system over the Chirp protocol, and each is controlled by its own owner.

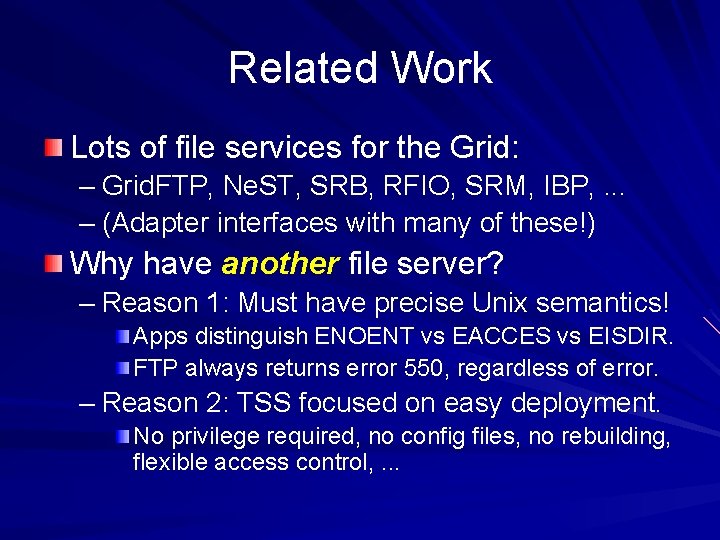

Related Work
Lots of file services for the Grid:
– GridFTP, NeST, SRB, RFIO, SRM, IBP, ...
– (Adapter interfaces with many of these!)
Why have another file server?
– Reason 1: Must have precise Unix semantics! Apps distinguish ENOENT vs EACCES vs EISDIR. FTP always returns error 550, regardless of error.
– Reason 2: TSS focused on easy deployment. No privilege required, no config files, no rebuilding, flexible access control, ...
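To make Reason 1 concrete, here is a minimal sketch of an application telling failure causes apart through errno, next to a transport that collapses them into one code. The helper names `open_failure` and `ftp_style_failure` are hypothetical and not part of TSS; the 550 behaviour is taken from the slide.

```python
import errno
import os
import tempfile


def open_failure(path):
    """Return the symbolic errno name for a failed open(), or "ok"."""
    try:
        with open(path, "rb"):
            return "ok"
    except OSError as exc:
        # errno.errorcode maps the numeric code to its symbolic name.
        return errno.errorcode.get(exc.errno, "UNKNOWN")


def ftp_style_failure(path):
    """Contrast case: every failure collapses into the single code 550."""
    return "ok" if open_failure(path) == "ok" else "550"


# Demo: a missing file and a directory fail for different reasons,
# but the FTP-style view cannot tell them apart.
with tempfile.TemporaryDirectory() as scratch:
    missing = os.path.join(scratch, "no-such-file")
    print(open_failure(missing), ftp_style_failure(missing))
    print(open_failure(scratch), ftp_style_failure(scratch))
```

An application that retries on one error and aborts on another needs the first view; the second leaves it guessing.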

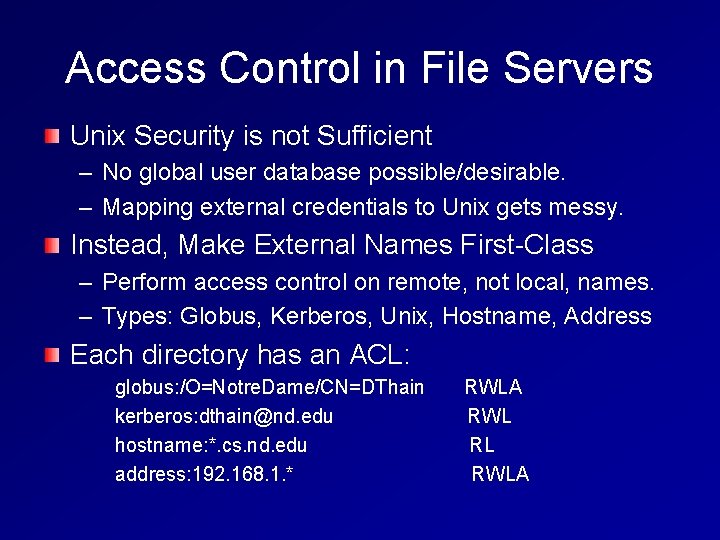

Access Control in File Servers
Unix Security is not Sufficient
– No global user database possible/desirable.
– Mapping external credentials to Unix gets messy.
Instead, Make External Names First-Class
– Perform access control on remote, not local, names.
– Types: Globus, Kerberos, Unix, Hostname, Address
Each directory has an ACL:
globus:/O=NotreDame/CN=DThain RWLA
kerberos:dthain@nd.edu RWL
hostname:*.cs.nd.edu RL
address:192.168.1.* RWLA
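A directory ACL of this kind can be sketched as a list of (subject pattern, rights) pairs checked with wildcard matching. This is an illustration only: the pairing of subjects with rights follows the order in which they appear on the slide, and the rule that a subject receives the union of all matching entries is an assumption, not a statement about how the Chirp server resolves ACLs.

```python
from fnmatch import fnmatchcase

# ACL entries from the slide: (subject pattern, rights).
# The subject/rights pairing is assumed from the order on the slide.
ACL = [
    ("globus:/O=NotreDame/CN=DThain", "RWLA"),
    ("kerberos:dthain@nd.edu", "RWL"),
    ("hostname:*.cs.nd.edu", "RL"),
    ("address:192.168.1.*", "RWLA"),
]


def rights_for(subject, acl=ACL):
    """Union of the rights of every entry whose pattern matches subject."""
    granted = set()
    for pattern, rights in acl:
        if fnmatchcase(subject, pattern):
            granted.update(rights)
    return granted


def allowed(subject, right, acl=ACL):
    """True if subject holds the single-letter right (R, W, L, or A)."""
    return right in rights_for(subject, acl)


print(allowed("hostname:laptop.cs.nd.edu", "R"))
print(allowed("hostname:laptop.cs.nd.edu", "W"))
```

The point of the design is that the subject string is the external name itself, so no mapping to a local Unix account is needed.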

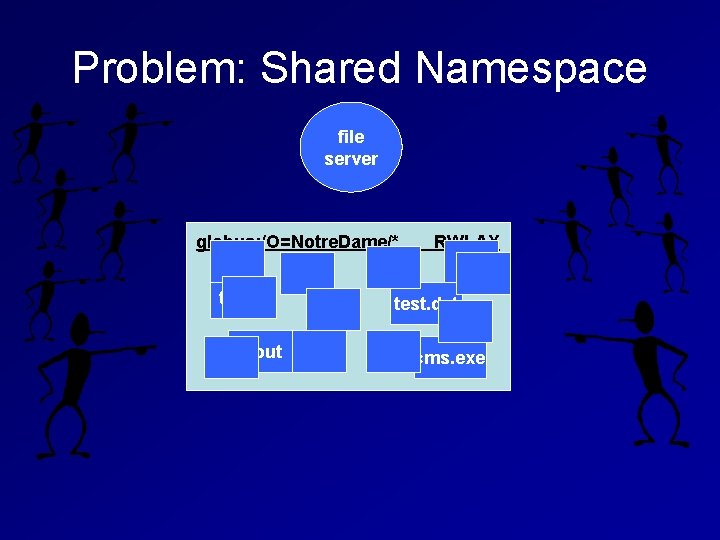

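Problem: Shared Namespace
Diagram: a file server whose single directory carries the ACL entry globus:/O=NotreDame/* RWLAX and holds test.c, a.out, test.dat, and cms.exe, so every matching user works in the same namespace.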

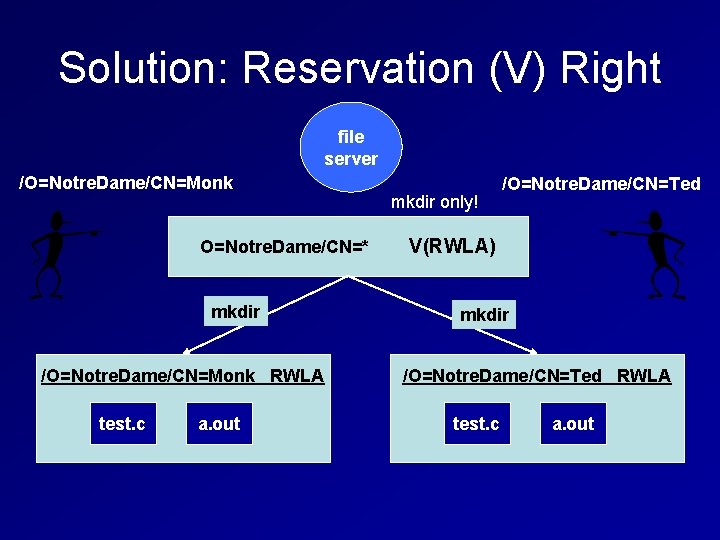

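Solution: Reservation (V) Right
Diagram: the top-level directory of the file server carries the ACL entry /O=NotreDame/CN=* V(RWLA), which permits mkdir only.
– mkdir by /O=NotreDame/CN=Monk creates a directory with the ACL /O=NotreDame/CN=Monk RWLA, holding Monk's test.c and a.out.
– mkdir by /O=NotreDame/CN=Ted creates a directory with the ACL /O=NotreDame/CN=Ted RWLA, holding Ted's test.c and a.out.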
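The reservation idea can be sketched in a few lines: a subject that holds only V(RWLA) on a directory may create a subdirectory, and the new directory starts with an ACL that names that subject alone. The `Directory` class and `mkdir` function below are hypothetical stand-ins for illustration, not the Chirp server's code.

```python
import re
from fnmatch import fnmatchcase


class Directory:
    """A directory with an ACL (subject pattern -> rights) and children."""

    def __init__(self, acl):
        self.acl = dict(acl)
        self.children = {}


def matching_rights(acl, subject):
    """Rights strings of every ACL entry whose pattern matches subject."""
    return [r for pattern, r in acl.items() if fnmatchcase(subject, pattern)]


def mkdir(parent, name, subject):
    """Create parent/name for subject using the reservation (V) right."""
    for rights in matching_rights(parent.acl, subject):
        reserve = re.fullmatch(r"V\((\w+)\)", rights)
        if reserve:
            # The new directory belongs to the caller only.
            child = Directory({subject: reserve.group(1)})
            parent.children[name] = child
            return child
    raise PermissionError(f"{subject} may not mkdir here")


root = Directory({"globus:/O=NotreDame/CN=*": "V(RWLA)"})
monk = mkdir(root, "monk", "globus:/O=NotreDame/CN=Monk")
print(monk.acl)
```

Because Monk's directory lists only Monk, Ted can reserve his own directory on the same server but cannot touch Monk's files.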

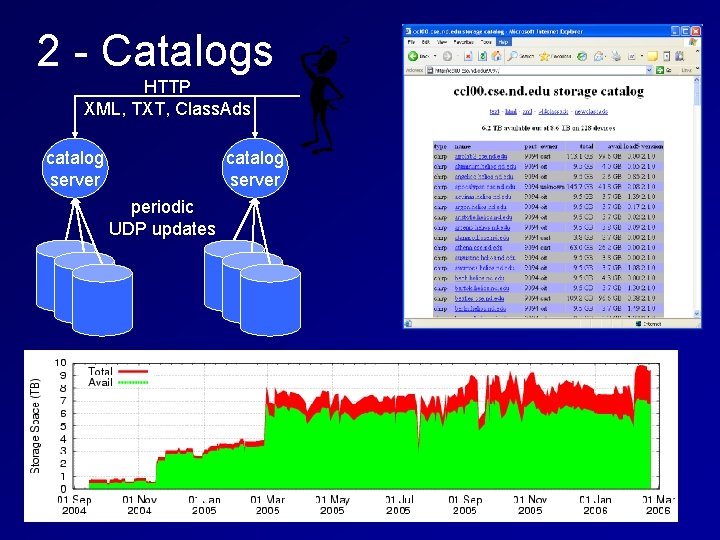

2 - Catalogs
Diagram: file servers send periodic UDP updates to a catalog server, which publishes the list of servers over HTTP as XML, TXT, or ClassAds.
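A catalog fed by periodic updates is naturally a soft-state table: a server that stops reporting eventually drops out of the listing. The sketch below models that in memory. The `Catalog` class, the `lifetime` parameter, and the shape of the `info` record are assumptions for illustration; the slide states only that updates are periodic and sent over UDP.

```python
class Catalog:
    """In-memory model of a catalog that forgets silent servers."""

    def __init__(self, lifetime=300.0):
        self.lifetime = lifetime   # seconds an entry stays valid (assumed)
        self.entries = {}          # server name -> (last seen, info)

    def update(self, server, info, now):
        """Record a report from a file server received at time now."""
        self.entries[server] = (now, dict(info))

    def listing(self, now):
        """Servers heard from within the last lifetime seconds."""
        return {
            server: info
            for server, (seen, info) in self.entries.items()
            if now - seen <= self.lifetime
        }


catalog = Catalog(lifetime=10.0)
catalog.update("hostA", {"free_gb": 120}, now=0.0)
catalog.update("hostB", {"free_gb": 40}, now=8.0)
print(sorted(catalog.listing(now=9.0)))
print(sorted(catalog.listing(now=15.0)))
```

Passing `now` explicitly keeps the model deterministic; a real catalog would read the clock when a datagram arrives.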

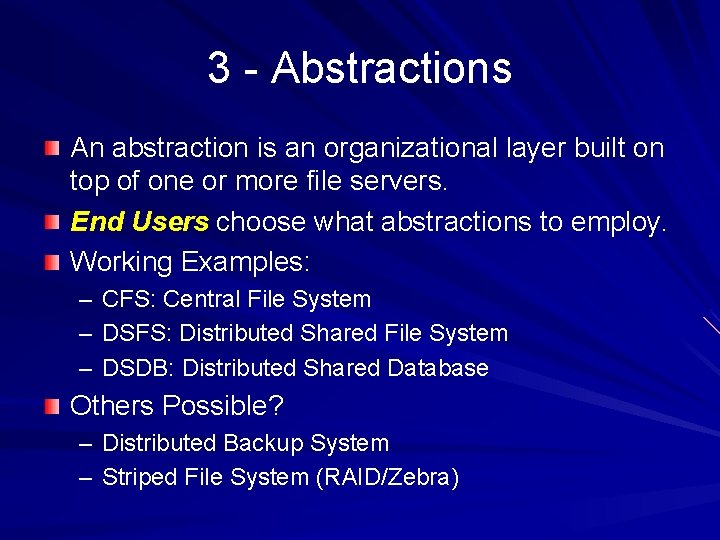

3 - Abstractions
An abstraction is an organizational layer built on top of one or more file servers.
End users choose what abstractions to employ.
Working Examples:
– CFS: Central File System
– DSFS: Distributed Shared File System
– DSDB: Distributed Shared Database
Others Possible?
– Distributed Backup System
– Striped File System (RAID/Zebra)

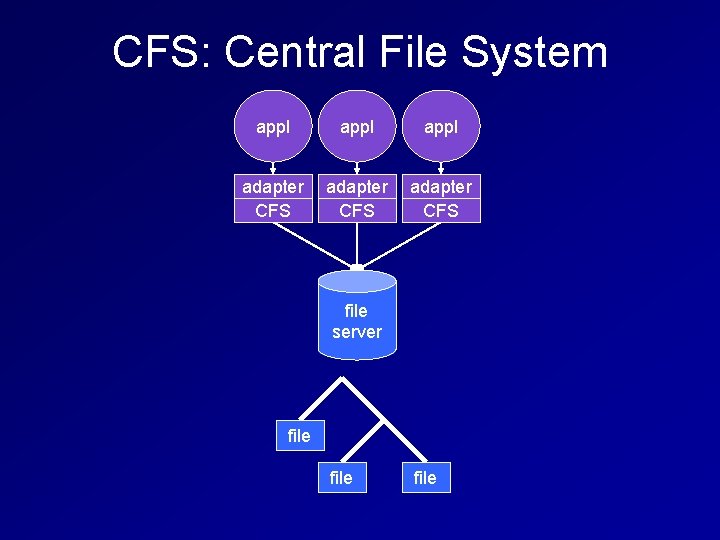

CFS: Central File System
Diagram: the application's adapter (CFS) accesses files on a single file server.

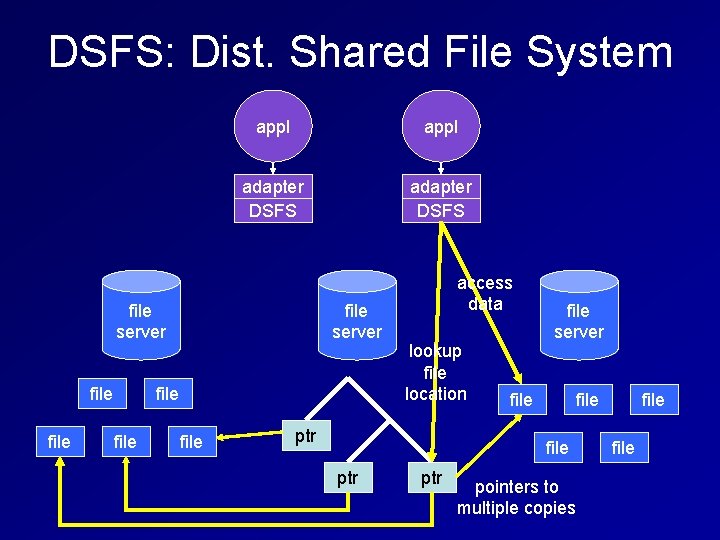

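DSFS: Distributed Shared File System
Diagram: the application's adapter (DSFS) looks up a file's location on one file server, which stores pointers (ptr) instead of data, then accesses the data on the file server that holds the file. A pointer may refer to multiple copies.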
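The lookup-then-access pattern of DSFS can be sketched with in-memory stand-ins: one server keeps only pointers, the others keep data. Everything here is hypothetical (`FileServer`, `DSFS`, the `data.N` naming, and the least-loaded placement rule); it shows the indirection, not the real implementation, and keeps a single copy per file for brevity.

```python
class FileServer:
    """Stand-in for a file server: a named bag of files."""

    def __init__(self, name):
        self.name = name
        self.files = {}


class DSFS:
    """Directory server holds pointers; data servers hold file contents."""

    def __init__(self, directory, data_servers):
        self.directory = directory
        self.data = {server.name: server for server in data_servers}
        self.counter = 0

    def write(self, path, content):
        # Placement rule (assumed): the data server holding the fewest files.
        target = min(self.data.values(), key=lambda s: len(s.files))
        self.counter += 1
        stored_as = f"data.{self.counter}"
        target.files[stored_as] = content
        # The directory entry is only a pointer: (host, name on that host).
        self.directory.files[path] = (target.name, stored_as)

    def read(self, path):
        host, stored_as = self.directory.files[path]   # look up location
        return self.data[host].files[stored_as]        # access data


fs = DSFS(FileServer("dir"), [FileServer("host1"), FileServer("host2")])
fs.write("/sim/run1.out", b"alpha")
fs.write("/sim/run2.out", b"beta")
print(fs.directory.files)
print(fs.read("/sim/run2.out"))
```

After the lookup, data flows directly between the client and the data server, so the directory server is not on the data path.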

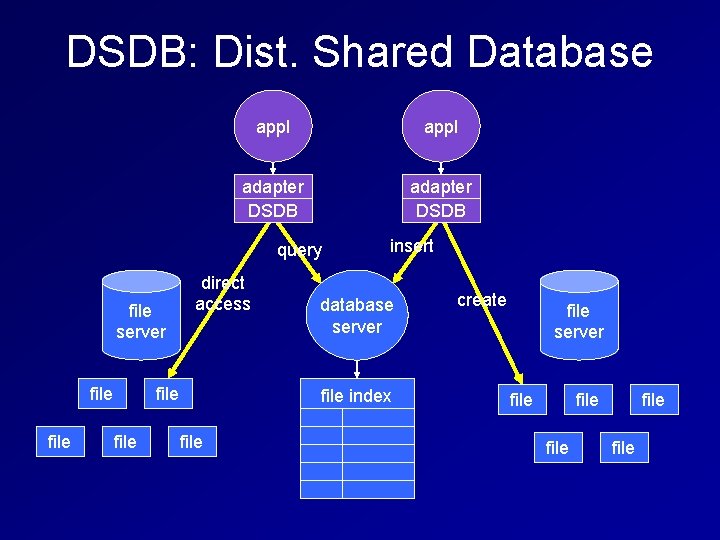

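DSDB: Distributed Shared Database
Diagram: the application's adapter (DSDB) sends queries and inserts to a database server that keeps the file index. The database server creates files on the file servers, and the adapter then accesses file data directly on those servers.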

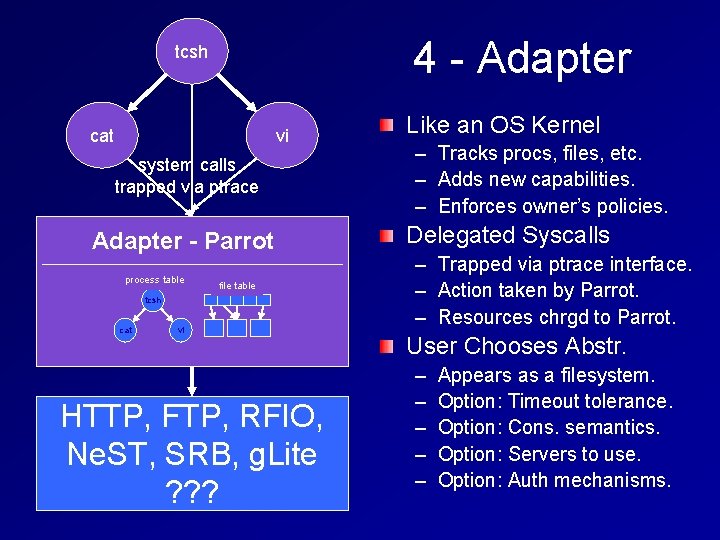

4 - Adapter
Diagram: tcsh, cat, and vi run on top of the adapter (Parrot), which traps their system calls via ptrace, keeps its own process table and file table, and forwards I/O to HTTP, FTP, RFIO, NeST, SRB, gLite, or to the abstractions CFS, DSFS, and DSDB.
Like an OS Kernel
– Tracks procs, files, etc.
– Adds new capabilities.
– Enforces owner’s policies.
Delegated Syscalls
– Trapped via ptrace interface.
– Action taken by Parrot.
– Resources charged to Parrot.
User Chooses Abstraction
– Appears as a filesystem.
– Option: Timeout tolerance.
– Option: Consistency semantics.
– Option: Servers to use.
– Option: Auth mechanisms.


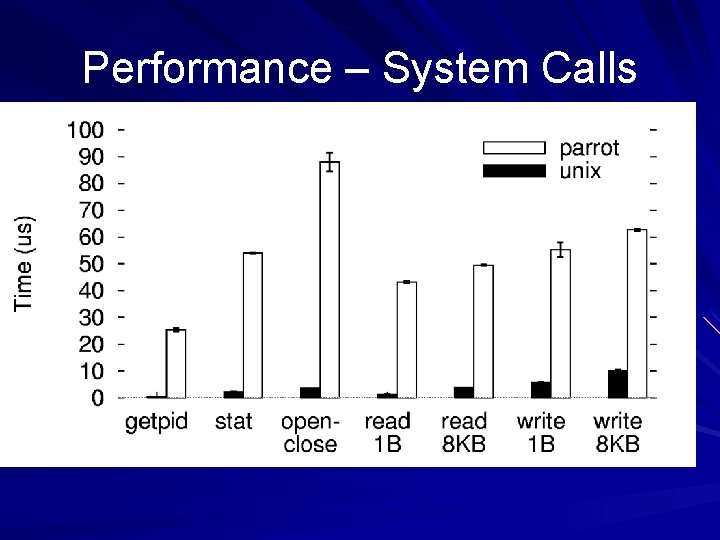

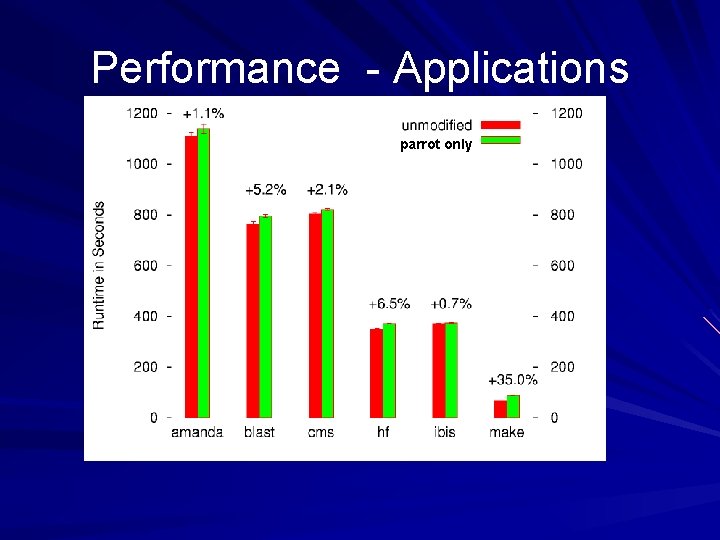

Performance Summary
Nothing comes for free!
– System calls: order of magnitude slower.
– Memory bandwidth overhead: extra copies.
However:
– TSS can take full advantage of bandwidth (unlike NFS).
– TSS can drive network/switch to limits.
– Typical slowdown on real apps: 5-10 percent.
– Allows one to harness resources that would go unused.
Observation: Most users constrained by functionality.


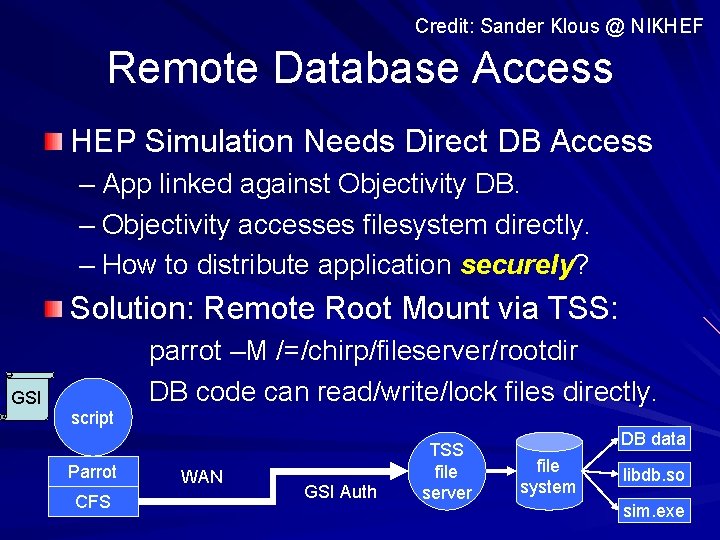

Remote Database Access
Credit: Sander Klous @ NIKHEF
HEP Simulation Needs Direct DB Access
– App linked against Objectivity DB.
– Objectivity accesses filesystem directly.
– How to distribute application securely?
Solution: Remote Root Mount via TSS:
parrot -M /=/chirp/fileserver/rootdir
DB code can read/write/lock files directly.
Diagram: a script runs sim.exe with libdb.so under Parrot (CFS), which reaches the TSS file server and its file system holding the DB data across the WAN using GSI authentication.

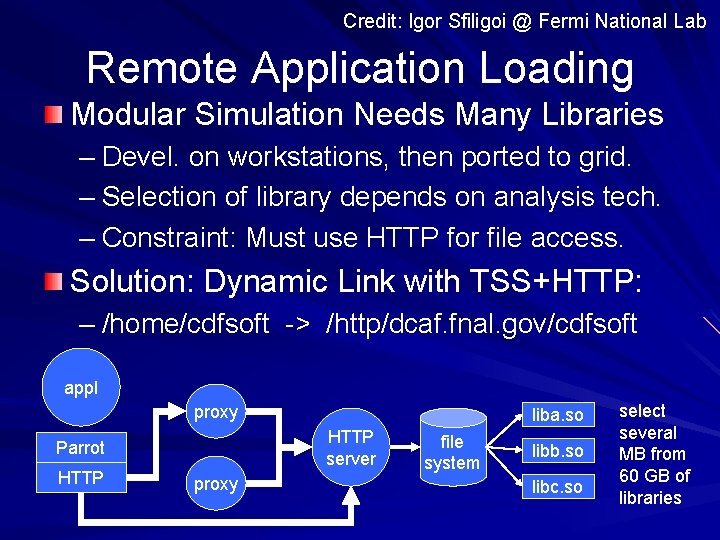

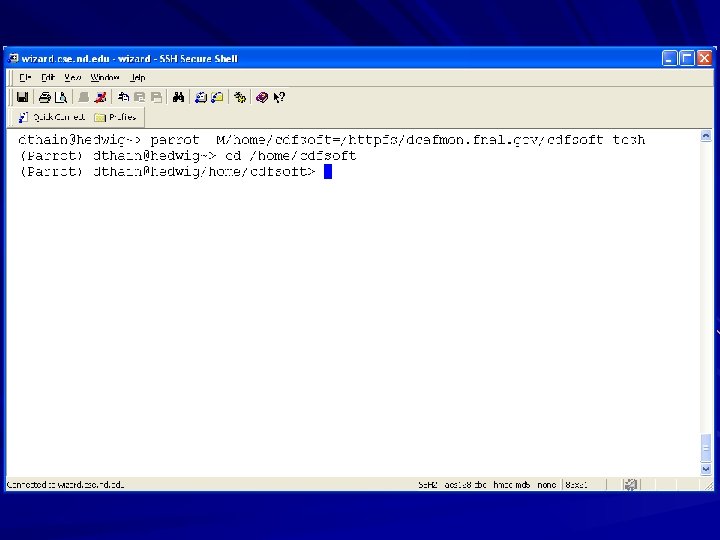

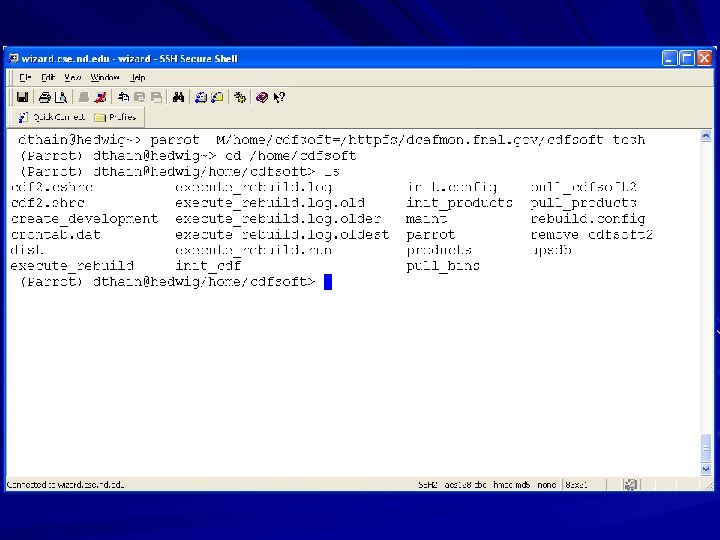

Remote Application Loading
Credit: Igor Sfiligoi @ Fermi National Lab
Modular Simulation Needs Many Libraries
– Developed on workstations, then ported to grid.
– Selection of library depends on analysis technique.
– Constraint: Must use HTTP for file access.
Solution: Dynamic Link with TSS+HTTP:
– /home/cdfsoft -> /http/dcaf.fnal.gov/cdfsoft
Diagram: the application runs under Parrot, whose HTTP module fetches liba.so, libb.so, and libc.so through proxies from an HTTP server backed by the file system. The application selects several MB from 60 GB of libraries.
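Both application examples rest on the same mechanism: the adapter rewrites a path the application opens into a path in a remote namespace. A minimal sketch of such prefix remapping follows. The `remap` function and the longest-prefix rule are assumptions for illustration, and putting both mappings in one table is done only for the demo; in the talk they belong to two separate applications.

```python
def remap(path, mounts):
    """Rewrite path using the longest matching mount prefix, if any."""
    best_prefix, best_target = None, None
    for prefix, target in mounts.items():
        stem = prefix.rstrip("/")          # "/" becomes "" and matches all
        if path == stem or path.startswith(stem + "/"):
            if best_prefix is None or len(stem) > len(best_prefix):
                best_prefix, best_target = stem, target
    if best_prefix is None:
        return path                        # no mount applies
    return best_target.rstrip("/") + path[len(best_prefix):]


# The two mappings that appear on the slides.
MOUNTS = {
    "/": "/chirp/fileserver/rootdir",
    "/home/cdfsoft": "/http/dcaf.fnal.gov/cdfsoft",
}

print(remap("/etc/passwd", MOUNTS))
print(remap("/home/cdfsoft/lib/liba.so", MOUNTS))
```

Matching on whole path components keeps `/home/cdfsoftware` from being captured by the `/home/cdfsoft` entry.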

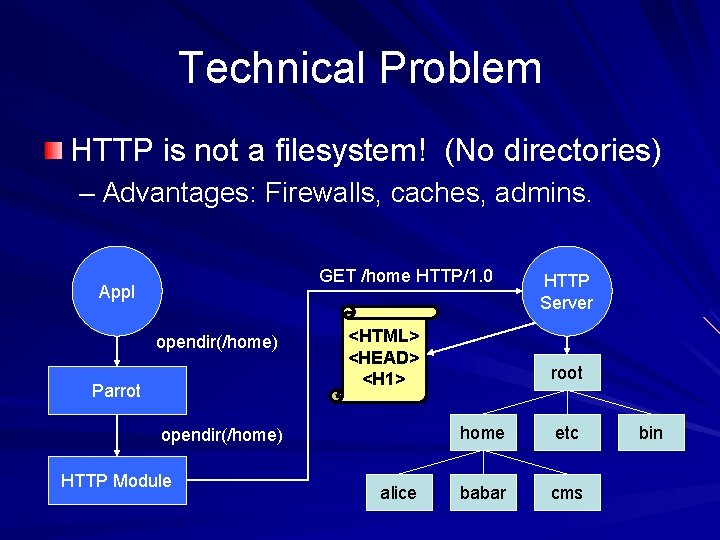

Technical Problem
HTTP is not a filesystem! (No directories)
– Advantages: Firewalls, caches, admins.
Diagram: the application calls opendir(/home); Parrot's HTTP module sends GET /home HTTP/1.0; the HTTP server, whose root holds home (alice, babar, cms), etc, and bin, answers with an HTML page (<HTML> <HEAD> <H1>) rather than a directory listing.

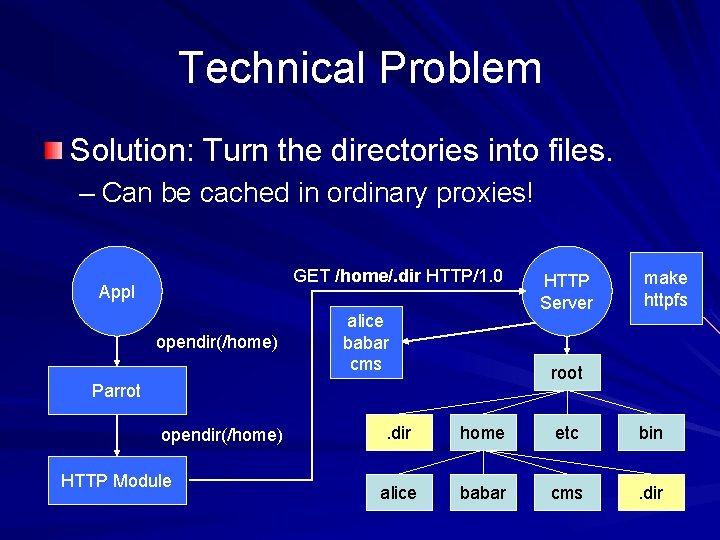

Technical Problem
Solution: Turn the directories into files.
– Can be cached in ordinary proxies!
Diagram: make httpfs writes a .dir file into every directory under the server root. When the application calls opendir(/home), Parrot's HTTP module sends GET /home/.dir HTTP/1.0 and the HTTP server returns the listing: alice, babar, cms.
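The directories-as-files trick can be sketched in two small functions: one pass that writes a `.dir` listing into every directory, and an `opendir` that simply reads that file. This is a local-filesystem model with hypothetical names (`make_dir_listings`, `opendir`) and an assumed one-name-per-line format; a real client would fetch `.dir` with an HTTP GET instead of `open`.

```python
import os
import tempfile


def make_dir_listings(root):
    """Write a .dir file, one entry per line, into each directory under root."""
    for dirpath, dirnames, filenames in os.walk(root):
        entries = sorted(n for n in dirnames + filenames if n != ".dir")
        with open(os.path.join(dirpath, ".dir"), "w") as listing:
            listing.write("".join(name + "\n" for name in entries))


def opendir(root, path):
    """Emulate opendir(path) by reading path/.dir (stand-in for HTTP GET)."""
    with open(os.path.join(root, path.strip("/"), ".dir")) as listing:
        return listing.read().splitlines()


# Demo on a scratch tree shaped like the one on the slide.
with tempfile.TemporaryDirectory() as root:
    for sub in ("home/alice", "home/babar", "home/cms", "etc", "bin"):
        os.makedirs(os.path.join(root, sub))
    make_dir_listings(root)
    print(opendir(root, "/home"))
```

Since each listing is an ordinary file, an unmodified web server can serve it and an ordinary proxy can cache it.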

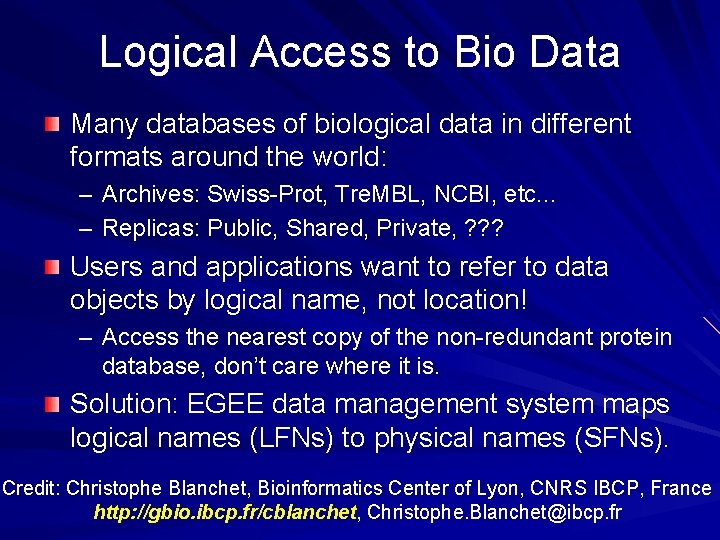

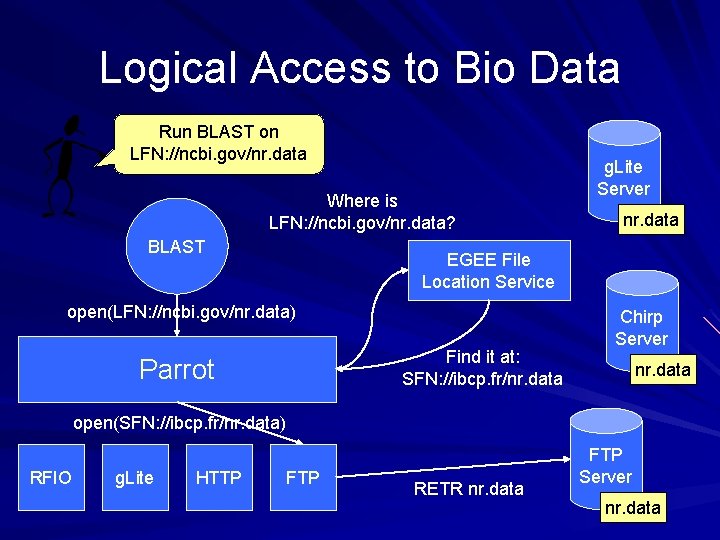

Logical Access to Bio Data
Many databases of biological data in different formats around the world:
– Archives: Swiss-Prot, TrEMBL, NCBI, etc.
– Replicas: Public, Shared, Private, ...
Users and applications want to refer to data objects by logical name, not location!
– Access the nearest copy of the non-redundant protein database, don’t care where it is.
Solution: EGEE data management system maps logical names (LFNs) to physical names (SFNs).
Credit: Christophe Blanchet, Bioinformatics Center of Lyon, CNRS IBCP, France
http://gbio.ibcp.fr/cblanchet, Christophe.Blanchet@ibcp.fr

Logical Access to Bio Data
Run BLAST on LFN://ncbi.gov/nr.data
Diagram: BLAST calls open(LFN://ncbi.gov/nr.data). Parrot asks the EGEE File Location Service "Where is LFN://ncbi.gov/nr.data?" and is told "Find it at: SFN://ibcp.fr/nr.data". Parrot then calls open(SFN://ibcp.fr/nr.data) and fetches nr.data through one of its protocol modules (RFIO, gLite, HTTP, FTP, Chirp) from a server that holds a copy: a gLite server, a Chirp server, or an FTP server (FTP RETR nr.data).
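The logical-to-physical step can be sketched as a lookup performed inside `open`. The dictionary below stands in for the EGEE File Location Service and contains only the one mapping shown on the slide; the `resolve` function and the rule of taking the first listed replica are assumptions for illustration (the talk asks for the nearest copy, which a real service would decide).

```python
# Stand-in for the file location service: logical name -> replicas.
REPLICA_CATALOG = {
    "LFN://ncbi.gov/nr.data": ["SFN://ibcp.fr/nr.data"],
}


def resolve(name, catalog=REPLICA_CATALOG):
    """Map a logical file name to a physical one; pass other names through."""
    if not name.startswith("LFN://"):
        return name
    replicas = catalog.get(name)
    if not replicas:
        raise FileNotFoundError(name)
    # Assumed policy: take the first replica listed.
    return replicas[0]


print(resolve("LFN://ncbi.gov/nr.data"))
print(resolve("/tmp/local.data"))
```

Because the adapter does this inside the trapped `open` call, BLAST itself needs no knowledge of replicas or catalogs.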

Appl: Distributed MD Database
State of Molecular Dynamics Research:
– Easy to run lots of simulations!
– Difficult to understand the “big picture”.
– Hard to systematically share results and ask questions.
Desired Questions and Activities:
– “What parameters have I explored?”
– “How can I share results with friends?”
– “Replicate these items five times for safety.”
– “Recompute everything that relied on this machine.”
GEMS: Grid Enabled Molecular Sims
– Distributed database for MD simulation at Notre Dame.
– XML database for indexing, TSS for storage/policy.

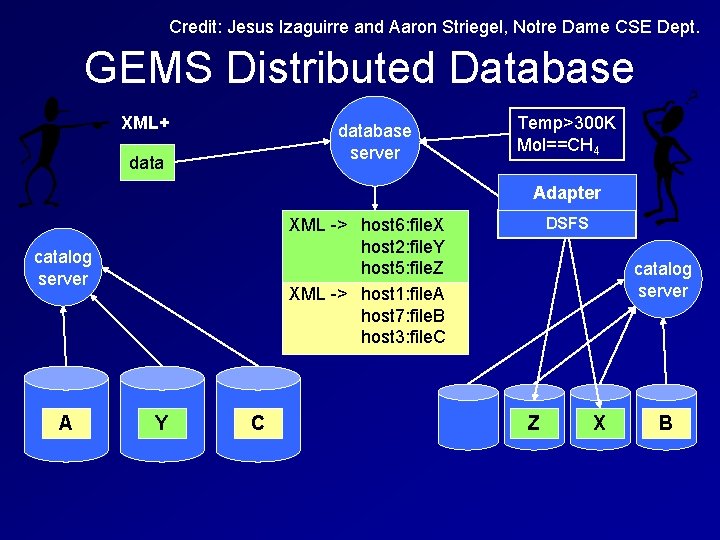

GEMS Distributed Database
Credit: Jesus Izaguirre and Aaron Striegel, Notre Dame CSE Dept.
Diagram: a database server maps each XML record to the replicas of its data (XML -> host6:fileX, host2:fileY, host5:fileZ; XML -> host1:fileA, host7:fileB, host3:fileC). A query such as Temp>300K, Mol==CH4 returns replica locations (host5:fileZ, host6:fileX), which the adapter reads through DSFS. The file servers holding A, Y, C and Z, X, B report to catalog servers.

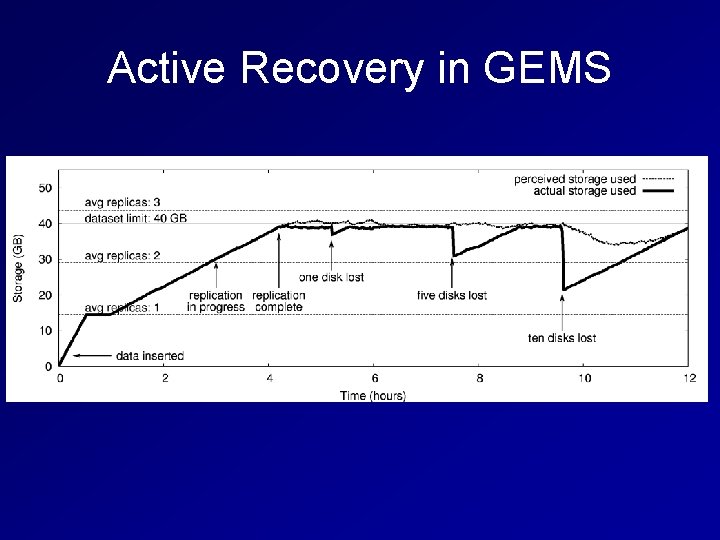

Active Recovery in GEMS

GEMS and Tactical Storage
Dynamic System Configuration
– Add/remove servers, discovered via catalog
Policy Control in File Servers
– Groups can Collaborate within Constraints
– Security Implemented within File Servers
Direct Access via Adapters
– Unmodified Simulations can use Database
– Alternate Web/Viz Interfaces for Users.


OS Support for Grid Computing
Distributed computing in general suffers because of limitations in the operating system. How can we improve the OS in the long term?
Resource allocation:
– Cannot reserve space -> jobs crash
– Hard to clean up procs -> unreliable systems
Security and permissions:
– No ACLs -> hard to share data
– Only root can setuid -> hard to secure services.

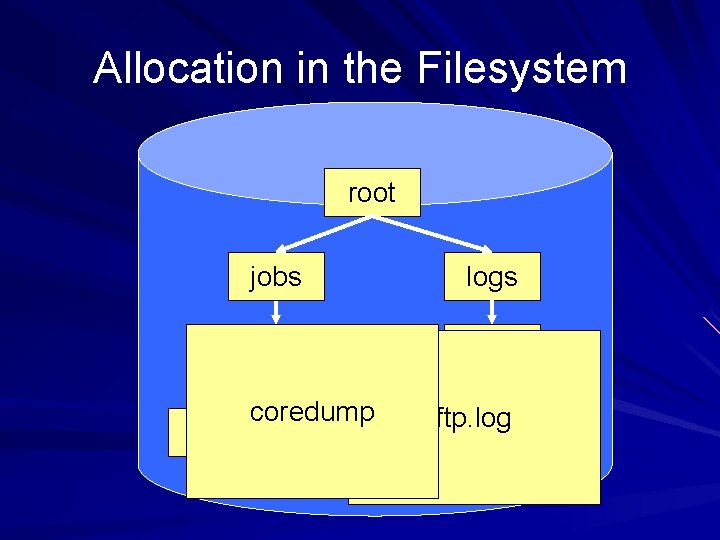

Allocation in the Filesystem root jobs logs job 23 ftp coredump input output ftp. log

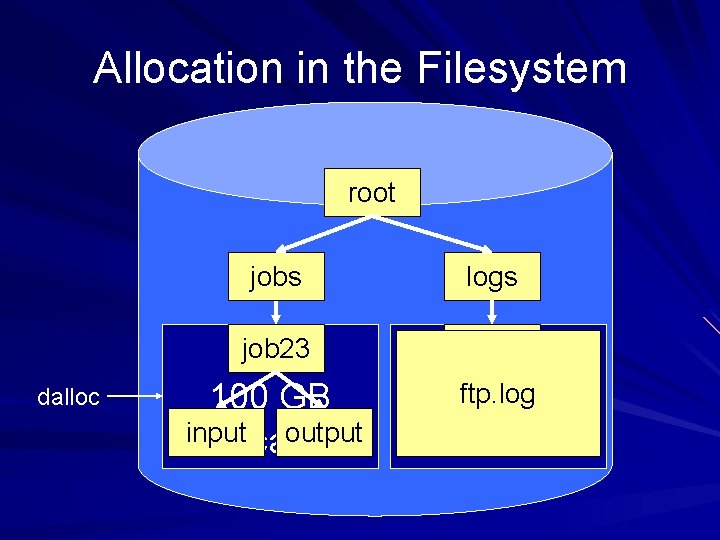

Allocation in the Filesystem root dalloc jobs logs job 23 ftp 100 GB input output allocation ftp. log 200 GB ftp. log allocation

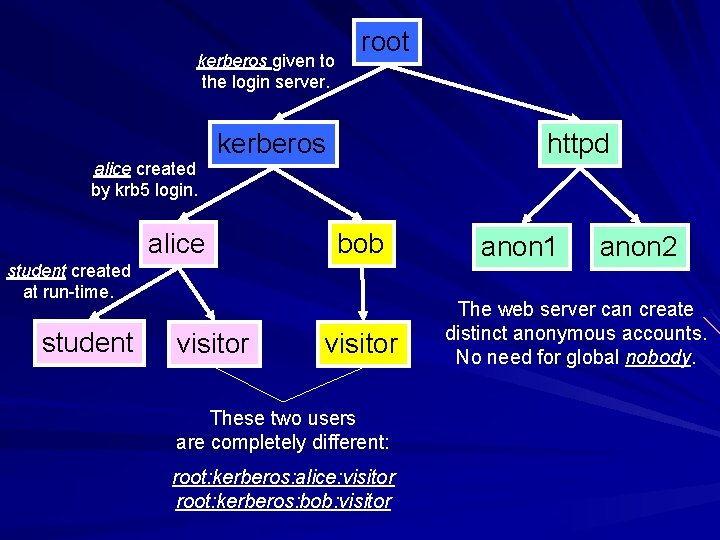

Diagram: a tree of identities rooted at root.
– root:kerberos is given to the login server.
– root:kerberos:alice and root:kerberos:bob are created by krb5 login.
– Sub-identities such as student and visitor are created at run-time.
These two users are completely different: root:kerberos:alice:visitor and root:kerberos:bob:visitor.
The web server (httpd) can create distinct anonymous accounts (anon1, anon2). No need for global nobody.

Approach by Degrees
What can we do as an ordinary user?
– Simulate OS functionality within Parrot.
– Drawback: Performance / Assurance.
What can we do as root?
– Setuid toolkit to manage system on request.
– Drawback: Limitations in Policy / Expressiveness.
What can we do by modifying the OS?
– Modify kernel/FS to support new features.
– Drawback: Deployment.

Tactical Storage Systems
Separate Abstractions from Resources
Components:
– Servers, catalogs, abstractions, adapters.
– Completely user level.
– Performance acceptable for real applications.
Independent but Cooperating Components
– Owners of file servers set policy.
– Users must work within policies.
– Within policies, users are free to build.

Parting Thought Many users of the grid are constrained by functionality, not performance. TSS allows end users to build the structures that they need for the moment without involving an admin. Analogy: building blocks for distributed storage.

Acknowledgments
Science Collaborators:
– Christophe Blanchet
– Sander Klous
– Peter Kunszt
– Erwin Laure
– John Poirier
– Igor Sfiligoi
CS Collaborators:
– Jesus Izaguirre
– Aaron Striegel
CS Students:
– Paul Brenner
– James Fitzgerald
– Jeff Hemmes
– Paul Madrid
– Chris Moretti
– Phil Snowberger
– Justin Wozniak

For more information...
Cooperative Computing Lab: http://www.cse.nd.edu/~ccl
Cooperative Computing Tools: http://www.cctools.org
Douglas Thain
– dthain@cse.nd.edu
– http://www.cse.nd.edu/~dthain

Performance – System Calls

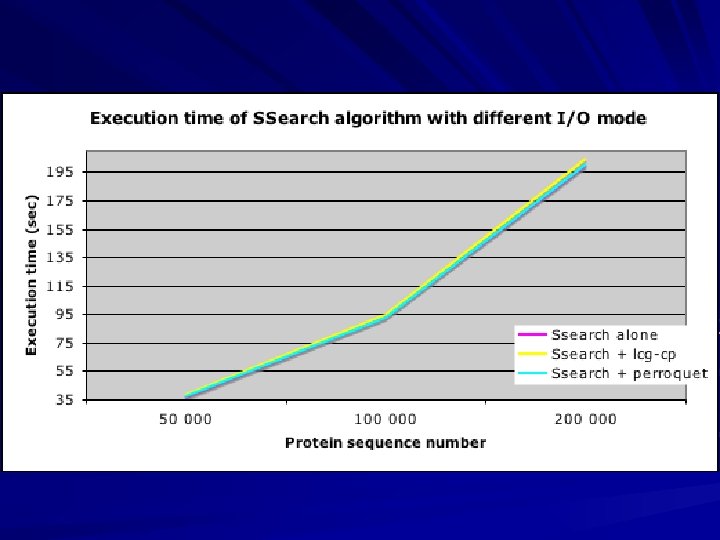

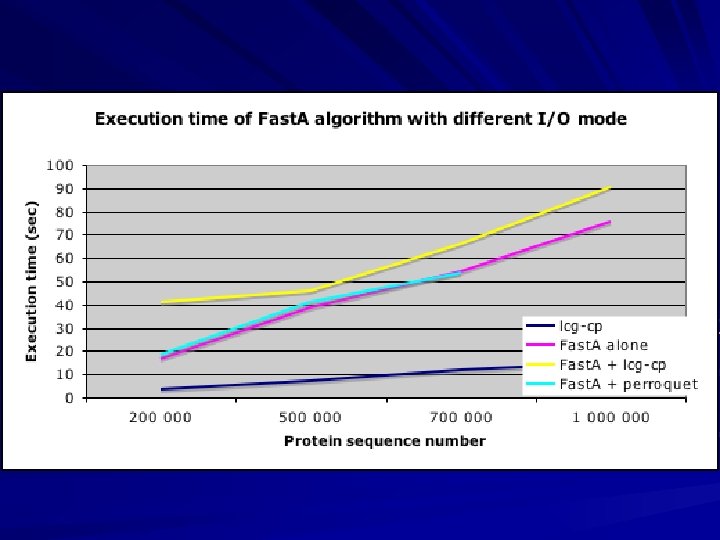

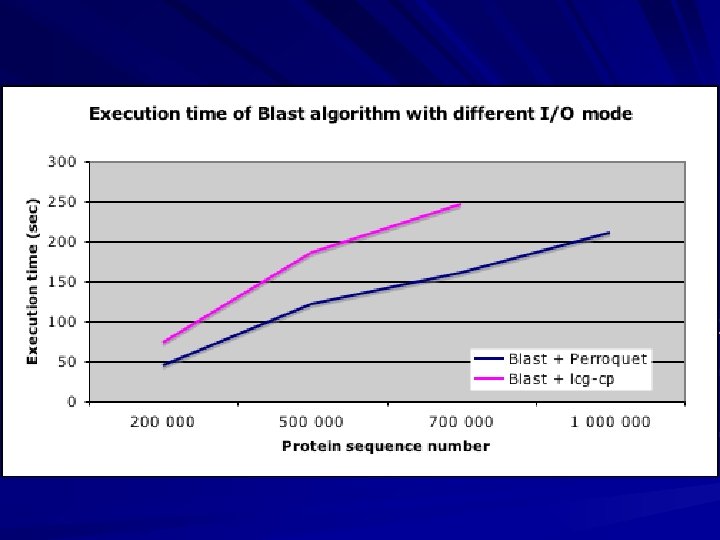

Performance - Applications parrot only

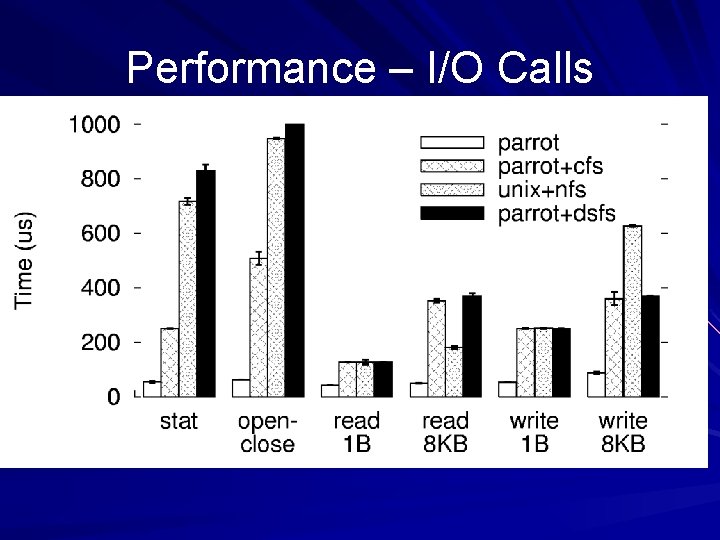

Performance – I/O Calls

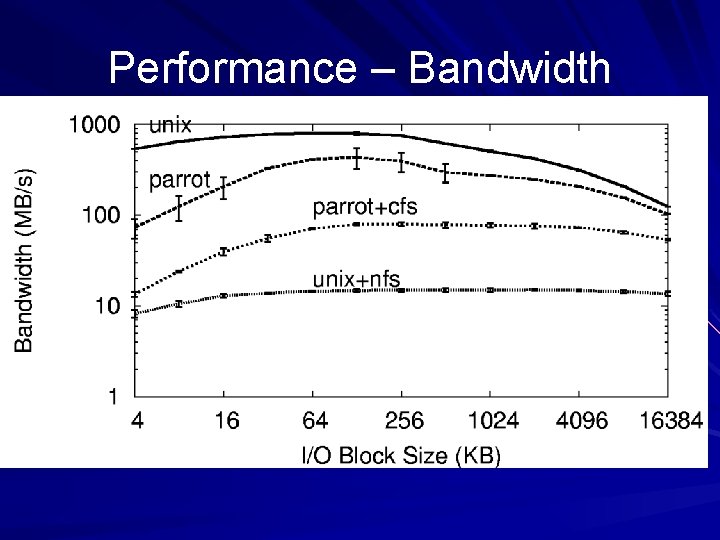

Performance – Bandwidth

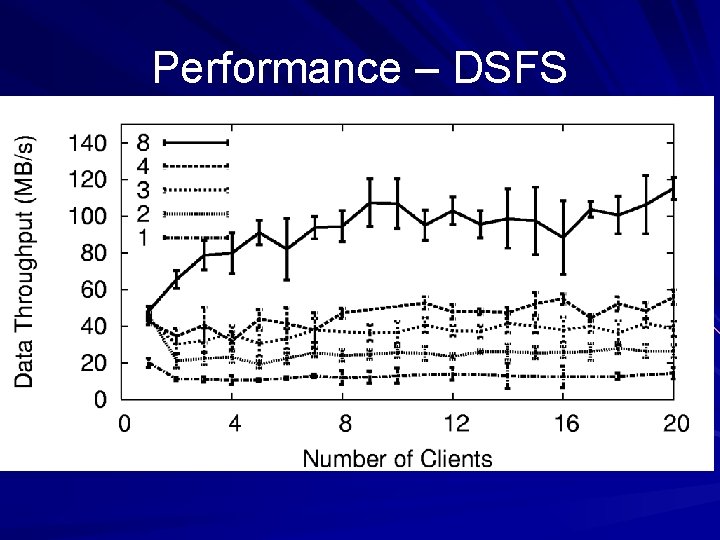

Performance – DSFS

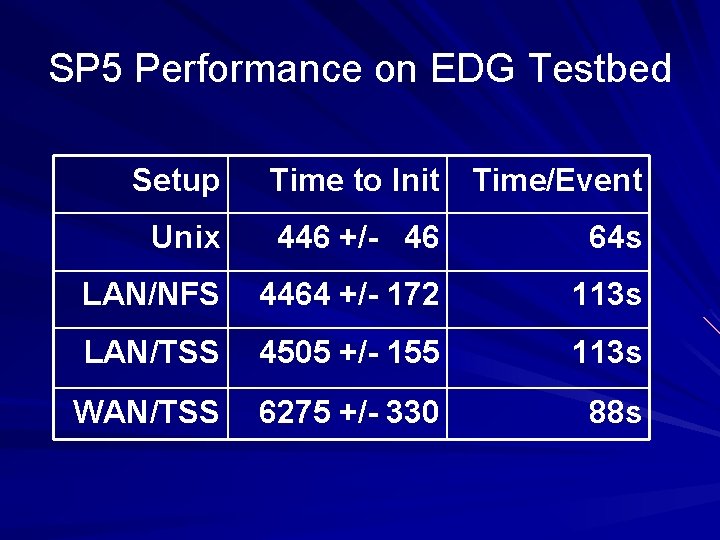

SP5 Performance on EDG Testbed

| Setup | Time to Init | Time/Event |
|---|---|---|
| Unix | 446 +/- 46 | 64 s |
| LAN/NFS | 4464 +/- 172 | 113 s |
| LAN/TSS | 4505 +/- 155 | 113 s |
| WAN/TSS | 6275 +/- 330 | 88 s |
