Embracing and Enhancing Data Quality
AACTE Annual Meeting, Feb. 28, 2020

Welcome!
• Dr. Kristen Smith, Director of Assessment
• Dr. Christina O’Connor, Director of Professional Education Preparation, Policy, and Accountability

CAEP’s Stance on Data Quality
5.1 The provider’s quality assurance system is comprised of multiple measures that can monitor candidate progress, completer achievements, and provider operational effectiveness. Evidence demonstrates that the provider satisfies all CAEP standards.
5.2 The provider’s quality assurance system relies on relevant, verifiable, representative, cumulative and actionable measures, and produces empirical evidence that interpretations of data are valid and consistent.

Data Quality Standards: Other Contexts
• validity and reliability
• test administration
• scoring
• score comparability
• integrity of data

What does CAEP expect? Evaluation Framework for EPP Created Assessments

Administration and Purpose
a. The point or points when the assessment is administered during the preparation program are explicit.
b. The purpose of the assessment and its use in candidate monitoring or decisions on progression are specified and appropriate.
c. Instructions provided to candidates (or respondents to surveys) about what they are expected to do are informative and unambiguous.
d. The basis for judgment (criterion for success, or what is “good enough”) is made explicit for candidates (or respondents to surveys).
e. Evaluation categories or assessment tasks are aligned with CAEP, InTASC, national/professional and state standards.

Content
a. Indicators assess explicitly identified aspects of CAEP, InTASC, national/professional and state standards.
b. Indicators reflect the degree of difficulty or level of effort described in the standards.
c. Indicators unambiguously describe the proficiencies to be evaluated.
d. When the standards being informed address higher level functioning, the indicators require higher levels of intellectual behavior (e.g., create, evaluate, analyze, and apply).
e. Most indicators (at least those comprising 80% of the total score) require observers to judge consequential attributes of candidate proficiencies in the standards.

Scoring
a. The basis for judging candidate performance is well defined.
b. Each Proficiency Level Descriptor (PLD) is qualitatively defined by specific criteria aligned with indicators.
c. PLDs represent a developmental sequence from level to level (to provide raters with explicit guidelines for evaluating candidate performance and for providing candidates with explicit feedback on their performance).
d. Feedback provided to candidates is actionable: it is directly related to the preparation program and can be used for program improvement as well as for feedback to the candidate.
e. Proficiency level attributes are defined in actionable, performance-based, or observable behavior terms.

Reliability
a. A description or plan is provided that details the type of reliability that is being investigated or has been established (e.g., test-retest, parallel forms, inter-rater, internal consistency, etc.) and the steps the EPP took to ensure the reliability of the data from the assessment.
b. Training of scorers and checking on inter-rater agreement and reliability are documented.
c. The described steps meet accepted research standards for establishing reliability.

Validity
a. A description or plan is provided that details steps the EPP has taken or is taking to ensure the validity of the assessment and its use.
b. The plan details the types of validity that are under investigation or have been established (e.g., construct, content, concurrent, predictive, etc.) and how they were established.
c. If the assessment is new or revised, a pilot was conducted.
d. The EPP details its current process or plans for analyzing and interpreting results from the assessment.
e. The described steps meet accepted research standards for establishing the validity of data from an assessment.

Surveys
Content
• Aligned to mission & standards
• Single subject items, unambiguous
• No leading questions
• Behaviors and practices rather than opinions
• Clear connection to quality teaching (Dispositions surveys)
Data Quality
• Qualitatively defined choices
• Actionable feedback
• Questions piloted

Action Planning- Steps 1 & 2
1. Think about an EPP-created assessment at your institution that you really like.
2. Turn to a partner, and share some thoughts about how your assessment would measure up to the CAEP Evaluation Framework.
3. Be prepared to share something you noticed in relation to data quality, reliability, validity, and use.

What is reliability?
• Form teams of 2-3 OR work independently
• Draw what reliability means to you OR visually represent it through an image you find online
• Consider: How do you conceptualize reliability as it relates to data quality for your unit, department, or org?

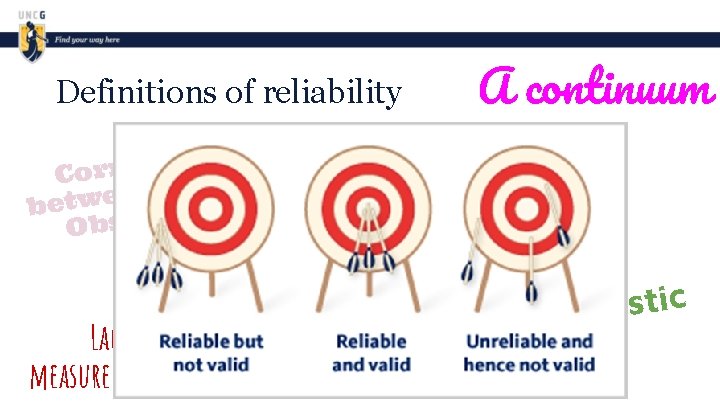

Definitions of reliability
• Correlation between True & Obs. scores
• A continuum
• Precision
• Consistency of measurement
• Lacking measurement error
• A characteristic of data

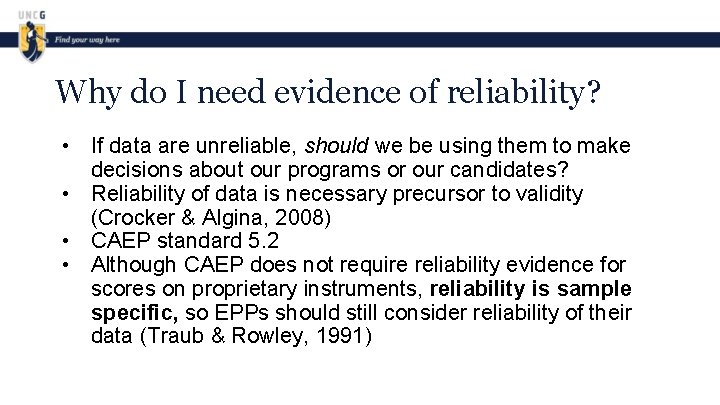

Why do I need evidence of reliability?
• If data are unreliable, should we be using them to make decisions about our programs or our candidates?
• Reliability of data is a necessary precursor to validity (Crocker & Algina, 2008)
• CAEP standard 5.2
• Although CAEP does not require reliability evidence for scores on proprietary instruments, reliability is sample specific, so EPPs should still consider the reliability of their data (Traub & Rowley, 1991)

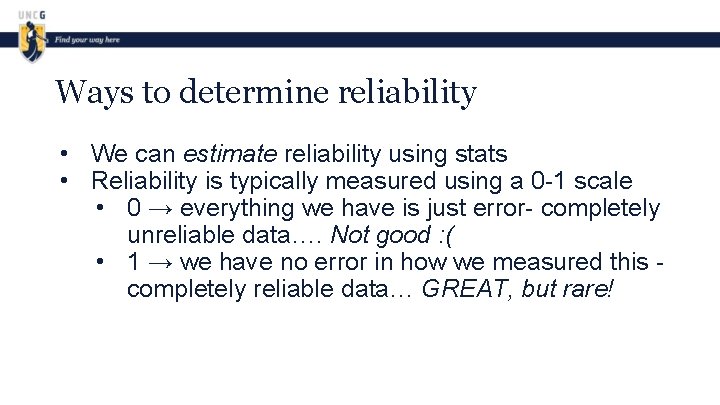

Ways to determine reliability
• We can estimate reliability using statistics
• Reliability is typically expressed on a 0-1 scale
  • 0 → everything we have is just error: completely unreliable data. Not good :(
  • 1 → there is no error in how we measured: completely reliable data. GREAT, but rare!

Ways to determine reliability
• Common Measure of Internal Consistency
  • Cronbach’s alpha
  • Do items on a test or elements of a rubric relate to each other?
  • Do data allow us to consistently rank order students?
  • Useful when you only have one administration of the assessment or instrument
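For readers without SPSS, Cronbach's alpha can also be computed directly from item-level scores. The sketch below uses the standard formula, alpha = (k / (k - 1)) * (1 - sum of item variances / variance of total scores), where k is the number of items. The function name `cronbach_alpha` and the rubric ratings are made up for illustration and are not from the presenters' materials.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a score matrix.

    scores: list of rows, one row per candidate, one column per item
    (for example, one column per rubric element).
    """
    k = len(scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item (column) across candidates.
    item_vars = [variance([row[j] for row in scores]) for j in range(k)]
    # Sample variance of each candidate's total score.
    total_var = variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical data: 5 candidates scored on a 4-element rubric.
ratings = [
    [3, 3, 2, 3],
    [2, 2, 2, 1],
    [4, 3, 4, 4],
    [1, 2, 1, 1],
    [3, 4, 3, 3],
]
alpha = cronbach_alpha(ratings)
print(f"Cronbach's alpha = {alpha:.2f}")
```

Because reliability is sample specific, alpha should be recomputed for each cohort's data rather than reported once for the instrument.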

Ways to determine reliability
• Inter-rater Agreement
  • Consistency across raters or reviewers
  • Absolute
    • All raters came to the exact same conclusions
    • More stringent… harder to find
  • Adjacent
    • Raters came to similar or “adjacent” conclusions
    • Less stringent… easier to find
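Absolute and adjacent agreement are simple proportions, so they can be checked outside a spreadsheet as well. The sketch below assumes two raters scoring the same candidates on an integer rubric scale, and it treats "adjacent" as scores within one point of each other (which also counts exact matches). That tolerance, the function name `agreement_rates`, and the ratings are assumptions for illustration.

```python
def agreement_rates(rater_a, rater_b, tolerance=1):
    """Return (absolute, adjacent) agreement as proportions of cases.

    absolute: both raters gave exactly the same score.
    adjacent: scores differ by at most `tolerance` points
              (exact matches are included in this count).
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must score the same non-empty set of cases")
    n = len(rater_a)
    exact = sum(a == b for a, b in zip(rater_a, rater_b))
    close = sum(abs(a - b) <= tolerance for a, b in zip(rater_a, rater_b))
    return exact / n, close / n


# Hypothetical scores from two raters on six candidates.
rater_a = [3, 2, 4, 1, 3, 2]
rater_b = [3, 3, 4, 1, 1, 2]
absolute, adjacent = agreement_rates(rater_a, rater_b)
print(f"absolute = {absolute:.0%}, adjacent = {adjacent:.0%}")
```

Note that simple percent agreement does not correct for agreement expected by chance.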

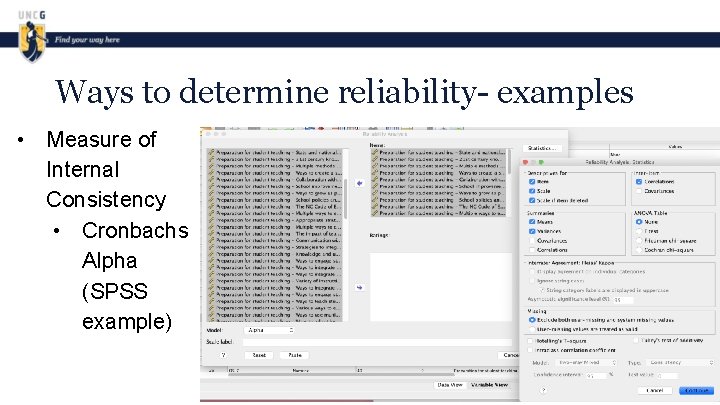

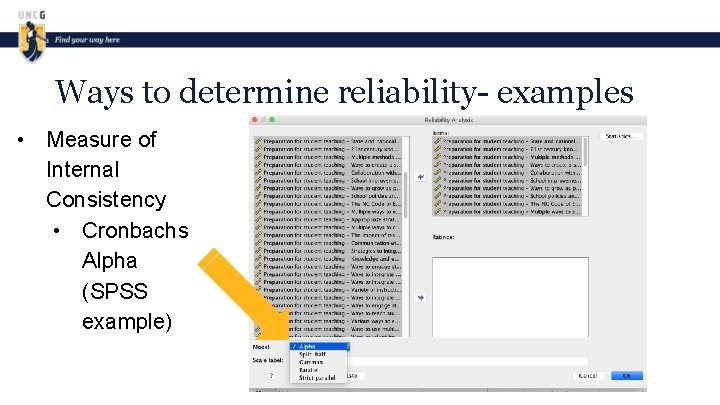

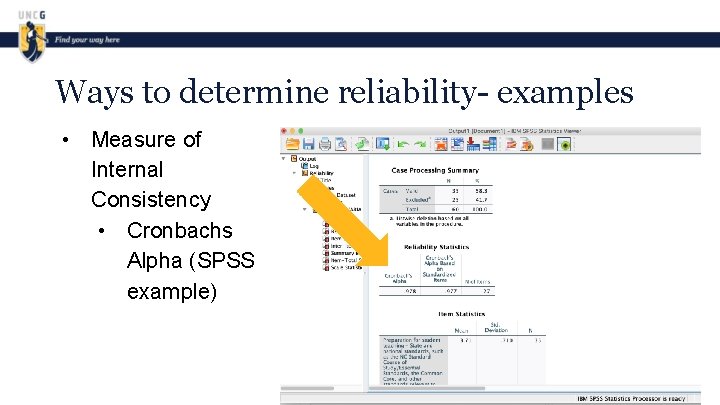

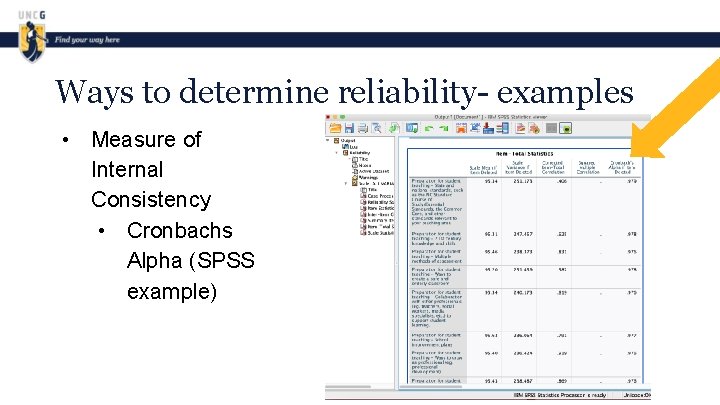

Ways to determine reliability- examples
• Measure of Internal Consistency
  • Cronbach’s alpha (SPSS example)
• Inter-rater Agreement
  • Absolute (Excel template example)
  • Adjacent (Excel template example)

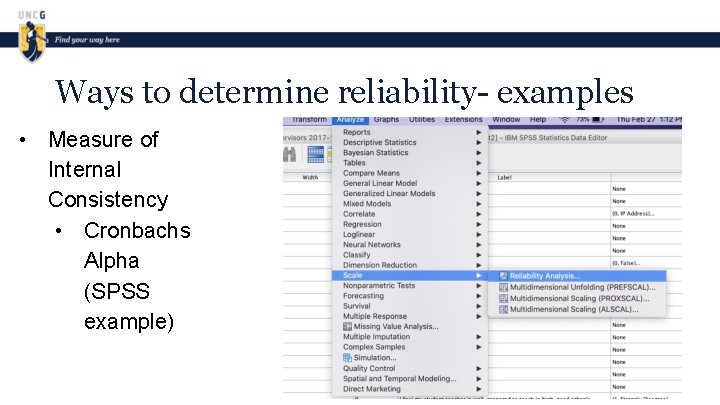

Ways to determine reliability- examples
• Measure of Internal Consistency
  • Cronbach’s alpha (SPSS example)

Ways to determine reliability- examples
• Inter-rater Agreement
  • Absolute (Excel template example)
  • Adjacent (Excel template example)
  • Excel template for IRA

Action Planning- Step 3
• In your groups (or on your own), think about sources of evidence of reliability and critically assess the reliability of data for the EPP-created assessment you’ve been working on
• Use Step 3 of your Action Planning worksheet

What is Validity?
• Form teams of 2-3 OR work independently
• Draw what validity means to you OR visually represent it through an image you find online
• Consider: How do you conceptualize validity as it relates to data quality for your unit, department, or org?

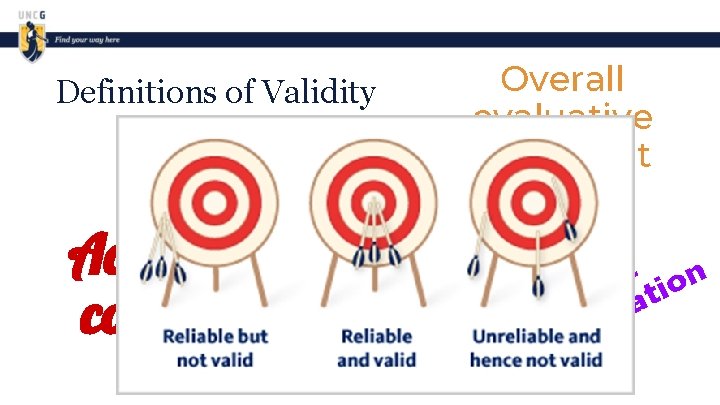

Definitions of Validity
• A continuum
• Overall evaluative judgment
• Ongoing process
• Accuracy or correctness
• Correct interpretation

Why is validity important?
• If data lack validity evidence, how do we know we are making accurate conclusions about our candidates?
• Even if data are reliable, they may not necessarily be valid
• Validity evidence helps support the accuracy of the conclusions that you draw from assessment data or scores

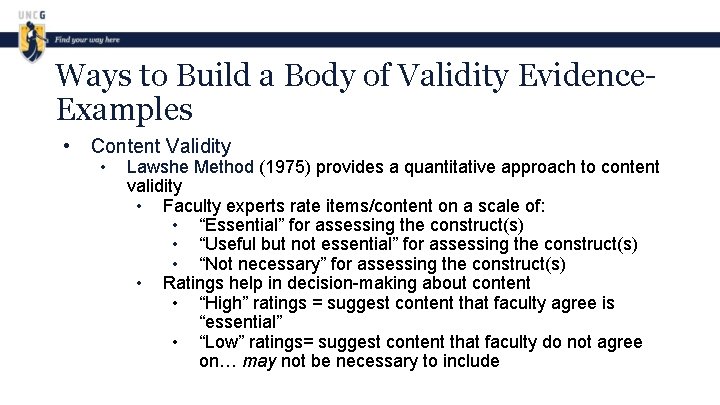

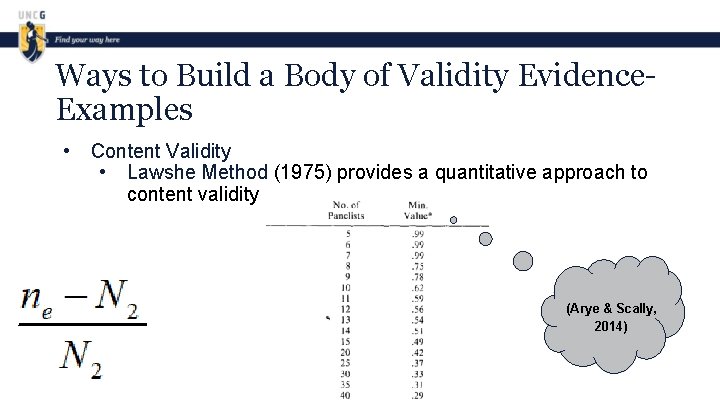

Ways to Build a Body of Validity Evidence. Examples
• Content Validity
  • Lawshe Method (1975) provides a quantitative approach to content validity
  • Faculty experts rate items/content on a scale of:
    • “Essential” for assessing the construct(s)
    • “Useful but not essential” for assessing the construct(s)
    • “Not necessary” for assessing the construct(s)
  • Ratings help in decision-making about content
    • “High” ratings suggest content that faculty agree is “essential”
    • “Low” ratings suggest content that faculty do not agree on… may not be necessary to include
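Lawshe's approach summarizes the faculty ratings for each item as a content validity ratio, CVR = (n_e - N/2) / (N/2), where n_e is the number of panelists rating the item "essential" and N is the panel size. CVR runs from -1 (no one says essential) to +1 (everyone does). A minimal sketch follows; the item names, rating labels, and panel data are hypothetical.

```python
def content_validity_ratio(n_essential, n_panelists):
    """Lawshe's CVR = (n_e - N/2) / (N/2)."""
    if n_panelists <= 0 or not 0 <= n_essential <= n_panelists:
        raise ValueError("need 0 <= n_essential <= n_panelists, with N > 0")
    half = n_panelists / 2
    return (n_essential - half) / half


# Hypothetical ratings from a 10-member faculty panel.
panel_ratings = {
    "item_1": ["essential"] * 9 + ["useful"],
    "item_2": ["essential"] * 5 + ["useful"] * 3 + ["not necessary"] * 2,
}

cvr_by_item = {
    item: content_validity_ratio(ratings.count("essential"), len(ratings))
    for item, ratings in panel_ratings.items()
}
for item, cvr in cvr_by_item.items():
    print(f"{item}: CVR = {cvr:+.2f}")
```

Whether a given CVR is high enough to retain an item depends on panel size; critical values should be taken from a published table rather than from this sketch.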

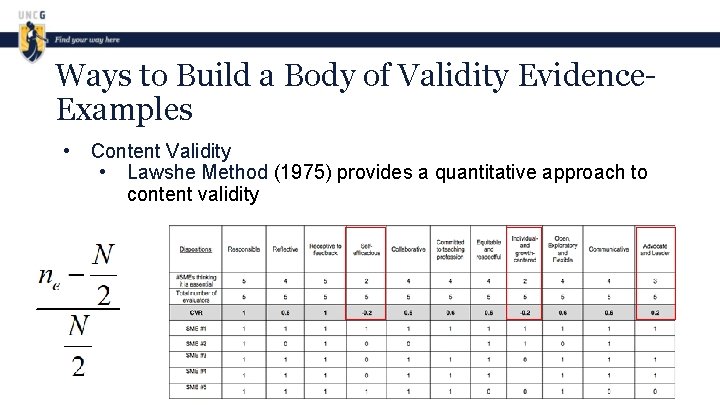

Ways to Build a Body of Validity Evidence. Examples
• Content Validity
  • Lawshe Method (1975) provides a quantitative approach to content validity (Ayre & Scally, 2014)

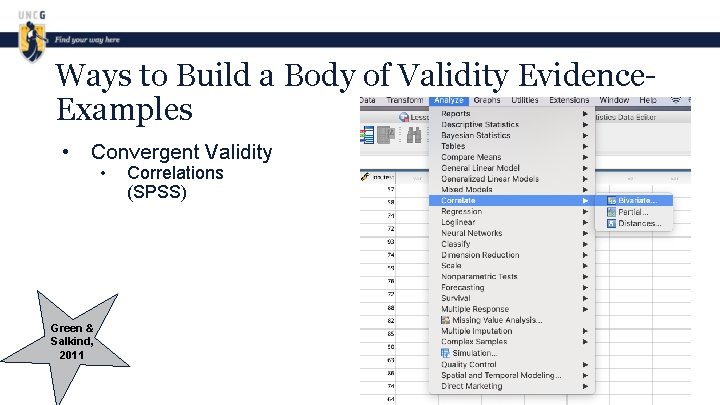

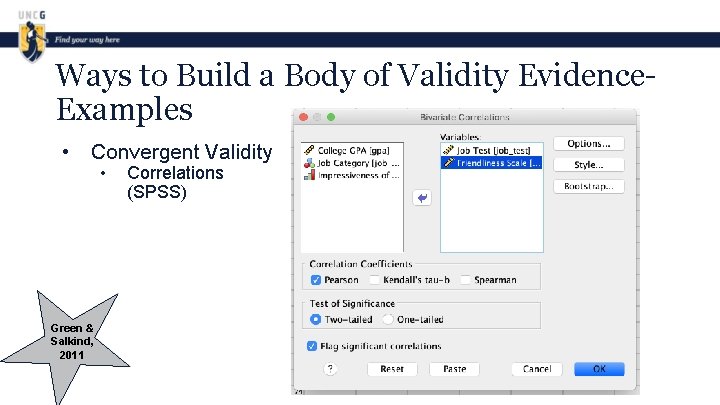

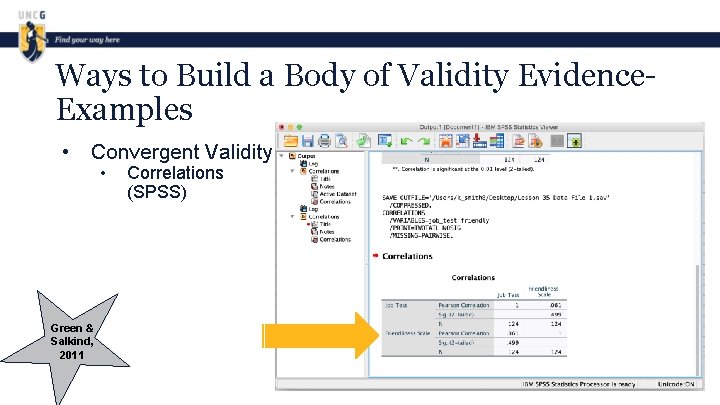

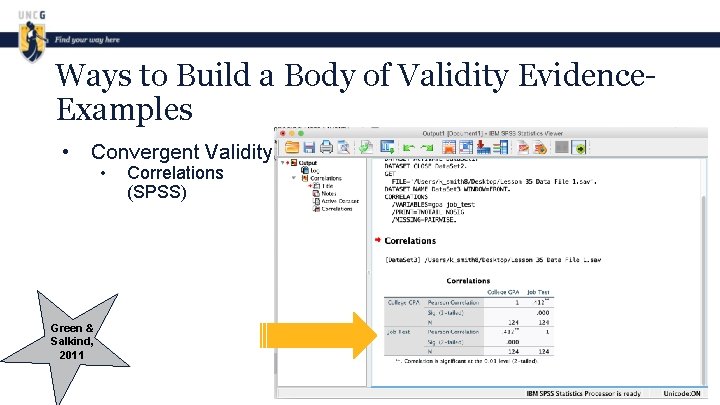

Ways to Build a Body of Validity Evidence. Examples
• Convergent Validity (Green & Salkind, 2011)
  • Correlations (SPSS)
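Convergent validity evidence usually takes the form of a correlation between scores on the assessment and scores on another measure of a related construct. As an alternative to the SPSS route, here is a minimal sketch of the Pearson correlation; the two score lists (a dispositions rubric and a second, related measure) are invented for illustration.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical scores for the same eight candidates on two measures.
dispositions_rubric = [2.5, 3.5, 2.0, 3.0, 3.5, 2.5, 4.0, 3.0]
related_measure = [61, 78, 55, 70, 74, 66, 85, 69]
r = pearson_r(dispositions_rubric, related_measure)
print(f"r = {r:.2f}")
```

A strong positive correlation with a measure of a related construct supports convergent validity; the sketch does not report a significance test.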

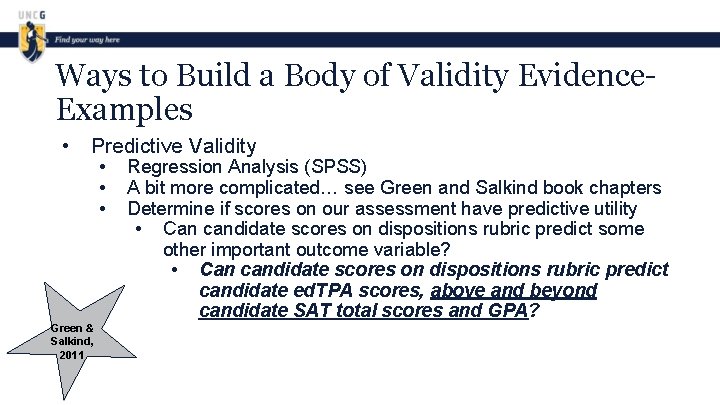

Ways to Build a Body of Validity Evidence. Examples
• Predictive Validity (Green & Salkind, 2011)
  • Regression analysis (SPSS)
  • A bit more complicated… see the Green and Salkind book chapters
  • Determine whether scores on our assessment have predictive utility
    • Can candidate scores on the dispositions rubric predict some other important outcome variable?
    • Can candidate scores on the dispositions rubric predict candidate edTPA scores, above and beyond candidate SAT total scores and GPA?
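The "above and beyond" question is commonly answered by comparing two regression models: one with only SAT and GPA, and one that adds the dispositions rubric score. The gain in R-squared is the incremental variance explained. The sketch below fits both models by ordinary least squares using only the standard library. All data are invented, SAT is expressed in hundreds of points to keep the arithmetic well scaled, and no significance test for the R-squared change is included.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry to the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def r_squared(predictors, outcome):
    """R^2 of an OLS fit with intercept; predictors is a list of columns."""
    n = len(outcome)
    rows = [[1.0] + [col[i] for col in predictors] for i in range(n)]
    p = len(rows[0])
    # Normal equations: (X'X) beta = X'y.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * y for r, y in zip(rows, outcome)) for i in range(p)]
    beta = solve(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in rows]
    mean_y = sum(outcome) / n
    ss_res = sum((y - f) ** 2 for y, f in zip(outcome, fitted))
    ss_tot = sum((y - mean_y) ** 2 for y in outcome)
    return 1 - ss_res / ss_tot


# Hypothetical data for eight candidates (SAT in hundreds of points).
sat = [10.2, 11.5, 9.8, 12.1, 10.9, 11.0, 12.4, 10.0]
gpa = [3.1, 3.6, 2.9, 3.8, 3.3, 3.5, 3.7, 3.0]
dispositions = [2.5, 3.5, 2.0, 3.0, 3.5, 2.5, 4.0, 3.0]
edtpa = [40, 48, 37, 47, 46, 42, 52, 42]

r2_base = r_squared([sat, gpa], edtpa)                # Step 1: SAT + GPA
r2_full = r_squared([sat, gpa, dispositions], edtpa)  # Step 2: add rubric
r2_change = r2_full - r2_base
print(f"R2 base = {r2_base:.3f}, R2 full = {r2_full:.3f}, change = {r2_change:.3f}")
```

A real analysis would need far more than eight candidates and a significance test of the R-squared change, which statistical packages such as SPSS report for hierarchical regression.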

Action Planning- Step 4
• In your groups (or on your own), think about sources of evidence of reliability and critically assess the reliability of data for the EPP-created assessment you’ve been working on
• Use Step 4 of your Action Planning worksheet

Contact us
• Kristen Smith, k_smith8@uncg.edu
• Christina O’Connor, ckoconno@uncg.edu

References
American Educational Research Association, American Psychological Association, National Council on Measurement in Education, & Joint Committee on Standards for Educational and Psychological Testing. (2014). Standards for educational and psychological testing. Washington, DC: AERA.
Ayre, C., & Scally, A. J. (2014). Critical values for Lawshe’s content validity ratio. Measurement and Evaluation in Counseling and Development, 47(1), 79-86.
Crocker, L., & Algina, J. (2008). Introduction to classical and modern test theory. Mason, OH: Cengage Learning.
Feldt, L. S., & Brennan, R. L. (1989). Reliability. In R. L. Linn (Ed.), Educational measurement (3rd ed., pp. 105-146). Washington, DC: American Council on Education.
Green, S. B., & Salkind, N. J. (2011). Using SPSS for Windows and Macintosh: Analyzing and understanding data. Upper Saddle River, NJ: Prentice Hall.
Kane, M. T. (2013). Validating the interpretations and uses of test scores. Journal of Educational Measurement, 50(1), 1-73.
Lawshe, C. H. (1975). A quantitative approach to content validity. Personnel Psychology, 28, 563-575.
Messick, S. (1995). Validity of psychological assessment: Validation of inferences from persons’ responses and performances as scientific inquiry into score meaning. American Psychologist, 50(9), 741-749.
Traub, R. E., & Rowley, G. L. (1991). An NCME instructional module on understanding reliability. Instructional Topics in Educational Measurement, 8, 37-45.
Wilkinson, L. (1999). Statistical methods in psychology journals: Guidelines and explanations. American Psychologist, 54(8), 594-604.
