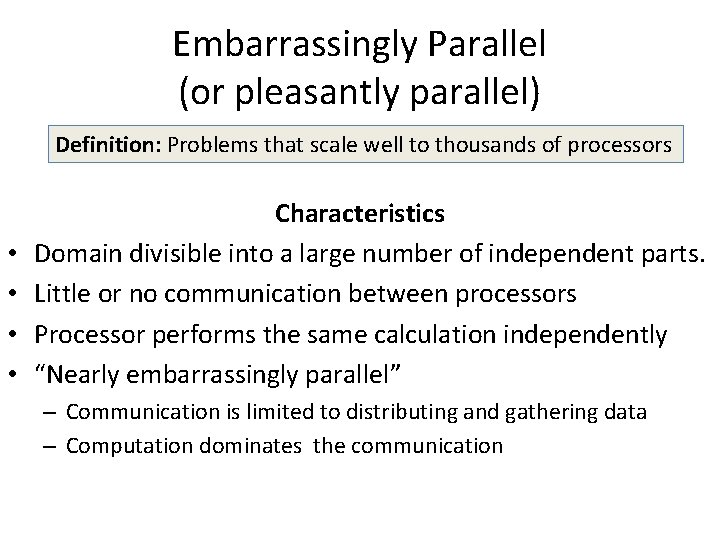

Embarrassingly Parallel (or Pleasantly Parallel)

- Definition: problems that scale well to thousands of processors
- Characteristics
  - The domain is divisible into a large number of independent parts
  - Little or no communication between processors
  - Each processor performs the same calculation independently
- "Nearly embarrassingly parallel"
  - Communication is limited to distributing and gathering data
  - Computation dominates the communication

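Embarrassingly Parallel Examples

[Figure: two diagrams. Embarrassingly parallel application: processes P0, P1, P2 run independently. Nearly embarrassingly parallel application: a "Send Data" step feeds processes P0, P1, P2, P3, followed by a "Receive Data" step.]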

Low Level Image Processing

Note: Does not include communication to a graphics adapter

- Storage
  - A two-dimensional array of pixels
  - One bit, one byte, or three bytes may represent each pixel
  - Operations may involve only local data
- Image Applications
  - Shift: newX = x + delta; newY = y + delta
  - Scale: newX = x * scale; newY = y * scale
  - Rotate a point about the origin: newX = x cos Φ + y sin Φ; newY = -x sin Φ + y cos Φ
  - Clip: newX = x if minx <= x < maxx, 0 otherwise; newY = y if miny <= y <= maxy, 0 otherwise
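The shift and clip operations can be combined in a few lines of C. This is a minimal sketch, not the deck's own code: the function name `shift_image`, the separate `dx` and `dy` offsets, and the choice to zero any uncovered pixels are all assumptions made for illustration.

```c
#include <string.h>

/* Shift a rows x cols one-byte-per-pixel image by (dx, dy).
   Assumption: pixels that would land outside the image are dropped
   (clipped) and destination pixels that receive nothing are set to 0. */
void shift_image(const unsigned char *src, unsigned char *dst,
                 int rows, int cols, int dx, int dy)
{
    memset(dst, 0, (size_t)rows * (size_t)cols);
    for (int y = 0; y < rows; y++) {
        for (int x = 0; x < cols; x++) {
            int new_x = x + dx;   /* newX = x + delta */
            int new_y = y + dy;   /* newY = y + delta */
            if (new_x >= 0 && new_x < cols && new_y >= 0 && new_y < rows)
                dst[new_y * cols + new_x] = src[y * cols + x];
        }
    }
}
```

Each output pixel depends on exactly one input pixel, so any group of rows can be processed by a different processor without communication.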

Non-trivial Image Processing

- Smoothing
  - A function that captures important patterns while eliminating noise or artifacts
  - Linear smoothing: apply a linear transformation to a picture
  - Convolution: $P_{new}(x, y) = \sum_{j=0}^{m-1} \sum_{k=0}^{n-1} P_{old}(x, y, j, k)\, f(j, k)$
- Edge Detection
  - A function that searches for discontinuities or variations in depth, surface, or color
  - Purpose: significantly reduce follow-up processing
  - Uses: pattern recognition and computer vision
  - One approach: differentiate to identify large changes
- Pattern Matching
  - Match an image against a template or a group of features
  - Example: $\sum_{i=0}^{X} \sum_{j=0}^{Y} \left( \mathrm{Picture}(x+i, y+j) - \mathrm{Template}(i, j) \right)$

Note: This is another digital signal processing application
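The weighted sum in the convolution formula might be coded as follows. This is a sketch under stated assumptions: the mask `f` is taken to be centered on (x, y), pixels beyond the border count as 0, the mask is applied without flipping, and the name `convolve` is hypothetical.

```c
/* Apply an m x n weight mask f to a rows x cols image (all row-major).
   Assumptions: P_old(x, y, j, k) is the old pixel at offset
   (j - m/2, k - n/2) from (x, y); out-of-range neighbors contribute 0. */
void convolve(const int *p_old, int *p_new, int rows, int cols,
              const int *f, int m, int n)
{
    for (int y = 0; y < rows; y++) {
        for (int x = 0; x < cols; x++) {
            int sum = 0;
            for (int j = 0; j < m; j++) {
                for (int k = 0; k < n; k++) {
                    int yy = y + j - m / 2;   /* neighbor row */
                    int xx = x + k - n / 2;   /* neighbor column */
                    if (yy >= 0 && yy < rows && xx >= 0 && xx < cols)
                        sum += p_old[yy * cols + xx] * f[j * n + k];
                }
            }
            p_new[y * cols + x] = sum;
        }
    }
}
```

Unlike a shift, each output pixel now reads a neighborhood of input pixels, so a processor that owns a block of rows also needs the rows just outside its block.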

Array Storage

- Row-major: rows are stored one after another, so the right-most index varies fastest
- Column-major: columns are stored one after another, so the left-most index varies fastest
- The C language stores arrays in row-major order; Matlab and Fortran use column-major order
- Loops in C can be much slower if the outer loop iterates over columns, because consecutive accesses are then far apart in memory and make poor use of the cache
- int A[2][3] = { {1, 2, 3}, {4, 5, 6} };
  - In memory: 1 2 3 4 5 6
- int A[2][3][2] = {{{1, 2}, {3, 4}, {5, 6}}, {{7, 8}, {9, 10}, {11, 12}}};
  - In memory: 1 2 3 4 5 6 7 8 9 10 11 12
- Translate multi-dimension indices to single-dimension offsets
  - Two dimensions: offset = row*COLS + column
  - Three dimensions: offset = i*DIM2*DIM3 + j*DIM3 + k
  - What is the formula for four dimensions?
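The offset formulas can be written in nested (Horner) form, which extends naturally to more dimensions. The sketch below uses hypothetical helper names; `offset4` is one way to answer the four-dimension question, assuming the same row-major layout.

```c
/* Row-major offsets. dim2, dim3, dim4 are the sizes of the second,
   third, and fourth dimensions; the first dimension's size is not needed. */
int offset2(int row, int column, int cols)
{
    return row * cols + column;
}

int offset3(int i, int j, int k, int dim2, int dim3)
{
    return (i * dim2 + j) * dim3 + k;   /* = i*DIM2*DIM3 + j*DIM3 + k */
}

int offset4(int i, int j, int k, int l, int dim2, int dim3, int dim4)
{
    return ((i * dim2 + j) * dim3 + k) * dim4 + l;
}
```

For the `int A[2][3][2]` array above, `offset3(1, 2, 1, 3, 2)` evaluates to 11, the position of the value 12 in memory.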

Process Partitioning

[Figure: a 1024 x 768 pixel image drawn as a grid of cells, with 128 pixel rows and 128 pixel columns per displayed cell. Annotations: pixel 2053 and pixel 21 are each labeled "Row 2, column 5"; row labels list index ranges such as 0-7, 8-15, 16-23 and 0-1023, 2048-3071.]

Partitioning might assign groups of rows or columns to processors.

Typical Static Partitioning

- Master
  - Scatter or broadcast the image along with each processor's assigned rows
  - Gather the updated data back and perform final updates if necessary
- Slave
  - Receive data
  - Compute translated coordinates
  - Perform collective gather operation
- Questions
  - How does the master decide how much to assign to each processor?
  - Is the load balanced (all processors working equally)?
- Notes on the Text shift example
  - It employs individual sends/receives, which is much slower
  - However, if coordinate positions change or results do not represent contiguous pixel positions, this might be required
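One common answer to the first question is a block distribution that spreads any leftover rows over the lowest-ranked processors. The helper below is a sketch of that idea only; the name `row_range` and its signature are assumptions, and it says nothing about whether the work per row is equal.

```c
/* Give each of nprocs processors a contiguous block of rows.
   The first (rows % nprocs) ranks receive one extra row. */
void row_range(int rank, int nprocs, int rows, int *first, int *count)
{
    int base = rows / nprocs;    /* rows every rank gets */
    int extra = rows % nprocs;   /* leftover rows to hand out */
    *count = base + (rank < extra ? 1 : 0);
    *first = rank * base + (rank < extra ? rank : extra);
}
```

With 768 rows and 5 processors, ranks 0 to 2 get 154 rows each and ranks 3 and 4 get 153, so row counts differ by at most one.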

Mandelbrot Set

- Definition: those points $C = x + iy$ in the complex plane for which repeated application of a function (normally $z_{n+1} = z_n^2 + C$) stays bounded instead of diverging
- Implementation
  - Start with $z_0 = 0 + 0i$
  - For each point (x, y) with coordinates in [-2, +2], iterate $z_n$ until either
    - the iteration count reaches a limit (the point is treated as in the set), or
    - $z_n$ is out of bounds, $|z_n| > 2$ (the point is not in the set)
  - Save the iteration count, which will map to a display color
- Complex plane display: the horizontal axis shows real values, the vertical axis shows imaginary values

Scaling and Zooming

- Display range of points
  - From cmin = xmin + i*ymin to cmax = xmax + i*ymax
- Display range of pixels
  - From the pixel at (0, 0) to the pixel at (ROWS-1, COLUMNS-1)

Pseudo code:

    For row = rowmin to rowend
        For col = 0 to COLUMNS-1
            cy = ymin + (ymax-ymin) * row/ROWS
            cx = xmin + (xmax-xmin) * col/COLUMNS
            color = mandelbrot(cx, cy)
            picture[COLUMNS*row + col] = color

Pseudo code (mandelbrot(cx, cy))

    SET z = zreal + i*zimaginary = 0 + i*0
    SET iterations = 0
    DO
        SET z = z^2 + C
            // temp = zreal; zreal = zreal^2 - zimaginary^2 + cx
            // zimaginary = 2 * temp * zimaginary + cy
        SET value = zreal^2 + zimaginary^2
        iterations++
    WHILE value <= 4 AND iterations < max
    RETURN iterations

Notes:

1. The final iteration count determines each point's color
2. Some points escape quickly, others slowly, and others not at all
3. Points that never escape are in the Mandelbrot Set (black on the previous slide)
4. Because 4^(1/2) = 2, comparing |z|^2 with 4 is the same as comparing |z| with 2, so we don't need to compute the square root when setting value
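The two pieces of pseudo code translate almost line for line into C. The version below is a sketch: `max_iterations` is passed as a parameter, the row loop uses a half-open range, and the name `render_rows` is an assumption introduced here.

```c
/* Iterate z = z^2 + C from z = 0 and return the iteration count
   at which |z|^2 exceeds 4, or max_iterations if it never does. */
int mandelbrot(double cx, double cy, int max_iterations)
{
    double z_real = 0.0, z_imag = 0.0, value;
    int iterations = 0;
    do {
        double temp = z_real;   /* keep the old real part */
        z_real = z_real * z_real - z_imag * z_imag + cx;
        z_imag = 2.0 * temp * z_imag + cy;
        value = z_real * z_real + z_imag * z_imag;
        iterations++;
    } while (value <= 4.0 && iterations < max_iterations);
    return iterations;
}

/* Color the rows row_min .. row_end-1 of a rows x columns picture.
   Assumption: a processor is handed this half-open range of rows. */
void render_rows(int *picture, int row_min, int row_end, int rows, int columns,
                 double xmin, double xmax, double ymin, double ymax,
                 int max_iterations)
{
    for (int row = row_min; row < row_end; row++) {
        for (int col = 0; col < columns; col++) {
            double cy = ymin + (ymax - ymin) * row / rows;
            double cx = xmin + (xmax - xmin) * col / columns;
            picture[columns * row + col] = mandelbrot(cx, cy, max_iterations);
        }
    }
}
```

No pixel depends on any other, which is why the rows can be handed to processors in any grouping.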

Parallel Implementation

Both the static and dynamic algorithms are examples of load balancing.

- Load balancing
  - Algorithms used to keep processors from becoming idle
  - Note: a balanced load does NOT require equal workloads
- Static approach
  - The load is assigned once at the start of the run
  - Mandelbrot: assign each processor a group of rows
  - Deficiency: not load balanced, because some rows need far more iterations than others
- Dynamic approach
  - The load is dynamically assigned during the run
  - Mandelbrot: slaves ask for work when they complete a section

The Dynamic Approach

1. The master's work is increased somewhat
   - It must send rows when it receives requests from slaves
   - It must be responsive to slave requests. A separate thread might help, or the master can make use of MPI's asynchronous receive calls.
2. Termination
   - Slaves terminate when they receive a "no work" indication in a message
   - The master must not terminate until all of the slaves complete
3. Partitioning of the load
   - The master receives blocks of pixels; slaves receive ranges of (x, y) values
   - Partitions can be in columns or in rows. Which is better?
4. Refinement: ask for work before completion (double buffering)
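The difference between the two approaches can be seen without any message passing by simulating them. The sketch below is an illustration only, not an MPI program: it assumes a known cost per row, ignores communication time, and uses hypothetical names (`static_makespan`, `dynamic_makespan`) and a fixed limit of 64 workers.

```c
/* Finish time when each worker gets one contiguous block of rows. */
long static_makespan(const long *cost, int rows, int nworkers)
{
    long worst = 0;
    int base = rows / nworkers, extra = rows % nworkers, row = 0;
    for (int w = 0; w < nworkers; w++) {
        int count = base + (w < extra ? 1 : 0);
        long busy = 0;
        for (int i = 0; i < count; i++)
            busy += cost[row++];
        if (busy > worst) worst = busy;
    }
    return worst;
}

/* Finish time when the next row always goes to the worker that becomes
   free first, which is the effect of slaves asking for work on demand.
   Assumption: nworkers <= 64; returns -1 otherwise. */
long dynamic_makespan(const long *cost, int rows, int nworkers)
{
    long busy[64] = {0};
    long worst = 0;
    if (nworkers < 1 || nworkers > 64) return -1;
    for (int r = 0; r < rows; r++) {
        int first = 0;   /* worker that is idle soonest */
        for (int w = 1; w < nworkers; w++)
            if (busy[w] < busy[first]) first = w;
        busy[first] += cost[r];
    }
    for (int w = 0; w < nworkers; w++)
        if (busy[w] > worst) worst = busy[w];
    return worst;
}
```

For row costs {10, 10, 10, 10, 1, 1, 1, 1} on two workers, the static split finishes at time 40 while on-demand assignment finishes at time 22.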

Monte Carlo Methods

Pseudo-code (throw darts to converge on a solution)

1. Compute a definite integral

        While more iterations needed
            pick a random point x in [xmin, xmax]
            total += f(x)
        result = (xmax - xmin) * total / iterations

   This evaluates $\frac{1}{N} \sum_{i=1}^{N} f(x_i)\,(x_{max} - x_{min})$, where N is the number of iterations and $x_i$ is the i-th random point.

2. Calculation of PI

        While more iterations needed
            randomly pick a point
            If point is in circle
                within++
        Compute PI = 4 * within / iterations

   Using only the upper right quadrant (0 <= x, y <= 1) gives the same ratio: within / iterations estimates π/4.

Note: Parallel programs shouldn't use the standard random number generator
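The integral pseudo code might look like this in C. It is a sketch under assumptions: the random numbers come from a small generator with a = 16807 and m = 2^31 - 1 instead of the C library's `rand()`, the caller owns the generator state, and the names `next_uniform`, `monte_carlo_integral`, and `square` are hypothetical.

```c
#include <stdint.h>

/* One step of a multiplicative generator (a = 16807, m = 2^31 - 1),
   scaled to (0, 1). The state must start in the range 1 .. m-1. */
static double next_uniform(uint64_t *state)
{
    *state = (UINT64_C(16807) * *state) % UINT64_C(2147483647);
    return (double)*state / 2147483647.0;
}

/* Estimate the integral of f over [xmin, xmax] from random samples. */
double monte_carlo_integral(double (*f)(double), double xmin, double xmax,
                            long iterations, uint64_t seed)
{
    uint64_t state = seed;
    double total = 0.0;
    for (long i = 0; i < iterations; i++) {
        double x = xmin + (xmax - xmin) * next_uniform(&state);
        total += f(x);
    }
    return (xmax - xmin) * total / (double)iterations;
}

/* Example integrand: the exact integral of x^2 over [0, 3] is 9. */
double square(double x)
{
    return x * x;
}
```

Calling `monte_carlo_integral(square, 0.0, 3.0, 1000000, 1)` should return a value close to 9; the error shrinks roughly with the square root of the iteration count.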

Computation of PI

- $\int_{-1}^{1} \sqrt{1 - x^2}\, dx = \pi/2$
- $\int_{0}^{1} \sqrt{1 - x^2}\, dx = \pi/4$
- A point is within the circle if point.x^2 + point.y^2 <= 1
- Total points / Points within = Total area / Area in shape
- Questions
  - How to handle the boundary condition?
  - What is the best accuracy that we can achieve?
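A dart-throwing estimate over the upper right quadrant could be sketched as below. The generator constants (a = 16807, m = 2^31 - 1), the function names, and the decision to count boundary points as inside are assumptions for illustration.

```c
#include <stdint.h>

/* One step of a multiplicative generator (a = 16807, m = 2^31 - 1),
   scaled to (0, 1). The state must start in the range 1 .. m-1. */
static double next_uniform(uint64_t *state)
{
    *state = (UINT64_C(16807) * *state) % UINT64_C(2147483647);
    return (double)*state / 2147483647.0;
}

/* Throw points into the unit square and count those inside the
   quarter circle; the fraction inside approximates pi / 4. */
double estimate_pi(long iterations, uint64_t seed)
{
    uint64_t state = seed;
    long within = 0;
    for (long i = 0; i < iterations; i++) {
        double x = next_uniform(&state);
        double y = next_uniform(&state);
        if (x * x + y * y <= 1.0)   /* boundary points count as inside */
            within++;
    }
    return 4.0 * (double)within / (double)iterations;
}
```

In a parallel run each processor would call this with its own share of the iterations and an independent random stream, and the master would combine the `within` counts.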

Parallel Random Number Generator

- Numbers of a pseudo-random sequence should be
  - Uniformly distributed, with a large period, repeatable, and statistically independent
  - Unique per processor: each processor must generate its own sequence
  - Precise: accuracy depends upon the precision of the random sequence
- Sequential linear generator (m is prime; c = 0)
  1. $x_{i+1} = (a x_i + c) \bmod m$ (ex: $a = 16807 = 7^5$, $m = 2^{31} - 1$, $c = 0$)
  2. Many other generators are possible
- Parallel linear generator with unique sequences
  1. $x_{i+k} = (A x_i + C) \bmod m$, where k is the "jump" constant
  2. $A = a^k$, $C = c\,(a^{k-1} + a^{k-2} + \dots + a^1 + a^0)$
  3. If k = P, we can compute A and C and the first k random numbers to get started

[Figure: parallel random sequence. The first P numbers $x_1, x_2, \dots, x_{P-1}, x_P$ start the processors, which then continue with $x_{P+1}, \dots, x_{2P-2}, x_{2P-1}$.]
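With c = 0 the jump formula reduces to a single multiplication by $A = a^k \bmod m$. The sketch below uses the example constants from the slide; the function names are hypothetical, and reducing A modulo m is an implementation choice that keeps every product within 64 bits.

```c
#include <stdint.h>

#define LCG_A UINT64_C(16807)
#define LCG_M UINT64_C(2147483647)   /* 2^31 - 1 */

/* One sequential step: x_{i+1} = (a * x_i) mod m, with c = 0. */
uint64_t lcg_next(uint64_t x)
{
    return (LCG_A * x) % LCG_M;
}

/* A = a^k mod m, computed by repeated squaring. */
uint64_t lcg_jump_multiplier(unsigned k)
{
    uint64_t result = 1, base = LCG_A;
    while (k > 0) {
        if (k & 1u) result = (result * base) % LCG_M;
        base = (base * base) % LCG_M;
        k >>= 1;
    }
    return result;
}

/* One jump of k steps: x_{i+k} = (A * x_i) mod m (C = 0 because c = 0). */
uint64_t lcg_leap(uint64_t x, uint64_t A)
{
    return (A * x) % LCG_M;
}
```

With k = P, the first P values are generated sequentially and handed out one per processor; each processor then calls `lcg_leap` repeatedly, so together the processors reproduce the sequential sequence without overlap.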

- Slides: 15