Eliciting Honest Feedback
1. Eliciting Honest Feedback: The Peer-Prediction Model (Miller, Resnick, Zeckhauser) Nikhil Srivastava
2. Minimum Payments that Reward Honest Feedback (Jurca, Faltings) Hao-Yuh Su

Eliciting Feedback
• Fundamental purpose of reputation systems
• Review: general setup
  – Information distributed among individuals about value of some item
    • external goods (Netflix, Amazon, ePinions, admissions)
    • each other (eBay, PageRank)
  – Aggregated information valuable for individual or group decisions
  – How to gather and disseminate information?

Challenges
• Underprovision
  – “inconvenience” cost of contributing
• Dishonesty
  – niceness, fear of retaliation
  – conflicting motivations
• Reward systems
  – motivate participation, honest feedback
  – monetary (prestige, privilege, pure competition)

Overcoming Dishonesty
• Need to distinguish “good” from “bad” reports
  – explicit reward systems require objective outcome, public knowledge
  – stock, weather forecasting
• But what if the outcome is …
  – subjective? (product quality/taste)
  – private? (breakdown frequency, seller reputability)
• Naive solution: reward peer agreement
  – Information cascade, herding

Peer Prediction
• Basic idea
  – reports determine probability distribution on other reports
  – reward based on “predictive power” of user’s report for a reference rater’s report
  – taking advantage of proper scoring rules, honest reporting is a Nash equilibrium

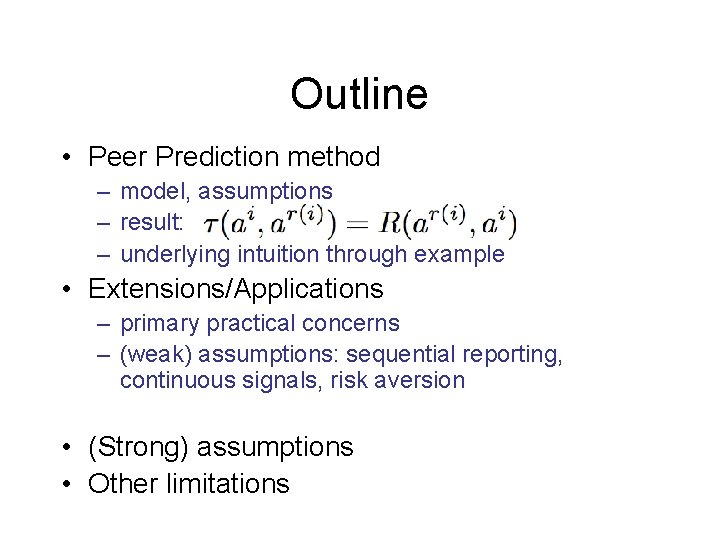

Outline
• Peer Prediction method
  – model, assumptions
  – result
  – underlying intuition through example
• Extensions/Applications
  – primary practical concerns
  – (weak) assumptions: sequential reporting, continuous signals, risk aversion
• (Strong) assumptions
• Other limitations

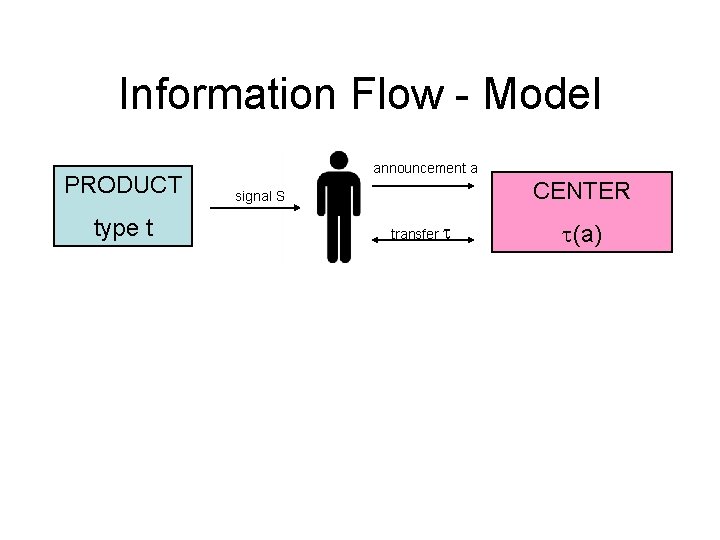

Information Flow - Model
[Diagram: a PRODUCT of type t generates signal S; the rater sends announcement a to the CENTER; the CENTER pays transfer τ(a).]

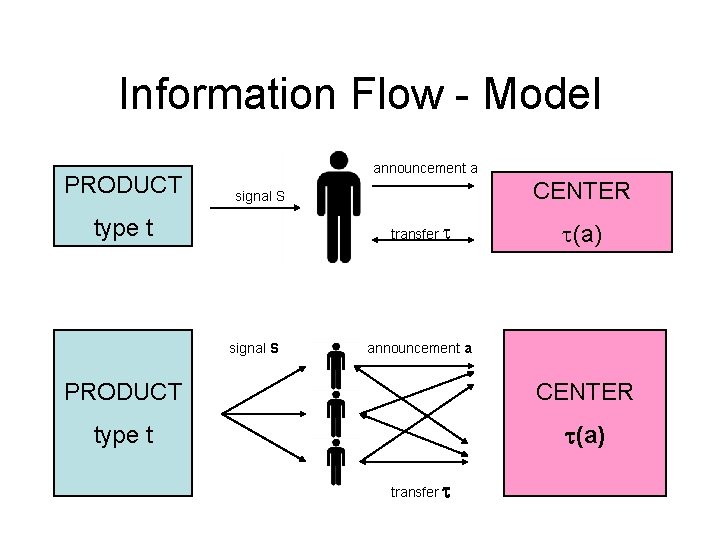

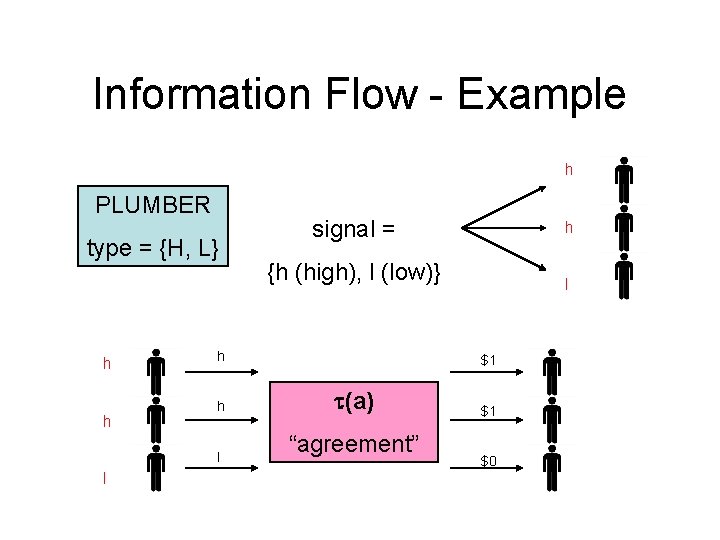

Information Flow - Example
[Diagram: a PLUMBER of type ∈ {H, L}; each rater receives a signal ∈ {h (high), l (low)}; example signals h, h, l; under an “agreement” transfer rule τ(a) the raters are paid $1, $1, $0.]

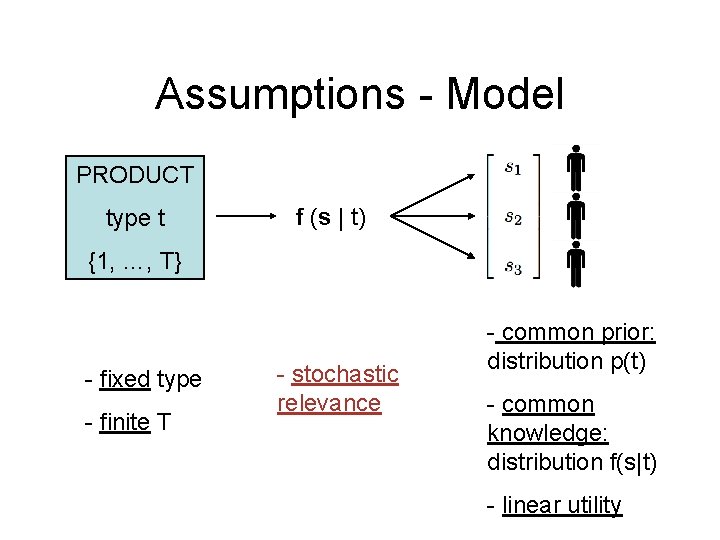

Assumptions - Model
[Diagram: a PRODUCT of type t ∈ {1, …, T} generates signals with distribution f(s | t).]
• fixed type
• finite T
• stochastic relevance
• common prior: distribution p(t)
• common knowledge: distribution f(s | t)
• linear utility

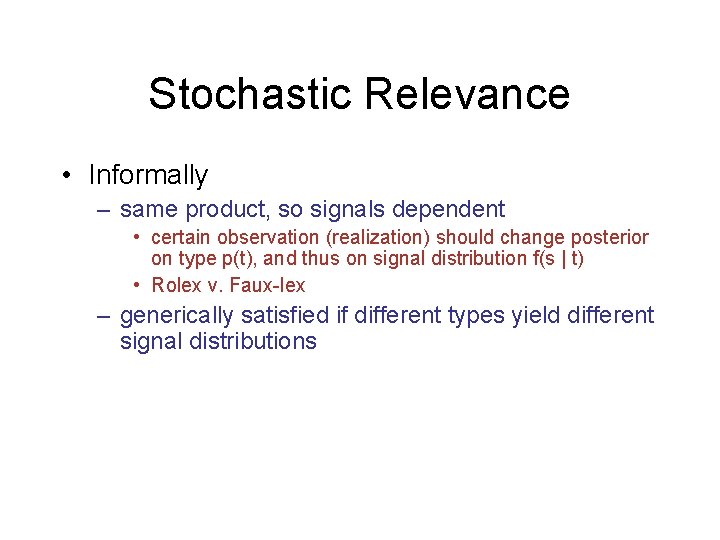

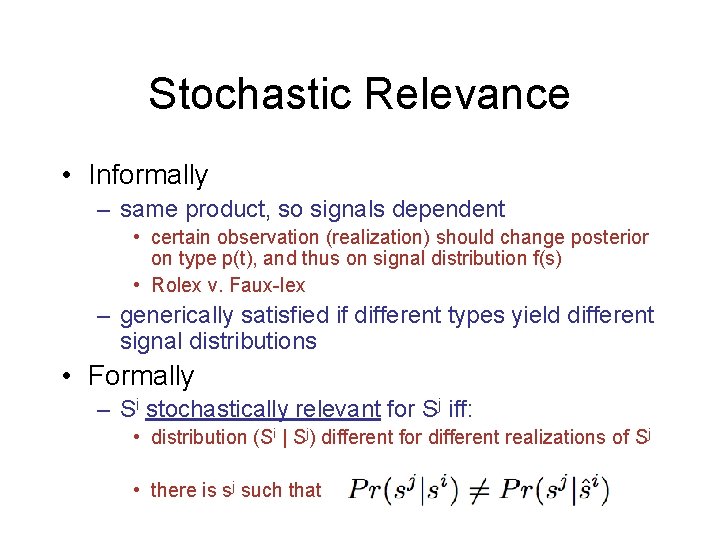

Stochastic Relevance
• Informally
  – same product, so signals dependent
    • certain observation (realization) should change posterior on type p(t), and thus the predicted signal distribution f(s)
    • Rolex v. Faux-lex
  – generically satisfied if different types yield different signal distributions
• Formally
  – S_i is stochastically relevant for S_j iff:
    • the distribution of (S_j | S_i) is different for different realizations of S_i
    • for any two distinct realizations s_i ≠ s_i', there is s_j such that Pr(s_j | s_i) ≠ Pr(s_j | s_i')

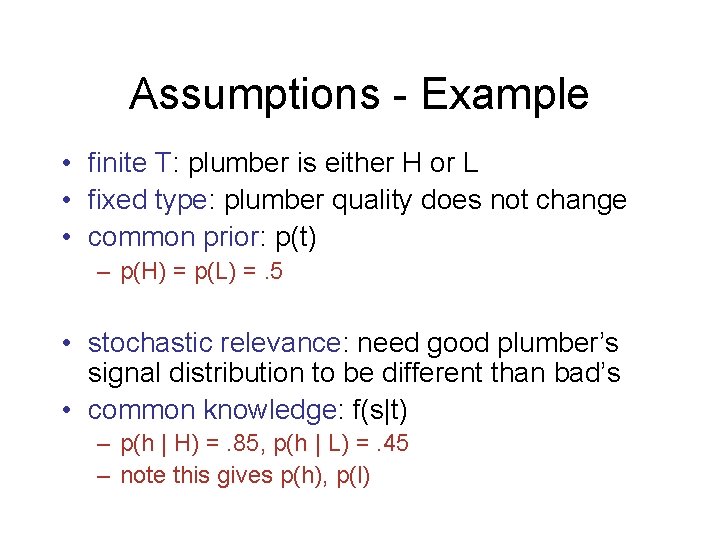

Assumptions - Example
• finite T: plumber is either H or L
• fixed type: plumber quality does not change
• common prior: p(t)
  – p(H) = p(L) = .5
• stochastic relevance: need good plumber’s signal distribution to be different from bad’s
• common knowledge: f(s | t)
  – p(h | H) = .85, p(h | L) = .45
  – note this gives p(h), p(l)
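A minimal sketch of what these numbers imply, assuming (as a labeled assumption) that raters' signals are conditionally independent given the plumber's type. The helper names `marginal`, `predictive`, and `stochastically_relevant` are hypothetical, introduced only for illustration. The sketch computes p(h) and p(l) and checks that observing h versus l leads to different predictions about another rater's signal.

```python
from itertools import combinations

# Numbers from the plumber example; signals are ASSUMED conditionally
# independent given the type.
PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}


def marginal(prior, likelihood):
    """p(s) = sum over types t of p(t) * p(s | t)."""
    signals = next(iter(likelihood.values()))
    return {s: sum(prior[t] * likelihood[t][s] for t in prior) for s in signals}


def predictive(prior, likelihood, observed):
    """Pr(S_j = s | S_i = observed) for every s, via Bayes' rule on the type."""
    joint = {t: prior[t] * likelihood[t][observed] for t in prior}
    total = sum(joint.values())
    signals = next(iter(likelihood.values()))
    return {s: sum(joint[t] / total * likelihood[t][s] for t in prior)
            for s in signals}


def stochastically_relevant(prior, likelihood, tol=1e-12):
    """True if every pair of distinct realizations of S_i induces a
    different predictive distribution over S_j."""
    signals = list(next(iter(likelihood.values())))
    for s, s_alt in combinations(signals, 2):
        d, d_alt = (predictive(prior, likelihood, x) for x in (s, s_alt))
        if all(abs(d[x] - d_alt[x]) <= tol for x in signals):
            return False
    return True


p = marginal(PRIOR, LIKELIHOOD)
print(round(p["h"], 2), round(p["l"], 2))          # 0.65 0.35
print(stochastically_relevant(PRIOR, LIKELIHOOD))  # True
```

If both plumber types produced the same signal distribution, the check would return False, which is the case the stochastic relevance assumption rules out.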

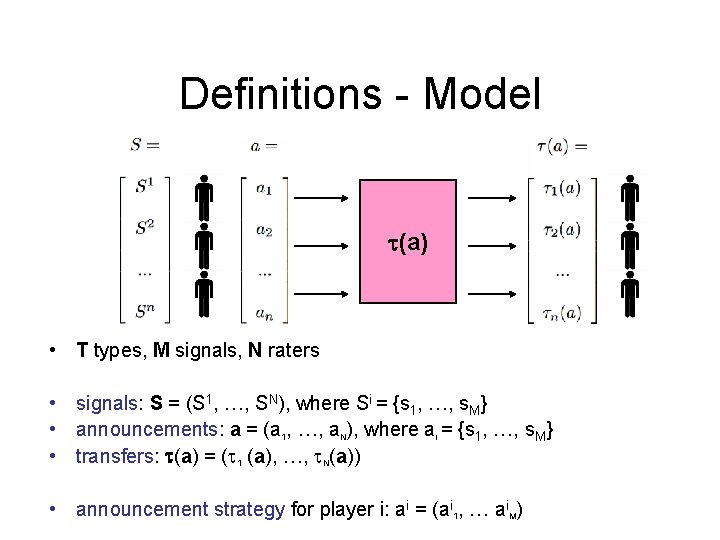

Definitions - Model
• T types, M signals, N raters
• signals: S = (S_1, …, S_N), where S_i ∈ {s_1, …, s_M}
• announcements: a = (a_1, …, a_N), where a_i ∈ {s_1, …, s_M}
• transfers: τ(a) = (τ_1(a), …, τ_N(a))
• announcement strategy for player i: a_i = (a_i^1, …, a_i^M)

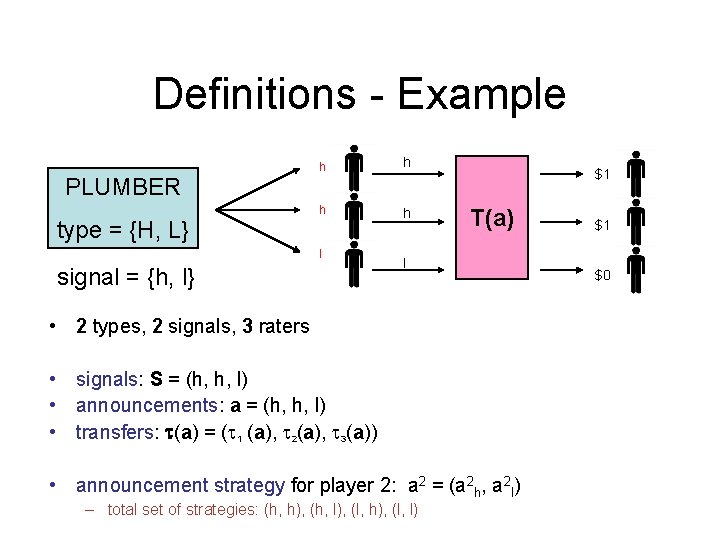

Definitions - Example
[Diagram: PLUMBER with type ∈ {H, L}; signal ∈ {h, l}; the center pays τ(a).]
• 2 types, 2 signals, 3 raters
• signals: S = (h, h, l)
• announcements: a = (h, h, l)
• transfers: τ(a) = (τ_1(a), τ_2(a), τ_3(a))
• announcement strategy for player 2: a_2 = (a_2^h, a_2^l)
  – total set of strategies: (h, h), (h, l), (l, h), (l, l)

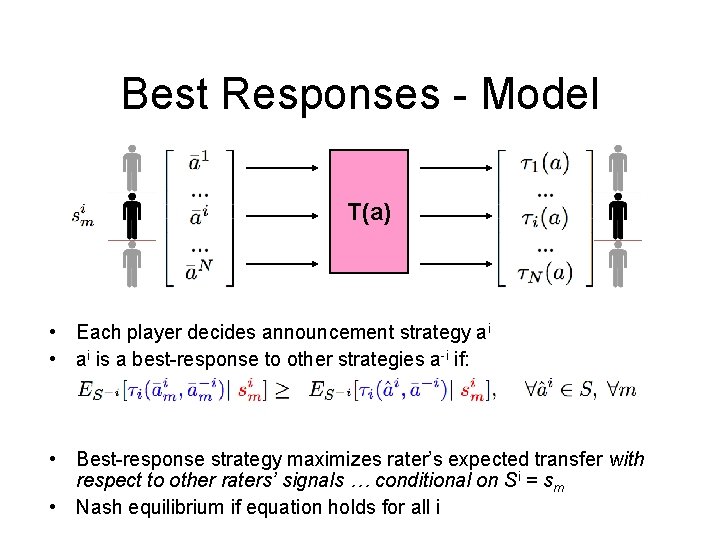

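Best Responses - Model
• Each player decides announcement strategy a_i
• a_i is a best response to the other strategies a_-i if, for every signal s_m and every alternative announcement â ∈ {s_1, …, s_M}:
    E[ τ_i(a_i^m, a_-i) | S_i = s_m ] ≥ E[ τ_i(â, a_-i) | S_i = s_m ]
• Best-response strategy maximizes rater’s expected transfer with respect to other raters’ signals … conditional on S_i = s_m
• Nash equilibrium if the inequality holds for all i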

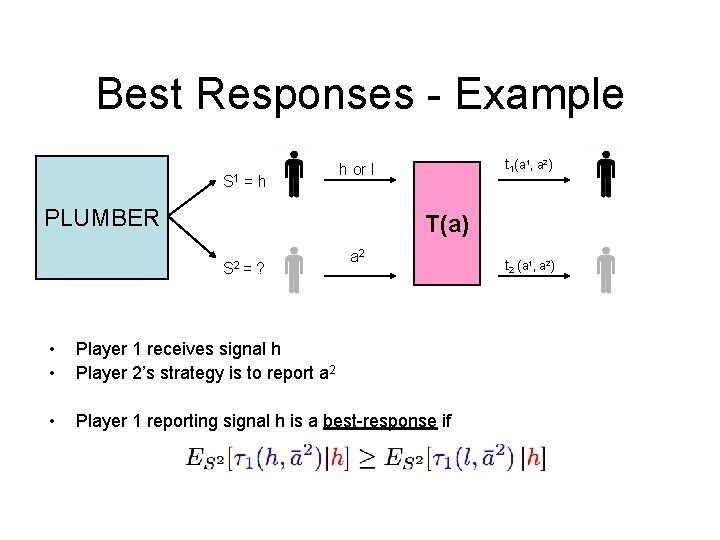

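Best Responses - Example
[Diagram: PLUMBER; player 1 observes S_1 = h and announces h or l; player 2 observes S_2 = ? and announces a_2; the center pays τ_1(a_1, a_2).]
• Player 1 receives signal h
• Player 2’s strategy is to report a_2
• Player 1 reporting signal h is a best response if
    E[ τ_1(h, a_2) | S_1 = h ] ≥ E[ τ_1(l, a_2) | S_1 = h ]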

Peer Prediction
• Find reward mechanism that induces honest reporting
  – where a_i = S_i for all i is a Nash equilibrium
• Will need Proper Scoring Rules

Proper Scoring Rules
• Definition:
  – for two variables S_i and S_j, a scoring rule assigns to each announcement a_i of S_i a score for each realization of S_j
  – R( s_j | a_i )
  – proper if the expected score is maximized by announcing the true realization of S_i
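A small numerical sketch of properness, phrased in the common forecast form: the announcement is treated as the probability distribution it implies over the scored outcome. The true distribution below and the grid of candidate forecasts are hypothetical values chosen for illustration. For both the logarithmic and the quadratic rule, the expected score over the grid should peak at the true distribution.

```python
import math


def log_score(outcome, forecast):
    """Logarithmic rule: ln of the probability assigned to what happened."""
    return math.log(forecast[outcome])


def quadratic_score(outcome, forecast):
    """Quadratic (Brier-style) rule: 2*q(outcome) - sum of squared probabilities."""
    return 2 * forecast[outcome] - sum(q * q for q in forecast.values())


def expected_score(rule, truth, forecast):
    """Expected score when outcomes are drawn from `truth`."""
    return sum(p * rule(o, forecast) for o, p in truth.items())


truth = {"h": 0.7, "l": 0.3}  # hypothetical true beliefs
candidates = [{"h": q / 100, "l": 1 - q / 100} for q in range(1, 100)]

best_log = max(candidates, key=lambda f: expected_score(log_score, truth, f))
best_quad = max(candidates, key=lambda f: expected_score(quadratic_score, truth, f))
print(best_log["h"], best_quad["h"])
```

Any forecast other than the truth lowers the expected score under either rule, which is the property the peer-prediction payments rely on.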

Applying Scoring Rules
• Before: predictive markets (Hanson)
  – S_i = S_j = reality, a_i = agent report
  – R( reality | report )
  – Proper scoring rules ensure honest reports: a_i = S_i
    • stochastic relevance for private info and public signal
    • automatically satisfied
  – What if there’s no public signal? Use other reports
• Now: predictive peers
  – S_i = my signal, S_j = your signal, a_i = my report
  – R( your report | my report )

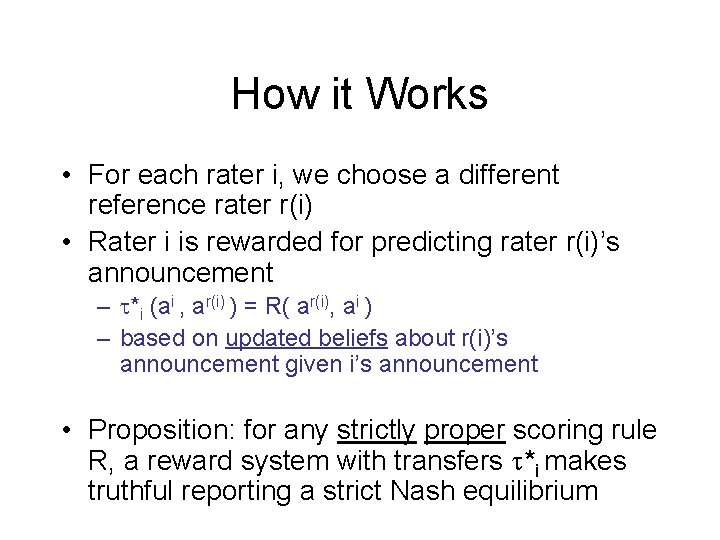

How it Works
• For each rater i, we choose a different reference rater r(i)
• Rater i is rewarded for predicting rater r(i)’s announcement
  – τ*_i(a_i, a_r(i)) = R( a_r(i) | a_i )
  – based on updated beliefs about r(i)’s announcement given i’s announcement
• Proposition: for any strictly proper scoring rule R, a reward system with transfers τ*_i makes truthful reporting a strict Nash equilibrium
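The payment rule can be sketched as follows, using the plumber numbers and the logarithmic rule as one choice of strictly proper R. The conditional independence of signals given type, the function names, and the particular reference-rater pairing are assumptions made for illustration, not details taken from the paper.

```python
import math

PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}
SIGNALS = ("h", "l")


def predictive(report):
    """Center's belief Pr(S_r(i) = s | S_i = report), via Bayes' rule on type."""
    joint = {t: PRIOR[t] * LIKELIHOOD[t][report] for t in PRIOR}
    total = sum(joint.values())
    return {s: sum(joint[t] / total * LIKELIHOOD[t][s] for t in PRIOR)
            for s in SIGNALS}


def transfers(announcements, reference):
    """tau*_i = R(a_r(i) | a_i) with the logarithmic rule R = ln Pr(. | a_i)."""
    return [math.log(predictive(a_i)[announcements[reference[i]]])
            for i, a_i in enumerate(announcements)]


# Hypothetical pairing: rater 0 is scored against rater 1, 1 against 2, 2 against 0.
taus = transfers(["h", "h", "l"], reference=[1, 2, 0])
print([round(t, 3) for t in taus])
```

Log scores are always negative, so a deployed system would typically shift and scale them before paying raters.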

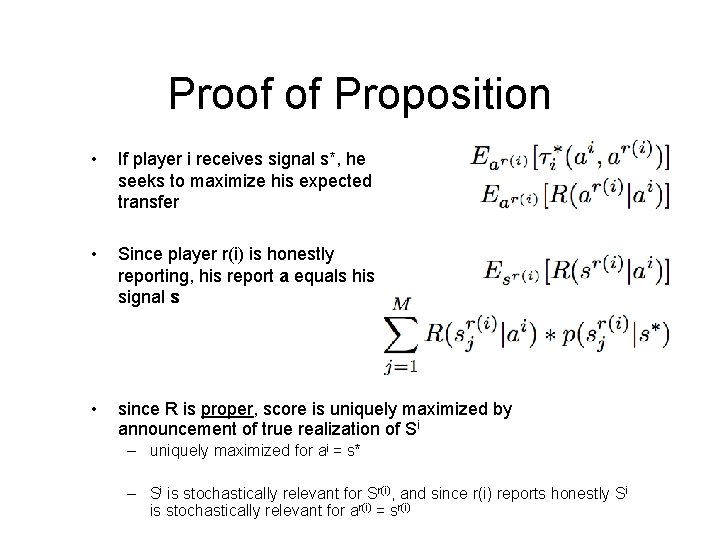

Proof of Proposition
• If player i receives signal s*, he seeks to maximize his expected transfer E[ R( a_r(i) | a_i ) | S_i = s* ]
• Since player r(i) is honestly reporting, his report a_r(i) equals his signal s_r(i)
• Since R is strictly proper, the score is uniquely maximized by announcement of the true realization of S_i
  – uniquely maximized for a_i = s*
  – S_i is stochastically relevant for S_r(i), and since r(i) reports honestly S_i is stochastically relevant for a_r(i) = s_r(i)

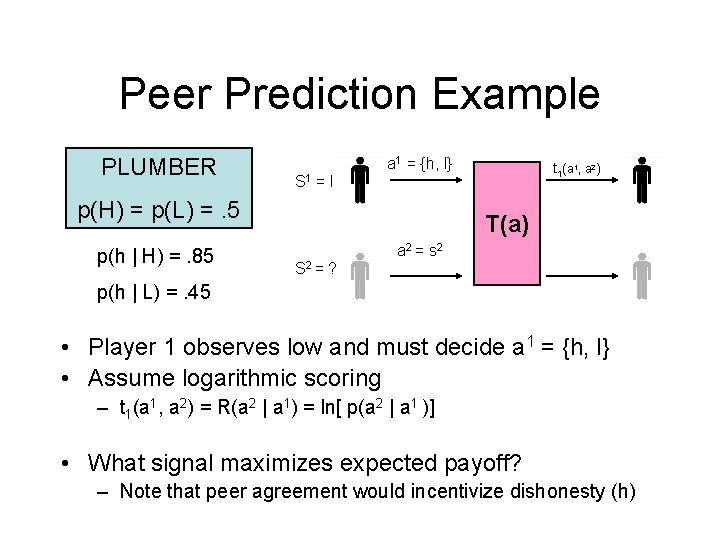

Peer Prediction Example
[Diagram: PLUMBER; player 1 observes S_1 = l and announces a_1 ∈ {h, l}; player 2 observes S_2 = ? and announces a_2 = s_2; the center pays τ_1(a_1, a_2).]
• p(H) = p(L) = .5, p(h | H) = .85, p(h | L) = .45
• Player 1 observes low and must decide a_1 ∈ {h, l}
• Assume logarithmic scoring
  – τ_1(a_1, a_2) = R(a_2 | a_1) = ln[ p(a_2 | a_1) ]
• What signal maximizes expected payoff?
  – Note that peer agreement would incentivize dishonesty (h)
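To see why rewarding agreement would push player 1 toward h, here is a worked calculation of player 1's belief about player 2's signal after observing l. It assumes signals are conditionally independent given the plumber's type and that player 2 reports honestly.

```latex
\begin{align*}
\Pr(H \mid S_1 = l) &= \frac{0.5 \cdot 0.15}{0.5 \cdot 0.15 + 0.5 \cdot 0.55}
                     = \frac{0.075}{0.35} \approx 0.214 \\
\Pr(S_2 = h \mid S_1 = l) &\approx 0.214 \cdot 0.85 + 0.786 \cdot 0.45 \approx 0.536 \\
\Pr(S_2 = l \mid S_1 = l) &\approx 1 - 0.536 = 0.464
\end{align*}
```

Even after a low signal, player 2 is more likely to report h than l (0.536 > 0.464), so a rule that pays only for matching reports makes announcing h the better bet.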

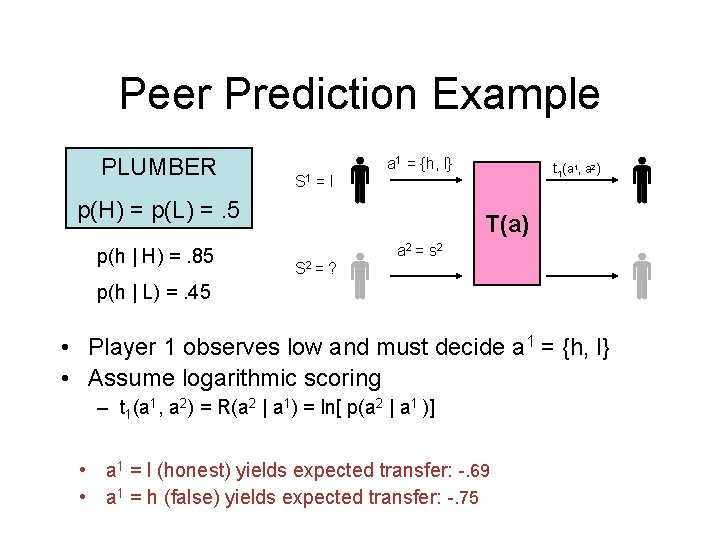

Peer Prediction Example
[Diagram: PLUMBER; player 1 observes S_1 = l and announces a_1 ∈ {h, l}; player 2 observes S_2 = ? and announces a_2 = s_2; the center pays τ_1(a_1, a_2).]
• p(H) = p(L) = .5, p(h | H) = .85, p(h | L) = .45
• Player 1 observes low and must decide a_1 ∈ {h, l}
• Assume logarithmic scoring
  – τ_1(a_1, a_2) = R(a_2 | a_1) = ln[ p(a_2 | a_1) ]
• a_1 = l (honest) yields expected transfer: -.69
• a_1 = h (false) yields expected transfer: -.75
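The two expected transfers can be reproduced with a short script, assuming signals are conditionally independent given type and that player 2 reports honestly. The function names are hypothetical. Unrounded arithmetic gives roughly -0.69 for the honest report and roughly -0.76 for the false one; the slide's -.75 appears to come from rounding the intermediate probabilities to two decimals first.

```python
import math

PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}
SIGNALS = ("h", "l")


def predictive(observed):
    """Pr(S_2 = s | S_1 = observed) for each s, via Bayes' rule on the type."""
    joint = {t: PRIOR[t] * LIKELIHOOD[t][observed] for t in PRIOR}
    total = sum(joint.values())
    return {s: sum(joint[t] / total * LIKELIHOOD[t][s] for t in PRIOR)
            for s in SIGNALS}


def expected_log_transfer(signal, report):
    """Expected ln p(a_2 | a_1 = report), taken over player 1's true beliefs
    about S_2 after privately observing `signal`."""
    truth = predictive(signal)    # what player 1 actually believes
    implied = predictive(report)  # what the center infers from the report
    return sum(truth[s] * math.log(implied[s]) for s in SIGNALS)


honest = expected_log_transfer("l", "l")
false = expected_log_transfer("l", "h")
print(f"honest: {honest:.2f}, false: {false:.2f}")
```

The honest report wins because the log rule rewards the announcement whose implied distribution matches player 1's actual beliefs about player 2's signal.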

Things to Note
• Players don’t have to perform complicated Bayesian reasoning if they:
  – trust the center to accurately compute posteriors
  – believe other players will report honestly
• Not unique equilibrium
  – collusion
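A sketch of one non-truthful equilibrium under the plumber numbers and logarithmic scoring, assuming conditionally independent signals and a center that keeps scoring as if reports were honest. If the reference rater always announces h, a rater's transfer no longer depends on his own signal, and announcing h is the best reply whatever he observed. The names below are hypothetical.

```python
import math

PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}
SIGNALS = ("h", "l")


def predictive(observed):
    """Pr(reference signal = s | own signal = observed), assuming honesty."""
    joint = {t: PRIOR[t] * LIKELIHOOD[t][observed] for t in PRIOR}
    total = sum(joint.values())
    return {s: sum(joint[t] / total * LIKELIHOOD[t][s] for t in PRIOR)
            for s in SIGNALS}


def transfer(report, reference_report):
    """Log-score transfer the center pays for `report`."""
    return math.log(predictive(report)[reference_report])


# Best reply when the reference rater ALWAYS announces h (independent of signal).
best_reply = max(SIGNALS, key=lambda a: transfer(a, "h"))

# Ex-ante expected transfer in the honest equilibrium, for comparison.
p_signal = {s: sum(PRIOR[t] * LIKELIHOOD[t][s] for t in PRIOR) for s in SIGNALS}
honest_value = sum(
    p_signal[s] * sum(predictive(s)[s2] * transfer(s, s2) for s2 in SIGNALS)
    for s in SIGNALS
)
collusive_value = transfer("h", "h")
print(best_reply, collusive_value > honest_value)
```

With these numbers the always-h profile pays each rater more than honest reporting does, which is why collusion is listed as a concern.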

Primary Practical Concerns
• Examples
  – inducing effort: fixed cost c > 0 of reporting
  – better information: users seek multiple samples
  – participation constraints
  – budget balancing
• Basic idea:
  – affine rescaling (a*x + b, with a > 0) to overcome obstacle
  – preserves honesty incentive
  – increases budget … see 2nd paper
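A rough sketch of the rescaling idea on the plumber example, assuming conditionally independent signals and logarithmic scoring. The particular choices here are illustrative assumptions, not the paper's formulas: the scale `alpha` is picked so that honesty beats any lie by at least a target margin in expectation, and the shift `beta` makes every realized transfer non-negative.

```python
import math

PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}
SIGNALS = ("h", "l")


def predictive(observed):
    """Pr(reference signal = s | own signal = observed)."""
    joint = {t: PRIOR[t] * LIKELIHOOD[t][observed] for t in PRIOR}
    total = sum(joint.values())
    return {s: sum(joint[t] / total * LIKELIHOOD[t][s] for t in PRIOR)
            for s in SIGNALS}


def expected_transfer(signal, report, alpha=1.0, beta=0.0):
    """Expected value of alpha * ln p(a_ref | report) + beta given own signal."""
    truth, implied = predictive(signal), predictive(report)
    return sum(truth[s] * (alpha * math.log(implied[s]) + beta) for s in SIGNALS)


def honesty_margin(alpha=1.0, beta=0.0):
    """Smallest expected gain from telling the truth instead of lying."""
    return min(expected_transfer(s, s, alpha, beta) - expected_transfer(s, r, alpha, beta)
               for s in SIGNALS for r in SIGNALS if r != s)


def rescale(target_margin):
    """Hypothetical rule: scale to hit the margin, shift so no payment is negative."""
    alpha = target_margin / honesty_margin()
    worst = min(math.log(predictive(r)[s]) for r in SIGNALS for s in SIGNALS)
    beta = -alpha * worst
    return alpha, beta


alpha, beta = rescale(target_margin=1.0)
print(alpha > 0, honesty_margin(alpha, beta))
```

Because `beta` cancels in every honest-versus-lie comparison and `alpha` is positive, the ranking of reports is unchanged; only the size of the payments, and hence the budget, grows.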

Extensions to Model
• Sequential reporting
  – allows immediate use of reports
  – let rater i predict report of rater i + 1
  – scoring rule must reflect changed beliefs about product type due to (1, …, i - 1) reports
• Continuous signals
  – analytic comparison of three common rules
  – eliciting “coarse” reports from exact information
    • for two types, raters will choose closest bin (complicated)
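A minimal sketch of sequential scoring on the plumber numbers, assuming conditionally independent signals and logarithmic scoring. The center keeps a running belief about the type; rater i's report updates it, and the updated belief predicts rater i + 1's report. Leaving the last rater unscored is a simplification made here, and the function names are hypothetical.

```python
import math

PRIOR = {"H": 0.5, "L": 0.5}
LIKELIHOOD = {"H": {"h": 0.85, "l": 0.15},
              "L": {"h": 0.45, "l": 0.55}}
SIGNALS = ("h", "l")


def update(belief, report):
    """Bayes update of the belief over types after one (assumed honest) report."""
    joint = {t: belief[t] * LIKELIHOOD[t][report] for t in belief}
    total = sum(joint.values())
    return {t: v / total for t, v in joint.items()}


def sequential_scores(reports, prior=PRIOR):
    """Log score for each rater i against rater i + 1, conditioning on all
    reports up to and including i's own."""
    belief = dict(prior)
    scores = []
    for current, following in zip(reports, reports[1:]):
        belief = update(belief, current)  # now reflects reports 1..i
        predicted = {s: sum(belief[t] * LIKELIHOOD[t][s] for t in belief)
                     for s in SIGNALS}
        scores.append(math.log(predicted[following]))
    return scores


print([round(x, 3) for x in sequential_scores(["h", "h", "l"])])
```

The second rater's score uses a belief already sharpened by the first report, so the same announcement can earn different amounts depending on what came before it.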

Common Prior Assumption
• Practical concern - how do we know p(t), f(s | t)?
  – needed by center to compute payments
  – needed by raters to compute posteriors
  ➢ define types with respect to past products, signals
  ➢ types t = {1, …, 9} where f(h | t) = t/10
  ➢ for new product, p(t) based on past product signals
  ➢ update beliefs with new signals
  ➢ note f(s | t) given automatically
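One way this construction could look in code, as a sketch under stated assumptions. The types are t = 1, …, 9 with f(h | t) = t/10 as on the slide. The way the prior is built from past products (bucketing each past product's share of h reports to the nearest type, with add-one smoothing) is a hypothetical estimator chosen for illustration.

```python
TYPES = range(1, 10)


def likelihood(signal, t):
    """f(s | t): a type-t product yields signal h with probability t/10."""
    p_high = t / 10
    return p_high if signal == "h" else 1 - p_high


def prior_from_history(past_high_fractions):
    """HYPOTHETICAL estimator of p(t): count past products by nearest type,
    starting every count at 1 so that no type gets probability zero."""
    counts = {t: 1 for t in TYPES}
    for frac in past_high_fractions:
        nearest = min(TYPES, key=lambda t: abs(t / 10 - frac))
        counts[nearest] += 1
    total = sum(counts.values())
    return {t: c / total for t, c in counts.items()}


def update(belief, signal):
    """Bayes update of p(t) after one new signal on the current product."""
    joint = {t: belief[t] * likelihood(signal, t) for t in belief}
    total = sum(joint.values())
    return {t: v / total for t, v in joint.items()}


prior = prior_from_history([])   # no history: uniform over the nine types
posterior = update(prior, "h")   # one h report shifts weight to high types
print(round(posterior[9], 3), round(posterior[1], 3))
```

Because the types are defined by their signal probabilities, f(s | t) never has to be estimated separately; only p(t) depends on the history.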

Common Prior Assumption
• Practical concern - how do we know p(t)?
• Theoretical concern - are p(t), f(s | t) public?
  – raters trust center to compute appropriate posterior distributions for reference rater’s signal
  – rater with private information has no guarantee
    • center will not report true posterior beliefs
    • rater might skew report to reflect appropriate posteriors
  ➢ report both private information and announcement
  ➢ two scoring mechanisms, one for distribution implied by private priors, another for distribution implied by announcement

Limitations
• Collusion
  – could a subset of raters gain higher transfers? higher balanced transfers?
  – can such strategies:
    • overcome random pairings
    • avoid suspicious patterns
• Multidimensional signals
  – economist with knowledge of computer science
• Understanding/trust in the system
  – complicated Bayesian reasoning, payoff rules
  ➢ rely on experts to ensure public confidence

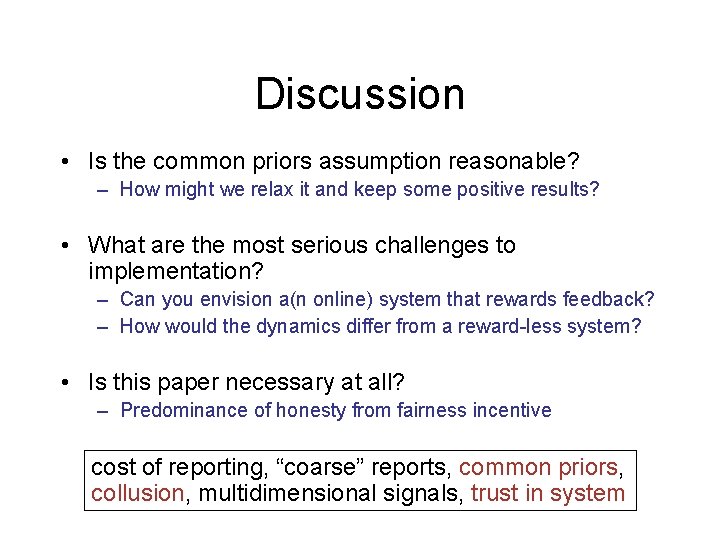

Discussion
• Is the common priors assumption reasonable?
  – How might we relax it and keep some positive results?
• What are the most serious challenges to implementation?
  – cost of reporting, “coarse” reports, common priors, collusion, multidimensional signals, trust in system
  – Can you envision a(n online) system that rewards feedback?
  – How would the dynamics differ from a reward-less system?
• Is this paper necessary at all?
  – Predominance of honesty from fairness incentive
