ECONOMETRICS: BASIC ECONOMETRICS, Damodar N. Gujarati, Fourth Edition

Damodar N. Gujarati, Basic Econometrics, Fourth Edition, International Edition, McGraw-Hill Companies, Inc., 2003. 17/09/2020 ghozalimaski@ymail.com

MAIN TOPICS
Single-Equation Models:
1. Multiple Regression
* The Problem of Estimation
* The Problem of Inference
2. Dummy Variable Regression Models
3. Relaxing the Assumptions of the CLRM
* Multicollinearity
* Heteroscedasticity
* Autocorrelation

4. Econometric Modeling: Model Specification and Diagnostic Testing
5. Qualitative Response Regression Models
6. Panel Data Regression Models
7. Dynamic Econometric Models: Autoregressive and Distributed Lag Models
8. Simultaneous-Equation Models

INTRODUCTION
What is econometrics? It is the field of study that evaluates economic theory quantitatively.
Scope of Econometrics
Econometrics may be defined as the social science in which the tools of economic theory, mathematics, and statistical inference are applied to the analysis of economic phenomena (Arthur S. Goldberger, 1964).

Econometrics may also be defined as the quantitative analysis of actual economic phenomena based on the concurrent development of theory and observation, related by appropriate methods of inference (Samuelson, Koopmans, and Stone, 1954).
Econometrics is a branch of science that combines economics, mathematics, and statistics. It lies, as it were, on the border between these disciplines, with the strengths and weaknesses that position brings (J. Tinbergen, 1953).

ECONOMETRIC METHODOLOGY
• Specification
• Sample design
• Estimation
• Verification
• Application

GOALS OF ECONOMETRICS
• Testing the validity of economic theories
• Supporting economic policy making
• Forecasting the values of economic variables

MULTIPLE REGRESSION ANALYSIS: THE THREE-VARIABLE MODEL, NOTATION AND ASSUMPTIONS

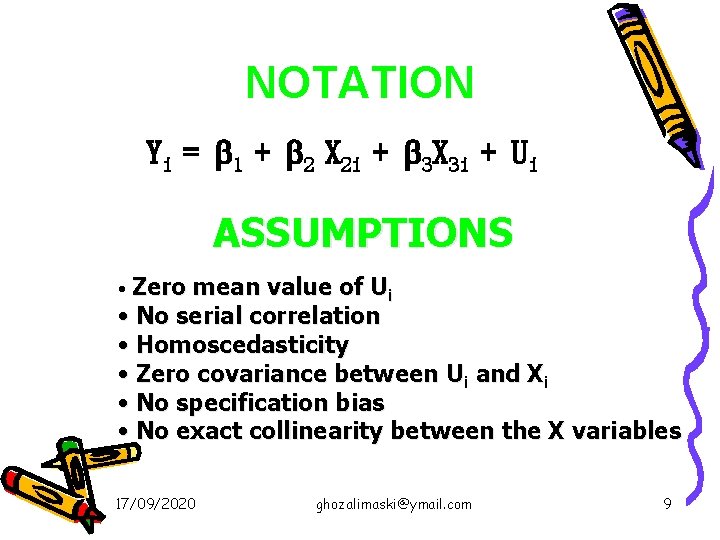

NOTATION
$Y_i = \beta_1 + \beta_2 X_{2i} + \beta_3 X_{3i} + u_i$
ASSUMPTIONS
• Zero mean value of $u_i$
• No serial correlation
• Homoscedasticity
• Zero covariance between $u_i$ and each X variable
• No specification bias
• No exact collinearity between the X variables

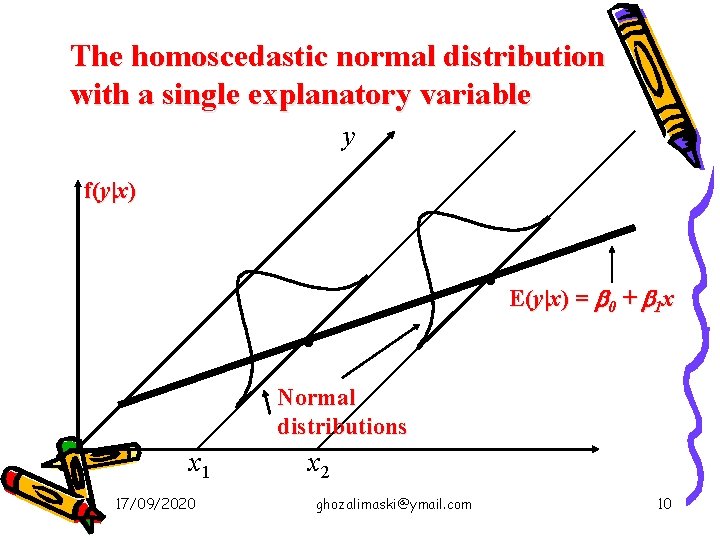

Figure: The homoscedastic normal distribution with a single explanatory variable. At each value of x (x1, x2), f(y|x) is a normal distribution with the same variance, centered on $E(y|x) = \beta_0 + \beta_1 x$.

ESTIMATION

Beta Coefficients
• Occasionally you'll see a reference to a "standardized coefficient" or "beta coefficient", which has a specific meaning
• The idea is to replace y and each x variable with a standardized version, i.e., subtract the mean and divide by the standard deviation
• The coefficient then gives the change in y, measured in standard deviations, for a one-standard-deviation change in x
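A minimal sketch of the standardization idea, assuming NumPy and simulated data. The function name `beta_coefficients` is illustrative and does not come from the source. The code z-scores y and each x, then runs OLS without an intercept. The result equals each ordinary slope multiplied by $s_{x_j}/s_y$.

```python
import numpy as np

def beta_coefficients(y, X):
    """Standardized ("beta") coefficients.

    X holds the explanatory variables only (no intercept column). After
    z-scoring, every variable has mean zero, so no intercept is needed.
    """
    zy = (y - y.mean()) / y.std(ddof=1)
    zX = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    coef, *_ = np.linalg.lstsq(zX, zy, rcond=None)
    return coef

# Simulated data (illustrative): x2 is measured on a much larger scale than x1
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2)) * [1.0, 10.0]
y = 1.0 + 2.0 * X[:, 0] + 0.3 * X[:, 1] + rng.normal(size=200)

# Ordinary OLS with an intercept, for comparison
b, *_ = np.linalg.lstsq(np.column_stack([np.ones(200), X]), y, rcond=None)
print("OLS slopes:       ", b[1:])
print("beta coefficients:", beta_coefficients(y, X))
```

The true slopes (2 and 0.3) are in different units and cannot be compared directly. The beta coefficients put both on the same "standard deviations of y per standard deviation of x" scale.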

VARIANCE AND STANDARD ERROR

The t Test

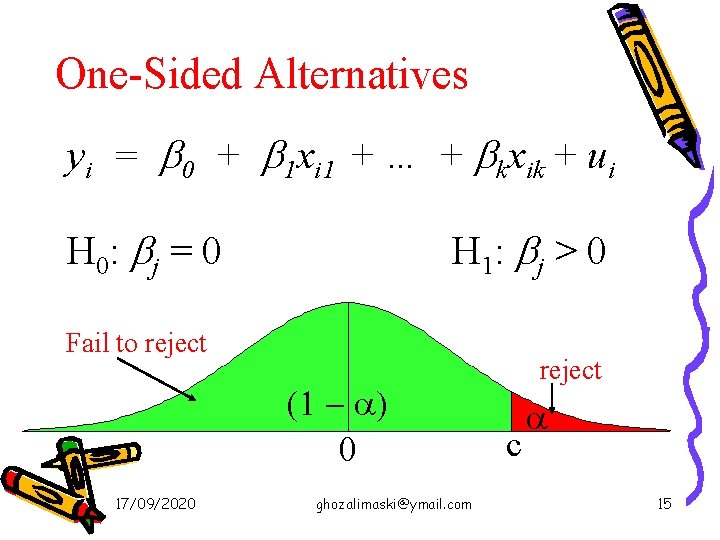

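One-Sided Alternatives
$y_i = \beta_0 + \beta_1 x_{i1} + \dots + \beta_k x_{ik} + u_i$
$H_0: \beta_j = 0$, $H_1: \beta_j > 0$
(Figure: the t distribution with a fail-to-reject region of probability 1 − α to the left of the critical value c, and a rejection region of probability α to its right.)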

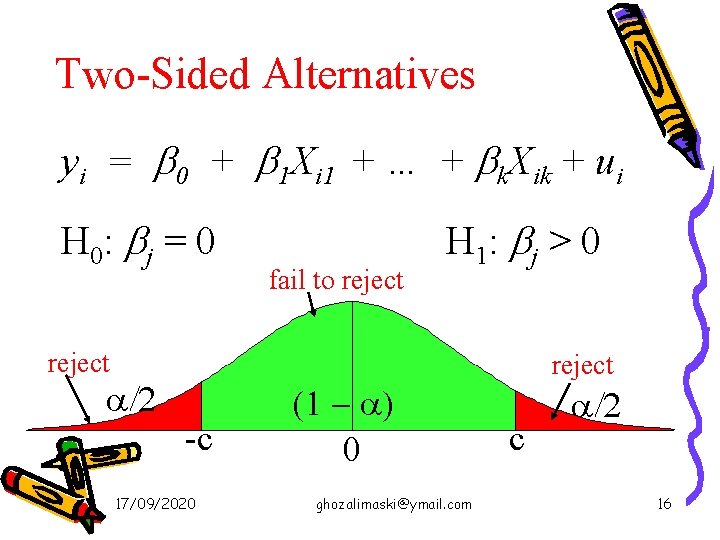

Two-Sided Alternatives
$y_i = \beta_0 + \beta_1 x_{i1} + \dots + \beta_k x_{ik} + u_i$
$H_0: \beta_j = 0$, $H_1: \beta_j \neq 0$
(Figure: rejection regions of probability α/2 in each tail, beyond −c and c, with a fail-to-reject region of probability 1 − α between them.)
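A minimal sketch of how the t ratios for these tests are computed, assuming NumPy, simulated data, and a design matrix X that already contains the intercept column. Here k counts all parameters, so the residual degrees of freedom are n − k. The function name `ols_t_stats` is hypothetical.

```python
import numpy as np

def ols_t_stats(y, X):
    """OLS estimates, standard errors and t ratios for H0: b_j = 0.

    X must already contain a column of ones; k = X.shape[1] counts all
    parameters, so the residual degrees of freedom are n - k.
    """
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - k)             # unbiased estimate of the error variance
    cov = sigma2 * np.linalg.inv(X.T @ X)        # variance-covariance matrix of the estimators
    se = np.sqrt(np.diag(cov))
    return beta, se, beta / se, n - k

rng = np.random.default_rng(1)
n = 100
x1, x2 = rng.normal(size=n), rng.normal(size=n)
y = 0.5 + 1.5 * x1 + 0.0 * x2 + rng.normal(size=n)   # x2 has no true effect
X = np.column_stack([np.ones(n), x1, x2])

beta, se, t, df = ols_t_stats(y, X)
for name, b, s, tv in zip(["const", "x1", "x2"], beta, se, t):
    print(f"{name:>5}: b={b: .3f}  se={s:.3f}  t={tv: .2f}")
# Two-sided: reject H0 if |t| > c, c = t(df) critical value for a/2 (about 1.98 for df = 97, a = 0.05).
# One-sided (H1: b_j > 0): reject H0 if t > c, c for a (about 1.66 for df = 97).
```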

THE COEFFICIENT OF DETERMINATION: A MEASURE OF "GOODNESS OF FIT"
Verbally, R² measures the proportion (or percentage) of the total variation in Y explained by the regression model.

Adjusted R-Squared
• Recall that R² never decreases (and usually increases) as more variables are added to the model
• The adjusted R² takes into account the number of variables in a model, and may decrease

• It's easy to see that the adjusted R² is just $1 - (1 - R^2)(n - 1)/(n - k - 1)$, where k is the number of slope coefficients, but most packages will report both R² and adjusted R²
• You can compare the fit of 2 models (with the same y) by comparing their adjusted R²
• You cannot use the adjusted R² to compare models with different y's (e.g., y vs. ln(y))
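A minimal sketch, assuming NumPy and simulated data, that computes R² and the adjusted R² using the convention above (k slope coefficients plus an intercept). It adds five irrelevant regressors to show that R² cannot fall while the adjusted R² is penalized. The function name is illustrative.

```python
import numpy as np

def r2_and_adjusted(y, X):
    """R-squared and adjusted R-squared; X excludes the intercept (k = X.shape[1] slopes)."""
    n, k = X.shape
    Z = np.column_stack([np.ones(n), X])
    beta, *_ = np.linalg.lstsq(Z, y, rcond=None)
    ssr = np.sum((y - Z @ beta) ** 2)            # residual sum of squares
    sst = np.sum((y - y.mean()) ** 2)            # total sum of squares
    r2 = 1 - ssr / sst
    adj = 1 - (1 - r2) * (n - 1) / (n - k - 1)
    return r2, adj

rng = np.random.default_rng(2)
n = 40
x = rng.normal(size=n)
y = 1 + 2 * x + rng.normal(size=n)
noise_vars = rng.normal(size=(n, 5))             # regressors unrelated to y

r2_a, adj_a = r2_and_adjusted(y, x[:, None])
r2_b, adj_b = r2_and_adjusted(y, np.column_stack([x, noise_vars]))
print(f"1 regressor : R2 = {r2_a:.4f}, adj R2 = {adj_a:.4f}")
print(f"6 regressors: R2 = {r2_b:.4f}, adj R2 = {adj_b:.4f}")
```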

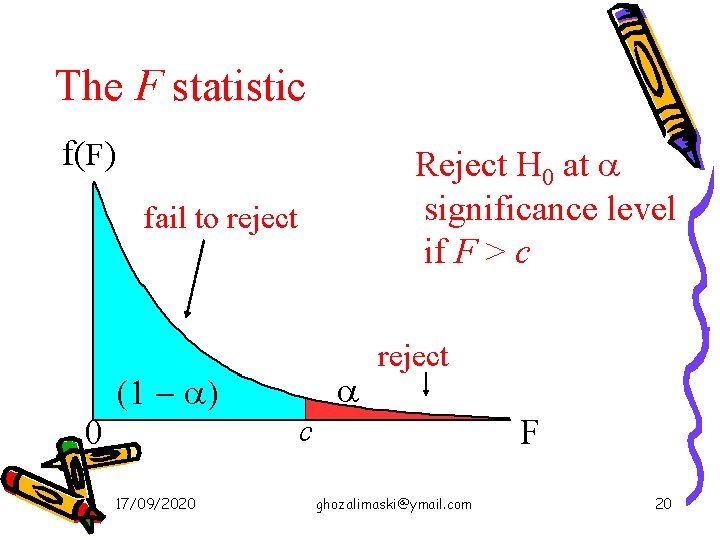

The F statistic
Reject H0 at significance level α if F > c.
(Figure: the density f(F), with a fail-to-reject region of probability 1 − α between 0 and c, and a rejection region of probability α beyond c.)
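A minimal sketch of the overall-significance F test, $H_0$: all slope coefficients are zero. It assumes NumPy, simulated data, and the k-slopes convention $F = \dfrac{R^2/k}{(1 - R^2)/(n - k - 1)}$. The function name `overall_f` is hypothetical.

```python
import numpy as np

def overall_f(y, X):
    """F statistic for H0: all slope coefficients are zero. X excludes the intercept."""
    n, k = X.shape
    Z = np.column_stack([np.ones(n), X])
    beta, *_ = np.linalg.lstsq(Z, y, rcond=None)
    ssr = np.sum((y - Z @ beta) ** 2)
    sst = np.sum((y - y.mean()) ** 2)
    r2 = 1 - ssr / sst
    f = (r2 / k) / ((1 - r2) / (n - k - 1))
    return f, (k, n - k - 1)

rng = np.random.default_rng(3)
X = rng.normal(size=(100, 2))
y = 1 + X @ np.array([0.8, -0.5]) + rng.normal(size=100)
f, (df1, df2) = overall_f(y, X)
print(f"F = {f:.2f} with ({df1}, {df2}) df")  # reject H0 at 5% if F > c (about 3.09 for (2, 97))
```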

Restricted Least Squares: Testing Linear Equality Restrictions
$F = \dfrac{(RSS_R - RSS_{UR})/m}{RSS_{UR}/(n - k)} \sim F_{m,\,n-k}$
where m = number of linear restrictions and k = number of parameters in the unrestricted (UR) regression.
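A minimal sketch of a restricted least squares F test, assuming NumPy and simulated data. As an illustrative (hypothetical) example, it tests constant returns to scale, $\beta_2 + \beta_3 = 1$, in a log-linear Cobb-Douglas model. The restriction is imposed by substitution, and the test uses $F = [(RSS_R - RSS_{UR})/m]/[RSS_{UR}/(n - k)]$ with m = 1 restriction and k = 3 parameters in the unrestricted model.

```python
import numpy as np

def rss(y, X):
    """Residual sum of squares from OLS of y on X (X includes the intercept)."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return np.sum((y - X @ beta) ** 2)

def restricted_f(rss_r, rss_ur, m, n, k):
    """F = [(RSS_R - RSS_UR)/m] / [RSS_UR/(n - k)], k = parameters in the unrestricted model."""
    return ((rss_r - rss_ur) / m) / (rss_ur / (n - k))

rng = np.random.default_rng(4)
n = 60
lx2, lx3 = rng.normal(size=n), rng.normal(size=n)           # e.g. ln(labor), ln(capital)
ly = 0.2 + 0.7 * lx2 + 0.3 * lx3 + 0.1 * rng.normal(size=n)  # true b2 + b3 = 1

ones = np.ones(n)
rss_ur = rss(ly, np.column_stack([ones, lx2, lx3]))
# Impose b2 + b3 = 1 by substitution: ly - lx3 = b1 + b2 (lx2 - lx3)
rss_r = rss(ly - lx3, np.column_stack([ones, lx2 - lx3]))
F = restricted_f(rss_r, rss_ur, m=1, n=n, k=3)
print(f"F(1, {n - 3}) = {F:.3f}")   # compare with the F(1, 57) critical value (about 4.01 at 5%)
```

The restricted model is a special case of the unrestricted one, so $RSS_R \ge RSS_{UR}$ and F is never negative.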

TESTING THE STABILITY OF REGRESSION COEFFICIENTS WHEN THE INCREASE IN SAMPLE SIZE IS SMALL
When the increase in sample size is large, see the Chow test on the next slide.

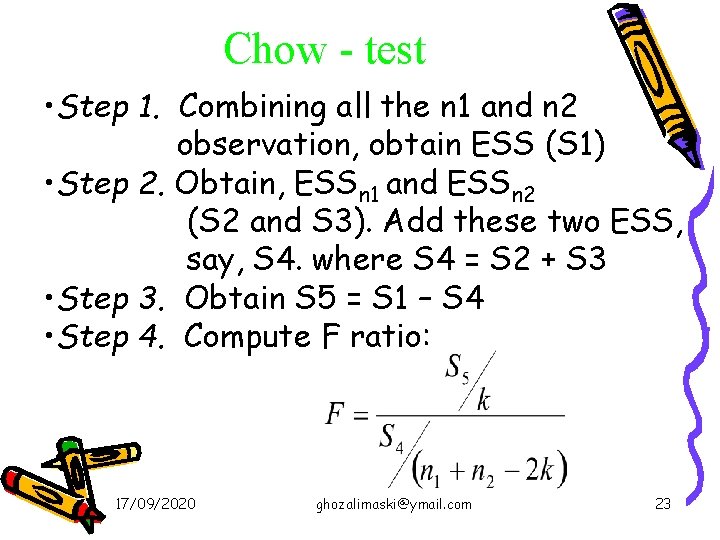

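Chow test (here ESS denotes the residual sum of squares)
• Step 1. Combining all the n1 and n2 observations, obtain ESS (S1).
• Step 2. Obtain ESS for the n1 and the n2 observations separately (S2 and S3) and add them: S4 = S2 + S3.
• Step 3. Obtain S5 = S1 − S4.
• Step 4. Compute the F ratio $F = \dfrac{S_5/k}{S_4/(n_1 + n_2 - 2k)}$, which under H0 follows the F distribution with k and n1 + n2 − 2k df, where k is the number of parameters estimated.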
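A minimal sketch of the four Chow-test steps, assuming NumPy and simulated data with a deliberate structural break. It assumes the statistic $F = (S_5/k)/[S_4/(n_1 + n_2 - 2k)]$, with k the number of parameters (intercept included). The function name `chow_test` is hypothetical.

```python
import numpy as np

def rss(y, X):
    """Residual sum of squares from OLS of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return np.sum((y - X @ beta) ** 2)

def chow_test(y1, X1, y2, X2):
    """Chow test; X1 and X2 include an intercept column, k = number of parameters."""
    n1, k = X1.shape
    n2 = X2.shape[0]
    s1 = rss(np.concatenate([y1, y2]), np.vstack([X1, X2]))   # step 1: pooled regression
    s2, s3 = rss(y1, X1), rss(y2, X2)                          # step 2: separate regressions
    s4 = s2 + s3
    s5 = s1 - s4                                               # step 3
    f = (s5 / k) / (s4 / (n1 + n2 - 2 * k))                    # step 4
    return f, (k, n1 + n2 - 2 * k)

rng = np.random.default_rng(5)
x1, x2 = rng.uniform(0, 10, 30), rng.uniform(0, 10, 25)
y1 = 1.0 + 0.5 * x1 + rng.normal(size=30)
y2 = 3.0 + 0.9 * x2 + rng.normal(size=25)       # intercept and slope both shift
X1 = np.column_stack([np.ones(30), x1])
X2 = np.column_stack([np.ones(25), x2])
f, df = chow_test(y1, X1, y2, X2)
print(f"F{df} = {f:.2f}")   # a large F rejects H0 of equal coefficients in both periods
```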

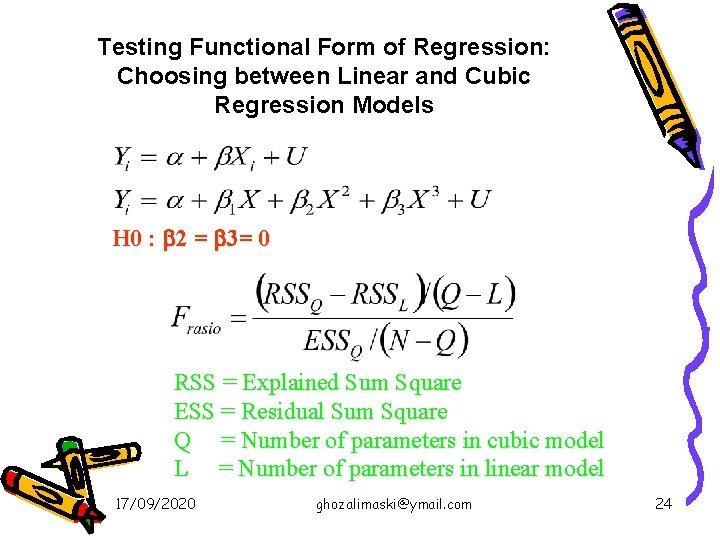

Testing the Functional Form of a Regression: Choosing between Linear and Cubic Regression Models
$H_0: \beta_2 = \beta_3 = 0$
RSS = explained sum of squares; ESS = residual sum of squares; Q = number of parameters in the cubic model; L = number of parameters in the linear model

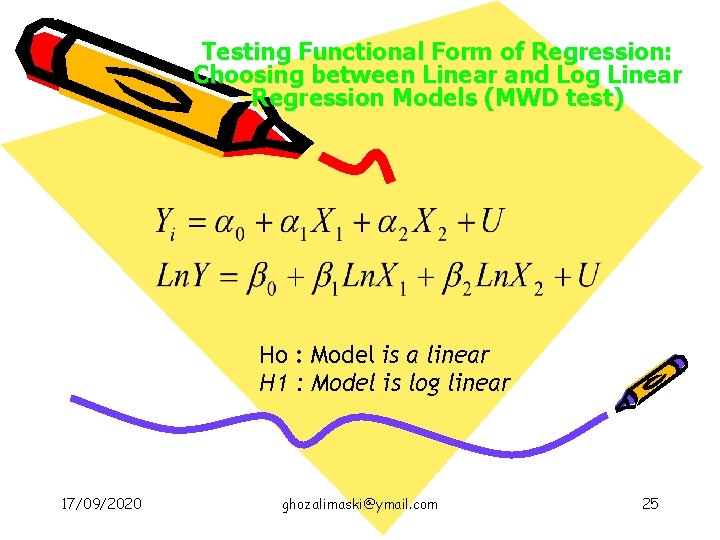

Testing the Functional Form of a Regression: Choosing between Linear and Log-Linear Regression Models (MWD test)
H0: the model is linear
H1: the model is log-linear

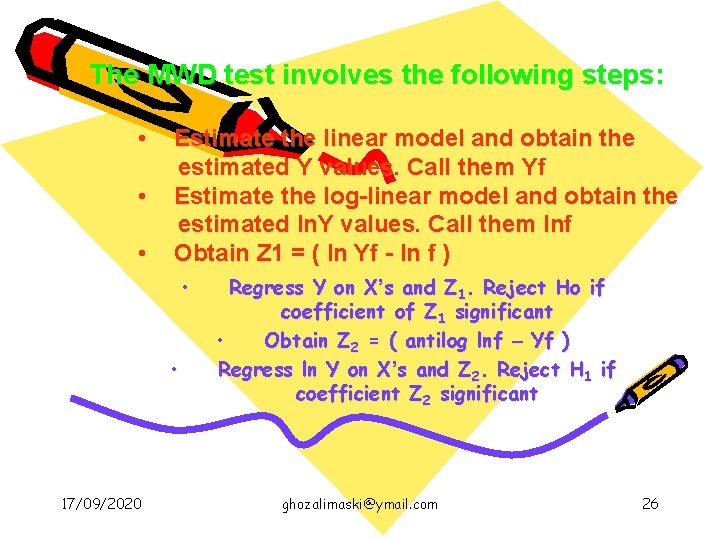

The MWD test involves the following steps:
• Estimate the linear model and obtain the estimated Y values; call them Yf.
• Estimate the log-linear model and obtain the estimated ln Y values; call them lnf.
• Obtain Z1 = (ln Yf − lnf).
• Regress Y on the X's and Z1. Reject H0 if the coefficient of Z1 is statistically significant.
• Obtain Z2 = (antilog of lnf − Yf).
• Regress ln Y on the ln X's and Z2. Reject H1 if the coefficient of Z2 is statistically significant.
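A minimal sketch of the MWD steps for a single regressor, assuming NumPy and simulated data. It assumes that the log-linear model regresses ln Y on ln X, and that Y, X and the fitted Y values are strictly positive so the logs exist. The helper names `ols_t` and `mwd_test` are hypothetical.

```python
import numpy as np

def ols_t(y, X):
    """Return OLS coefficients and their t ratios (X includes the intercept)."""
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - k)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return beta, beta / se

def mwd_test(y, x):
    """Return the t ratios of Z1 and Z2; y and x must be strictly positive."""
    n = len(y)
    ones = np.ones(n)
    XL = np.column_stack([ones, x])              # linear model: Y on X
    XLL = np.column_stack([ones, np.log(x)])     # log-linear model: ln Y on ln X
    b_lin, _ = ols_t(y, XL)
    b_log, _ = ols_t(np.log(y), XLL)
    yf = XL @ b_lin                              # Yf: fitted Y from the linear model
    lnf = XLL @ b_log                            # lnf: fitted ln Y from the log-linear model
    z1 = np.log(yf) - lnf                        # requires all yf > 0
    z2 = np.exp(lnf) - yf
    _, t1 = ols_t(y, np.column_stack([XL, z1]))           # significant Z1: reject H0 (linear)
    _, t2 = ols_t(np.log(y), np.column_stack([XLL, z2]))  # significant Z2: reject H1 (log-linear)
    return t1[-1], t2[-1]

rng = np.random.default_rng(6)
x = rng.uniform(1, 10, 80)
y = 5 + 2 * x + rng.normal(size=80)   # data generated by a linear model
t_z1, t_z2 = mwd_test(y, x)
print(f"t(Z1) = {t_z1:.2f}, t(Z2) = {t_z2:.2f}")  # compare each with the t critical value
```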

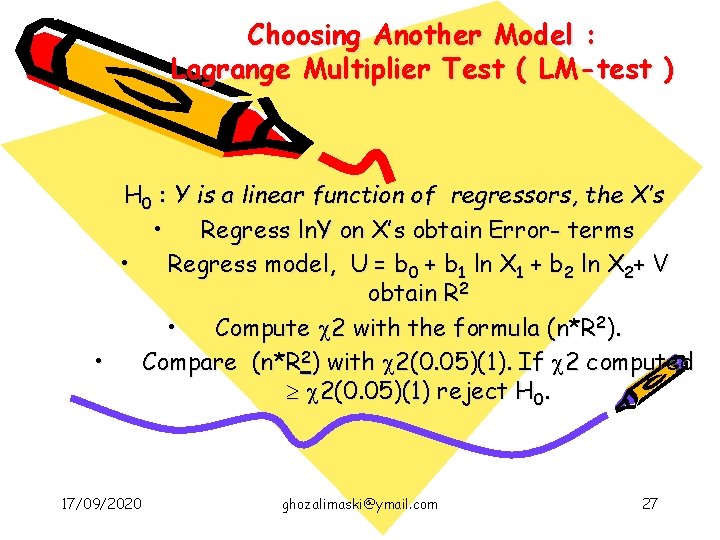

Choosing Another Model: Lagrange Multiplier Test (LM test)
H0: Y is a linear function of the regressors, the X's
• Regress ln Y on the X's and obtain the error terms (residuals), U
• Regress the model U = b0 + b1 ln X1 + b2 ln X2 + V and obtain R²
• Compute χ² with the formula n·R²
• Compare n·R² with χ²(0.05)(1). If the computed χ² exceeds χ²(0.05)(1), reject H0.

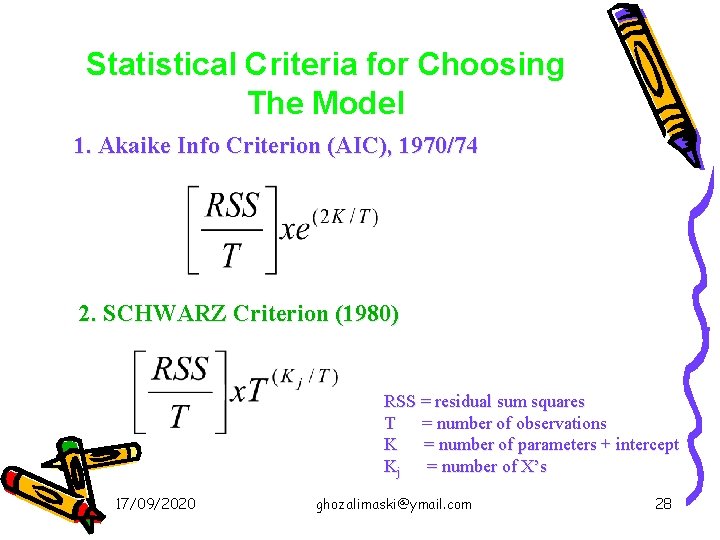

Statistical Criteria for Choosing the Model
1. Akaike Information Criterion (AIC), 1970/74
2. Schwarz Criterion (1980)
RSS = residual sum of squares; T = number of observations; K = number of parameters, including the intercept; Kj = number of X's
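The slide's own formulas are not in the extracted text. This sketch therefore assumes Gujarati's log forms, $\ln AIC = 2K/T + \ln(RSS/T)$ and $\ln SIC = (K/T)\ln T + \ln(RSS/T)$. Software packages may scale these differently. It uses NumPy and simulated data to compare a linear and a quadratic specification. The function name is illustrative.

```python
import numpy as np

def info_criteria(y, X):
    """Return (ln AIC, ln SIC) in the assumed log forms.

    T = number of observations, K = number of columns of X (intercept included).
    """
    T, K = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = np.sum((y - X @ beta) ** 2)
    ln_aic = 2 * K / T + np.log(rss / T)
    ln_sic = (K / T) * np.log(T) + np.log(rss / T)
    return ln_aic, ln_sic

rng = np.random.default_rng(7)
x = rng.uniform(0, 5, 50)
y = 1 + 0.5 * x + 0.8 * x**2 + rng.normal(size=50)
lin = np.column_stack([np.ones(50), x])
quad = np.column_stack([np.ones(50), x, x**2])
aic_lin, sic_lin = info_criteria(y, lin)
aic_quad, sic_quad = info_criteria(y, quad)
print(f"linear:    ln AIC = {aic_lin:.3f}, ln SIC = {sic_lin:.3f}")
print(f"quadratic: ln AIC = {aic_quad:.3f}, ln SIC = {sic_quad:.3f}")
# The model with the smaller criterion value is preferred.
```

SIC penalizes extra parameters more heavily than AIC whenever ln T > 2, that is, for T ≥ 8.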

Multiple Regression Analysis: $y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + u$
Dummy Variables

Dummy Variables
A dummy variable is a variable that takes on the value 1 or 0.
Examples: male (= 1 if male, 0 otherwise), south (= 1 if in the south, 0 otherwise), etc.
Dummy variables are also called binary variables, for obvious reasons.

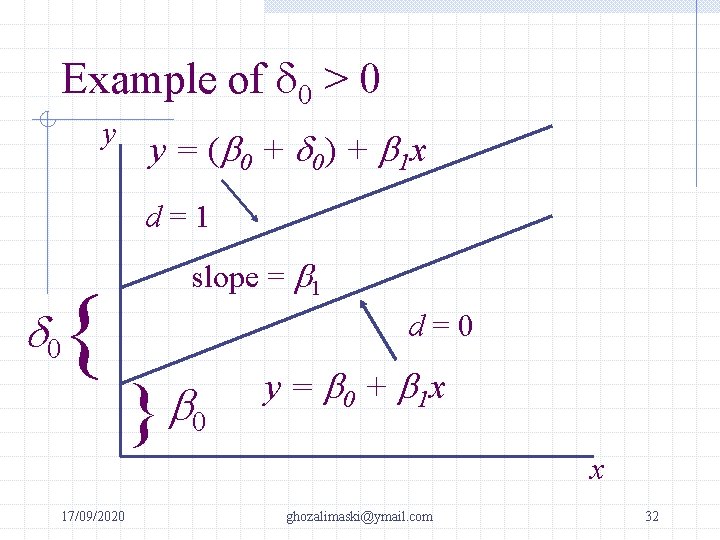

A Dummy Independent Variable
Consider a simple model with one continuous variable (x) and one dummy (d):
$y = \beta_0 + \delta_0 d + \beta_1 x + u$
This can be interpreted as an intercept shift:
If d = 0, then $y = \beta_0 + \beta_1 x + u$
If d = 1, then $y = (\beta_0 + \delta_0) + \beta_1 x + u$
The case d = 0 is the base group.

Example of $\delta_0 > 0$
(Figure: two parallel lines with slope $\beta_1$. The line $y = (\beta_0 + \delta_0) + \beta_1 x$ for d = 1 lies a vertical distance $\delta_0$ above the line $y = \beta_0 + \beta_1 x$ for d = 0.)

Dummies for Multiple Categories
We can use dummy variables to control for something with multiple categories.
Suppose everyone in your data is either a HS dropout, a HS graduate only, or a college graduate.
To compare HS and college graduates with HS dropouts, include 2 dummy variables: hsgrad = 1 if HS grad only, 0 otherwise; and colgrad = 1 if college grad, 0 otherwise.

Multiple Categories (cont.)
Any categorical variable can be turned into a set of dummy variables.
Because the base group is represented by the intercept, if there are n categories there should be n − 1 dummy variables.
If there are a lot of categories, it may make sense to group some together (example: top 10 ranking, 11–25, etc.).
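A minimal sketch, assuming NumPy, of turning one categorical variable into n − 1 dummies with a chosen base group. It also shows why the remaining dummy must be left out: an intercept plus all n dummies is perfectly collinear (the "dummy variable trap"). The function name `make_dummies` and the sample data are hypothetical.

```python
import numpy as np

def make_dummies(categories, base):
    """Return (names, matrix) with one 0/1 column per category except the base group."""
    levels = [c for c in sorted(set(categories)) if c != base]
    D = np.array([[1.0 if c == level else 0.0 for level in levels] for c in categories])
    return levels, D

educ = ["dropout", "hsgrad", "colgrad", "hsgrad", "dropout", "colgrad"]
names, D = make_dummies(educ, base="dropout")
print(names)
print(D)

# Intercept + all three dummies: the dummies sum to the intercept column,
# so the design matrix has rank 3 instead of 4 (perfect multicollinearity).
dropout = [1.0 if c == "dropout" else 0.0 for c in educ]
trap = np.column_stack([np.ones(len(educ)), D, dropout])
print("rank:", np.linalg.matrix_rank(trap), "of", trap.shape[1], "columns")
```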

Interactions Among Dummies
Interacting dummy variables is like subdividing the group.
Example: have dummies for male, as well as hsgrad and colgrad. Add male*hsgrad and male*colgrad, for a total of 5 dummy variables and 6 categories.
The base group is female HS dropouts; hsgrad is for female HS grads and colgrad is for female college grads; the interactions reflect male HS grads and male college grads.

More on Dummy Interactions
Formally, the model is
$y = \beta_0 + \delta_1\,male + \delta_2\,hsgrad + \delta_3\,colgrad + \delta_4\,male \cdot hsgrad + \delta_5\,male \cdot colgrad + \beta_1 x + u$
then, for example:
If male = 0, hsgrad = 0 and colgrad = 0: $y = \beta_0 + \beta_1 x + u$
If male = 0, hsgrad = 1 and colgrad = 0: $y = \beta_0 + \delta_2\,hsgrad + \beta_1 x + u$
If male = 1, hsgrad = 0 and colgrad = 1: $y = \beta_0 + \delta_1\,male + \delta_3\,colgrad + \delta_5\,male \cdot colgrad + \beta_1 x + u$

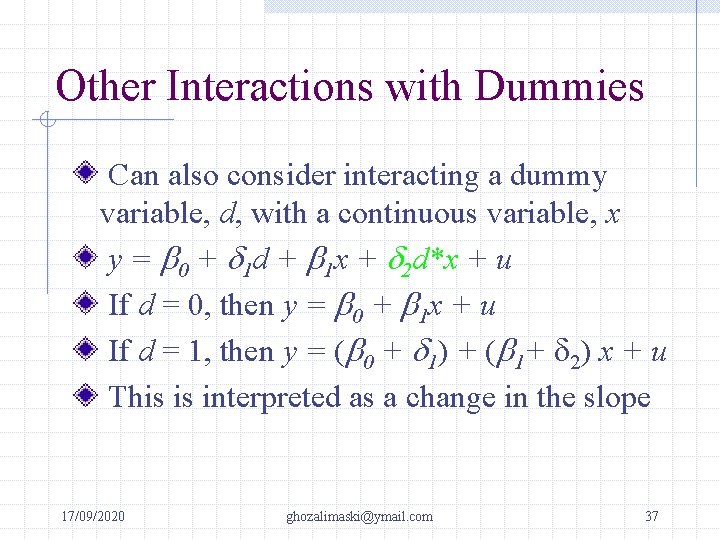

Other Interactions with Dummies
We can also consider interacting a dummy variable, d, with a continuous variable, x:
$y = \beta_0 + \delta_1 d + \beta_1 x + \delta_2 d \cdot x + u$
If d = 0, then $y = \beta_0 + \beta_1 x + u$
If d = 1, then $y = (\beta_0 + \delta_1) + (\beta_1 + \delta_2) x + u$
$\delta_2$ is interpreted as a change in the slope (and $\delta_1$ as a change in the intercept).
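A minimal sketch, assuming NumPy and simulated data, that fits $y = \beta_0 + \delta_1 d + \beta_1 x + \delta_2 d \cdot x + u$ and recovers each group's intercept and slope. The fully interacted model gives exactly the same lines as two separate group regressions. That equivalence is the idea behind the Chow-test shortcut. The helper `fit_line` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(8)
n = 120
d = (rng.uniform(size=n) < 0.5).astype(float)    # 1 for group d = 1, 0 for the base group
x = rng.uniform(0, 10, n)
y = 2.0 + 1.5 * d + 0.8 * x - 0.3 * d * x + rng.normal(size=n)

# Fully interacted model: intercept shift (d) and slope shift (d*x)
X = np.column_stack([np.ones(n), d, x, d * x])
b0, d1, b1, d2 = np.linalg.lstsq(X, y, rcond=None)[0]
print(f"d = 0: intercept {b0:.2f}, slope {b1:.2f}")
print(f"d = 1: intercept {b0 + d1:.2f}, slope {b1 + d2:.2f}")

def fit_line(xs, ys):
    """Separate OLS line (intercept, slope) for one group."""
    A = np.column_stack([np.ones(len(xs)), xs])
    return np.linalg.lstsq(A, ys, rcond=None)[0]

# Separate regressions reproduce the same two lines
print("group 0 alone:", fit_line(x[d == 0], y[d == 0]))
print("group 1 alone:", fit_line(x[d == 1], y[d == 1]))
```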

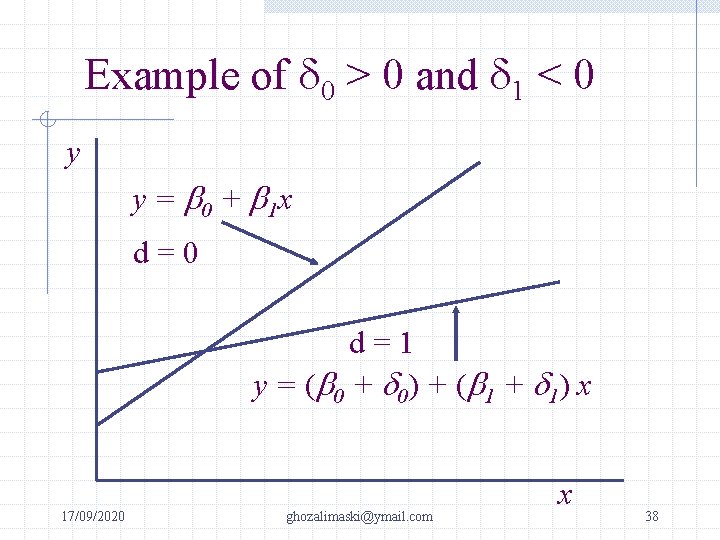

Example of $\delta_0 > 0$ and $\delta_1 < 0$
(Figure: the line $y = \beta_0 + \beta_1 x$ for d = 0 and the line $y = (\beta_0 + \delta_0) + (\beta_1 + \delta_1) x$ for d = 1.)

Testing for Differences Across Groups
Testing whether a regression function differs between one group and another can be thought of as simply testing the joint significance of the dummy and its interactions with all other x variables.
So you can estimate the model with and without all the interactions and form an F statistic, but this could be unwieldy.

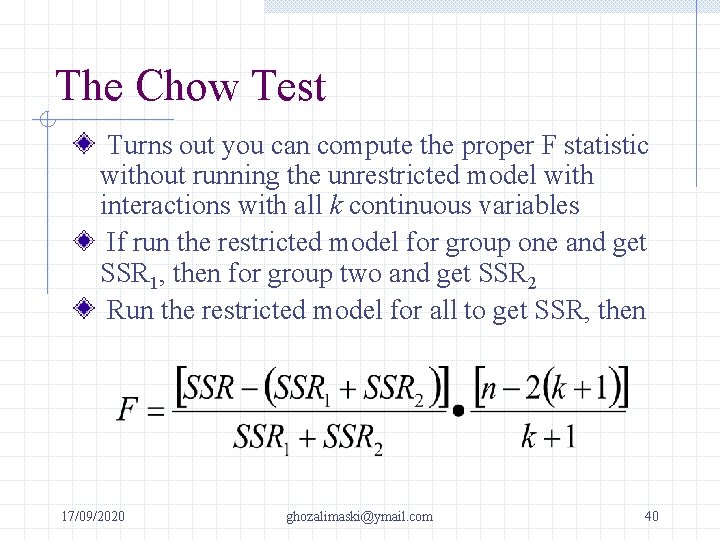

The Chow Test
It turns out you can compute the proper F statistic without running the unrestricted model with interactions with all k continuous variables.
Run the model for group one to get SSR1, then for group two to get SSR2.
Run the restricted (pooled) model for all observations to get SSR; then
$F = \dfrac{SSR - (SSR_1 + SSR_2)}{SSR_1 + SSR_2} \cdot \dfrac{n - 2(k + 1)}{k + 1}$

The Chow Test (continued)
The Chow test is really just a simple F test for exclusion restrictions, where we have used the fact that $SSR_{ur} = SSR_1 + SSR_2$.
Note that there are k + 1 restrictions (each of the slope coefficients and the intercept).
Note also that the unrestricted model estimates 2 different intercepts and 2 different sets of slope coefficients, so the df is n − 2k − 2.

MULTICOLLINEARITY
The term multicollinearity is due to Ragnar Frisch. Originally it meant the existence of a perfect, or exact, linear relationship among some or all explanatory variables of a regression model. It does not rule out nonlinear relationships among them.
Why does the classical linear regression model assume that there is no multicollinearity among the X's? The reasoning is:
• If multicollinearity is perfect, the regression coefficients of the X's are indeterminate and their standard errors are infinite.
• If multicollinearity is less than perfect, the regression coefficients, although determinate, possess large standard errors.

SOURCES OF MULTICOLLINEARITY
• The data collection method employed, for example, sampling over a limited range of the values taken by the regressors in the population
• Constraints on the model or in the population being sampled
• Model specification, for example, adding polynomial terms to the model
• An overdetermined model, i.e., the model has more X's than the number of observations

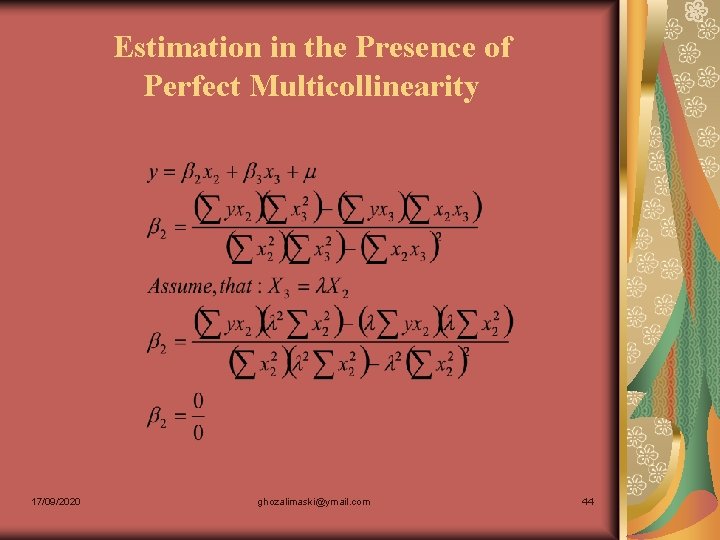

Estimation in the Presence of Perfect Multicollinearity

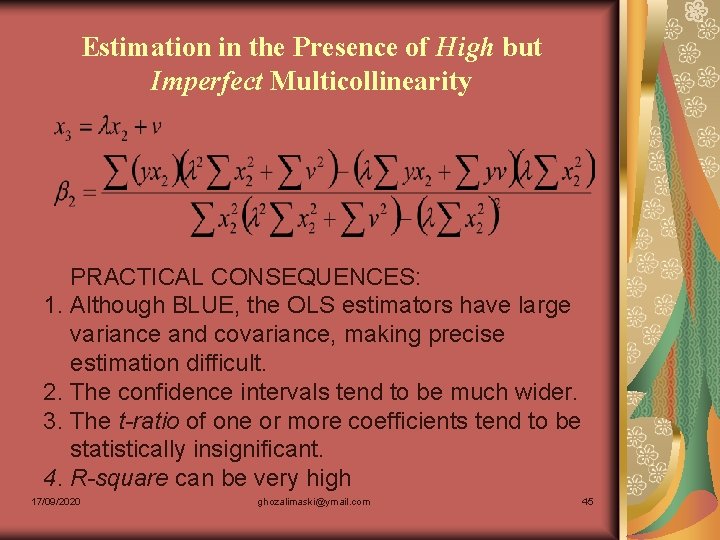

Estimation in the Presence of High but Imperfect Multicollinearity
PRACTICAL CONSEQUENCES:
1. Although BLUE, the OLS estimators have large variances and covariances, making precise estimation difficult.
2. Confidence intervals tend to be much wider.
3. The t ratio of one or more coefficients tends to be statistically insignificant.
4. R² can nevertheless be very high.

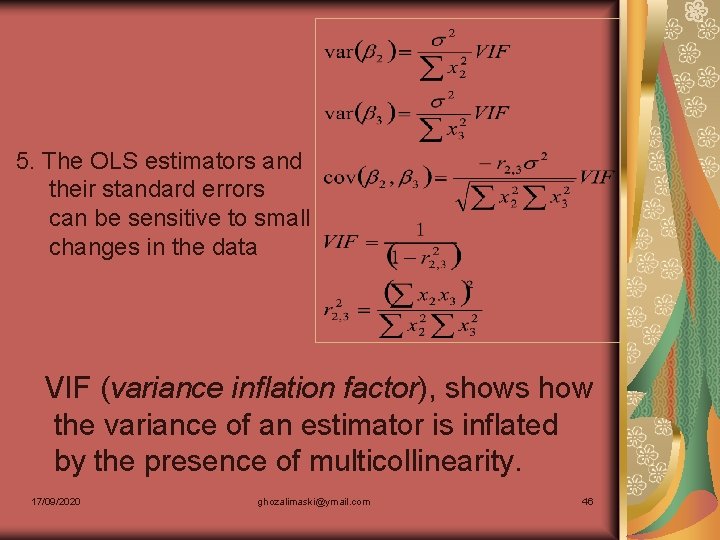

5. The OLS estimators and their standard errors can be sensitive to small changes in the data.
The VIF (variance inflation factor) shows how the variance of an estimator is inflated by the presence of multicollinearity.

Detection of Multicollinearity
• High R² but few significant t ratios
• High pairwise correlations among regressors
• Examination of partial correlations (Farrar and Glauber)
• Auxiliary regressions (F_i)
• Klein's rule of thumb (R² of auxiliary regression vs. overall R²)
• Eigenvalues and condition index
• Tolerance and variance inflation factor
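A minimal sketch of three detection tools from the list above, assuming NumPy and simulated data. It computes $VIF_j = 1/(1 - R_j^2)$ from auxiliary regressions, tolerance $TOL_j = 1/VIF_j$, and the condition index $\sqrt{\lambda_{max}/\lambda_{min}}$ from the eigenvalues of X'X with columns scaled to unit length. The column scaling is an assumption. Commonly cited rules of thumb (e.g., in Gujarati) treat VIF above 10 as high collinearity, and a condition index of 10 to 30 as moderate-to-strong, with above 30 severe.

```python
import numpy as np

def vif(X):
    """VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing column j
    of X on the remaining columns plus an intercept. X excludes the intercept."""
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        xj = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        g, *_ = np.linalg.lstsq(others, xj, rcond=None)
        resid = xj - others @ g
        r2 = 1 - resid @ resid / np.sum((xj - xj.mean()) ** 2)
        out[j] = 1 / (1 - r2)
    return out

rng = np.random.default_rng(9)
x2 = rng.normal(size=100)
x3 = x2 + 0.1 * rng.normal(size=100)   # nearly collinear with x2
x4 = rng.normal(size=100)              # unrelated regressor
X = np.column_stack([x2, x3, x4])

v = vif(X)
print("VIF:", v.round(1))
print("TOL:", (1 / v).round(3))        # tolerance = 1 / VIF = 1 - R_j^2

# Condition index from the eigenvalues of X'X, columns scaled to unit length
Xs = X / np.linalg.norm(X, axis=0)
eig = np.linalg.eigvalsh(Xs.T @ Xs)
ci = np.sqrt(eig.max() / eig.min())
print("condition index:", round(float(ci), 1))
```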

Remedial Measures
• A priori information
• Combining cross-sectional and time-series data
• Dropping a variable(s) and specification bias
• Transformation of variables
• Additional or new data
• Reducing collinearity in polynomial regressions
• Factor analysis, principal components and ridge regression

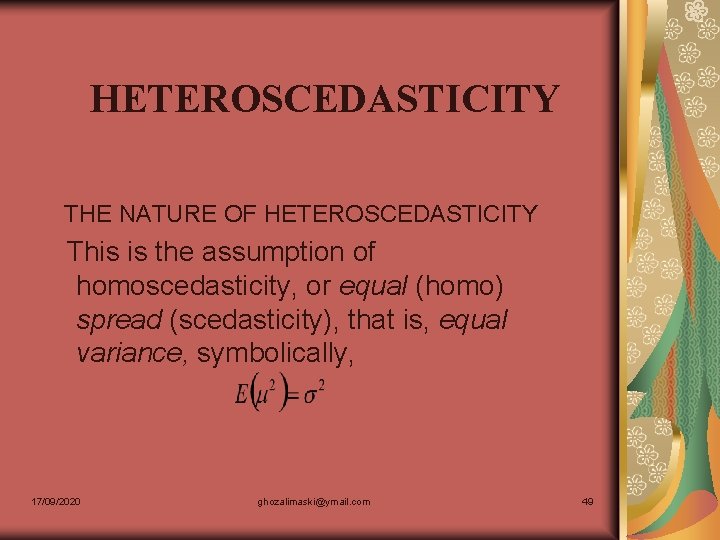

HETEROSCEDASTICITY
THE NATURE OF HETEROSCEDASTICITY
An important assumption of the CLRM is homoscedasticity, or equal (homo) spread (scedasticity), that is, equal variance; symbolically, $E(u_i^2) = \sigma^2$, $i = 1, 2, \dots, n$.

There are several reasons why the variance of the error terms may vary:
• Error-learning models
• Discretionary income
• Improvements in data-collecting techniques
• Outliers
• Violation of assumption 9 of the CLRM, that the regression model is correctly specified

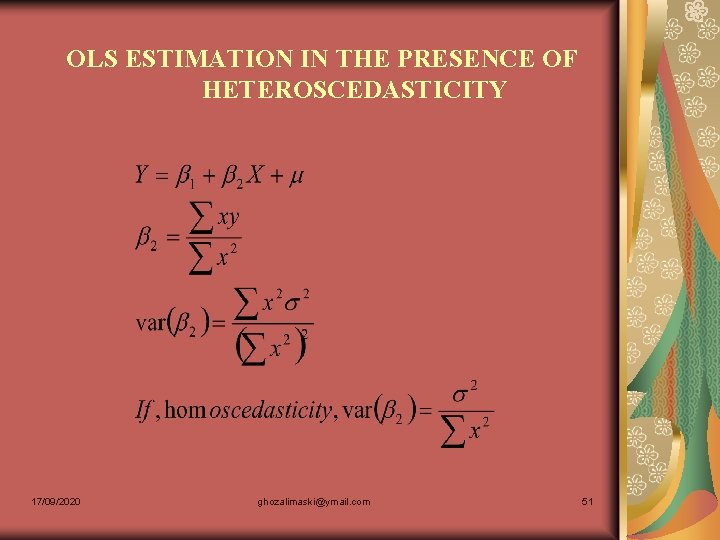

OLS ESTIMATION IN THE PRESENCE OF HETEROSCEDASTICITY

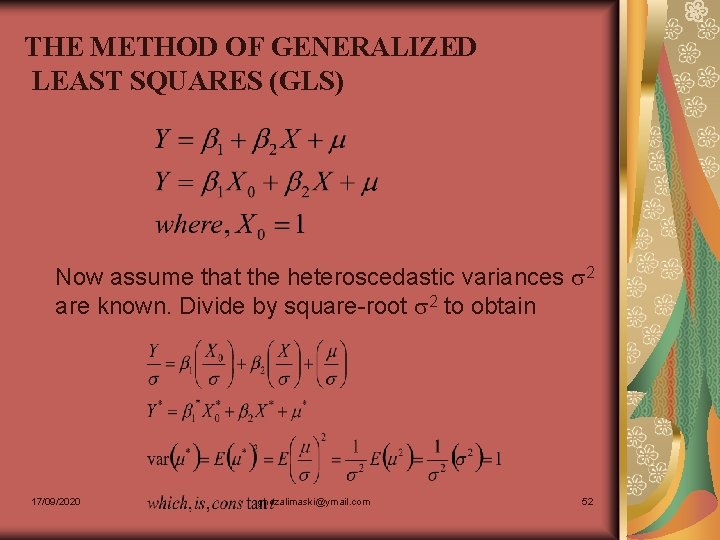

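THE METHOD OF GENERALIZED LEAST SQUARES (GLS)
Now assume that the heteroscedastic variances $\sigma_i^2$ are known. Divide the model through by $\sigma_i$, the square root of $\sigma_i^2$, to obtain
$\dfrac{Y_i}{\sigma_i} = \beta_1 \dfrac{X_{0i}}{\sigma_i} + \beta_2 \dfrac{X_i}{\sigma_i} + \dfrac{u_i}{\sigma_i}$, where $X_{0i} = 1$,
whose transformed error $u_i/\sigma_i$ has constant variance.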
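A minimal sketch of GLS with known $\sigma_i$, assuming NumPy and simulated data in which the error standard deviation is (by assumption) proportional to X. Every variable, including the intercept column, is divided by $\sigma_i$ and OLS is then applied. This is the same as weighted least squares with weights $1/\sigma_i^2$. The function name is hypothetical.

```python
import numpy as np

def gls_known_sigma(y, X, sigma):
    """Divide every variable (including the intercept column) by sigma_i, then apply OLS."""
    ys = y / sigma
    Xs = X / sigma[:, None]
    beta, *_ = np.linalg.lstsq(Xs, ys, rcond=None)
    return beta

rng = np.random.default_rng(10)
n = 200
x = rng.uniform(1, 10, n)
sigma = 0.5 * x                      # assumed known: error s.d. proportional to X
y = 3 + 2 * x + sigma * rng.normal(size=n)
X = np.column_stack([np.ones(n), x])

beta_gls = gls_known_sigma(y, X, sigma)
print("GLS (WLS) estimates:", beta_gls)
```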

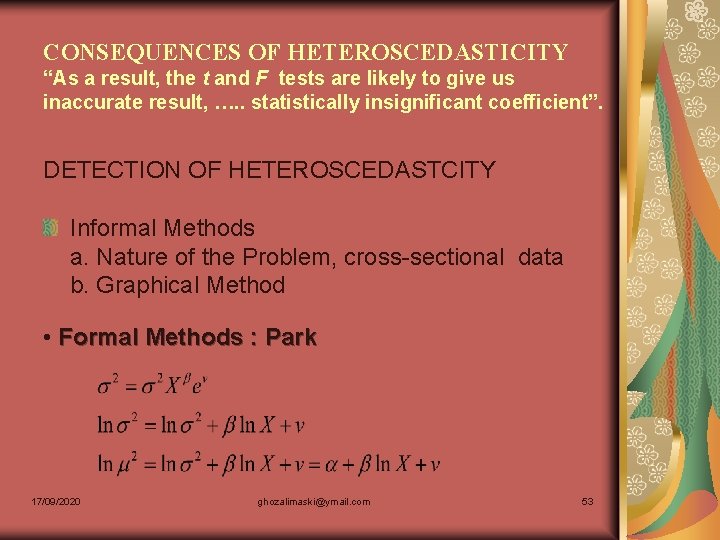

CONSEQUENCES OF HETEROSCEDASTICITY
"As a result, the t and F tests are likely to give us inaccurate results, … statistically insignificant coefficient."
DETECTION OF HETEROSCEDASTICITY
Informal methods:
a. Nature of the problem (e.g., cross-sectional data)
b. Graphical method
Formal methods: Park test
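A minimal sketch of the Park test, assuming NumPy, simulated data, and Gujarati's form $\ln \hat u_i^2 = \alpha + \beta \ln X_i + v_i$. A statistically significant $\beta$ suggests heteroscedasticity. X must be positive. The helper names are hypothetical.

```python
import numpy as np

def ols_t(y, X):
    """OLS coefficients, t ratios and residuals (X includes the intercept)."""
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    se = np.sqrt(np.diag(resid @ resid / (n - k) * np.linalg.inv(X.T @ X)))
    return beta, beta / se, resid

def park_test(y, x):
    """Regress ln(u_hat^2) on ln X; return the slope and its t ratio (x > 0)."""
    ones = np.ones(len(y))
    _, _, u = ols_t(y, np.column_stack([ones, x]))              # residuals of the original model
    beta, t, _ = ols_t(np.log(u**2), np.column_stack([ones, np.log(x)]))
    return beta[1], t[1]

rng = np.random.default_rng(11)
x = rng.uniform(1, 20, 300)
y = 1 + 0.5 * x + 0.3 * x * rng.normal(size=300)   # error s.d. grows with X
slope, t = park_test(y, x)
print(f"Park slope = {slope:.2f}, t = {t:.2f}")
```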

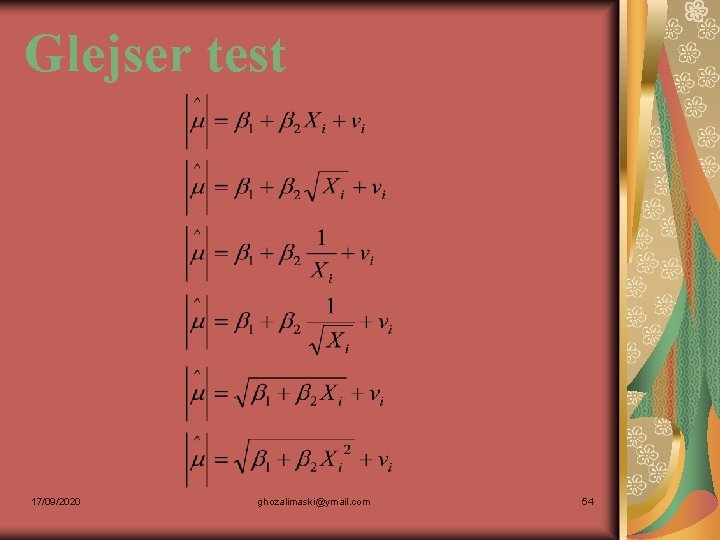

Glejser test

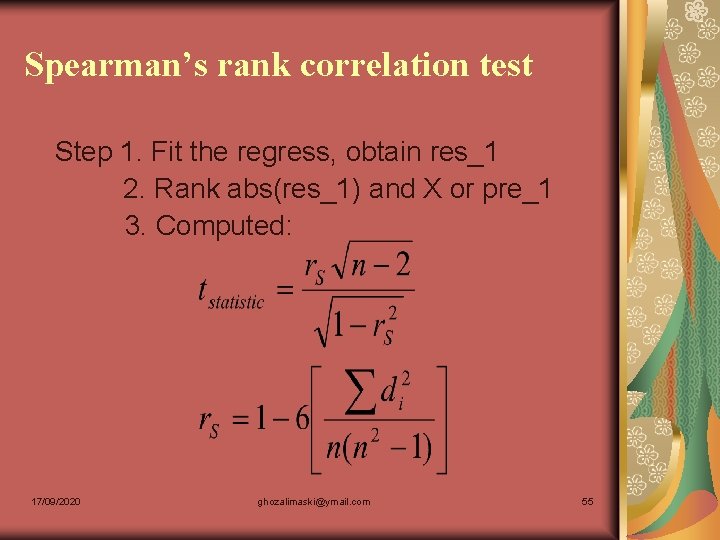

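Spearman's rank correlation test
Step 1. Fit the regression and obtain the residuals res_1.
Step 2. Rank abs(res_1) and X (or the predicted values pre_1).
Step 3. Compute $r_s = 1 - 6\left[\dfrac{\sum d_i^2}{n(n^2 - 1)}\right]$, where $d_i$ is the difference between the two ranks, and test it with $t = \dfrac{r_s\sqrt{n - 2}}{\sqrt{1 - r_s^2}}$ (df = n − 2).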
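A minimal sketch of Spearman's rank correlation test for heteroscedasticity, assuming NumPy and simulated data. It assumes $r_s = 1 - 6\sum d_i^2/[n(n^2 - 1)]$ and $t = r_s\sqrt{n-2}/\sqrt{1 - r_s^2}$ with n − 2 df. The ranking helper assumes no ties, which holds almost surely for continuous data. Function names are hypothetical.

```python
import numpy as np

def ranks(a):
    """Ranks 1..n; assumes no ties (true almost surely for continuous data)."""
    r = np.empty(len(a))
    r[np.argsort(a)] = np.arange(1, len(a) + 1)
    return r

def spearman_het_test(y, x):
    """Spearman rank correlation between |residuals| and X, with its t statistic (n - 2 df)."""
    n = len(y)
    X = np.column_stack([np.ones(n), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    res_1 = y - X @ beta                                # step 1: residuals
    d = ranks(np.abs(res_1)) - ranks(x)                 # step 2: rank differences
    rs = 1 - 6 * np.sum(d ** 2) / (n * (n ** 2 - 1))    # step 3: Spearman's r_s
    t = rs * np.sqrt(n - 2) / np.sqrt(1 - rs ** 2)
    return rs, t

rng = np.random.default_rng(17)
x = rng.uniform(1, 20, 200)
y = 2 + 0.5 * x + 0.3 * x * rng.normal(size=200)   # error s.d. rises with X
rs, t = spearman_het_test(y, x)
print(f"r_s = {rs:.3f}, t = {t:.2f}")  # a significant t suggests heteroscedasticity
```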

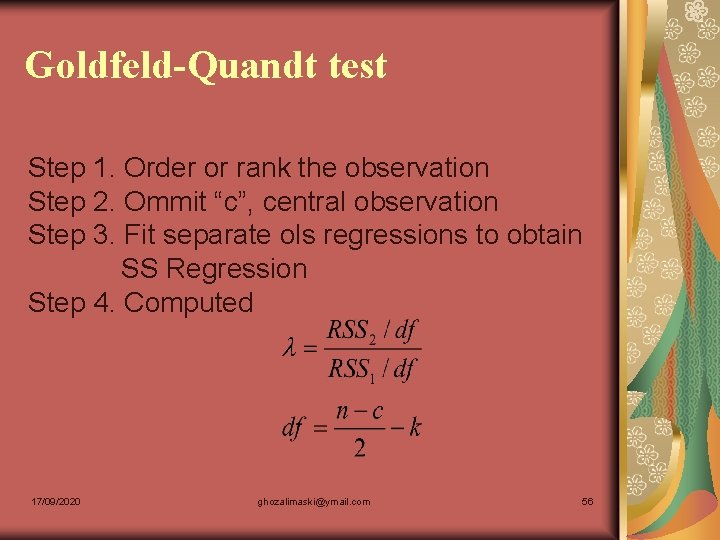

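Goldfeld-Quandt test
Step 1. Order (rank) the observations according to the values of X.
Step 2. Omit c central observations.
Step 3. Fit separate OLS regressions to the two remaining groups and obtain their residual sums of squares, RSS1 (small X) and RSS2 (large X).
Step 4. Compute $\lambda = \dfrac{RSS_2/df}{RSS_1/df}$, with $df = (n - c - 2k)/2$ for each group.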
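A minimal sketch of the Goldfeld-Quandt steps, assuming NumPy, simulated data, one regressor (k = 2 parameters), and an even number n − c. Under homoscedasticity, $\lambda = (RSS_2/df)/(RSS_1/df)$ follows F with $df = (n - c - 2k)/2$ in both numerator and denominator. Function names are hypothetical.

```python
import numpy as np

def rss(y, x):
    """Residual sum of squares from OLS of y on a constant and x."""
    X = np.column_stack([np.ones(len(x)), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return np.sum((y - X @ beta) ** 2)

def goldfeld_quandt(y, x, c, k=2):
    """Return lambda = (RSS2/df)/(RSS1/df) and df = (n - c - 2k)/2; (n - c) assumed even."""
    order = np.argsort(x)                  # step 1: order observations by X
    y, x = y[order], x[order]
    m = (len(y) - c) // 2                  # step 2: drop the c central observations
    rss1 = rss(y[:m], x[:m])               # step 3: low-X group
    rss2 = rss(y[-m:], x[-m:])             #         high-X group
    df = m - k
    lam = (rss2 / df) / (rss1 / df)        # step 4: ~ F(df, df) under homoscedasticity
    return lam, df

rng = np.random.default_rng(16)
x = rng.uniform(1, 20, 100)
y = 3 + 0.5 * x + 0.2 * x * rng.normal(size=100)   # error s.d. proportional to X
lam, df = goldfeld_quandt(y, x, c=20)
print(f"lambda = {lam:.2f} ~ F({df}, {df})")  # the 5% critical value for F(38, 38) is roughly 1.7
```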

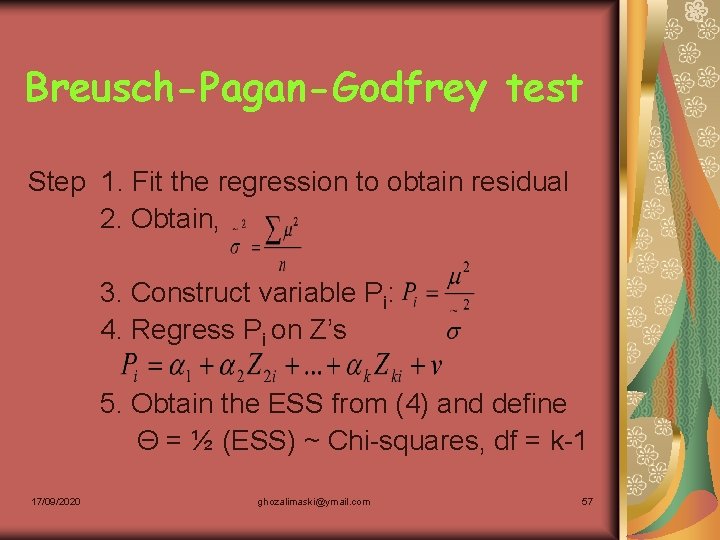

Breusch-Pagan-Godfrey test
Step 1. Fit the regression and obtain the residuals $\hat u_i$.
2. Obtain $\tilde\sigma^2 = \sum \hat u_i^2 / n$.
3. Construct the variable $p_i = \hat u_i^2 / \tilde\sigma^2$.
4. Regress $p_i$ on the Z's.
5. Obtain the ESS (explained sum of squares) from step 4 and define Θ = ½(ESS) ~ χ², df = k − 1.
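A minimal sketch of the Breusch-Pagan-Godfrey steps, assuming NumPy and simulated data, with the original regressors reused as the Z variables (an illustrative choice). Both design matrices include an intercept, and the df is taken as the number of Z columns minus one. The function name `bpg_test` is hypothetical.

```python
import numpy as np

def bpg_test(y, X, Z):
    """X and Z include an intercept column; returns Theta = ESS/2 and its df (columns of Z - 1)."""
    n = len(y)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    u = y - X @ beta                               # step 1: residuals
    sigma2 = np.sum(u**2) / n                      # step 2: sigma~^2 = sum(u^2)/n
    p = u**2 / sigma2                              # step 3: p_i
    g, *_ = np.linalg.lstsq(Z, p, rcond=None)      # step 4: regress p_i on the Z's
    ess = np.sum((Z @ g - p.mean()) ** 2)          # step 5: explained sum of squares
    return 0.5 * ess, Z.shape[1] - 1

rng = np.random.default_rng(14)
n = 200
x = rng.uniform(1, 20, n)
y = 2 + 0.5 * x + 0.2 * x * rng.normal(size=n)
X = np.column_stack([np.ones(n), x])
theta, df = bpg_test(y, X, Z=X)
print(f"Theta = {theta:.1f}, df = {df}")  # reject homoscedasticity at 5% if Theta > 3.841 (df = 1)
```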

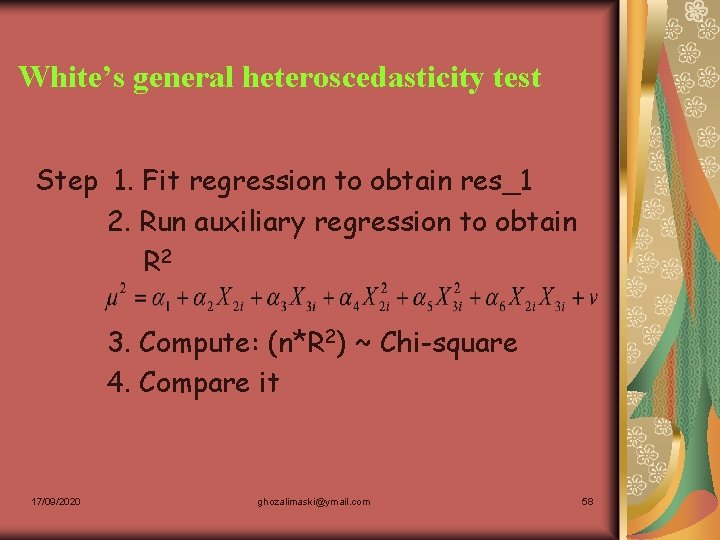

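White's general heteroscedasticity test
Step 1. Fit the regression and obtain the residuals res_1.
2. Run the auxiliary regression (squared residuals on the regressors, their squares and their cross products) and obtain R².
3. Compute n·R² ~ χ², with df equal to the number of regressors in the auxiliary regression (excluding the constant).
4. Compare it with the critical χ² value.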
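A minimal sketch of White's test for a model with two regressors, assuming NumPy and simulated data. The auxiliary regression includes the levels, squares and cross product (5 regressors), so n·R² is compared with χ²(5), whose 5% critical value is 11.07. The function name is hypothetical.

```python
import numpy as np

def white_test(y, x2, x3):
    """White's test for y = b1 + b2 x2 + b3 x3 + u; returns (n R^2, df)."""
    n = len(y)
    X = np.column_stack([np.ones(n), x2, x3])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    u2 = (y - X @ beta) ** 2                                   # step 1: squared residuals
    A = np.column_stack([np.ones(n), x2, x3, x2**2, x3**2, x2 * x3])
    g, *_ = np.linalg.lstsq(A, u2, rcond=None)                 # step 2: auxiliary regression
    r2 = 1 - np.sum((u2 - A @ g) ** 2) / np.sum((u2 - u2.mean()) ** 2)
    return n * r2, A.shape[1] - 1                              # step 3: n R^2 ~ chi-square(df)

rng = np.random.default_rng(15)
n = 300
x2 = rng.uniform(1, 10, n)
x3 = rng.normal(size=n)
y = 1 + 0.5 * x2 - 0.8 * x3 + 0.3 * x2 * rng.normal(size=n)   # error s.d. rises with x2
stat, df = white_test(y, x2, x3)
print(f"n*R^2 = {stat:.1f}, df = {df}")  # step 4: compare with 11.07 (5% critical value, df = 5)
```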

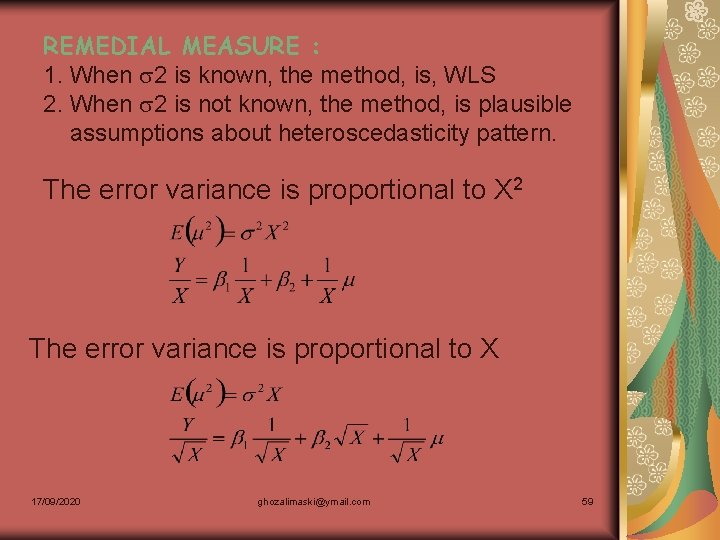

REMEDIAL MEASURES:
1. When $\sigma_i^2$ is known, the method is WLS.
2. When $\sigma_i^2$ is not known, make plausible assumptions about the heteroscedasticity pattern:
• The error variance is proportional to $X_i^2$
• The error variance is proportional to $X_i$

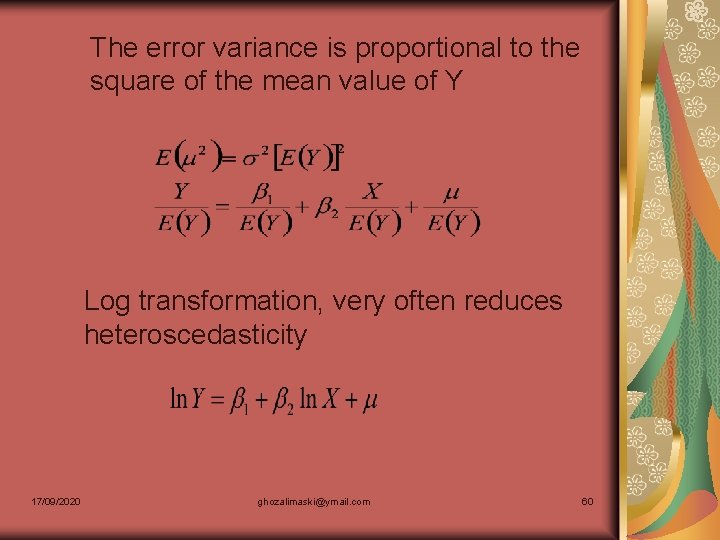

• The error variance is proportional to the square of the mean value of Y
• A log transformation very often reduces heteroscedasticity

AUTOCORRELATION
The term autocorrelation may be defined as correlation between members of a series of observations ordered in time (as in time series data) or space (as in cross-sectional data). In the regression context, the classical linear regression model assumes that such autocorrelation does not exist in the disturbances $u_i$. Symbolically, $E(u_i u_j) = 0$ for $i \neq j$.

There are several reasons why serial correlation occurs:
• Inertia
• Specification bias: excluded-variables case
• Specification bias: incorrect functional form
• Cobweb phenomenon
• Lags
• Manipulation of data

CONSEQUENCES OF AUTOCORRELATION
• The OLS estimators are still unbiased
• The residual variance $\hat\sigma^2$ is likely to underestimate the true $\sigma^2$
• The variances of the OLS estimators tend to be larger than those of efficient (GLS) estimators
• Predictions based on the OLS estimators will be inefficient

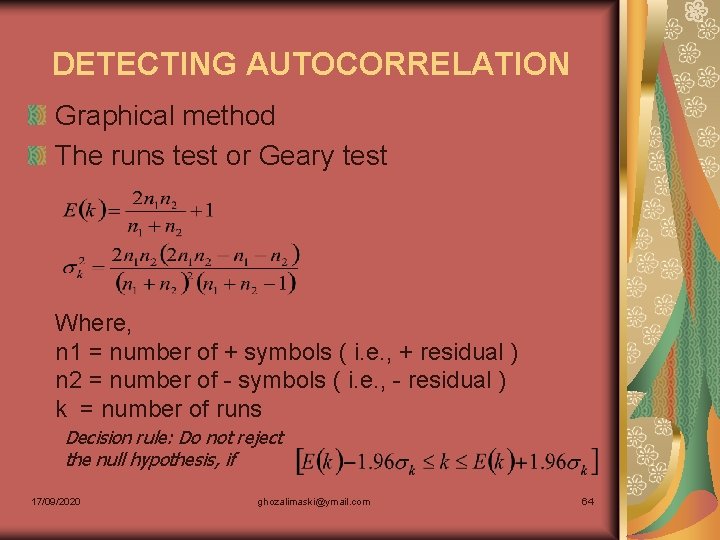

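DETECTING AUTOCORRELATION
• Graphical method
• The runs test (Geary test), where n1 = number of + symbols (i.e., + residuals), n2 = number of − symbols (i.e., − residuals), n = n1 + n2, and k = number of runs
Decision rule: do not reject the null hypothesis (of randomness) if k lies in the interval $E(k) \pm 1.96\,\sigma_k$, where $E(k) = \dfrac{2n_1 n_2}{n} + 1$ and $\sigma_k^2 = \dfrac{2n_1 n_2(2n_1 n_2 - n)}{n^2(n - 1)}$.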
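A minimal sketch of the runs (Geary) test in plain Python. It assumes the large-sample mean $2n_1n_2/n + 1$ and variance $2n_1n_2(2n_1n_2 - n)/[n^2(n-1)]$ of the number of runs k, with a 95% band of ±1.96 standard deviations. Residuals exactly equal to zero are dropped, which is an assumption. The function name is hypothetical.

```python
import math

def runs_test(residuals, z=1.96):
    """Runs (Geary) test on the signs of residuals; exact zeros are dropped."""
    signs = [r > 0 for r in residuals if r != 0]
    n1 = sum(signs)                      # number of + residuals
    n2 = len(signs) - n1                 # number of - residuals
    n = n1 + n2
    k = 1 + sum(1 for a, b in zip(signs, signs[1:]) if a != b)   # number of runs
    mean = 2 * n1 * n2 / n + 1
    var = 2 * n1 * n2 * (2 * n1 * n2 - n) / (n ** 2 * (n - 1))
    half = z * math.sqrt(var)
    return k, (mean - half, mean + half)

# Illustrative residuals: long runs of the same sign suggest positive autocorrelation
k, (lo, hi) = runs_test([1.0] * 10 + [-1.0] * 10)
print(f"runs = {k}, 95% interval = ({lo:.2f}, {hi:.2f})")
print("do not reject randomness" if lo <= k <= hi else "reject randomness")
```

Here only 2 runs occur. That is far below the interval, so randomness of the residuals is rejected.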

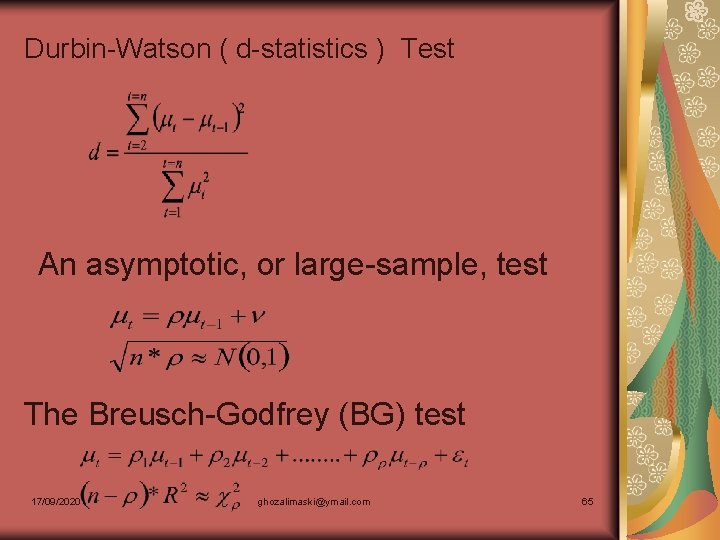

• Durbin-Watson (d statistic) test
• An asymptotic, or large-sample, test
• The Breusch-Godfrey (BG) test
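A minimal sketch, assuming NumPy and simulated AR(1) errors, of two of these tests. The first is the Durbin-Watson statistic $d = \sum_{t=2}^n (\hat u_t - \hat u_{t-1})^2 / \sum_{t=1}^n \hat u_t^2$. The second is the Breusch-Godfrey statistic $(n - p)R^2 \sim \chi^2_p$, from regressing $\hat u_t$ on the X's and p lagged residuals. Dropping the first p observations in the auxiliary regression is one common convention; another pads the missing lags with zeros. Function names are hypothetical.

```python
import numpy as np

def durbin_watson(e):
    """d = sum_{t=2}^n (e_t - e_{t-1})^2 / sum_{t=1}^n e_t^2."""
    return np.sum(np.diff(e) ** 2) / np.sum(e ** 2)

def breusch_godfrey(y, X, p):
    """BG statistic (n - p) R^2, approx. chi-square with p df under H0 of no autocorrelation.

    X includes the intercept; the auxiliary regression uses t = p+1, ..., n.
    """
    n = len(y)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    u = y - X @ beta
    lags = np.column_stack([u[p - j:n - j] for j in range(1, p + 1)])  # u_{t-1}, ..., u_{t-p}
    A = np.column_stack([X[p:], lags])
    ut = u[p:]
    g, *_ = np.linalg.lstsq(A, ut, rcond=None)
    r2 = 1 - np.sum((ut - A @ g) ** 2) / np.sum((ut - ut.mean()) ** 2)
    return (n - p) * r2

# Simulated data with AR(1) errors, rho = 0.7 (illustrative)
rng = np.random.default_rng(12)
n = 200
x = rng.uniform(0, 10, n)
eps = rng.normal(size=n)
u = np.empty(n)
u[0] = eps[0]
for t in range(1, n):
    u[t] = 0.7 * u[t - 1] + eps[t]
y = 1 + 0.5 * x + u
X = np.column_stack([np.ones(n), x])

e = y - X @ np.linalg.lstsq(X, y, rcond=None)[0]
d = durbin_watson(e)
bg = breusch_godfrey(y, X, p=1)
print(f"d = {d:.2f} (near 2 means no first-order autocorrelation)")
print(f"BG = {bg:.1f}, compare with the chi-square(1) critical value 3.841 at 5%")
```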

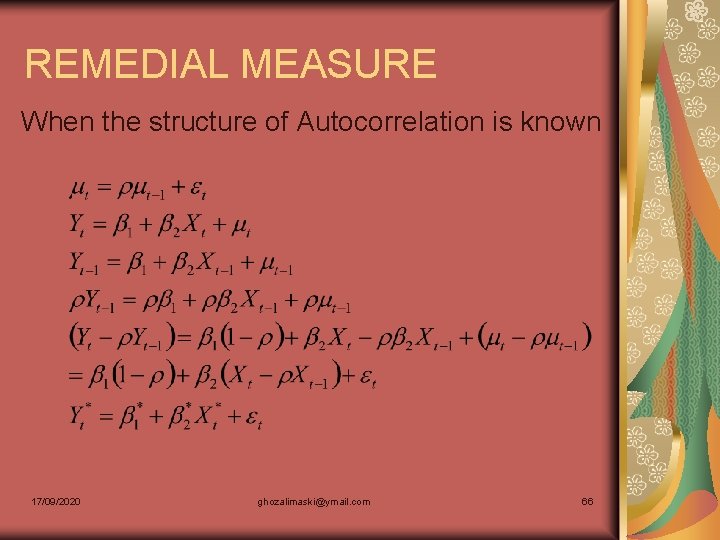

REMEDIAL MEASURES: When the structure of autocorrelation is known

REMEDIAL MEASURES: When ρ is not known
The first-difference method

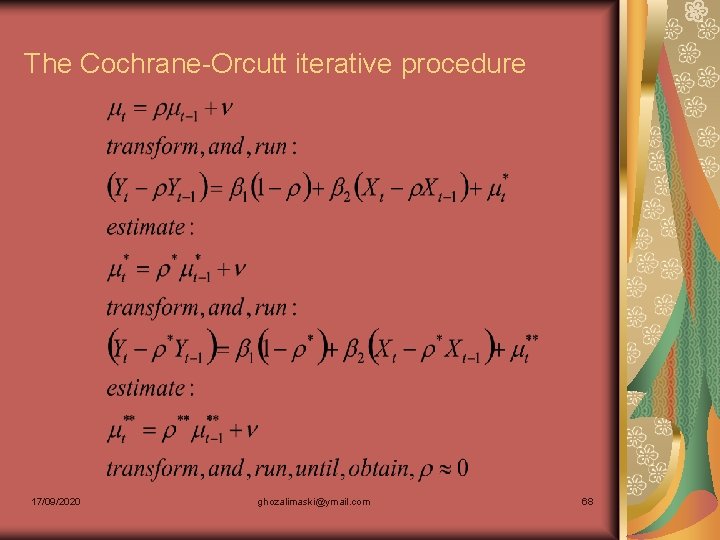

The Cochrane-Orcutt iterative procedure
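A minimal sketch of the iterative Cochrane-Orcutt procedure for $Y_t = \beta_1 + \beta_2 X_t + u_t$ with AR(1) errors, assuming NumPy and simulated data. Each round does four things: it estimates $\rho$ from the current residuals, runs OLS on the generalized differences $Y_t - \hat\rho Y_{t-1}$ and $X_t - \hat\rho X_{t-1}$, recovers $\beta_1$ from the transformed intercept $\beta_1(1 - \hat\rho)$, and repeats until $\hat\rho$ stops changing. The first observation is simply dropped, with no Prais-Winsten correction. The function name and tolerance are assumptions.

```python
import numpy as np

def cochrane_orcutt(y, x, tol=1e-6, max_iter=100):
    """Iterative Cochrane-Orcutt for y_t = b1 + b2 x_t + u_t, u_t = rho u_{t-1} + e_t."""
    X = np.column_stack([np.ones(len(y)), x])
    b, *_ = np.linalg.lstsq(X, y, rcond=None)          # start from OLS
    rho = 0.0
    for _ in range(max_iter):
        u = y - X @ b                                   # residuals of the original equation
        rho_new = np.sum(u[1:] * u[:-1]) / np.sum(u[:-1] ** 2)
        ys = y[1:] - rho_new * y[:-1]                   # generalized differences
        xs = x[1:] - rho_new * x[:-1]
        Xs = np.column_stack([np.ones(len(ys)), xs])
        bs, *_ = np.linalg.lstsq(Xs, ys, rcond=None)
        b = np.array([bs[0] / (1 - rho_new), bs[1]])    # transformed intercept is b1 (1 - rho)
        if abs(rho_new - rho) < tol:
            break
        rho = rho_new
    return b, rho_new

rng = np.random.default_rng(13)
n = 500
x = rng.uniform(0, 10, n)
eps = rng.normal(size=n)
u = np.empty(n)
u[0] = eps[0]
for t in range(1, n):
    u[t] = 0.6 * u[t - 1] + eps[t]
y = 1.0 + 2.0 * x + u
b, rho = cochrane_orcutt(y, x)
print(f"rho = {rho:.3f}, b1 = {b[0]:.3f}, b2 = {b[1]:.3f}")
```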

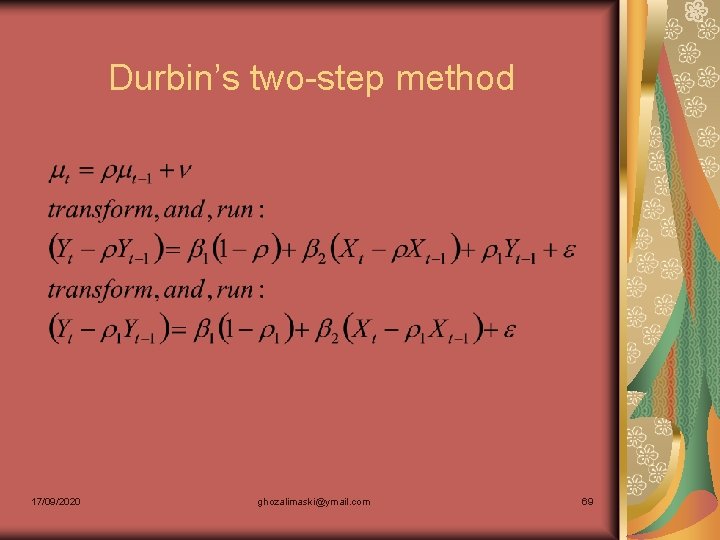

Durbin's two-step method

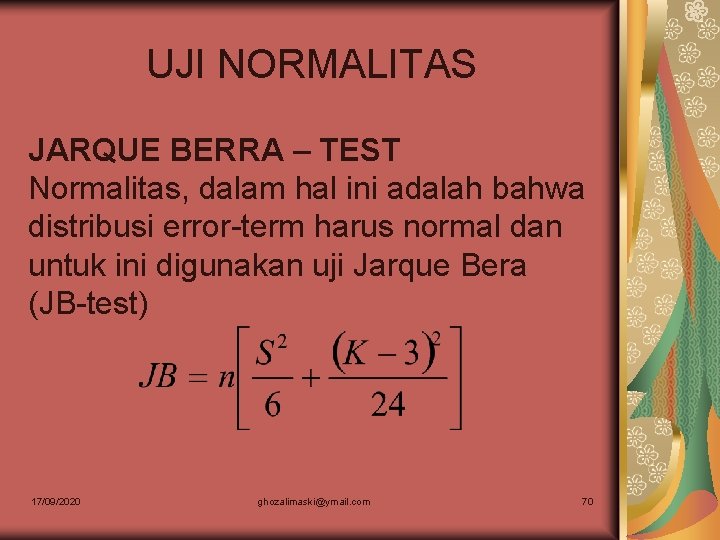

NORMALITY TEST: JARQUE-BERA TEST
Normality here means that the error term must be normally distributed; the Jarque-Bera test (JB test) is used to check this.
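A minimal sketch of the JB statistic, assuming NumPy and simulated residuals. It uses $JB = n\left[\dfrac{S^2}{6} + \dfrac{(K - 3)^2}{24}\right]$, where S is skewness and K is kurtosis. Under normality JB is asymptotically χ² with 2 df, so values above about 5.99 reject normality at 5%. The function name is hypothetical.

```python
import numpy as np

def jarque_bera(e):
    """JB = n [S^2/6 + (K - 3)^2/24]; returns (JB, skewness, kurtosis)."""
    e = np.asarray(e, dtype=float)
    e = e - e.mean()
    m2 = np.mean(e ** 2)
    s = np.mean(e ** 3) / m2 ** 1.5      # skewness (0 for a normal distribution)
    k = np.mean(e ** 4) / m2 ** 2        # kurtosis (3 for a normal distribution)
    n = len(e)
    return n * (s ** 2 / 6 + (k - 3) ** 2 / 24), s, k

rng = np.random.default_rng(18)
for name, sample in [("normal", rng.normal(size=500)), ("exponential", rng.exponential(size=500))]:
    jb, s, k = jarque_bera(sample)
    print(f"{name:>11}: S = {s:.2f}, K = {k:.2f}, JB = {jb:.1f}")
# Reject normality at 5% if JB exceeds 5.99, the chi-square(2) critical value.
```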
