Efficient Semantic Deduction and Approximate Matching over Compact Parse Forests

- Slides: 32

Efficient Semantic Deduction and Approximate Matching over Compact Parse Forests

Roy Bar-Haim¹, Jonathan Berant², Ido Dagan¹, Iddo Greental², Shachar Mirkin¹, Eyal Shnarch¹, Idan Szpektor¹

¹ Bar-Ilan University, ² Tel-Aviv University

Our RTE-3 Recipe

1. Represent t and h as parse trees
2. Try to prove h from t based on available knowledge
3. Measure the “distance” from h to the generated consequents of t
4. Determine entailment based on distance
5. Cross your fingers …

I forgot one thing...

6. Wait a few hours...

This year: hours → minutes

Textual Entailment – Where do we want to get to?

- Long term goal: robust semantic inference engine
  - To be used as a generic component in text understanding applications
  - Encapsulating all required inferences
- Based on compact, well defined formalism:
  - Knowledge representation
  - Inference mechanisms

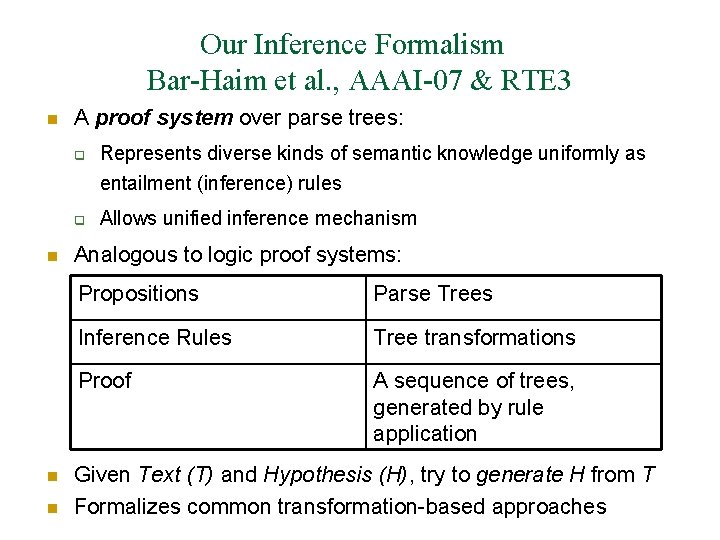

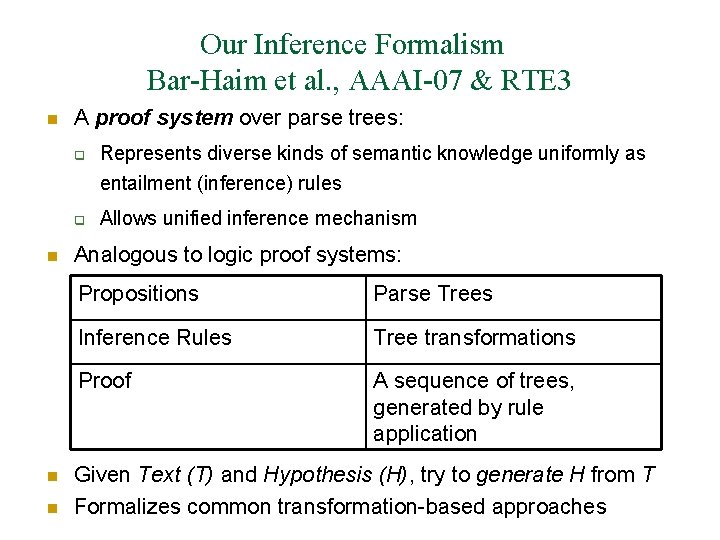

Our Inference Formalism

Bar-Haim et al., AAAI-07 & RTE-3

- A proof system over parse trees:
  - Represents diverse kinds of semantic knowledge uniformly as entailment (inference) rules
  - Allows unified inference mechanism
- Analogous to logic proof systems:
  - Propositions ↔ Parse trees
  - Inference rules ↔ Tree transformations
  - Proof ↔ A sequence of trees, generated by rule application
- Given Text (T) and Hypothesis (H), try to generate H from T
- Formalizes common transformation-based approaches

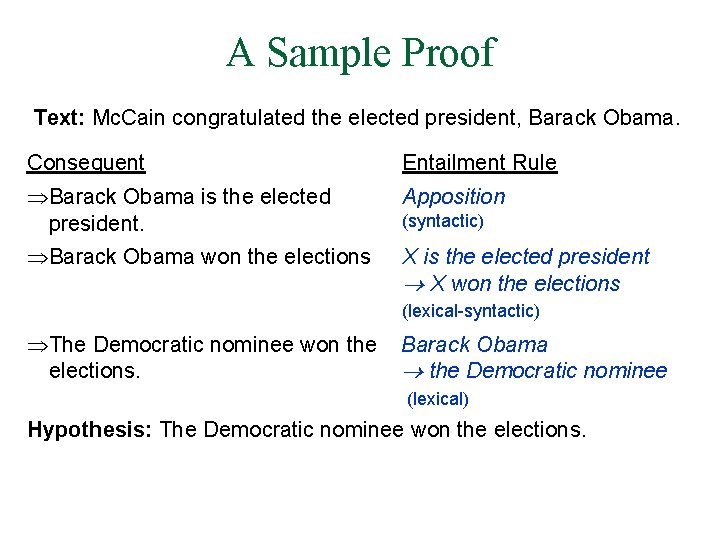

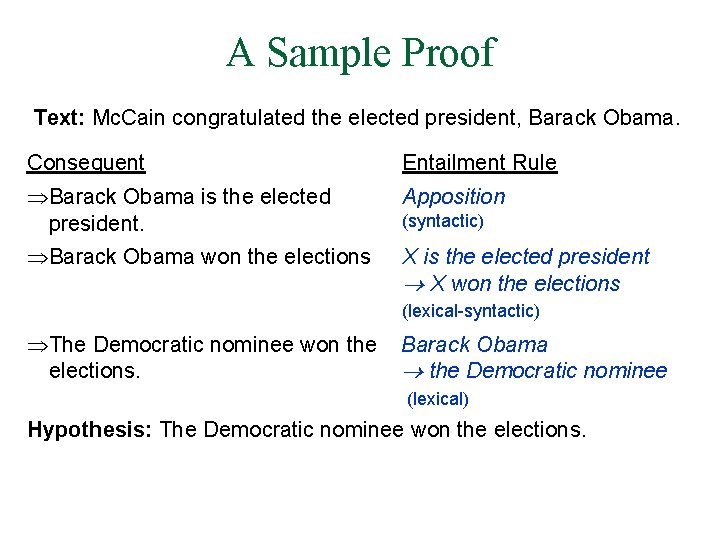

A Sample Proof

Text: McCain congratulated the elected president, Barack Obama.

Consequents and the entailment rules that generate them:

- Barack Obama is the elected president. Rule: Apposition (syntactic)
- Barack Obama won the elections. Rule: X is the elected president → X won the elections (lexical-syntactic)
- The Democratic nominee won the elections. Rule: Barack Obama → the Democratic nominee (lexical)

Hypothesis: The Democratic nominee won the elections.

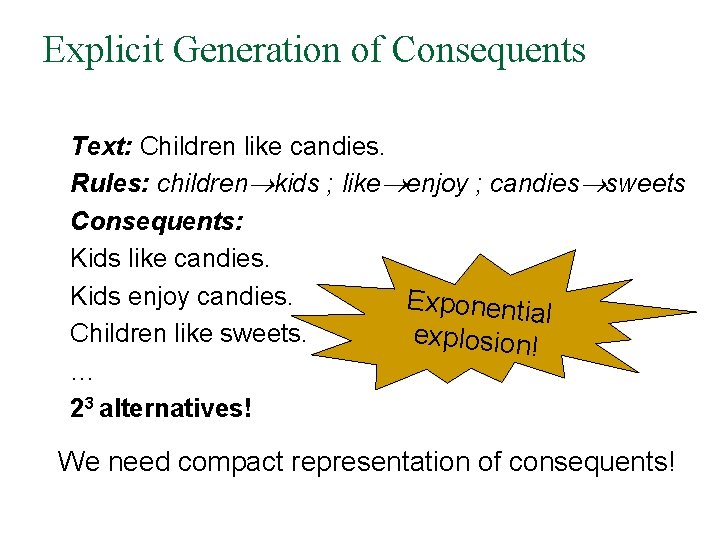

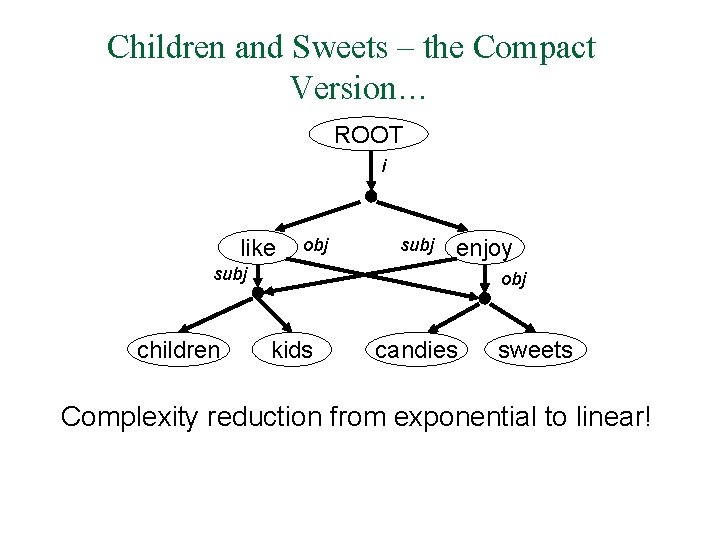

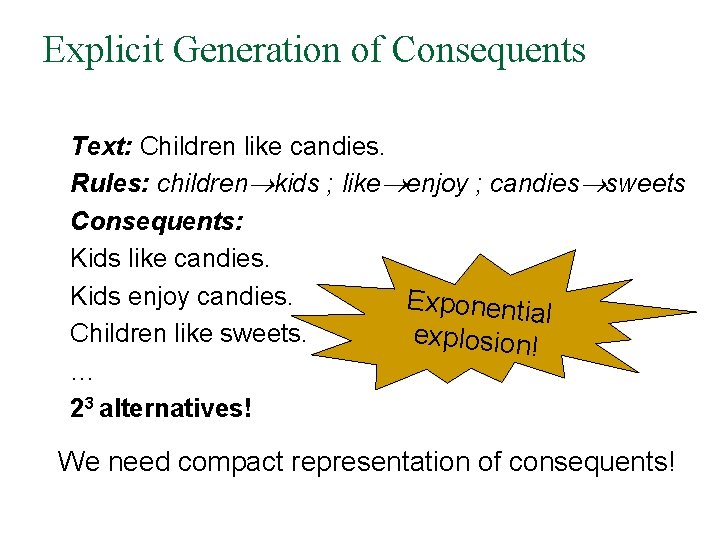

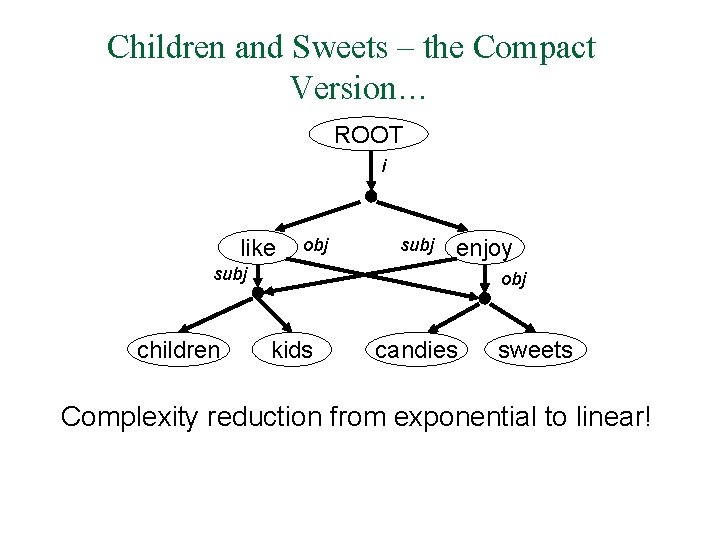

Explicit Generation of Consequents

Text: Children like candies.

Rules: children → kids; like → enjoy; candies → sweets

Consequents:

- Kids like candies.
- Kids enjoy candies.
- Children like sweets.
- …

Exponential explosion! 2³ alternatives!

We need compact representation of consequents!
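To make the blow-up concrete, here is a minimal sketch of explicit consequent generation for the example above. It treats the sentence as a flat word list rather than a parse tree, and the function name `explicit_consequents` is only illustrative. With three independent lexical rules, each word is either kept or replaced, so the number of generated sentences is 2³ = 8 and doubles with every additional applicable rule.

```python
from itertools import product

# Lexical rules from the slide: each matching word may be kept or replaced.
RULES = {"children": "kids", "like": "enjoy", "candies": "sweets"}

def explicit_consequents(words, rules):
    """Enumerate every sentence obtainable by applying any subset of the rules."""
    options = [(w, rules[w]) if w in rules else (w,) for w in words]
    return [" ".join(choice) for choice in product(*options)]

consequents = explicit_consequents(["children", "like", "candies"], RULES)
print(len(consequents))  # 8 sentences, including the original text
```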

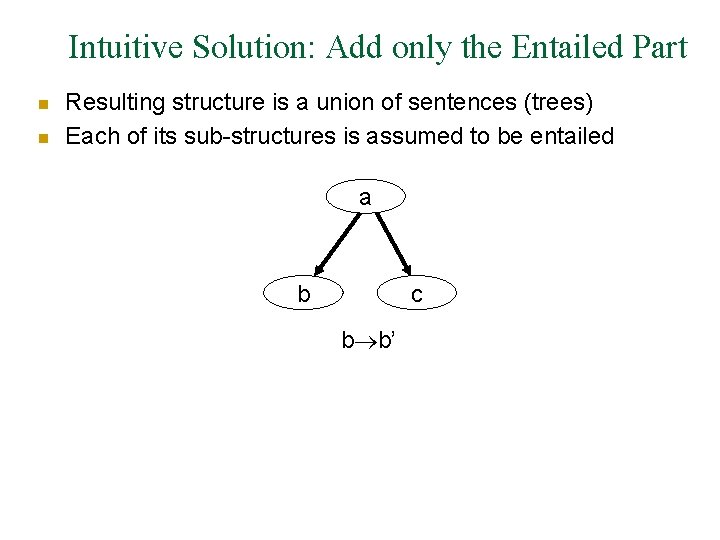

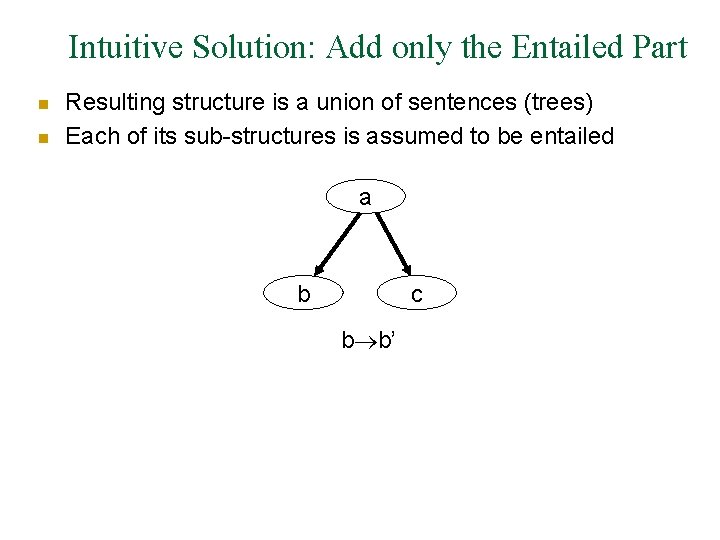

Intuitive Solution: Add only the Entailed Part

- Resulting structure is a union of sentences (trees)
- Each of its sub-structures is assumed to be entailed

[Figure: a tree over nodes a, b, c, with b′ added for the rule b → b′]

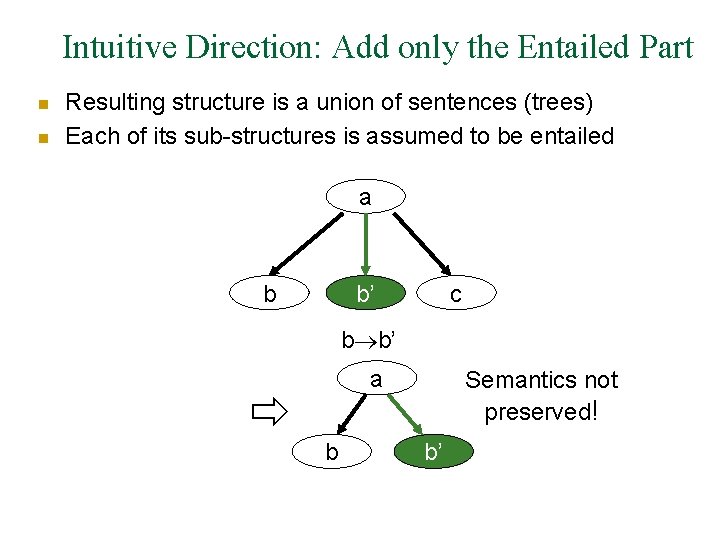

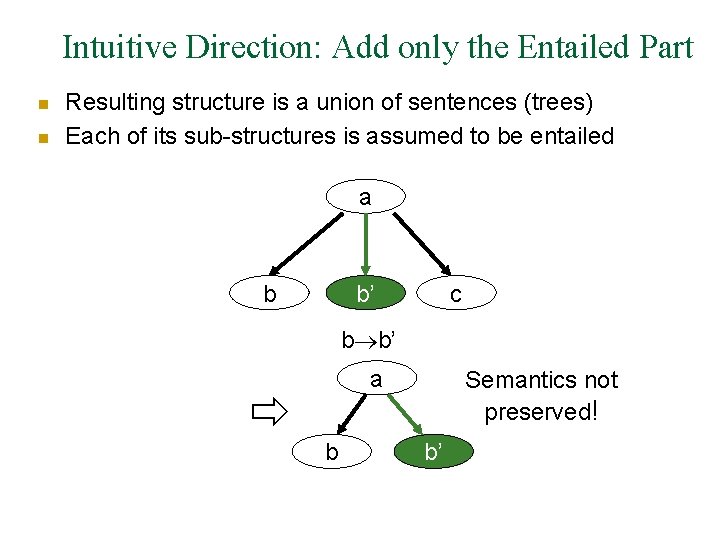

Intuitive Direction: Add only the Entailed Part

- Resulting structure is a union of sentences (trees)
- Each of its sub-structures is assumed to be entailed

[Figure: the union structure over nodes a, b, b′, c and the sub-structures it contains]

Semantics not preserved!

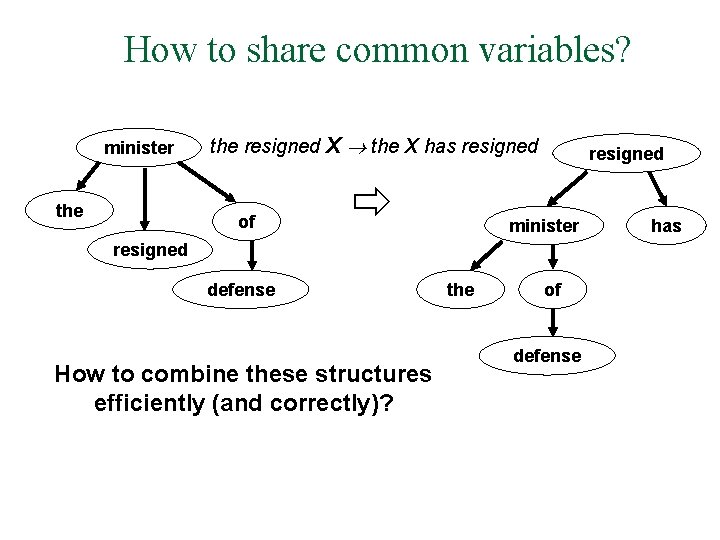

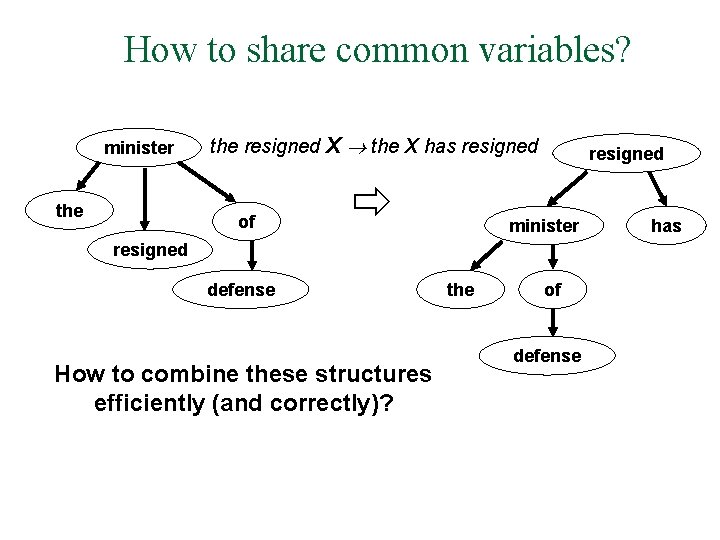

How to share common variables?

[Figure: the rule "the resigned X → the X has resigned" applied to "the resigned minister of defense"]

How to combine these structures efficiently (and correctly)?

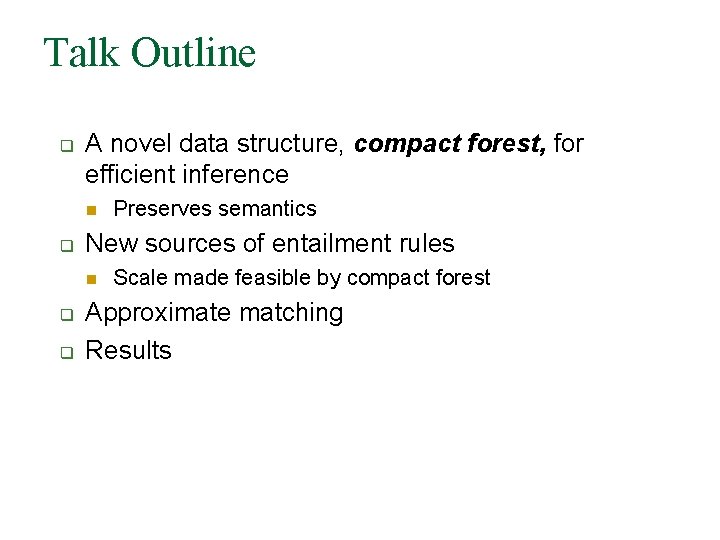

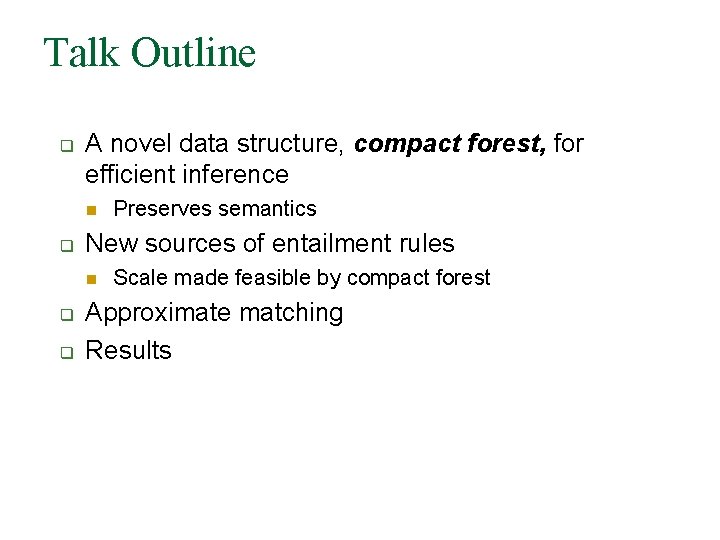

Talk Outline

- A novel data structure, compact forest, for efficient inference
  - Preserves semantics
- New sources of entailment rules
  - Scale made feasible by compact forest
- Approximate matching
- Results

The Compact Forest

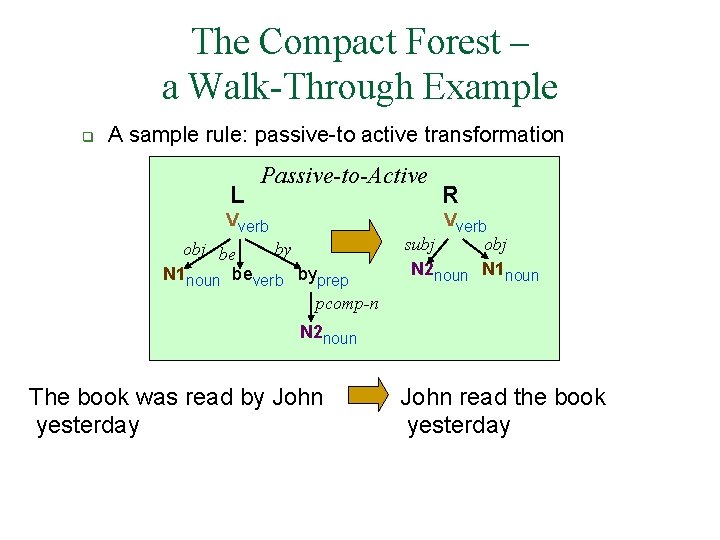

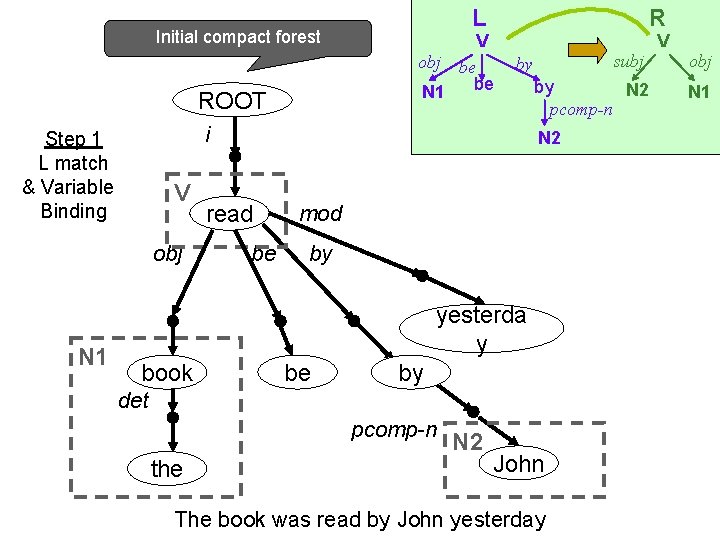

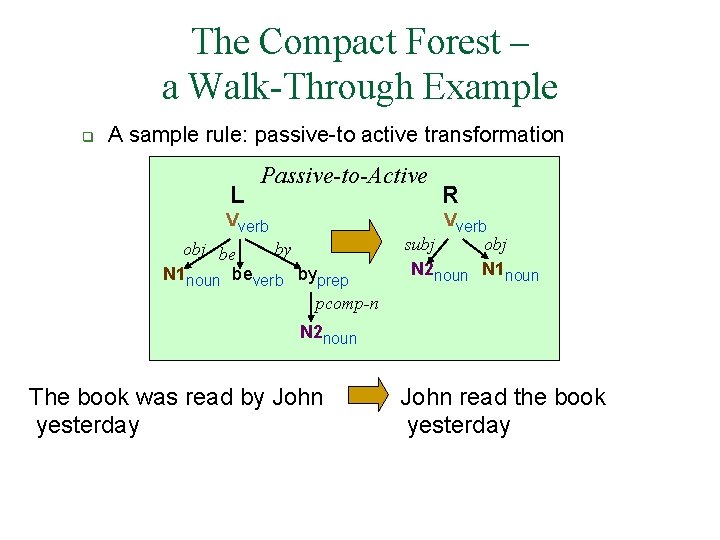

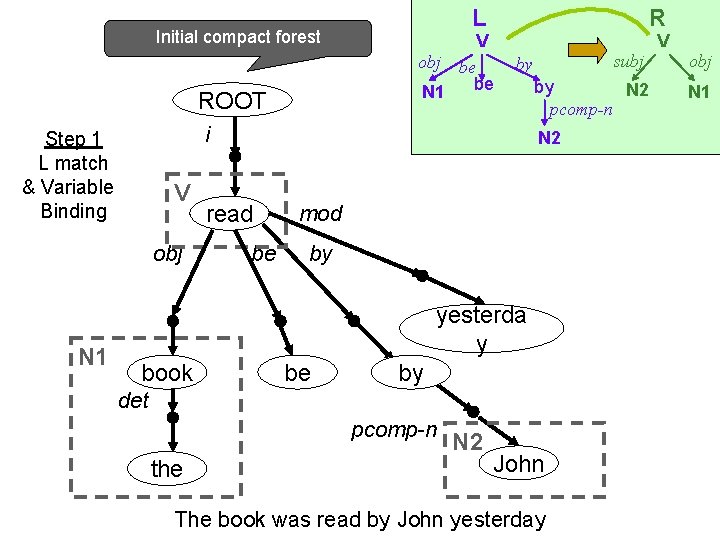

The Compact Forest – a Walk-Through Example

- A sample rule: passive-to-active transformation
  - L: V (verb) with children obj: N1 (noun), be: be (verb), by: by (prep); by has child pcomp-n: N2 (noun)
  - R: V (verb) with children subj: N2 (noun), obj: N1 (noun)
- Example: The book was read by John yesterday → John read the book yesterday
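As a point of comparison for the walk-through, the following sketch applies the passive-to-active rule the explicit way, by building a new tree. It is not the compact-forest procedure. The `Node` class, the one-child-per-relation simplification, and the function name `passive_to_active` are assumptions made for illustration, and part-of-speech checks on N1 and N2 are omitted for brevity.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    word: str
    pos: str
    # Assumption for brevity: at most one child per dependency relation.
    children: dict = field(default_factory=dict)

def passive_to_active(v):
    """Apply the rule at node v; return the derived tree, or None if L does not match."""
    obj, be, by = (v.children.get(rel) for rel in ("obj", "be", "by"))
    if v.pos != "verb" or obj is None or be is None or by is None:
        return None
    n2 = by.children.get("pcomp-n")
    if n2 is None:
        return None
    # Modifiers of V that are not part of L (e.g. "yesterday") carry over to R.
    rest = {rel: c for rel, c in v.children.items() if rel not in ("obj", "be", "by")}
    return Node(v.word, v.pos, {"subj": n2, "obj": obj, **rest})

# "The book was read by John yesterday"
book = Node("book", "noun", {"det": Node("the", "det")})
by_john = Node("by", "prep", {"pcomp-n": Node("John", "noun")})
passive = Node("read", "verb", {
    "obj": book, "be": Node("be", "verb"), "by": by_john,
    "mod": Node("yesterday", "adv"),
})

active = passive_to_active(passive)
print(sorted(active.children))  # ['mod', 'obj', 'subj']
```

Every application of this kind yields a separate full tree, which is the cost that the compact forest is meant to avoid.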

Initial compact forest: the dependency tree of "The book was read by John yesterday" under ROOT

Step 1: L match & variable binding

[Figure: L is matched in the forest, binding V to read, N1 to book, and N2 to John]

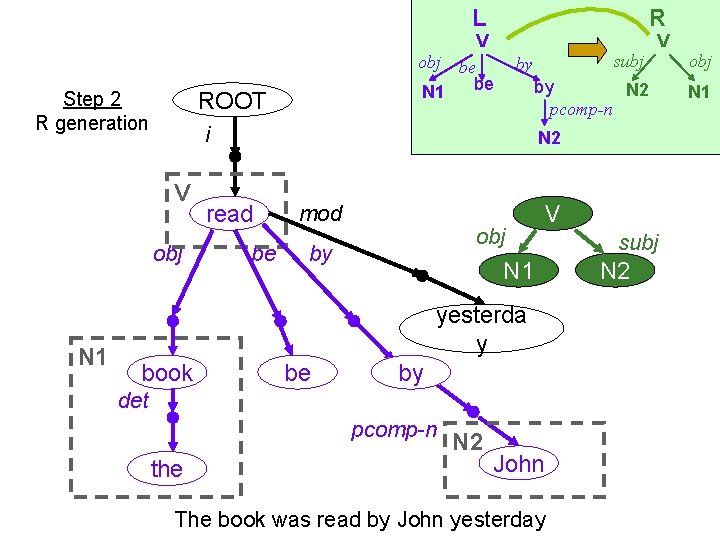

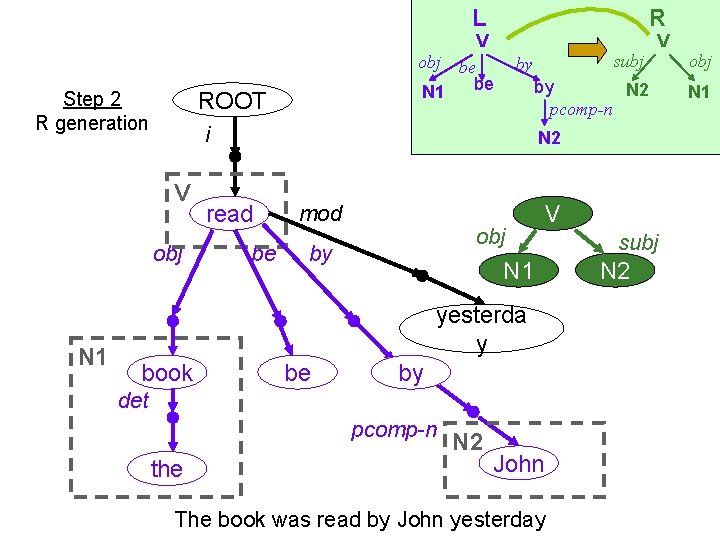

Step 2: R generation

[Figure: the R template (V with subj N2 and obj N1) is generated alongside the forest for "The book was read by John yesterday"]

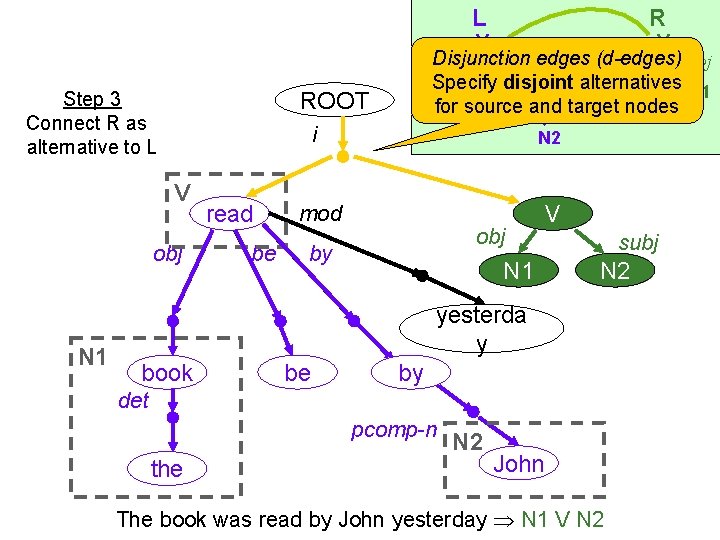

Step 3: Connect R as alternative to L

Disjunction edges (d-edges) specify disjoint alternatives for source and target nodes.

[Figure: R attached under ROOT as an alternative to L in the forest for "The book was read by John yesterday"]

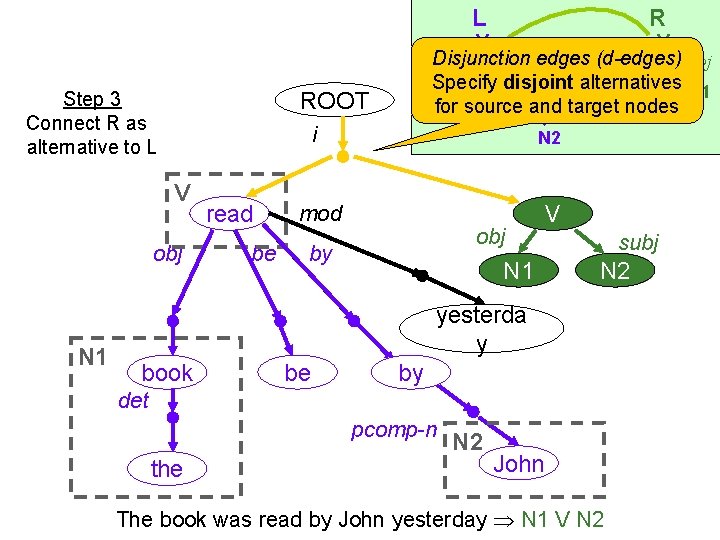

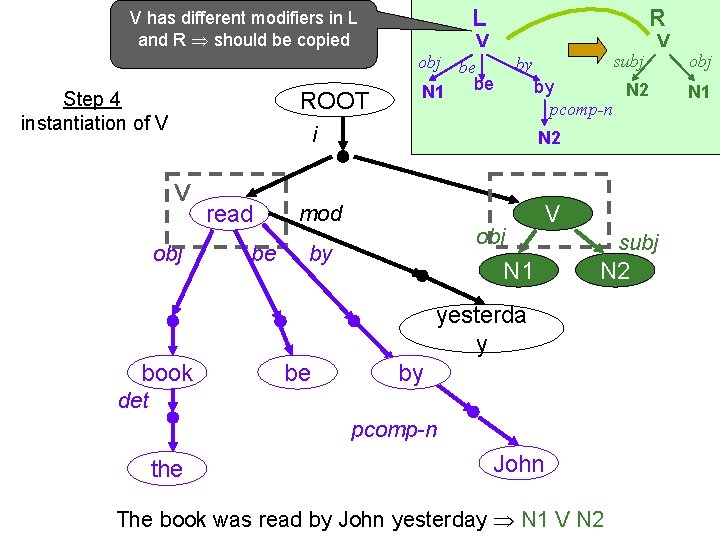

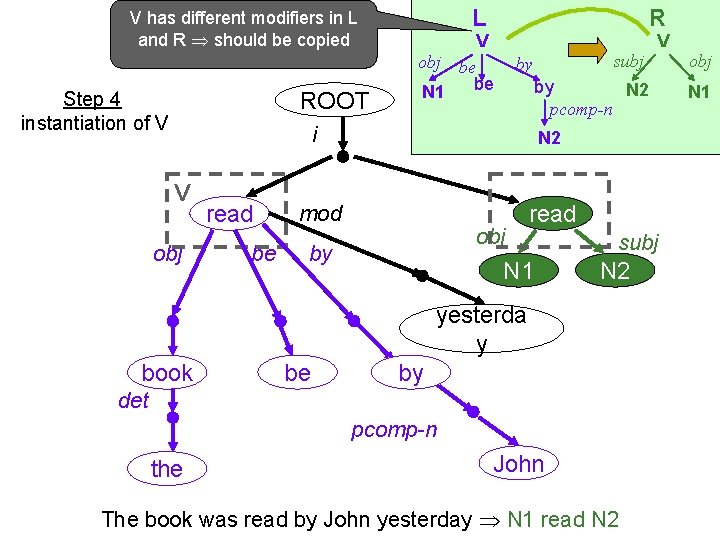

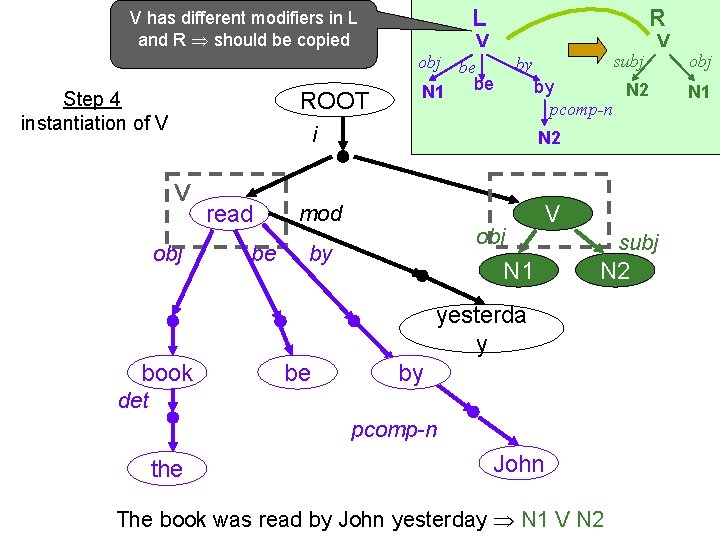

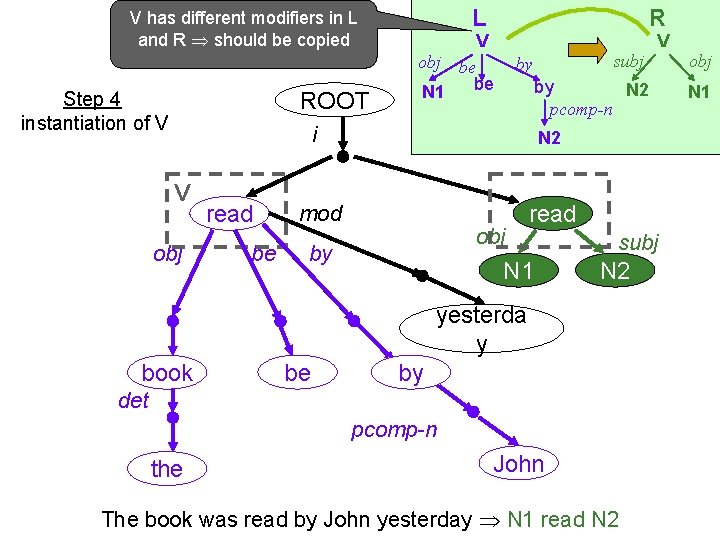

Step 4: instantiation of V

V has different modifiers in L and R → should be copied

[Figure: V in R is replaced by a copy of read]

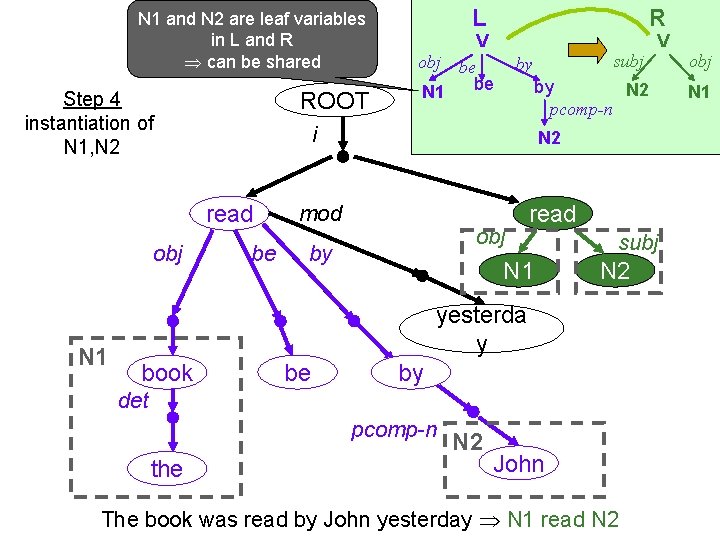

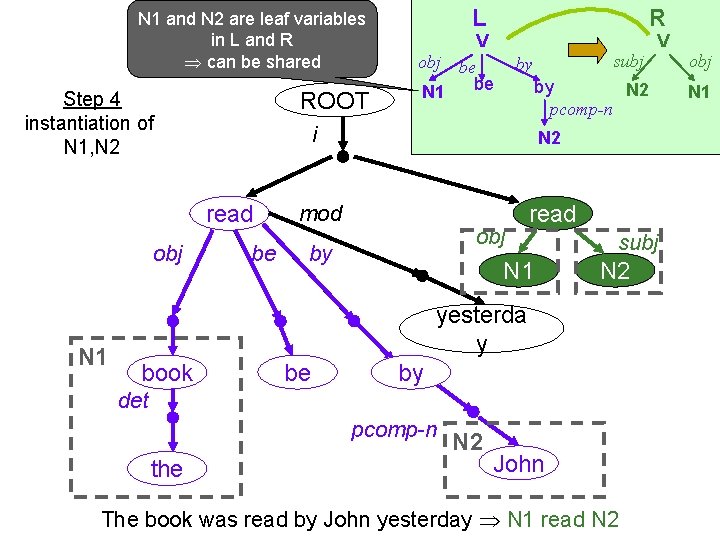

Step 4: instantiation of N1, N2

N1 and N2 are leaf variables in L and R → can be shared

[Figure: R shares the book and John nodes with L; the forest now also contains "John read the book"]

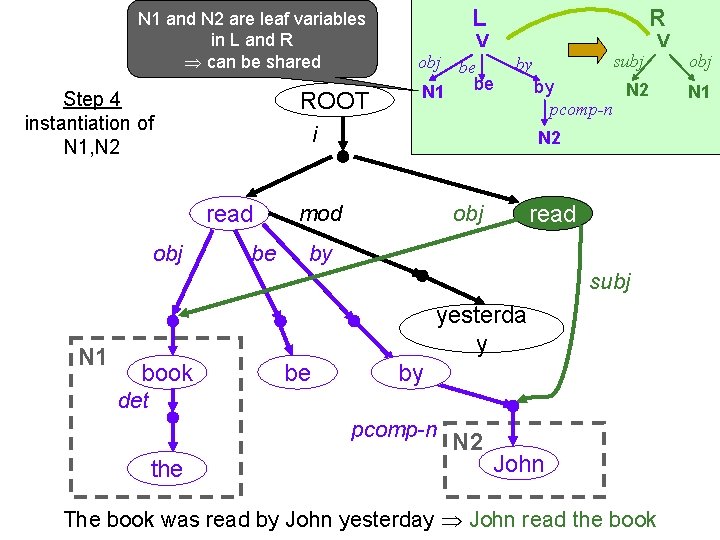

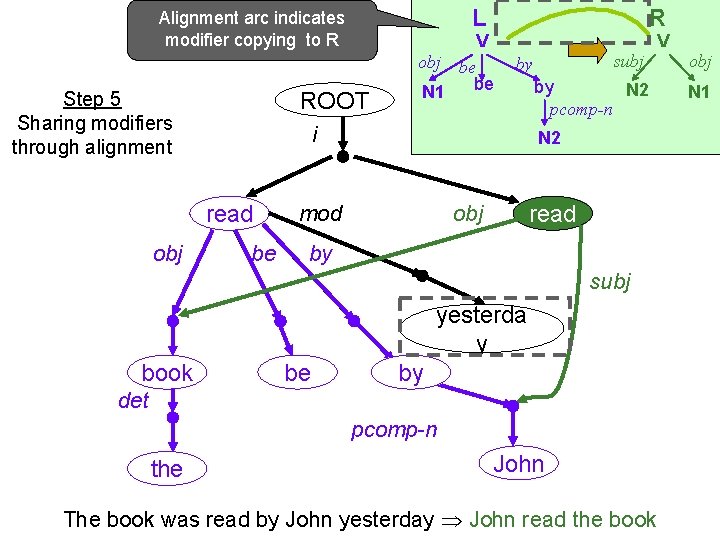

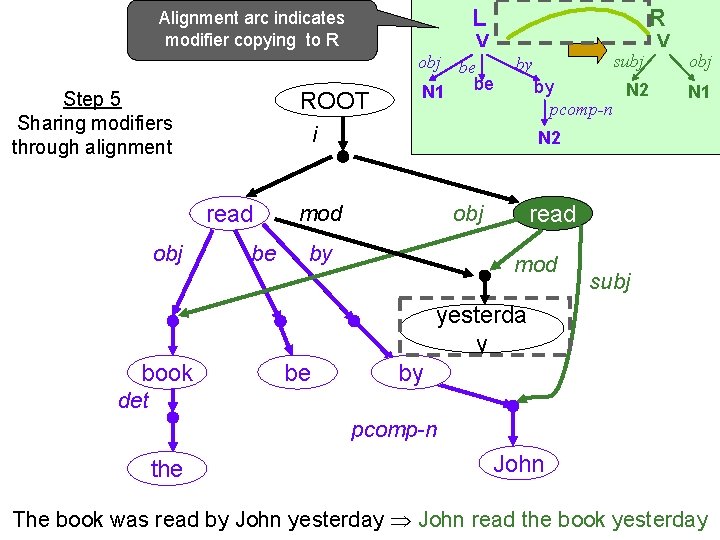

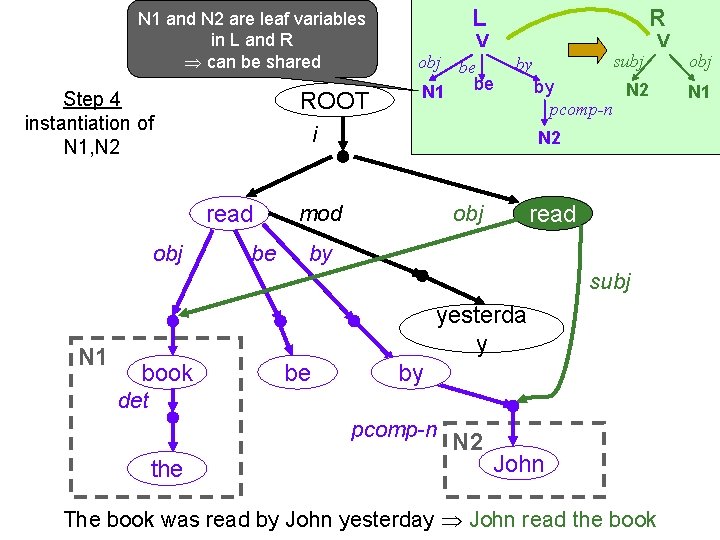

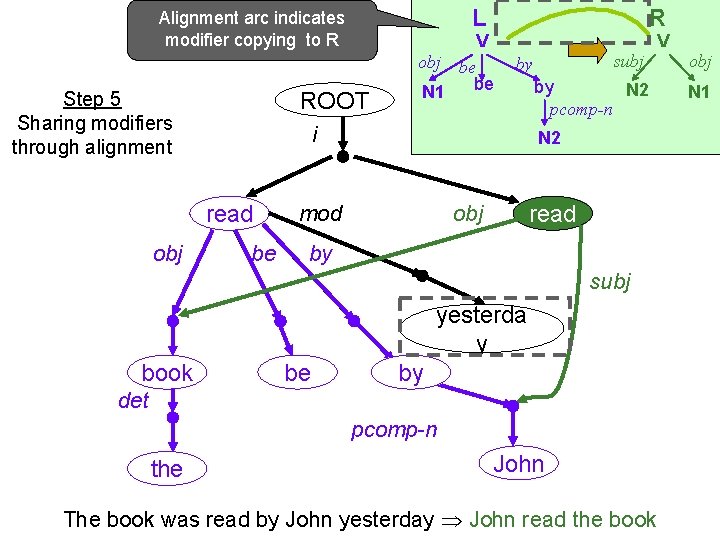

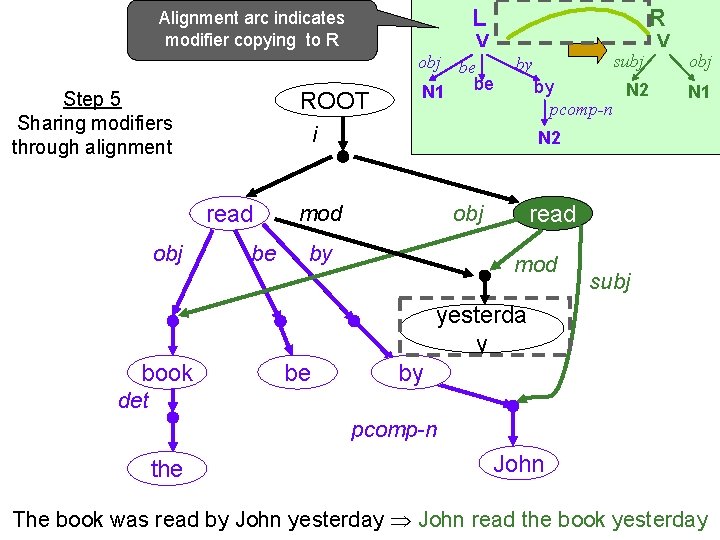

Step 5: Sharing modifiers through alignment

Alignment arc indicates modifier copying to R

[Figure: the mod edge to yesterday is shared with R; the forest now also contains "John read the book yesterday"]

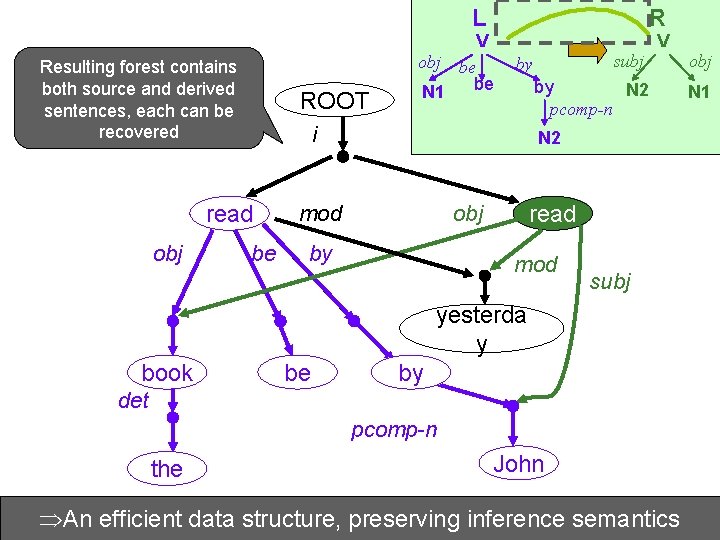

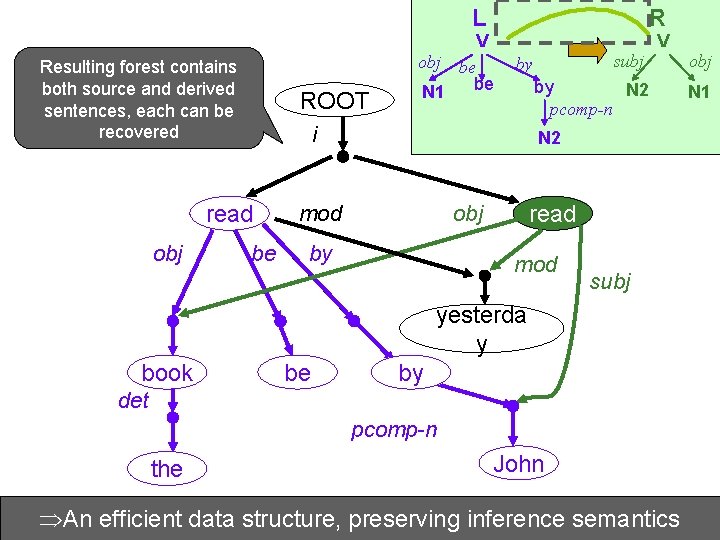

Resulting forest contains both source and derived sentences, each can be recovered

[Figure: final compact forest containing "The book was read by John yesterday" and "John read the book yesterday"]

An efficient data structure for inference, preserving semantics

Children and Sweets – the Compact Version…

[Figure: compact forest under ROOT with the alternatives like / enjoy, whose subj edges lead to children / kids and whose obj edges lead to candies / sweets]

Complexity reduction from exponential to linear!
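The size argument can be checked with a much-simplified stand-in for the compact forest, in which every dependency slot holds a shared list of alternative nodes. This is only a sketch: real d-edges are more general, and the names `Node`, `slots`, `count_trees`, and `count_nodes` are assumptions introduced here.

```python
from dataclasses import dataclass, field
from math import prod

@dataclass
class Node:
    word: str
    # relation -> list of alternative child nodes (a simplified d-edge)
    slots: dict = field(default_factory=dict)

def count_trees(alternatives):
    """Number of distinct trees represented by a list of alternative nodes."""
    return sum(prod(count_trees(a) for a in n.slots.values()) for n in alternatives)

def count_nodes(alternatives, seen=None):
    """Number of nodes actually stored; shared nodes are counted once."""
    seen = set() if seen is None else seen
    for n in alternatives:
        if id(n) not in seen:
            seen.add(id(n))
            for child_alternatives in n.slots.values():
                count_nodes(child_alternatives, seen)
    return len(seen)

subj = [Node("children"), Node("kids")]
obj = [Node("candies"), Node("sweets")]
root = [Node("like", {"subj": subj, "obj": obj}),
        Node("enjoy", {"subj": subj, "obj": obj})]

print(count_trees(root), count_nodes(root))  # 8 trees stored in 6 nodes
```

In this toy structure each additional rule adds one node while doubling the number of represented sentences, which mirrors the exponential-to-linear claim on the slide.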

New Knowledge Sources

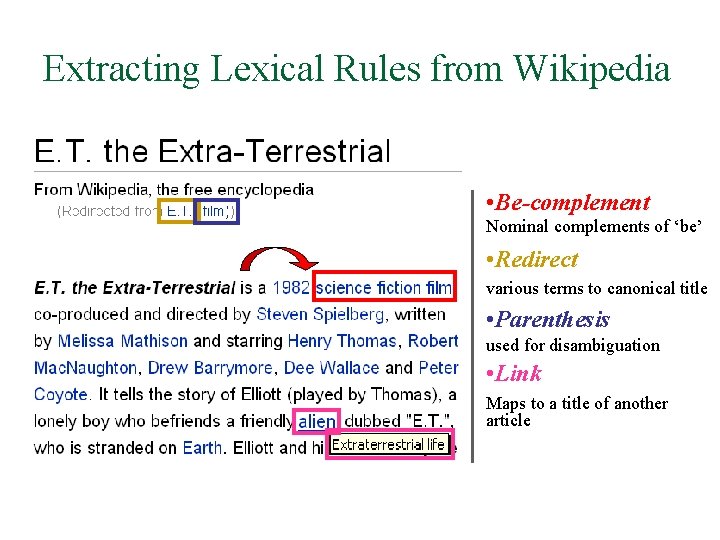

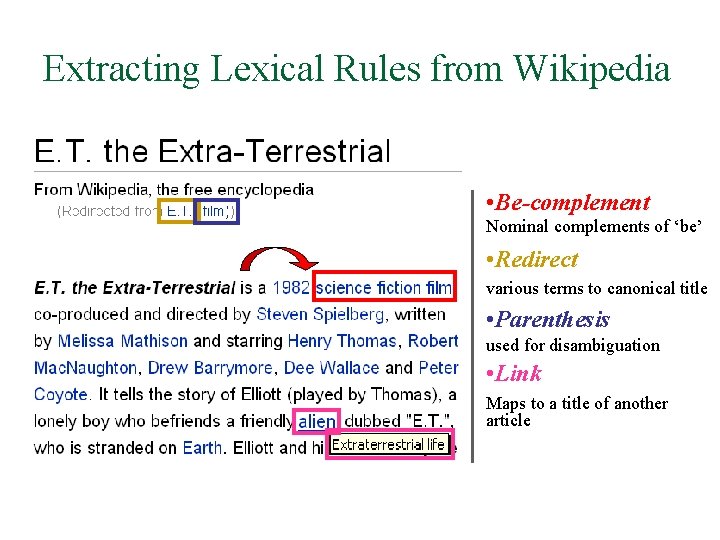

Extracting Lexical Rules from Wikipedia

- Be-complement: nominal complements of ‘be’
- Redirect: various terms to canonical title
- Parenthesis: used for disambiguation
- Link: maps to a title of another article
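Two of these extraction types are simple enough to sketch on article titles alone. The code below is a hypothetical illustration, not the extraction procedure used by the authors: the function names, the rule direction (left-hand side entails right-hand side), and the sample redirect table are all assumptions.

```python
import re

def parenthesis_rule(title):
    """'Term (disambiguator)' -> candidate rule (Term, disambiguator), else None."""
    m = re.fullmatch(r"(.+?)\s*\((.+)\)", title)
    return (m.group(1), m.group(2)) if m else None

def redirect_rules(redirects):
    """Each redirect 'term -> canonical title' gives one candidate rule."""
    return [(term, canonical) for term, canonical in redirects.items()]

print(parenthesis_rule("Graceland (album)"))   # ('Graceland', 'album')
print(parenthesis_rule("Paris"))               # None

# Illustrative redirect table, not taken from a Wikipedia dump.
print(redirect_rules({"UK": "United Kingdom"}))  # [('UK', 'United Kingdom')]
```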

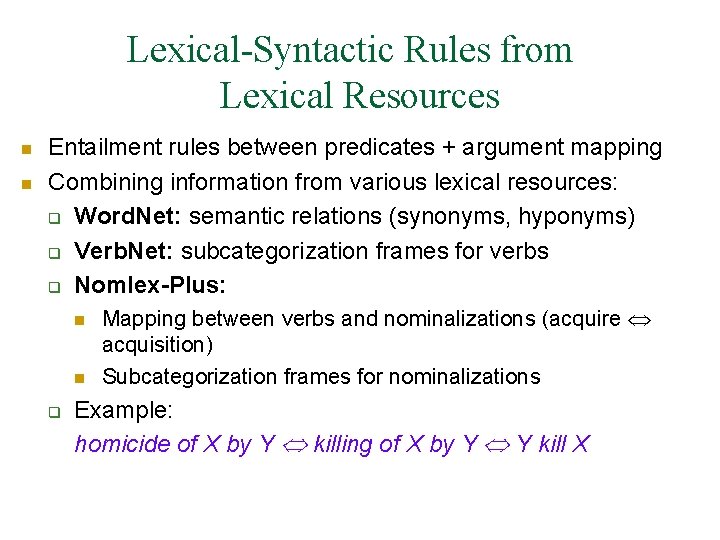

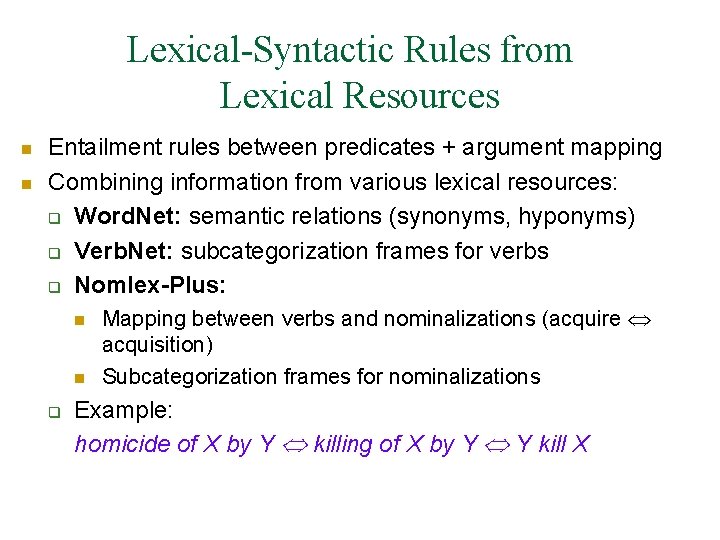

Lexical-Syntactic Rules from Lexical Resources

- Entailment rules between predicates + argument mapping
- Combining information from various lexical resources:
  - WordNet: semantic relations (synonyms, hyponyms)
  - VerbNet: subcategorization frames for verbs
  - Nomlex-Plus:
    - Mapping between verbs and nominalizations (acquire ↔ acquisition)
    - Subcategorization frames for nominalizations
- Example: homicide of X by Y → killing of X by Y → Y kill X

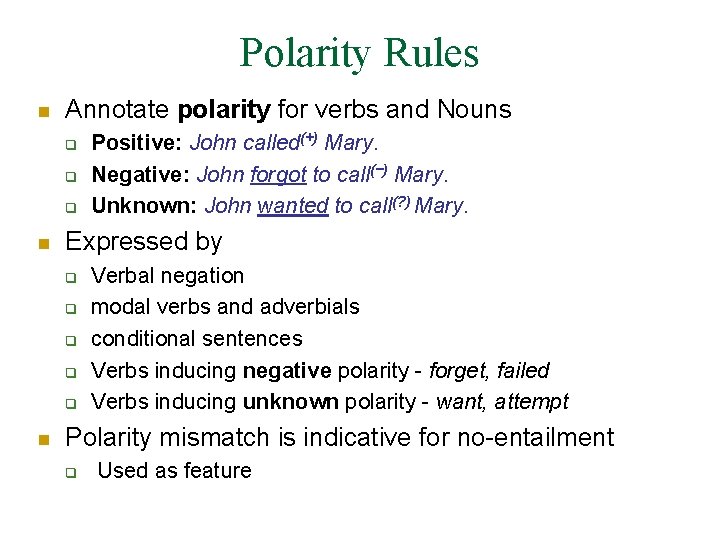

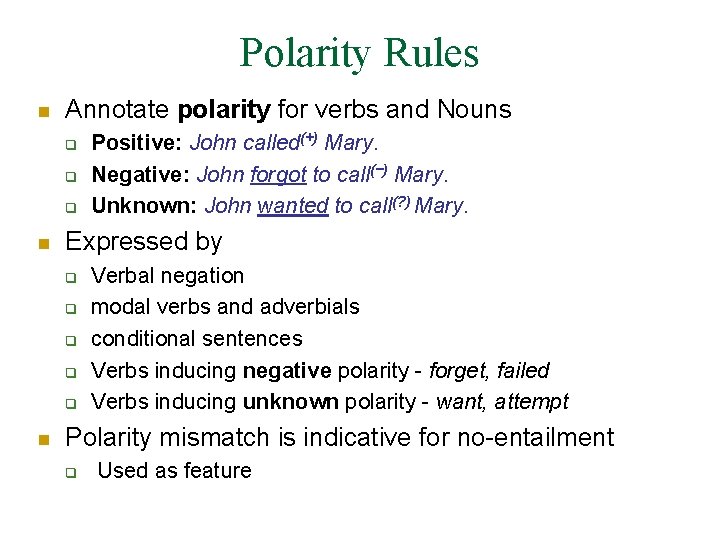

Polarity Rules

- Annotate polarity for verbs and nouns
  - Positive: John called(+) Mary.
  - Negative: John forgot to call(−) Mary.
  - Unknown: John wanted to call(?) Mary.
- Expressed by
  - Verbal negation
  - Modal verbs and adverbials
  - Conditional sentences
  - Verbs inducing negative polarity: forget, fail
  - Verbs inducing unknown polarity: want, attempt
- Polarity mismatch is indicative of no-entailment
  - Used as a feature
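A toy version of the annotation can be written over verb lemmas only. The two verb lists come from the slide. Everything else is an assumption made for this sketch: the function names, the treatment of a negative-polarity verb as flipping the current polarity, and the definition of mismatch as any difference between the two annotations.

```python
NEGATIVE_VERBS = {"forget", "fail"}    # induce negative polarity
UNKNOWN_VERBS = {"want", "attempt"}    # induce unknown polarity

def polarity(embedding_verbs, negated=False):
    """Polarity of a predicate, given the lemmas of the verbs that embed it."""
    pol = "-" if negated else "+"
    for verb in embedding_verbs:
        if verb in UNKNOWN_VERBS:
            return "?"
        if verb in NEGATIVE_VERBS:
            pol = "+" if pol == "-" else "-"  # assumption: negative contexts flip
    return pol

def polarity_mismatch(text_polarity, hyp_polarity):
    """Feature value: True when the two annotations differ."""
    return text_polarity != hyp_polarity

print(polarity([]))            # '+'  John called Mary.
print(polarity(["forget"]))    # '-'  John forgot to call Mary.
print(polarity(["want"]))      # '?'  John wanted to call Mary.
print(polarity_mismatch(polarity(["forget"]), polarity([])))  # True
```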

Adding Approximate Matching

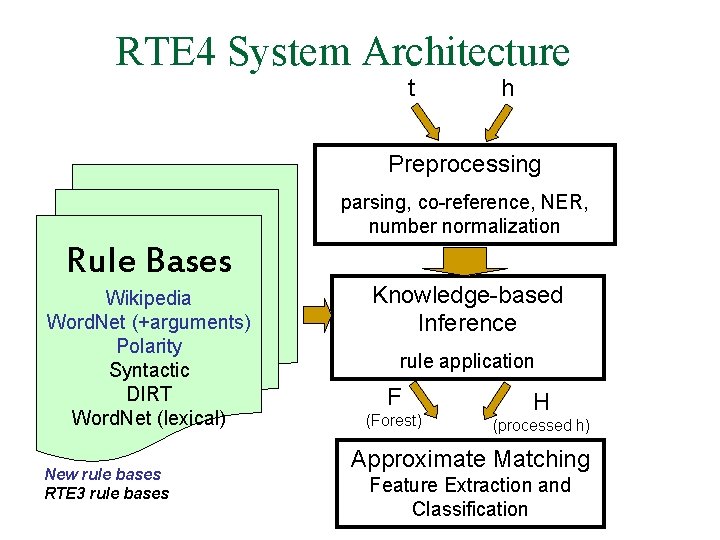

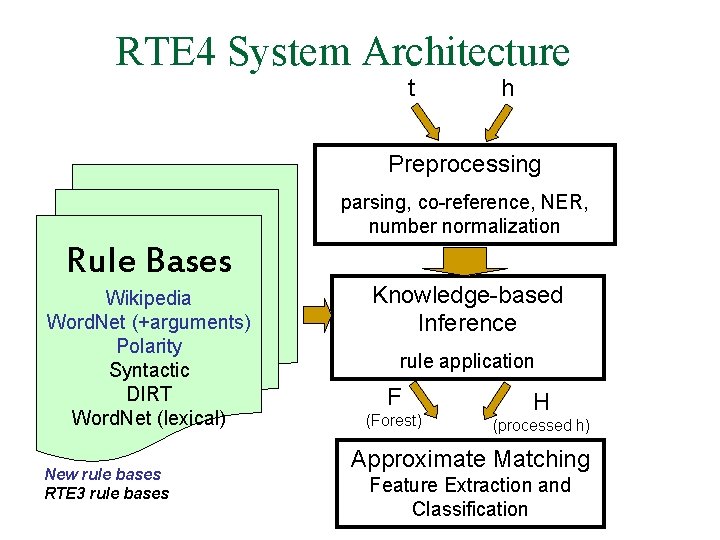

RTE-4 System Architecture

- Input: t, h
- Preprocessing: parsing, co-reference, NER, number normalization
- Knowledge-based inference: rule application, producing F (forest) and H (processed h)
  - New rule bases: Wikipedia, WordNet (+arguments), Polarity
  - RTE-3 rule bases: Syntactic, DIRT, WordNet (lexical)
- Approximate matching
- Feature extraction and classification

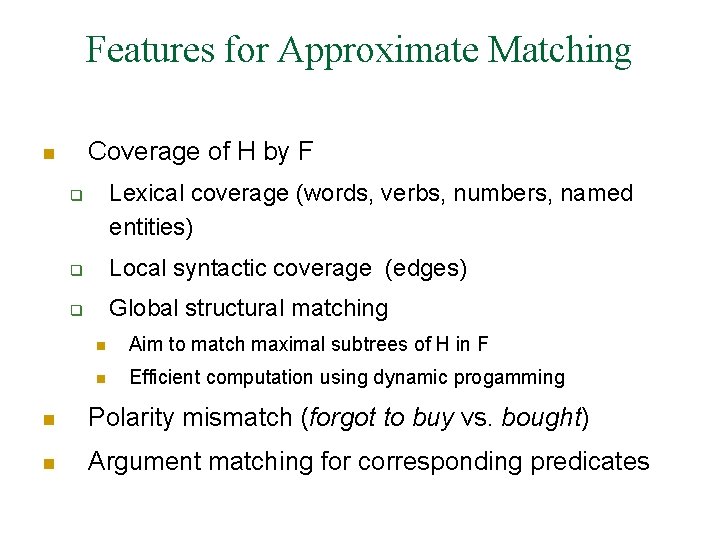

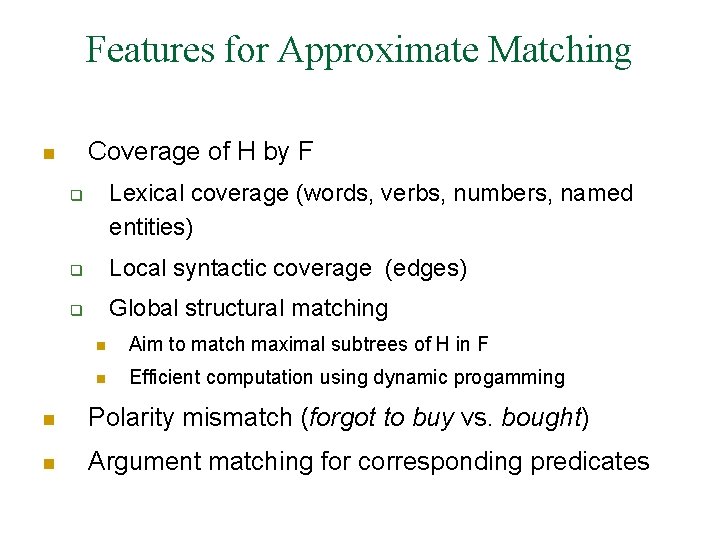

Features for Approximate Matching

- Coverage of H by F
  - Lexical coverage (words, verbs, numbers, named entities)
  - Local syntactic coverage (edges)
  - Global structural matching
    - Aim to match maximal subtrees of H in F
    - Efficient computation using dynamic programming
- Polarity mismatch (forgot to buy vs. bought)
- Argument matching for corresponding predicates
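The two local coverage features can be sketched as set overlap, assuming the forest F has been flattened into a set of words and a set of (head, relation, dependent) edges. The function name `coverage` and the example hypothesis are illustrative, and the global subtree matching by dynamic programming is not covered here.

```python
def coverage(h_items, f_items):
    """Fraction of the hypothesis items that also occur in the forest."""
    h, f = set(h_items), set(f_items)
    return len(h & f) / len(h) if h else 1.0

# Flattened forest for "The book was read by John yesterday" plus its active form.
f_words = {"the", "book", "be", "read", "by", "John", "yesterday"}
f_edges = {
    ("read", "obj", "book"), ("read", "be", "be"), ("read", "by", "by"),
    ("by", "pcomp-n", "John"), ("read", "subj", "John"),
    ("book", "det", "the"), ("read", "mod", "yesterday"),
}

# Hypothetical hypothesis: "Mary read the book"
h_words = ["Mary", "read", "the", "book"]
h_edges = [("read", "subj", "Mary"), ("read", "obj", "book"), ("book", "det", "the")]

lexical_coverage = coverage(h_words, f_words)   # 3 of 4 words are covered
edge_coverage = coverage(h_edges, f_edges)      # 2 of 3 edges are covered
print(lexical_coverage, edge_coverage)
```

Values like these would then be passed to the classifier as features together with the polarity and argument-matching features.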

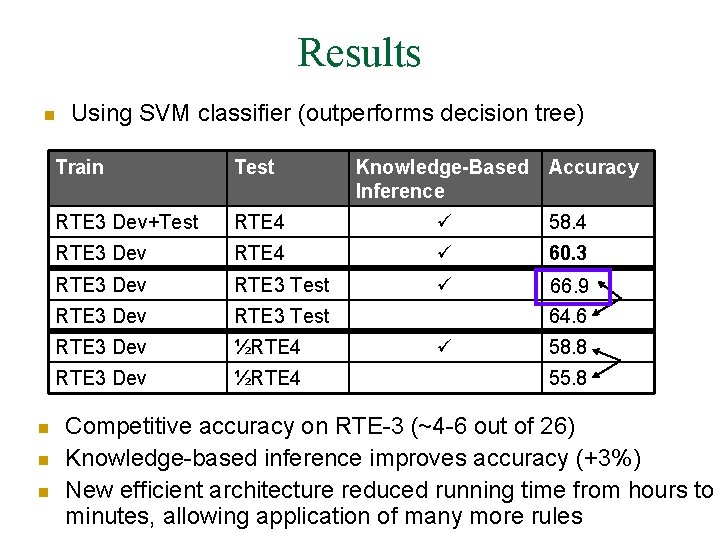

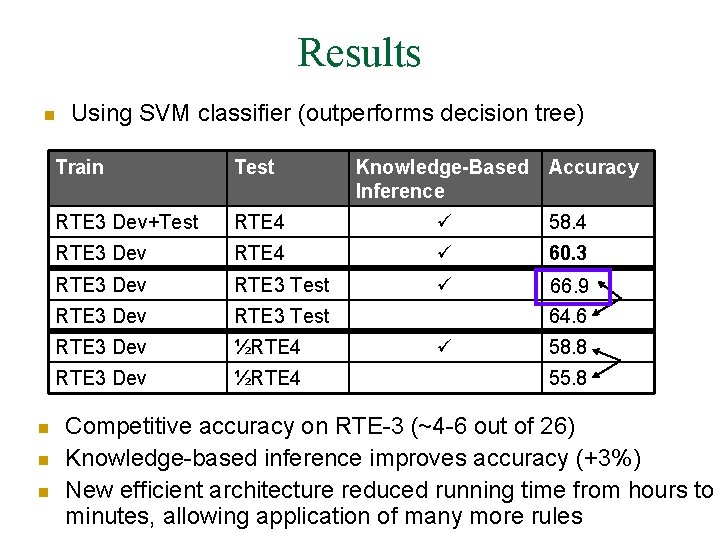

Results

- Using SVM classifier (outperforms decision tree)
- Accuracy (columns: Train, Test, Knowledge-Based Inference, Accuracy):
  - RTE-3 Dev+Test, RTE-4: 58.4
  - RTE-3 Dev, RTE-4: 60.3
  - RTE-3 Dev, RTE-3 Test: 66.9, 64.6
  - RTE-3 Dev, ½ RTE-4: 58.8
  - RTE-3 Dev, ½ RTE-4: 55.8
- Competitive accuracy on RTE-3 (~4-6 out of 26)
- Knowledge-based inference improves accuracy (+3%)
- New efficient architecture reduced running time from hours to minutes, allowing application of many more rules

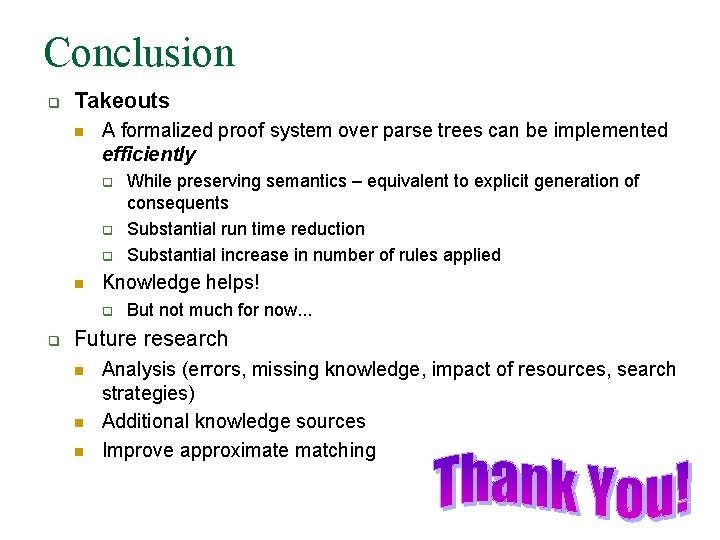

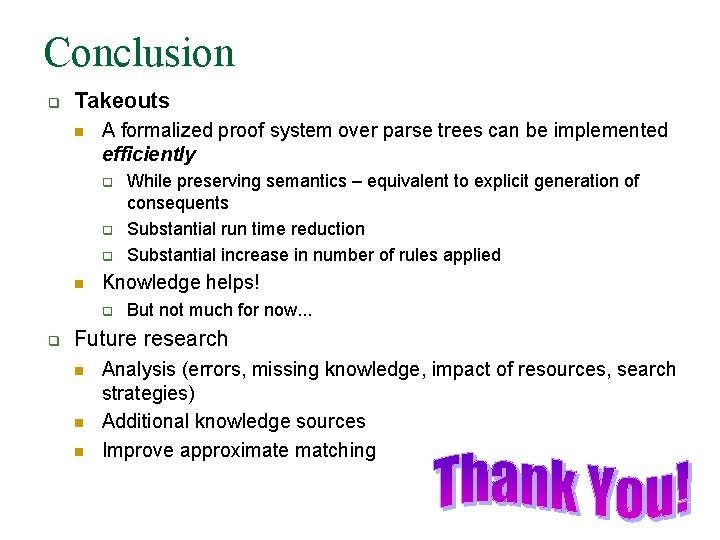

Conclusion

- Takeouts
  - A formalized proof system over parse trees can be implemented efficiently
    - While preserving semantics – equivalent to explicit generation of consequents
    - Substantial run time reduction
    - Substantial increase in number of rules applied
  - Knowledge helps!
    - But not much for now...
- Future research
  - Analysis (errors, missing knowledge, impact of resources, search strategies)
  - Additional knowledge sources
  - Improve approximate matching