Efficient Computer Interfaces Using Continuous Gestures, Language Models, and Speech

- Slides: 16

Efficient Computer Interfaces Using Continuous Gestures, Language Models, and Speech

Keith Vertanen

July 30th, 2004

The problem

- Speech recognizers make mistakes
- Correcting mistakes is inefficient
  - 140 WPM: uncorrected dictation
  - 14 WPM: corrected dictation, mouse/keyboard
  - 32 WPM: corrected typing, mouse/keyboard
- Voice-only correction is even slower and more frustrating

Research overview

- Make correction of dictation:
  - More efficient
  - More fun
  - More accessible
- Approach:
  - Build a word lattice from a recognizer’s n-best list
  - Expand the lattice to cover likely recognition errors
  - Make a language model from the expanded lattice
  - Use the model in a continuous gesture interface to perform confirmation and correction

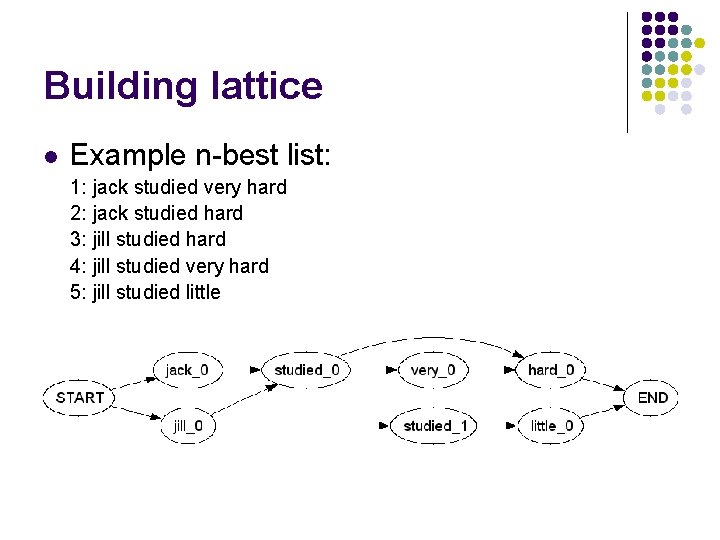

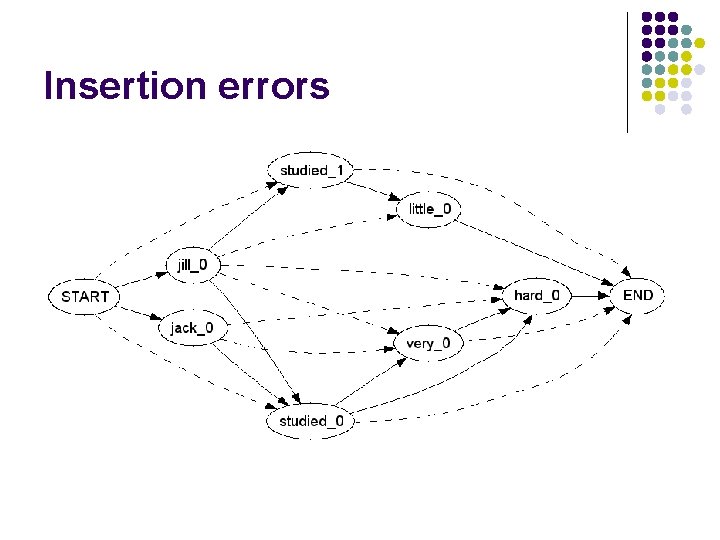

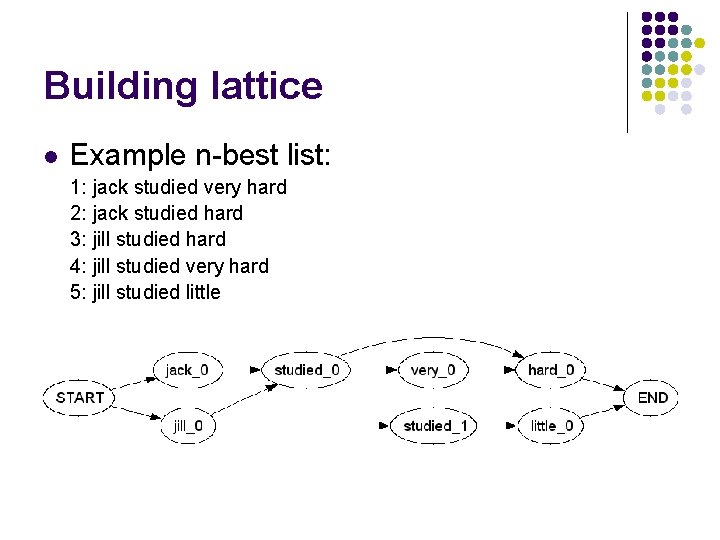

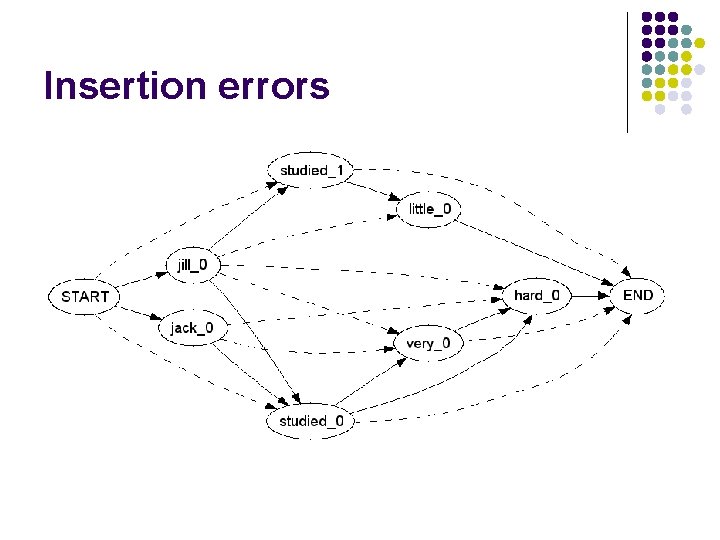

Building lattice

- Example n-best list:
  - 1: jack studied very hard
  - 2: jack studied hard
  - 3: jill studied hard
  - 4: jill studied very hard
  - 5: jill studied little
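The slides show the resulting lattice only as a figure, so the exact construction is not recoverable here. As a rough sketch, assuming a simple prefix merge (hypotheses that start with the same words share nodes), the n-best list above could be turned into a graph like this. The function name `build_prefix_lattice` and the `</s>` end marker are illustrative choices, not taken from the talk.

```python
from collections import defaultdict


def build_prefix_lattice(nbest):
    """Merge hypotheses that share a prefix into one graph.

    Returns a dict mapping each node (the tuple of words seen so far)
    to a dict of {next_word: number of hypotheses taking that edge}.
    """
    edges = defaultdict(lambda: defaultdict(int))
    for hypothesis in nbest:
        node = ()  # start node: empty word history
        for word in hypothesis.split() + ["</s>"]:
            edges[node][word] += 1
            node = node + (word,)
    return {node: dict(out) for node, out in edges.items()}


nbest = [
    "jack studied very hard",
    "jack studied hard",
    "jill studied hard",
    "jill studied very hard",
    "jill studied little",
]
lattice = build_prefix_lattice(nbest)
print(lattice[()])                   # {'jack': 2, 'jill': 3}
print(lattice[("jill", "studied")])  # {'hard': 1, 'very': 1, 'little': 1}
```

A real word lattice would also merge paths that rejoin later (for example, both "jack" and "jill" leading into the same "studied" node); the prefix merge is only the simplest starting point.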

Insertion errors

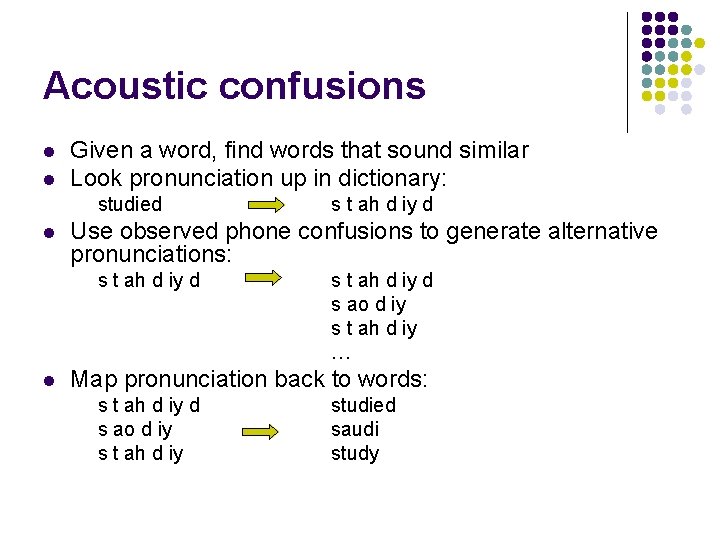

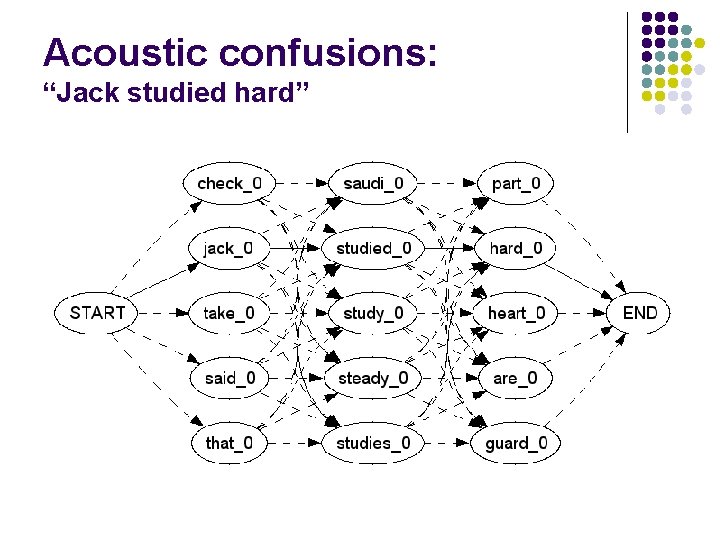

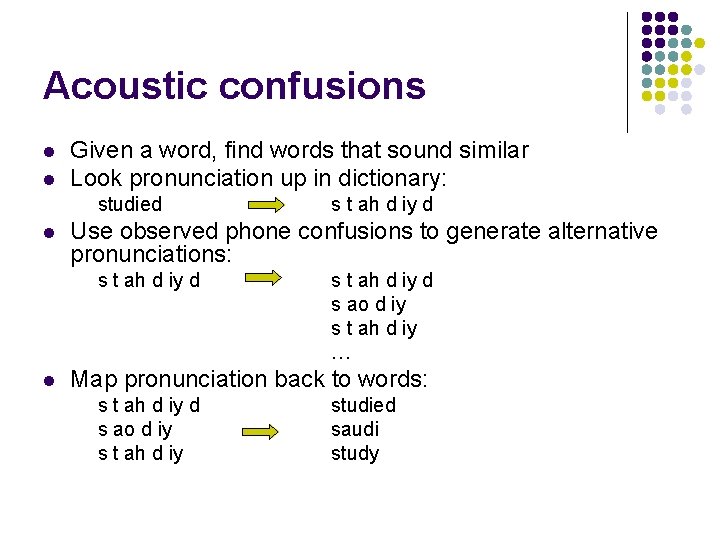

Acoustic confusions

- Given a word, find words that sound similar
- Look pronunciation up in dictionary:
  - studied → s t ah d iy d
- Use observed phone confusions to generate alternative pronunciations:
  - s t ah d iy d → s t ah d iy d, s ao d iy, s t ah d iy, …
- Map pronunciation back to words:
  - s t ah d iy d → studied
  - s ao d iy → saudi
  - s t ah d iy → study
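One way to approximate this step, shown here as a sketch rather than the method used in the talk, is to search the pronunciation dictionary with a weighted phone edit distance instead of explicitly generating alternative pronunciations. The tiny dictionary `PRON`, the confusion table `CONFUSION_COST`, and the cost values are all made-up assumptions; only the three pronunciations for "studied", "study", and "saudi" come from the slide.

```python
# Toy pronunciation dictionary. The first three entries follow the slide;
# "hard" is an assumed entry added as a dissimilar word.
PRON = {
    "studied": ("s", "t", "ah", "d", "iy", "d"),
    "study": ("s", "t", "ah", "d", "iy"),
    "saudi": ("s", "ao", "d", "iy"),
    "hard": ("hh", "aa", "r", "d"),
}

# Hypothetical substitution costs for confusable phone pairs (default is 1.0).
CONFUSION_COST = {("ah", "ao"): 0.5}


def phone_distance(a, b, sub_cost):
    """Weighted edit distance between two phone sequences."""
    prev = [float(j) for j in range(len(b) + 1)]
    for i, pa in enumerate(a, start=1):
        cur = [float(i)]
        for j, pb in enumerate(b, start=1):
            sub = 0.0 if pa == pb else sub_cost.get((pa, pb), 1.0)
            cur.append(min(prev[j] + 1.0,       # delete a phone from a
                           cur[j - 1] + 1.0,    # insert a phone from b
                           prev[j - 1] + sub))  # match or substitute
        prev = cur
    return prev[-1]


def similar_words(word, max_cost=2.5):
    """Dictionary words whose pronunciation is within max_cost of word's."""
    target = PRON[word]
    scored = [(phone_distance(target, p, CONFUSION_COST), w)
              for w, p in PRON.items()]
    return [w for cost, w in sorted(scored) if cost <= max_cost]


print(similar_words("studied"))  # ['studied', 'study', 'saudi']
```

In practice the substitution costs would be estimated from phone confusions observed on recognizer output, as the slide describes.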

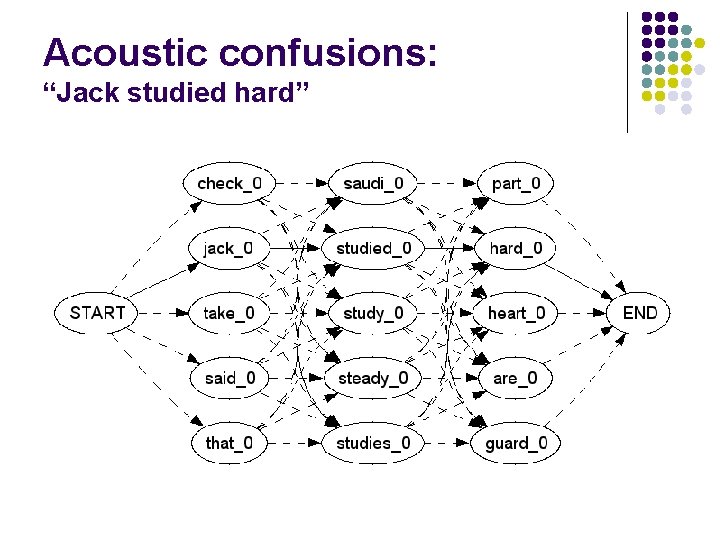

Acoustic confusions: “Jack studied hard”

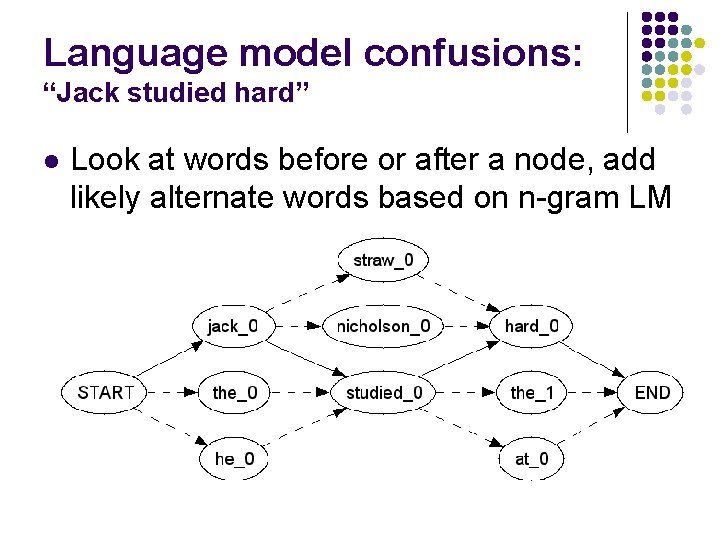

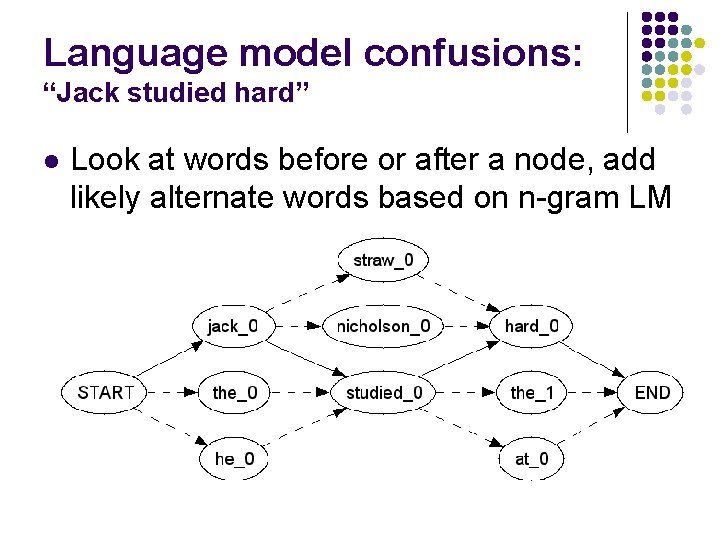

Language model confusions: “Jack studied hard”

- Look at words before or after a node, add likely alternate words based on n-gram LM
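A minimal sketch of this idea, assuming a bigram model and looking only at the word before a node (the slide also mentions the word after). The counts in `BIGRAM_COUNTS` and the helper name `lm_alternates` are invented for illustration.

```python
# Hypothetical bigram counts; a real system would use a trained n-gram LM.
BIGRAM_COUNTS = {
    ("studied", "hard"): 6,
    ("studied", "law"): 3,
    ("studied", "very"): 1,
    ("jack", "studied"): 4,
    ("jack", "said"): 6,
}


def lm_alternates(prev_word, existing, bigram_counts, k=2):
    """Top-k words following prev_word that the lattice does not already have."""
    followers = {w2: c for (w1, w2), c in bigram_counts.items()
                 if w1 == prev_word}
    # Rank by count (highest first), breaking ties alphabetically.
    ranked = sorted(followers, key=lambda w: (-followers[w], w))
    return [w for w in ranked[:k] if w not in existing]


# Words already following "studied" in the lattice from the n-best example.
existing = {"hard", "very", "little"}
print(lm_alternates("studied", existing, BIGRAM_COUNTS))  # ['law']
```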

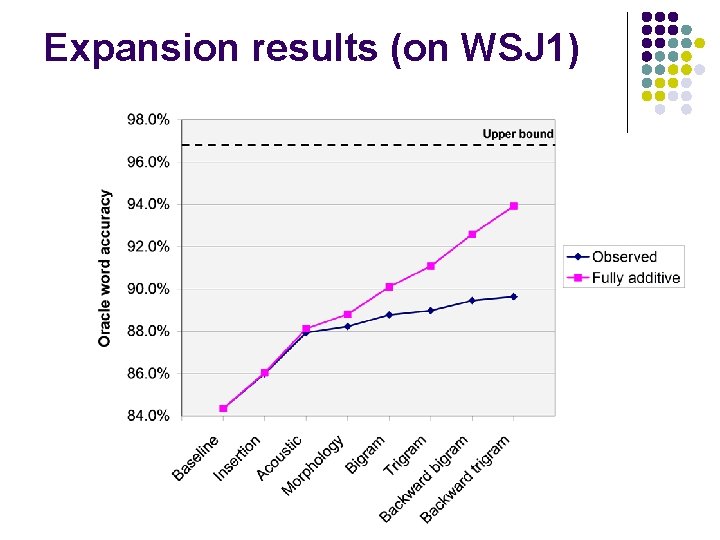

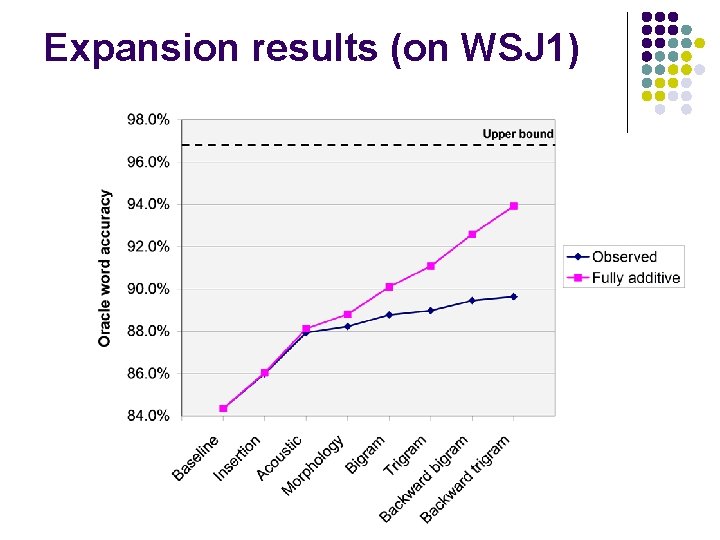

Expansion results (on WSJ 1)

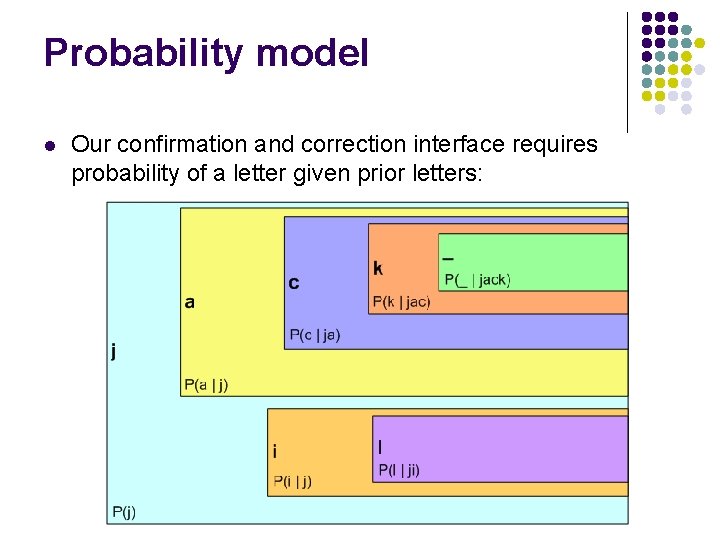

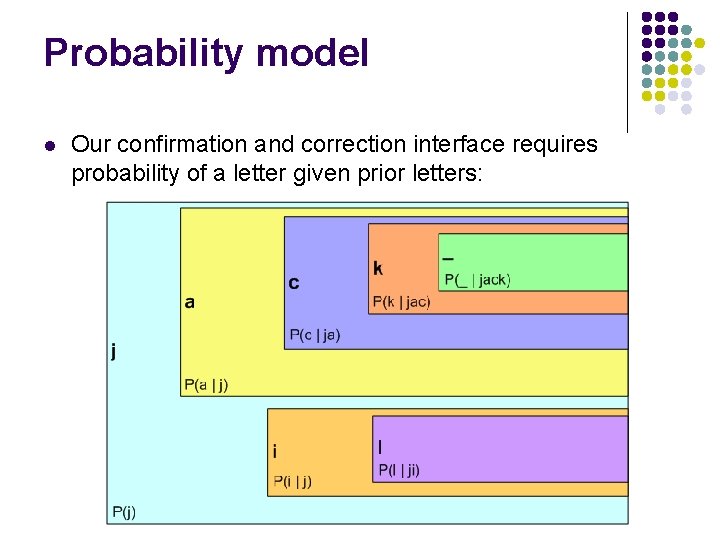

Probability model

- Our confirmation and correction interface requires the probability of a letter given the prior letters:

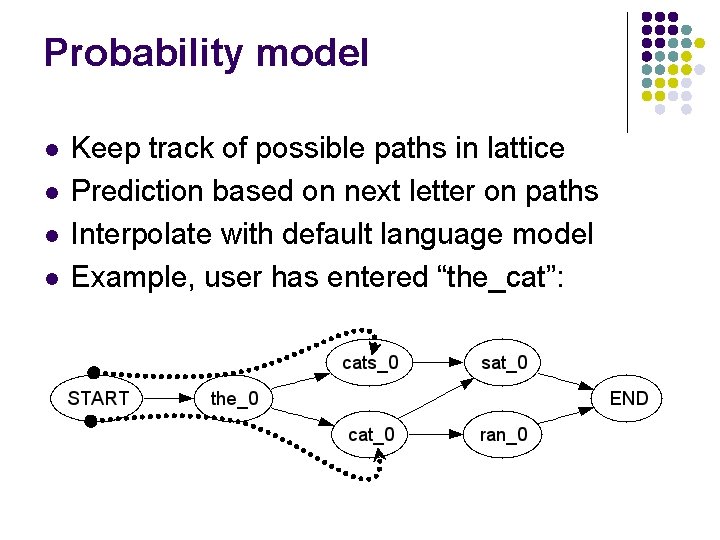

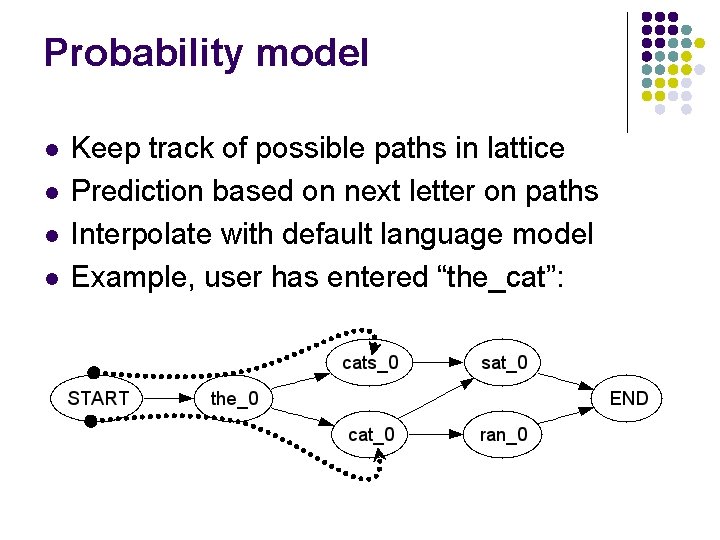

Probability model

- Keep track of possible paths in lattice
- Prediction based on next letter on paths
- Interpolate with default language model
- Example, user has entered “the_cat”:
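The three steps above can be sketched as follows. This is an illustration under simplifying assumptions: each lattice path is flattened to a string with `_` for spaces and given a made-up probability, the default language model is replaced by a uniform distribution over letters, and the interpolation weight `lattice_weight` is arbitrary.

```python
import string

ALPHABET = string.ascii_lowercase + "_"


def next_letter_probs(prefix, paths, lattice_weight=0.9):
    """Distribution over the next letter given the letters entered so far.

    paths maps a sentence (with '_' for spaces) to its path probability.
    """
    default = 1.0 / len(ALPHABET)  # stand-in for the default language model
    # Keep only the paths that are still consistent with what was entered.
    live = {s: p for s, p in paths.items()
            if s.startswith(prefix) and len(s) > len(prefix)}
    mass = sum(live.values())
    probs = {}
    for ch in ALPHABET:
        if mass > 0:
            on_paths = sum(p for s, p in live.items()
                           if s[len(prefix)] == ch) / mass
            probs[ch] = (lattice_weight * on_paths
                         + (1 - lattice_weight) * default)
        else:
            probs[ch] = default  # no path matches: use the default model only
    return probs


# Hypothetical paths and probabilities.
paths = {"the_cat_sat_": 0.5, "the_cattle_sat_": 0.3, "the_cap_sat_": 0.2}
probs = next_letter_probs("the_cat", paths)
best = sorted(probs, key=probs.get, reverse=True)[:2]
print(best)  # ['_', 't']
```

Interpolating with the default model keeps every letter reachable, so the user can still enter a word that appears on no lattice path.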

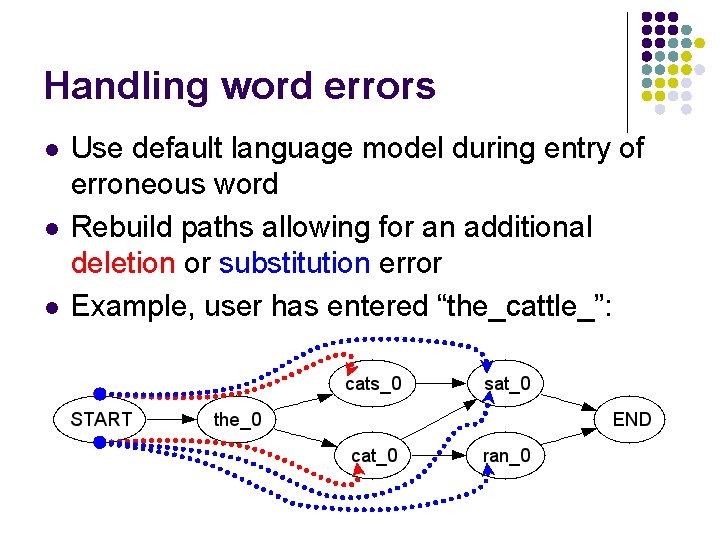

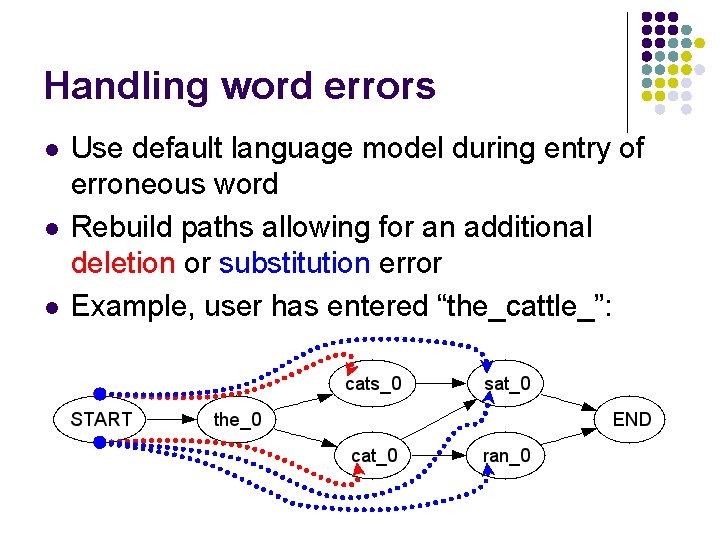

Handling word errors

- Use default language model during entry of erroneous word
- Rebuild paths allowing for an additional deletion or substitution error
- Example, user has entered “the_cattle_”:

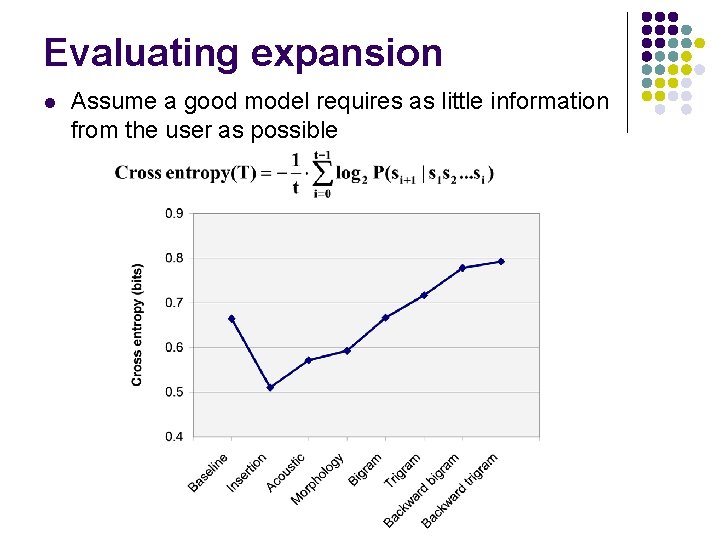

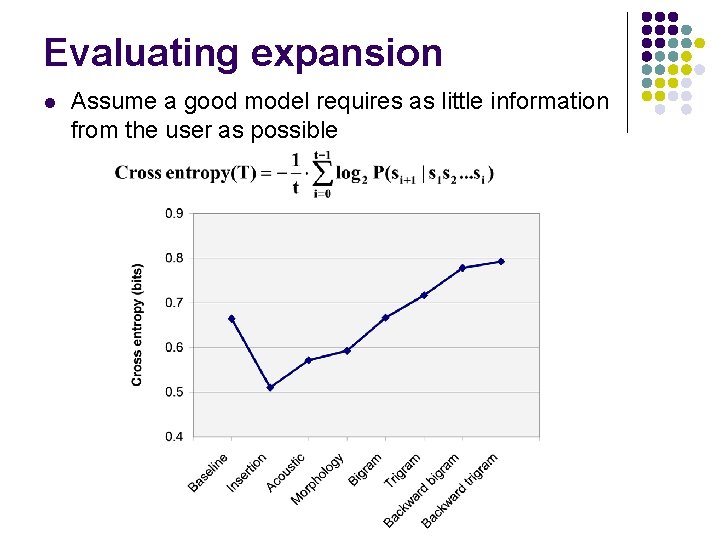

Evaluating expansion

- Assume a good model requires as little information from the user as possible
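"Information from the user" is commonly measured as the average number of bits needed per letter under the model. The sketch below assumes that measure and uses made-up per-letter probabilities; the slides do not state the exact formula used.

```python
import math


def bits_per_letter(letter_probs):
    """Average of -log2(p) over the probabilities assigned to the true letters."""
    return -sum(math.log2(p) for p in letter_probs) / len(letter_probs)


# Hypothetical probabilities the model gave to four letters the user wanted.
print(bits_per_letter([0.5, 0.25, 1.0, 0.5]))  # 1.0
```

A model that always predicts the intended letter with probability 1 needs 0 bits, meaning the user supplies no information at all.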

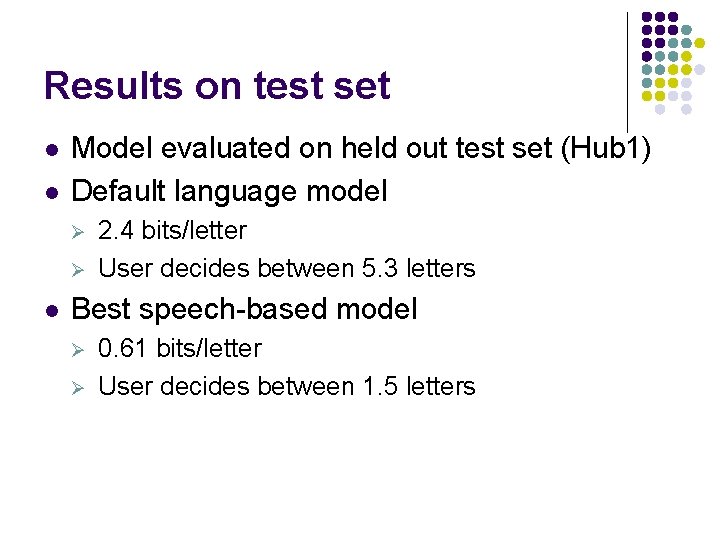

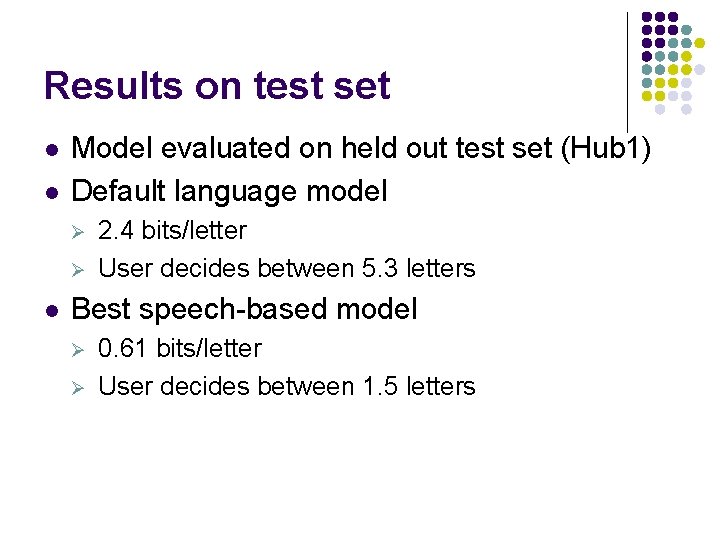

Results on test set

- Model evaluated on held out test set (Hub 1)
- Default language model
  - 2.4 bits/letter
  - User decides between 5.3 letters
- Best speech-based model
  - 0.61 bits/letter
  - User decides between 1.5 letters
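The "user decides between N letters" figures are consistent with reading them as perplexity, that is, 2 raised to the bits/letter value. A quick check, assuming that interpretation (the function name is illustrative):

```python
def equivalent_letter_choices(bits_per_letter):
    """Perplexity: number of equally likely letters with the same uncertainty."""
    return 2 ** bits_per_letter


print(round(equivalent_letter_choices(2.4), 1))   # 5.3
print(round(equivalent_letter_choices(0.61), 1))  # 1.5
```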

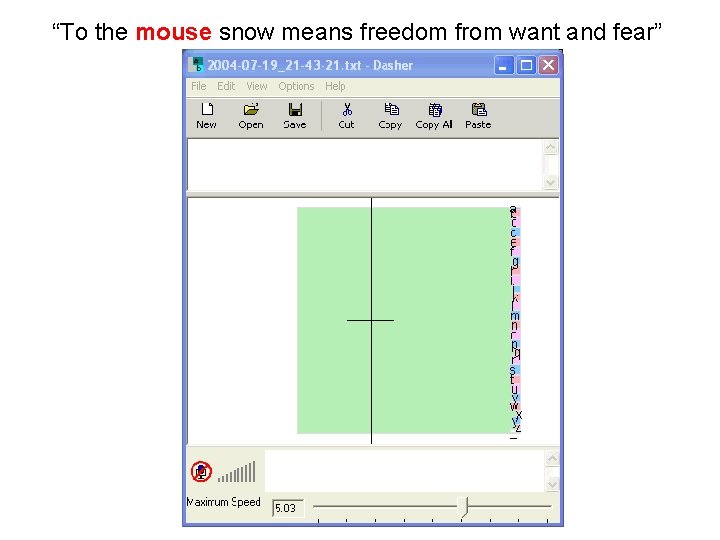

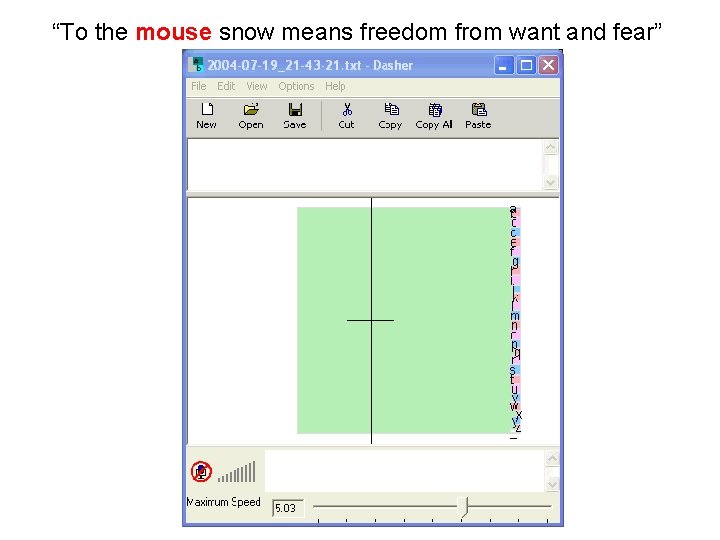

“To the mouse snow means freedom from want and fear”

Questions?