Efficient Complex Operators for Irregular Codes Jack Sampson

- Slides: 42

Efficient Complex Operators for Irregular Codes

Jack Sampson, Ganesh Venkatesh, Nathan Goulding-Hotta, Saturnino Garcia, Steven Swanson, Michael Bedford Taylor

Department of Computer Science and Engineering, University of California, San Diego

The Utilization Wall

With each successive process generation, the percentage of a chip that can actively switch drops exponentially due to power constraints. [Venkatesh, Chakraborty]

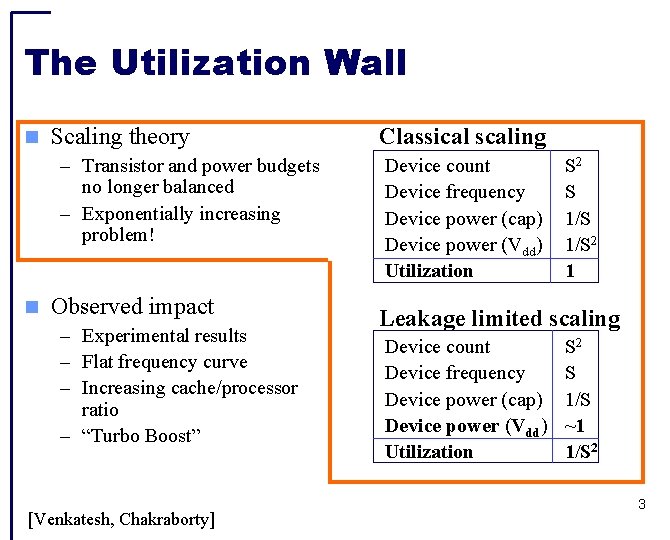

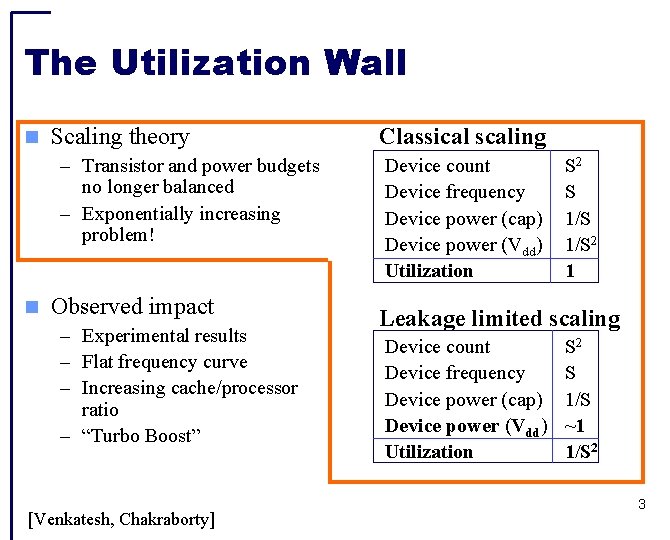

The Utilization Wall

- Scaling theory
  - Transistor and power budgets no longer balanced
  - Exponentially increasing problem!
- Observed impact
  - Experimental results
  - Flat frequency curve
  - Increasing cache/processor ratio
  - "Turbo Boost"

[Venkatesh, Chakraborty]

| Quantity | Classical scaling | Leakage-limited scaling |
| --- | --- | --- |
| Device count | S^2 | S^2 |
| Device frequency | S | S |
| Device power (cap) | 1/S | 1/S |
| Device power (Vdd) | 1/S^2 | ~1 |
| Utilization | 1 | 1/S^2 |
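Multiplying the scaling factors listed on this slide gives a quick check of where the 1/S^2 utilization figure comes from. This is only a sketch: it assumes (the slide does not say so explicitly) that full-chip switching power is the product of device count, device frequency, and the two per-device power factors.

```latex
\begin{align*}
P_{\text{classical}} &\propto S^{2} \cdot S \cdot \frac{1}{S} \cdot \frac{1}{S^{2}} = 1, \\
P_{\text{leakage-limited}} &\propto S^{2} \cdot S \cdot \frac{1}{S} \cdot 1 = S^{2}, \\
\text{utilization at a fixed power budget} &\propto \frac{1}{P_{\text{leakage-limited}}} = \frac{1}{S^{2}}.
\end{align*}
```

With a scaling factor of roughly S = 1.4 per generation (an assumed value, not taken from the slides), S^2 is about 2, so the fraction of the chip that can switch within a fixed power budget would roughly halve each generation.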

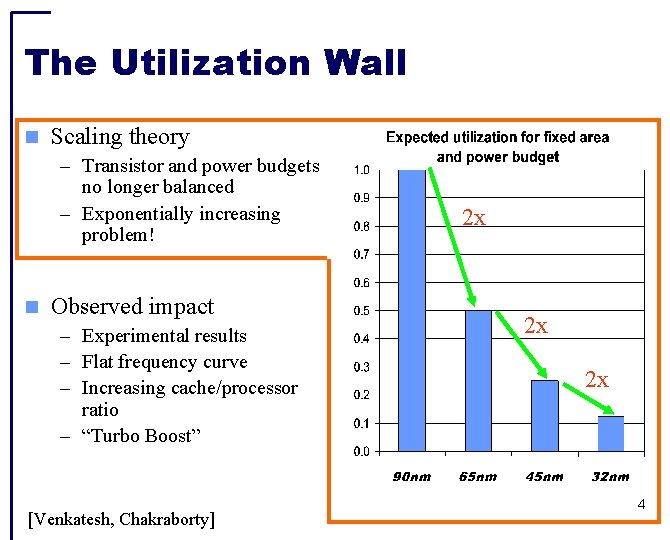

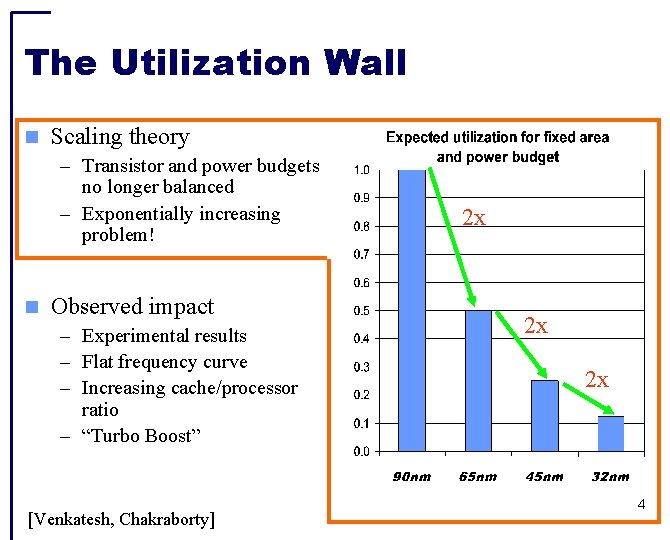

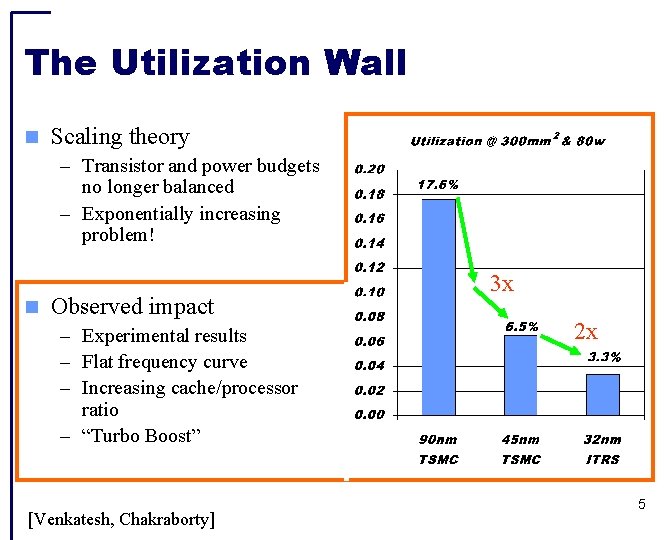

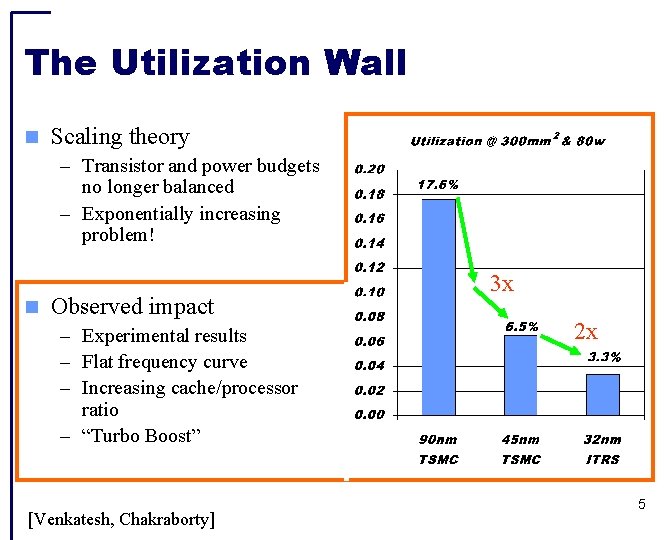

Dealing with the Utilization Wall

- Insights:
  - Power is now more expensive than area
  - Specialized logic has been shown to be an effective way to improve energy efficiency (10-1000x)
- Our approach:
  - Use area for specialized cores to save energy on common apps
  - Can apply power savings to other programs, increasing throughput
- Specialized coprocessors provide an architectural way to trade area for an effective increase in power budget
  - Challenge: coprocessors for all types of applications

Specializing Irregular Codes

- Effectiveness of specialization depends on coverage
  - Need to cover many types of code
  - Both regular and irregular
- What is irregular code?
  - Lacks easily exploited structure / parallelism
  - Found broadly across desktop workloads
- How can we make it efficient?
  - Reduce per-op overheads with complex operators
  - Improve latency for serial portions

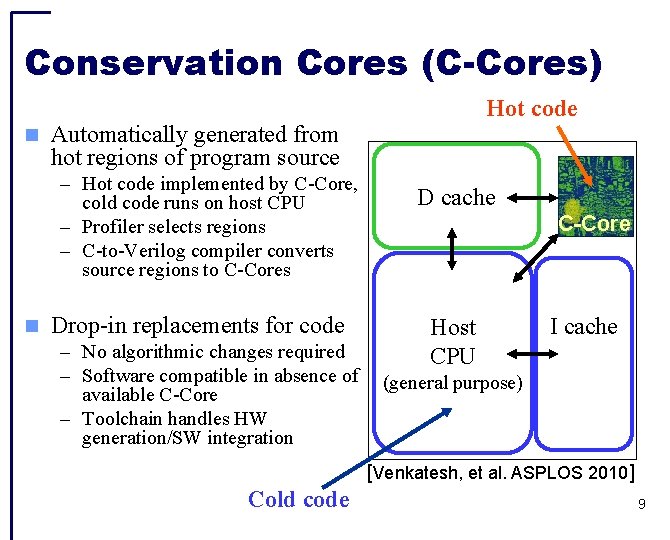

Candidates for Irregular Codes

- Microprocessors
  - Handle all codes
  - Poor scaling of performance vs. energy
  - Utilization wall aggravates scaling problems
- Accelerators
  - Require parallelizable, highly structured code
  - Memory system challenging to integrate with conventional memory
  - Target performance over energy
- Conservation Cores (C-Cores) [Venkatesh, et al. ASPLOS 2010]
  - Handle arbitrary code
  - Share L1 cache with host processor
  - Target energy over performance

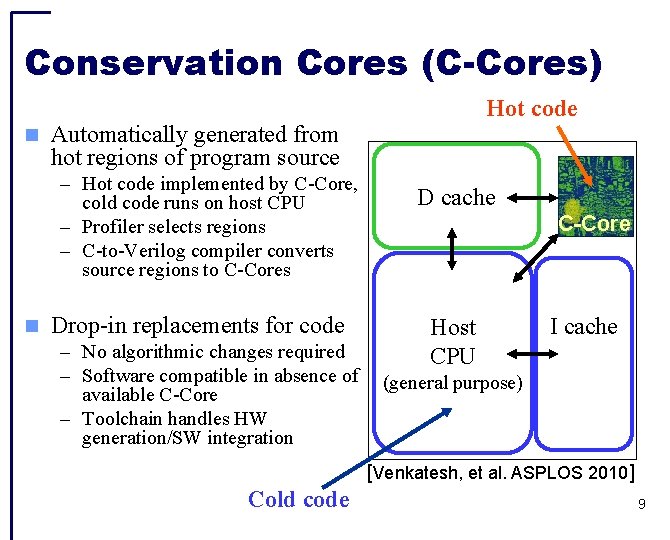

Conservation Cores (C-Cores) [Venkatesh, et al. ASPLOS 2010]

- Automatically generated from hot regions of program source
  - Hot code implemented by C-Core, cold code runs on host CPU
  - Profiler selects regions
  - C-to-Verilog compiler converts source regions to C-Cores
- Drop-in replacements for code
  - No algorithmic changes required
  - Software compatible in absence of available C-Core
  - Toolchain handles HW generation/SW integration

[Figure labels: Hot code, Cold code, C-Core, Host CPU (general purpose), I cache, D cache]

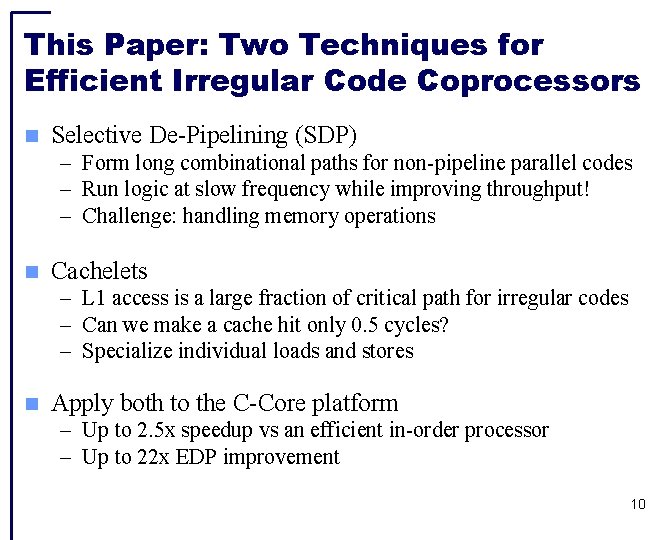

This Paper: Two Techniques for Efficient Irregular Code Coprocessors

- Selective De-Pipelining (SDP)
  - Form long combinational paths for non-pipeline-parallel codes
  - Run logic at slow frequency while improving throughput!
  - Challenge: handling memory operations
- Cachelets
  - L1 access is a large fraction of critical path for irregular codes
  - Can we make a cache hit only 0.5 cycles?
  - Specialize individual loads and stores
- Apply both to the C-Core platform
  - Up to 2.5x speedup vs. an efficient in-order processor
  - Up to 22x EDP improvement

Outline

- Efficiency through specialization
- Baseline C-Core Microarchitecture
- Selective De-Pipelining
- Cachelets
- Conclusion

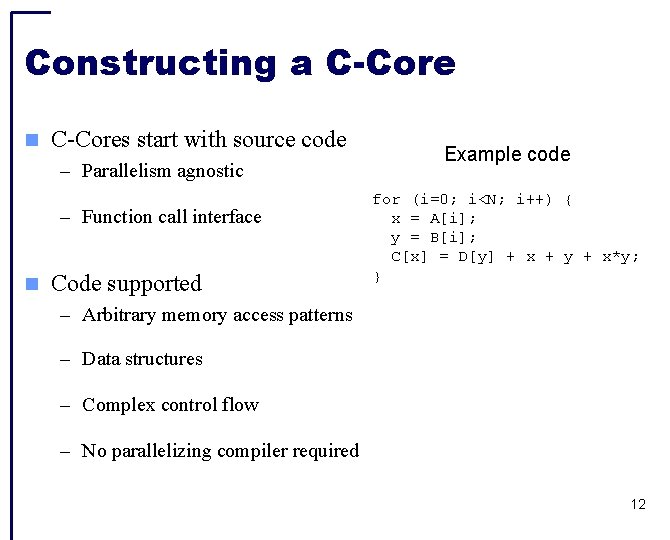

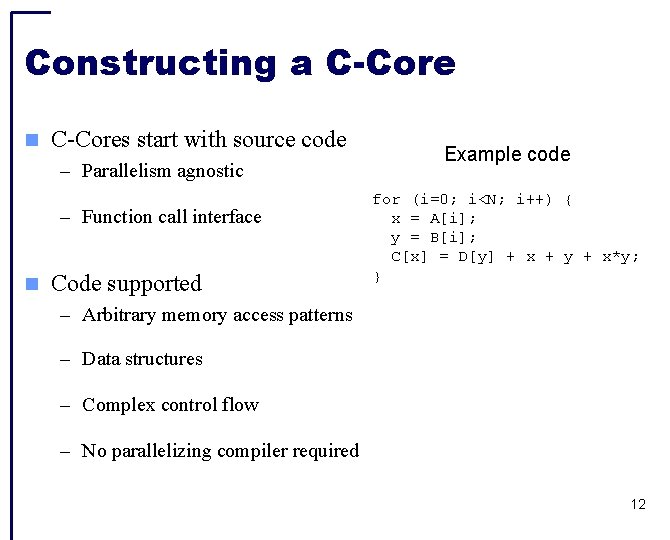

Constructing a C-Core

- C-Cores start with source code
  - Parallelism agnostic
  - Function call interface
- Code supported
  - Arbitrary memory access patterns
  - Data structures
  - Complex control flow
  - No parallelizing compiler required
- Example code: `for (i=0; i<N; i++) { x = A[i]; y = B[i]; C[x] = D[y] + x + y + x*y; }`
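To make the slide's example loop concrete, here is a minimal self-contained sketch of it as a C function, the kind of region that could sit behind a function call interface. The function name `irregular_kernel`, the `int` element type, and the explicit length parameters are assumptions added for illustration; the slide gives only the loop body.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical wrapper around the slide's loop. A and B are read
 * sequentially, but their values become indices into C and D, so the
 * access pattern for C and D is data dependent (irregular). */
void irregular_kernel(size_t n, const int *A, const int *B,
                      int *C, size_t c_len,
                      const int *D, size_t d_len)
{
    for (size_t i = 0; i < n; i++) {
        int x = A[i];   /* sequential load */
        int y = B[i];   /* sequential load */

        /* Bounds checks are an addition for safety, not part of the slide. */
        assert(x >= 0 && (size_t)x < c_len);
        assert(y >= 0 && (size_t)y < d_len);

        /* Indirect load from D, indirect store to C. */
        C[x] = D[y] + x + y + x * y;
    }
}
```

Each iteration issues three loads and one store, which matches the LD and ST nodes that appear in the data-flow graph on the later slides.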

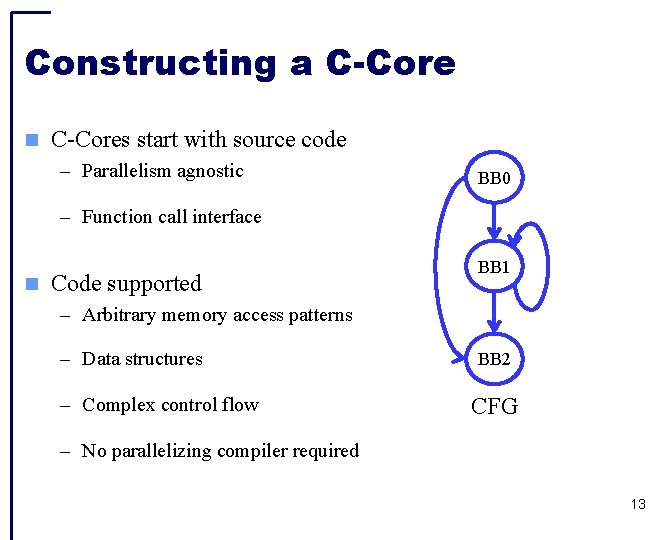

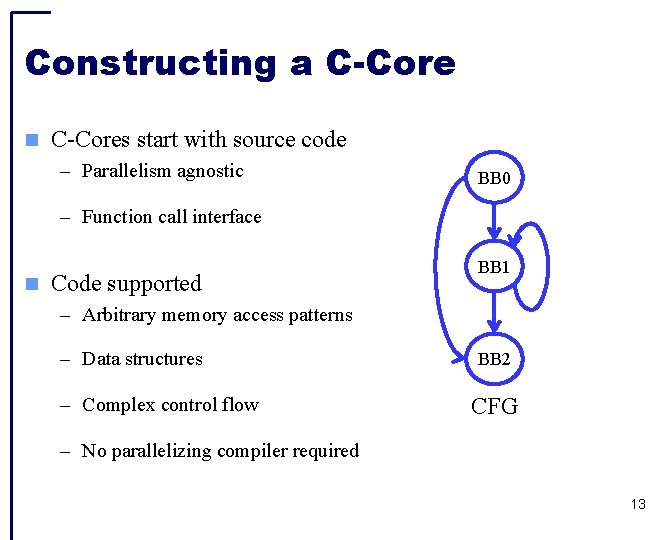

Constructing a C-Core

[Figure: the bullets from the previous slide, shown next to the example's control-flow graph (CFG) with basic blocks BB0, BB1, and BB2.]

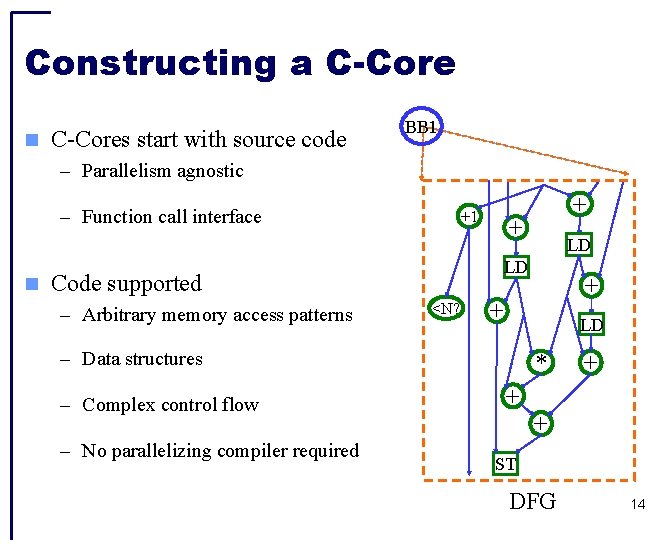

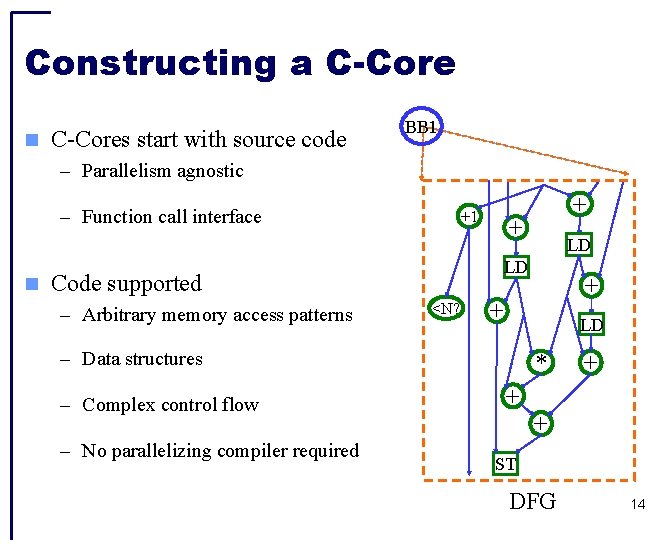

Constructing a C-Core

[Figure: the same bullets, shown next to the data-flow graph (DFG) of BB1: three loads (LD), a multiply, several adds, one store (ST), the loop increment (+1), and the exit test (<N?).]

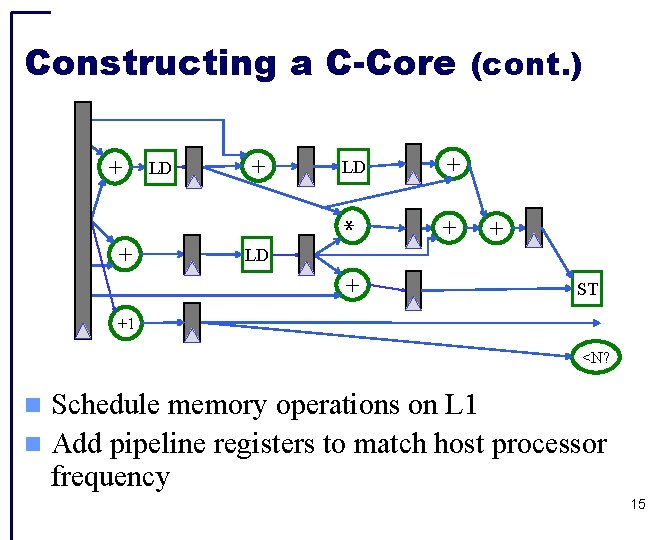

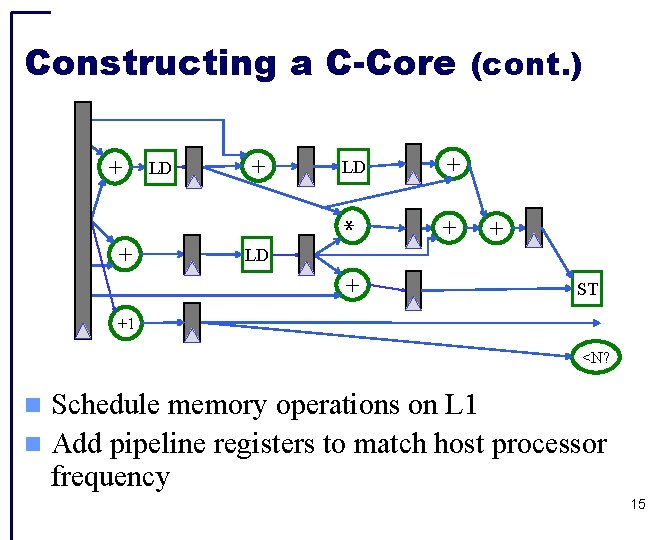

Constructing a C-Core (cont.)

- Schedule memory operations on L1
- Add pipeline registers to match host processor frequency

[Figure: data-flow graph of BB1 (LD, ST, +, *, +1, <N? nodes).]

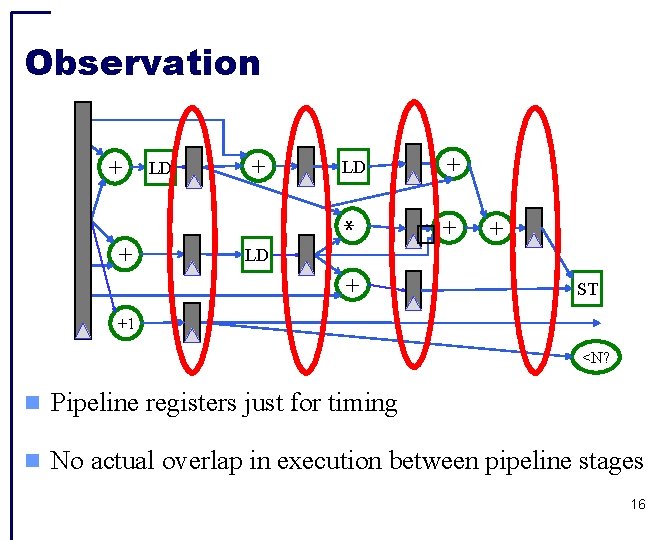

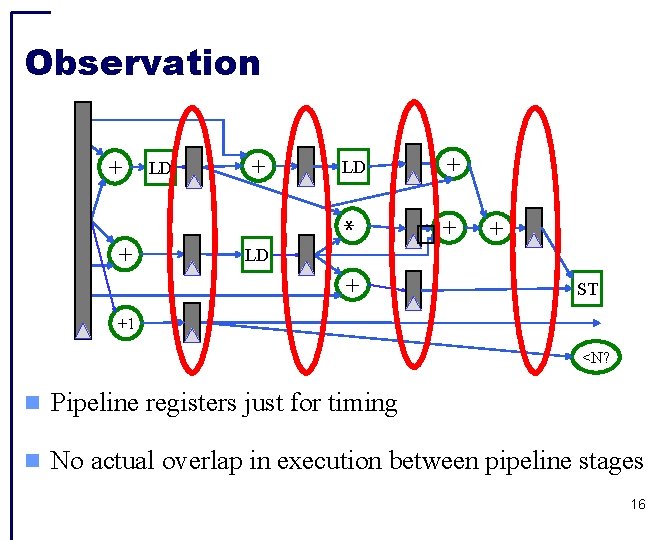

Observation

- Pipeline registers just for timing
- No actual overlap in execution between pipeline stages

[Figure: data-flow graph of BB1 (LD, ST, +, *, +1, <N? nodes).]

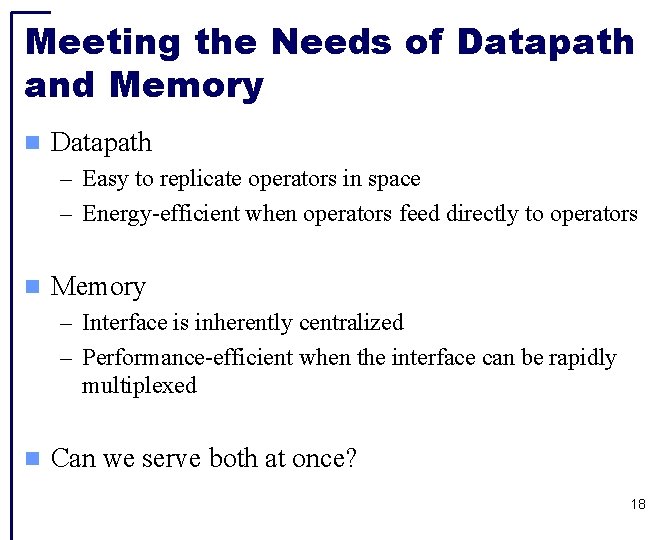

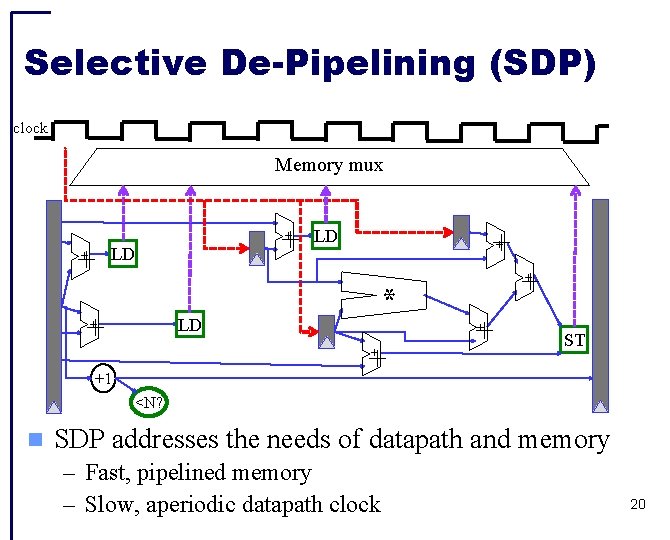

Meeting the Needs of Datapath and Memory

- Datapath
  - Easy to replicate operators in space
  - Energy-efficient when operators feed directly to operators
- Memory
  - Interface is inherently centralized
  - Performance-efficient when the interface can be rapidly multiplexed
- Can we serve both at once?

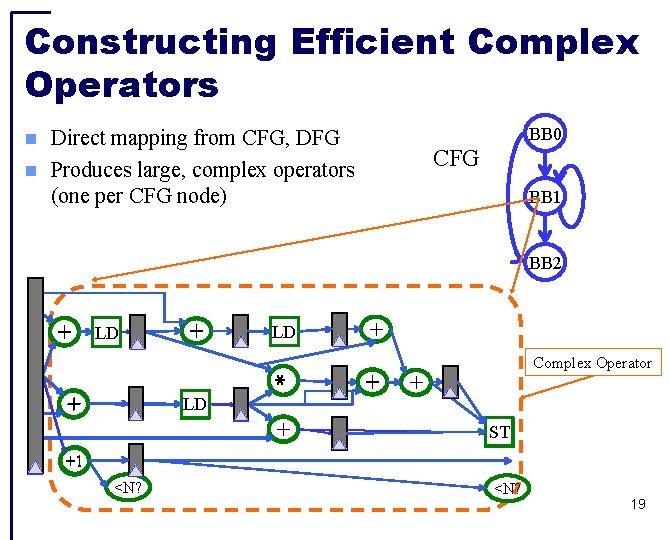

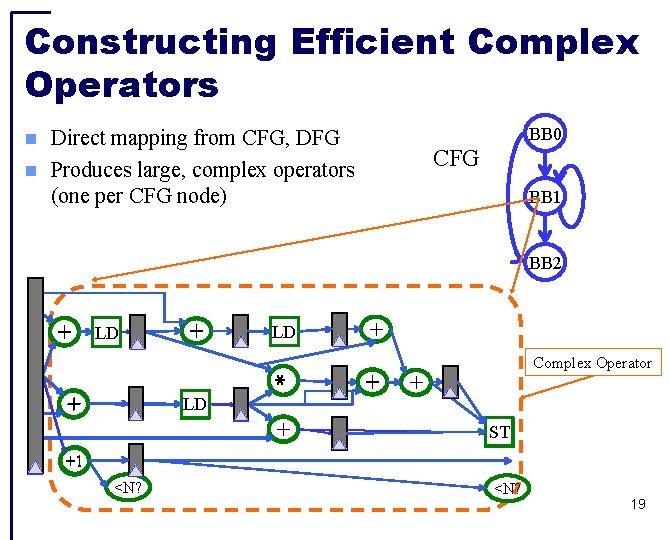

Constructing Efficient Complex Operators

- Direct mapping from CFG, DFG
- Produces large, complex operators (one per CFG node)

[Figure: CFG (BB0, BB1, BB2) with BB1's data-flow graph shown as a single complex operator.]

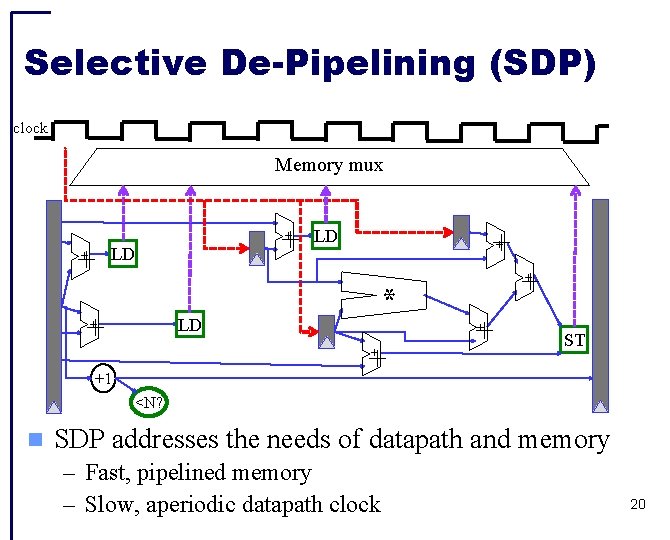

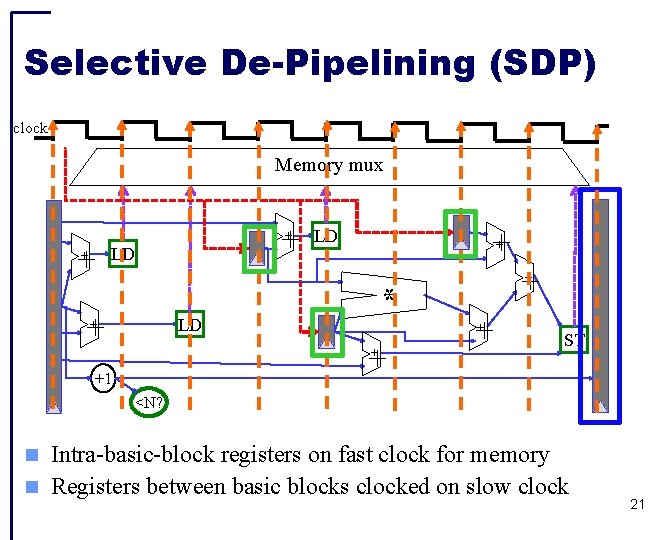

Selective De-Pipelining (SDP)

- SDP addresses the needs of datapath and memory
  - Fast, pipelined memory
  - Slow, aperiodic datapath clock

[Figure: BB1 complex operator with a clock and a memory mux.]

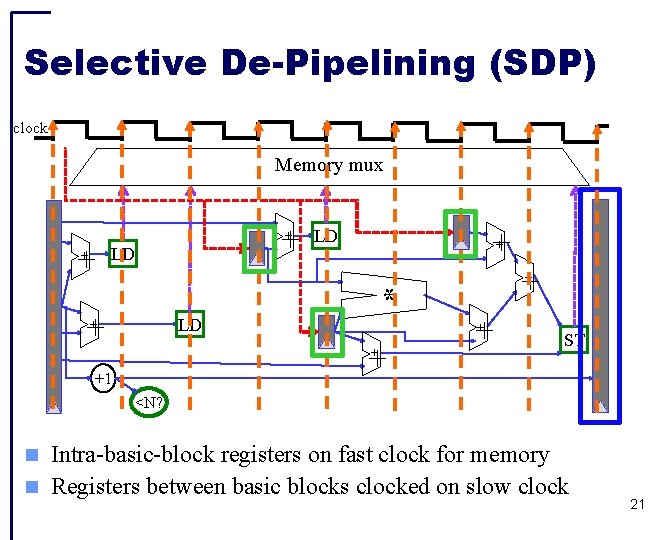

Selective De-Pipelining (SDP)

- Intra-basic-block registers on fast clock for memory
- Registers between basic blocks clocked on slow clock

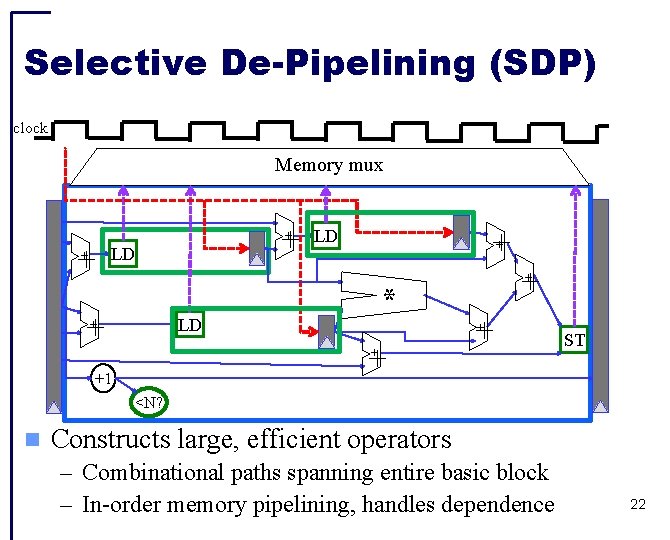

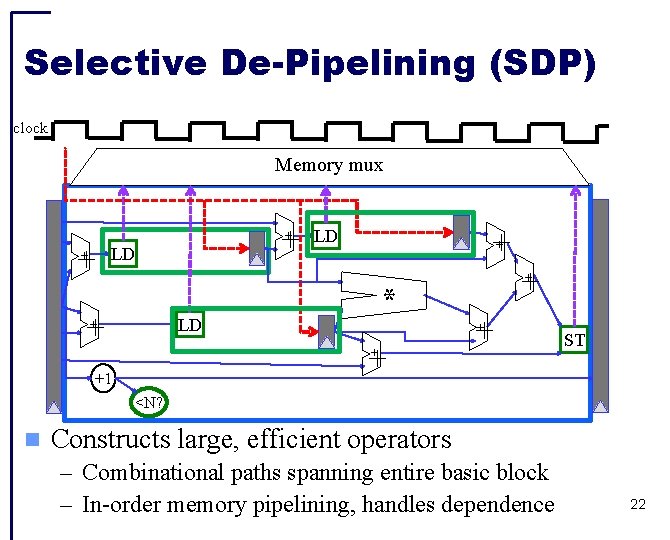

Selective De-Pipelining (SDP)

- Constructs large, efficient operators
  - Combinational paths spanning entire basic block
  - In-order memory pipelining, handles dependence
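A toy timing model can illustrate why removing timing-only pipeline registers can shorten a serial critical path. This is a simplified sketch, not the authors' methodology. It assumes every operator in the baseline is followed by a register on the fast clock, so each operator costs a whole number of fast cycles. It also assumes a de-pipelined basic block is one combinational path that completes at the first fast-clock edge after its total delay. Memory operations and register overheads are ignored. The function names and all delay values are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Ceiling division for non-negative a and positive b. */
static unsigned ceil_div(unsigned a, unsigned b)
{
    assert(b > 0);
    return (a + b - 1) / b;
}

/* Baseline model: a register after every operator on the critical path,
 * so each operator delay is rounded up to whole fast-clock cycles. */
unsigned pipelined_cycles(const unsigned *delay_ps, size_t n,
                          unsigned period_ps)
{
    unsigned cycles = 0;
    for (size_t i = 0; i < n; i++)
        cycles += ceil_div(delay_ps[i], period_ps);
    return cycles;
}

/* De-pipelined model: operators are chained combinationally, so only
 * the total path delay is rounded up to whole fast-clock cycles. */
unsigned depipelined_cycles(const unsigned *delay_ps, size_t n,
                            unsigned period_ps)
{
    unsigned total_ps = 0;
    for (size_t i = 0; i < n; i++)
        total_ps += delay_ps[i];
    return ceil_div(total_ps, period_ps);
}
```

For example, with made-up delays of 300, 700, 300, and 300 ps and a 1000 ps fast clock, the baseline model charges four cycles while the de-pipelined model charges two. In this model the gap grows with the number of operators chained together.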

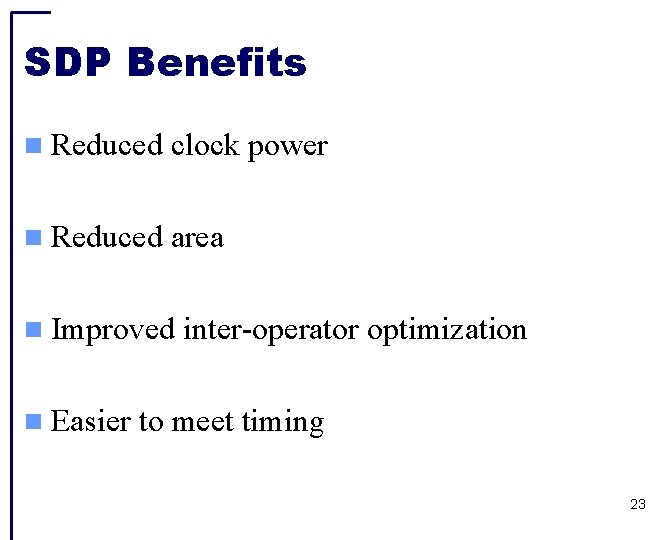

SDP Benefits

- Reduced clock power
- Reduced area
- Improved inter-operator optimization
- Easier to meet timing

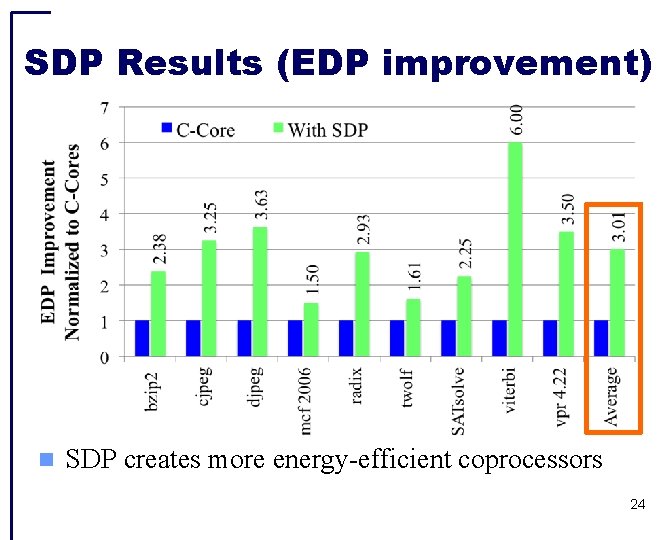

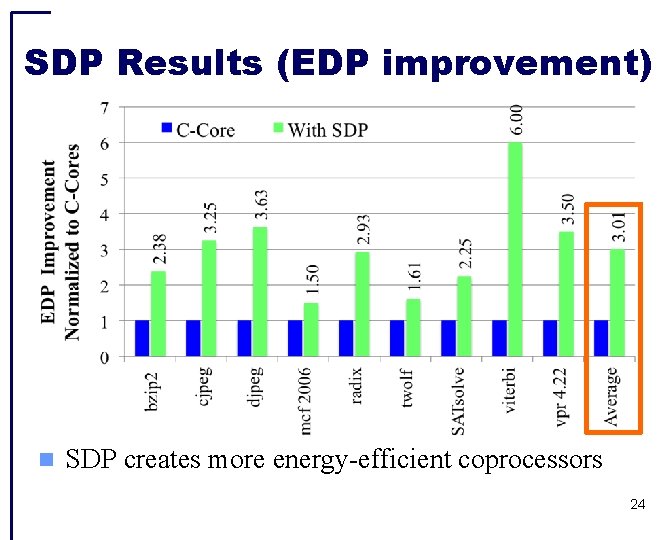

SDP Results (EDP improvement)

- SDP creates more energy-efficient coprocessors

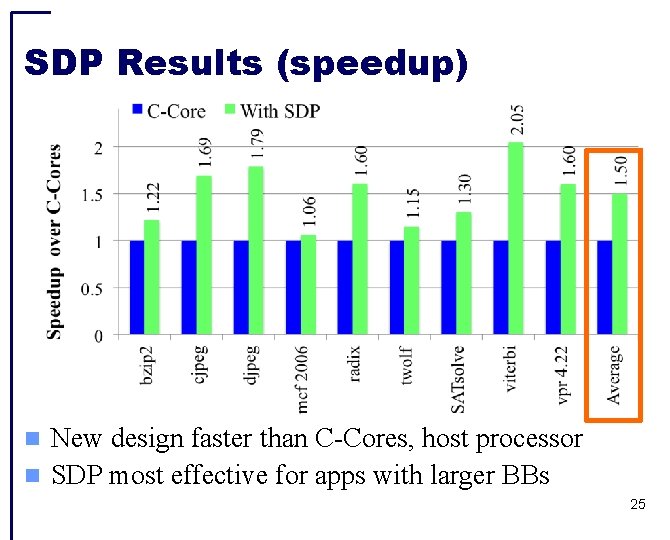

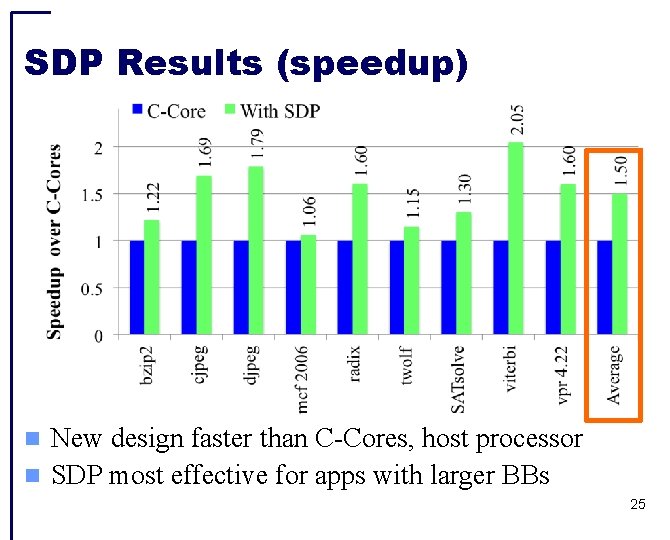

SDP Results (speedup)

- New design faster than C-Cores, host processor
- SDP most effective for apps with larger BBs

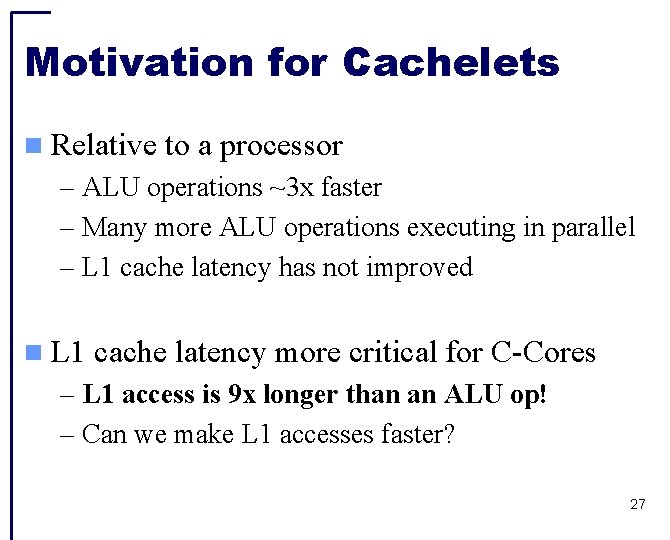

Motivation for Cachelets

- Relative to a processor
  - ALU operations ~3x faster
  - Many more ALU operations executing in parallel
  - L1 cache latency has not improved
- L1 cache latency more critical for C-Cores
  - L1 access is 9x longer than an ALU op!
  - Can we make L1 accesses faster?

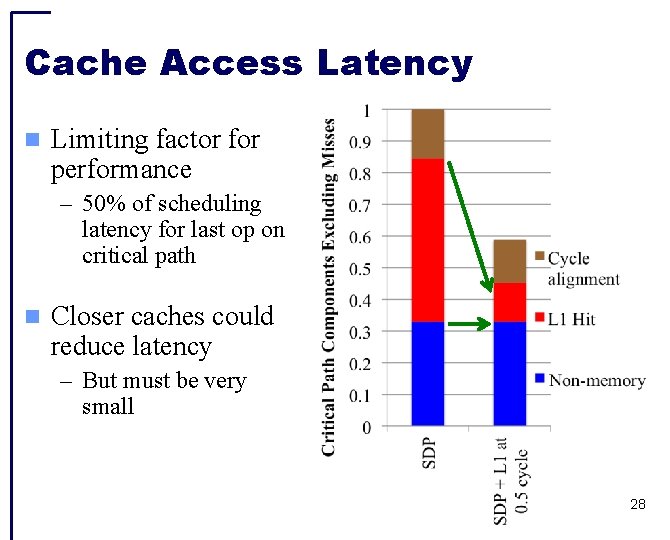

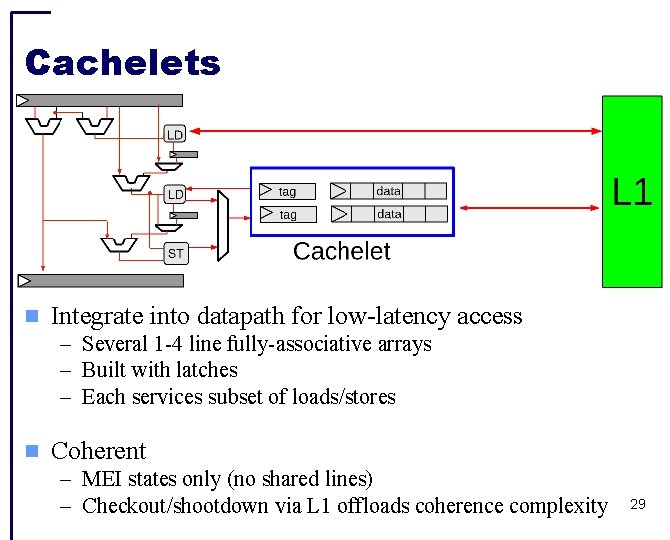

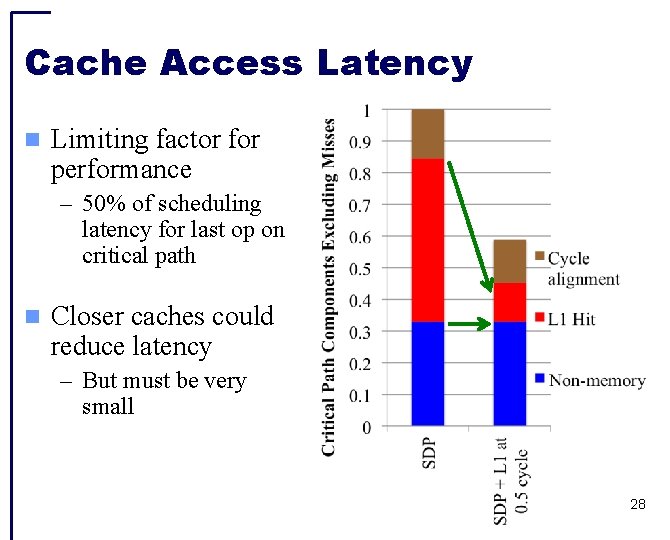

Cache Access Latency

- Limiting factor for performance
  - 50% of scheduling latency for last op on critical path
- Closer caches could reduce latency
  - But must be very small

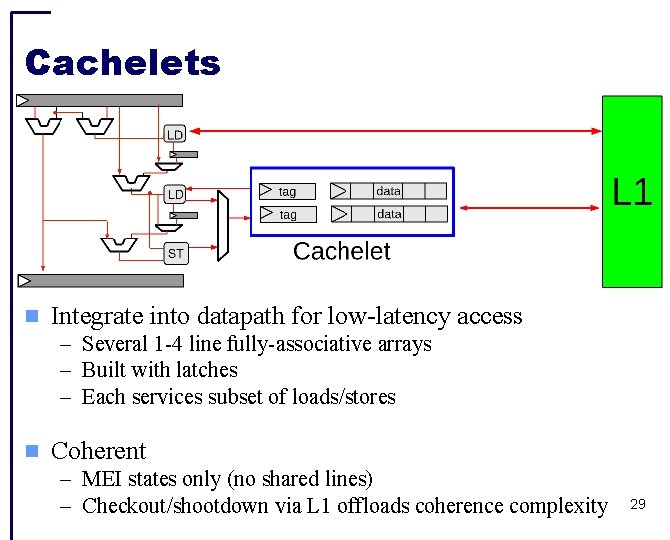

Cachelets

- Integrate into datapath for low-latency access
  - Several 1-4 line fully-associative arrays
  - Built with latches
  - Each services subset of loads/stores
- Coherent
  - MEI states only (no shared lines)
  - Checkout/shootdown via L1 offloads coherence complexity
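The structure described on this slide can be sketched as a tag-only software model: a handful of fully-associative lines, each in one of the three MEI states, with a fill path standing in for checkout from L1 and an invalidate path standing in for shootdown. This is an illustrative sketch rather than the hardware design. The 32-byte line size, the round-robin replacement, and all type and function names are assumptions, and data storage and write-back are not modeled.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define CACHELET_LINES 4     /* the slide gives 1-4 lines per cachelet */
#define LINE_BYTES     32u   /* assumed line size, not from the slides */

/* MEI states: no Shared state, so a line is held by at most one cachelet. */
typedef enum { LINE_INVALID, LINE_EXCLUSIVE, LINE_MODIFIED } line_state;

typedef struct {
    uint32_t   tag[CACHELET_LINES];
    line_state state[CACHELET_LINES];
    unsigned   next_victim;  /* round-robin replacement (assumption) */
} cachelet;

void cachelet_init(cachelet *c)
{
    assert(c != NULL);
    for (int i = 0; i < CACHELET_LINES; i++) {
        c->tag[i] = 0;
        c->state[i] = LINE_INVALID;
    }
    c->next_victim = 0;
}

/* Fully-associative lookup: compare the tag against every valid line. */
static int find_line(const cachelet *c, uint32_t addr)
{
    uint32_t tag = addr / LINE_BYTES;
    for (int i = 0; i < CACHELET_LINES; i++)
        if (c->state[i] != LINE_INVALID && c->tag[i] == tag)
            return i;
    return -1;
}

/* Returns true on a hit. On a miss the line is "checked out" from L1,
 * modeled here only as installing the tag in Exclusive state. */
bool cachelet_access(cachelet *c, uint32_t addr, bool is_store)
{
    assert(c != NULL);
    int i = find_line(c, addr);
    bool hit = (i >= 0);
    if (!hit) {
        i = (int)c->next_victim;
        c->next_victim = (c->next_victim + 1) % CACHELET_LINES;
        c->tag[i] = addr / LINE_BYTES;
        c->state[i] = LINE_EXCLUSIVE;
    }
    if (is_store)
        c->state[i] = LINE_MODIFIED;
    return hit;
}

/* Shootdown requested through L1: invalidate the line if present.
 * Returns true if the dropped line was Modified (needs write-back). */
bool cachelet_shootdown(cachelet *c, uint32_t addr)
{
    assert(c != NULL);
    int i = find_line(c, addr);
    if (i < 0)
        return false;
    bool dirty = (c->state[i] == LINE_MODIFIED);
    c->state[i] = LINE_INVALID;
    return dirty;
}
```

A first access to an address misses and installs the line, later accesses to the same line hit, and a shootdown for that line forces the next access to miss again. Keeping only Modified, Exclusive, and Invalid states means the model never has to track sharers.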

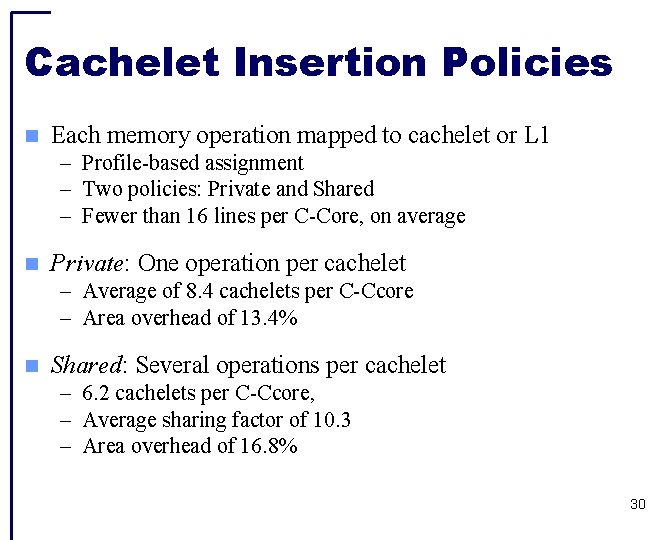

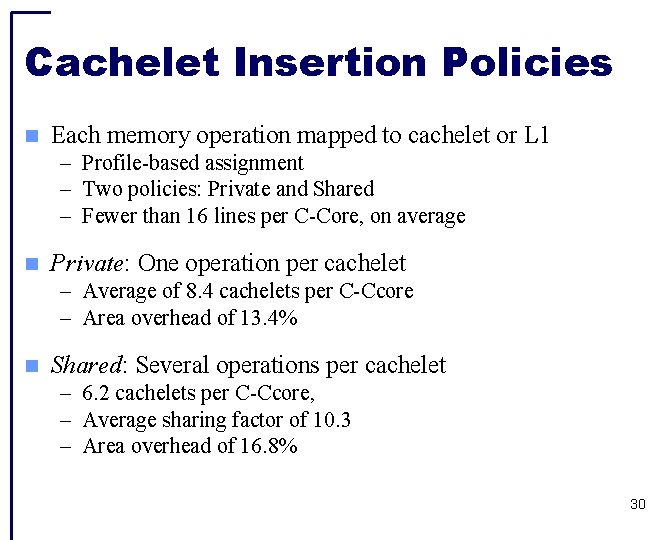

Cachelet Insertion Policies

- Each memory operation mapped to cachelet or L1
  - Profile-based assignment
  - Two policies: Private and Shared
  - Fewer than 16 lines per C-Core, on average
- Private: One operation per cachelet
  - Average of 8.4 cachelets per C-Core
  - Area overhead of 13.4%
- Shared: Several operations per cachelet
  - Average of 6.2 cachelets per C-Core
  - Average sharing factor of 10.3
  - Area overhead of 16.8%

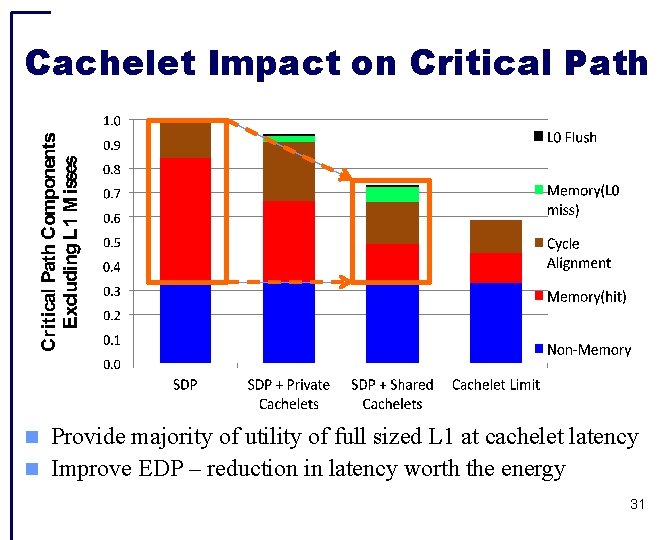

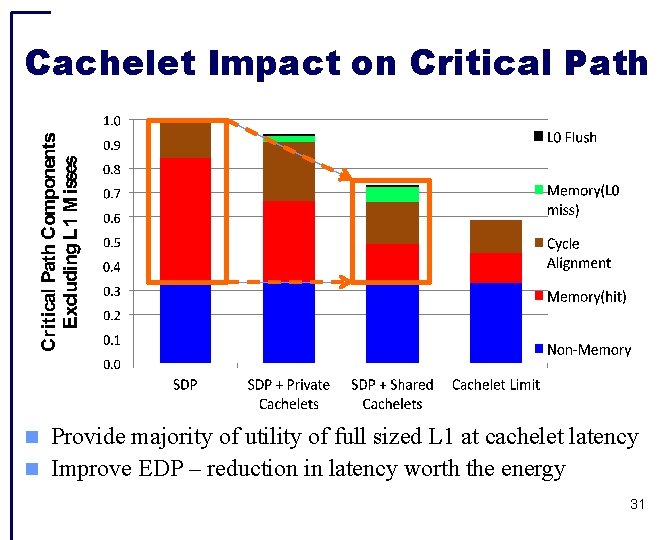

Cachelet Impact on Critical Path

- Provide majority of utility of full-sized L1 at cachelet latency
- Improve EDP: reduction in latency is worth the energy

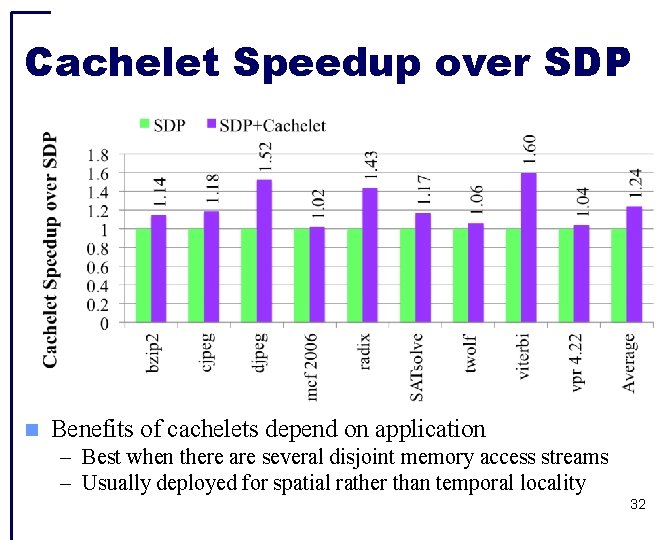

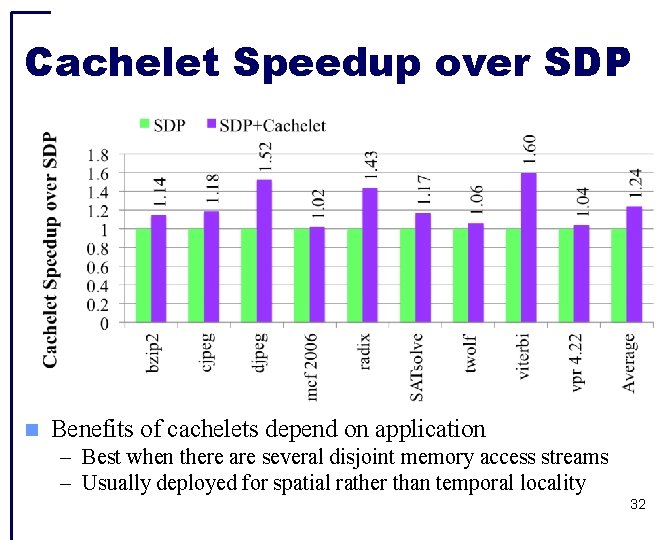

Cachelet Speedup over SDP

- Benefits of cachelets depend on application
  - Best when there are several disjoint memory access streams
  - Usually deployed for spatial rather than temporal locality

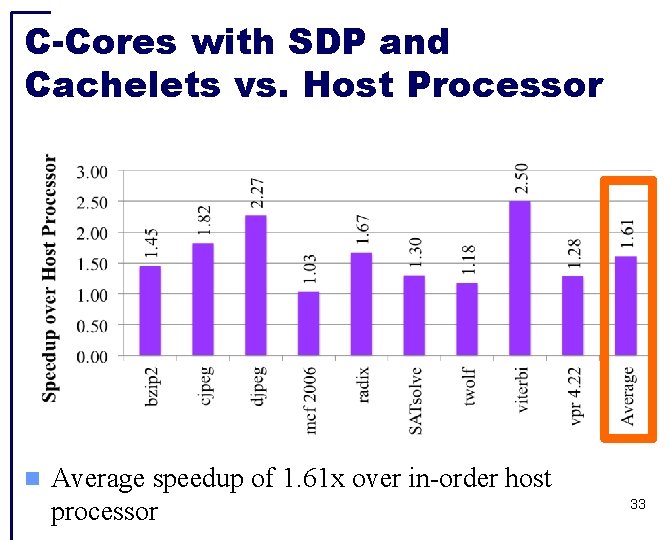

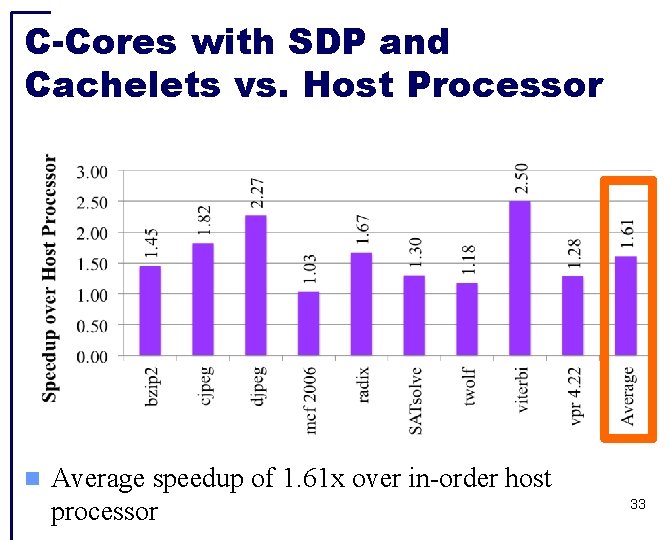

C-Cores with SDP and Cachelets vs. Host Processor

- Average speedup of 1.61x over in-order host processor

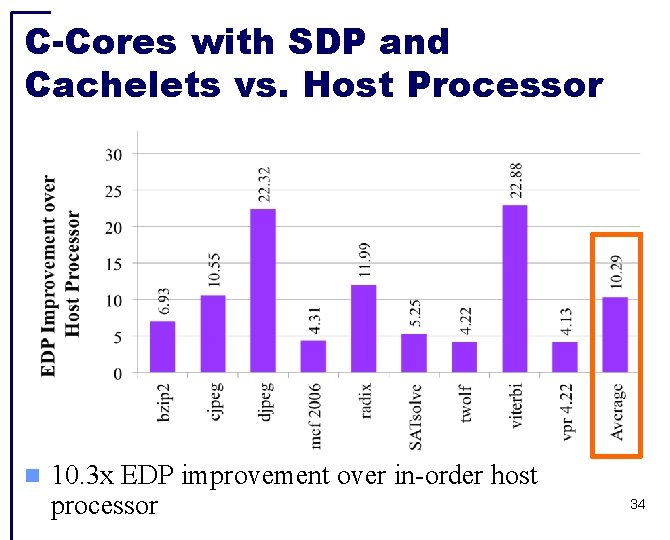

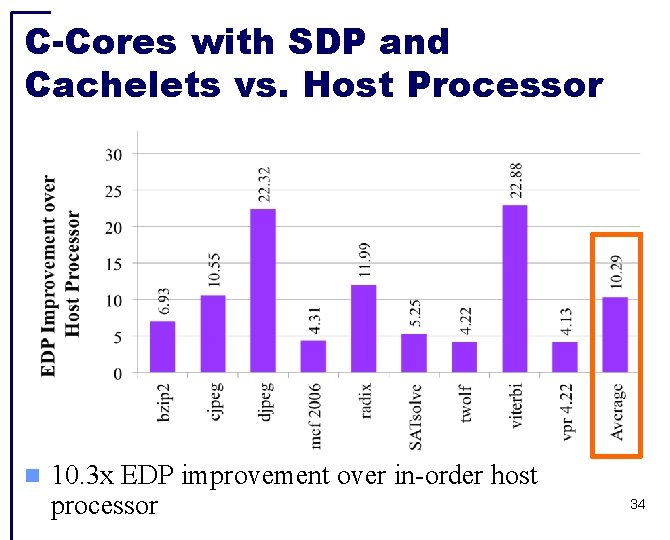

C-Cores with SDP and Cachelets vs. Host Processor

- 10.3x EDP improvement over in-order host processor
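As a rough reading aid, the two headline averages can be related through the definition of energy-delay product, EDP = E × D. The calculation below is only a back-of-the-envelope sketch. It treats both averages as if they described a single workload, which averages over a benchmark suite need not satisfy, so the implied energy ratio is indicative rather than a reported result.

```latex
\begin{align*}
\frac{\mathrm{EDP}_{\text{host}}}{\mathrm{EDP}_{\text{C-Core}}}
  &= \frac{E_{\text{host}}}{E_{\text{C-Core}}} \cdot \frac{D_{\text{host}}}{D_{\text{C-Core}}}, \\
\frac{E_{\text{host}}}{E_{\text{C-Core}}}
  &\approx \frac{10.3}{1.61} \approx 6.4.
\end{align*}
```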

Conclusion

- Achieving high coverage with specialization requires handling both irregular and regular codes
- Selective De-Pipelining addresses the divergent needs of memory and datapath
- Cachelets reduce cache access time by a factor of 6 for a subset of memory operations
- Using SDP and cachelets, we provide both 10.3x EDP and 1.6x performance improvements for irregular code

Backup Slides

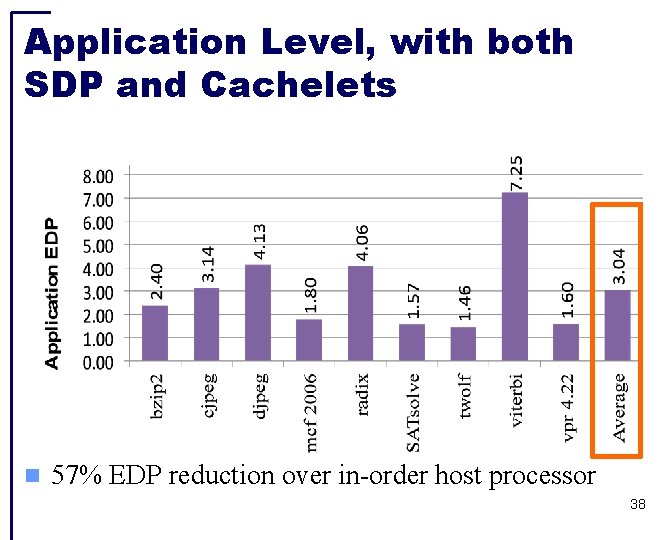

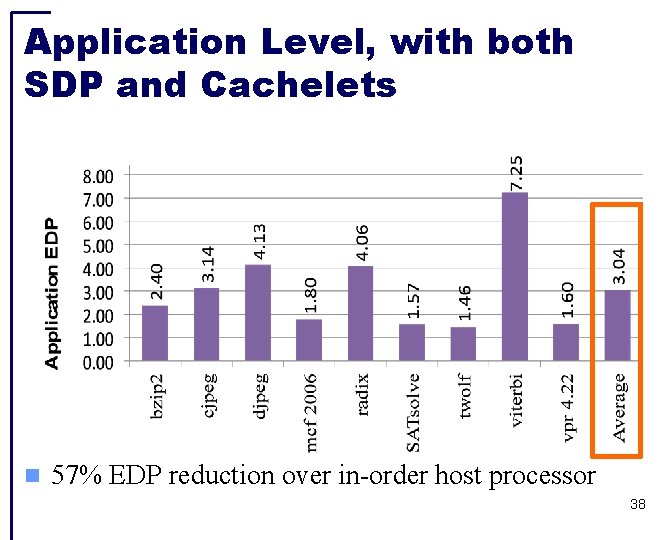

Application Level, with both SDP and Cachelets

- 57% EDP reduction over in-order host processor

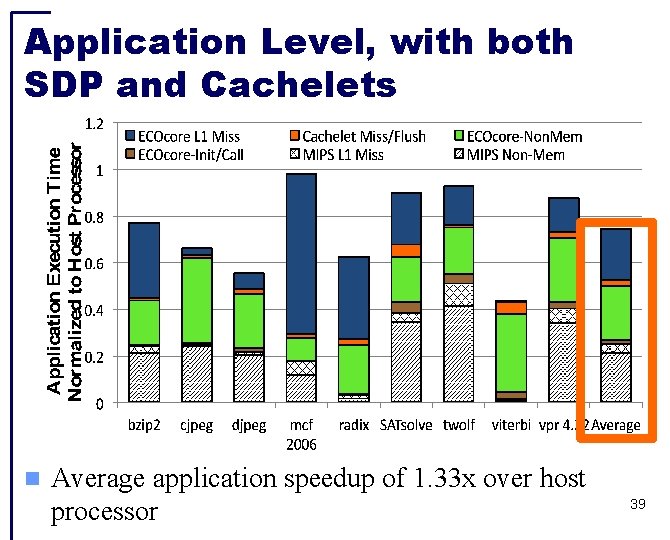

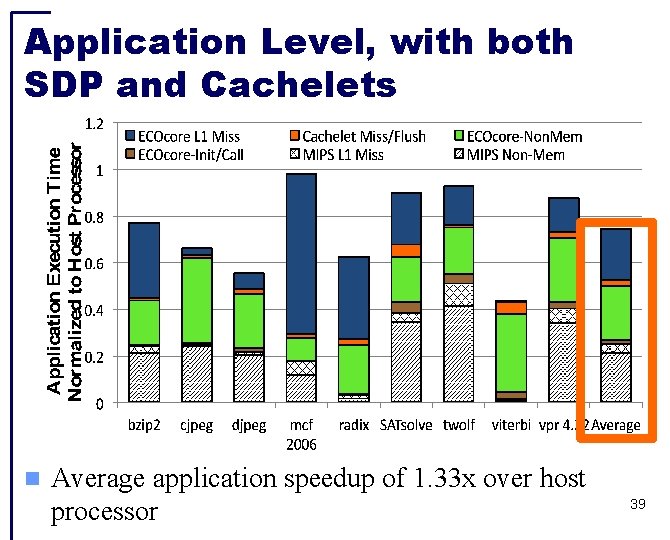

Application Level, with both SDP and Cachelets

- Average application speedup of 1.33x over host processor

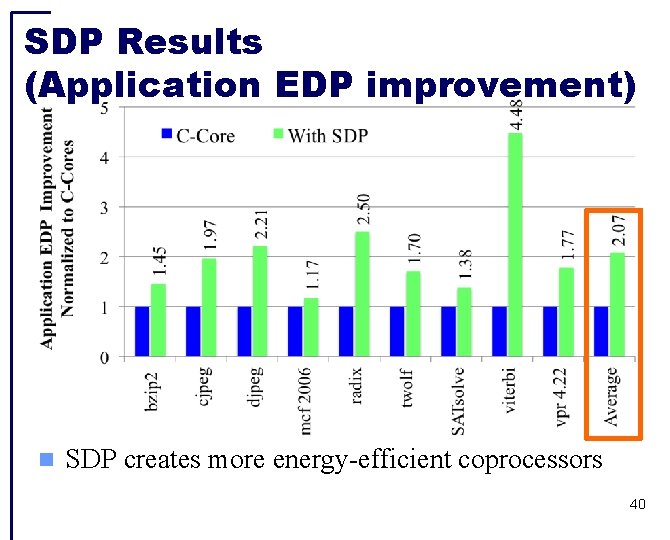

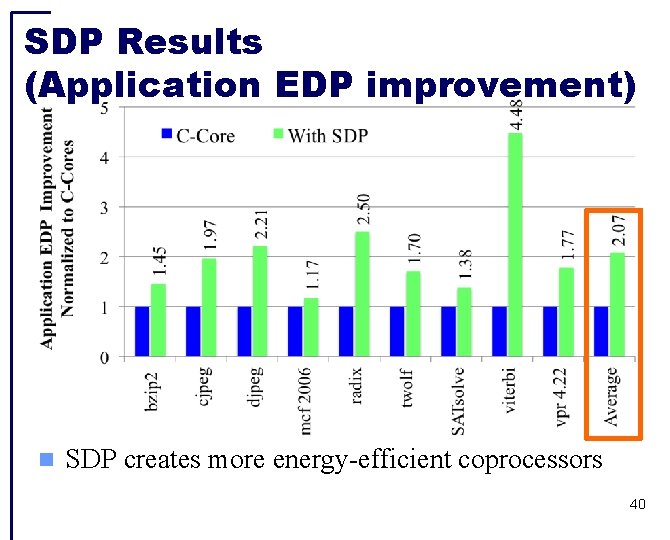

SDP Results (Application EDP improvement)

- SDP creates more energy-efficient coprocessors

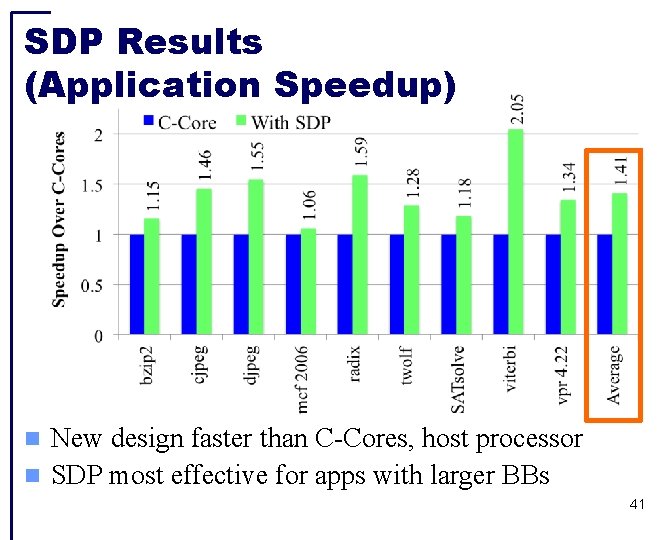

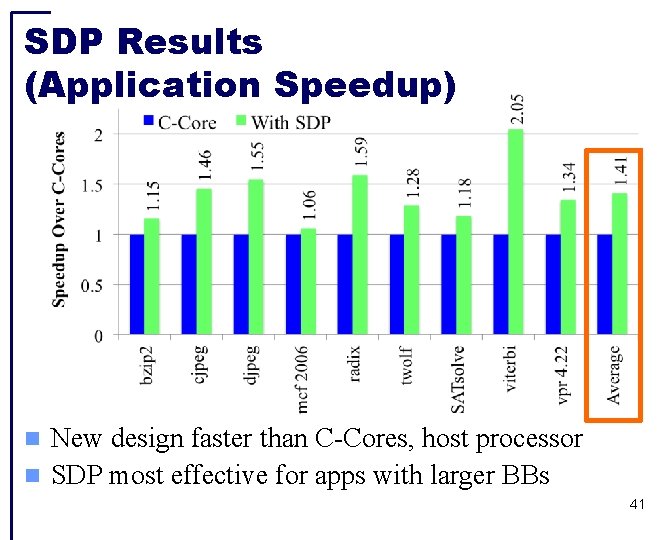

SDP Results (Application Speedup)

- New design faster than C-Cores, host processor
- SDP most effective for apps with larger BBs

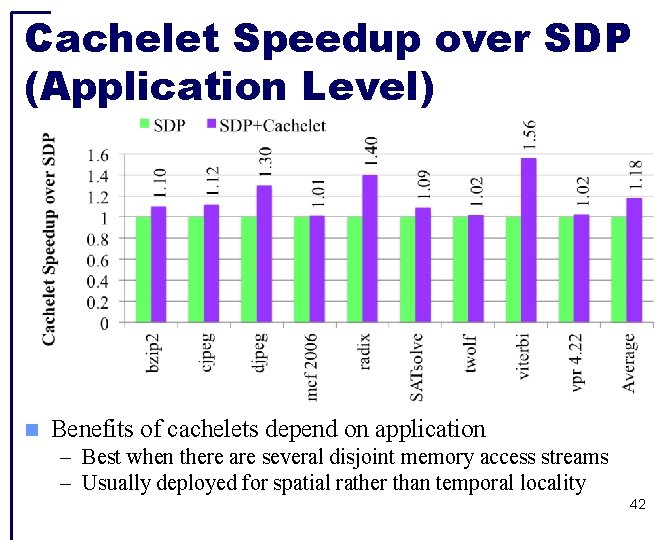

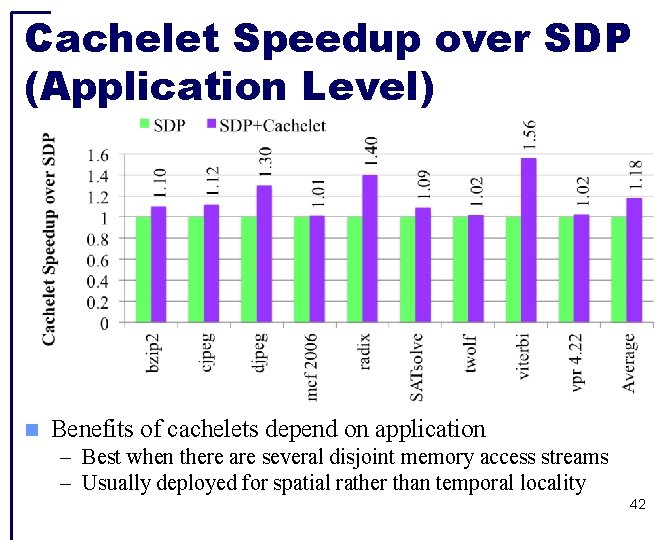

Cachelet Speedup over SDP (Application Level)

- Benefits of cachelets depend on application
  - Best when there are several disjoint memory access streams
  - Usually deployed for spatial rather than temporal locality