EFCOG CAS WORKING GROUP DOE Oversight Benchmarking Initiative

Al MacDougall, DOE National Training Center, Office of Enterprise Assessments, April 24, 2018

Outline
• Background
  – Oversight Process
  – Oversight Curriculum
  – Oversight Qualification
• Why Benchmarking
• Outcome and Approach
• Preliminary Observations
• Path Forward
EFCOG CAS WG – April 24, 2018

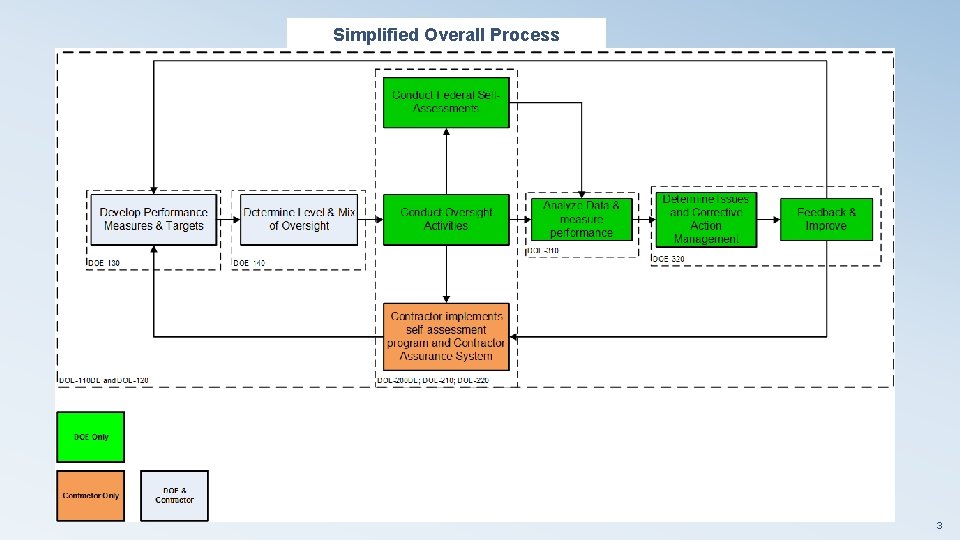

Simplified Overall Process

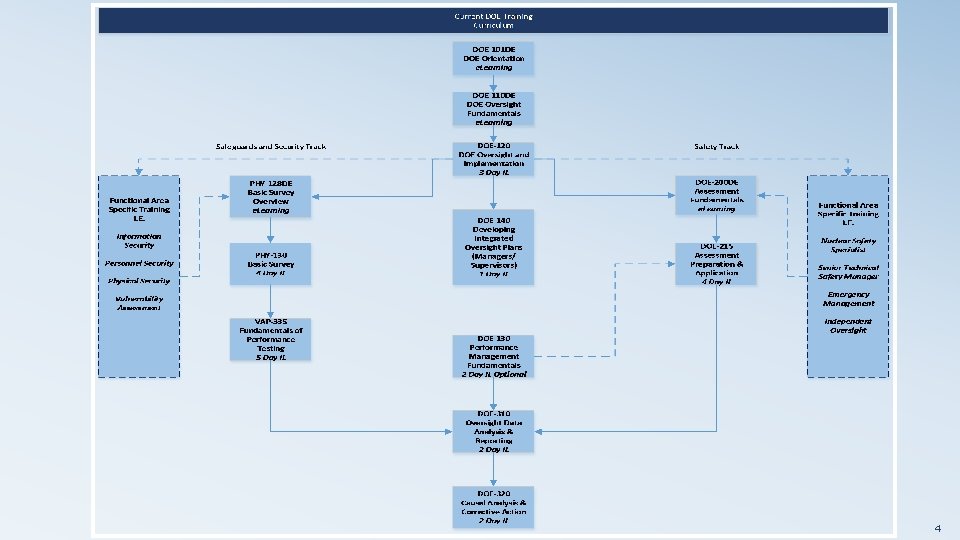


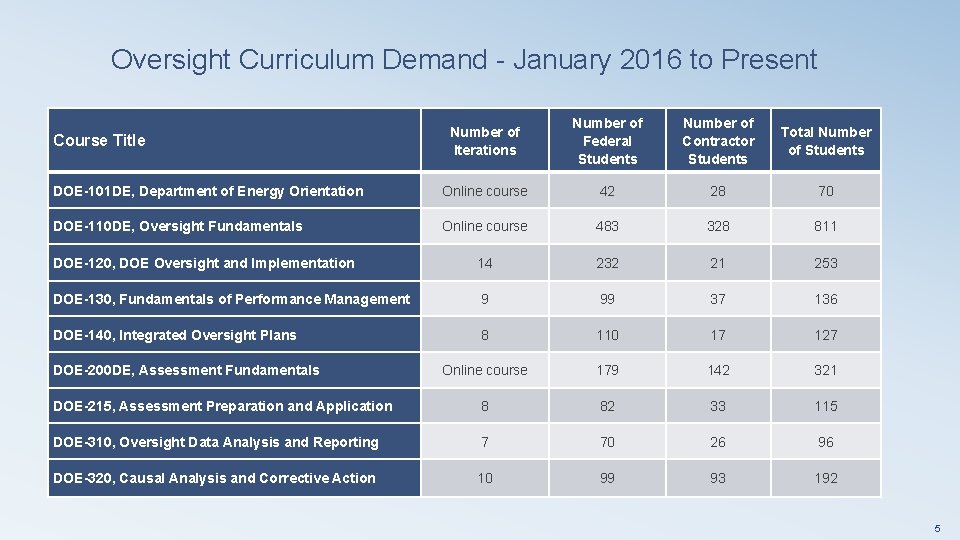

Oversight Curriculum Demand – January 2016 to Present

| Course Title | Number of Iterations | Number of Federal Students | Number of Contractor Students | Total Number of Students |
|---|---|---|---|---|
| DOE-101 DE, Department of Energy Orientation | Online course | 42 | 28 | 70 |
| DOE-110 DE, Oversight Fundamentals | Online course | 483 | 328 | 811 |
| DOE-120, DOE Oversight and Implementation | 14 | 232 | 21 | 253 |
| DOE-130, Fundamentals of Performance Management | 9 | 99 | 37 | 136 |
| DOE-140, Integrated Oversight Plans | 8 | 110 | 17 | 127 |
| DOE-200 DE, Assessment Fundamentals | Online course | 179 | 142 | 321 |
| DOE-215, Assessment Preparation and Application | 8 | 82 | 33 | 115 |
| DOE-310, Oversight Data Analysis and Reporting | 7 | 70 | 26 | 96 |
| DOE-320, Causal Analysis and Corrective Action | 10 | 99 | 93 | 192 |

General Technical Base Qualification Standard Part B: Oversight Performance
• Three Performance Competencies (PCs) and five Mandatory Performance Activities (MPAs) were derived from the six tasks in the simplified oversight process
• PC #1 – Conduct oversight activities
  – MPA: Develop or tailor the applicable oversight plan
  – MPA: Perform, at a minimum, one operational awareness activity (OAA) and one assessment
• PC #2 – Evaluate the adequacy of contractor issue resolution
  – MPA: Evaluate the adequacy of a contractor corrective action plan
  – MPA: Evaluate corrective action closure documentation
• PC #3 – Provide feedback on the contractor periodic performance review
  – MPA: Evaluate and provide input on the contractor periodic performance review

MPA Evaluation Requirements
MPA: Perform oversight activities to include, at a minimum, one operational awareness activity and participation in one assessment
MPA Evaluation Requirements:
a. Develop, or tailor existing, evaluation criteria to define the depth and breadth of assigned oversight activities.
b. Conduct oversight activities using appropriate techniques and determine whether evaluation criteria are met.
c. Properly categorize oversight activity results (e.g., noteworthy practices, discrepancies, findings, opportunities for improvement) based on approved organizational definitions.

Why Oversight Benchmarking?
• During training we are often asked, “Who does a particular part of the process well?”
  – We didn’t have current insight into how the overall process was being implemented
  – We didn’t have procedures and templates that could be provided as examples to enhance the effectiveness of overall federal oversight
• We needed to validate that the process and tools presented in the oversight curriculum lined up with actual practice in the field

Oversight Benchmarking Objectives
• Collect information on how the various DOE offices are implementing the major steps of the oversight process defined in the new GTB Part B and presented in the NTC oversight curriculum
• Determine how federal staff use CAS information as part of their oversight process
• Analyze the data collected with the following general outcomes:
  – Validate, and adjust as needed, the current NTC oversight process
  – Identify best practices in the implementation of oversight process elements and incorporate them into NTC training
  – Gain insight into the current implementation of oversight in order to provide relevant feedback to managers and students during training
  – Provide feedback into updates of applicable DOE policy and guidance

Oversight Data Collection Approach
• Meet with federal oversight process owners and review the documented oversight process to understand how the basic steps of the process are expected to be conducted, focusing on the following:
  – How are oversight requirements identified and incorporated into oversight planning?
  – How are specific oversight activities identified, and what inputs are used to tailor the level and mix of these oversight activities?
  – How is CAS data validated and used throughout the oversight process?
  – How is oversight data analyzed, and how are the results used in the overall process?
  – How are issues categorized and provided to the contractor for action?
  – How is oversight data used to provide feedback on contractor performance?

Oversight Data Collection Approach – Continued
• Interview functional area oversight leads and supervisors using the following standard set of questions:
  – How do you plan your oversight activities, including what you will look at, how often, and what scope you will use?
  – How do you conduct your oversight activities, including what types of oversight activities you perform?
  – How do you identify, categorize, and communicate issues identified during oversight activities?
  – How do you analyze oversight data in your area of responsibility?
  – How do you follow up on federal and contractor-identified issues?

Oversight Data Collection Approach – Continued
• Identify individuals to interview from a representative sample of major functional areas (Nuclear Safety, Environmental, Worker Safety, Safeguards and Security, Projects and Programs)
• At the completion of each visit, provide informal feedback to the oversight process owner and managers/supervisors, if requested
  – We constantly emphasize that this is not an assessment
  – We try to understand how the office expects the oversight process to be implemented and then document how individuals actually conduct the standard oversight process tasks
  – Sites typically have a good understanding of areas where the process is not consistently implemented as expected

Schedule
• Sites completed
  – NNSA Nevada Field Office: January 2018 (pilot)
  – NNSA Livermore Field Office: February 2018
  – EM SRS Operations Office and NA-SRS Field Office: March 2018
• Sites scheduled
  – Office of Science Chicago Integrated Support Center, Argonne National Lab Site Office, and Fermi Site Office: May 15–17, 2018
  – NE-ID and EM-ID: June 12–14, 2018

Some Initial Observations from Site Visits
• Assessment planning
  – Typically includes just baseline “order-required” assessments
  – Few supplemental assessments are based on analysis of data
  – Programmatic requirements are not systematically evaluated by the functional area oversight lead unless a comprehensive federal assessment is required
• Grouping of functional/topical areas
  – There are many variations in how functional/topical areas are grouped
  – In some cases they are grouped at a high level and, as a result, may lose visibility of key topical areas
  – A common grouping between federal and contractor oversight is helpful

Initial Observations – Continued
• Functional area data analysis
  – Functional area oversight personnel don’t routinely evaluate CAS data to inform the scope of upcoming assessments or to determine the focus of operational awareness activities
  – Functional area oversight personnel typically use knowledge gained from routine meetings and their own oversight activities to determine what to focus on in upcoming assessments
  – Some functional area oversight personnel did not know what information is available from CAS
  – Some functional area oversight personnel and supervisors know where to get CAS information, but the process to gain access is not user-friendly, so they don’t routinely use it
  – The functional area oversight lead may pull data from the federal or contractor issues management system to validate a potential adverse trend suspected from general awareness of program status gained through operational awareness activities

Initial Observations – Continued
• Formal data analysis using the issues management database, when performed, is limited to frequency counts
• Only one example was found of a more comprehensive qualitative analysis based on coding
• Standard lines of inquiry (LOIs) to support periodic programmatic assessments are not common
• A potential best practice is the SRS approach to Radiation Protection Program oversight:
  – Use contractor assessment results and data from CAS to develop and focus LOIs that address the elements of the program being evaluated
  – Use field staff to gather data on implementation
  – Adjust the scope of the next quarterly assessment and its LOIs based on any trends identified

Initial Observations – Continued
• The SRS Site Tracking, Analysis, and Reporting System (STAR) appears to be a good system with data analysis capability
• In some cases, federal staff appeared to be almost entirely focused on completing assigned assessments and following up on discrete issues
  – S&S annual surveys and other compliance assessments
  – The challenge is finding the right balance between oversight data collection, data analysis (at both the functional and system level), and analysis and correction of systemic issues
  – There still seems to be a tendency to focus on “feeding” the assessment and issues management “machine”
  – It takes continual management attention to provide opportunities to step back, look at the big picture, and avoid getting caught up primarily in data collection efforts

Potential Areas for Follow Up
• Based on the initial observations from the data collection effort, the team will evaluate the following questions:
  – Should the federal oversight process include a systematic evaluation of important program requirements at a set periodicity, or should this be done only when explicitly required by DOE directives?
  – What level of data analysis is expected at the functional level and at the group or office level?
  – What are the expectations for federal staff evaluation, validation, and use of CAS data (both raw and analyzed)?

Path Forward
• Complete visits in May and June
• Analyze data using data mining software, based on coding of answers to the interview questions (June–July 2018)
  – Initially set up about 50 codes, but based on the revised questions, now using only about 10–15 common codes
  – Working with NNSA on a similar effort in data analytics
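The coding-based analysis above can be illustrated with a minimal Python sketch, assuming each interview answer has already been tagged with one or more codes from a common codebook. The functional areas come from the interview sample, but the question labels, code names, data, and function names are hypothetical; they are not the team's actual codebook or the data mining software it plans to use. The sketch shows only the tallying step: how often each code appears overall and within each functional area.

```python
from collections import Counter, defaultdict

# Hypothetical coded interview answers: (functional area, question, codes applied).
# Code labels are illustrative only, not the team's actual codebook.
CODED_ANSWERS = [
    ("Nuclear Safety", "Q1 planning", ["BASELINE_ONLY", "USES_MEETINGS"]),
    ("Nuclear Safety", "Q4 analysis", ["FREQUENCY_COUNTS"]),
    ("Worker Safety", "Q1 planning", ["BASELINE_ONLY"]),
    ("Worker Safety", "Q4 analysis", ["CAS_ACCESS_HARD", "FREQUENCY_COUNTS"]),
    ("Safeguards and Security", "Q2 conduct", ["COMPLIANCE_FOCUS"]),
]


def code_frequencies(answers):
    """Count how many coded answers each code was applied to."""
    counts = Counter()
    for _area, _question, codes in answers:
        counts.update(set(codes))  # count each code at most once per answer
    return counts


def codes_by_area(answers):
    """Group code counts by functional area to spot area-specific patterns."""
    by_area = defaultdict(Counter)
    for area, _question, codes in answers:
        by_area[area].update(set(codes))
    return by_area


if __name__ == "__main__":
    for code, n in code_frequencies(CODED_ANSWERS).most_common():
        print(f"{code}: {n}")
    for area, counts in codes_by_area(CODED_ANSWERS).items():
        print(area, dict(counts))
```

Counting each code once per answer keeps a single long answer from dominating the tally; a fuller analysis would also look at which codes co-occur, which simple frequency counts alone do not reveal.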

Path Forward – Continued
• Use insights to inform the Integrated Nuclear Facility Federal Oversight Job Analysis initiative (June–August 2018)
• Document results and identify needed changes to the DOE NTC Oversight Curriculum (August–December 2018)
  – Identify best practices and include tools/templates in the oversight curriculum
  – Modify the overall process as needed

Path Forward – Continued
• Continue visits in CY 2019 and identify any follow-on actions
  – RL/ORP
  – NNSA
  – NPO (Y-12/Pantex)
  – PNNL
  – Other Science Laboratories
  – DOE HQ Program Offices

Questions?
