
EDN 568 Program Design and Evaluation Background and History

The Birth of Evaluation In the beginning, God created Heaven and Earth… And God saw everything He had made. “Behold,” God said, “it is very good.”

And the evening and the morning were the sixth day. And on the seventh day, God rested from all His work. And on that seventh day, God’s archangel came unto Him asking, “God, how do you know what you created is ‘good’? What are your criteria? On what data do you base your judgment? Just exactly what results were you expecting to attain? And God, aren’t you a little too close to the situation to make a fair and unbiased evaluation?”

God thought very carefully about these questions the archangel had asked Him all that day…and His rest was greatly disturbed. So on the eighth day God rose up, faced the archangel, and said…

“Well, Lucifer… YOU CAN JUST GO TO HELL!” And thus, evaluation was born in a blaze of glory.

So, now that we know how evaluation was conceived, what do we need to know about it? • Evaluation is a tool that can be used to help judge whether a school program or instructional approach is being implemented as planned. • It allows us to assess the extent to which our goals and objectives are being achieved.

Helps Answer These Questions • Are we doing the things for our students and teachers that we said we would? • Are our students learning what we set out to teach them? • How can we make improvements to the curriculum, teaching methods, and school programs?

Evaluation Myths • Many people believed evaluation was a useless activity that generated lots of boring data with useless conclusions. • This was a problem with evaluations in the past, when program evaluation methods were chosen largely on the basis of achieving complete scientific accuracy, reliability, and validity. • This approach often generated extensive data from which only very carefully chosen conclusions were drawn.

• Many people used to believe that evaluation was about proving the success or failure of a program. • This myth assumed that success means implementing the perfect program and never having to deal with it again; the program would then run itself perfectly. • This doesn't happen in real life. • Success is remaining open to continuing feedback and adjusting the program accordingly. • Evaluation gives you this continuing feedback.

Evaluation Myths • Many people believed that evaluation was a unique and complex process that occurred at a certain time in a certain way, and almost always had to include outside experts. • Many people also believed they must always demonstrate validity and reliability.

Evaluation Myths • For many years, people did not make generalizations & recommendations following program evaluations. • As a result, evaluation reports usually stated the obvious and left program administrators disappointed and confused. • Thanks to Michael Patton's development of utilization-focused evaluation, program evaluation has focused on utility, relevance, and practicality.

Qualitative Case Study Analysis Patton, M. Q. (2002). Qualitative research and evaluation methods (3rd ed.). Thousand Oaks, CA: Sage. The Guru of Qualitative Program Evaluation & Analysis

NCLB & Qualitative Studies • Case study research analysis allows us to assess the extent to which students' educational performance and future potential have improved. • Case study analysis is context-specific and therefore a “good fit” for educational program evaluation (when coupled with quantitative data).

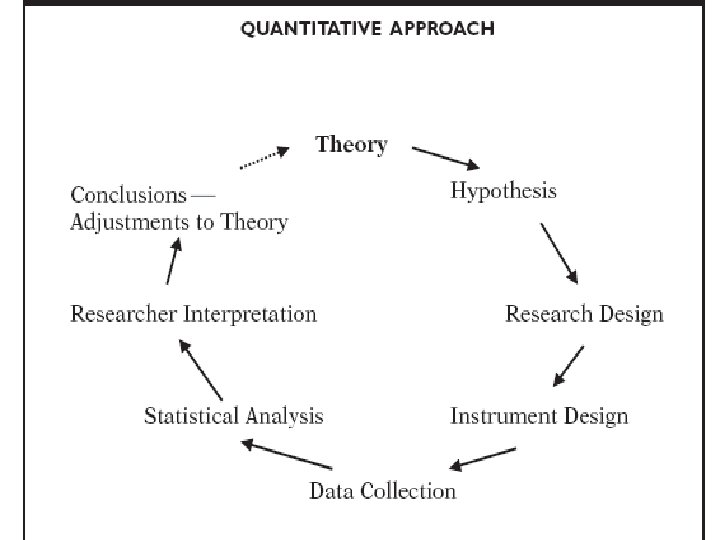

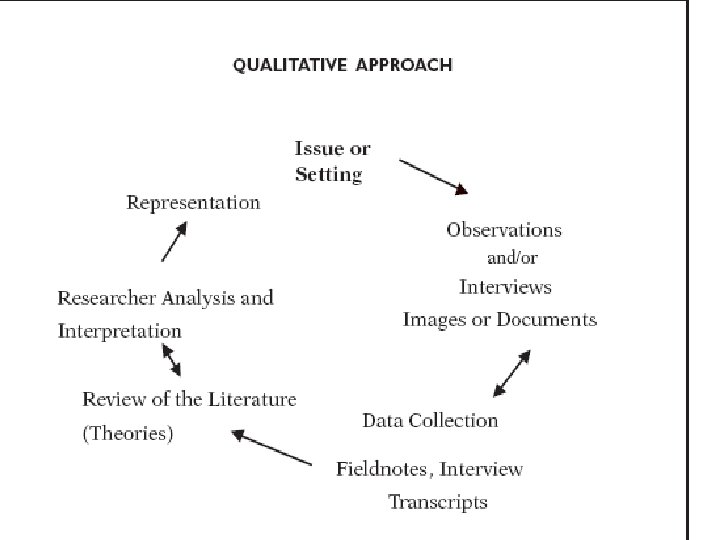

Quantitative versus Qualitative Quantitative Evaluation is based on the principles of the scientific method. The goal is to “prove” something based on valid and reliable outcome measures. Qualitative Evaluation is context-based and analyzes the comprehensive impact of a program on its participants.

Program Evaluation Defined • Public school programs are organized methods of providing certain related services to students and other educational stakeholders. • Public school programs must be evaluated to decide whether the programs are indeed useful to our stakeholders.

Program Evaluation Defined • Program evaluation is a process by which society learns about itself. • Program evaluations contribute to enlightened discussion of alternative plans for social action. • Program evaluation must also be as much about political interaction as it is about determining facts.

The Politics of Program Evaluation It is very important to remember in debates over controversial educational programs that sometimes liars figure and figures lie. The educational program evaluator has the responsibility to protect students and schools from both types of deception.

The Politics of Educational Program Evaluation The evaluator is an educator; his or her success is to be judged by what others learn. Those who shape policy should reach decisions with their eyes wide open…not half shut. It is the evaluator's task to illuminate the situation, not to dictate the decision.

How Did Program Evaluation Develop Into What It Is Today?

History of Program Evaluation During WWII, program evaluation developed rapidly to monitor soldier morale, evaluate personnel policies, and develop propaganda techniques.

The 1950s Following the evaluation boom after WWII, program evaluation became a common tool for measuring all kinds of things in organizations. Investigators assessed medical treatments, housing programs, educational activities, and more.

The 1960s There was a dramatic increase in articles and books on program evaluation in the ’60s. President Johnson’s War on Poverty and Great Society initiatives created a need to evaluate new social programs throughout the country.

The 1970s By the ’70s, evaluation research had become a specialty field in all the social sciences. The first journal in evaluation, Evaluation Review, began in 1976. After that we were well on our way into the 1980s, the 1990s, and the 21st century to…

EDN 568 Program Design & Evaluation Surfing Data & Evaluating Programs

Understanding Program Design and Evaluation In the words of Stephen Covey, first things first…. 1. What is a program? 2. What is program evaluation?

What Exactly Is a Program? • Organizations usually try to identify several goals which must be reached to accomplish their mission. • In nonprofit organizations, each of these goals often becomes a program.

Organizations & Program Evaluation If programs represent the goals of an organization, then there are two (2) basic ingredients required for program evaluation: 1. An organization & 2. A program (goal)

Program Evaluation • An assessment of an organization’s goals • An assessment of the worth, value, or merit of an intervention, innovation, or service • An assessment that identifies change – past and future • An assessment that tries to solve a problem • An assessment that carefully collects information about a program or some aspect of a program in order to make necessary decisions about the program.

Purpose & Uses of Program Evaluation Evaluations of educational programs have expanded considerably over the past 40 years. Title I of the Elementary and Secondary Education Act (ESEA) of 1965 represented the first major piece of federal social legislation that included a mandate for evaluation.

Title I Program Evaluation Legislation The 1965 legislation was passed with the evaluation requirement stated in very general language. This created quite a bit of controversy. State and local school systems were allowed considerable flexibility for interpretation and discretion.

Title I Evaluation Act This evaluation requirement had two purposes: (1) to ensure that the funds were being used to address the needs of disadvantaged children; and (2) to provide information that would empower parents and communities to push for better education.

Title I Program Evaluation: A Means to Upgrade Schools Many people saw the use of evaluation information on Title I programs and their effectiveness as a means of encouraging schools to improve performance. Federal staff in the U.S. Department of Health, Education, and Welfare (HEW) welcomed the opportunity to have information about programs, populations served, and educational strategies used. The Secretary of HEW in 1965 promoted the evaluation requirement as a means of finding out "what works" as a first step to promoting the dissemination of effective practices.

1965 Viewpoints on Title I Program Evaluation • Expectation of reform and the view that evaluation was central to the development of change. • A common assumption that evaluation activities would generate objective, reliable, and useful reports, and that findings would be used as the basis of decision-making and improvement.

What Happened? • Unfortunately, the Title I program evaluations did not produce any of the intended results because local schools failed to comply with the mandate. • Widespread support for evaluation did not develop at the local level. • There was a concern that federal reporting requirements would eventually lead to more federal control over schooling.

The 1970s & Title I Program Evaluation • It became clear in the ’70s that the evaluation requirements in federal education legislation were not generating their desired results. • The reauthorization of Title I of the federal Elementary and Secondary Education Act (ESEA) in 1974 strengthened the requirement for collecting information and reporting data by local grantees. • It required the U.S. Office of Education to develop evaluation standards and models for state and local agencies. • It also required the Office to provide technical assistance nationwide so exemplary programs could be identified and evaluation results could be disseminated.

TIERS The Title I Evaluation and Reporting System (TIERS) was part of a continuing development effort to improve accountability and program services. In 1980, the U.S. Department of Education created general administration regulations which established criteria for judging evaluation components of grant applications. These various changes in legislation and regulation reflected a continuing federal interest in evaluation data.

Use of Evaluation Results No system was in place at the federal, state, or local level to collect, analyze, and use the evaluation results to effect program or project improvements. In 1988, amendments to ESEA reauthorized the Title I program and strengthened the emphasis on evaluation and local program improvement.

1988 Amendments The legislation required that state agencies identify programs: 1. That did not show aggregate student achievement gains 2. That did not make substantial progress toward the goals set by the local school district. Those programs that were identified as needing improvement were required to write program improvement plans. If, after one year, improvement was not sufficient, then the state agency was required to work with the local program to develop a program improvement process to raise student achievement.

The 1990s There was a further call for school reform, improvement, and accountability in the 1990s. The National Education Goals were formalized through the Goals 2000: Educate America Act of 1994. The new law issued a call for "world class" standards, assessment, and accountability to challenge the nation's educators, parents, and students.

2001 No Child Left Behind Act The Elementary and Secondary Education Act (ESEA), reauthorized as the No Child Left Behind Act (NCLB) in 2001, established goals of high standards, accountability for all, and the belief that all children can learn, regardless of their background or ability.

NCLB A Law of Accountability for Public Schools On January 8, 2002, President Bush signed into law the No Child Left Behind (NCLB) Act, the most sweeping education reform legislation in decades.

No Child Left Behind A New Level of Educational Program Evaluation All educational programs are evaluated on the basis of student achievement and school performance measures. This amounts to an almost totally “quantitative” evaluation of public schools.

Purpose of NCLB To ensure that all children have fair and equal opportunities to reach proficiency on state academic achievement standards. Note the emphasis on the word “opportunities.”

NCLB and Program Evaluation The goal, worth, merit, and value of public schools shall be determined by student achievement and school performance measures. Adequate Yearly Progress (AYP) is a series of performance targets that states, school districts, and schools must achieve each year to meet the proficiency requirements. To “meet” AYP, schools must be making adequate yearly progress toward the 2013-14 NCLB goal.

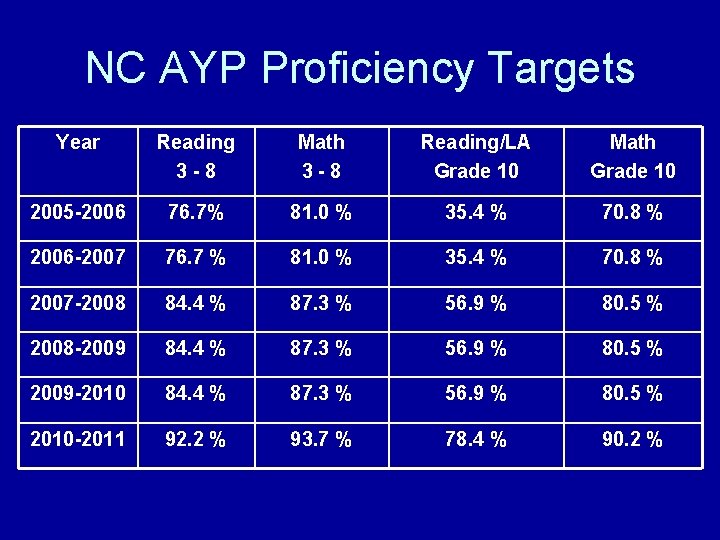

NC AYP Proficiency Targets

| Year | Reading 3-8 | Math 3-8 | Reading/LA Grade 10 | Math Grade 10 |
|---|---|---|---|---|
| 2005-2006 | 76.7% | 81.0% | 35.4% | 70.8% |
| 2006-2007 | 76.7% | 81.0% | 35.4% | 70.8% |
| 2007-2008 | 84.4% | 87.3% | 56.9% | 80.5% |
| 2008-2009 | 84.4% | 87.3% | 56.9% | 80.5% |
| 2009-2010 | 84.4% | 87.3% | 56.9% | 80.5% |
| 2010-2011 | 92.2% | 93.7% | 78.4% | 90.2% |
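To make the NC AYP Proficiency Targets table concrete, here is a minimal Python sketch that looks up a target and checks a reported percent proficient against it. The names `NC_AYP_TARGETS` and `meets_target` are hypothetical, and the check is deliberately simplified: actual AYP determinations also involved rules such as minimum subgroup sizes and "safe harbor" provisions, which are not modeled here.

```python
# Hypothetical sketch: NC AYP proficiency targets (percent proficient),
# copied from the NC AYP Proficiency Targets table, keyed by year and subject.
_TARGETS_2005_07 = {"Reading 3-8": 76.7, "Math 3-8": 81.0,
                    "Reading/LA Grade 10": 35.4, "Math Grade 10": 70.8}
_TARGETS_2007_10 = {"Reading 3-8": 84.4, "Math 3-8": 87.3,
                    "Reading/LA Grade 10": 56.9, "Math Grade 10": 80.5}
_TARGETS_2010_11 = {"Reading 3-8": 92.2, "Math 3-8": 93.7,
                    "Reading/LA Grade 10": 78.4, "Math Grade 10": 90.2}

NC_AYP_TARGETS = {
    "2005-2006": _TARGETS_2005_07,
    "2006-2007": _TARGETS_2005_07,
    "2007-2008": _TARGETS_2007_10,
    "2008-2009": _TARGETS_2007_10,
    "2009-2010": _TARGETS_2007_10,
    "2010-2011": _TARGETS_2010_11,
}


def meets_target(year: str, subject: str, percent_proficient: float) -> bool:
    """Return True if percent_proficient is at or above that year's target.

    Simplified: ignores additional rules (e.g., minimum subgroup sizes and
    safe harbor) that applied in real AYP determinations.
    """
    return percent_proficient >= NC_AYP_TARGETS[year][subject]


print(meets_target("2007-2008", "Math 3-8", 85.0))     # False: 85.0 < 87.3
print(meets_target("2010-2011", "Reading 3-8", 92.5))  # True: 92.5 >= 92.2
```

Note that the targets step up in stages rather than every year, so a school at 85% in Math 3-8 would have met the 2006-2007 target but not the 2007-2008 one.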

AYP and Proficiency • The ultimate goal of NCLB is to bring all students to PROFICIENCY (Level III or above), as defined by North Carolina, no later than 2013-14. • For the purpose of school, district, and state accountability, the interim benchmark for progress toward the goal is Adequate Yearly Progress (AYP) in raising student achievement.

Target Groups and Subgroups • AYP focuses on all students and subgroups of students in schools, school districts, and states, with a goal of closing achievement gaps and increasing proficiency to 100 percent. • Ten student groups are defined in NC public schools.

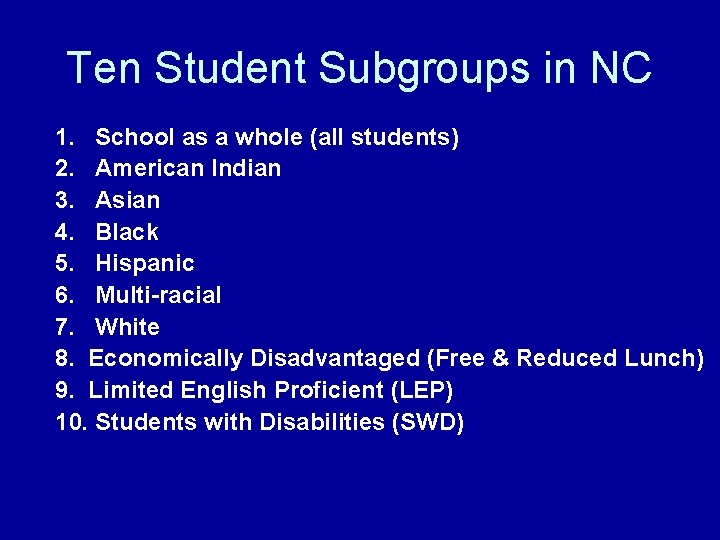

Ten Student Subgroups in NC 1. School as a whole (all students) 2. American Indian 3. Asian 4. Black 5. Hispanic 6. Multi-racial 7. White 8. Economically Disadvantaged (Free & Reduced Lunch) 9. Limited English Proficient (LEP) 10. Students with Disabilities (SWD)
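Because AYP looks at every subgroup rather than only the school's overall average, one weak subgroup is enough to miss the target. A minimal sketch, assuming results are reported per subgroup as (students tested, percent proficient) pairs; the function name `subgroups_below_target` and the `min_n` default of 40 are illustrative assumptions, not North Carolina's official rule.

```python
from typing import Dict, List, Tuple

# The ten NC student subgroups listed on the slide.
NC_SUBGROUPS = [
    "School as a whole", "American Indian", "Asian", "Black", "Hispanic",
    "Multi-racial", "White", "Economically Disadvantaged",
    "Limited English Proficient", "Students with Disabilities",
]


def subgroups_below_target(
    results: Dict[str, Tuple[int, float]],
    target: float,
    min_n: int = 40,  # ASSUMPTION: illustrative minimum subgroup size
) -> List[str]:
    """List the subgroups that count toward AYP but fall below the target.

    results maps subgroup name -> (students tested, percent proficient).
    Subgroups missing from results or with fewer than min_n students
    are skipped, so they neither pass nor fail.
    """
    below = []
    for group in NC_SUBGROUPS:
        if group not in results:
            continue
        n_tested, pct = results[group]
        if n_tested >= min_n and pct < target:
            below.append(group)
    return below


# Hypothetical school, checked against the 2005-2006 Reading 3-8 target (76.7%).
school = {
    "School as a whole": (500, 80.0),
    "Black": (120, 72.5),
    "Hispanic": (25, 60.0),  # too few students to count under min_n=40
    "Economically Disadvantaged": (210, 77.0),
}
missing = subgroups_below_target(school, target=76.7)
print(missing)                          # ['Black']
print("Makes AYP:", len(missing) == 0)  # Makes AYP: False
```

In this invented example the school as a whole is above target, yet it would not make AYP because one sufficiently large subgroup falls short.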

Program Evaluation & NCLB • All program evaluation (until further notice) must include proficiency achievement, school safety, and teacher quality goals and objectives. • Regardless of the type of program evaluation conducted, NCLB requires program evaluators to include these standards and expectations.

Definitions & Concepts Program evaluation has a specific language relative to terms, vocabulary, concepts, standards, and strategies. It is very important to have adequate content knowledge before creating, implementing, and reporting program evaluations.

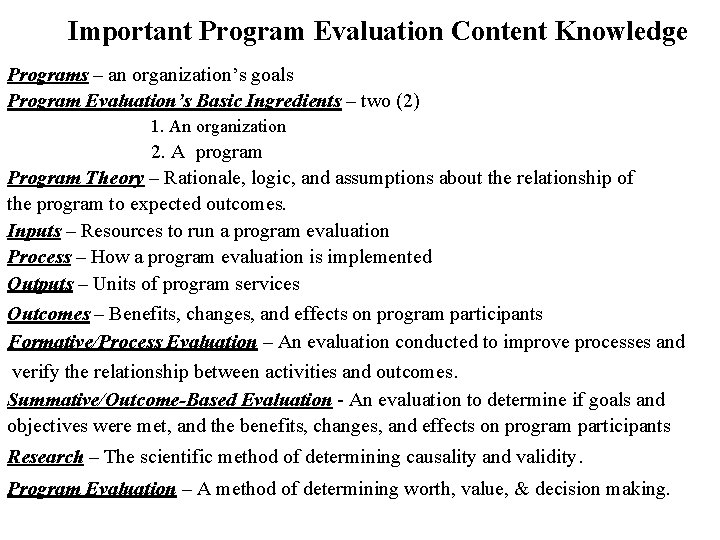

Important Program Evaluation Content Knowledge
• Programs – an organization’s goals
• Program Evaluation’s Basic Ingredients – two (2): 1. An organization 2. A program
• Program Theory – Rationale, logic, and assumptions about the relationship of the program to expected outcomes
• Inputs – Resources needed to run a program
• Process – How a program is implemented
• Outputs – Units of program services
• Outcomes – Benefits, changes, and effects on program participants
• Formative/Process Evaluation – An evaluation conducted to improve processes and verify the relationship between activities and outcomes
• Summative/Outcome-Based Evaluation – An evaluation to determine whether goals and objectives were met, and what benefits, changes, and effects occurred for program participants
• Research – The scientific method of determining causality and validity
• Program Evaluation – A method of determining worth and value to inform decision making

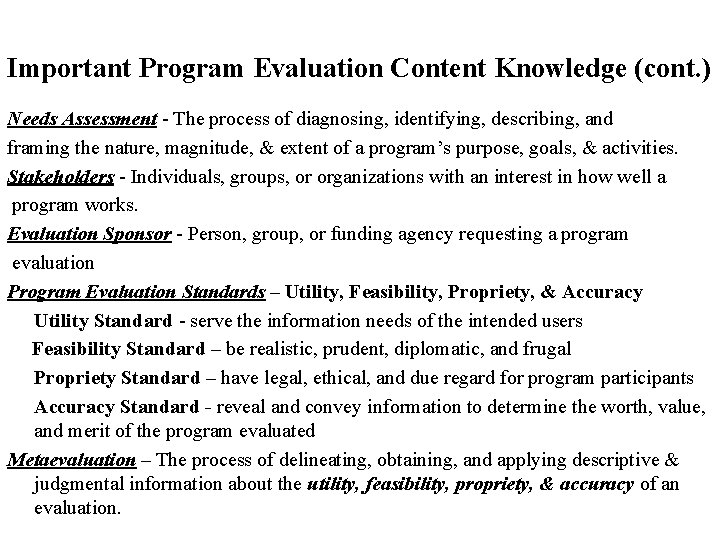

Important Program Evaluation Content Knowledge (cont.)
• Needs Assessment – The process of diagnosing, identifying, describing, and framing the nature, magnitude, & extent of a program’s purpose, goals, & activities
• Stakeholders – Individuals, groups, or organizations with an interest in how well a program works
• Evaluation Sponsor – Person, group, or funding agency requesting a program evaluation
• Program Evaluation Standards – Utility, Feasibility, Propriety, & Accuracy
• Utility Standard – serve the information needs of the intended users
• Feasibility Standard – be realistic, prudent, diplomatic, and frugal
• Propriety Standard – have legal, ethical, and due regard for program participants
• Accuracy Standard – reveal and convey information to determine the worth, value, and merit of the program evaluated
• Metaevaluation – The process of delineating, obtaining, and applying descriptive & judgmental information about the utility, feasibility, propriety, & accuracy of an evaluation

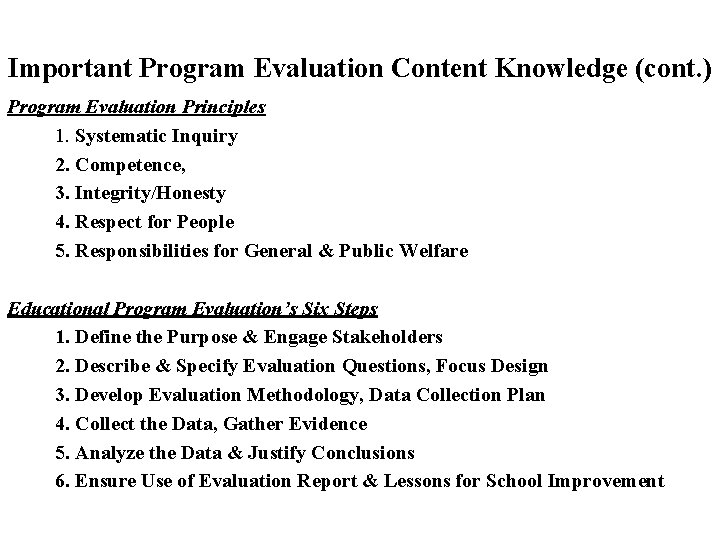

Important Program Evaluation Content Knowledge (cont.)
Program Evaluation Principles
1. Systematic Inquiry
2. Competence
3. Integrity/Honesty
4. Respect for People
5. Responsibilities for General & Public Welfare
Educational Program Evaluation’s Six Steps
1. Define the Purpose & Engage Stakeholders
2. Describe Program, Specify Evaluation Questions, Focus Design
3. Develop Evaluation Methodology, Data Collection Plan
4. Collect the Data, Gather Evidence
5. Analyze the Data & Justify Conclusions
6. Ensure Use of Evaluation Report & Lessons for School Improvement

Program Evaluation Six Steps 1. Defining Purpose & Engaging Stakeholders 2. Describe Program, Specify Evaluation Questions, Focus Design 3. Develop Evaluation Methodology, Data Collection Plan 4. Collect the Data, Gather Evidence 5. Analyze the Data & Justify Conclusions 6. Ensure Use of Evaluation Report & Lessons for School Improvement

1. Defining Purpose & Engaging Stakeholders • Stakeholders must be engaged in the inquiry to ensure that their perspectives are understood. • When stakeholders are not engaged, evaluation findings might be ignored, criticized, or resisted because they do not address the stakeholders' questions or values. • Stakeholders must have a voice in determining purpose. • After becoming involved, stakeholders help to execute the other steps.

2. Describe Program, Specify Evaluation Questions, & Focus Design • Needs Statement - describes the problem or opportunity that the program addresses and implies how the program will respond. • Program descriptions convey the mission and objectives of the program being evaluated. • Descriptions should be sufficiently detailed to ensure understanding of program goals and strategies. • Purpose, inputs, processes, outputs, & outcomes • Having a clear design that is focused on use helps the persons who will conduct the evaluation know precisely who will do what with the findings and who will benefit from being a part of the program evaluation.

3. Develop Evaluation Methodology & Data Collection Plan • Principals should strive to develop evaluation methods that will convey a well-rounded picture of the program and be seen as credible by the school’s stakeholders. • Typically, qualitative assessments, along with academic quantitative measurements, are the preferred evaluation methodology. • Information & evidence should be perceived by stakeholders as believable and relevant to increasing student achievement and overall school improvement. • The data collection plan should align with the SMART Goals identified in the program’s purpose and questions to be answered.

4. Collect Data and Gather Evidence
1. Sources: Sources of evidence in a program evaluation include persons, documents, observations, and test scores.
2. Quality: Quality refers to the appropriateness and integrity of information used in an evaluation.
3. Quantity: Quantity refers to the amount of evidence gathered in an evaluation. The amount of information required should be estimated in advance, or, where evolving processes are used, criteria should be set for deciding when to stop collecting data.
4. Logistics: Logistics encompass the methods, timing, and physical infrastructure for gathering and handling evidence.
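One common way to set a stopping criterion for qualitative evidence such as interviews is "saturation": stop when new interviews no longer surface new themes. The sketch below assumes each interview has already been coded into a set of theme labels and treats three consecutive interviews with no new themes as saturation; the `window` value and the function name `interviews_until_saturation` are hypothetical choices an evaluation team would fix in advance.

```python
from typing import Iterable, Optional, Set


def interviews_until_saturation(coded_interviews: Iterable[Set[str]],
                                window: int = 3) -> Optional[int]:
    """Return the interview count at which data collection could stop.

    Stops once `window` consecutive interviews add no theme that has not
    already been seen. Returns None if that never happens, meaning more
    data may be needed.
    """
    seen: Set[str] = set()
    streak = 0  # consecutive interviews that added no new themes
    count = 0
    for themes in coded_interviews:
        count += 1
        new_themes = themes - seen
        seen |= themes
        streak = 0 if new_themes else streak + 1
        if streak >= window:
            return count
    return None


# Hypothetical coded interviews from an out-of-school tutoring program.
interviews = [
    {"scheduling", "tutor quality"},
    {"transportation", "scheduling"},
    {"tutor quality"},
    {"transportation"},
    {"scheduling"},
    {"snacks"},
]
print(interviews_until_saturation(interviews))  # 5
```

Interviews 3 through 5 add nothing new, so collection could stop after the fifth; the sixth interview's new theme would never be heard, which is why the window size is a judgment call worth documenting in the data collection plan.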

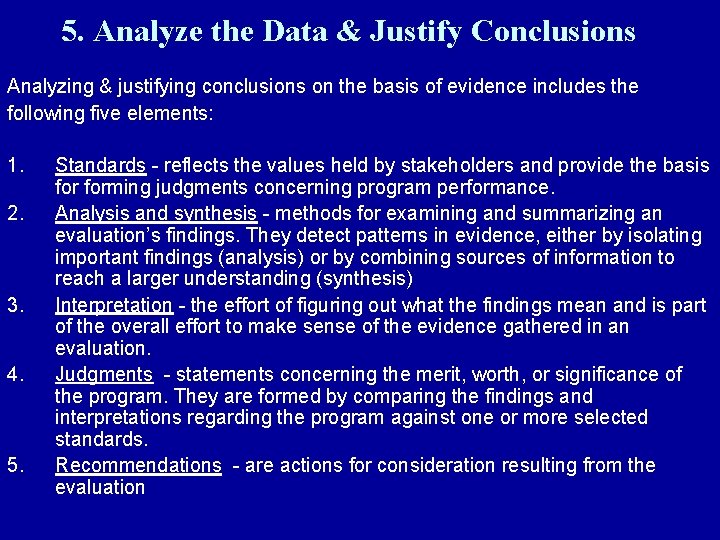

5. Analyze the Data & Justify Conclusions
Analyzing data and justifying conclusions on the basis of evidence involves the following five elements:
1. Standards – reflect the values held by stakeholders and provide the basis for forming judgments concerning program performance.
2. Analysis and synthesis – methods for examining and summarizing an evaluation’s findings. They detect patterns in evidence, either by isolating important findings (analysis) or by combining sources of information to reach a larger understanding (synthesis).
3. Interpretation – the effort of figuring out what the findings mean; it is part of the overall effort to make sense of the evidence gathered in an evaluation.
4. Judgments – statements concerning the merit, worth, or significance of the program. They are formed by comparing the findings and interpretations regarding the program against one or more selected standards.
5. Recommendations – actions for consideration resulting from the evaluation.

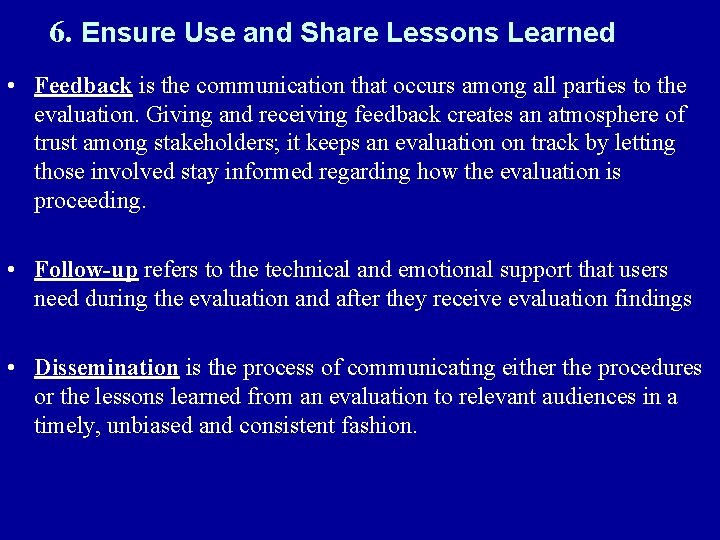

6. Ensure Use and Share Lessons Learned • Feedback is the communication that occurs among all parties to the evaluation. Giving and receiving feedback creates an atmosphere of trust among stakeholders; it keeps an evaluation on track by letting those involved stay informed regarding how the evaluation is proceeding. • Follow-up refers to the technical and emotional support that users need during the evaluation and after they receive evaluation findings • Dissemination is the process of communicating either the procedures or the lessons learned from an evaluation to relevant audiences in a timely, unbiased and consistent fashion.

Program Evaluation Six Steps 1. Defining Purpose & Engaging Stakeholders 2. Describe Program, Specify Evaluation Questions, Focus Design 3. Develop Evaluation Methodology, Data Collection Plan 4. Collect the Data, Gather Evidence 5. Analyze the Data & Justify Conclusions 6. Ensure Use of Report & Lessons for School Improvement

Types of Program Evaluation 1. Formative Evaluation - provides information to improve a program or process. 2. Summative Evaluation - provides short-term effectiveness or long-term impact information to decide whether or not to adopt a product or process.

Formative evaluation implies that the results will be used in the formation and revision process of an educational effort. More narrative, qualitative methods are especially useful in formative assessments. Formative assessments are typically used in the improvement of educational programs.

Formative Program Evaluation Examples • Formative evaluation of new instructional materials would ideally be conducted with experts and selected target audience members prior to full-scale implementation. • Expert review of content by instructional designers or subject-matter experts could provide useful information for modifying or revising selected strategies. • Learner review is a process of determining if students can use the new materials, if they lack prerequisites, if they are motivated, and if they learn.

Formative/Process Evaluation Formative evaluations are conducted during program implementation in order to provide information that will strengthen or improve the program being studied. Example – An out-of-school tutoring program or initiative. Formative evaluation findings typically point to aspects of program implementation that can be improved for better results, like how services are provided, how staff are trained, or how leadership and staff decisions are made.

Summative/Outcome-Based Evaluation At the opposite pole, summative evaluation is for the purpose of documenting and disseminating outcomes. It is used for reporting to stakeholders and granting agencies, producing self-reports for accreditation, and certifying the achievement of students. Because of its documentary nature and the fact that its purpose is to judge value, summative assessment is frequently more quantitative than formative assessment. (However, this is not a hard and fast rule.)

Summative/Outcome-Based Program Evaluation Examples • It is important to specify what decisions will be made as a result of the evaluation, then develop a list of questions to be answered by the evaluation. • You may want to know whether students met your objectives, whether the innovation was cost-effective, whether it was efficient in terms of time to completion, or whether it had any unexpected outcomes.
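For the cost-effectiveness question, one simple summative measure is cost per successful outcome (a cost-effectiveness ratio). The sketch below assumes "success" has already been defined, for example a student reaching proficiency; the function name `cost_per_outcome` and the dollar figures are hypothetical.

```python
def cost_per_outcome(total_cost: float, successful_outcomes: int) -> float:
    """Cost-effectiveness ratio: dollars spent per successful outcome.

    Lower is better when comparing programs aimed at the same outcome.
    """
    if successful_outcomes <= 0:
        raise ValueError("need at least one successful outcome to compute a ratio")
    return total_cost / successful_outcomes


# Hypothetical comparison of two reading interventions.
program_a = cost_per_outcome(30_000, 60)  # 60 students reached proficiency
program_b = cost_per_outcome(45_000, 75)  # 75 students reached proficiency
print(program_a, program_b)  # 500.0 600.0
```

Here Program B produces more successes overall, but Program A costs less per student reaching proficiency, which is the kind of trade-off a summative evaluation should surface for decision makers.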

Formative/Process vs. Summative/Outcome-Based Program Evaluation Formative/Process Program Evaluation examines and assesses the implementation and effectiveness of specific instructional activities in order to make adjustments or changes in those activities. Process program evaluations are formative assessments, although summative assessments can also be included in process evaluations. Summative/Outcome-Based Program Evaluation determines what benefits (if any) there were for participants and any changes that occurred with the participants after program implementation. Summative/Outcome-based program evaluation is a summary assessment of the worth, value, merit, and benefits of a program to its participants.

Formative/Process Evaluation in Schools The focus of formative/process evaluation could be a description and assessment of the curriculum, teaching methods used, staff experience and performance, in-service training, and adequacy of equipment and facilities. The changes made as a result of process evaluation may involve immediate small adjustments (e.g., a change in how one particular curriculum unit is presented), minor changes in design (e.g., a change in how aides are assigned to classrooms), or major design changes (e.g., dropping the use of ability grouping in classrooms).

Formative/Process Evaluation Theory • Formative/Process evaluation occurs on a continuous basis. • At an informal level, whenever a teacher talks to another teacher or an administrator, they may be discussing adjustments to the curriculum or teaching methods. • More formally, Formative/Process evaluation refers to a set of activities in which administrators and/or evaluators observe classroom activities and interact with teaching staff and/or students in order to define and communicate more effective ways of addressing curriculum goals. • Formative/Process evaluation can be distinguished from outcome-based evaluation on the basis of the primary evaluation emphasis. • Process evaluation is focused on a continuing series of decisions concerning program improvements, while outcome evaluation is focused on the effects of a program on its intended target audience (i.e., the students).

Formative/Process Program Evaluations • Formative/Process Program Evaluations focus on understanding how the program really works, and its strengths and weaknesses. • Formative/Process Program Evaluations attempt to fully understand how a program works: how it produces the results that it does. • These evaluations are useful if programs are longstanding or have changed over the years, if students and/or teachers report a large number of complaints about the program, or if there appear to be large inefficiencies in delivering program services.

Formative/Process Evaluation Questions What is required of students and teachers in this program? What is the general process that students and teachers go through with the program or service? What do students and teachers consider to be strengths of the instructional program or service? What complaints are heard from students & teachers about the program or services? What do students & teachers recommend to improve the program or services?

Summative/Outcome-Based Evaluation Also called “Impact Assessment” A Summative/Outcomes-Based evaluation seeks to determine if your organization is really doing the right program activities to bring about the outcomes you believe (or better yet, you've verified) to be needed by students, rather than just engaging in busy activities which seem reasonable to do at the time.

Summative Outcome-Based Program Evaluation Outcomes are benefits to students from participation in the program or support services. Outcomes are usually expressed in terms of enhanced learning (knowledge, perceptions/attitudes, or skills) or conditions, e.g., increased literacy. Outcomes are often confused with program outputs or units of service, e.g., the number of students who went through a program.
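The difference between outputs and outcomes shows up clearly when both are computed from the same records. This minimal sketch assumes each participant has a pre-program and a post-program score: the output is simply how many students were served, while the outcome is the share who actually improved. The function names, the `min_gain` parameter, and the scores are hypothetical.

```python
from typing import List, Tuple

# Each record is (pre-program score, post-program score) for one student.
Record = Tuple[float, float]


def output_count(records: List[Record]) -> int:
    """Output: a unit of service, here simply the number of students served."""
    return len(records)


def outcome_rate(records: List[Record], min_gain: float = 0.0) -> float:
    """Outcome: share of served students whose score rose by more than min_gain."""
    if not records:
        return 0.0
    improved = sum(1 for pre, post in records if post - pre > min_gain)
    return improved / len(records)


# Hypothetical scores for four tutored students.
scores = [(60, 72), (55, 54), (70, 81), (65, 65)]
print(output_count(scores))  # 4 students served (output)
print(outcome_rate(scores))  # 0.5, half of them improved (outcome)
```

A report that stopped at "4 students served" would describe activity, not benefit; the outcome figure is what tells stakeholders whether the program changed anything for participants.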

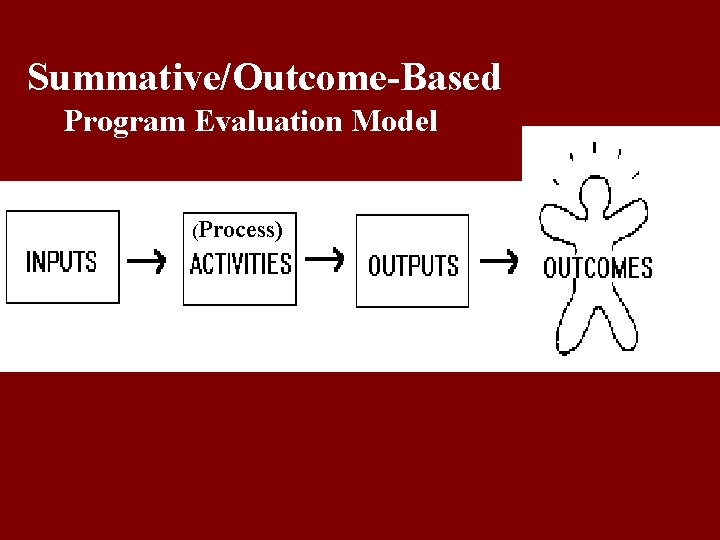

Summative/Outcome-Based Evaluation Model Design Components • Inputs include the resources dedicated to or consumed by the program. • Process/Activities are what the program does with the inputs to fulfill its mission. • Outputs are the direct products of program activities • Outcomes – benefits, changes, and decisions produced by program or services

Inputs – Resources • Examples are money, staff and staff time, volunteers and volunteer time, facilities, equipment, and supplies. For instance, inputs for a parent education class include the hours of staff time spent designing and delivering the program. Inputs also include constraints on the program, such as laws, regulations, and requirements for receipt of funding.

Process/Activities How the program is run. How the strategies, techniques, and types of treatment that comprise the program's service methodology are implemented. Examples would be training students in study skills and counseling them to prepare for and find jobs.

Outputs – Units of Program Service Measured in terms of the volume of work accomplished - for example, the numbers of classes taught, counseling sessions conducted, educational materials distributed, and participants served. Outputs have little inherent value in themselves. They are important because they are intended to lead to a desired benefit for participants or target populations.

Outcomes – Impact on Students Outcomes are benefits or changes for individuals or populations during or after participating in program activities. Outcomes may relate to behavior, skills, knowledge, attitudes, values, condition, or other attributes. Outcomes are what students know, think, or can do; or how they behave; or what their condition is or how they are different following the program.

Summative/Outcome-Based Program Evaluation Model Summative/Outcome-Based Model of Evaluation (Process)

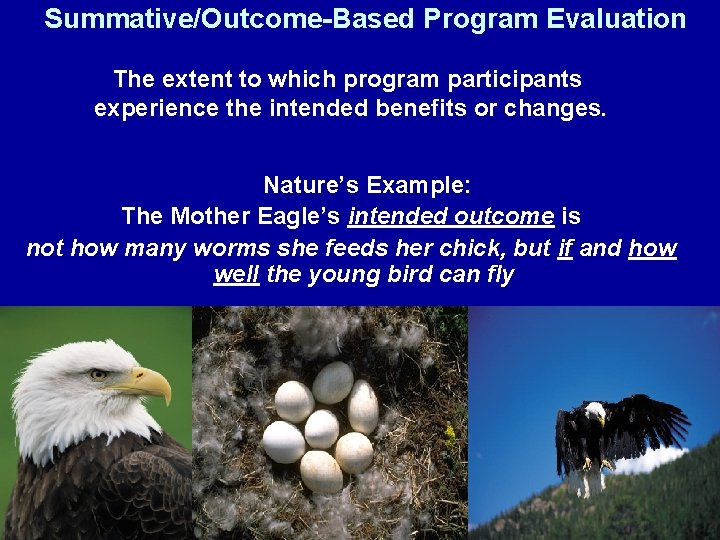

Summative/Outcome-Based Program Evaluation The extent to which program participants experience the intended benefits or changes. Nature’s Example: The mother eagle’s intended outcome is not how many worms she feeds her chick, but whether and how well the young bird can fly.

Definition of Program Evaluation Program evaluation is carefully collecting information about a program or some aspect of a program in order to make necessary decisions about the program. Program evaluation can include any one or a combination of at least 35 different types of evaluation, such as needs assessments, accreditation, cost/benefit analysis, effectiveness, efficiency, formative, summative, goal-based, process, outcomes, etc. We will be concerned primarily with Formative/Process & Summative/Outcome-Based Program Evaluation.

What is Program Design? Inputs, Process, Outputs, and Outcomes Inputs are the various resources needed to run the program, e.g., money, facilities, and program staff. The Process is how the program is carried out, e.g., students are counseled, supported, cared for, and taught (activities). The Outputs are the units of service, e.g., the number of children to be served or the number of teachers to receive staff development. Outcomes are the impacts on the people receiving services, e.g., improved mental health or safe and secure development.

Developing a Program Design
• Conducting a Needs Assessment
• Determining who is to be served
• Determining the purpose and the goals
• Defining inputs for implementation
• Creating implementation processes
• Identifying program outputs
• Determining anticipated & desired outcomes
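The program design steps can be captured as a simple checklist before a program is launched. This Python sketch uses a hypothetical `ProgramDesign` dataclass whose fields mirror the steps in the list; its `missing_components` method flags any step left blank. The out-of-school tutoring example is invented for illustration.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class ProgramDesign:
    """Hypothetical checklist mirroring the program design steps."""
    needs_assessment: str
    who_is_served: str
    purpose_and_goals: List[str]
    inputs: List[str]
    processes: List[str]
    outputs: List[str]
    outcomes: List[str]

    def missing_components(self) -> List[str]:
        """Names of components left empty (blank string or empty list)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


tutoring = ProgramDesign(
    needs_assessment="Many 3rd graders are reading below grade level",
    who_is_served="3rd-grade students reading below grade level",
    purpose_and_goals=["Raise reading proficiency"],
    inputs=["Two tutors", "Library space after school"],
    processes=["Small-group tutoring twice a week"],
    outputs=[],  # not yet specified
    outcomes=["More students scoring proficient in reading"],
)
print(tutoring.missing_components())  # ['outputs']
```

Keeping outputs and outcomes as separate fields makes it harder to let a count of services stand in for evidence of benefit.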

Major Types of Program Evaluation Models • Formative/Process-Based • Summative/Outcomes-Based

When conducting program evaluations, we must always remember these words of wisdom, often attributed to Albert Einstein (though they appear to originate with sociologist William Bruce Cameron): “Not everything that can be counted counts…and not everything that counts can be counted.”
