Econometric Problems: Detection, Fixes, and Hands-On Problems

Econometric Problems • Econometric problems: detection and fixes • Hands-on problems

Regression Diagnostics • The required conditions for the model assessment to apply must be checked. – Is the error variance constant? – Are the errors independent? – Is the error variable normally distributed? – Is multicollinearity a problem?

Econometric Problems – Heteroskedasticity (standard errors not reliable) – Non-normal distribution of error term (t- and F-stats not reliable) – Autocorrelation or serial correlation (standard errors not reliable) – Multicollinearity (t-stats may be biased downward)

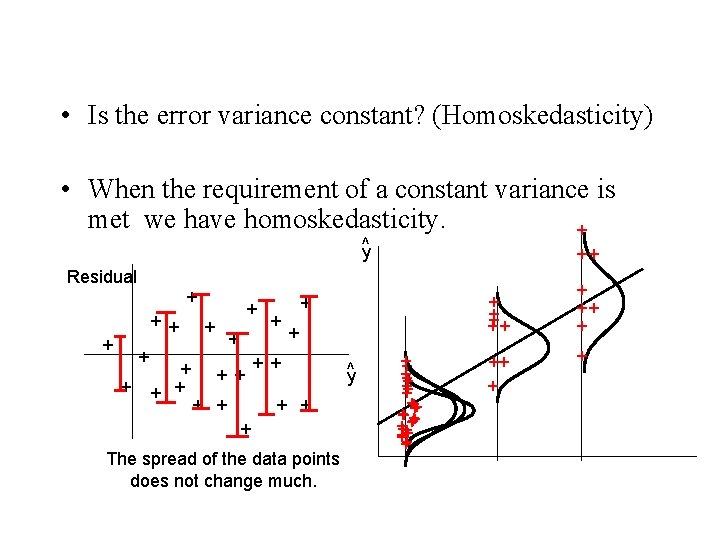

• Is the error variance constant? (Homoskedasticity) • When the requirement of a constant variance is met we have homoskedasticity. [Plot: residuals vs. predicted value ŷ. The spread of the data points does not change much.]

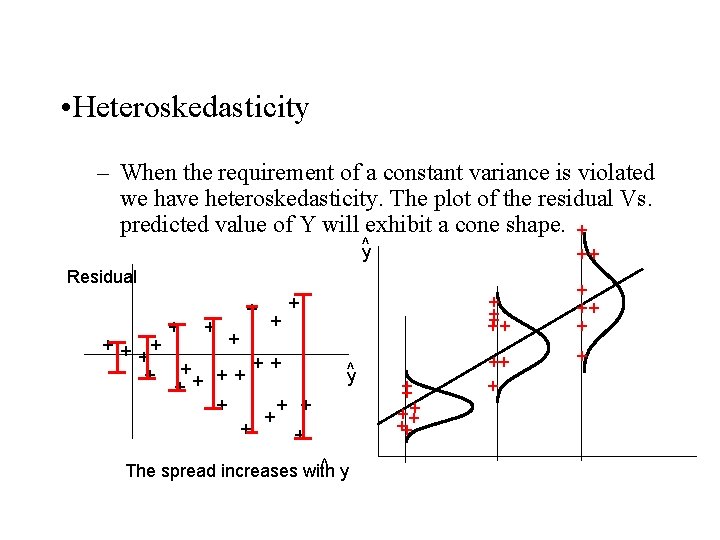

• Heteroskedasticity – When the requirement of a constant variance is violated we have heteroskedasticity. The plot of the residuals vs. the predicted value of y will exhibit a cone shape. [Plot: residuals vs. predicted value ŷ. The spread increases with ŷ.]

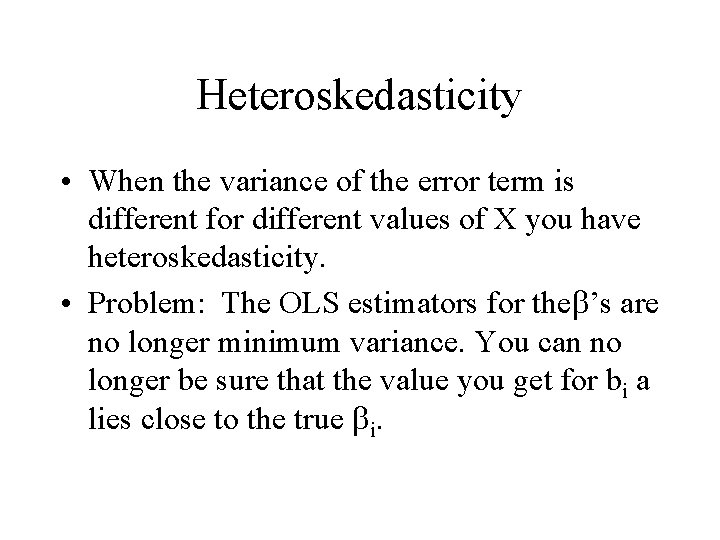

Heteroskedasticity • When the variance of the error term is different for different values of X you have heteroskedasticity. • Problem: The OLS estimators for the β's are no longer minimum variance. You can no longer be sure that the value you get for b_i lies close to the true β_i.

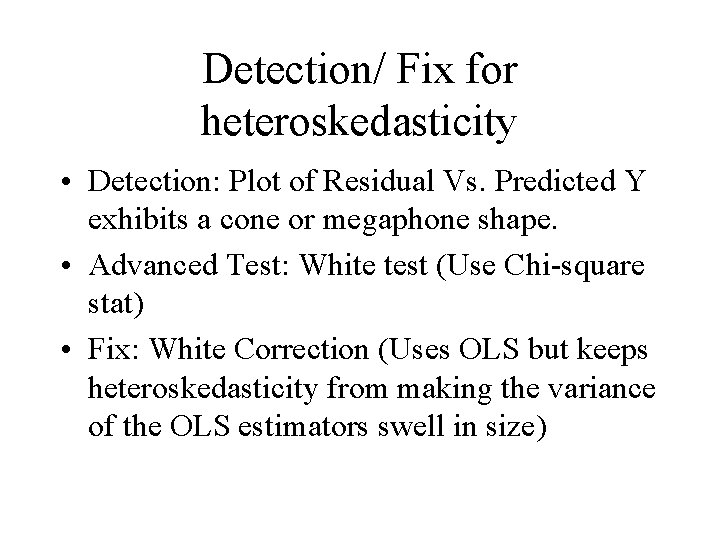

Detection/Fix for heteroskedasticity • Detection: Plot of residuals vs. predicted Y exhibits a cone or megaphone shape. • Advanced test: White test (uses a chi-square stat) • Fix: White correction (keeps the OLS coefficients but replaces their standard errors with ones that remain reliable under heteroskedasticity)
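To make the White correction concrete, here is a minimal sketch for a regression with a single X, assuming the simplest variant of the correction (often labeled HC0). The function names and the data are hypothetical and do not come from the slides. The coefficients are ordinary OLS; only the standard-error formula for the slope changes.

```python
def ols_simple(x, y):
    """Fit y = b0 + b1*x by OLS; return (b0, b1, residuals)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    resid = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    return b0, b1, resid


def slope_standard_errors(x, y):
    """Return (conventional SE, White HC0 SE) for the slope b1."""
    n = len(x)
    _, _, resid = ols_simple(x, y)
    x_bar = sum(x) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    # Conventional formula: assumes one common error variance s^2.
    s2 = sum(e ** 2 for e in resid) / (n - 2)
    se_conventional = (s2 / sxx) ** 0.5
    # White (HC0): each observation contributes its own squared residual.
    weighted = sum((xi - x_bar) ** 2 * e ** 2 for xi, e in zip(x, resid))
    se_white = (weighted / sxx ** 2) ** 0.5
    return se_conventional, se_white


# Hypothetical data whose scatter widens as x grows (megaphone shape).
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [2.1, 3.8, 6.5, 7.2, 11.0, 10.4, 15.9, 13.6]
se_conv, se_hc0 = slope_standard_errors(x, y)
print(f"conventional SE = {se_conv:.3f}, White SE = {se_hc0:.3f}")
```

In practice a statistics package would handle the general case with several X variables; the sketch only shows where the squared residuals enter the formula.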

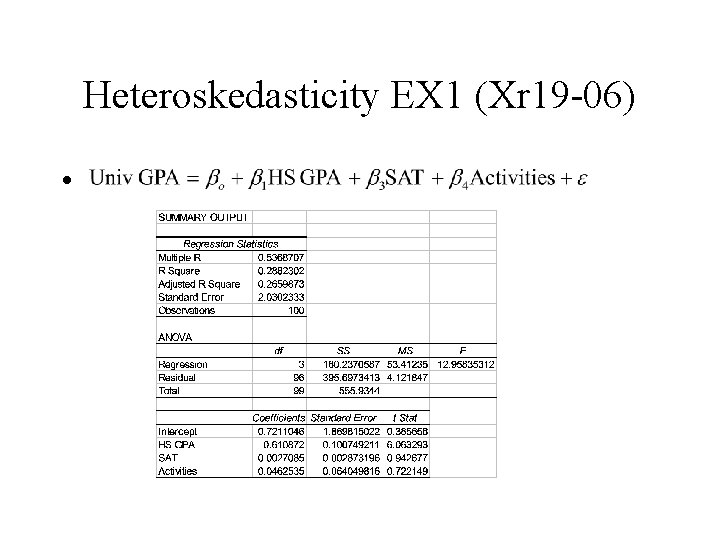

Heteroskedasticity EX 1 (Xr19-06)

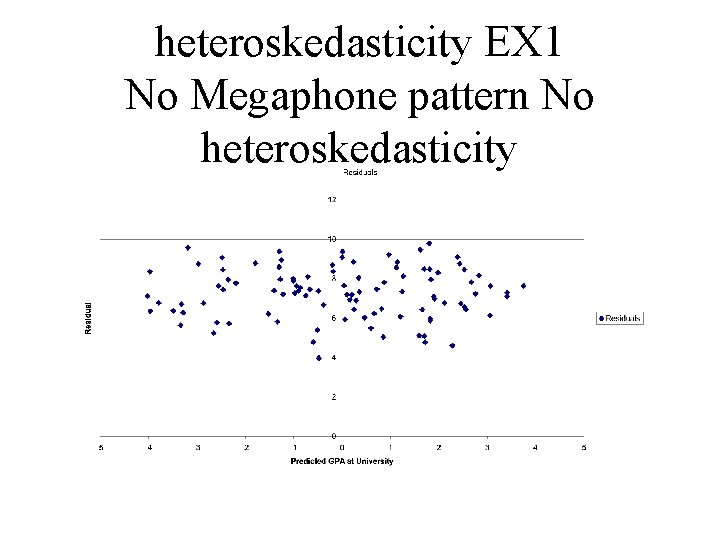

Heteroskedasticity EX 1 • No megaphone pattern => no heteroskedasticity

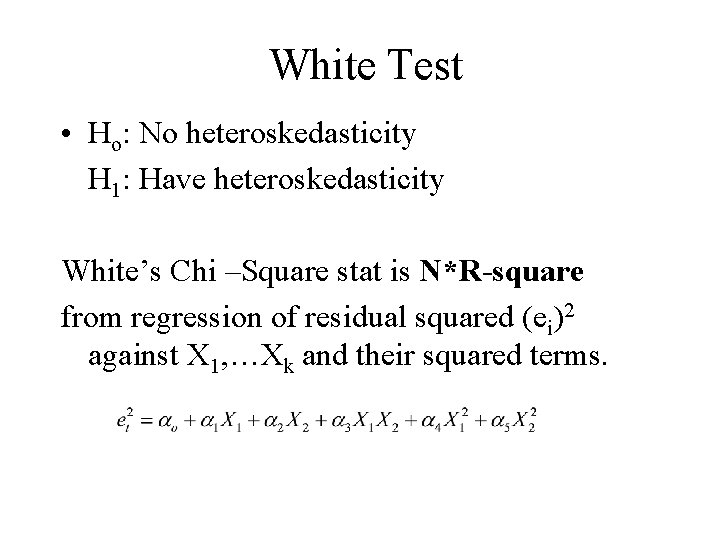

White Test • H0: No heteroskedasticity • H1: Have heteroskedasticity • White's chi-square stat is N*R-square from the regression of the squared residuals (e_i)^2 against X1, …, Xk and their squared terms.

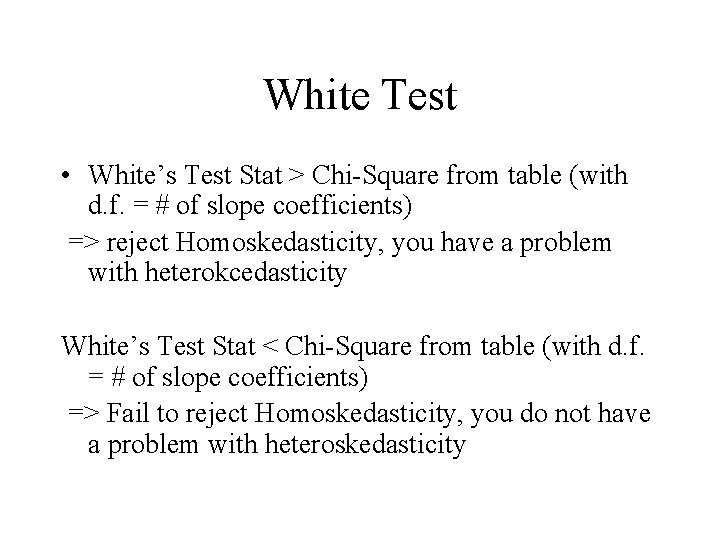

White Test • White's test stat > chi-square from table (with d.f. = # of slope coefficients in the auxiliary regression) => reject homoskedasticity; you have a problem with heteroskedasticity • White's test stat < chi-square from table (with d.f. = # of slope coefficients in the auxiliary regression) => fail to reject homoskedasticity; you do not have a problem with heteroskedasticity
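The White statistic can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the procedure used to produce the slide output: the auxiliary regression uses the X variables and their squares only (no cross-products), the helper names `solve`, `ols`, and `white_statistic` are hypothetical, and the data are made up. The critical value still has to be read from a chi-square table.

```python
def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def ols(rows, y):
    """OLS of y on the regressors in rows (intercept added automatically).

    Returns (coefficients, residuals, R-squared).
    """
    X = [[1.0] + list(r) for r in rows]
    k = len(X[0])
    xtx = [[sum(xi[i] * xi[j] for xi in X) for j in range(k)] for i in range(k)]
    xty = [sum(xi[i] * yi for xi, yi in zip(X, y)) for i in range(k)]
    beta = solve(xtx, xty)
    resid = [yi - sum(b * v for b, v in zip(beta, xi)) for xi, yi in zip(X, y)]
    y_bar = sum(y) / len(y)
    sst = sum((yi - y_bar) ** 2 for yi in y)
    sse = sum(e ** 2 for e in resid)
    return beta, resid, 1.0 - sse / sst


def white_statistic(rows, y):
    """Return (N * R-square, d.f.) from the auxiliary regression."""
    _, resid, _ = ols(rows, y)
    e2 = [e ** 2 for e in resid]
    # Auxiliary regressors: each X and its square.
    aux_rows = [list(r) + [v ** 2 for v in r] for r in rows]
    _, _, r2_aux = ols(aux_rows, e2)
    return len(y) * r2_aux, len(aux_rows[0])


# Hypothetical data: the noise magnitude grows with x.
x = [float(v) for v in range(1, 13)]
y = [2.0 * v + (0.3 * v if i % 2 == 0 else -0.3 * v) for i, v in enumerate(x)]
stat, df = white_statistic([[v] for v in x], y)
print(f"White statistic = {stat:.2f} with {df} d.f.")
```

Reject homoskedasticity when the printed statistic exceeds the tabulated chi-square value for the printed degrees of freedom.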

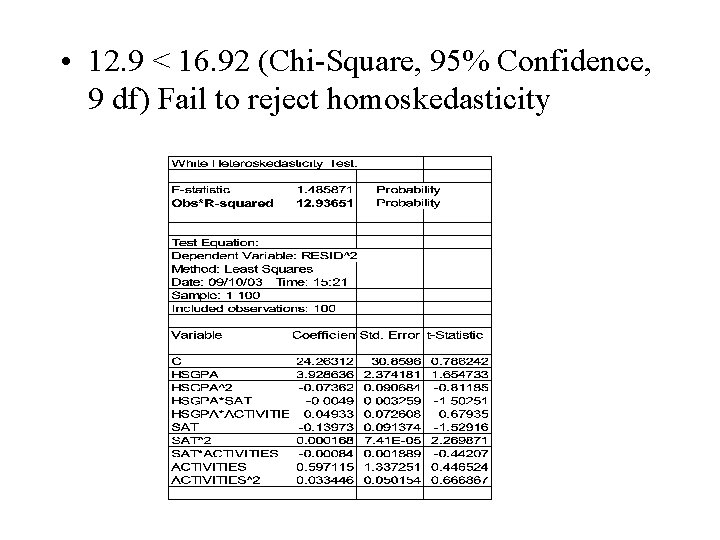

• 12.9 < 16.92 (chi-square, 95% confidence, 9 d.f.) => fail to reject homoskedasticity

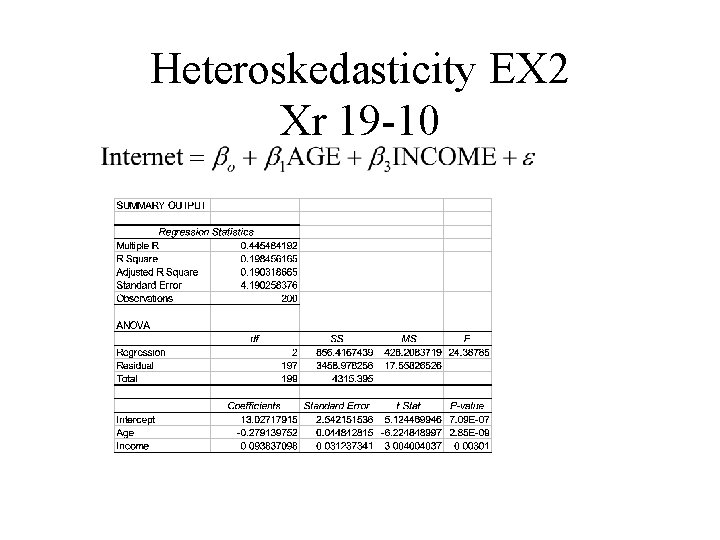

Heteroskedasticity EX 2 (Xr19-10)

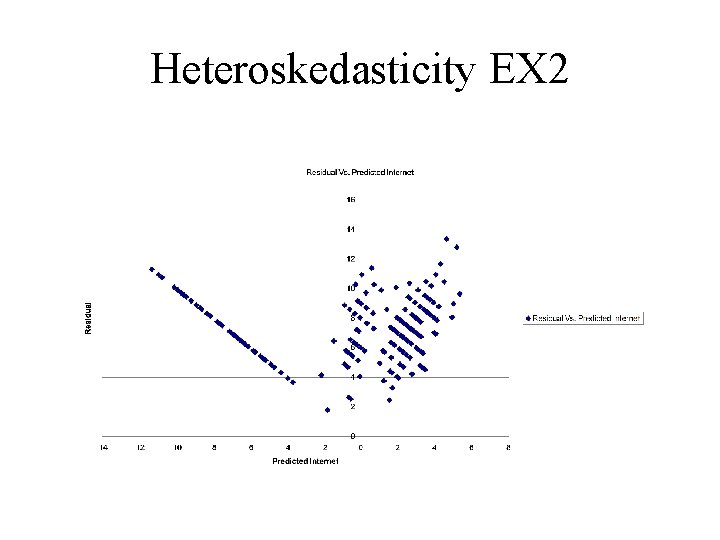

Heteroskedasticity EX 2

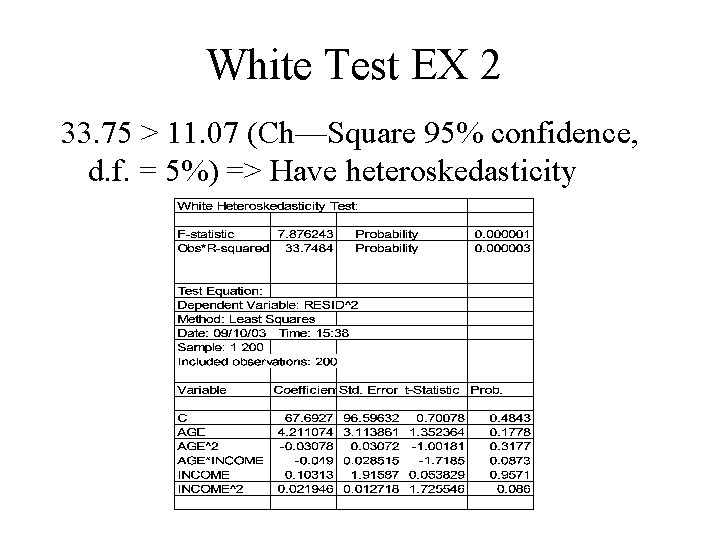

White Test EX 2 • 33.75 > 11.07 (chi-square, 95% confidence, d.f. = 5) => have heteroskedasticity

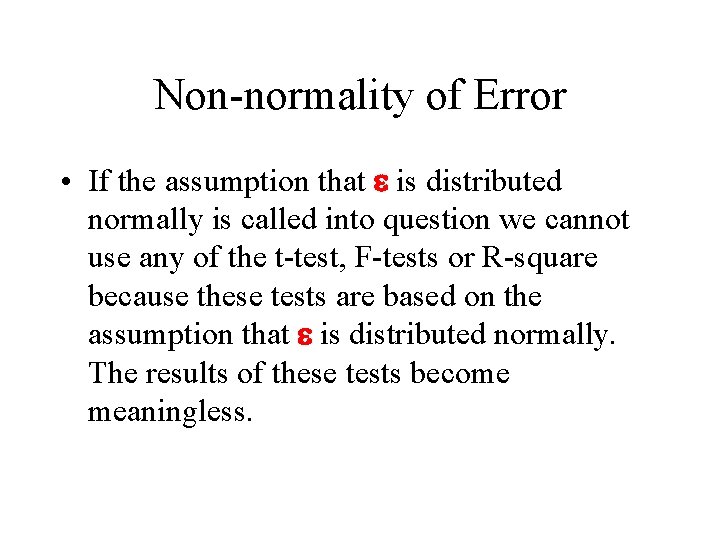

Non-normality of Error • If the assumption that e is normally distributed is called into question, we cannot rely on the t-tests, the F-tests, or inference based on R-square, because these tests are based on the assumption that e is normally distributed. In small samples their results become unreliable.

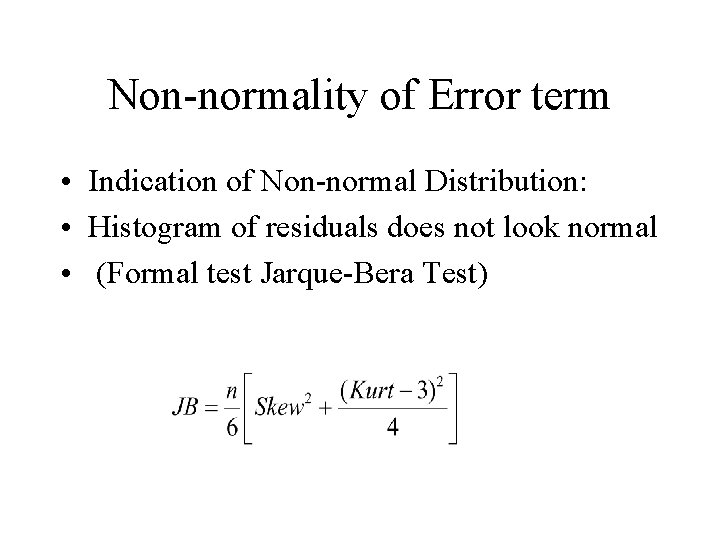

Non-normality of Error term • Indication of non-normal distribution: – Histogram of residuals does not look normal – Formal test: Jarque-Bera test

Non-normality of Error term • If the JB stat is smaller than the chi-square critical value with 2 degrees of freedom (5.99 at the 5% significance level, i.e. 95% confidence), then you can relax: you fail to reject that your error term follows a normal distribution. • If not, get more data or transform the dependent variable: log(y), y squared, square root of y, 1/y, etc.
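The slides do not show how the JB stat is computed. A minimal sketch using the standard textbook definition, JB = n/6 * (S^2 + (K - 3)^2 / 4), where S is the skewness and K the kurtosis of the residuals; the function name and the sample residuals are hypothetical.

```python
def jarque_bera(resid):
    """Jarque-Bera statistic from a list of regression residuals."""
    n = len(resid)
    mean = sum(resid) / n
    # Central moments with divisor n.
    m2 = sum((e - mean) ** 2 for e in resid) / n
    m3 = sum((e - mean) ** 3 for e in resid) / n
    m4 = sum((e - mean) ** 4 for e in resid) / n
    skewness = m3 / m2 ** 1.5
    kurtosis = m4 / m2 ** 2
    return n / 6.0 * (skewness ** 2 + (kurtosis - 3.0) ** 2 / 4.0)


CHI2_95_DF2 = 5.99  # critical value quoted on the slide

# Hypothetical residuals with one large outlier.
residuals = [0.0] * 19 + [10.0]
jb = jarque_bera(residuals)
verdict = "reject normality" if jb > CHI2_95_DF2 else "fail to reject normality"
print(f"JB = {jb:.2f}: {verdict}")
```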

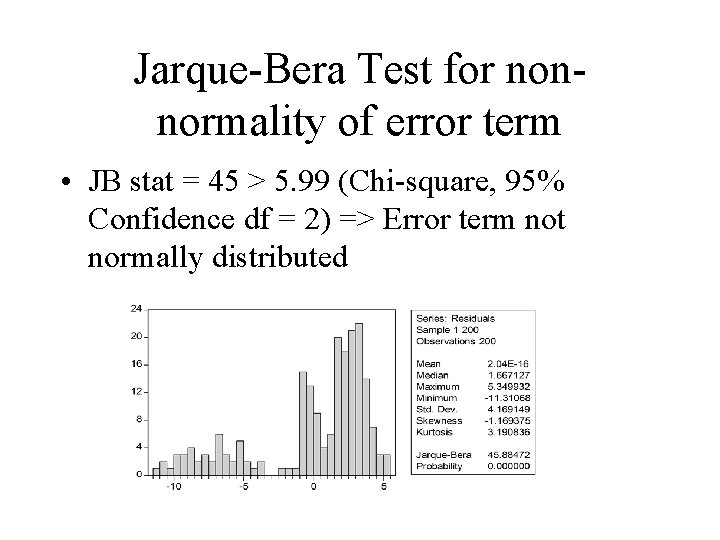

Jarque-Bera Test for non-normality of error term • JB stat = 45 > 5.99 (chi-square, 95% confidence, d.f. = 2) => error term not normally distributed

Additional Fixes for Normality • Bootstrapping – Ask your advisor if this approach is right for you • Appeal to large-sample (asymptotic) theory. If your sample is large enough, the t- and F-tests are approximately valid.

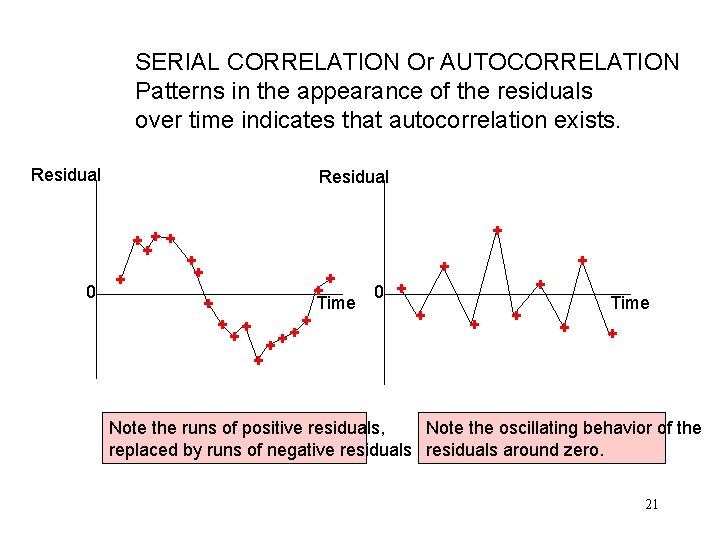

SERIAL CORRELATION or AUTOCORRELATION • Patterns in the appearance of the residuals over time indicate that autocorrelation exists. [Plots: residuals vs. time] – Note the runs of positive residuals, replaced by runs of negative residuals. – Note the oscillating behavior of the residuals around zero.

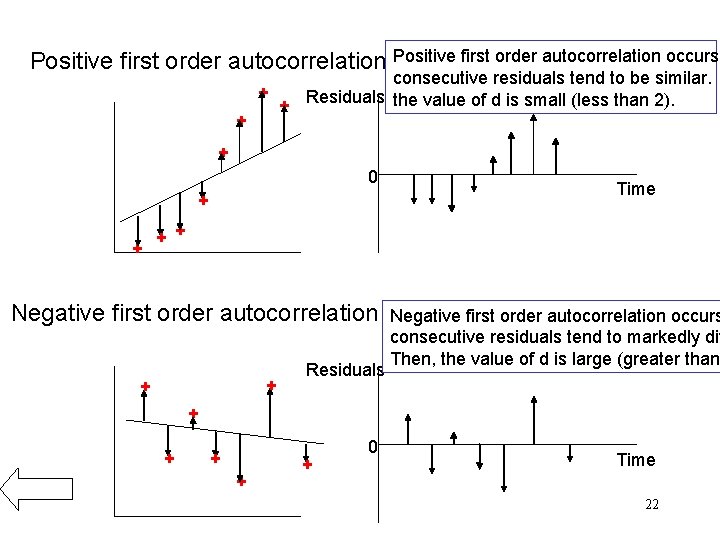

• Positive first-order autocorrelation occurs when consecutive residuals tend to be similar. Then, the value of d (the Durbin-Watson statistic) is small (less than 2). • Negative first-order autocorrelation occurs when consecutive residuals tend to differ markedly. Then, the value of d is large (greater than 2). [Plots: residuals vs. time for each case]

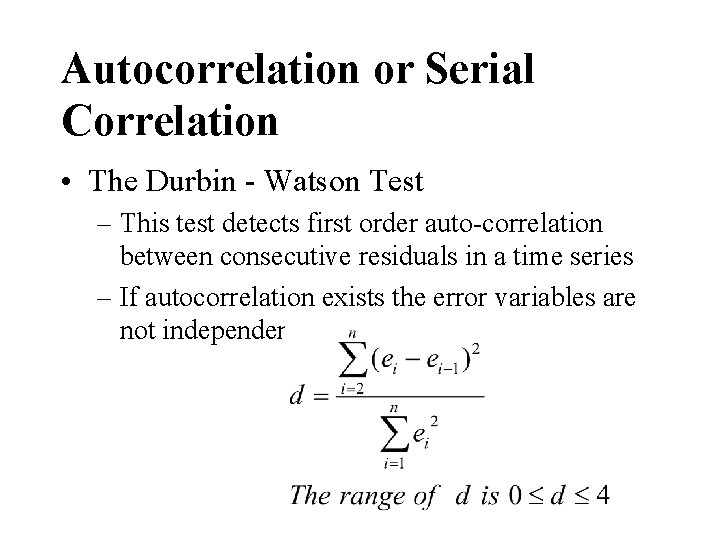

Autocorrelation or Serial Correlation • The Durbin - Watson Test – This test detects first order auto-correlation between consecutive residuals in a time series – If autocorrelation exists the error variables are not independent

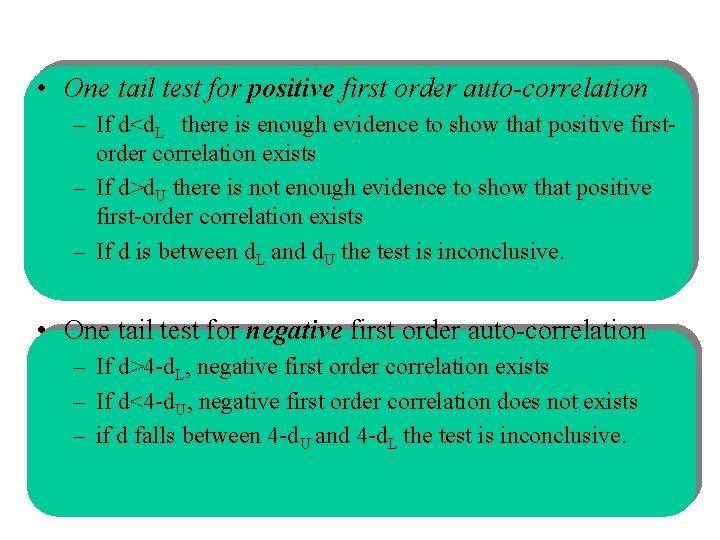

• One-tail test for positive first-order autocorrelation – If d < dL, there is enough evidence to show that positive first-order correlation exists – If d > dU, there is not enough evidence to show that positive first-order correlation exists – If d is between dL and dU, the test is inconclusive. • One-tail test for negative first-order autocorrelation – If d > 4 - dL, negative first-order correlation exists – If d < 4 - dU, negative first-order correlation does not exist – If d falls between 4 - dU and 4 - dL, the test is inconclusive.

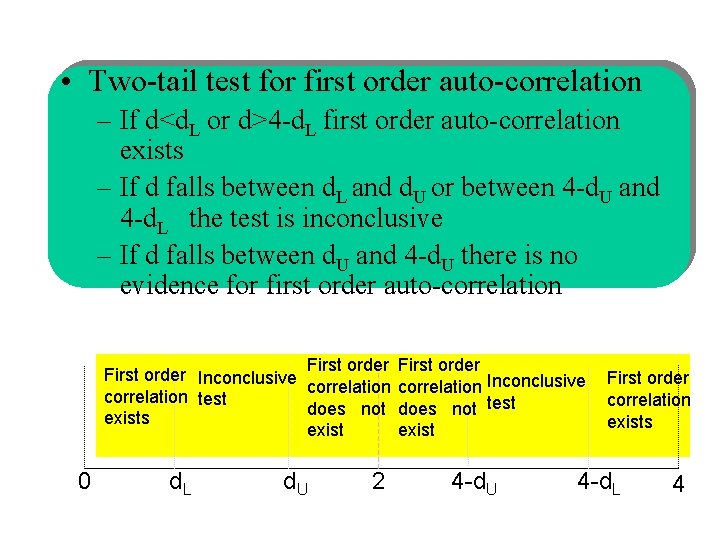

• Two-tail test for first-order autocorrelation – If d < dL or d > 4 - dL, first-order autocorrelation exists – If d falls between dL and dU or between 4 - dU and 4 - dL, the test is inconclusive – If d falls between dU and 4 - dU, there is no evidence for first-order autocorrelation. [Diagram: number line from 0 to 4 marked at dL, dU, 2, 4 - dU, and 4 - dL; regions from left to right: first-order correlation exists, test inconclusive, first-order correlation does not exist, test inconclusive, first-order correlation exists.]
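The two-tail decision regions can be written as a small function. This is a sketch with a hypothetical function name; the lower and upper bounds must still be looked up in a Durbin-Watson table for the given n and k. The values in the usage lines are the ones quoted in the ski-resort example in this deck (d = 0.59, lower bound 1.10, upper bound 1.54).

```python
def durbin_watson_decision(d, d_lower, d_upper):
    """Classify a Durbin-Watson statistic using the two-tail regions."""
    if d < d_lower:
        return "positive first-order autocorrelation"
    if d > 4 - d_lower:
        return "negative first-order autocorrelation"
    if d_upper < d < 4 - d_upper:
        return "no evidence of first-order autocorrelation"
    # Remaining cases lie between the lower and upper bounds on either side.
    return "inconclusive"


print(durbin_watson_decision(0.59, 1.10, 1.54))
print(durbin_watson_decision(2.00, 1.10, 1.54))
```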

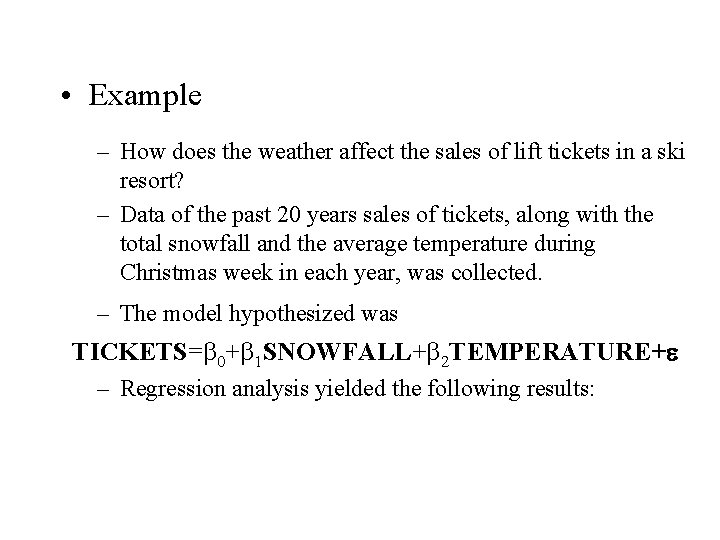

• Example – How does the weather affect the sales of lift tickets in a ski resort? – Data on ticket sales for the past 20 years, along with the total snowfall and the average temperature during Christmas week in each year, were collected. – The model hypothesized was TICKETS = β0 + β1 SNOWFALL + β2 TEMPERATURE + e – Regression analysis yielded the following results:

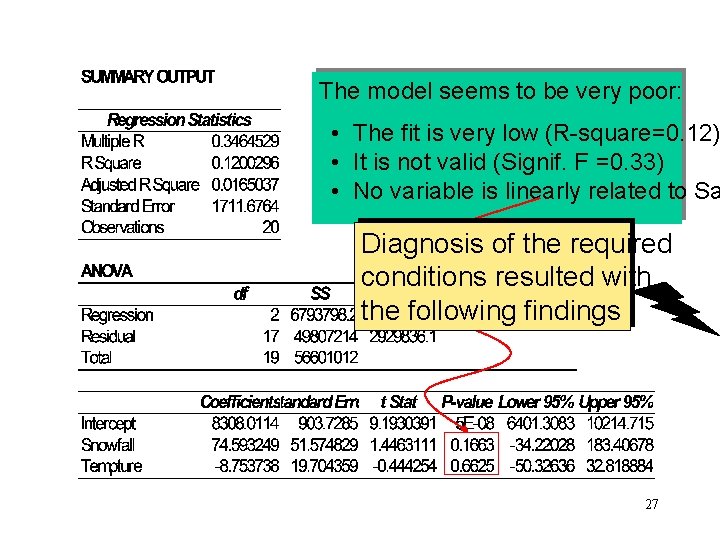

The model seems to be very poor: • The fit is very low (R-square = 0.12) • It is not valid (Signif. F = 0.33) • No variable is linearly related to sales. Diagnosis of the required conditions resulted in the following findings:

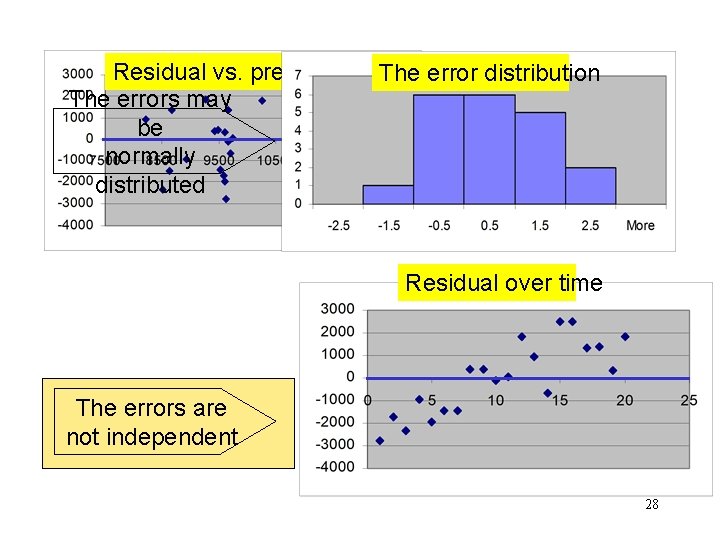

• Residual vs. predicted y: the error variance is constant. • The error distribution: the errors may be normally distributed. • Residual over time: the errors are not independent.

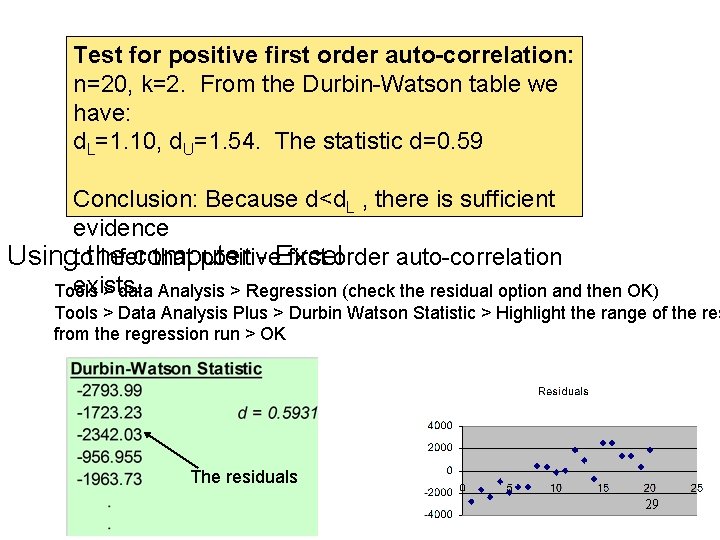

Test for positive first-order autocorrelation: n = 20, k = 2. From the Durbin-Watson table we have dL = 1.10, dU = 1.54. The statistic is d = 0.59. Conclusion: Because d < dL, there is sufficient evidence to infer that positive first-order autocorrelation exists. Using the computer (Excel): Tools > Data Analysis > Regression (check the residual option and then OK), then Tools > Data Analysis Plus > Durbin Watson Statistic > highlight the range of the residuals from the regression run > OK.

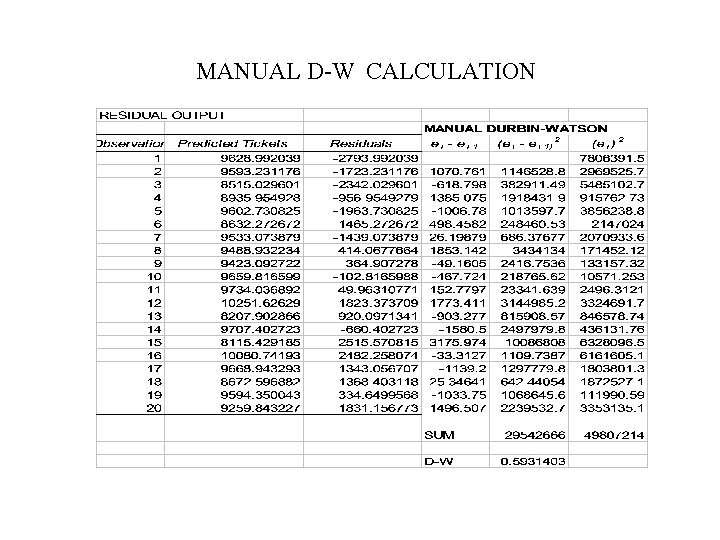

MANUAL D-W CALCULATION

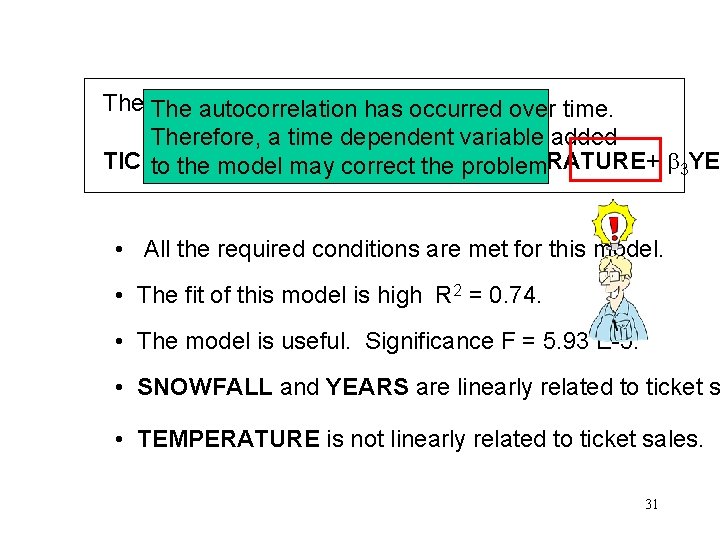
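For readers without the spreadsheet, the manual calculation can be sketched in a few lines, assuming the standard definition of the statistic: the sum of squared differences between consecutive residuals divided by the sum of squared residuals. The function name and the residuals below are hypothetical and are not the ski-resort data.

```python
def durbin_watson(resid):
    """Durbin-Watson d for residuals listed in time order."""
    numerator = sum((resid[t] - resid[t - 1]) ** 2 for t in range(1, len(resid)))
    denominator = sum(e ** 2 for e in resid)
    return numerator / denominator


# Hypothetical residuals: a run of positives followed by a run of negatives.
runs = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]
# Hypothetical residuals that flip sign every period.
oscillating = [1.0, -1.0, 1.0, -1.0]

print(durbin_watson(runs))         # below 2: points toward positive autocorrelation
print(durbin_watson(oscillating))  # above 2: points toward negative autocorrelation
```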

The autocorrelation has occurred over time. Therefore, a time-dependent variable added to the model may correct the problem. The modified regression model: TICKETS = β0 + β1 SNOWFALL + β2 TEMPERATURE + β3 YEARS + e • All the required conditions are met for this model. • The fit of this model is high: R-square = 0.74. • The model is useful: Significance F = 5.93E-5. • SNOWFALL and YEARS are linearly related to ticket sales. • TEMPERATURE is not linearly related to ticket sales.

Multicollinearity • When two or more X’s are correlated you have multicollinearity. • Symptoms of multicollinearity include insignificant t-stats (due to inflated standard errors of coefficients) and a good R-square. • Test: Run a correlation matrix of all X variables. • Fix: More data, combine variables.

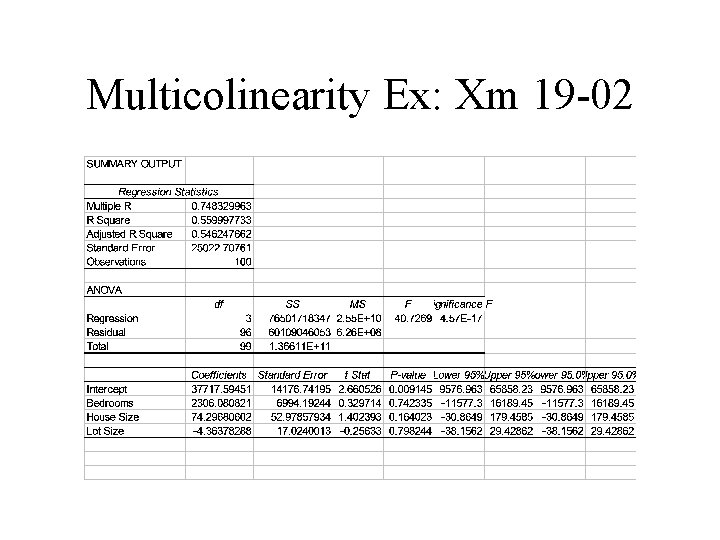

Multicollinearity Ex: Xm19-02

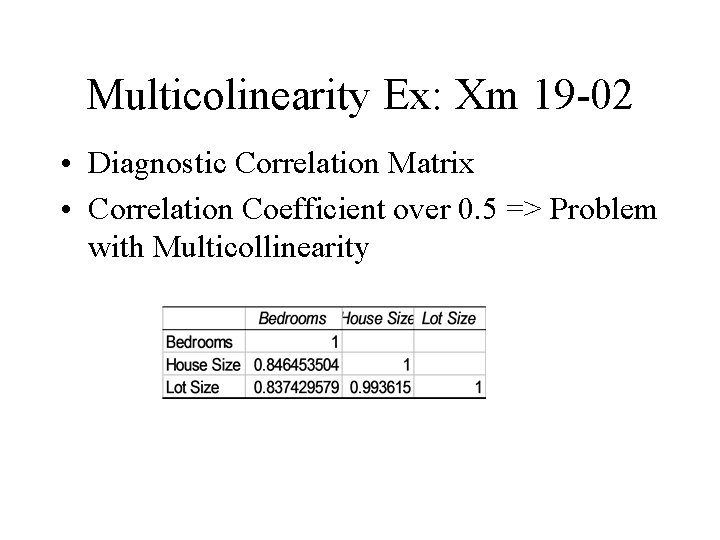

Multicollinearity Ex: Xm19-02 • Diagnostic: correlation matrix • Correlation coefficient over 0.5 (in absolute value) => problem with multicollinearity
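The correlation-matrix diagnostic can be sketched as a pairwise scan over the X variables. The 0.5 cutoff is the rule of thumb from the slide; the function names, column names, and data are hypothetical and are not taken from the Xm19-02 file.

```python
def pearson(a, b):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(a)
    a_bar = sum(a) / n
    b_bar = sum(b) / n
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def flag_collinear_pairs(columns, threshold=0.5):
    """Return (name_1, name_2, r) for every pair with |r| above the threshold."""
    names = list(columns)
    flagged = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            r = pearson(columns[names[i]], columns[names[j]])
            if abs(r) > threshold:
                flagged.append((names[i], names[j], r))
    return flagged


# Hypothetical X variables: x2 moves almost in step with x1, x3 does not.
columns = {
    "x1": [1.0, 2.0, 3.0, 4.0],
    "x2": [2.0, 4.0, 6.0, 8.5],
    "x3": [1.0, -1.0, -1.0, 1.0],
}
for name_1, name_2, r in flag_collinear_pairs(columns):
    print(f"{name_1} and {name_2}: r = {r:.2f}")
```

Pairwise correlations only catch collinearity between two variables at a time, which matches the diagnostic described on the slide.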
