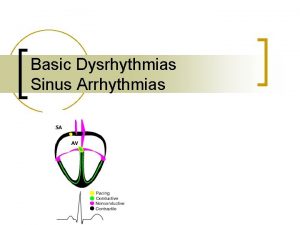

ECG Signal Processing (2), ECE, UA

![ECG signal processing - Case [1] v. Diagnosis of Cardiovascular Abnormalities From Compressed ECG: ECG signal processing - Case [1] v. Diagnosis of Cardiovascular Abnormalities From Compressed ECG:](https://slidetodoc.com/presentation_image/21f353f0ae3ea291e137c9cf78e8dc8d/image-2.jpg)


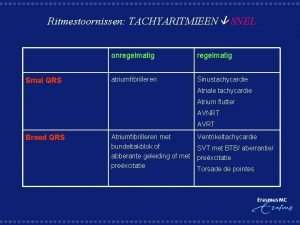

ECG signal processing - Case (1): Diagnosis of Cardiovascular Abnormalities From Compressed ECG: A Data Mining-Based Approach [1]. IEEE Transactions on Information Technology in Biomedicine, vol. 15, no. 1, January 2011.

Contents

- Introduction
- Application Scenario
- System and Method
- Implementation & Results
- Conclusion

Introduction

- Home monitoring and real-time diagnosis of cardiovascular disease (CVD) are important.
- ECG signals are enormous in size.
- Decompressing ECG introduces a time delay.
- Existing algorithms are highly complex and hard to maintain.

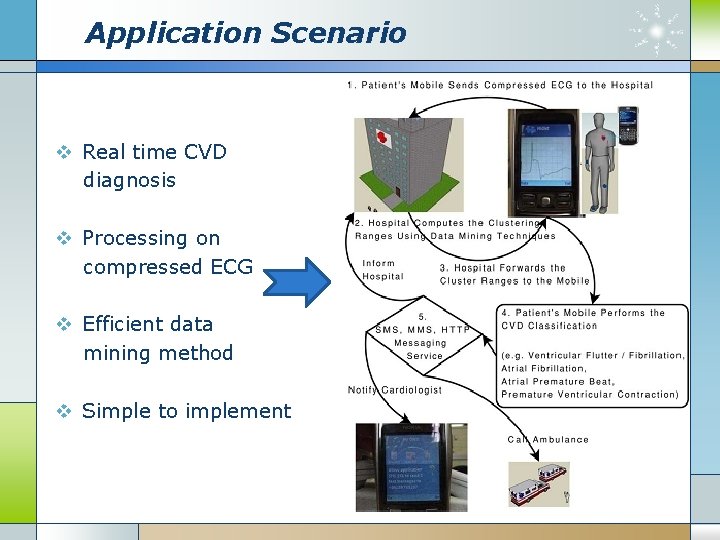

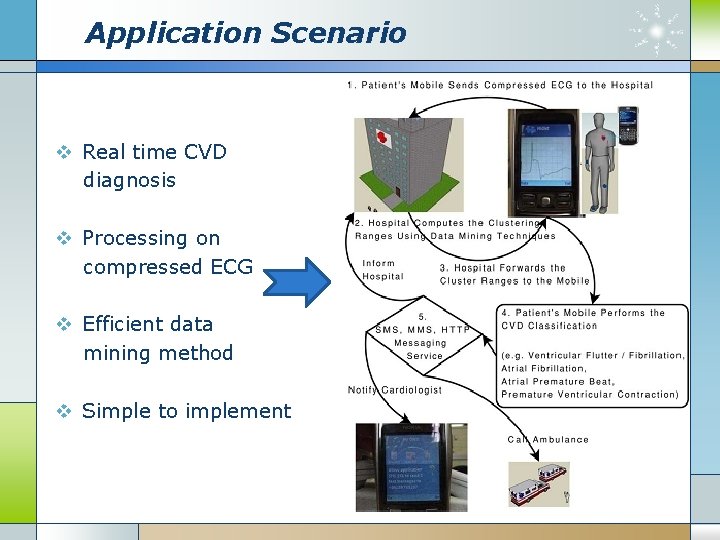

Application Scenario

- Real-time CVD diagnosis
- Processing performed directly on compressed ECG
- Efficient data mining method
- Simple to implement

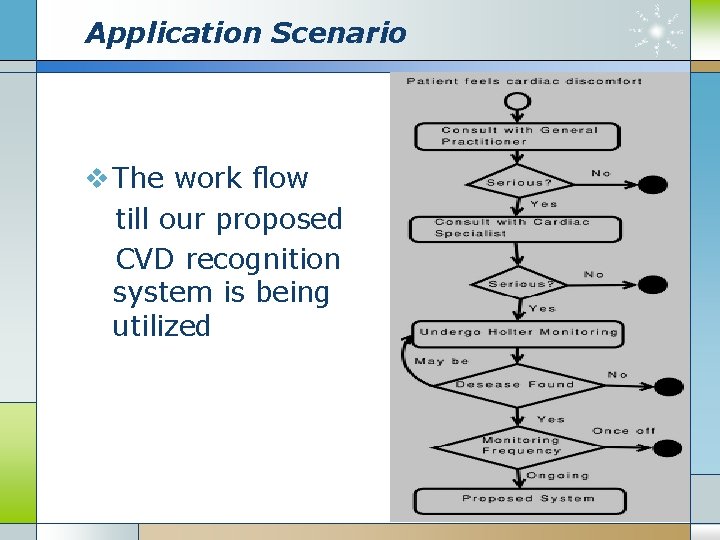

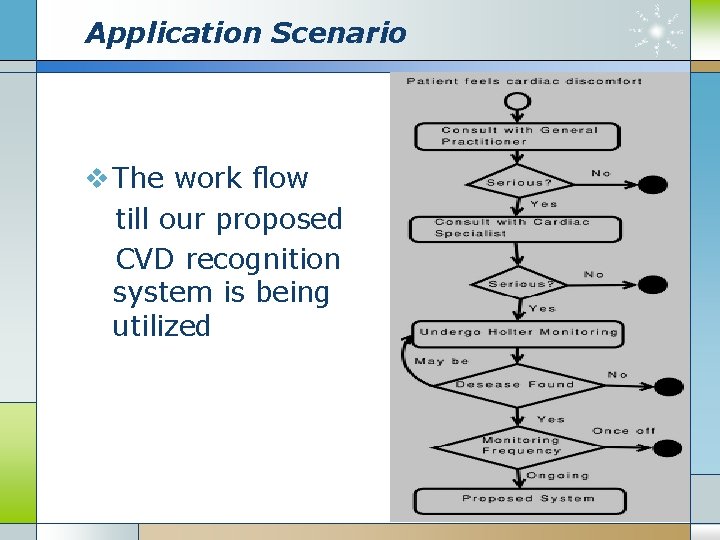

Application Scenario: the workflow up to the point where the proposed CVD recognition system is used.

System and Method

- The mobile phone compresses the ECG signal.
- The compressed signal is transmitted via Bluetooth to the hospital server.
- Data mining methods extract the attributes and determine the cluster ranges.
- CVD is detected and classified, and an alert is sent through the SMS system.

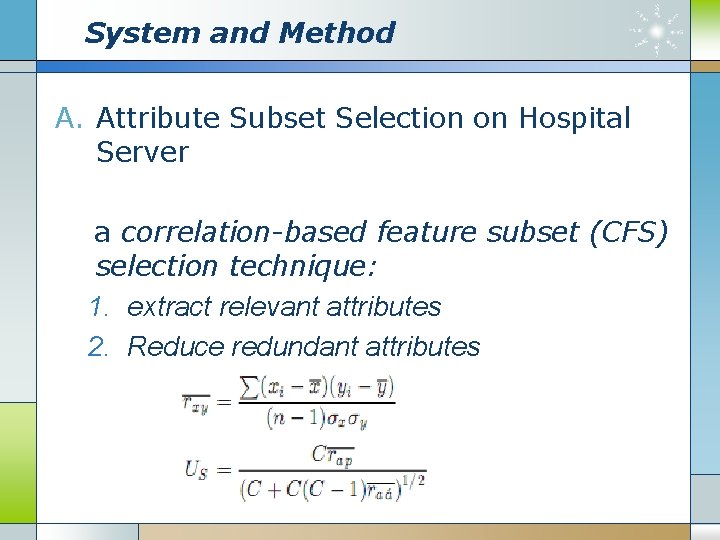

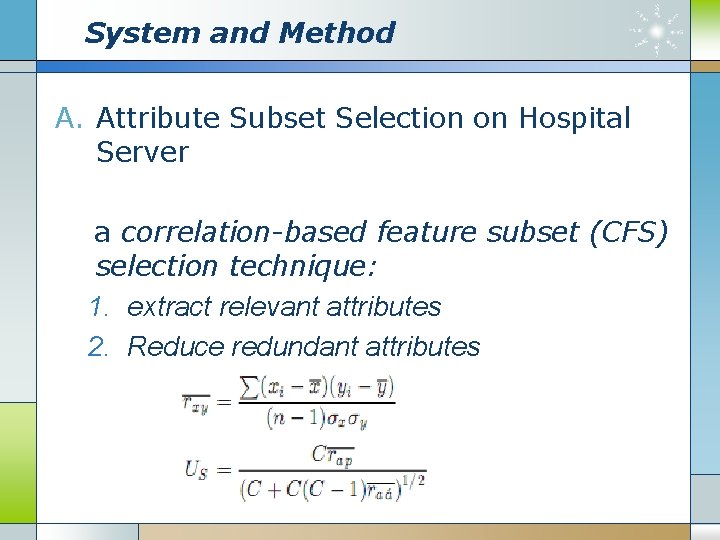

System and Method: A. Attribute Subset Selection on Hospital Server

A correlation-based feature subset (CFS) selection technique is used to:

1. Extract relevant attributes
2. Reduce redundant attributes
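The two goals of CFS (high relevance, low redundancy) can be illustrated with the merit heuristic usually associated with CFS. This is a minimal sketch that assumes the standard merit formula k·r_cf / sqrt(k + k(k-1)·r_ff); the function name and the correlation values are hypothetical and are not taken from the slides.

```python
import math

def cfs_merit(feature_class_corrs, feature_feature_corrs):
    """Merit of a feature subset (assumed standard CFS heuristic).

    feature_class_corrs: one correlation per feature with the class label.
    feature_feature_corrs: one correlation per pair of features in the subset.
    """
    k = len(feature_class_corrs)
    if k == 0:
        return 0.0
    r_cf = sum(abs(r) for r in feature_class_corrs) / k
    # Mean pairwise feature-feature correlation; zero when there are no pairs.
    if feature_feature_corrs:
        r_ff = sum(abs(r) for r in feature_feature_corrs) / len(feature_feature_corrs)
    else:
        r_ff = 0.0
    return k * r_cf / math.sqrt(k + k * (k - 1) * r_ff)

# Two equally relevant features: uncorrelated vs. fully redundant (illustrative values).
independent_pair = cfs_merit([0.5, 0.5], [0.0])
redundant_pair = cfs_merit([0.5, 0.5], [1.0])
```

The redundant pair scores no better than a single feature with correlation 0.5, which is the behaviour "reduce redundant attributes" describes.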

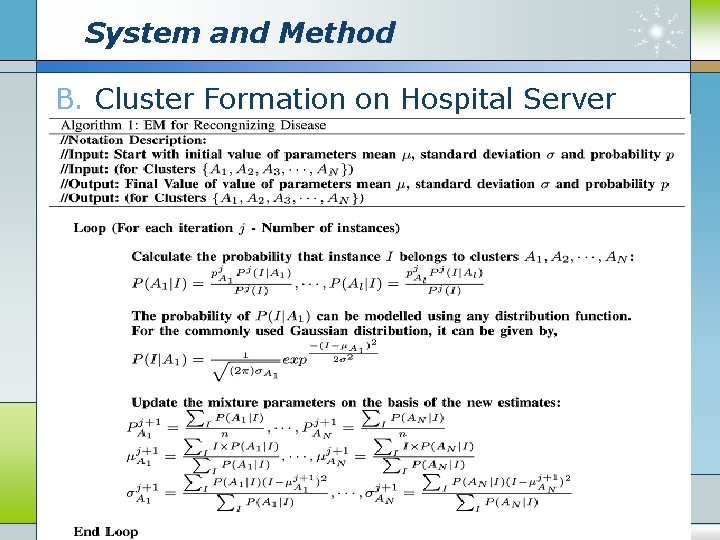

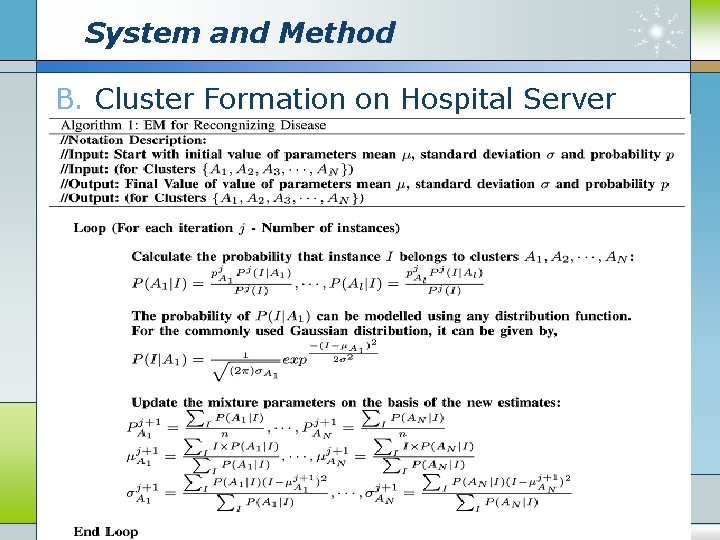

System and Method B. Cluster Formation on Hospital Server

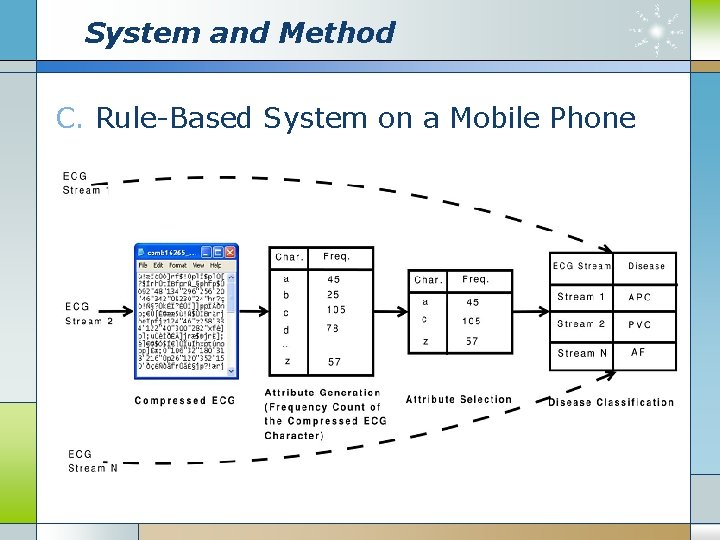

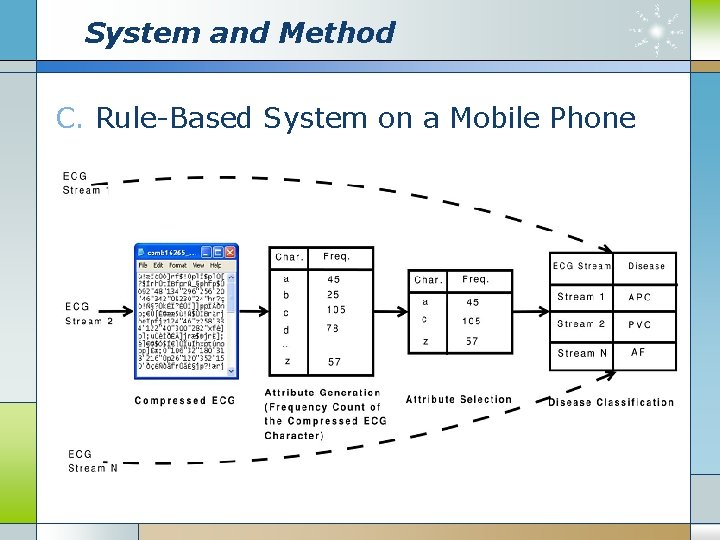

System and Method C. Rule-Based System on a Mobile Phone

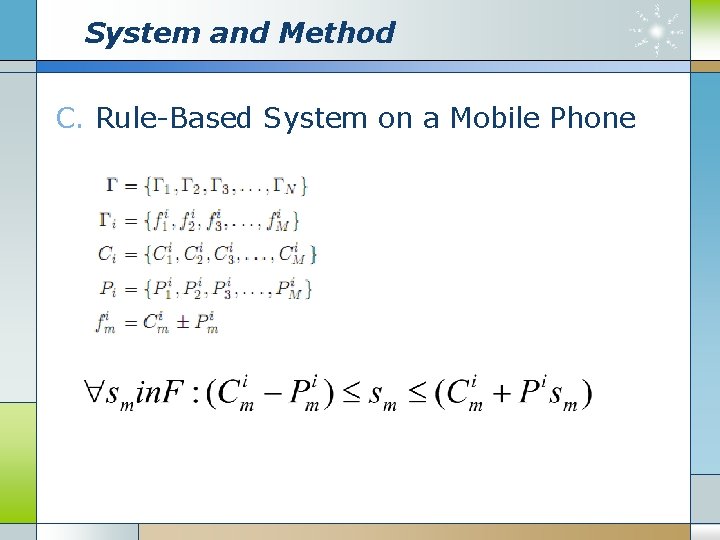

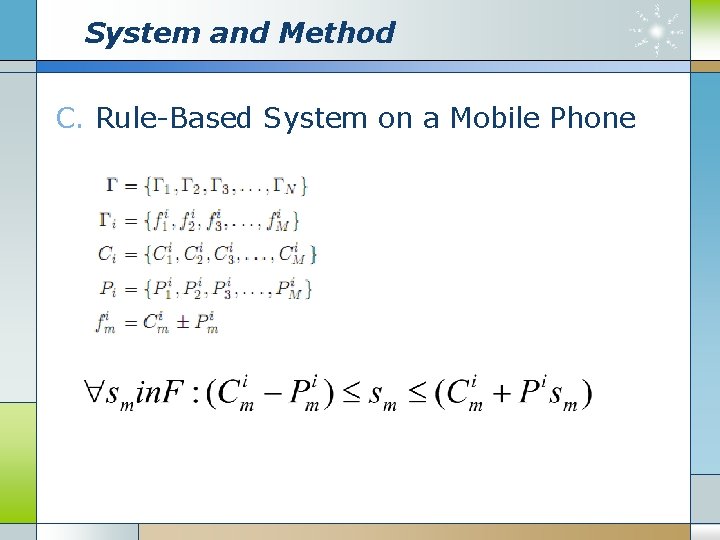

System and Method C. Rule-Based System on a Mobile Phone (continued)

Implementation & Results: Implementation

1. Programmed with J2EE using the Weka library
2. NetBeans interface used to develop the system for a mobile phone (Nokia E55)
3. Java Wireless Messaging API
4. Java Bluetooth API

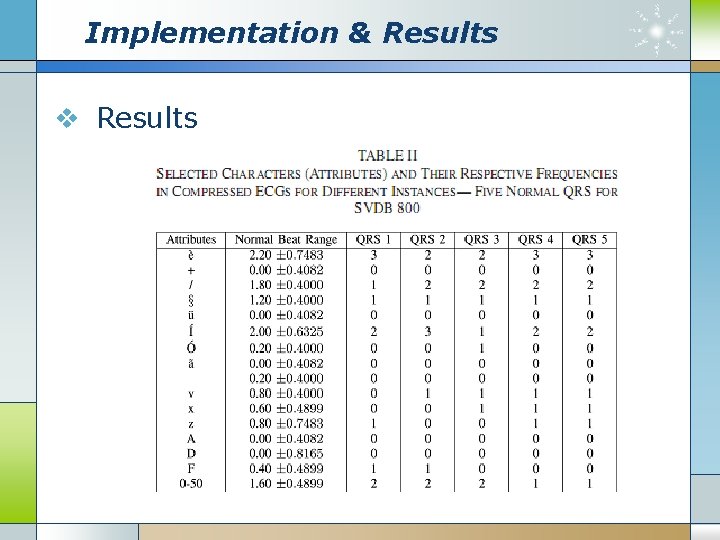

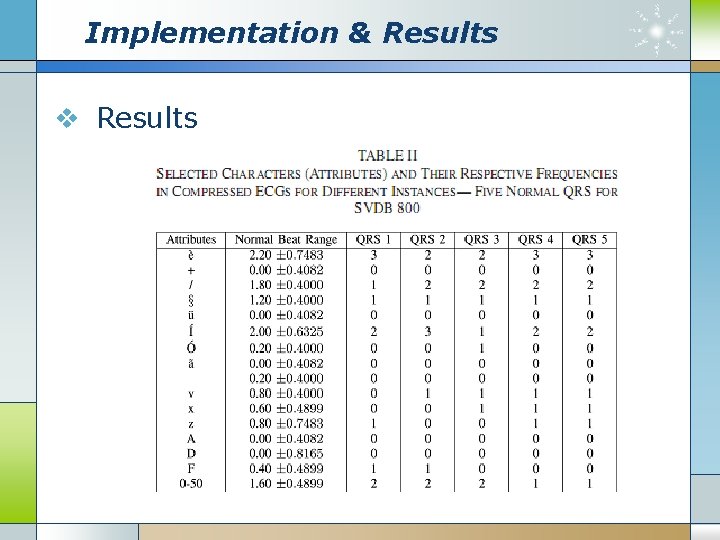

Implementation & Results: Results

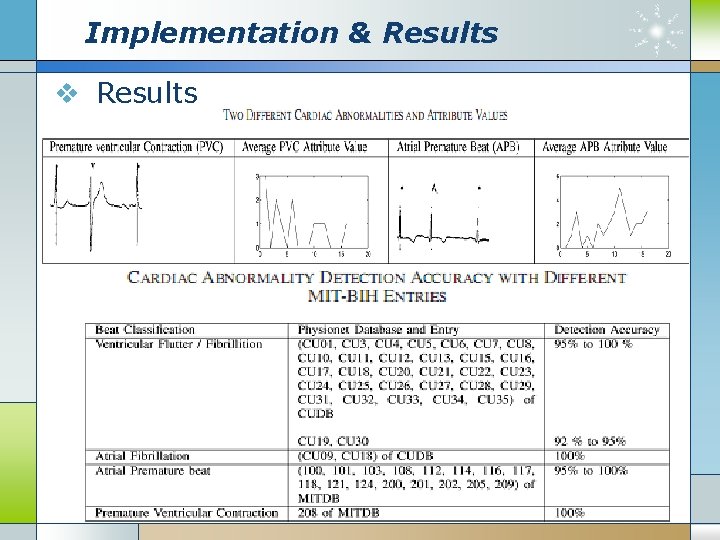

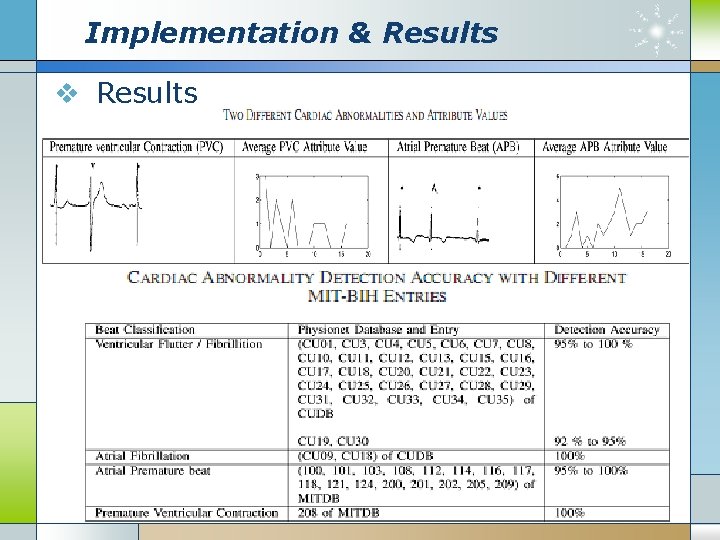

Implementation & Results: Results (continued)

Conclusion

- Faster diagnosis is crucial for a patient's survival.
- The CVD detection mechanism works directly on compressed ECG.
- It uses the data mining techniques CFS and EM.
- A rule-based system was implemented on a mobile platform.

Case (2): Multiscale Recurrence Quantification Analysis of Spatial Cardiac Vectorcardiogram Signals. IEEE Transactions on Biomedical Engineering, vol. 58, no. 2, February 2011.

Content

- Introduction
- Research Methods
- Experimental Design
- Results
- Discussion and Conclusion

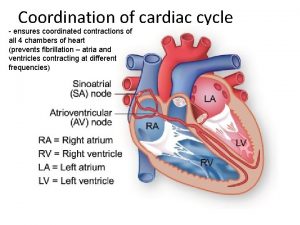

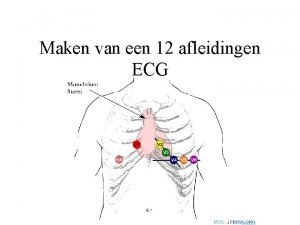

Introduction

- Myocardial infarction (MI) is a leading cause of mortality in America.
- The standard clinical method is an analysis of the waveform pattern of the ECG.
- The 12-lead ECG and the 3-lead vectorcardiogram (VCG) are designed to give a multidirectional view of the cardiac electrical activity.

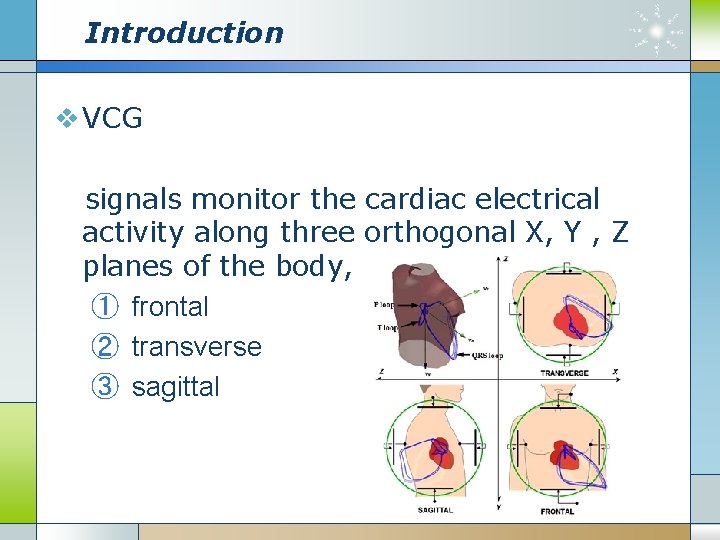

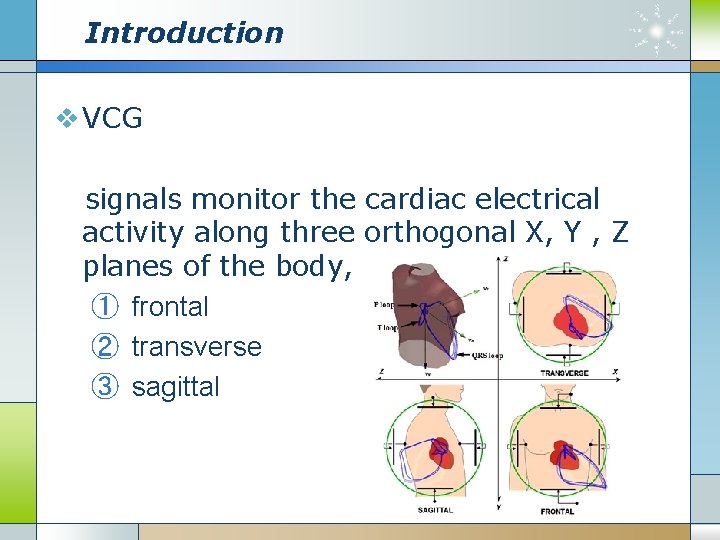

Introduction

- VCG signals monitor the cardiac electrical activity along three orthogonal X, Y, Z planes of the body:
  1. Frontal
  2. Transverse
  3. Sagittal

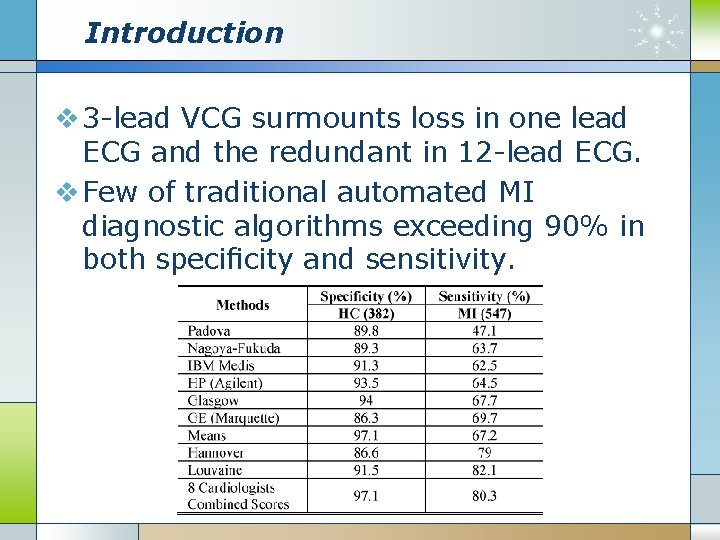

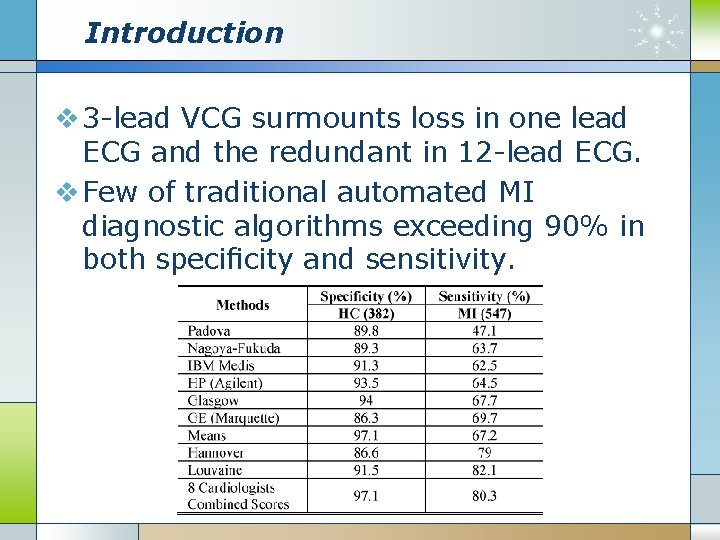

Introduction

- The 3-lead VCG overcomes the information loss of a one-lead ECG and the redundancy of the 12-lead ECG.
- Few traditional automated MI diagnostic algorithms exceed 90% in both specificity and sensitivity.

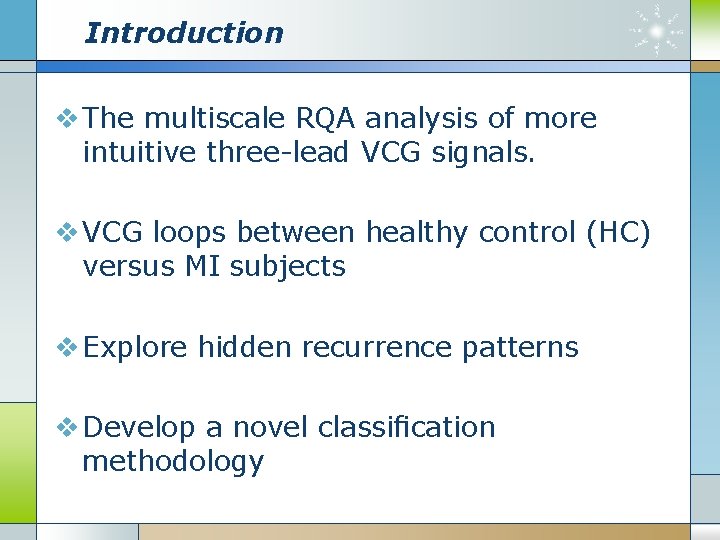

Introduction

- Multiscale recurrence quantification analysis (RQA) is applied to the more intuitive three-lead VCG signals.
- VCG loops are compared between healthy control (HC) and MI subjects.
- Hidden recurrence patterns are explored.
- A novel classification methodology is developed.

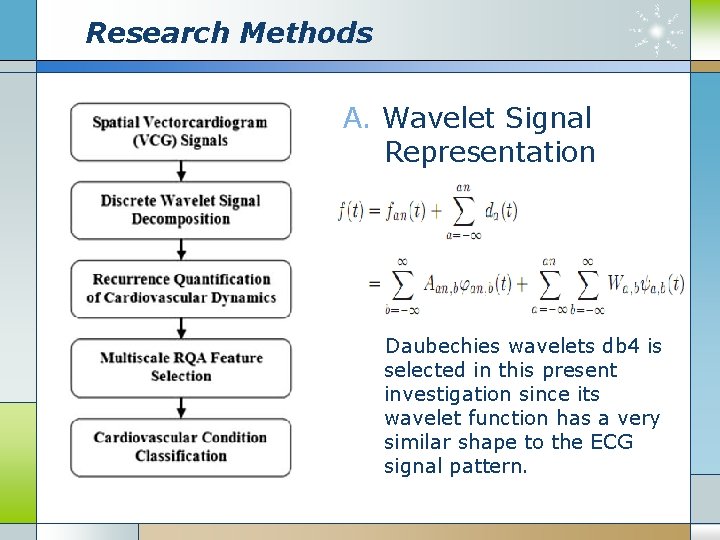

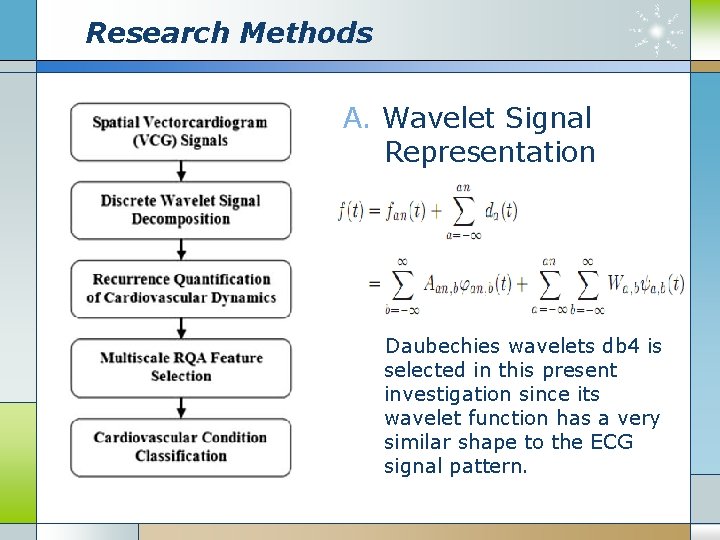

Research Methods: A. Wavelet Signal Representation

The Daubechies wavelet db4 is selected in this investigation because its wavelet function has a shape very similar to the ECG signal pattern.
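To make the wavelet representation concrete, here is a minimal sketch of one decomposition level with the db4 filter pair. It assumes the usual 8-tap db4 scaling coefficients (rounded to 8 decimals) and periodic signal extension; the function name `dwt_level` is hypothetical, and the slides do not say how boundaries or the number of levels were handled.

```python
import math

# db4 scaling (low-pass) filter, 8 taps, rounded to 8 decimals.
DB4_LOW = [0.23037781, 0.71484657, 0.63088077, -0.02798377,
           -0.18703481, 0.03084138, 0.03288301, -0.01059740]
# Quadrature-mirror high-pass filter: g[n] = (-1)^n * h[L-1-n].
DB4_HIGH = [(-1) ** n * DB4_LOW[len(DB4_LOW) - 1 - n]
            for n in range(len(DB4_LOW))]

def dwt_level(signal):
    """One level of a periodized db4 transform: returns (approximation, detail)."""
    n = len(signal)
    if n % 2:
        raise ValueError("signal length must be even")
    approx, detail = [], []
    for k in range(n // 2):
        a = d = 0.0
        for j, (h, g) in enumerate(zip(DB4_LOW, DB4_HIGH)):
            x = signal[(2 * k + j) % n]  # wrap around at the boundary
            a += h * x
            d += g * x
        approx.append(a)
        detail.append(d)
    return approx, detail

# Toy input: one period of a sine wave (not an ECG recording).
toy_signal = [math.sin(2 * math.pi * i / 16) for i in range(16)]
toy_approx, toy_detail = dwt_level(toy_signal)
```

Applying `dwt_level` again to the approximation coefficients gives the next, coarser scale, which is the sense in which the later analysis is "multiscale".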

Research Methods B. Recurrence Quantification of Cardiovascular Dynamics

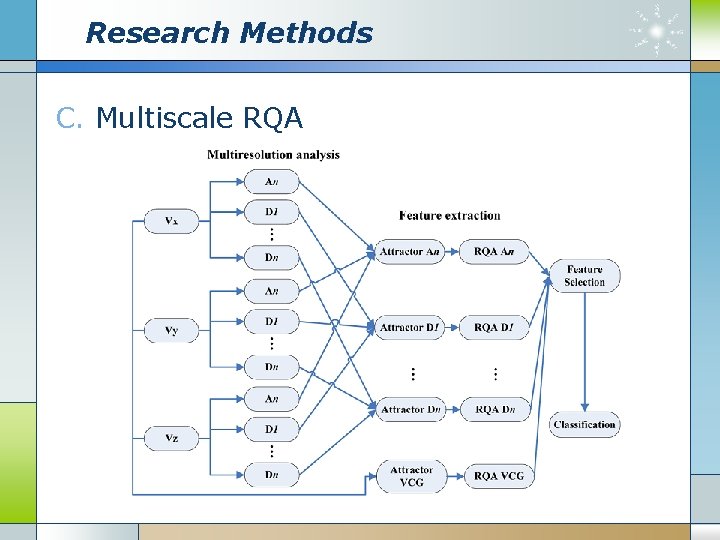

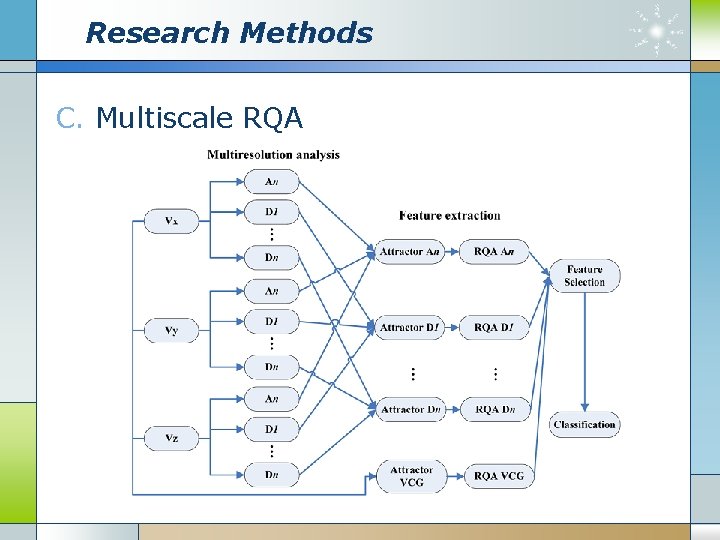
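As an illustration of recurrence quantification, the sketch below builds a thresholded recurrence matrix from a sequence of 3-D states and computes the recurrence rate, one of the basic RQA measures. The threshold `eps`, the toy states, and the choice to count the main diagonal are assumptions for illustration, not details from the slides.

```python
import math

def recurrence_matrix(states, eps):
    """R[i][j] = 1 when states i and j are within eps of each other (Euclidean)."""
    n = len(states)
    return [[1 if math.dist(states[i], states[j]) <= eps else 0
             for j in range(n)]
            for i in range(n)]

def recurrence_rate(matrix):
    """Fraction of recurrent points, main diagonal included."""
    n = len(matrix)
    return sum(map(sum, matrix)) / (n * n)

# Toy trajectory that alternates between two points in (X, Y, Z) space.
toy_loop = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
toy_rate = recurrence_rate(recurrence_matrix(toy_loop, eps=0.1))
```

Other RQA measures (determinism, laminarity, entropy) are computed from the diagonal and vertical line structures of the same matrix.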

Research Methods C. Multiscale RQA

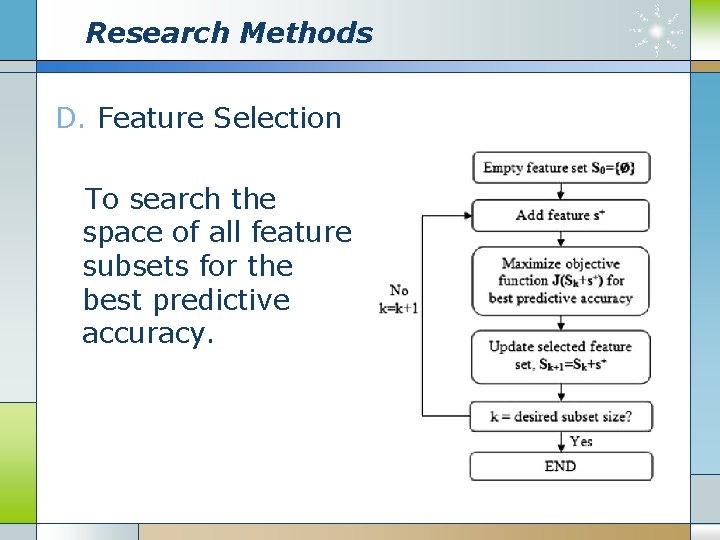

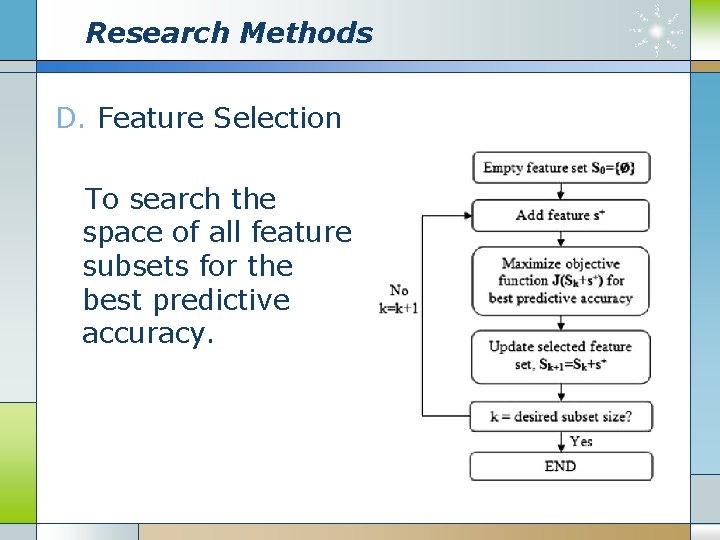

Research Methods: D. Feature Selection

The space of all feature subsets is searched for the subset with the best predictive accuracy.

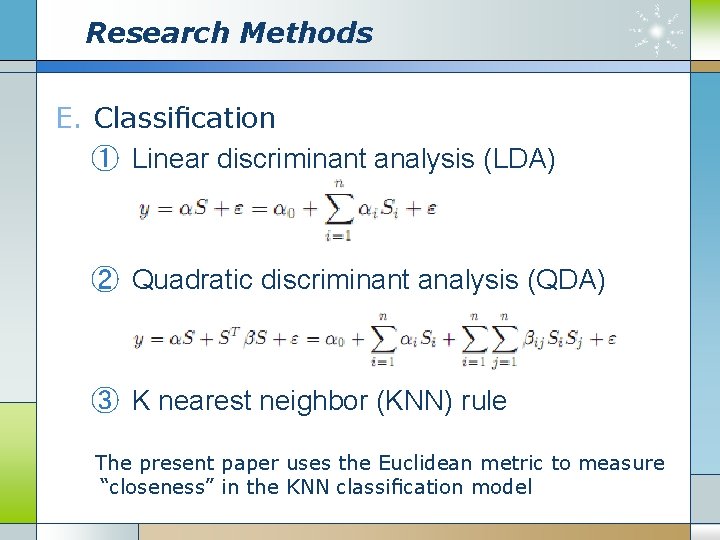

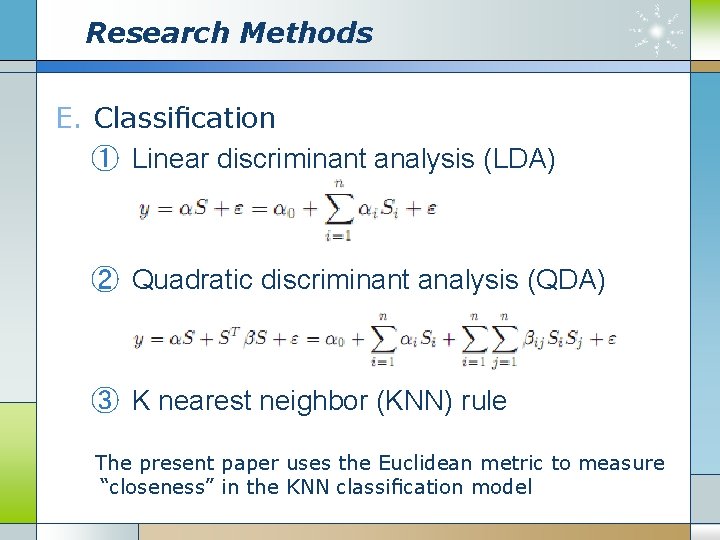

Research Methods: E. Classification

1. Linear discriminant analysis (LDA)
2. Quadratic discriminant analysis (QDA)
3. K nearest neighbor (KNN) rule

The present paper uses the Euclidean metric to measure "closeness" in the KNN classification model.
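A minimal sketch of the KNN rule with the Euclidean metric follows. The feature vectors, the labels, and the value of `k` are hypothetical and chosen only to show the mechanics.

```python
import math
from collections import Counter

def knn_predict(train_x, train_y, query, k=3):
    """Label of `query` by majority vote among its k nearest training points."""
    nearest = sorted(range(len(train_x)),
                     key=lambda i: math.dist(train_x[i], query))[:k]
    votes = Counter(train_y[i] for i in nearest)
    return votes.most_common(1)[0][0]

# Toy two-feature training set: healthy control (HC) vs. MI.
train_x = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1),
           (1.0, 1.0), (0.9, 1.1), (1.1, 0.9)]
train_y = ["HC", "HC", "HC", "MI", "MI", "MI"]
```

Calling `knn_predict(train_x, train_y, (0.05, 0.05))` votes among the three nearest HC points, so the query is labelled HC.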

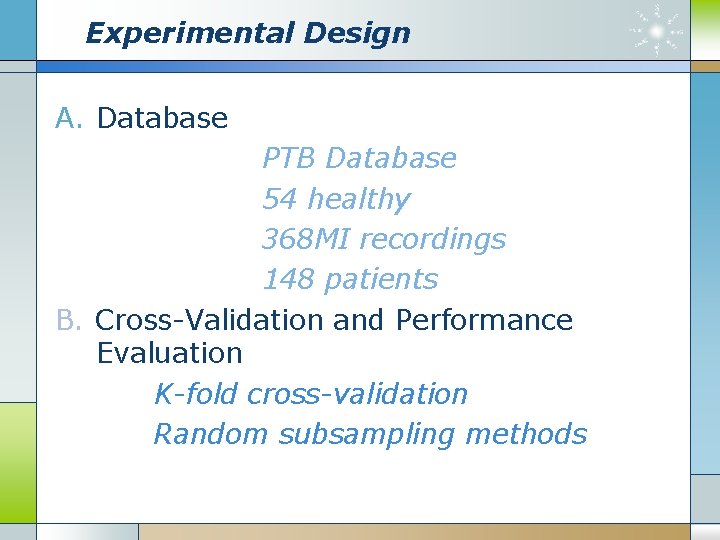

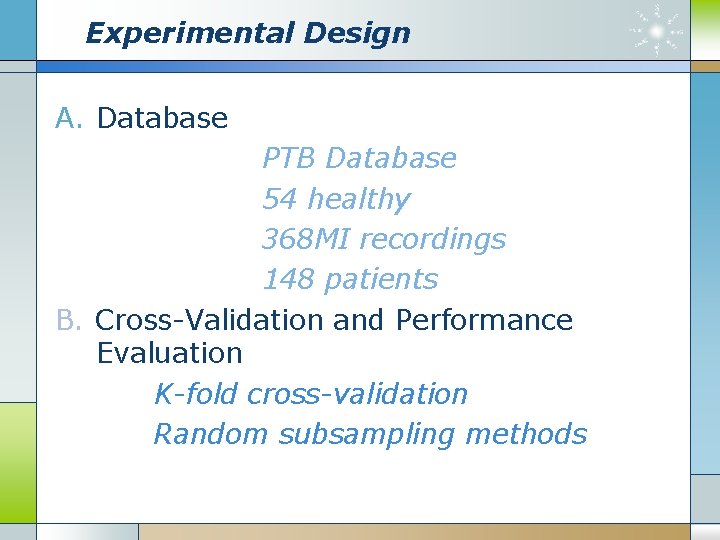

Experimental Design

A. Database: PTB Database (54 healthy; 368 MI recordings; 148 patients)

B. Cross-Validation and Performance Evaluation

- K-fold cross-validation
- Random subsampling methods
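The evaluation procedure can be sketched as follows: split the sample indices into K folds, and score each fold with sensitivity and specificity. The helper names and the interleaved fold assignment are assumptions for illustration; the slides do not give the value of K or how recordings were assigned to folds.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k interleaved folds."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    for i in range(k):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, folds[i]

def sensitivity_specificity(y_true, y_pred, positive="MI"):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    return tp / (tp + fn), tn / (tn + fp)

splits = list(k_fold_indices(10, 5))
```

In practice the indices are usually shuffled before splitting, and with several recordings per patient it is common to keep one patient's recordings in the same fold.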

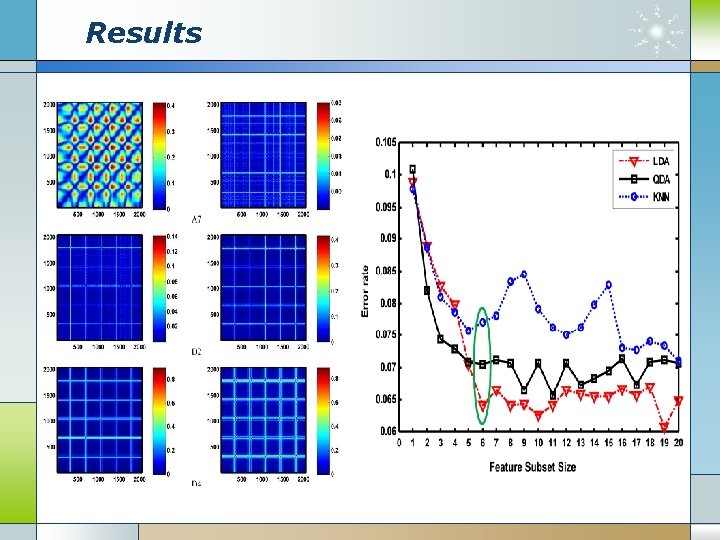

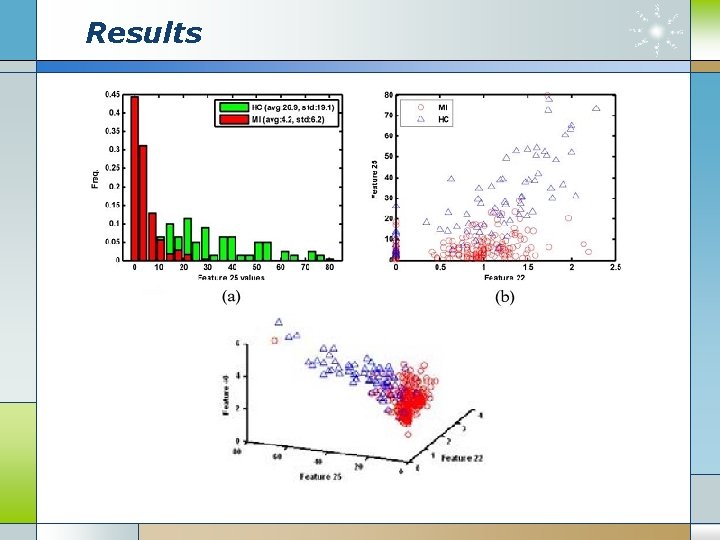

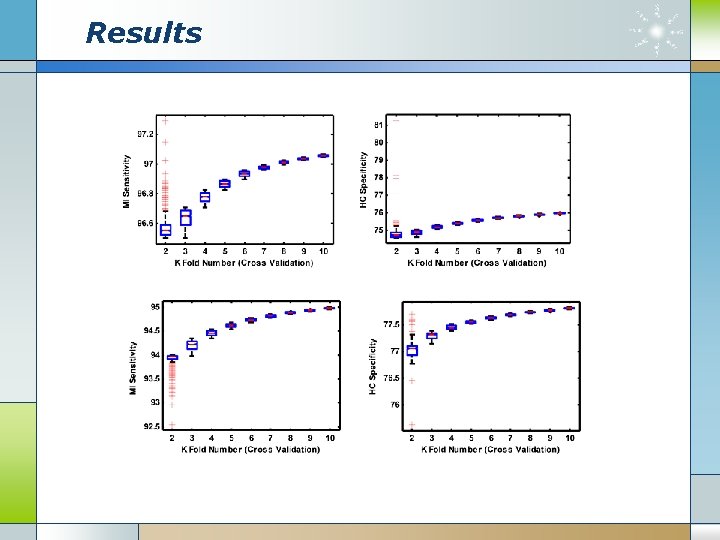

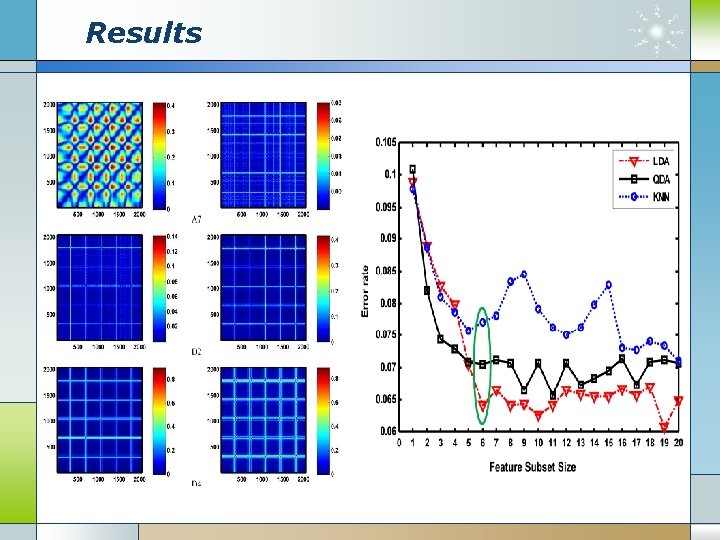

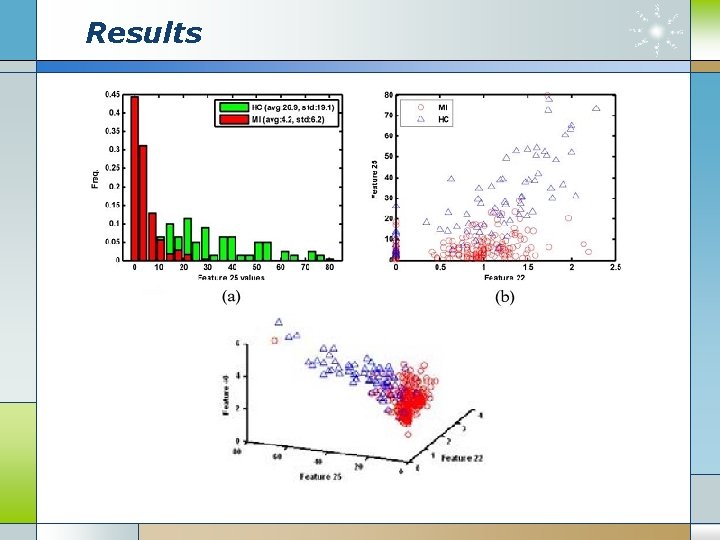

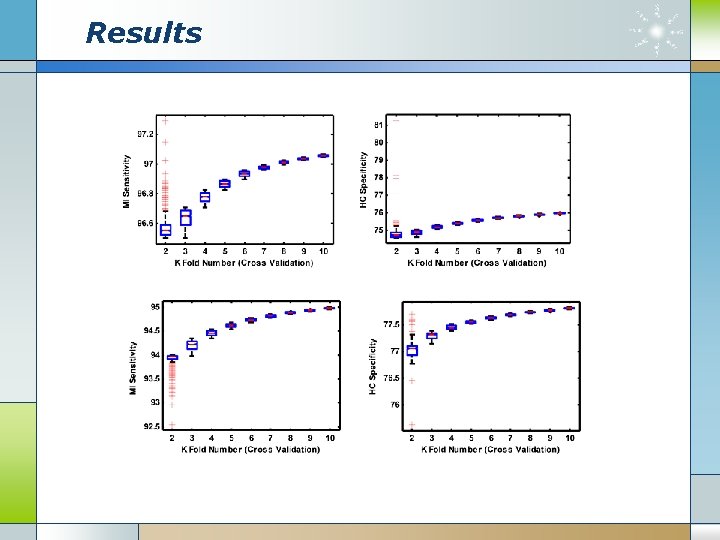

Results


Discussion and Conclusion

- Because the infarct (nidus) can occur at various locations, it is challenging to characterize and identify the pathological ECG signal patterns of MI.
- ECG or VCG signals from MI subjects are shown to have significantly complex patterns under different combinations of MI conditions.
- This method presents a much better performance than other standard methods.

Case (3): Robust Detection of Premature Ventricular Contractions Using a Wave-Based Bayesian Framework. IEEE Transactions on Biomedical Engineering, vol. 57, no. 2, February 2010.

Content

- Introduction
- Wave-based ECG Dynamical Model
- Bayesian State Estimation Through EKF
- Bayesian Detection of PVC
- Results
- Discussion and Conclusion

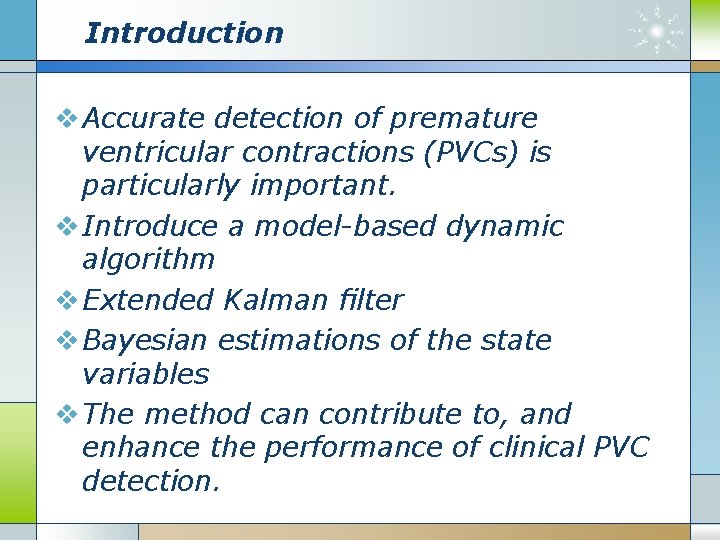

Introduction

- Accurate detection of premature ventricular contractions (PVCs) is particularly important.
- A model-based dynamic algorithm is introduced.
- It uses an extended Kalman filter (EKF).
- The EKF provides Bayesian estimates of the state variables.
- The method can contribute to, and enhance the performance of, clinical PVC detection.

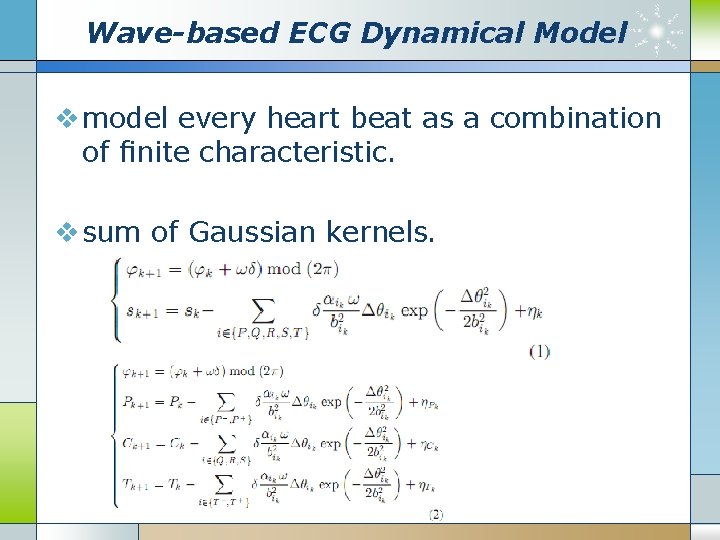

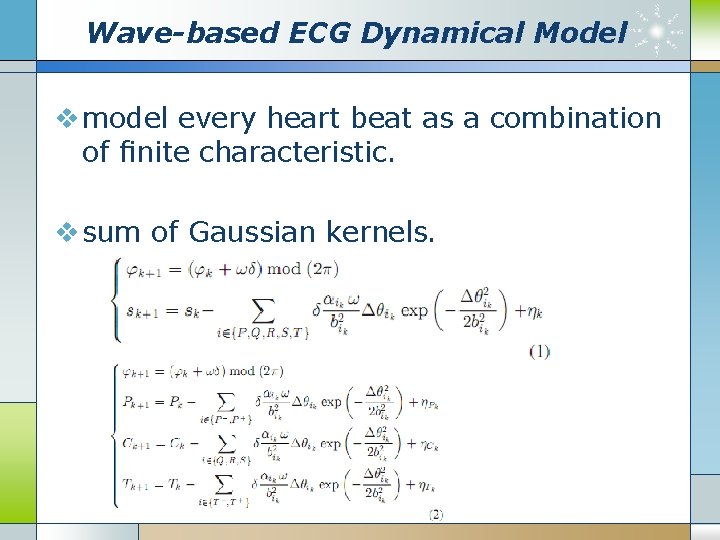

Wave-based ECG Dynamical Model

- Every heartbeat is modeled as a combination of a finite number of characteristic waves.
- Each wave is represented as a sum of Gaussian kernels.
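The sum-of-Gaussians idea can be sketched by evaluating a synthetic beat as a function of cardiac phase. The three waves and their (amplitude, width, center) values below are hypothetical placeholders, with one kernel per wave for brevity; they are not parameters from the paper.

```python
import math

# (amplitude, width, center phase in radians): illustrative values only.
WAVES = {
    "P": (0.1, 0.2, -1.0),
    "R": (1.0, 0.1, 0.0),
    "T": (0.3, 0.4, 1.5),
}

def beat_amplitude(theta, waves=WAVES):
    """Beat amplitude at phase theta as a sum of Gaussian kernels."""
    return sum(a * math.exp(-((theta - center) ** 2) / (2 * b ** 2))
               for a, b, center in waves.values())

# One synthetic beat sampled over the phase range [-pi, pi].
beat = [beat_amplitude(-math.pi + 2 * math.pi * i / 199) for i in range(200)]
```

In the wave-based framework each wave has its own state variables, so a beat whose waves deviate from the model (as in a PVC) shows up as a change in the corresponding estimates.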

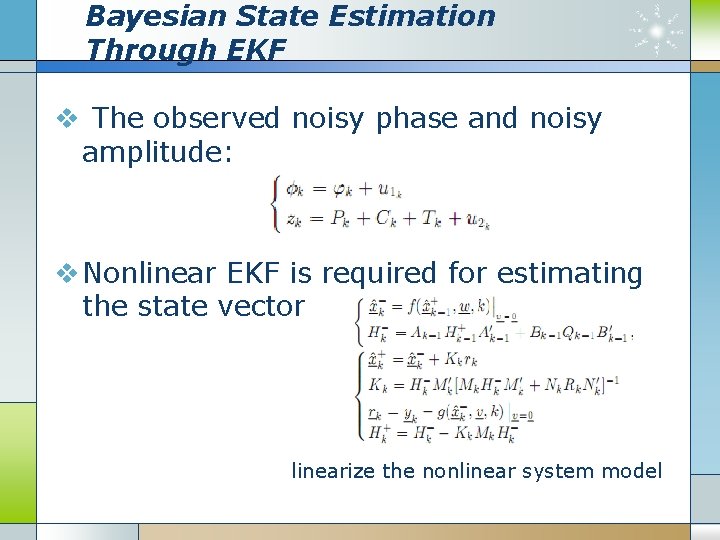

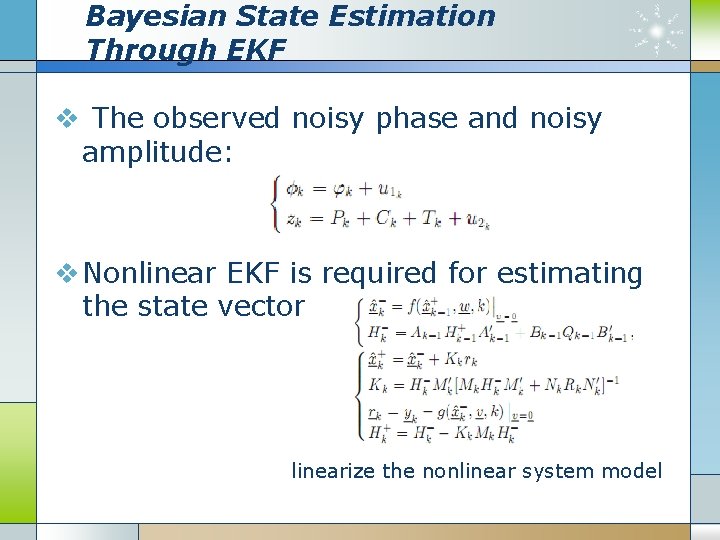

Bayesian State Estimation Through EKF

- The observations are the noisy phase and the noisy amplitude.
- Because the system model is nonlinear, an extended Kalman filter (EKF) is required to estimate the state vector; the EKF linearizes the nonlinear system model.
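The predict/linearize/update cycle of an EKF can be shown on a deliberately tiny problem. The sketch below is a scalar toy example (constant state, quadratic observation), not the ECG phase-and-amplitude model from the paper; all function names and noise values are assumptions.

```python
def ekf_step(x_est, p_est, z, f, f_jac, h, h_jac, q, r):
    """One scalar EKF iteration: predict with f, update with measurement z."""
    # Predict: propagate the state and linearize the dynamics around x_est.
    x_pred = f(x_est)
    F = f_jac(x_est)
    p_pred = F * p_est * F + q
    # Update: linearize the observation model around the predicted state.
    H = h_jac(x_pred)
    gain = p_pred * H / (H * p_pred * H + r)
    x_new = x_pred + gain * (z - h(x_pred))
    p_new = (1 - gain * H) * p_pred
    return x_new, p_new

# Toy problem: the true state is 2.0 and the sensor reports its square (4.0).
x_est, p_est = 1.0, 1.0
for _ in range(50):
    x_est, p_est = ekf_step(
        x_est, p_est, z=4.0,
        f=lambda x: x, f_jac=lambda x: 1.0,          # constant-state dynamics
        h=lambda x: x * x, h_jac=lambda x: 2.0 * x,  # nonlinear observation
        q=1e-4, r=0.01,
    )
```

For a vector state the same steps apply with Jacobian matrices in place of the scalar derivatives and a matrix inverse in the gain.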

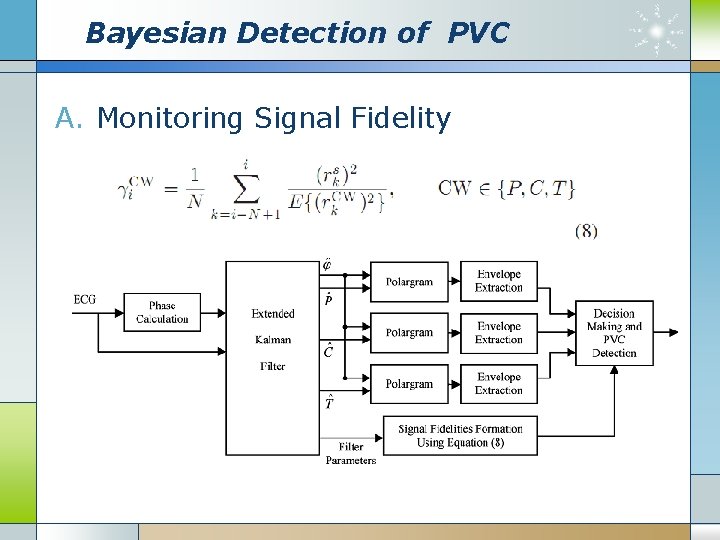

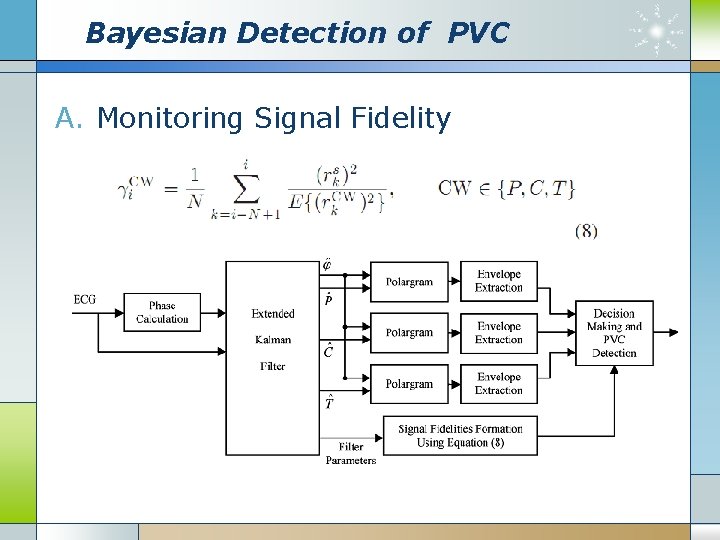

Bayesian Detection of PVC A. Monitoring Signal Fidelity

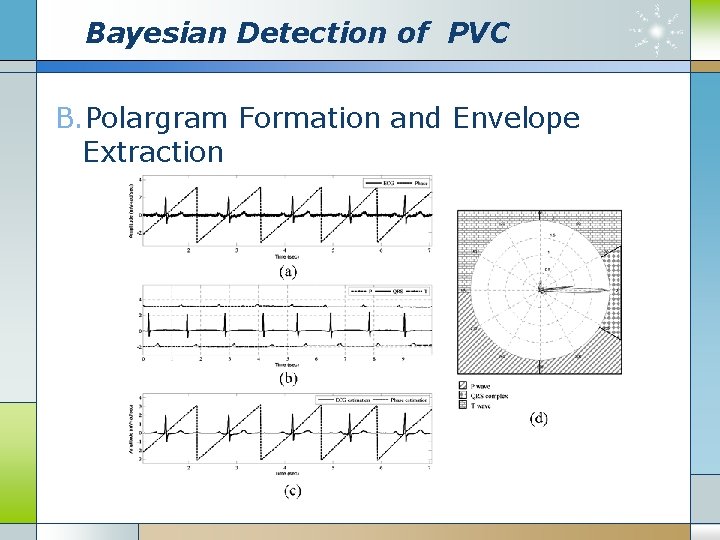

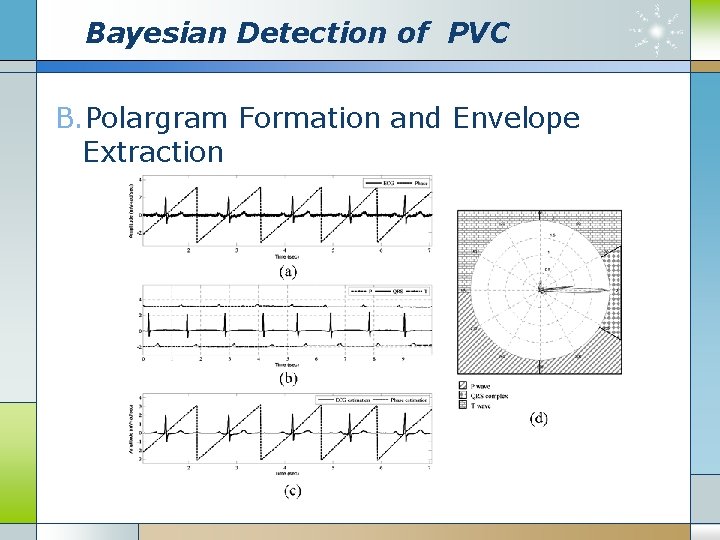

Bayesian Detection of PVC B. Polargram Formation and Envelope Extraction

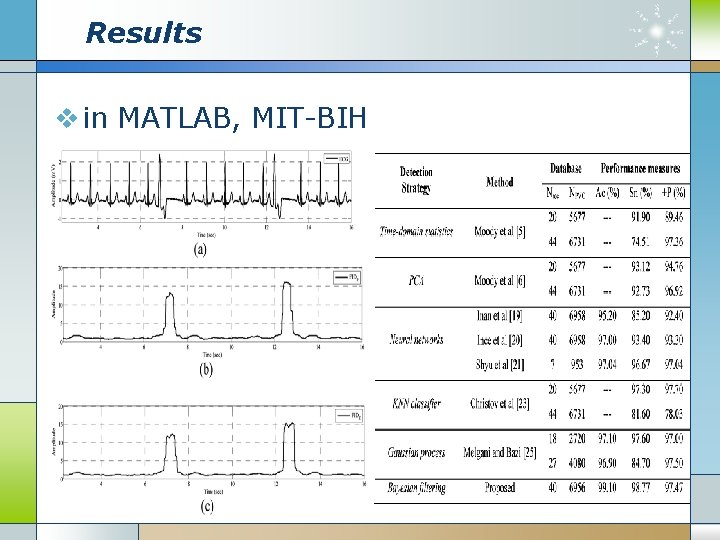

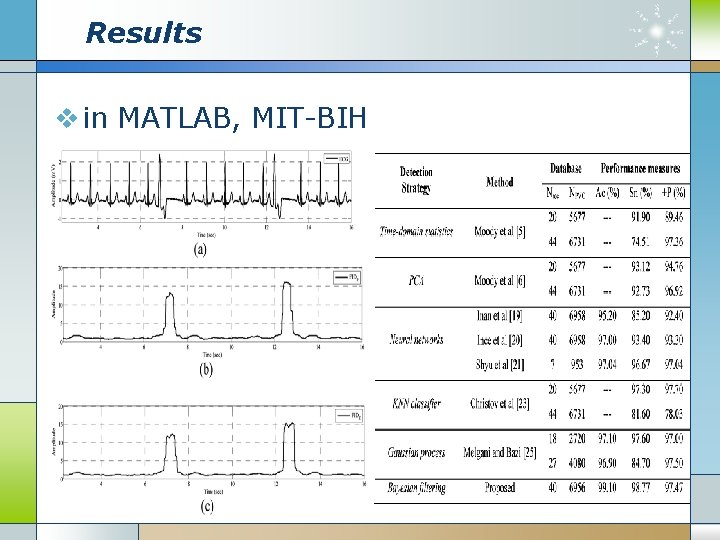

Results: implemented in MATLAB and evaluated on MIT-BIH data.

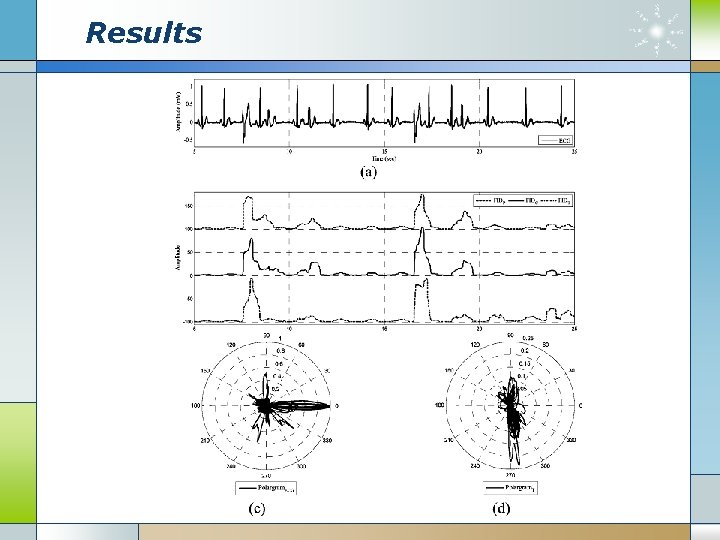

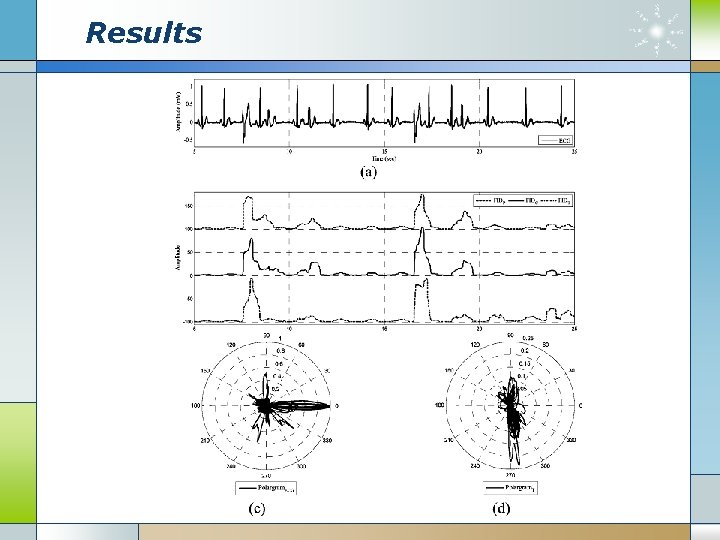

Results

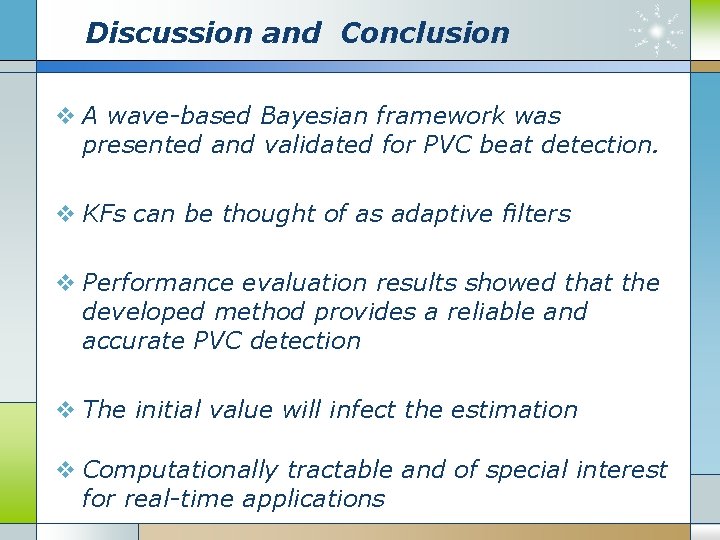

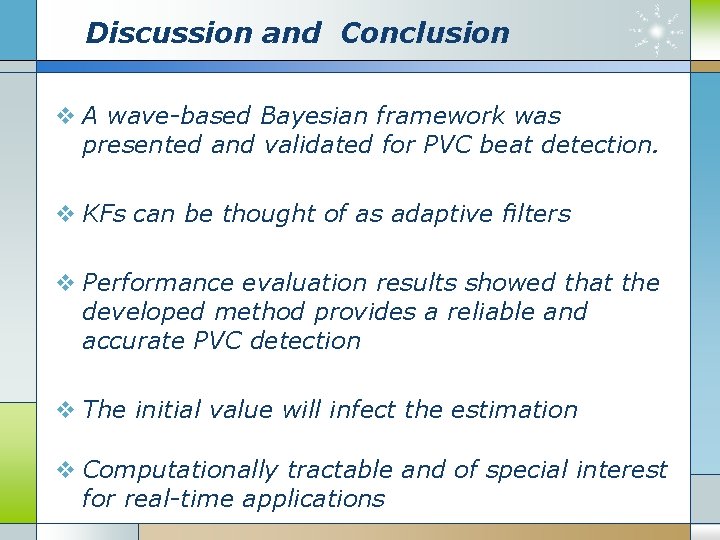

Discussion and Conclusion

- A wave-based Bayesian framework was presented and validated for PVC beat detection.
- Kalman filters (KFs) can be thought of as adaptive filters.
- Performance evaluation results showed that the developed method provides reliable and accurate PVC detection.
- The initial values affect the estimation.
- The method is computationally tractable and of special interest for real-time applications.

Case (4): Active Learning Methods for Electrocardiographic Signal Classification. IEEE Transactions on Information Technology in Biomedicine, vol. 14, no. 6, November 2010.

Content

- Introduction
- Support Vector Machines
- Active Learning Methods
- Experiments & Results
- Conclusion

Introduction

- ECG signals are a useful source of information about the rhythm and functioning of the heart.
- The goal is an efficient and robust ECG classification system.
- The SVM classifier has a good generalization capability and is less sensitive to the curse of dimensionality.
- The set of training samples is constructed automatically through active learning.

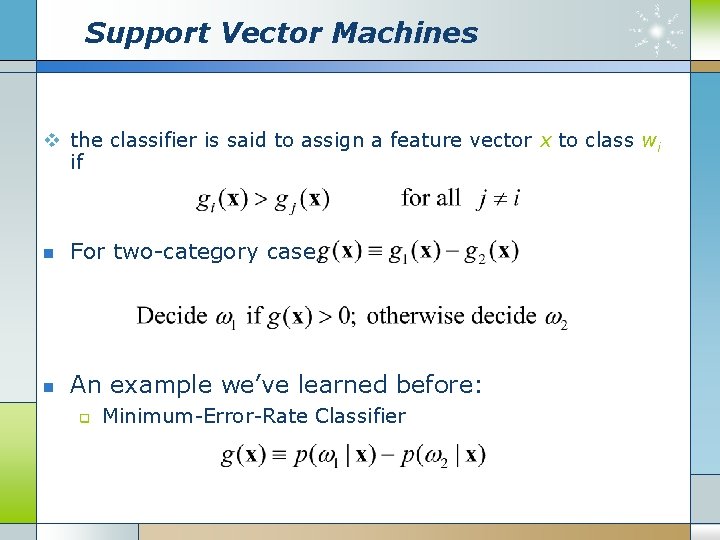

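Support Vector Machines

- The classifier is said to assign a feature vector $x$ to class $\omega_i$ if $g_i(x) > g_j(x)$ for all $j \neq i$.
  - For the two-category case, $g(x) \equiv g_1(x) - g_2(x)$: decide $\omega_1$ if $g(x) > 0$; otherwise decide $\omega_2$.
  - An example we've learned before: the minimum-error-rate classifier, $g(x) \equiv p(\omega_1 \mid x) - p(\omega_2 \mid x)$.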

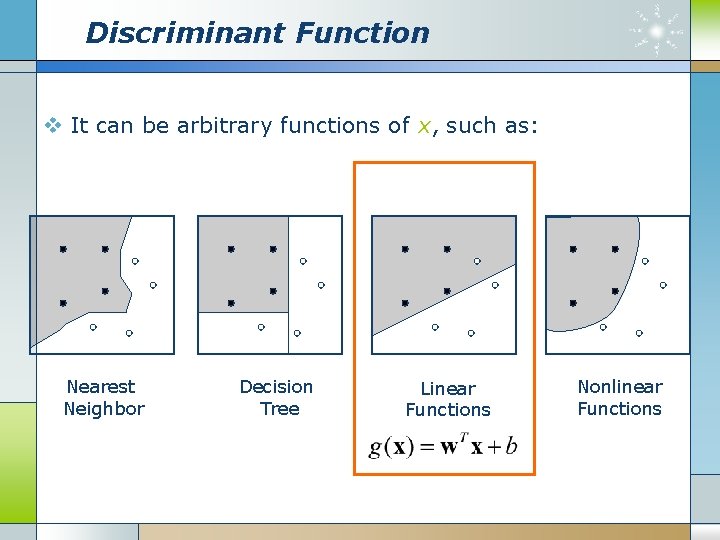

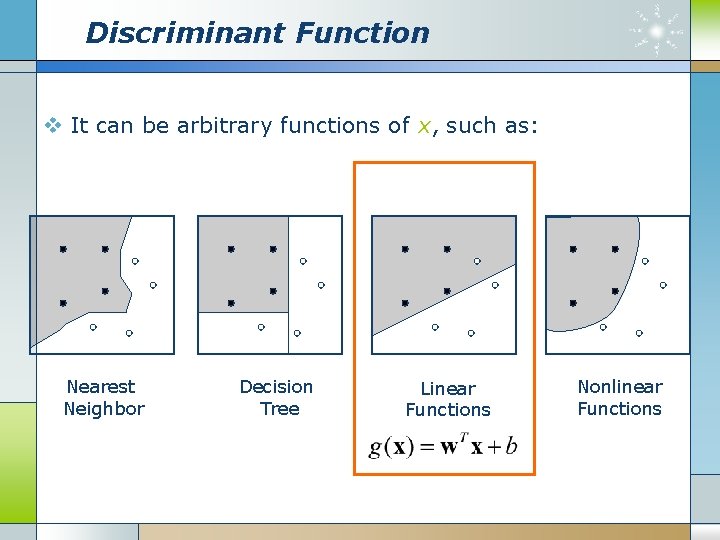

Discriminant Function

- It can be an arbitrary function of $x$, such as:
  - Nearest neighbor
  - Decision tree
  - Linear functions
  - Nonlinear functions

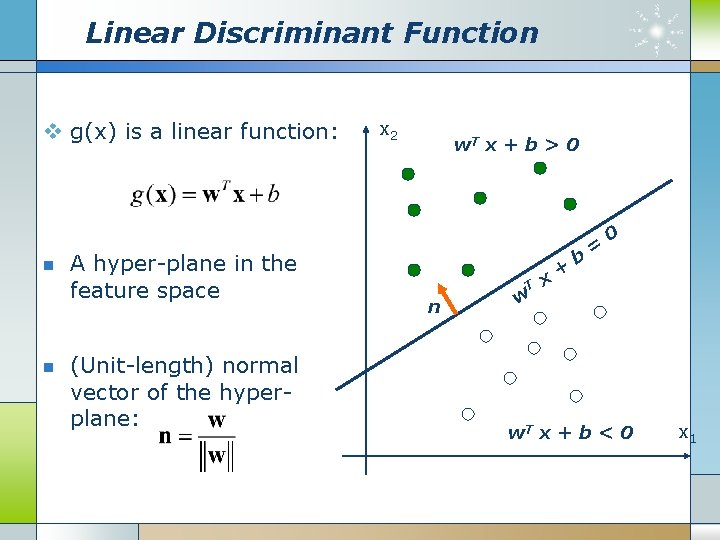

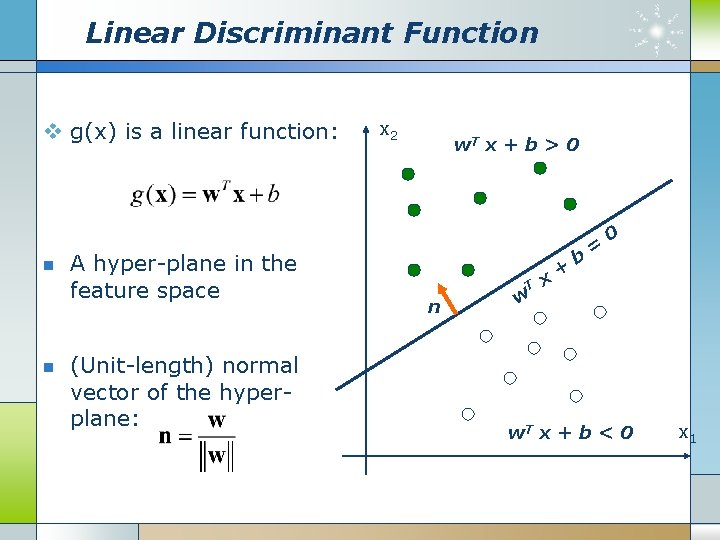

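Linear Discriminant Function

- $g(x)$ is a linear function: $g(x) = w^T x + b$
  - It defines a hyperplane $w^T x + b = 0$ in the feature space, with $w^T x + b > 0$ on one side and $w^T x + b < 0$ on the other.
  - (Unit-length) normal vector of the hyperplane: $n = w / \lVert w \rVert$

(Figure: the hyperplane and its normal vector in the $x_1$-$x_2$ plane.)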

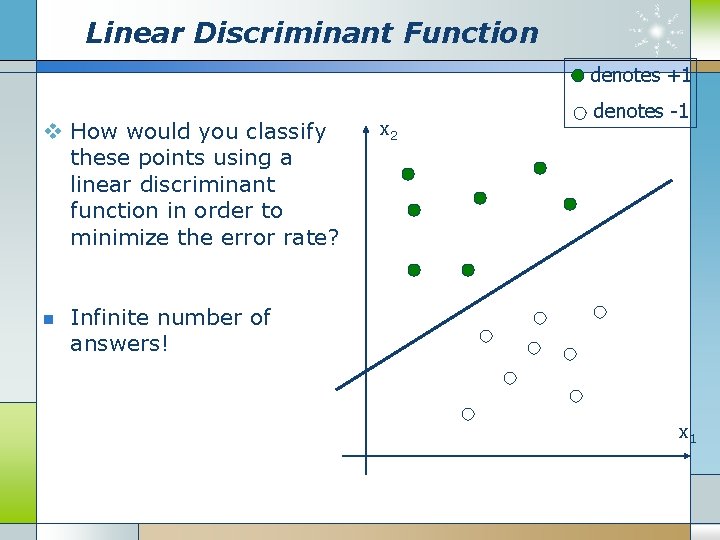

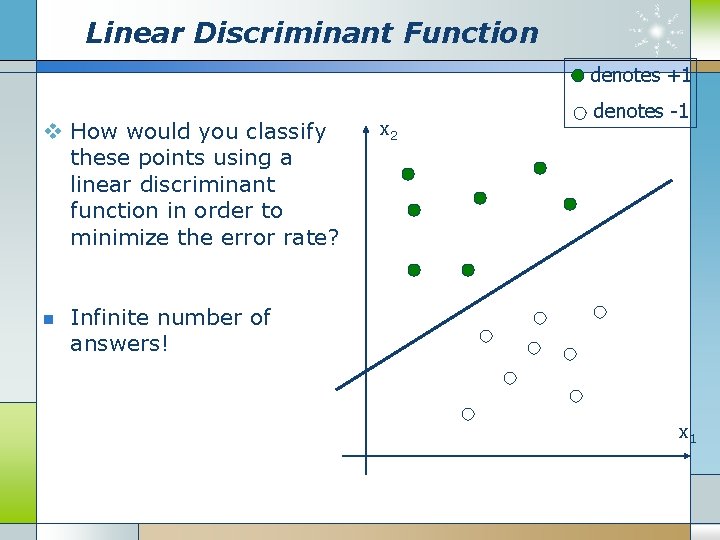

Linear Discriminant Function

- How would you classify these points using a linear discriminant function in order to minimize the error rate?
  - Infinite number of answers!

(Figure: two classes of points, labeled +1 and -1, in the $x_1$-$x_2$ plane.)

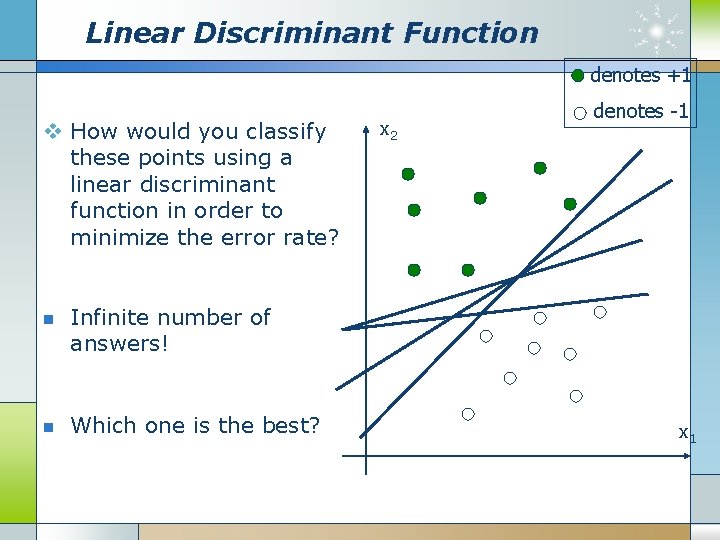

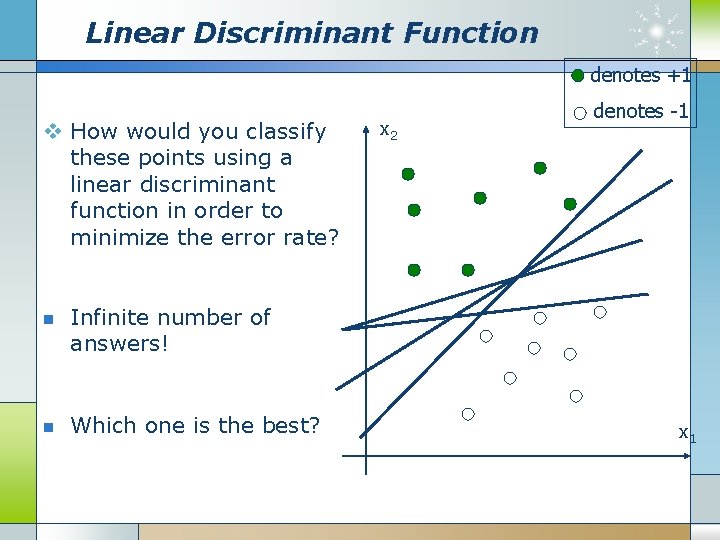

Linear Discriminant Function

- How would you classify these points using a linear discriminant function in order to minimize the error rate?
  - Infinite number of answers!
  - Which one is the best?

(Figure: two classes of points, labeled +1 and -1, in the $x_1$-$x_2$ plane.)

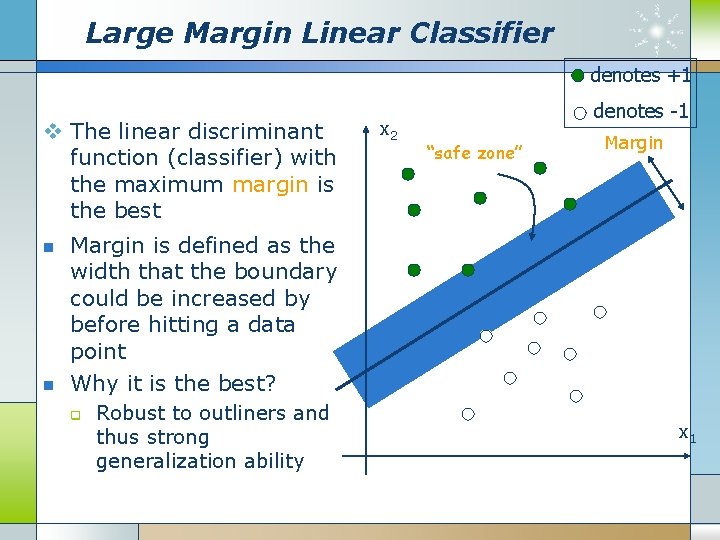

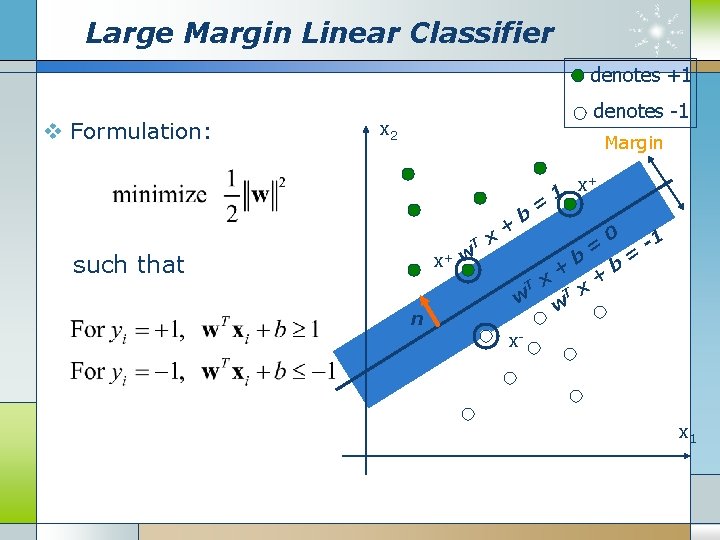

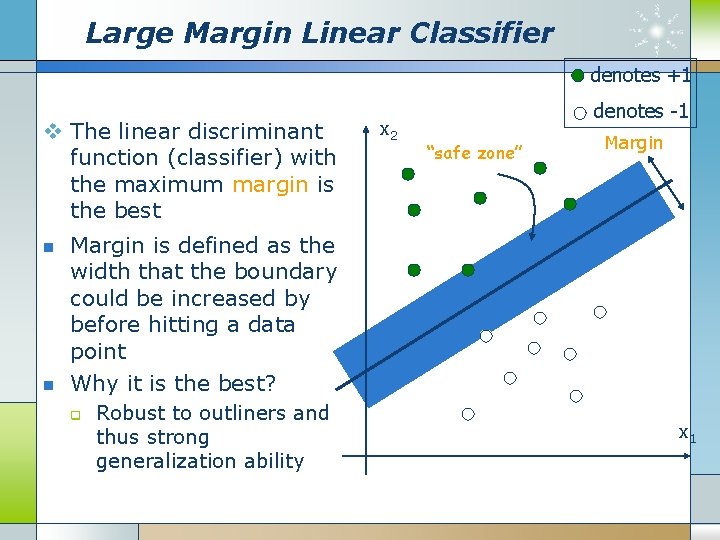

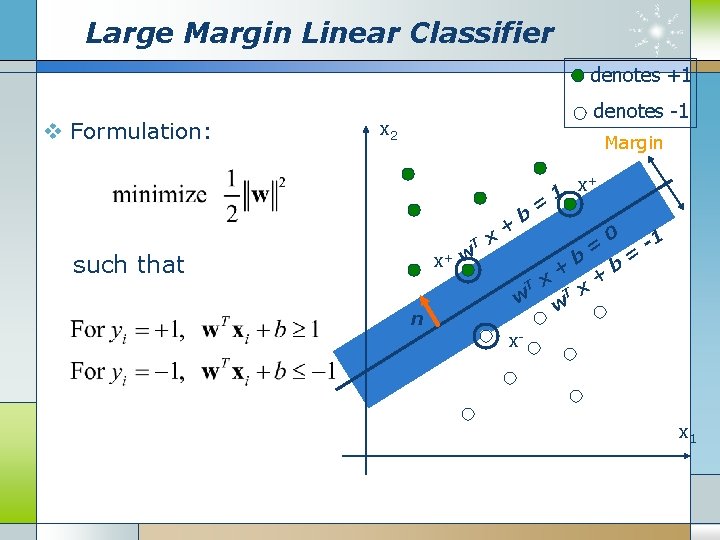

Large Margin Linear Classifier

- The linear discriminant function (classifier) with the maximum margin is the best.
  - The margin is defined as the width by which the boundary could be increased before hitting a data point (the "safe zone").
  - Why is it the best? It is robust to outliers and thus has strong generalization ability.

(Figure: two classes of points, labeled +1 and -1, in the $x_1$-$x_2$ plane, with the margin drawn around the boundary.)

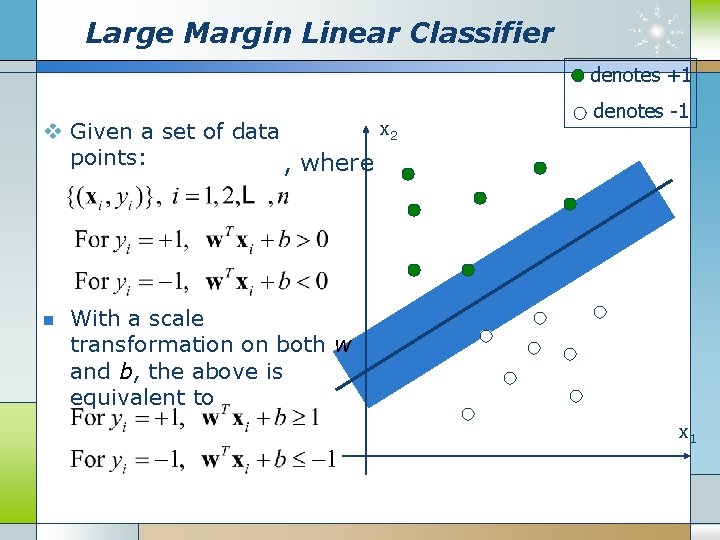

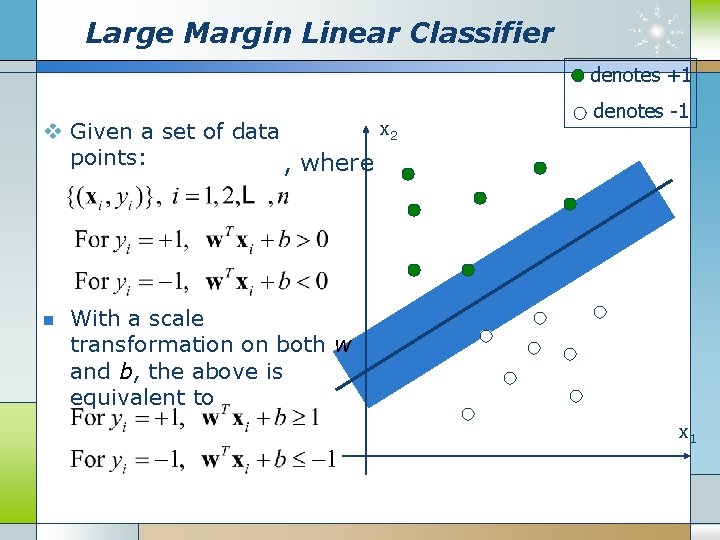

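Large Margin Linear Classifier

- Given a set of data points $\{(x_i, y_i)\}$, $i = 1, \dots, n$, where
  - for $y_i = +1$: $w^T x_i + b > 0$
  - for $y_i = -1$: $w^T x_i + b < 0$
- With a scale transformation on both $w$ and $b$, the above is equivalent to
  - for $y_i = +1$: $w^T x_i + b \geq 1$
  - for $y_i = -1$: $w^T x_i + b \leq -1$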

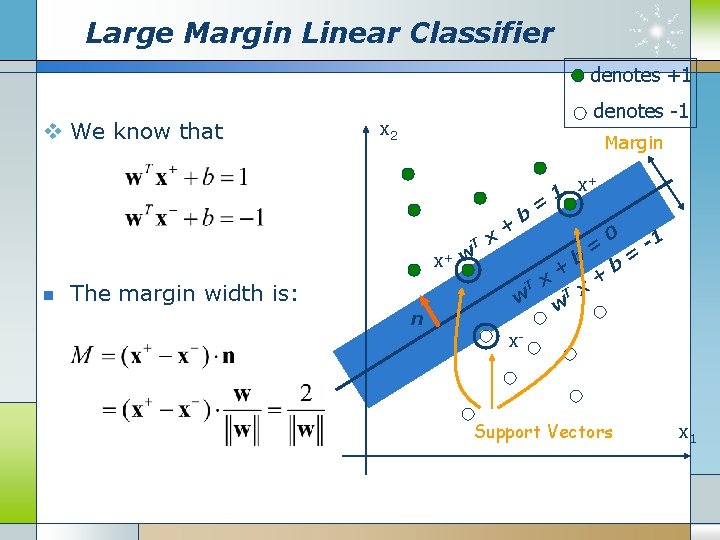

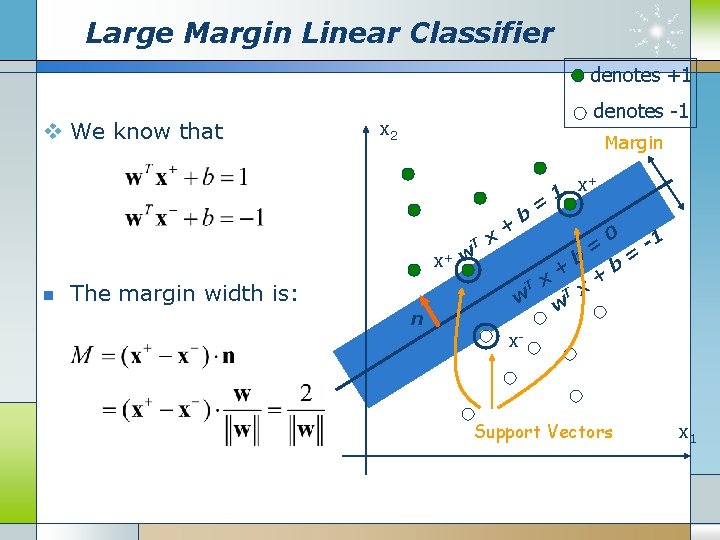

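Large Margin Linear Classifier

- We know that $w^T x^+ + b = 1$ and $w^T x^- + b = -1$, where $x^+$ and $x^-$ are support vectors lying on the two margin boundaries.
- The margin width is: $M = (x^+ - x^-) \cdot n = (x^+ - x^-) \cdot \dfrac{w}{\lVert w \rVert} = \dfrac{2}{\lVert w \rVert}$

(Figure: the hyperplanes $w^T x + b = 1$, $0$, and $-1$, the margin between them, and the support vectors $x^+$ and $x^-$.)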
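A short numerical check of the margin formula, using a hypothetical hyperplane with $w = (0.5, 0.5)$ and $b = -1$ and two hypothetical support vectors, $(2, 2)$ on the positive side and $(0, 0)$ on the negative side:

```python
import math

def discriminant(w, b, x):
    """Linear discriminant g(x) = w^T x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def margin_width(w):
    """Margin width 2 / ||w|| between the planes g(x) = +1 and g(x) = -1."""
    return 2.0 / math.sqrt(sum(wi * wi for wi in w))

# Illustrative hyperplane and support vectors (not from the slides).
w, b = (0.5, 0.5), -1.0
x_plus, x_minus = (2.0, 2.0), (0.0, 0.0)
width = margin_width(w)
```

Here `discriminant` returns +1 and -1 at the two support vectors, and the width equals the Euclidean distance between them, because in this example both lie along the direction of `w`.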

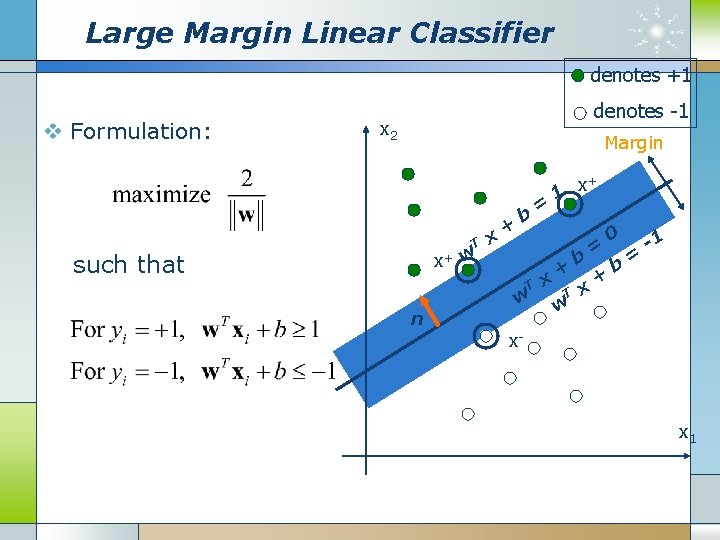

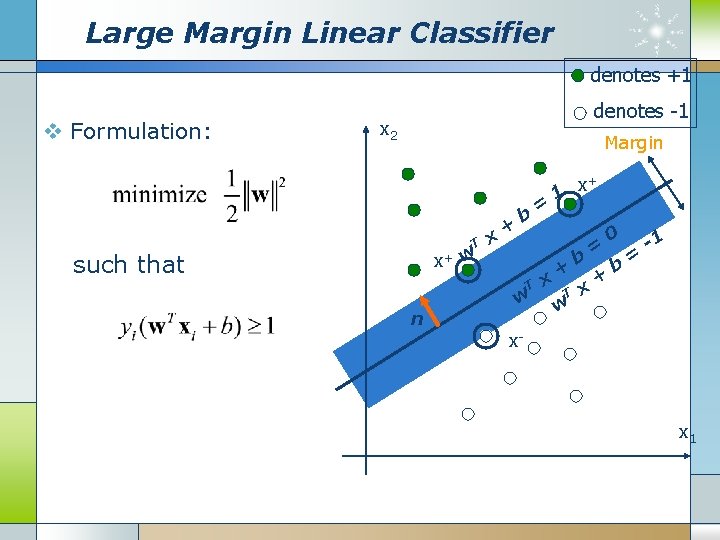

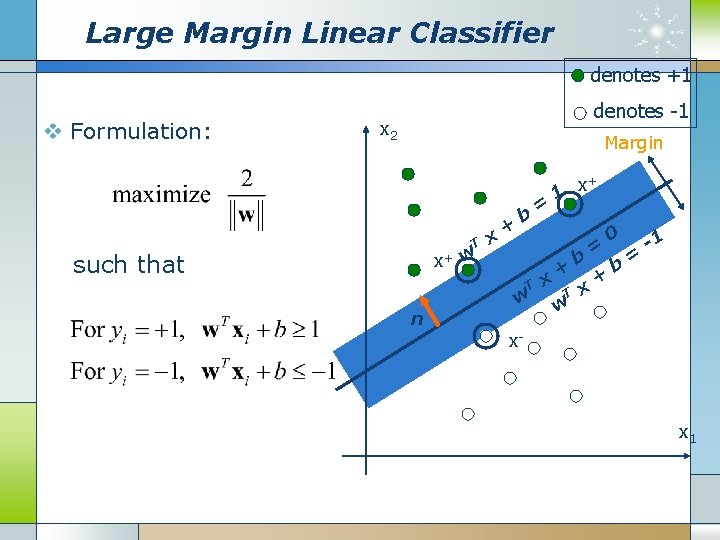

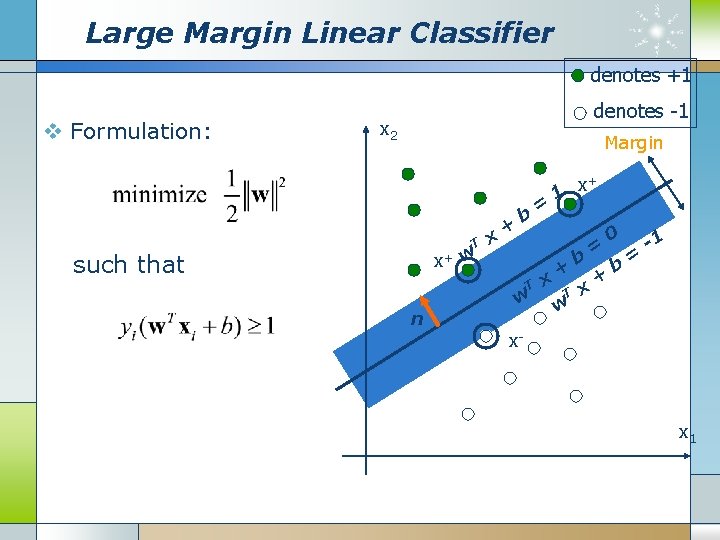

Large Margin Linear Classifier

- Formulation: maximize $\dfrac{2}{\lVert w \rVert}$ such that
  - for $y_i = +1$: $w^T x_i + b \geq 1$
  - for $y_i = -1$: $w^T x_i + b \leq -1$
- Equivalently: minimize $\dfrac{1}{2}\lVert w \rVert^2$ such that $y_i (w^T x_i + b) \geq 1$

(Figure: the hyperplanes $w^T x + b = 1$, $0$, and $-1$, with the margin between them.)

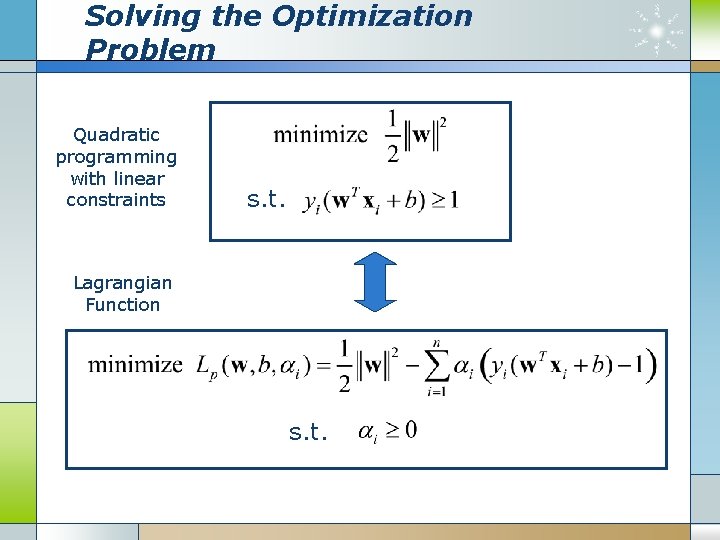

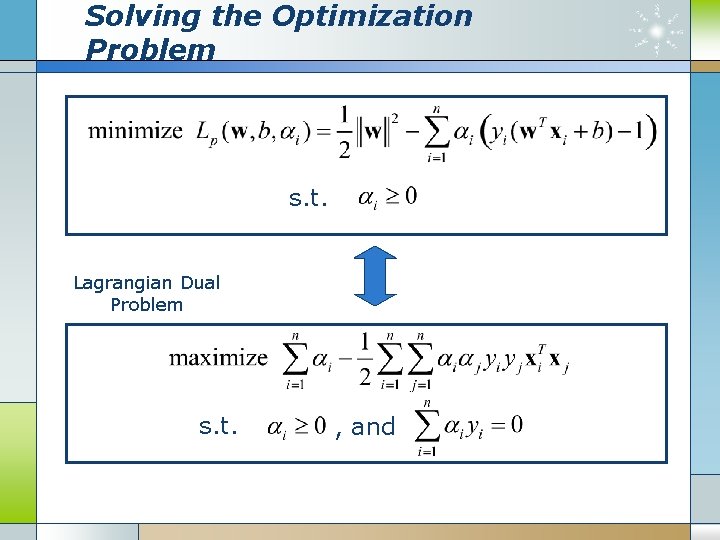

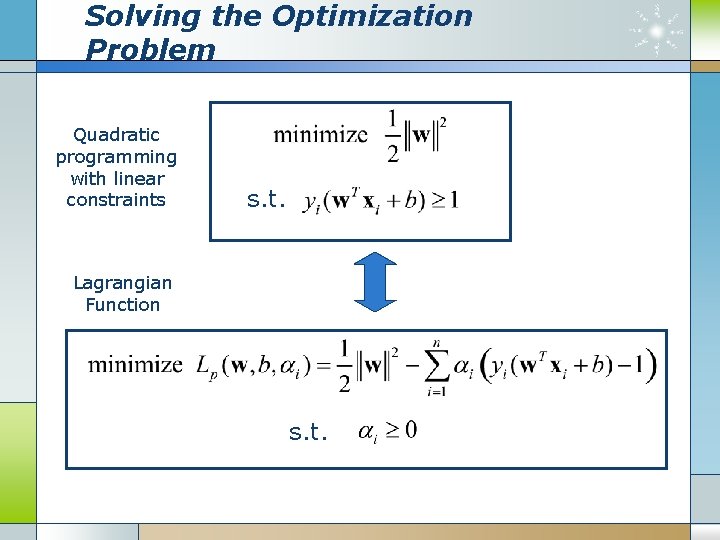

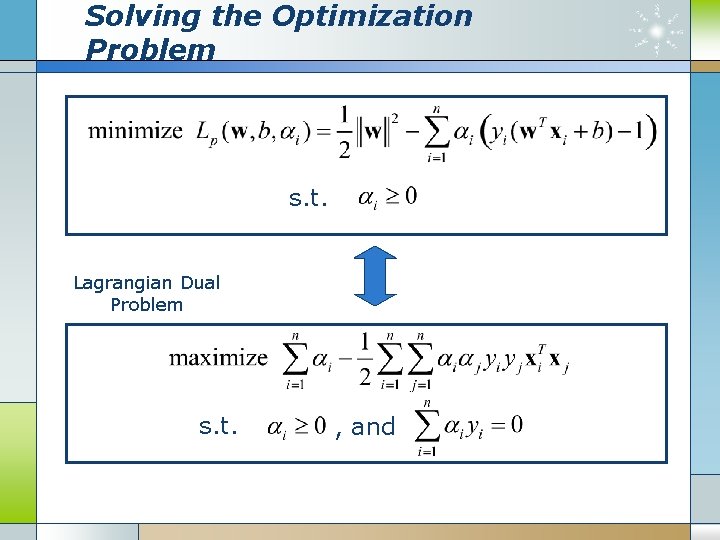

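Solving the Optimization Problem

- Quadratic programming with linear constraints: minimize $\dfrac{1}{2}\lVert w \rVert^2$ s.t. $y_i (w^T x_i + b) \geq 1$
- Lagrangian function: minimize $L_p(w, b, \alpha_i) = \dfrac{1}{2}\lVert w \rVert^2 - \sum_{i=1}^{n} \alpha_i \left( y_i (w^T x_i + b) - 1 \right)$ s.t. $\alpha_i \geq 0$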

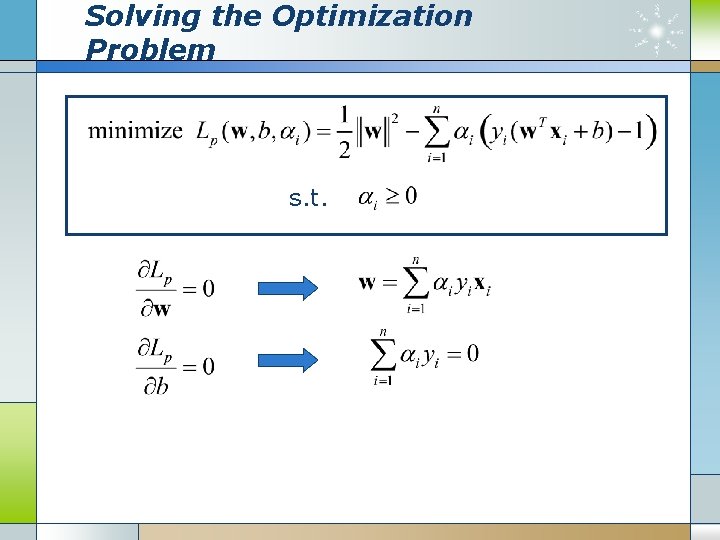

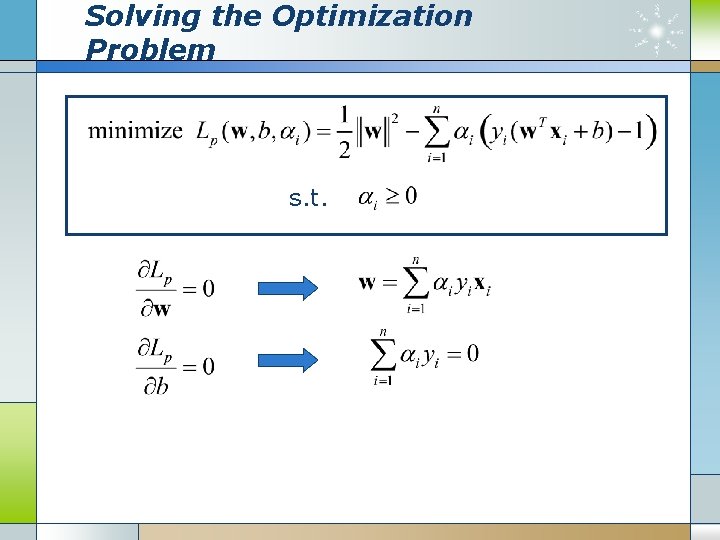

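Solving the Optimization Problem

- Minimize $L_p(w, b, \alpha_i) = \dfrac{1}{2}\lVert w \rVert^2 - \sum_{i=1}^{n} \alpha_i \left( y_i (w^T x_i + b) - 1 \right)$ s.t. $\alpha_i \geq 0$
  - $\partial L_p / \partial w = 0 \Rightarrow w = \sum_{i=1}^{n} \alpha_i y_i x_i$
  - $\partial L_p / \partial b = 0 \Rightarrow \sum_{i=1}^{n} \alpha_i y_i = 0$
- Lagrangian dual problem: maximize $\sum_{i=1}^{n} \alpha_i - \dfrac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n} \alpha_i \alpha_j y_i y_j x_i^T x_j$ s.t. $\alpha_i \geq 0$, and $\sum_{i=1}^{n} \alpha_i y_i = 0$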

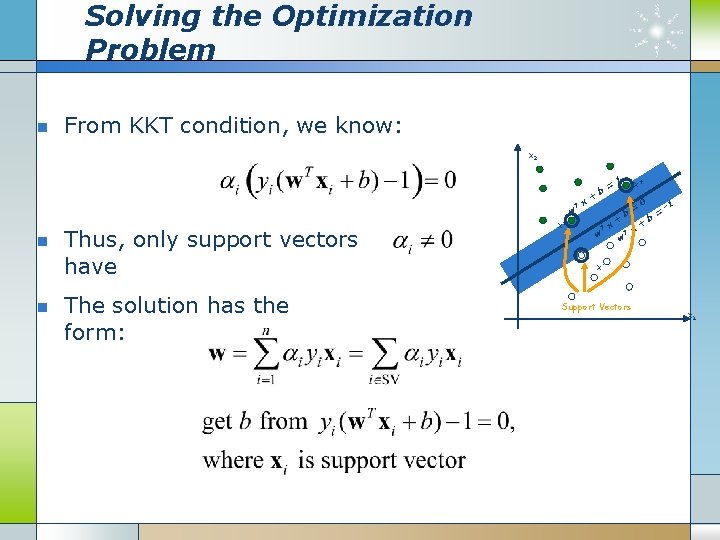

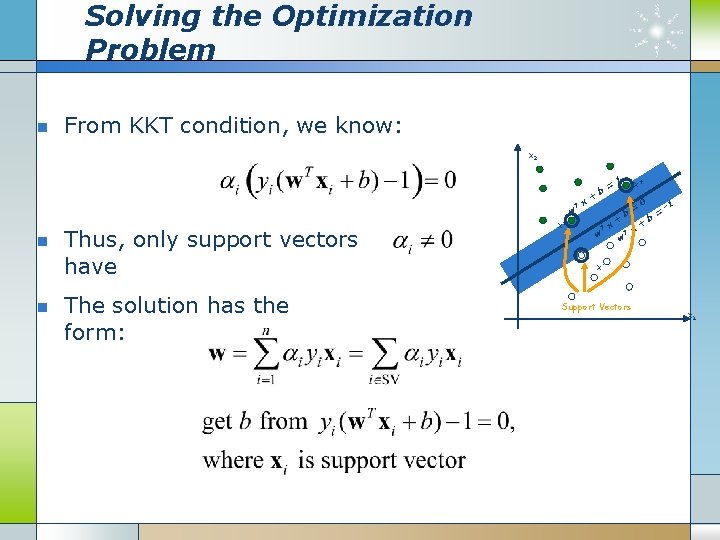

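Solving the Optimization Problem

- From the KKT condition, we know: $\alpha_i \left( y_i (w^T x_i + b) - 1 \right) = 0$
- Thus, only support vectors have $\alpha_i \neq 0$.
- The solution has the form: $w = \sum_{i=1}^{n} \alpha_i y_i x_i = \sum_{i \in SV} \alpha_i y_i x_i$, and $b$ is obtained from $y_i (w^T x_i + b) - 1 = 0$, where $x_i$ is a support vector.

(Figure: the hyperplanes $w^T x + b = 1$, $0$, and $-1$, with the support vectors on the margin boundaries.)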

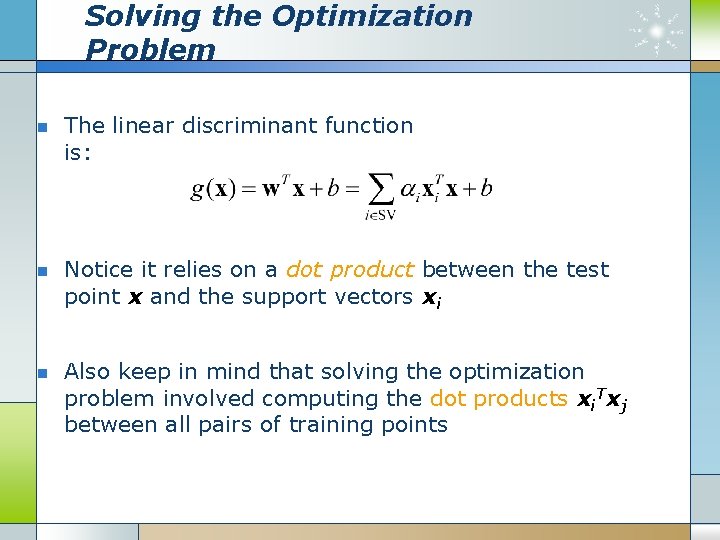

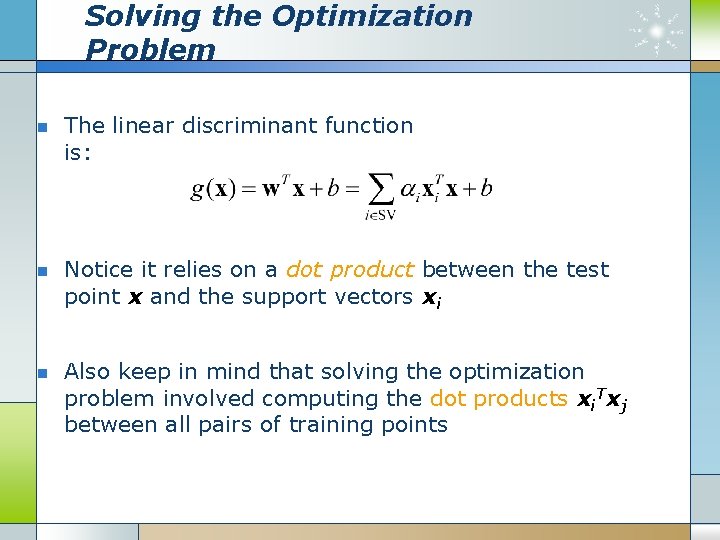

Solving the Optimization Problem

- The linear discriminant function is: $g(x) = w^T x + b = \sum_{i \in SV} \alpha_i y_i x_i^T x + b$
- Notice that it relies on a dot product between the test point $x$ and the support vectors $x_i$.
- Also keep in mind that solving the optimization problem involved computing the dot products $x_i^T x_j$ between all pairs of training points.
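The support-vector form of the discriminant can be checked on a two-point toy problem. The support vectors, multipliers, and bias below are hypothetical values chosen so that the KKT conditions hold ($\alpha_1 = \alpha_2 = 0.25$, $b = -1$); they are not taken from the slides.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

# (support vector, label y_i, multiplier alpha_i): illustrative values.
SUPPORT_VECTORS = [((2.0, 2.0), +1, 0.25), ((0.0, 0.0), -1, 0.25)]
BIAS = -1.0

def g(x):
    """g(x) = sum over support vectors of alpha_i * y_i * x_i^T x, plus b."""
    return sum(alpha * y * dot(sv, x) for sv, y, alpha in SUPPORT_VECTORS) + BIAS

# Recover w = sum_i alpha_i y_i x_i and check the dual constraint sum_i alpha_i y_i = 0.
w_from_dual = tuple(sum(alpha * y * sv[d] for sv, y, alpha in SUPPORT_VECTORS)
                    for d in range(2))
dual_constraint = sum(alpha * y for _, y, alpha in SUPPORT_VECTORS)
```

Only dot products with the support vectors appear in `g`, which is what later allows the dot product to be replaced by a kernel.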

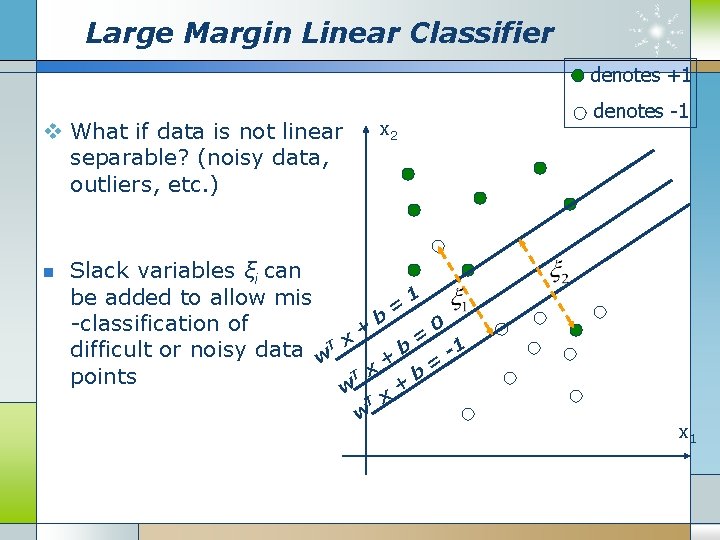

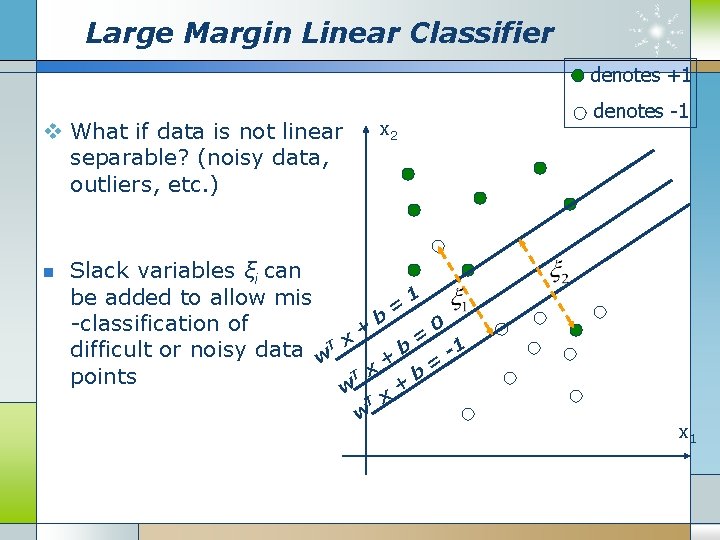

Large Margin Linear Classifier

- What if the data is not linearly separable (noisy data, outliers, etc.)?
  - Slack variables $\xi_i$ can be added to allow misclassification of difficult or noisy data points.

(Figure: two classes of points, labeled +1 and -1, with the hyperplanes $w^T x + b = 1$, $0$, and $-1$ and some points inside the margin.)

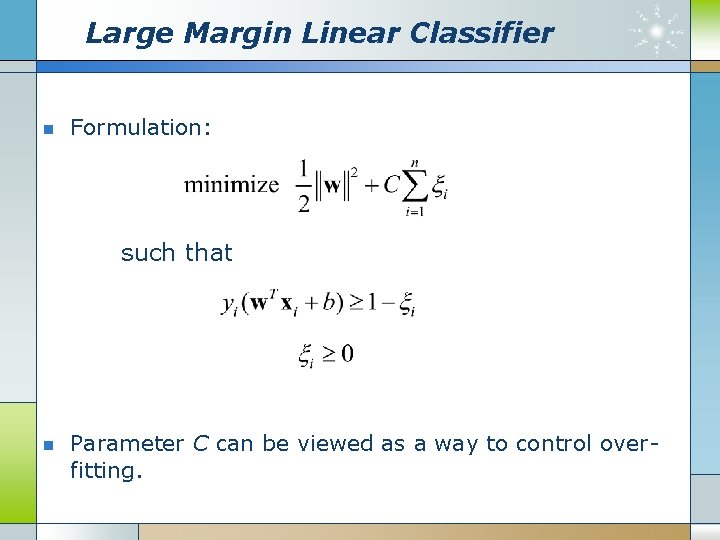

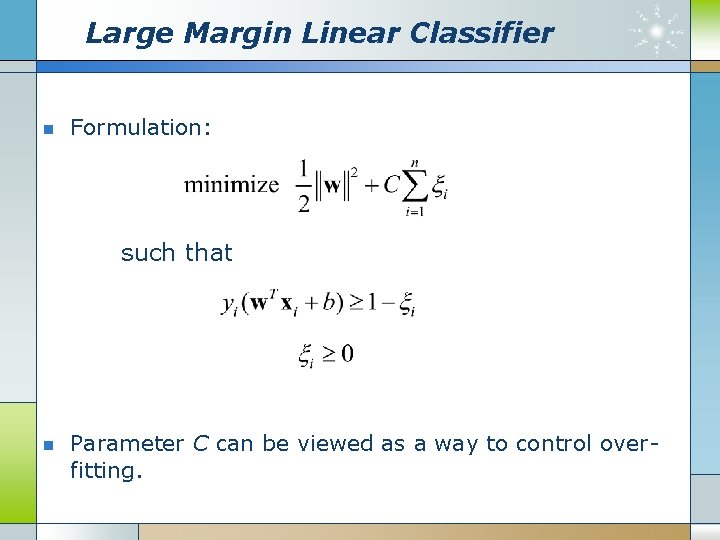

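Large Margin Linear Classifier

- Formulation: minimize $\dfrac{1}{2}\lVert w \rVert^2 + C \sum_{i=1}^{n} \xi_i$ such that $y_i (w^T x_i + b) \geq 1 - \xi_i$ and $\xi_i \geq 0$
- Parameter $C$ can be viewed as a way to control overfitting.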

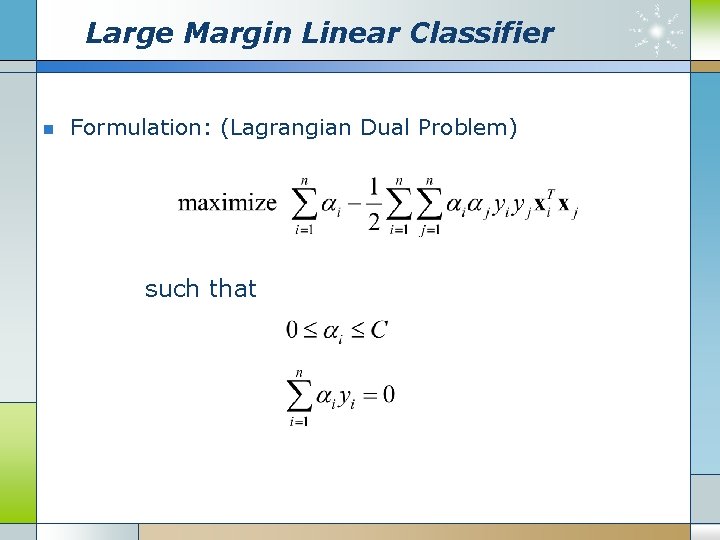

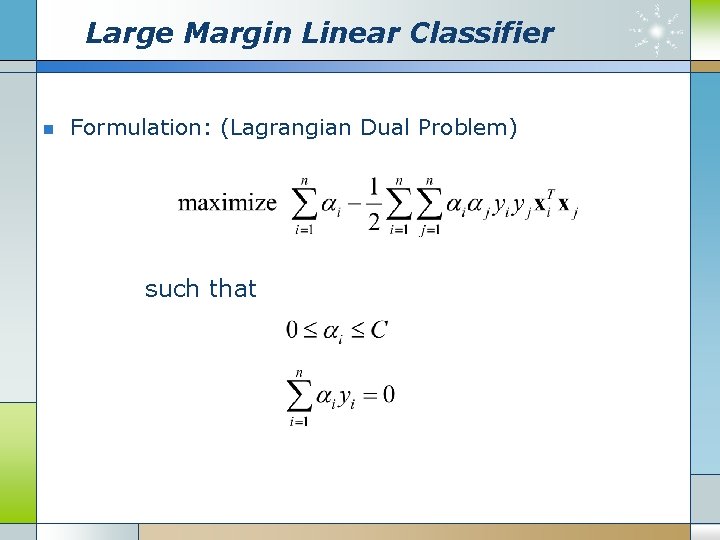

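Large Margin Linear Classifier

- Formulation (Lagrangian dual problem): maximize $\sum_{i=1}^{n} \alpha_i - \dfrac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n} \alpha_i \alpha_j y_i y_j x_i^T x_j$ such that $0 \leq \alpha_i \leq C$ and $\sum_{i=1}^{n} \alpha_i y_i = 0$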

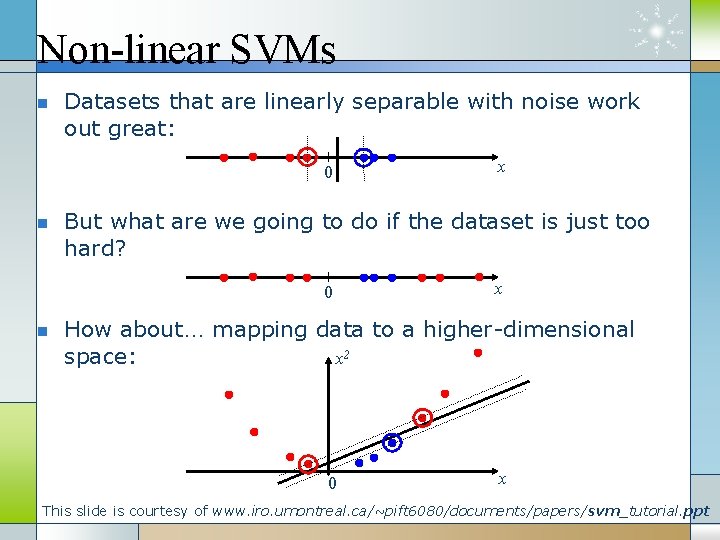

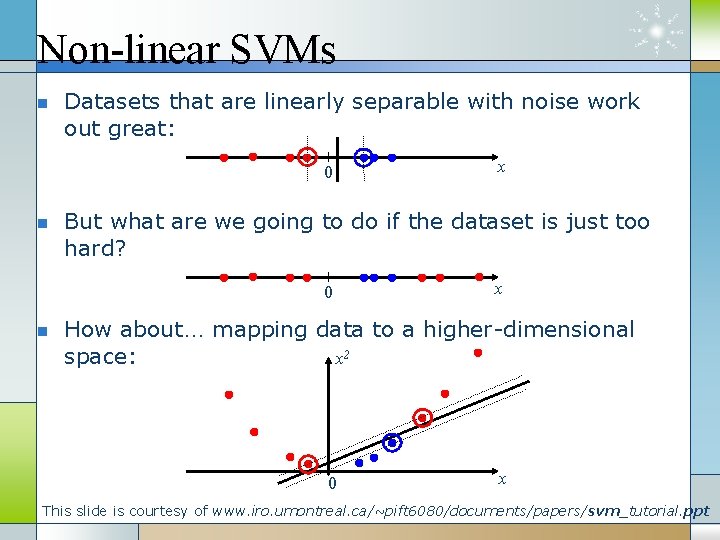

Non-linear SVMs

- Datasets that are linearly separable with noise work out great.
- But what are we going to do if the dataset is just too hard?
- How about mapping the data to a higher-dimensional space?

(Figure: one-dimensional points on the $x$ axis, then the same points mapped to the $(x, x^2)$ plane.)

This slide is courtesy of www.iro.umontreal.ca/~pift6080/documents/papers/svm_tutorial.ppt

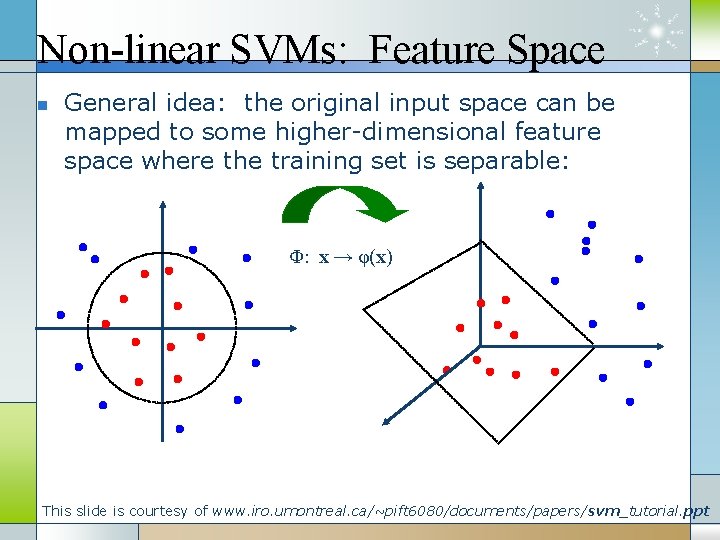

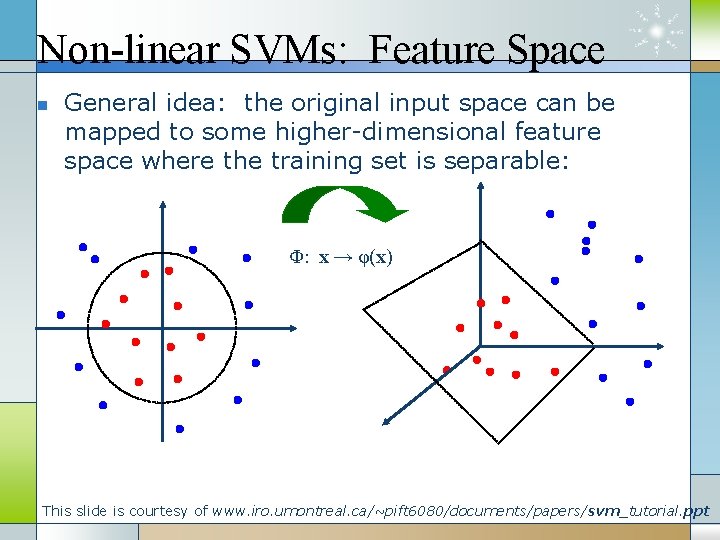

Non-linear SVMs: Feature Space

- General idea: the original input space can be mapped to some higher-dimensional feature space where the training set is separable: $\Phi: x \to \varphi(x)$

This slide is courtesy of www.iro.umontreal.ca/~pift6080/documents/papers/svm_tutorial.ppt

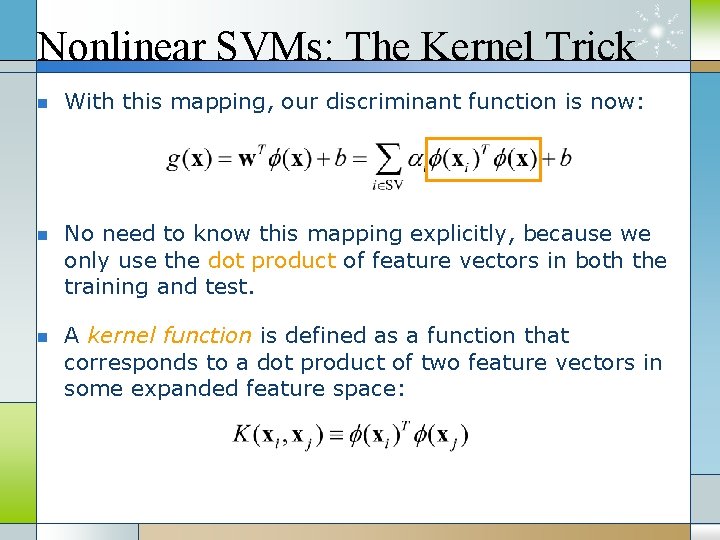

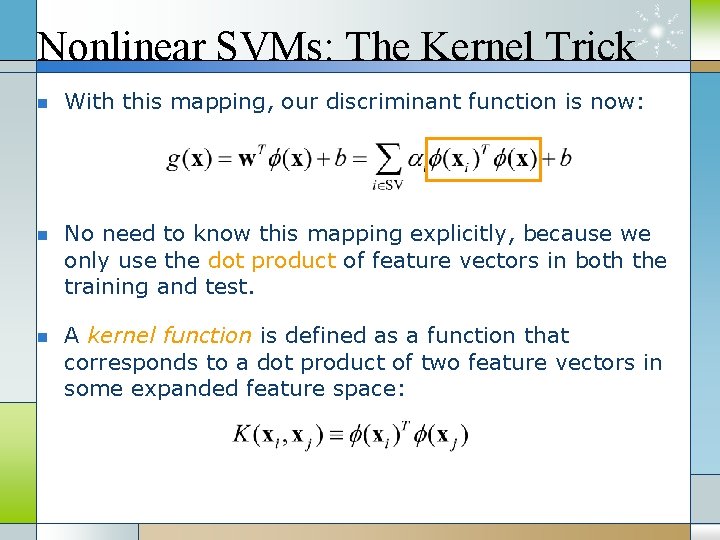

Nonlinear SVMs: The Kernel Trick

- With this mapping, our discriminant function is now: $g(x) = w^T \varphi(x) + b = \sum_{i \in SV} \alpha_i y_i \varphi(x_i)^T \varphi(x) + b$
- There is no need to know this mapping explicitly, because we only use the dot product of feature vectors in both training and testing.
- A kernel function is defined as a function that corresponds to a dot product of two feature vectors in some expanded feature space: $K(x_i, x_j) \equiv \varphi(x_i)^T \varphi(x_j)$

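Nonlinear SVMs: The Kernel Trick

- An example: 2-dimensional vectors $x = [x_1 \; x_2]$; let $K(x_i, x_j) = (1 + x_i^T x_j)^2$. We need to show that $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$:

$$
\begin{aligned}
K(x_i, x_j) &= (1 + x_i^T x_j)^2 \\
&= 1 + x_{i1}^2 x_{j1}^2 + 2 x_{i1} x_{j1} x_{i2} x_{j2} + x_{i2}^2 x_{j2}^2 + 2 x_{i1} x_{j1} + 2 x_{i2} x_{j2} \\
&= [1 \;\; x_{i1}^2 \;\; \sqrt{2}\, x_{i1} x_{i2} \;\; x_{i2}^2 \;\; \sqrt{2}\, x_{i1} \;\; \sqrt{2}\, x_{i2}]^T \, [1 \;\; x_{j1}^2 \;\; \sqrt{2}\, x_{j1} x_{j2} \;\; x_{j2}^2 \;\; \sqrt{2}\, x_{j1} \;\; \sqrt{2}\, x_{j2}] \\
&= \varphi(x_i)^T \varphi(x_j),
\end{aligned}
$$

where $\varphi(x) = [1 \;\; x_1^2 \;\; \sqrt{2}\, x_1 x_2 \;\; x_2^2 \;\; \sqrt{2}\, x_1 \;\; \sqrt{2}\, x_2]$.

This slide is courtesy of www.iro.umontreal.ca/~pift6080/documents/papers/svm_tutorial.ppt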
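The identity in this example is easy to confirm numerically. The sketch below evaluates the kernel directly in the 2-D input space and again through the explicit 6-D mapping; the two test vectors are arbitrary.

```python
import math

def poly2_kernel(u, v):
    """K(u, v) = (1 + u^T v)^2 for 2-dimensional inputs."""
    return (1 + u[0] * v[0] + u[1] * v[1]) ** 2

def phi(x):
    """Explicit feature map matching poly2_kernel."""
    x1, x2 = x
    r2 = math.sqrt(2)
    return [1.0, x1 * x1, r2 * x1 * x2, x2 * x2, r2 * x1, r2 * x2]

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

u, v = (1.0, 2.0), (3.0, -1.0)
k_direct = poly2_kernel(u, v)      # computed in the input space
k_mapped = dot(phi(u), phi(v))     # computed in the expanded feature space
```

Both values agree up to floating-point rounding, while the direct form needs only one 2-D dot product.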

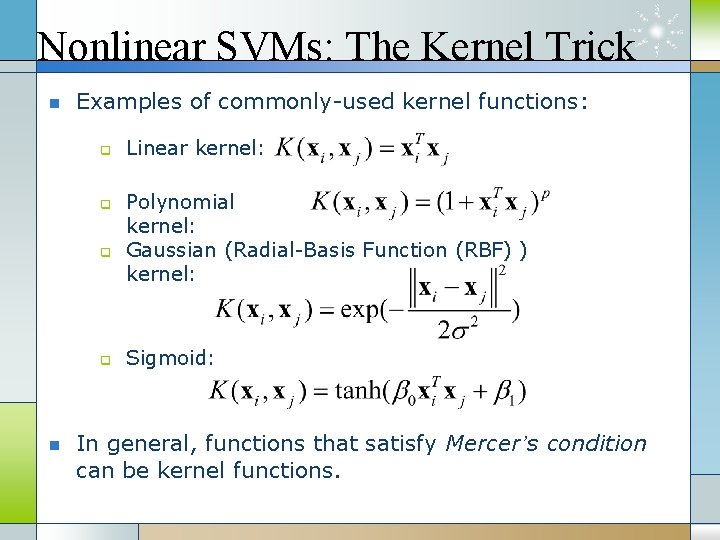

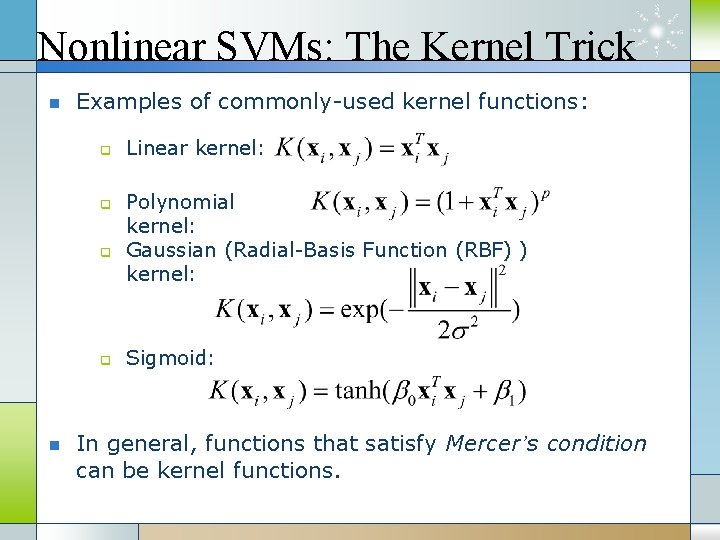

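Nonlinear SVMs: The Kernel Trick

- Examples of commonly used kernel functions:
  - Linear kernel: $K(x_i, x_j) = x_i^T x_j$
  - Polynomial kernel: $K(x_i, x_j) = (1 + x_i^T x_j)^p$
  - Gaussian (radial basis function, RBF) kernel: $K(x_i, x_j) = \exp\left( -\dfrac{\lVert x_i - x_j \rVert^2}{2\sigma^2} \right)$
  - Sigmoid: $K(x_i, x_j) = \tanh(\beta_0 x_i^T x_j + \beta_1)$
- In general, functions that satisfy Mercer's condition can be kernel functions.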
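A minimal sketch of these kernels and of the Gram matrix an SVM solver would consume. The parameter names (`p`, `sigma`, `beta0`, `beta1`) and default values are assumptions for illustration.

```python
import math

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def linear_kernel(u, v):
    return dot(u, v)

def polynomial_kernel(u, v, p=2):
    return (1 + dot(u, v)) ** p

def rbf_kernel(u, v, sigma=1.0):
    sq_dist = sum((ui - vi) ** 2 for ui, vi in zip(u, v))
    return math.exp(-sq_dist / (2 * sigma ** 2))

def sigmoid_kernel(u, v, beta0=1.0, beta1=0.0):
    return math.tanh(beta0 * dot(u, v) + beta1)

def gram_matrix(points, kernel):
    """K[i][j] = kernel(points[i], points[j]) for all pairs of training points."""
    return [[kernel(a, b) for b in points] for a in points]

points = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)]
K = gram_matrix(points, rbf_kernel)
```

The sigmoid kernel satisfies Mercer's condition only for some parameter choices, so it is normally used with care.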

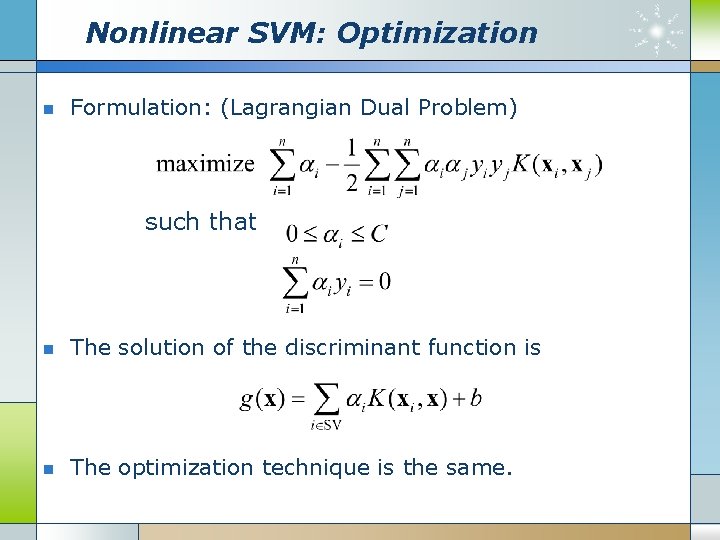

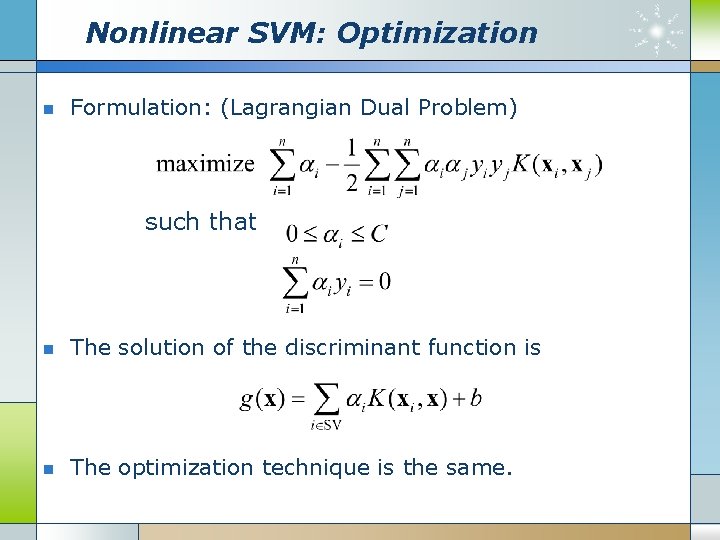

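Nonlinear SVM: Optimization

- Formulation (Lagrangian dual problem): maximize $\sum_{i=1}^{n} \alpha_i - \dfrac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n} \alpha_i \alpha_j y_i y_j K(x_i, x_j)$ such that $0 \leq \alpha_i \leq C$ and $\sum_{i=1}^{n} \alpha_i y_i = 0$
- The solution of the discriminant function is $g(x) = \sum_{i \in SV} \alpha_i y_i K(x_i, x) + b$
- The optimization technique is the same.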

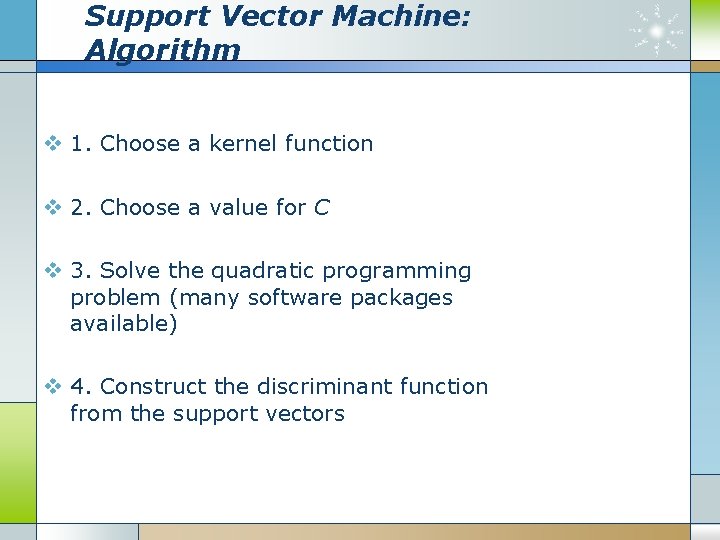

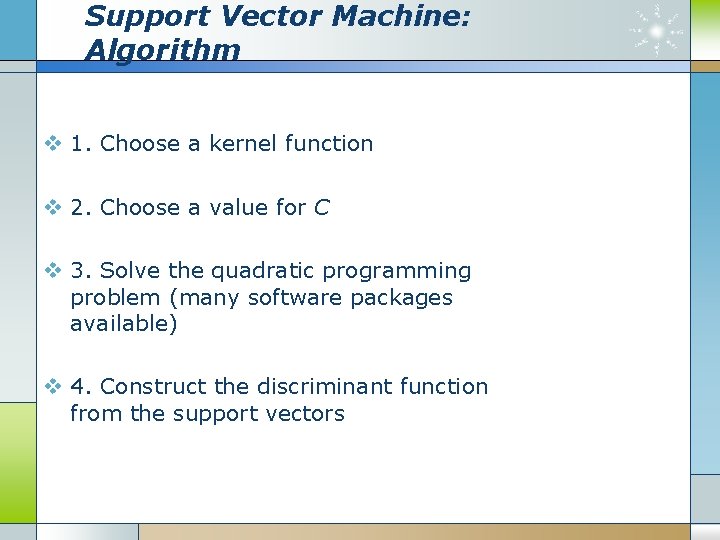

Support Vector Machine: Algorithm

1. Choose a kernel function
2. Choose a value for C
3. Solve the quadratic programming problem (many software packages available)
4. Construct the discriminant function from the support vectors
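For a feel of what training does, the sketch below fits a linear soft-margin SVM on toy data. Note the substitution: instead of solving the dual quadratic program of step 3, it minimizes the equivalent primal objective $\frac{1}{2}\lVert w \rVert^2 + C \sum \max(0, 1 - y_i (w^T x_i + b))$ by plain subgradient descent, which keeps the example dependency-free. The data, learning rate, and epoch count are hypothetical.

```python
def train_linear_svm(xs, ys, c=1.0, lr=0.01, epochs=2000):
    """Linear soft-margin SVM via batch subgradient descent on the primal."""
    dim = len(xs[0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = list(w), 0.0  # gradient of the 0.5 * ||w||^2 term
        for x, y in zip(xs, ys):
            score = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * score < 1:          # margin violated: hinge term is active
                for d in range(dim):
                    grad_w[d] -= c * y * x[d]
                grad_b -= c * y
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def predict(w, b, x):
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1

# Toy linearly separable data set (linear kernel, C = 1).
xs = [(2.0, 2.0), (3.0, 3.0), (2.0, 3.0), (0.0, 0.0), (-1.0, 0.0), (0.0, -1.0)]
ys = [+1, +1, +1, -1, -1, -1]
w_fit, b_fit = train_linear_svm(xs, ys)
```

A QP solver applied to the dual additionally yields the multipliers $\alpha_i$ and therefore the support vectors, which is what makes the kernelized version possible.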

Some Issues

- Choice of kernel
  - A Gaussian or polynomial kernel is the default.
  - If ineffective, more elaborate kernels are needed.
  - Domain experts can give assistance in formulating appropriate similarity measures.
- Choice of kernel parameters
  - e.g. σ in the Gaussian kernel
  - σ is the distance between closest points with different classifications.
  - In the absence of reliable criteria, applications rely on the use of a validation set or cross-validation to set such parameters.
- Optimization criterion: hard margin vs. soft margin
  - A lengthy series of experiments in which various parameters are tested.

This slide is courtesy of www.iro.umontreal.ca/~pift6080/documents/papers/svm_tutorial.ppt

Summary: Support Vector Machine

1. Large margin classifier
   - Better generalization ability and less overfitting
2. The kernel trick
   - Map data points to a higher-dimensional space in order to make them linearly separable.
   - Since only the dot product is used, we do not need to represent the mapping explicitly.

Active Learning Methods

- Samples are chosen so as to maximize the accuracy of the classification process:
  - a. Margin sampling
  - b. Posterior probability sampling
  - c. Query by committee
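Margin sampling, the first strategy, can be sketched in a few lines: the unlabeled samples closest to the current SVM decision boundary are queried first. This assumes a binary classifier whose decision values $g(x)$ are already computed; the values and the function name are hypothetical, and the paper's handling of multiple classes may differ.

```python
def margin_sampling(decision_values, n_queries):
    """Indices of the n_queries unlabeled samples with the smallest |g(x)|."""
    ranked = sorted(range(len(decision_values)),
                    key=lambda i: abs(decision_values[i]))
    return ranked[:n_queries]

# Decision values g(x) of the current SVM on five unlabeled beats (illustrative).
unlabeled_scores = [2.1, -0.1, 0.8, -1.5, 0.05]
to_label = margin_sampling(unlabeled_scores, n_queries=2)
```

The selected samples are labeled by an expert, added to the training set, and the SVM is retrained before the next round.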

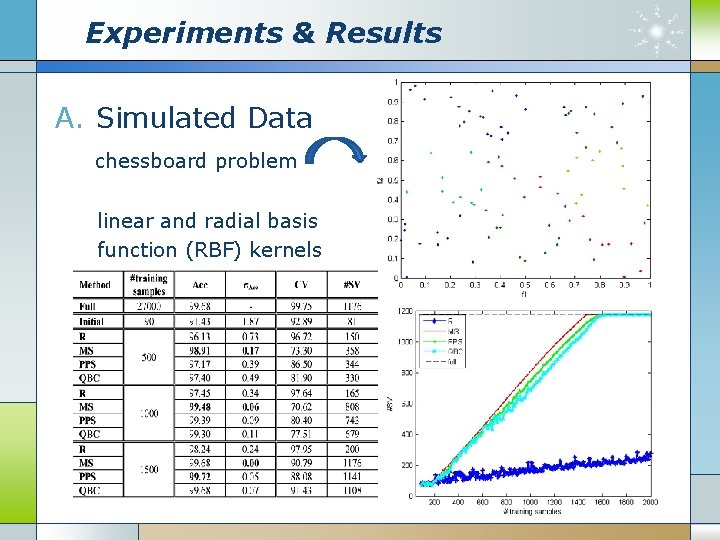

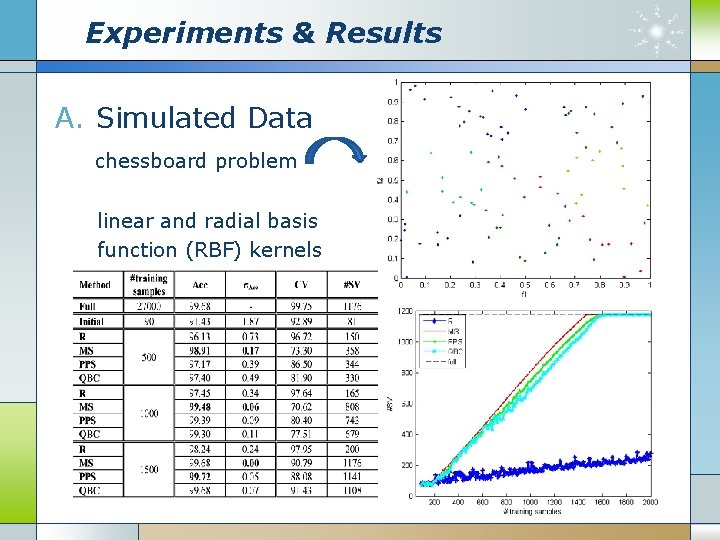

Experiments & Results: A. Simulated Data

- Chessboard problem
- Linear and radial basis function (RBF) kernels

Experiments & Results: B. Real Data

- MIT-BIH data
- Features: morphology and three ECG temporal features

Conclusion

- Three active learning strategies for the SVM classification of electrocardiogram (ECG) signals have been presented.
- The strategy based on the margin sampling (MS) principle seems the best, as it quickly selects the most informative samples.
- A further increase in accuracy could be achieved by feeding the classifier with other kinds of features.

Case (5): Depolarization Changes During Acute Myocardial Ischemia by Evaluation of QRS Slopes: Standard Lead and Vectorial Approach. IEEE Transactions on Biomedical Engineering, vol. 58, no. 1, January 2011.

Content

- Introduction
- Materials and Methods
- Results
- Conclusion

Introduction

- Early diagnosis of patients with acute myocardial ischemia is essential to optimize treatment.
- Variability of the QRS slopes
- Aims:
  - Evaluate the normal variation of the QRS slopes
  - Better quantification of pathophysiologically significant changes

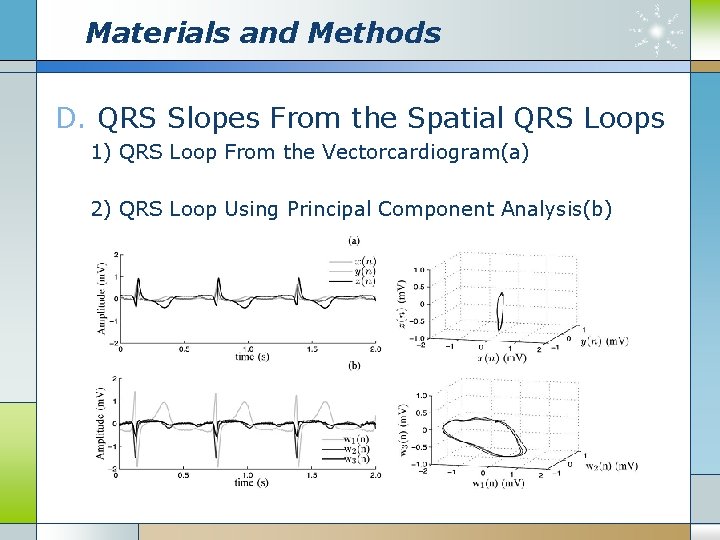

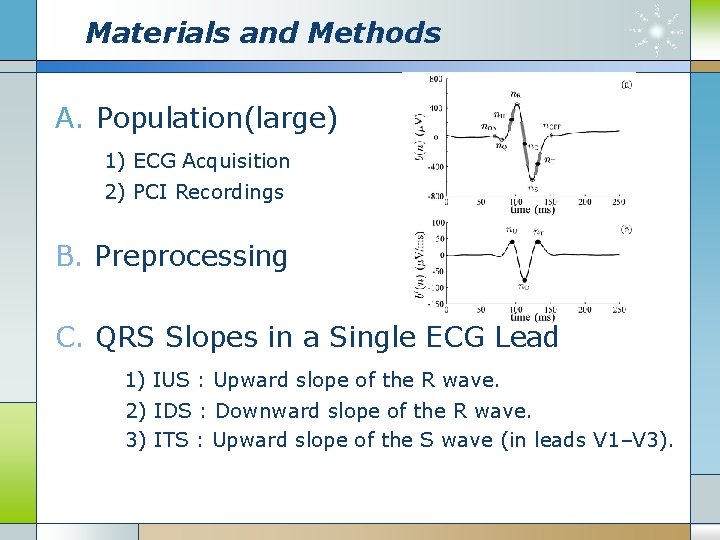

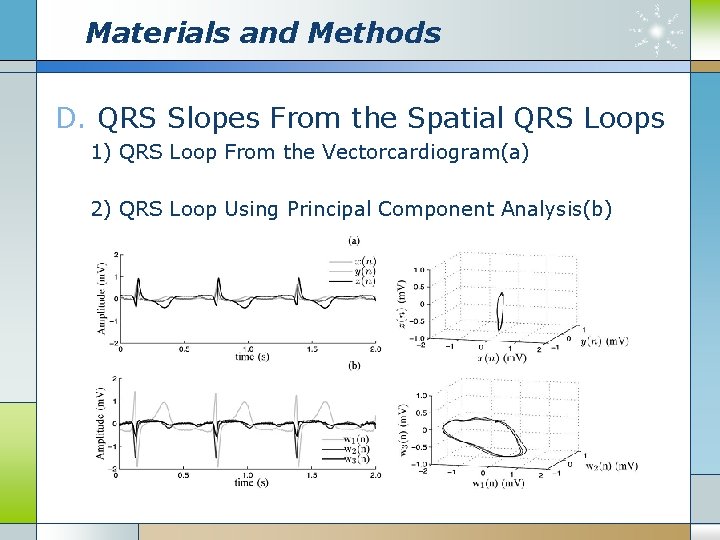

Materials and Methods

A. Population (large)

1. ECG Acquisition
2. PCI Recordings

B. Preprocessing

C. QRS Slopes in a Single ECG Lead

1. IUS: upward slope of the R wave
2. IDS: downward slope of the R wave
3. ITS: upward slope of the S wave (in leads V1-V3)

Materials and Methods

D. QRS Slopes From the Spatial QRS Loops

1. QRS loop from the vectorcardiogram (a)
2. QRS loop using principal component analysis (b)

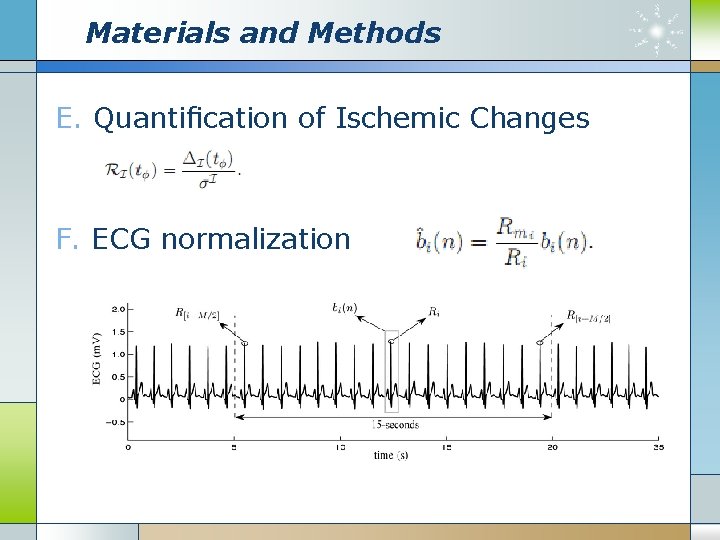

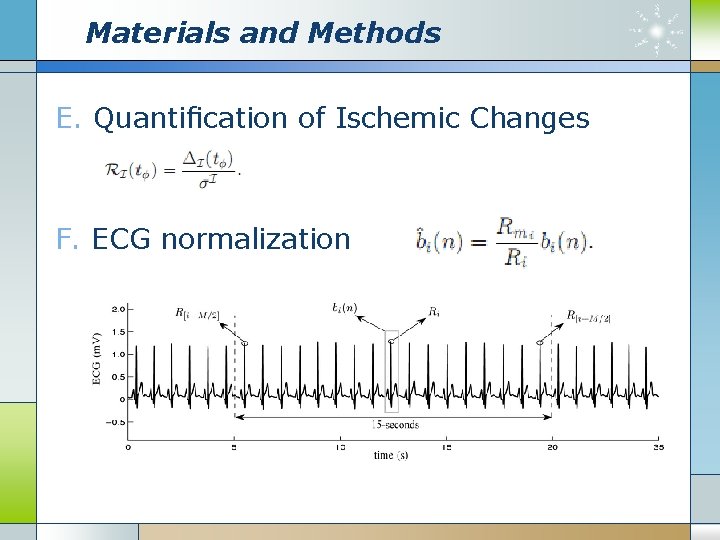

Materials and Methods

E. Quantification of Ischemic Changes

F. ECG Normalization

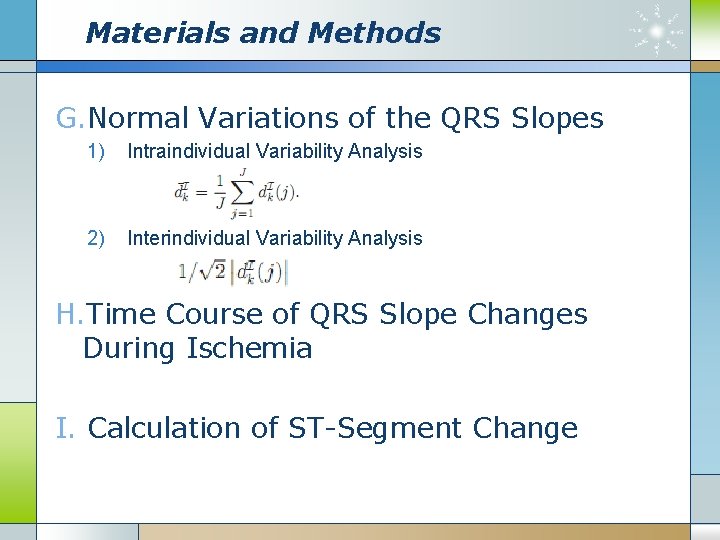

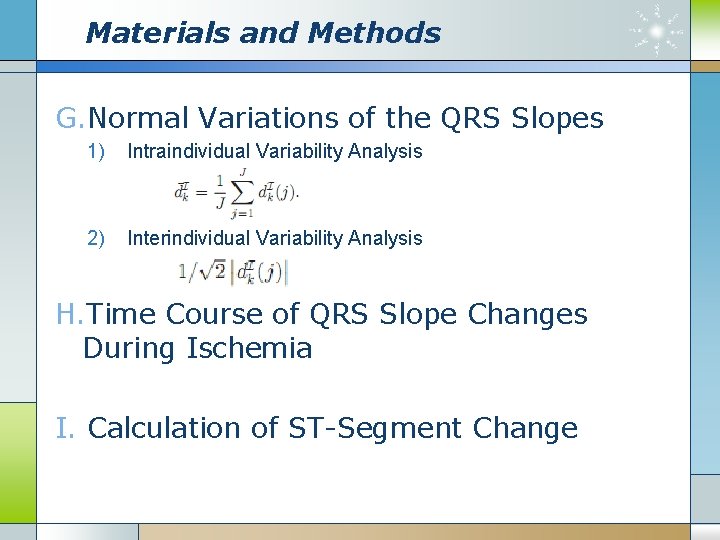

Materials and Methods

G. Normal Variations of the QRS Slopes

1. Intraindividual Variability Analysis
2. Interindividual Variability Analysis

H. Time Course of QRS Slope Changes During Ischemia

I. Calculation of ST-Segment Change

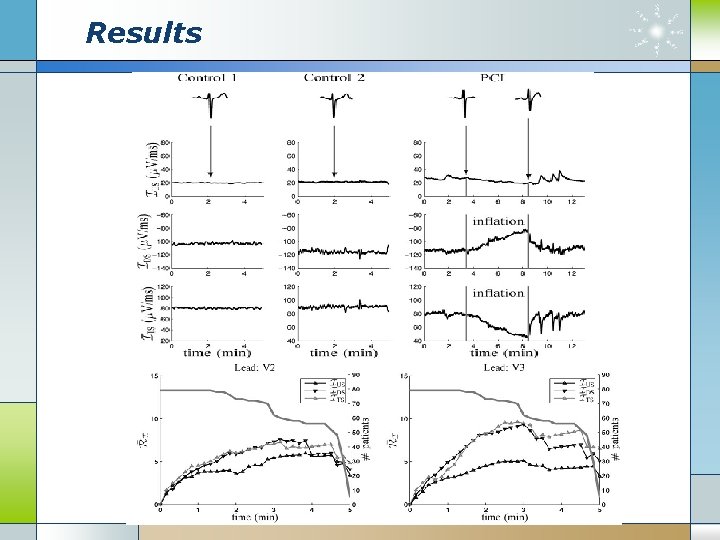

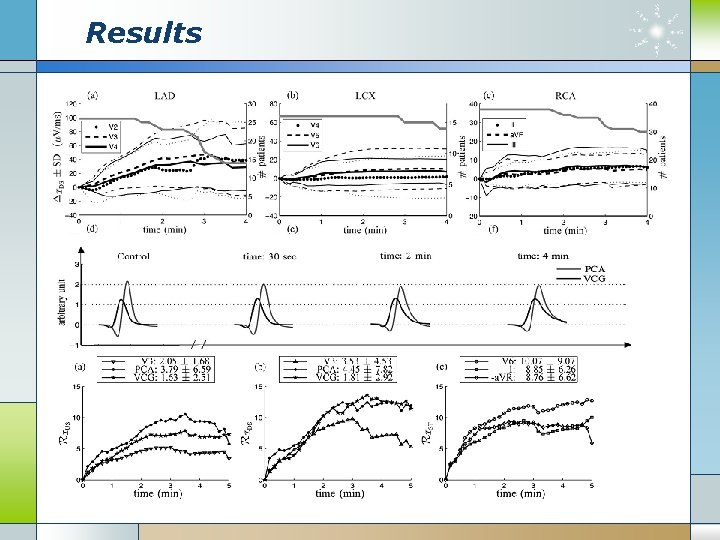

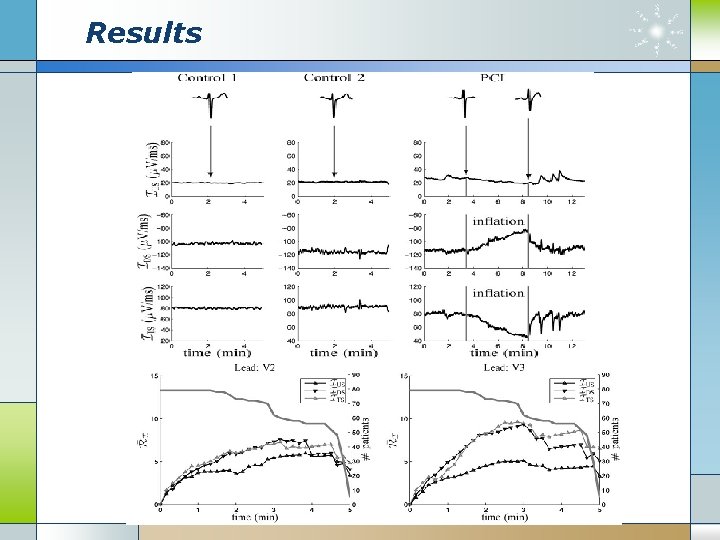

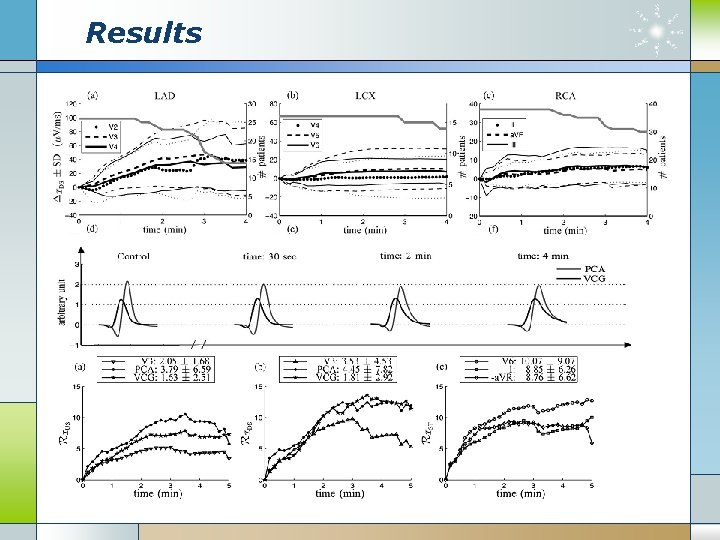

Results


Conclusion

- We measured the slopes of the QRS complex and assessed their performance for the evaluation of myocardial ischemia induced by coronary occlusion during prolonged PCI.
- The downward slope of the R wave showed the most marked changes due to ischemia.
- Results based on the QRS-loop approaches seem to be more sensitive.
- QRS-slope analysis could act as a robust method for assessing changes in acute ischemia.
