ECE 8823 A GPU Architectures Module 2 Introduction

![Vector Addition – Conceptual View vector A vector B vector C A[0] A[1] A[2] Vector Addition – Conceptual View vector A vector B vector C A[0] A[1] A[2]](https://slidetodoc.com/presentation_image_h2/0fcdb4bf6d3b281dac7894e0beaefb7f/image-9.jpg)

- Slides: 32

ECE 8823 A GPU Architectures Module 2: Introduction to CUDA C © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 1

Objective • To understand the major elements of a CUDA program • Introduce the basic constructs of the programming model • Illustrate the preceding with a simple but complete CUDA program 2

Reading Assignment • Kirk and Hwu, “Programming Massively Parallel Processors: A Hands on Approach, ”, Chapter 3 • CUDA Programming Guide – http: //docs. nvidia. com/cuda-cprogramming-guide/#abstract 3

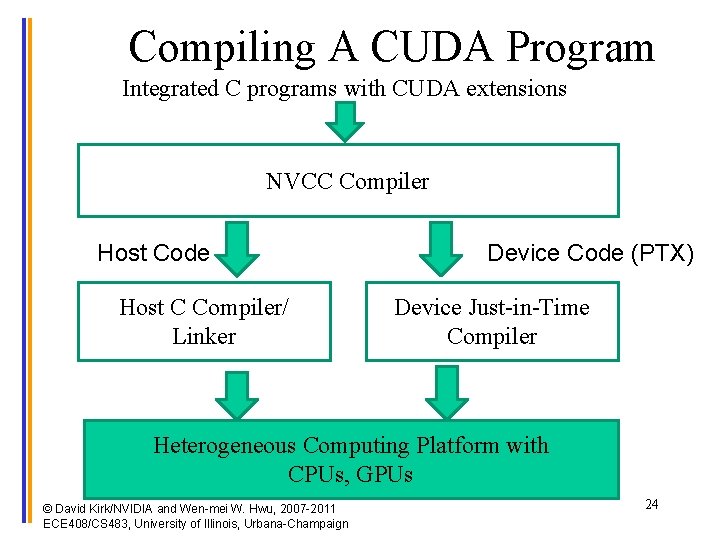

CUDA/Open. CL- Execution Model • Reflects a multicore processor + GPGPU execution model • Addition of device functions and declarations to stock C programs • Compilation separated into host and GPU paths 4

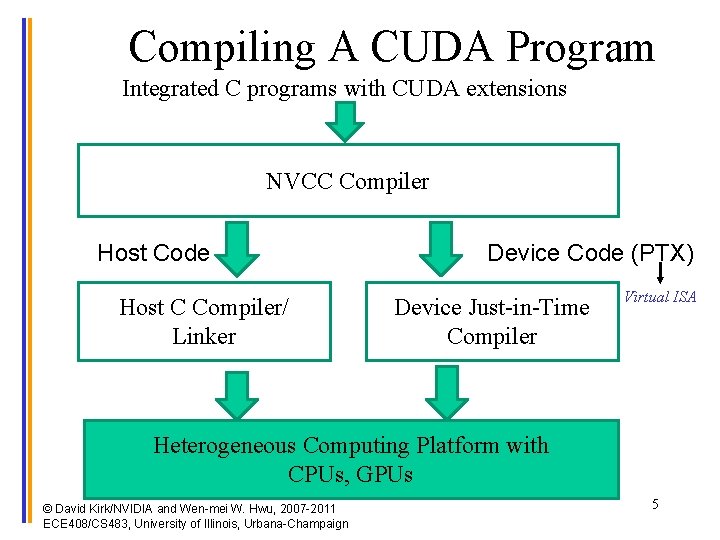

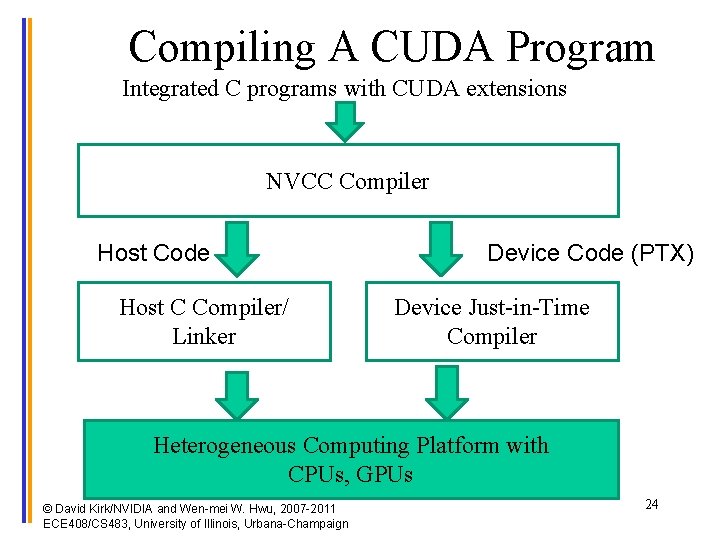

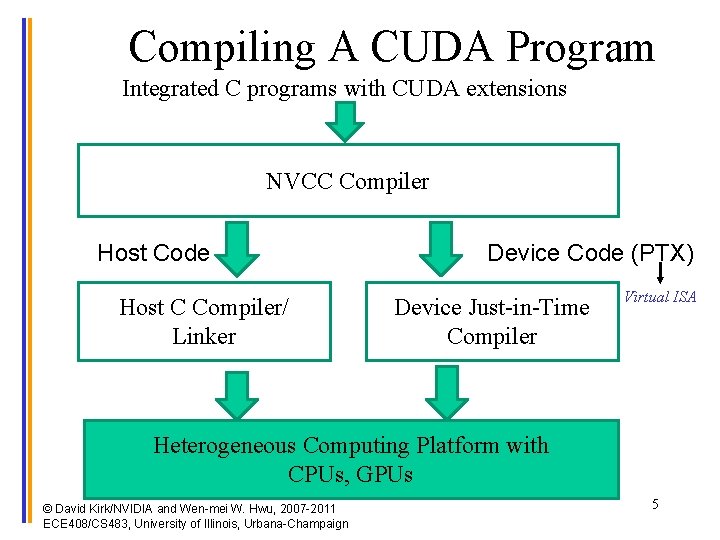

Compiling A CUDA Program Integrated C programs with CUDA extensions NVCC Compiler Host Code Host C Compiler/ Linker Device Code (PTX) Device Just-in-Time Compiler Virtual ISA Heterogeneous Computing Platform with CPUs, GPUs © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 5

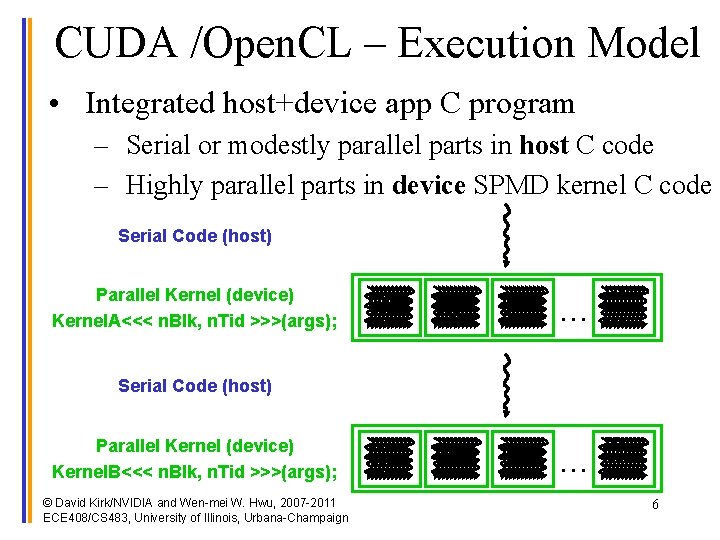

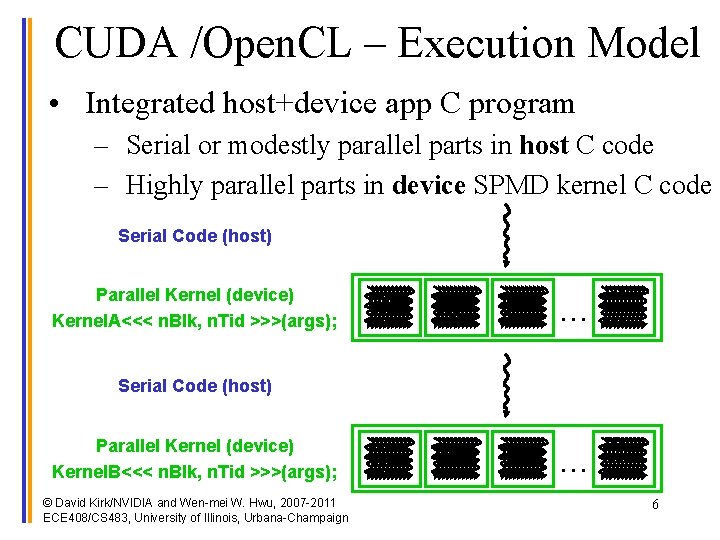

CUDA /Open. CL – Execution Model • Integrated host+device app C program – Serial or modestly parallel parts in host C code – Highly parallel parts in device SPMD kernel C code Serial Code (host) Parallel Kernel (device) Kernel. A<<< n. Blk, n. Tid >>>(args); . . . Serial Code (host) Parallel Kernel (device) Kernel. B<<< n. Blk, n. Tid >>>(args); © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign . . . 6

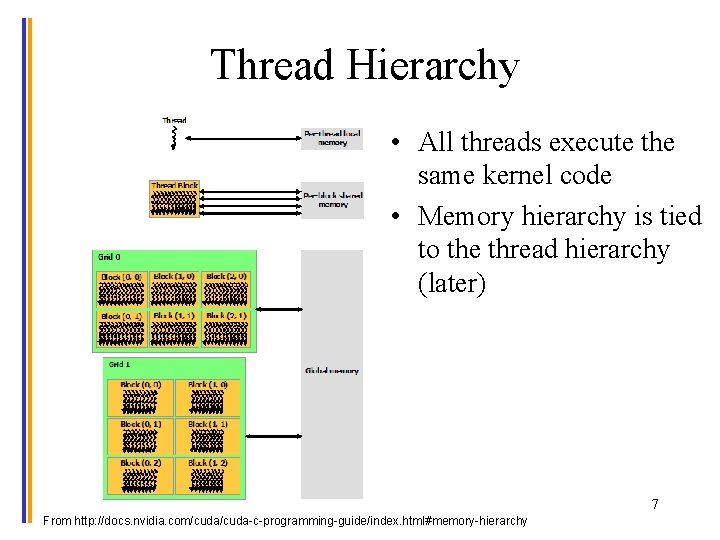

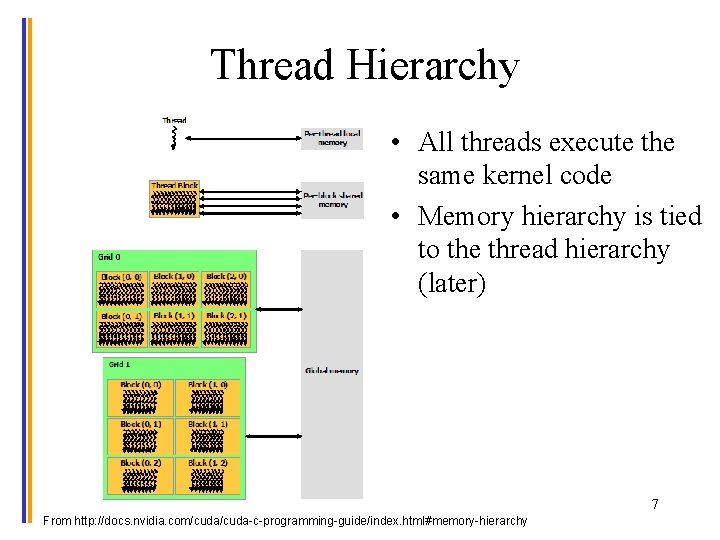

Thread Hierarchy • All threads execute the same kernel code • Memory hierarchy is tied to the thread hierarchy (later) 7 From http: //docs. nvidia. com/cuda-c-programming-guide/index. html#memory-hierarchy

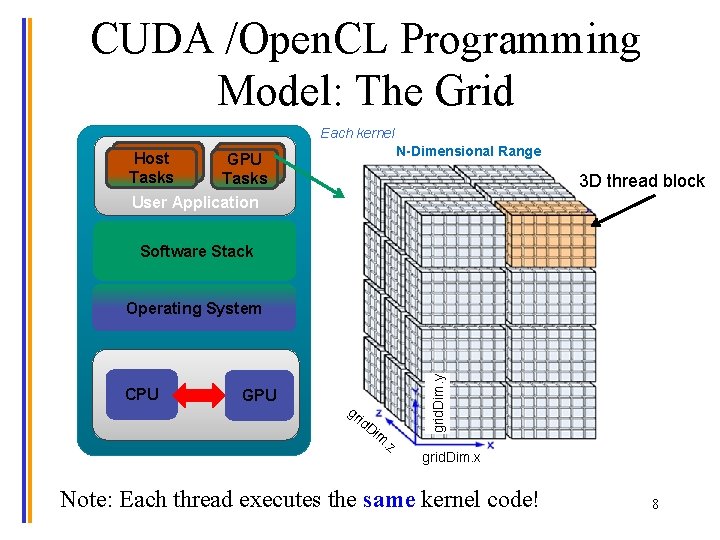

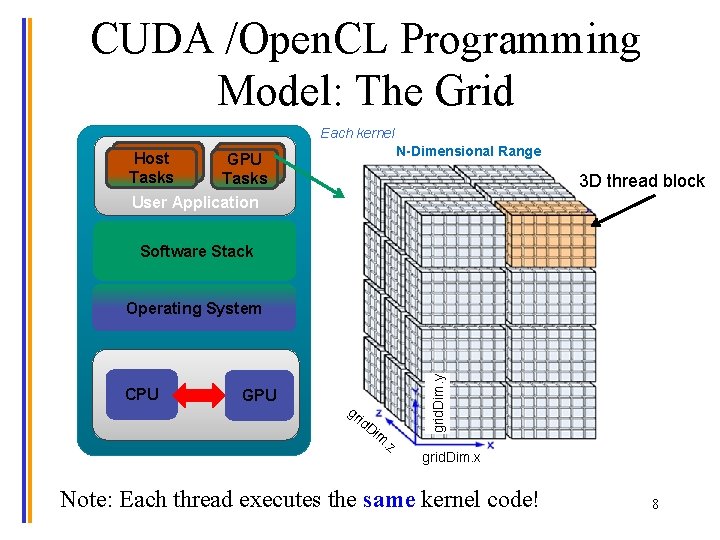

CUDA /Open. CL Programming Model: The Grid Each kernel N-Dimensional Range Host Tasks GPU Tasks User Application 3 D thread block Software Stack CPU GPU gr id Di m. z grid. Dim. y Operating System grid. Dim. x Note: Each thread executes the same kernel code! 8

![Vector Addition Conceptual View vector A vector B vector C A0 A1 A2 Vector Addition – Conceptual View vector A vector B vector C A[0] A[1] A[2]](https://slidetodoc.com/presentation_image_h2/0fcdb4bf6d3b281dac7894e0beaefb7f/image-9.jpg)

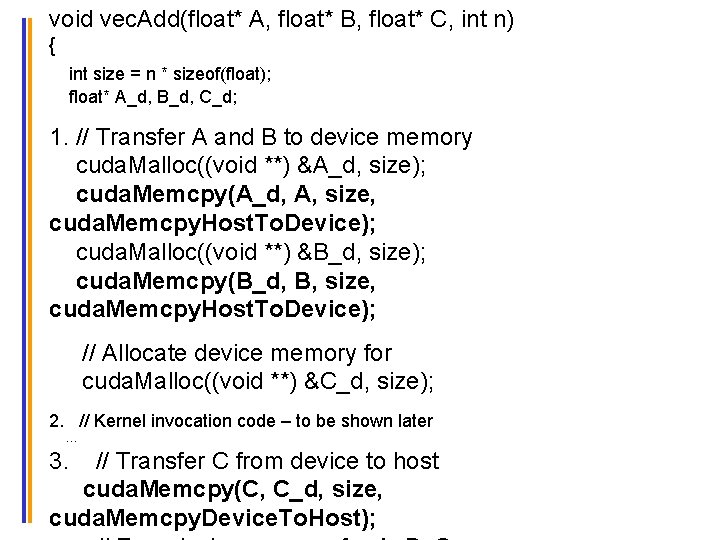

Vector Addition – Conceptual View vector A vector B vector C A[0] A[1] A[2] A[3] A[4] … A[N-1] B[0] B[1] B[2] B[3] B[4] … B[N-1] + + + C[0] C[1] C[2] C[3] C[4] © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign + … C[N-1] 9

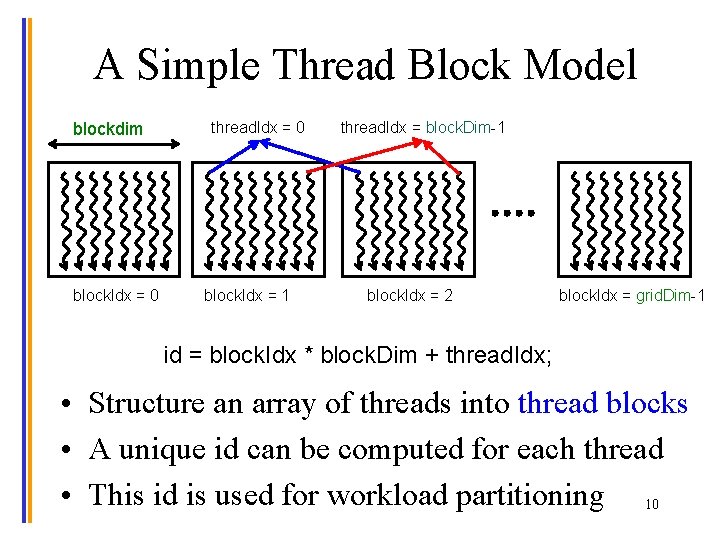

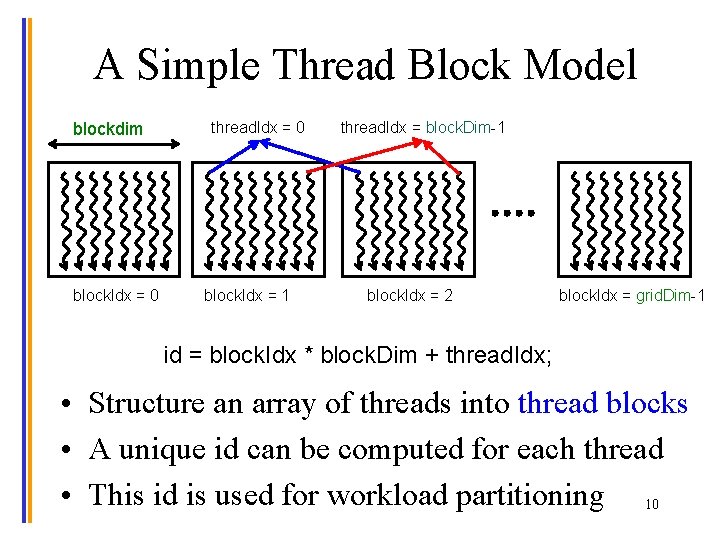

A Simple Thread Block Model blockdim block. Idx = 0 thread. Idx = 0 block. Idx = 1 thread. Idx = block. Dim-1 block. Idx = 2 block. Idx = grid. Dim-1 id = block. Idx * block. Dim + thread. Idx; • Structure an array of threads into thread blocks • A unique id can be computed for each thread • This id is used for workload partitioning 10

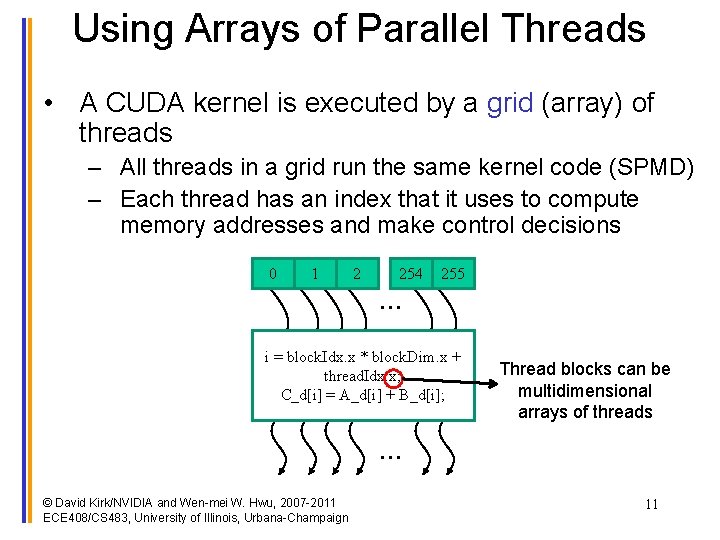

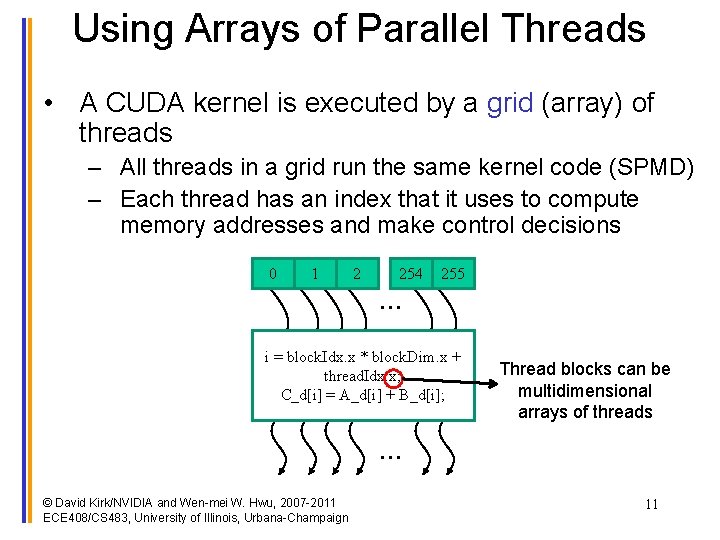

Using Arrays of Parallel Threads • A CUDA kernel is executed by a grid (array) of threads – All threads in a grid run the same kernel code (SPMD) – Each thread has an index that it uses to compute memory addresses and make control decisions 0 1 2 254 255 … i = block. Idx. x * block. Dim. x + thread. Idx. x; C_d[i] = A_d[i] + B_d[i]; Thread blocks can be multidimensional arrays of threads … © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 11

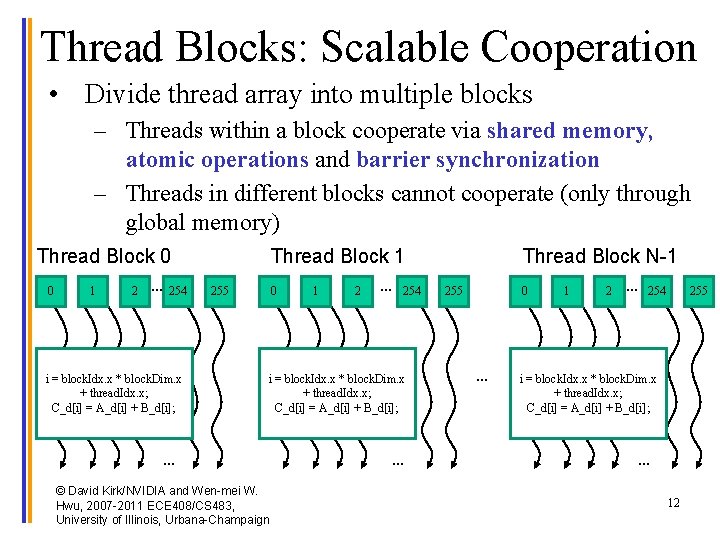

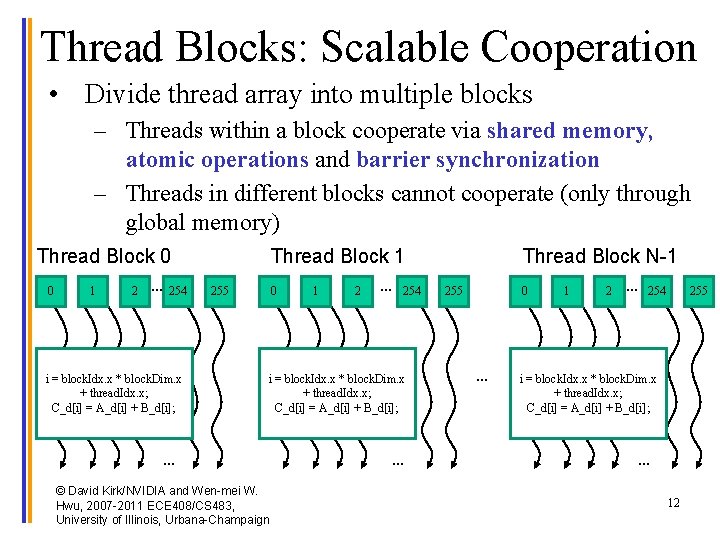

Thread Blocks: Scalable Cooperation • Divide thread array into multiple blocks – Threads within a block cooperate via shared memory, atomic operations and barrier synchronization – Threads in different blocks cannot cooperate (only through global memory) Thread Block 0 0 1 2 … 254 i = block. Idx. x * block. Dim. x + thread. Idx. x; C_d[i] = A_d[i] + B_d[i]; Thread Block 1 255 0 1 2 … 254 i = block. Idx. x * block. Dim. x + thread. Idx. x; C_d[i] = A_d[i] + B_d[i]; … © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign … Thread Block N-1 255 0 … 1 2 … 254 255 i = block. Idx. x * block. Dim. x + thread. Idx. x; C_d[i] = A_d[i] + B_d[i]; … 12

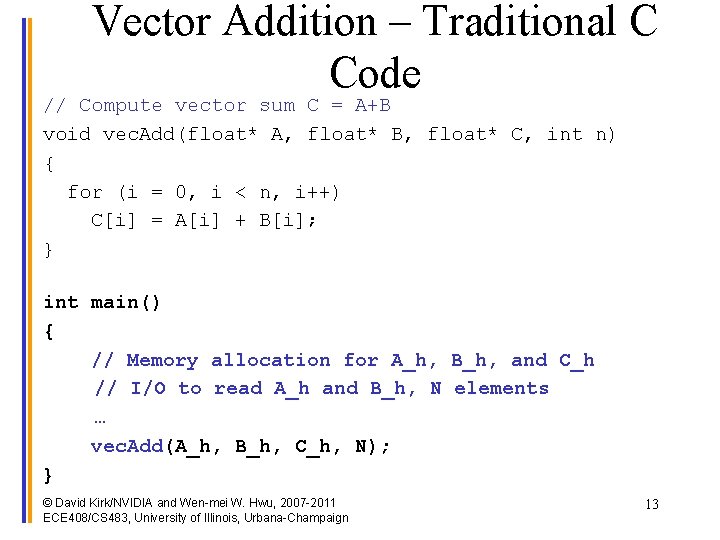

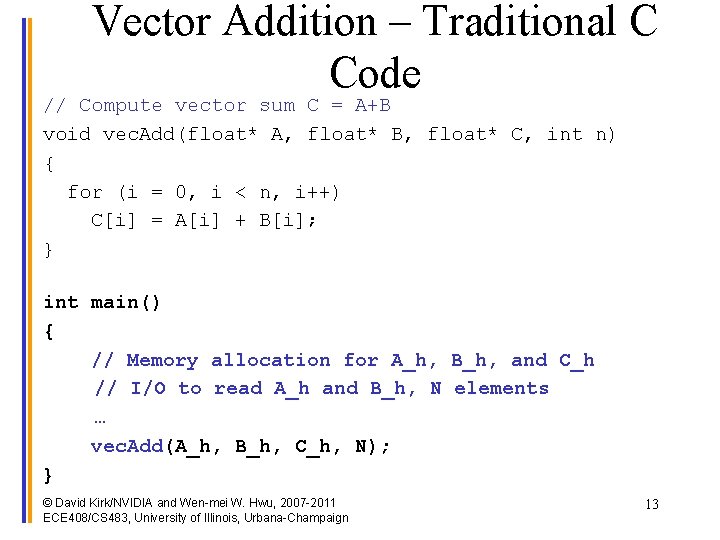

Vector Addition – Traditional C Code // Compute vector sum C = A+B void vec. Add(float* A, float* B, float* C, int n) { for (i = 0, i < n, i++) C[i] = A[i] + B[i]; } int main() { // Memory allocation for A_h, B_h, and C_h // I/O to read A_h and B_h, N elements … vec. Add(A_h, B_h, C_h, N); } © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 13

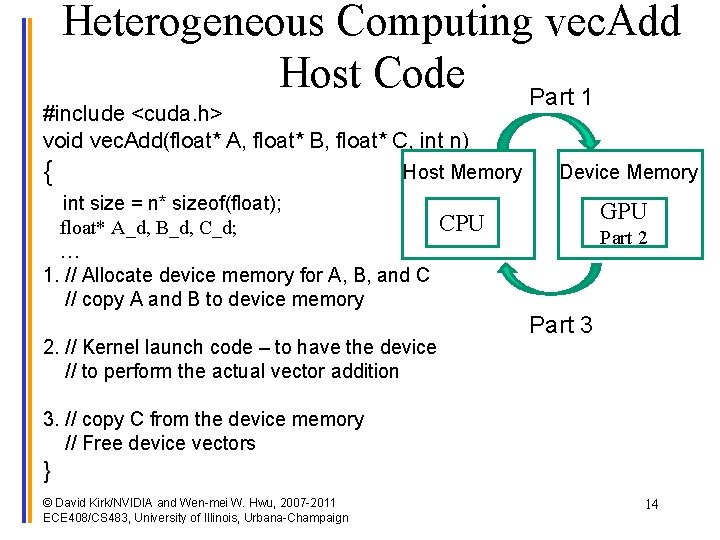

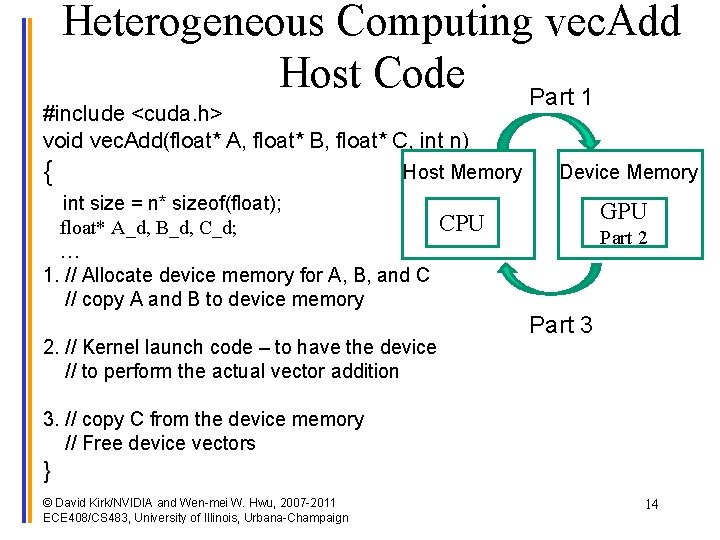

Heterogeneous Computing vec. Add Host Code Part 1 #include <cuda. h> void vec. Add(float* A, float* B, float* C, int n) { Host Memory int size = n* sizeof(float); float* A_d, B_d, C_d; … 1. // Allocate device memory for A, B, and C // copy A and B to device memory 2. // Kernel launch code – to have the device // to perform the actual vector addition Device Memory GPU CPU Part 2 Part 3 3. // copy C from the device memory // Free device vectors } © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 14

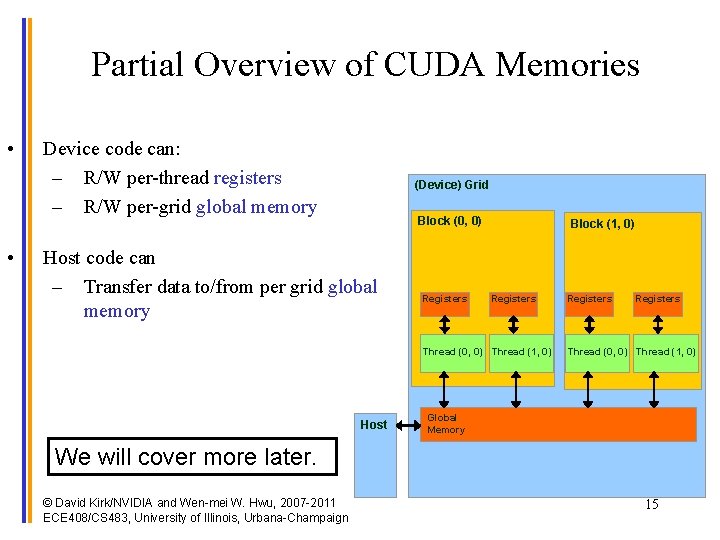

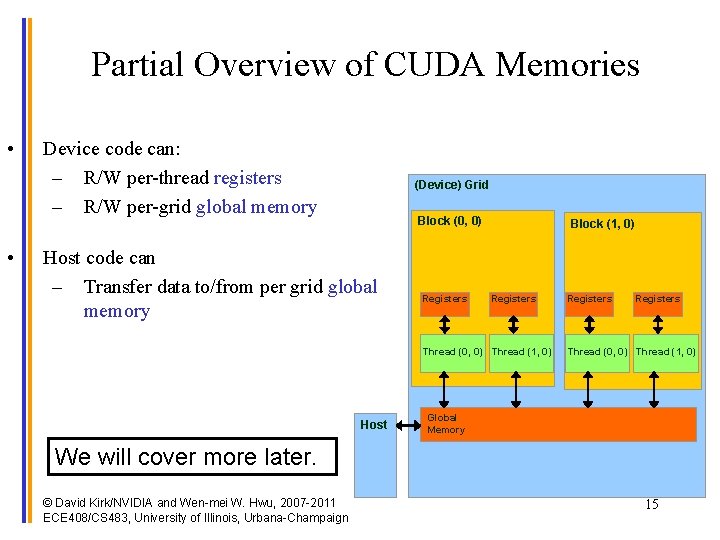

Partial Overview of CUDA Memories • • Device code can: – R/W per-thread registers – R/W per-grid global memory (Device) Grid Block (0, 0) Host code can – Transfer data to/from per grid global memory Registers Block (1, 0) Registers Thread (0, 0) Thread (1, 0) Host Registers Thread (0, 0) Thread (1, 0) Global Memory We will cover more later. © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 15

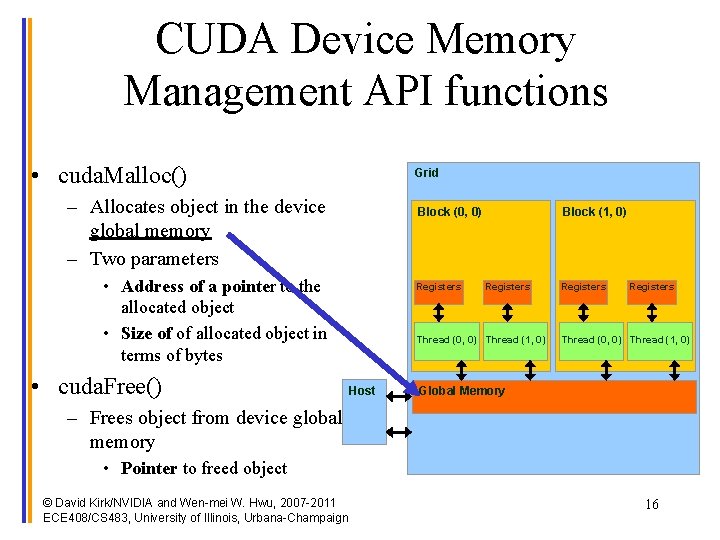

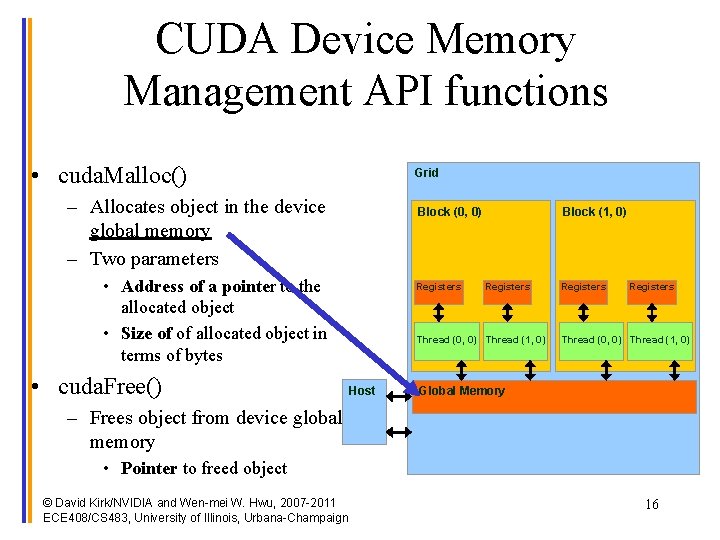

CUDA Device Memory Management API functions • cuda. Malloc() Grid – Allocates object in the device global memory – Two parameters Block (0, 0) • Address of a pointer to the allocated object • Size of of allocated object in terms of bytes • cuda. Free() Registers Block (1, 0) Registers Thread (0, 0) Thread (1, 0) Host Registers Thread (0, 0) Thread (1, 0) Global Memory – Frees object from device global memory • Pointer to freed object © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 16

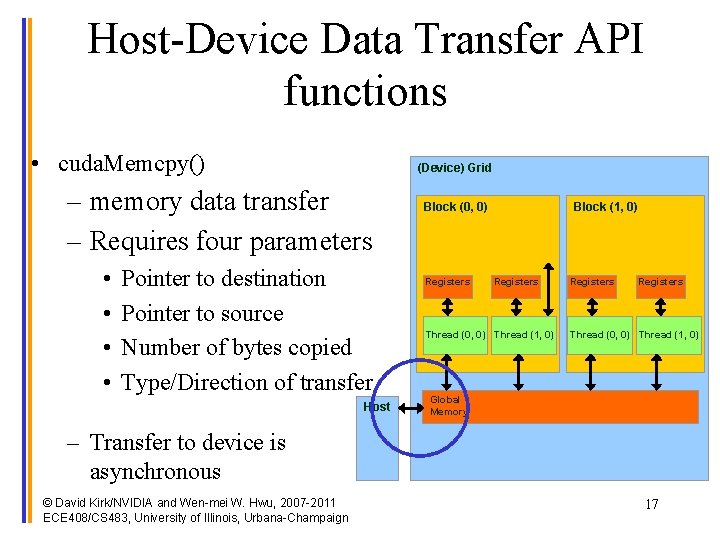

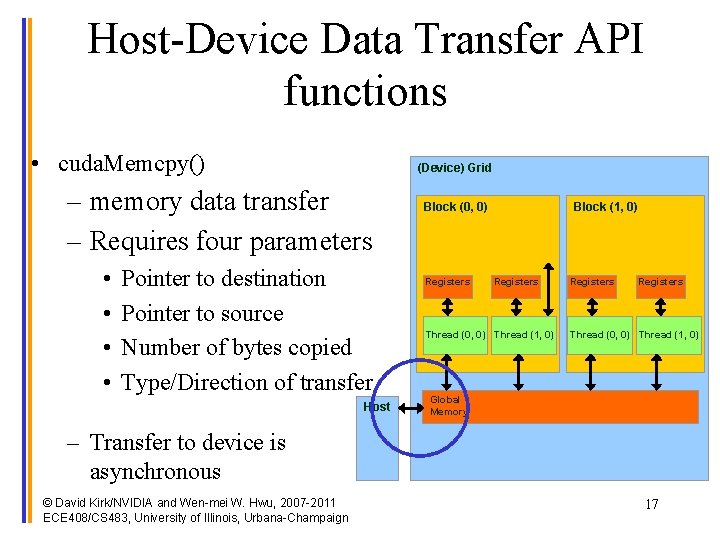

Host-Device Data Transfer API functions • cuda. Memcpy() (Device) Grid – memory data transfer – Requires four parameters • • Pointer to destination Pointer to source Number of bytes copied Type/Direction of transfer Host Block (0, 0) Registers Block (1, 0) Registers Thread (0, 0) Thread (1, 0) Global Memory – Transfer to device is asynchronous © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 17

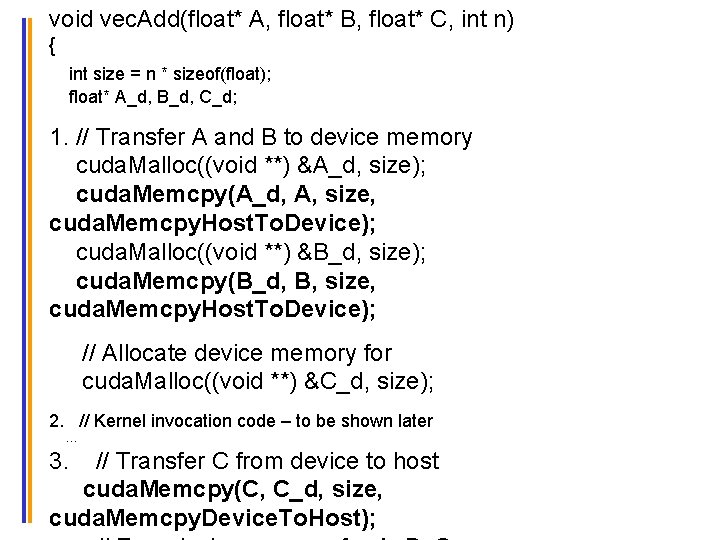

void vec. Add(float* A, float* B, float* C, int n) { int size = n * sizeof(float); float* A_d, B_d, C_d; 1. // Transfer A and B to device memory cuda. Malloc((void **) &A_d, size); cuda. Memcpy(A_d, A, size, cuda. Memcpy. Host. To. Device); cuda. Malloc((void **) &B_d, size); cuda. Memcpy(B_d, B, size, cuda. Memcpy. Host. To. Device); // Allocate device memory for cuda. Malloc((void **) &C_d, size); 2. // Kernel invocation code – to be shown later … 3. // Transfer C from device to host cuda. Memcpy(C, C_d, size, © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign cuda. Memcpy. Device. To. Host); 18

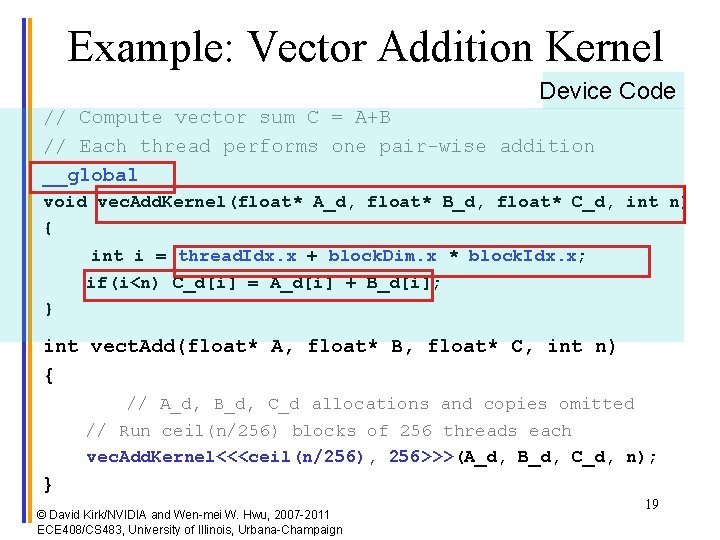

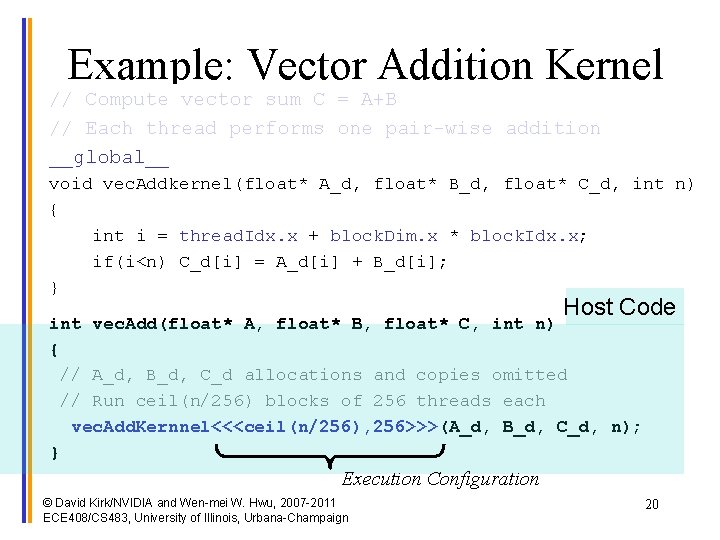

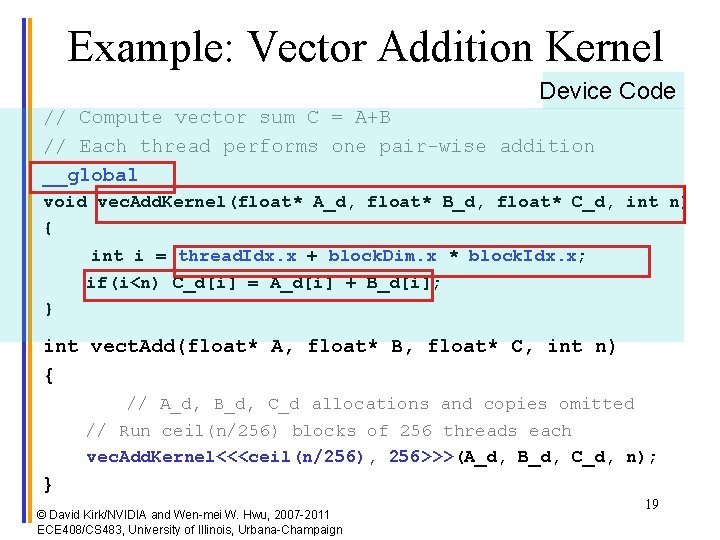

Example: Vector Addition Kernel Device Code // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add. Kernel(float* A_d, float* B_d, float* C_d, int n) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; if(i<n) C_d[i] = A_d[i] + B_d[i]; } int vect. Add(float* A, float* B, float* C, int n) { // A_d, B_d, C_d allocations and copies omitted // Run ceil(n/256) blocks of 256 threads each vec. Add. Kernel<<<ceil(n/256), 256>>>(A_d, B_d, C_d, n); } © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 19

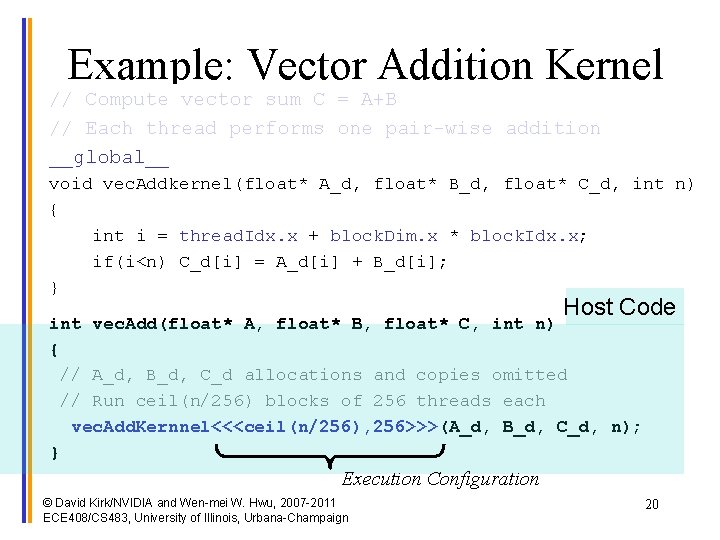

Example: Vector Addition Kernel // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Addkernel(float* A_d, float* B_d, float* C_d, int n) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; if(i<n) C_d[i] = A_d[i] + B_d[i]; } Host Code int vec. Add(float* A, float* B, float* C, int n) { // A_d, B_d, C_d allocations and copies omitted // Run ceil(n/256) blocks of 256 threads each vec. Add. Kernnel<<<ceil(n/256), 256>>>(A_d, B_d, C_d, n); } Execution Configuration © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 20

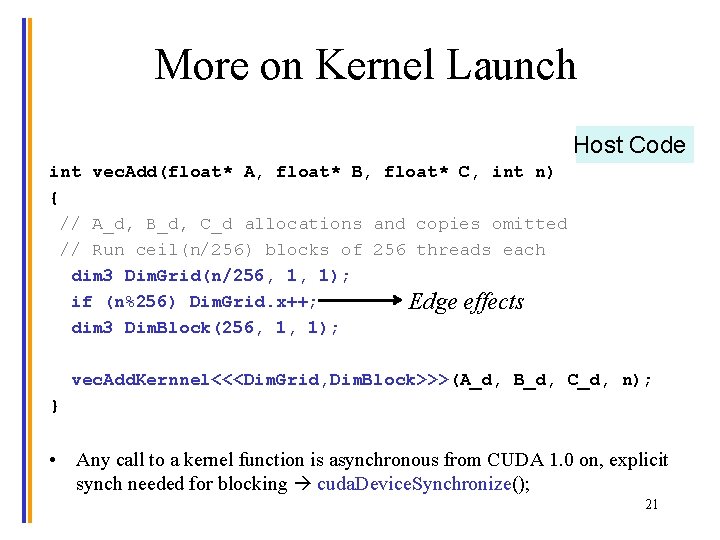

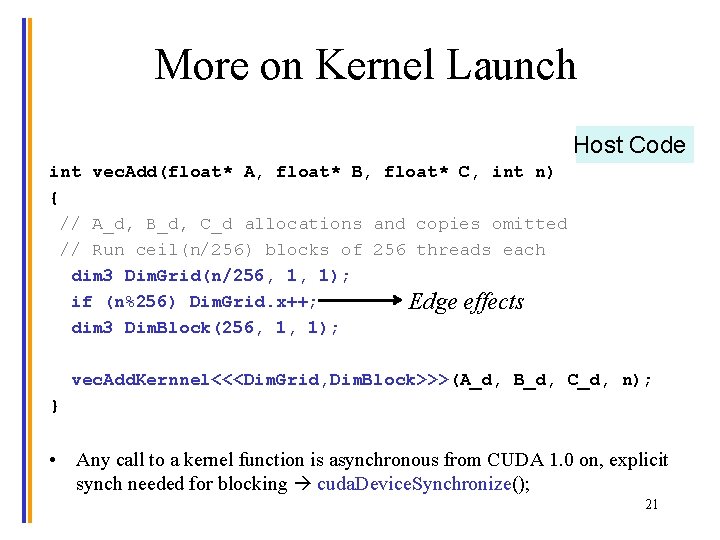

More on Kernel Launch Host Code int vec. Add(float* A, float* B, float* C, int n) { // A_d, B_d, C_d allocations and copies omitted // Run ceil(n/256) blocks of 256 threads each dim 3 Dim. Grid(n/256, 1, 1); if (n%256) Dim. Grid. x++; Edge effects dim 3 Dim. Block(256, 1, 1); vec. Add. Kernnel<<<Dim. Grid, Dim. Block>>>(A_d, B_d, C_d, n); } • Any call to a kernel function is asynchronous from CUDA 1. 0 on, explicit synch needed for blocking cuda. Device. Synchronize(); © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 21

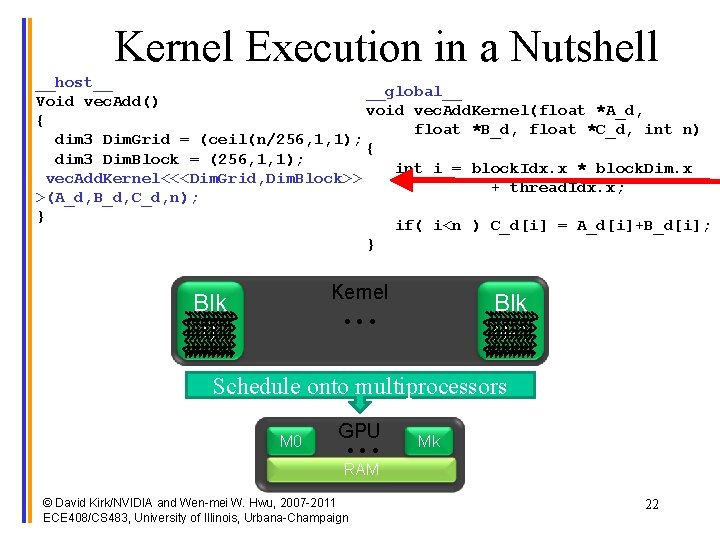

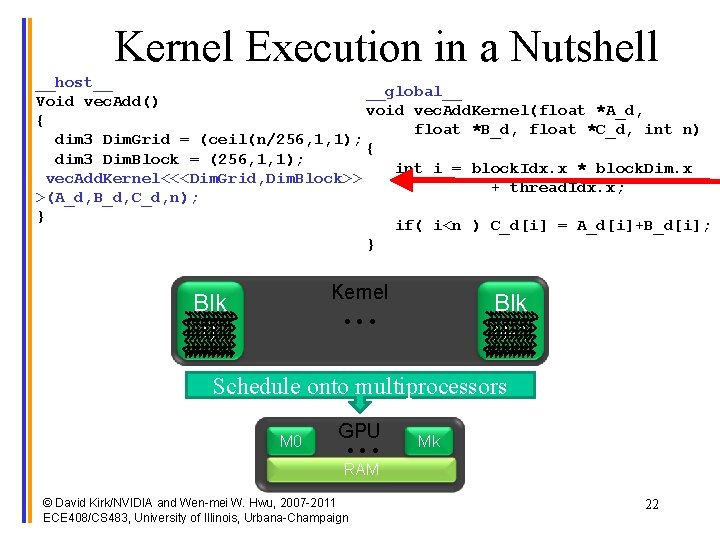

Kernel Execution in a Nutshell __host__ __global__ Void vec. Add() void vec. Add. Kernel(float *A_d, { float *B_d, float *C_d, int n) dim 3 Dim. Grid = (ceil(n/256, 1, 1); { dim 3 Dim. Block = (256, 1, 1); int i = block. Idx. x * block. Dim. x vec. Add. Kernel<<<Dim. Grid, Dim. Block>> + thread. Idx. x; >(A_d, B_d, C_d, n); } if( i<n ) C_d[i] = A_d[i]+B_d[i]; } Kernel • • • Blk 0 Blk N-1 Schedule onto multiprocessors M 0 GPU • • • Mk RAM © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 22

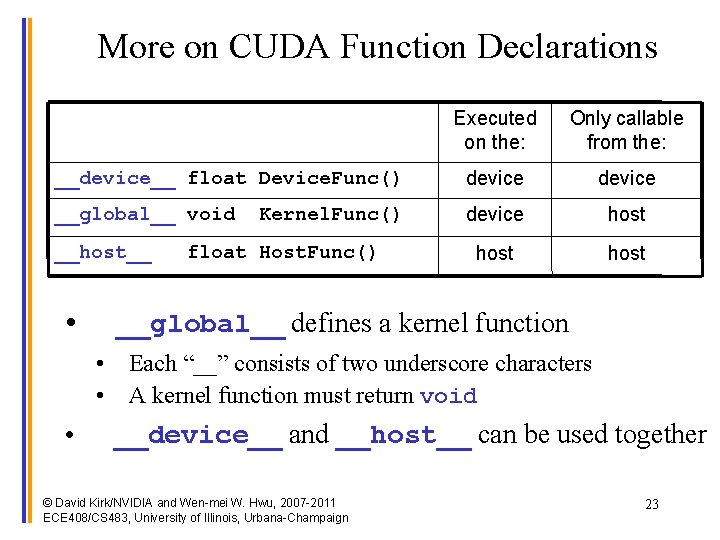

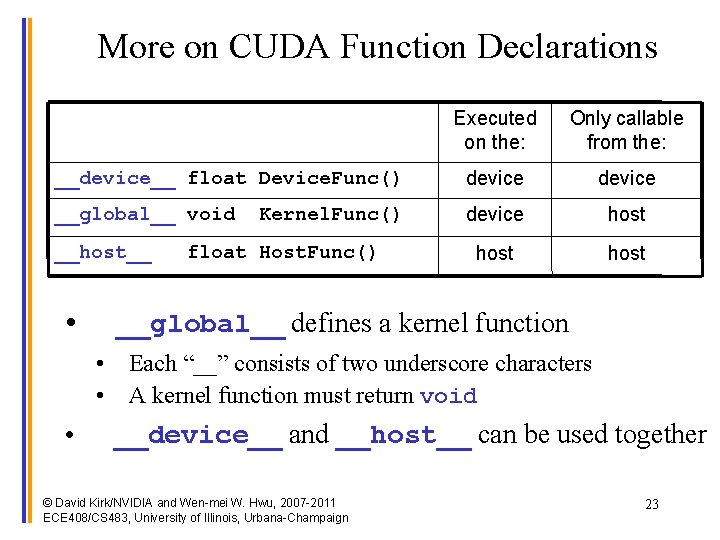

More on CUDA Function Declarations Executed on the: Only callable from the: __device__ float Device. Func() device __global__ void device host __host__ • Kernel. Func() float Host. Func() __global__ defines a kernel function • Each “__” consists of two underscore characters • A kernel function must return void • __device__ and __host__ can be used together © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 23

Compiling A CUDA Program Integrated C programs with CUDA extensions NVCC Compiler Host Code Host C Compiler/ Linker Device Code (PTX) Device Just-in-Time Compiler Heterogeneous Computing Platform with CPUs, GPUs © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 24

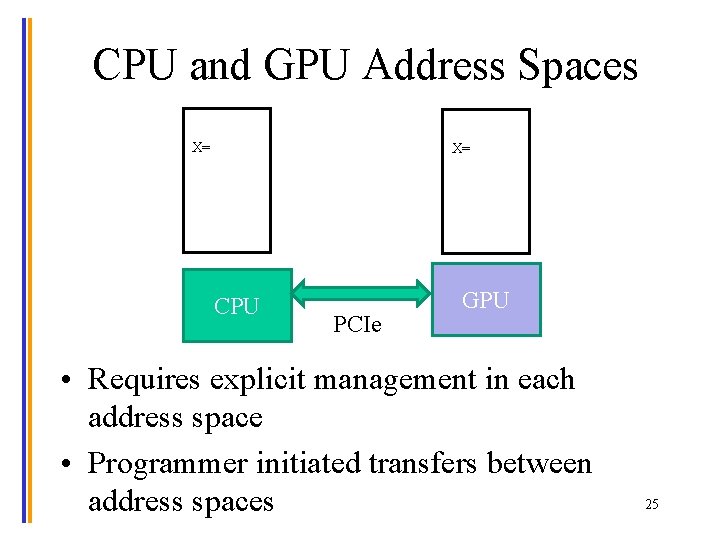

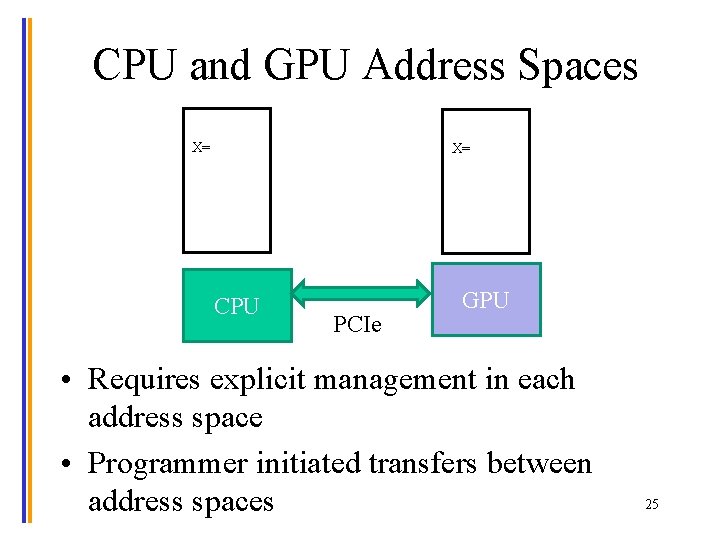

CPU and GPU Address Spaces X= X= CPU PCIe GPU • Requires explicit management in each address space • Programmer initiated transfers between address spaces 25

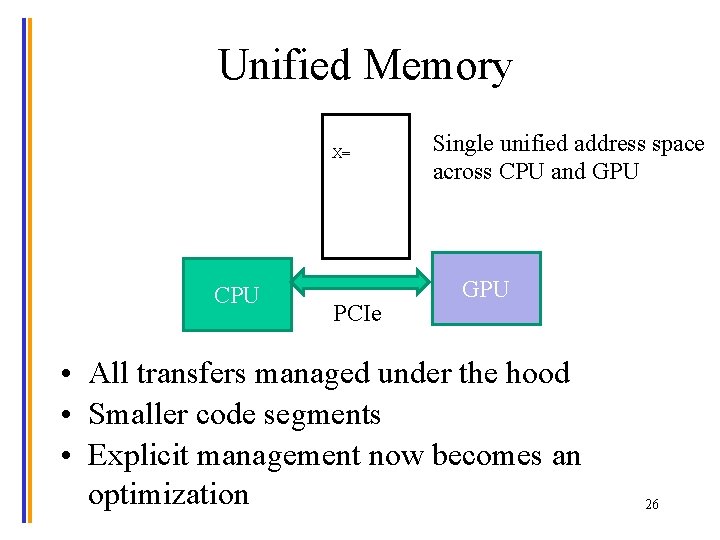

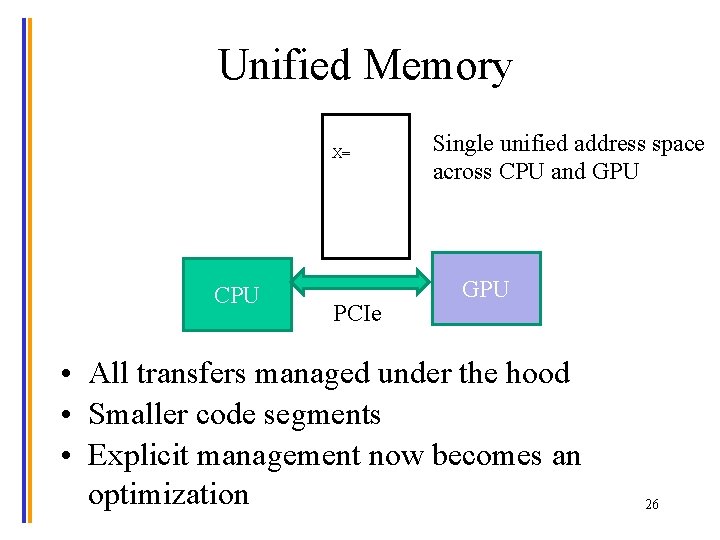

Unified Memory X= CPU PCIe Single unified address space across CPU and GPU • All transfers managed under the hood • Smaller code segments • Explicit management now becomes an optimization 26

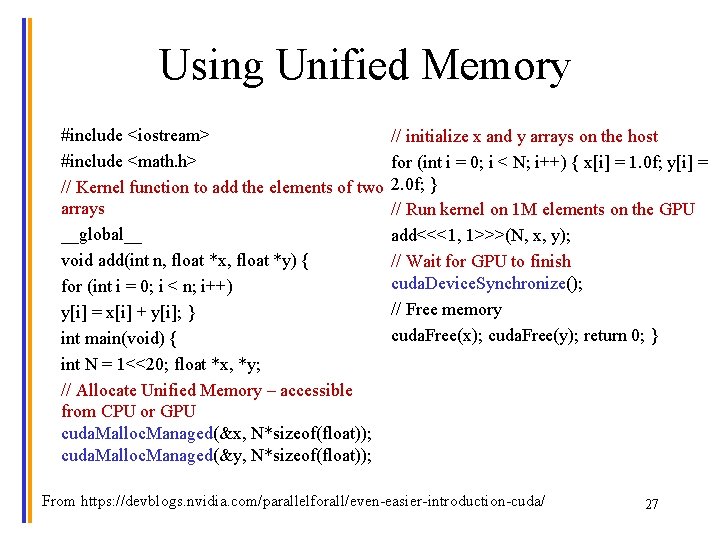

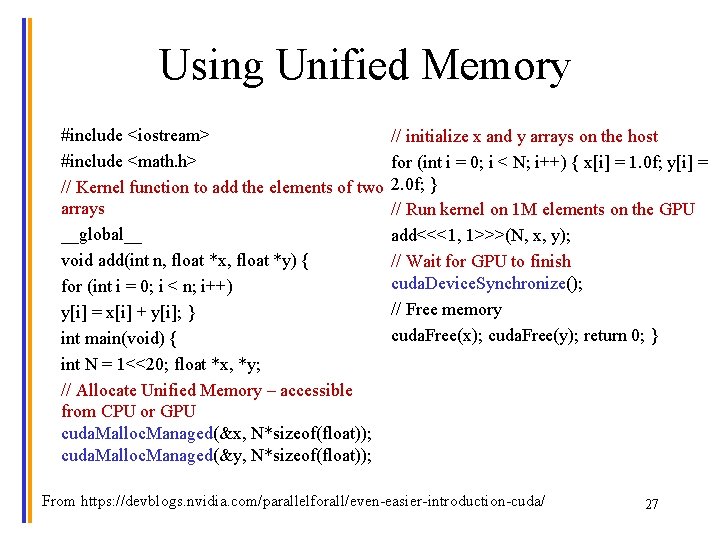

Using Unified Memory #include <iostream> #include <math. h> // Kernel function to add the elements of two arrays __global__ void add(int n, float *x, float *y) { for (int i = 0; i < n; i++) y[i] = x[i] + y[i]; } int main(void) { int N = 1<<20; float *x, *y; // Allocate Unified Memory – accessible from CPU or GPU cuda. Malloc. Managed(&x, N*sizeof(float)); cuda. Malloc. Managed(&y, N*sizeof(float)); // initialize x and y arrays on the host for (int i = 0; i < N; i++) { x[i] = 1. 0 f; y[i] = 2. 0 f; } // Run kernel on 1 M elements on the GPU add<<<1, 1>>>(N, x, y); // Wait for GPU to finish cuda. Device. Synchronize(); // Free memory cuda. Free(x); cuda. Free(y); return 0; } From https: //devblogs. nvidia. com/parallelforall/even-easier-introduction-cuda/ 27

The ISA • An Instruction Set Architecture (ISA) is a contract between the hardware and the software. • As the name suggests, it is a set of instructions that the architecture (hardware) can execute. © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 28

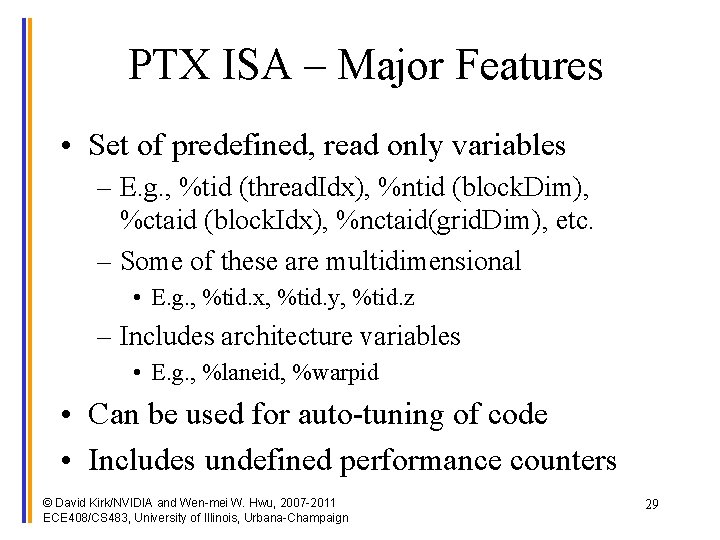

PTX ISA – Major Features • Set of predefined, read only variables – E. g. , %tid (thread. Idx), %ntid (block. Dim), %ctaid (block. Idx), %nctaid(grid. Dim), etc. – Some of these are multidimensional • E. g. , %tid. x, %tid. y, %tid. z – Includes architecture variables • E. g. , %laneid, %warpid • Can be used for auto-tuning of code • Includes undefined performance counters © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 29

PTX ISA – Major Features (2) • Multiple address spaces – Register, parameter – Constant global, etc. – More later • Predicated instruction execution © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 30

Versions • Compute capability – Architecture specific, e. g. , Volta is 7. 0 • CUDA version – Determines programming model features – Currently 9. 0 • PTX version – Virtual ISA version – Currently 6. 1 31

QUESTIONS? © David Kirk/NVIDIA and Wen-mei W. Hwu, 2007 -2011 ECE 408/CS 483, University of Illinois, Urbana-Champaign 32