ECE 1747 Parallel Programming Basics of Parallel Architectures

ECE 1747: Parallel Programming Basics of Parallel Architectures: Shared-Memory Machines

Two Parallel Architectures • Shared memory machines. • Distributed memory machines.

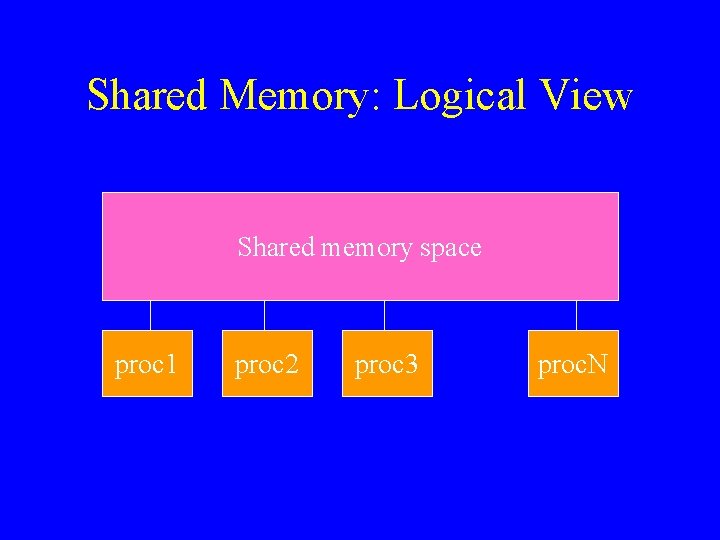

Shared Memory: Logical View • Figure: processors proc 1, proc 2, proc 3, ..., proc N, all attached to a single shared memory space.

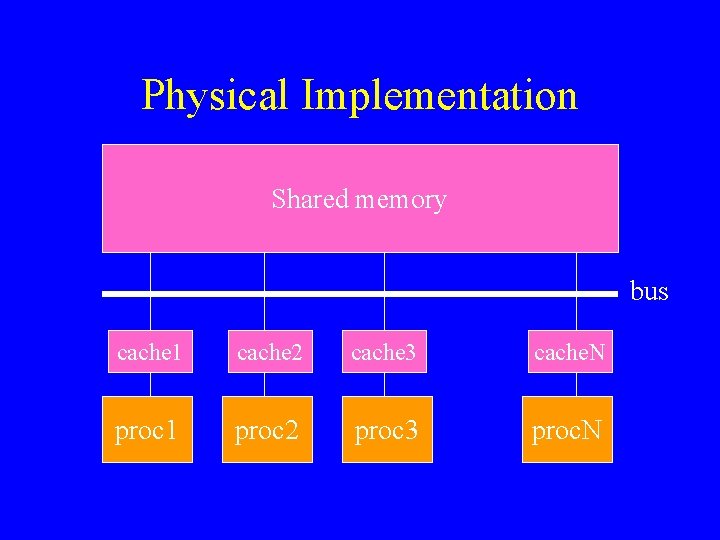

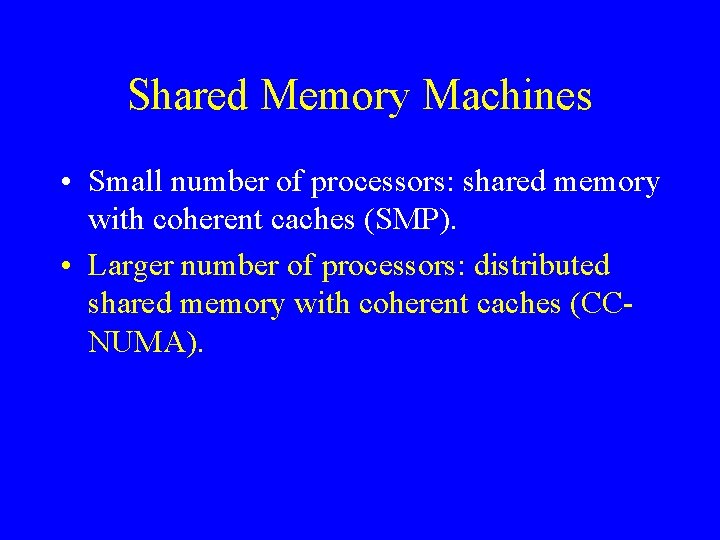

Shared Memory Machines • Small number of processors: shared memory with coherent caches (SMP). • Larger number of processors: distributed shared memory with coherent caches (CC-NUMA).

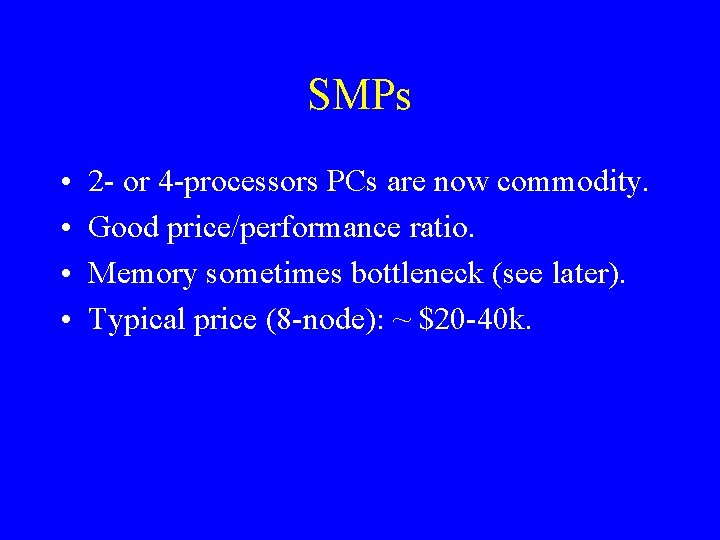

SMPs • 2- or 4-processor PCs are now commodity. • Good price/performance ratio. • Memory is sometimes a bottleneck (see later). • Typical price (8-node): ~$20k-40k.

Physical Implementation • Figure: processors proc 1, ..., proc N, each with its own cache (cache 1, ..., cache N), connected by a bus to a shared memory.


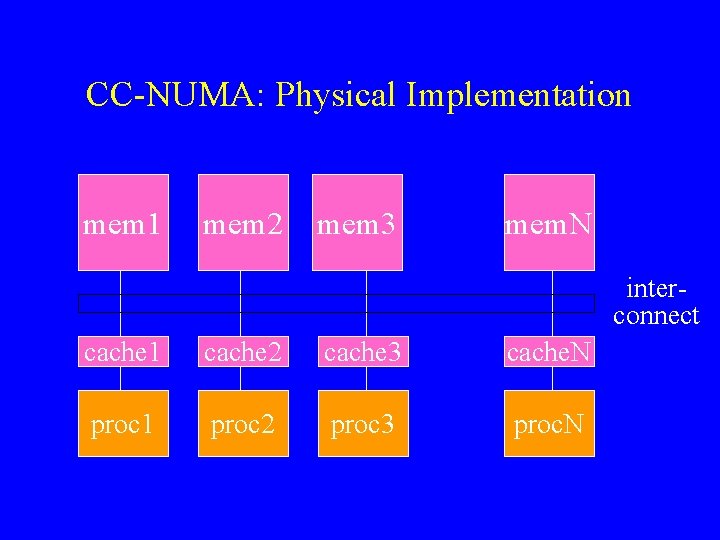

CC-NUMA: Physical Implementation • Figure: memory modules (mem 1, ..., mem N) and processors (proc 1, ..., proc N), each processor with its own cache (cache 1, ..., cache N), connected by an interconnect.

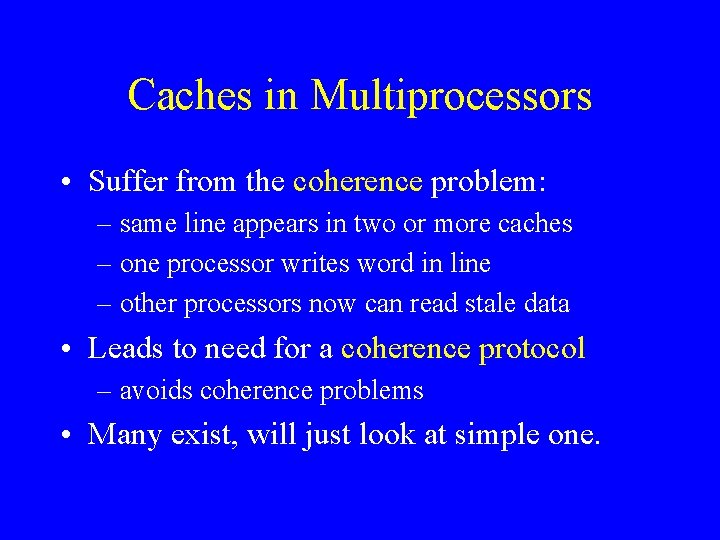

Caches in Multiprocessors • Suffer from the coherence problem: – the same line appears in two or more caches – one processor writes a word in the line – other processors can now read stale data • Leads to the need for a coherence protocol – avoids coherence problems • Many exist; we will just look at a simple one.
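To make the stale-data scenario concrete, here is a toy model of two private caches with no coherence protocol at all. It is a sketch, not a model of real hardware: the class `NonCoherentCache` is hypothetical, each cache line is reduced to a single word, and main memory is a plain dict.

```python
class NonCoherentCache:
    """A private write-back cache that never learns about other caches' writes."""

    def __init__(self, memory):
        self.memory = memory   # shared main memory: address -> value
        self.lines = {}        # this cache's copies: address -> value

    def read(self, addr):
        if addr not in self.lines:              # miss: fetch a copy from memory
            self.lines[addr] = self.memory[addr]
        return self.lines[addr]                 # hit: may return stale data

    def write(self, addr, value):
        self.lines[addr] = value                # write-back: memory is not updated here


memory = {"x": 0}
c1 = NonCoherentCache(memory)
c2 = NonCoherentCache(memory)

c1.read("x")            # the line holding x is now in cache 1 ...
c2.read("x")            # ... and in cache 2: same line in two caches
c1.write("x", 42)       # processor 1 writes the word in its copy
stale = c2.read("x")    # processor 2 hits on its old copy
print(stale)            # 0, not 42
```

Even writing through to memory would not fix this example, because cache 2 still hits on its old copy; some mechanism has to invalidate or update that copy, which is the job of a coherence protocol.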

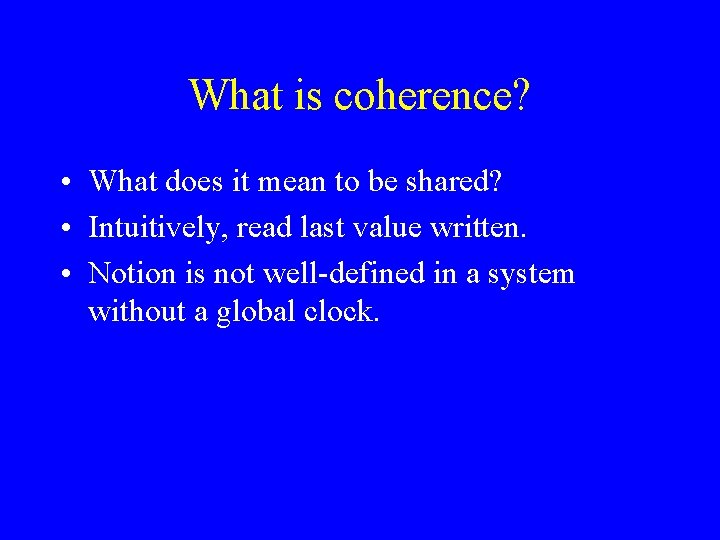

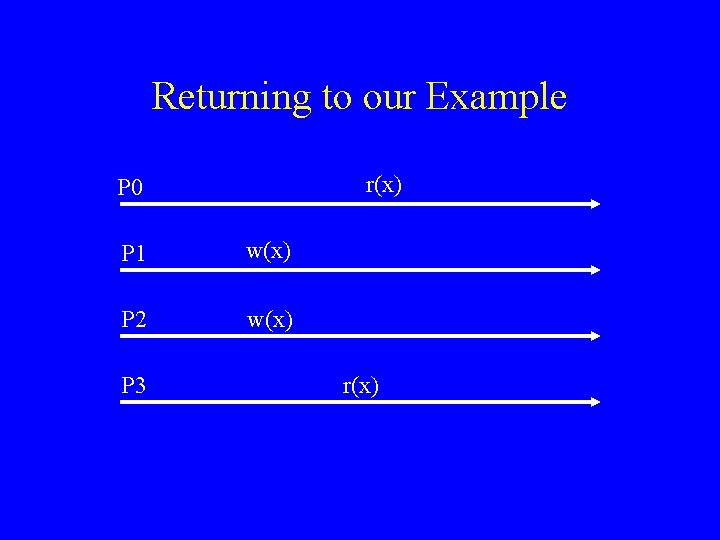

What is coherence? • What does it mean to be shared? • Intuitively, a read returns the last value written. • This notion is not well-defined in a system without a global clock.

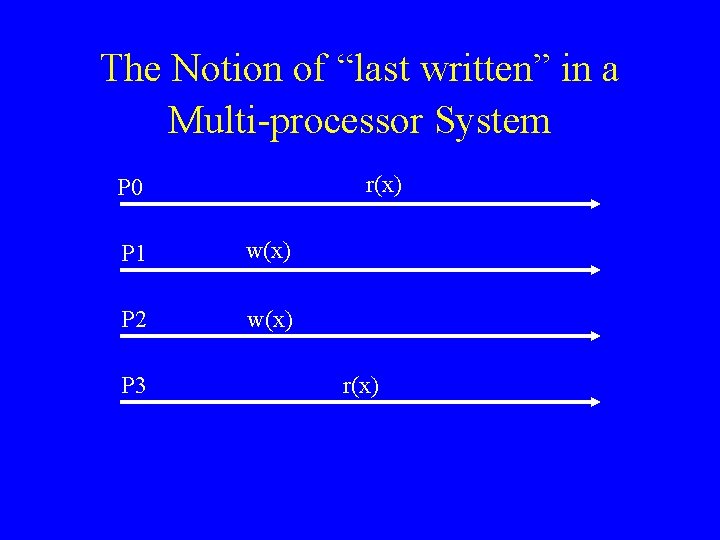

The Notion of “last written” in a Multi-processor System • Figure: timelines of processors P0, P1, P2, and P3 issuing two writes w(x) and two reads r(x).

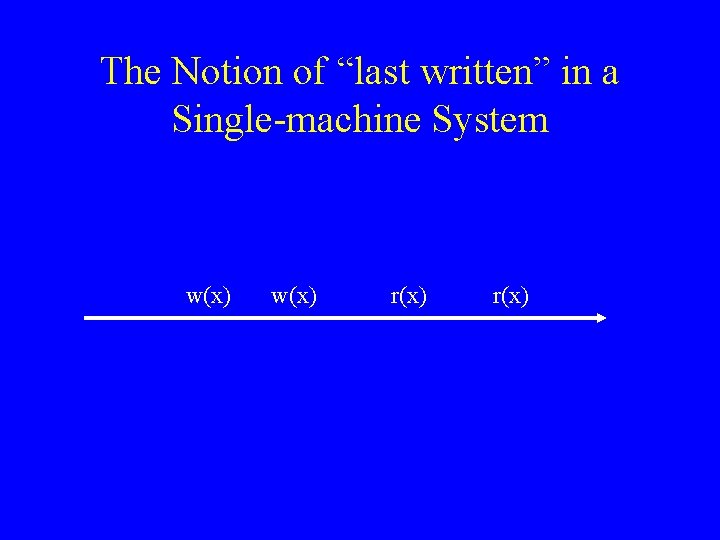

The Notion of “last written” in a Single-machine System • Figure: a single timeline with w(x) followed by r(x).

Coherence: a Clean Definition • Achieved by referring back to the single-machine case. • Called sequential consistency.

Sequential Consistency (SC) • Memory is sequentially consistent if and only if it behaves “as if” the processors were executing in a time-shared fashion on a single machine.

Returning to our Example • Figure: the timelines of P0, P1, P2, and P3 issuing two writes w(x) and two reads r(x).

Another Way of Defining SC • All memory references of a single process execute in program order. • All writes are globally ordered.

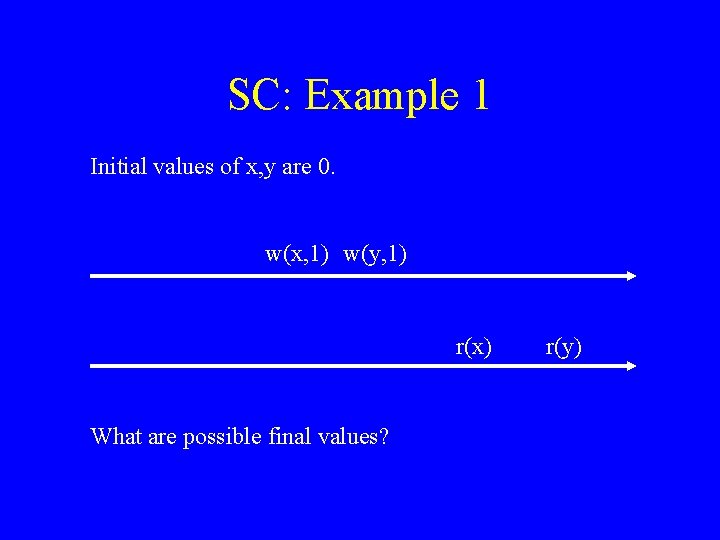

SC: Example 1 • Initial values of x and y are 0. • Operations: w(x, 1), w(y, 1), r(x), r(y), where w(x, 1) writes 1 to x and r(x) reads x. • What are the possible final values?

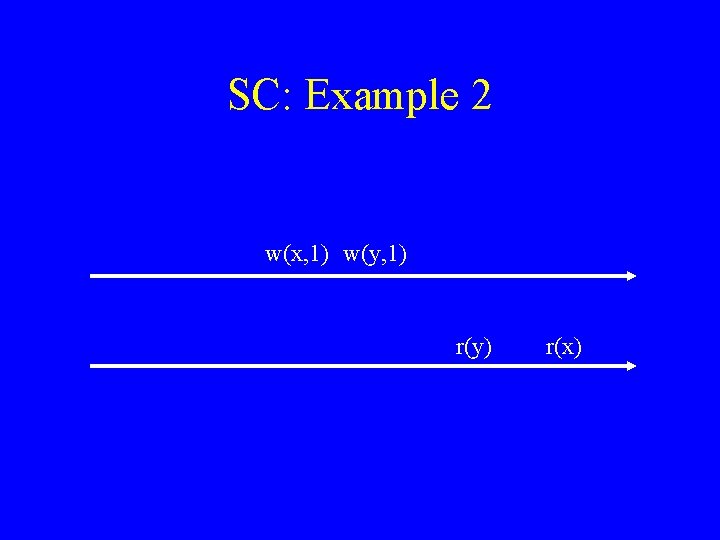

SC: Example 2 • Operations: w(x, 1), w(y, 1), r(y), r(x).
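The "as if time-shared on a single machine" definition suggests a brute-force check: run every interleaving that keeps each processor's program order on one shared memory, and record what the reads return. The sketch below assumes a processor assignment, because the example figures did not survive extraction: P0 executes w(x, 1) then w(y, 1) in both examples, while P1 executes r(x) then r(y) in Example 1 and r(y) then r(x) in Example 2. The helpers `interleavings` and `sc_outcomes` are hypothetical names.

```python
from itertools import combinations


def interleavings(p0, p1):
    """Yield every merge of p0 and p1 that keeps each processor's program order."""
    n = len(p0) + len(p1)
    for p0_slots in combinations(range(n), len(p0)):  # global positions of p0's ops
        it0, it1 = iter(p0), iter(p1)
        yield [next(it0) if k in p0_slots else next(it1) for k in range(n)]


def sc_outcomes(p0, p1):
    """Values returned by the reads, over all SC executions of p0 and p1.

    Each op is ("w", var, value) or ("r", var); x and y start at 0.
    Read results are reported in (processor, program position) order.
    """
    tagged0 = [(0, pos, op) for pos, op in enumerate(p0)]
    tagged1 = [(1, pos, op) for pos, op in enumerate(p1)]
    outcomes = set()
    for order in interleavings(tagged0, tagged1):
        memory = {"x": 0, "y": 0}          # one memory, as on a single machine
        reads = {}
        for proc, pos, op in order:
            if op[0] == "w":
                memory[op[1]] = op[2]
            else:
                reads[(proc, pos)] = memory[op[1]]
        outcomes.add(tuple(reads[key] for key in sorted(reads)))
    return outcomes


P0 = [("w", "x", 1), ("w", "y", 1)]       # assumed writer in both examples
P1_EX1 = [("r", "x"), ("r", "y")]         # assumed Example 1 reader
P1_EX2 = [("r", "y"), ("r", "x")]         # assumed Example 2 reader

print(sorted(sc_outcomes(P0, P1_EX1)))    # [(0, 0), (0, 1), (1, 0), (1, 1)]
print(sorted(sc_outcomes(P0, P1_EX2)))    # [(0, 0), (0, 1), (1, 1)]
```

Under these assumptions every pair of read values is possible in Example 1, while in Example 2 the outcome r(y) = 1, r(x) = 0 never appears: reading y = 1 means w(y, 1), and therefore w(x, 1), has already happened, so the later r(x) must return 1.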

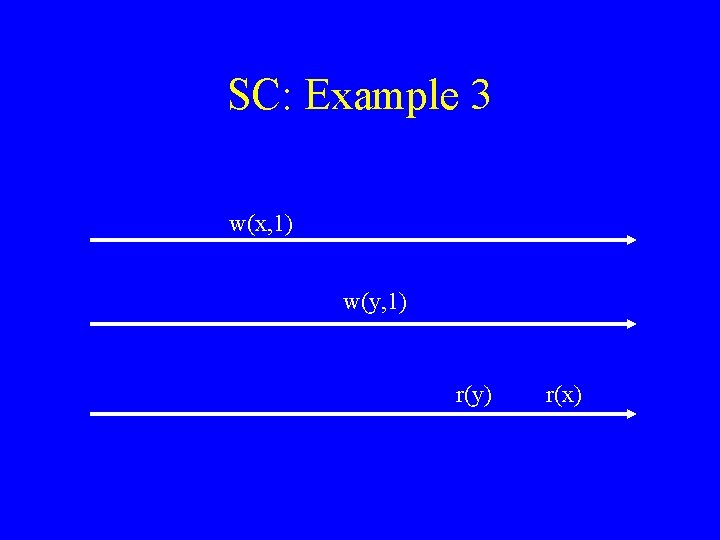

SC: Example 3 • Operations: w(x, 1), w(y, 1), r(y), r(x).

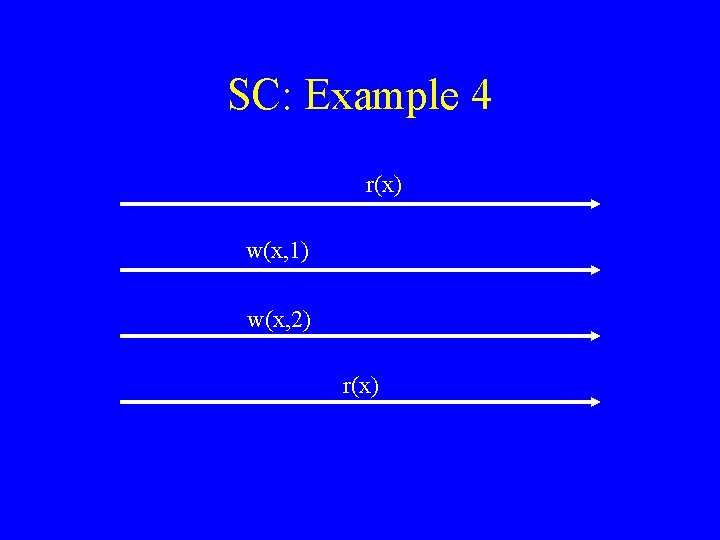

SC: Example 4 • Operations: r(x), w(x, 1), w(x, 2), r(x).

Implementation • There are many ways of implementing SC. • In fact, some implementations enforce stronger conditions. • We will look at a simple one: the MSI protocol.

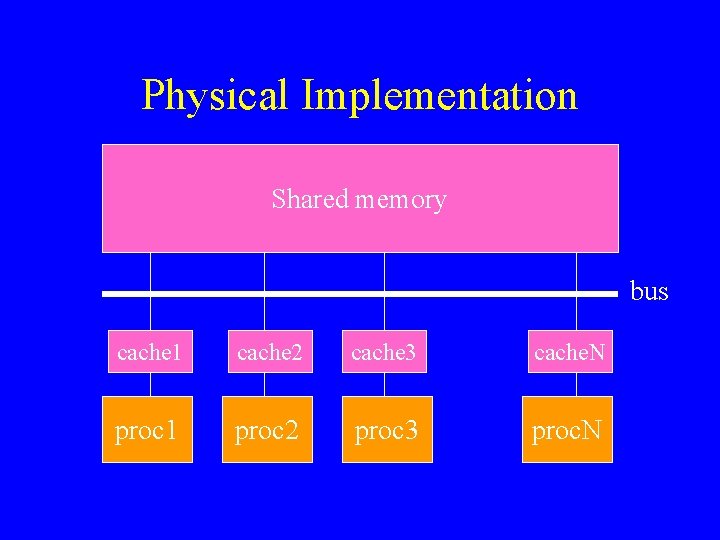


Fundamental Assumption • The bus is a reliable, ordered broadcast bus. – Every message sent by a processor is received by all other processors in the same order. • Also called a snooping bus – Processors (or caches) snoop on the bus.

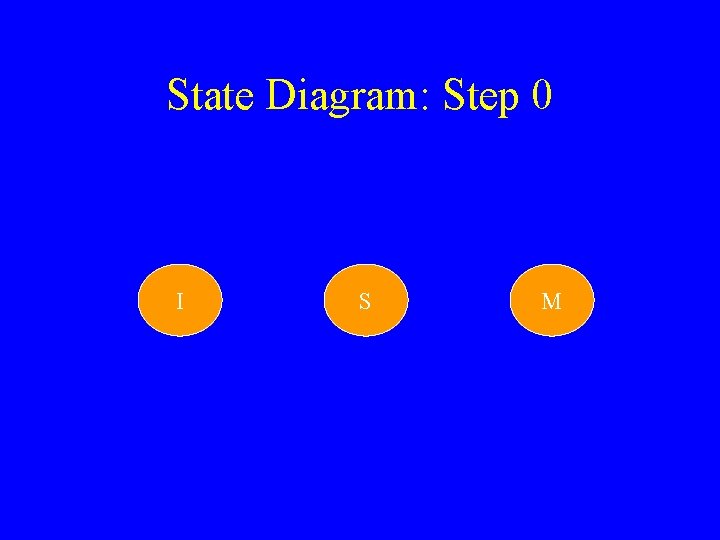

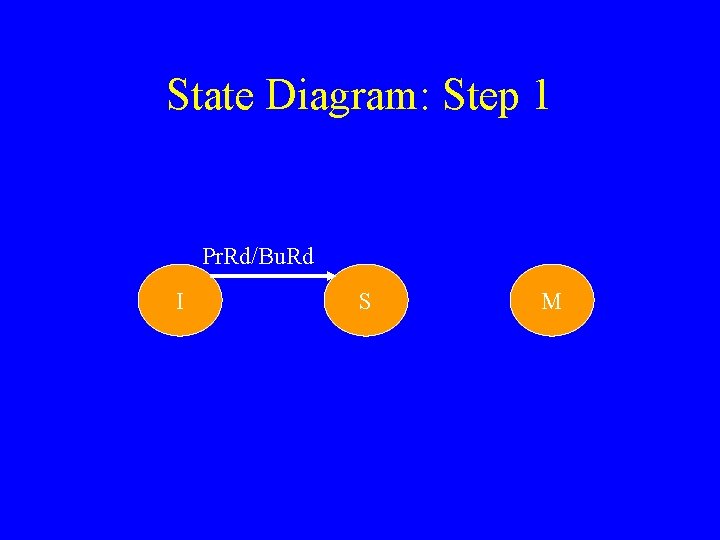

States of a Cache Line • Invalid • Shared – read-only, one of many cached copies • Modified – read-write, sole valid copy

Processor Transactions • processor read(x) • processor write(x)

Bus Transactions • bus read(x) – asks for a copy with no intent to modify • bus read-exclusive(x) – asks for a copy with intent to modify

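State Diagram: Step 0 • States: I (Invalid), S (Shared), M (Modified). • Edge labels read event/action: the processor or bus event this cache observes, then the bus action it takes (- means none).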

State Diagram: Step 1 • I → S: PrRd/BusRd

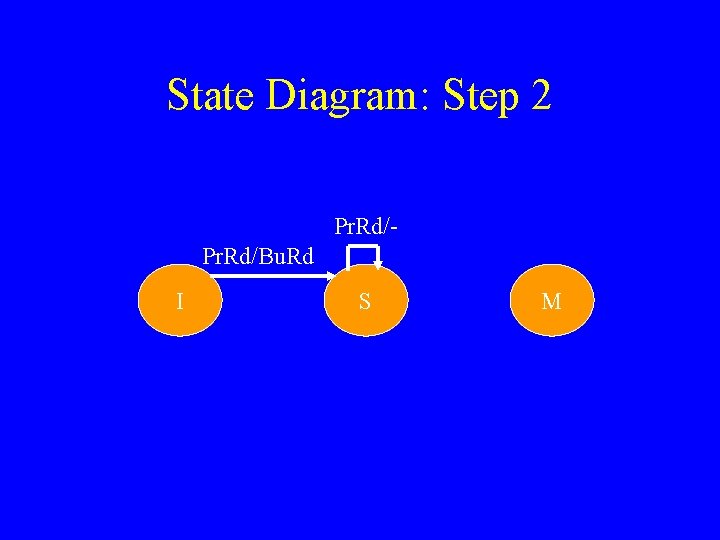

State Diagram: Step 2 • I → S: PrRd/BusRd • S → S: PrRd/-

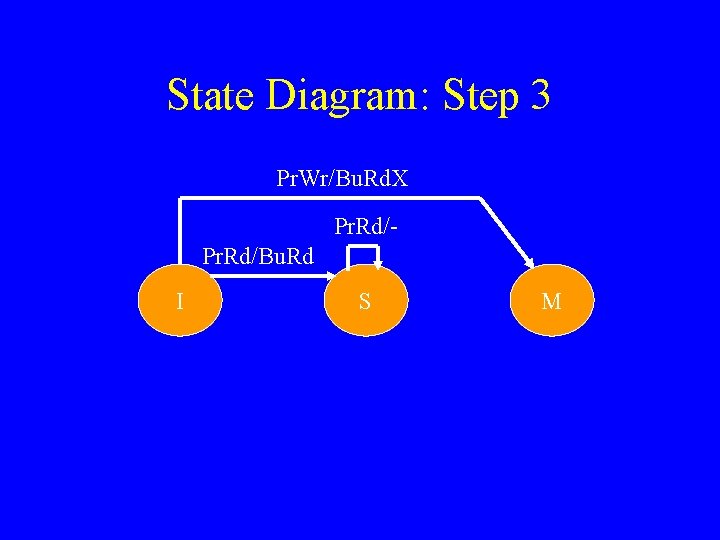

State Diagram: Step 3 • I → S: PrRd/BusRd • S → S: PrRd/- • I → M: PrWr/BusRdX

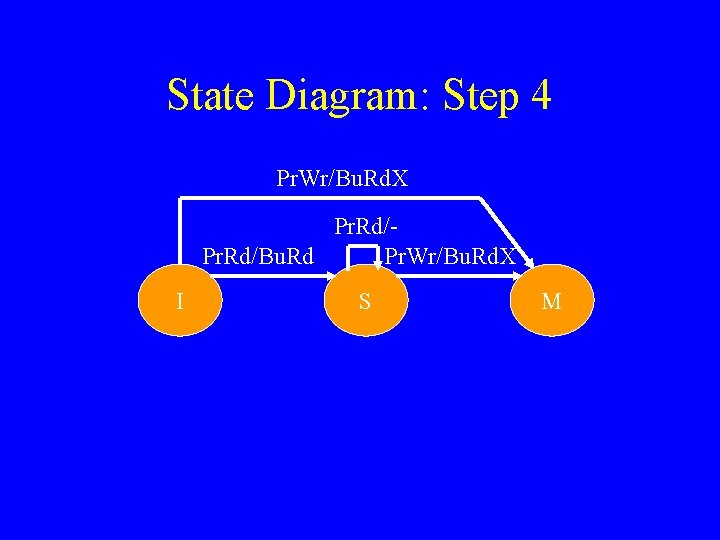

State Diagram: Step 4 • I → S: PrRd/BusRd • S → S: PrRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX

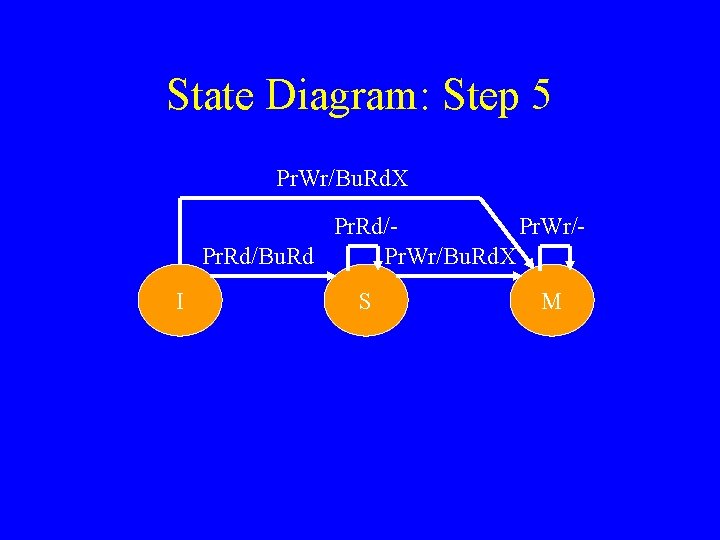

State Diagram: Step 5 • I → S: PrRd/BusRd • S → S: PrRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX • M → M: PrRd/-, PrWr/-

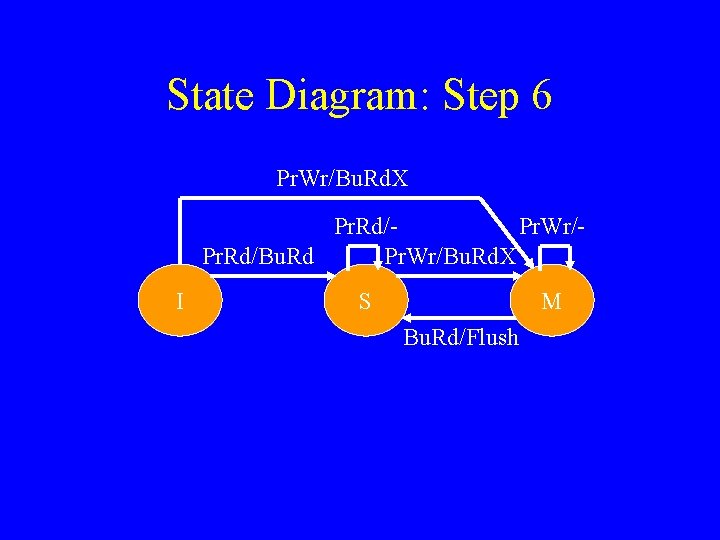

State Diagram: Step 6 • I → S: PrRd/BusRd • S → S: PrRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX • M → M: PrRd/-, PrWr/- • M → S: BusRd/Flush (the cache supplies its modified copy on the bus)

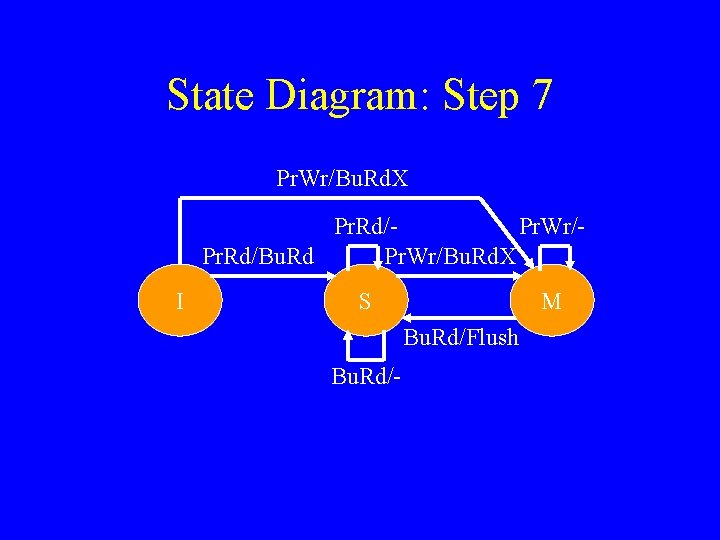

State Diagram: Step 7 • I → S: PrRd/BusRd • S → S: PrRd/-, BusRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX • M → M: PrRd/-, PrWr/- • M → S: BusRd/Flush

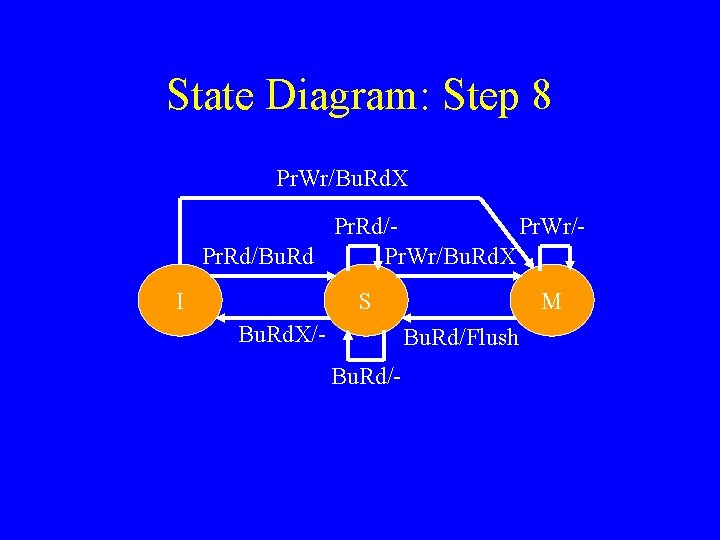

State Diagram: Step 8 • I → S: PrRd/BusRd • S → S: PrRd/-, BusRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX • M → M: PrRd/-, PrWr/- • M → S: BusRd/Flush • S → I: BusRdX/-

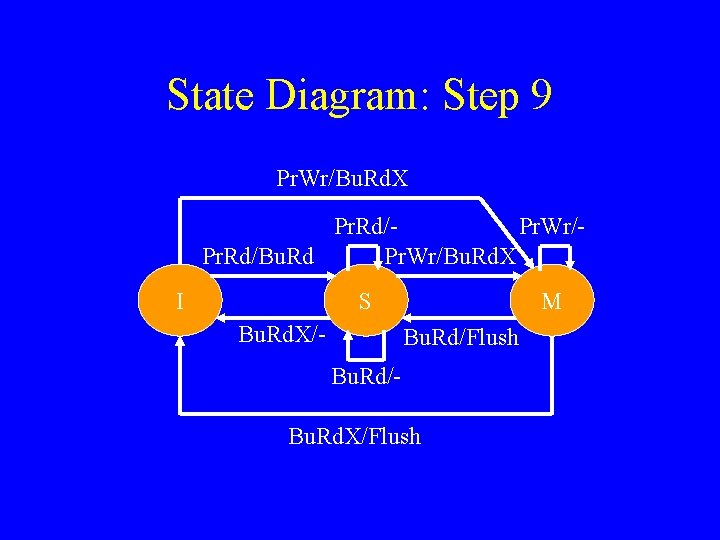

State Diagram: Step 9 • I → S: PrRd/BusRd • S → S: PrRd/-, BusRd/- • I → M: PrWr/BusRdX • S → M: PrWr/BusRdX • M → M: PrRd/-, PrWr/- • M → S: BusRd/Flush • S → I: BusRdX/- • M → I: BusRdX/Flush
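The finished MSI diagram can be transcribed into a small simulation. This is a sketch under simplifying assumptions: it tracks the state of a single line x in each cache, does not model data values (a Flush is only logged), and models the ordered broadcast bus by handling one access at a time and delivering each transaction to every other cache. The names `State`, `Cache`, and `Bus` are hypothetical.

```python
from enum import Enum


class State(Enum):
    I = "Invalid"
    S = "Shared"
    M = "Modified"


class Cache:
    """MSI state of one line x in one processor's cache (hypothetical model)."""

    def __init__(self, name):
        self.name = name
        self.state = State.I

    def processor(self, op):
        """Handle PrRd/PrWr; return the bus transaction to issue, or None for '-'."""
        if op == "PrRd":
            if self.state is State.I:
                self.state = State.S       # I -> S: PrRd/BusRd
                return "BusRd"
            return None                    # S or M: PrRd/-
        if op == "PrWr":
            if self.state is State.M:
                return None                # M: PrWr/-
            self.state = State.M           # I or S -> M: PrWr/BusRdX
            return "BusRdX"
        raise ValueError(f"unknown processor op: {op}")

    def snoop(self, txn):
        """React to another cache's BusRd/BusRdX; return 'Flush' or None for '-'."""
        if self.state is State.M:
            # M -> S: BusRd/Flush, M -> I: BusRdX/Flush
            self.state = State.S if txn == "BusRd" else State.I
            return "Flush"
        if self.state is State.S and txn == "BusRdX":
            self.state = State.I           # S -> I: BusRdX/-
        return None                        # S: BusRd/-; I: nothing to do


class Bus:
    """Ordered broadcast: accesses are handled one at a time, in a single order."""

    def __init__(self, caches):
        self.caches = caches
        self.log = []                      # global order of bus activity

    def access(self, cache, op):
        txn = cache.processor(op)
        if txn is None:
            return                         # hit: no bus transaction
        self.log.append((cache.name, txn))
        for other in self.caches:          # every other cache snoops the transaction
            if other is not cache and other.snoop(txn) == "Flush":
                self.log.append((other.name, "Flush"))


caches = [Cache("P0"), Cache("P1"), Cache("P2")]
bus = Bus(caches)
bus.access(caches[0], "PrRd")   # P0: I -> S
bus.access(caches[1], "PrRd")   # P1: I -> S
bus.access(caches[1], "PrWr")   # P1: S -> M, P0: S -> I
bus.access(caches[2], "PrRd")   # P2: I -> S, P1 flushes and goes M -> S
print([c.state.name for c in caches])  # ['I', 'S', 'S']
print(bus.log)
```

The split into `processor` and `snoop` mirrors the two kinds of edge labels in the diagram: processor-initiated transitions (PrRd, PrWr) and bus-observed transitions (BusRd, BusRdX).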

In Reality • Most machines use a slightly more complicated protocol (4 states instead of 3). • See architecture books (MESI protocol).

Problem: False Sharing • Occurs when two or more processors access different data in the same cache line, and at least one of them writes. • Leads to a ping-pong effect: the line moves back and forth between the caches.

![False Sharing: Example (1 of 3)](http://slidetodoc.com/presentation_image_h2/106db9ffcf9cd6efd343c2b1afb8a49a/image-39.jpg)

False Sharing: Example (1 of 3) `for (i = 0; i < n; i++) a[i] = b[i];` • Let’s assume we parallelize the code: – p = 2 – each element of a takes 4 words – a cache line has 32 words, so one line holds 32 / 4 = 8 elements of a
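The slide does not say how the loop iterations are divided between the two processors, so this sketch compares two hypothetical schedules: cyclic (iteration i on processor i % p) and blocked (contiguous chunks). It assumes a[0] starts at a cache-line boundary and reports which lines of a end up written by both processors. The functions `line_of`, `cyclic`, `blocked`, and `falsely_shared_lines` are illustrative names only.

```python
WORDS_PER_ELEMENT = 4                                   # from the slide
WORDS_PER_LINE = 32                                     # from the slide
ELEMS_PER_LINE = WORDS_PER_LINE // WORDS_PER_ELEMENT    # 8 elements of a per line


def line_of(i):
    """Cache line holding a[i], assuming a[0] starts at a line boundary."""
    return i // ELEMS_PER_LINE


def cyclic(i, n, p):
    """Hypothetical schedule: iteration i runs on processor i % p."""
    return i % p


def blocked(i, n, p):
    """Hypothetical schedule: contiguous chunks of ceil(n / p) iterations."""
    return i // -(-n // p)


def falsely_shared_lines(n, p, owner):
    """Return the lines of a[] that are written by more than one processor."""
    writers = {}
    for i in range(n):
        writers.setdefault(line_of(i), set()).add(owner(i, n, p))
    return sorted(line for line, procs in writers.items() if len(procs) > 1)


print(falsely_shared_lines(64, 2, cyclic))   # [0, 1, 2, 3, 4, 5, 6, 7]
print(falsely_shared_lines(64, 2, blocked))  # []
print(falsely_shared_lines(60, 2, blocked))  # [3]
```

With a cyclic split every line is written by both processors; with a blocked split only a line that straddles the chunk boundary can be shared, as happens for n = 60, where the boundary falls inside line 3.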

![False Sharing: Example (2 of 3)](http://slidetodoc.com/presentation_image_h2/106db9ffcf9cd6efd343c2b1afb8a49a/image-40.jpg)

False Sharing: Example (2 of 3) • Figure: one cache line holding a[0] through a[7]; some of these elements are written by processor 0 and others by processor 1.

![False Sharing: Example (3 of 3)](http://slidetodoc.com/presentation_image_h2/106db9ffcf9cd6efd343c2b1afb8a49a/image-41.jpg)

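False Sharing: Example (3 of 3) • Figure: the caches of P0 and P1 each hold a copy of the same line (elements a[0] to a[5] shown); a write by one processor invalidates ("inv") the other's copy, and the line's data is then transferred between the caches.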
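The ping-pong effect can be estimated by counting BusRdX transactions, since under MSI a write to a line that this cache does not hold in M issues a BusRdX and invalidates any other copy. This is a sketch under loud assumptions: a hypothetical lock-step schedule in which P0 and P1 alternate one iteration at a time, the reads of b and all other traffic are ignored, and the layout of this example (8 elements of a per line) is reused. The schedules `cyclic` and `blocked` and the function `count_bus_rdx` are illustrative names.

```python
def cyclic(i, n, p):
    """Hypothetical schedule: iteration i runs on processor i % p."""
    return i % p


def blocked(i, n, p):
    """Hypothetical schedule: contiguous chunks of ceil(n / p) iterations."""
    return i // -(-n // p)


def count_bus_rdx(n, p, owner, elems_per_line=8):
    """Count BusRdX transactions caused by the writes a[i] = b[i] under MSI.

    Assumes a lock-step schedule (each processor executes its k-th iteration in
    round k, processors in index order) and ignores the reads of b.
    """
    work = [[i for i in range(n) if owner(i, n, p) == q] for q in range(p)]
    modified_by = {}          # line index -> processor whose cache holds it in M
    bus_rdx = 0
    for k in range(max(len(w) for w in work)):
        for q in range(p):
            if k < len(work[q]):
                line = work[q][k] // elems_per_line
                if modified_by.get(line) != q:   # not in M here: PrWr/BusRdX
                    bus_rdx += 1                 # any other copy is invalidated
                    modified_by[line] = q
    return bus_rdx


print(count_bus_rdx(64, 2, cyclic))   # 64: every single write needs a BusRdX
print(count_bus_rdx(64, 2, blocked))  # 8: one BusRdX per line
```

In this model the cyclic split turns all 64 writes into bus transactions, while the blocked split pays only one BusRdX per line; a blocked split over very small n (for example n = 8, where both chunks fall in one line) would ping-pong just like the cyclic one.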

Summary • Sequential consistency. • Bus-based coherence protocols. • False sharing.
