Early Hearing Detection and Intervention (EHDI) Interoperability Pilot

Presentation to the CDC Health Information Innovation Consortium
Xidong Deng (1) and Dina Dickerson (2)
August 11, 2015
(1) National Center on Birth Defects and Developmental Disabilities, Centers for Disease Control and Prevention
(2) Oregon Health Authority

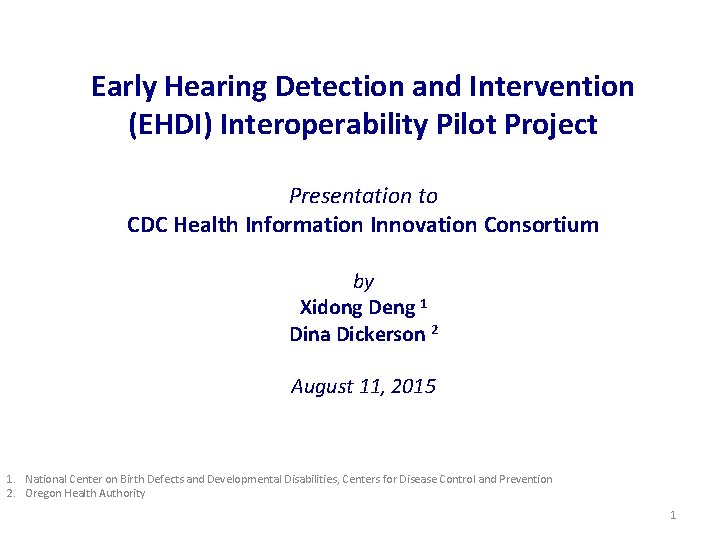

EHDI-IS and EHDI HIT Standards
• State-based EHDI information systems (EHDI-IS) are capable of identifying, matching, collecting, and reporting data on all occurrent births through the three components of the EHDI process (screening, diagnosis, and early intervention).

Standards (name; type; description; standard):
[1] Newborn Screening Coding and Terminology Guide. Type: Data. Provides codes and terminology for newborn hearing screening procedures, results, and risk factors for infant hearing loss. Standard: LOINC/SNOMED CT.
[2] HL7 Version 2.6 Implementation Guide: Early Hearing Detection and Intervention (EHDI) Results. Type: Message. Standardizes how newborn hearing screening information is transmitted from a point-of-care device to an interested consumer, such as public health. Standard: HL7 v2.
[3] IHE Quality, Research and Public Health Technical Framework Supplement: Newborn Admission Notification Information (NANI). Type: Message. Describes the content needed to communicate a timely newborn admission notification electronically from a birthing facility to public health, for use by newborn screening programs. Standard: HL7 v2 and v3 messages.
[4] IHE Quality, Research and Public Health Technical Framework Supplement: Early Hearing Detection and Intervention (EHDI). Type: Document. Defines how to exchange the data required to populate a newborn's Hearing Plan of Care document. Standard: HL7 CDA R2.
[5] HL7 EHR-System Public Health Functional Profile. Type: Functional. Defines functional requirements and criteria to support public health clinical information collection, management, and exchange for specific public health programs (domains). Standard: HL7 EHR-S Functional Model.
[6] IHE Quality, Research and Public Health Technical Framework Supplement: Quality Measure Execution - Early Hearing (QME-EH). Type: Quality. Describes the content needed to communicate patient-level data to electronically monitor the performance of EHDI initiatives for newborns and young children. Standard: HL7 QRDA.
[7] Hearing Screening Before Hospital Discharge (NQF 1354/CMS31v3). Type: Quality. Electronic clinical quality measure definition for newborn hearing screening quality reporting, adopted by the CMS EHR Incentive Program for Hospitals and Critical Access Hospitals. Standard: HL7 HQMF.

![EHDI Standard-based Information Exchange EHDI Standards-based Information Exchange [3, 4] [4] Provider’s EHR Hospital EHDI Standard-based Information Exchange EHDI Standards-based Information Exchange [3, 4] [4] Provider’s EHR Hospital](http://slidetodoc.com/presentation_image_h2/d39e06fc9d8fcb8f8517035b5e700a15/image-3.jpg)

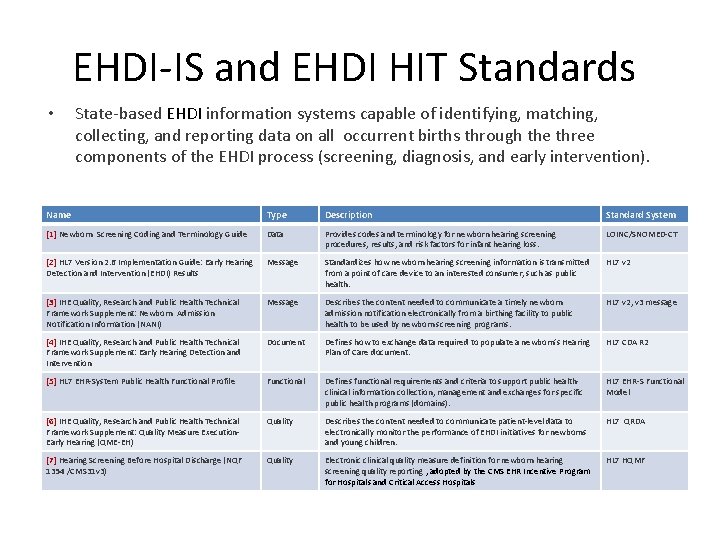

Figure: EHDI Standards-based Information Exchange. Components: Labor & Delivery, Hospital EHR System, Newborn Hearing Screening Device, Provider's EHR, State EHDI Information System, and Federal Reporting, connected by standards [2] through [6].

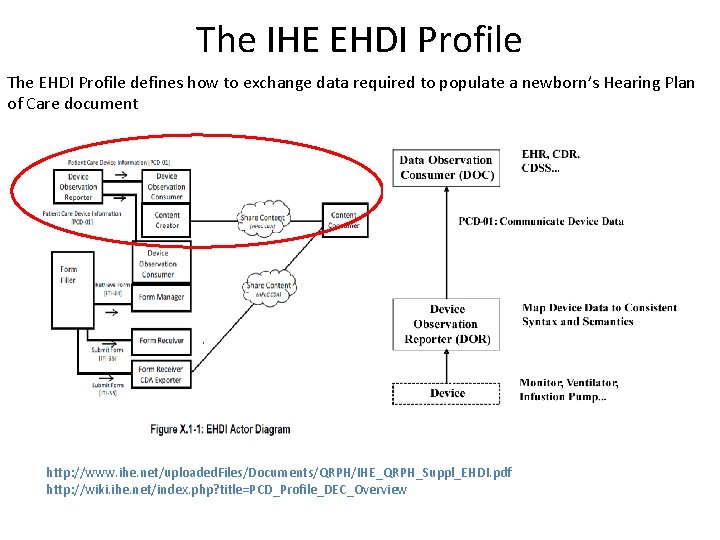

The IHE EHDI Profile
The EHDI Profile defines how to exchange the data required to populate a newborn's Hearing Plan of Care document.
http://www.ihe.net/uploadedFiles/Documents/QRPH/IHE_QRPH_Suppl_EHDI.pdf
http://wiki.ihe.net/index.php?title=PCD_Profile_DEC_Overview
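The Hearing Plan of Care (HPoC) that the EHDI Profile populates is an HL7 CDA R2 document (standard [4] above). A minimal sketch of the kind of header an HPoC builder fills from newborn demographics, using Python's standard `xml.etree.ElementTree`, follows. It is deliberately partial and not a valid HPoC: `build_hpoc_header` and `HPOC_TEMPLATE_ID` are hypothetical placeholders, and required CDA elements (`code`, `confidentialityCode`, `author`, `custodian`, the patient `id`, and the structured body) are omitted. Only the CDA R2 `typeId` and the HL7 AdministrativeGender code system OID are fixed values from the base standard; the real template and section rules come from the IHE EHDI supplement.

```python
import xml.etree.ElementTree as ET

CDA_NS = "urn:hl7-org:v3"
ET.register_namespace("", CDA_NS)  # serialize CDA elements without a prefix

# Hypothetical placeholder; the real HPoC templateId comes from the IHE EHDI supplement.
HPOC_TEMPLATE_ID = "HYPOTHETICAL-HPOC-TEMPLATE-OID"


def q(tag: str) -> str:
    """Qualify a tag name with the CDA R2 namespace."""
    return f"{{{CDA_NS}}}{tag}"


def build_hpoc_header(doc_id: str, given: str, family: str,
                      gender: str, birth_date: str, created: str) -> ET.Element:
    """Build a partial CDA R2 header for a hypothetical HPoC (not schema-valid)."""
    doc = ET.Element(q("ClinicalDocument"))
    # typeId is the same for every CDA R2 document.
    ET.SubElement(doc, q("typeId"), root="2.16.840.1.113883.1.3",
                  extension="POCD_HD000040")
    ET.SubElement(doc, q("templateId"), root=HPOC_TEMPLATE_ID)
    ET.SubElement(doc, q("id"), root=doc_id)
    ET.SubElement(doc, q("title")).text = "Hearing Plan of Care"
    ET.SubElement(doc, q("effectiveTime"), value=created)  # e.g. "20150603"

    # recordTarget/patientRole/patient carries the newborn's demographics.
    patient_role = ET.SubElement(ET.SubElement(doc, q("recordTarget")), q("patientRole"))
    patient = ET.SubElement(patient_role, q("patient"))
    name = ET.SubElement(patient, q("name"))
    ET.SubElement(name, q("given")).text = given
    ET.SubElement(name, q("family")).text = family
    # Codes from HL7 AdministrativeGender, e.g. "F" or "M".
    ET.SubElement(patient, q("administrativeGenderCode"), code=gender,
                  codeSystem="2.16.840.1.113883.5.1")
    ET.SubElement(patient, q("birthTime"), value=birth_date)  # YYYYMMDD
    return doc


if __name__ == "__main__":
    header = build_hpoc_header("2.25.12345", "Baby Girl", "Doe", "F", "20150601", "20150603")
    print(ET.tostring(header, encoding="unicode"))
```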

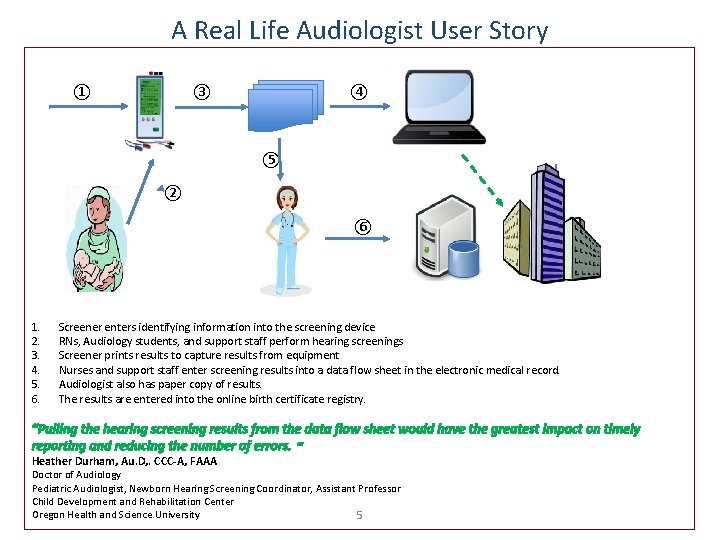

A Real-Life Audiologist User Story
1. Screener enters identifying information into the screening device.
2. RNs, audiology students, and support staff perform hearing screenings.
3. Screener prints results to capture them from the equipment.
4. Nurses and support staff enter screening results into a data flow sheet in the electronic medical record.
5. Audiologist also has a paper copy of the results.
6. The results are entered into the online birth certificate registry.

"Pulling the hearing screening results from the data flow sheet would have the greatest impact on timely reporting and reducing the number of errors."
Heather Durham, Au.D., CCC-A, FAAA, Doctor of Audiology; Pediatric Audiologist, Newborn Hearing Screening Coordinator, and Assistant Professor, Child Development and Rehabilitation Center, Oregon Health and Science University

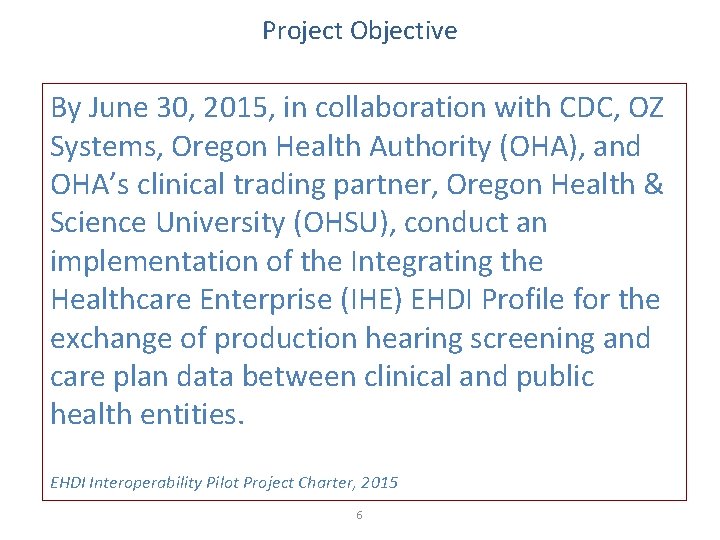

Project Objective
By June 30, 2015, in collaboration with CDC, OZ Systems, the Oregon Health Authority (OHA), and OHA's clinical trading partner, Oregon Health & Science University (OHSU), conduct an implementation of the Integrating the Healthcare Enterprise (IHE) EHDI Profile for the exchange of production hearing screening and care plan data between clinical and public health entities.
(EHDI Interoperability Pilot Project Charter, 2015)

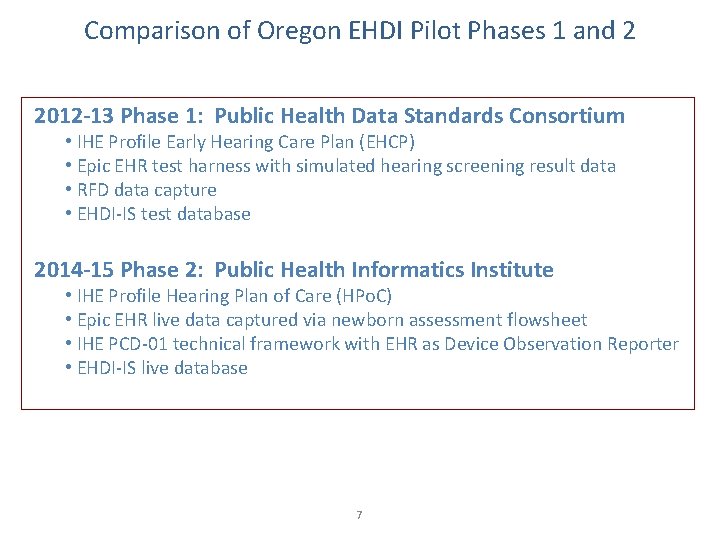

Comparison of Oregon EHDI Pilot Phases 1 and 2
Phase 1 (2012-13): Public Health Data Standards Consortium
• IHE Profile Early Hearing Care Plan (EHCP)
• Epic EHR test harness with simulated hearing screening result data
• RFD (Retrieve Form for Data Capture) data capture
• EHDI-IS test database
Phase 2 (2014-15): Public Health Informatics Institute
• IHE Profile Hearing Plan of Care (HPoC)
• Epic EHR live data captured via the newborn assessment flowsheet
• IHE PCD-01 technical framework with the EHR as Device Observation Reporter
• EHDI-IS live database

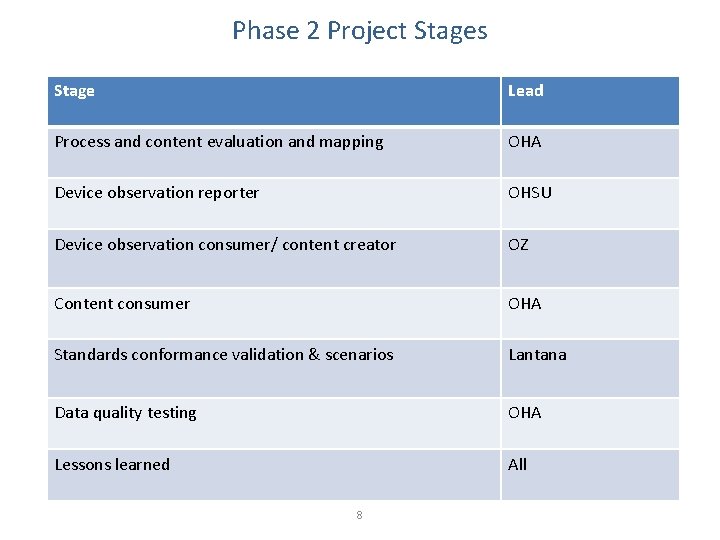

Phase 2 Project Stages (stage: lead)
• Process and content evaluation and mapping: OHA
• Device observation reporter: OHSU
• Device observation consumer/content creator: OZ
• Content consumer: OHA
• Standards conformance validation and scenarios: Lantana
• Data quality testing: OHA
• Lessons learned: All

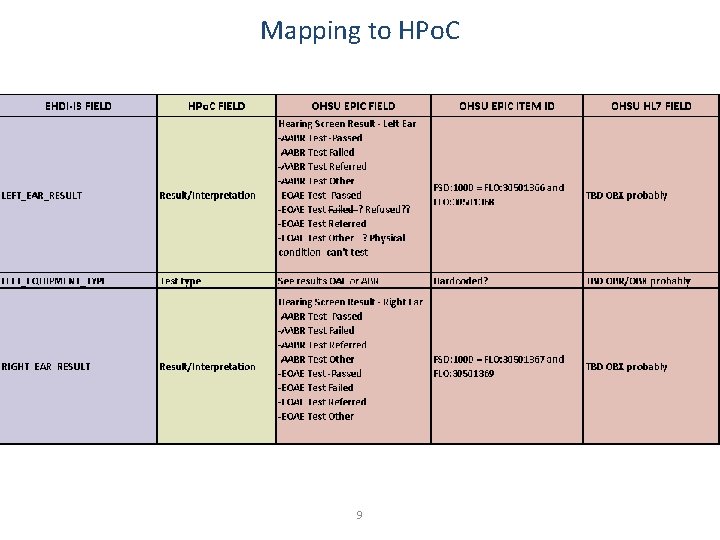

Mapping to HPoC

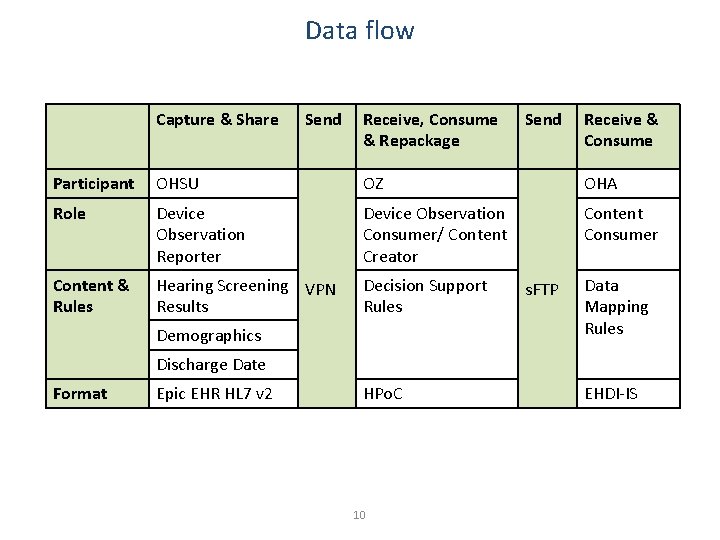

Data Flow
• OHSU (Device Observation Reporter): captures and shares data from the Epic EHR, then sends it as HL7 v2 messages over a VPN.
• OZ (Device Observation Consumer/Content Creator): receives, consumes, and repackages the data into an HPoC, then sends it over sFTP.
• OHA (Content Consumer): receives and consumes the HPoC in the EHDI-IS.
Content and rules in the flow: hearing screening results, demographics, discharge date, decision support rules, data mapping rules, and format.

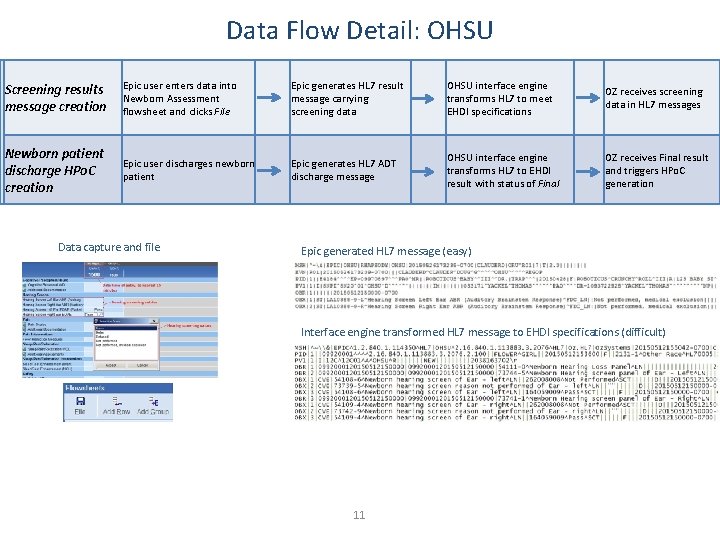

Data Flow Detail: OHSU
Screening results message creation
1. Epic user enters data into the Newborn Assessment flowsheet and clicks File.
2. Epic generates an HL7 result message carrying the screening data.
3. The OHSU interface engine transforms the HL7 message to meet EHDI specifications.
4. OZ receives the screening data in HL7 messages.
Newborn patient discharge and HPoC creation
1. Epic user discharges the newborn patient.
2. Epic generates an HL7 ADT discharge message.
3. The OHSU interface engine transforms the HL7 message into an EHDI result with a status of Final.
4. OZ receives the Final result and triggers HPoC generation.
Data capture and file
• Epic-generated HL7 message: easy
• Interface engine transformation of the HL7 message to EHDI specifications: difficult
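The two OHSU flows above can be sketched as a simplified HL7 v2 ORU^R01 result message that is first sent with a preliminary status and then re-sent with a Final status when an ADT^A03 (discharge) message arrives. This is an illustration under stated assumptions, not the OHSU interface engine's logic: the `ScreeningResult` type, the function names, the application and facility names, and the `HYP-*` observation codes are hypothetical, and a conformant EHDI message would follow the LOINC codes and segment rules of the EHDI implementation guide ([2] above).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScreeningResult:
    """Hypothetical flattened view of one newborn's flowsheet entries."""
    mrn: str
    family: str
    given: str
    dob: str                 # YYYYMMDD
    sex: str                 # e.g. "F" or "M"
    left: Optional[str]      # e.g. "pass", "refer"; None if nothing entered
    right: Optional[str]
    observed_at: str         # YYYYMMDDHHMM


# Hypothetical local codes; a conformant message uses the EHDI guide's LOINC codes.
PANEL_CODE = "HYP-NBHS^Newborn hearing screen^L"
EAR_CODES = {"left": "HYP-L^Hearing screen left ear^L",
             "right": "HYP-R^Hearing screen right ear^L"}


def build_oru(r: ScreeningResult, control_id: str, sent_at: str, status: str) -> str:
    """Build a simplified ORU^R01; status is "P" (preliminary) or "F" (final)."""
    # MSH-1 is the "|" separator itself, so the encoding characters come first.
    msh = "|".join(["MSH", "^~\\&", "EPIC", "OHSU", "EHDI", "OZ", sent_at, "",
                    "ORU^R01^ORU_R01", control_id, "P", "2.6"])
    pid = "|".join(["PID", "1", "", r.mrn, "", f"{r.family}^{r.given}", "", r.dob, r.sex])
    obr = [""] * 25
    obr[0] = "1"              # OBR-1 set ID
    obr[3] = PANEL_CODE       # OBR-4 universal service identifier
    obr[6] = r.observed_at    # OBR-7 observation date/time
    obr[24] = status          # OBR-25 result status
    segments = [msh, pid, "|".join(["OBR"] + obr)]
    set_id = 1
    for ear, value in (("left", r.left), ("right", r.right)):
        if value is None:
            continue  # no OBX for an ear with nothing entered
        # OBX-2 value type, OBX-3 code, OBX-5 value, OBX-11 observation status.
        obx = ["OBX", str(set_id), "ST", EAR_CODES[ear], "", value,
               "", "", "", "", "", status]
        segments.append("|".join(obx))
        set_id += 1
    return "\r".join(segments)  # HL7 v2 segments are separated by carriage returns


def on_discharge(adt: str, latest: ScreeningResult,
                 control_id: str, sent_at: str) -> Optional[str]:
    """Turn an ADT^A03 (discharge) into a Final result; ignore other ADT events."""
    msh = adt.split("\r")[0].split("|")
    if len(msh) < 9 or not msh[8].startswith("ADT^A03"):  # msh[8] is MSH-9, message type
        return None
    return build_oru(latest, control_id, sent_at, status="F")
```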

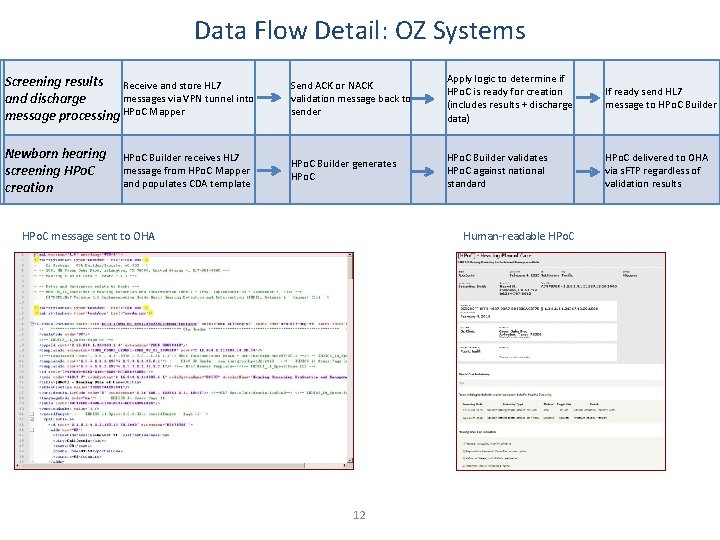

Data Flow Detail: OZ Systems
Screening results and discharge message processing (HPoC Mapper)
1. Receive and store HL7 messages via a VPN tunnel into the HPoC Mapper.
2. Send an ACK or NACK validation message back to the sender.
3. Apply logic to determine whether the HPoC is ready for creation (includes results + discharge data).
4. If ready, send an HL7 message to the HPoC Builder.
Newborn hearing screening HPoC creation (HPoC Builder)
1. The HPoC Builder receives the HL7 message from the HPoC Mapper and populates the CDA template.
2. The HPoC Builder generates the HPoC.
3. The HPoC Builder validates the HPoC against the national standard.
4. The HPoC is delivered to OHA via sFTP regardless of validation results.
Output: HPoC message sent to OHA; human-readable HPoC.
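The HPoC Mapper's first decisions, acknowledging each inbound message and deciding whether an HPoC can be built, can be sketched as below. Assumptions, none of which the deck specifies: validation is reduced to checking for MSH, PID, and OBR segments; the "NACK" is modeled as an HL7 v2 acknowledgment with MSA-1 = `AE`; and readiness is keyed on OBR-25 = `F`, the Final status the OHSU interface engine sets after discharge. The function names are hypothetical, and OZ Systems' actual rules are not described here.

```python
def split_msh(message: str) -> list[str]:
    """Fields of the MSH segment; index n holds MSH-(n+1) because MSH-1 is "|"."""
    if not message.startswith("MSH|"):
        raise ValueError("no MSH segment; the sender cannot be acknowledged")
    msh = message.split("\r")[0].split("|")
    if len(msh) < 12:
        raise ValueError("MSH segment too short")
    return msh


def build_ack(message: str, accepted: bool, sent_at: str) -> str:
    msh = split_msh(message)
    control_id = msh[9]  # MSH-10 of the message being acknowledged
    # Reply to the sender: swap sending (MSH-3/4) and receiving (MSH-5/6) fields.
    ack_msh = "|".join(["MSH", msh[1], msh[4], msh[5], msh[2], msh[3], sent_at, "",
                        "ACK", "ACK" + control_id, msh[10], msh[11]])
    # MSA-1: "AA" = application accept; "AE" = application error (the "NACK").
    # A fuller negative acknowledgment would also carry an ERR segment.
    msa = "|".join(["MSA", "AA" if accepted else "AE", control_id])
    return ack_msh + "\r" + msa


def handle_result(message: str, sent_at: str) -> tuple[str, bool]:
    """Return (acknowledgment, ready_for_hpoc) for one inbound result message."""
    segments = [s.split("|") for s in message.split("\r") if s]
    names = [s[0] for s in segments]
    valid = "PID" in names and "OBR" in names  # deliberately minimal check
    ack = build_ack(message, accepted=valid, sent_at=sent_at)
    if not valid:
        return ack, False
    obr = next(s for s in segments if s[0] == "OBR")
    # OBR-25 = "F": the Final result sent after discharge triggers the HPoC.
    ready = len(obr) > 25 and obr[25] == "F"
    return ack, ready
```

Every message gets an acknowledgment whether or not it is ready, which keeps transport-level feedback to the sender separate from the decision to build the document.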

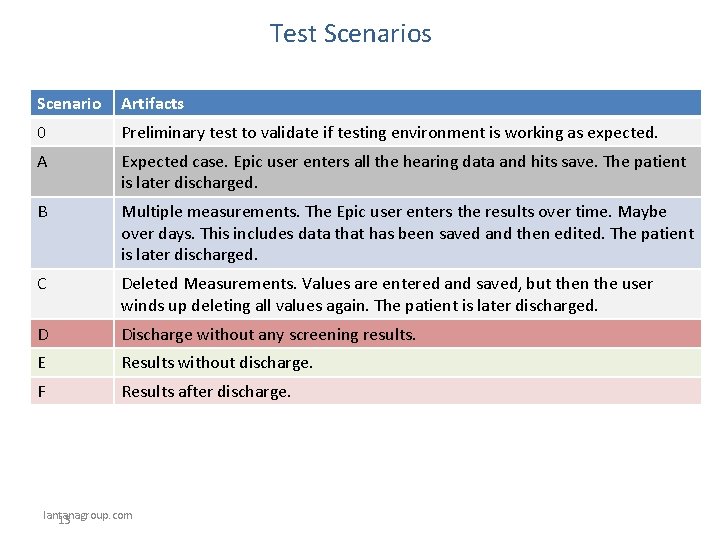

Test Scenarios
• 0: Preliminary test to validate whether the testing environment is working as expected.
• A: Expected case. The Epic user enters all the hearing data and hits Save. The patient is later discharged.
• B: Multiple measurements. The Epic user enters the results over time, possibly over several days, including data that has been saved and then edited. The patient is later discharged.
• C: Deleted measurements. Values are entered and saved, but the user then deletes all of them again. The patient is later discharged.
• D: Discharge without any screening results.
• E: Results without discharge.
• F: Results after discharge.
Source: lantanagroup.com
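The expected HPoC outcome for each scenario can be written as a small replay function that test data is checked against. This is a sketch under two assumptions the deck does not state: the latest value entered for an ear wins, and the HPoC reflects only results received before the first discharge. Under those assumptions it agrees with the validation table's outcomes for scenarios D, E, and F; deletions (scenario C) are left out because the deck does not say how they were handled. `expected_hpoc` and the event tuples are hypothetical.

```python
from typing import Optional

NO_INFO = "no information"


def expected_hpoc(events: list[tuple]) -> Optional[dict[str, str]]:
    """Replay ("result", ear, value) and ("discharge",) events for one newborn.

    Returns the per-ear outcomes the HPoC should carry, or None if no HPoC is expected.
    """
    latest: dict[str, str] = {}
    for event in events:
        if event[0] == "result":
            _, ear, value = event
            latest[ear] = value  # assumption: a later entry or edit replaces the earlier one
        elif event[0] == "discharge":
            # Assumption: the HPoC reflects only results received before discharge.
            return {ear: latest.get(ear, NO_INFO) for ear in ("L", "R")}
        else:
            raise ValueError(f"unknown event {event!r}")
    return None  # results without discharge (scenario E): no HPoC
```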

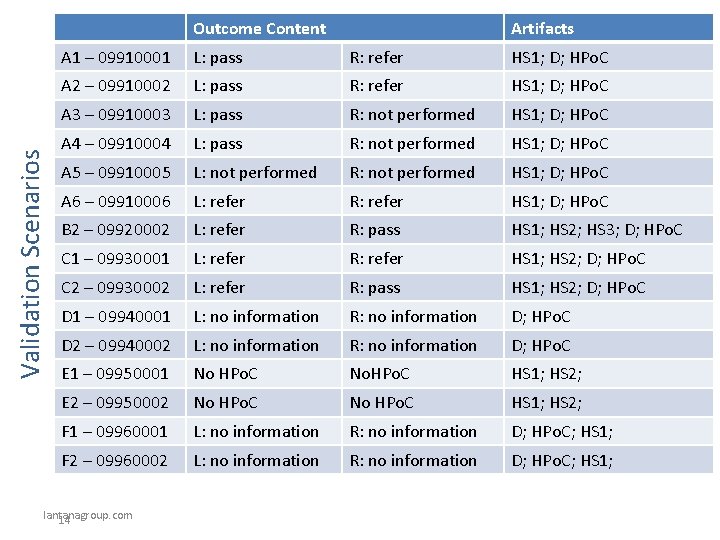

Validation Scenarios
Scenario (patient ID) | Outcome | Content artifacts
A1 (09910001) | L: pass, R: refer | HS1; D; HPoC
A2 (09910002) | L: pass, R: refer | HS1; D; HPoC
A3 (09910003) | L: pass, R: not performed | HS1; D; HPoC
A4 (09910004) | L: pass, R: not performed | HS1; D; HPoC
A5 (09910005) | L: not performed, R: not performed | HS1; D; HPoC
A6 (09910006) | L: refer, R: refer | HS1; D; HPoC
B2 (09920002) | L: refer, R: pass | HS1; HS2; HS3; D; HPoC
C1 (09930001) | L: refer, R: refer | HS1; HS2; D; HPoC
C2 (09930002) | L: refer, R: pass | HS1; HS2; D; HPoC
D1 (09940001) | L: no information, R: no information | D; HPoC
D2 (09940002) | L: no information, R: no information | D; HPoC
E1 (09950001) | No HPoC | HS1; HS2
E2 (09950002) | No HPoC | HS1; HS2
F1 (09960001) | L: no information, R: no information | D; HPoC; HS1
F2 (09960002) | L: no information, R: no information | D; HPoC; HS1

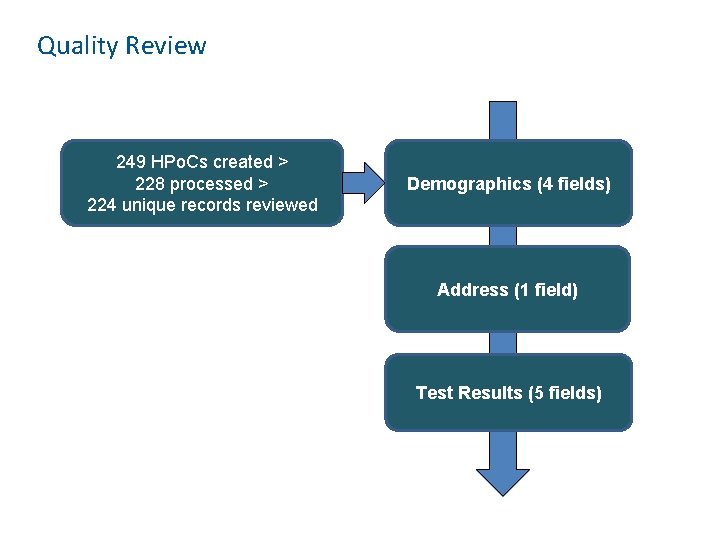

Quality Review
249 HPoCs created → 228 processed → 224 unique records reviewed
Fields reviewed: demographics (4 fields), address (1 field), test results (5 fields)

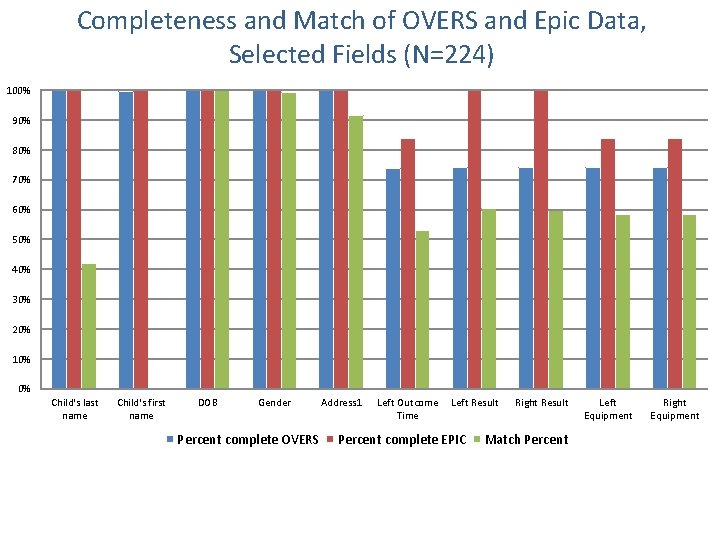

Figure: Completeness and Match of OVERS and Epic Data, Selected Fields (N=224). Bar chart (0 to 100%) of percent complete in OVERS, percent complete in Epic, and match percent for child's last name, child's first name, DOB, gender, Address 1, left outcome time, left result, right result, left equipment, and right equipment.
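The completeness and match measures in the figure can be computed per field roughly as follows. The deck gives no formulas, so these definitions are assumptions: a value is complete when it is non-blank; two values match when both are non-blank and equal after trimming and case-folding; and percentages are taken over records present in both OVERS and Epic, paired by a shared record ID. The field names and `field_quality` are hypothetical.

```python
FIELDS = ["last_name", "first_name", "dob", "gender", "address1",
          "left_outcome_time", "left_result", "right_result",
          "left_equipment", "right_equipment"]


def _norm(value):
    """Blank or missing values count as incomplete; others compare case-insensitively."""
    if not isinstance(value, str) or not value.strip():
        return None
    return value.strip().casefold()


def field_quality(overs: dict, epic: dict, fields=FIELDS) -> dict:
    """Percent complete in each source and percent matching, per field.

    `overs` and `epic` map a record ID to a dict of field name -> string value.
    """
    ids = sorted(set(overs) & set(epic))  # records present in both sources
    if not ids:
        raise ValueError("no records in common")
    n = len(ids)
    report = {}
    for f in fields:
        o = [_norm(overs[i].get(f)) for i in ids]
        e = [_norm(epic[i].get(f)) for i in ids]
        report[f] = {
            "complete_overs": 100 * sum(v is not None for v in o) / n,
            "complete_epic": 100 * sum(v is not None for v in e) / n,
            "match": 100 * sum(a is not None and a == b for a, b in zip(o, e)) / n,
        }
    return report
```

Using one denominator for all three measures keeps the bars comparable: a field cannot show a higher match percent than either source's completeness.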

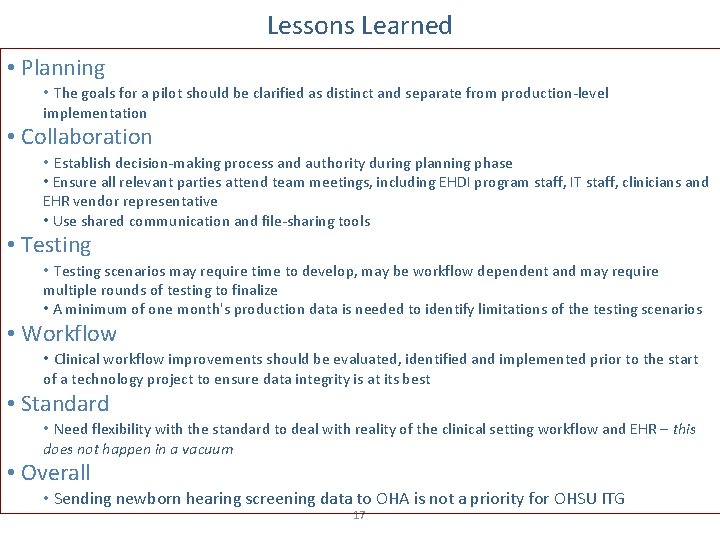

Lessons Learned
• Planning
  • The goals for a pilot should be clarified as distinct and separate from production-level implementation.
• Collaboration
  • Establish the decision-making process and authority during the planning phase.
  • Ensure all relevant parties attend team meetings, including EHDI program staff, IT staff, clinicians, and an EHR vendor representative.
  • Use shared communication and file-sharing tools.
  • Testing scenarios may require time to develop, may be workflow dependent, and may require multiple rounds of testing to finalize.
  • A minimum of one month's production data is needed to identify limitations of the testing scenarios.
• Workflow
  • Clinical workflow improvements should be evaluated, identified, and implemented before a technology project starts, so that data integrity is at its best.
• Standard
  • The standard needs flexibility to deal with the reality of clinical workflow and the EHR; this does not happen in a vacuum.
• Overall
  • Sending newborn hearing screening data to OHA is not a priority for OHSU ITG.

Recommendations
• Develop a testing-mode capability that allows test cases to be re-run while preserving test scenario data, to ease pilot testing for others.
• Move HPoC creation to the State rather than hospitals.
• Focus more on improving data quality and less on transport.
• Funding and timelines need to be realistic.
• Define the minimum/core standards, and allow local control of implementation decisions.
• National/academic standards specifications should be responsive and flexible to real life: rigidity is not realistic, and the need for data trumps fidelity to the model.

Acknowledgements
Oregon Health Authority: Meuy Swafford, Heather Morrow-Almeida, Trong Nguyen, Chia-Hua Yu, Claudia Bingham
Oregon Health & Science University: Heather Durham, Doug Clauder, Tom Drury
OZ Systems: Teresa Finitzo, Sarah Shaw, Ken Pool
Public Health Informatics Institute: Jim Jellison, Trish Miller
Lantana Consulting Group: Lisa Nelson
CDC: John Eichwald, Marcus Gaffney

Questions?
Dina Dickerson, dinapdx@gmail.com, 503.804.8430
Xidong Deng, xdeng@cdc.gov, 404.498.6746
