Early Experiences with NFS over RDMA

Early Experiences with NFS over RDMA
OpenFabrics Workshop, San Francisco, September 25, 2006
Sandia National Laboratories, CA
Helen Y. Chen, Dov Cohen, Joe Kenny, Jeff Decker, and Noah Fischer
hycsw, idcoehn, jcdecke, nfische@sandia.gov
SAND 2006-4293C

Outline
• Motivation
• RDMA technologies
• NFS over RDMA
• Testbed hardware and software
• Preliminary results and analysis
• Conclusion
• Ongoing work and future plans

What is NFS
• A network-attached storage file access protocol layered on RPC, typically carried over UDP/TCP over IP
• Allows files to be shared among multiple clients across LAN and WAN
• Standard, stable, and mature protocol adopted for cluster platforms

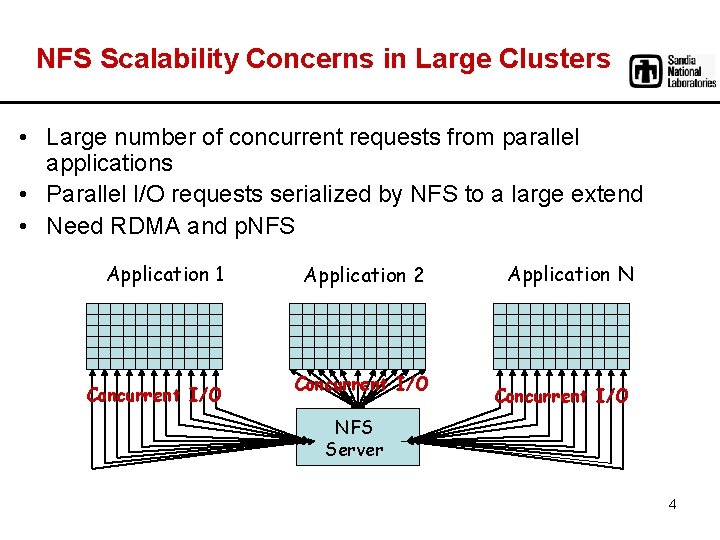

NFS Scalability Concerns in Large Clusters
• Large number of concurrent requests from parallel applications
• Parallel I/O requests are serialized by NFS to a large extent
• Need RDMA and pNFS
[Diagram: Applications 1 through N each issue concurrent I/O to a single NFS server]

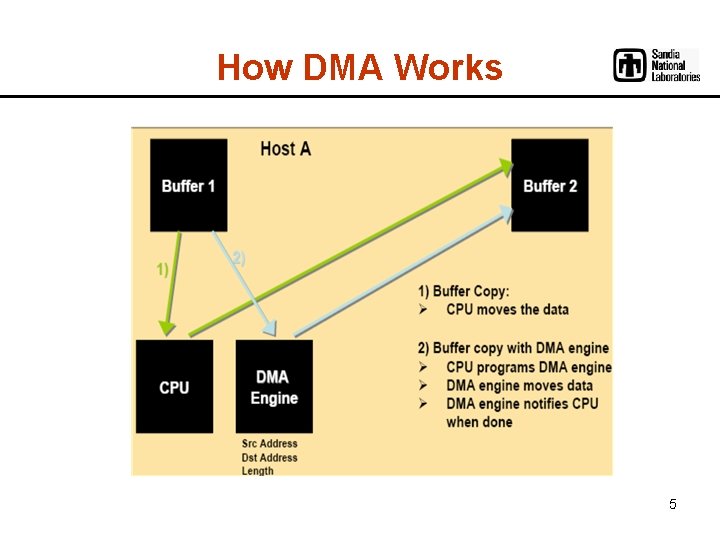

How DMA Works

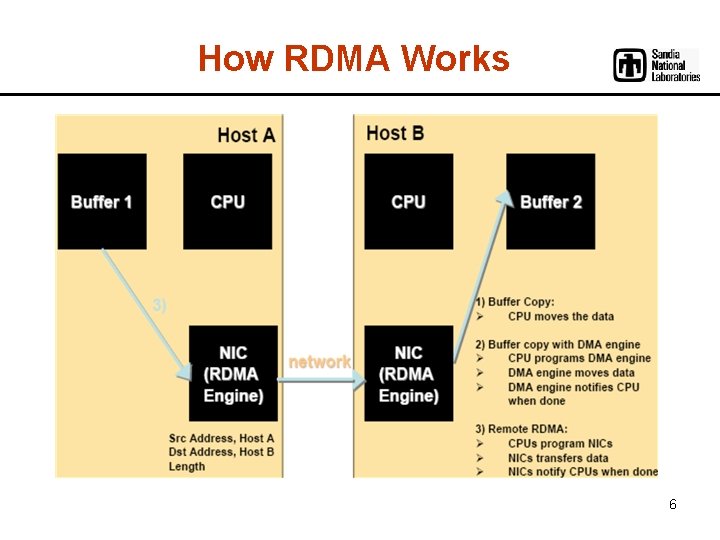

How RDMA Works

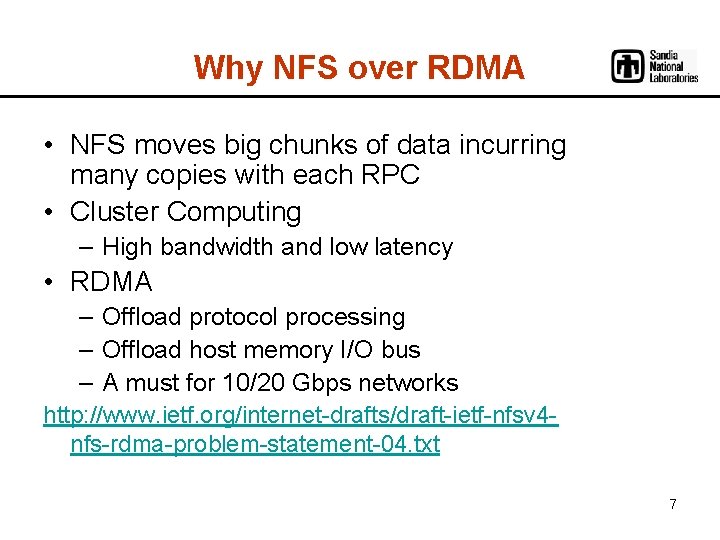

Why NFS over RDMA
• NFS moves big chunks of data, incurring many copies with each RPC
• Cluster computing
  – High bandwidth and low latency
• RDMA
  – Offload protocol processing
  – Offload host memory I/O bus
  – A must for 10/20 Gbps networks
http://www.ietf.org/internet-drafts/draft-ietf-nfsv4-nfs-rdma-problem-statement-04.txt

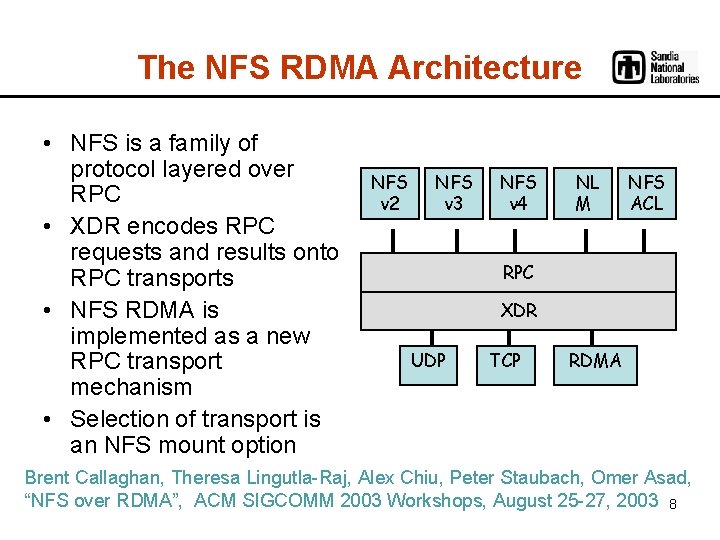

The NFS RDMA Architecture
• NFS is a family of protocols layered over RPC
• XDR encodes RPC requests and results onto RPC transports
• NFS RDMA is implemented as a new RPC transport mechanism
• Selection of transport is an NFS mount option
[Diagram: NFSv2, NFSv3, NFSv4, NLM, and NFS ACL layered over RPC and XDR, which run over the UDP, TCP, or RDMA transports]
Brent Callaghan, Theresa Lingutla-Raj, Alex Chiu, Peter Staubach, Omer Asad, “NFS over RDMA”, ACM SIGCOMM 2003 Workshops, August 25-27, 2003

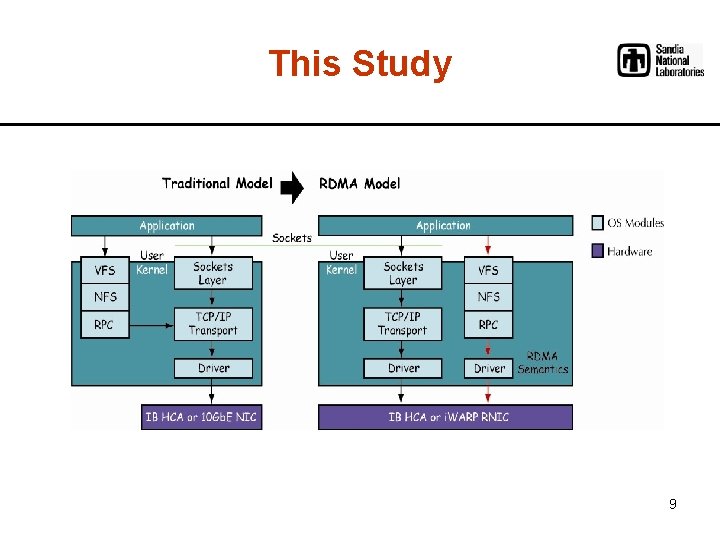

This Study

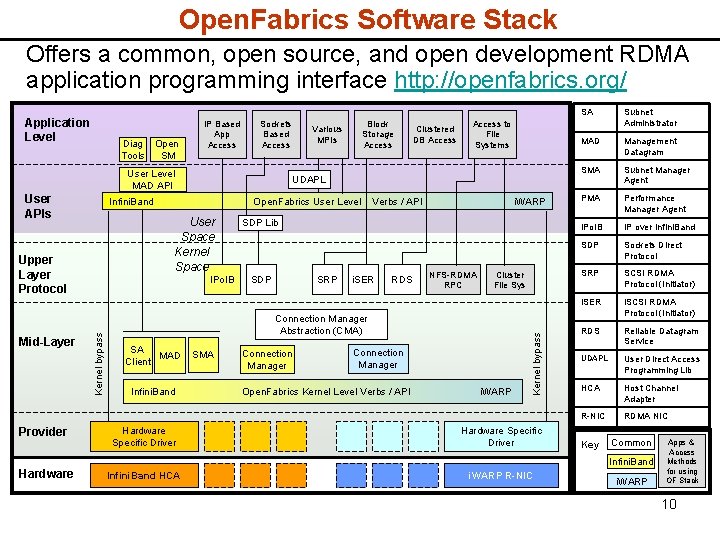

OpenFabrics Software Stack
Offers a common, open source, and open development RDMA application programming interface
http://openfabrics.org/
[Diagram: the OpenFabrics stack, from applications and access methods (IP-based, sockets-based, various MPIs, block storage, clustered DB, file systems), through upper layer protocols (IPoIB, SDP, SRP, iSER, RDS, NFS-RDMA RPC, cluster file systems), the mid-layer (Connection Manager Abstraction, SA client, MAD, SMA, connection managers), and the user-level and kernel-level verbs/API with kernel bypass, down to hardware-specific drivers for InfiniBand HCAs and iWARP R-NICs]
Key:
• SA: Subnet Administrator
• MAD: Management Datagram
• SMA: Subnet Manager Agent
• PMA: Performance Manager Agent
• IPoIB: IP over InfiniBand
• SDP: Sockets Direct Protocol
• SRP: SCSI RDMA Protocol (Initiator)
• iSER: iSCSI RDMA Protocol (Initiator)
• RDS: Reliable Datagram Service
• UDAPL: User Direct Access Programming Lib
• HCA: Host Channel Adapter
• R-NIC: RDMA NIC

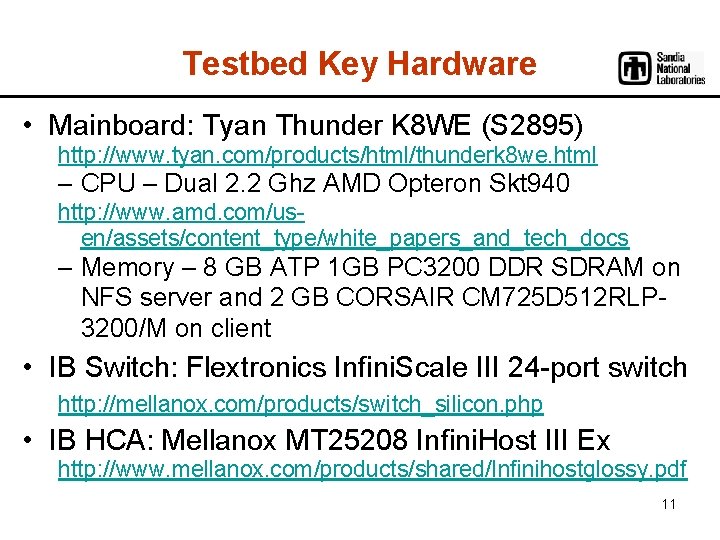

Testbed Key Hardware
• Mainboard: Tyan Thunder K8WE (S2895) http://www.tyan.com/products/html/thunderk8we.html
  – CPU: Dual 2.2 GHz AMD Opteron Skt 940 http://www.amd.com/usen/assets/content_type/white_papers_and_tech_docs
  – Memory: 8 GB ATP 1 GB PC3200 DDR SDRAM on NFS server and 2 GB CORSAIR CM 725 D 512 RLP 3200/M on client
• IB Switch: Flextronics InfiniScale III 24-port switch http://mellanox.com/products/switch_silicon.php
• IB HCA: Mellanox MT25208 InfiniHost III Ex http://www.mellanox.com/products/shared/Infinihostglossy.pdf

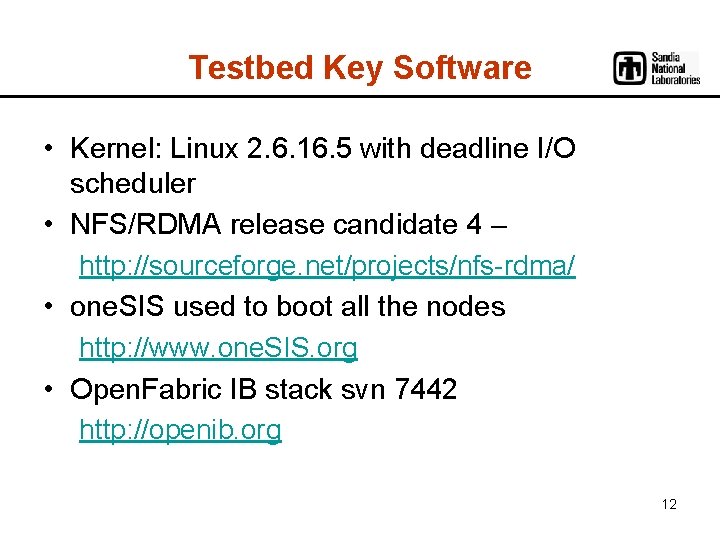

Testbed Key Software
• Kernel: Linux 2.6.16.5 with deadline I/O scheduler
• NFS/RDMA release candidate 4
  – http://sourceforge.net/projects/nfs-rdma/
• oneSIS used to boot all the nodes http://www.oneSIS.org
• OpenFabrics IB stack svn 7442 http://openib.org

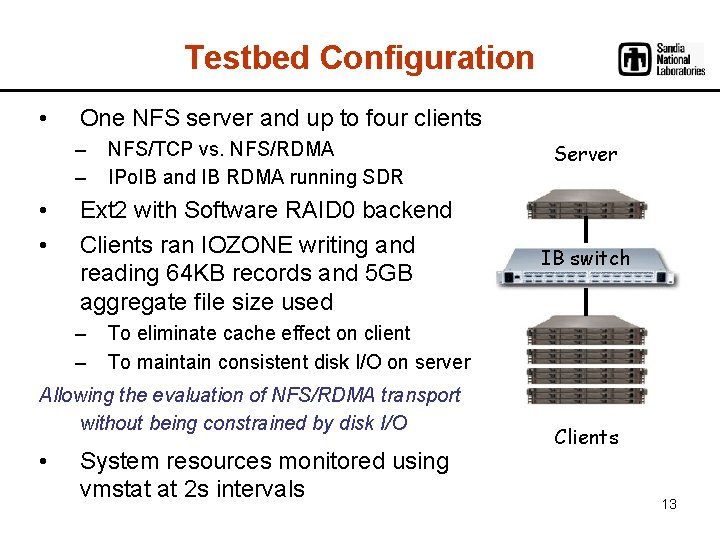

Testbed Configuration
• One NFS server and up to four clients
  – NFS/TCP vs. NFS/RDMA
  – IPoIB and IB RDMA running SDR
• Ext2 with software RAID 0 backend
• Clients ran IOZONE, writing and reading 64 KB records, with a 5 GB aggregate file size
  – To eliminate cache effects on the client
  – To maintain consistent disk I/O on the server
  – Allowing evaluation of the NFS/RDMA transport without being constrained by disk I/O
• System resources monitored using vmstat at 2 s intervals
[Diagram: clients connected to the server through the IB switch]
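The monitoring step can be illustrated with a small parser for `vmstat 2` output. This is a minimal sketch, not the tooling used in the study: it assumes the usual Linux procps column names (`bi`, `bo`, `in`, `cs`, `us`, `sy`, `id`, `wa`), the function names are hypothetical, and the sample text is made up purely to exercise the parser.

```python
# Made-up sample in the layout printed by `vmstat 2`; not measured data.
SAMPLE = """\
procs -----------memory---------- ---swap-- -----io---- --system-- ----cpu----
 r  b   swpd   free   buff  cache   si   so    bi    bo   in   cs us sy id wa
 1  0      0 100000   2000 300000    0    0     0  5000 1200 3000  5 20 35 40
 0  2      0  90000   2000 310000    0    0     0  7000 1300 3200  5 25 10 60
"""


def parse_vmstat(text):
    """Return one dict per sample row, keyed by the column names in the header."""
    header = None
    rows = []
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        # The column-name line is the one that contains the CPU columns.
        if "us" in fields and "wa" in fields:
            header = fields
            continue
        # Data rows are all-numeric and match the header width.
        if header and len(fields) == len(header) and all(f.isdigit() for f in fields):
            rows.append(dict(zip(header, (int(f) for f in fields))))
    return rows


def column_mean(rows, column):
    """Average one column (for example 'wa' for IOWAIT) over all samples."""
    return sum(row[column] for row in rows) / len(rows)


if __name__ == "__main__":
    samples = parse_vmstat(SAMPLE)
    print("mean iowait %:", column_mean(samples, "wa"))
    print("mean blocks out/s:", column_mean(samples, "bo"))
```

On a live system the first row reported by vmstat is an average since boot, so a caller may want to drop it before averaging.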

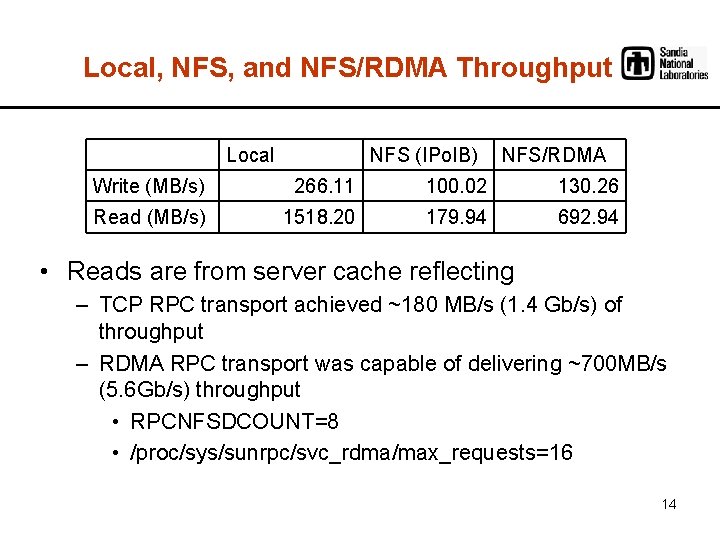

Local, NFS, and NFS/RDMA Throughput

| | Local | NFS (IPoIB) | NFS/RDMA |
|---|---|---|---|
| Write (MB/s) | 266.11 | 100.02 | 130.26 |
| Read (MB/s) | 1518.20 | 179.94 | 692.94 |

• Reads are from the server cache, reflecting:
  – TCP RPC transport achieved ~180 MB/s (1.4 Gb/s) of throughput
  – RDMA RPC transport was capable of delivering ~700 MB/s (5.6 Gb/s) of throughput
• RPCNFSDCOUNT=8
• /proc/sys/sunrpc/svc_rdma/max_requests=16
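The Gb/s figures quoted on this slide follow from the measured MB/s values by a unit conversion. A minimal sketch, assuming decimal units (1 MB = 10^6 bytes, 1 Gb = 10^9 bits); the function and variable names are illustrative and not from the study.

```python
# Throughput figures (MB/s) copied from the slide's table.
THROUGHPUT_MB_S = {
    "write": {"local": 266.11, "nfs_ipoib": 100.02, "nfs_rdma": 130.26},
    "read": {"local": 1518.20, "nfs_ipoib": 179.94, "nfs_rdma": 692.94},
}


def mb_per_s_to_gbit_per_s(mb_per_s):
    """Convert MB/s to Gb/s, assuming decimal units (8 bits per byte, 1000 Mb per Gb)."""
    return mb_per_s * 8 / 1000


def rdma_speedup(operation):
    """Ratio of NFS/RDMA throughput to NFS over IPoIB for 'read' or 'write'."""
    row = THROUGHPUT_MB_S[operation]
    return row["nfs_rdma"] / row["nfs_ipoib"]


if __name__ == "__main__":
    for operation, row in THROUGHPUT_MB_S.items():
        for transport in ("nfs_ipoib", "nfs_rdma"):
            gbit = mb_per_s_to_gbit_per_s(row[transport])
            print(f"{operation:5s} {transport:9s} {row[transport]:8.2f} MB/s = {gbit:.2f} Gb/s")
        print(f"{operation:5s} NFS/RDMA vs NFS: {rdma_speedup(operation):.2f}x")
```

With the table's numbers, NFS/RDMA reads come out at roughly 3.85 times the NFS over IPoIB rate, and writes at roughly 1.3 times.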

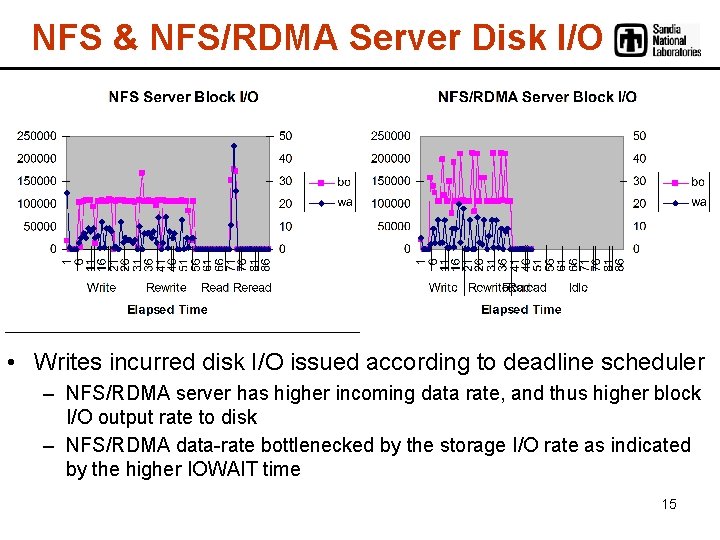

NFS & NFS/RDMA Server Disk I/O
• Writes incurred disk I/O, issued according to the deadline scheduler
  – The NFS/RDMA server has a higher incoming data rate, and thus a higher block I/O output rate to disk
  – The NFS/RDMA data rate is bottlenecked by the storage I/O rate, as indicated by the higher IOWAIT time

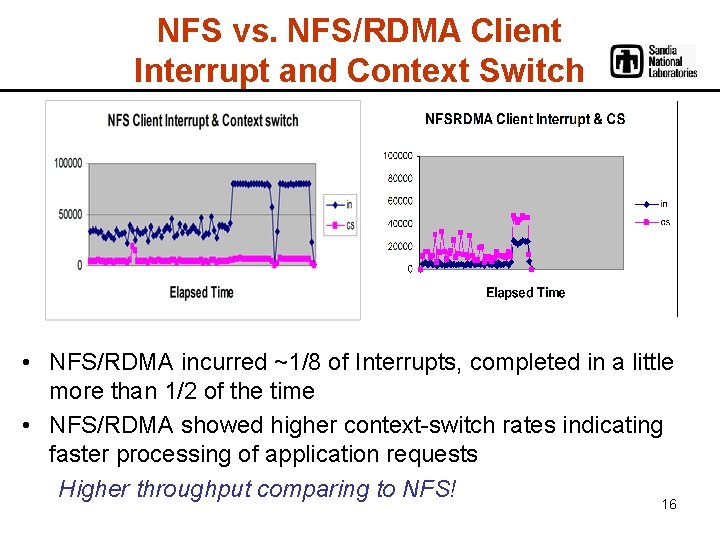

NFS vs. NFS/RDMA Client Interrupt and Context Switch
• NFS/RDMA incurred ~1/8 of the interrupts and completed in a little more than 1/2 of the time
• NFS/RDMA showed higher context-switch rates, indicating faster processing of application requests
Higher throughput compared to NFS!

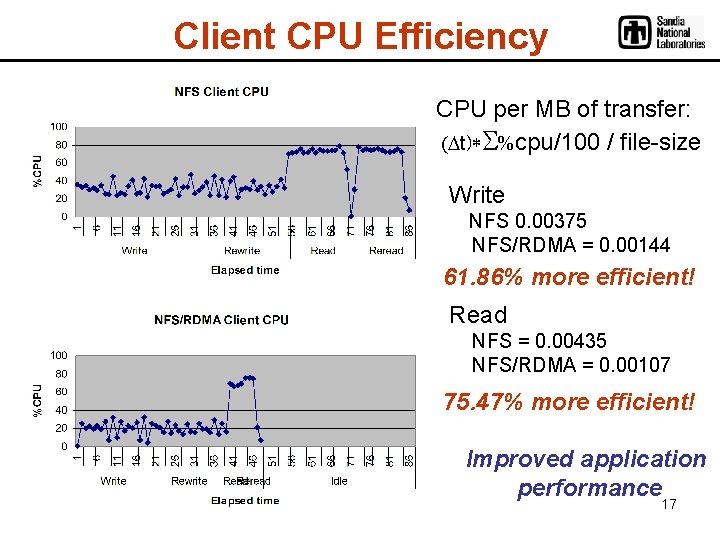

Client CPU Efficiency
CPU per MB of transfer: Δt × Σ%cpu / 100 / file size
• Write: NFS = 0.00375, NFS/RDMA = 0.00144, 61.86% more efficient!
• Read: NFS = 0.00435, NFS/RDMA = 0.00107, 75.47% more efficient!
Improved application performance

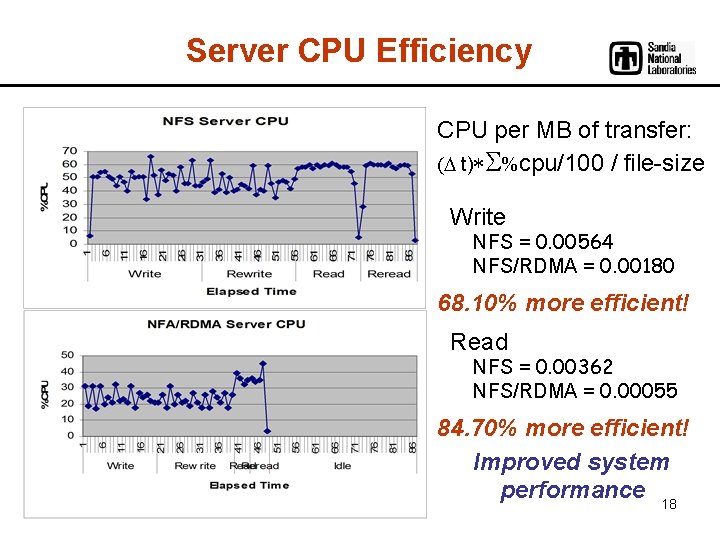

Server CPU Efficiency
CPU per MB of transfer: Δt × Σ%cpu / 100 / file size
• Write: NFS = 0.00564, NFS/RDMA = 0.00180, 68.10% more efficient!
• Read: NFS = 0.00362, NFS/RDMA = 0.00055, 84.70% more efficient!
Improved system performance
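The metric on the two CPU efficiency slides reads as the sampling interval times the summed CPU utilization percentages, divided by 100 and by the file size. The sketch below follows that reading; the function names are hypothetical, and the per-MB values are the rounded figures printed on the slides, so the recomputed gains differ slightly from the slide percentages, which were presumably derived from unrounded data.

```python
def cpu_seconds_per_mb(cpu_percent_samples, interval_s, file_size_mb):
    """CPU cost per MB: interval * sum(%cpu) / 100 / file size."""
    return interval_s * sum(cpu_percent_samples) / 100 / file_size_mb


def percent_more_efficient(nfs_cost, rdma_cost):
    """Relative reduction in CPU cost per MB when moving from NFS to NFS/RDMA."""
    return (nfs_cost - rdma_cost) / nfs_cost * 100


# (NFS, NFS/RDMA) CPU-per-MB values as printed on the slides.
SLIDE_VALUES = {
    ("client", "write"): (0.00375, 0.00144),
    ("client", "read"): (0.00435, 0.00107),
    ("server", "write"): (0.00564, 0.00180),
    ("server", "read"): (0.00362, 0.00055),
}

if __name__ == "__main__":
    for (side, operation), (nfs_cost, rdma_cost) in SLIDE_VALUES.items():
        gain = percent_more_efficient(nfs_cost, rdma_cost)
        print(f"{side} {operation}: {gain:.1f}% less CPU per MB with NFS/RDMA")
```

For instance, the rounded client write values give about 61.6%, close to the 61.86% shown on the slide.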

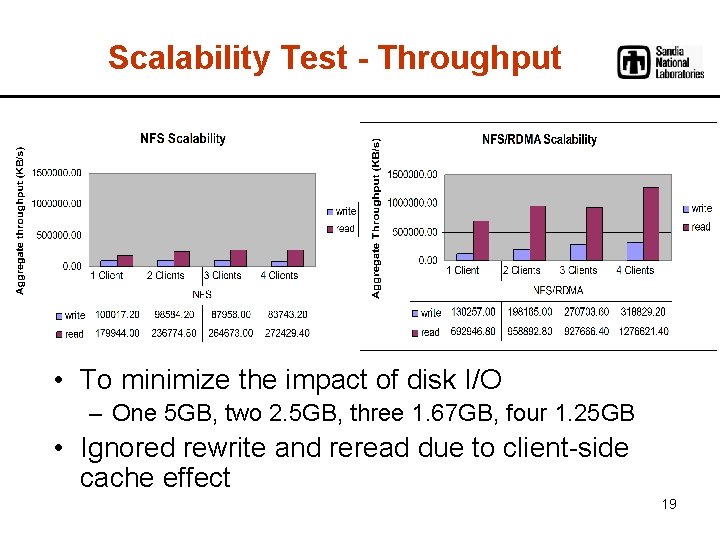

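Scalability Test – Throughput
• To minimize the impact of disk I/O, the 5 GB aggregate file size was split across the clients
  – One client: 5 GB; two clients: 2.5 GB each; three clients: 1.67 GB each; four clients: 1.25 GB each
• Rewrite and reread results were ignored due to client-side cache effects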
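The per-client file sizes listed here are the 5 GB aggregate divided evenly by the number of clients. A short sketch of that arithmetic, with an illustrative function name:

```python
def per_client_file_size_gb(aggregate_gb, n_clients):
    """Split a fixed aggregate file size evenly across the clients."""
    if n_clients < 1:
        raise ValueError("need at least one client")
    return aggregate_gb / n_clients


if __name__ == "__main__":
    for n in range(1, 5):
        print(f"{n} client(s): {per_client_file_size_gb(5, n):.2f} GB each")
```

Holding the aggregate constant keeps the total amount of data hitting the server's disks the same as the client count grows.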

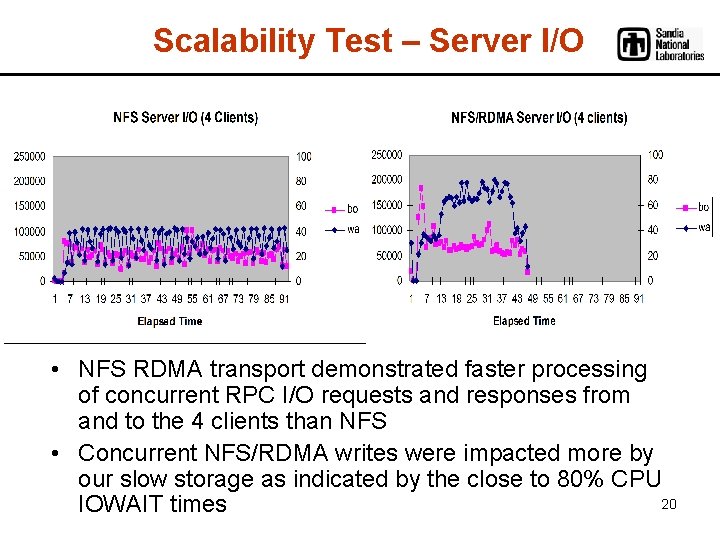

Scalability Test – Server I/O
• The NFS RDMA transport demonstrated faster processing of concurrent RPC I/O requests and responses from and to the 4 clients than NFS
• Concurrent NFS/RDMA writes were impacted more by our slow storage, as indicated by the close to 80% CPU IOWAIT times

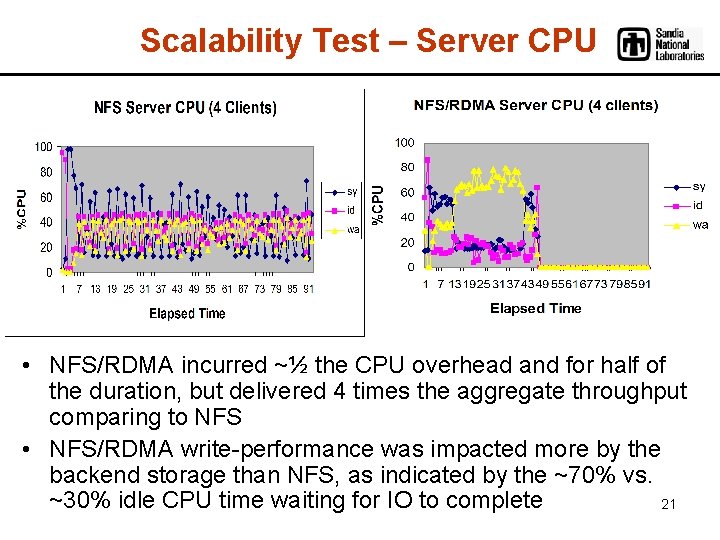

Scalability Test – Server CPU
• NFS/RDMA incurred ~½ the CPU overhead, for half of the duration, yet delivered 4 times the aggregate throughput of NFS
• NFS/RDMA write performance was impacted more by the backend storage than NFS, as indicated by the ~70% vs. ~30% idle CPU time waiting for I/O to complete

Preliminary Conclusion
• Compared to NFS, NFS/RDMA demonstrated:
  – impressive CPU efficiency
  – and promising scalability
NFS/RDMA will improve application and system level performance!
NFS/RDMA can easily take advantage of the bandwidth in 10/20 Gigabit networks for large file accesses

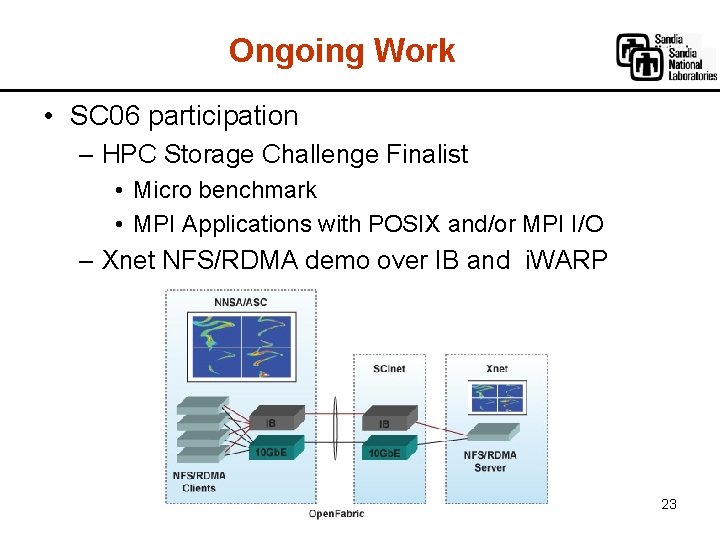

Ongoing Work
• SC06 participation
  – HPC Storage Challenge Finalist
    • Micro benchmark
    • MPI applications with POSIX and/or MPI I/O
  – Xnet NFS/RDMA demo over IB and iWARP

Future Plans
• Initiate study of NFSv4 pNFS performance with RDMA storage
  – Blocks (SRP, iSER)
  – File (NFSv4/RDMA)
  – Object (iSCSI-OSD)?

Why NFSv4
• NFSv3
  – Use of the ancillary Network Lock Manager (NLM) protocol adds complexity and limits scalability in parallel I/O
  – No attribute caching requirement squelches performance
• NFSv4
  – Use of integrated lock management allows the byte-range locking required for parallel I/O
  – Compound operations improve efficiency of data movement and …

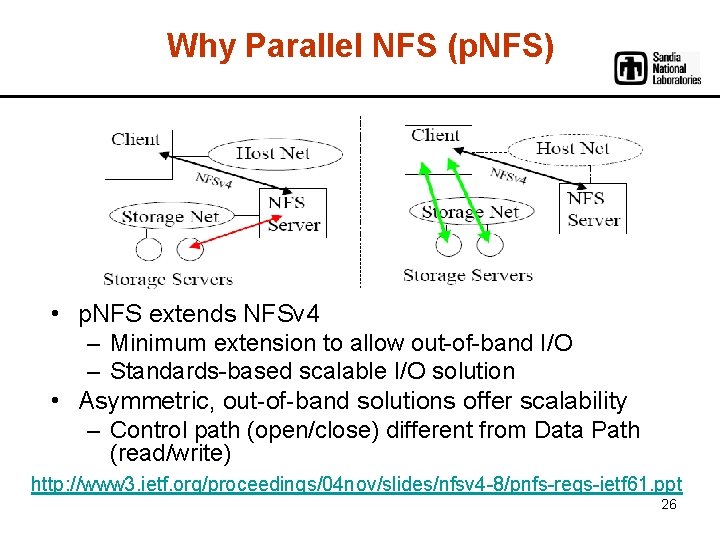

Why Parallel NFS (pNFS)
• pNFS extends NFSv4
  – Minimum extension to allow out-of-band I/O
  – Standards-based scalable I/O solution
• Asymmetric, out-of-band solutions offer scalability
  – Control path (open/close) different from data path (read/write)
http://www3.ietf.org/proceedings/04nov/slides/nfsv4-8/pnfs-reqs-ietf61.ppt

Acknowledgement
• The authors would like to thank the following for their technical input
  – Tom Talpey and James Lentini from NetApp
  – Tom Tucker from Open Grid Computing
  – James Ting from Mellanox
  – Matt Leininger and Mitch Sukalski from Sandia
