Dynamic Vision


• Dynamic vision copes with
  – Moving or changing objects (size, structure, shape)
  – Changing illumination
  – Changing viewpoints
• Input: a sequence of image frames
  – Frame: the image at a particular instant of time
  – Differences between frames: due to motion of the camera or object, illumination changes, or changes of the objects themselves
• Output: detect changes, compute the motion of the camera or objects, recognize moving objects, etc.

E. G. M. Petrakis Dynamic Vision 1

• There are four possibilities:
  – Stationary Camera, Stationary Objects (SCSO)
  – Stationary Camera, Moving Objects (SCMO)
  – Moving Camera, Stationary Objects (MCSO)
  – Moving Camera, Moving Objects (MCMO)
• Different techniques are needed for each case
  – SCSO is simply static scene analysis: the simplest case
  – MCMO is the most general and complex case
  – MCSO and MCMO arise in navigation applications
• Dynamic scene analysis has more information available, so it can be easier than static scene analysis

• Frame sequence: F(x, y, t)
  – Intensity of pixel (x, y) at time t
  – Assume that t denotes the t-th frame
  – The image is acquired by a camera at the origin of the 3-D coordinate system
• Detect changes in F(x, y, t) between successive frames
  – At the pixel, edge, or region level
  – Aggregate changes to obtain useful information (e.g., trajectories)

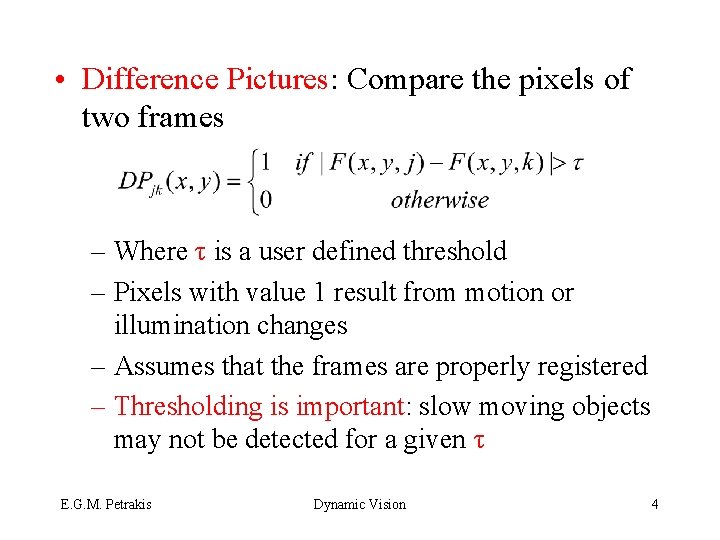

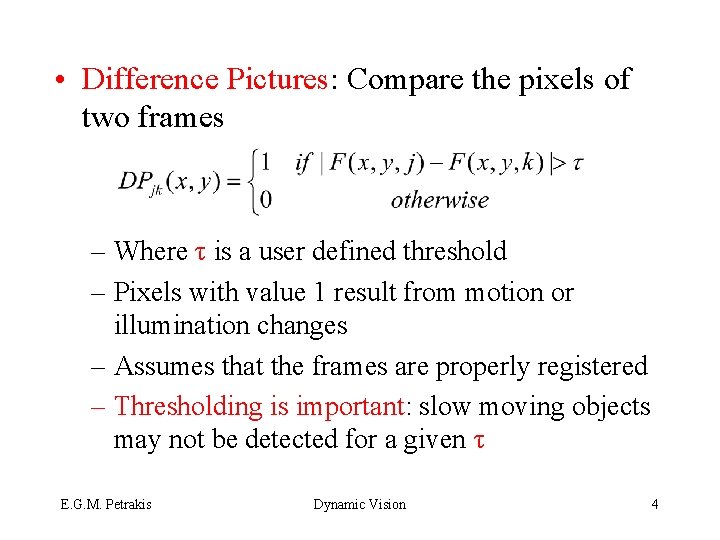

• Difference pictures: compare the pixels of two frames j and k:

$$DP_{jk}(x, y) = \begin{cases} 1 & \text{if } |F(x, y, j) - F(x, y, k)| > \tau \\ 0 & \text{otherwise} \end{cases}$$

  – τ is a user-defined threshold
  – Pixels with value 1 result from motion or illumination changes
  – Assumes that the frames are properly registered
  – Thresholding is important: slow-moving objects may not be detected for a given τ
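A minimal NumPy sketch of the difference picture, assuming two registered grayscale frames stored as arrays of equal shape; the function name `difference_picture` and the toy frames are illustrative, not taken from the slides.

```python
import numpy as np

def difference_picture(frame_j, frame_k, tau):
    """Binary difference picture: 1 where |F(x, y, j) - F(x, y, k)| > tau, else 0.

    Assumes frame_j and frame_k are registered grayscale arrays of equal shape.
    """
    # Cast to a signed type so that subtracting uint8 images cannot wrap around.
    diff = np.abs(frame_j.astype(np.int32) - frame_k.astype(np.int32))
    return (diff > tau).astype(np.uint8)

# Hypothetical example: a bright 2x2 square moves one pixel to the right.
f1 = np.zeros((5, 6), dtype=np.uint8)
f2 = np.zeros((5, 6), dtype=np.uint8)
f1[1:3, 1:3] = 200
f2[1:3, 2:4] = 200
dp = difference_picture(f1, f2, tau=25)
print(dp)
```

Only the trailing and leading columns of the square (columns 1 and 3 in rows 1 and 2) are marked. Column 2 is covered by the object in both frames and produces no difference, which is one reason why slow-moving or uniform objects can be missed for a given τ.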

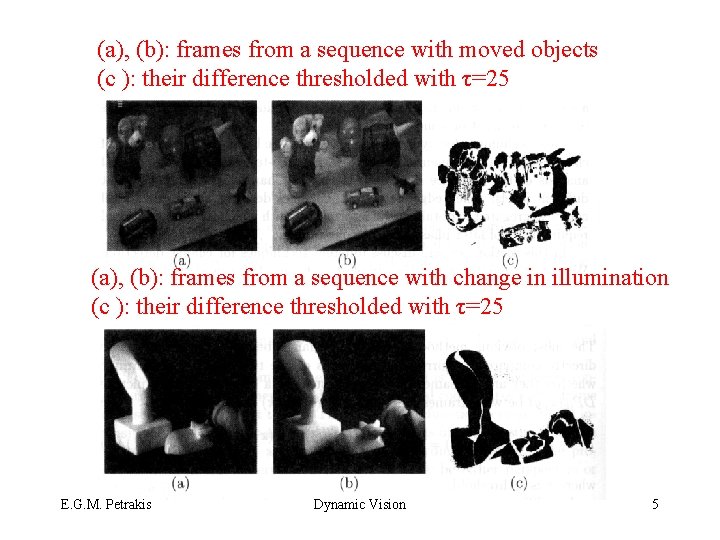

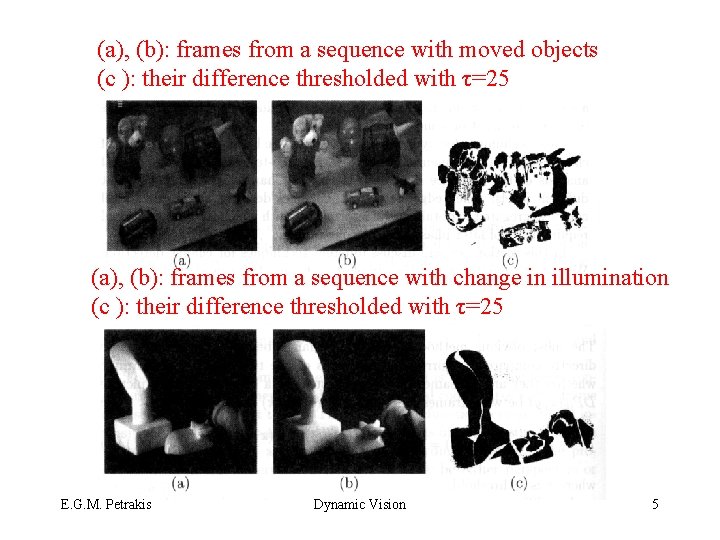

(a), (b): frames from a sequence with moved objects; (c): their difference thresholded with τ = 25
(a), (b): frames from a sequence with a change in illumination; (c): their difference thresholded with τ = 25

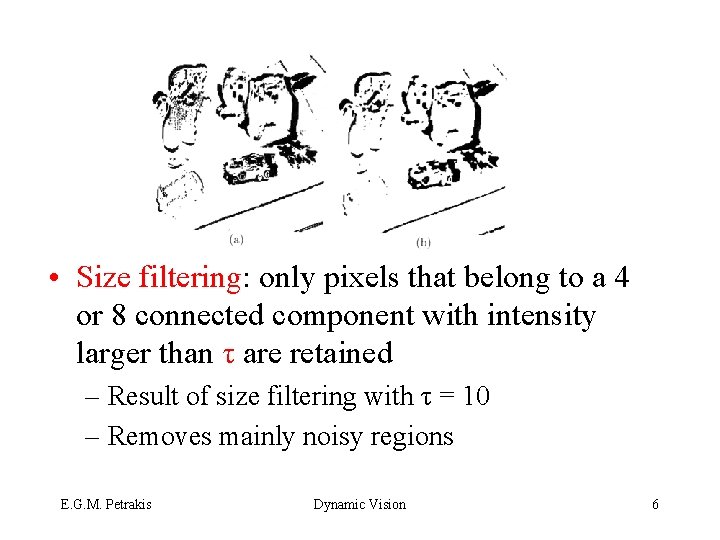

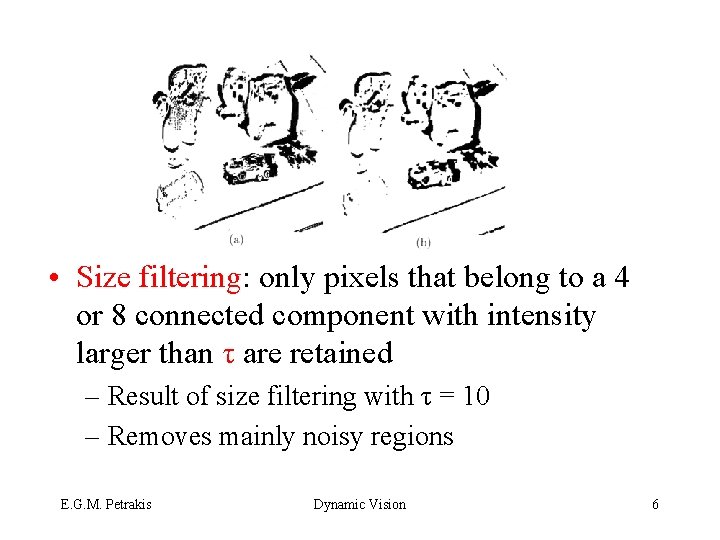

• Size filtering: only pixels that belong to a 4- or 8-connected component whose size (number of pixels) is larger than τ are retained
  – The figure shows the result of size filtering with τ = 10
  – Mainly removes noisy regions
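Size filtering can be sketched with a breadth-first search over connected components. The sketch assumes a binary difference picture as input and keeps components with more than τ pixels; the helper name `size_filter` is hypothetical, and a library connected-component labeling routine could replace the hand-written search.

```python
import numpy as np
from collections import deque

def size_filter(dp, min_size, connectivity=8):
    """Keep the 1-pixels of a binary picture that lie in a connected
    component with more than min_size pixels; clear all others."""
    if connectivity == 4:
        offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    else:  # 8-connectivity also links diagonal neighbours
        offsets = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1) if (dy, dx) != (0, 0)]
    rows, cols = dp.shape
    seen = np.zeros(dp.shape, dtype=bool)
    out = np.zeros_like(dp)
    for r in range(rows):
        for c in range(cols):
            if dp[r, c] == 0 or seen[r, c]:
                continue
            # Breadth-first search collects one connected component.
            component = []
            queue = deque([(r, c)])
            seen[r, c] = True
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                for dy, dx in offsets:
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < rows and 0 <= nx < cols and dp[ny, nx] and not seen[ny, nx]:
                        seen[ny, nx] = True
                        queue.append((ny, nx))
            if len(component) > min_size:
                for y, x in component:
                    out[y, x] = 1
    return out

# Hypothetical difference picture: a 12-pixel blob, one isolated pixel,
# and a diagonal pair of pixels.
dp = np.zeros((8, 8), dtype=np.uint8)
dp[1:4, 1:5] = 1
dp[6, 6] = 1
dp[6, 0] = dp[7, 1] = 1
print(size_filter(dp, min_size=10).sum())
```

The diagonal pair shows why the choice of connectivity matters: with 8-connectivity it is one 2-pixel component, with 4-connectivity it is two isolated pixels.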

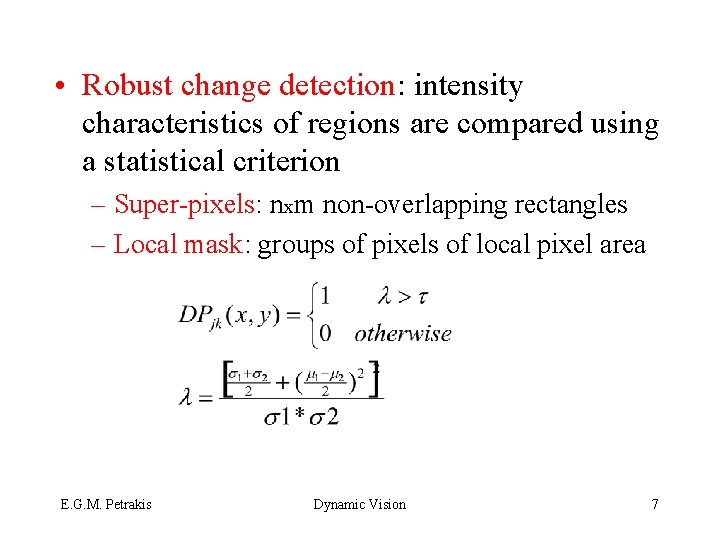

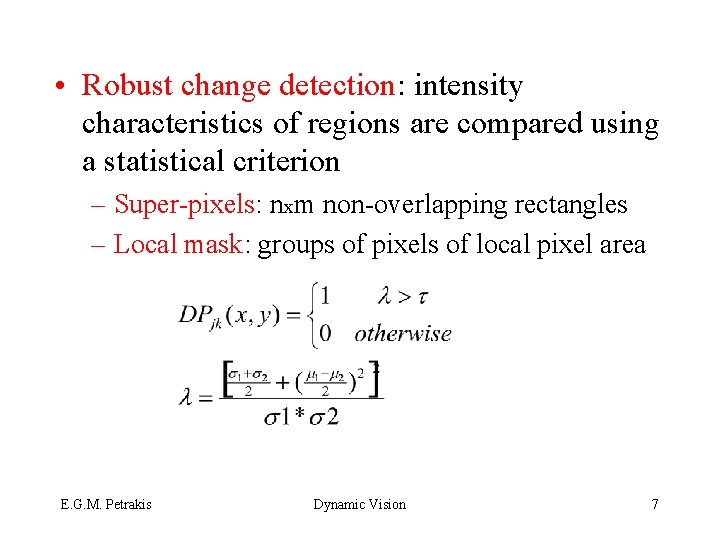

• Robust change detection: the intensity characteristics of regions, rather than single pixels, are compared using a statistical criterion
  – Super-pixels: n×m non-overlapping rectangles
  – Local mask: groups of pixels in a local pixel area
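One widely used statistical criterion for comparing two regions is a likelihood ratio built from their means μ₁, μ₂ and variances σ₁², σ₂²: λ = [(σ₁² + σ₂²)/2 + ((μ₁ − μ₂)/2)²]² / (σ₁² σ₂²), with a block marked as changed when λ > τ. The sketch below assumes this test (the criterion in the slide's figure may differ) and applies it to non-overlapping super-pixels; the function names and the `eps` guard against zero variance are hypothetical.

```python
import numpy as np

def likelihood_ratio(a, b, eps=1e-6):
    """Likelihood ratio of two regions from their means and variances.

    eps (an assumption) keeps the ratio finite for perfectly flat regions.
    """
    m1, m2 = a.mean(), b.mean()
    v1, v2 = a.var() + eps, b.var() + eps
    num = ((v1 + v2) / 2.0 + ((m1 - m2) / 2.0) ** 2) ** 2
    return num / (v1 * v2)

def superpixel_change(frame1, frame2, n, m, tau):
    """Compare non-overlapping n x m super-pixels; 1 marks a changed block.

    Rows/columns that do not fill a whole super-pixel are ignored.
    """
    f1 = frame1.astype(np.float64)
    f2 = frame2.astype(np.float64)
    rows, cols = f1.shape[0] // n, f1.shape[1] // m
    out = np.zeros((rows, cols), dtype=np.uint8)
    for i in range(rows):
        for j in range(cols):
            a = f1[i * n:(i + 1) * n, j * m:(j + 1) * m]
            b = f2[i * n:(i + 1) * n, j * m:(j + 1) * m]
            out[i, j] = likelihood_ratio(a, b) > tau
    return out

# Hypothetical textured frames; the top-left 4x4 block gets brighter.
frame1 = np.tile(np.array([[10, 20], [30, 40]], dtype=np.uint8), (4, 4))
frame2 = frame1.copy()
frame2[:4, :4] += 100
print(superpixel_change(frame1, frame2, n=4, m=4, tau=2.0))
```

The ratio is never below 1 and equals 1 when the two regions have identical statistics, so τ is chosen somewhat above 1. Because whole blocks are compared, isolated noisy pixels have little effect, at the cost of super-pixel resolution in the result.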

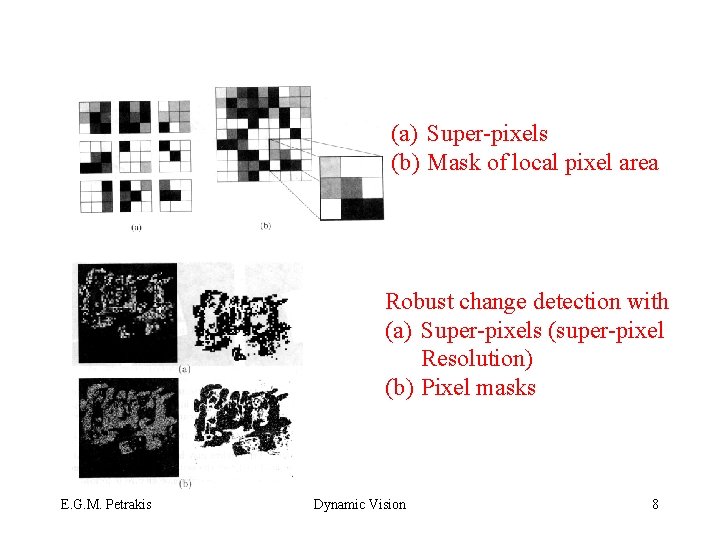

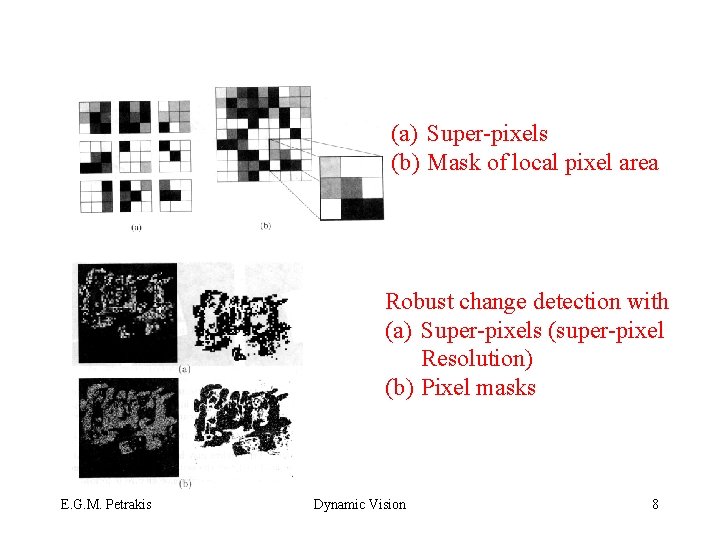

(a) Super-pixels; (b) mask of a local pixel area
Robust change detection with (a) super-pixels (super-pixel resolution) and (b) pixel masks

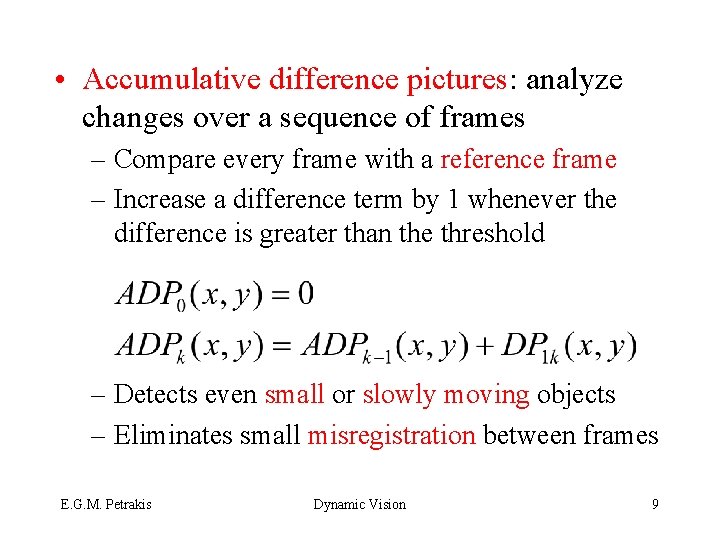

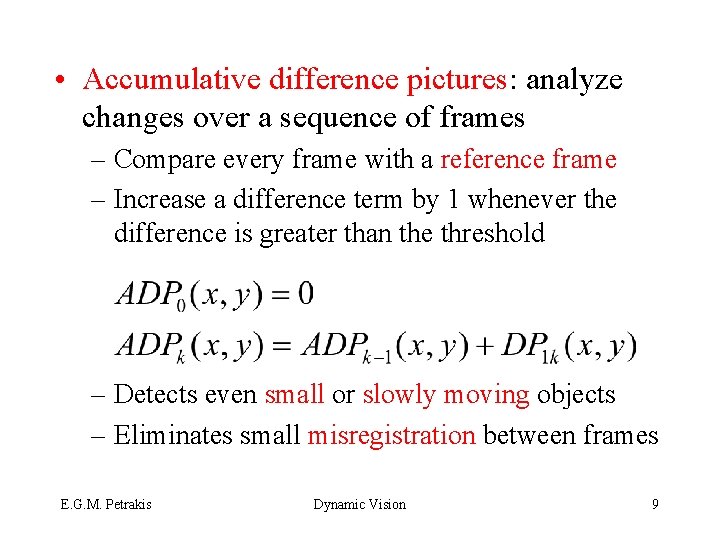

• Accumulative difference pictures (ADP): analyze changes over a sequence of frames
  – Compare every frame with a reference frame
  – Increase a pixel's difference count by 1 whenever its difference from the reference exceeds the threshold
  – Detects even small or slowly moving objects
  – Eliminates the effect of small misregistration between frames
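The accumulation rule can be sketched as follows, assuming the first frame is the reference, the frames are registered, and an absolute difference is compared with the threshold; `accumulative_difference` is a hypothetical name.

```python
import numpy as np

def accumulative_difference(frames, tau):
    """Count, per pixel, the frames whose difference from the reference
    frame (frames[0]) exceeds tau."""
    ref = frames[0].astype(np.int32)
    adp = np.zeros(ref.shape, dtype=np.int32)
    for frame in frames[1:]:
        # Add 1 wherever this frame differs from the reference by more than tau.
        adp += np.abs(ref - frame.astype(np.int32)) > tau
    return adp

# Hypothetical 1x6 frames: a bright dot that moves one pixel every two frames.
frames = []
for pos in [0, 0, 1, 1, 2]:
    f = np.zeros((1, 6), dtype=np.uint8)
    f[0, pos] = 200
    frames.append(f)
adp = accumulative_difference(frames, tau=25)
print(adp)
```

Even though the dot moves only one pixel every two frames, the count at its original position builds up to 3, which illustrates why accumulation helps with slowly moving objects.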

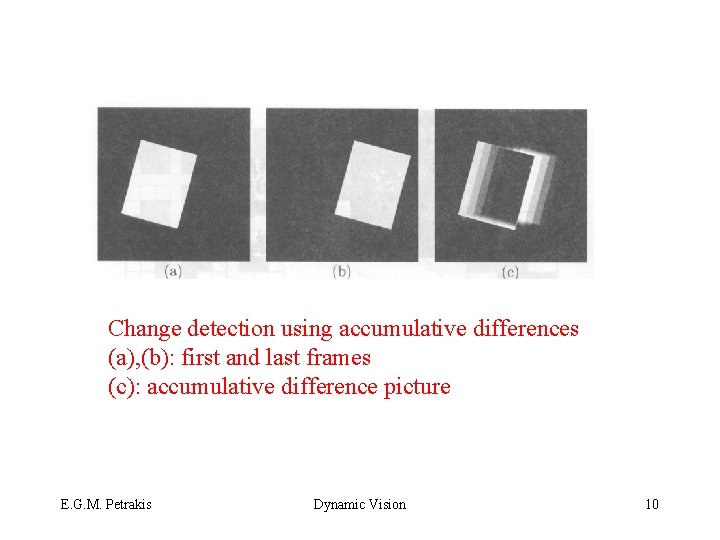

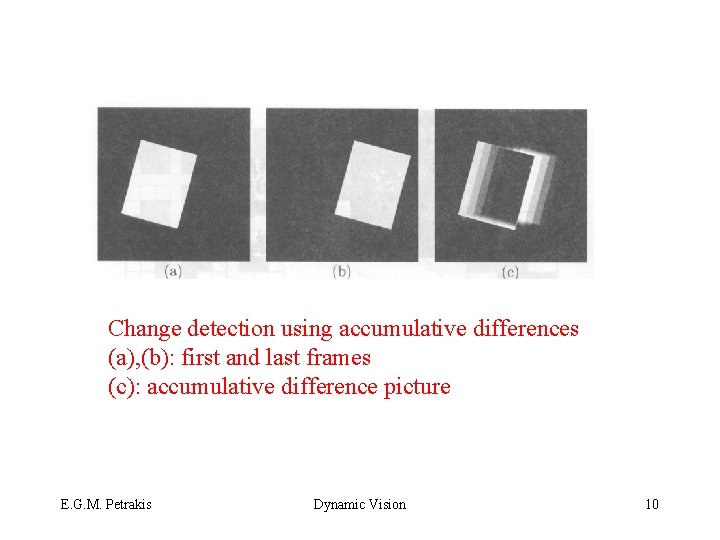

Change detection using accumulative differences: (a), (b): first and last frames; (c): accumulative difference picture

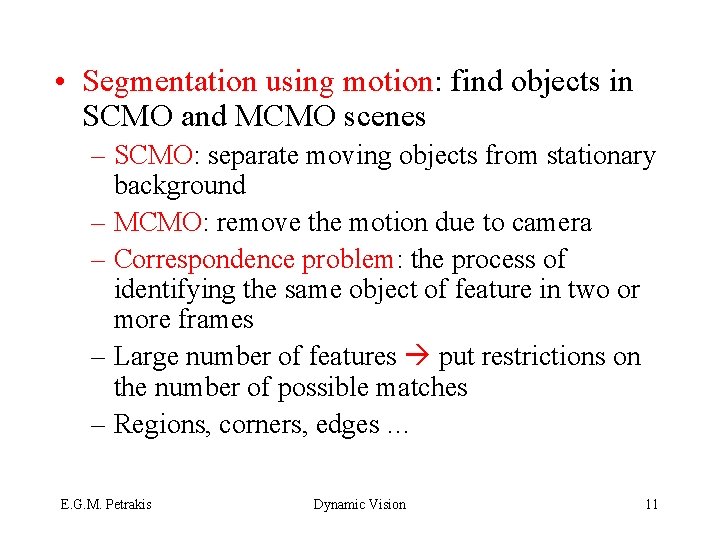

• Segmentation using motion: find objects in SCMO and MCMO scenes
  – SCMO: separate the moving objects from the stationary background
  – MCMO: remove the motion due to the camera
  – Correspondence problem: identifying the same object or feature in two or more frames
  – A large number of features requires restrictions on the number of possible matches
  – Features: regions, corners, edges, …

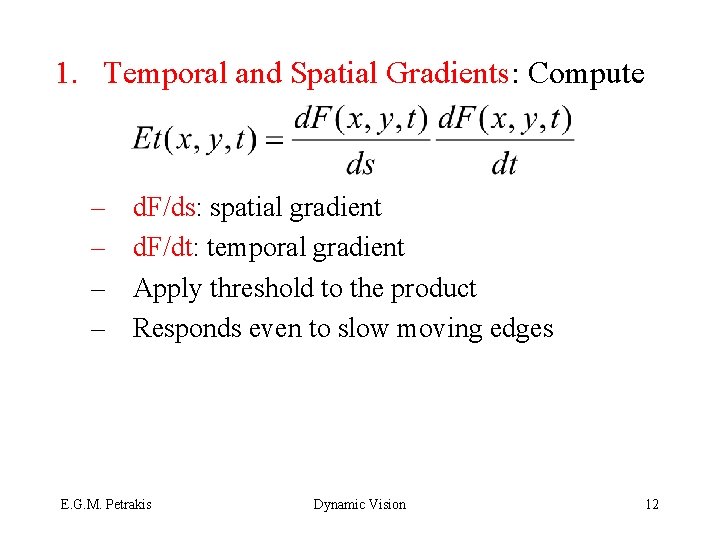

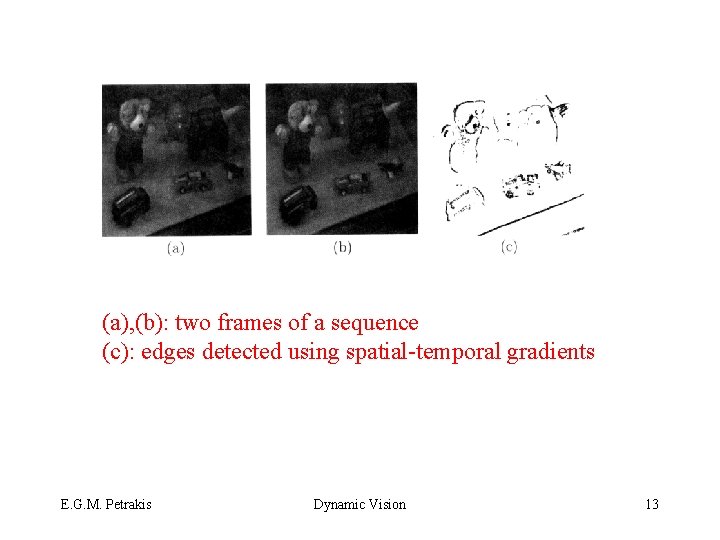

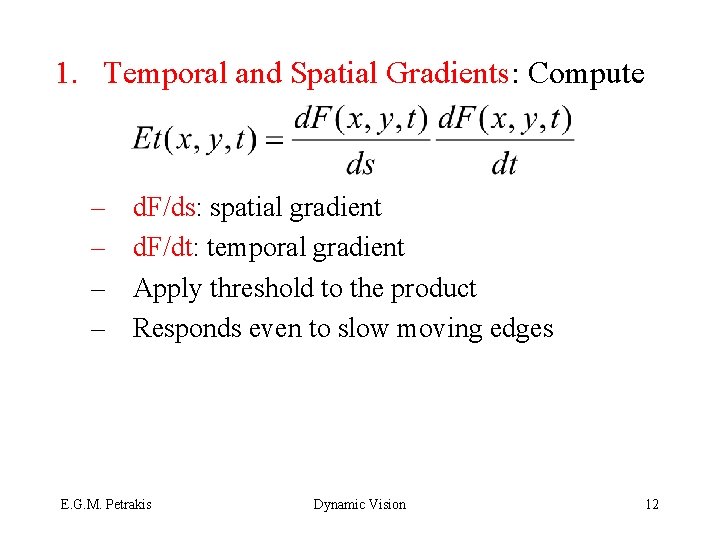

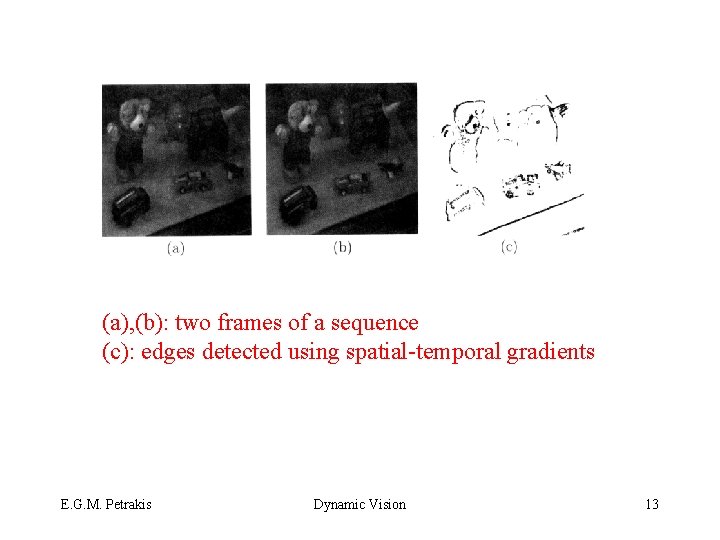

1. Temporal and spatial gradients: compute
  – dF/ds: the spatial gradient
  – dF/dt: the temporal gradient
  – Apply a threshold to their product (dF/ds)·(dF/dt)
  – Responds even to slowly moving edges
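A rough sketch of the spatio-temporal edge detector, assuming the spatial gradient is the gradient magnitude of the later frame computed with central differences (`np.gradient`) and the temporal gradient is approximated by the absolute frame difference; both approximations are choices made for illustration, and the function name is hypothetical.

```python
import numpy as np

def spatiotemporal_edges(frame_prev, frame_next, tau):
    """Mark pixels where (spatial gradient magnitude) * (temporal gradient) > tau."""
    f0 = frame_prev.astype(np.float64)
    f1 = frame_next.astype(np.float64)
    # dF/ds: gradient magnitude of the later frame (central differences).
    gy, gx = np.gradient(f1)
    ds = np.hypot(gx, gy)
    # dF/dt: approximated by the absolute difference between the two frames.
    dt = np.abs(f1 - f0)
    return (ds * dt > tau).astype(np.uint8)

# Hypothetical example: a vertical step edge moves one column to the left.
f0 = np.zeros((3, 6))
f0[:, 3:] = 100
f1 = np.zeros((3, 6))
f1[:, 2:] = 100
print(spatiotemporal_edges(f0, f1, tau=1000))
```

A static edge has a large spatial gradient but zero temporal gradient, and a moving uniform region has the opposite, so only the product singles out moving edges (here, column 2).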

(a), (b): two frames of a sequence; (c): edges detected using spatio-temporal gradients

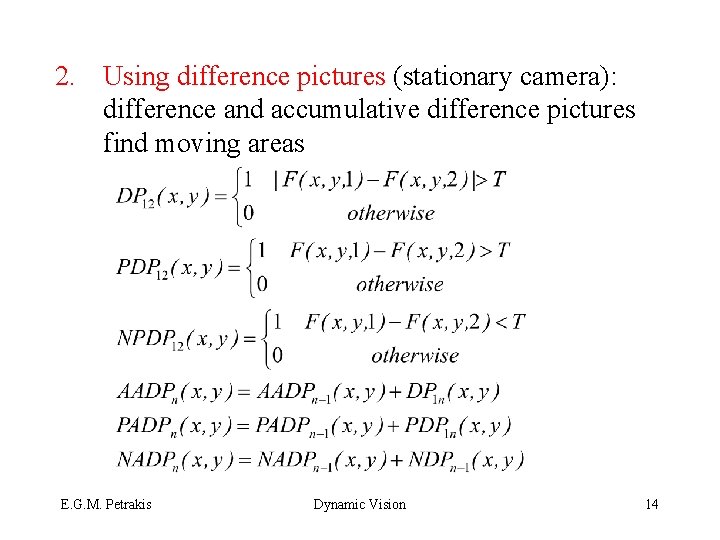

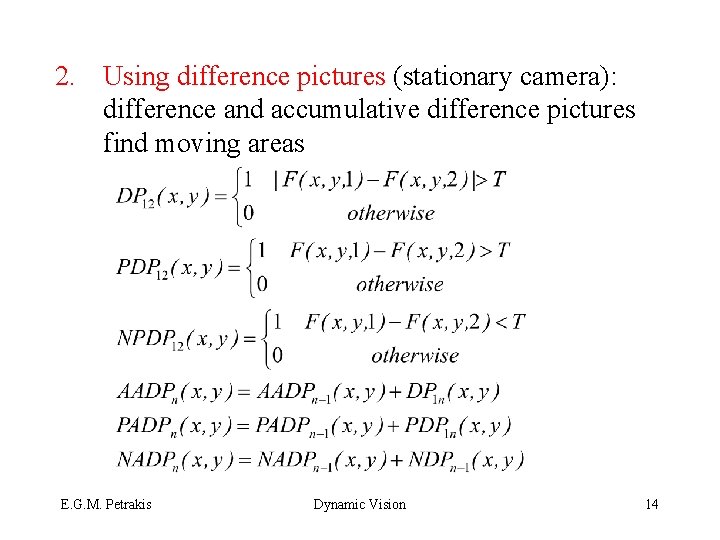

2. Using difference pictures (stationary camera): difference and accumulative difference pictures find moving areas

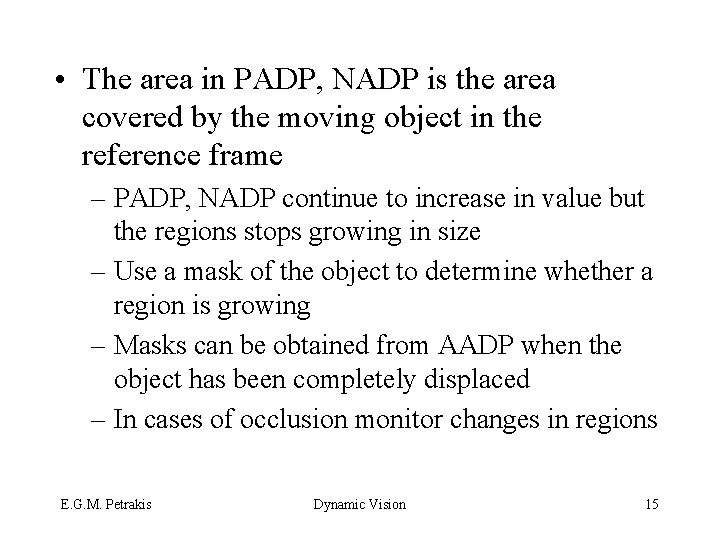

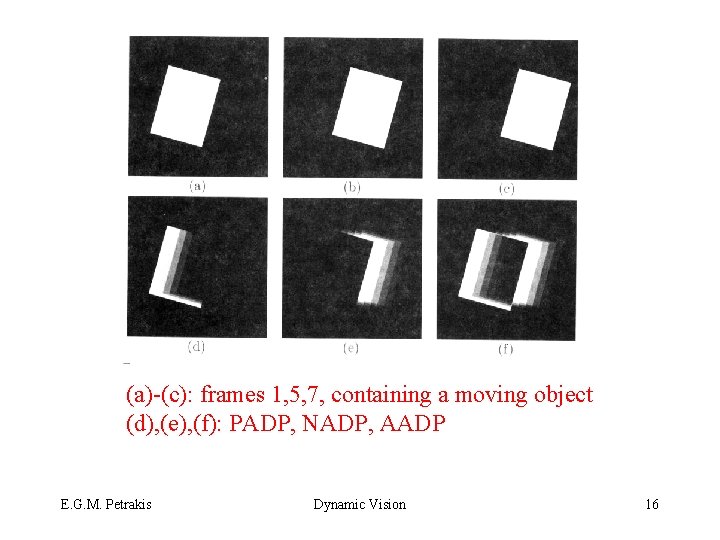

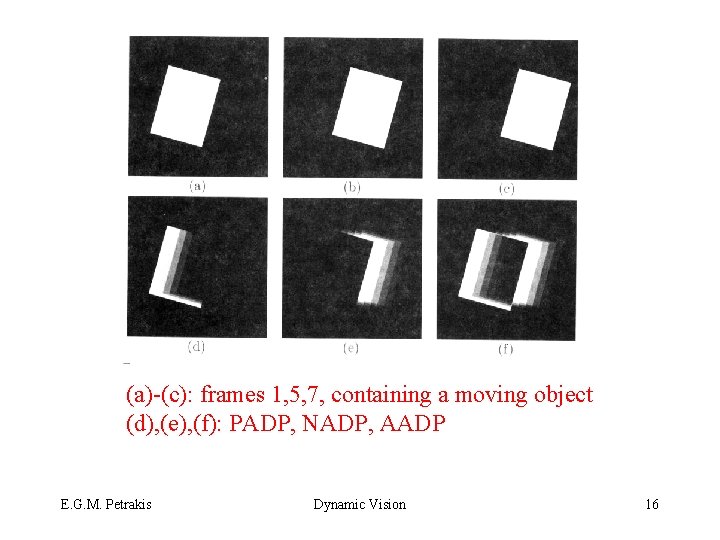

• The area in the PADP (positive ADP) or NADP (negative ADP), depending on whether the object is brighter or darker than the background, is the area covered by the moving object in the reference frame
  – The PADP and NADP continue to increase in value, but the regions stop growing in size
  – Use a mask of the object to determine whether a region is still growing
  – Masks can be obtained from the AADP (absolute ADP) when the object has been completely displaced
  – In cases of occlusion, monitor the changes in the regions
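The positive, negative and absolute ADPs can be accumulated together. This sketch assumes the first frame is the reference and uses the sign convention F(x, y, 1) − F(x, y, k): differences above τ go to the PADP, differences below −τ to the NADP. It also records the PADP region size after each frame to show the growth behaviour described above; `signed_adps` is a hypothetical name.

```python
import numpy as np

def signed_adps(frames, tau):
    """Return PADP, NADP, AADP and the PADP region size after each frame.

    frames[0] is the reference frame; differences are F(x, y, 1) - F(x, y, k).
    """
    ref = frames[0].astype(np.int32)
    padp = np.zeros(ref.shape, dtype=np.int32)
    nadp = np.zeros(ref.shape, dtype=np.int32)
    aadp = np.zeros(ref.shape, dtype=np.int32)
    padp_sizes = []
    for frame in frames[1:]:
        d = ref - frame.astype(np.int32)
        padp += d > tau            # reference brighter than frame k
        nadp += d < -tau           # reference darker than frame k
        aadp += np.abs(d) > tau    # either sign
        padp_sizes.append(int(np.count_nonzero(padp)))
    return padp, nadp, aadp, padp_sizes

# Hypothetical 1x8 frames: a bright 2-pixel object moves right one pixel per frame.
frames = []
for k in range(5):
    f = np.zeros((1, 8), dtype=np.uint8)
    f[0, k:k + 2] = 200
    frames.append(f)
padp, nadp, aadp, sizes = signed_adps(frames, tau=25)
print(padp, nadp, sizes)
```

For this bright object on a dark background, the PADP covers the object's position in the reference frame: its values keep increasing (4 and 3), but its size stops at 2 pixels once the object has moved entirely off that position.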

(a)–(c): frames 1, 5, 7, containing a moving object; (d), (e), (f): PADP, NADP, AADP

• Motion correspondence: to determine the motion of objects, establish a correspondence between features in two frames
  – Correspondence problem: pair a point $p_i = (x_i, y_i)$ in the first image with a point $p_j = (x_j, y_j)$ in the second image
  – Disparity: $d_{ij} = (x_i - x_j,\ y_i - y_j)$
  – Compute the disparity using relaxation labeling
  – Questions: How are points selected for matching? How are the correct matches chosen? What constraints apply?

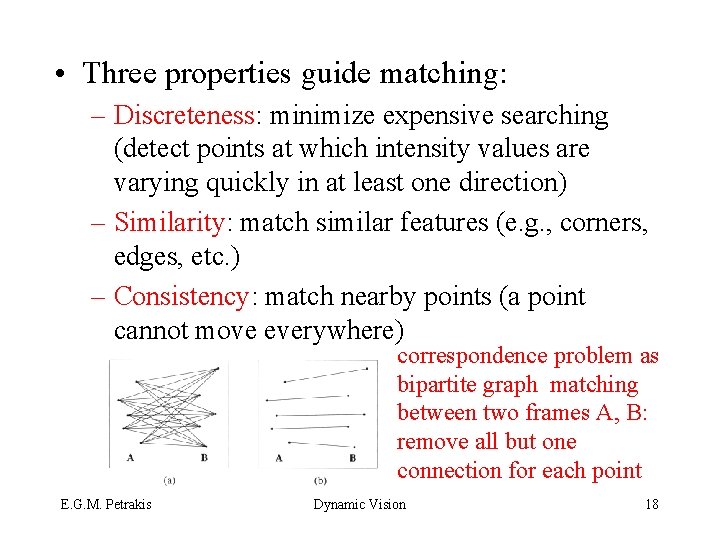

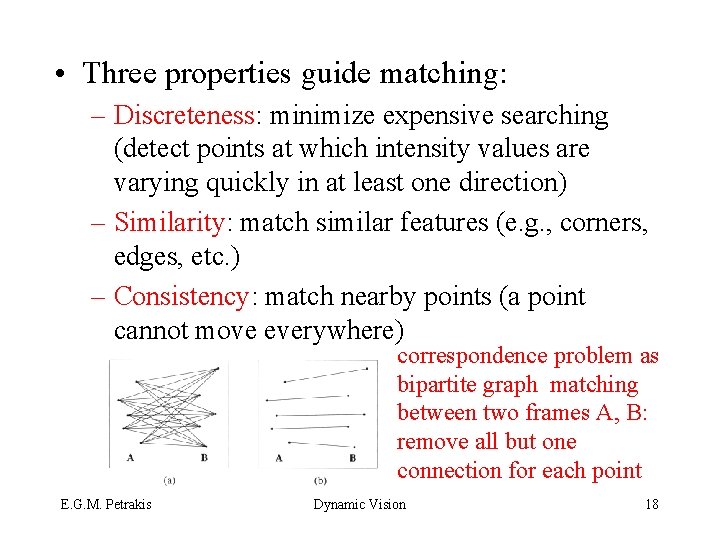

• Three properties guide matching:
  – Discreteness: minimize expensive searching (detect points at which intensity values vary quickly in at least one direction)
  – Similarity: match similar features (e.g., corners, edges)
  – Consistency: match nearby points consistently (a point cannot move just anywhere)
• The correspondence problem as bipartite graph matching between two frames A and B: remove all but one connection for each point

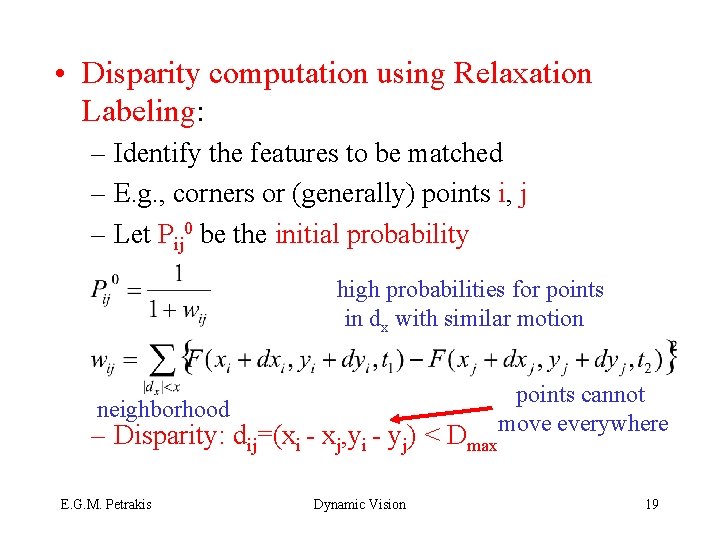

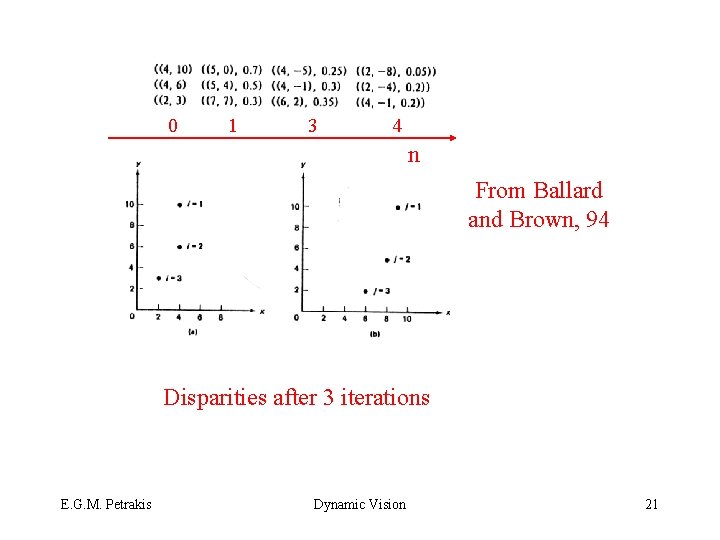

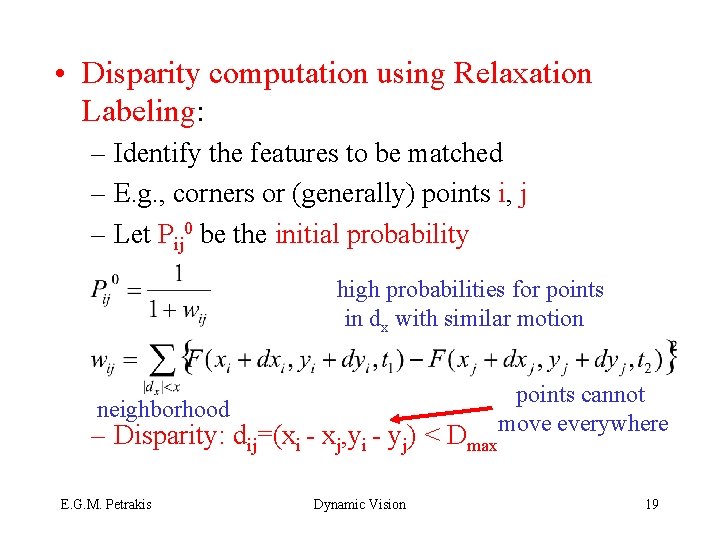

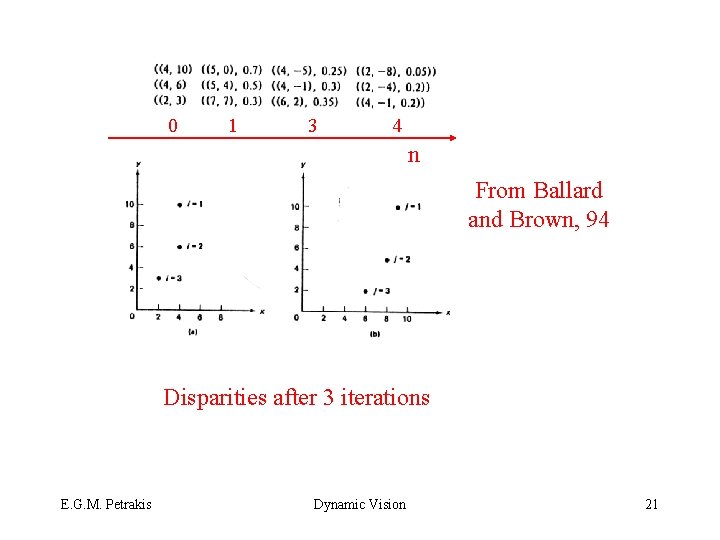

• Disparity computation using relaxation labeling:
  – Identify the features to be matched, e.g., corners or (generally) points i, j
  – Let $P_{ij}^0$ be the initial probability that point i matches point j
  – Points in a neighborhood with similar motion receive high probabilities (points cannot move just anywhere)
  – Disparity: $d_{ij} = (x_i - x_j,\ y_i - y_j)$, with $|d_{ij}| < D_{max}$

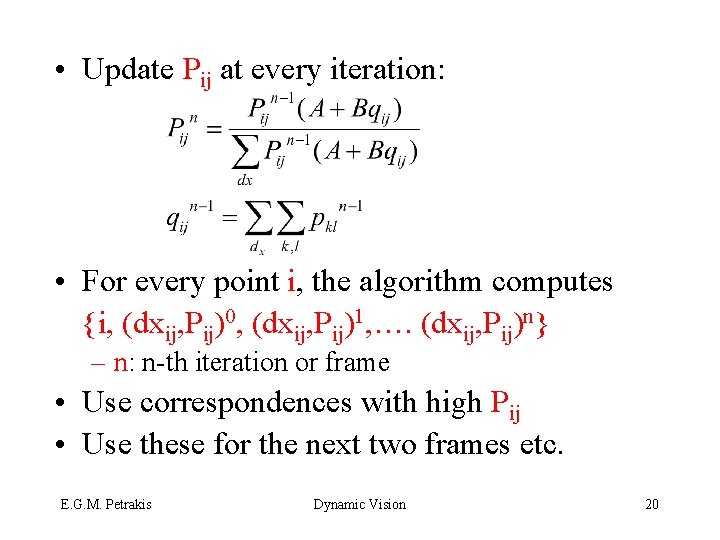

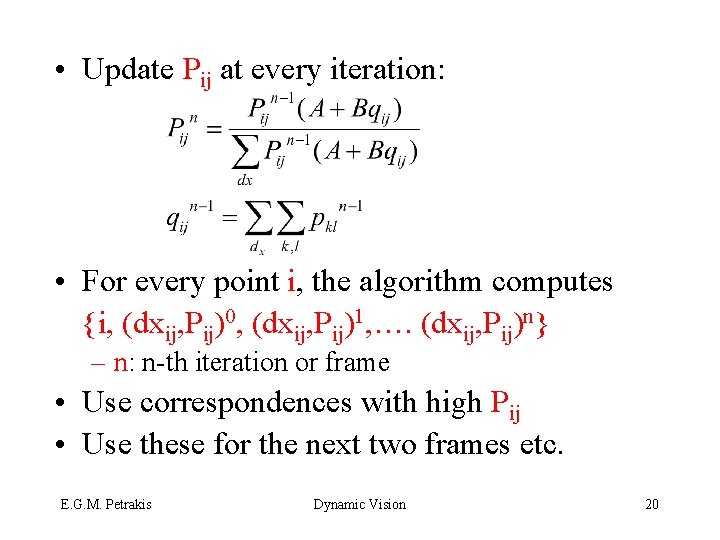

• Update $P_{ij}$ at every iteration
• For every point i, the algorithm computes $\{i, (dx_{ij}, P_{ij})^0, (dx_{ij}, P_{ij})^1, \dots, (dx_{ij}, P_{ij})^n\}$
  – n: the n-th iteration or frame
• Use the correspondences with high $P_{ij}$
• Use these for the next two frames, etc.
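A simplified sketch of relaxation labeling for disparities, in the style of the Barnard and Thompson scheme presented by Ballard and Brown. Each point i gets a support value q_ij, the summed probability of neighbouring points having a disparity close to d_ij, and its probabilities are updated as P_ij ← P_ij (A + B q_ij), then renormalized. Uniform initial probabilities, the absence of a "no match" label, and the values of `A`, `B`, `theta` (disparity tolerance) and `radius` (neighbourhood size) are all assumptions of this sketch; `relaxation_match` is a hypothetical name.

```python
import numpy as np

def relaxation_match(pts1, pts2, d_max, radius, theta=1.0, A=0.3, B=3.0, n_iter=10):
    """Match points of frame 1 to points of frame 2 by relaxation labeling.

    Returns, for each point i of frame 1, the index j in pts2 of its most
    probable match (or -1 if it has no candidate within d_max).
    """
    pts1 = np.asarray(pts1, dtype=float)
    pts2 = np.asarray(pts2, dtype=float)
    # Candidate labels of point i: points j with |d_ij| <= d_max, d_ij = p_i - p_j.
    cands, disps = [], []
    for p in pts1:
        d = p - pts2
        ok = np.flatnonzero(np.linalg.norm(d, axis=1) <= d_max)
        cands.append(ok)
        disps.append(d[ok])
    # Uniform initial probabilities P_ij^0 over each point's candidates.
    probs = [np.full(len(ok), 1.0 / len(ok)) if len(ok) else np.zeros(0) for ok in cands]
    # Neighbours of i: the other frame-1 points within `radius`.
    dist = np.linalg.norm(pts1[:, None, :] - pts1[None, :, :], axis=2)
    idx = np.arange(len(pts1))
    nbrs = [np.flatnonzero((dist[i] <= radius) & (idx != i)) for i in idx]
    for _ in range(n_iter):
        new_probs = []
        for i in idx:
            # q_ij: support for d_ij from neighbours with similar disparities.
            q = np.zeros(len(cands[i]))
            for k in nbrs[i]:
                close = np.linalg.norm(disps[i][:, None, :] - disps[k][None, :, :], axis=2) <= theta
                q += close.astype(float) @ probs[k]
            p = probs[i] * (A + B * q)
            new_probs.append(p / p.sum() if p.sum() > 0 else p)
        probs = new_probs
    return [int(ok[np.argmax(p)]) if len(ok) else -1 for ok, p in zip(cands, probs)]

# Hypothetical example: three points translate by (2, 1); the last point of
# frame 2 is a distractor close to the first point.
frame1 = [(0, 0), (4, 0), (0, 4)]
frame2 = [(2, 1), (6, 1), (2, 5), (1, -1)]
print(relaxation_match(frame1, frame2, d_max=3.0, radius=6.0))
```

The first two points each start with two equally likely candidates; support from neighbours that share the disparity (−2, −1) quickly drives their probabilities toward the consistent matches.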

Disparities after 3 iterations (from Ballard and Brown, 94)

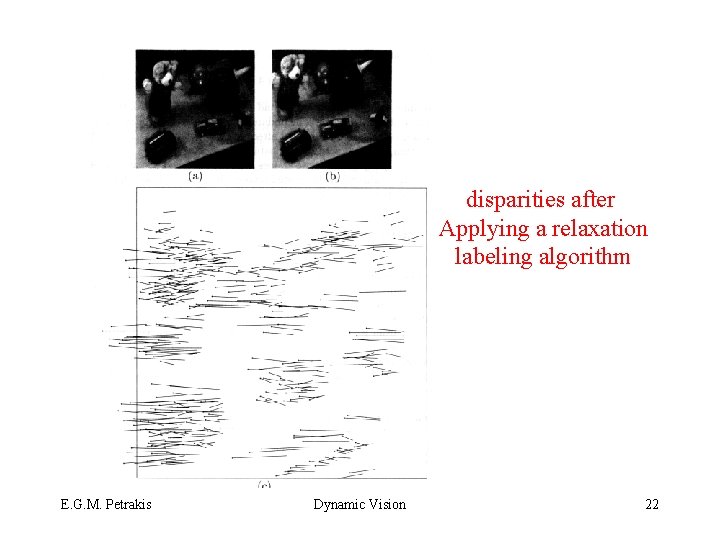

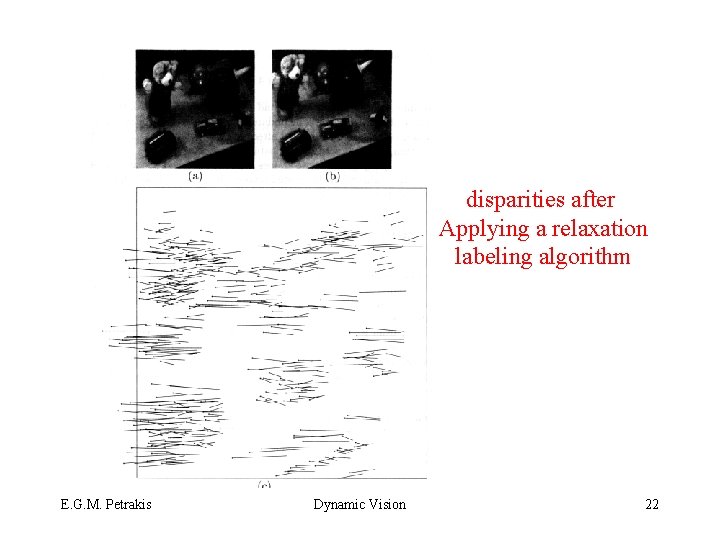

Disparities after applying a relaxation labeling algorithm

• Image flow: the velocity field in the image due to the motion of the observer, the objects, or both
  – A velocity vector for each pixel
  – Image flow is computed at each pixel
  – SCMO: most pixels will have zero velocity
• Methods: pixel-based and feature-based methods compute image flow for all pixels or for specific features (e.g., corners), respectively
  – Relaxation labeling methods
  – Gradient-based methods

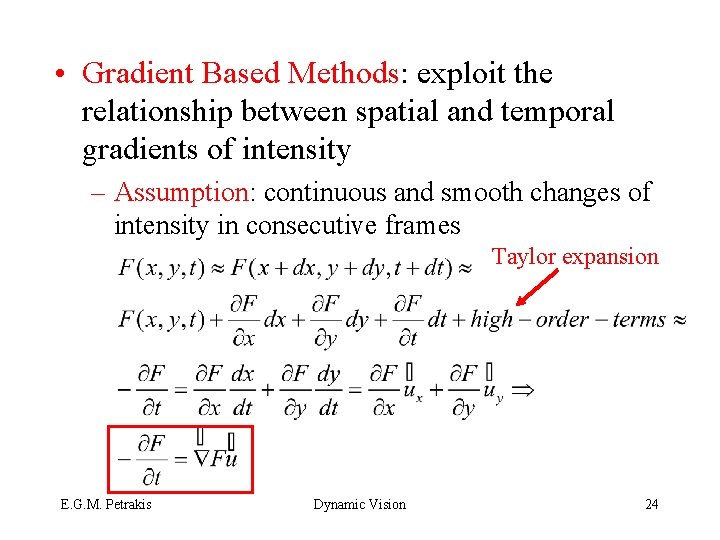

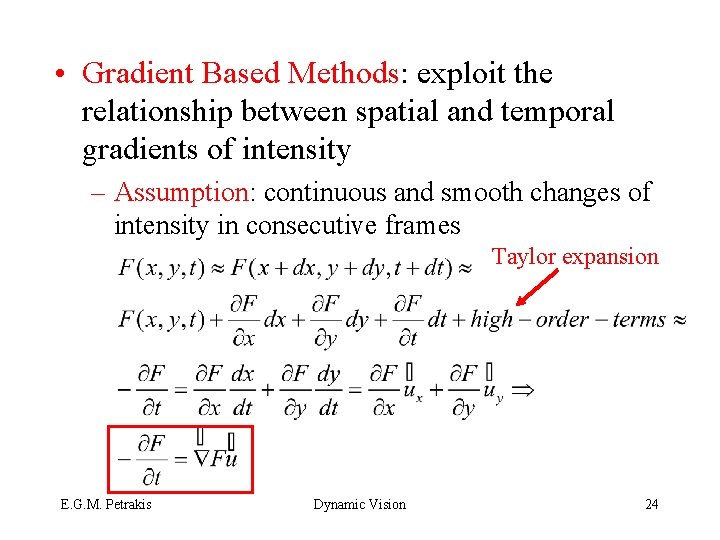

• Gradient-based methods: exploit the relationship between the spatial and temporal gradients of intensity
  – Assumption: intensity changes continuously and smoothly between consecutive frames
  – Taylor expansion
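Written out, the Taylor-expansion step is the usual brightness-constancy derivation. It assumes the intensity of a moving point does not change between frames and uses the notation of the following slides ($f_x, f_y, f_t$ for the derivatives of $F$; $u_x, u_y$ for the image velocity):

$$F(x + dx,\ y + dy,\ t + dt) = F(x, y, t) + f_x\,dx + f_y\,dy + f_t\,dt + \text{higher-order terms}$$

Setting $F(x + dx, y + dy, t + dt) = F(x, y, t)$, dropping the higher-order terms and dividing by $dt$ gives the optical flow constraint

$$f_x u_x + f_y u_y + f_t = 0, \qquad u_x = \frac{dx}{dt},\quad u_y = \frac{dy}{dt}$$

This is one equation in two unknowns per pixel, so only the flow component along the intensity gradient is determined by it alone (the aperture problem); an additional smoothness assumption is needed.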

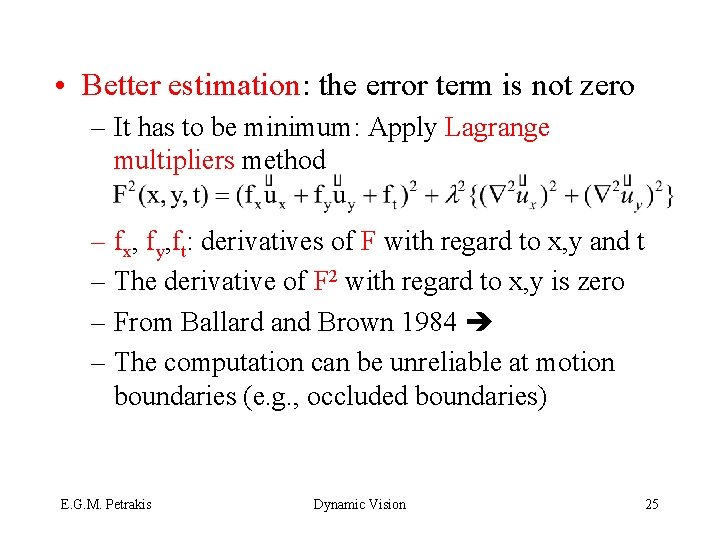

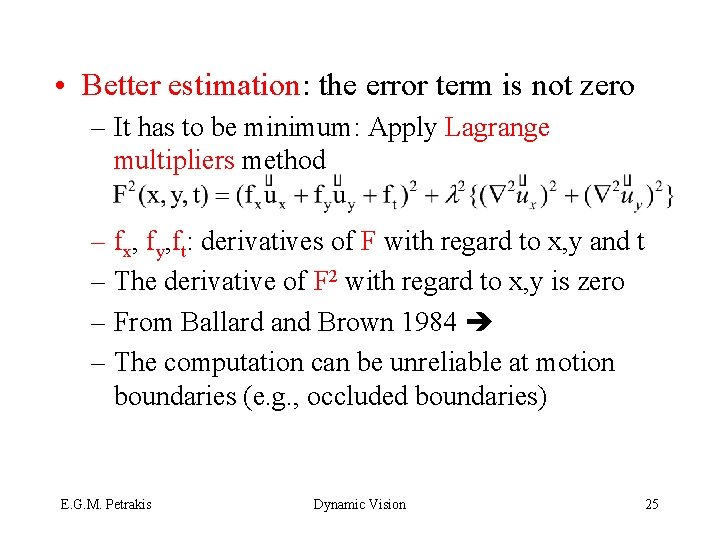

• Better estimation: in practice the error term is not zero
  – It has to be minimized: apply the method of Lagrange multipliers
  – f_x, f_y, f_t: derivatives of F with respect to x, y and t
  – The derivatives of the squared error with respect to the flow components u_x, u_y are set to zero
  – From Ballard and Brown 1984
  – The computation can be unreliable at motion boundaries (e.g., occluding boundaries)
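For reference, the standard Horn & Schunck formulation (assumed here; the slide's figure may use different constants or notation) minimizes the squared constraint error plus a smoothness term weighted by $\lambda$:

$$E^2 = \iint \left[ (f_x u_x + f_y u_y + f_t)^2 + \lambda \left( \|\nabla u_x\|^2 + \|\nabla u_y\|^2 \right) \right] dx\, dy$$

Setting the derivatives with respect to $u_x, u_y$ to zero and approximating the Laplacian by $\nabla^2 u \approx \bar{u} - u$, where $\bar{u}$ is a local average (its scale constant absorbed into $\lambda$), gives

$$(\lambda + f_x^2)\,u_x + f_x f_y\,u_y = \lambda \bar{u}_x - f_x f_t$$
$$f_x f_y\,u_x + (\lambda + f_y^2)\,u_y = \lambda \bar{u}_y - f_y f_t$$

whose solution is

$$u_x = \bar{u}_x - f_x \frac{P}{D}, \qquad u_y = \bar{u}_y - f_y \frac{P}{D}, \qquad P = f_x \bar{u}_x + f_y \bar{u}_y + f_t, \qquad D = \lambda + f_x^2 + f_y^2$$

Because $\bar{u}_x, \bar{u}_y$ depend on the neighbouring unknowns, these equations are solved iteratively.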

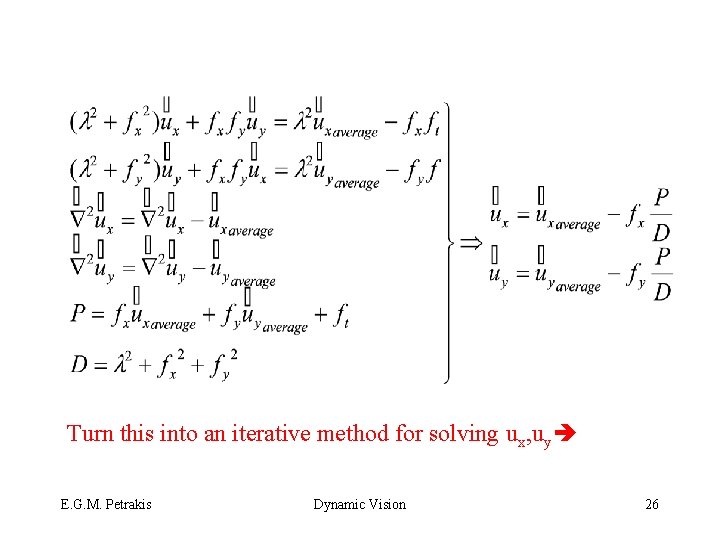

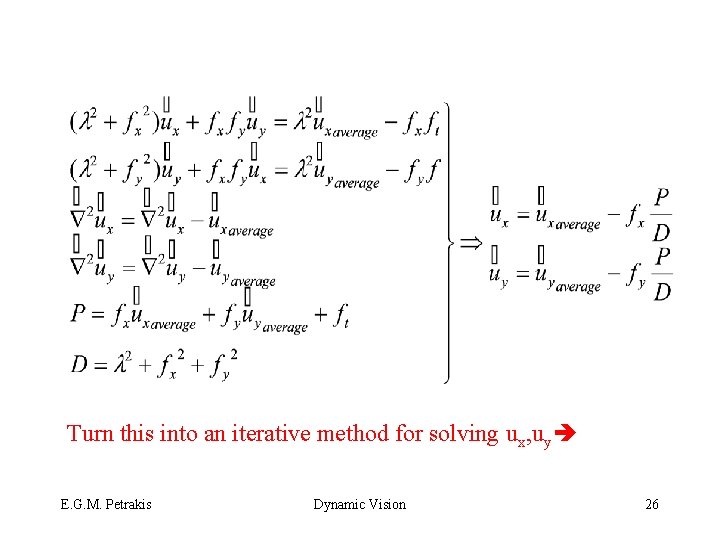

This is turned into an iterative method for solving for u_x, u_y

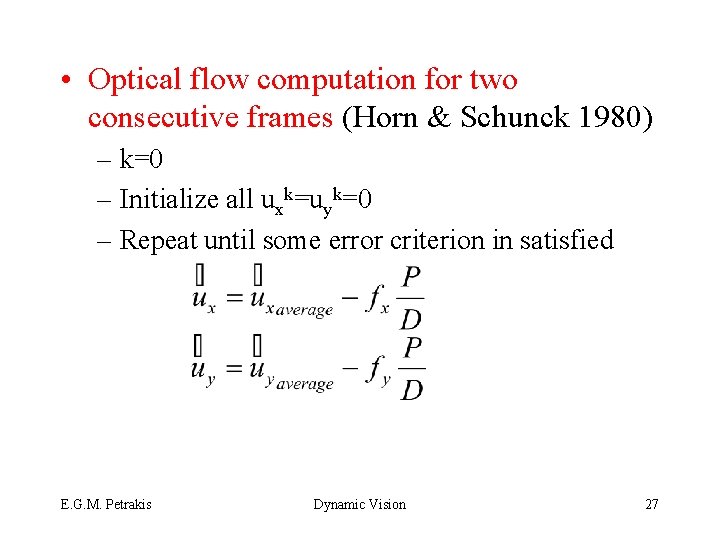

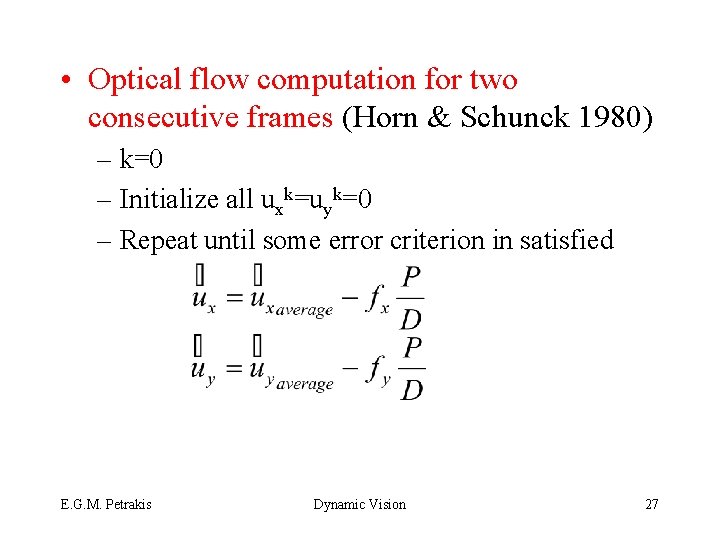

• Optical flow computation for two consecutive frames (Horn & Schunck 1980)
  – k = 0
  – Initialize all $u_x^k = u_y^k = 0$
  – Repeat until some error criterion is satisfied
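A compact NumPy sketch of the iteration, assuming the update $u_x = \bar{u}_x - f_x P/D$, $u_y = \bar{u}_y - f_y P/D$ with $P = f_x \bar{u}_x + f_y \bar{u}_y + f_t$ and $D = \lambda + f_x^2 + f_y^2$. The 4-neighbour average, the gradient estimates, and the stopping rule (largest update below `tol`) are simplifying choices for illustration; `horn_schunck`, `local_average`, `u0` and `v0` are hypothetical names. The optional `u0`, `v0` let the result for one frame pair initialize the next.

```python
import numpy as np

def local_average(u):
    """Average of the 4 neighbours, replicating values at the image border.

    A simplification of the weighted 3x3 average used by Horn & Schunck.
    """
    p = np.pad(u, 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]) / 4.0

def horn_schunck(f1, f2, lam=1.0, n_iter=200, tol=1e-6, u0=None, v0=None):
    """Iterative optical flow (u_x, u_y) between two frames.

    u0, v0 optionally initialise the flow, e.g. from a previous frame pair.
    """
    f1 = f1.astype(np.float64)
    f2 = f2.astype(np.float64)
    # f_x, f_y: spatial derivatives averaged over both frames; f_t: frame difference.
    fy1, fx1 = np.gradient(f1)
    fy2, fx2 = np.gradient(f2)
    fx = (fx1 + fx2) / 2.0
    fy = (fy1 + fy2) / 2.0
    ft = f2 - f1
    ux = np.zeros_like(f1) if u0 is None else np.array(u0, dtype=np.float64)
    uy = np.zeros_like(f1) if v0 is None else np.array(v0, dtype=np.float64)
    D = lam + fx ** 2 + fy ** 2
    for _ in range(n_iter):
        ux_bar, uy_bar = local_average(ux), local_average(uy)
        P = fx * ux_bar + fy * uy_bar + ft
        ux_new = ux_bar - fx * P / D
        uy_new = uy_bar - fy * P / D
        # Error criterion (an assumption): stop when the largest update is below tol.
        change = max(np.abs(ux_new - ux).max(), np.abs(uy_new - uy).max())
        ux, uy = ux_new, uy_new
        if change < tol:
            break
    return ux, uy

# Hypothetical example: an intensity ramp shifted right by one pixel per frame.
f1 = np.tile(np.arange(8, dtype=np.float64), (6, 1))
f2 = f1 - 1.0
f3 = f1 - 2.0
ux, uy = horn_schunck(f1, f2)
# Multi-frame use: start the next pair from the previous flow.
ux2, uy2 = horn_schunck(f2, f3, u0=ux, v0=uy, n_iter=1)
print(ux.mean(), uy.mean())
```

A ramp varies only along x, so only u_x is observable; here that happens to be the entire motion, and the iteration converges to u_x ≈ 1, u_y = 0. Warm-starting the second pair from the first result, as in the multi-frame scheme, needs only a single iteration in this example.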

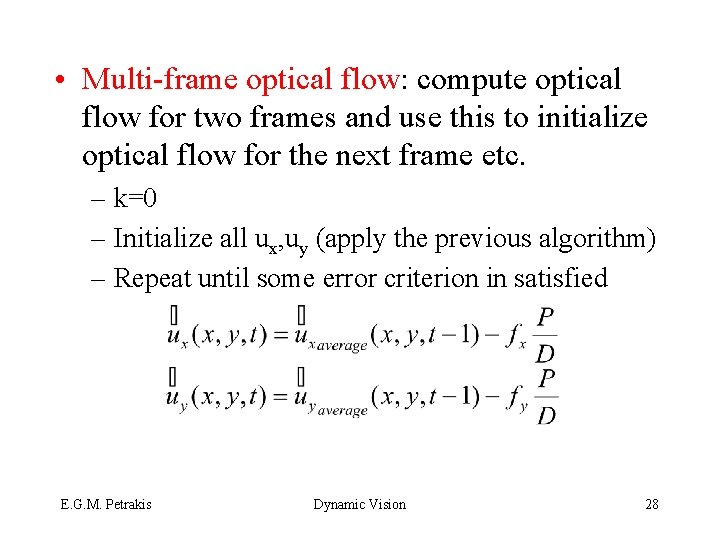

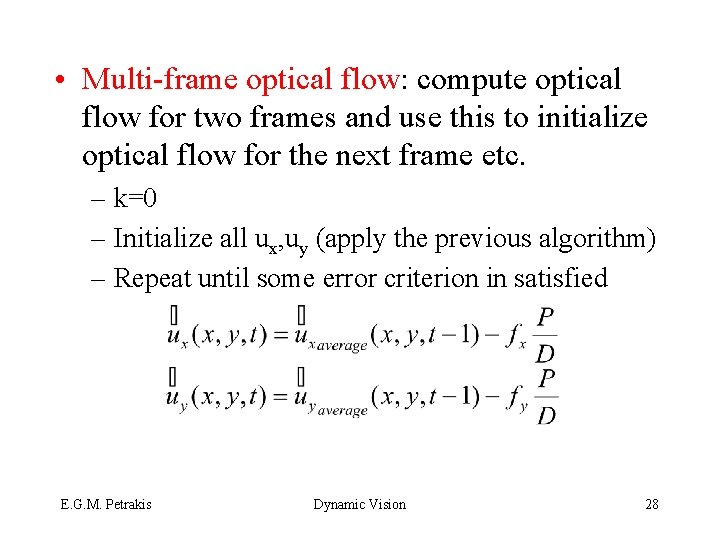

• Multi-frame optical flow: compute the optical flow for two frames and use it to initialize the optical flow for the next frame, etc.
  – k = 0
  – Initialize all u_x, u_y (apply the previous algorithm)
  – Repeat until some error criterion is satisfied

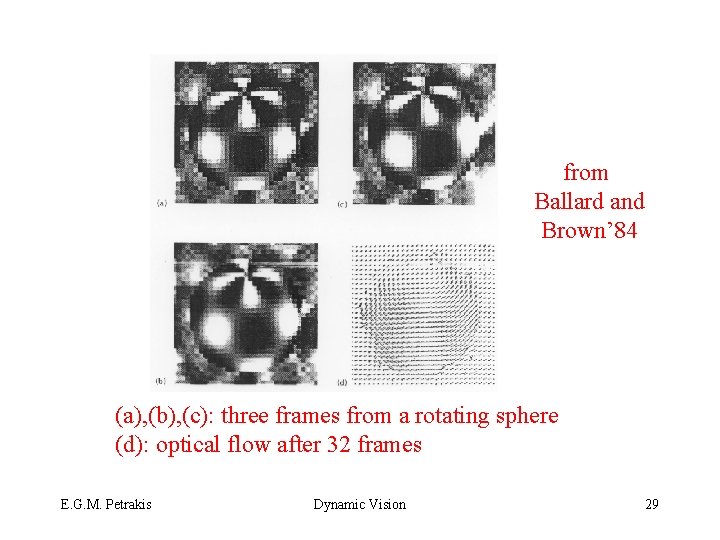

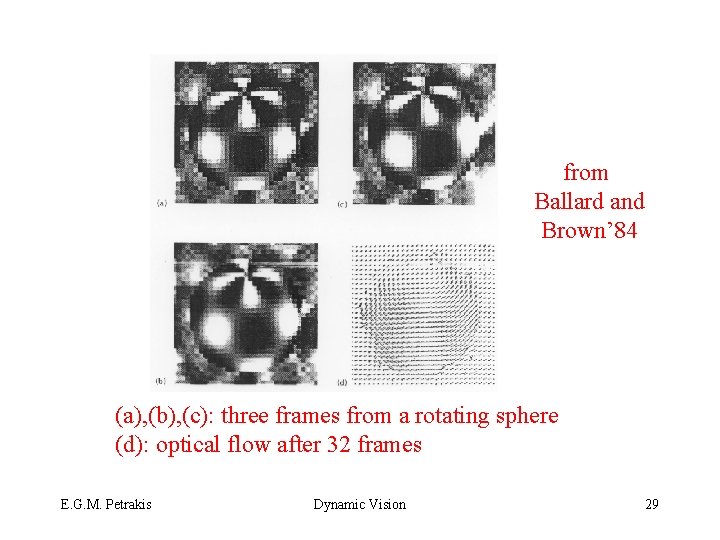

From Ballard and Brown ’84: (a), (b), (c): three frames of a rotating sphere; (d): optical flow after 32 frames

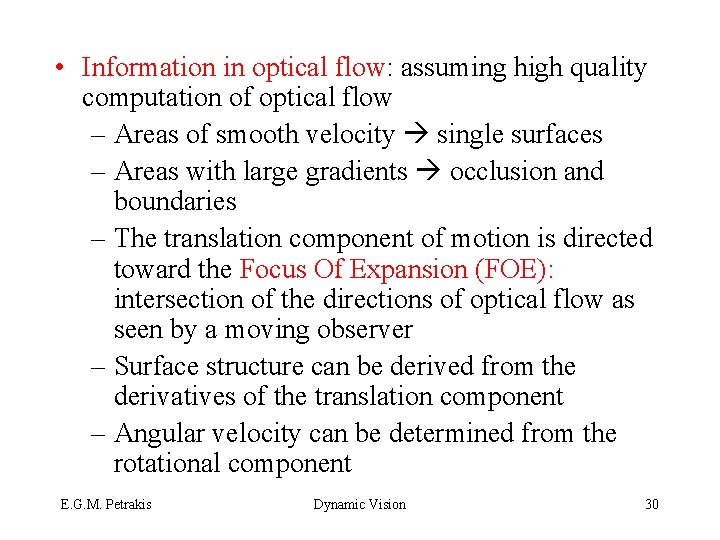

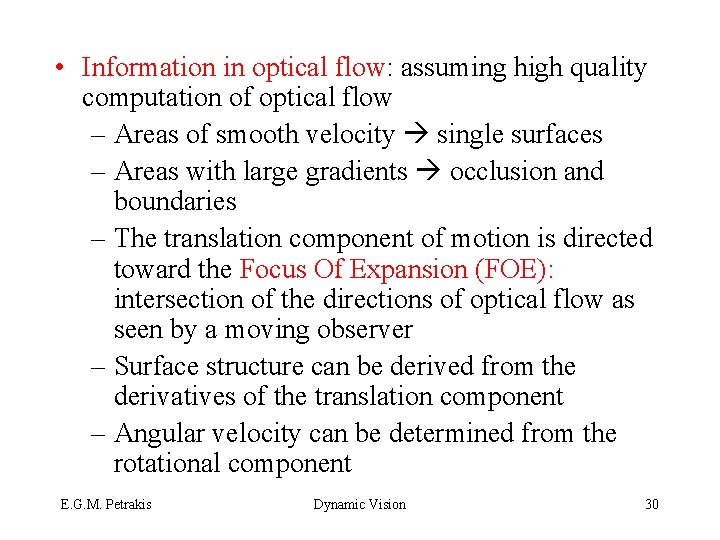

• Information in optical flow, assuming a high-quality computation of optical flow:
  – Areas of smooth velocity → single surfaces
  – Areas with large gradients → occlusions and boundaries
  – The translational component of the flow lies along lines through the Focus Of Expansion (FOE): the intersection of the directions of optical flow as seen by a moving observer
  – Surface structure can be derived from the derivatives of the translational component
  – Angular velocity can be determined from the rotational component

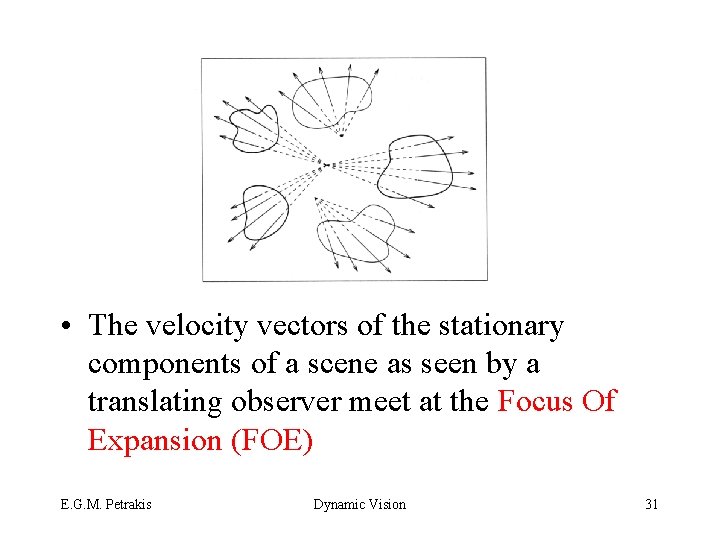

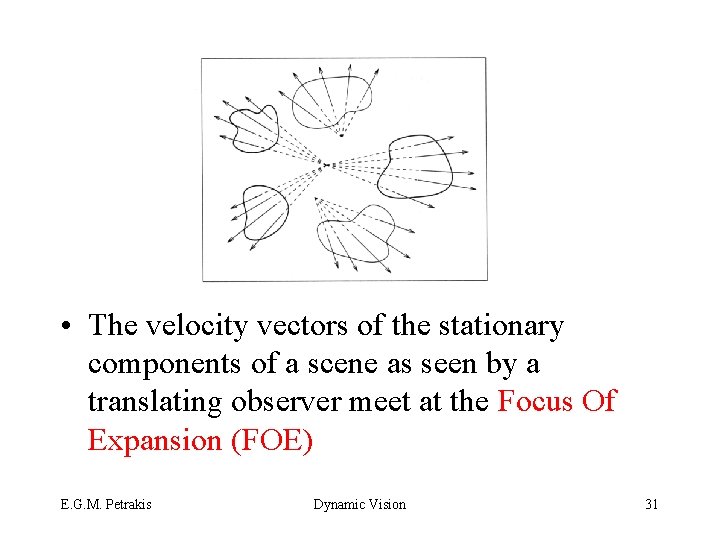

• The velocity vectors of the stationary components of a scene as seen by a translating observer meet at the Focus Of Expansion (FOE)
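Since the flow lines of stationary points meet at the FOE, the FOE can be estimated as the point closest, in the least-squares sense, to all flow lines. This is a sketch assuming pure observer translation, a stationary scene, and flow vectors that have already been computed; `estimate_foe` is a hypothetical helper, and with noisy flow a robust estimator would usually be preferable.

```python
import numpy as np

def estimate_foe(points, flows):
    """Least-squares intersection of the lines through each point along its flow vector."""
    pts = np.asarray(points, dtype=float)
    fl = np.asarray(flows, dtype=float)
    # A normal to the flow line through x_i is n_i = (-v_i, u_i);
    # a point p on that line satisfies n_i . p = n_i . x_i.
    normals = np.stack([-fl[:, 1], fl[:, 0]], axis=1)
    rhs = np.sum(normals * pts, axis=1)
    foe, *_ = np.linalg.lstsq(normals, rhs, rcond=None)
    return foe

# Hypothetical noise-free flow of a translating observer: each vector points
# away from an FOE at (3, -2).
points = np.array([(0, 0), (10, 5), (-4, 7), (6, -9)], dtype=float)
flows = 0.1 * (points - np.array([3.0, -2.0]))
foe = estimate_foe(points, flows)
print(foe)
```

At least two non-parallel flow vectors are needed; with more vectors the least-squares solution averages out small errors in individual directions.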

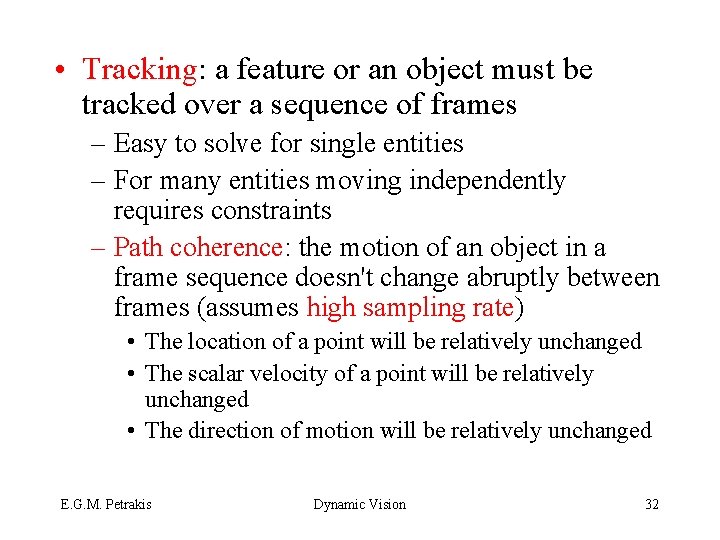

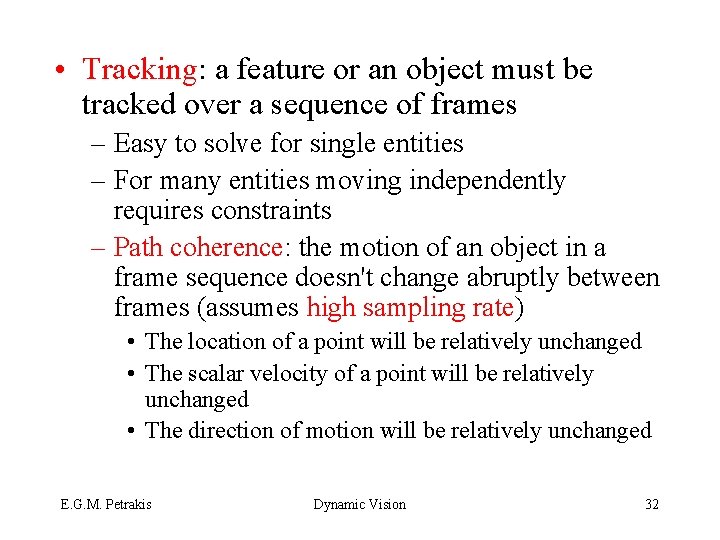

• Tracking: a feature or an object must be tracked over a sequence of frames
  – Easy to solve for a single entity
  – Many independently moving entities require constraints
  – Path coherence: the motion of an object does not change abruptly between the frames of a sequence (assumes a high sampling rate)
    • The location of a point will be relatively unchanged
    • The scalar velocity of a point will be relatively unchanged
    • The direction of motion will be relatively unchanged

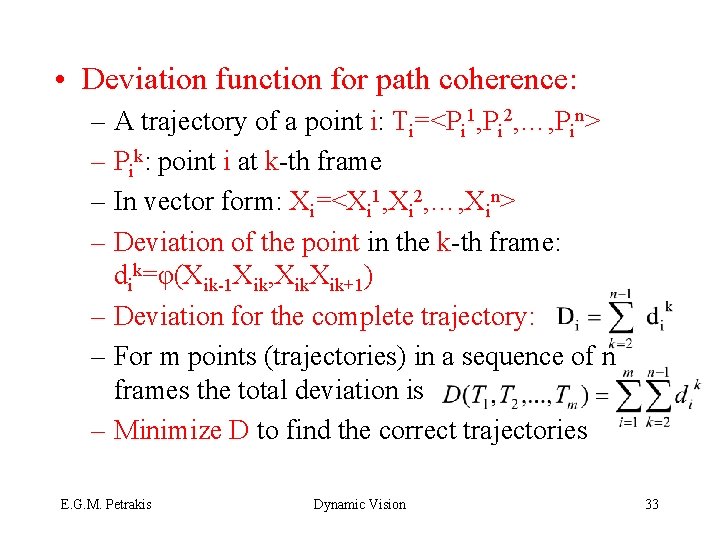

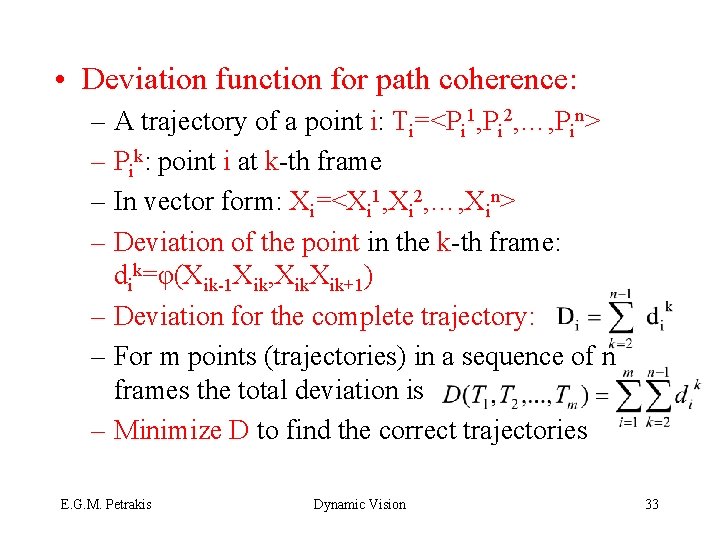

• Deviation function for path coherence:
  – A trajectory of a point i: $T_i = \langle P_i^1, P_i^2, \dots, P_i^n \rangle$
  – $P_i^k$: point i in the k-th frame
  – In vector form: $X_i = \langle X_i^1, X_i^2, \dots, X_i^n \rangle$
  – Deviation of the point in the k-th frame: $d_i^k = \phi(X_i^{k-1}, X_i^k, X_i^{k+1})$
  – Deviation for the complete trajectory: $D_i = \sum_{k=2}^{n-1} d_i^k$
  – For m points (trajectories) in a sequence of n frames, the total deviation is $D = \sum_{i=1}^{m} D_i$
  – Minimize D to find the correct trajectories

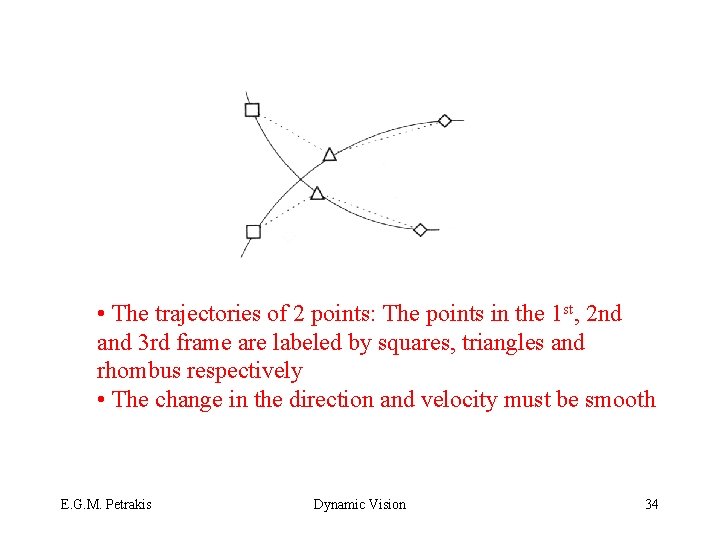

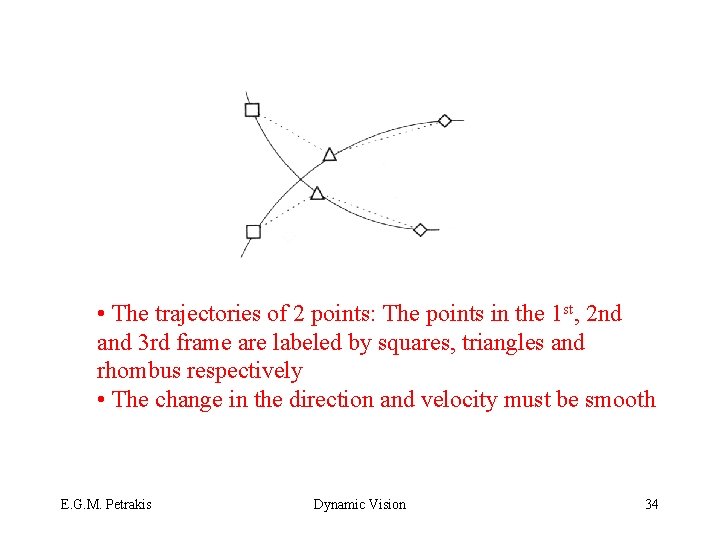

• The trajectories of 2 points: the points in the 1st, 2nd and 3rd frames are marked by squares, triangles and rhombi, respectively
• The changes in direction and velocity must be smooth

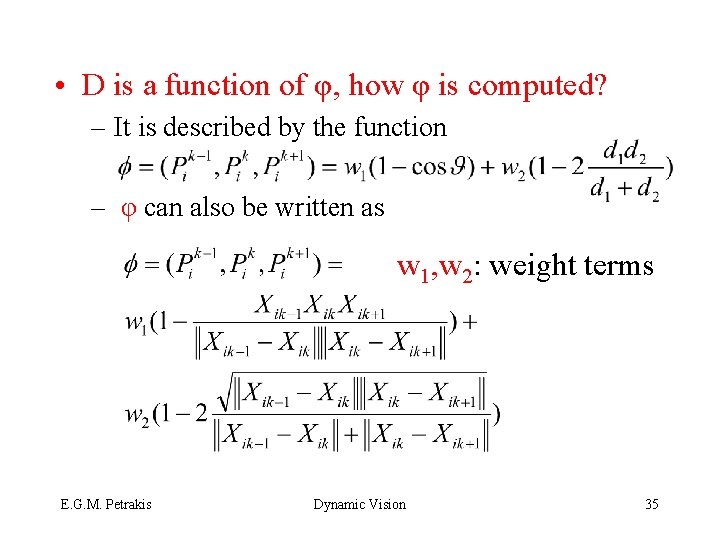

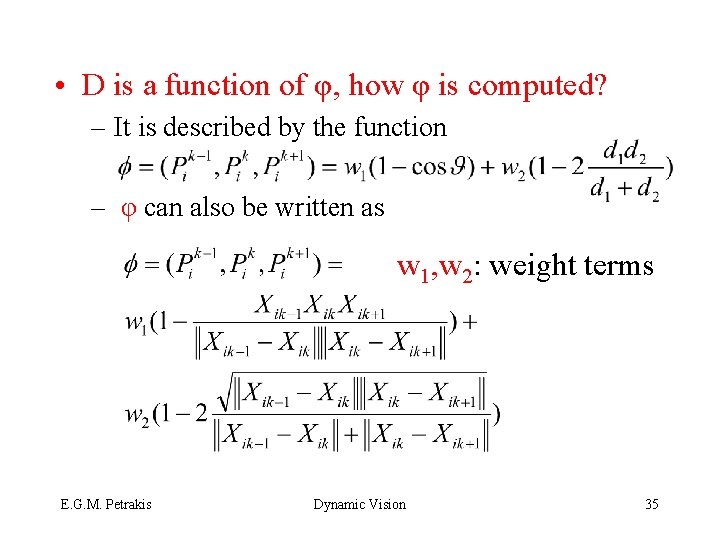

• D is a function of φ; how is φ computed?
  – φ is described by a function of three consecutive positions of a point
  – φ can also be written in an equivalent form, where w_1, w_2 are weight terms

• Direction coherence: the first term
  – Dot product of the displacement vectors
• Speed coherence: the second term
  – Ratio of the geometric to the arithmetic mean of the displacement magnitudes
• Limitations: assumes the same number of features in every frame
  – Objects may appear, disappear or become occluded
  – Changes of geometry and illumination
  – These lead to false correspondences
  – Force the trajectories to satisfy certain local constraints
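The two terms described above match the deviation function usually attributed to Sethi and Jain, assumed here to be φ = w₁(1 − cos θ) + w₂(1 − 2√(s_k s_{k+1}) / (s_k + s_{k+1})), where θ is the angle between consecutive displacement vectors and s_k, s_{k+1} are their lengths. The sketch also assumes equal weights w₁ = w₂ = 0.5 and its own handling of zero displacements, and it minimizes D by exhaustive search over three frames purely for illustration; practical methods optimize D iteratively instead of enumerating all assignments. All function names are hypothetical.

```python
import numpy as np
from itertools import permutations

def phi(p_prev, p_cur, p_next, w1=0.5, w2=0.5):
    """Path-coherence deviation at the middle point of three consecutive positions."""
    a = np.subtract(p_cur, p_prev, dtype=float)   # displacement from frame k-1 to k
    b = np.subtract(p_next, p_cur, dtype=float)   # displacement from frame k to k+1
    sa, sb = np.linalg.norm(a), np.linalg.norm(b)
    if sa == 0 and sb == 0:
        return 0.0                # assumption: a stationary point has no deviation
    if sa == 0 or sb == 0:
        return w1 + w2            # assumption: direction undefined, speed term is 1
    direction = 1 - np.dot(a, b) / (sa * sb)             # direction coherence
    speed = 1 - 2 * np.sqrt(sa * sb) / (sa + sb)         # speed coherence
    return w1 * direction + w2 * speed

def total_deviation(trajectories, w1=0.5, w2=0.5):
    """D: sum of phi over all trajectories and interior frames (k = 2 .. n-1)."""
    return sum(
        phi(t[k - 1], t[k], t[k + 1], w1, w2)
        for t in trajectories
        for k in range(1, len(t) - 1)
    )

def best_assignment(f1, f2, f3):
    """Brute-force search over 3 frames for the point ordering that minimises D.

    Exponential in the number of points: only for tiny illustrations.
    """
    best = None
    for p2 in permutations(range(len(f2))):
        for p3 in permutations(range(len(f3))):
            trajs = [[f1[i], f2[p2[i]], f3[p3[i]]] for i in range(len(f1))]
            D = total_deviation(trajs)
            if best is None or D < best[0]:
                best = (D, p2, p3)
    return best

# Hypothetical example: two points moving in straight lines at constant speed,
# listed in a different order in frames 2 and 3.
f1 = [(0, 0), (0, 5)]
f2 = [(1, 4), (1, 0)]
f3 = [(2, 0), (2, 3)]
D, p2, p3 = best_assignment(f1, f2, f3)
print(D, p2, p3)
```

The correct trajectories have constant direction and speed, so both terms vanish and D is essentially zero; every wrong pairing bends a trajectory or changes its speed and gets a strictly positive D.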