Dynamic Disk Pools Feature Introduction

IBM storage terms vs. NetApp terms

This presentation uses NetApp generic product terms. Below is a key for some basic terms used in today’s presentation.

- Volume(s): IBM term is LUNs or Logical Drives
- Volume Group: IBM term is Array
- SANtricity: Storage Manager
- Snapshot: FlashCopy
- Pikes Peak: NetApp controller, not an IBM product at this time

Overview

- Introduction to Dynamic Disk Pools (DDP)
- DDP vs. Traditional RAID
- Initial Implementation Guidelines
- How DDP Works
- Drive Failure and Capacity Expansion Examples
- Volume Architecture Compatibility
- Database Backup Mechanism

Shortcomings of Traditional RAID

- Low utilization of storage capacity
  - Unused capacity stranded inside volume groups
- I/O performance limited by the number of drives in a Volume Group
- Drive failures can affect a system for days or weeks
  - The system is susceptible to additional failures
  - Performance is significantly impacted while degraded (poor QoS)
  - Drives growing to 4 TB and beyond compound the problem
- Mean Time To Data Loss shortens as the size of configurations grows

What is a Dynamic Disk Pool?

A new way of delivering RAID protection / performance:

- Dynamic Disk Pool (DDP) distributes data, protection information, and spare capacity across a pool of drives
- Intelligent algorithm defines which drives should be used for segment placement (7 patents pending)
- Segments are reconstructed/redistributed to maintain a balanced distribution:
  - On drive failure, segments are recreated elsewhere
  - On drive addition, segments are immediately rebalanced

Dynamic Disk Pools, aka…

“Dynamic Disk Pools” has gone through several name revisions:

- Disk Pools (term used in SANtricity)
- Adaptive Disk Pools
- Simple Disk Pools
- CRUSH Pools
- Dynamic-RAID
- Distributed-RAID

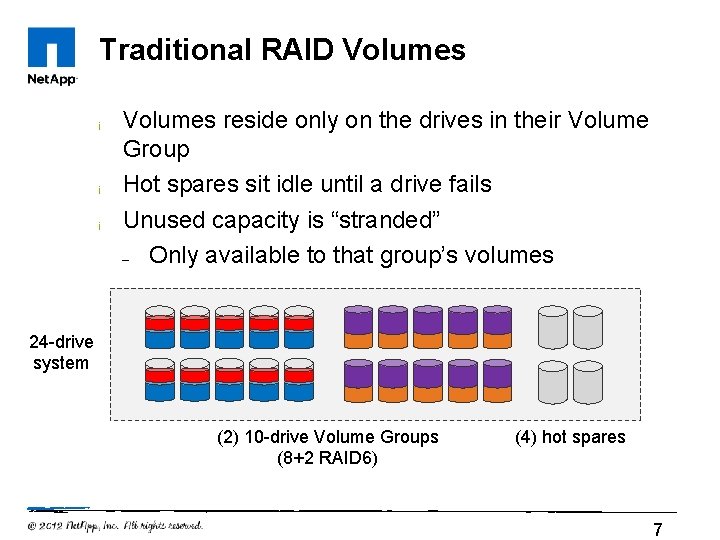

Traditional RAID Volumes

- Volumes reside only on the drives in their Volume Group
- Hot spares sit idle until a drive fails
- Unused capacity is “stranded”
  - Only available to that group’s volumes

Figure: 24-drive system with (2) 10-drive Volume Groups (8+2 RAID 6) and (4) hot spares

DDP Volumes

- Each volume’s data and spare capacity are spread across all drives in the disk pool
- All drives are active; none are idle
- Spare capacity is available to all volumes

Figure: (1) 24-drive pool holding Volume A, Volume B, Volume C, Volume D, and spare capacity

Advantages of Dynamic Disk Pools

- Simplify administration and storage allocation
  - All volumes share most attributes (RAID level, segment size, stripe width)
  - No need for Hot Spares
- Improve system Quality of Service under normal and failure scenarios since all drives contribute to volume I/O
- Improve drive reconstruction times
  - Shorter recovery times reduce exposure to multiple cascading disk failures
- Mean Time To Data Loss remains constant as the size of configurations grows

Consistent QoS

- Large pool of spindles reduces hot spots
- Performance drop is reduced following drive failure
- Volumes return to an optimal state in shorter time

Figure: Performance Impact of a Drive Failure (performance over time, with Optimal and Acceptable levels, comparing DDP with a RAID rebuild)

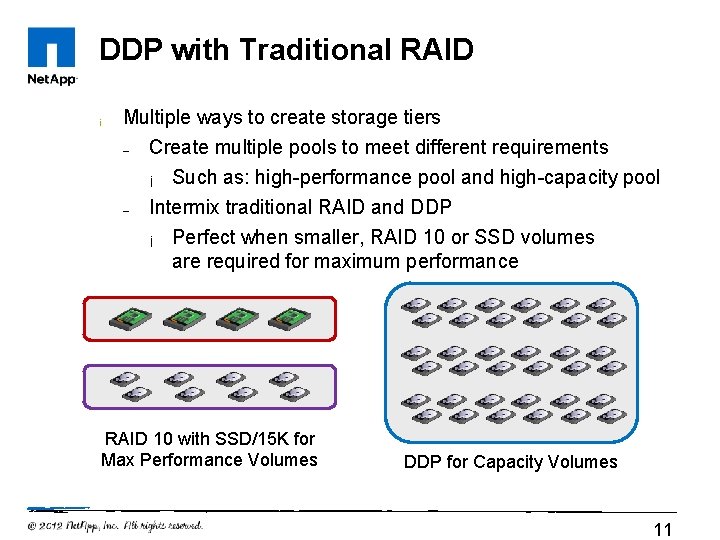

DDP with Traditional RAID

- Multiple ways to create storage tiers
  - Create multiple pools to meet different requirements
    - Such as: high-performance pool and high-capacity pool
  - Intermix traditional RAID and DDP
    - Perfect when smaller, RAID 10 or SSD volumes are required for maximum performance

Figure: RAID 10 with SSD/15K for Max Performance Volumes; DDP for Capacity Volumes

DDP with Traditional RAID

- Support for intermixing Traditional Volume Groups and DDP in the same storage system
  - Useful for backward compatibility, SSDs, different drive speeds and types
- Support for remote asynchronous/synchronous mirroring between DDP and traditional volumes
- Support for Volume Copy to/from DDP and traditional volumes
  - Enables conversion of legacy volumes to Disk Pool Volumes

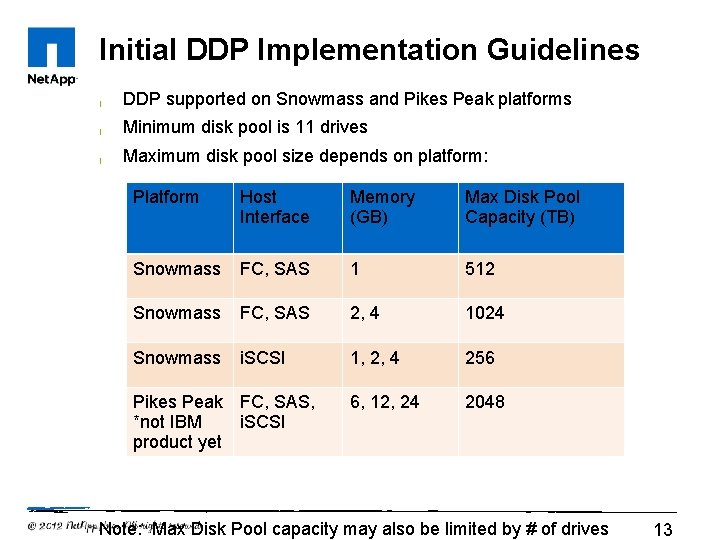

Initial DDP Implementation Guidelines

- DDP supported on Snowmass and Pikes Peak platforms
- Minimum disk pool is 11 drives
- Maximum disk pool size depends on platform:

| Platform | Host Interface | Memory (GB) | Max Disk Pool Capacity (TB) |
| --- | --- | --- | --- |
| Snowmass | FC, SAS | 1 | 512 |
| Snowmass | FC, SAS | 2, 4 | 1024 |
| Snowmass | iSCSI | 1, 2, 4 | 256 |
| Pikes Peak* | FC, SAS, iSCSI | 6, 12, 24 | 2048 |

*Not an IBM product yet

Note: Max Disk Pool capacity may also be limited by the number of drives

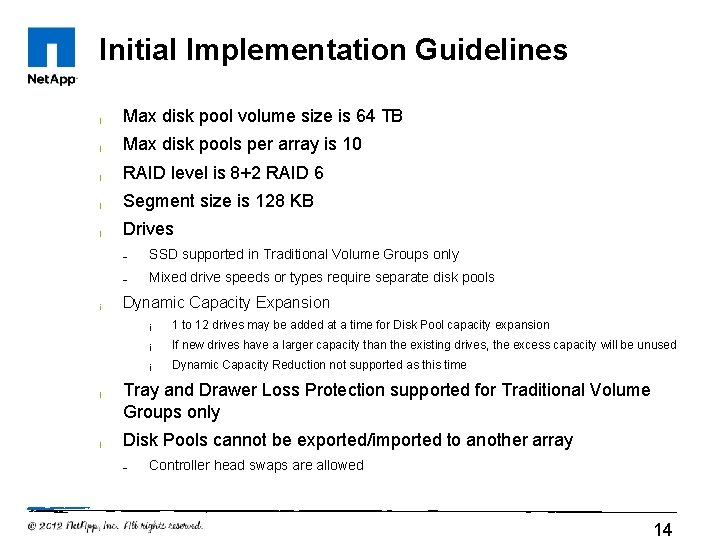

Initial Implementation Guidelines

- Max disk pool volume size is 64 TB
- Max disk pools per array is 10
- RAID level is 8+2 RAID 6
- Segment size is 128 KB
- Drives
  - SSD supported in Traditional Volume Groups only
  - Mixed drive speeds or types require separate disk pools
- Dynamic Capacity Expansion
  - 1 to 12 drives may be added at a time for Disk Pool capacity expansion
  - If new drives have a larger capacity than the existing drives, the excess capacity will be unused
  - Dynamic Capacity Reduction not supported at this time
- Tray and Drawer Loss Protection supported for Traditional Volume Groups only
- Disk Pools cannot be exported/imported to another array
  - Controller head swaps are allowed
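The numeric limits from the implementation guidelines can be gathered into a small planning check. This is only a sketch: the function name, its parameters, and the message strings are hypothetical and are not part of SANtricity or any NetApp/IBM tool; only the limits themselves (11-drive minimum, 64 TB volumes, 10 pools per array, 1 to 12 drives per expansion) come from the slides.

```python
# Limits taken from the guidelines slides; everything else is illustrative.
MIN_POOL_DRIVES = 11
MAX_VOLUME_TB = 64
MAX_POOLS_PER_ARRAY = 10
MAX_DRIVES_PER_EXPANSION = 12


def check_pool_plan(pool_drives, volume_sizes_tb, pools_on_array, drives_to_add=0):
    """Return a list of guideline violations; an empty list means the plan fits.

    drives_to_add=0 means no capacity expansion is planned.
    """
    problems = []
    if pool_drives < MIN_POOL_DRIVES:
        problems.append(f"pool needs at least {MIN_POOL_DRIVES} drives")
    if pools_on_array > MAX_POOLS_PER_ARRAY:
        problems.append(f"at most {MAX_POOLS_PER_ARRAY} disk pools per array")
    for size_tb in volume_sizes_tb:
        if size_tb > MAX_VOLUME_TB:
            problems.append(f"volume of {size_tb} TB exceeds {MAX_VOLUME_TB} TB")
    if drives_to_add != 0 and not 1 <= drives_to_add <= MAX_DRIVES_PER_EXPANSION:
        problems.append(f"add 1 to {MAX_DRIVES_PER_EXPANSION} drives at a time")
    return problems
```

For example, `check_pool_plan(24, [10, 64], 2)` returns an empty list, while a 10-drive pool is flagged for being below the minimum.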

How DDP Works

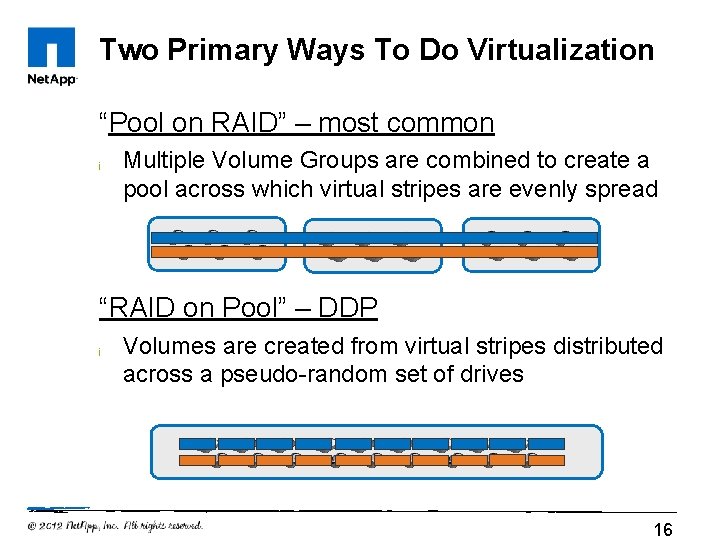

Two Primary Ways To Do Virtualization

- “Pool on RAID” (most common)
  - Multiple Volume Groups are combined to create a pool across which virtual stripes are evenly spread
- “RAID on Pool” (DDP)
  - Volumes are created from virtual stripes distributed across a pseudo-random set of drives

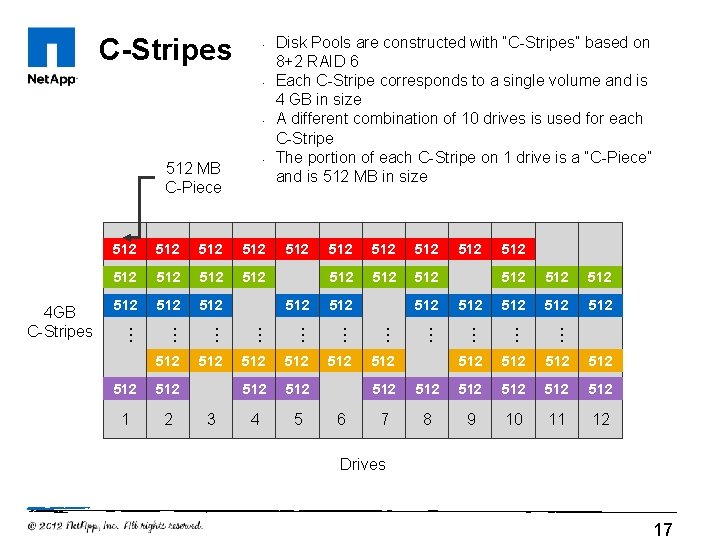

C-Stripes

- Disk Pools are constructed with “C-Stripes” based on 8+2 RAID 6
- Each C-Stripe corresponds to a single volume and is 4 GB in size
- A different combination of 10 drives is used for each C-Stripe
- The portion of each C-Stripe on 1 drive is a “C-Piece” and is 512 MB in size

Figure: 4 GB C-Stripes laid out as 512 MB C-Pieces across drives 1 to 12
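To make "a different combination of 10 drives for each C-Stripe" concrete, the sketch below picks 10 distinct drives per C-Stripe with a seeded pseudo-random generator. This is an assumption-laden stand-in: the slides do not describe the actual placement algorithm (it is covered by pending patents), and `place_c_stripes` and `pieces_per_drive` are hypothetical names. Plain uniform sampling is used only to show the shape of the layout.

```python
import random
from collections import Counter

STRIPE_WIDTH = 10  # 8 data + 2 parity C-Pieces per C-Stripe (8+2 RAID 6)


def place_c_stripes(num_drives, num_c_stripes, seed=0):
    """Pick 10 distinct drives for every C-Stripe (illustrative, not the real DDP algorithm)."""
    rng = random.Random(seed)
    drives = range(num_drives)
    return [sorted(rng.sample(drives, STRIPE_WIDTH)) for _ in range(num_c_stripes)]


def pieces_per_drive(layout):
    """Count how many C-Pieces land on each drive."""
    return Counter(drive for stripe in layout for drive in stripe)


layout = place_c_stripes(num_drives=12, num_c_stripes=250)
load = pieces_per_drive(layout)
```

With 12 drives and 250 C-Stripes, `load` holds 2,500 C-Pieces in total, spread across the drives rather than confined to one fixed group of 10.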

C-Pieces

- Based on traditional 8+2 RAID 6 stripes
- Segment size = 128 KB
- 4,096 RAID stripes on each C-Piece from a complete 4 GB C-Stripe
- Example: 1 TB volume consists of 250 C-Stripes
- A C-Piece is one drive’s worth of C-Stripe data (512 MB) and corresponds to 1 Drive Extent

Figure: a 4 GB C-Stripe drawn as (4,096) 1 MB stripes of 128 KB segments across 10 of drives 1 to 12, with each drive’s portion forming a 512 MB C-Piece
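The C-Piece and C-Stripe sizes follow from the segment size and the 8+2 layout. The short calculation below checks that arithmetic; the constant and function names are illustrative only, and binary units (1 MB = 1024 KB) are assumed.

```python
SEGMENT_KB = 128       # segment size from the slide
DATA_SEGMENTS = 8      # 8+2 RAID 6: eight data segments per RAID stripe
PARITY_SEGMENTS = 2    # and two parity segments
C_PIECE_MB = 512       # one drive's share of a C-Stripe


def c_stripe_geometry():
    """Derive the per-C-Stripe figures from the segment size and RAID layout."""
    stripe_data_kb = SEGMENT_KB * DATA_SEGMENTS              # data per RAID stripe
    stripes_per_c_piece = C_PIECE_MB * 1024 // SEGMENT_KB    # segments stacked in one C-Piece
    data_gb = stripes_per_c_piece * stripe_data_kb // (1024 * 1024)
    raw_gb = C_PIECE_MB * (DATA_SEGMENTS + PARITY_SEGMENTS) / 1024
    return {
        "stripe_data_kb": stripe_data_kb,
        "stripes_per_c_piece": stripes_per_c_piece,
        "c_stripe_data_gb": data_gb,
        "c_stripe_raw_gb": raw_gb,
    }


geometry = c_stripe_geometry()
```

Each RAID stripe carries 1 MB of data, 4,096 of them fit in a 512 MB C-Piece, and together they give 4 GB of usable data per C-Stripe (5 GB of raw space across the 10 drives once parity is counted).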

C-Stripe Allocation

- Number of 4 GB C-Stripes allocated is determined by the size of the volume
  - Ex: 500 GB Volume consists of 125 C-Stripes
- C-Stripes are allocated at time of volume creation
  - Placed at first free drive extent starting from lowest LBA
- C-Stripes are allocated sequentially on a per-volume basis
  - Ex: 3 Volumes created at time of Disk Pool creation. All C-Stripes for Volume 1 are allocated, followed by Volume 2, then 3.
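The allocation rules can be sketched as follows. The helper names are hypothetical, and rounding up for volume sizes that are not a multiple of 4 GB is an assumption; the slides only give examples that divide evenly.

```python
import math

C_STRIPE_GB = 4  # usable capacity of one C-Stripe


def c_stripes_for_volume(size_gb):
    """Number of 4 GB C-Stripes backing a volume (rounding up is an assumption)."""
    return math.ceil(size_gb / C_STRIPE_GB)


def allocate_sequentially(volume_sizes_gb):
    """Give each volume a contiguous run of C-Stripe indices, in creation order."""
    ranges = {}
    next_free = 0
    for name, size_gb in volume_sizes_gb.items():
        count = c_stripes_for_volume(size_gb)
        ranges[name] = range(next_free, next_free + count)
        next_free += count
    return ranges


allocation = allocate_sequentially({"Volume 1": 500, "Volume 2": 1000, "Volume 3": 8})
```

Here Volume 1 (500 GB) takes C-Stripes 0 to 124, Volume 2 (1 TB, counted as 1000 GB as in the slide's 250 C-Stripe example) takes the next 250, and Volume 3 follows.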

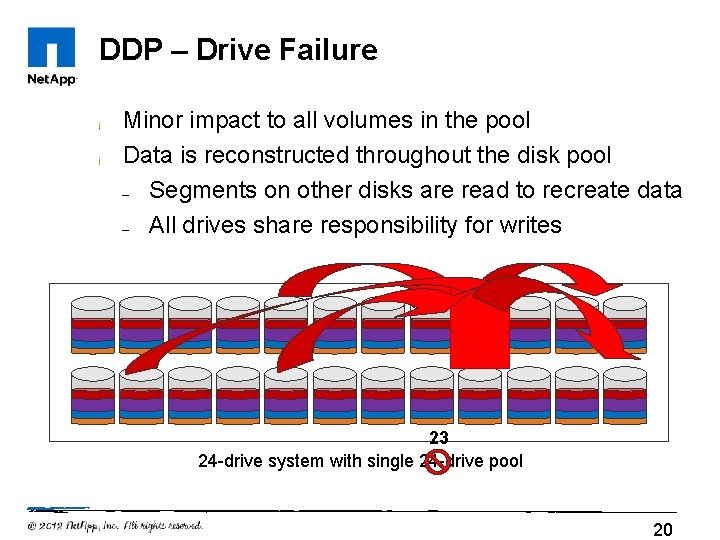

DDP – Drive Failure

- Minor impact to all volumes in the pool
- Data is reconstructed throughout the disk pool
  - Segments on other disks are read to recreate data
  - All drives share responsibility for writes

Figure: 24-drive system with a single 24-drive pool, reduced to 23 drives by the failure

DDP Single Drive Failure Handling

- Drive failures cause some percentage of every volume to be reconstructed
  - Traditional RAID: VGs assigned to a subset of the drive pool
    - Drive failure causes significant performance drop on the affected volume group
  - With DDP, segments spread across all drives
- The percentage of affected C-Stripes is typically small
  - Example: stripe width / number of drives => 10/100 = 10% of C-Stripes in each volume
- All drives are read from and written to during reconstruction
- Because all drives are participating, multiple C-Pieces can be reconstructed in parallel
- All volumes suffer the same performance penalty while a reconstruction is active
  - Consistent QoS
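The "stripe width / number of drives" rule is easy to check numerically. The sketch below assumes C-Stripes are spread evenly over the pool, so the result is an expected value rather than an exact count; the function names are illustrative.

```python
def affected_fraction(stripe_width, num_drives):
    """Expected share of C-Stripes that have a C-Piece on one failed drive."""
    return stripe_width / num_drives


def expected_affected_c_stripes(volume_c_stripes, stripe_width, num_drives):
    """Expected number of a volume's C-Stripes touched by a single drive failure."""
    return volume_c_stripes * stripe_width / num_drives


fraction_100 = affected_fraction(10, 100)
affected_1tb = expected_affected_c_stripes(250, 10, 100)
```

For the slide's 100-drive example this gives 10%, so a 1 TB volume (250 C-Stripes) would expect about 25 of its C-Stripes to need one C-Piece rebuilt. In a minimum 11-drive pool the same formula gives roughly 91%, which is why the benefit grows with pool size.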

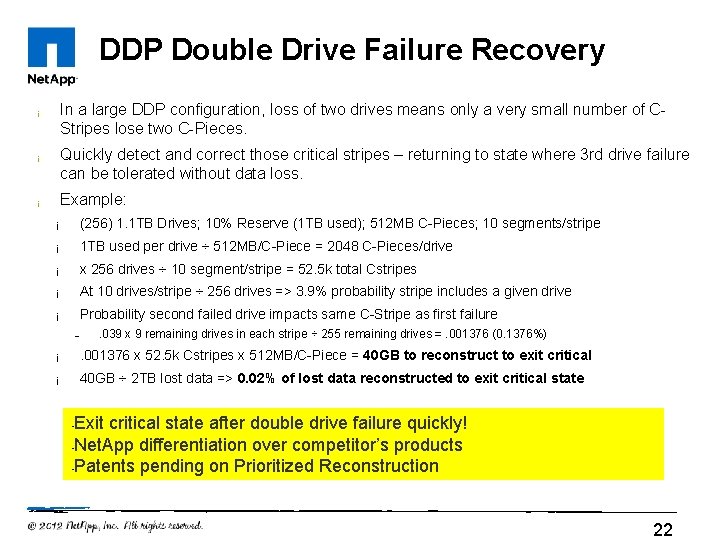

DDP Double Drive Failure Recovery

- In a large DDP configuration, loss of two drives means only a very small number of C-Stripes lose two C-Pieces.
- Quickly detect and correct those critical stripes, returning to a state where a 3rd drive failure can be tolerated without data loss.
- Example:
  - (256) 1.1 TB drives; 10% reserve (1 TB used); 512 MB C-Pieces; 10 segments/stripe
  - 1 TB used per drive ÷ 512 MB/C-Piece = 2048 C-Pieces/drive
  - 2048 C-Pieces/drive x 256 drives ÷ 10 segments/stripe ≈ 52.4 k total C-Stripes
  - 10 drives/stripe ÷ 256 drives => 3.9% probability a stripe includes a given drive
  - Probability the second failed drive impacts the same C-Stripe as the first failure: .039 x 9 remaining drives in each stripe ÷ 255 remaining drives = .001376 (0.1376%)
  - .001376 x 52.4 k C-Stripes x 512 MB/C-Piece ≈ 37 GB (roughly 40 GB) to reconstruct to exit critical
  - 40 GB ÷ 2 TB lost data => about 2% of lost data reconstructed to exit critical state
- Exit critical state after double drive failure quickly!
  - NetApp differentiation over competitors’ products
  - Patents pending on Prioritized Reconstruction
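The double-failure example can be reproduced step by step. The sketch below follows the slide's reasoning with unrounded intermediate values, assuming binary units (1 TB = 1024 x 1024 MB) and an even spread of C-Stripes; the function name and dictionary keys are illustrative.

```python
def double_failure_exposure(num_drives=256, used_tb_per_drive=1,
                            c_piece_mb=512, stripe_width=10):
    """Estimate how much data must be rebuilt to leave the critical state."""
    pieces_per_drive = used_tb_per_drive * 1024 * 1024 // c_piece_mb
    total_c_stripes = pieces_per_drive * num_drives / stripe_width
    # Chance that a given C-Stripe has a C-Piece on the first failed drive.
    p_first = stripe_width / num_drives
    # Chance that it also has a C-Piece on the second failed drive.
    p_both = p_first * (stripe_width - 1) / (num_drives - 1)
    critical_c_stripes = p_both * total_c_stripes
    # One C-Piece per critical C-Stripe restores single-failure tolerance.
    critical_gb = critical_c_stripes * c_piece_mb / 1024
    lost_gb = 2 * used_tb_per_drive * 1024
    return {
        "pieces_per_drive": pieces_per_drive,
        "total_c_stripes": total_c_stripes,
        "p_both": p_both,
        "critical_c_stripes": critical_c_stripes,
        "critical_gb": critical_gb,
        "fraction_of_lost_data": critical_gb / lost_gb,
    }


exposure = double_failure_exposure()
```

With the slide's inputs this gives about 72 critical C-Stripes and roughly 36 GB to rebuild, a little under 2% of the 2 TB that was lost.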

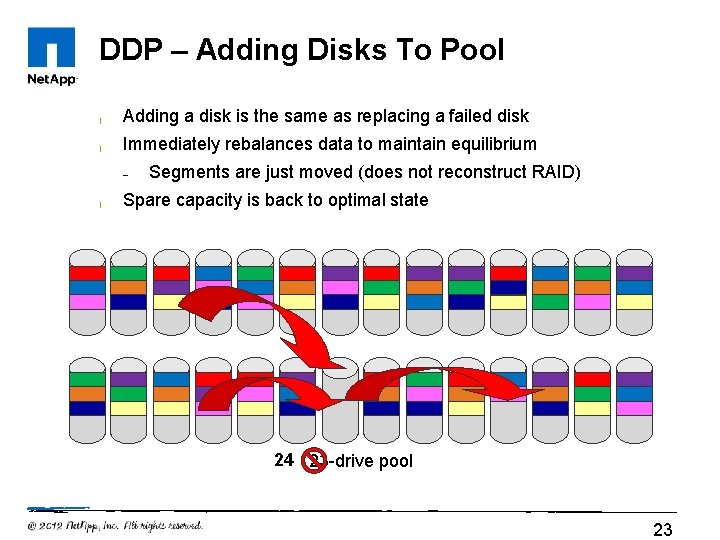

DDP – Adding Disks To Pool

- Adding a disk is the same as replacing a failed disk
- Immediately rebalances data to maintain equilibrium
  - Segments are just moved (does not reconstruct RAID)
- Spare capacity is back to optimal state

Figure: 23-drive pool expanded to 24 drives

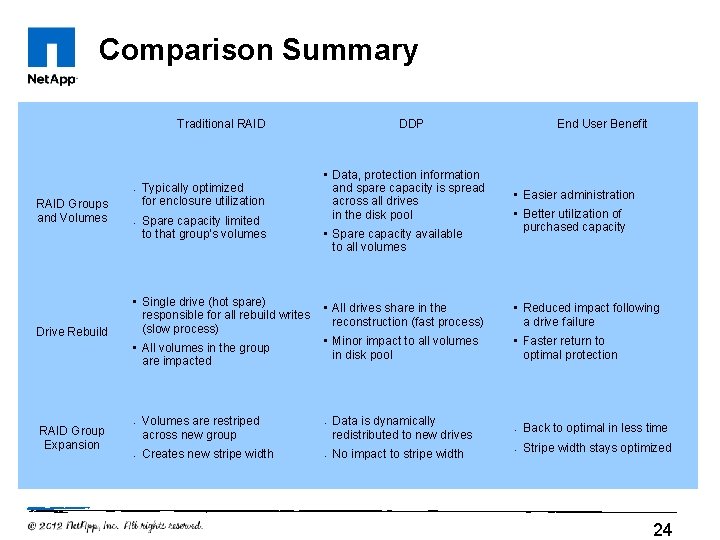

Comparison Summary

| | Traditional RAID | DDP | End User Benefit |
| --- | --- | --- | --- |
| RAID Groups and Volumes | Typically optimized for enclosure utilization; spare capacity limited to that group’s volumes | Data, protection information and spare capacity are spread across all drives in the disk pool; spare capacity available to all volumes | Easier administration; better utilization of purchased capacity |
| Drive Rebuild | Single drive (hot spare) responsible for all rebuild writes (slow process); all volumes in the group are impacted | All drives share in the reconstruction (fast process); minor impact to all volumes in disk pool | Reduced impact following a drive failure; faster return to optimal protection |
| RAID Group Expansion | Volumes are restriped across new group; creates new stripe width | Data is dynamically redistributed to new drives; no impact to stripe width | Back to optimal in less time; stripe width stays optimized |

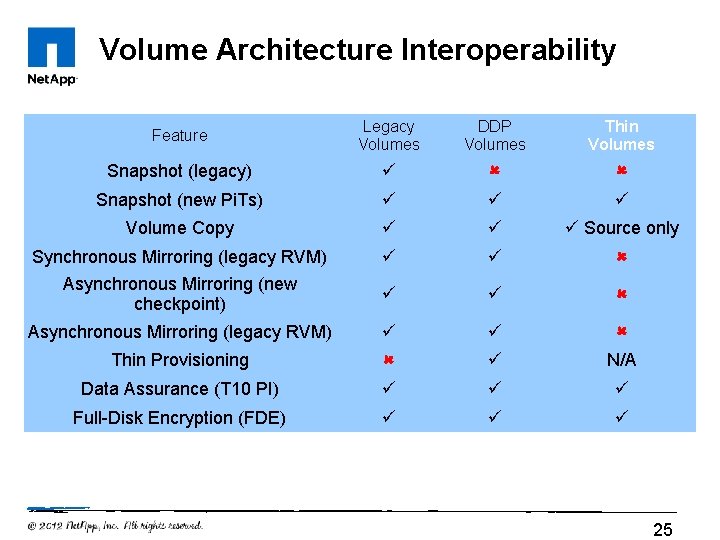

Volume Architecture Interoperability

Features compared across Legacy Volumes, DDP Volumes, and Thin Volumes:

- Snapshot (legacy)
- Snapshot (new PiTs)
- Volume Copy: Source only
- Synchronous Mirroring (legacy RVM)
- Asynchronous Mirroring (new checkpoint)
- Asynchronous Mirroring (legacy RVM)
- Thin Provisioning: N/A
- Data Assurance (T10 PI)
- Full-Disk Encryption (FDE)

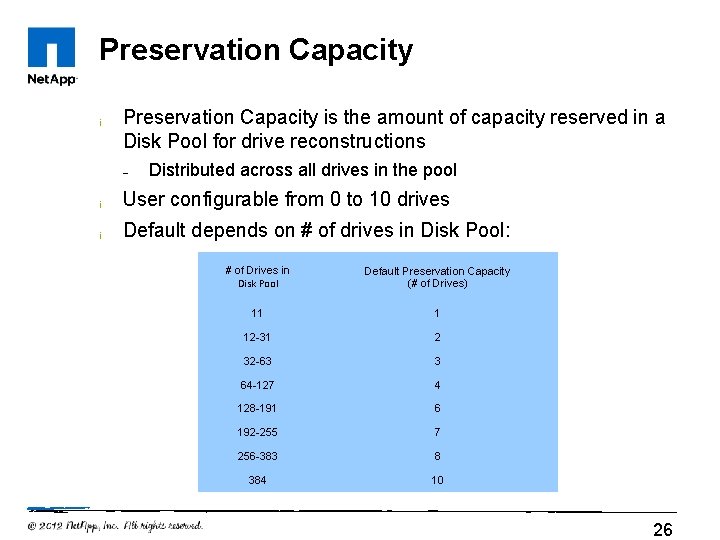

Preservation Capacity

- Preservation Capacity is the amount of capacity reserved in a Disk Pool for drive reconstructions
  - Distributed across all drives in the pool
- User configurable from 0 to 10 drives
- Default depends on the number of drives in the Disk Pool:

| # of Drives in Disk Pool | Default Preservation Capacity (# of Drives) |
| --- | --- |
| 11 | 1 |
| 12-31 | 2 |
| 32-63 | 3 |
| 64-127 | 4 |
| 128-191 | 6 |
| 192-255 | 7 |
| 256-383 | 8 |
| 384 | 10 |
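The default preservation capacity is a simple range lookup. In the sketch below, the function name is hypothetical, and treating the last row as "384 drives or more" is an assumption, since the slide lists only the value 384.

```python
# (lowest drive count in the range, default preservation capacity in drives)
DEFAULT_PRESERVATION = [
    (11, 1),
    (12, 2),
    (32, 3),
    (64, 4),
    (128, 6),
    (192, 7),
    (256, 8),
    (384, 10),  # assumed to apply to 384 drives and above
]


def default_preservation_capacity(num_drives):
    """Return the default preservation capacity for a pool of num_drives drives."""
    if num_drives < DEFAULT_PRESERVATION[0][0]:
        raise ValueError("a disk pool needs at least 11 drives")
    result = None
    for lower_bound, capacity in DEFAULT_PRESERVATION:
        if num_drives >= lower_bound:
            result = capacity  # keep the last range whose lower bound fits
    return result
```

For example, a 24-drive pool defaults to 2 drives' worth of preservation capacity, and a 128-drive pool defaults to 6.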

Database Backup Mechanism

- Configuration Database changes rapidly when an operation such as reconstruction or pool expansion is in progress
  - The current database retrieve/restore mechanism may not be recent enough to restore an accurate image of the volume mapping data
- A new Backup DB will contain a real-time copy of the configuration database
  - The backup copy can be used in the event of a DB corruption or loss
  - User can select which DB to use through Recovery Mode in SANtricity
- Backup Database is stored in cache on each controller
  - Not mirrored across controllers
  - Written to persistent cache in the event of power loss, controller FW/NVSRAM upgrade, or battery failure

Summary: Why DDP?

- Prioritized Reconstruction
- Performance under failure
- Hot-Spot elimination
- Greater protection of data from multiple disk failures
- Adding capacity is simplified
- Reduced service actions


- Slides: 29