DSBS Discussion: Multiple Testing
28 May 2009
Discussion on Multiple Testing
Prepared and presented by Lars Endahl

Outline
• The multiplicity issue
• Questions to the audience
• Regulatory guidance
• Discussion

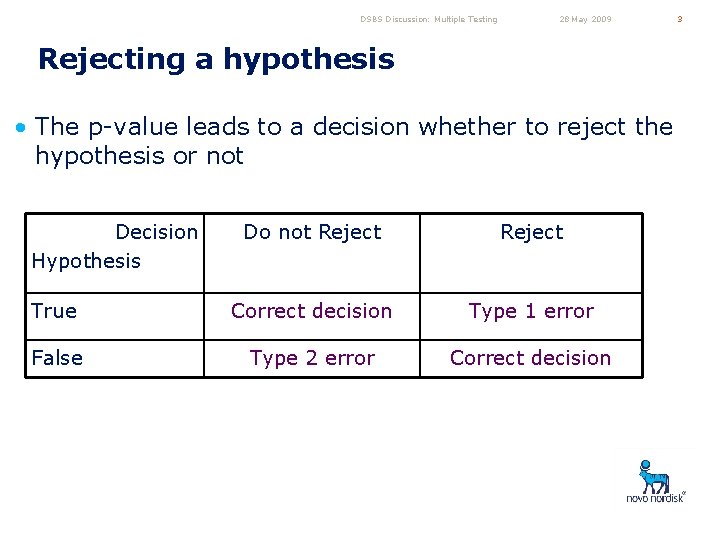

Rejecting a hypothesis
• The p-value leads to a decision on whether or not to reject the hypothesis

| Hypothesis | Decision: do not reject | Decision: reject |
|---|---|---|
| True | Correct decision | Type 1 error |
| False | Type 2 error | Correct decision |
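To make the decision table concrete, here is a minimal Python sketch. It assumes a fixed significance level of 5% and a continuous test statistic, so that p-values are uniformly distributed when the hypothesis is true. The function names `decide` and `outcome` are hypothetical. A small simulation checks that roughly 5% of decisions under a true hypothesis end up as Type 1 errors.

```python
import random


def decide(p_value: float, alpha: float = 0.05) -> str:
    """Reject the hypothesis when the p-value is at or below the significance level."""
    return "Reject" if p_value <= alpha else "Do not reject"


def outcome(hypothesis_true: bool, decision: str) -> str:
    """Map a (truth, decision) pair onto a cell of the decision table."""
    if hypothesis_true:
        return "Type 1 error" if decision == "Reject" else "Correct decision"
    return "Correct decision" if decision == "Reject" else "Type 2 error"


# When the hypothesis is true (and the test statistic is continuous), p-values
# are uniform on (0, 1), so about alpha of all decisions are Type 1 errors.
random.seed(2009)
n_sim = 100_000
n_errors = sum(outcome(True, decide(random.random())) == "Type 1 error" for _ in range(n_sim))
type1_rate = n_errors / n_sim
print(f"Simulated Type 1 error rate: {type1_rate:.3f}")
```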

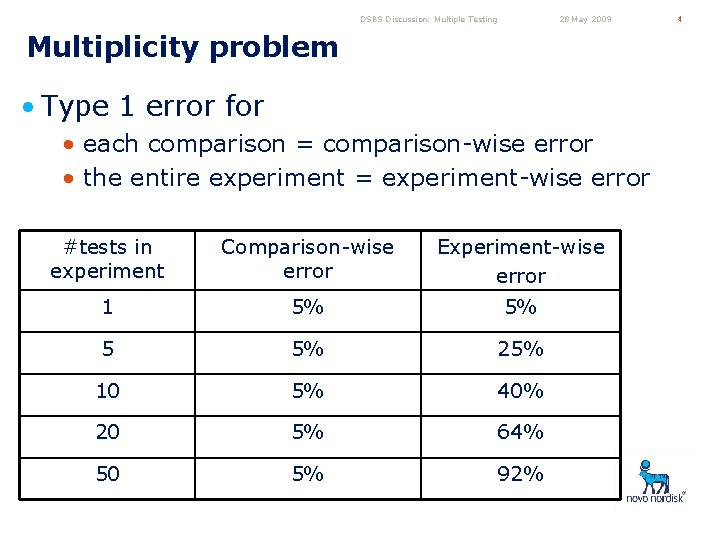

Multiplicity problem
• Type 1 error for
  • each comparison = comparison-wise error
  • the entire experiment = experiment-wise error

| #tests in experiment | Comparison-wise error | Experiment-wise error |
|---|---|---|
| 1 | 5% | 5% |
| 5 | 5% | 23% |
| 10 | 5% | 40% |
| 20 | 5% | 64% |
| 50 | 5% | 92% |

(Experiment-wise error = 1 - (1 - 0.05)^k for k independent tests.)
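The experiment-wise column follows, assuming the tests are independent, from P(at least one Type 1 error) = 1 - (1 - α)^k for k tests each run at level α. A short sketch; the function name is illustrative only:

```python
def experiment_wise_error(n_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one Type 1 error among n independent tests,
    each performed at the comparison-wise level alpha."""
    return 1 - (1 - alpha) ** n_tests


for k in (1, 5, 10, 20, 50):
    print(f"{k:>2} tests: experiment-wise error = {experiment_wise_error(k):.0%}")
```

For five tests the formula gives 23%; the simple upper bound 5 × 5% gives 25%.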

Regulatory Perspective
• Regulatory authorities require the type 1 error frequency to be low
  • they do not want to grant labelling claims that are not a true reflection of the drug's capabilities

Show of Hands
• How many of you have
  • analysed data from a confirmatory trial?
    • except for bioequivalence trials
  • routinely provided p-values as inferential statistics?
  • routinely adjusted the analyses for multiplicity?

Show of Hands
• For those of you who do NOT adjust for multiplicity, what is the reason?
  • I only test one hypothesis per trial
  • I hardly know anything about multiplicity
  • It's not needed to obtain the desired label claims
  • Project management does not want it
  • It's too difficult to achieve significance
  • It prevents me from cherry picking

Show of Hands
• For those of you who do adjust for multiplicity, which endpoints do you adjust?
  • All efficacy and safety endpoints
  • All efficacy endpoints (but not safety endpoints)
  • All safety endpoints (but not efficacy endpoints)
  • Selected efficacy and safety endpoints
  • Selected efficacy endpoints
  • Selected safety endpoints

Show of Hands
• For those of you who do adjust for multiplicity, what is your preferred method?
  • Bonferroni methods (incl. Holm, Hochberg etc.)
  • Closed testing
  • Hierarchical testing (incl. Win-on-All)
  • ANOVA methods (Tukey, Fisher, Duncan etc.)
  • O'Brien & Fleming (and related methods)
  • Controlling the false discovery rate
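Several of the listed approaches reduce to simple formulas on the raw p-values. The sketch below, an illustration rather than a validated implementation, computes Bonferroni, Holm (step-down) and Benjamini-Hochberg adjusted p-values in plain Python. Benjamini-Hochberg is used here as one standard way of controlling the false discovery rate; the p-values are hypothetical, and an endpoint counts as rejected when its adjusted p-value is at most 5%.

```python
def bonferroni_adjust(p_values):
    """Bonferroni: multiply each p-value by the number of tests, capped at 1."""
    m = len(p_values)
    return [min(1.0, m * p) for p in p_values]


def holm_adjust(p_values):
    """Holm step-down adjusted p-values (controls the family-wise error rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The (rank + 1)-th smallest p-value is multiplied by (m - rank);
        # the running maximum keeps the adjusted values monotone.
        running_max = max(running_max, (m - rank) * p_values[i])
        adjusted[i] = min(1.0, running_max)
    return adjusted


def bh_adjust(p_values):
    """Benjamini-Hochberg adjusted p-values (controls the false discovery rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i], reverse=True)  # largest p first
    adjusted = [0.0] * m
    running_min = 1.0
    for k, i in enumerate(order):
        rank = m - k  # 1-based rank of p_values[i] in ascending order
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


p = [0.01, 0.04, 0.03, 0.005]  # hypothetical raw p-values for four endpoints
for name, adjust in [("Bonferroni", bonferroni_adjust), ("Holm", holm_adjust), ("BH", bh_adjust)]:
    adj = adjust(p)
    print(name, [round(a, 3) for a in adj], "rejected at 5%:", [a <= 0.05 for a in adj])
```

With these p-values, Bonferroni and Holm both reject endpoints 1 and 4, while Benjamini-Hochberg rejects all four, reflecting its different (false discovery rate) guarantee.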

Regulatory Guidelines
• ICH E9:
  • when multiplicity is present, the usual frequentist approach to the analysis of clinical trial data may necessitate an adjustment to the type 1 error
  • the method of controlling type 1 error should be given in the protocol

Regulatory Guidelines
• CPMP Points to Consider on Multiplicity Issues
  • Significant effects in secondary variables can be considered for an additional claim only after the primary objective of the clinical trial has been achieved, and if they were part of the confirmatory strategy
  • There are many situations where no multiplicity concern arises, for example, having predefined primary variables and all secondary variables declared supportive
  • In the case of adverse effects, p-values are of very limited value, as a substantial difference will raise concern, …, irrespective of the p-value

Regulatory Guidelines
• FDA Guidance on Diabetes Mellitus (draft)
  • If statistical significance is achieved on the primary endpoint, secondary assessments of efficacy can be considered.
  • Type 1 error should be controlled across all clinically relevant secondary efficacy endpoints that may be intended for product labelling, to provide statistical support for their inclusion in the label.

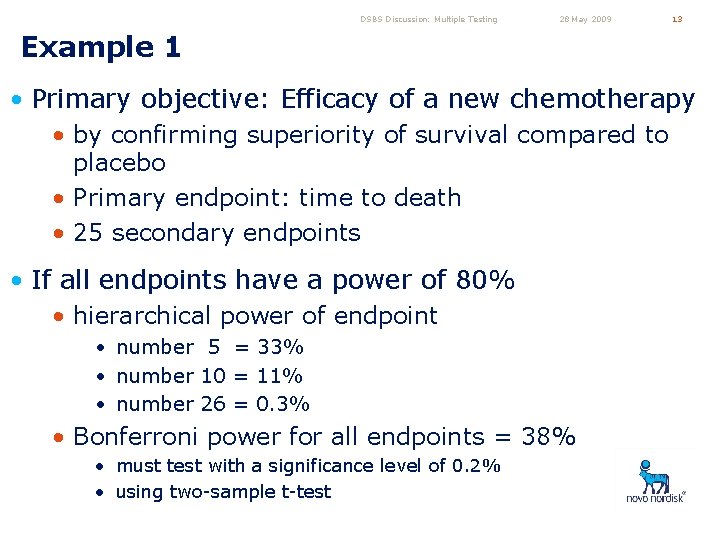

Example 1
• Primary objective: efficacy of a new chemotherapy
  • by confirming superiority in survival compared to placebo
• Primary endpoint: time to death
• 25 secondary endpoints
• If all endpoints have a power of 80% (and are independent)
  • hierarchical power of endpoint
    • number 5 = 33%
    • number 10 = 11%
    • number 26 = 0.3%
  • Bonferroni power for each endpoint = 38%
    • must test at a significance level of 0.2% (5%/26)
    • using a two-sample t-test
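The figures in Example 1 can be reproduced approximately under two assumptions: the endpoints are independent (so the hierarchical power of endpoint k is 0.8^k), and the two-sample t-test is replaced by its normal approximation, with a sample size chosen for 80% power at a two-sided 5% level. The helper names are hypothetical; this is a sketch, not a sample-size tool.

```python
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def hierarchical_power(k: int, power: float = 0.80) -> float:
    """Probability of reaching and rejecting the k-th hypothesis in a fixed
    sequence, assuming k independent endpoints that each have the given power."""
    return power ** k


def bonferroni_power(n_endpoints: int, alpha: float = 0.05, power: float = 0.80) -> float:
    """Per-endpoint power when each two-sided test is run at alpha / n_endpoints,
    using a normal approximation to the two-sample t-test and a sample size
    originally chosen to give `power` at the unadjusted two-sided level alpha."""
    # Standardised effect times sqrt(sample size) implied by the original design
    noncentrality = Z.inv_cdf(1 - alpha / 2) + Z.inv_cdf(power)
    z_crit = Z.inv_cdf(1 - alpha / (2 * n_endpoints))  # Bonferroni critical value
    return Z.cdf(noncentrality - z_crit)  # ignores the negligible opposite tail


for k in (5, 10, 26):
    print(f"Hierarchical power of endpoint {k}: {hierarchical_power(k):.1%}")
print(f"Bonferroni level: {0.05 / 26:.2%}; power per endpoint: {bonferroni_power(26):.0%}")
```

This gives about 33%, 11% and 0.3% for endpoints 5, 10 and 26, and a per-endpoint Bonferroni power of about 38% at a level of 0.05/26 ≈ 0.19%.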

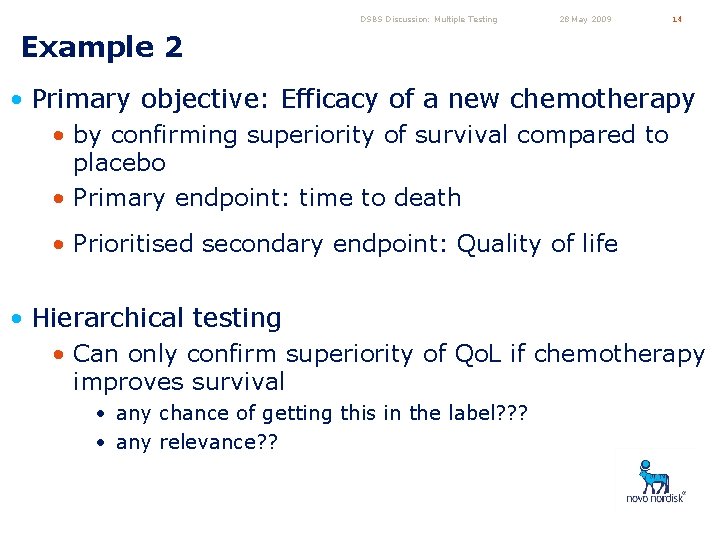

Example 2
• Primary objective: efficacy of a new chemotherapy
  • by confirming superiority in survival compared to placebo
• Primary endpoint: time to death
• Prioritised secondary endpoint: quality of life (QoL)
• Hierarchical testing
  • can only confirm superiority in QoL if the chemotherapy improves survival
  • any chance of getting this into the label?
  • any relevance?
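Hierarchical (fixed-sequence) testing, as used in Example 2, can be sketched as follows: hypotheses are tested in a pre-specified order, each at the full 5% level, and testing stops at the first hypothesis that is not rejected. The function name and the p-values are hypothetical.

```python
def fixed_sequence_test(ordered, alpha=0.05):
    """Test (name, p-value) pairs in the given order at level alpha each.
    Once a hypothesis is not rejected, all later ones are left untested."""
    results = {}
    gate_open = True
    for name, p in ordered:
        if not gate_open:
            results[name] = "not tested"
        elif p <= alpha:
            results[name] = "rejected"
        else:
            results[name] = "not rejected"
            gate_open = False  # the sequence stops here
    return results


# Hypothetical p-values: survival first, then quality of life
print(fixed_sequence_test([("survival", 0.12), ("quality of life", 0.001)]))
print(fixed_sequence_test([("survival", 0.01), ("quality of life", 0.03)]))
```

With a non-significant survival result, the quality-of-life hypothesis is never formally tested, however small its p-value.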

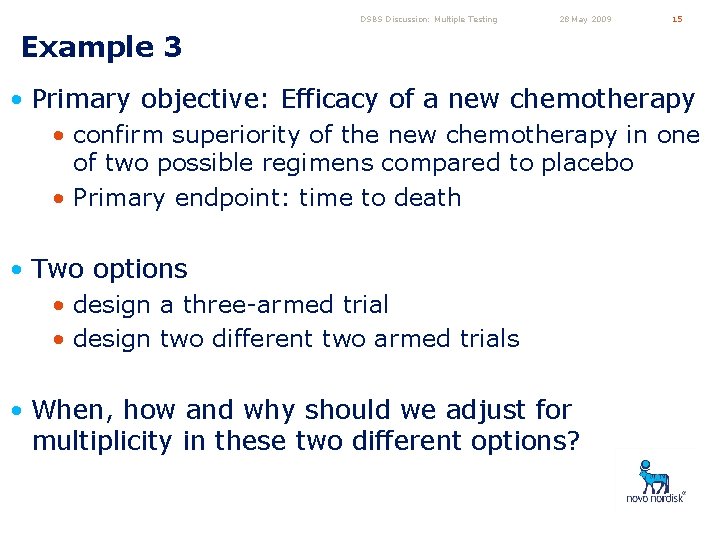

Example 3
• Primary objective: efficacy of a new chemotherapy
  • confirm superiority of the new chemotherapy, in one of two possible regimens, compared to placebo
• Primary endpoint: time to death
• Two options
  • design a three-armed trial
  • design two different two-armed trials
• When, how and why should we adjust for multiplicity in these two options?

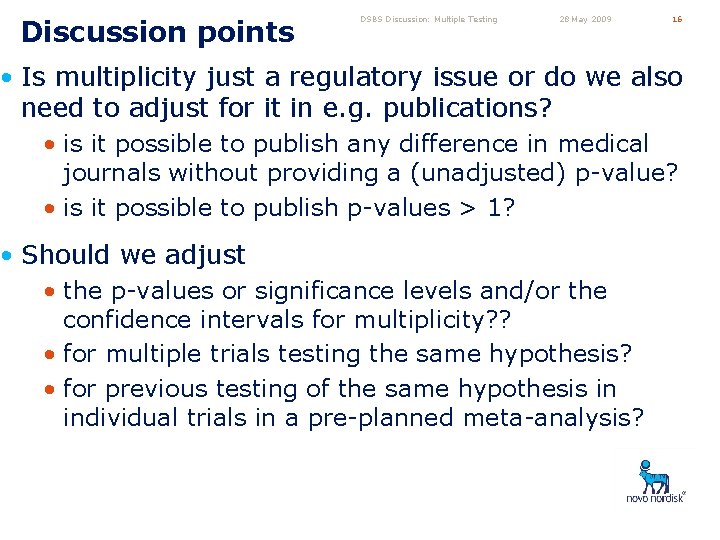

Discussion points
• Is multiplicity just a regulatory issue, or do we also need to adjust for it in, e.g., publications?
  • is it possible to publish any difference in medical journals without providing an (unadjusted) p-value?
  • is it possible to publish p-values > 1?
• Should we adjust
  • the p-values or significance levels and/or the confidence intervals for multiplicity?
  • for multiple trials testing the same hypothesis?
  • for previous testing of the same hypothesis in individual trials in a pre-planned meta-analysis?
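The questions on adjusted p-values and confidence intervals can be illustrated with a Bonferroni sketch, assuming a normal (z) approximation and hypothetical values for the estimate, standard error and number of comparisons m. Multiplying a p-value by m can give values above 1, which is why adjusted p-values are conventionally capped at 1; the matching adjusted interval uses level 1 - α/m.

```python
from statistics import NormalDist


def bonferroni_report(estimate, se, m, alpha=0.05):
    """Unadjusted and Bonferroni-adjusted two-sided p-value and confidence
    interval for one of m comparisons, using a normal (z) approximation."""
    z = NormalDist()
    p_raw = 2 * (1 - z.cdf(abs(estimate) / se))
    p_adj = min(1.0, m * p_raw)  # m * p_raw alone may exceed 1, hence the cap
    half_raw = z.inv_cdf(1 - alpha / 2) * se
    half_adj = z.inv_cdf(1 - alpha / (2 * m)) * se  # level 1 - alpha/m per interval
    return {
        "p_raw": p_raw,
        "p_adj": p_adj,
        "ci_raw": (estimate - half_raw, estimate + half_raw),
        "ci_adj": (estimate - half_adj, estimate + half_adj),
    }


# Hypothetical estimate and standard error for one of five comparisons
print(bonferroni_report(estimate=1.2, se=0.5, m=5))
```

Here the unadjusted interval excludes 0 (p ≈ 0.016), whereas the adjusted interval includes 0 and the adjusted p-value is about 0.08. Because both adjustments use α/m, the adjusted p-value is at most 5% exactly when the adjusted interval excludes 0.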

More discussion points
• What should we do when testing for superiority in a non-inferiority trial, and we wish to continue to the superiority test if non-inferiority is confirmed for the primary endpoint?
  • avoid using hierarchical approaches?
  • test all relevant intersections?
  • regard the superiority test as part of the non-inferiority comparison?
• Should we report p-values for hypotheses that have not been tested?
  • this is the default in, e.g., SAS
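One reading of the option of regarding the superiority test as part of the non-inferiority comparison is to judge both from a single two-sided confidence interval for the treatment difference: non-inferiority if the lower limit lies above minus the margin, superiority if it also lies above 0. The sketch assumes a normal approximation, a positive difference favouring the new treatment, and hypothetical values for the margin and estimates; it illustrates the question rather than settling it.

```python
from statistics import NormalDist


def ni_then_superiority(diff, se, margin, alpha=0.05):
    """Classify a trial from one two-sided (1 - alpha) CI for the treatment
    difference (positive = new treatment better), normal approximation.
    Non-inferiority: lower limit above -margin. Superiority: lower limit above 0,
    checked only once non-inferiority holds."""
    z = NormalDist().inv_cdf(1 - alpha / 2)
    lower = diff - z * se
    non_inferior = lower > -margin
    superior = non_inferior and lower > 0
    return {"lower_limit": lower, "non_inferior": non_inferior, "superior": superior}


# Hypothetical results with a non-inferiority margin of 0.3
for diff in (0.5, 0.2, -0.2):
    print(diff, ni_then_superiority(diff=diff, se=0.2, margin=0.3))
```

Since a lower limit above 0 is also above minus the margin, superiority can only be concluded in trials where non-inferiority has already been shown.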

