
Dr Roger Bennett, R.A.Bennett@Reading.ac.uk, Rm. 23, Xtn. 8559, Lecture 13

Entropy changes – the universe • Imagine heating a beaker of water by putting it in contact with a reservoir whose temperature remains fixed, with the system held at constant pressure. • In lecture 11 we calculated the entropy change of the water under different process conditions. • However, because entropy is a function of state, the change in entropy is set by the end points, not by the process. • What about the entropy change of the reservoir itself?

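Entropy changes • The reservoir donates heat $Q = C_p(T_f - T_i)$ at its own temperature, $T_f$, so its entropy changes by $\Delta S_{res} = -C_p(T_f - T_i)/T_f$. • The water's entropy change, taking $C_p$ to be independent of temperature, is $\Delta S_{water} = C_p\ln(T_f/T_i)$. • The total entropy change of the universe (system + reservoir) is therefore $\Delta S = C_p\left[\ln(T_f/T_i) - (T_f - T_i)/T_f\right]$. • Plugging in $T_i = 273$ K and $T_f = 373$ K gives $\Delta S = C_p(0.312 - 0.268) \approx 0.044\,C_p$.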
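The single-reservoir calculation can be checked numerically. This is a minimal sketch assuming a temperature-independent heat capacity; the function name `universe_entropy_one_reservoir` is hypothetical, and setting `cp = 1.0` J/K makes every result read directly as a multiple of $C_p$.

```python
import math


def universe_entropy_one_reservoir(cp, t_i, t_f):
    """Entropy changes (J/K) when water of heat capacity cp (J/K, assumed
    temperature independent) is heated from t_i to t_f by one reservoir at t_f."""
    ds_water = cp * math.log(t_f / t_i)   # from a reversible path between the same end states
    q = cp * (t_f - t_i)                  # heat donated by the reservoir
    ds_reservoir = -q / t_f               # reservoir gives up q at fixed temperature t_f
    return ds_water, ds_reservoir, ds_water + ds_reservoir


# cp = 1 J/K, so each result reads directly as a multiple of Cp
ds_w, ds_r, ds_u = universe_entropy_one_reservoir(1.0, 273.0, 373.0)
print(f"water {ds_w:.4f}  reservoir {ds_r:.4f}  universe {ds_u:.4f}")
```

The water gains more entropy than the reservoir loses, so the total is positive.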

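Entropy changes • The entropy of the universe increases, as we expect. • What happens if we employ an intermediate reservoir to bring the water to 50 °C and then the original reservoir to take it to 100 °C? • Plugging in $T_i = 273$ K, $T_1 = 323$ K and $T_f = 373$ K gives $\Delta S = C_p\left[\ln(T_f/T_i) - (T_1 - T_i)/T_1 - (T_f - T_1)/T_f\right] \approx C_p(0.312 - 0.155 - 0.134) \approx 0.023\,C_p$.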

Entropy changes • The entropy of the universe still increases, but using two reservoirs reduces the entropy change. • Why? • What happens if we have an infinite number of reservoirs that track the temperature of the water? • This was the process we used in lecture 11 to calculate the reversible change in entropy. • The total change in the entropy of the universe for this reversible process is zero.
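The trend from one reservoir, to two, to infinitely many can be sketched numerically. The sketch assumes constant $C_p$ and reservoirs at equally spaced temperatures between $T_i$ and $T_f$ (the spacing is our choice, not the slide's); the name `universe_entropy_n_reservoirs` is hypothetical. With `n = 2` it reproduces the 50 °C intermediate-reservoir case.

```python
import math


def universe_entropy_n_reservoirs(cp, t_i, t_f, n):
    """Total entropy change (J/K) when water of constant heat capacity cp is
    heated from t_i to t_f by n reservoirs at equally spaced temperatures,
    the water reaching each reservoir's temperature before moving to the next."""
    ds_water = cp * math.log(t_f / t_i)      # fixed by the end points alone
    step = (t_f - t_i) / n
    ds_reservoirs = 0.0
    for k in range(1, n + 1):
        t_res = t_i + k * step               # temperature of the k-th reservoir
        ds_reservoirs -= cp * step / t_res   # it donates cp*step at fixed t_res
    return ds_water + ds_reservoirs


for n in (1, 2, 10, 100, 10_000):
    print(n, universe_entropy_n_reservoirs(1.0, 273.0, 373.0, n))
```

The reservoir sum is a Riemann sum for $\int C_p\,dT/T$, so the total stays positive but shrinks roughly as $1/n$, approaching the reversible result of zero.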

Enthalpy • Many experiments are carried out at constant pressure, so it is worthwhile developing a method for handling them. • Changes in volume at constant pressure mean work is done on or by the system: $dU = \text{đ}Q_R - P\,dV$. • The heat added to the system at constant pressure can therefore be written $\text{đ}Q_R = dU + P\,dV = d(U + PV)$, which holds because the process is at constant pressure, so $dP = 0$. • $H = U + PV$ is called the enthalpy: $dH = d(U + PV) = dU + V\,dP + P\,dV = \text{đ}Q_R + V\,dP$. • This is a general expression; the enthalpy is a state function with units of energy.
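As an illustration of $\text{đ}Q_R = dU + P\,dV = dH$ at constant pressure, the sketch below heats an ideal gas at fixed $P$. The ideal gas, the monatomic value $C_V = 3R/2$ and the function name `heat_at_constant_pressure` are assumptions chosen for the example, not part of the slide.

```python
R = 8.314  # molar gas constant, J/(mol K)


def heat_at_constant_pressure(n_mol, cv_molar, d_t):
    """Ideal gas heated by d_t at constant pressure: return (dU, P dV, dH) in J."""
    d_u = n_mol * cv_molar * d_t   # U depends only on T for an ideal gas
    p_dv = n_mol * R * d_t         # PV = nRT at fixed P gives P dV = nR dT
    d_h = d_u + p_dv               # dH = dU + P dV + V dP, with dP = 0
    return d_u, p_dv, d_h


d_u, p_dv, d_h = heat_at_constant_pressure(1.0, 1.5 * R, 10.0)  # monatomic gas assumed
print(d_u, p_dv, d_h)  # d_h is the heat supplied at constant pressure
```

The heat supplied exceeds $\Delta U$ by the work $P\,\Delta V$ done by the expanding gas, giving $\Delta H = \tfrac{5}{2}nR\,\Delta T$ for this gas.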

Enthalpy • Changes in volume at constant pressure occur in changes of phase: boiling, melting or changing crystal structure. • E.g. 1 mole of CaCO$_3$ could change crystal structure at 1 bar ($10^5$ Pa) with an increase in internal energy of 210 J and a density change from 2710 to 2930 kg m$^{-3}$. • With a molar mass of about 0.1 kg, the molar volume falls from about $37 \times 10^{-6}$ to $34 \times 10^{-6}$ m$^3$, so the change in enthalpy is $\Delta H = \Delta U + P\,\Delta V = 210 + 10^5(34 - 37)\times 10^{-6} = 209.7$ J. • $\Delta H$ is very close to $\Delta U$ because the $P\,\Delta V$ term is small for solids at low pressure. This isn't true at high pressure or for gases.
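The CaCO$_3$ arithmetic can be reproduced by converting densities to molar volumes. This sketch assumes a molar mass of 0.10009 kg/mol for CaCO$_3$; the constant `M_CACO3` and the function `enthalpy_change` are hypothetical names.

```python
M_CACO3 = 0.10009  # assumed molar mass of CaCO3, kg/mol


def enthalpy_change(d_u, pressure, rho_initial, rho_final, molar_mass=M_CACO3):
    """dH = dU + P dV for one mole at constant pressure; densities in kg/m^3."""
    v_i = molar_mass / rho_initial     # molar volume before, m^3
    v_f = molar_mass / rho_final       # molar volume after, m^3
    return d_u + pressure * (v_f - v_i), v_i, v_f


dH, v_i, v_f = enthalpy_change(210.0, 1e5, 2710.0, 2930.0)
print(f"V_i = {v_i * 1e6:.1f} cm^3, V_f = {v_f * 1e6:.1f} cm^3, dH = {dH:.1f} J")
```

The denser phase has the smaller molar volume, so $P\,\Delta V$ is negative and only about $-0.3$ J.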

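Measuring Entropy and Latent Heats • Consider heat being added reversibly to a system that is undergoing a phase transition. • At the transition temperature heat flows but the temperature remains constant. The heat that flows at constant pressure, $\Delta H$, is the latent heat: positive values are endothermic, negative values exothermic. • Because the temperature $T_t$ stays constant during the transition, the entropy change is $\Delta S = \Delta H / T_t$.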
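A short sketch of $\Delta S = \Delta H / T_t$ for a reversible transition. The enthalpy of fusion of ice, about 6.01 kJ/mol at 273.15 K, is an assumed textbook value used only for illustration; `transition_entropy` is a hypothetical name.

```python
def transition_entropy(latent_heat, t_transition):
    """Entropy change (J/K) for a reversible phase transition at constant T:
    dS = dH / T_t. A negative (exothermic) dH gives a negative dS."""
    return latent_heat / t_transition


# assumed value: enthalpy of fusion of ice ~6010 J/mol at 273.15 K
ds_melt = transition_entropy(6010.0, 273.15)
ds_freeze = transition_entropy(-6010.0, 273.15)
print(f"melting {ds_melt:.1f} J/K, freezing {ds_freeze:.1f} J/K per mole")
```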

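Measuring Entropy • To measure the entropy, assume the lowest temperature we can attain is $T_0$ and measure $C_p$ as a function of $T$ from $T_0$ up to $T_f$. • Then $S(T_f) = S(T_0) + \int_{T_0}^{T_f} \frac{C_p(T)}{T}\,dT$, plus $\Delta H / T_t$ for any phase transition in this range.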
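In practice $C_p(T)$ is known at discrete temperatures, so the integral is evaluated numerically. This is a sketch using the trapezoidal rule; the function `entropy_from_cp` and the constant test heat capacity of 25 J/K are hypothetical, chosen so the result can be checked against the closed form $C_p\ln(T_f/T_0)$.

```python
import math


def entropy_from_cp(temps, cps, s_t0=0.0):
    """Integrate dS = Cp dT / T with the trapezoidal rule.
    temps: increasing temperatures (K) starting at T0; cps: Cp at each (J/K).
    Returns S at each temperature, relative to S(T0) = s_t0."""
    s = [s_t0]
    for i in range(1, len(temps)):
        t0, t1 = temps[i - 1], temps[i]
        f0, f1 = cps[i - 1] / t0, cps[i] / t1   # integrand Cp/T at each end
        s.append(s[-1] + 0.5 * (f0 + f1) * (t1 - t0))
    return s


temps = [10.0 + 0.1 * i for i in range(2901)]   # 10 K to 300 K
cps = [25.0] * len(temps)                       # hypothetical constant Cp, J/K
s = entropy_from_cp(temps, cps)
print(s[-1], 25.0 * math.log(temps[-1] / temps[0]))
```

A latent-heat step $\Delta H / T_t$ would be added to the running total at each transition temperature.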

Measuring Entropy • $C_p(T)$ can typically be measured by calorimetry, but not at absolute zero because we cannot get there. • Most non-metallic solids show $C_p(T) = aT^3$ at low temperature, so we can extrapolate to find $S(0)$: $S(T_0) - S(0) = \int_0^{T_0} aT^2\,dT = aT_0^3/3 = C_p(T_0)/3$. • The values obtained this way tend to converge on the same $S(T=0)$, so we can fix $S(T=0)$ by definition.
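The $T^3$ extrapolation gives a simple correction: the entropy between 0 and $T_0$ is one third of the heat capacity measured at $T_0$. The sketch below checks this against a brute-force midpoint integral; the coefficient `a`, the temperature `t0` and the function name `entropy_below_t0` are hypothetical.

```python
def entropy_below_t0(cp_at_t0):
    """S(T0) - S(0) assuming Cp = a T^3 all the way down to T = 0:
    integral of (a T^3 / T) dT from 0 to T0 = a T0^3 / 3 = Cp(T0) / 3."""
    return cp_at_t0 / 3.0


a = 2.0e-4            # hypothetical T^3 coefficient, J/K^4
t0 = 15.0             # hypothetical lowest measured temperature, K
n = 10_000
h = t0 / n
# midpoint-rule integral of Cp/T = a T^2 from 0 to T0, as an independent check
numeric = sum(a * ((i + 0.5) * h) ** 2 * h for i in range(n))
print(numeric, entropy_below_t0(a * t0 ** 3))
```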

Third Law of Thermodynamics • If the entropy of every element in its stable state at T=0 is taken to be zero, then every substance has a positive entropy which at T=0 may become zero, and does become zero for all perfectly ordered states of condensed matter. • Such a definition makes the entropy proportional to the size (number of elements) of the system, i.e. entropy is extensive. • By defining S(0) we can find entropy by experiment and define new state functions such as the Helmholtz free energy $F = U - TS$. • This is central to statistical mechanics, which we will develop in detail.

Dr Roger Bennett, R.A.Bennett@Reading.ac.uk, Rm. 23, Xtn. 8559, Lecture 14


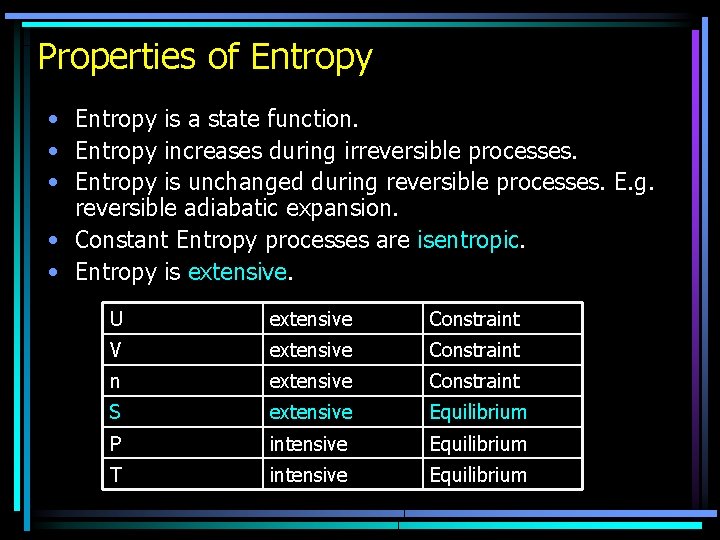

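Properties of Entropy • Entropy is a state function. • The total entropy of a system and its surroundings increases during irreversible processes. • It is unchanged during reversible processes; e.g. in a reversible adiabatic expansion the entropy of the system itself does not change. • Constant-entropy processes are called isentropic. • Entropy is extensive.

| Quantity | Extensive / intensive | Role |
|---|---|---|
| U | extensive | Constraint |
| V | extensive | Constraint |
| n | extensive | Constraint |
| S | extensive | Equilibrium |
| P | intensive | Equilibrium |
| T | intensive | Equilibrium |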

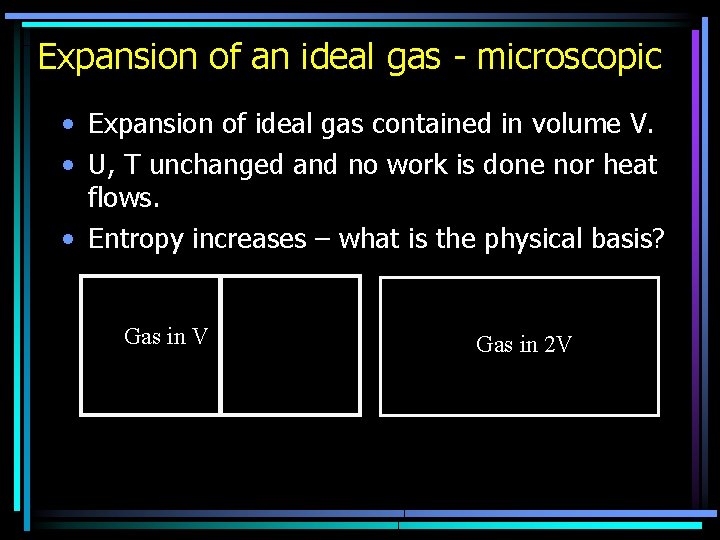

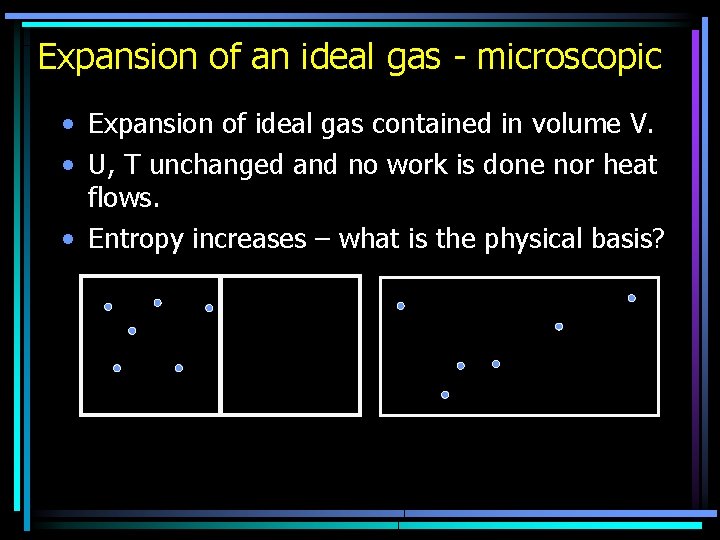

Expansion of an ideal gas - microscopic • Free expansion of an ideal gas initially contained in volume V. • U and T are unchanged; no work is done and no heat flows. • Entropy increases: what is the physical basis?

[Figure: gas in volume V; gas in volume 2V]

Free expansion • No temperature change means no change in kinetic energy distribution. • The only physical difference is that the atoms have more space in which to move. • We may imagine that there are more ways in which the atoms may be arranged in the larger volume. • Statistical mechanics takes this viewpoint and analyses how many different states are possible that give rise to the same macroscopic properties.

Statistical View • The constraints on the system (U, V and n) define the macroscopic state of the system (macrostate). • We need to know how many microscopic states (microstates, or quantum states) satisfy the macrostate. • A microstate of a system is one for which everything that can in principle be known is known. • The number of microstates that give rise to a macrostate is called the thermodynamic probability of that macrostate (alternatively the statistical weight, W). • The macrostate with the largest thermodynamic probability dominates. • E.g. think about rolling two dice: how many ways are there of rolling 7, 2 or 12? • The essential assumption of statistical mechanics is that each microstate is equally likely.
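The two-dice question can be answered by direct counting. In this sketch each ordered pair of faces is treated as a microstate and the total as the macrostate, so the count for each total is its statistical weight.

```python
from collections import Counter
from itertools import product

# Each ordered pair (die1, die2) is a microstate; the total is the macrostate.
ways = Counter(a + b for a, b in product(range(1, 7), repeat=2))
for total in (7, 2, 12):
    print(total, ways[total], ways[total] / 36)   # weight and probability
```

A 7 can be made in 6 ways but a 2 or a 12 in only 1 way each, so with equally likely microstates the total of 7 dominates.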

Statistical View • Boltzmann's hypothesis: • The entropy is a function of the statistical weight or thermodynamic probability: $S = \phi(W)$. • If we have two systems A and B with entropies $S_A$ and $S_B$ respectively, then we expect the total entropy of the two systems to be $S_{AB} = S_A + S_B$ (extensive). • Think about the probabilities: each microstate of A can be combined with each microstate of B, so • $W_{AB} = W_A W_B$ • So $S_{AB} = \phi(W_A) + \phi(W_B) = \phi(W_A W_B)$.

Statistical View • Boltzmann's hypothesis: $S_{AB} = \phi(W_A) + \phi(W_B) = \phi(W_A W_B)$. • The only (continuous) functions that behave like this are logarithms: $S = k\ln W$, the Boltzmann relation, where $k$ is Boltzmann's constant. • The microscopic viewpoint thus interprets the increase in entropy of an isolated system as a consequence of the natural tendency of the system to move from a less probable to a more probable state.
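A quick numerical check of the additivity argument: with $S = k\ln W$, the entropy of the combined system equals the sum of the parts, whereas a non-logarithmic candidate such as $\sqrt{W}$ does not. The weights 6 and 36 are illustrative only, and `boltzmann_entropy` is a hypothetical name.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)


def boltzmann_entropy(w):
    """S = k ln W for a macrostate with statistical weight W."""
    return K_B * math.log(w)


w_a, w_b = 6, 36                                   # illustrative statistical weights
s_sum = boltzmann_entropy(w_a) + boltzmann_entropy(w_b)
s_joint = boltzmann_entropy(w_a * w_b)             # W_AB = W_A * W_B
print(math.isclose(s_sum, s_joint))                # True: the logarithm is additive

# a non-logarithmic candidate, phi(W) = sqrt(W), fails the same test
print(math.isclose(math.sqrt(w_a) + math.sqrt(w_b), math.sqrt(w_a * w_b)))  # False
```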

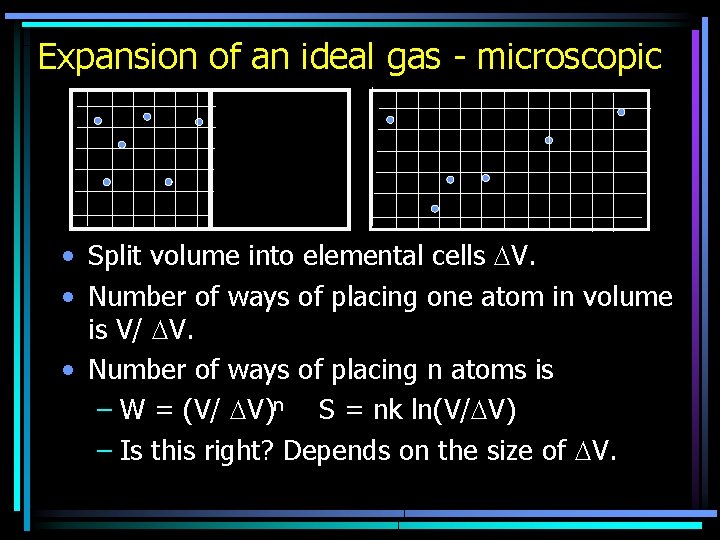


Expansion of an ideal gas - microscopic • Split the volume into elemental cells of volume $\delta V$. • The number of ways of placing one atom in the volume is $V/\delta V$. • The number of ways of placing $N$ atoms is $W = (V/\delta V)^N$, so $S = Nk\ln(V/\delta V)$. • Is this right? It depends on the size of $\delta V$.
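The counting $W = (V/\delta V)^N$ can be confirmed by brute force for a handful of atoms. This sketch assumes, as the slide's counting does, that the atoms are independent and labelled and that any number may share a cell; `count_arrangements` is a hypothetical name.

```python
from itertools import product


def count_arrangements(n_cells, n_atoms):
    """Brute-force count of ways to put n_atoms (labelled, any number per
    cell) into n_cells cells, i.e. the statistical weight W."""
    return sum(1 for _ in product(range(n_cells), repeat=n_atoms))


for cells in (4, 8):                     # V/dV = 4, then the volume doubled
    print(cells, count_arrangements(cells, 3), cells ** 3)
```

Doubling the number of cells multiplies $W$ by $2^N$, which is the origin of the $Nk\ln 2$ entropy increase.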

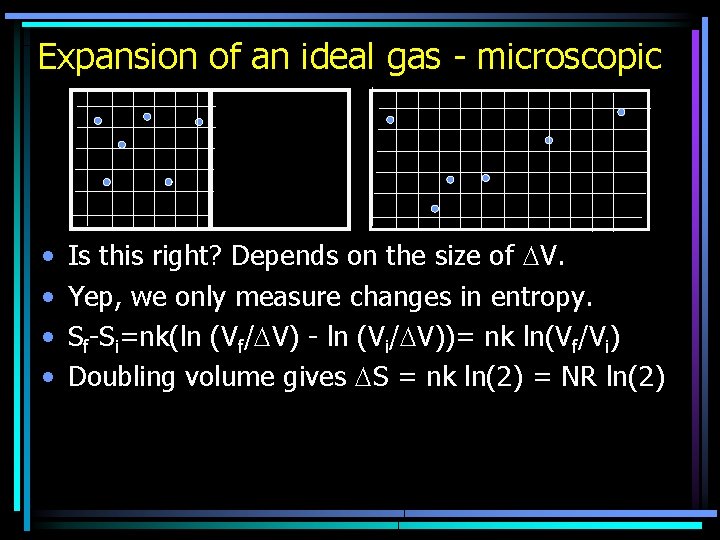

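Expansion of an ideal gas - microscopic • Is this right? It depends on the size of $\delta V$. • Yes, because we only ever measure changes in entropy, and $\delta V$ cancels: $S_f - S_i = Nk\left[\ln(V_f/\delta V) - \ln(V_i/\delta V)\right] = Nk\ln(V_f/V_i)$. • Doubling the volume gives $\Delta S = Nk\ln 2 = nR\ln 2$, where $N$ is the number of atoms and $n$ the number of moles.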
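The cancellation of $\delta V$ can be seen numerically: the absolute entropy shifts with the arbitrary cell size, but the change on doubling the volume does not. The volumes and cell sizes below are hypothetical, and `entropy_lattice` is a hypothetical name; $R$ is built from the exact SI values of $k$ and Avogadro's constant.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
N_A = 6.02214076e23     # Avogadro constant, 1/mol
R = K_B * N_A           # molar gas constant, about 8.314 J/(mol K)


def entropy_lattice(n_atoms, volume, cell):
    """S = N k ln(V / dV) from W = (V/dV)^N; depends on the arbitrary cell size."""
    return n_atoms * K_B * math.log(volume / cell)


v_i, v_f = 1.0e-3, 2.0e-3        # hypothetical volumes, m^3: the gas doubles its volume
for cell in (1e-30, 1e-27):      # two arbitrary cell sizes
    s_i = entropy_lattice(N_A, v_i, cell)
    ds = entropy_lattice(N_A, v_f, cell) - s_i
    print(f"cell {cell:g} m^3: S_i = {s_i:.1f} J/K, dS = {ds:.4f} J/K")
print(f"R ln 2 = {R * math.log(2):.4f} J/K")
```

For one mole of atoms the change is $R\ln 2 \approx 5.76$ J/K whichever cell size is chosen.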
