Doing Basic Program Evaluation in Your School
Camille Whitney, Head of Research, 1/24/17

Contents of this presentation
1. Defining program evaluation
2. How to decide whether to participate in program evaluation or academic research
3. How to conduct program evaluation

What is program evaluation?
Definition: Systematic gathering and analysis of data that allows you to make judgments about the value or merit of an intervention.
Purposes:
1. Improve the program and related practices/policies
2. Promote and demonstrate accountability

What is program evaluation? (2)
In addition to assessing program effectiveness, program evaluation may also try to answer:
1. How was the program implemented (compared to our plans)?
2. Which aspects of implementation seemed to help or hinder effectiveness?


Conduct program evaluation?
Should you conduct program evaluation? Yes, if you want or need to:
1. Use results to improve the program and related practices/policies
2. Promote and demonstrate accountability
However, you may want to take a more informal approach, especially when your program is just starting out.
Example: Have students and teachers fill out post-program evaluation forms, and conduct interviews or focus groups with key stakeholders such as students and school staff. You can also videotape some of the responses.

Participate in academic research?
The purpose of academic research is to contribute to general knowledge.
Target the study to a "hole" (gap) in the literature.
You generally need:
• A lot of your time for a few years
• A good research partner (a Ph.D. student?)
• A sufficient number of willing participants


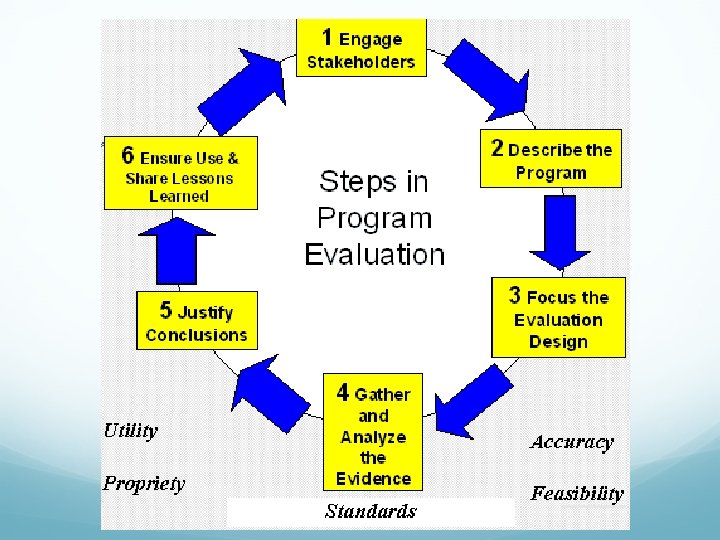

1. Engage Stakeholders
• Conduct a needs assessment → Hear from stakeholders about the biggest problems they would like to see addressed at the school → Problem-solution framework
• Discuss the need for program evaluation and address concerns → Stakeholder input and buy-in
• Form a committee to oversee program evaluation → Inclusive leadership

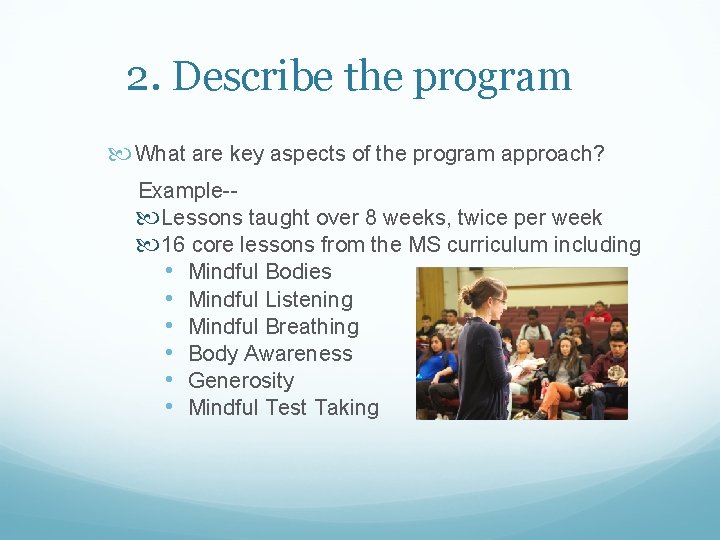

2. Describe the program
What are the key aspects of the program approach?
Example: Lessons taught over 8 weeks, twice per week, covering 16 core lessons from the MS curriculum, including:
• Mindful Bodies
• Mindful Listening
• Mindful Breathing
• Body Awareness
• Generosity
• Mindful Test Taking

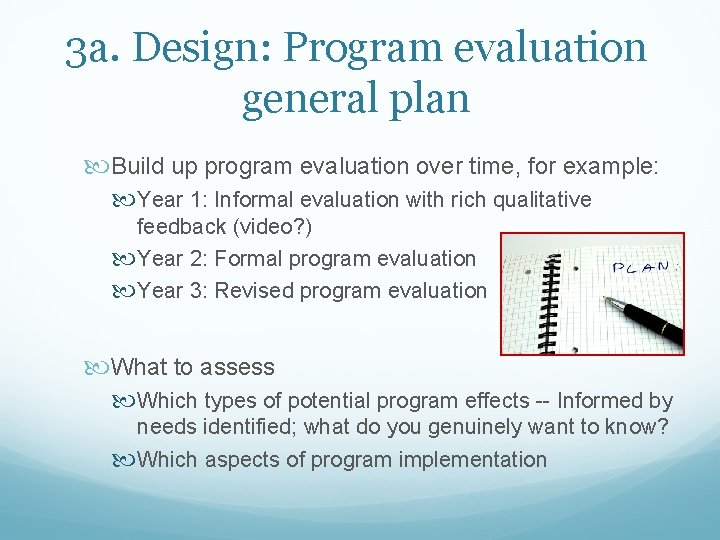

3 a. Design: General plan for program evaluation
Build up program evaluation over time, for example:
• Year 1: Informal evaluation with rich qualitative feedback (video?)
• Year 2: Formal program evaluation
• Year 3: Revised program evaluation
What to assess:
• Which types of potential program effects? Let the needs you identified guide this: what do you genuinely want to know?
• Which aspects of program implementation?

3 b. Design: Procedure (who, what, when, where, how)
• Start this process early: at the end of the previous school year or, at the latest, over the summer
• Inform stakeholders, such as parents, of the procedure
• Ask educators for cooperation and for any specific data collection commitments
• What equipment do you need? What are the expenses?

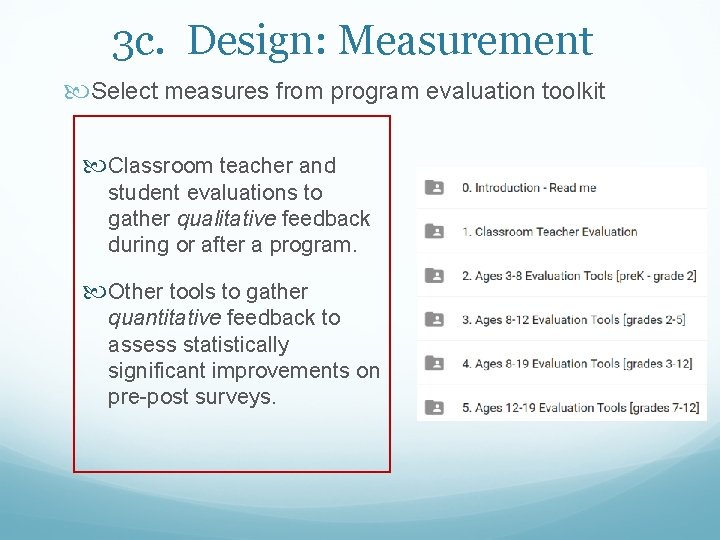

3 c. Design: Measurement
Select measures from the program evaluation toolkit:
• Classroom teacher and student evaluations to gather qualitative feedback during or after a program
• Other tools to gather quantitative feedback, used to test for statistically significant improvements on pre-post surveys

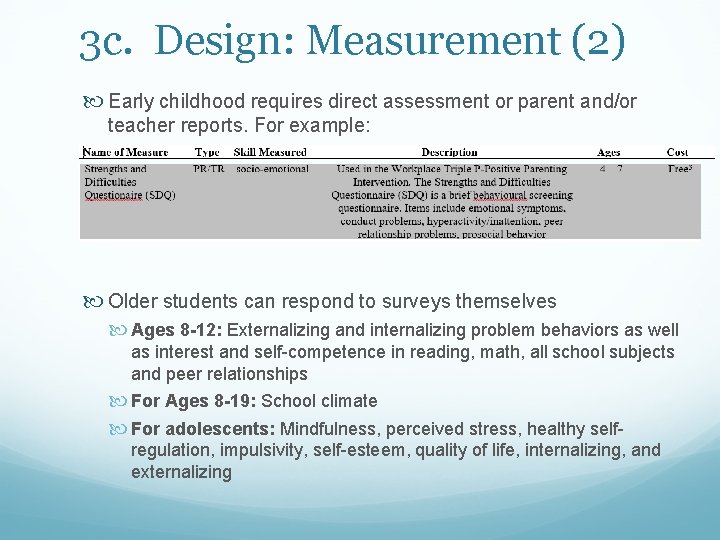

3 c. Design: Measurement (2)
Early childhood requires direct assessment or parent and/or teacher reports.
Older students can respond to surveys themselves. For example:
• Ages 8-12: Externalizing and internalizing problem behaviors, as well as interest and self-competence in reading, math, all school subjects, and peer relationships
• Ages 8-19: School climate
• Adolescents: Mindfulness, perceived stress, healthy self-regulation, impulsivity, self-esteem, quality of life, and internalizing and externalizing problems

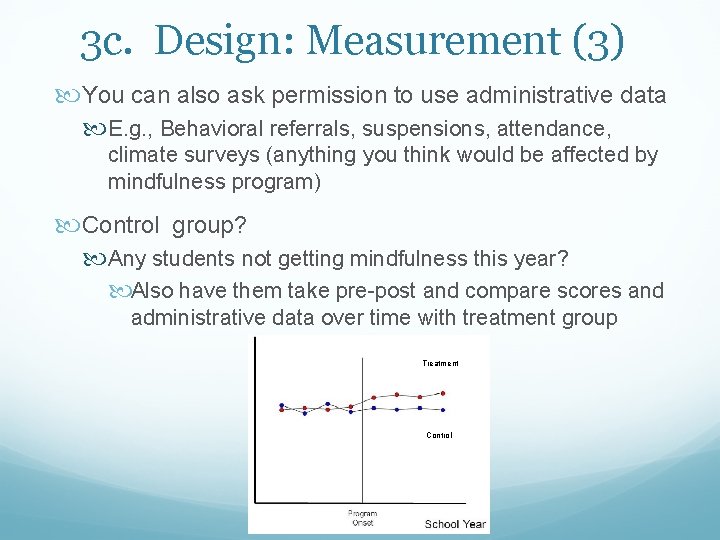

3 c. Design: Measurement (3)
You can also ask permission to use administrative data, e.g., behavioral referrals, suspensions, attendance, and climate surveys (anything you think would be affected by the mindfulness program).
Control group? Are any students not getting the mindfulness program this year? If so, have them also take the pre- and post-surveys, and compare their scores and administrative data over time with those of the treatment group.
[Figure: treatment group vs. control group]
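One simple way to make the treatment-vs-control comparison concrete is to compute each group's average pre-to-post gain and subtract the control group's gain from the treatment group's. The sketch below assumes each group's scores are available as (pre, post) pairs; the function names and all numbers are hypothetical, for illustration only. It is a descriptive comparison, not a significance test, and it does not adjust for the two groups differing at baseline.

```python
from statistics import mean

def mean_gain(rows):
    """Average post-minus-pre change for a list of (pre, post) score pairs."""
    return mean(post - pre for pre, post in rows)

def gain_difference(treatment, control):
    """Treatment group's average gain minus the control group's average gain."""
    return mean_gain(treatment) - mean_gain(control)

# Made-up (pre, post) scores, for illustration only.
treatment = [(15, 17), (12, 15), (17, 18)]  # gains: 2, 3, 1
control = [(14, 14), (13, 14), (16, 15)]    # gains: 0, 1, -1

print(f"Treatment gain: {mean_gain(treatment):.2f}")
print(f"Control gain:   {mean_gain(control):.2f}")
print(f"Difference:     {gain_difference(treatment, control):.2f}")
```

If the control group also improved, for example because students simply got older over the year, subtracting its gain keeps that general improvement from being credited to the program.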

4. Gather and analyze the evidence

Example of selecting measures
Suppose you are working with students age _ and want to look at effects on _.

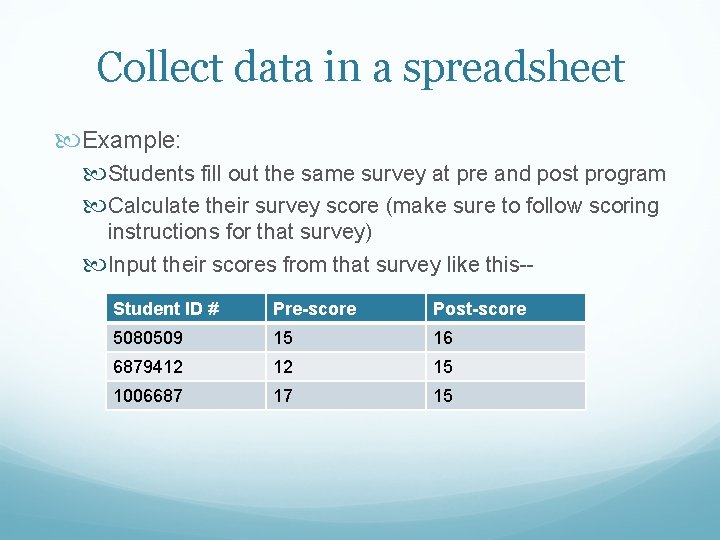

Collect data in a spreadsheet
Example: Students fill out the same survey before and after the program.
1. Calculate each student's survey score (make sure to follow the scoring instructions for that survey).
2. Enter the scores from that survey like this:

| Student ID # | Pre-score | Post-score |
|---|---|---|
| 5080509 | 15 | 16 |
| 6879412 | 12 | 15 |
| 1006687 | 17 | 15 |
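Once the scores are in a spreadsheet, a first step is to compute each student's change (post-score minus pre-score). This minimal sketch assumes the sheet has been exported to CSV with the column headers "Student ID #", "Pre-score", and "Post-score"; the export step and the `change_scores` helper are illustrative assumptions. The three rows are the example students from this slide.

```python
import csv
import io

# The example scores, as they might look after exporting the
# spreadsheet to CSV (the export format is an assumption).
CSV_TEXT = """Student ID #,Pre-score,Post-score
5080509,15,16
6879412,12,15
1006687,17,15
"""

def change_scores(csv_text):
    """Return {student_id: post_score - pre_score} for each row."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {
        row["Student ID #"]: int(row["Post-score"]) - int(row["Pre-score"])
        for row in reader
    }

changes = change_scores(CSV_TEXT)
for student_id, change in changes.items():
    print(student_id, change)

improved = sum(1 for c in changes.values() if c > 0)
print(f"{improved} of {len(changes)} students improved")
```

To read a real export instead, pass the contents of the file (for example, a hypothetical `scores.csv`) to `change_scores`.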

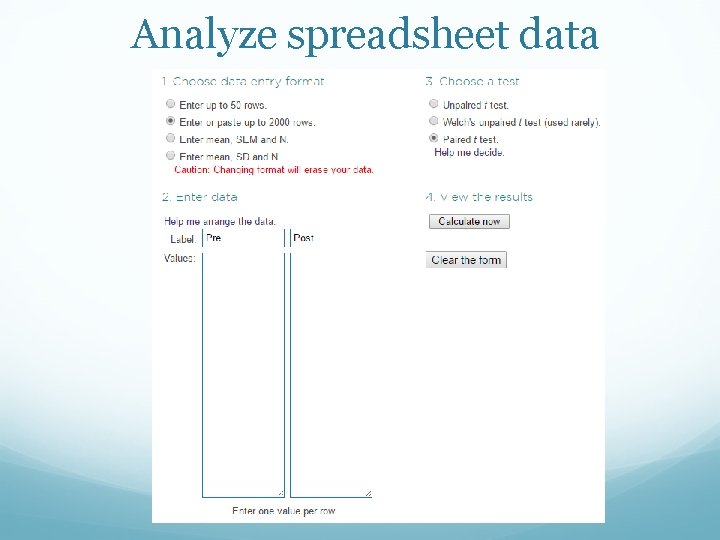

Analyze spreadsheet data
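The slides do not name a specific test, but when the same students take the same survey before and after a program, a paired t-test is a common choice for checking whether the average change differs from zero. The sketch below computes only the paired t statistic with Python's standard library (the `paired_t` name is hypothetical). For a p-value you could use a statistics package such as SciPy's `scipy.stats.ttest_rel`, or the spreadsheet function `T.TEST(pre_range, post_range, 2, 1)`, where type 1 means paired.

```python
import math
from statistics import mean, stdev

def paired_t(pre, post):
    """Paired t statistic and degrees of freedom for post-minus-pre changes."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched pre/post pairs")
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    # Standard error of the mean change (fails if every change is identical).
    standard_error = stdev(diffs) / math.sqrt(n)
    return mean(diffs) / standard_error, n - 1

# Pre/post scores for the three example students.
pre = [15, 12, 17]
post = [16, 15, 15]
t, df = paired_t(pre, post)
print(f"t = {t:.3f} with {df} degrees of freedom")
```

With only three students, the t statistic here is small (about 0.46) and gives no evidence of improvement. A real evaluation would need many more students before a pre-post difference could reach statistical significance.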

5. Justify conclusions
• Describe whether you found statistically significant improvements on pre-post scales, if applicable
• Describe patterns and themes in the data
• Include compelling anecdotal evidence, like quotes (videos!)

6. Ensure use and share lessons learned
• Report out to relevant stakeholder groups
• Highlight a few key takeaways
• Suggest next steps and get feedback on them
• Tweak the program approach based on the findings?

Appendix

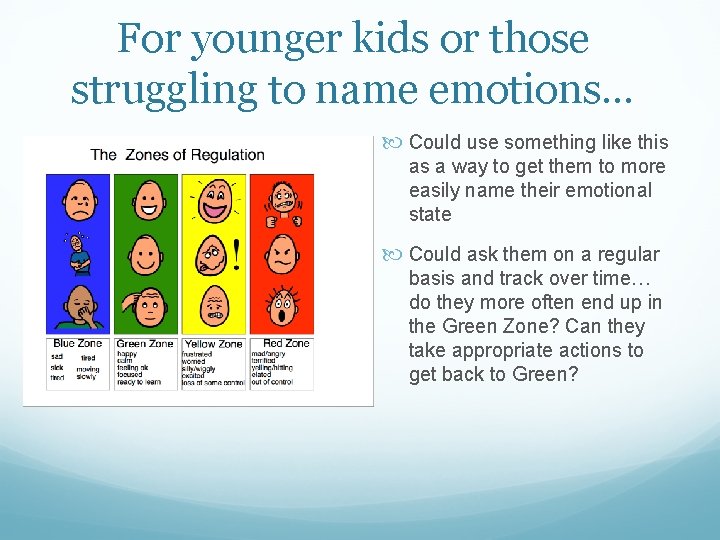

For younger kids or those struggling to name emotions
• You could use something like this to help them name their emotional state more easily
• You could ask them on a regular basis and track their answers over time: do they end up in the Green Zone more often? Can they take appropriate actions to get back to Green?
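Tracking regular check-ins over time can be as simple as computing, for each week, the share of a student's check-ins that landed in the Green Zone. This sketch assumes check-ins are recorded as (week number, zone name) pairs. The zone names other than "green" and all of the data are made-up assumptions for illustration, and `green_share_by_week` is a hypothetical helper.

```python
from collections import defaultdict

def green_share_by_week(check_ins):
    """Fraction of check-ins in the Green Zone for each week.

    check_ins: iterable of (week_number, zone_name) pairs.
    """
    totals = defaultdict(int)
    greens = defaultdict(int)
    for week, zone in check_ins:
        totals[week] += 1
        if zone.lower() == "green":
            greens[week] += 1
    # Weeks are returned in ascending order.
    return {week: greens[week] / totals[week] for week in sorted(totals)}

# Made-up check-ins for one student, for illustration only.
check_ins = [
    (1, "yellow"), (1, "green"), (1, "red"),
    (2, "green"), (2, "blue"), (2, "green"),
    (3, "green"), (3, "green"), (3, "yellow"),
]
for week, share in green_share_by_week(check_ins).items():
    print(f"Week {week}: {share:.0%} green")
```

A rising weekly share would suggest the student is ending up in the Green Zone more often. Whether they can take steps to get back to Green is better captured with notes or follow-up questions.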
