Distributed Systems Lecture 1 Introduction to distributed systems


Distributed systems
• “A collection of (probably heterogeneous) automata whose distribution is transparent to the user so that the system appears as one local machine. This is in contrast to a network, where the user is aware that there are several machines, and their location, storage replication, load balancing and functionality is not transparent. Distributed systems usually use some kind of client-server organization.” – FOLDOC
• “A Distributed System comprises several single components on different computers, which normally do not operate using shared memory and as a consequence communicate via the exchange of messages. The various components involved cooperate to achieve a common objective such as the performing of a business process.” – Schill & Springer
• Main characteristics
  – Components:
    • Multiple spatially separated individual components
    • Components possess their own memory
    • Cooperation towards a common objective
  – Resources:
    • Access to common resources (e.g., databases, file systems)
  – Communication:
    • Communication via messages
  – Infrastructure:
    • Heterogeneous hardware infrastructure & software middleware

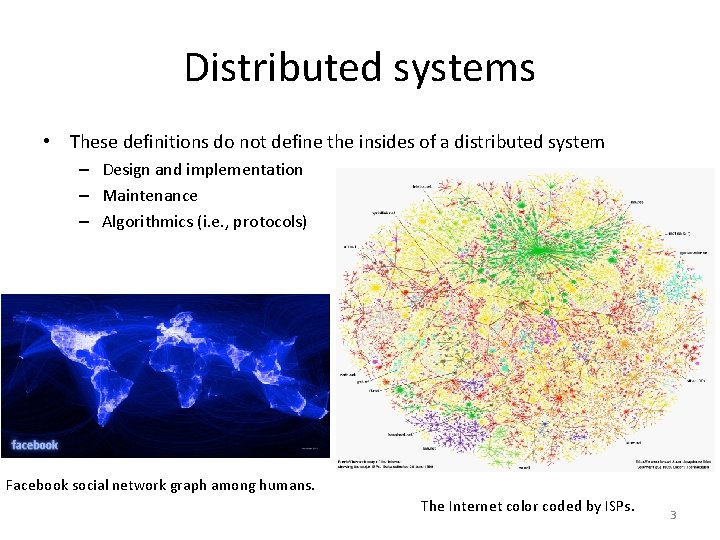

Distributed systems
• These definitions do not define the insides of a distributed system
  – Design and implementation
  – Maintenance
  – Algorithmics (i.e., protocols)
Figures: the Facebook social network graph among humans; the Internet, color coded by ISPs.

A working definition
• “A distributed system (DS) is a collection of entities, each of which is autonomous, programmable, asynchronous and failure-prone, and which communicate through an unreliable communication medium.”
• Terms
  – Entity = process on a device (PC, server, tablet, smartphone)
  – Communication medium = wired or wireless network
• Course objective:
  – Design and implementation of distributed systems
Source: https://courses.engr.illinois.edu/cs425/fa2013/lectures.html
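The "unreliable communication medium" in the working definition can be made concrete with a toy simulation. The sketch below is only an illustration: `UnreliableChannel`, `loss_rate`, and `send_with_retries` are hypothetical names introduced here, and the medium is modeled as a simple random message drop, which is an assumption rather than anything the lecture specifies.

```python
import random


class UnreliableChannel:
    """Toy communication medium that drops each message with probability loss_rate."""

    def __init__(self, loss_rate, seed=None):
        self.loss_rate = loss_rate
        self.rng = random.Random(seed)

    def send(self, message):
        """Return the message if it was delivered, or None if the medium lost it."""
        if self.rng.random() < self.loss_rate:
            return None
        return message


def send_with_retries(channel, message, max_attempts):
    """Resend until one copy gets through; return the attempt count, or None on giving up."""
    for attempt in range(1, max_attempts + 1):
        if channel.send(message) is not None:
            return attempt
    return None


# An entity (process) sending over a medium that loses roughly 30% of messages.
channel = UnreliableChannel(loss_rate=0.3, seed=42)
print(send_with_retries(channel, "hello", max_attempts=5))
```

Retrying is only one possible reaction to message loss. The sender in this sketch learns about delivery immediately, whereas a real entity would need acknowledgements and timeouts to find out.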

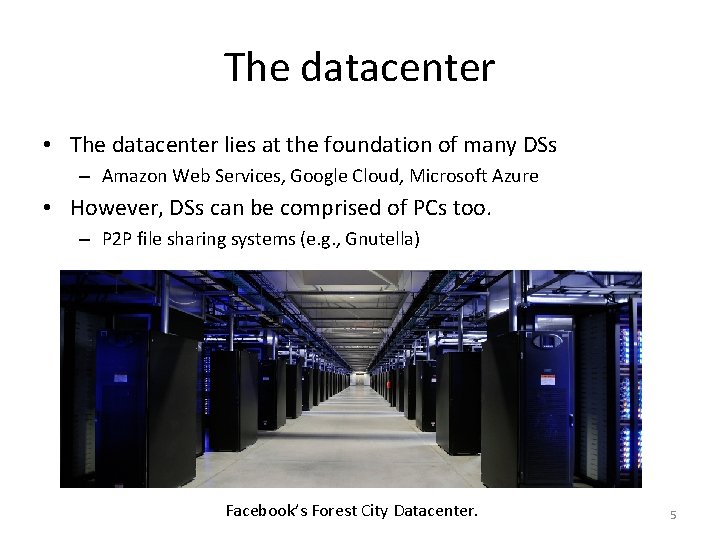

The datacenter
• The datacenter lies at the foundation of many DSs
  – Amazon Web Services, Google Cloud, Microsoft Azure
• However, DSs can also be composed of PCs
  – P2P file sharing systems (e.g., Gnutella)
Figure: Facebook’s Forest City Datacenter.

Example – Gnutella P2P
• What are the entities and the communication medium?

Example – web domains
• What are the entities and the communication medium?

The Internet
• Used by many distributed systems
• Vast collection of heterogeneous computer networks
• ISPs – companies that provide services for accessing and using the Internet
• Intranets – subnetworks operated by companies and organizations
  – Offer services unavailable to the public from the Internet
  – Can be the ISP’s core routers
  – Linked by backbones
    • High bandwidth network links

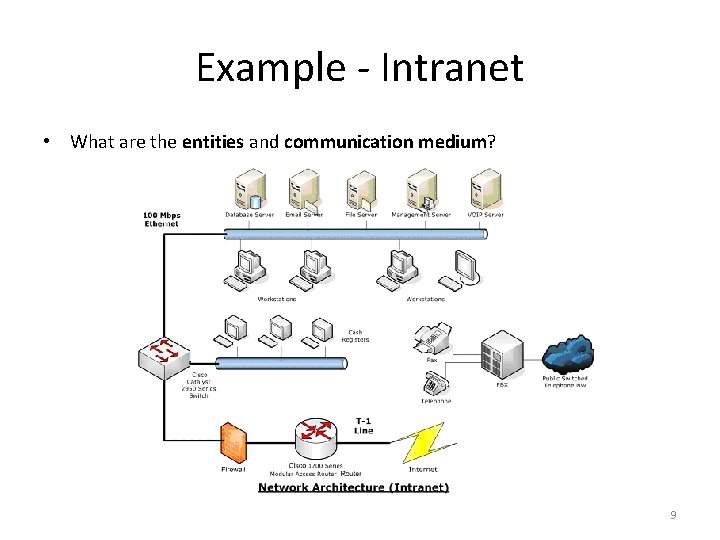

Example – Intranet
• What are the entities and the communication medium?

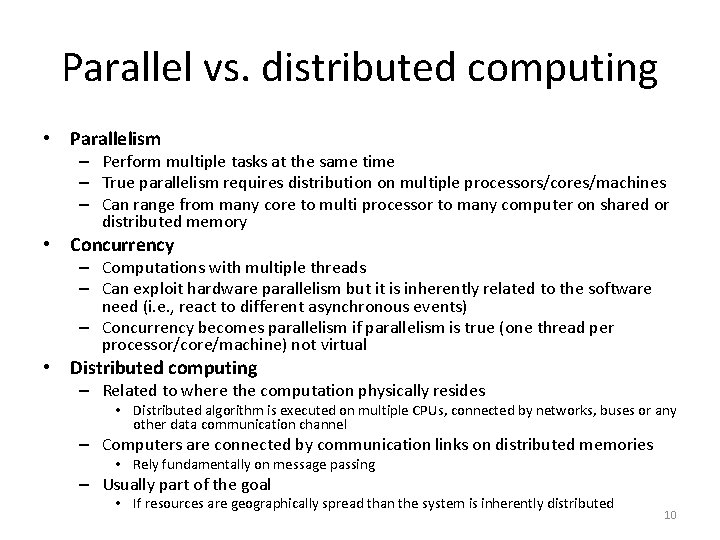

Parallel vs. distributed computing
• Parallelism
  – Perform multiple tasks at the same time
  – True parallelism requires distribution on multiple processors/cores/machines
  – Can range from many-core to multiprocessor to many computers, on shared or distributed memory
• Concurrency
  – Computations with multiple threads
  – Can exploit hardware parallelism, but it is inherently related to the software need (i.e., reacting to different asynchronous events)
  – Concurrency becomes parallelism if the parallelism is true (one thread per processor/core/machine), not virtual
• Distributed computing
  – Related to where the computation physically resides
    • A distributed algorithm is executed on multiple CPUs, connected by networks, buses or any other data communication channel
  – Computers are connected by communication links on distributed memories
    • Rely fundamentally on message passing
  – Usually part of the goal
    • If resources are geographically spread, then the system is inherently distributed
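The contrast between shared memory and message passing can be sketched in a few lines. This is a minimal illustration under stated assumptions: threads inside one process stand in for separate workers, and a `queue.Queue` stands in for a network link. The function names are hypothetical, and a real distributed system would exchange messages between machines rather than threads.

```python
import queue
import threading


def shared_memory_sum(chunks):
    """Workers add their partial sums into one shared variable, guarded by a lock."""
    total = 0
    lock = threading.Lock()

    def worker(chunk):
        nonlocal total
        partial = sum(chunk)
        with lock:  # shared state needs explicit synchronization
            total += partial

    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total


def message_passing_sum(chunks):
    """Workers share nothing; each one sends its partial sum as a message."""
    mailbox = queue.Queue()  # stands in for the communication channel

    def worker(chunk):
        mailbox.put(sum(chunk))

    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # The coordinator combines exactly one message per worker.
    return sum(mailbox.get() for _ in chunks)


data = [[1, 2, 3], [4, 5], [6]]
print(shared_memory_sum(data), message_passing_sum(data))
```

Both functions compute the same result. The difference lies in how the workers coordinate: through a lock on common state, or through messages with no common state at all.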

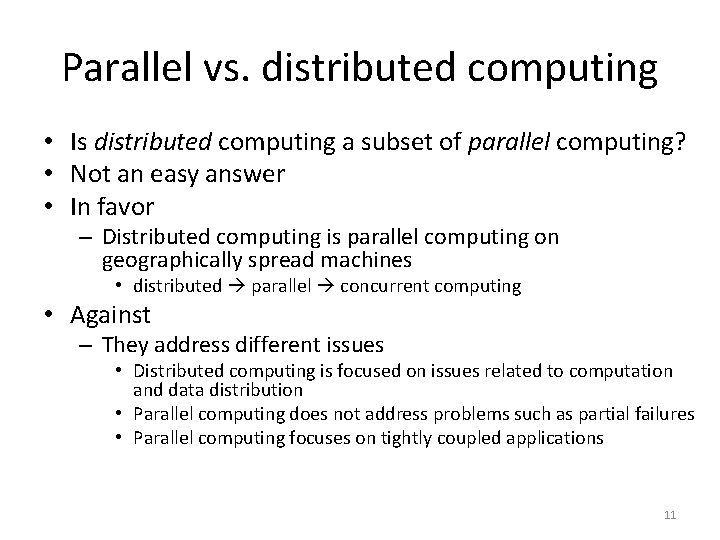

Parallel vs. distributed computing
• Is distributed computing a subset of parallel computing?
• Not an easy answer
• In favor
  – Distributed computing is parallel computing on geographically spread machines
    • distributed ⊂ parallel ⊂ concurrent computing
• Against
  – They address different issues
    • Distributed computing is focused on issues related to computation and data distribution
    • Parallel computing does not address problems such as partial failures
    • Parallel computing focuses on tightly coupled applications

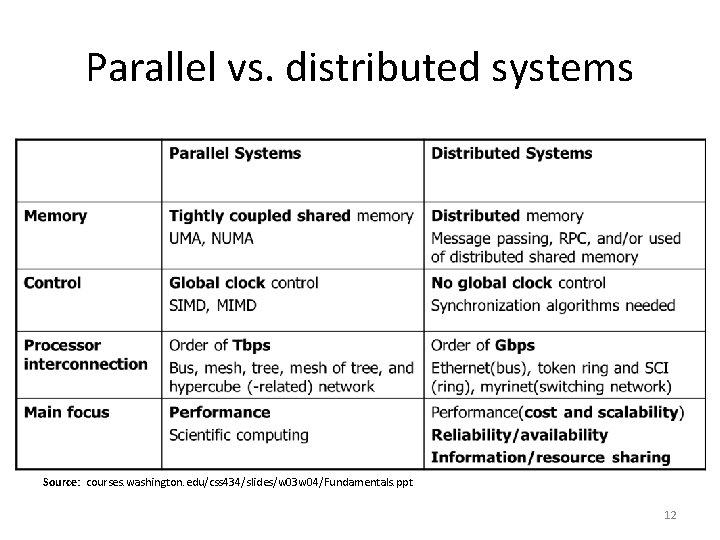

Parallel vs. distributed systems
Source: courses.washington.edu/css434/slides/w03w04/Fundamentals.ppt

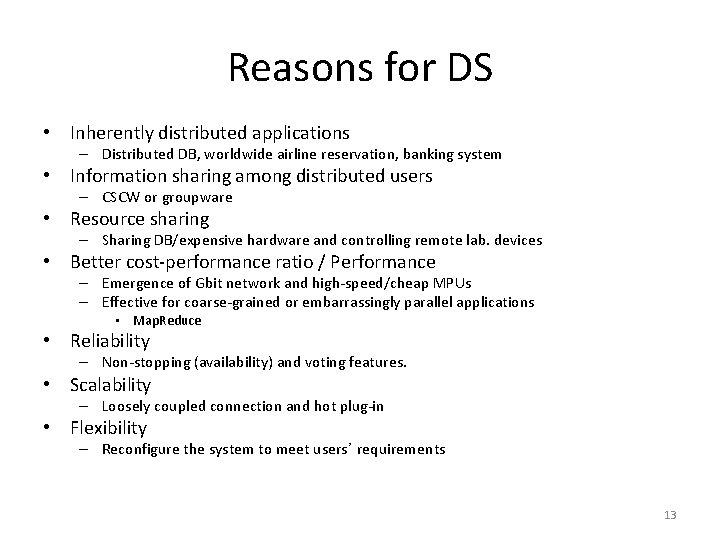

Reasons for DS
• Inherently distributed applications
  – Distributed DB, worldwide airline reservation, banking system
• Information sharing among distributed users
  – CSCW or groupware
• Resource sharing
  – Sharing DB/expensive hardware and controlling remote lab devices
• Better cost-performance ratio / Performance
  – Emergence of Gbit networks and high-speed/cheap MPUs
  – Effective for coarse-grained or embarrassingly parallel applications
    • MapReduce
• Reliability
  – Non-stopping (availability) and voting features
• Scalability
  – Loosely coupled connection and hot plug-in
• Flexibility
  – Reconfigure the system to meet users’ requirements
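MapReduce is named above as an example of an embarrassingly parallel workload. The following single-process sketch only illustrates the map, shuffle, and reduce phases on a word count. The function names are hypothetical, and this is not the Hadoop API, where the map and reduce calls would run on different machines.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word; each document is independent."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine all values of one key; each key is independent."""
    return key, sum(values)


def word_count(documents):
    pairs = [pair for doc in documents for pair in map_phase(doc)]
    return dict(reduce_phase(k, v) for k, v in shuffle(pairs).items())


print(word_count(["a b a", "b c"]))  # {'a': 2, 'b': 2, 'c': 1}
```

Because no map call depends on another document and no reduce call depends on another key, both phases can be spread over many machines without coordination beyond the shuffle.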

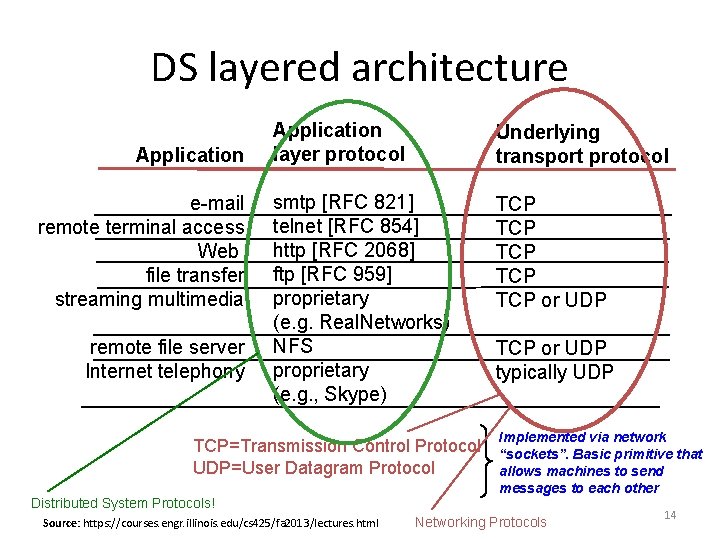

DS layered architecture

| Application | Application layer protocol | Underlying transport protocol |
| --- | --- | --- |
| e-mail | smtp [RFC 821] | TCP |
| remote terminal access | telnet [RFC 854] | TCP |
| Web | http [RFC 2068] | TCP |
| file transfer | ftp [RFC 959] | TCP |
| streaming multimedia | proprietary (e.g., RealNetworks) | TCP or UDP |
| remote file server | NFS | TCP or UDP |
| Internet telephony | proprietary (e.g., Skype) | typically UDP |

TCP = Transmission Control Protocol, UDP = User Datagram Protocol
• Implemented via network “sockets”: the basic primitive that allows machines to send messages to each other
Figure labels: “Distributed System Protocols!”, “Networking Protocols”
Source: https://courses.engr.illinois.edu/cs425/fa2013/lectures.html
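To illustrate the socket primitive mentioned on this slide, here is a minimal sketch that sends one UDP datagram over the loopback interface using Python's standard `socket` module. The helper name `udp_send_and_receive` is hypothetical, and running both endpoints in one process is a simplification chosen so the example is self-contained.

```python
import socket


def udp_send_and_receive(payload: bytes) -> bytes:
    """Send one datagram to a local receiver and return what arrived."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))   # port 0: let the OS pick a free port
    receiver.settimeout(2.0)          # UDP gives no delivery guarantee
    address = receiver.getsockname()

    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sender.sendto(payload, address)      # one message, no connection setup
        data, _source = receiver.recvfrom(4096)
        return data
    finally:
        sender.close()
        receiver.close()


print(udp_send_and_receive(b"hello"))
```

A TCP version would use `SOCK_STREAM` with `connect`, `listen`, and `accept`, trading the connectionless simplicity shown here for ordered, reliable delivery.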

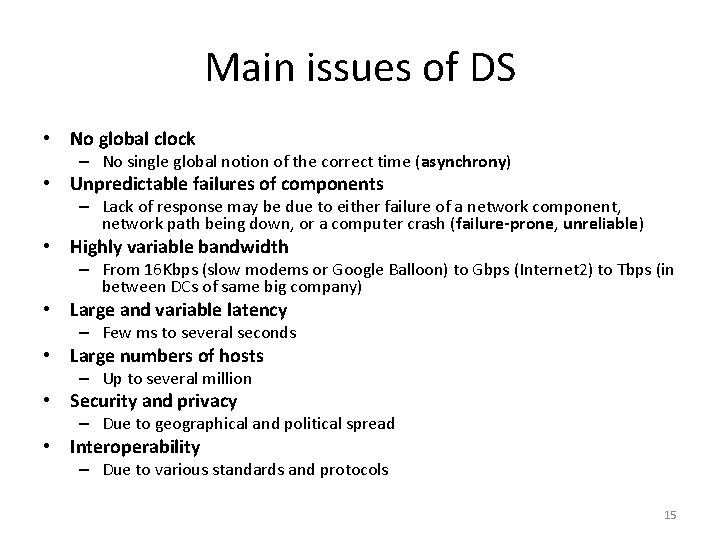

Main issues of DS
• No global clock
  – No single global notion of the correct time (asynchrony)
• Unpredictable failures of components
  – Lack of response may be due to either failure of a network component, network path being down, or a computer crash (failure-prone, unreliable)
• Highly variable bandwidth
  – From 16 Kbps (slow modems or Google Balloon) to Gbps (Internet2) to Tbps (in between DCs of the same big company)
• Large and variable latency
  – Few ms to several seconds
• Large numbers of hosts
  – Up to several million
• Security and privacy
  – Due to geographical and political spread
• Interoperability
  – Due to various standards and protocols
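A quick calculation shows what the bandwidth and latency ranges above mean in practice. The sketch assumes the simple model time = latency + size / bandwidth, which ignores protocol overhead and congestion. The function name and the 1 MB message size are assumptions made for illustration.

```python
def transfer_time(size_bytes, bandwidth_bps, latency_s=0.0):
    """Estimated seconds to deliver a message: one-way latency plus transmission time."""
    return latency_s + (size_bytes * 8) / bandwidth_bps


one_megabyte = 1_000_000

# 16 Kbps link (slow modem): transmission time dominates.
print(transfer_time(one_megabyte, 16_000))             # 500.0 seconds

# 1 Gbps link with 50 ms latency: latency dominates.
print(transfer_time(one_megabyte, 1_000_000_000, 0.05))
```

The same message takes minutes on the slowest link and a fraction of a second on the fastest one, so a design tuned for one end of the range can behave very differently at the other.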

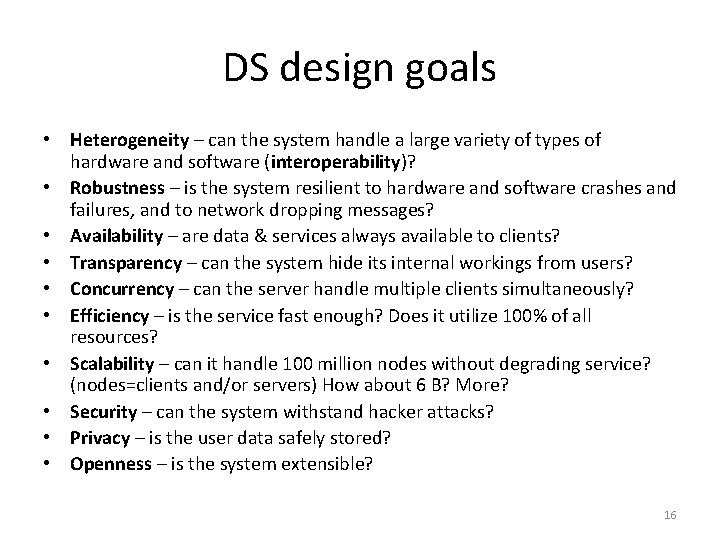

DS design goals
• Heterogeneity – can the system handle a large variety of types of hardware and software (interoperability)?
• Robustness – is the system resilient to hardware and software crashes and failures, and to the network dropping messages?
• Availability – are data & services always available to clients?
• Transparency – can the system hide its internal workings from users?
• Concurrency – can the server handle multiple clients simultaneously?
• Efficiency – is the service fast enough? Does it utilize 100% of all resources?
• Scalability – can it handle 100 million nodes without degrading service? (nodes = clients and/or servers) How about 6 billion? More?
• Security – can the system withstand hacker attacks?
• Privacy – is the user data safely stored?
• Openness – is the system extensible?

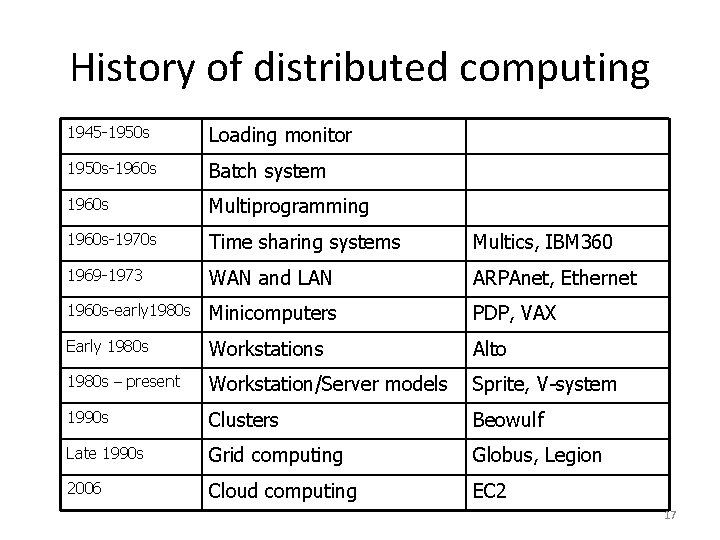

History of distributed computing

| Period | Development | Examples |
| --- | --- | --- |
| 1945-1950s | Loading monitor | |
| 1950s-1960s | Batch system | |
| 1960s | Multiprogramming | |
| 1960s-1970s | Time sharing systems | Multics, IBM 360 |
| 1969-1973 | WAN and LAN | ARPAnet, Ethernet |
| 1960s-early 1980s | Minicomputers | PDP, VAX |
| Early 1980s | Workstations | Alto |
| 1980s-present | Workstation/Server models | Sprite, V-system |
| 1990s | Clusters | Beowulf |
| Late 1990s | Grid computing | Globus, Legion |
| 2006 | Cloud computing | EC2 |

DS system models
• Minicomputer model
• Workstation-server model
• Processor-pool model
• Cluster model
• Grid computing

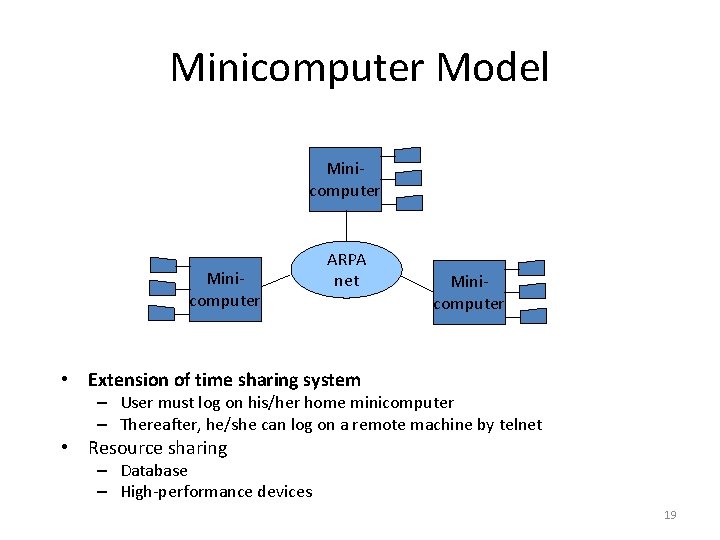

Minicomputer Model
• Extension of time sharing system
  – Users must log on to their home minicomputer
  – Thereafter, they can log on to a remote machine by telnet
• Resource sharing
  – Database
  – High-performance devices
Figure labels: minicomputers connected through ARPAnet.

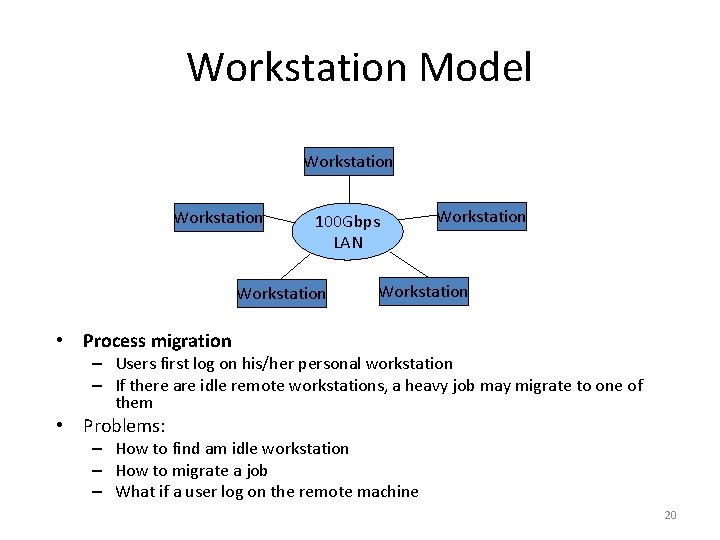

Workstation Model
• Process migration
  – Users first log on to their personal workstation
  – If there are idle remote workstations, a heavy job may migrate to one of them
• Problems:
  – How to find an idle workstation
  – How to migrate a job
  – What if a user logs on to the remote machine?
Figure labels: workstations connected through a 100 Gbps LAN.

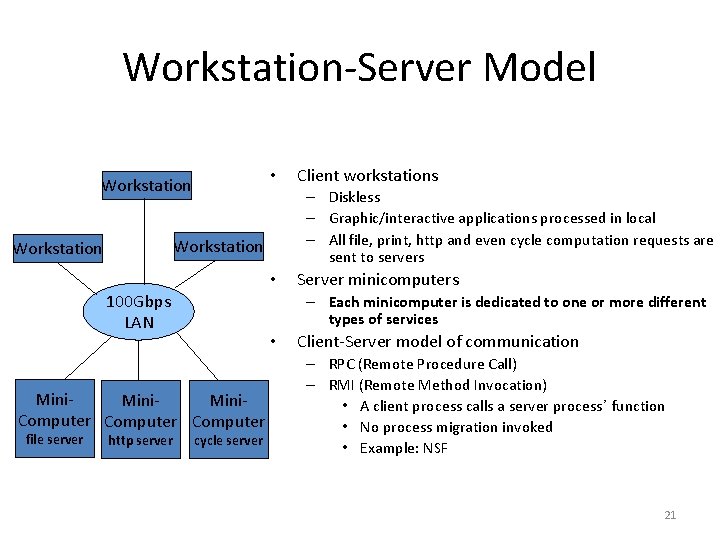

Workstation-Server Model
• Workstation
  – Diskless
  – Graphic/interactive applications processed locally
  – All file, print, http and even cycle computation requests are sent to servers
• Server minicomputers
  – Each minicomputer is dedicated to one or more different types of services
• Client-Server model of communication
  – RPC (Remote Procedure Call)
  – RMI (Remote Method Invocation)
    • A client process calls a server process’ function
    • No process migration invoked
    • Example: NFS
Figure labels: client workstations; 100 Gbps LAN; minicomputers acting as http server, file server and cycle server.
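The RPC idea, a client process calling a server process’ function, can be sketched with Python's standard `xmlrpc` modules. This is an illustration only: XML-RPC is one of many RPC mechanisms and is not the protocol NFS uses. The function `add` and the helper `call_add_remotely` are hypothetical, and the server runs in a background thread of the same process purely to keep the example self-contained.

```python
import threading
from xmlrpc.client import ServerProxy
from xmlrpc.server import SimpleXMLRPCServer


def add(a, b):
    """The procedure that lives on the server side."""
    return a + b


def call_add_remotely(a, b):
    # Server side: expose add() on a free loopback port.
    server = SimpleXMLRPCServer(("127.0.0.1", 0), logRequests=False)
    server.register_function(add, "add")
    host, port = server.server_address
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        # Client side: the call looks local, but travels as an HTTP request.
        with ServerProxy(f"http://{host}:{port}") as proxy:
            return proxy.add(a, b)
    finally:
        server.shutdown()
        server.server_close()
        thread.join()


print(call_add_remotely(2, 3))  # 5
```

Only the arguments and the result cross the network. The code of `add` stays on the server, which matches the "no process migration" point on the slide.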

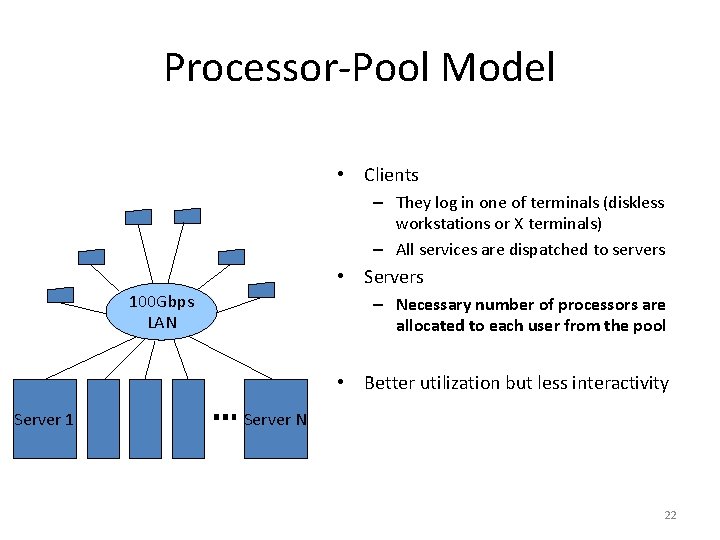

Processor-Pool Model
• Clients
  – They log in at one of the terminals (diskless workstations or X terminals)
  – All services are dispatched to servers
• Servers
  – The necessary number of processors is allocated to each user from the pool
• Better utilization but less interactivity
Figure labels: terminals connected through a 100 Gbps LAN to Server 1 … Server N.

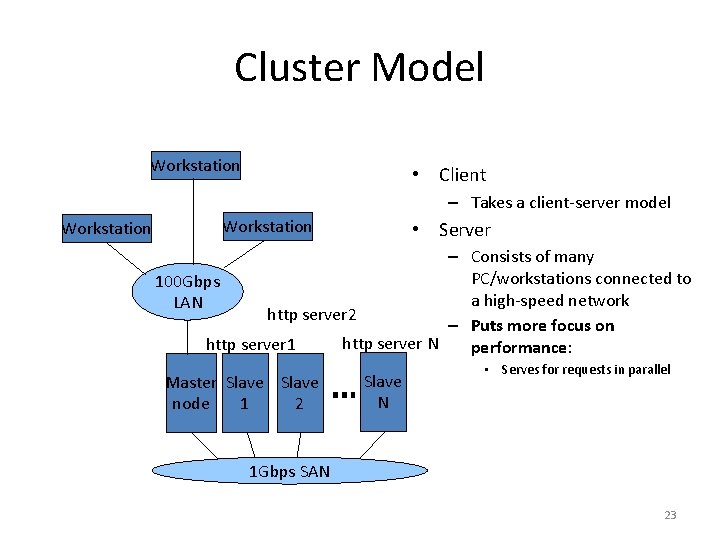

Cluster Model
• Client
  – Takes a client-server model
• Server
  – Consists of many PC/workstations connected to a high-speed network
  – Puts more focus on performance:
    • Serves requests in parallel
Figure labels: workstations; 100 Gbps LAN; http server 1, http server 2, …, http server N; master node and slaves 1 to N; 1 Gbps SAN.
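Serving requests in parallel from a master node can be sketched as follows. This is a toy model with strong assumptions: worker threads in a `ThreadPoolExecutor` stand in for the slave nodes, and `handle_request` and `master_dispatch` are hypothetical names. A real cluster would forward requests over the network to separate machines.

```python
from concurrent.futures import ThreadPoolExecutor


def handle_request(request):
    """Work done by one slave node for one client request."""
    return f"response to {request}"


def master_dispatch(requests, num_slaves):
    """Master node: hand requests to a pool of slaves and collect replies in order."""
    with ThreadPoolExecutor(max_workers=num_slaves) as pool:
        return list(pool.map(handle_request, requests))


print(master_dispatch(["GET /a", "GET /b", "GET /c"], num_slaves=2))
```

`pool.map` returns results in request order even when slaves finish out of order, which keeps the client-facing behavior simple while the work itself overlaps.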

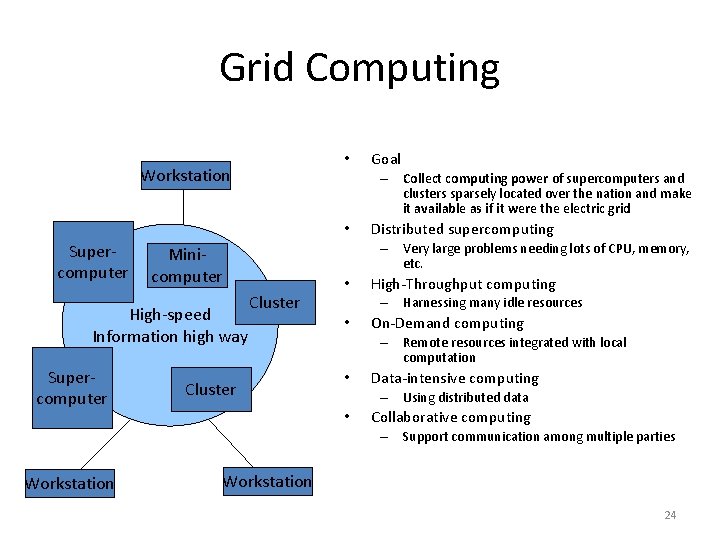

Grid Computing
• Goal
  – Collect computing power of supercomputers and clusters sparsely located over the nation and make it available as if it were the electric grid
• Distributed supercomputing
  – Very large problems needing lots of CPU, memory, etc.
• High-Throughput computing
  – Harnessing many idle resources
• On-Demand computing
  – Remote resources integrated with local computation
• Data-intensive computing
  – Using distributed data
• Collaborative computing
  – Support communication among multiple parties
Figure labels: workstations, supercomputer, minicomputer and cluster connected by a high-speed information highway.

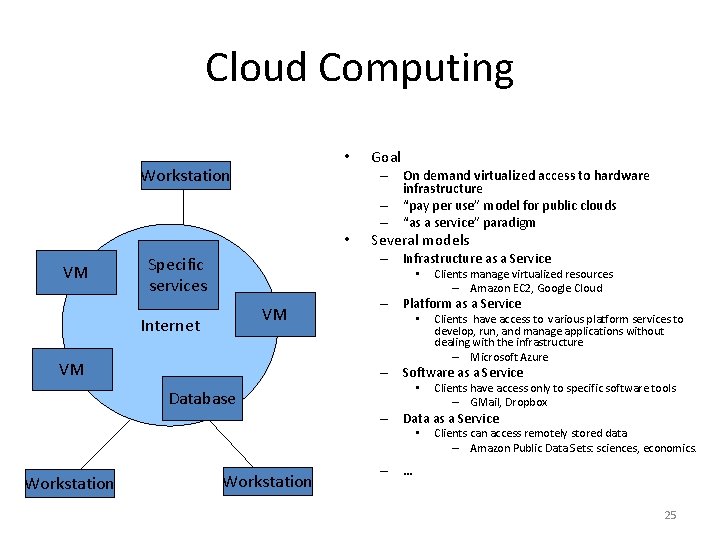

Cloud Computing
• Goal
  – On demand virtualized access to hardware infrastructure
  – “pay per use” model for public clouds
  – “as a service” paradigm
• Several models
  – Infrastructure as a Service
    • Clients manage virtualized resources
    • Amazon EC2, Google Cloud
  – Platform as a Service
    • Clients have access to various platform services to develop, run, and manage applications without dealing with the infrastructure
    • Microsoft Azure
  – Software as a Service
    • Clients have access only to specific software tools
    • GMail, Dropbox
  – Data as a Service
    • Clients can access remotely stored data
    • Amazon Public Data Sets: sciences, economics
  – …
Figure labels: workstations; Internet; VMs; specific services; database.

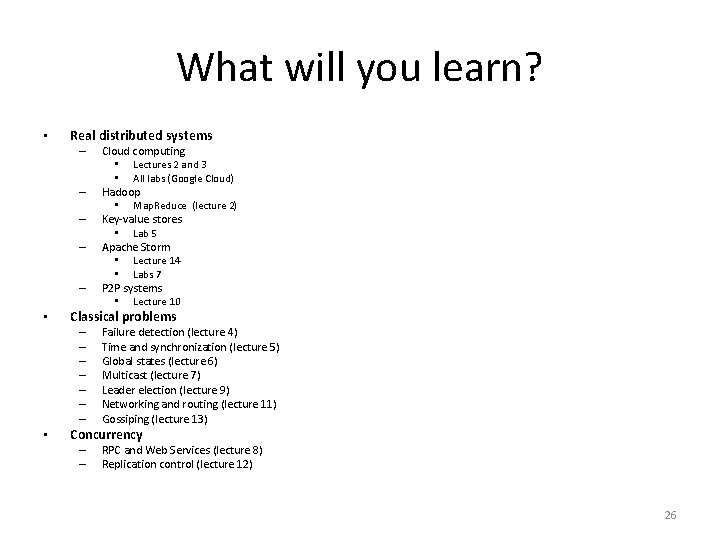

What will you learn?
• Real distributed systems
  – Cloud computing
    • Lectures 2 and 3
    • All labs (Google Cloud)
  – Hadoop
    • MapReduce (lecture 2)
  – Key-value stores
    • Lab 5
  – Apache Storm
    • Lecture 14
    • Lab 7
  – P2P systems
    • Lecture 10
• Classical problems
  – Failure detection (lecture 4)
  – Time and synchronization (lecture 5)
  – Global states (lecture 6)
  – Multicast (lecture 7)
  – Leader election (lecture 9)
  – Networking and routing (lecture 11)
  – Gossiping (lecture 13)
• Concurrency
  – RPC and Web Services (lecture 8)
  – Replication control (lecture 12)

Grading
• Scientific paper analysis (20%)
  – Students will have to pick a paper from a top conference (published in the last 3 years) and present it
• Lab assignments (80%)
  – Assignments given during lab hours
• Documentation
  – Lecture slides, references inside the slides, Google Cloud, ScienceDirect, IEEE Xplore, ResearchGate

Next lecture
• Introduction to cloud computing
