Distributed Systems Fall 2010 Distributed transactions

Outline
• Flat and nested distributed transactions
• Atomic commit
  – Two-phase commit protocol
• Concurrency control
  – Locking
  – Optimistic concurrency control
• Distributed deadlock
  – Edge chasing
• Summary

Fall 2010, 5DV020

Flat and nested distributed transactions
• Distributed transaction:
  – A transaction dealing with objects managed by different processes
• Allows for even better performance
  – At the price of increased complexity
• Transaction coordinators and object servers
  – Participants in the transaction

Atomic commit
• If the client is told that the transaction is committed, it must be committed at all object servers
  – ... at the same time
  – ... in spite of (crash) failures and asynchronous systems

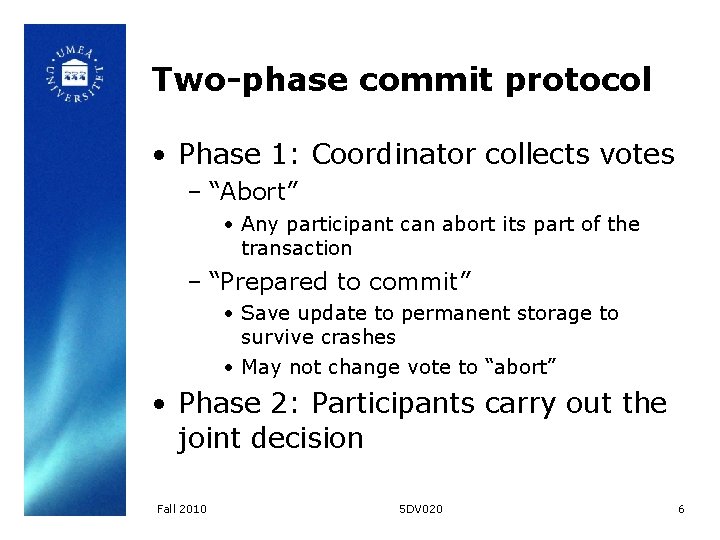

Two-phase commit protocol
• Phase 1: Coordinator collects votes
  – “Abort”
    • Any participant can abort its part of the transaction
  – “Prepared to commit”
    • Save update to permanent storage to survive crashes
    • May not change vote to “abort”
• Phase 2: Participants carry out the joint decision

Two-phase commit protocol (in detail)
• Phase 1 (voting):
  – Coordinator sends “canCommit?” to each participant
  – Participants answer “yes” or “no”
    • “Yes”: update saved to permanent storage
    • “No”: abort immediately

Two-phase commit protocol (in detail)
• Phase 2 (completion):
  – Coordinator collects votes (including its own)
    • No failures and all votes are “yes”? Send “doCommit” to each participant; otherwise, send “doAbort”
  – Participants that voted “yes” are in the “uncertain” state until they receive “doCommit” or “doAbort”, and then act accordingly
    • Confirm commit via “haveCommitted”
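
The two phases above can be sketched as a small single-process simulation. This is only an illustration under stated assumptions: the `Participant` class, its `will_vote_yes` flag, and the function `two_phase_commit` are hypothetical names, messages are plain method calls, and nothing is actually written to permanent storage.

```python
class Participant:
    """Hypothetical in-memory participant; a real one would log to disk."""

    def __init__(self, name, will_vote_yes=True):
        self.name = name
        self.will_vote_yes = will_vote_yes
        self.state = "active"

    def can_commit(self):
        # Phase 1: a "yes" vote means the update has been saved and the
        # participant stays uncertain until it hears the decision.
        if self.will_vote_yes:
            self.state = "uncertain"
            return "yes"
        self.state = "aborted"  # a "no" voter aborts immediately
        return "no"

    def do_commit(self):
        self.state = "committed"
        return True  # stands in for the haveCommitted confirmation

    def do_abort(self):
        self.state = "aborted"


def two_phase_commit(participants, coordinator_vote="yes"):
    """Run both phases and return the joint decision."""
    votes = [coordinator_vote]  # the coordinator's own vote counts too
    for p in participants:
        try:
            votes.append(p.can_commit())
        except Exception:
            votes.append("no")  # a failure is treated like a "no" vote
    if all(v == "yes" for v in votes):
        for p in participants:
            p.do_commit()
        return "committed"
    for p in participants:
        if p.state != "aborted":
            p.do_abort()
    return "aborted"
```

A single “no” vote is enough to abort everywhere, including at participants that had already voted “yes”.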

Two-phase commit protocol
• If the coordinator fails
  – Participants are “uncertain”
    • If some have received the decision (or can work it out themselves), they can coordinate among themselves
  – Participants can request the status of the transaction
  – If a participant has not received “canCommit?” and waits too long, it may abort

Two-phase commit protocol
• If a participant fails
  – No reply to “canCommit?” in time?
    • Coordinator can abort
  – Crash after “canCommit?”
    • Use permanent storage to get up to speed
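
The failure cases for the two-phase commit protocol come down to what a participant is allowed to do in each state. A minimal sketch, assuming hypothetical state names (`"active"`, `"uncertain"`) and a log represented as a list of record names; neither is prescribed by the slides:

```python
def on_timeout(state):
    """Action for a participant whose wait has timed out (hypothetical names)."""
    if state == "active":
        # No canCommit? has arrived, so no vote was cast: safe to abort alone.
        return "abort"
    if state == "uncertain":
        # Voted "yes": must not decide alone, so ask for the outcome.
        return "request_status"
    return "nothing"  # already committed or aborted


def recover(log):
    """State to resume in after a crash, given the log records that survived."""
    if "committed" in log:
        return "committed"
    if "aborted" in log:
        return "aborted"
    if "prepared" in log:
        return "uncertain"  # voted "yes" before the crash, outcome unknown
    return "aborted"  # never voted "yes", so nothing was promised
```

The asymmetry is the point: before voting a participant may abort unilaterally, but after a “yes” vote it can only wait or ask.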

Two-phase commit protocol for nested transactions
• Subtransactions perform a “provisional commit”
  – Nothing is written to permanent storage
    • An ancestor could still abort!
  – If they crash, the replacement cannot commit
• Status information is passed upward in the tree
  – The list of provisionally committed subtransactions eventually reaches the top level

Two-phase commit protocol for nested transactions
• The top-level transaction initiates the voting phase with the provisionally committed subtransactions
  – If they have crashed since the provisional commit, they must abort
  – Before voting “yes”, they must prepare to commit their data
    • At this point we use permanent storage
  – Hierarchic or flat voting

Hierarchic voting
• Responsibility to vote is passed one level/generation at a time, through the tree

Flat voting
• Contact coordinators directly using parameters
  – Transaction ID
  – List of transactions that are reported as aborted
    • Coordinators may manage more than one subtransaction, and due to crashes, this information may be required
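
How the abort list is used in flat voting can be sketched as follows. The ID scheme is an assumption made for illustration: a subtransaction ID is a dotted path such as `"T.1.2"`, so its ancestors are its proper prefixes. The function name `can_commit_flat` and its return shape are hypothetical as well.

```python
def ancestors(tid):
    """Proper ancestors of a dotted ID, e.g. 'T.1.2' -> {'T', 'T.1'}."""
    parts = tid.split(".")
    return {".".join(parts[:i]) for i in range(1, len(parts))}


def can_commit_flat(top_level, abort_list, provisional):
    """Vote on behalf of one server in flat voting.

    provisional: IDs provisionally committed at this server.
    Returns (vote, ids_to_prepare, ids_to_abort).
    """
    mine = [t for t in provisional
            if t == top_level or top_level in ancestors(t)]
    if not mine:
        # Nothing survives here for this transaction, e.g. after a crash.
        return "no", [], []
    aborted = set(abort_list)
    to_prepare = [t for t in mine
                  if t not in aborted and not (ancestors(t) & aborted)]
    to_abort = [t for t in mine if t not in to_prepare]
    return "yes", to_prepare, to_abort
```

For example, with `"T.2"` reported as aborted, a server holding `"T.1.2"` and `"T.2.1"` prepares the first and aborts the second, because the second has an aborted ancestor.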

Concurrency control revisited
• Locks
  – Release locks when the transaction can finish
    • After phase 1 if the transaction should abort
    • After phase 2 if the transaction should commit
  – Distributed deadlock, oh my!
• Optimistic concurrency control
  – Validate access to local objects
  – Commitment deadlock if validation is serial
  – Different transaction order at different servers if validation is parallel
  – Interesting problem! Read book!

Distributed deadlock
• Local and distributed deadlocks
  – Phantom deadlocks
• Simplest solution
  – A manager collects local wait-for information and constructs a global wait-for graph
    • Single point of failure, bad performance, does not scale, what about availability, etc.
• Distributed solution
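
The centralized approach can be sketched as merging the local wait-for edges and searching the result for a cycle. The data layout is an assumption: each server reports a list of `(waiter, holder)` pairs, and `find_cycle` is a hypothetical name.

```python
def find_cycle(local_graphs):
    """Merge per-server wait-for edges and return one cycle, or None."""
    graph = {}
    for edges in local_graphs:
        for waiter, holder in edges:
            graph.setdefault(waiter, set()).add(holder)

    def visit(node, path, done):
        if node in path:
            # Reached a transaction already on the current path: a cycle.
            return path[path.index(node):] + [node]
        if node in done:
            return None  # fully explored earlier, no cycle through it
        path.append(node)
        for nxt in sorted(graph.get(node, ())):
            cycle = visit(nxt, path, done)
            if cycle:
                return cycle
        path.pop()
        done.add(node)
        return None

    done = set()
    for start in sorted(graph):
        cycle = visit(start, [], done)
        if cycle:
            return cycle
    return None
```

No single server sees a cycle in the example `[[("U", "V")], [("V", "W")], [("W", "U")]]`, yet the merged graph contains one. If the collected edges are out of date, the manager may report a phantom deadlock.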

Edge chasing
• Initiation: a server notices that T waits for U for object A, where U may itself be blocked at another server, so it sends the probe <T → U> to the server of the object at which U is blocked

Edge chasing
• Detection: a server handles an incoming probe by checking whether the relevant transaction (U) is also waiting for another transaction (V)
  – If so, it extends the probe (<T → U → V>) and sends it along
  – Loops (e.g. <T → U → V → T>) indicate deadlock
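
The initiation and detection steps can be sketched in a single process, where each loop iteration stands for one probe being forwarded to the next server. The simplifying assumptions are that every transaction waits for at most one other transaction and that the whole wait-for relation is given as one dictionary; in the distributed algorithm each server only knows its own edges. `chase` is a hypothetical name.

```python
def chase(waits_for, start):
    """Follow a probe from `start`; return the cycle found, or None.

    waits_for maps a blocked transaction to the transaction it waits for.
    """
    probe = [start]
    current = start
    while current in waits_for:
        current = waits_for[current]  # one hop: forward the probe
        if current in probe:
            # The probe has come back to a transaction it already contains.
            return probe[probe.index(current):] + [current]
        probe.append(current)  # extend <T -> U> to <T -> U -> V>
    return None  # reached a transaction that is not blocked
```

With `{"T": "U", "U": "V", "V": "T"}` the probe grows from `["T"]` to `["T", "U", "V"]` and then closes the loop at `"T"`.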

Edge chasing
• Resolution: abort a transaction in the cycle
• Servers communicate with the coordinator of each transaction to find out what it is waiting for

Edge chasing
• Any problem with the algorithm?
  – What if all coordinators initiate it, and then (when they detect the loop) start aborting left and right?
• Totally ordered transaction priorities
  – Abort lowest priority!

Edge chasing
• Optimization: only initiate a probe if a transaction with higher priority waits for one with lower priority
  – Also, only forward probes to transactions of lower priority
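
The priority rules for edge chasing can be written down in a few lines. The representation is an assumption: priorities are given as a dictionary from transaction to a distinct integer, with a larger number meaning higher priority. The function names are hypothetical.

```python
def should_initiate(priority, waiter, holder):
    """Only start (or forward) a probe along a 'downward' edge."""
    return priority[waiter] > priority[holder]


def choose_victim(priority, cycle):
    """Every detector picks the same victim: the lowest priority in the cycle."""
    return min(set(cycle), key=lambda t: priority[t])


def downward_edges(priority, cycle):
    """Edges of the cycle along which a probe would still be initiated."""
    return [(a, b) for a, b in zip(cycle, cycle[1:])
            if should_initiate(priority, a, b)]
```

Because the priorities are totally ordered, a cycle cannot consist only of lower-waits-for-higher edges, so at least one edge still triggers a probe. Choosing the minimum also means that servers detecting the same cycle independently agree on which transaction to abort.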

Edge chasing
• Any problem with the optimized algorithm?
  – If a higher-priority transaction waits for a lower-priority one that is not blocked when the probe arrives, and the lower one later becomes blocked, no probe is initiated for the new edge, so the deadlock can go undetected

Edge chasing
• Add probe queues!
  – All probes that are related to a transaction are saved, and are sent (by the coordinator) to the server of the object along with the request for access
  – Avoids order-related problems (where whether a deadlock is detected depends on the order of transactions)
  – Works, but increases complexity
  – Probe queues must be maintained
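
One possible shape for a probe queue is sketched below. It is a simplification under stated assumptions: probes are lists of transaction names ending at the transaction they concern, the class `ProbeQueues` and its methods are hypothetical, and the saved probes are extended with the new edge at forwarding time rather than by the receiving server.

```python
class ProbeQueues:
    """Hypothetical coordinator-side store of probes, keyed by transaction."""

    def __init__(self):
        self.queues = {}

    def save(self, probe):
        # A probe such as ["W", "U"] is stored under its last transaction, U.
        self.queues.setdefault(probe[-1], []).append(list(probe))

    def on_block(self, tx, holder):
        # tx starts waiting for holder: hand over every saved probe,
        # extended with the new edge tx -> holder.
        return [probe + [holder] for probe in self.queues.get(tx, [])]

    def discard(self, tx):
        # Maintenance: drop the queue once tx commits or aborts.
        self.queues.pop(tx, None)
```

The `discard` step is the maintenance cost mentioned above: stale probes left in a queue could otherwise lead to deadlocks being reported that no longer exist.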

Summary
• Distributed transactions
• Atomic commit protocol
  – Two-phase commit protocol
    • Vote, then carry out order
    • Flat transactions
    • Nested transactions
      – Voting schemes
• Concurrency control
  – Problems!
  – Distributed deadlock
    • Edge chasing

Next lecture
• Daniel takes over!
• (The return of) Gossip