Distributed Shared Memory (DSM) Systems

- DSM systems build a shared-memory abstraction on top of distributed-memory machines.
- Users see a virtual global address space; the DSM layer handles the underlying message passing transparently.
- Shared-memory programming techniques can then be used.
- Software implementing DSM: http://www.ics.uci.edu/~javid/dsm/page.html

Computer Science, University of Warwick

Three types of DSM implementations: page-based technique

- The virtual global address space is divided into equal-sized chunks (pages), which are spread over the machines.
- The page is the minimal sharing unit.
- A request by a process to access a non-local piece of memory results in a page fault: a trap occurs, and the DSM software fetches the required page and restarts the instruction.
- A decision has to be made whether to replicate pages or to keep a single copy of each page and move it around the network.
- The page granularity has to be decided before implementation.
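
As a rough illustration of the page-based scheme, the following C sketch splits a global address into a page number and an offset, and picks a home node for each page. The page size, the node count, the round-robin placement and all function names are assumptions made for this example; a real DSM system may place, replicate and migrate pages differently.

```c
#include <assert.h>
#include <stddef.h>

/* Assumed values for illustration only. */
#define PAGE_SIZE 4096u   /* bytes per page */
#define NUM_NODES 4u      /* machines sharing the address space */

/* Page that contains a global address. */
size_t page_of(size_t global_addr)
{
    return global_addr / PAGE_SIZE;
}

/* Offset of the address inside its page. */
size_t offset_in_page(size_t global_addr)
{
    return global_addr % PAGE_SIZE;
}

/* Hypothetical placement policy: pages are dealt out round-robin. */
size_t home_node_of(size_t page)
{
    return page % NUM_NODES;
}

/* An access is local if the page lives on the accessing node; otherwise
   it would trigger a page fault and a fetch from the page's home node. */
int is_local_access(size_t global_addr, size_t my_node)
{
    assert(my_node < NUM_NODES);
    return home_node_of(page_of(global_addr)) == my_node;
}
```

With these assumed values, address 8192 falls on page 2, whose home is node 2, so the same access is local on node 2 and remote on every other node.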

Three types of DSM implementations: shared-variable based technique

- Only the variables and data structures required by more than one process are shared.
- The variable is the minimal sharing unit.
- There is a trade-off between consistency and network traffic.

Three types of DSM implementations: object-based technique

- Memory can be conceptualised as an abstract space filled with objects (including data and methods).
- The object is the minimal sharing unit.
- There is a trade-off between consistency and network traffic.

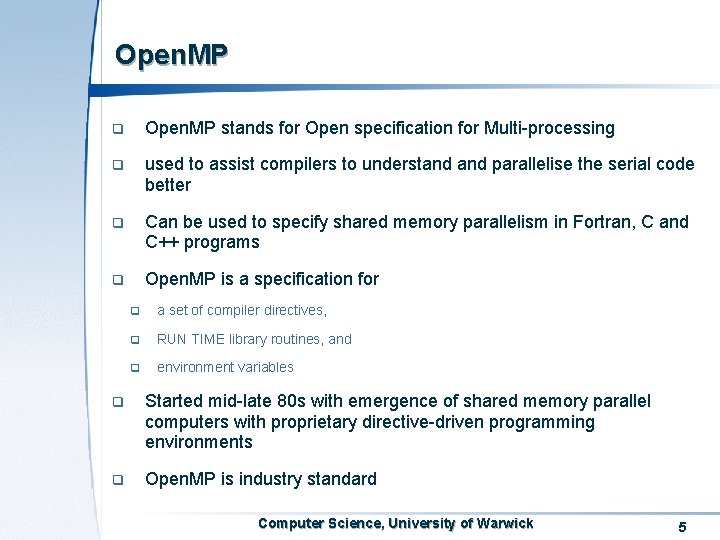

OpenMP

- OpenMP stands for Open specification for Multi-Processing.
- It helps compilers understand and parallelise serial code better.
- It can be used to specify shared-memory parallelism in Fortran, C and C++ programs.
- OpenMP is a specification for:
  - a set of compiler directives,
  - run-time library routines, and
  - environment variables.
- Its roots lie in the mid-to-late 1980s, with the emergence of shared-memory parallel computers that had proprietary directive-driven programming environments.
- OpenMP is an industry standard.

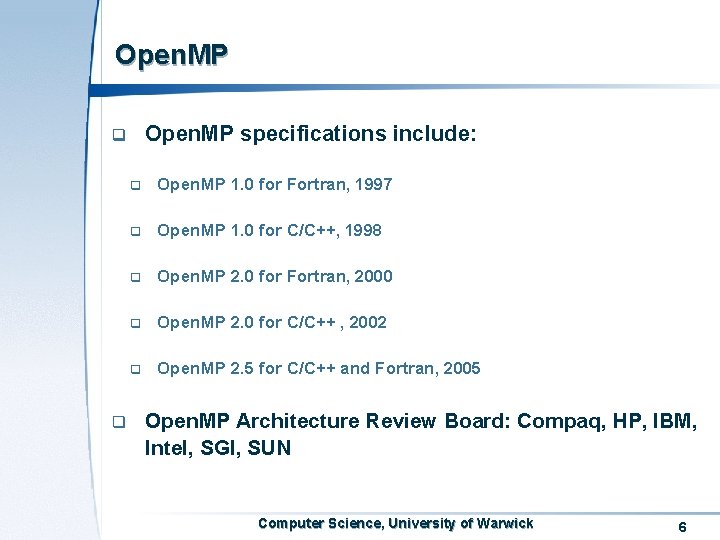

OpenMP specifications include:

- OpenMP 1.0 for Fortran, 1997
- OpenMP 1.0 for C/C++, 1998
- OpenMP 2.0 for Fortran, 2000
- OpenMP 2.0 for C/C++, 2002
- OpenMP 2.5 for C/C++ and Fortran, 2005

OpenMP Architecture Review Board: Compaq, HP, IBM, Intel, SGI, SUN

OpenMP programming model

- Shared memory, thread-based parallelism
- Explicit parallelism
- Fork-join model

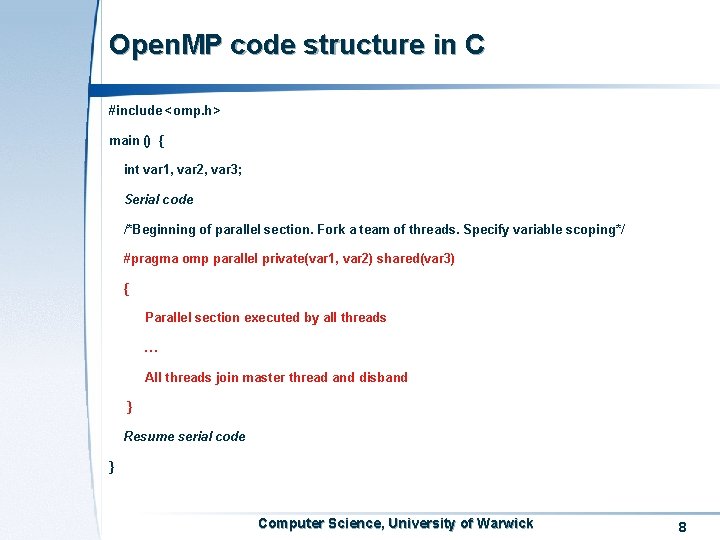

OpenMP code structure in C

    #include <omp.h>

    int main(void)
    {
        int var1, var2, var3;

        /* Serial code */

        /* Beginning of parallel section. Fork a team of threads.
           Specify variable scoping. */
        #pragma omp parallel private(var1, var2) shared(var3)
        {
            /* Parallel section executed by all threads */
            /* … */
            /* All threads join master thread and disband */
        }

        /* Resume serial code */
        return 0;
    }

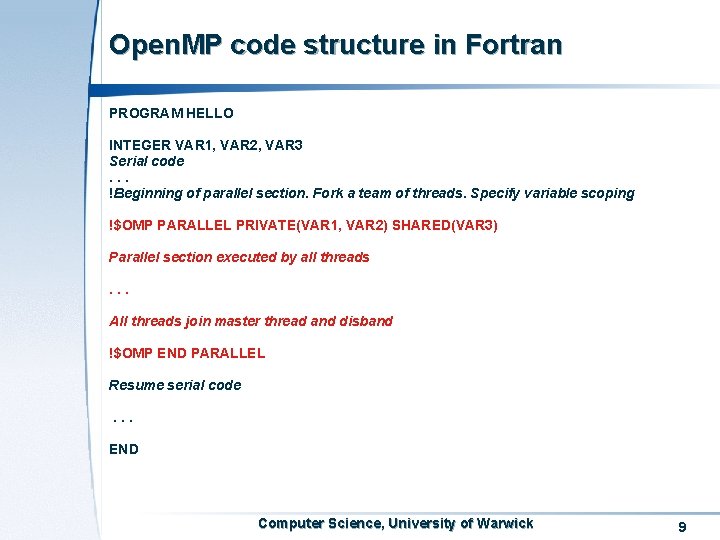

OpenMP code structure in Fortran

          PROGRAM HELLO
          INTEGER VAR1, VAR2, VAR3
    !     Serial code ...
    !     Beginning of parallel section. Fork a team of threads.
    !     Specify variable scoping.
    !$OMP PARALLEL PRIVATE(VAR1, VAR2) SHARED(VAR3)
    !     Parallel section executed by all threads ...
    !     All threads join master thread and disband
    !$OMP END PARALLEL
    !     Resume serial code ...
          END

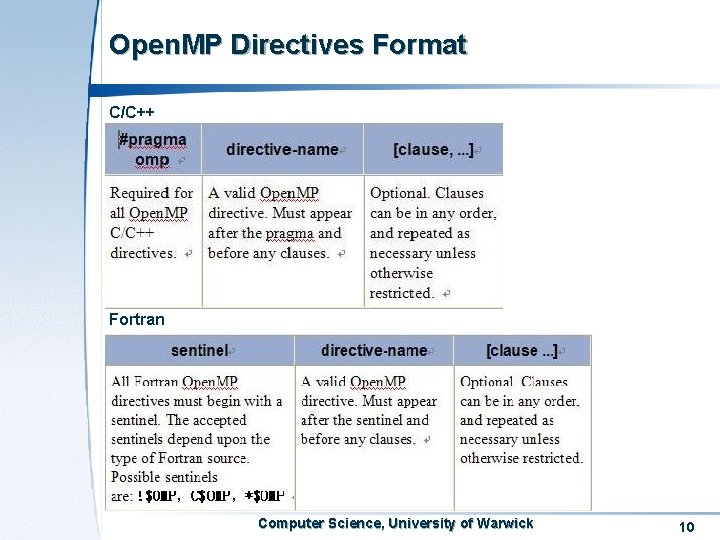

OpenMP Directives Format: C/C++ and Fortran

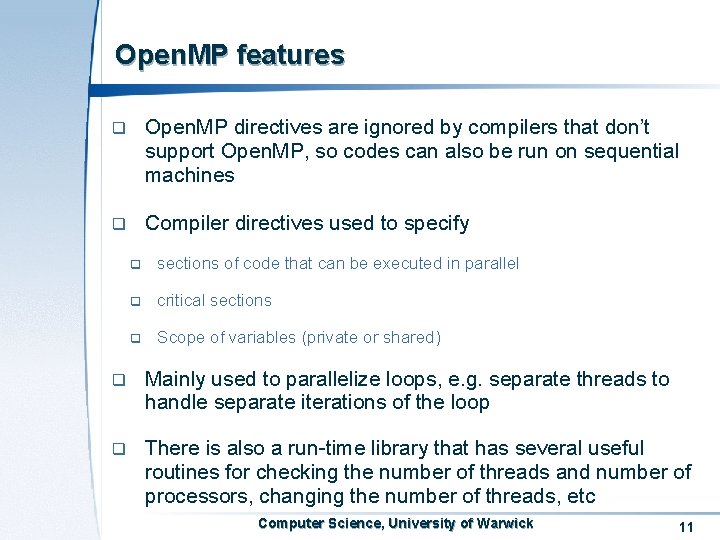

OpenMP features

- OpenMP directives are ignored by compilers that don't support OpenMP, so the same code can also be run on sequential machines.
- Compiler directives are used to specify:
  - sections of code that can be executed in parallel,
  - critical sections,
  - the scope of variables (private or shared).
- Mainly used to parallelise loops, e.g. separate threads handle separate iterations of the loop.
- There is also a run-time library with several useful routines for checking the number of threads and number of processors, changing the number of threads, etc.

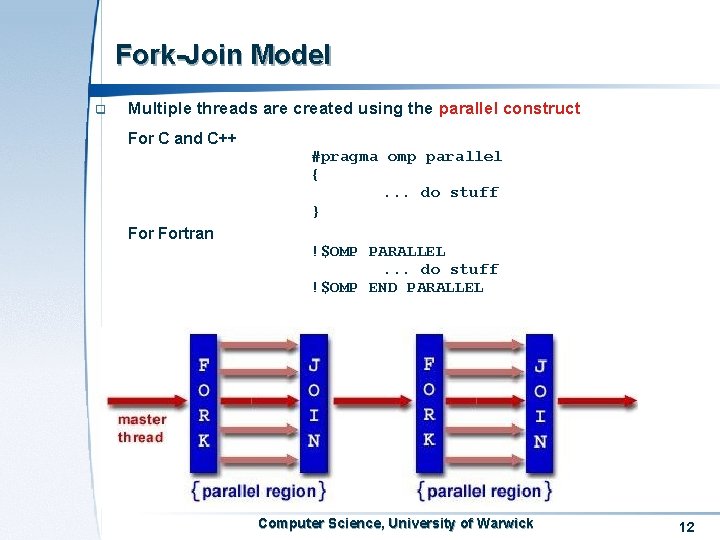

Fork-Join Model

- Multiple threads are created using the parallel construct.

For C and C++:

    #pragma omp parallel
    {
        ... do stuff
    }

For Fortran:

    !$OMP PARALLEL
          ... do stuff
    !$OMP END PARALLEL

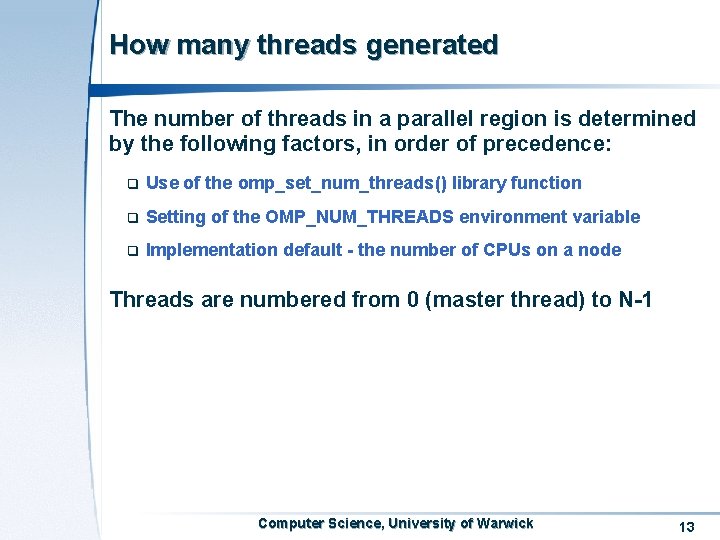

How many threads are generated

The number of threads in a parallel region is determined by the following factors, in order of precedence:

- use of the omp_set_num_threads() library function,
- setting of the OMP_NUM_THREADS environment variable,
- the implementation default, usually the number of CPUs on a node.

Threads are numbered from 0 (master thread) to N-1.

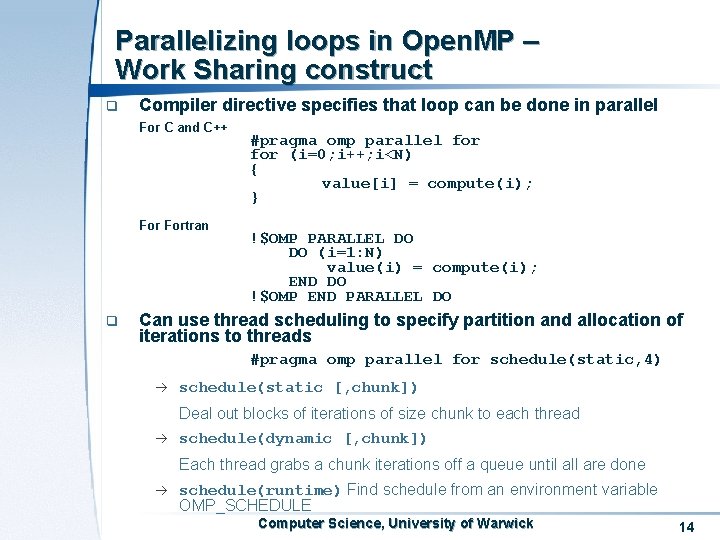

Parallelizing loops in OpenMP: work-sharing construct

- A compiler directive specifies that a loop can be executed in parallel.

For C and C++:

    #pragma omp parallel for
    for (i = 0; i < N; i++) {
        value[i] = compute(i);
    }

For Fortran:

    !$OMP PARALLEL DO
          DO i = 1, N
             value(i) = compute(i)
          END DO
    !$OMP END PARALLEL DO

Thread scheduling can be used to specify how iterations are partitioned and allocated to threads, for example:

    #pragma omp parallel for schedule(static, 4)

- schedule(static [, chunk]): deal out blocks of iterations of size chunk to each thread.
- schedule(dynamic [, chunk]): each thread grabs a chunk of iterations off a queue until all are done.
- schedule(runtime): take the schedule from the environment variable OMP_SCHEDULE.
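
To make the static schedule concrete, the sketch below computes which thread would execute a given iteration under schedule(static, chunk), where chunks are dealt out to threads in round-robin order of thread number. The function names are hypothetical helpers written for this note and are not part of the OpenMP API; the code only models the assignment and does not itself run in parallel.

```c
#include <assert.h>

/* Thread that executes logical iteration i (0-based) under
   schedule(static, chunk) with nthreads threads in the team. */
int static_owner(int i, int chunk, int nthreads)
{
    assert(i >= 0 && chunk > 0 && nthreads > 0);
    return (i / chunk) % nthreads;
}

/* Number of iterations out of n that thread tid receives. */
int static_share(int n, int chunk, int nthreads, int tid)
{
    int count = 0;
    for (int i = 0; i < n; i++) {
        if (static_owner(i, chunk, nthreads) == tid)
            count++;
    }
    return count;
}
```

For 10 iterations, a chunk size of 4 and 3 threads, threads 0 and 1 each receive a full chunk and thread 2 receives the remaining two iterations, which shows how a static schedule can leave the load uneven.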

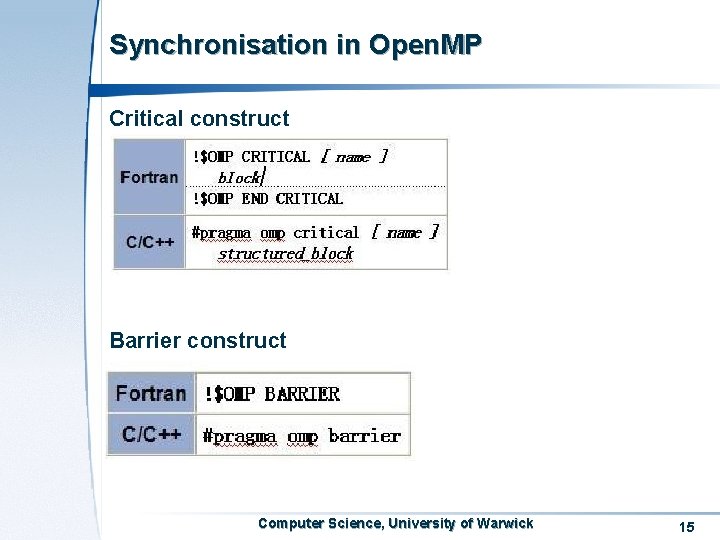

Synchronisation in OpenMP

- Critical construct
- Barrier construct

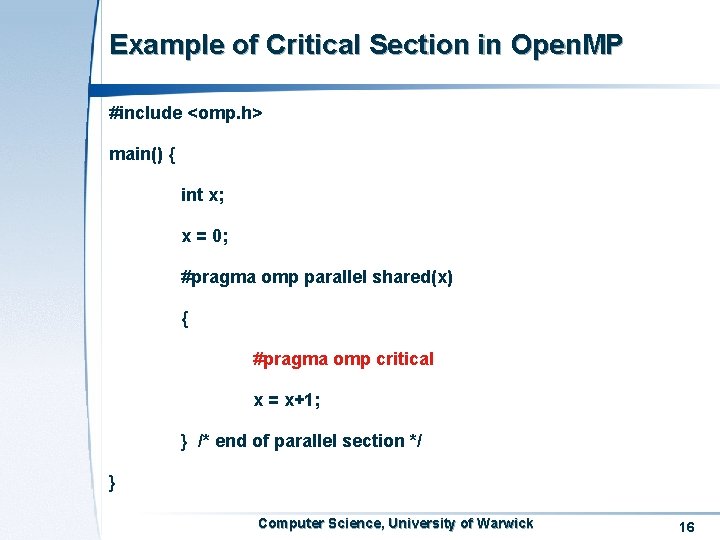

Example of Critical Section in OpenMP

    #include <omp.h>

    int main(void)
    {
        int x;
        x = 0;
        #pragma omp parallel shared(x)
        {
            #pragma omp critical
            x = x + 1;
        } /* end of parallel section */
        return 0;
    }
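
The same idea applies when a shared counter is updated from inside a parallel loop. The following is a minimal sketch, with a function name and a counting task chosen only for illustration: it counts the multiples of 3 in 1..n and protects each update of the shared counter with a critical section. Because the pragmas are simply ignored by a compiler without OpenMP support, the function gives the same result when compiled serially.

```c
#include <assert.h>

/* Count the multiples of 3 in 1..n. The counter is shared, so each
   update is wrapped in a critical section. */
int count_multiples_of_three(int n)
{
    int count = 0;
    assert(n >= 0);

    #pragma omp parallel for shared(count)
    for (int i = 1; i <= n; i++) {
        if (i % 3 == 0) {
            #pragma omp critical
            count = count + 1;
        }
    }
    return count;
}
```

Without the critical directive, two threads could read the same old value of count and one increment would be lost.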

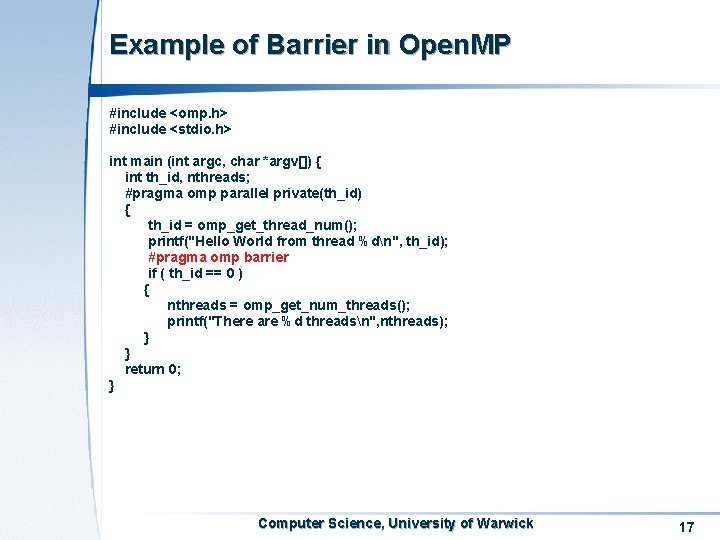

Example of Barrier in OpenMP

    #include <omp.h>
    #include <stdio.h>

    int main(int argc, char *argv[])
    {
        int th_id, nthreads;
        #pragma omp parallel private(th_id)
        {
            th_id = omp_get_thread_num();
            printf("Hello World from thread %d\n", th_id);
            #pragma omp barrier
            if (th_id == 0) {
                nthreads = omp_get_num_threads();
                printf("There are %d threads\n", nthreads);
            }
        }
        return 0;
    }

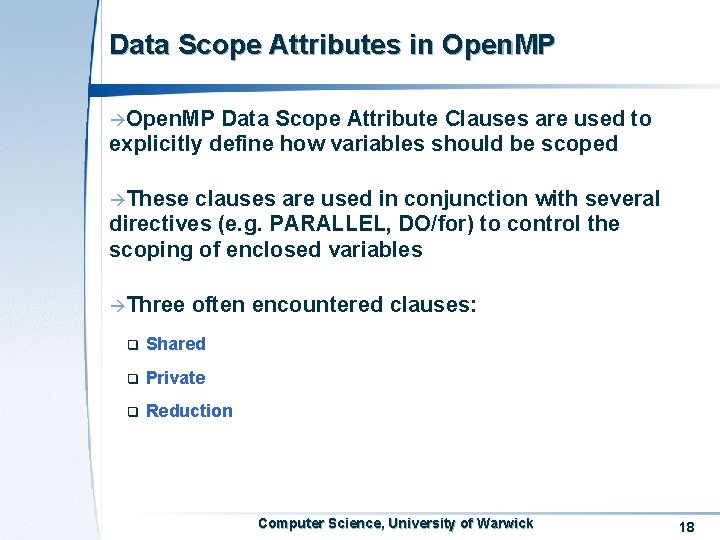

Data Scope Attributes in OpenMP

- OpenMP data scope attribute clauses are used to explicitly define how variables should be scoped.
- These clauses are used in conjunction with several directives (e.g. PARALLEL, DO/for) to control the scoping of enclosed variables.
- Three often-encountered clauses:
  - shared
  - private
  - reduction

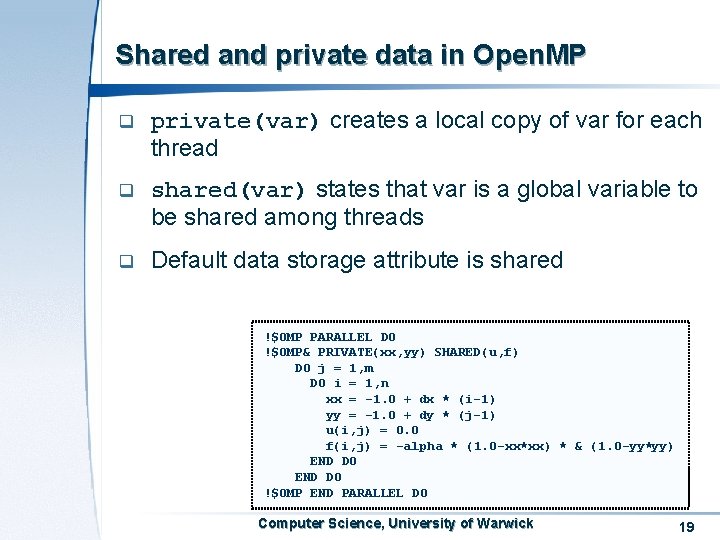

Shared and private data in OpenMP

- private(var) creates a local copy of var for each thread.
- shared(var) states that var is a global variable to be shared among threads.
- The default data storage attribute is shared.

    !$OMP PARALLEL DO PRIVATE(xx, yy) SHARED(u, f)
          DO j = 1, m
             DO i = 1, n
                xx = -1.0 + dx * (i-1)
                yy = -1.0 + dy * (j-1)
                u(i, j) = 0.0
                f(i, j) = -alpha * (1.0 - xx*xx) * (1.0 - yy*yy)
             END DO
          END DO
    !$OMP END PARALLEL DO

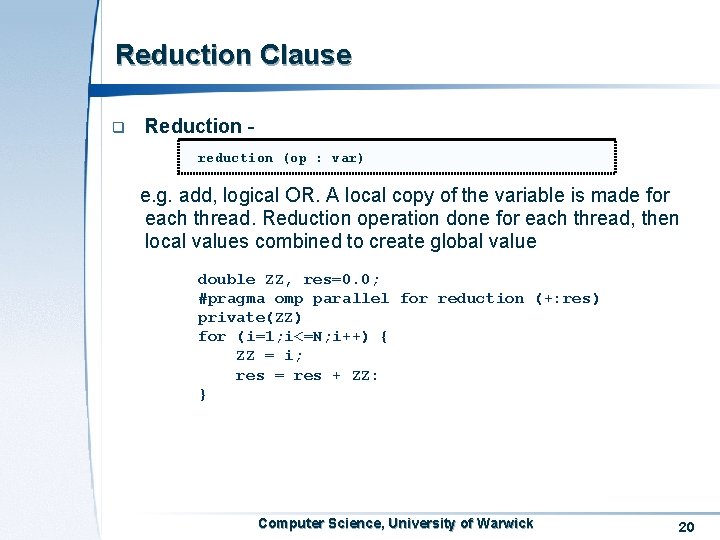

Reduction Clause

- reduction(op : var), where op is an operation such as addition or logical OR.
- A local copy of the variable is made for each thread. The reduction operation is applied within each thread, then the local values are combined to create the global value.

    double ZZ, res = 0.0;
    #pragma omp parallel for reduction(+ : res) private(ZZ)
    for (i = 1; i <= N; i++) {
        ZZ = i;
        res = res + ZZ;
    }
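
To show what the reduction clause does on the programmer's behalf, the sketch below performs the same kind of sum by hand: each thread accumulates into its own local variable and adds its partial result to the shared total exactly once. The function name is hypothetical, and this is only one plausible hand-written equivalent; an OpenMP implementation is free to combine the partial values differently.

```c
#include <assert.h>

/* Sum 1..n without the reduction clause: every thread accumulates into
   its own local variable, then adds it to the shared total once. */
long sum_with_manual_reduction(int n)
{
    long total = 0;
    assert(n >= 0);

    #pragma omp parallel shared(total)
    {
        long local = 0;   /* private: declared inside the parallel region */

        #pragma omp for
        for (int i = 1; i <= n; i++)
            local += i;

        #pragma omp critical
        total += local;   /* combine the per-thread partial sums */
    }
    return total;
}
```

Compared with a critical section inside the loop body, this enters the critical section once per thread rather than once per iteration.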

Run-Time Library Routines

- Can perform a variety of functions, including:
  - querying the number of threads or the thread number,
  - setting the number of threads,
  - …

Run-Time Library Routines

- Query routines return the number of threads and the ID of the calling thread:

    id = omp_get_thread_num();         /* thread no. */
    Nthreads = omp_get_num_threads();  /* number of threads */

- The number of threads can be specified at run time:

    omp_set_num_threads(Nthreads);

Environment Variables

- Used to control the execution of parallel code.
- Four environment variables:
  - OMP_SCHEDULE: how iterations of a loop are scheduled
  - OMP_NUM_THREADS: maximum number of threads
  - OMP_DYNAMIC: enable or disable dynamic adjustment of the number of threads
  - OMP_NESTED: enable or disable nested parallelism

OpenMP compilers

- Since parallelism is mostly achieved by parallelising loops using shared memory, OpenMP compilers work well for multiprocessor SMPs and vector machines.
- OpenMP could work on distributed-memory machines, but would need a good distributed shared memory (DSM) implementation.
- For more information on OpenMP, see www.openmp.org

High Performance Computing Course Notes 2007-2008: Message Passing Programming I

Message Passing Programming

- Message passing is the most widely used parallel programming model.
- It works by creating a number of uniquely named tasks that interact by sending and receiving messages to and from one another (hence "message passing").
- Generally, processes communicate by sending data from the address space of one process to that of another:
  - communication between processes (via files, pipes, sockets),
  - communication between threads within a process (via a global data area).
- Message-passing programs can be written in standard sequential languages (C/C++, Fortran), augmented with calls to library functions for sending and receiving messages.

Message Passing Interface (MPI)

- MPI is a specification, not a particular implementation.
  - It does not specify process startup, error codes, the amount of system buffer, etc.
- MPI is a library, not a language.
- The goals of MPI: functionality, portability and efficiency.
- Message passing model > MPI specification > MPI implementation

OpenMP vs MPI

In a nutshell:

- MPI is used on distributed-memory systems.
- OpenMP is used for code parallelisation on shared-memory systems.
- Both are forms of explicit parallelism.
- OpenMP offers high-level control; MPI offers lower-level control.

A little history

- Message-passing libraries were developed for a number of early distributed-memory computers.
- By 1993 there were many vendor-specific implementations.
- By 1994 MPI-1 came into being.
- By 1997 MPI-2 was finalized.

The MPI programming model

- MPI standards:
  - MPI-1 (1.1, 1.2), MPI-2 (2.0)
  - Forwards compatibility is preserved between versions.
- Standard bindings exist for C, C++ and Fortran. MPI bindings for Python, Java, etc. also exist (all non-standard).
- We will stick to the C binding for the lectures and coursework. More information on MPI: www.mpi-forum.org
- Implementations: for your laptop, pick up MPICH, a free portable implementation of MPI (http://www-unix.mcs.anl.gov/mpich/index.htm).
- Coursework will use MPICH.

- MPI is a complex system comprising 129 functions with numerous parameters and variants.
- Six of them are indispensable, and they are already enough to write a large number of useful programs.
- Other functions add flexibility (datatypes), robustness (non-blocking send/receive), efficiency (ready-mode communication), modularity (communicators, groups) or convenience (collective operations, topology).
- In the lectures, we are going to cover the most commonly encountered functions.

The MPI programming model

- A computation comprises one or more processes that communicate by calling library routines to send messages to, and receive messages from, other processes.
- (Generally) a fixed set of processes is created at the outset, one process per processor.
  - This is different from PVM.

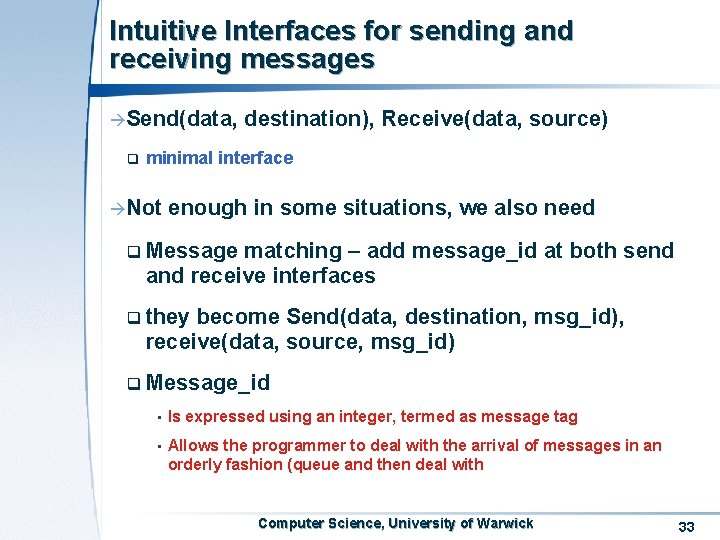

Intuitive interfaces for sending and receiving messages

- Send(data, destination), Receive(data, source): the minimal interface.
- This is not enough in some situations; we also need message matching:
  - add a message_id to both the send and receive interfaces,
  - they become Send(data, destination, msg_id) and Receive(data, source, msg_id).
- The message_id:
  - is expressed as an integer, termed the message tag,
  - allows the programmer to deal with the arrival of messages in an orderly fashion (queue and then deal with …)

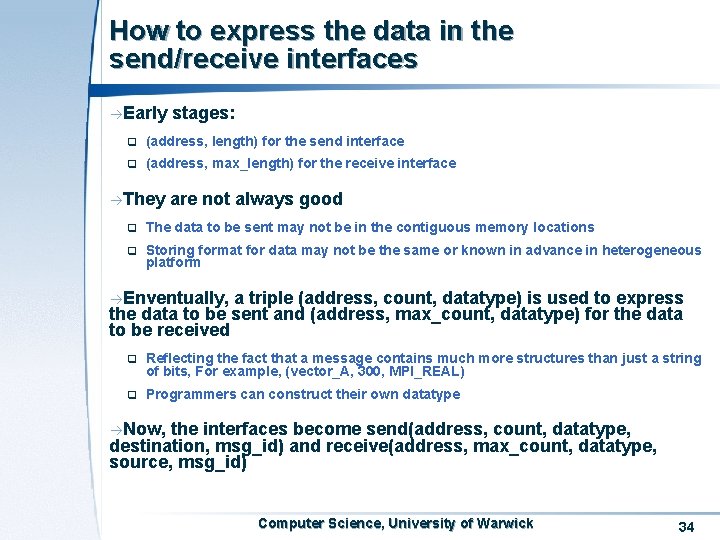

How to express the data in the send/receive interfaces

- Early stages:
  - (address, length) for the send interface,
  - (address, max_length) for the receive interface.
- These are not always adequate:
  - the data to be sent may not be in contiguous memory locations,
  - the storage format of the data may not be the same, or known in advance, on heterogeneous platforms.
- Eventually, a triple (address, count, datatype) is used to express the data to be sent, and (address, max_count, datatype) the data to be received.
  - This reflects the fact that a message has much more structure than just a string of bits, for example (vector_A, 300, MPI_REAL).
  - Programmers can construct their own datatypes.
- Now the interfaces become send(address, count, datatype, destination, msg_id) and receive(address, max_count, datatype, source, msg_id).

How to distinguish messages

- The message tag is necessary, but not sufficient.
- So, the communicator is introduced …

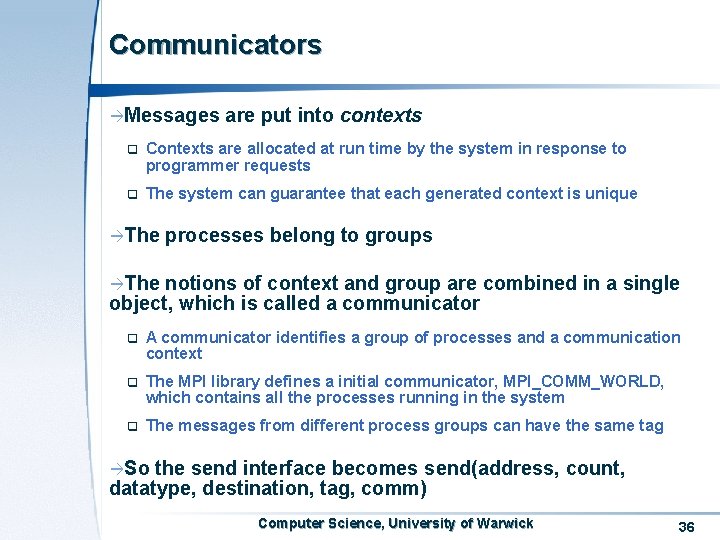

Communicators

- Messages are put into contexts.
  - Contexts are allocated at run time by the system in response to programmer requests.
  - The system can guarantee that each generated context is unique.
- The processes belong to groups.
- The notions of context and group are combined in a single object, which is called a communicator.
  - A communicator identifies a group of processes and a communication context.
  - The MPI library defines an initial communicator, MPI_COMM_WORLD, which contains all the processes running in the system.
  - Messages from different process groups can have the same tag.
- So the send interface becomes send(address, count, datatype, destination, tag, comm).

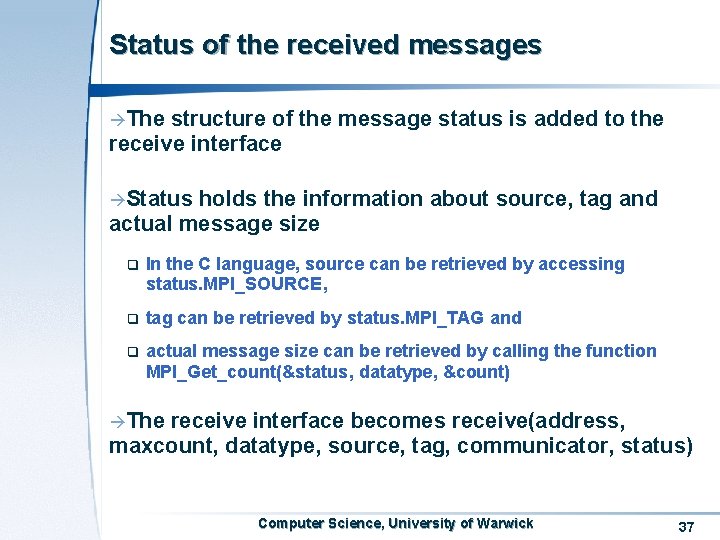

Status of the received messages

- A message status structure is added to the receive interface.
- The status holds information about the source, the tag and the actual message size. In the C language:
  - the source can be retrieved by accessing status.MPI_SOURCE,
  - the tag can be retrieved by accessing status.MPI_TAG, and
  - the actual message size can be retrieved by calling the function MPI_Get_count(&status, datatype, &count).
- The receive interface becomes receive(address, maxcount, datatype, source, tag, communicator, status).

How to express source and destination

- The processes in a communicator (group) are identified by ranks.
- If a communicator contains n processes, the process ranks are the integers from 0 to n-1.
- The source and destination processes in the send/receive interfaces are given as ranks.
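
Because ranks run from 0 to n-1, a process can use its own rank to decide which part of a problem it works on. The following C sketch shows one common way to split a number of items evenly across the ranks. It is plain C with no MPI calls, and the type and function names are hypothetical, chosen for this example; in an MPI program the rank and the process count would come from the communicator.

```c
#include <assert.h>

/* Half-open range [start, end) of items handled by one rank. */
typedef struct {
    int start;
    int end;
} item_range;

/* Split n_items as evenly as possible over n_procs ranks; the first
   (n_items % n_procs) ranks receive one extra item. */
item_range range_for_rank(int n_items, int n_procs, int rank)
{
    assert(n_items >= 0 && n_procs > 0);
    assert(rank >= 0 && rank < n_procs);

    int base  = n_items / n_procs;
    int extra = n_items % n_procs;

    item_range r;
    r.start = rank * base + (rank < extra ? rank : extra);
    r.end   = r.start + base + (rank < extra ? 1 : 0);
    return r;
}
```

With 10 items and 4 processes, ranks 0 and 1 take three items each and ranks 2 and 3 take two each, so the ranges cover every item exactly once.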

Some other issues

- In the receive interface, the tag can be a wildcard, which means a message with any tag will be received.
- In the receive interface, the source can also be a wildcard, which matches any source.
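
The matching rules described so far (source, tag, communicator context, and wildcards) can be summarised in a small toy model. This is a sketch in plain C, not how any MPI library is implemented: the envelope structure, the wildcard constants and the function names are all assumptions made for illustration, with ANY_SOURCE and ANY_TAG standing in for MPI_ANY_SOURCE and MPI_ANY_TAG.

```c
#include <assert.h>

/* Hypothetical wildcard values, playing the role of MPI_ANY_SOURCE
   and MPI_ANY_TAG. */
#define ANY_SOURCE (-1)
#define ANY_TAG    (-1)

/* Envelope carried by every message in this toy model. */
typedef struct {
    int source;   /* rank of the sender within the communicator */
    int tag;      /* integer message tag */
    int context;  /* stands in for the communicator's context */
} envelope;

/* Returns 1 if a receive posted with (source, tag, context) accepts msg. */
int matches(const envelope *msg, int source, int tag, int context)
{
    assert(msg != 0);
    if (msg->context != context)
        return 0;                       /* different communicator */
    if (source != ANY_SOURCE && msg->source != source)
        return 0;
    if (tag != ANY_TAG && msg->tag != tag)
        return 0;
    return 1;
}

/* Index of the first queued message the receive accepts, or -1. */
int first_match(const envelope *queue, int n, int source, int tag, int context)
{
    for (int i = 0; i < n; i++) {
        if (matches(&queue[i], source, tag, context))
            return i;
    }
    return -1;
}
```

Note that the context is never a wildcard in this model: two messages with the same source and tag are still kept apart if they were sent on different communicators.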

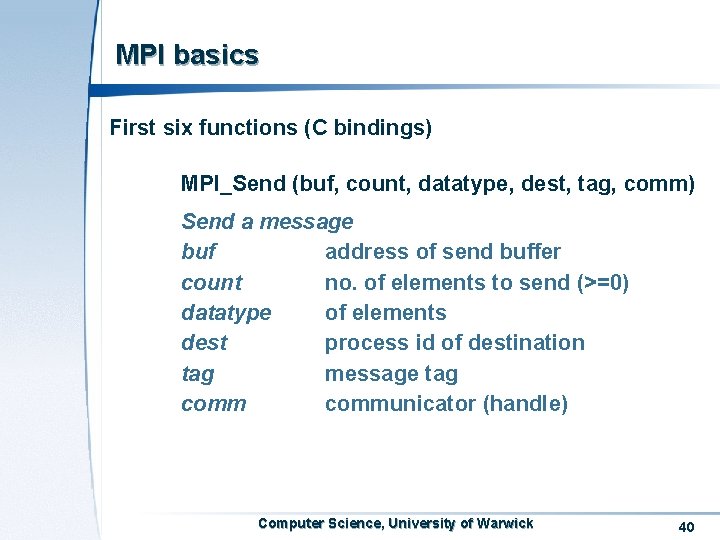

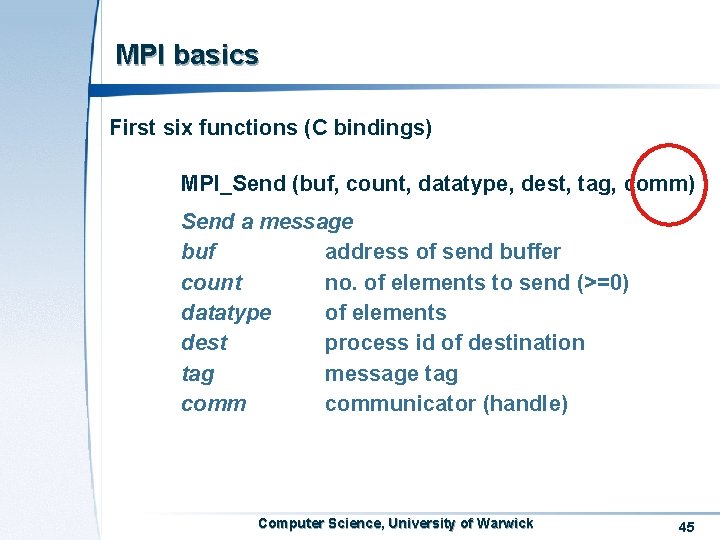

MPI basics: first six functions (C bindings)

MPI_Send(buf, count, datatype, dest, tag, comm): send a message

- buf: address of send buffer
- count: number of elements to send (>= 0)
- datatype: datatype of elements
- dest: process id of destination
- tag: message tag
- comm: communicator (handle)

MPI basics: first six functions (C bindings)

MPI_Send(buf, count, datatype, dest, tag, comm)

Calculating the size of the data to be sent:

- buf: address of send buffer
- count * sizeof(datatype): bytes of data
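
The size calculation can be written out as a small helper. The sketch below is plain C and uses a hypothetical enum in place of real MPI datatype handles, so the type and function names are assumptions for illustration only. In an actual MPI program, the size of a datatype would be obtained from the library (for example with MPI_Type_size) rather than from sizeof.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical datatype tags for this sketch; they are not MPI handles. */
typedef enum { TYPE_CHAR, TYPE_INT, TYPE_DOUBLE } elem_type;

/* Size in bytes of one element of the given type. */
size_t elem_size(elem_type t)
{
    switch (t) {
    case TYPE_CHAR:   return sizeof(char);
    case TYPE_INT:    return sizeof(int);
    case TYPE_DOUBLE: return sizeof(double);
    }
    assert(0 && "unknown element type");
    return 0;
}

/* Bytes described by (buf, count, datatype): count * sizeof(datatype). */
size_t message_bytes(size_t count, elem_type t)
{
    return count * elem_size(t);
}
```

Expressing the message as a count of typed elements, instead of a raw byte length, is what lets the sender and receiver agree on the data even when their machines store the type differently.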

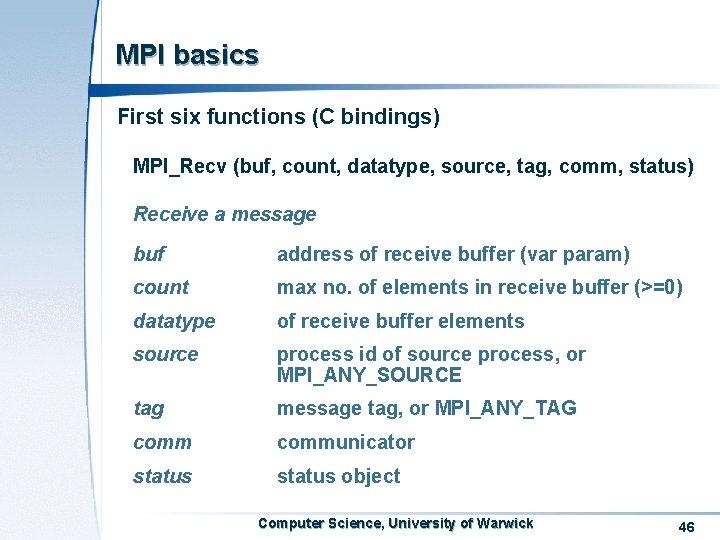

MPI basics: first six functions (C bindings)

MPI_Recv(buf, count, datatype, source, tag, comm, status): receive a message

- buf: address of receive buffer (var param)
- count: maximum number of elements in receive buffer (>= 0)
- datatype: datatype of receive buffer elements
- source: process id of source process, or MPI_ANY_SOURCE
- tag: message tag, or MPI_ANY_TAG
- comm: communicator
- status: status object

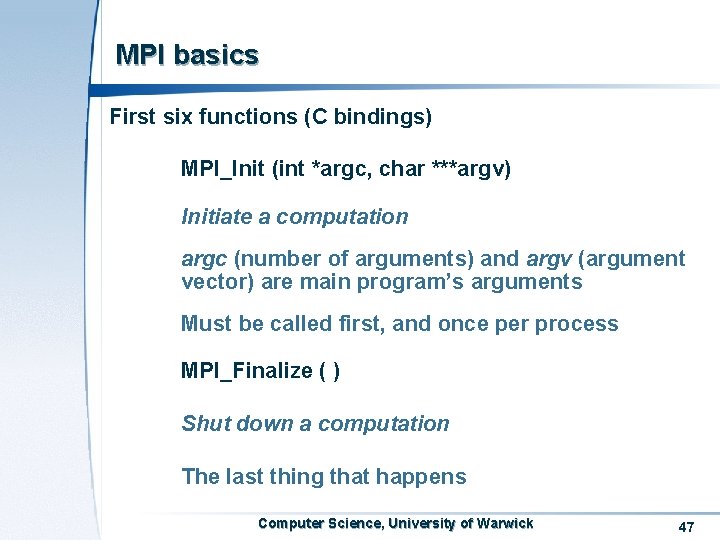

MPI basics: first six functions (C bindings)

MPI_Init(int *argc, char ***argv): initiate a computation

- argc (number of arguments) and argv (argument vector) are the main program's arguments.
- Must be called first, and once per process.

MPI_Finalize(): shut down a computation

- The last thing that happens.

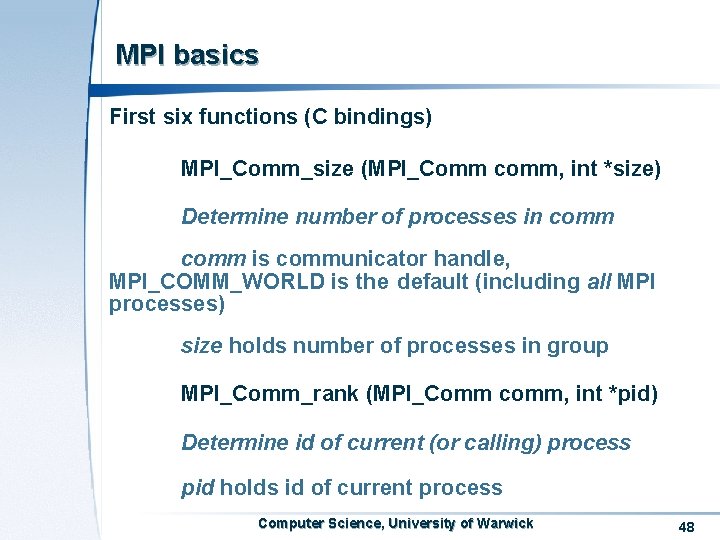

MPI basics: first six functions (C bindings)

MPI_Comm_size(MPI_Comm comm, int *size): determine the number of processes in a communicator

- comm is the communicator handle; MPI_COMM_WORLD is the default (including all MPI processes).
- size holds the number of processes in the group.

MPI_Comm_rank(MPI_Comm comm, int *pid): determine the id of the current (or calling) process

- pid holds the id of the current process.

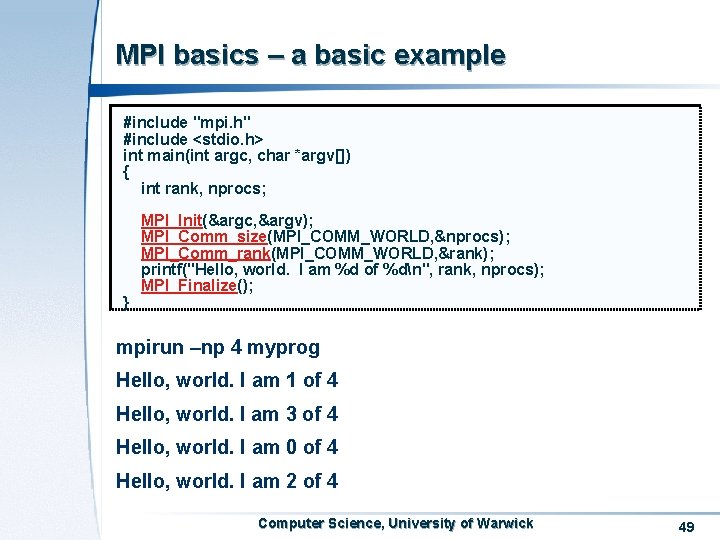

MPI basics: a basic example

    #include "mpi.h"
    #include <stdio.h>

    int main(int argc, char *argv[])
    {
        int rank, nprocs;

        MPI_Init(&argc, &argv);
        MPI_Comm_size(MPI_COMM_WORLD, &nprocs);
        MPI_Comm_rank(MPI_COMM_WORLD, &rank);
        printf("Hello, world. I am %d of %d\n", rank, nprocs);
        MPI_Finalize();
        return 0;
    }

Running with four processes (the order of the output lines may vary):

    mpirun -np 4 myprog
    Hello, world. I am 1 of 4
    Hello, world. I am 3 of 4
    Hello, world. I am 0 of 4
    Hello, world. I am 2 of 4

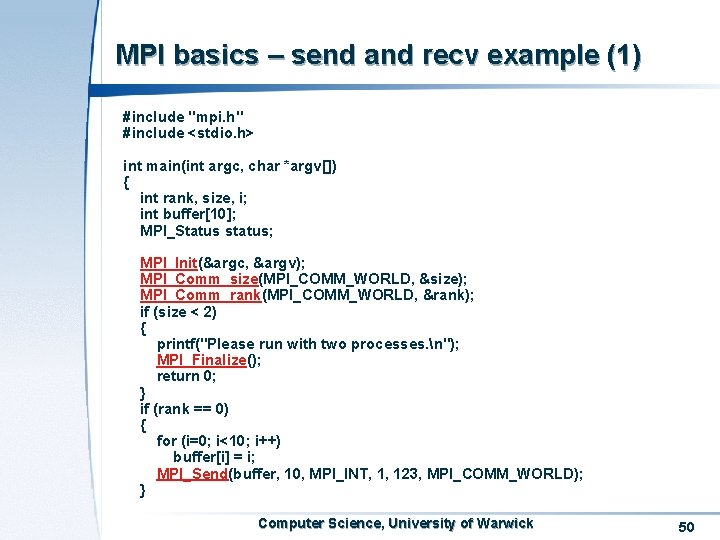

MPI basics: send and recv example (1)

    #include "mpi.h"
    #include <stdio.h>

    int main(int argc, char *argv[])
    {
        int rank, size, i;
        int buffer[10];
        MPI_Status status;

        MPI_Init(&argc, &argv);
        MPI_Comm_size(MPI_COMM_WORLD, &size);
        MPI_Comm_rank(MPI_COMM_WORLD, &rank);
        if (size < 2) {
            printf("Please run with two processes.\n");
            MPI_Finalize();
            return 0;
        }
        if (rank == 0) {
            for (i = 0; i < 10; i++)
                buffer[i] = i;
            MPI_Send(buffer, 10, MPI_INT, 1, 123, MPI_COMM_WORLD);
        }

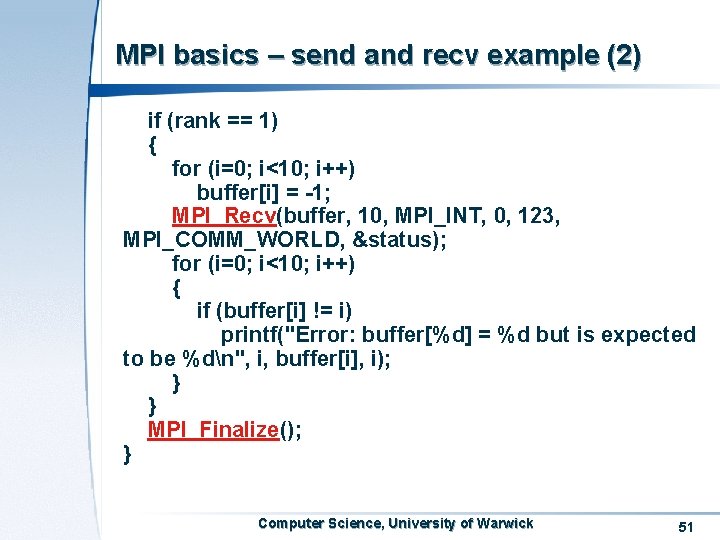

MPI basics: send and recv example (2)

        if (rank == 1) {
            for (i = 0; i < 10; i++)
                buffer[i] = -1;
            MPI_Recv(buffer, 10, MPI_INT, 0, 123, MPI_COMM_WORLD, &status);
            for (i = 0; i < 10; i++) {
                if (buffer[i] != i)
                    printf("Error: buffer[%d] = %d but is expected to be %d\n", i, buffer[i], i);
            }
        }
        MPI_Finalize();
        return 0;
    }

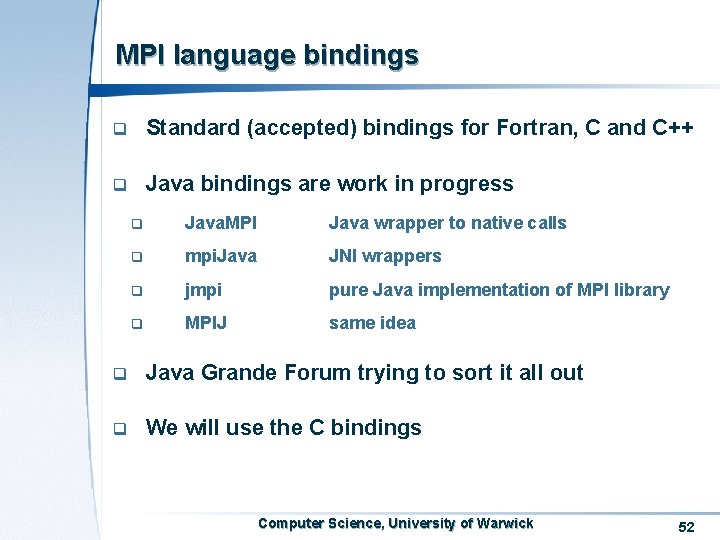

MPI language bindings

- Standard (accepted) bindings exist for Fortran, C and C++.
- Java bindings are work in progress:
  - JavaMPI: Java wrapper to native calls
  - mpiJava: JNI wrappers
  - jmpi: pure Java implementation of the MPI library
  - MPIJ: same idea
  - The Java Grande Forum is trying to sort it all out.
- We will use the C bindings.
