Distributed File Systems
Andy Wang
Operating Systems COP 4610 / CGS 5765

Distributed File System
* Provides transparent access to files stored on a remote disk
* Recurrent themes of design issues
  * Failure handling
  * Performance optimizations
  * Cache consistency

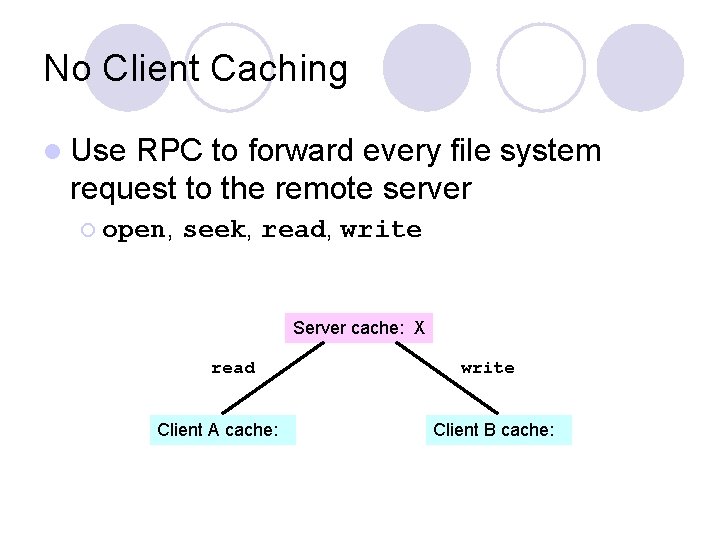

No Client Caching
* Use RPC to forward every file system request to the remote server
  * open, seek, read, write

(Diagram: the server cache holds X; the caches of Client A and Client B are empty, and their read and write requests go to the server.)

No Client Caching
+ Server always has a consistent view of the file system
- Poor performance
- Server is a single point of failure

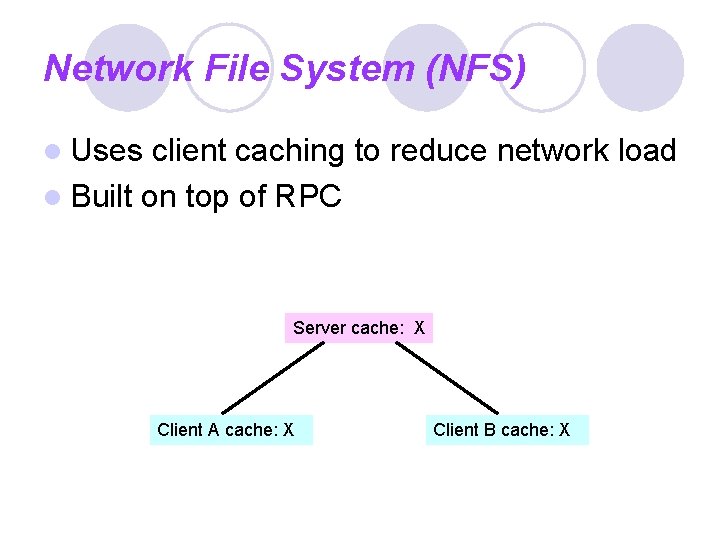

Network File System (NFS)
* Uses client caching to reduce network load
* Built on top of RPC

(Diagram: the server cache and the caches of Client A and Client B each hold a copy of X.)

Network File System (NFS)
+ Performance better than no caching
- Has to handle failures
- Has to handle consistency

Failure Modes
* If the server crashes
  * Uncommitted data in memory are lost
  * Current file positions may be lost
  * The client may ask the server to perform unacknowledged operations again
* If a client crashes
  * Modified data in the client cache may be lost

NFS Failure Handling
1. Write-through caching
2. Stateless protocol: the server keeps no state about the client
   * A read is equivalent to open, seek, read, close
   * No server recovery after a failure
3. Idempotent operations: repeated operations get the same result
   * No static variables
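The stateless and idempotent properties can be illustrated with a toy in-memory server. This is only a sketch: the class `StatelessServer` and its methods are hypothetical names, not the NFS wire protocol. Each request names the file and an absolute offset, so the server remembers no file position, and replaying a write after a lost acknowledgement leaves the same bytes. An append is included as a counter-example of a non-idempotent operation.

```python
class StatelessServer:
    """Toy server: stores file contents but no per-client state
    (no open-file table, no current file positions)."""

    def __init__(self):
        self.files = {}  # file name -> bytearray

    def read(self, name, offset, length):
        # The request carries everything needed; nothing is remembered afterwards.
        data = self.files.get(name, bytearray())
        return bytes(data[offset:offset + length])

    def write(self, name, offset, payload):
        # Writing at an absolute offset is idempotent: a replay leaves the same bytes.
        data = self.files.setdefault(name, bytearray())
        if len(data) < offset:
            data.extend(b"\0" * (offset - len(data)))
        data[offset:offset + len(payload)] = payload
        return len(payload)

    def append(self, name, payload):
        # Counter-example: NOT idempotent, a retried append duplicates the data.
        self.files.setdefault(name, bytearray()).extend(payload)


server = StatelessServer()
server.write("f", 0, b"hello")
server.write("f", 0, b"hello")    # retry after a lost acknowledgement
after_retry = server.read("f", 0, 100)

server.append("g", b"hi")
server.append("g", b"hi")         # the same retry on a non-idempotent operation
after_append_retry = server.read("g", 0, 100)
```

The retried write leaves the file unchanged, while the retried append does not, which is why a protocol that relies on retries wants its operations expressed in the first form.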

NFS Failure Handling
4. Transparent failures to clients
   * Two options
     * The client waits until the server comes back
     * The client can return an error to the user application
       * Do you check the return value of close?
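The two options can be sketched as a retry policy around a remote call, similar in spirit to hard and soft NFS mounts. Everything here is illustrative: `ServerDown`, `call`, `make_flaky`, and the `max_attempts` parameter are assumed names, and a real client would sleep or back off between attempts.

```python
class ServerDown(Exception):
    """Hypothetical signal that the server did not answer."""


def call(rpc, wait_for_server, max_attempts=3):
    attempts = 0
    while True:
        attempts += 1
        try:
            return rpc()
        except ServerDown:
            if not wait_for_server and attempts >= max_attempts:
                # Option 2: give up and surface an error to the application.
                raise OSError("server not responding")
            # Option 1: keep retrying until the server comes back.


def make_flaky(failures):
    """Build a fake RPC that fails `failures` times, then succeeds."""
    state = {"left": failures}

    def rpc():
        if state["left"] > 0:
            state["left"] -= 1
            raise ServerDown()
        return "ok"

    return rpc


waited = call(make_flaky(5), wait_for_server=True)

try:
    call(make_flaky(5), wait_for_server=False)
    gave_up = False
except OSError:
    gave_up = True
```

With the second option the application only learns of the failure if it checks the result of the call, which is the point of the question about `close`.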

NFS Weak Consistency Protocol
* A write updates the server immediately
* Other clients poll the server periodically for changes
* No guarantees for multiple writers
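A minimal sketch of the polling behaviour, assuming a version counter stands in for a file's modification time and a caller-supplied `now` stands in for the clock. The names `Server`, `PollingClient`, and `poll_interval` are hypothetical. Between polls a client trusts its cached copy, so it can briefly return stale data.

```python
class Server:
    def __init__(self):
        self.data = {}
        self.version = {}  # file name -> change counter (stand-in for mtime)

    def write(self, name, value):
        self.data[name] = value
        self.version[name] = self.version.get(name, 0) + 1

    def getattr(self, name):
        return self.version.get(name, 0)

    def read(self, name):
        return self.data.get(name), self.version.get(name, 0)


class PollingClient:
    def __init__(self, server, poll_interval):
        self.server = server
        self.poll_interval = poll_interval
        self.cache = {}  # file name -> (value, version, time last validated)

    def read(self, name, now):
        entry = self.cache.get(name)
        if entry is not None:
            value, version, checked = entry
            if now - checked < self.poll_interval:
                return value  # trusted without asking the server; may be stale
            if self.server.getattr(name) == version:
                self.cache[name] = (value, version, now)
                return value
        value, version = self.server.read(name)
        self.cache[name] = (value, version, now)
        return value

    def write(self, name, value, now):
        self.server.write(name, value)  # write-through: server updated immediately
        self.cache[name] = (value, self.server.getattr(name), now)


server = Server()
a = PollingClient(server, poll_interval=3)
b = PollingClient(server, poll_interval=3)

a.write("X", "v1", now=0)
first = b.read("X", now=0)   # fetched from the server
a.write("X", "v2", now=1)
stale = b.read("X", now=2)   # still inside the poll interval
fresh = b.read("X", now=5)   # poll detects the change and refetches
```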

NFS Summary
+ Simple and highly portable
- May become inconsistent sometimes
  * Does not happen very often

Andrew File System (AFS)
* Developed at CMU
* Design principles
  * Files are cached on each client’s disk
    * NFS caches only in clients’ memory
  * Callbacks: the server records who has a copy of a file
  * Write-back cache on file close: the server then notifies all clients that hold an old copy
  * Session semantics: updates are only visible on close
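The callback list, write-back on close, and session semantics can be sketched as a toy in-memory model. The classes `AFSServer` and `AFSClient` and the methods `fetch`, `store`, and `invalidate` are hypothetical names chosen for illustration, and a dictionary stands in for each client's disk cache.

```python
class AFSServer:
    def __init__(self):
        self.files = {}
        self.callbacks = {}  # file name -> set of clients holding a cached copy

    def fetch(self, client, name):
        # Record the client on the callback list before handing out a copy.
        self.callbacks.setdefault(name, set()).add(client)
        return self.files.get(name)

    def store(self, writer, name, value):
        self.files[name] = value
        # Tell every other holder that its copy is now old.
        for client in self.callbacks.get(name, set()) - {writer}:
            client.invalidate(name)
        self.callbacks[name] = {writer}


class AFSClient:
    def __init__(self, server):
        self.server = server
        self.disk_cache = {}  # stand-in for the on-disk cache
        self.dirty = set()

    def open(self, name):
        if name not in self.disk_cache:
            self.disk_cache[name] = self.server.fetch(self, name)
        return self.disk_cache[name]

    def write(self, name, value):
        # Updates stay local until close (session semantics).
        self.disk_cache[name] = value
        self.dirty.add(name)

    def close(self, name):
        if name in self.dirty:
            self.server.store(self, name, self.disk_cache[name])
            self.dirty.discard(name)

    def invalidate(self, name):
        self.disk_cache.pop(name, None)


server = AFSServer()
server.files["X"] = "old"
a, b = AFSClient(server), AFSClient(server)

a.open("X")
b.open("X")
a.write("X", "new")
before_close = b.open("X")   # B still sees its cached copy
a.close("X")                 # write-back; server invalidates B's copy
after_close = b.open("X")    # B refetches from the server
```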

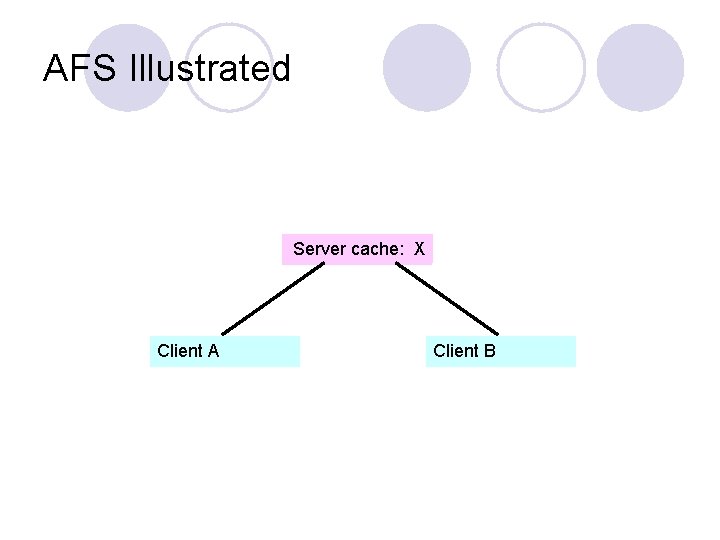

AFS Illustrated Server cache: X Client A Client B

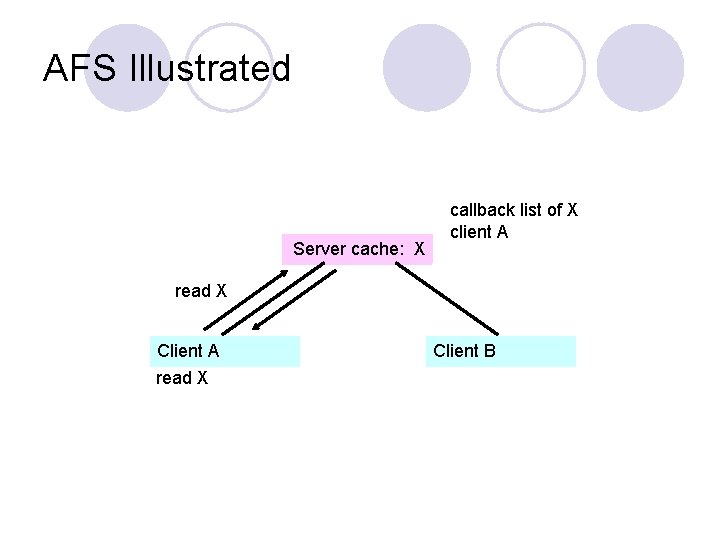

AFS Illustrated Server cache: X callback list of X client A read X Client B

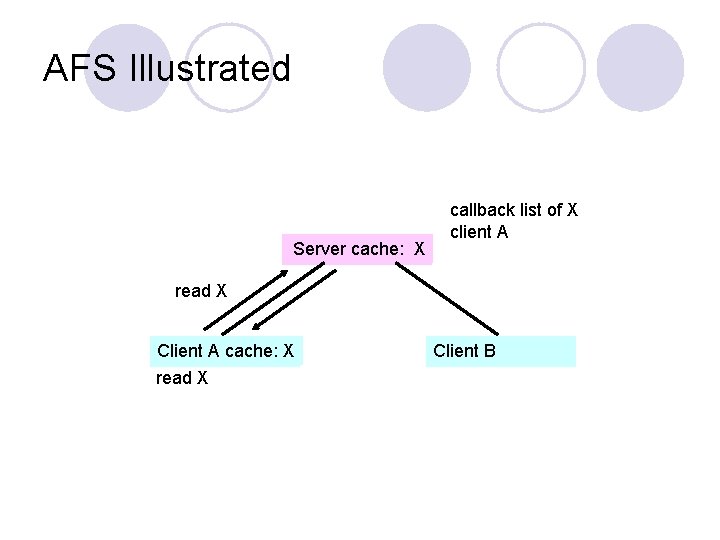

AFS Illustrated Server cache: X callback list of X client A read X Client A cache: X read X Client B

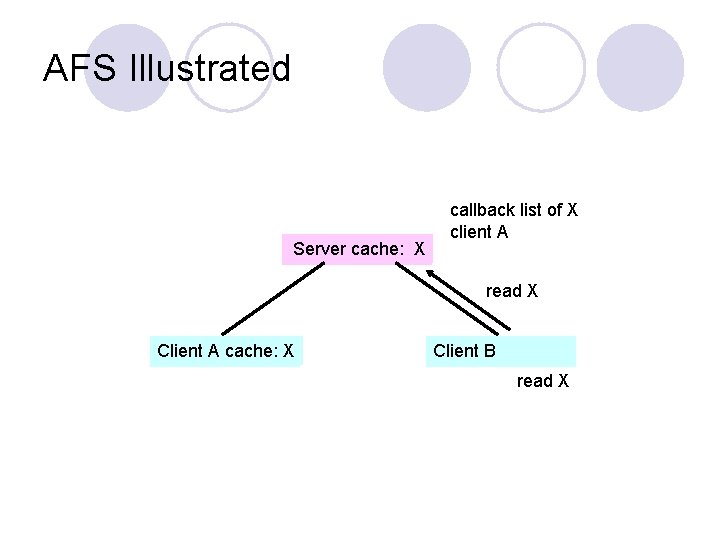

AFS Illustrated Server cache: X callback list of X client A read X Client A cache: X Client B read X

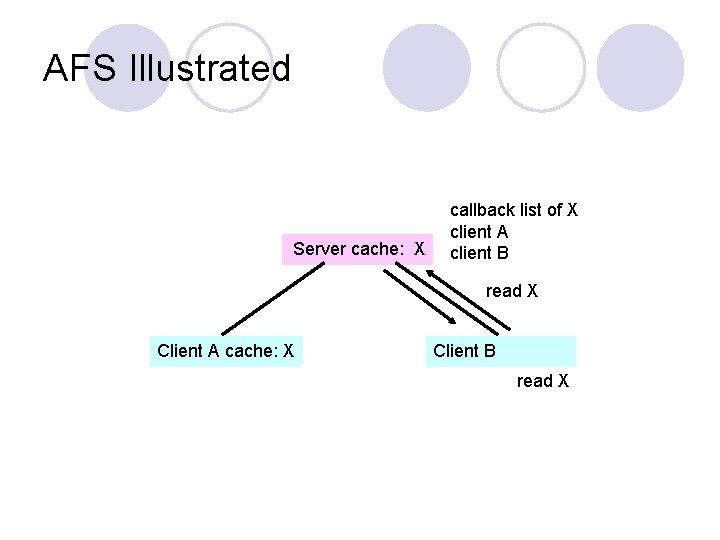

AFS Illustrated Server cache: X callback list of X client A client B read X Client A cache: X Client B read X

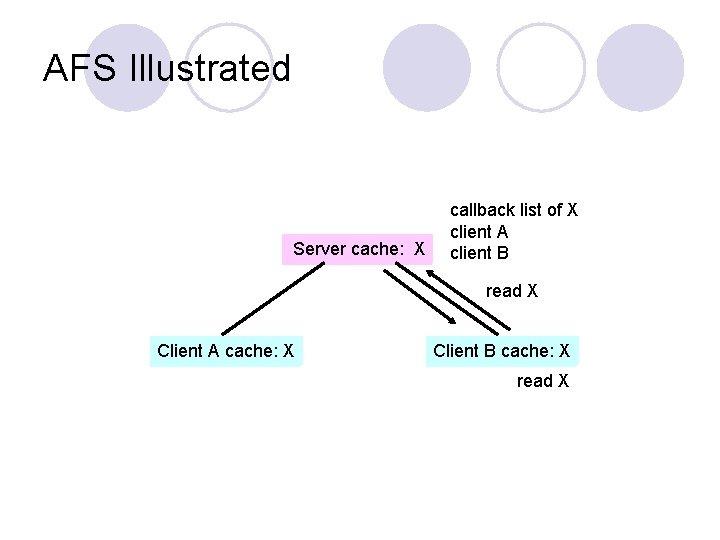

AFS Illustrated Server cache: X callback list of X client A client B read X Client A cache: X Client B cache: X read X

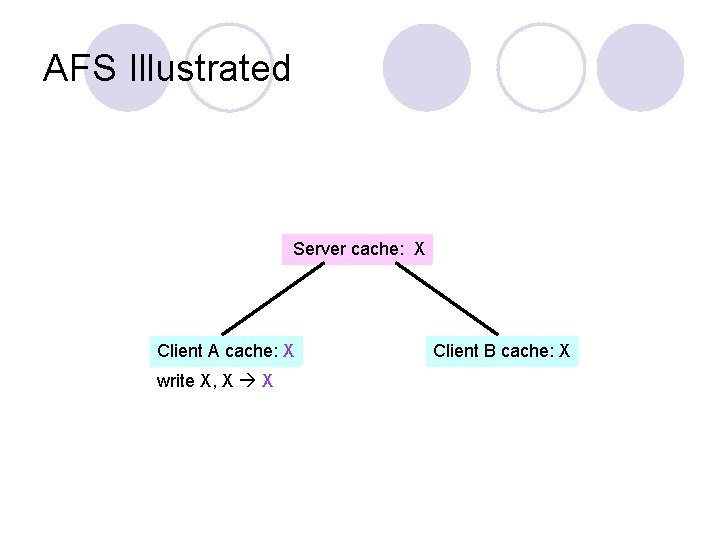

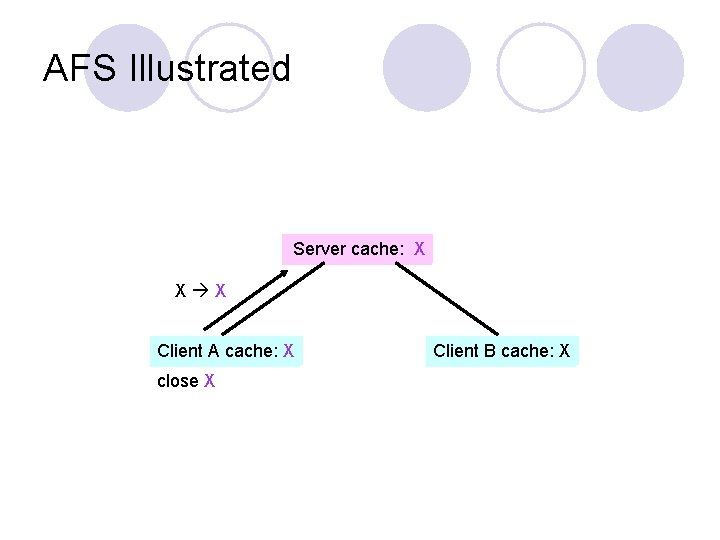

AFS Illustrated Server cache: X Client A cache: X write X, X X Client B cache: X

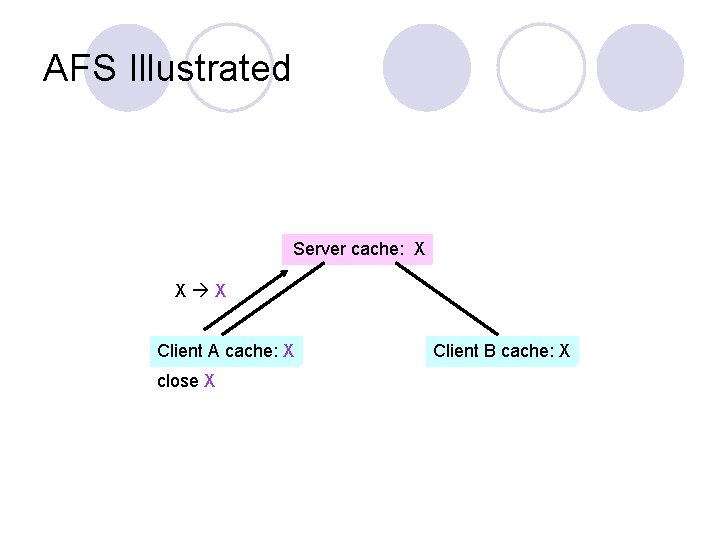

AFS Illustrated Server cache: X X X Client A cache: X close X Client B cache: X

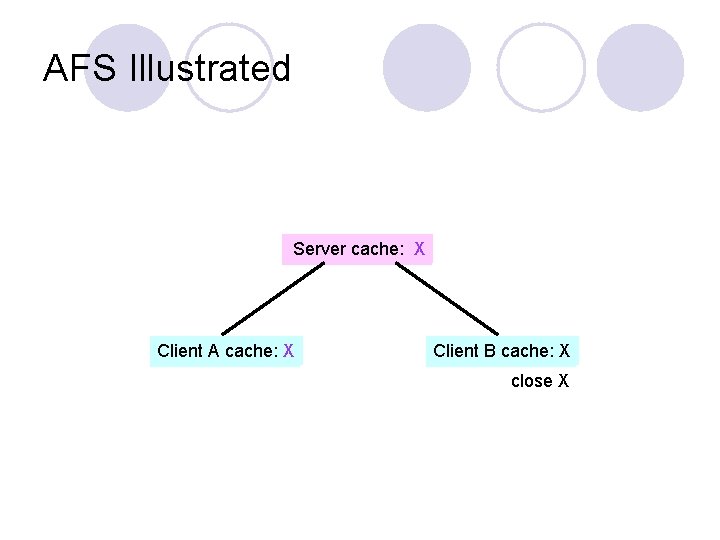

AFS Illustrated Server cache: X Client A cache: X Client B cache: X close X

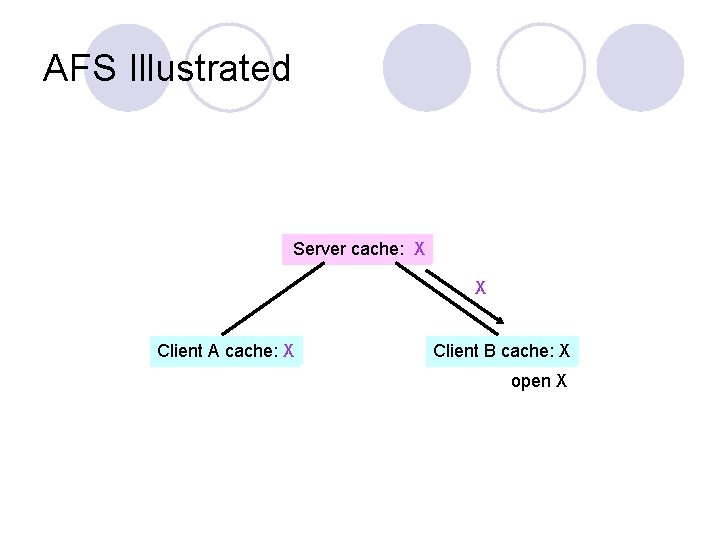

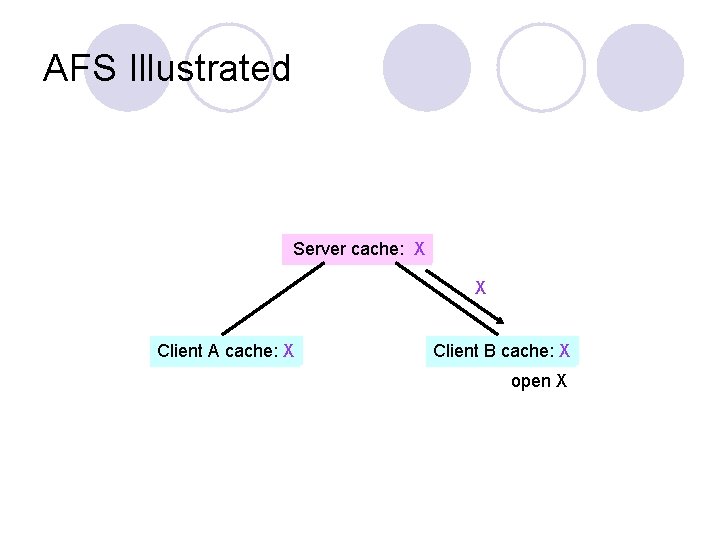

AFS Illustrated Server cache: X X Client A cache: X Client B cache: X open X

AFS Failure Handling
* If the server crashes, it asks all clients to reconstruct the callback states

AFS vs. NFS
* AFS
  * Less server load due to clients’ disk caches
  * Server is not involved for read-only files
* Both AFS and NFS
  * Server is a performance bottleneck
  * Server is a single point of failure

Serverless Network File Service (xFS)
* Idea: construct a file system as a parallel program and exploit the high-speed LAN
  * Four major pieces
    * Cooperative caching
    * Write-ownership cache coherence
    * Software RAID
    * Distributed control

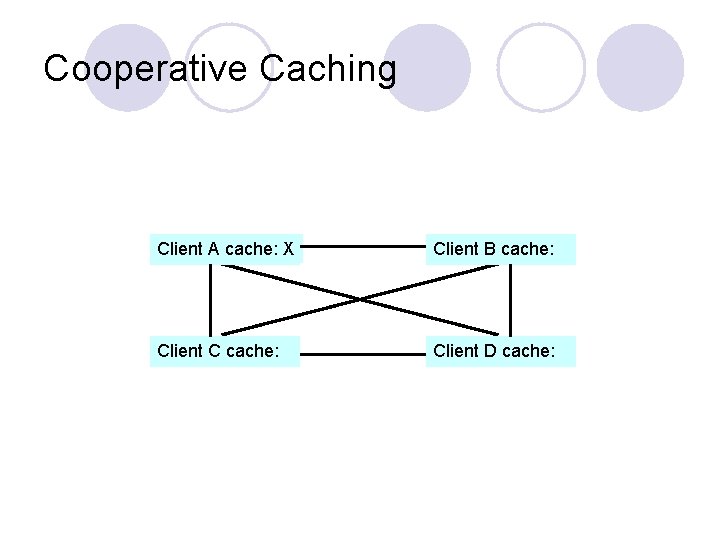

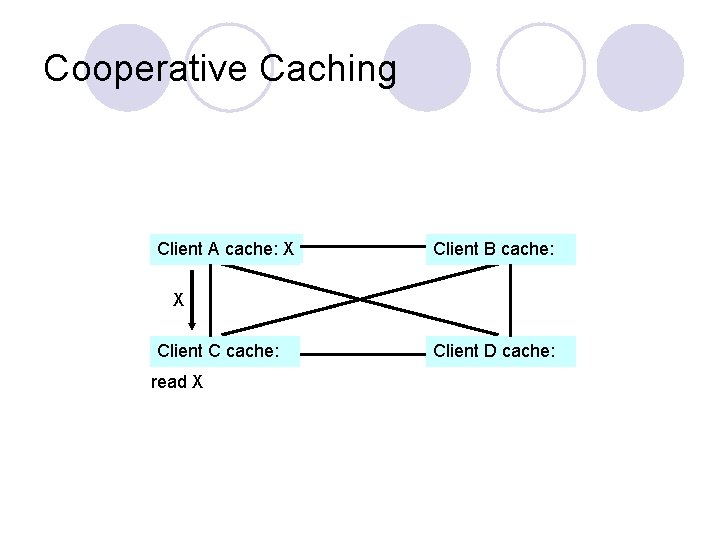

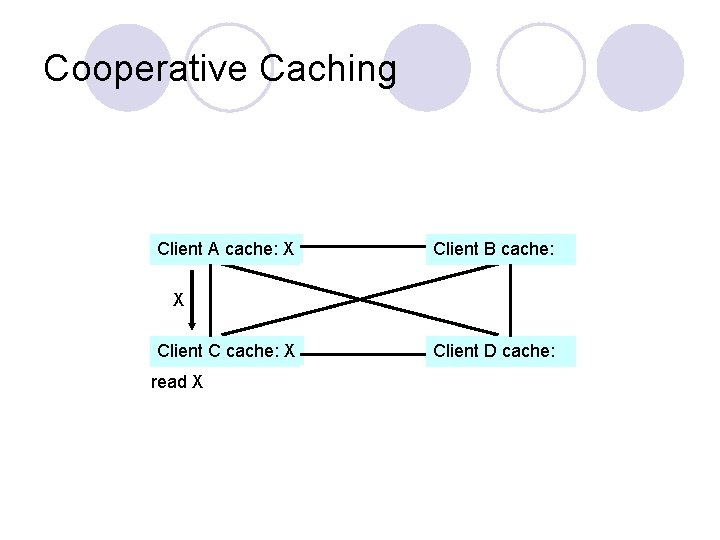

Cooperative Caching
* Uses remote memory to avoid going to disk
  * On a cache miss, check the local memory and remote memory before checking the disk
  * Before discarding the last cached memory copy, send the content to remote memory if possible
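The lookup order and the forwarding of a last copy can be sketched as follows. This is a simplified model under stated assumptions: `Node`, `read_block`, and `cache_block` are hypothetical names, eviction is first-in first-out for brevity, and peers are searched directly rather than through any manager.

```python
class Node:
    def __init__(self, name, capacity):
        self.name = name
        self.capacity = capacity
        self.memory = {}  # block id -> data; dicts keep insertion order


def cache_block(node, block, data, peers):
    if len(node.memory) >= node.capacity:
        victim = next(iter(node.memory))          # oldest entry
        victim_data = node.memory.pop(victim)
        held_elsewhere = any(victim in p.memory for p in peers)
        if not held_elsewhere:
            # Last cached copy: forward it to a peer with free space, if any.
            for p in peers:
                if len(p.memory) < p.capacity:
                    p.memory[victim] = victim_data
                    break
    node.memory[block] = data


def read_block(block, local, peers, disk):
    if block in local.memory:
        return local.memory[block], "local memory"
    for peer in peers:
        if block in peer.memory:
            data, source = peer.memory[block], "remote memory (" + peer.name + ")"
            break
    else:
        data, source = disk[block], "disk"        # only after every memory missed
    cache_block(local, block, data, peers)
    return data, source


disk = {"X": "x-data", "Y": "y-data"}
a = Node("A", capacity=1)
c = Node("C", capacity=2)

r1 = read_block("X", a, [c], disk)   # nobody caches X yet: comes from disk
r2 = read_block("Y", a, [c], disk)   # A is full: X, the last copy, moves to C
r3 = read_block("X", a, [c], disk)   # served from C's memory, not from disk
```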

Cooperative Caching Client A cache: X Client B cache: Client C cache: Client D cache:

Cooperative Caching Client A cache: X Client B cache: X Client C cache: read X Client D cache:

Cooperative Caching Client A cache: X Client B cache: X Client C cache: X read X Client D cache:

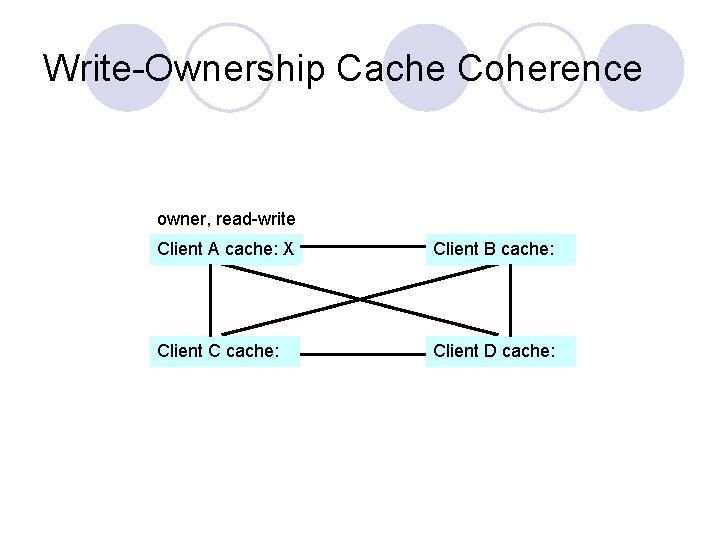

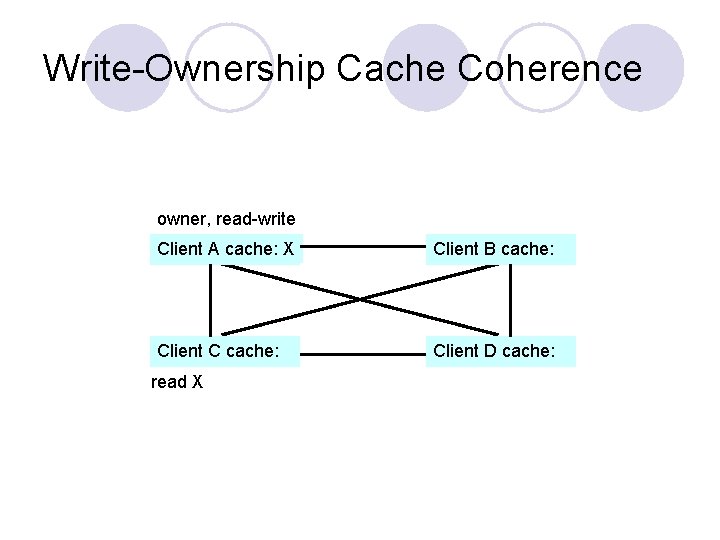

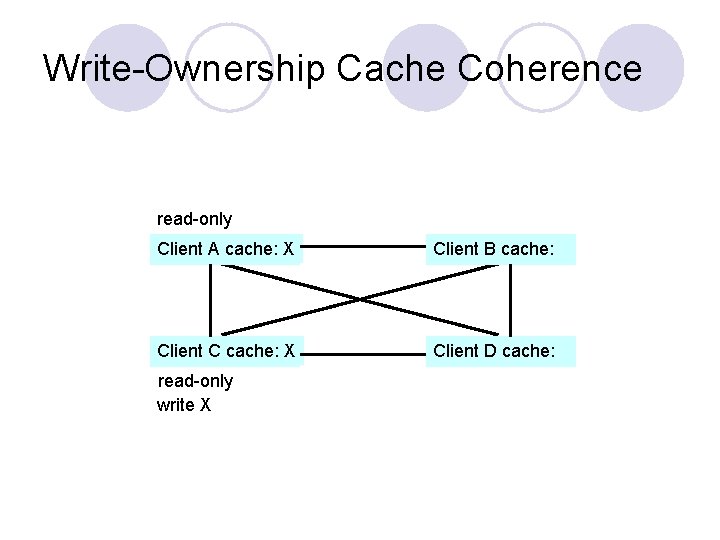

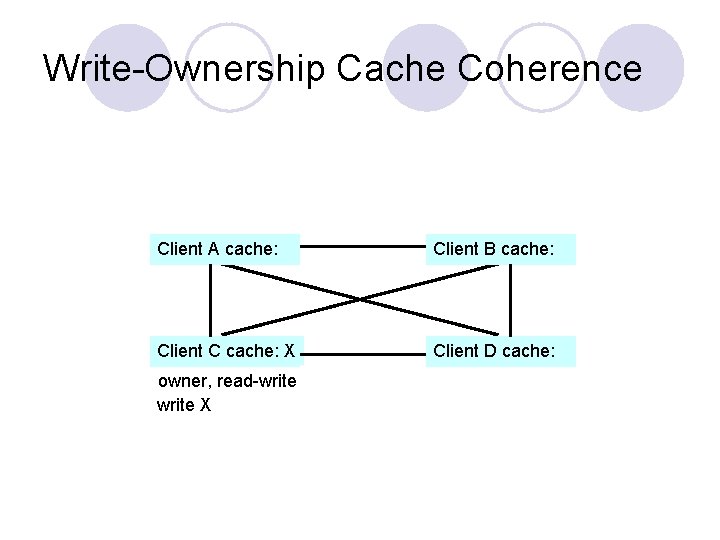

Write-Ownership Cache Coherence
* Declares a client to be the owner of the file at writes
  * No one else can have a copy
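A minimal sketch of the ownership rule, assuming a single bookkeeping object tracks every client cache. The class `OwnershipManager` and its methods are hypothetical, and a real system would distribute this state. A write invalidates all other copies and makes the writer the sole owner; a read by another client ends exclusive ownership so that copies can be shared read-only.

```python
class OwnershipManager:
    def __init__(self, client_names):
        self.caches = {c: {} for c in client_names}  # client -> {file: data}
        self.owner = {}                               # file -> owning client

    def holders(self, name):
        return sorted(c for c, cache in self.caches.items() if name in cache)

    def write(self, client, name, data):
        # Invalidate every other copy, then make `client` the sole owner.
        for other, cache in self.caches.items():
            if other != client:
                cache.pop(name, None)
        self.caches[client][name] = data
        self.owner[name] = client

    def read(self, client, name):
        if name not in self.caches[client]:
            current = self.holders(name)
            if not current:
                raise KeyError(name)
            # Another reader ends exclusive ownership; all copies become read-only.
            self.owner.pop(name, None)
            self.caches[client][name] = self.caches[current[0]][name]
        return self.caches[client][name]


m = OwnershipManager(["A", "B", "C", "D"])

m.write("A", "X", "v1")
owner_after_a = m.owner.get("X")      # A owns X, read-write

seen_by_c = m.read("C", "X")          # A and C now share X read-only
holders_shared = m.holders("X")
owner_shared = m.owner.get("X")

m.write("D", "X", "v2")               # D becomes owner; A and C lose their copies
holders_final = m.holders("X")
```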

Write-Ownership Cache Coherence owner, read-write Client A cache: X Client B cache: Client C cache: Client D cache:

Write-Ownership Cache Coherence owner, read-write Client A cache: X Client B cache: Client C cache: Client D cache: read X

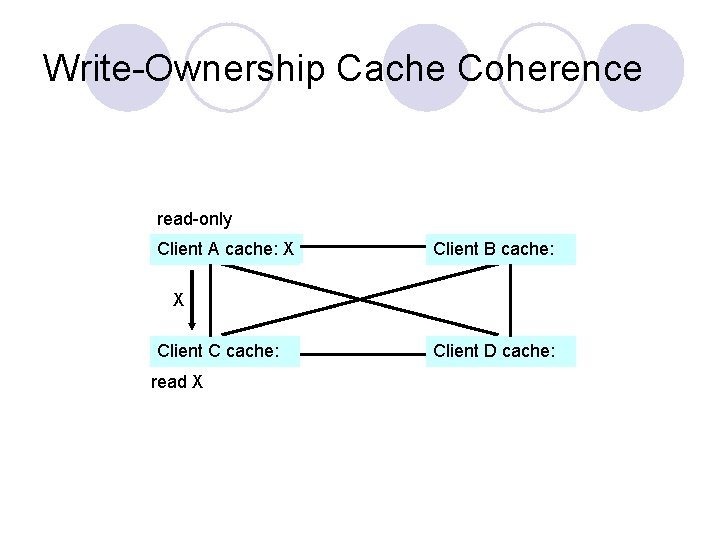

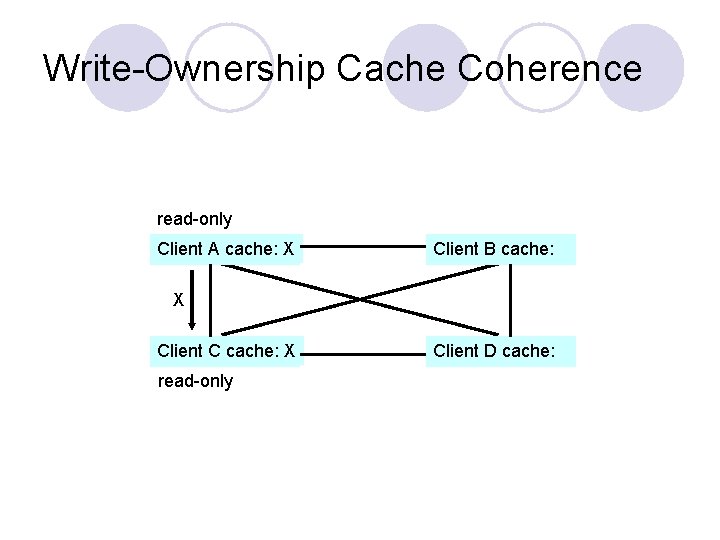

Write-Ownership Cache Coherence read-only Client A cache: X Client B cache: X Client C cache: read X Client D cache:

Write-Ownership Cache Coherence read-only Client A cache: X Client B cache: X Client C cache: X read-only Client D cache:

Write-Ownership Cache Coherence read-only Client A cache: X Client B cache: Client C cache: X Client D cache: read-only write X

Write-Ownership Cache Coherence Client A cache: Client B cache: Client C cache: X Client D cache: owner, read-write X

Other components
* Software RAID
  * Stripe data redundantly over multiple disks
* Distributed control
  * File system managers are spread across all machines
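One common way to stripe data redundantly is to add an XOR parity block, so that any single lost block can be rebuilt from the survivors. The sketch below illustrates that general idea only and is not a description of the actual xFS storage layout; the function names `stripe`, `rebuild`, and `xor_blocks` are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    # XOR the blocks together byte by byte.
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


def stripe(data, num_data_disks):
    """Split data into equal blocks, one per data disk, plus one parity block."""
    block_size = -(-len(data) // num_data_disks)  # ceiling division
    padded = data.ljust(block_size * num_data_disks, b"\0")
    blocks = [padded[i * block_size:(i + 1) * block_size]
              for i in range(num_data_disks)]
    return blocks + [xor_blocks(blocks)]


def rebuild(blocks, lost_index):
    """Recover the block at lost_index from all the other blocks."""
    survivors = [b for i, b in enumerate(blocks) if i != lost_index]
    return xor_blocks(survivors)


disks = stripe(b"distributed!", 3)   # three data blocks and one parity block
recovered = rebuild(disks, 1)        # pretend the second disk failed
```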

xFS Summary
* Built on small, unreliable components
* Data, metadata, and control can live on any machine
* If one machine goes down, everything else continues to work
* When machines are added, xFS starts to use their resources

xFS Summary
- Complexity and associated performance degradation
- Hard to upgrade software while keeping everything running
