Distributed Data Storage & Access Zachary G. Ives University of Pennsylvania CIS 455 / 555 – Internet and Web Systems Some slide content courtesy Tanenbaum & van Steen

Reminders
§ Homework 2 Milestone 2 due tomorrow

The Google File System (GFS)
§ Goals:
  § Support millions of huge (many-TB) files
  § Partition & replicate data across thousands of unreliable machines, in multiple racks (and even data centers)
§ Willing to make some compromises to get there:
  § Modified APIs – doesn’t plug into POSIX APIs (in fact, it relies on being built over the Linux file system)
  § Doesn’t provide transparent consistency to apps! The app must detect duplicate or bad records and support checkpoints
  § Performance is only good with a particular class of apps: stream-based reads and atomic record appends

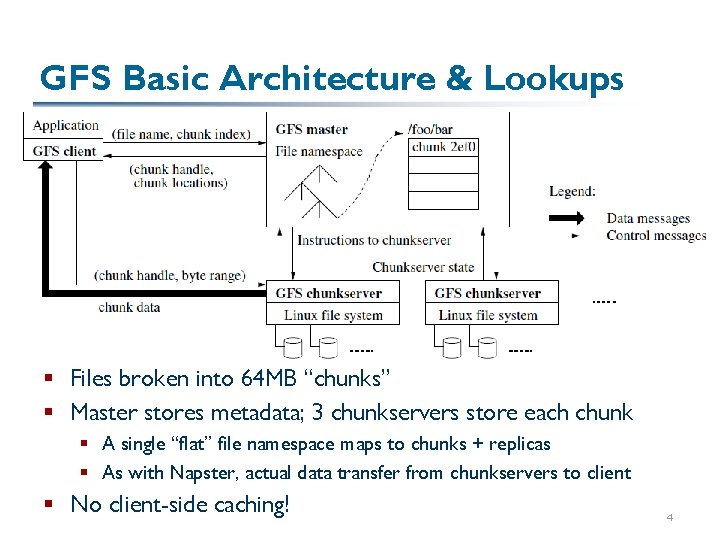

GFS Basic Architecture & Lookups
§ Files broken into 64 MB “chunks”
§ Master stores metadata; 3 chunkservers store each chunk
§ A single “flat” file namespace maps to chunks + replicas
§ As with Napster, actual data transfer is from chunkservers to client
§ No client-side caching of file data!
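The lookup described on this slide can be sketched roughly as follows. This is a toy illustration, not GFS's actual interface: the `MASTER_TABLE` dictionary, the `locate` function, the chunk handles, and the chunkserver names are all hypothetical. Only the 64 MB chunk size and the three replicas per chunk come from the slide.

```python
CHUNK_SIZE = 64 * 1024 * 1024  # 64 MB chunks, as on the slide

# Hypothetical stand-in for the master's metadata:
# (file path, chunk index) -> (chunk handle, chunkservers holding a replica)
MASTER_TABLE = {
    ("/logs/web.log", 0): ("chunk-0001", ["cs-a", "cs-b", "cs-c"]),
    ("/logs/web.log", 1): ("chunk-0002", ["cs-b", "cs-d", "cs-e"]),
}


def locate(path, byte_offset):
    """Translate a byte offset in a file into (handle, replicas, offset in chunk)."""
    index = byte_offset // CHUNK_SIZE            # which chunk holds this byte
    handle, replicas = MASTER_TABLE[(path, index)]
    return handle, replicas, byte_offset % CHUNK_SIZE


# The client asks the "master" where byte 70 MB lives, then would contact
# one of the returned chunkservers directly for the data itself.
handle, replicas, offset_in_chunk = locate("/logs/web.log", 70 * 1024 * 1024)
```

The point of the design is that the master only answers small metadata questions; the bulk data never flows through it.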

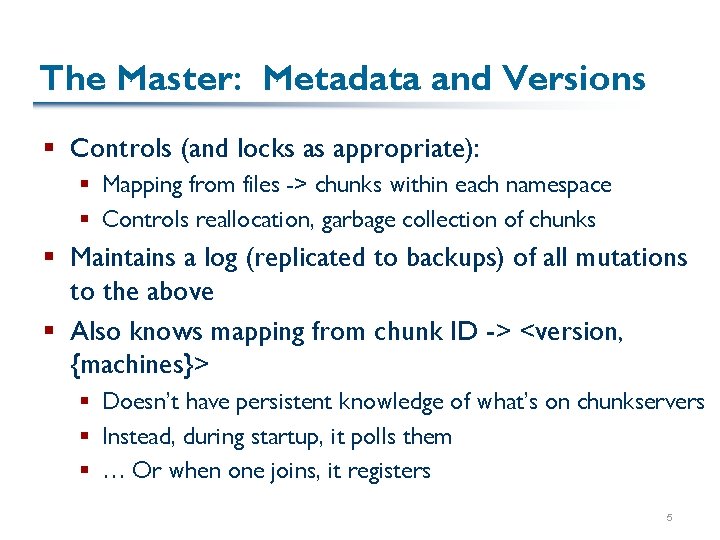

The Master: Metadata and Versions
§ Controls (and locks as appropriate):
  § Mapping from files -> chunks within each namespace
§ Controls reallocation, garbage collection of chunks
§ Maintains a log (replicated to backups) of all mutations to the above
§ Also knows mapping from chunk ID -> <version, {machines}>
  § Doesn’t have persistent knowledge of what’s on chunkservers
  § Instead, during startup, it polls them
  § … Or when one joins, it registers

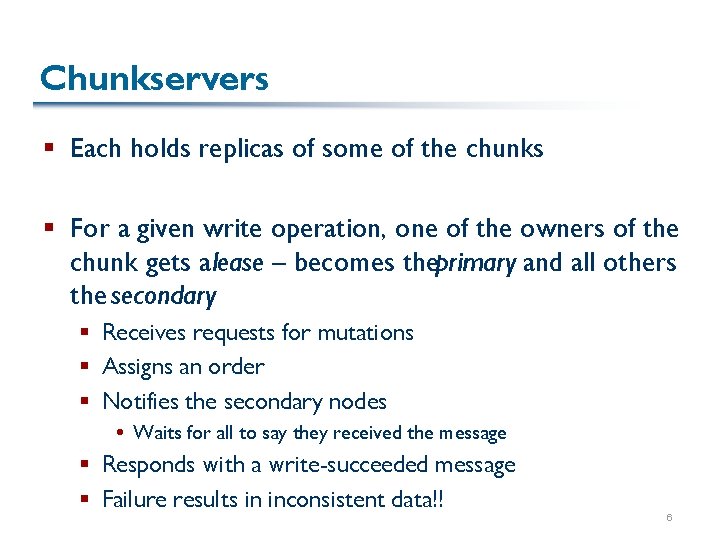

Chunkservers
§ Each holds replicas of some of the chunks
§ For a given write operation, one of the owners of the chunk gets a lease – it becomes the primary and all others the secondaries
  § Receives requests for mutations
  § Assigns an order
  § Notifies the secondary nodes, and waits for all to say they received the message
  § Responds with a write-succeeded message
§ Failure results in inconsistent data!!

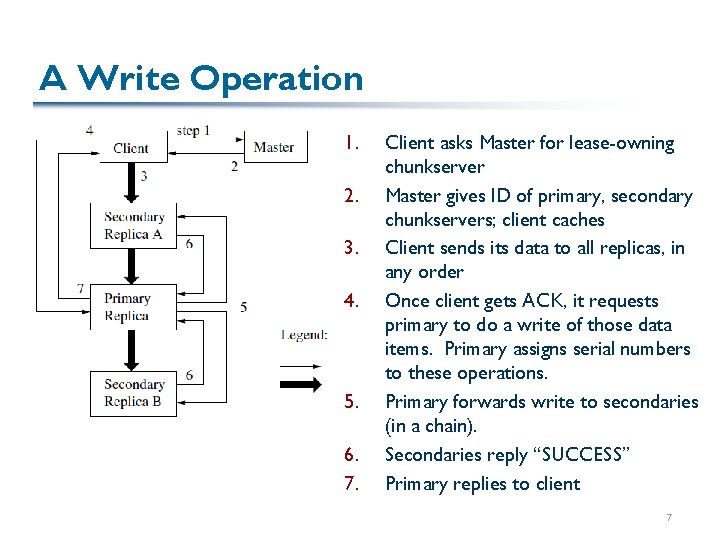

A Write Operation
1. Client asks Master for lease-owning chunkserver
2. Master gives ID of primary, secondary chunkservers; client caches
3. Client sends its data to all replicas, in any order
4. Once client gets ACK, it requests primary to do a write of those data items. Primary assigns serial numbers to these operations.
5. Primary forwards write to secondaries (in a chain).
6. Secondaries reply “SUCCESS”
7. Primary replies to client
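The seven steps can be sketched as a small in-process simulation. This is a minimal sketch under stated assumptions, not the real protocol: the `Replica`, `Primary`, and `client_write` names are hypothetical, failures are not modeled, and the primary forwards to each secondary in turn rather than along a chain.

```python
class Replica:
    """A chunkserver holding one replica of a chunk (hypothetical model)."""

    def __init__(self, name):
        self.name = name
        self.staged = {}    # data_id -> bytes pushed by the client, not yet applied
        self.applied = []   # (serial, data) in the order mutations were applied

    def push(self, data_id, data):
        """Step 3: the client pushes data to every replica."""
        self.staged[data_id] = data
        return "ACK"

    def apply(self, serial, data_id):
        """Steps 5-6: apply a staged mutation in the order chosen by the primary."""
        self.applied.append((serial, self.staged.pop(data_id)))
        return "SUCCESS"


class Primary(Replica):
    """The replica currently holding the lease."""

    def __init__(self, name, secondaries):
        super().__init__(name)
        self.secondaries = secondaries
        self.next_serial = 0

    def write(self, data_id):
        """Step 4: assign a serial number, apply locally, forward to secondaries."""
        serial = self.next_serial
        self.next_serial += 1
        self.apply(serial, data_id)
        replies = [s.apply(serial, data_id) for s in self.secondaries]
        return "SUCCESS" if all(r == "SUCCESS" for r in replies) else "FAILURE"


def client_write(master_table, chunk_id, data_id, data):
    primary, secondaries = master_table[chunk_id]                     # steps 1-2
    acks = [r.push(data_id, data) for r in (primary, *secondaries)]   # step 3
    if any(ack != "ACK" for ack in acks):
        return "FAILURE"
    return primary.write(data_id)                                     # steps 4-7


s1, s2 = Replica("s1"), Replica("s2")
primary = Primary("p", [s1, s2])
master_table = {"chunk-1": (primary, [s1, s2])}
status_1 = client_write(master_table, "chunk-1", "d1", b"first")
status_2 = client_write(master_table, "chunk-1", "d2", b"second")
```

Because only the primary hands out serial numbers, every replica applies the two mutations in the same order.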

Append
§ GFS supports atomic append that multiple machines can use at the same time
§ Primary will interleave the requests in any order
§ Each record will be written “at least once”!
§ Primary determines a position for the write, forwards this to the secondaries

Failures and the Client
§ If there is a failure in a record write or append, the client will generally retry
§ If there was “partial success” in a previous append, there might be more than one copy on some nodes – and inconsistency
§ Client must handle this through checksums, record IDs, and periodic checkpointing
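One way an application could cope with "at least once" appends is sketched below: each record carries an ID and a checksum, and the reader drops corrupted records and repeated IDs. The `Record` layout and the function names are assumptions made for illustration; GFS itself does not prescribe this format.

```python
import zlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str   # unique ID chosen by the writer (hypothetical layout)
    payload: bytes
    checksum: int    # CRC-32 of the payload, computed by the writer


def make_record(record_id, payload):
    return Record(record_id, payload, zlib.crc32(payload))


def read_valid_unique(records):
    """Return payloads in file order, skipping bad and duplicate records."""
    seen = set()
    payloads = []
    for record in records:
        if zlib.crc32(record.payload) != record.checksum:
            continue                      # corrupted or partially written record
        if record.record_id in seen:
            continue                      # duplicate left behind by a retried append
        seen.add(record.record_id)
        payloads.append(record.payload)
    return payloads


a = make_record("r1", b"hello")
b = make_record("r2", b"world")
corrupted = Record("r3", b"jello", zlib.crc32(b"hello"))   # checksum does not match
chunk_contents = [a, b, b, corrupted]                      # b was appended twice
clean = read_valid_unique(chunk_contents)
```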

GFS Performance
§ Many performance numbers in the paper
  § Not enough context here to discuss them in much detail – would need to see how they compare with other approaches!
§ But: they validate high scalability in terms of concurrent reads, concurrent appends, with data partitioned and replicated across many machines
§ Also show fast recovery from failed nodes
§ Not the only approach to many of these problems, but one shown to work at industrial strength!

GFS: An Interesting Reduced-Consistency Model
§ Google uses it to stage data in processing / analysis
§ A record-oriented file storage system, where the records can sometimes be corrupted or duplicated
§ A relaxed consistency model
§ Later: We’ll revisit consistency in the context of replication – and see the PNUTS platform from Yahoo

A Popular Distributed Programming Model: MapReduce
§ In many circles, considered the key building block for much of Google’s data analysis
§ A programming language built on it: Sawzall, http://labs.google.com/papers/sawzall.html
  § “… Sawzall has become one of the most widely used programming languages at Google. … [O]n one dedicated Workqueue cluster with 1500 Xeon CPUs, there were 32,580 Sawzall jobs launched, using an average of 220 machines each. While running those jobs, 18,636 failures occurred (application failure, network outage, system crash, etc.) that triggered rerunning some portion of the job. The jobs read a total of 3.2 × 10^15 bytes of data (2.8 PB) and wrote 9.9 × 10^12 bytes (9.3 TB).”
§ Other similar languages: Yahoo’s Pig Latin and Pig; Microsoft’s Dryad
§ Cloned in open source: Hadoop, http://hadoop.apache.org/core/
§ So what is it? What’s it good for?

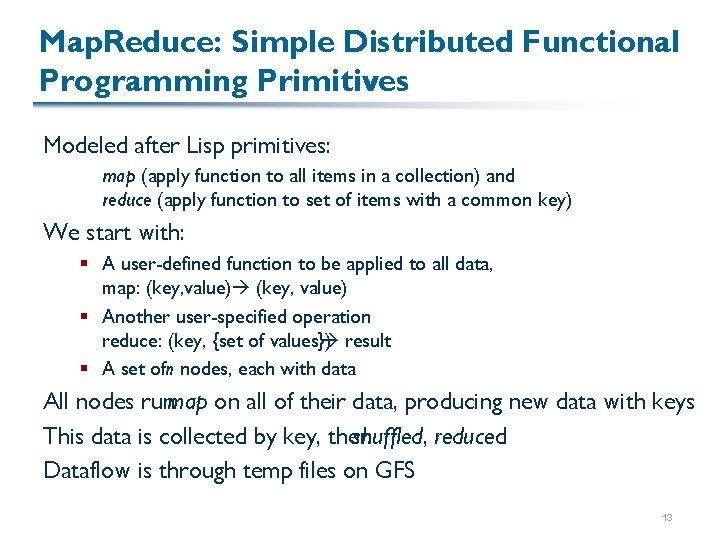

MapReduce: Simple Distributed Functional Programming Primitives
Modeled after Lisp primitives: map (apply function to all items in a collection) and reduce (apply function to set of items with a common key)
We start with:
§ A user-defined function to be applied to all data, map: (key, value) → (key, value)
§ Another user-specified operation, reduce: (key, {set of values}) → result
§ A set of n nodes, each with data
All nodes run map on all of their data, producing new data with keys
This data is collected by key, then shuffled and reduced
Dataflow is through temp files on GFS
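The two primitives can be illustrated with a single-process sketch. It only mimics the dataflow: the `map_reduce` driver, the sample data, and the function names are hypothetical, and there are no real nodes or temp files involved.

```python
from collections import defaultdict


def map_reduce(node_data, map_fn, reduce_fn):
    """node_data: one list of (key, value) records per node."""
    grouped = defaultdict(list)
    for records in node_data:                       # each node maps its own data
        for key, value in records:
            for out_key, out_value in map_fn(key, value):
                grouped[out_key].append(out_value)  # collect map output by key
    # One reduce call per distinct key, over all values seen for that key
    return {key: reduce_fn(key, values) for key, values in grouped.items()}


def parse_reading(record_id, line):
    """map: (record id, 'city temperature') -> (city, temperature)"""
    city, temperature = line.split()
    yield city, int(temperature)


def hottest(city, temperatures):
    """reduce: (city, {temperatures}) -> maximum temperature"""
    return max(temperatures)


node_data = [
    [("r1", "paris 20"), ("r2", "rome 25")],   # data held by node 0
    [("r3", "paris 23")],                      # data held by node 1
]
result = map_reduce(node_data, parse_reading, hottest)
```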

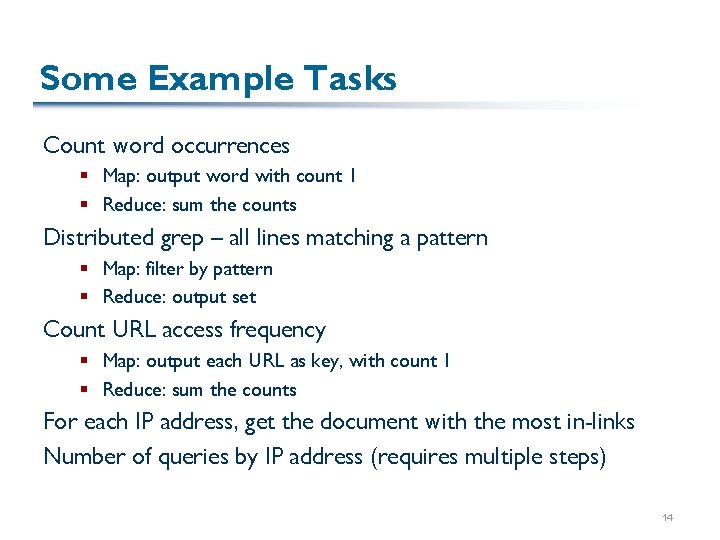

Some Example Tasks
Count word occurrences
§ Map: output word with count 1
§ Reduce: sum the counts
Distributed grep – all lines matching a pattern
§ Map: filter by pattern
§ Reduce: output set
Count URL access frequency
§ Map: output each URL as key, with count 1
§ Reduce: sum the counts
For each IP address, get the document with the most in-links
Number of queries by IP address (requires multiple steps)
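The first two tasks might look like this as map and reduce functions. The `run_job` driver below is a hypothetical single-process stand-in that groups map output by sorting on the key; the function names and sample lines are illustrative only.

```python
import re
from itertools import groupby
from operator import itemgetter


def run_job(records, mapper, reducer):
    """Apply mapper to every record, sort by key, reduce each key group."""
    pairs = sorted((kv for record in records for kv in mapper(record)),
                   key=itemgetter(0))
    return [reducer(key, [value for _, value in group])
            for key, group in groupby(pairs, key=itemgetter(0))]


# Count word occurrences
def wc_map(line):
    for word in line.lower().split():
        yield word, 1            # output word with count 1


def wc_reduce(word, counts):
    return word, sum(counts)     # sum the counts


# Distributed grep
def make_grep_map(pattern):
    regex = re.compile(pattern)

    def grep_map(line):
        if regex.search(line):   # filter by pattern
            yield line, 1
    return grep_map


def grep_reduce(line, _):
    return line                  # output each matching line once


lines = ["the quick fox", "the lazy dog", "a quick test"]
word_counts = run_job(lines, wc_map, wc_reduce)
matches = run_job(lines, make_grep_map("quick"), grep_reduce)
```

Counting URL accesses has the same shape as word count, with the URL as the key.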

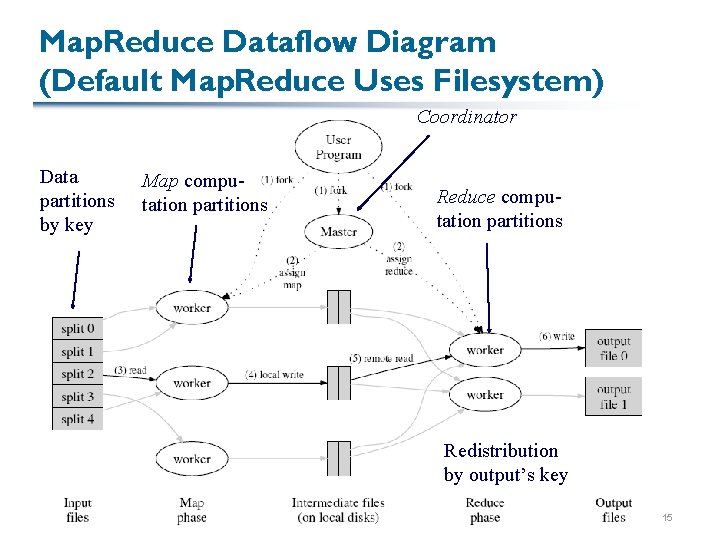

MapReduce Dataflow Diagram (Default MapReduce Uses Filesystem)
[Figure labels: Coordinator; data partitions by key; map computation partitions; redistribution by output’s key; reduce computation partitions]
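"Redistribution by output's key" is commonly done by hashing the key modulo the number of reduce partitions; the slide does not say which scheme is used, so treat the following as an assumption. The function names are hypothetical, and `zlib.crc32` is used only because it gives the same value on every machine and every run.

```python
import zlib


def partition_for(key, num_reducers):
    """Pick the reduce partition for a key with a stable hash."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def shuffle(map_outputs, num_reducers):
    """map_outputs: one list of (key, value) pairs per map partition."""
    reducer_inputs = [[] for _ in range(num_reducers)]
    for output in map_outputs:
        for key, value in output:
            reducer_inputs[partition_for(key, num_reducers)].append((key, value))
    for bucket in reducer_inputs:
        bucket.sort(key=lambda pair: pair[0])   # key order makes merging easy
    return reducer_inputs


map_outputs = [
    [("apple", 1), ("banana", 1)],   # output of map partition 0
    [("apple", 1), ("cherry", 1)],   # output of map partition 1
]
buckets = shuffle(map_outputs, num_reducers=3)
```

Every pair with the same key ends up in the same bucket, so a single reduce partition sees all values for that key.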

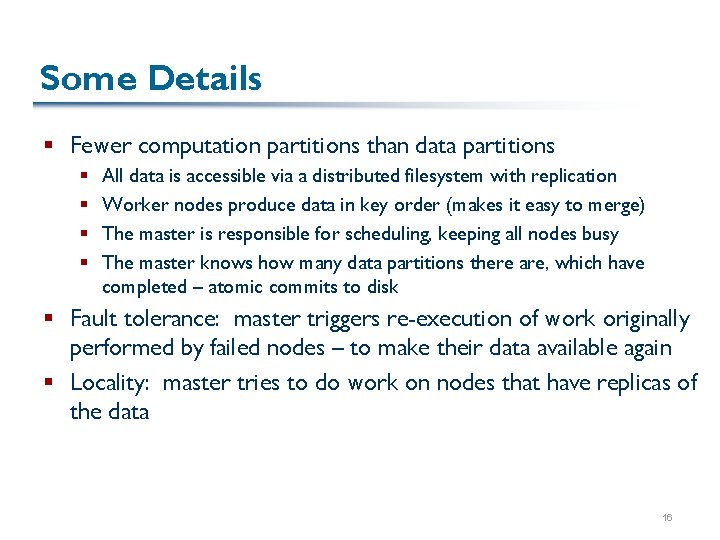

Some Details
§ Fewer computation partitions than data partitions
§ All data is accessible via a distributed filesystem with replication
§ Worker nodes produce data in key order (makes it easy to merge)
§ The master is responsible for scheduling, keeping all nodes busy
§ The master knows how many data partitions there are, which have completed – atomic commits to disk
§ Fault tolerance: master triggers re-execution of work originally performed by failed nodes – to make their data available again
§ Locality: master tries to do work on nodes that have replicas of the data
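The fault-tolerance rule can be sketched as a toy scheduler: when a worker fails, the master puts back on the queue both the task that was running and every task that worker had already completed, since their output is no longer reachable. The `schedule` function, the round-robin assignment, and the simulated failure are all assumptions for illustration, not how any real master is implemented.

```python
from collections import Counter


class WorkerFailed(Exception):
    """Raised by the simulated run_task when a worker dies."""


def schedule(tasks, workers, run_task):
    alive = list(workers)
    pending = list(tasks)
    done = {}                                    # task -> (worker, output)
    turn = 0
    while pending:
        if not alive:
            raise RuntimeError("all workers failed")
        worker = alive[turn % len(alive)]        # naive round-robin assignment
        turn += 1
        task = pending.pop(0)
        try:
            done[task] = (worker, run_task(worker, task))
        except WorkerFailed:
            alive.remove(worker)
            # Output of the failed worker is lost: redo its completed tasks too
            lost = [t for t, (w, _) in done.items() if w == worker]
            for t in lost:
                del done[t]
            pending.extend(lost + [task])
    return done


calls = Counter()


def flaky_run(worker, task):
    """Simulated work: worker 'w2' dies on its second task."""
    calls[worker] += 1
    if worker == "w2" and calls["w2"] == 2:
        raise WorkerFailed(worker)
    return task.upper()


done = schedule(["a", "b", "c", "d"], ["w1", "w2"], flaky_run)
```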

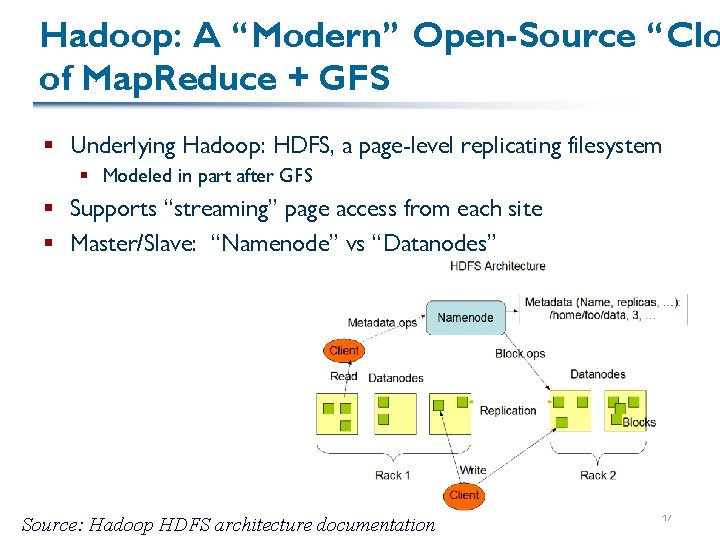

Hadoop: A “Modern” Open-Source “Clone” of MapReduce + GFS
§ Underlying Hadoop: HDFS, a page-level replicating filesystem
  § Modeled in part after GFS
  § Supports “streaming” page access from each site
§ Master/Slave: “Namenode” vs “Datanodes”
Source: Hadoop HDFS architecture documentation

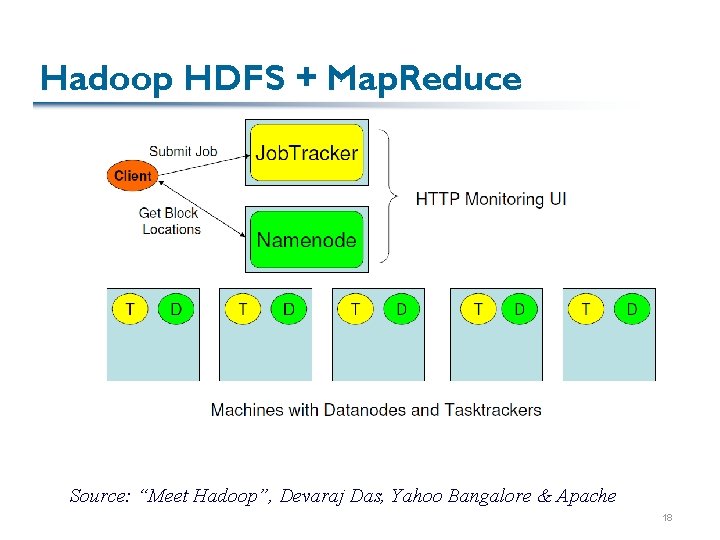

Hadoop HDFS + MapReduce
Source: “Meet Hadoop”, Devaraj Das, Yahoo Bangalore & Apache

Hadoop MapReduce Architecture
§ “Jobtracker” (Master):
  § Accepts jobs submitted by users
  § Gives tasks to Tasktrackers – makes scheduling decisions, colocates tasks to data
  § Monitors task, tracker status, re-executes tasks if needed
§ “Tasktrackers” (Slaves):
  § Run Map and Reduce tasks
  § Manage storage, transmission of intermediate output

How Does this Relate to DHTs?
§ Consider replacing the filesystem with the DHT…

What Does MapReduce Do Well?
§ What are its strengths?
§ What about weaknesses?

MapReduce is a Particular Programming Model
… But it’s not especially general (though things like Pig Latin improve it)
Suppose we have autonomous application components that wish to communicate
We’ve already seen a few strategies:
§ Request/response from client to server
  § HTTP itself
§ Asynchronous messages
  § Router “gossip” protocols
  § P2P “finger tables”, etc.
Are there general mechanisms and principles? (Of course!)
… Let’s first look at what happens if we need in-order messaging

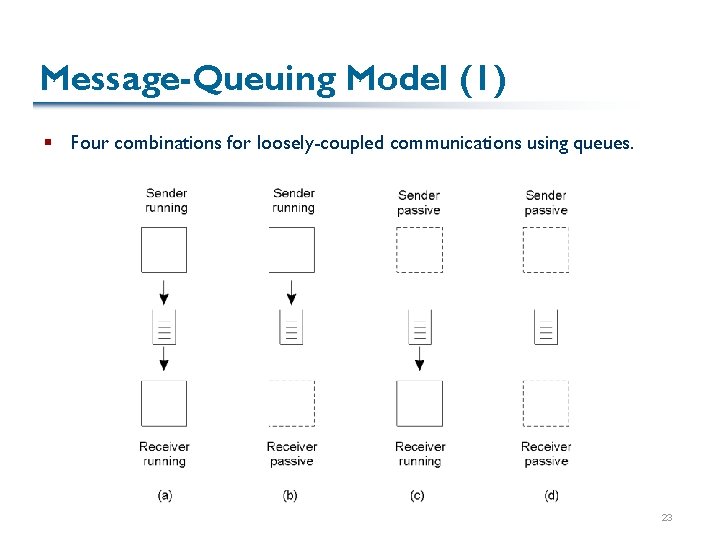

Message-Queuing Model (1)
§ Four combinations for loosely-coupled communications using queues. (Fig. 2-26)

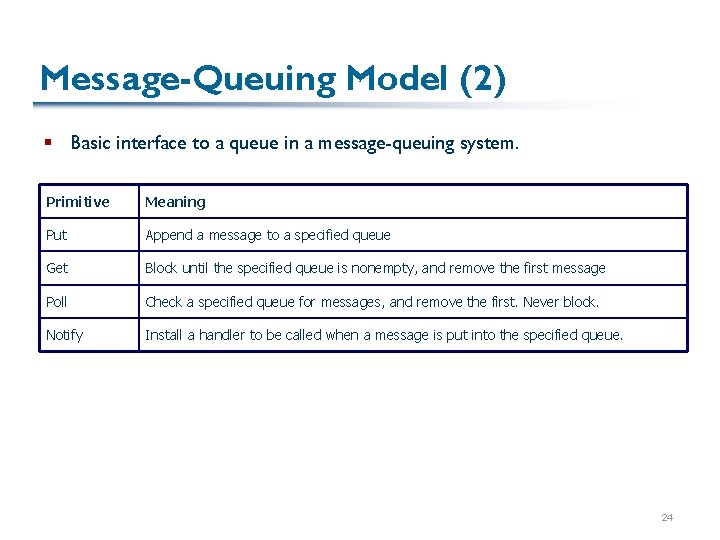

Message-Queuing Model (2)
§ Basic interface to a queue in a message-queuing system.

| Primitive | Meaning |
|---|---|
| Put | Append a message to a specified queue |
| Get | Block until the specified queue is nonempty, and remove the first message |
| Poll | Check a specified queue for messages, and remove the first. Never block. |
| Notify | Install a handler to be called when a message is put into the specified queue. |
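The four primitives could be sketched for a single in-memory queue as follows. This is an illustrative class, not the API of any particular message-queuing product. One assumption beyond the slide: a handler installed with `notify` is only informed of the message, which stays in the queue until someone removes it with `get` or `poll`.

```python
import threading
from collections import deque


class MessageQueue:
    """In-memory sketch of the Put / Get / Poll / Notify interface."""

    def __init__(self):
        self._messages = deque()
        self._cond = threading.Condition()
        self._handlers = []

    def put(self, message):
        """Append a message to the queue and call any installed handlers."""
        with self._cond:
            self._messages.append(message)
            handlers = list(self._handlers)
            self._cond.notify()              # wake one blocked get(), if any
        for handler in handlers:             # run handlers outside the lock
            handler(message)

    def get(self):
        """Block until the queue is nonempty, then remove the first message."""
        with self._cond:
            while not self._messages:
                self._cond.wait()
            return self._messages.popleft()

    def poll(self):
        """Remove and return the first message, or None. Never blocks."""
        with self._cond:
            return self._messages.popleft() if self._messages else None

    def notify(self, handler):
        """Install a handler to be called whenever a message is put."""
        with self._cond:
            self._handlers.append(handler)


queue = MessageQueue()
seen = []
queue.notify(seen.append)        # handler just records each message
queue.put("hello")
queue.put("world")
first = queue.poll()             # non-blocking
second = queue.get()             # would block if the queue were empty
nothing = queue.poll()           # queue is now empty
```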

General Architecture of a Message-Queuing System (1)
§ The relationship between queue-level addressing and network-level addressing.

General Architecture of a Message-Queuing System (2)
§ The general organization of a message-queuing system with routers. (Fig. 2-29)

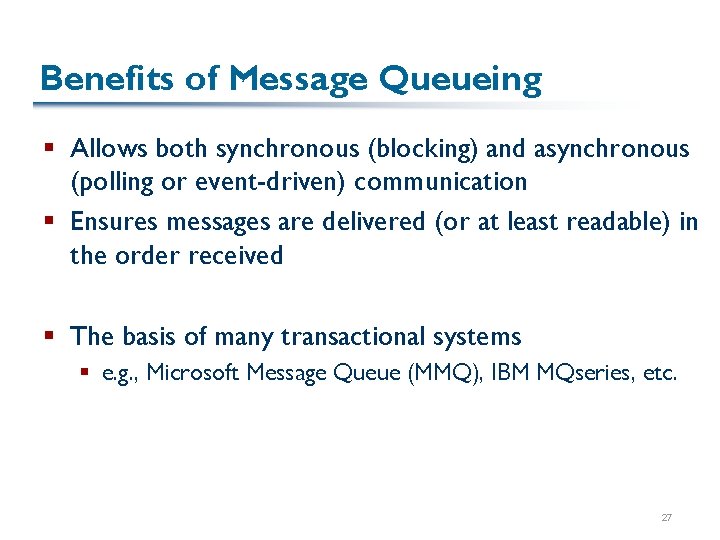

Benefits of Message Queueing
§ Allows both synchronous (blocking) and asynchronous (polling or event-driven) communication
§ Ensures messages are delivered (or at least readable) in the order received
§ The basis of many transactional systems
  § e.g., Microsoft Message Queuing (MSMQ), IBM MQSeries, etc.

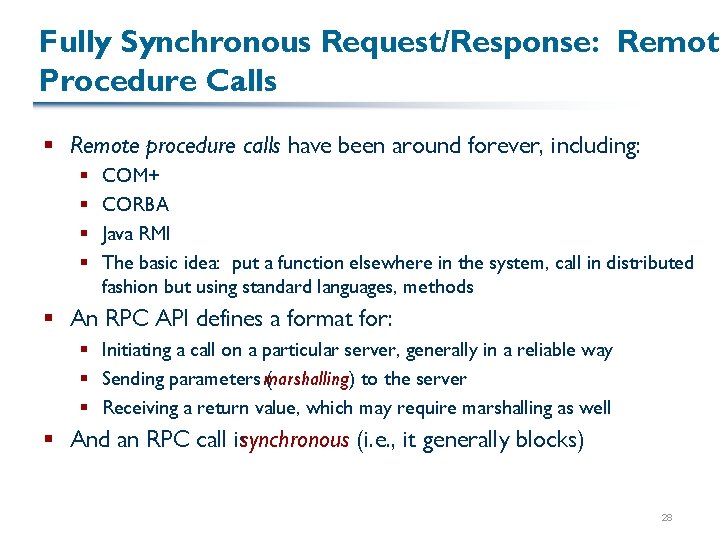

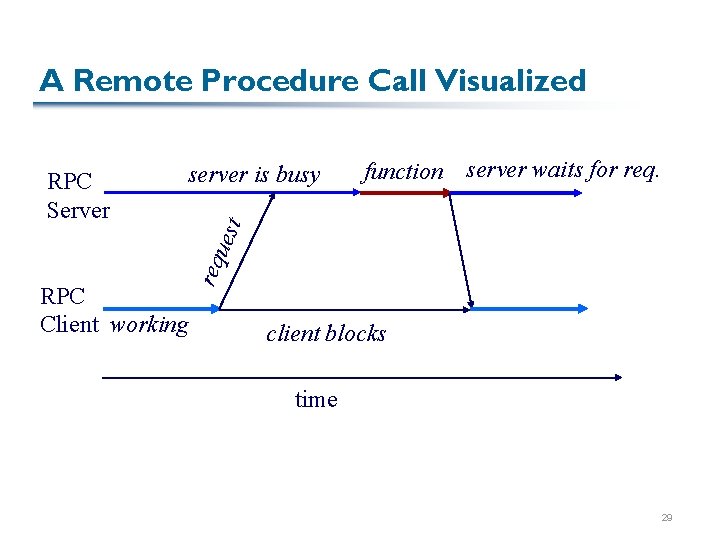

Fully Synchronous Request/Response: Remote Procedure Calls
§ Remote procedure calls have been around forever, including:
  § COM+
  § CORBA
  § Java RMI
§ The basic idea: put a function elsewhere in the system, call it in distributed fashion but using standard languages, methods
§ An RPC API defines a format for:
  § Initiating a call on a particular server, generally in a reliable way
  § Sending parameters (marshalling) to the server
  § Receiving a return value, which may require marshalling as well
§ And an RPC call is synchronous (i.e., it generally blocks)

A Remote Procedure Call Visualized
[Timeline figure labels: RPC client (working, sends request, client blocks); RPC server (server waits for request, server is busy running the function); axis: time]

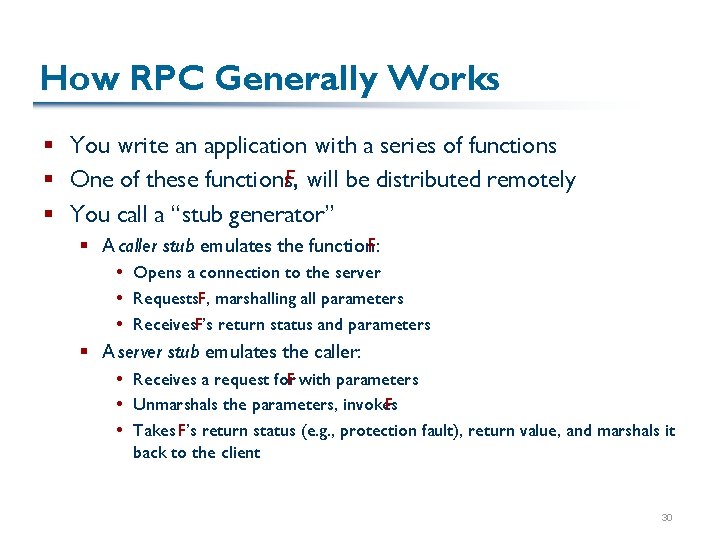

How RPC Generally Works
§ You write an application with a series of functions
§ One of these functions, F, will be distributed remotely
§ You call a “stub generator”
  § A caller stub emulates the function F:
    § Opens a connection to the server
    § Requests F, marshalling all parameters
    § Receives F’s return status and parameters
  § A server stub emulates the caller:
    § Receives a request for F with parameters
    § Unmarshals the parameters, invokes F
    § Takes F’s return status (e.g., protection fault), return value, and marshals it back to the client

Passing Value Parameters
§ Steps involved in doing remote computation through RPC (Fig. 2-8)

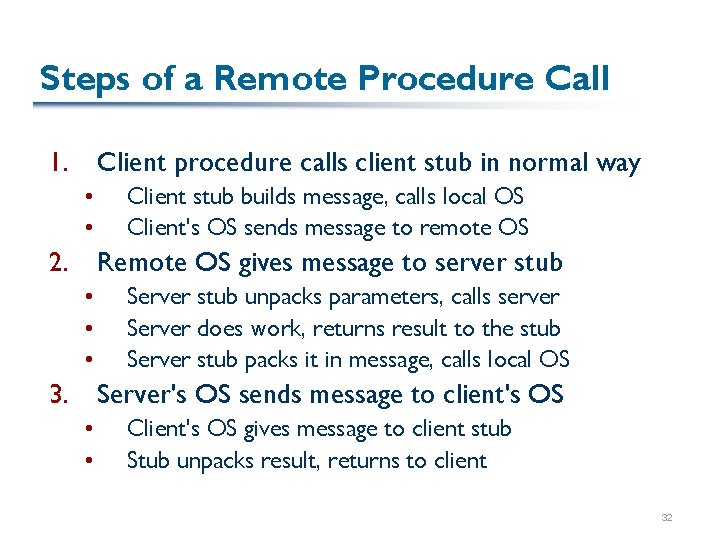

Steps of a Remote Procedure Call
1. Client procedure calls client stub in normal way
   • Client stub builds message, calls local OS
   • Client's OS sends message to remote OS
2. Remote OS gives message to server stub
   • Server stub unpacks parameters, calls server
   • Server does work, returns result to the stub
   • Server stub packs it in message, calls local OS
3. Server's OS sends message to client's OS
   • Client's OS gives message to client stub
   • Stub unpacks result, returns to client
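The roles of the two stubs can be sketched in one process, with JSON standing in for the marshalling format and a plain function call standing in for the OS and network. Everything here is hypothetical (the `add` function, the `REGISTRY` table, the message layout); real RPC systems generate the stubs from an interface definition.

```python
import json


def add(a, b):
    """The remote function F (a made-up example)."""
    return a + b


REGISTRY = {"add": add}   # functions the server is willing to run


def server_stub(request_bytes):
    """Unpack the parameters, call the function, pack status and result."""
    request = json.loads(request_bytes)
    try:
        value = REGISTRY[request["function"]](*request["args"])
        reply = {"status": "ok", "value": value}
    except Exception as exc:                     # report failures as a status
        reply = {"status": "error", "value": str(exc)}
    return json.dumps(reply).encode("utf-8")


def make_client_stub(name, transport):
    """Return a local function that looks like F but runs it via transport."""
    def stub(*args):
        message = json.dumps({"function": name, "args": list(args)})
        reply = json.loads(transport(message.encode("utf-8")))   # caller blocks here
        if reply["status"] != "ok":
            raise RuntimeError(reply["value"])
        return reply["value"]
    return stub


# The "network" is a direct call to the server stub in this sketch.
remote_add = make_client_stub("add", transport=server_stub)
total = remote_add(2, 3)
```

The caller uses `remote_add` like an ordinary function; the packing, sending, and unpacking all happen inside the stubs.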

RPC Components
§ Generally, you need to write:
  § Your function, in a compatible language
  § An interface definition, analogous to a C header file, so other people can program for F without having its source
§ Generally, software will take the interface definition and generate the appropriate stubs (in the case of Java, RMIC knows enough about Java to run directly on the source file)
§ The server stubs will generally run in some type of daemon process on the server
§ Each function will need a globally unique name or GUID

Parameter Passing Can Be Tricky Because of References
§ The situation when passing an object by reference or by value. (Fig. 2-18)
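A small example of why references are tricky: a local call lets the callee change the caller's object, but once the parameter is marshalled the callee only ever sees a copy. JSON is used here as a stand-in marshalling format, and the function and variable names are made up for illustration.

```python
import json


def append_item(items):
    """A callee that modifies the list it is given."""
    items.append("added by callee")


# Local call: the callee receives a reference to the caller's own list.
local_list = ["original"]
append_item(local_list)

# "Remote" call: the list is marshalled, so the server works on a copy.
remote_list = ["original"]
marshalled = json.dumps(remote_list)
server_copy = json.loads(marshalled)
append_item(server_copy)
```

After this runs, `local_list` contains the new item, while `remote_list` is unchanged unless the modified copy is explicitly shipped back to the caller.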

What Are the Hard Problems with RPC? Esp. Inter-Language RPC?
§ Resolving different data formats between languages (e.g., Java vs. Fortran arrays)
§ Reliability, security
§ Finding remote procedures in the first place
§ Extensibility/maintainability
§ (Some of these might look familiar from when we talked about data exchange!)

Web Services
§ Goal: provide an infrastructure for connecting components, building applications in a way similar to hyperlinks between data
§ It’s another distributed computing platform for the Web
  § Goal: Internet-scale, language-independent, upwards-compatible where possible
§ This one is based on many familiar concepts
  § Standard protocols: HTTP
  § Standard marshalling formats: XML-based, XML Schemas
  § All new data formats are XML-based

Three Parts to Web Services
1. “Wire” protocols
   § Data encodings, RPC calls or document passing, etc.
2. Describing what goes on the wire
   § Schemas for the data
3. “Service discovery”
   § Means of finding web services
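To make the "wire protocol" part concrete, here is a rough sketch of an XML-encoded RPC request wrapped in a SOAP 1.1 envelope, built with Python's standard `xml.etree.ElementTree`. The service namespace `urn:example:quotes`, the `GetQuote` method, and its `symbol` parameter are invented for illustration; a real service would define them in its description.

```python
import xml.etree.ElementTree as ET

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"   # SOAP 1.1 envelope namespace
SERVICE_NS = "urn:example:quotes"                        # hypothetical service namespace


def build_request(method, params):
    """Marshal a call as <Envelope><Body><method><param>...</param></method></Body></Envelope>."""
    ET.register_namespace("soap", SOAP_ENV)
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{SERVICE_NS}}}{method}")
    for name, value in params.items():
        ET.SubElement(call, name).text = str(value)
    return ET.tostring(envelope, encoding="unicode")


def parse_request(xml_text):
    """Unmarshal the method name and parameters on the receiving side."""
    envelope = ET.fromstring(xml_text)
    call = envelope.find(f"{{{SOAP_ENV}}}Body")[0]       # first child of Body
    method = call.tag.split("}", 1)[1]                   # strip the namespace
    return method, {child.tag: child.text for child in call}


request_xml = build_request("GetQuote", {"symbol": "GOOG"})
method, params = parse_request(request_xml)
```

In practice the request text would be sent as the body of an HTTP POST, and a schema would describe which elements are allowed.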

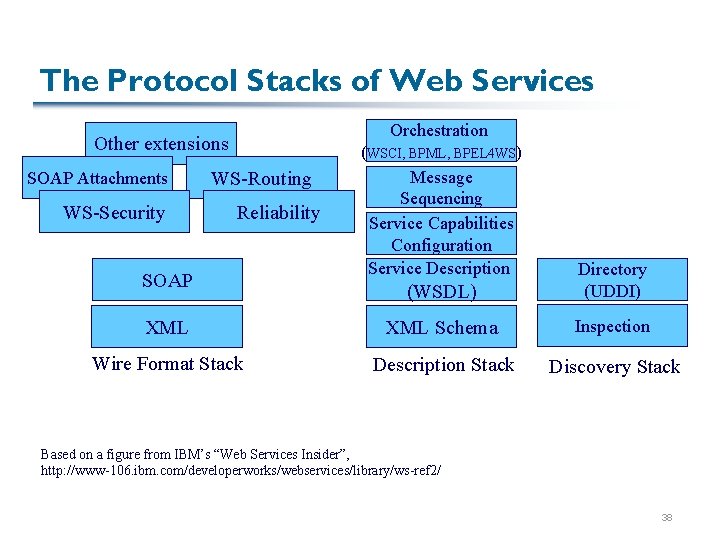

The Protocol Stacks of Web Services
[Figure: three stacks labeled Wire Format Stack, Description Stack, and Discovery Stack. Other labels in the figure: SOAP; SOAP Attachments; WS-Security; WS-Routing; Reliability; Message Sequencing; Other extensions; XML Schema; Service Description (WSDL); Service Capabilities Configuration; Orchestration (WSCI, BPML, BPEL4WS); Inspection; Directory (UDDI)]
Based on a figure from IBM’s “Web Services Insider”, http://www-106.ibm.com/developerworks/webservices/library/ws-ref2/
