
Distance-Constraint Reachability Computation in Uncertain Graphs
Ruoming Jin, Lin Liu (Kent State University); Bolin Ding (UIUC); Haixun Wang (MSRA)

Why Uncertain Graphs?
• Graph/network data is increasingly important: social networks, biological networks, traffic/transportation networks, peer-to-peer networks.
• The probabilistic perspective has been receiving more and more attention recently.
• Uncertainty is ubiquitous!
– Protein-protein interaction networks: false positive rate > 45%
– Social networks: probabilistic trust/influence model

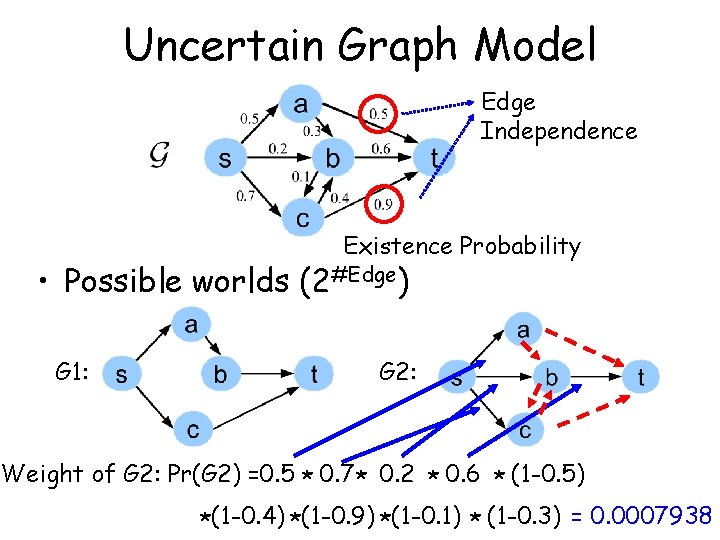

Uncertain Graph Model
• Edge independence: each edge has its own existence probability.
• Possible worlds: 2^(#Edge) of them (G1 and G2 in the figure are two examples).
• Weight of G2: Pr(G2) = 0.5 * 0.7 * 0.2 * 0.6 * (1 - 0.5) * (1 - 0.4) * (1 - 0.9) * (1 - 0.1) * (1 - 0.3) = 0.0007938
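The weight of a possible world can be checked with a few lines of Python. This is a minimal sketch: the edge labels `e1` to `e9` and the helper name `world_probability` are hypothetical, and the assignment of probabilities to labels simply follows the order of the factors in the product above (four edges present, five absent).

```python
from math import prod

def world_probability(edge_prob, present):
    """Probability of one possible world under edge independence.

    Present edges contribute p(e); absent edges contribute 1 - p(e).
    """
    return prod(p if e in present else 1.0 - p for e, p in edge_prob.items())

# Hypothetical labels; probabilities taken from the factors on the slide.
edge_prob = {"e1": 0.5, "e2": 0.7, "e3": 0.2, "e4": 0.6,
             "e5": 0.5, "e6": 0.4, "e7": 0.9, "e8": 0.1, "e9": 0.3}
present = {"e1", "e2", "e3", "e4"}  # edges that exist in G2

pr_g2 = world_probability(edge_prob, present)
print(round(pr_g2, 7))
```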

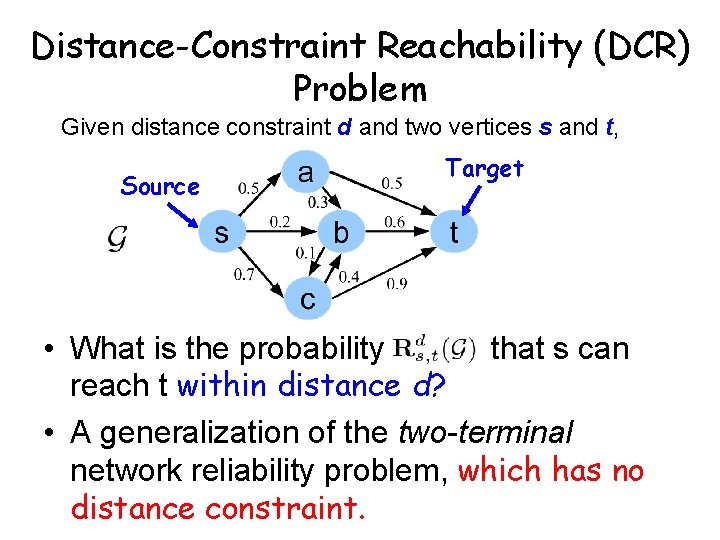

Distance-Constraint Reachability (DCR) Problem
• Given a distance constraint d and two vertices s (source) and t (target):
• What is the probability that s can reach t within distance d?
• A generalization of the two-terminal network reliability problem, which has no distance constraint.

Important Applications
• Peer-to-Peer (P2P) Networks
– Communication happens only when node distance is limited.
• Social Networks
– Trust/influence can be propagated only through a small number of hops.
• Traffic Networks
– Travel distance (travel time) query
– What is the probability that we can reach the airport within one hour?

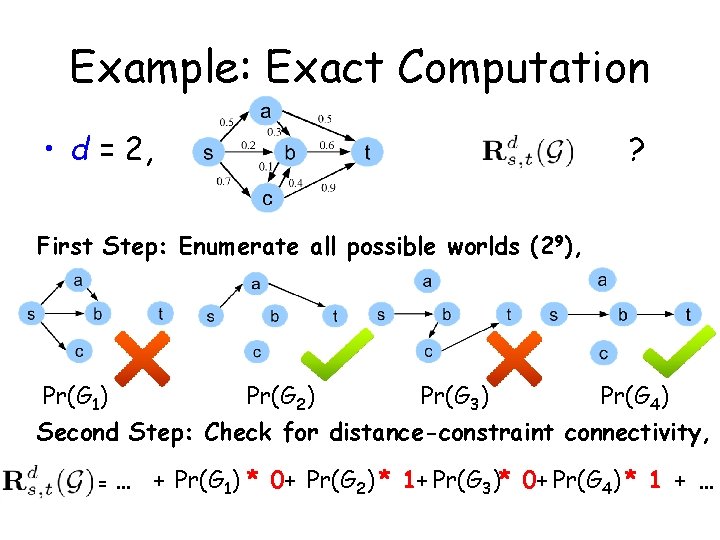

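Example: Exact Computation
• d = 2: what is the probability that s reaches t within distance d?
• First step: enumerate all possible worlds (2^9), with weights Pr(G1), Pr(G2), Pr(G3), Pr(G4), …
• Second step: check each world for distance-constraint connectivity (1 if s reaches t within d, 0 otherwise) and sum the weighted results:
probability = … + Pr(G1) * 0 + Pr(G2) * 1 + Pr(G3) * 0 + Pr(G4) * 1 + …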
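The two steps can be sketched in Python as a brute-force enumeration. This is an illustration under stated assumptions, not the authors' code: the 5-edge directed graph below is hypothetical (it is not the 9-edge graph in the figure), distances are hop counts, and the names `within_distance` and `exact_dcr` are made up for this sketch.

```python
from collections import deque
from itertools import product

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue  # do not expand past the distance budget
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def exact_dcr(edge_prob, s, t, d):
    """Sum Pr(G) over all 2^|E| possible worlds in which s reaches t within d."""
    edges = list(edge_prob)
    total = 0.0
    for mask in product((False, True), repeat=len(edges)):
        pr = 1.0
        world = []
        for e, keep in zip(edges, mask):
            pr *= edge_prob[e] if keep else 1.0 - edge_prob[e]
            if keep:
                world.append(e)
        if within_distance(world, s, t, d):
            total += pr  # indicator is 1 for this world
    return total

# Hypothetical toy graph: (tail, head) -> existence probability.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
result = exact_dcr(edge_prob, "s", "t", 2)
print(round(result, 6))
```

For this toy graph the only qualifying paths are s→a→t and s→b→t, which share no edge, so the value can be cross-checked by hand as 1 - (1 - 0.3)(1 - 0.28) = 0.496. The loop visits 2^|E| worlds, which is why the approach does not scale.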

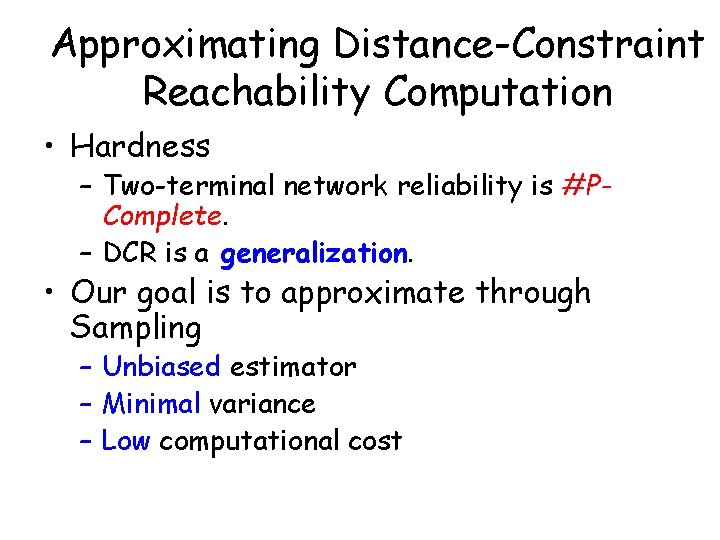

Approximating Distance-Constraint Reachability Computation
• Hardness
– Two-terminal network reliability is #P-complete.
– DCR is a generalization.
• Our goal is to approximate through sampling
– Unbiased estimator
– Minimal variance
– Low computational cost

Start from the most intuitive estimators, right?

Direct Sampling Approach
• Sampling process
– Sample n graphs
– Sample each graph according to its edge probabilities

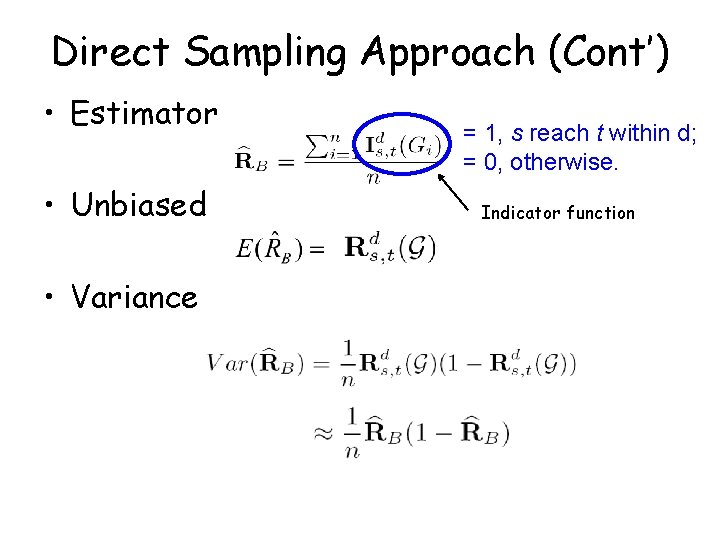

Direct Sampling Approach (Cont’)
• Estimator, built on an indicator function: = 1 if s reaches t within d in the sampled graph; = 0 otherwise.
• Unbiased
• Variance
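A minimal sketch of direct sampling, assuming hop-count distances and a hypothetical 5-edge directed graph (not the one in the figures). The estimate is simply the fraction of sampled worlds whose indicator is 1; because each sample is a Bernoulli trial with success probability R (the true DCR value), the variance of this average is R(1 - R)/n. The function names are illustrative only.

```python
import random
from collections import deque

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def direct_sampling(edge_prob, s, t, d, n, rng):
    """Average the indicator over n independently sampled possible worlds."""
    hits = 0
    for _ in range(n):
        # Keep each edge independently with its existence probability.
        world = [e for e, p in edge_prob.items() if rng.random() < p]
        hits += within_distance(world, s, t, d)
    return hits / n

# Hypothetical toy graph; its exact DCR value for d = 2 is 0.496.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
estimate = direct_sampling(edge_prob, "s", "t", 2, 20000, random.Random(0))
print(estimate)
```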

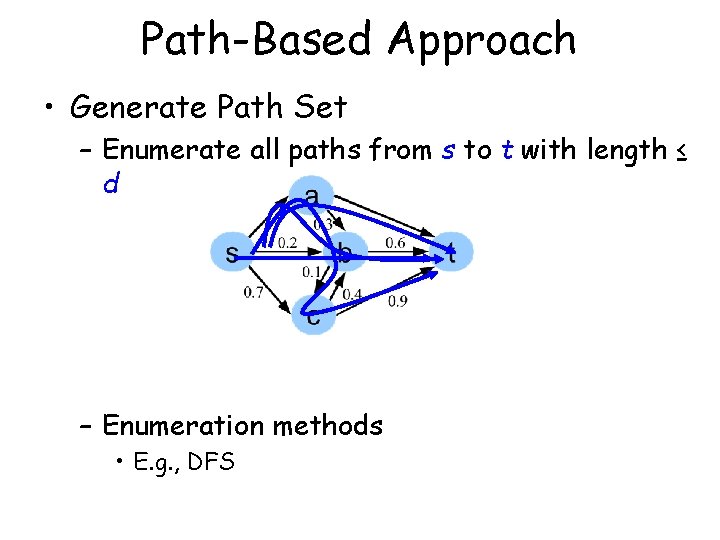

Path-Based Approach
• Generate path set
– Enumerate all paths from s to t with length ≤ d
– Enumeration methods, e.g., DFS

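Path-Based Approach (Cont’)
• Path set: the enumerated s-t paths with length ≤ d; s reaches t within d exactly when all edges of at least one of these paths exist.
• Exactly computed by the Inclusion-Exclusion principle
• Approximated by the Monte-Carlo algorithm of R. M. Karp and M. G. Luby
– Unbiased
– Variance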
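The exact, inclusion-exclusion variant of the path-based approach can be sketched as follows. Assumptions: hop-count distances, a hypothetical 5-edge directed toy graph, and made-up function names. A subset of paths is jointly present with probability equal to the product of p(e) over the union of their edges, and the signs alternate with the subset size. The cost is exponential in the number of paths, which is what motivates the Karp-Luby style approximation (not shown here).

```python
from itertools import combinations
from math import prod

def enumerate_paths(edge_prob, s, t, d):
    """DFS for all simple s-t paths with at most d edges, each as a set of edges."""
    adj = {}
    for u, v in edge_prob:
        adj.setdefault(u, []).append(v)
    paths = []

    def dfs(u, visited, path_edges):
        if u == t:
            paths.append(frozenset(path_edges))
            return
        if len(path_edges) == d:
            return  # distance budget exhausted
        for v in adj.get(u, []):
            if v not in visited:
                dfs(v, visited | {v}, path_edges + [(u, v)])

    dfs(s, {s}, [])
    return paths

def inclusion_exclusion(edge_prob, paths):
    """Probability that at least one path has all of its edges present."""
    total = 0.0
    for k in range(1, len(paths) + 1):
        sign = 1.0 if k % 2 == 1 else -1.0
        for subset in combinations(paths, k):
            union = frozenset().union(*subset)
            total += sign * prod(edge_prob[e] for e in union)
    return total

# Hypothetical toy graph: (tail, head) -> existence probability.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
paths = enumerate_paths(edge_prob, "s", "t", 2)
reach = inclusion_exclusion(edge_prob, paths)
print(len(paths), round(reach, 6))
```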

Can we do better?

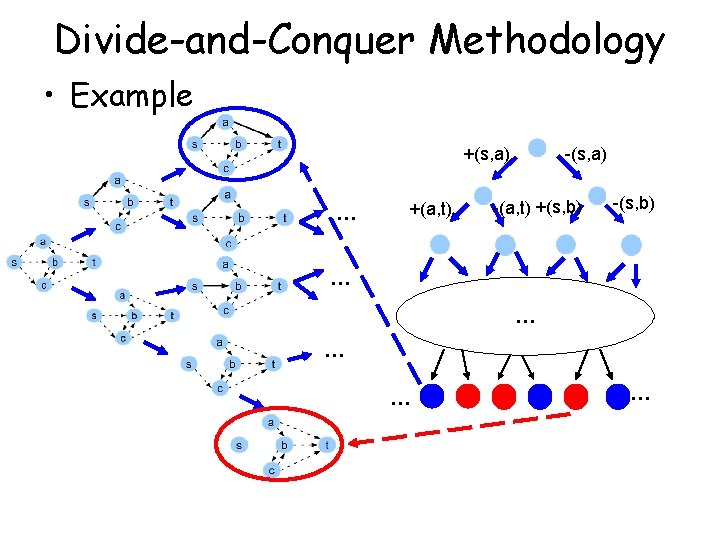

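Divide-and-Conquer Methodology
• Example: an enumeration tree that branches on one edge at a time, with "+" for including the edge and "-" for excluding it: +(s, a) / -(s, a), then +(a, t) / -(a, t), +(s, b) / -(s, b), …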

Divide and Conquer (Cont’)
• The root holds all possible worlds; it splits into the graphs having e1 and the graphs not having e1.
• Blue leaf node: s can reach t. Red leaf node: s can not reach t.
Summarize:
1. # of leaf nodes is smaller than 2^|E|.
2. Each possible world exists only in one leaf node.
3. Reachability is the sum of the weights of blue nodes.
4. Leaf nodes form a nice sample space.
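The enumeration tree can be sketched as a short recursion. This is an illustrative reading of the slides, not the authors' implementation: a node becomes a blue leaf as soon as the included edges alone connect s to t within d, a red leaf as soon as even all non-excluded edges cannot, and otherwise it branches on an undecided edge. The edge-selection order (first undecided edge), the toy graph, and all names are assumptions.

```python
from collections import deque

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def dcr_divide_and_conquer(edge_prob, s, t, d):
    """Exact DCR: sum of the weights of the blue leaves of the enumeration tree."""
    edges = list(edge_prob)

    def recurse(included, excluded):
        if within_distance(included, s, t, d):
            return 1.0  # blue leaf: every world in this node reaches t
        remaining = [e for e in edges if e not in excluded]
        if not within_distance(remaining, s, t, d):
            return 0.0  # red leaf: no world in this node reaches t
        e = next(x for x in edges if x not in included and x not in excluded)
        p = edge_prob[e]
        return (p * recurse(included | {e}, excluded)
                + (1.0 - p) * recurse(included, excluded | {e}))

    return recurse(frozenset(), frozenset())

# Hypothetical toy graph: (tail, head) -> existence probability.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
value = dcr_divide_and_conquer(edge_prob, "s", "t", 2)
print(round(value, 6))
```

On this toy graph the recursion stops at 10 leaves instead of visiting all 2^5 = 32 possible worlds, which illustrates point 1 of the summary.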

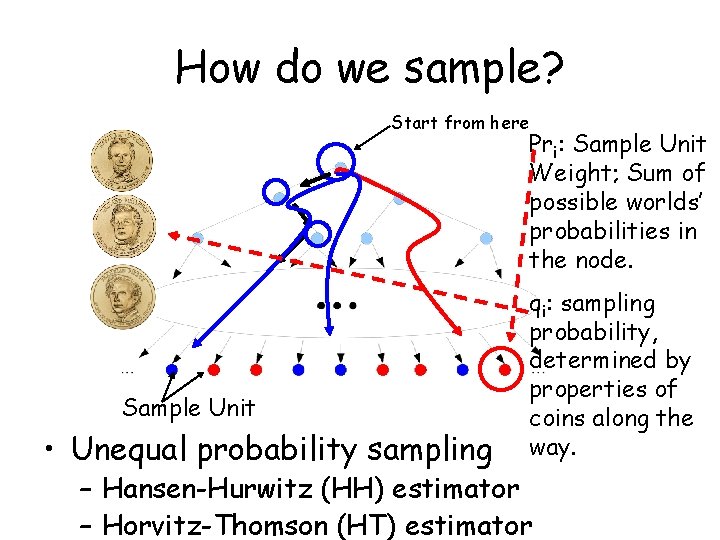

How do we sample?
• Start from the root of the tree; each leaf node is a sample unit.
• Unequal probability sampling
– Pr_i: sample unit weight, i.e. the sum of the possible worlds’ probabilities in the node.
– q_i: sampling probability, determined by the properties of the coins tossed along the way.
• Two estimators
– Hansen-Hurwitz (HH) estimator
– Horvitz-Thompson (HT) estimator

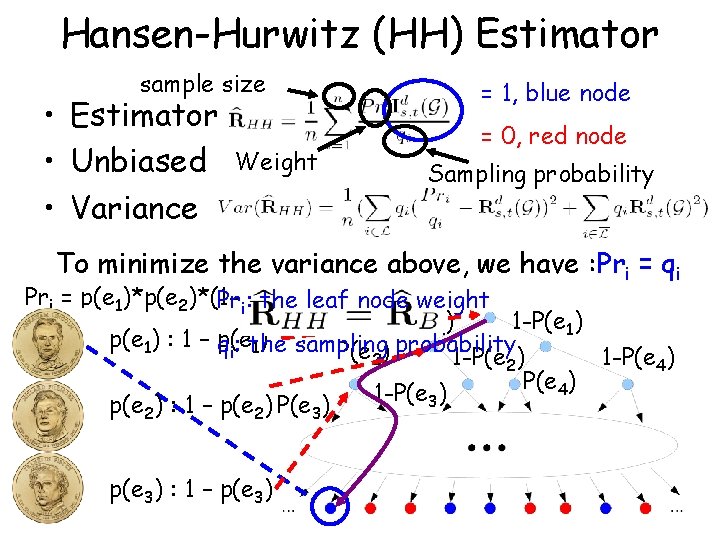

Hansen-Hurwitz (HH) Estimator
• Estimator, with n the sample size
– Indicator: = 1 for a blue node, = 0 for a red node
– Pr_i: the leaf node weight, e.g. Pr_i = p(e1) * p(e2) * (1 - p(e3)) * …
– q_i: the sampling probability
• Unbiased
• Variance
– To minimize the variance, we have: Pr_i = q_i
– That is, the coin tossed at each branch has odds p(e) : 1 - p(e), e.g. p(e1) : 1 - p(e1), p(e2) : 1 - p(e2), p(e3) : 1 - p(e3)
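A sketch of HH sampling over the leaves, using the standard Hansen-Hurwitz form: the average of Pr_i * y_i / q_i over n leaves drawn with replacement, where y_i is the blue/red indicator. Assumptions: hop-count distances, a hypothetical toy graph, branching on the first undecided edge, and an illustrative `coin` parameter that lets the branch coins differ from p(e). With the default `coin = edge_prob`, q_i equals Pr_i and every ratio is 1, so the estimate reduces to the fraction of blue leaves.

```python
import random
from collections import deque

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def sample_leaf(edge_prob, coin, s, t, d, rng):
    """Walk root-to-leaf by coin tosses; return (y, Pr_i, q_i) of the leaf reached."""
    edges = list(edge_prob)
    included, excluded = set(), set()
    pr, q = 1.0, 1.0
    while True:
        if within_distance(included, s, t, d):
            return 1, pr, q  # blue leaf
        if not within_distance([e for e in edges if e not in excluded], s, t, d):
            return 0, pr, q  # red leaf
        e = next(x for x in edges if x not in included and x not in excluded)
        if rng.random() < coin[e]:
            included.add(e)
            pr *= edge_prob[e]
            q *= coin[e]
        else:
            excluded.add(e)
            pr *= 1.0 - edge_prob[e]
            q *= 1.0 - coin[e]

def hh_estimate(edge_prob, s, t, d, n, rng, coin=None):
    """Hansen-Hurwitz estimate: mean of y_i * Pr_i / q_i over n sampled leaves."""
    coin = edge_prob if coin is None else coin
    total = 0.0
    for _ in range(n):
        y, pr, q = sample_leaf(edge_prob, coin, s, t, d, rng)
        total += y * pr / q
    return total / n

# Hypothetical toy graph; its exact DCR value for d = 2 is 0.496.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
hh = hh_estimate(edge_prob, "s", "t", 2, 20000, random.Random(0))
print(hh)
```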

Horvitz-Thompson (HT) Estimator
• Estimator, computed over the unique sample units
• Unbiased
• Variance
– To minimize the variance, we find Pr_i = q_i
– Smaller variance than the HH estimator
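A sketch of the HT idea in its standard form for sampling with replacement: each distinct sampled leaf contributes Pr_i * y_i / π_i once, where π_i = 1 - (1 - q_i)^n is the probability that leaf i appears at least once among n draws. Assumptions: q_i = Pr_i (coins tossed with bias p(e)), hop-count distances, a hypothetical toy graph, first-undecided-edge branching, and illustrative names; the slide's own formula is not reproduced here.

```python
import random
from collections import deque

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def sample_leaf(edge_prob, s, t, d, rng):
    """Walk root-to-leaf with coins of bias p(e); return (leaf id, y, Pr_i)."""
    edges = list(edge_prob)
    included, excluded = set(), set()
    pr = 1.0
    while True:
        if within_distance(included, s, t, d):
            y = 1  # blue leaf
            break
        if not within_distance([e for e in edges if e not in excluded], s, t, d):
            y = 0  # red leaf
            break
        e = next(x for x in edges if x not in included and x not in excluded)
        if rng.random() < edge_prob[e]:
            included.add(e)
            pr *= edge_prob[e]
        else:
            excluded.add(e)
            pr *= 1.0 - edge_prob[e]
    return (frozenset(included), frozenset(excluded)), y, pr

def ht_estimate(edge_prob, s, t, d, n, rng):
    """Horvitz-Thompson estimate over the distinct leaves seen in n draws."""
    unique = {}
    for _ in range(n):
        leaf, y, pr = sample_leaf(edge_prob, s, t, d, rng)
        unique[leaf] = (y, pr)  # repeated draws of a leaf count once
    total = 0.0
    for y, pr in unique.values():
        inclusion = 1.0 - (1.0 - pr) ** n  # pi_i with q_i = Pr_i
        total += y * pr / inclusion
    return total

# Hypothetical toy graph; its exact DCR value for d = 2 is 0.496.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
ht = ht_estimate(edge_prob, "s", "t", 2, 2000, random.Random(0))
print(ht)
```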

Can we further reduce the variance and computational cost?

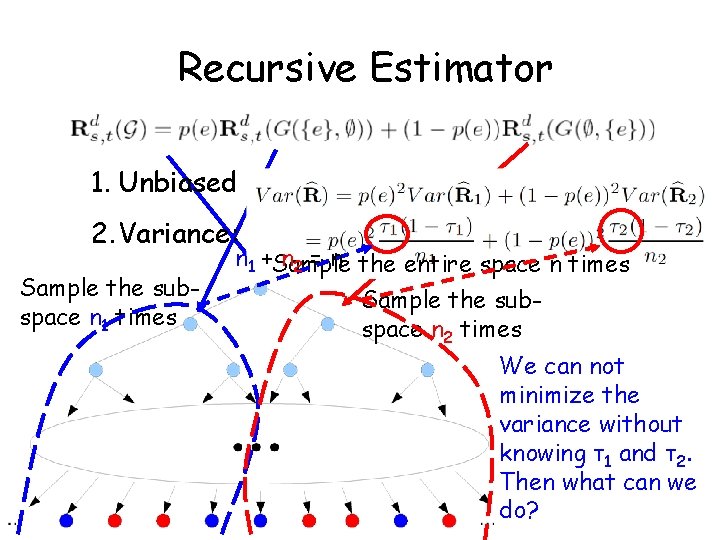

Recursive Estimator
• Instead of sampling the entire space n times, sample one subspace n1 times and the other subspace n2 times, with n1 + n2 = n.
1. Unbiased
2. Variance: we can not minimize the variance without knowing τ1 and τ2.
Then what can we do?

Sample Allocation
• We guess: what if
– n1 = n * p(e)
– n2 = n * (1 - p(e))?
• We find: variance reduced!
– HH estimator
– HT estimator

Sample Allocation (Cont’)
• Sampling time reduced!
• Sample size = n; directly allocate samples down the tree:
– n1 = n * p(e1)
– n2 = n * (1 - p(e1))
– n3 = n1 * p(e2)
– n4 = n1 * (1 - p(e2))
• Toss coins when the sample size is small
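The allocation scheme can be sketched as a recursion that splits the sample budget deterministically and only tosses coins once the budget is small. This is a simplified illustration, not the authors' Recursive HH/HT code: rounding n * p(e) to an integer, the `threshold` parameter, the fallback to plain coin-toss sampling (averaging the blue/red indicator), the toy graph, and all names are assumptions.

```python
import random
from collections import deque

def within_distance(edges, s, t, d):
    """Return True if t is reachable from s using at most d directed edges."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, []).append(v)
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if u == t:
            return True
        if dist[u] == d:
            continue
        for v in adj.get(u, []):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return False

def recursive_estimate(edge_prob, s, t, d, n, rng, threshold=5):
    """Combine subspace estimates as p(e) * est1 + (1 - p(e)) * est2."""
    edges = list(edge_prob)

    def status(included, excluded):
        if within_distance(included, s, t, d):
            return 1  # blue leaf
        if not within_distance([e for e in edges if e not in excluded], s, t, d):
            return 0  # red leaf
        return None  # internal node

    def undecided(included, excluded):
        return next(x for x in edges if x not in included and x not in excluded)

    def walk(included, excluded):
        """Toss coins from this node down to a leaf; return its indicator."""
        inc, exc = set(included), set(excluded)
        while True:
            y = status(inc, exc)
            if y is not None:
                return y
            e = undecided(inc, exc)
            (inc if rng.random() < edge_prob[e] else exc).add(e)

    def recurse(included, excluded, budget):
        y = status(included, excluded)
        if y is not None:
            return float(y)
        e = undecided(included, excluded)
        p = edge_prob[e]
        n1 = round(budget * p)  # samples allocated to the "+e" subspace
        n2 = budget - n1        # samples allocated to the "-e" subspace
        if budget < threshold or n1 == 0 or n2 == 0:
            return sum(walk(included, excluded) for _ in range(budget)) / budget
        return (p * recurse(included | {e}, excluded, n1)
                + (1.0 - p) * recurse(included, excluded | {e}, n2))

    return recurse(frozenset(), frozenset(), n)

# Hypothetical toy graph; its exact DCR value for d = 2 is 0.496.
edge_prob = {("s", "a"): 0.5, ("s", "b"): 0.7, ("a", "t"): 0.6,
             ("b", "t"): 0.4, ("a", "b"): 0.9}
rec = recursive_estimate(edge_prob, "s", "t", 2, 1000, random.Random(0))
print(rec)
```

When the budget stays above the threshold all the way down, no coin is tossed at all and the branch contributes its exact weight, which is where both the variance and the sampling-time savings come from in this sketch.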

Experimental Setup
• Experiment setting
– Goal: relative error, variance, computational time
– System specification: 2.0 GHz Dual Core AMD Opteron CPU, 4.0 GB RAM, Linux

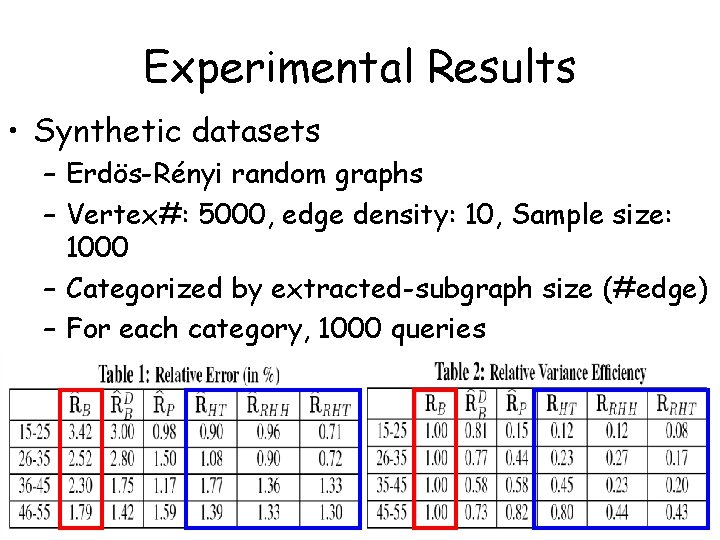

Experimental Results
• Synthetic datasets
– Erdős-Rényi random graphs
– Vertex#: 5000, edge density: 10, sample size: 1000
– Categorized by extracted-subgraph size (#edge)
– For each category, 1000 queries

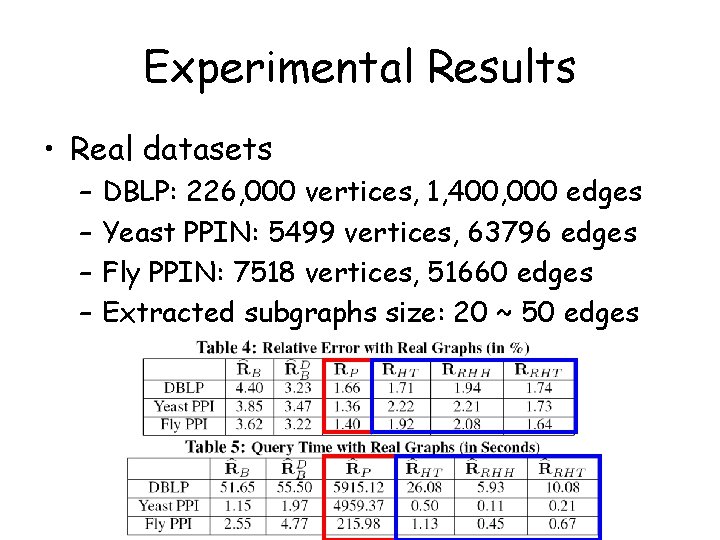

Experimental Results
• Real datasets
– DBLP: 226,000 vertices, 1,400,000 edges
– Yeast PPIN: 5499 vertices, 63796 edges
– Fly PPIN: 7518 vertices, 51660 edges
– Extracted subgraph size: 20 ~ 50 edges

Conclusions
• We first propose a novel s-t distance-constraint reachability problem in uncertain graphs.
• An efficient exact computation algorithm is developed based on a divide-and-conquer scheme.
• In addition to two classic reachability estimators, we introduce two unequal probability sampling estimators: the Hansen-Hurwitz (HH) estimator and the Horvitz-Thompson (HT) estimator.
• Based on the enumeration tree framework, two recursive estimators, Recursive HH and Recursive HT, are constructed to reduce estimation variance and time.
• Experiments demonstrate the accuracy and efficiency of our estimators.

Thank you! Questions?
