Discrete Random Variables

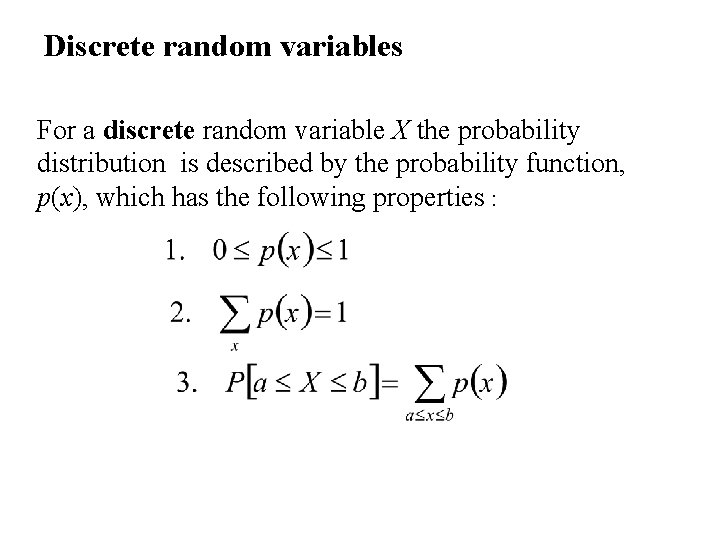

Discrete random variables

For a discrete random variable X, the probability distribution is described by the probability function, p(x), which has the following properties:

1. $0 \le p(x) \le 1$
2. $\sum_x p(x) = 1$
3. $P[a \le X \le b] = \sum_{a \le x \le b} p(x)$

Discrete distributions

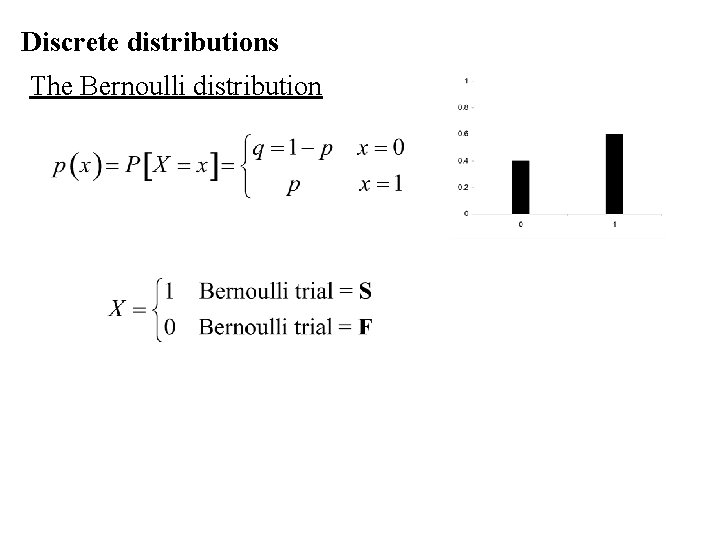

The Bernoulli distribution

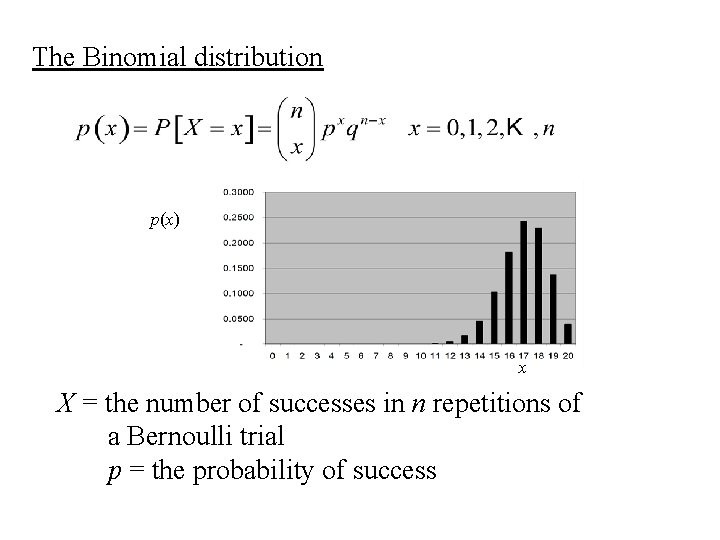

The Binomial distribution

X = the number of successes in n repetitions of a Bernoulli trial, and p = the probability of success on each trial.

$$p(x) = \binom{n}{x} p^x (1-p)^{n-x}, \quad x = 0, 1, \ldots, n$$
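As a minimal sketch, the binomial probability function p(x) = C(n, x) p^x (1 − p)^(n − x) can be evaluated directly. The function name `binomial_pmf` and the values n = 10, p = 0.3 are illustrative assumptions, not taken from the slides.

```python
from math import comb


def binomial_pmf(x: int, n: int, p: float) -> float:
    """P[X = x], where X counts successes in n independent Bernoulli trials."""
    if x < 0 or x > n:
        return 0.0
    return comb(n, x) * p**x * (1 - p) ** (n - x)


n, p = 10, 0.3  # illustrative values only
probs = [binomial_pmf(x, n, p) for x in range(n + 1)]

print(sum(probs))             # total probability, should be very close to 1
print(binomial_pmf(3, n, p))  # probability of exactly 3 successes
```

Summing the probabilities over x = 0, ..., n is a quick sanity check that the function really is a probability function.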

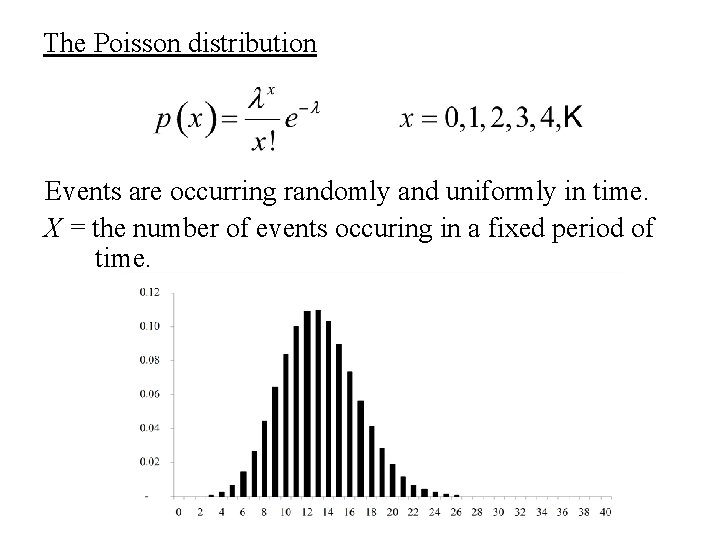

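The Poisson distribution

Events are occurring randomly and uniformly in time. X = the number of events occurring in a fixed period of time.

$$p(x) = \frac{\lambda^x e^{-\lambda}}{x!}, \quad x = 0, 1, 2, \ldots$$

where λ is the expected number of events in the period.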
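A short sketch of the Poisson probability function p(x) = λ^x e^(−λ) / x!, writing `lam` for the expected number of events in the period. The name `poisson_pmf` and the value 2.5 are illustrative assumptions.

```python
from math import exp, factorial


def poisson_pmf(x: int, lam: float) -> float:
    """P[X = x] for the number of events in a fixed period, with mean lam."""
    if x < 0:
        return 0.0
    return lam**x * exp(-lam) / factorial(x)


lam = 2.5  # illustrative rate only
# Truncate the infinite sum at 60 terms; the remaining tail is negligible here.
total = sum(poisson_pmf(x, lam) for x in range(60))
mean = sum(x * poisson_pmf(x, lam) for x in range(60))

print(total)  # should be very close to 1
print(mean)   # should be very close to lam
```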

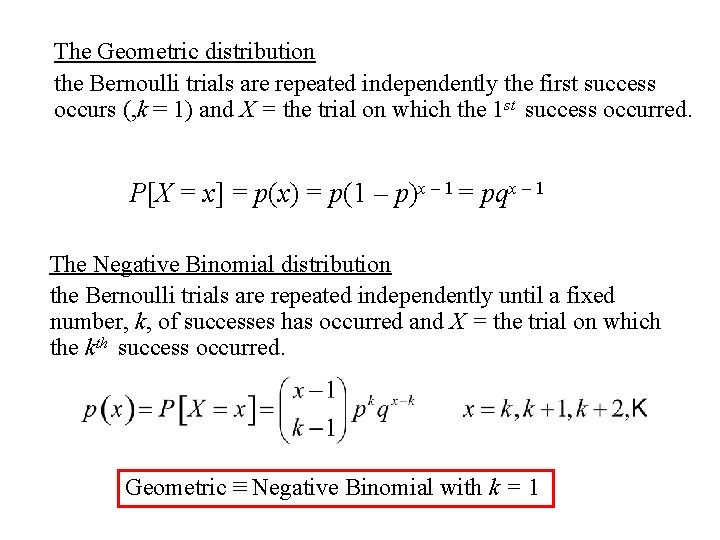

The Geometric distribution

The Bernoulli trials are repeated independently until the first success occurs (k = 1), and X = the trial on which the 1st success occurred.

$$P[X = x] = p(x) = p(1-p)^{x-1} = pq^{x-1}, \quad x = 1, 2, \ldots$$

where q = 1 − p.

The Negative Binomial distribution

The Bernoulli trials are repeated independently until a fixed number, k, of successes has occurred, and X = the trial on which the kth success occurred.

Geometric ≡ Negative Binomial with k = 1
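The relation between the two distributions can be checked numerically. This sketch assumes the standard negative binomial probability function p(x) = C(x − 1, k − 1) p^k q^(x − k), which is not written out in the text above; the function names are hypothetical.

```python
from math import comb


def geometric_pmf(x: int, p: float) -> float:
    """P[first success occurs on trial x]."""
    return p * (1 - p) ** (x - 1) if x >= 1 else 0.0


def neg_binomial_pmf(x: int, k: int, p: float) -> float:
    """P[k-th success occurs on trial x] (assumed standard form).

    The first x - 1 trials must contain exactly k - 1 successes,
    and trial x itself must be a success.
    """
    if x < k:
        return 0.0
    return comb(x - 1, k - 1) * p**k * (1 - p) ** (x - k)


p = 0.2  # illustrative success probability
largest_gap = max(
    abs(geometric_pmf(x, p) - neg_binomial_pmf(x, 1, p)) for x in range(1, 30)
)
print(largest_gap)  # should be 0 up to rounding: Geometric = Negative Binomial, k = 1
```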

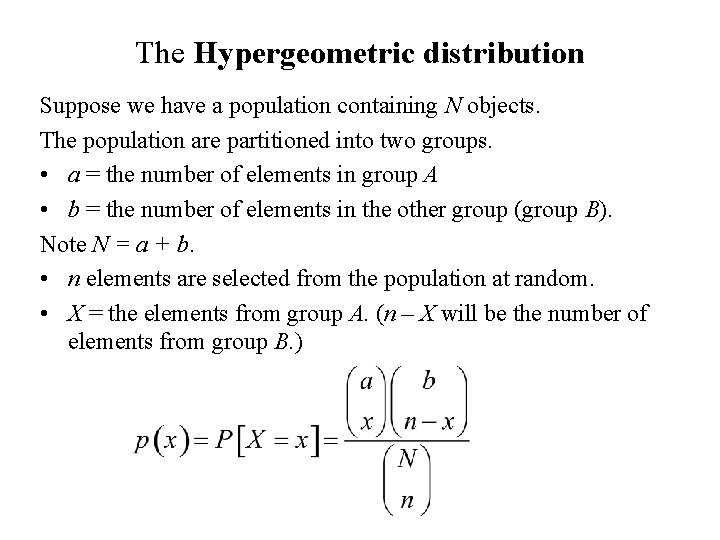

The Hypergeometric distribution

Suppose we have a population containing N objects. The population is partitioned into two groups.

• a = the number of elements in group A
• b = the number of elements in the other group (group B). Note N = a + b.
• n elements are selected from the population at random.
• X = the number of selected elements from group A. (n – X will be the number of selected elements from group B.)
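A minimal sketch of the corresponding probability function, assuming the standard counting form p(x) = C(a, x) C(b, n − x) / C(N, n) for sampling without replacement. The function name and the numbers a = 5, b = 7, n = 4 are illustrative assumptions.

```python
from math import comb


def hypergeometric_pmf(x: int, a: int, b: int, n: int) -> float:
    """P[X = x]: x of the n selected elements come from group A (size a)."""
    N = a + b
    if x < 0 or x > a or n - x < 0 or n - x > b:
        return 0.0
    # Ways to pick x from A and n - x from B, over all ways to pick n from N.
    return comb(a, x) * comb(b, n - x) / comb(N, n)


a, b, n = 5, 7, 4  # illustrative group sizes and sample size
probs = [hypergeometric_pmf(x, a, b, n) for x in range(n + 1)]
print(probs)
print(sum(probs))  # should be very close to 1
```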

Continuous Distributions

Continuous random variables

For a continuous random variable X, the probability distribution is described by the probability density function f(x), which has the following properties:

1. $f(x) \ge 0$
2. $\int_{-\infty}^{\infty} f(x)\,dx = 1$
3. $P[a \le X \le b] = \int_a^b f(x)\,dx$

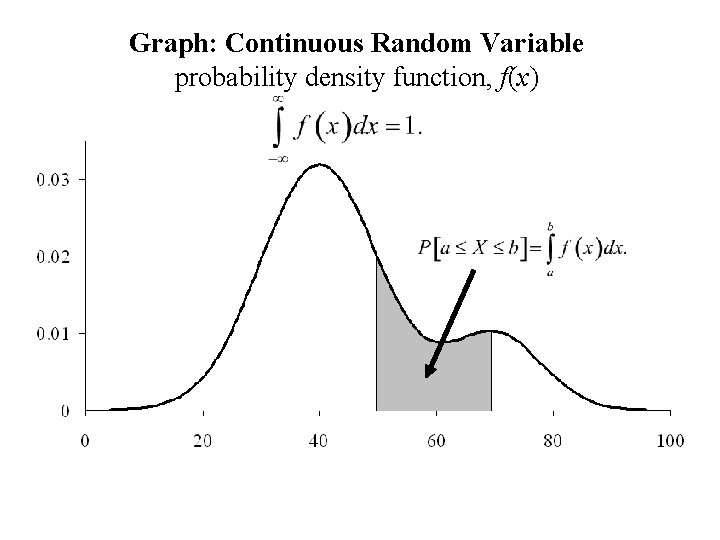

Graph: Continuous Random Variable probability density function, f(x)

The Uniform distribution from a to b

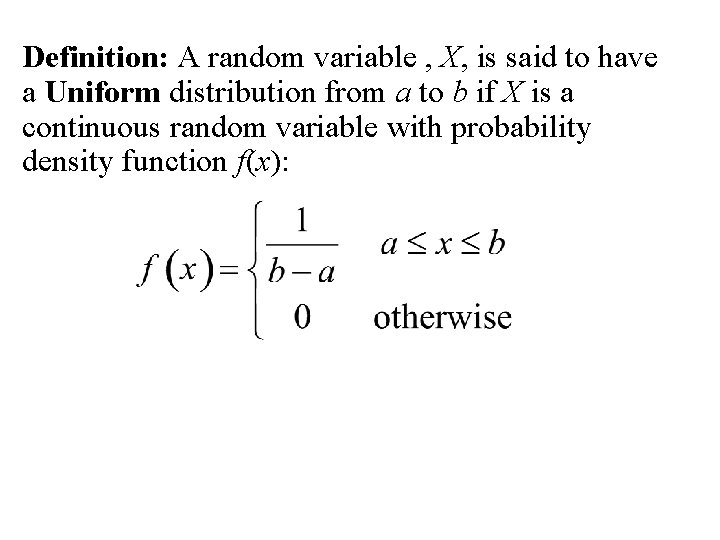

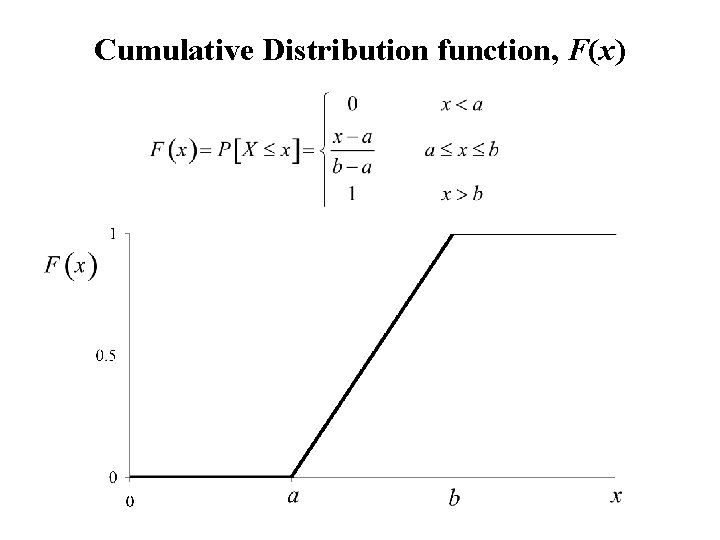

Definition: A random variable, X, is said to have a Uniform distribution from a to b if X is a continuous random variable with probability density function f(x):

$$f(x) = \begin{cases} \dfrac{1}{b-a} & a \le x \le b \\ 0 & \text{otherwise} \end{cases}$$

Graph: the Uniform Distribution (from a to b)

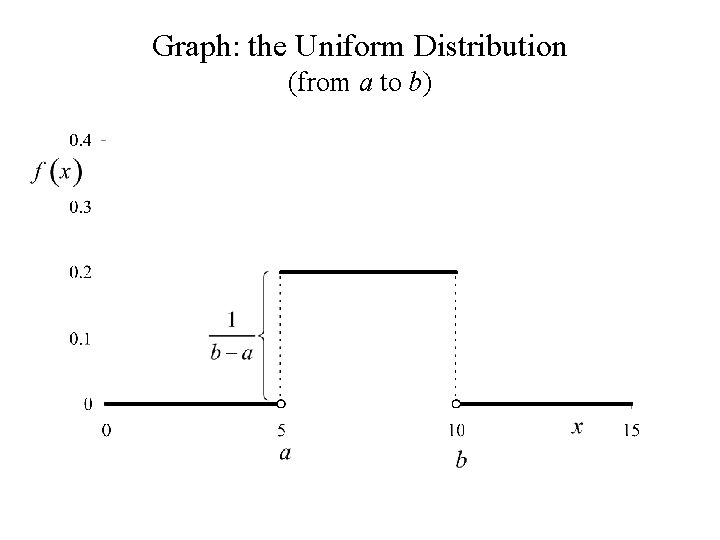

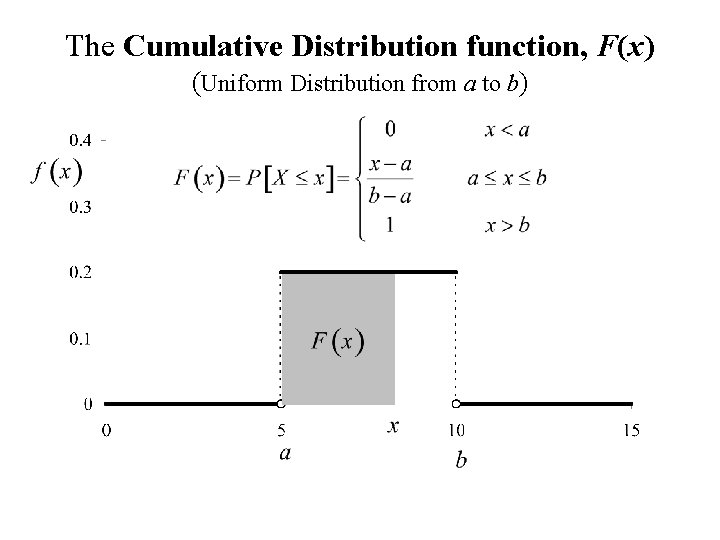

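The Cumulative Distribution function, F(x) (Uniform distribution from a to b)

$$F(x) = P[X \le x] = \begin{cases} 0 & x < a \\ \dfrac{x-a}{b-a} & a \le x \le b \\ 1 & x > b \end{cases}$$

Graph: Cumulative Distribution function, F(x)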
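As a small sketch, the Uniform density and cumulative distribution function can be written as two Python functions, with an interval probability obtained as a difference of F values. The function names and the endpoints a = 2, b = 6 are illustrative assumptions.

```python
def uniform_pdf(x: float, a: float, b: float) -> float:
    """Density of the Uniform distribution from a to b."""
    return 1.0 / (b - a) if a <= x <= b else 0.0


def uniform_cdf(x: float, a: float, b: float) -> float:
    """F(x) = P[X <= x] for the Uniform distribution from a to b."""
    if x < a:
        return 0.0
    if x > b:
        return 1.0
    return (x - a) / (b - a)


a, b = 2.0, 6.0  # illustrative endpoints
# P[3 <= X <= 5] = F(5) - F(3)
prob = uniform_cdf(5.0, a, b) - uniform_cdf(3.0, a, b)
print(uniform_pdf(4.0, a, b))  # height of the density inside [a, b]
print(prob)
```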

The Normal distribution

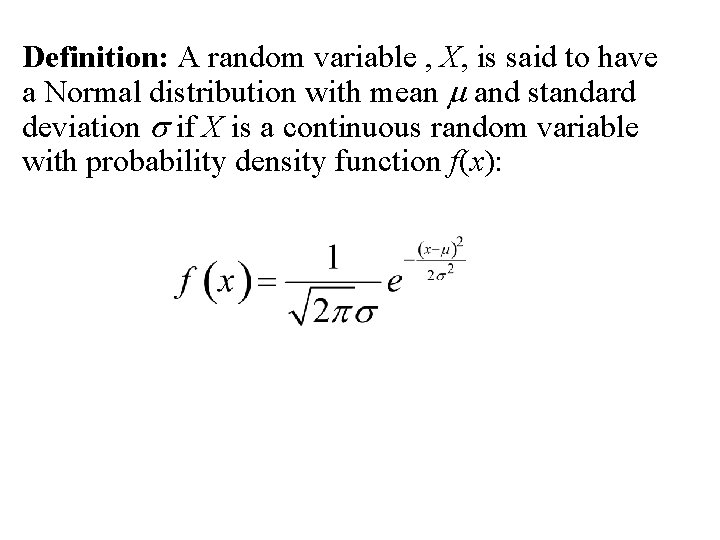

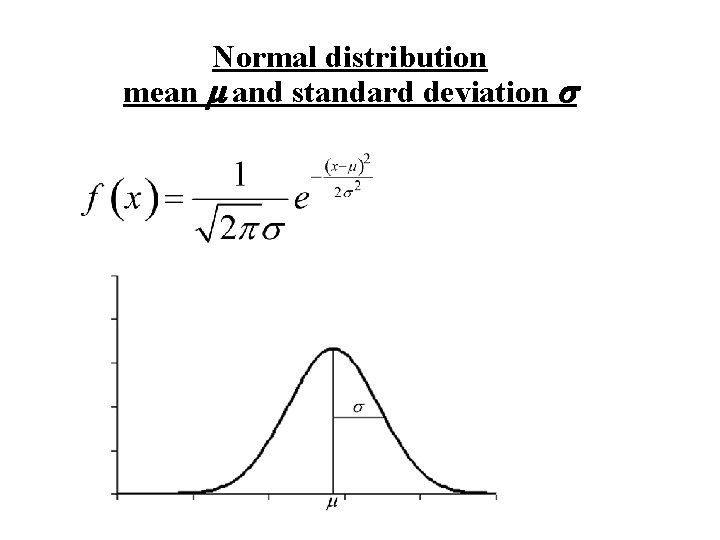

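Definition: A random variable, X, is said to have a Normal distribution with mean μ and standard deviation σ if X is a continuous random variable with probability density function f(x):

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}, \quad -\infty < x < \infty$$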

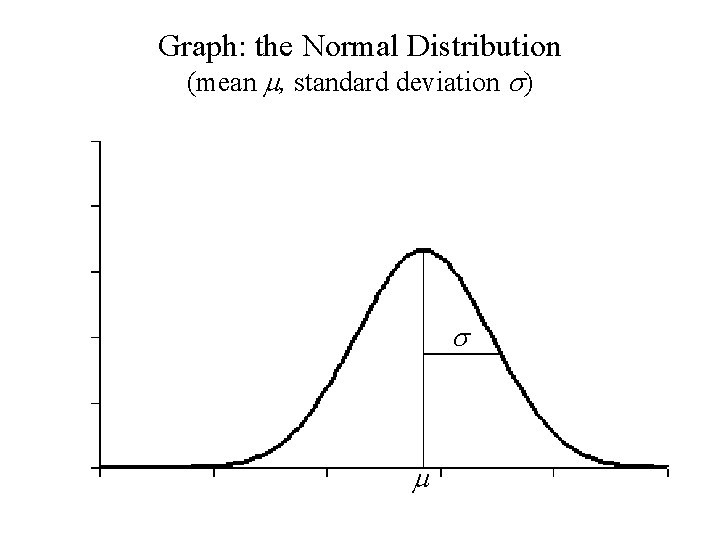

Graph: the Normal distribution (mean μ, standard deviation σ)

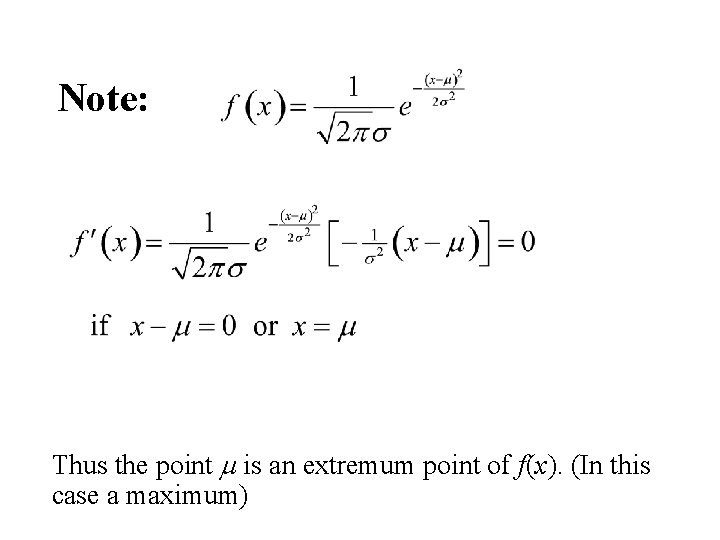

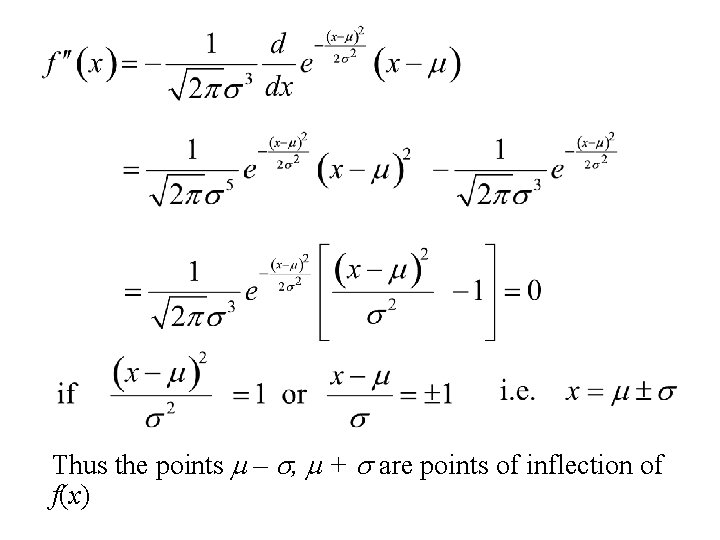

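Note: Differentiating the Normal density gives

$$f'(x) = -\frac{x-\mu}{\sigma^2}\, f(x) = 0 \quad \text{when } x = \mu$$

Thus the point μ (the mean) is an extremum point of f(x). (In this case a maximum.)

$$f''(x) = \left[\frac{(x-\mu)^2}{\sigma^4} - \frac{1}{\sigma^2}\right] f(x) = 0 \quad \text{when } x = \mu \pm \sigma$$

Thus the points μ – σ and μ + σ (one standard deviation either side of the mean) are points of inflection of f(x).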
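These two shape facts can be checked numerically. The sketch below uses `mu` and `sigma` for the mean and standard deviation, a finite-difference approximation to the second derivative, and illustrative values 10 and 2; all names are assumptions made for the example.

```python
from math import exp, pi, sqrt


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    """Density of the Normal distribution with mean mu, std. deviation sigma."""
    return exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sigma * sqrt(2 * pi))


def second_derivative(f, x: float, h: float = 1e-4) -> float:
    """Central finite-difference approximation to f''(x)."""
    return (f(x + h) - 2 * f(x) + f(x - h)) / h**2


mu, sigma = 10.0, 2.0  # illustrative parameters
f = lambda x: normal_pdf(x, mu, sigma)

# The density is largest at the mean.
print(f(mu) > f(mu - 0.1), f(mu) > f(mu + 0.1))

# The curvature changes sign at mu - sigma and mu + sigma (inflection points):
# negative (concave) just inside, positive (convex) just outside.
inside = second_derivative(f, mu + sigma - 0.1)
outside = second_derivative(f, mu + sigma + 0.1)
print(inside, outside)
```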

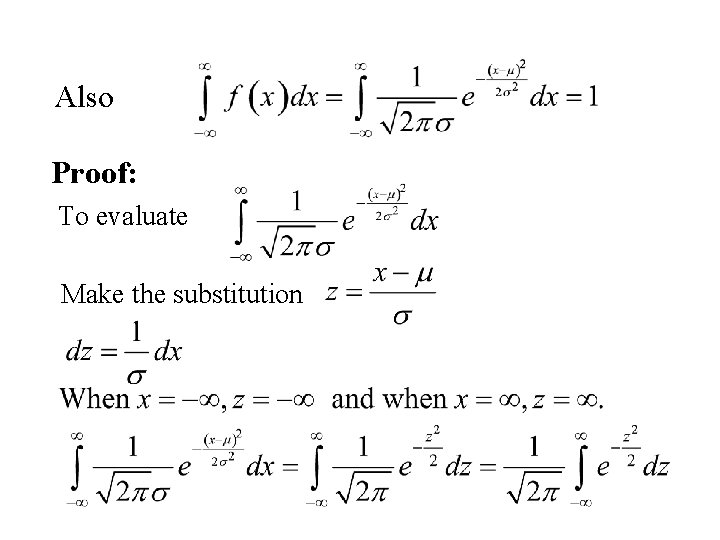

Also Proof: To evaluate Make the substitution

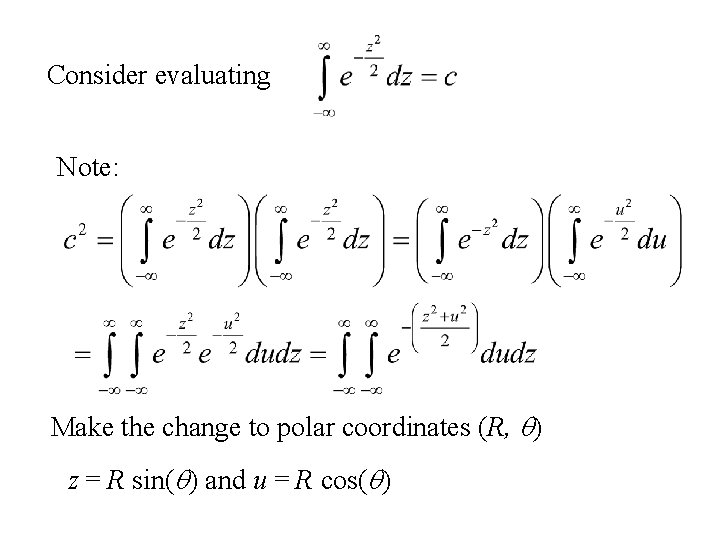

Consider evaluating Note: Make the change to polar coordinates (R, q) z = R sin(q) and u = R cos(q)

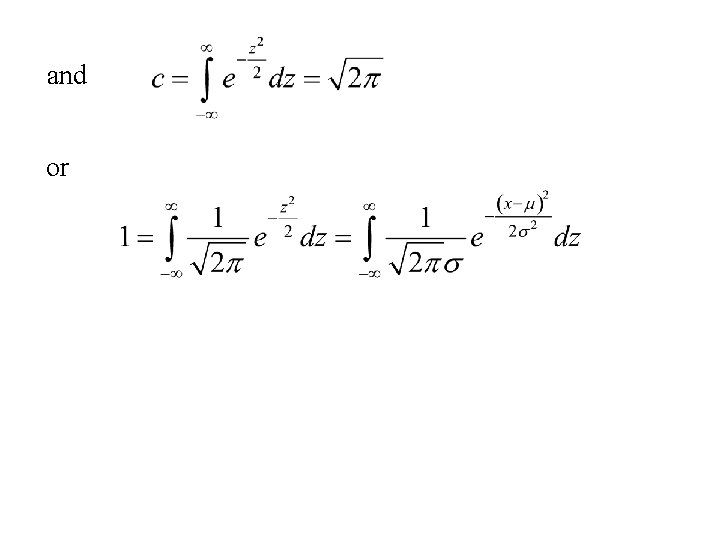

Hence or Using and

and or
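The result of this proof can be sanity-checked numerically. The following sketch integrates the Normal density and the function e^(−z²/2) with a simple trapezoid rule over a wide finite range; the helper names, the integration range of ±10 standard deviations, and the parameter values are all assumptions made for illustration.

```python
from math import exp, pi, sqrt


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    """Density of the Normal distribution with mean mu, std. deviation sigma."""
    return exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sigma * sqrt(2 * pi))


def trapezoid(f, lo: float, hi: float, n: int = 20_000) -> float:
    """Composite trapezoid rule for the integral of f from lo to hi."""
    h = (hi - lo) / n
    total = 0.5 * (f(lo) + f(hi))
    for i in range(1, n):
        total += f(lo + i * h)
    return total * h


mu, sigma = 3.0, 1.5  # illustrative parameters
# The tails beyond 10 standard deviations contribute a negligible amount.
area = trapezoid(lambda x: normal_pdf(x, mu, sigma), mu - 10 * sigma, mu + 10 * sigma)
gauss = trapezoid(lambda z: exp(-z * z / 2), -10.0, 10.0)

print(area)                  # should be very close to 1
print(gauss, sqrt(2 * pi))   # the two values should agree closely
```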

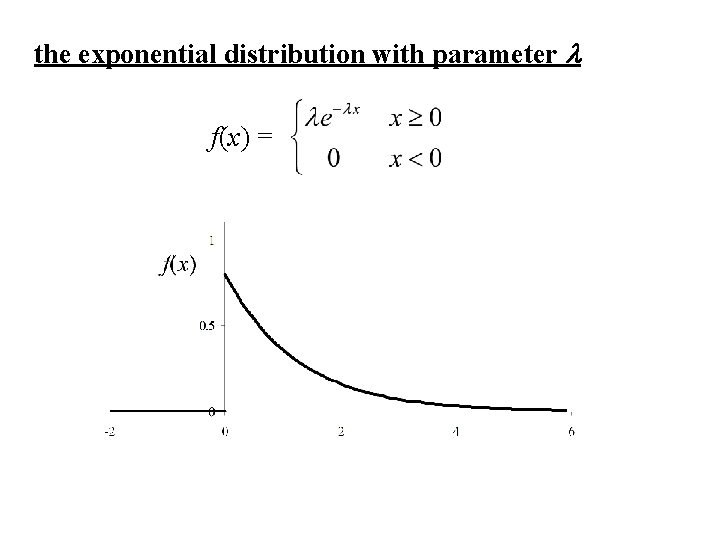

The Exponential distribution

Consider a continuous random variable, X, with the following properties:

1. P[X ≥ 0] = 1, and
2. P[X ≥ a + b] = P[X ≥ a] P[X ≥ b] for all a > 0, b > 0.

These two properties are reasonable to assume if X = lifetime of an object that doesn’t age. The second property implies:

$$P[X \ge a + b \mid X \ge a] = \frac{P[X \ge a + b]}{P[X \ge a]} = P[X \ge b]$$

This property models non-aging, i.e. given that the object has lived to age a, the probability that it lives a further b units is the same as if it had just been born at age a.

Let F(x) = P[X ≤ x] and G(x) = P[X ≥ x]. Since X is a continuous random variable, G(x) = 1 – F(x) (P[X = x] = 0 for all x). The two properties can be written in terms of G(x):

1. G(0) = 1, and
2. G(a + b) = G(a) G(b) for all a > 0, b > 0.

We can show that the only continuous function, G(x), that satisfies 1. and 2. is an exponential function.

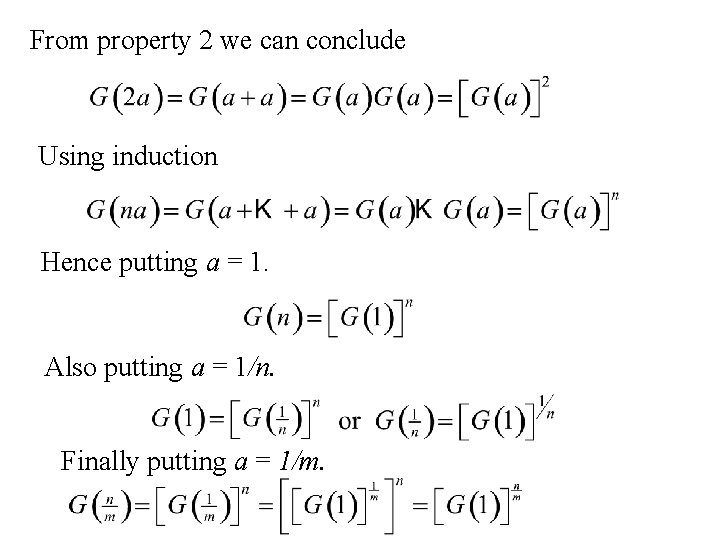

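From property 2 we can conclude

$$G(2a) = G(a + a) = G(a)^2$$

Using induction,

$$G(na) = G(a)^n$$

Hence, putting a = 1, $G(n) = G(1)^n$.

Also, putting a = 1/n, $G(1) = G(1/n)^n$, so $G(1/n) = G(1)^{1/n}$.

Finally, putting a = 1/m, $G(n/m) = G(1/m)^n = G(1)^{n/m}$.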

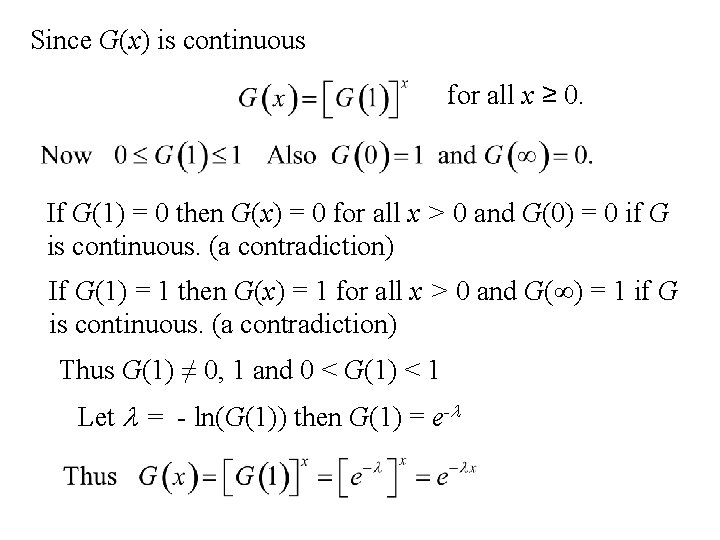

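Since G(x) is continuous for all x ≥ 0 and $G(x) = G(1)^x$ for every positive rational x, it follows that $G(x) = G(1)^x$ for all x ≥ 0.

If G(1) = 0 then G(x) = 0 for all x > 0, and G(0) = 0 if G is continuous (a contradiction, since G(0) = 1). If G(1) = 1 then G(x) = 1 for all x > 0, and G(∞) = 1 if G is continuous (a contradiction, since G(x) must tend to 0 as x → ∞). Thus G(1) ≠ 0, 1 and 0 < G(1) < 1.

Let λ = –ln(G(1)). Then $G(1) = e^{-\lambda}$ and $G(x) = G(1)^x = e^{-\lambda x}$.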

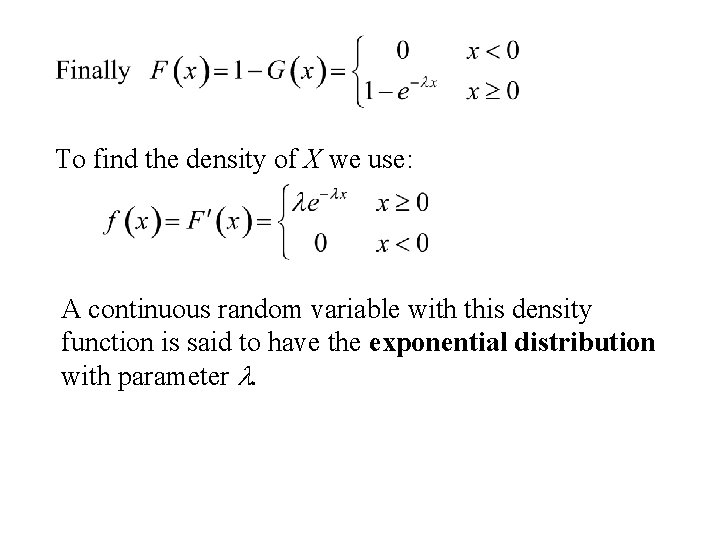

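To find the density of X we use:

$$f(x) = F'(x) = \frac{d}{dx}\left(1 - e^{-\lambda x}\right) = \lambda e^{-\lambda x}, \quad x \ge 0$$

A continuous random variable with this density function is said to have the exponential distribution with parameter λ.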
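A brief numerical sketch of the exponential distribution, assuming survival function G(x) = e^(−λx), F(x) = 1 − G(x) and density f(x) = λe^(−λx). It checks the non-aging property G(a + b) = G(a) G(b) and that the slope of F matches f. The names and the values `lam` = 0.5, a = 2, b = 3 are illustrative assumptions.

```python
from math import exp


def exp_survival(x: float, lam: float) -> float:
    """G(x) = P[X >= x] for the exponential distribution with parameter lam."""
    return exp(-lam * x) if x > 0 else 1.0


def exp_cdf(x: float, lam: float) -> float:
    """F(x) = P[X <= x] = 1 - G(x)."""
    return 1.0 - exp_survival(x, lam)


def exp_pdf(x: float, lam: float) -> float:
    """f(x) = F'(x) = lam * exp(-lam * x) for x >= 0."""
    return lam * exp(-lam * x) if x >= 0 else 0.0


lam, a, b = 0.5, 2.0, 3.0  # illustrative values

# Non-aging property: G(a + b) = G(a) G(b)
lhs = exp_survival(a + b, lam)
rhs = exp_survival(a, lam) * exp_survival(b, lam)

# Equivalent conditional form: P[X >= a + b | X >= a] = P[X >= b]
conditional = exp_survival(a + b, lam) / exp_survival(a, lam)

# The density is the derivative of F (central difference approximation).
h = 1e-6
slope = (exp_cdf(a + h, lam) - exp_cdf(a - h, lam)) / (2 * h)

print(lhs, rhs)
print(conditional, exp_survival(b, lam))
print(slope, exp_pdf(a, lam))
```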

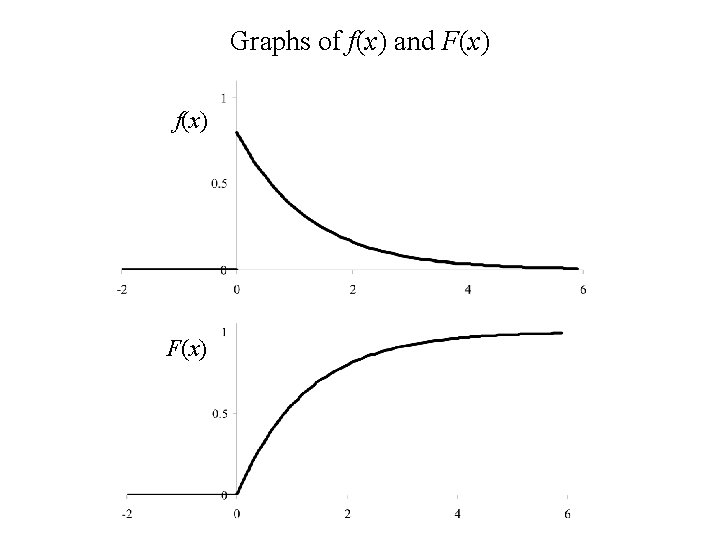

Graphs of f(x) and F(x)

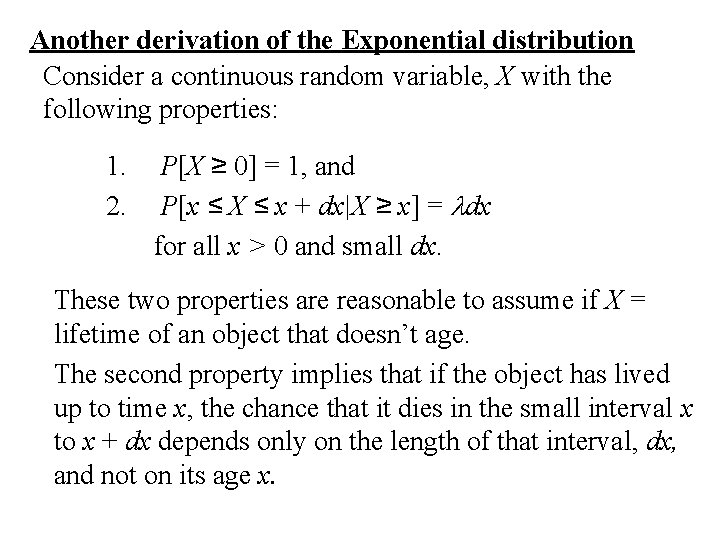

Another derivation of the Exponential distribution

Consider a continuous random variable, X, with the following properties:

1. P[X ≥ 0] = 1, and
2. P[x ≤ X ≤ x + dx | X ≥ x] = λ dx for all x > 0 and small dx.

These two properties are reasonable to assume if X = lifetime of an object that doesn’t age. The second property implies that if the object has lived up to time x, the chance that it dies in the small interval x to x + dx depends only on the length of that interval, dx, and not on its age x.

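Determination of the distribution of X

Let F(x) = P[X ≤ x] = the cumulative distribution function of the random variable X. Then P[X ≥ 0] = 1 implies that F(0) = 0. Also, P[x ≤ X ≤ x + dx | X ≥ x] = λ dx implies

$$\frac{P[x \le X \le x + dx]}{P[X \ge x]} = \frac{F(x + dx) - F(x)}{1 - F(x)} = \lambda\,dx, \quad \text{i.e.} \quad \frac{F'(x)}{1 - F(x)} = \lambda$$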

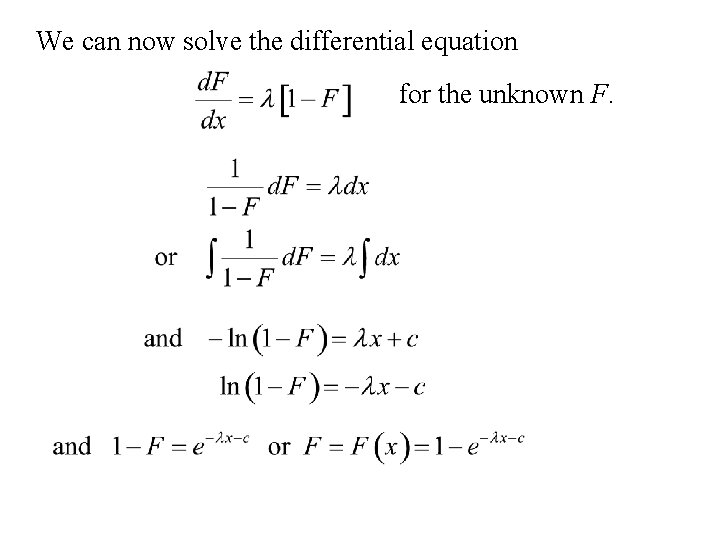

We can now solve the differential equation for the unknown F.

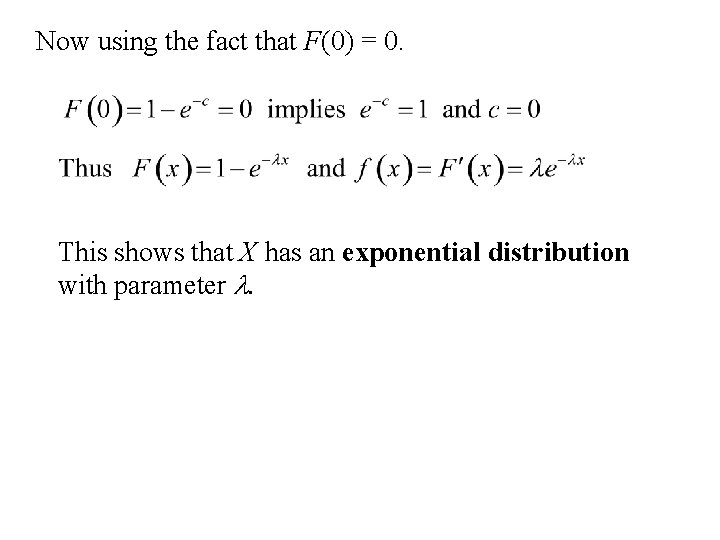

Now using the fact that F(0) = 0. This shows that X has an exponential distribution with parameter l.
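The differential equation can also be solved numerically as a cross-check. This sketch rewrites the constant-hazard condition as F′(x) = λ(1 − F(x)) with F(0) = 0, steps it forward with Euler's method (a swapped-in numerical technique, not part of the slides), and compares the result with 1 − e^(−λx). The function name and the values `lam` = 1.5, x = 2 are illustrative assumptions.

```python
from math import exp


def euler_cdf(lam: float, x_end: float, steps: int = 100_000) -> float:
    """Approximate F(x_end) by Euler steps on F'(x) = lam * (1 - F(x)), F(0) = 0."""
    h = x_end / steps
    F = 0.0
    for _ in range(steps):
        F += lam * (1.0 - F) * h
    return F


lam, x_end = 1.5, 2.0  # illustrative values
numeric = euler_cdf(lam, x_end)
closed_form = 1.0 - exp(-lam * x_end)

print(numeric, closed_form)  # the two values should agree to several decimals
```

Euler's method is only first-order accurate, so the agreement improves as `steps` grows; it is used here because it mirrors the "small dx" reasoning in the derivation.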

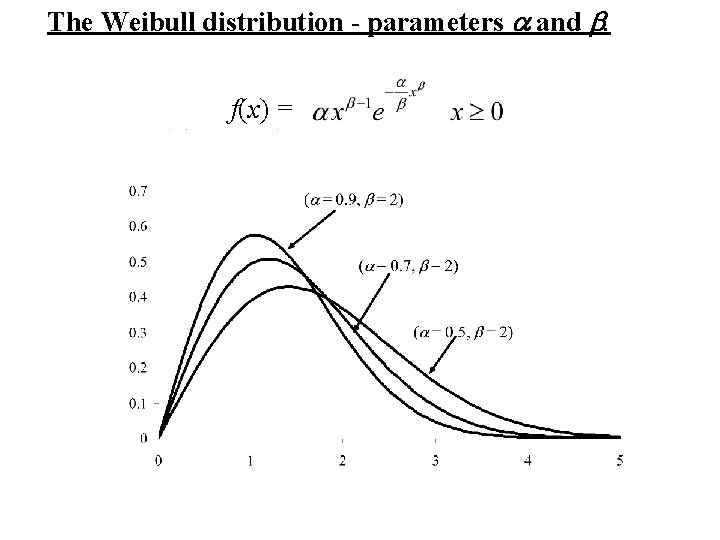

The Weibull distribution

A model for the lifetime of objects that do age.

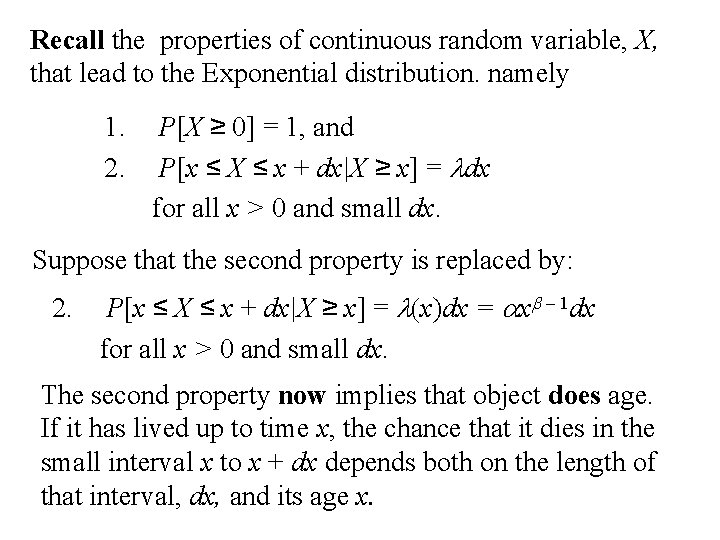

Recall the properties of a continuous random variable, X, that lead to the Exponential distribution, namely:

1. P[X ≥ 0] = 1, and
2. P[x ≤ X ≤ x + dx | X ≥ x] = λ dx for all x > 0 and small dx.

Suppose that the second property is replaced by:

2. P[x ≤ X ≤ x + dx | X ≥ x] = λ(x) dx = $\alpha x^{\beta - 1}\,dx$ for all x > 0 and small dx.

The second property now implies that the object does age. If it has lived up to time x, the chance that it dies in the small interval x to x + dx depends both on the length of that interval, dx, and on its age x.

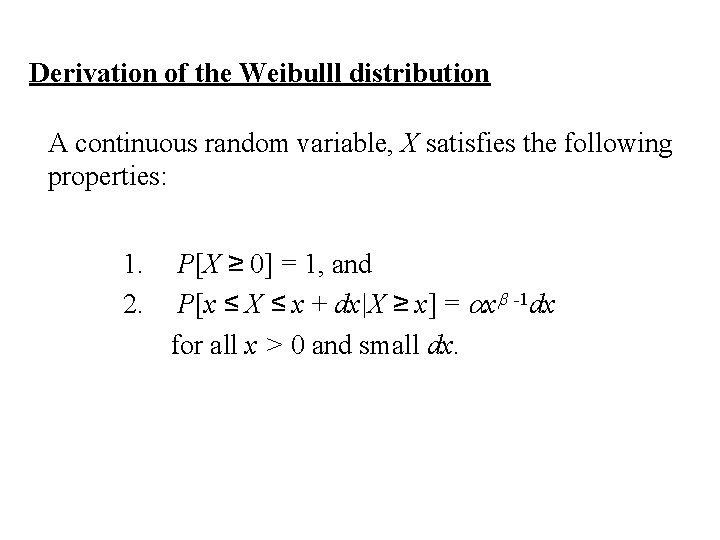

Derivation of the Weibull distribution

A continuous random variable, X, satisfies the following properties:

1. P[X ≥ 0] = 1, and
2. P[x ≤ X ≤ x + dx | X ≥ x] = $\alpha x^{\beta - 1}\,dx$ for all x > 0 and small dx.

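Let F(x) = P[X ≤ x] = the cumulative distribution function of the random variable X. Then P[X ≥ 0] = 1 implies that F(0) = 0. Also, P[x ≤ X ≤ x + dx | X ≥ x] = $\alpha x^{\beta - 1}\,dx$ implies

$$\frac{F(x + dx) - F(x)}{1 - F(x)} = \alpha x^{\beta - 1}\,dx, \quad \text{i.e.} \quad \frac{F'(x)}{1 - F(x)} = \alpha x^{\beta - 1}$$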

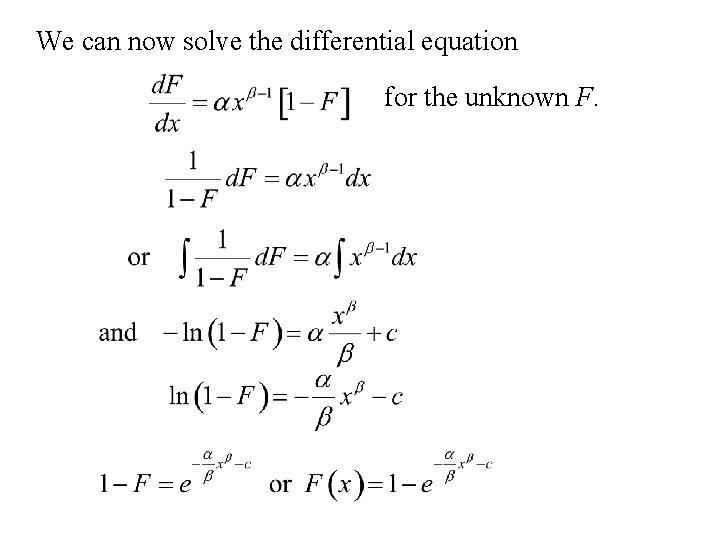

We can now solve the differential equation for the unknown F.

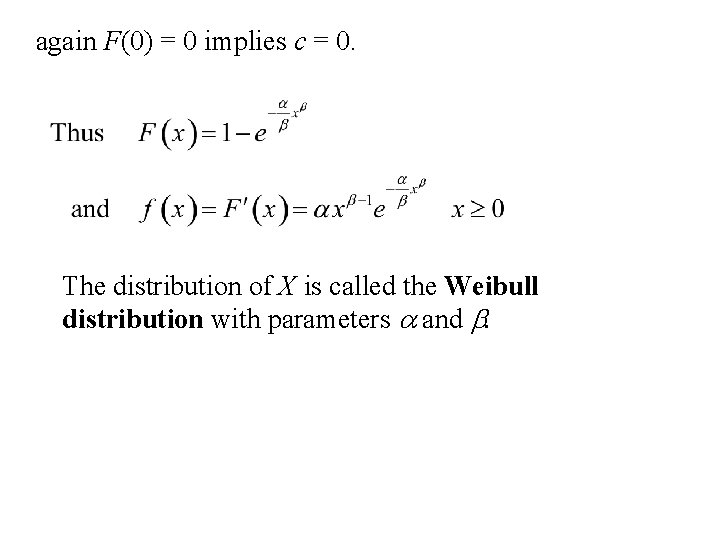

again F(0) = 0 implies c = 0. The distribution of X is called the Weibull distribution with parameters a and b.
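As a hedged sketch, the solution can be checked against the property it was derived from. With `alpha` and `beta` standing for the two Weibull parameters, the code assumes F(x) = 1 − exp(−(alpha/beta) x^beta) and verifies that f(x) / (1 − F(x)) recovers the age-dependent rate alpha · x^(beta − 1). Note that this parameterization follows the derivation here and differs from the scale/shape form used by many software libraries; all function names are hypothetical.

```python
from math import exp


def weibull_cdf(x: float, alpha: float, beta: float) -> float:
    """F(x) = 1 - exp(-(alpha / beta) * x**beta) for x > 0."""
    return 1.0 - exp(-(alpha / beta) * x**beta) if x > 0 else 0.0


def weibull_pdf(x: float, alpha: float, beta: float) -> float:
    """f(x) = F'(x) = alpha * x**(beta - 1) * exp(-(alpha / beta) * x**beta)."""
    if x <= 0:
        return 0.0
    return alpha * x ** (beta - 1) * exp(-(alpha / beta) * x**beta)


def hazard(x: float, alpha: float, beta: float) -> float:
    """Conditional death rate f(x) / (1 - F(x)) at age x."""
    return weibull_pdf(x, alpha, beta) / (1.0 - weibull_cdf(x, alpha, beta))


alpha, beta, x = 0.9, 2.0, 1.3  # illustrative values
print(hazard(x, alpha, beta), alpha * x ** (beta - 1))  # should agree

# With beta = 1 the rate no longer depends on age and the CDF is exponential.
print(weibull_cdf(x, alpha, 1.0), 1.0 - exp(-alpha * x))
```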

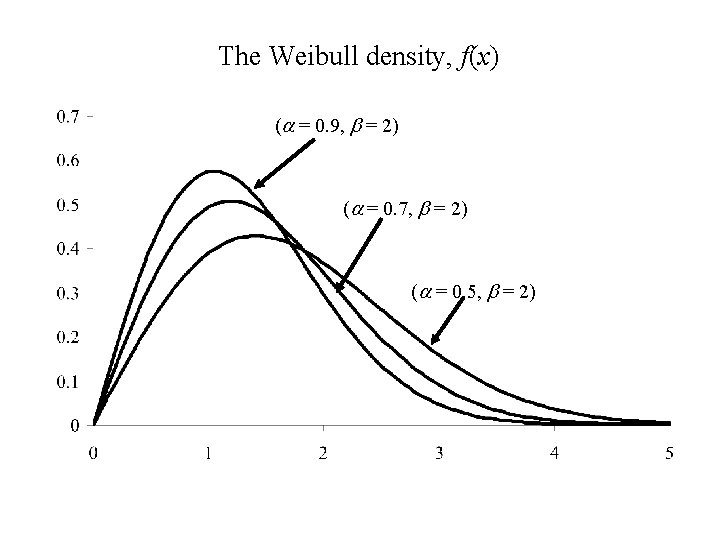

Graph: The Weibull density, f(x), for (α = 0.9, β = 2), (α = 0.7, β = 2) and (α = 0.5, β = 2)

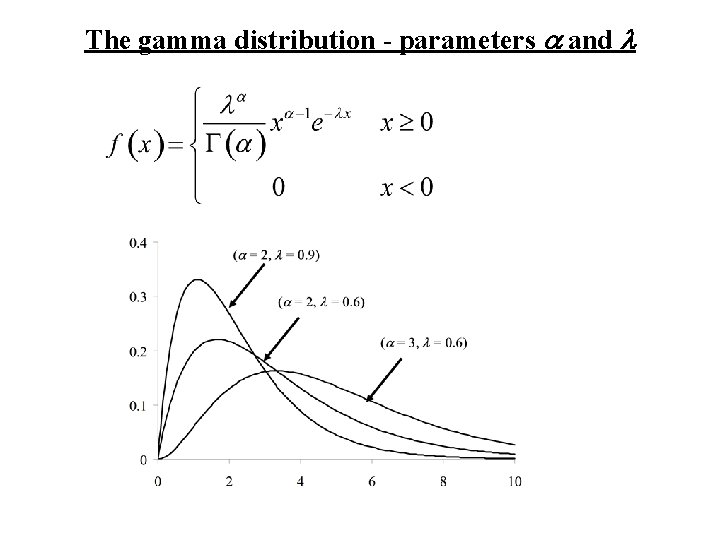

The Gamma distribution

An important family of distributions

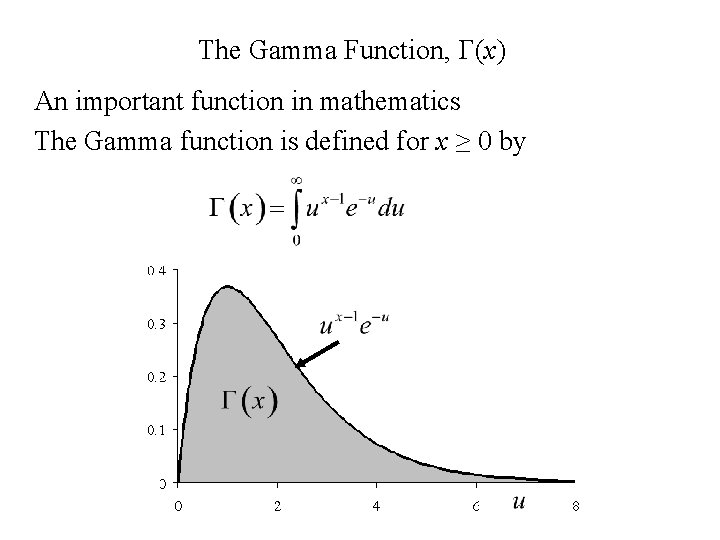

The Gamma Function, Γ(x)

An important function in mathematics. The Gamma function is defined for x > 0 by

$$\Gamma(x) = \int_0^{\infty} u^{x-1} e^{-u}\,du$$

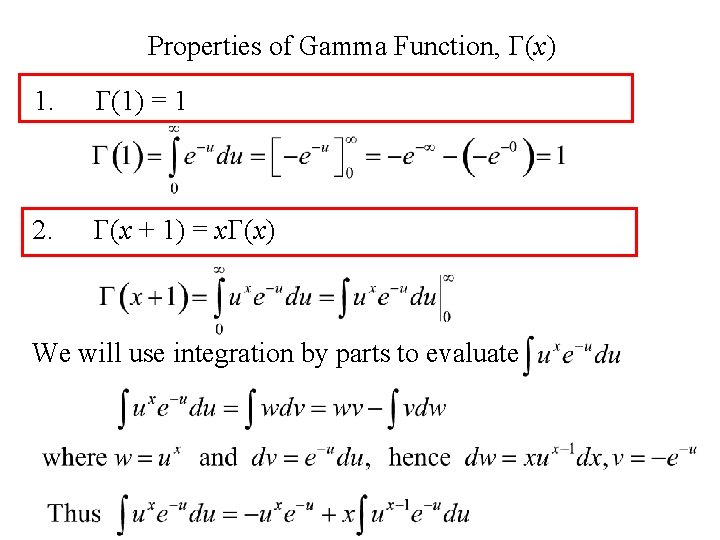

Properties of the Gamma Function, Γ(x)

1. Γ(1) = 1
2. Γ(x + 1) = x Γ(x). We will use integration by parts to evaluate $\Gamma(x + 1) = \int_0^{\infty} u^{x} e^{-u}\,du$.

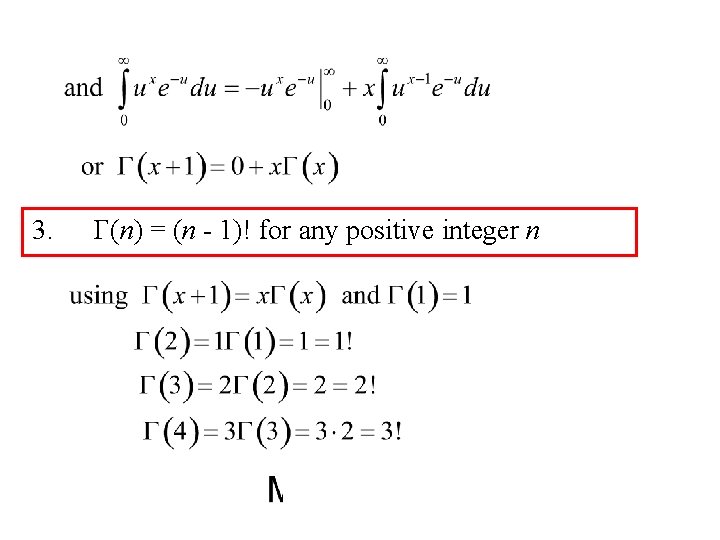

3. Γ(n) = (n – 1)! for any positive integer n
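These properties can be spot-checked with Python's standard library, where `math.gamma` evaluates the Gamma function. The sketch below compares it with `math.factorial` at small integers and tests the recurrence at one non-integer point; the value x = 2.7 is an arbitrary illustrative choice.

```python
from math import factorial, gamma

# Property 3: Gamma(n) = (n - 1)! for positive integers n
checks = [(n, gamma(n), factorial(n - 1)) for n in range(1, 8)]
for n, g, f in checks:
    print(n, g, f)

# Property 2: Gamma(x + 1) = x * Gamma(x), tested at a non-integer x
x = 2.7
recurrence_gap = abs(gamma(x + 1) - x * gamma(x))
print(recurrence_gap)  # should be zero up to rounding error
```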

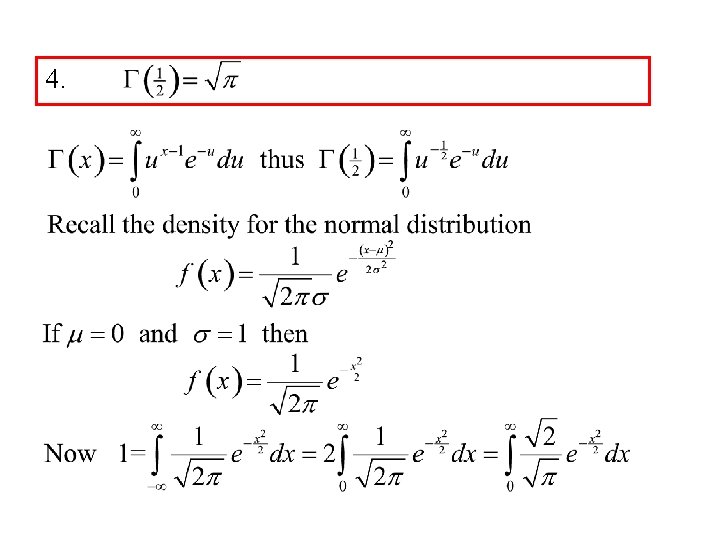

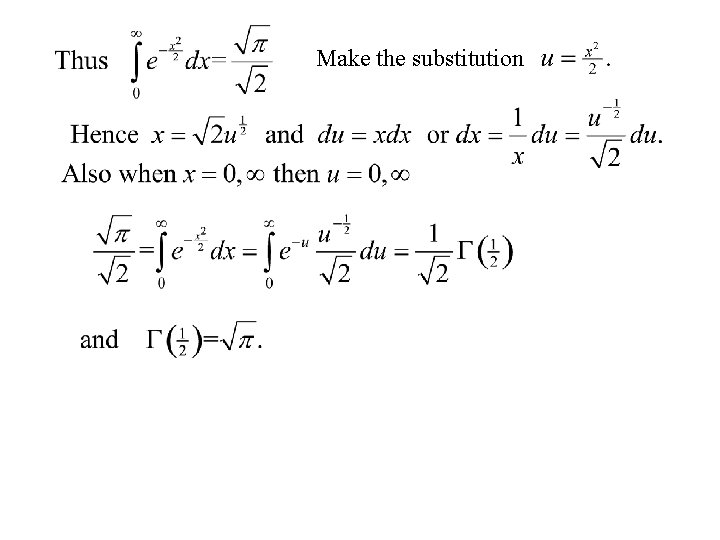

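4. $\Gamma\!\left(\tfrac{1}{2}\right) = \sqrt{\pi}$

Make the substitution $u = z^2/2$, $du = z\,dz$ in $\Gamma\!\left(\tfrac{1}{2}\right) = \int_0^{\infty} u^{-1/2} e^{-u}\,du$:

$$\Gamma\!\left(\tfrac{1}{2}\right) = \int_0^{\infty} \frac{\sqrt{2}}{z}\, e^{-z^2/2}\, z\,dz = \sqrt{2} \int_0^{\infty} e^{-z^2/2}\,dz = \sqrt{2} \cdot \frac{\sqrt{2\pi}}{2} = \sqrt{\pi}$$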

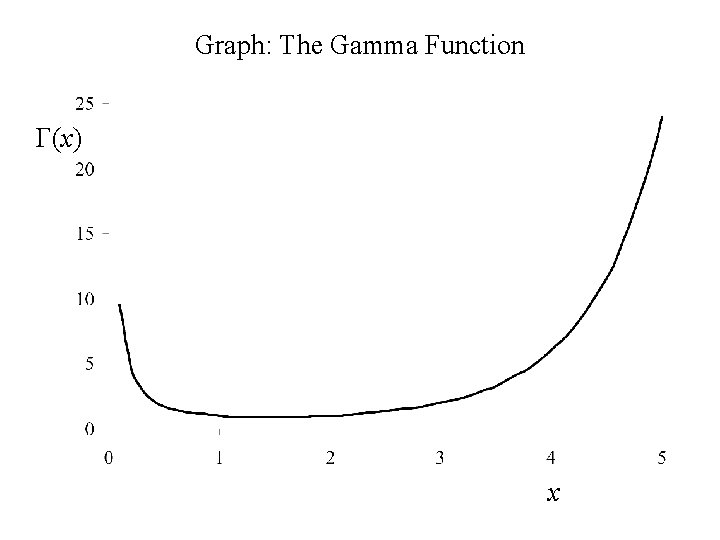

Graph: The Gamma function, Γ(x)

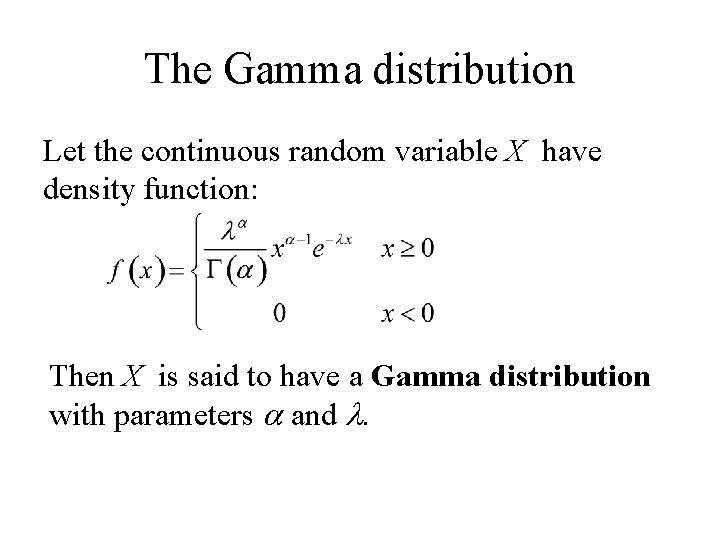

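The Gamma distribution

Let the continuous random variable X have density function:

$$f(x) = \begin{cases} \dfrac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha - 1} e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

Then X is said to have a Gamma distribution with parameters α and λ.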

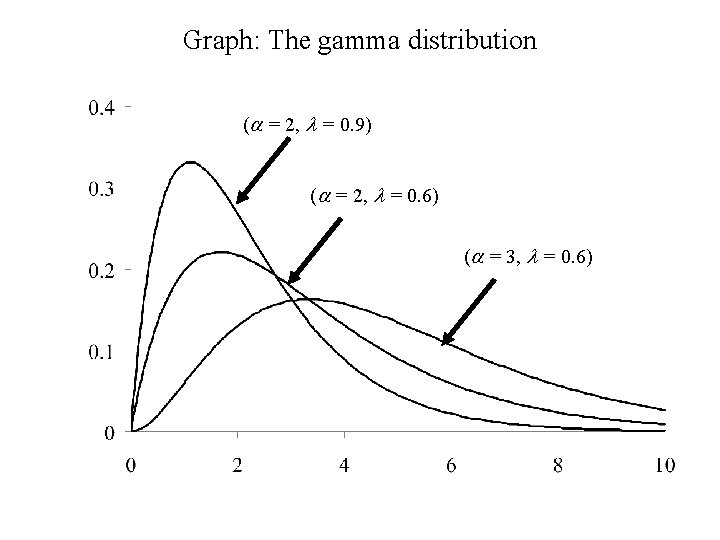

Graph: The gamma distribution for (α = 2, λ = 0.9), (α = 2, λ = 0.6) and (α = 3, λ = 0.6)

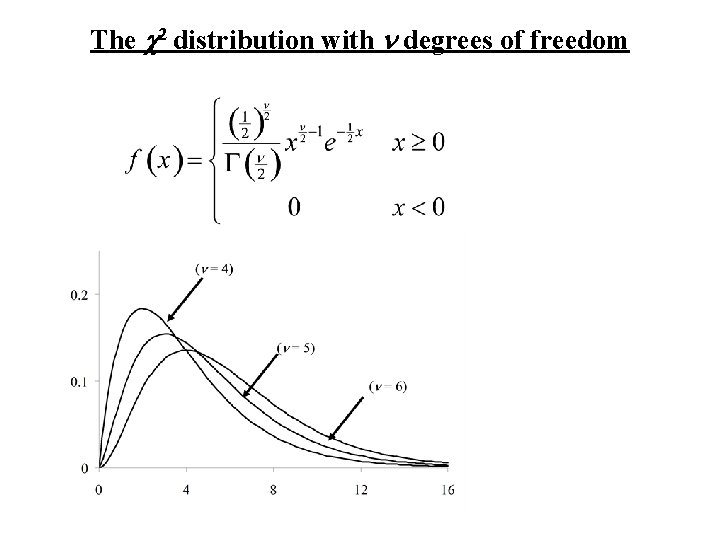

Comments

1. The set of gamma distributions is a family of distributions (parameterized by α and λ).
2. Contained within this family are other distributions:
   a. The Exponential distribution: in the case α = 1, the gamma distribution becomes the exponential distribution with parameter λ. The exponential distribution arises if we are measuring the lifetime, X, of an object that does not age. It is also used as a distribution for waiting times between events occurring uniformly in time.
   b. The Chi-square distribution: in the case α = n/2 and λ = ½, the gamma distribution becomes the chi-square (χ²) distribution with n degrees of freedom. Later we will see that the sum of squares of independent standard normal variates has a chi-square distribution, with degrees of freedom = the number of independent terms in the sum of squares.
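The two special cases can be illustrated with a short sketch. It assumes the gamma density f(x) = λ^α x^(α − 1) e^(−λx) / Γ(α) for x > 0, written with `alpha` and `lam` for the two parameters; the function names and test points are illustrative assumptions.

```python
from math import exp, gamma


def gamma_pdf(x: float, alpha: float, lam: float) -> float:
    """Gamma density with parameters alpha and lam (taken as 0 for x <= 0)."""
    if x <= 0:
        return 0.0
    return lam**alpha / gamma(alpha) * x ** (alpha - 1) * exp(-lam * x)


def exponential_pdf(x: float, lam: float) -> float:
    """Exponential density with parameter lam."""
    return lam * exp(-lam * x) if x > 0 else 0.0


def chi_square_pdf(x: float, n: int) -> float:
    """Chi-square density with n degrees of freedom: gamma with alpha = n/2, lam = 1/2."""
    return gamma_pdf(x, n / 2, 0.5)


# alpha = 1 reduces the gamma density to the exponential density.
print(gamma_pdf(1.2, 1.0, 0.6), exponential_pdf(1.2, 0.6))

# For n = 4 the chi-square density simplifies to x * exp(-x / 2) / 4.
print(chi_square_pdf(3.0, 4), 3.0 * exp(-1.5) / 4)
```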

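The Exponential distribution (gamma with α = 1)

$$f(x) = \lambda e^{-\lambda x}, \quad x \ge 0$$

The Chi-square (χ²) distribution with n d.f. (gamma with α = n/2, λ = ½)

$$f(x) = \frac{\left(\tfrac{1}{2}\right)^{n/2}}{\Gamma\!\left(\tfrac{n}{2}\right)}\, x^{\frac{n}{2} - 1} e^{-x/2}, \quad x \ge 0$$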

Graph: The χ² distribution for n = 4, n = 5 and n = 6

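Summary

Important continuous distributions

Uniform distribution from a to b: $f(x) = \dfrac{1}{b-a}$ for $a \le x \le b$, and 0 otherwise

Normal distribution with mean μ and standard deviation σ: $f(x) = \dfrac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}$

The Exponential distribution with parameter λ: $f(x) = \lambda e^{-\lambda x}$ for $x \ge 0$

The Weibull distribution with parameters α and β: $f(x) = \alpha x^{\beta - 1}\, e^{-\frac{\alpha}{\beta} x^{\beta}}$ for $x \ge 0$

The Gamma distribution with parameters α and λ: $f(x) = \dfrac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha - 1} e^{-\lambda x}$ for $x \ge 0$

The χ² distribution with n degrees of freedom: $f(x) = \dfrac{\left(\tfrac{1}{2}\right)^{n/2}}{\Gamma\!\left(\tfrac{n}{2}\right)}\, x^{\frac{n}{2} - 1} e^{-x/2}$ for $x \ge 0$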
