DIMENSIONALITY REDUCTION
KEVIN LABILLE & SUSAN GAUCH
UNIVERSITY OF ARKANSAS

DIMENSIONALITY REDUCTION
• High-dimensional feature spaces make computation expensive for any NLP or machine learning task
• The features represented in the space are often correlated and redundant
• Dimensionality reduction techniques aim to find a compact low-dimensional representation of the high-dimensional feature space
• Algebraic techniques based on Singular Value Decomposition (SVD):
  – Principal Component Analysis (PCA)
  – Latent Semantic Analysis (LSA)
  – Latent Semantic Indexing (LSI)
• Probabilistic techniques:
  – Probabilistic Latent Semantic Analysis (pLSA)
  – Latent Dirichlet Allocation (LDA)

Kevin Labille & Susan Gauch - 2018

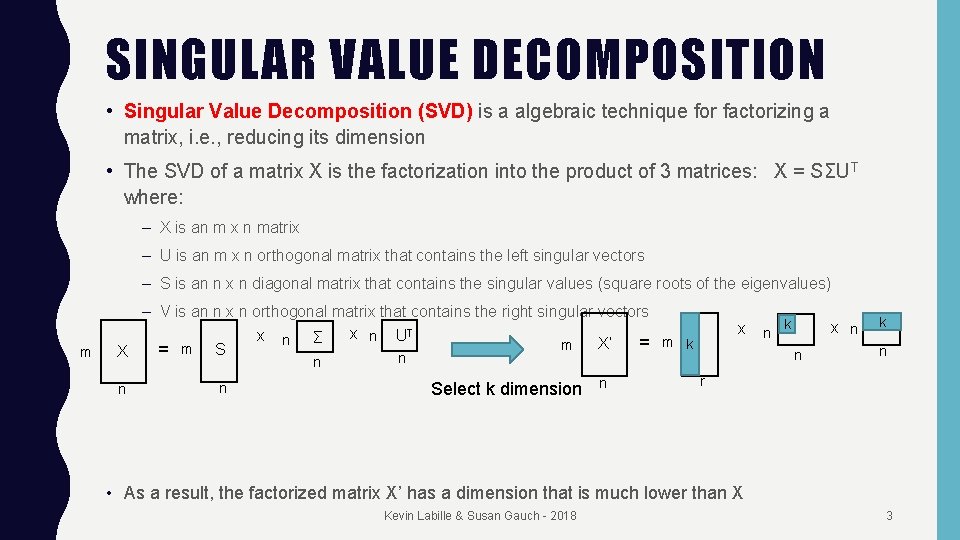

SINGULAR VALUE DECOMPOSITION
• Singular Value Decomposition (SVD) is an algebraic technique for factorizing a matrix; truncating the factorization reduces its dimension
• The SVD of a matrix X is the factorization into the product of 3 matrices: $X = S \Sigma U^T$, where:
  – X is an m x n matrix
  – S is an m x n matrix with orthonormal columns that contains the left singular vectors
  – Σ is an n x n diagonal matrix that contains the singular values (square roots of the eigenvalues of $X^T X$)
  – U is an n x n orthogonal matrix that contains the right singular vectors
• Select k dimensions (k much smaller than n) by keeping only the k largest singular values and their singular vectors: $X' = S_k \Sigma_k U_k^T$, with $S_k$ of size m x k, $\Sigma_k$ of size k x k and $U_k^T$ of size k x n
• As a result, the factorized matrix X' has rank k, so its rows and columns can be represented in k dimensions, far fewer than in X

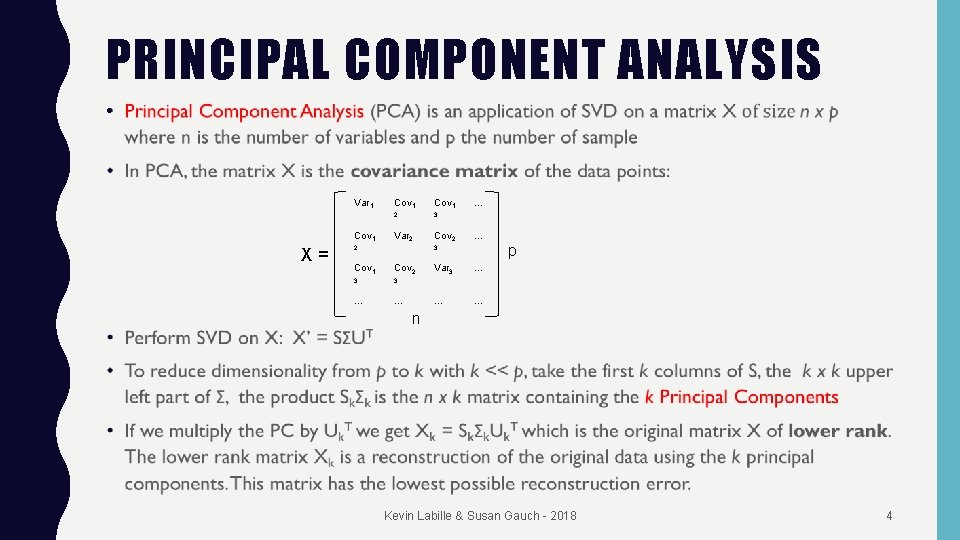
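The truncation step on the SVD slide can be sketched with NumPy as follows. This is a minimal illustration, not the authors' code: the function name `truncated_svd` and the small matrix `X` are made up for the example, and the variable names follow the slide's $S \Sigma U^T$ notation.

```python
import numpy as np

def truncated_svd(X, k):
    """Return the rank-k factors (S_k, sigma_k, U_k^T) of X, in the slide's notation."""
    # np.linalg.svd returns the left singular vectors, the singular values
    # (sorted in descending order) and the transposed right singular vectors.
    S, sigma, Ut = np.linalg.svd(X, full_matrices=False)
    return S[:, :k], sigma[:k], Ut[:k, :]

# Hypothetical 4 x 3 matrix, chosen only for illustration.
X = np.array([[2.0, 0.0, 1.0],
              [0.0, 1.0, 0.0],
              [1.0, 0.0, 2.0],
              [0.0, 3.0, 0.0]])

S_k, sigma_k, Ut_k = truncated_svd(X, k=2)
X_approx = S_k @ np.diag(sigma_k) @ Ut_k  # rank-k approximation X'
```

`X_approx` has the same shape as `X` but rank 2. The rows of `S_k * sigma_k` give a 2-dimensional representation of the rows of `X`.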

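PRINCIPAL COMPONENT ANALYSIS
• Covariance matrix of the features (variances on the diagonal, covariances off the diagonal):

$$
X = \begin{pmatrix}
\mathrm{Var}_1 & \mathrm{Cov}_{1,2} & \mathrm{Cov}_{1,3} & \cdots \\
\mathrm{Cov}_{1,2} & \mathrm{Var}_2 & \mathrm{Cov}_{2,3} & \cdots \\
\mathrm{Cov}_{1,3} & \mathrm{Cov}_{2,3} & \mathrm{Var}_3 & \cdots \\
\vdots & \vdots & \vdots & \ddots
\end{pmatrix}
$$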

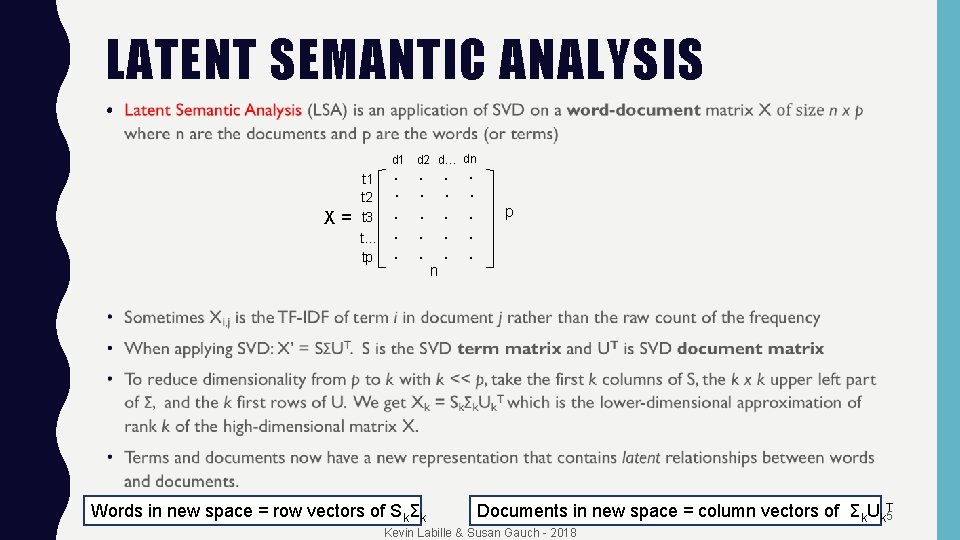
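The bullet text of the PCA slide did not survive, so the sketch below assumes the standard formulation rather than the authors' exact wording: center the data, build the covariance matrix, and project onto the eigenvectors with the largest eigenvalues. The function name `pca` and the synthetic data are hypothetical.

```python
import numpy as np

def pca(data, k):
    """Project the rows of `data` (samples x features) onto the top-k principal components."""
    centered = data - data.mean(axis=0)
    cov = np.cov(centered, rowvar=False)        # features x features covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)      # eigh: symmetric input, ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:k]       # indices of the k largest eigenvalues
    components = eigvecs[:, order]
    return centered @ components, eigvals[order]

# Synthetic data: the second feature is almost a multiple of the first (redundant).
rng = np.random.default_rng(0)
base = rng.normal(size=(200, 1))
data = np.hstack([base,
                  2 * base + 0.01 * rng.normal(size=(200, 1)),
                  rng.normal(size=(200, 1))])

projected, variances = pca(data, k=2)
```

Each entry of `variances` is the variance of the data along the corresponding principal component, which is why correlated features collapse onto a single direction.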

LATENT SEMANTIC ANALYSIS
• Term-document matrix X (p x n): the rows are the terms t1, t2, t3, …, tp and the columns are the documents d1, d2, …, dn
• Words in new space = row vectors of $S_k \Sigma_k$
• Documents in new space = column vectors of $\Sigma_k U_k^T$

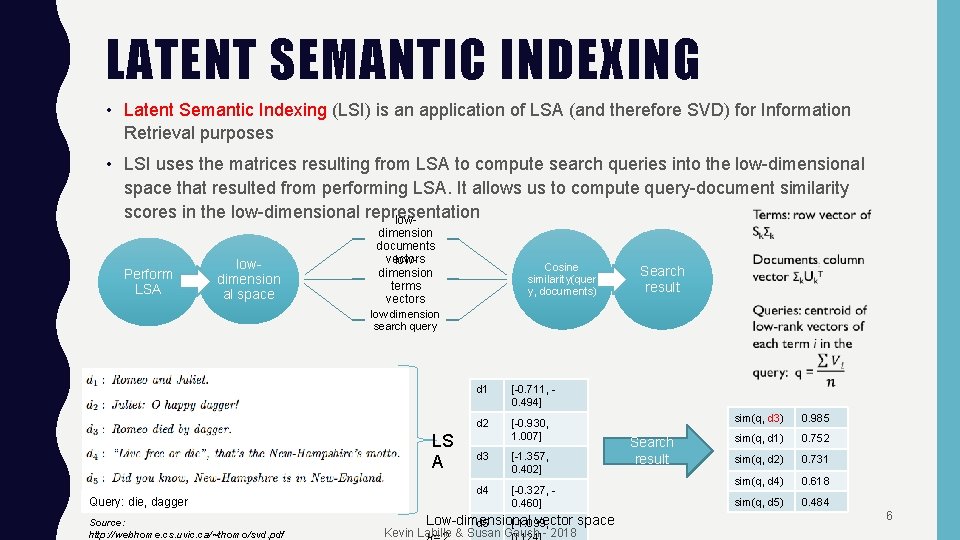

LATENT SEMANTIC INDEXING
• Latent Semantic Indexing (LSI) is an application of LSA (and therefore SVD) for Information Retrieval purposes
• LSI uses the matrices resulting from LSA to project search queries into the low-dimensional space that LSA produced. It allows us to compute query-document similarity scores in the low-dimensional representation
• Pipeline (figure): perform LSA → low-dimensional document vectors and term vectors; map the search query into the same low-dimensional space → cosine similarity(query, documents) → search result
• Example (source: http://webhome.cs.uvic.ca/~thomo/svd.pdf), query: die, dagger
  – Low-dimensional vector space: d1 [-0.711, 0.494], d2 [-0.930, 1.007], d3 [-1.357, 0.402], d4 [-0.327, 0.460], d5 [-1.099, …]
  – Search result: sim(q, d3) = 0.985, sim(q, d1) = 0.752, sim(q, d2) = 0.731, sim(q, d4) = 0.618, sim(q, d5) = 0.484

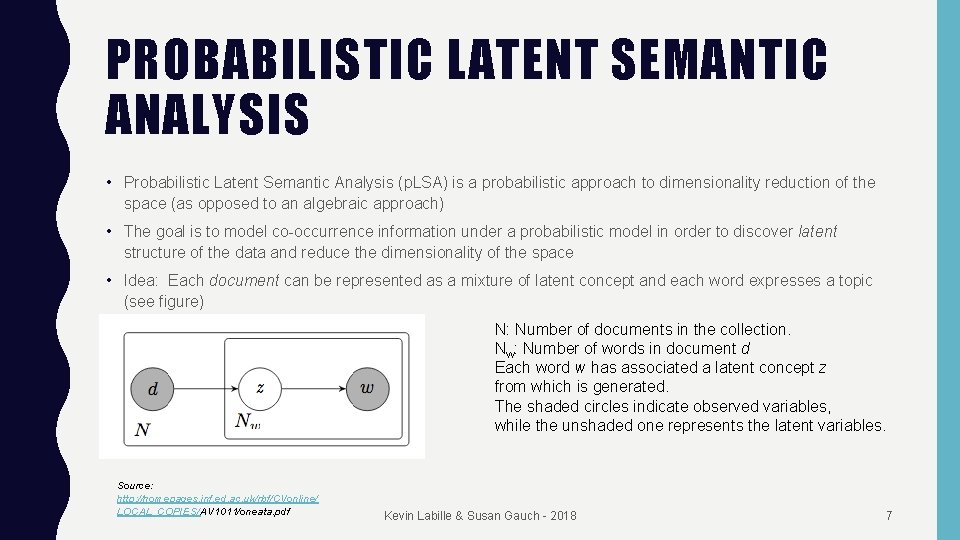
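The LSI pipeline can be sketched as below. The term-document matrix is a toy example and is not claimed to reproduce the numbers on the slide. Representing the query as the centroid of its term vectors is one simple choice and an assumption here. The helper name `lsi_rank` is hypothetical.

```python
import numpy as np

def lsi_rank(X, terms, query_terms, k=2):
    """Rank the documents (columns of X) against a query in the k-dimensional LSA space."""
    S, sigma, Ut = np.linalg.svd(X, full_matrices=False)
    term_vecs = S[:, :k] * sigma[:k]             # rows of S_k Sigma_k
    doc_vecs = (sigma[:k, None] * Ut[:k, :]).T   # columns of Sigma_k U_k^T, stored as rows
    # Assumption: the query is the centroid of the vectors of its terms.
    q = term_vecs[[terms.index(t) for t in query_terms]].mean(axis=0)
    sims = doc_vecs @ q / (np.linalg.norm(doc_vecs, axis=1) * np.linalg.norm(q))
    order = np.argsort(-sims)                    # most similar document first
    return [(f"d{i + 1}", float(sims[i])) for i in order]

terms = ["romeo", "juliet", "happy", "dagger", "live", "die", "free", "new-hampshire"]
# Toy term-document counts: rows = terms, columns = documents d1..d5.
X = np.array([
    [1, 0, 1, 0, 0],
    [1, 1, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 0, 1, 1],
], dtype=float)

ranking = lsi_rank(X, terms, ["die", "dagger"], k=2)
```

`ranking` is a list of `(document, cosine similarity)` pairs sorted from most to least similar, mirroring the "search result" column of the slide.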

PROBABILISTIC LATENT SEMANTIC ANALYSIS
• Probabilistic Latent Semantic Analysis (pLSA) is a probabilistic approach to dimensionality reduction (as opposed to an algebraic approach)
• The goal is to model co-occurrence information under a probabilistic model in order to discover the latent structure of the data and reduce the dimensionality of the space
• Idea: each document can be represented as a mixture of latent concepts, and each word expresses a topic (see figure)
• Figure legend: N is the number of documents in the collection; Nw is the number of words in document d. Each word w has an associated latent concept z from which it is generated. The shaded circles indicate observed variables, while the unshaded one represents the latent variable
• Source: http://homepages.inf.ed.ac.uk/rbf/CVonline/LOCAL_COPIES/AV1011/oneata.pdf

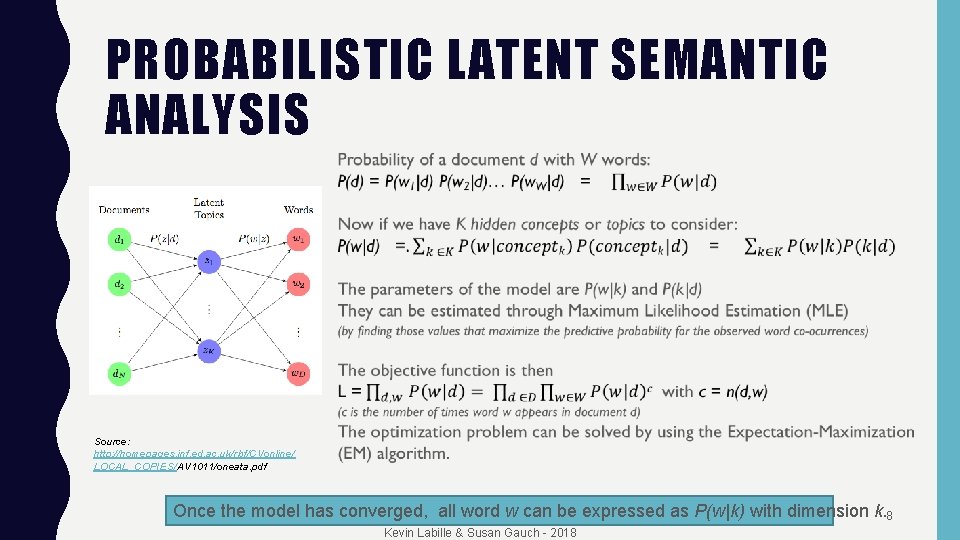

PROBABILISTIC LATENT SEMANTIC ANALYSIS
• Once the model has converged, each word w can be expressed by its probabilities P(w|k), one per latent dimension k
• Source: http://homepages.inf.ed.ac.uk/rbf/CVonline/LOCAL_COPIES/AV1011/oneata.pdf
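The fitting equations on this slide were not recovered, so the sketch below assumes the standard EM updates for pLSA: the E-step computes P(z|d,w) proportional to P(z|d) P(w|z), and the M-step re-estimates P(w|z) and P(z|d) from the count-weighted posteriors. The function name `plsa` and the toy count matrix are hypothetical.

```python
import numpy as np

def plsa(counts, n_topics, n_iter=50, seed=0):
    """Fit pLSA by EM. `counts` is a documents x words matrix of term frequencies."""
    rng = np.random.default_rng(seed)
    n_docs, n_words = counts.shape
    p_z_d = rng.random((n_docs, n_topics))       # P(z | d), one row per document
    p_z_d /= p_z_d.sum(axis=1, keepdims=True)
    p_w_z = rng.random((n_topics, n_words))      # P(w | z), one row per latent concept
    p_w_z /= p_w_z.sum(axis=1, keepdims=True)
    for _ in range(n_iter):
        # E-step: posterior P(z | d, w), shape (docs, topics, words).
        joint = p_z_d[:, :, None] * p_w_z[None, :, :]
        post = joint / (joint.sum(axis=1, keepdims=True) + 1e-12)
        # M-step: re-estimate both distributions from count-weighted posteriors.
        weighted = counts[:, None, :] * post
        p_w_z = weighted.sum(axis=0)
        p_w_z /= p_w_z.sum(axis=1, keepdims=True) + 1e-12
        p_z_d = weighted.sum(axis=2)
        p_z_d /= p_z_d.sum(axis=1, keepdims=True) + 1e-12
    return p_z_d, p_w_z

# Toy corpus: 4 documents over a 6-word vocabulary.
counts = np.array([[3, 2, 1, 0, 0, 0],
                   [2, 3, 2, 0, 1, 0],
                   [0, 0, 1, 3, 2, 2],
                   [0, 1, 0, 2, 3, 3]], dtype=float)

p_z_d, p_w_z = plsa(counts, n_topics=2)
```

After fitting, column w of `p_w_z` is the low-dimensional representation of word w, and row d of `p_z_d` is the concept mixture of document d.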

TOPIC MODELING
• There are many ways to obtain “topics” from text
• LDA (Latent Dirichlet Allocation) is the most popular
  – Really, just a dimensionality reduction technique
  – V unique words map onto K dimensions (K << V)
  – These K dimensions are assumed to be “topics” since many v reduce into each k

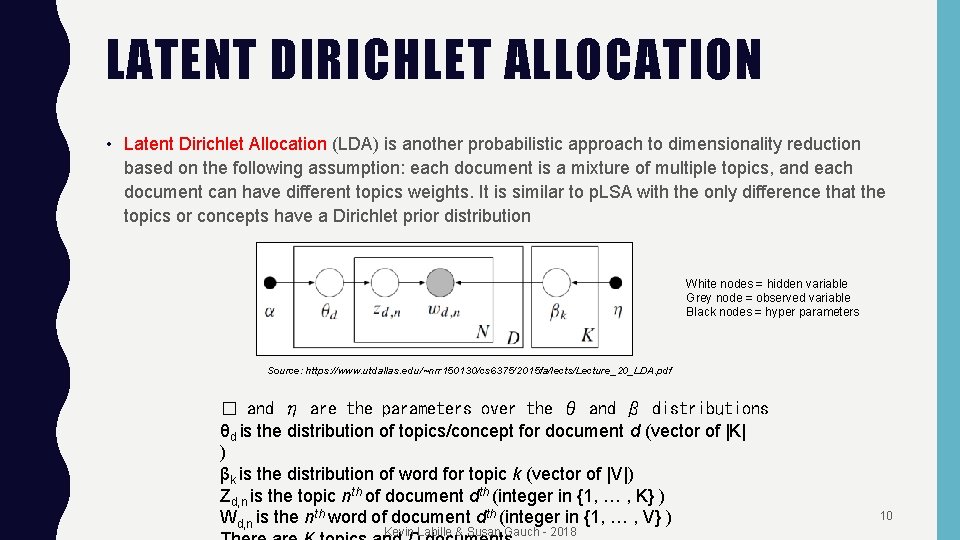

LATENT DIRICHLET ALLOCATION
• Latent Dirichlet Allocation (LDA) is another probabilistic approach to dimensionality reduction based on the following assumption: each document is a mixture of multiple topics, and each document can have different topic weights. It is similar to pLSA, the only difference being that the topics or concepts have a Dirichlet prior distribution
• Figure legend: white nodes = hidden variables, grey node = observed variable, black nodes = hyperparameters
• Source: https://www.utdallas.edu/~nrr150130/cs6375/2015fa/lects/Lecture_20_LDA.pdf
• α and η are the parameters of the Dirichlet priors over the θ and β distributions
• θd is the distribution of topics/concepts for document d (vector of size K)
• βk is the distribution of words for topic k (vector of size V)
• Zd,n is the topic of the nth word of document d (integer in {1, …, K})
• Wd,n is the nth word of document d (integer in {1, …, V})

LATENT DIRICHLET ALLOCATION GENERATIVE PROCESS
• Draw K sample distributions (each of size V) from a Dirichlet distribution: βk ~ Dir(η)
  – These are the topic (concept) distributions, called βk
• For each document:
  – Draw another sample distribution (of size K) from a Dirichlet distribution: θd ~ Dir(α)
    • This distribution is called θd
  – For each word in the document:
    • Draw topic Zd,n ~ Multi(θd)
    • Draw word Wd,n ~ Multi(βZd,n) from the topic
• Find the parameters α and β which maximize the likelihood of the observed data
  – Use an Expectation-Maximization based approach called variational EM
    • Not very successful

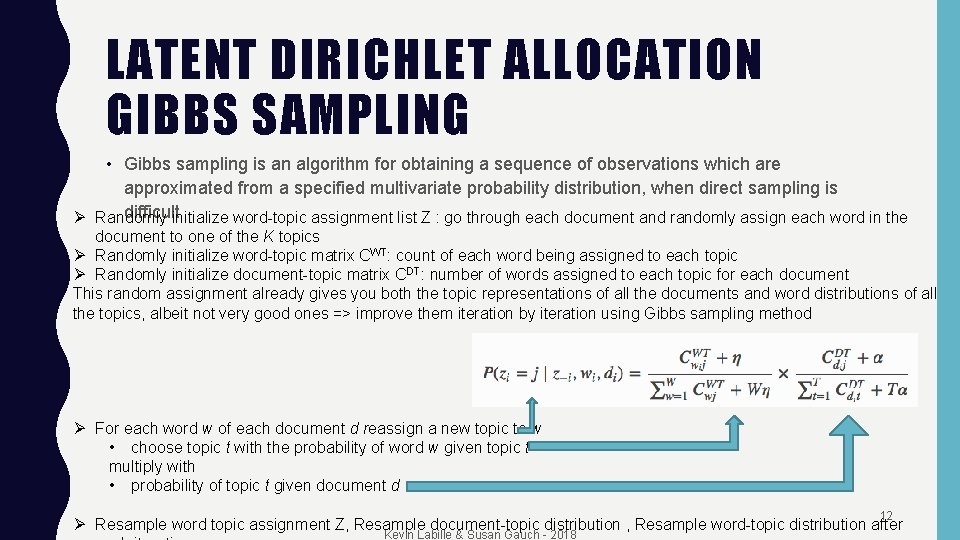

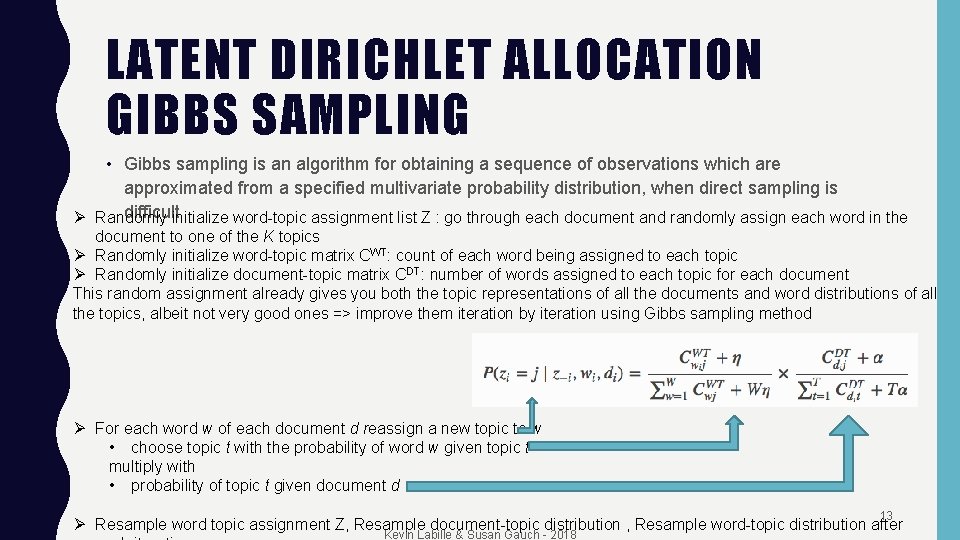
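The generative process can be simulated directly, which is a useful way to check one's reading of the notation. This is a minimal sketch: the function name `generate_corpus`, the fixed document length and the hyperparameter values are assumptions made for illustration, and topics and words are indexed from 0 rather than 1.

```python
import numpy as np

def generate_corpus(n_docs, doc_len, K, V, alpha=0.5, eta=0.5, seed=0):
    """Sample a synthetic corpus from the LDA generative process."""
    rng = np.random.default_rng(seed)
    # beta_k ~ Dir(eta): K topics, each a distribution over the V words.
    beta = rng.dirichlet(np.full(V, eta), size=K)
    docs, thetas = [], []
    for _ in range(n_docs):
        theta = rng.dirichlet(np.full(K, alpha))                 # theta_d ~ Dir(alpha)
        z = rng.choice(K, size=doc_len, p=theta)                 # Z_{d,n} ~ Multi(theta_d)
        w = np.array([rng.choice(V, p=beta[t]) for t in z])      # W_{d,n} ~ Multi(beta_{Z_{d,n}})
        docs.append(w)
        thetas.append(theta)
    return docs, np.array(thetas), beta

docs, thetas, beta = generate_corpus(n_docs=5, doc_len=20, K=3, V=10)
```

Each element of `docs` is an array of word ids. Inference (variational EM or Gibbs sampling) goes the other way: it starts from `docs` alone and tries to recover `thetas` and `beta`.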

LATENT DIRICHLET ALLOCATION GIBBS SAMPLING
• Gibbs sampling is an algorithm for obtaining a sequence of observations that approximate a specified multivariate probability distribution when direct sampling is difficult
• Randomly initialize the word-topic assignment list Z: go through each document and randomly assign each word in the document to one of the K topics
• Initialize the word-topic matrix CWT from this assignment: count of each word being assigned to each topic
• Initialize the document-topic matrix CDT from this assignment: number of words assigned to each topic for each document
• This random assignment already gives both the topic representations of all the documents and the word distributions of all the topics, albeit not very good ones; improve them iteration by iteration using Gibbs sampling
• For each word w of each document d, reassign a new topic to w:
  – choose topic t with probability proportional to the probability of word w given topic t multiplied by the probability of topic t given document d
• Resample the word-topic assignment Z, then the document-topic distribution and the word-topic distribution
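The steps above correspond to what is usually called a collapsed Gibbs sampler. The sketch below is one possible implementation under assumptions not stated on the slide: the smoothed count formulas for P(w|t) and P(t|d), the hyperparameter values, and the function name `lda_gibbs` are all illustrative choices.

```python
import numpy as np

def lda_gibbs(docs, K, V, alpha=0.1, eta=0.01, n_iter=100, seed=0):
    """Collapsed Gibbs sampling for LDA. `docs` is a list of lists of word ids in [0, V)."""
    rng = np.random.default_rng(seed)
    Z = [rng.integers(K, size=len(doc)) for doc in docs]   # random initial assignment
    C_WT = np.zeros((V, K))                                # word-topic counts
    C_DT = np.zeros((len(docs), K))                        # document-topic counts
    for d, doc in enumerate(docs):
        for w, t in zip(doc, Z[d]):
            C_WT[w, t] += 1
            C_DT[d, t] += 1
    for _ in range(n_iter):
        for d, doc in enumerate(docs):
            for n, w in enumerate(doc):
                t = Z[d][n]
                C_WT[w, t] -= 1                            # remove the current assignment
                C_DT[d, t] -= 1
                p_w_t = (C_WT[w] + eta) / (C_WT.sum(axis=0) + V * eta)   # P(w | t)
                p_t_d = C_DT[d] + alpha                    # P(t | d) up to a constant
                p = p_w_t * p_t_d
                t = rng.choice(K, p=p / p.sum())           # sample the new topic
                Z[d][n] = t
                C_WT[w, t] += 1
                C_DT[d, t] += 1
    theta = (C_DT + alpha) / (C_DT + alpha).sum(axis=1, keepdims=True)
    beta = ((C_WT + eta) / (C_WT + eta).sum(axis=0, keepdims=True)).T
    return theta, beta, Z

# Toy corpus: words 0-2 and words 3-5 tend to appear in different documents.
docs = [[0, 1, 2, 0, 1], [3, 4, 5, 3, 4], [0, 2, 1, 1], [4, 5, 3, 5]]
theta, beta, Z = lda_gibbs(docs, K=2, V=6)
```

`theta` holds one topic distribution per document and `beta` one word distribution per topic, both estimated from the final counts. Recomputing the column sums of `C_WT` for every word keeps the code short but is wasteful; a real implementation would maintain a running per-topic total.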

CONCEPTUAL SEARCH BASED ON ONTOLOGIES
• “Semantic” approach to dimensionality reduction
• Rather than using math to “learn” a lower number of dimensions, use an existing ontology/concept hierarchy to represent the documents
• Gauch:
  – Select an appropriate ontology source (Magellan, Yahoo!, Open Directory Project (ODP), ACM CCS, Wikipedia, …)
    • Use a reasonable subset (top 3 levels -> 1,000 categories, top 4 levels -> 10,000 categories)
  – Train a categorizer using the linked documents
  – Categorize your documents
    • Creates a vector of category weights (dimensionality 1,000 vs 1,000)
    • Actually, a tree of category weights

WHY REDUCE DIMENSIONALITY?
• Machine learning
  – Cannot easily train on millions of dimensions
• Classification
• Recommendation
• Augment search (reduces ambiguity)
  – Increase recall
  – Decrease precision

RESOURCES
• LDA in Python and R
  – https://wiseodd.github.io/techblog/2017/09/07/lda-gibbs/
  – https://ethen8181.github.io/machine-learning/clustering_old/topic_model/LDA.html
