
Dimension Reduction and Feature Selection

Craig A. Struble, Ph.D., Department of Mathematics, Statistics, and Computer Science, Marquette University

MSCS 282: Data Mining

Overview

- Dimension Reduction
  - Correlation
  - Principal Component Analysis
  - Singular Value Decomposition
- Feature Selection
  - Information Content
  - …

Dimension Reduction

- As the number of attributes grows, the complexity of learning, clustering, etc. can grow exponentially
  - The “curse of dimensionality”
- We need methods to reduce the number of attributes
- Dimension reduction reduces the number of attributes without (directly) considering the relevance of each attribute
  - It does not really remove attributes; it combines or recasts them

Correlation

- A causal, complementary, parallel, or reciprocal relationship
- The simultaneous change in value of two numerically valued random variables
- So if one attribute’s value changes in a predictable way whenever another one changes, why keep them both?

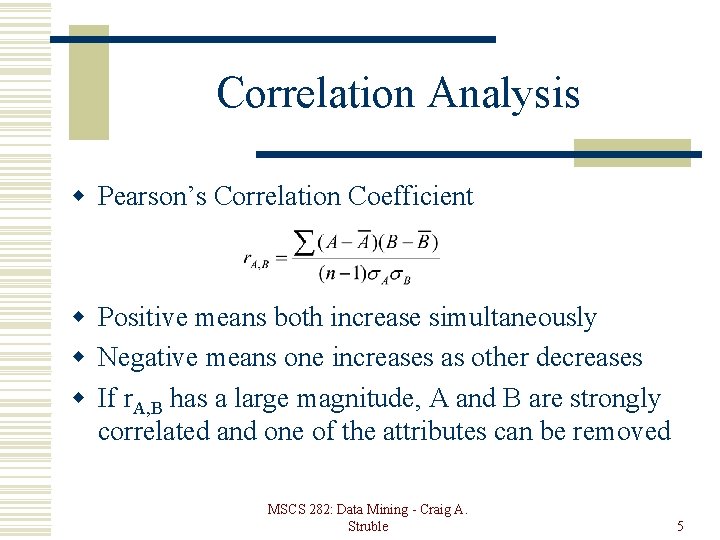

Correlation Analysis

- Pearson’s correlation coefficient $r_{A,B}$
- Positive means both attributes increase simultaneously
- Negative means one increases as the other decreases
- If $r_{A,B}$ has a large magnitude, A and B are strongly correlated, and one of the attributes can be removed
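For reference, the standard sample form of Pearson’s coefficient for n paired values $(a_i, b_i)$ is shown below. The exact notation is an assumption: $\bar{A}$ and $\bar{B}$ are the means of A and B, and $\sigma_A$ and $\sigma_B$ are their sample standard deviations.

$$r_{A,B} = \frac{\sum_{i=1}^{n} (a_i - \bar{A})(b_i - \bar{B})}{(n-1)\,\sigma_A \sigma_B}, \qquad -1 \le r_{A,B} \le 1$$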

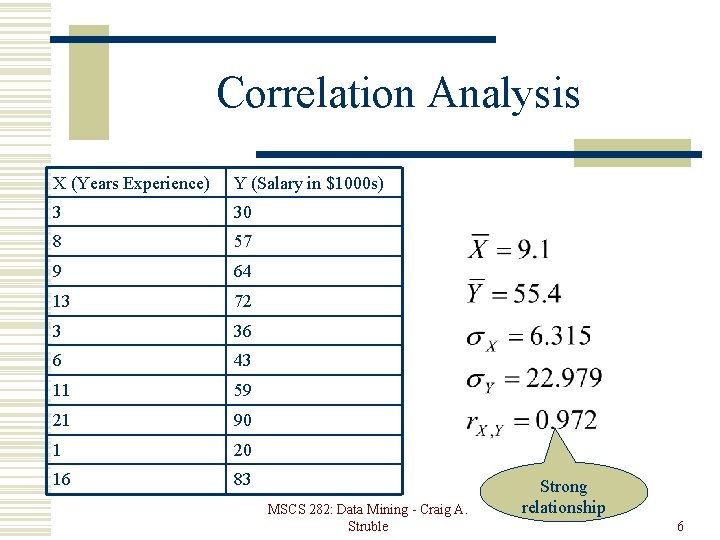

Correlation Analysis

| X (years of experience) | Y (salary, thousands of dollars) |
|---|---|
| 3 | 30 |
| 8 | 57 |
| 9 | 64 |
| 13 | 72 |
| 3 | 36 |
| 6 | 43 |
| 11 | 59 |
| 21 | 90 |
| 1 | 20 |
| 16 | 83 |

Strong relationship between X and Y.
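As a minimal sketch using only the Python standard library, the coefficient can be computed directly for the experience/salary data on this slide. The function name `pearson` is illustrative, not something from the slides.

```python
import math


def pearson(a, b):
    """Sample Pearson correlation coefficient r_{A,B} of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    # Sum of products of deviations from the means (numerator of r).
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    # The (n - 1) factors in the covariance and in both standard deviations
    # cancel, so raw sums of squared deviations are enough.
    ss_a = sum((x - mean_a) ** 2 for x in a)
    ss_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(ss_a * ss_b)


years = [3, 8, 9, 13, 3, 6, 11, 21, 1, 16]
salary = [30, 57, 64, 72, 36, 43, 59, 90, 20, 83]  # thousands of dollars
r = pearson(years, salary)
print(f"r = {r:.3f}")  # r = 0.972
```

A value of about 0.97 confirms the strong positive relationship visible in the table, so in a dimension-reduction setting one of the two attributes would be a candidate for removal.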

Principal Component Analysis

- Also called the Karhunen-Loève (K-L) method
- Combine the “essence” of the attributes to create a (hopefully) smaller set of variables that describe the data
- An instance with k attributes is a point in k-dimensional space
- Find c orthogonal k-dimensional vectors, with c ≤ k, that best represent the data
- These vectors are linear combinations of the attributes

Principal Component Analysis

- Normalize the data
- Compute c orthonormal vectors, which are the principal components
- Sort them in order of decreasing “significance”
  - Measured in terms of the data variance along each component
- Reduce the data dimension by keeping only the most significant principal components
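The steps above can be sketched in plain Python. Two assumptions: “normalize” is taken to mean z-score standardization, and the components are found by power iteration with deflation on the covariance matrix, which is only one way to compute them (the SVD on the following slides is another). All function names are hypothetical.

```python
import math
import statistics


def standardize(rows):
    """Z-score each attribute (column): subtract its mean, divide by its sample std dev."""
    cols = list(zip(*rows))
    means = [statistics.mean(c) for c in cols]
    stds = [statistics.stdev(c) for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def covariance_matrix(rows):
    """Sample covariance matrix of column-centered data (n - 1 denominator)."""
    n, d = len(rows), len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) / (n - 1) for j in range(d)]
            for i in range(d)]


def mat_vec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def pca(rows, c, iters=500):
    """Return up to c (variance, unit vector) pairs in order of decreasing variance."""
    cov = covariance_matrix(standardize(rows))
    d = len(cov)
    components = []
    for _ in range(c):
        v = [float(i + 1) for i in range(d)]  # arbitrary start vector
        for _ in range(iters):
            w = mat_vec(cov, v)
            norm = math.sqrt(sum(x * x for x in w))
            if norm < 1e-12:  # no variance left to explain
                return components
            v = [x / norm for x in w]
        # Rayleigh quotient v^T C v = variance of the data along v.
        variance = sum(a * b for a, b in zip(v, mat_vec(cov, v)))
        components.append((variance, v))
        # Deflate: subtract this component so the next pass finds the next one.
        cov = [[cov[i][j] - variance * v[i] * v[j] for j in range(d)]
               for i in range(d)]
    return components


years = [3, 8, 9, 13, 3, 6, 11, 21, 1, 16]
salary = [30, 57, 64, 72, 36, 43, 59, 90, 20, 83]
components = pca(list(zip(years, salary)), c=2)
total = sum(var for var, _ in components)
for var, vec in components:
    print(f"variance {var:.3f} ({var / total:.1%}), direction {[round(x, 3) for x in vec]}")
```

On the salary data the first component captures about 98.6% of the variance, so keeping only that one component halves the dimension while losing very little information.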

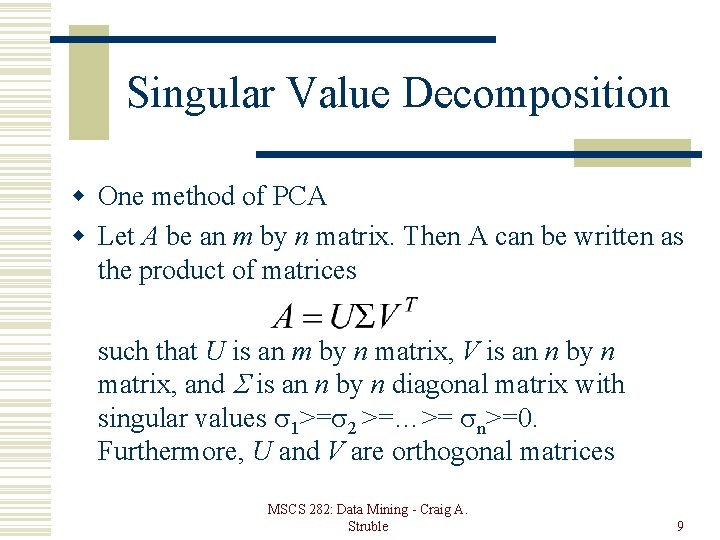

Singular Value Decomposition

- One method of computing PCA
- Let A be an m by n matrix with m ≥ n. Then A can be written as the product of matrices $A = U \Sigma V^T$, where U is an m by n matrix, V is an n by n matrix, and $\Sigma$ is an n by n diagonal matrix with singular values $\sigma_1 \ge \sigma_2 \ge \dots \ge \sigma_n \ge 0$. Furthermore, U and V are orthogonal: the columns of U are orthonormal ($U^T U = I$) and V is an orthogonal matrix ($V^T V = V V^T = I$).

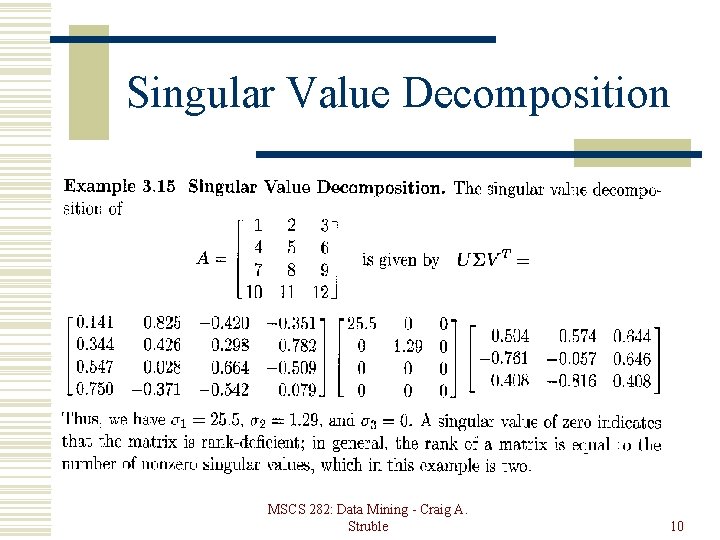

Singular Value Decomposition

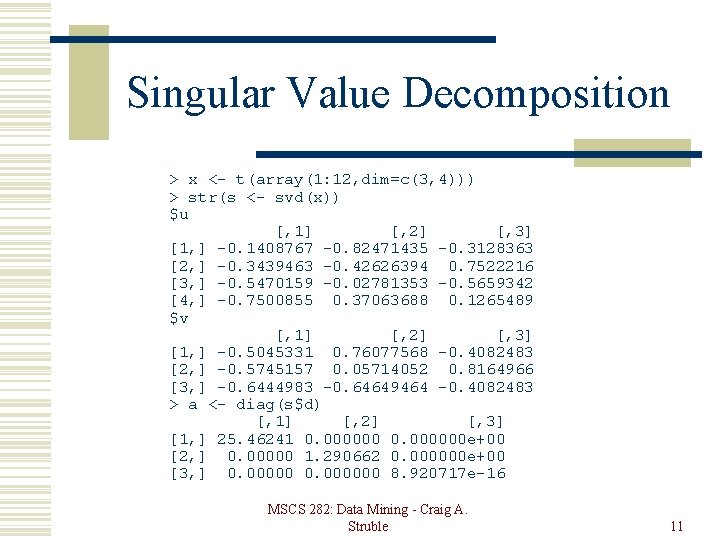

Singular Value Decomposition

```r
> x <- t(array(1:12, dim = c(3, 4)))  # 4 x 3 matrix with rows 1:3, 4:6, 7:9, 10:12
> s <- svd(x)
> s$u
           [,1]        [,2]       [,3]
[1,] -0.1408767 -0.82471435 -0.3128363
[2,] -0.3439463 -0.42626394  0.7522216
[3,] -0.5470159 -0.02781353 -0.5659342
[4,] -0.7500855  0.37063688  0.1265489
> s$v
           [,1]        [,2]       [,3]
[1,] -0.5045331  0.76077568 -0.4082483
[2,] -0.5745157  0.05714052  0.8164966
[3,] -0.6444983 -0.64649464 -0.4082483
> diag(s$d)  # Sigma; the third singular value is numerically zero (x has rank 2)
         [,1]     [,2]         [,3]
[1,] 25.46241 0.000000 0.000000e+00
[2,]  0.00000 1.290662 0.000000e+00
[3,]  0.00000 0.000000 8.920717e-16
```
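To connect the R output to the definition, here is a minimal plain-Python sketch that recovers the largest singular triplet of the same 4 × 3 matrix by power iteration on $A^T A$, whose eigenvalues are the squared singular values. This is not how R’s `svd()` works internally (it delegates to LAPACK); the function name is hypothetical.

```python
import math


def top_singular_triplet(a, iters=100):
    """Approximate (sigma_1, u_1, v_1) of matrix `a` (a list of rows).

    Power iteration on A^T A converges to v_1, the eigenvector of its largest
    eigenvalue sigma_1^2; then sigma_1 = ||A v_1|| and u_1 = A v_1 / sigma_1.
    """
    m, n = len(a), len(a[0])
    v = [1.0] * n  # start vector (assumed not orthogonal to v_1)
    for _ in range(iters):
        av = [sum(a[i][j] * v[j] for j in range(n)) for i in range(m)]     # A v
        atav = [sum(a[i][j] * av[i] for i in range(m)) for j in range(n)]  # A^T (A v)
        norm = math.sqrt(sum(x * x for x in atav))
        v = [x / norm for x in atav]
    av = [sum(a[i][j] * v[j] for j in range(n)) for i in range(m)]
    sigma = math.sqrt(sum(x * x for x in av))
    u = [x / sigma for x in av]
    return sigma, u, v


x = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [10, 11, 12]]  # same matrix as in the R session
sigma, u, v = top_singular_triplet(x)
print(round(sigma, 5))           # 25.46241, matching s$d[1]
print([round(c, 7) for c in v])  # first column of s$v, up to sign
```

Singular vectors are only defined up to sign, so this sketch returns the first column of `s$v` with positive entries where R reports negative ones.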

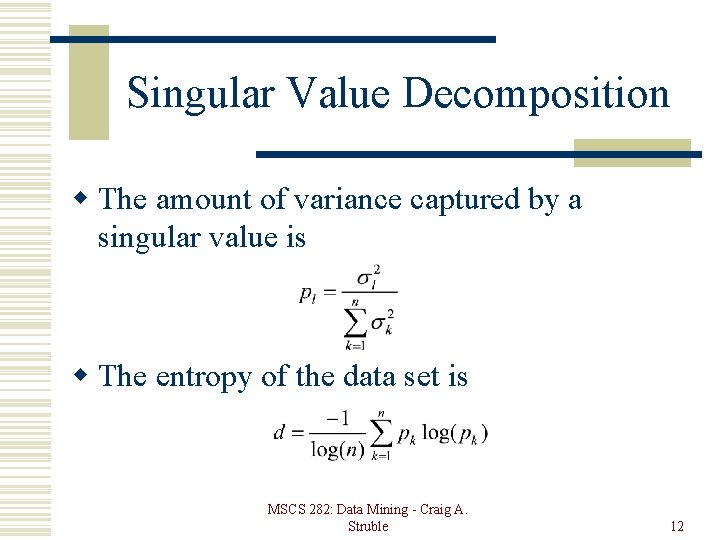

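Singular Value Decomposition

- The fraction of the variance captured by singular value $\sigma_k$ is $f_k = \sigma_k^2 / \sum_{i=1}^{n} \sigma_i^2$
- The entropy of the data set is $E = -\frac{1}{\log n} \sum_{k=1}^{n} f_k \log f_k$, which ranges from 0 (all variance in one component) to 1 (variance spread evenly)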
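Plugging the singular values from the R session into these formulas shows why the 4 × 3 example is essentially one-dimensional. A sketch in plain Python; it assumes the natural logarithm and the $1/\log n$ normalization, and treats $0 \log 0$ as 0.

```python
import math


def variance_fractions(singular_values):
    """f_k = sigma_k^2 / sum_i sigma_i^2 for each singular value."""
    squares = [s * s for s in singular_values]
    total = sum(squares)
    return [sq / total for sq in squares]


def svd_entropy(singular_values):
    """Normalized entropy of the variance fractions (0 = one dominant component, 1 = all equal)."""
    f = variance_fractions(singular_values)
    h = -sum(fk * math.log(fk) for fk in f if fk > 0)  # 0 log 0 is taken as 0
    return h / math.log(len(f))


d = [25.46241, 1.290662, 8.920717e-16]  # s$d from the R example
print([round(fk, 4) for fk in variance_fractions(d)])
print(round(svd_entropy(d), 3))
```

Roughly 99.7% of the variance lies on the first singular value and the entropy is close to 0, so a single component describes this matrix almost completely.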

Feature Selection

- Select the most “relevant” subset of attributes
- Wrapper approach
  - Features are selected as part of the mining algorithm
- Filter approach
  - Features are selected before the mining algorithm runs
- The wrapper approach is generally more accurate but also more computationally expensive

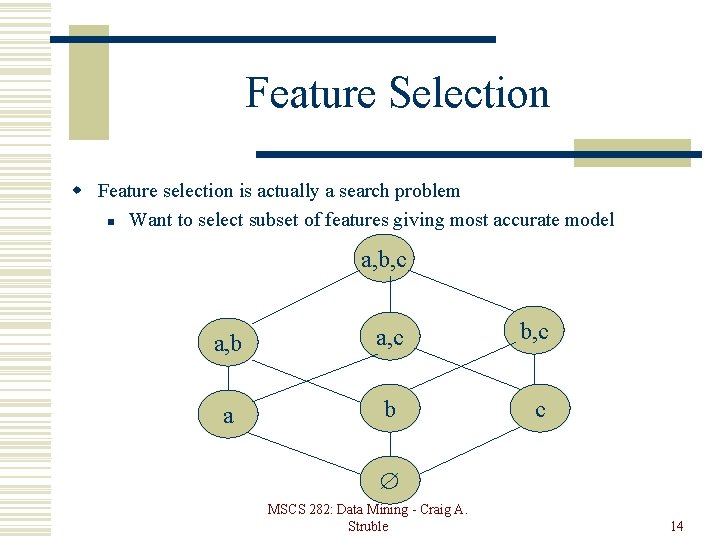

Feature Selection

- Feature selection is actually a search problem
  - We want to select the subset of features giving the most accurate model
- Search space for three attributes a, b, c: {a, b, c}; {a, b}, {a, c}, {b, c}; {a}, {b}, {c}
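To make the search space concrete, here is a brute-force sketch that scores every non-empty subset ($2^k - 1$ of them for k attributes) and keeps the best. The `toy_score` function and its weights are hypothetical stand-ins; in a wrapper approach the score would be the accuracy of a model built on that subset.

```python
from itertools import combinations


def all_subsets(attributes):
    """Every non-empty subset of the attributes, smallest first (the lattice levels)."""
    return [subset
            for size in range(1, len(attributes) + 1)
            for subset in combinations(attributes, size)]


def exhaustive_search(attributes, score):
    """Return the subset with the highest score; feasible only for small attribute sets."""
    return max(all_subsets(attributes), key=score)


# Hypothetical score: a per-attribute usefulness weight minus a size penalty.
WEIGHTS = {"a": 0.5, "b": 0.1, "c": 0.3}


def toy_score(subset):
    return sum(WEIGHTS[x] for x in subset) - 0.2 * len(subset)


subsets = all_subsets(["a", "b", "c"])
print(len(subsets))                                   # 7
print(exhaustive_search(["a", "b", "c"], toy_score))  # ('a', 'c')
```

The number of subsets doubles with every added attribute, which is why the search heuristics on the next slide matter.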

Feature Selection

- Any search heuristic will work
  - Branch and bound
  - “Best-first” or A*
  - Genetic algorithms, etc.
- The bigger problem is estimating the relevance of attributes without building a classifier

Feature Selection

- Using entropy
  - Calculate the information gain of each attribute
  - Select the l attributes with the highest information gain
  - Attributes that have the same value for all data instances have zero information gain, so they are removed
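A minimal sketch of the entropy-based filter in plain Python. The toy rows and attribute names are hypothetical; the parameter `n_keep` plays the role of the slide’s l.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attribute, target):
    """Class entropy minus the weighted class entropy after splitting on `attribute`."""
    labels = [r[target] for r in rows]
    remainder = 0.0
    for value in {r[attribute] for r in rows}:
        subset = [r[target] for r in rows if r[attribute] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(labels) - remainder


def select_top(rows, attributes, target, n_keep):
    """Rank attributes by information gain and keep the n_keep best (the slide's l)."""
    gains = {a: information_gain(rows, a, target) for a in attributes}
    return sorted(attributes, key=gains.get, reverse=True)[:n_keep], gains


# Hypothetical toy data: "a" determines the class, "b" is uninformative,
# and "c" is the same for every instance.
rows = [
    {"a": "x", "b": "p", "c": "same", "class": "yes"},
    {"a": "x", "b": "q", "c": "same", "class": "yes"},
    {"a": "y", "b": "p", "c": "same", "class": "no"},
    {"a": "y", "b": "q", "c": "same", "class": "no"},
]
top, gains = select_top(rows, ["a", "b", "c"], "class", n_keep=1)
print(top, gains)
```

The constant attribute `c` gets a gain of exactly 0, so it can never be selected ahead of an informative attribute.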

Feature Selection

- Stepwise forward selection
  - Start with an empty attribute set
  - Add the “best” of the attributes
  - Add the “best” of the remaining attributes
  - Repeat, and take the top l
- Stepwise backward selection
  - Start with the entire attribute set
  - Remove the “worst” of the attributes
  - Repeat until l are left
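Both greedy procedures can be sketched in a few lines of Python. “Best” and “worst” are judged here by a hypothetical `score` function on a candidate subset, standing in for whatever evaluation (for example, model accuracy) is actually used; `n_keep` is the slide’s l.

```python
def forward_selection(attributes, score, n_keep):
    """Greedily add the attribute that gives the best-scoring subset until n_keep are chosen."""
    selected, remaining = [], list(attributes)
    while remaining and len(selected) < n_keep:
        best = max(remaining, key=lambda a: score(selected + [a]))
        selected.append(best)
        remaining.remove(best)
    return selected


def backward_selection(attributes, score, n_keep):
    """Greedily drop the attribute whose removal hurts the score least until n_keep remain."""
    selected = list(attributes)
    while len(selected) > n_keep:
        worst = max(selected, key=lambda a: score([x for x in selected if x != a]))
        selected.remove(worst)
    return selected


# Hypothetical score standing in for model accuracy on a subset.
WEIGHTS = {"a": 3.0, "b": 1.0, "c": 2.0}


def toy_score(subset):
    return sum(WEIGHTS[x] for x in subset)


print(forward_selection(["a", "b", "c"], toy_score, 2))   # ['a', 'c']
print(backward_selection(["a", "b", "c"], toy_score, 2))  # ['a', 'c']
```

With an additive score like this one both directions agree; with real models, where attributes interact, forward and backward selection can return different subsets.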

Feature Selection

- Other methods
  - Sample the data and build a model for a subset of the data and attributes to estimate accuracy
  - Select the attributes with the most (or least) variance
  - Select the attributes most highly correlated with the goal attribute
- What does feature selection provide you?
  - Reduced data size
  - Analysis of the “most important” pieces of information to collect
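The last filter idea, keeping the attributes most highly correlated with the goal attribute, can be sketched as follows. Ranking by the absolute value of Pearson’s r, so strong negative correlations also count, is a design choice assumed here; the data and names are hypothetical.

```python
import math


def pearson(a, b):
    """Sample Pearson correlation; returns 0.0 if either sequence is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    ss_a = sum((x - mean_a) ** 2 for x in a)
    ss_b = sum((y - mean_b) ** 2 for y in b)
    if ss_a == 0 or ss_b == 0:
        return 0.0  # a constant attribute carries no linear information
    return cov / math.sqrt(ss_a * ss_b)


def correlation_filter(columns, goal, n_keep):
    """Keep the n_keep attributes whose |r| with the goal attribute is largest."""
    scores = {name: abs(pearson(values, goal)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:n_keep]


# Hypothetical data: "up" and "down" track the goal exactly, "noise" does not.
goal = [1, 2, 3, 4, 5]
columns = {
    "up": [2, 4, 6, 8, 10],
    "noise": [1, 3, 2, 3, 1],
    "down": [5, 4, 3, 2, 1],
    "constant": [7, 7, 7, 7, 7],
}
print(correlation_filter(columns, goal, 2))  # ['up', 'down']
```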
