
Digital libraries: A testbed for multimedia technology Yueting Zhuang College of Computer Science Zhejiang University, P. R. China Nov. 2, 2005

Outline § Motivation § Video Analysis & Summarization § Automatic Image Annotation § Cross-Media Indexing & Retrieval § Chinese Calligraphy Retrieval & Visualization § Conclusion

Motivation
§ Digital Library
• Universal access
• “Digital” content goes beyond text: audio, video, images, Flash, 3D objects, and so on
• The proportion of multimedia to text is getting higher
Digital Library welcomes Multimedia!

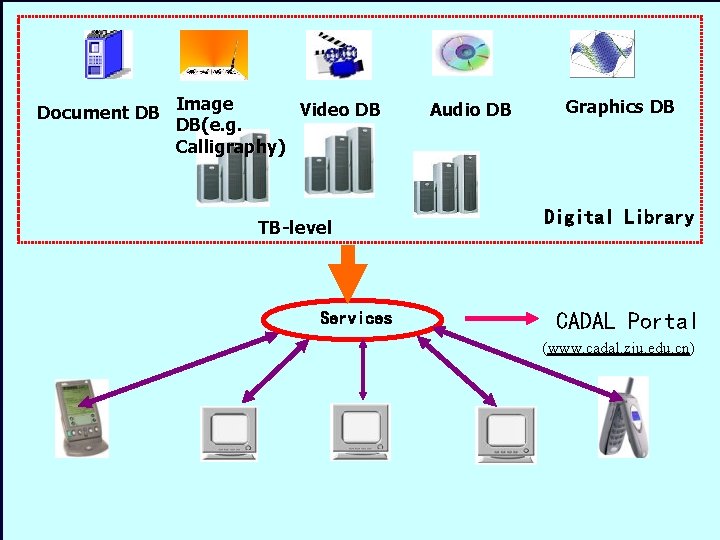

[Diagram: the CADAL Digital Library offers TB-level services over image, video, document, audio, and graphics databases, plus further databases (e.g. calligraphy). CADAL Portal: www.cadal.zju.edu.cn]

§ Multimedia technology
• Hot research area: multimedia analysis and retrieval
• Has been seeking killer apps for years
For example, content-based image retrieval (CBR) needs a large collection of image files. Our ongoing CADAL Digital Library offers such a test bed.

The following multimedia technologies are to be addressed:
• Video Analysis & Summarization
• Automatic Image Annotation
• Cross-Media Indexing & Retrieval
• Chinese Calligraphy Retrieval & Visualization

Video Analysis & Summarization
Long video to short video (video highlights)

Why Video Analysis & Summarization?
§ Video is the main form of multimedia
• Large, and time consuming if played from beginning to end
• Efficient retrieval is urgently needed
§ Unlike text, video is ill-structured
• Rich in visual content
• Poor in semantic content

Video Analysis & Summarization
§ Video semantic understanding & annotation
• Understand and annotate video objects
• Bridge the semantic gap
§ Video structuring
• Construct a video table of contents (VTOC)
• Make the video physically structured
• Support video browsing
§ Video shot detection & clustering
§ Video content mining & summarization
• Find meaningful patterns for the summary
• Help the user quickly grasp the content of a video
• A new form of “video compression”

Video Semantic Understanding
[Diagram: machine learning (SVM, HMM, clustering, etc.) maps the feature space to semantics such as anchor person and news topic]
Understanding video semantics, such as “anchor”, “commercial”, “outdoors” and “sports”, makes video retrieval efficient.

Video Semantic Annotation
Similar to machine translation (translating video frames into words)
[Diagram: annotation maps the feature space to semantics such as person name and topic descriptor]
Annotating video with terms such as “anchor”, “commercial”, “outdoors” and “sports” makes video retrieval efficient.

Video Structuring
[Diagram components: video stream, shot boundary detection, shot, key frame extraction (temporal and spatial features), key frame, grouping, scene construction, video concept clustering, table of contents]

Video Shot Detection Original Video Shot Boundary Detection
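The slides do not say which shot boundary detector is used. As a minimal sketch of one common baseline (an assumption, not the authors' method), consecutive frames can be compared by gray-level histogram, and a boundary declared when the difference exceeds a threshold. The frame representation (a flat list of gray values), the function names, and the threshold are all illustrative.

```python
def gray_histogram(frame, bins=8):
    """Normalized histogram of a frame given as a flat list of gray values (0-255)."""
    hist = [0] * bins
    for value in frame:
        hist[min(value * bins // 256, bins - 1)] += 1
    total = float(len(frame))
    return [count / total for count in hist]


def detect_shot_boundaries(frames, threshold=0.5):
    """Return indices i where a new shot is assumed to start at frames[i]."""
    boundaries = []
    previous = gray_histogram(frames[0])
    for index in range(1, len(frames)):
        current = gray_histogram(frames[index])
        # L1 distance between consecutive histograms, in the range [0, 2].
        difference = sum(abs(a - b) for a, b in zip(previous, current))
        if difference > threshold:
            boundaries.append(index)
        previous = current
    return boundaries


# Toy "video": three dark frames, two bright frames, one dark frame.
dark = [10] * 16
bright = [240] * 16
video = [dark, dark, dark, bright, bright, dark]
print(detect_shot_boundaries(video))  # [3, 5]
```

A fixed threshold is the simplest choice; gradual transitions such as fades usually need something more tolerant.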

Video Shot Detection: Finding meaningful objects
Meaningful object detection: explosions, waterfalls, football matches, and so on

e.g. explosion
When an audio explosion candidate is detected, check whether the visual features change during its duration. We can see that some bins change rapidly during an explosion.

Flowchart of explosion scene recognition
[Flowchart components: MPEG stream, audio stream, video stream, feature extraction, hierarchical coarse-grained SVM (a coarse SVM separating explosion-like audio from other audio, followed by grained SVMs), index to the corresponding video clips, check of visual features, outcomes: explosion scene, or non-explosion video (discarded)]

Result Detected Explosion scenes are indicated by red bar:

An experiment example

Video Shot Clustering Original Video Similar Video shots are Clustered together Video Shot

Video Shot Clustering: Semantic Clustering
• Some kinds of objects have semantic meaning.
• Legible faces carry much semantic meaning, especially in news video.
Although the backgrounds of the video frames above differ, the frames can be clustered based on legible face recognition.

Video Shot Clustering: Semantic-Face Template
[Diagram: an input block passes through a component SVM layer (mouth, nose, left eye, and right eye SVMs) and a component distribution layer (mouth, nose, left eye, and right eye distributions), yielding the semantic face]


Video content mining Help the user to quickly grasp the content of a video Original Video (redundant) Video Content Mining Find Patterns Summarized Video (concise and informative )

Our Video Summarization Original Video Stream Feature Video Summary Extraction Meaningful Video Pattern Mining Shot Detection Similar Shot Clustering Key Frame Selection

Video Summarization-an experiment example 2 news video (shown as its 7 key frames), total length: 3 minutes

After video summarization, the video is 3 seconds long, shown as the 3 key frames above.

Video Summary Process Original Video Shot Sequence Video Shot Clustering Video Shot Cluster A Video Shot Cluster B Video Shot Cluster C Video Shot Cluster D Video Shot Cluster E

Video Summary Process (Continued)
Original video shot sequence: A B C D A C D E A B C D
Non-trivial repeating pattern mining
Video summary: A B C D
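The mining step on this slide can be illustrated with a brute-force sketch. It assumes the usual definition of a non-trivial repeating pattern (a contiguous pattern that repeats, and is not contained in a longer pattern with the same frequency), and it assumes the summary is the longest such pattern. The slide confirms neither, and the function names are illustrative.

```python
from collections import defaultdict


def repeating_patterns(sequence, min_length=2):
    """Map each contiguous pattern that occurs at least twice to its frequency."""
    counts = defaultdict(int)
    n = len(sequence)
    for start in range(n):
        for end in range(start + min_length, n + 1):
            counts[tuple(sequence[start:end])] += 1
    return {p: c for p, c in counts.items() if c >= 2}


def is_subpattern(short, long):
    """True if `short` occurs contiguously inside the strictly longer `long`."""
    if len(short) >= len(long):
        return False
    return any(long[i:i + len(short)] == short
               for i in range(len(long) - len(short) + 1))


def non_trivial_patterns(sequence, min_length=2):
    """Drop patterns contained in a longer pattern of the same frequency."""
    repeats = repeating_patterns(sequence, min_length)
    return {
        p: c for p, c in repeats.items()
        if not any(c == other_c and is_subpattern(p, other)
                   for other, other_c in repeats.items())
    }


# Shot cluster labels from the slide.
shots = list("ABCDACDEABCD")
patterns = non_trivial_patterns(shots)
summary = max(patterns, key=lambda p: (len(p), patterns[p]))
print(patterns)  # {('A', 'B', 'C', 'D'): 2, ('C', 'D'): 3}
print(summary)   # ('A', 'B', 'C', 'D')
```

On the slide's sequence this yields A B C D, matching the summary shown. The enumeration is quadratic in the sequence length, which is acceptable for a shot sequence but not for long streams.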

Video Summary Result Video Shots

Automatic Image Annotation A lot of images in Libraries

[Diagram: multimedia object m1 is similar in features to multimedia object m2; m1 has knowledge k; does m2 have it too?]
If similar(m1, m2) and has(m1, k), then has(m2, k).

Automatic Image Annotation
Annotating images is helpful and irreplaceable for efficient retrieval.
§ Main idea:
• Segment images into categories with different semantic meanings
• Construct a semantic skeleton to describe the semantics of an image category
• Classify new images into categories using SVM
• Compute the probability of keywords given image regions by statistical learning
• Annotate each image

Results of image segmentation based on Normalized Cuts Generation of Semantic Blob Set

The algorithm (1)
§ Suppose there exists a training collection of blobs with annotated keywords
§ Annotation is a process of translating semantic blobs into words:
• The model uses a smoothing parameter
• One term is computed by the TF-IDF model
• The conditional probability can be estimated as the expectation over the training images

The algorithm (2)
§ The conditional probability can be estimated as the expectation over the training images J ∈ Ti
§ The prior probability is kept uniform over all images in Ti
§ The counts in the estimate are the number of occurrences of blob b, or of word-blob pair (w, b), in J
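The formulas on these two slides are not available, so the following is only a sketch of one plausible reading: a relevance-model style estimate in which the joint probability of a word and the query blobs is an expectation over training images with a uniform prior, and image-level counts are smoothed with collection-level counts. The smoothing form, the parameter `alpha`, and all names are assumptions, and the TF-IDF term is not modeled.

```python
from collections import Counter


def smoothed(count_in_image, image_size, count_in_collection, collection_size, alpha):
    """Interpolate an image-level frequency with the collection-level frequency."""
    return ((1 - alpha) * count_in_image / image_size
            + alpha * count_in_collection / collection_size)


def annotate(query_blobs, training, alpha=0.1, top_k=2):
    """Rank words by P(w, b1..bm), summed over training images with a uniform prior."""
    all_words = Counter(w for words, _ in training for w in words)
    all_blobs = Counter(b for _, blobs in training for b in blobs)
    n_words, n_blobs = sum(all_words.values()), sum(all_blobs.values())
    prior = 1.0 / len(training)  # uniform prior over training images J
    scores = {}
    for word in all_words:
        total = 0.0
        for words, blobs in training:
            p = smoothed(words.count(word), len(words), all_words[word], n_words, alpha)
            for blob in query_blobs:
                p *= smoothed(blobs.count(blob), len(blobs), all_blobs[blob], n_blobs, alpha)
            total += prior * p
        scores[word] = total
    return sorted(scores, key=scores.get, reverse=True)[:top_k]


# Hypothetical training set: (keywords, blob ids) per image.
training = [
    (["tiger", "grass"], [1, 2]),
    (["tiger", "water"], [1, 3]),
    (["sky", "water"], [4, 3]),
]
print(annotate([1, 2], training))  # ['tiger', 'grass']
```

The toy data is invented for illustration; blob ids stand in for the clustered image regions produced by segmentation.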

One experiment Automatic annotation result. Words with “__” are the same as manual annotation

Cross-Media Indexing & Retrieval Using one media type to retrieve another media type, according to Cross-Reference Graph

Cross-Media Indexing and Retrieval
Text (Tiger)
Together, the media objects describe a tiger’s daily life

Cross-Reference Graph
§ Serves as a bridge in the multimedia digital library.
§ Calculates the semantic-level similarity between a media object and the query.
§ Triggers a single-modal search engine that performs content-based retrieval based on clustering.
§ Is adjusted through relevance feedback from users, which progressively improves retrieval accuracy.

Cross-Reference Graph
§ Used to describe the semantic links among media objects
§ Defined as a weighted undirected graph G = (V, E)
• Each vertex represents one media object
• The weight of each edge represents the semantic similarity between the two media objects it connects
§ The more semantically similar two media objects are, the larger the weight of the edge between them, and vice versa

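Link Analysis
§ Initialization of the edge weights
§ If two media objects belong to the same multimedia document, the weight between them is updated
§ If two media objects are pointed to by the same multimedia document, the weight between them is updated
§ If one media object is pointed to by a multimedia document that comprises another media object, the weight between them is updated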

One Example of Link Analysis
[Diagram: multimedia documents D1, D2, D3 and media objects including O2, O3, O4, with the resulting cross-reference graph]
R12 = 1, R13 = 1, R14 = 1, R23 = 1, R24 = 1, R34 = 2
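The three link-analysis rules can be sketched as follows. The slide's update formulas are not recoverable, so this assumes every weight starts at 0 and each rule that fires adds 1. The document structure (`contains`, `points_to`) and the sample data are hypothetical and do not reproduce the slide's figure.

```python
from collections import defaultdict
from itertools import combinations


def build_cross_reference_graph(documents):
    """documents: {doc_id: {"contains": set of objects, "points_to": set of objects}}."""
    weights = defaultdict(int)  # assumed initialization: every edge weight starts at 0

    def bump(a, b):
        # Undirected edge: store each pair once, in sorted order.
        if a != b:
            weights[tuple(sorted((a, b)))] += 1

    for doc in documents.values():
        # Rule 1: two objects belong to the same multimedia document.
        for a, b in combinations(sorted(doc["contains"]), 2):
            bump(a, b)
        # Rule 2: two objects are pointed to by the same multimedia document.
        for a, b in combinations(sorted(doc["points_to"]), 2):
            bump(a, b)
        # Rule 3: one object is pointed to by a document that comprises the other.
        for a in doc["points_to"]:
            for b in doc["contains"]:
                bump(a, b)
    return dict(weights)


docs = {
    "D1": {"contains": {"O1", "O2"}, "points_to": {"O3"}},
    "D2": {"contains": {"O3", "O4"}, "points_to": set()},
    "D3": {"contains": {"O3"}, "points_to": {"O4"}},
}
graph = build_cross_reference_graph(docs)
print(graph[("O3", "O4")])  # 2
```

Here O3 and O4 reach weight 2 because two rules fire for that pair, which is how a weight above 1 can arise under the additive assumption.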

Framework of Cross-Media Retrieval

One Retrieval Example Query by Image Video Audio

Chinese Calligraphy Retrieval & Visualization

Chinese Calligraphy Retrieval & Visualization
• One of the highest forms of art, popular for about 3,000 years
• An important criterion for selecting officials in the imperial era
• Carries the calligrapher's personality, thoughts, and soul
• Helps learners to obtain longevity
Still used in newspaper mastheads:

Popular in

Preferred in


Motivation
One example page scanned by CADAL, in DjVu format at 600 dpi
These are images, so OCR doesn't work.
How to manage them? What services to offer?

Overview
§ Segmentation
• Noise elimination
• Page image analysis
• Smoothing & binarization
§ Retrieval
• Feature extraction
• Shape matching
• Speed up
§ Writing process simulation
• Stroke extraction
• Stroke sequence estimation
• 3D visualization

Segmentation
§ Preprocessing
• Noise elimination (such as seals)
• Page image analysis (detect where the calligraphy block is located)
• Binarization (obtain the characters, ignore the background)

Segmentation § Segment page into columns, and cut the columns into individual characters under certain constraints

Segmentation constraints (1)
Given the start and end x-coordinates of a cutting block, the block must satisfy constraints (1) and (2).
§ With these two constraints:
• Small noise blocks and unwanted blocks (such as those shown in the picture at the right) are filtered out
• Big blocks (consisting of more than one character), which need further segmentation, are detected

Segmentation constraints (2)
Given the width and height of a character block, constraints (3) and (4) apply. The parameter values were chosen according to our experimental experience.
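The column and character cuts described above can be sketched with projection profiles. This is an assumption: the slides do not name the cutting method, and the formulas for constraints (1) to (4) are not available, so a hypothetical minimum-width filter stands in for them. The page is a binary image given as a list of rows, with 1 for ink.

```python
def split_runs(profile):
    """Return (start, end) index pairs of consecutive non-zero entries, end exclusive."""
    runs, start = [], None
    for i, value in enumerate(profile):
        if value and start is None:
            start = i
        elif not value and start is not None:
            runs.append((start, i))
            start = None
    if start is not None:
        runs.append((start, len(profile)))
    return runs


def segment_columns(binary, min_width=2):
    """Cut a binary page (list of rows, 1 = ink) into columns via vertical projection."""
    profile = [sum(row[x] for row in binary) for x in range(len(binary[0]))]
    # Hypothetical width filter: drop blocks too narrow to be a character column.
    return [(s, e) for s, e in split_runs(profile) if e - s >= min_width]


def segment_characters(binary, column):
    """Cut one column into character boxes (x0, y0, x1, y1) via horizontal projection."""
    x0, x1 = column
    profile = [sum(row[x0:x1]) for row in binary]
    return [(x0, y0, x1, y1) for y0, y1 in split_runs(profile)]


# Toy page: two columns of characters plus a one-pixel noise speck at x = 7.
page = [
    [0, 1, 1, 0, 0, 0, 0, 1, 0],
    [0, 1, 1, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 1, 1, 0, 0, 0],
    [0, 1, 0, 0, 1, 1, 0, 0, 0],
]
columns = segment_columns(page)
print(columns)  # [(1, 3), (4, 6)]
print([segment_characters(page, c) for c in columns])
# [[(1, 0, 3, 2), (1, 3, 3, 5)], [(4, 3, 6, 5)]]
```

Oversized blocks that hold more than one character would need a further upper-bound check and a second cut, which this sketch omits.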

One Segmentation Example Minimum bounding box

Feature Extraction
1. A Canny detector is employed to obtain the edges
2. Contour points are used to represent the calligraphy character
3. The features of each individual calligraphy character are kept in the database

Database: The Map
The map consists of 4 tables:
• The minimum bounding box (“top_X”, “top_Y”, “bottom_X” and “bottom_Y”)
• Contour points (“co_points”)

Retrieval

Retrieval Process - identify the kind of query
§ When a query is submitted, first identify whether it is a regular script (or a sketch drawn by the user in a dark color) or a stone rubbing, in order to extract the right features.
[Figure: a regular script and its binary image; a stone rubbing and its binary image]
A statistic T is computed from the gray values of the pixels. If T > 1, the query is a stone rubbing; otherwise it is a regular script.

Retrieval Process - shape representation
§ Represent the character image with features of as low a dimension as possible.
• Currently, we down-sample the contour points to reduce dimensionality
[Figure: original image, 69 × 63 pixels; contour points, 387 in total; after normalizing to 45 × 45 and down-sampling while keeping the main structure, 245 in total]
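The slide does not state how the contour points are down-sampled. A minimal sketch, assuming a uniform scale into a 45 × 45 box followed by keeping one point per coarse grid cell (the grid step and function name are illustrative choices, not the authors'):

```python
def normalize_and_downsample(points, size=45, step=2):
    """Scale contour points into a size x size box, then keep one point per grid cell."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    min_x, min_y = min(xs), min(ys)
    # A single scale factor for both axes preserves the aspect ratio.
    extent = max(max(xs) - min_x, max(ys) - min_y) or 1
    scale = (size - 1) / extent
    kept, seen = [], set()
    for x, y in points:
        nx, ny = round((x - min_x) * scale), round((y - min_y) * scale)
        cell = (nx // step, ny // step)  # coarse grid cell of side `step`
        if cell not in seen:
            seen.add(cell)
            kept.append((nx, ny))
    return kept


# A straight stroke of 89 contour points shrinks to 23 representative points.
contour = [(x, 0) for x in range(89)]
kept = normalize_and_downsample(contour)
print(len(contour), len(kept))  # 89 23
```

Keeping the first point seen in each cell preserves the overall stroke layout while bounding the number of points that later matching has to handle.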

Retrieval Process - shape matching #1
§ Approximate shape matching
• Use polar coordinates centered on one contour point
• 32 bins: the direction is divided equally into 8 bins and the chord into 4 bins
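The 32-bin polar descriptor (8 direction bins × 4 chord bins) can be sketched as a histogram of the other contour points around one center point. Equal-width radial bins and the `max_radius` parameter are assumptions; the slide does not say how the chord is divided.

```python
import math


def polar_histogram(center, points, max_radius, n_angle=8, n_radius=4):
    """Count contour points around `center` in n_angle x n_radius polar bins."""
    hist = [0] * (n_angle * n_radius)
    cx, cy = center
    for x, y in points:
        dx, dy = x - cx, y - cy
        r = math.hypot(dx, dy)
        if r == 0 or r > max_radius:
            continue  # skip the center itself and points outside the support
        angle = math.atan2(dy, dx) % (2 * math.pi)
        a_bin = min(int(angle / (2 * math.pi) * n_angle), n_angle - 1)
        r_bin = min(int(r / max_radius * n_radius), n_radius - 1)
        hist[a_bin * n_radius + r_bin] += 1
    return hist


contour = [(0, 0), (2, 1), (-1, 2), (-3, -1), (1, -3)]
h = polar_histogram((0, 0), contour, max_radius=4)
print(len(h), sum(h))  # 32 4
```

Computing one such histogram per contour point gives each point a description of where the rest of the character lies relative to it.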

Retrieval Process - shape matching #2
§ Compute the matching cost of two calligraphy character images:
(1) Matching cost for two arbitrary points
(2) Matching cost for point i and its corresponding point
(3) Matching cost for two characters
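The three cost formulas are not recoverable from the slide. A hedged sketch of the same three levels, assuming a chi-square distance between polar-bin histograms (as is common in shape matching), a minimum-cost choice of the corresponding point, and an average over query points as the character-level cost; none of these choices is confirmed by the source.

```python
def chi_square_cost(h1, h2):
    """Chi-square distance between two histograms after normalizing each to sum 1."""
    s1, s2 = float(sum(h1)) or 1.0, float(sum(h2)) or 1.0
    cost = 0.0
    for a, b in zip(h1, h2):
        a, b = a / s1, b / s2
        if a + b > 0:
            cost += (a - b) ** 2 / (a + b)
    return 0.5 * cost


def character_cost(query_hists, candidate_hists):
    """Average, over query points, of the cost to the best-matching candidate point."""
    total = 0.0
    for hq in query_hists:
        # The corresponding point is taken to be the candidate point of minimum cost.
        total += min(chi_square_cost(hq, hc) for hc in candidate_hists)
    return total / len(query_hists)


print(chi_square_cost([1, 0], [0, 1]))                    # 1.0
print(character_cost([[2, 0], [0, 2]], [[0, 1], [1, 0]]))  # 0.0
```

Each input is a list of per-point histograms, one per contour point; identical characters score 0 and fully disjoint histograms score 1.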

Retrieval Process - constraint
Take one point from the contour points of the query and one from a candidate character in the database. A constraint is placed on the positions of the two points relative to the normalization scale.
This is because, according to calligraphy writing experience, very few stroke components of a character shift by more than 1/3 of the whole scale space.

Speed up #1
Shape matching is time consuming: matching one pair of characters takes about 0.09 seconds. For 10,000 characters that is about 900 seconds (15 minutes), too long to wait for the result of a query.
How to speed up? More discriminative features are needed.

Speed up #2
The discriminative features we added:
§ Character complexity index
§ Horizontal stroke density
§ Perpendicular stroke density
§ Leftmost point position range
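How such features might cut retrieval time can be sketched as a filter-then-match scheme: cheap features prune the database, and the expensive shape matching runs only on the survivors. The slides do not define the features or the pruning rule, so the feature tuples, the relative tolerance, and the function names below are hypothetical. Only the 0.09 seconds per match comes from the slides.

```python
def coarse_filter(query_features, database, tolerance=0.2):
    """Keep ids whose cheap features are all within a relative tolerance of the query's."""
    survivors = []
    for char_id, features in database.items():
        if all(abs(f - q) <= tolerance * max(abs(q), 1e-9)
               for f, q in zip(features, query_features)):
            survivors.append(char_id)
    return survivors


def estimated_seconds(n_candidates, seconds_per_match=0.09):
    """Time for full shape matching against n_candidates at 0.09 s per pair."""
    return n_candidates * seconds_per_match


# Hypothetical (complexity index, horizontal stroke density) per character.
database = {"c1": (10.0, 0.30), "c2": (25.0, 0.31), "c3": (11.0, 0.28)}
survivors = coarse_filter((10.0, 0.30), database)
print(survivors)                            # ['c1', 'c3']
print(round(estimated_seconds(10000)))      # 900
print(round(estimated_seconds(len(survivors)), 2))  # 0.18
```

The saving depends entirely on how many candidates the cheap features reject, and a tolerance that is too tight will also reject true matches.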

Implementation
Database:
• 12067 individual calligraphy characters, segmented from 27 collections named “Chinese calligraphy Collections”
Performed on:
• regular Intel(R)/256 RAM personal computer
Average retrieval time for one query:
• 0.31 minutes

The submitted query Query result

Browsing the original works Where it Comes from

Experimental comparison Comparison of average Recall and Precision rate

Writing Process Simulating
§ Target: set a good writing example for learners by displaying how a calligraphy character was written
§ Main idea
• Extract the strokes. It doesn't matter if connected strokes are extracted together, as long as the right writing sequence is estimated.
• Estimate the stroke sequence. Basic rules: (1) people always write a calligraphy character from left to right and from top to bottom; (2) people always write a calligraphy character as fast and conveniently as possible.
• Feedback: currently, we add some manual interaction when the estimated writing sequence is not right.

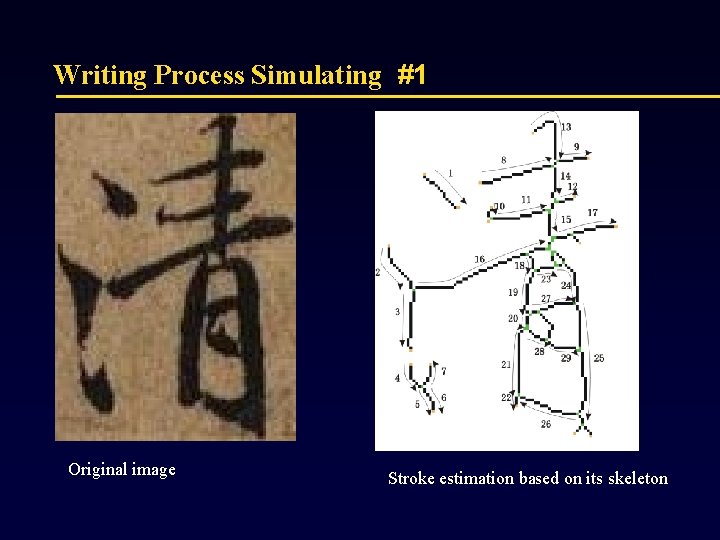

Writing Process Simulating #1 Original image Stroke estimation based on its skeleton

Writing Process Simulating #2
We developed a video that simulates, step by step, how a calligrapher wrote a calligraphy character, setting a writing example for the learner to follow.
Original image; shots of our writing simulation

demo

Conclusion
§ In digital libraries, the amount of multimedia information keeps growing.
§ Search engines and information retrieval usually deal with text; the trend is toward multimedia search engines and multimedia information retrieval.
§ Multimedia technology is finding its way there!

Q&A Thanks
