Digital Image Processing Basic Concepts

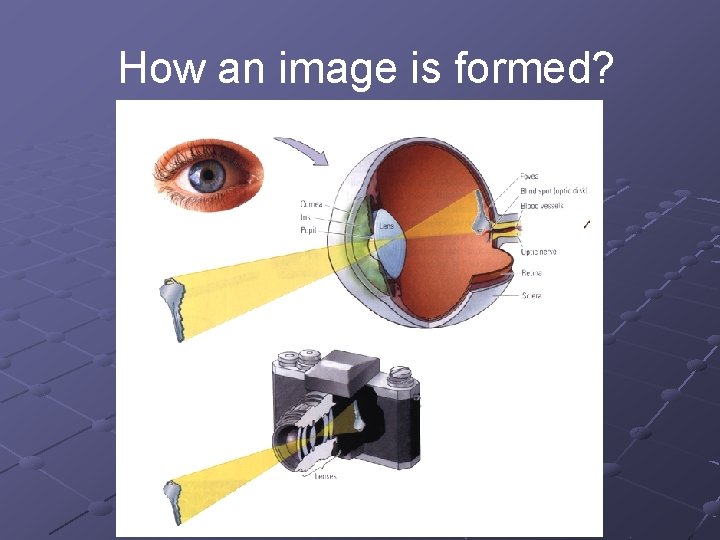

How is an image formed?

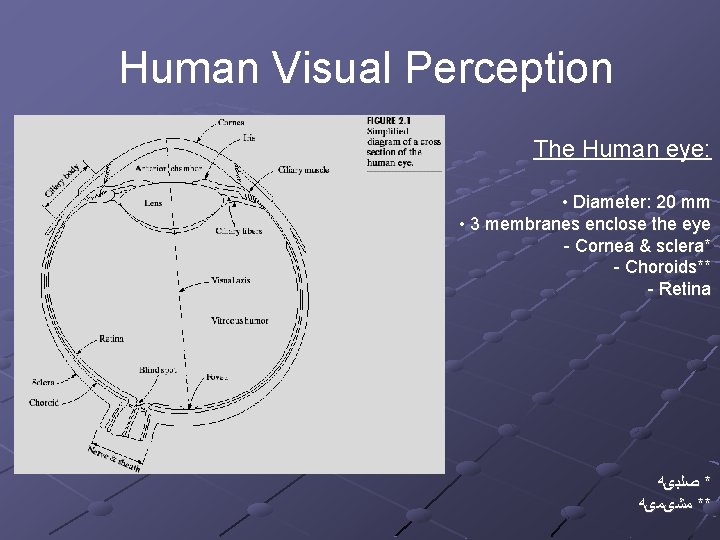

Human Visual Perception

The human eye:
- Diameter: about 20 mm
- Three membranes enclose the eye:
  - the cornea and sclera
  - the choroid
  - the retina

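The Choroid

The choroid contains blood vessels that nourish the eye and is heavily pigmented, which reduces the amount of extraneous light entering the eye and the backscatter within it. At its front, the choroid is divided into the ciliary body and the iris diaphragm. The iris contracts or expands to control the amount of light that enters the eye through the pupil, whose diameter varies from about 2 mm to 8 mm.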

The Lens

The lens is made up of fibrous cells and is suspended by fibers that attach it to the ciliary body. It is slightly yellow and absorbs approximately 8% of the visible light spectrum.

The Retina

The retina lines the entire posterior portion of the eye's inner wall. Discrete light receptors are distributed over its surface:
- cones (6 to 7 million per eye)
- rods (75 to 150 million per eye)

Cones are located mainly in the fovea and are sensitive to colour. Each cone is connected to its own nerve end. Cone vision is called photopic (or bright-light) vision.

Rods give a general, overall picture of the field of view and are not involved in colour vision. Several rods are connected to a single nerve end, and rods are sensitive to low levels of illumination (scotopic or dim-light vision).

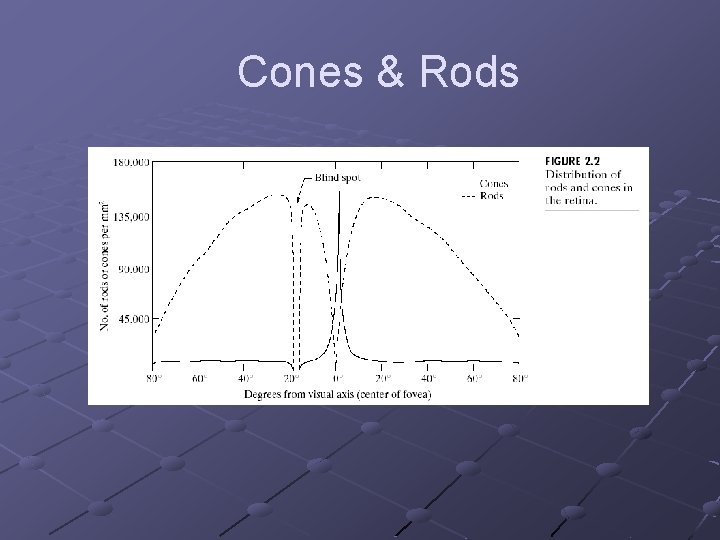

Receptor Distribution

The distribution of receptors is radially symmetric about the fovea. Cones are most dense in the center of the fovea, while rods increase in density from the center out to approximately 20° off axis and then decrease in density toward the periphery of the retina.

Cones & Rods

The Fovea

The fovea is circular (about 1.5 mm in diameter), but it can be treated as a square sensor array of 1.5 mm × 1.5 mm. The density of cones there is about 150,000 elements/mm², which gives approximately 337,000 cones in the fovea. A medium-resolution CCD imaging chip can hold this number of elements in an array no larger than 5 mm × 5 mm.
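The cone count follows from simple arithmetic on the square approximation. A minimal sketch in Python (the variable names are illustrative, not from the slides):

```python
# Square approximation of the fovea, as described above.
FOVEA_SIDE_MM = 1.5                # side of the square sensor array (mm)
CONE_DENSITY_PER_MM2 = 150_000     # cones per square millimetre

fovea_area_mm2 = FOVEA_SIDE_MM ** 2                  # 2.25 mm^2
cone_count = fovea_area_mm2 * CONE_DENSITY_PER_MM2   # total cones in the fovea

print(f"fovea area = {fovea_area_mm2} mm^2")   # fovea area = 2.25 mm^2
print(f"cones in fovea = {cone_count:,.0f}")   # cones in fovea = 337,500
```

The exact product, 337,500, is the figure the slide rounds to roughly 337,000.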

Image Formation in the Eye

Unlike an ordinary optical lens, the eye's lens is flexible. Its shape is controlled by the fibers of the ciliary body: to focus on distant objects the lens becomes flatter, and to focus on nearby objects it becomes thicker.

The distance between the center of the lens and the retina (the focal length) varies from approximately 17 mm to about 14 mm as the refractive power of the lens increases from its minimum to its maximum. When the eye focuses on objects farther away than about 3 m, the lens uses its lowest refractive power; for nearer objects the refractive power increases.

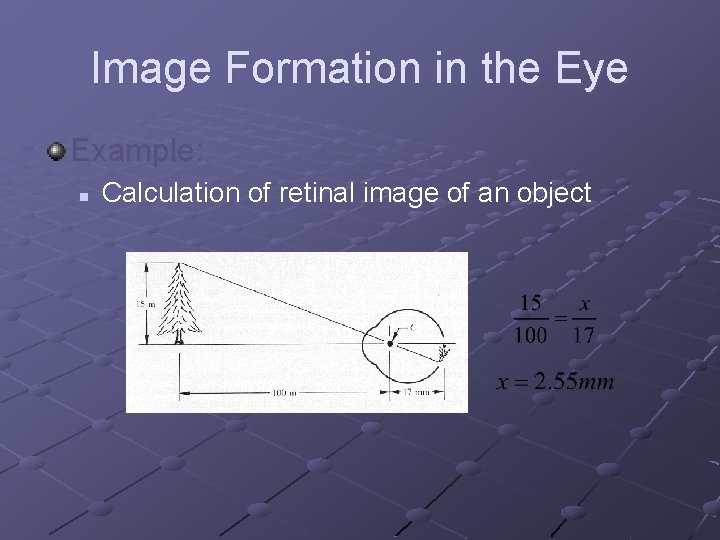

Example: calculating the size of the retinal image of an object.
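The example's figure is not reproduced here. As a sketch, take illustrative values: an object 15 m high seen from 100 m away, with the 17 mm lens-to-retina distance from above. Similar triangles give $15/100 = h/17$, so $h \approx 2.55$ mm. The function name `retinal_image_height_mm` is hypothetical.

```python
def retinal_image_height_mm(object_height_m, object_distance_m, focal_mm=17.0):
    """Retinal image height by similar triangles:
    object_height / object_distance = h / focal  =>  h = focal * object_height / object_distance
    (metres cancel, so h comes out in the units of focal_mm)."""
    return focal_mm * object_height_m / object_distance_m

h = retinal_image_height_mm(15.0, 100.0)   # illustrative values, not from the slides
print(f"retinal image height = {h:.2f} mm")  # retinal image height = 2.55 mm
```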

Perception takes place through the relative excitation of light receptors. These receptors transform radiant energy into electrical impulses that are ultimately decoded by the brain.

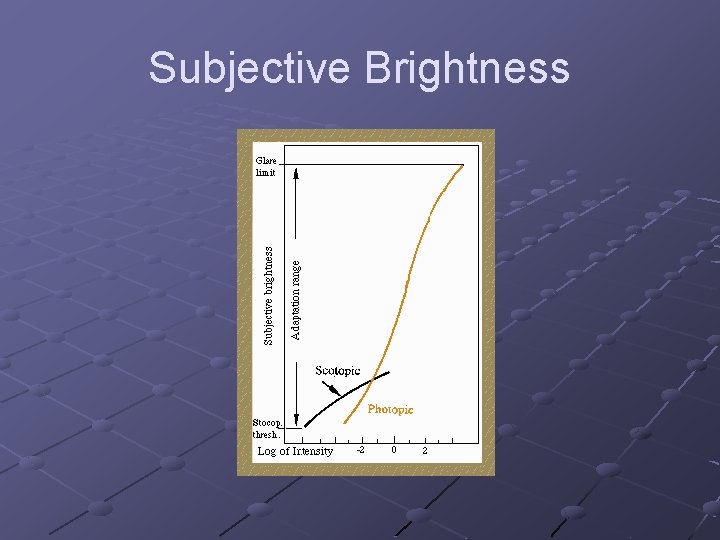

Brightness Adaptation & Discrimination

The range of light intensity levels to which the human visual system (HVS) can adapt is enormous: on the order of $10^{10}$. Subjective brightness (i.e., intensity as perceived by the HVS) is a logarithmic function of the light intensity incident on the eye.

Subjective Brightness

The HVS cannot operate over such a range simultaneously. For any given set of conditions, the current sensitivity level of the HVS is called the brightness adaptation level.

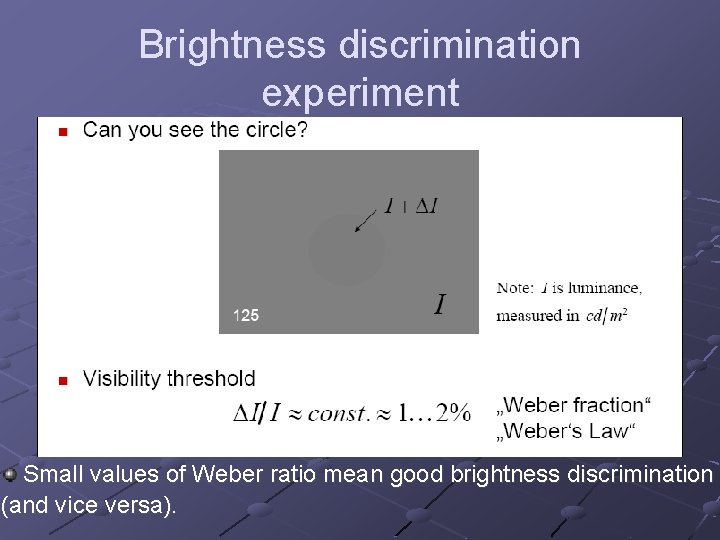

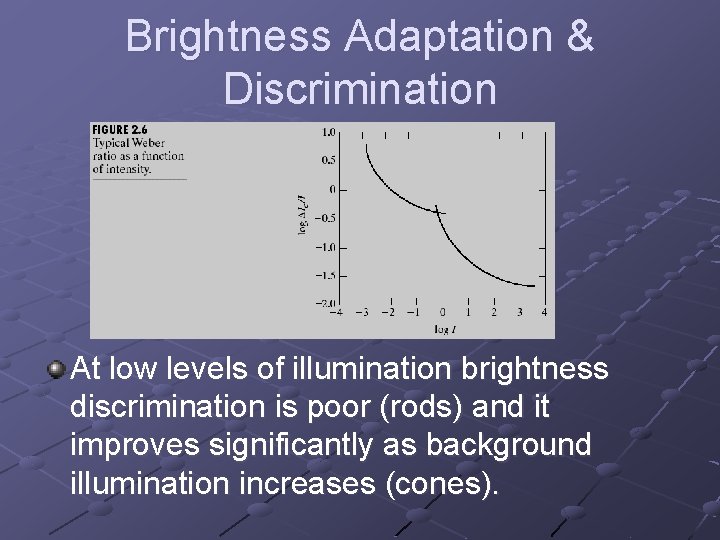

The eye can also discriminate between changes in brightness at any specific adaptation level. This ability is measured by the Weber ratio $\Delta I_c / I$, where:
- $\Delta I_c$ is the increment of illumination discriminable 50% of the time, and
- $I$ is the background illumination.

Brightness discrimination experiment

A small Weber ratio means good brightness discrimination: a small percentage change in intensity is already discriminable. A large Weber ratio means poor brightness discrimination.
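A small sketch of how Weber ratios are compared. The readings below are hypothetical numbers chosen only to show the arithmetic; they are not experimental data, and `weber_ratio` is an illustrative helper.

```python
def weber_ratio(delta_ic, background_i):
    """Weber ratio delta_Ic / I: smaller values mean better brightness discrimination."""
    if background_i <= 0:
        raise ValueError("background illumination must be positive")
    return delta_ic / background_i

# Hypothetical thresholds: the same 2-unit increment is a much larger
# fraction of a dim background than of a bright one.
dim = weber_ratio(2.0, 10.0)      # 0.2  -> poor discrimination
bright = weber_ratio(2.0, 100.0)  # 0.02 -> good discrimination
print(dim, bright)
```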

At low levels of illumination, brightness discrimination is poor (vision is carried out by the rods), and it improves significantly as background illumination increases (vision is carried out by the cones).

The typical observer can discern one to two dozen different intensity changes, i.e., the number of different intensities a person can see at any one point in a monochrome image.

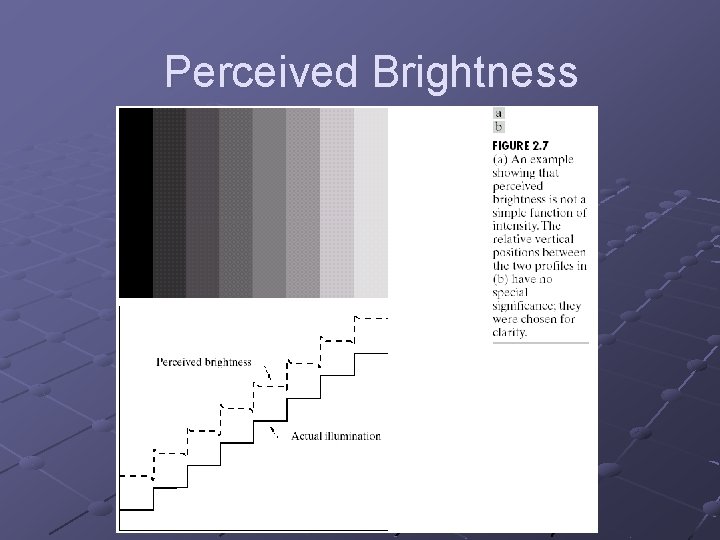

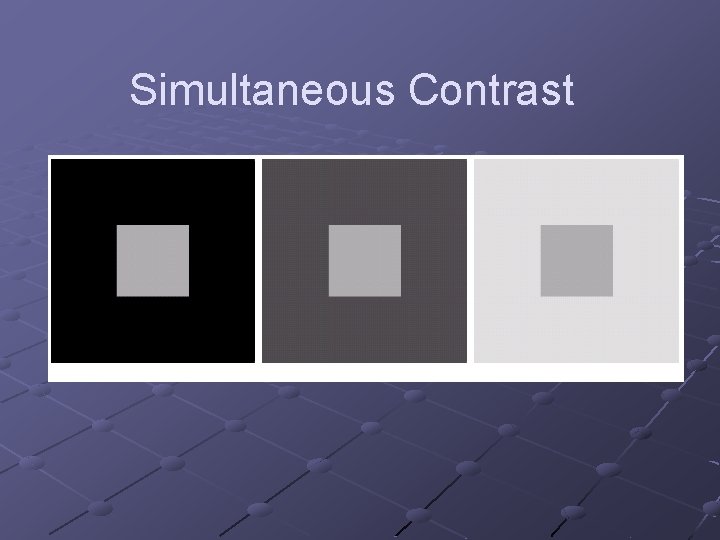

Overall intensity discrimination is broad because a different set of incremental changes is detected at each new adaptation level. Perceived brightness is not a simple function of intensity, as two phenomena show:
- the scalloped brightness pattern near boundaries (Mach band pattern)
- simultaneous contrast

Perceived Brightness

Simultaneous Contrast

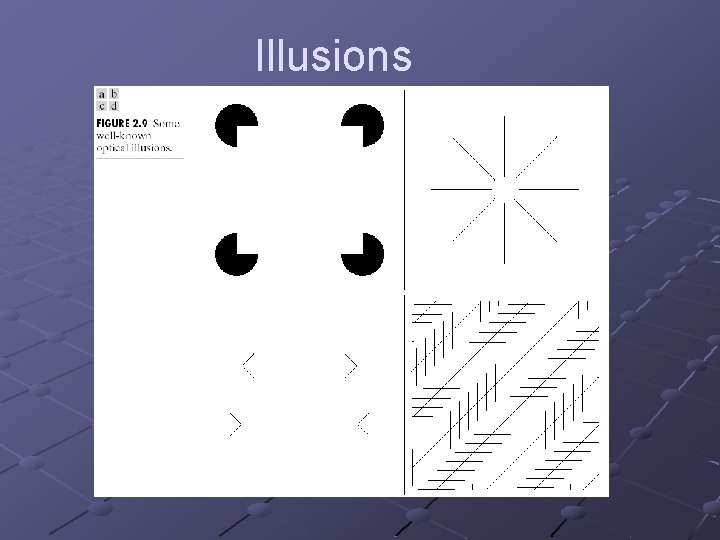

Illusions

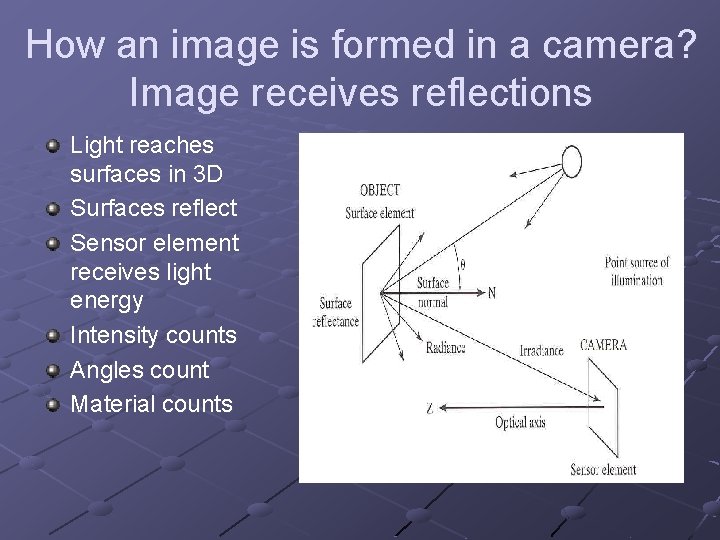

How is an image formed in a camera?

The image records reflected light:
- Light reaches surfaces in 3D.
- The surfaces reflect the light.
- A sensor element receives the reflected light energy.

What matters:
- the intensity of the light
- the angles involved
- the surface material

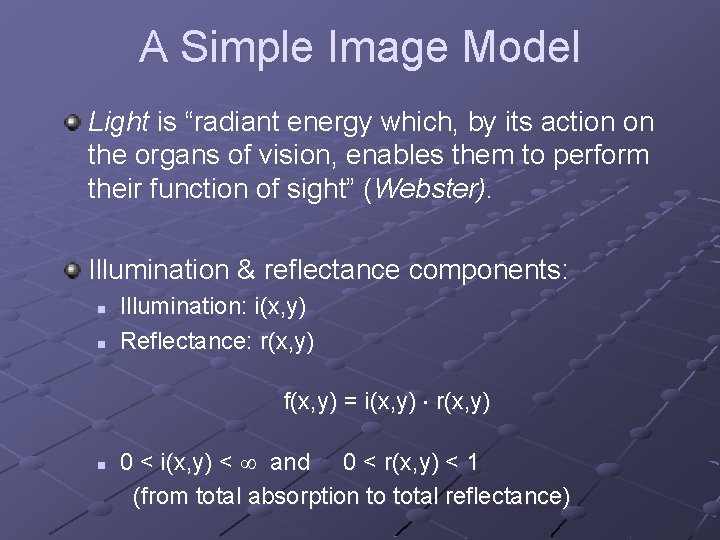

A Simple Image Model

Light is “radiant energy which, by its action on the organs of vision, enables them to perform their function of sight” (Webster).

An image is modelled by two components:
- illumination: i(x, y)
- reflectance: r(x, y)

$$f(x, y) = i(x, y)\, r(x, y)$$

with $0 < i(x, y) < \infty$ and $0 < r(x, y) < 1$ (from total absorption to total reflectance).

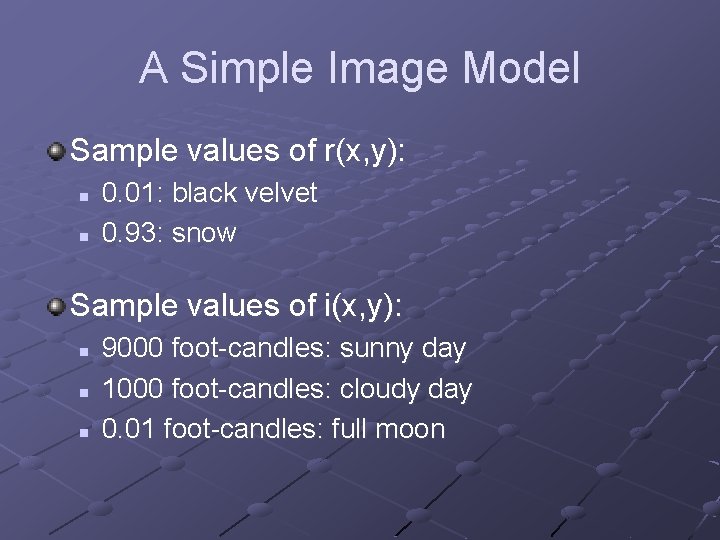

Sample values of r(x, y):
- 0.01: black velvet
- 0.93: snow

Sample values of i(x, y):
- 9000 foot-candles: sunny day
- 1000 foot-candles: cloudy day
- 0.01 foot-candles: full moon
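Combining the model $f = i \cdot r$ with the sample values above gives a feel for the range of f. This is a minimal sketch; the dictionary names and the `image_value` helper are hypothetical, and the product simply carries the foot-candle units of the illumination.

```python
# Sample values quoted in the text.
REFLECTANCE = {"black velvet": 0.01, "snow": 0.93}
ILLUMINATION_FC = {"sunny day": 9000.0, "cloudy day": 1000.0, "full moon": 0.01}

def image_value(illumination, reflectance):
    """f = i * r, with 0 < i < infinity and 0 < r < 1."""
    if not (illumination > 0 and 0 < reflectance < 1):
        raise ValueError("expected i > 0 and 0 < r < 1")
    return illumination * reflectance

for light, i in ILLUMINATION_FC.items():
    for material, r in REFLECTANCE.items():
        print(f"{material:12s} on a {light:10s}: f = {image_value(i, r):g}")
```

The printed values span from about 0.0001 (black velvet under a full moon) to 8370 (snow on a sunny day), which is why a huge range of intensities must be mapped to a finite gray scale.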

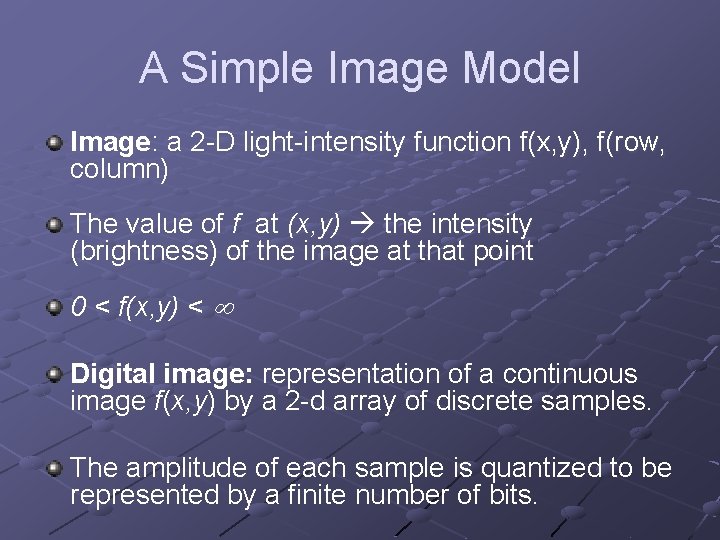

An image is a 2-D light-intensity function f(x, y), indexed as f(row, column). The value of f at (x, y) is the intensity (brightness) of the image at that point, with $0 < f(x, y) < \infty$.

A digital image is a representation of a continuous image f(x, y) by a 2-D array of discrete samples. The amplitude of each sample is quantized so that it can be represented by a finite number of bits.
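The two steps in this definition (sampling on a grid, then quantizing each amplitude to a finite number of bits) can be sketched as follows. The function `continuous_image`, the grid spacing and the helper names are assumptions made for illustration; the quantizer uses $L = 2^k$ equally spaced levels.

```python
import math

def continuous_image(x, y):
    """Hypothetical continuous image: a smooth radial pattern with values in [0.01, 0.99]."""
    return 0.5 + 0.49 * math.cos(math.hypot(x, y))

def digitize(f, n_rows, n_cols, spacing, k):
    """Sample f on an n_rows x n_cols grid, then quantize each sample to L = 2**k levels."""
    levels = 2 ** k
    image = []
    for row in range(n_rows):
        samples = []
        for col in range(n_cols):
            value = f(col * spacing, row * spacing)        # sampling: x = column, y = row
            level = min(int(value * levels), levels - 1)   # quantization to 0 .. L-1
            samples.append(level)
        image.append(samples)
    return image

img = digitize(continuous_image, n_rows=4, n_cols=4, spacing=0.5, k=8)
for samples in img:
    print(samples)
```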

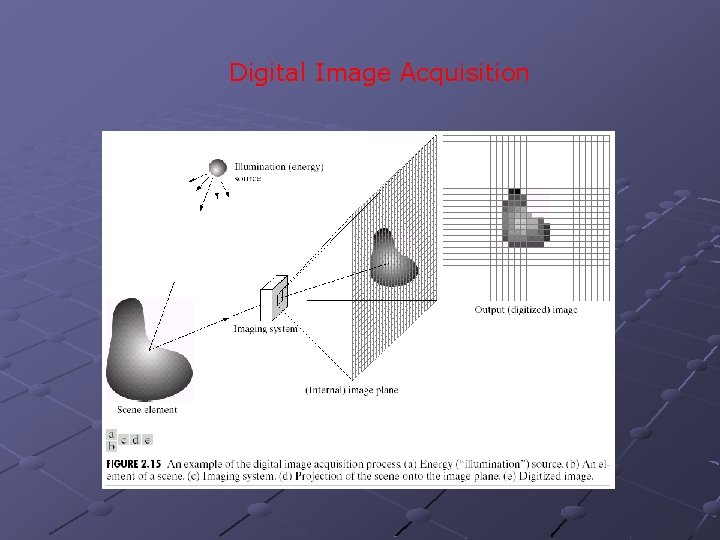

Digital Image Acquisition

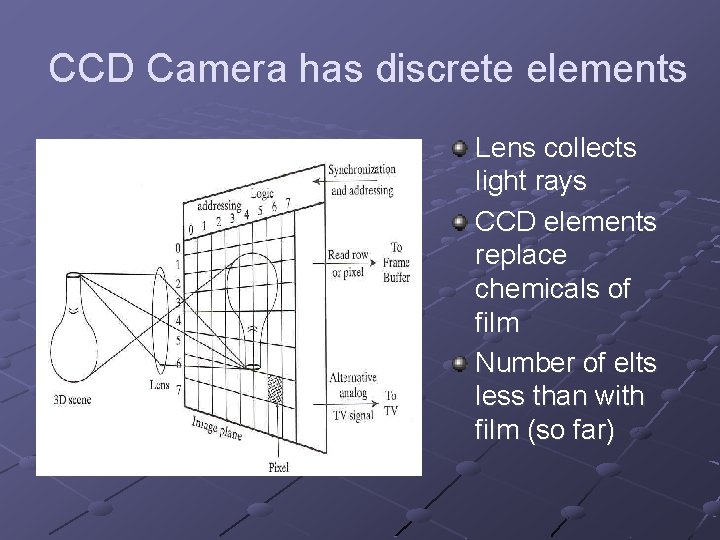

CCD Camera
- The lens collects light rays.
- The camera has discrete sensor elements: the CCD elements replace the chemicals of film.
- The number of elements is (so far) smaller than the equivalent resolution of film.

A Simple Image Model

The nature of f(x, y) is determined by:
- the amount of source light incident on the scene being viewed, and
- the amount of light reflected by the objects in the scene.

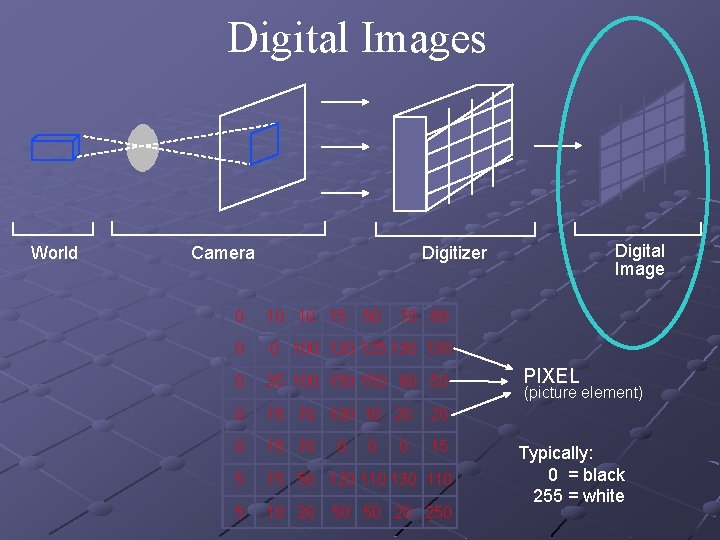

Digital Images

World → Camera → Digitizer → Digital image. (Figure: the digitizer turns the camera output into an array of numbers; each entry is a PIXEL, or picture element.) Typically, 0 = black and 255 = white.

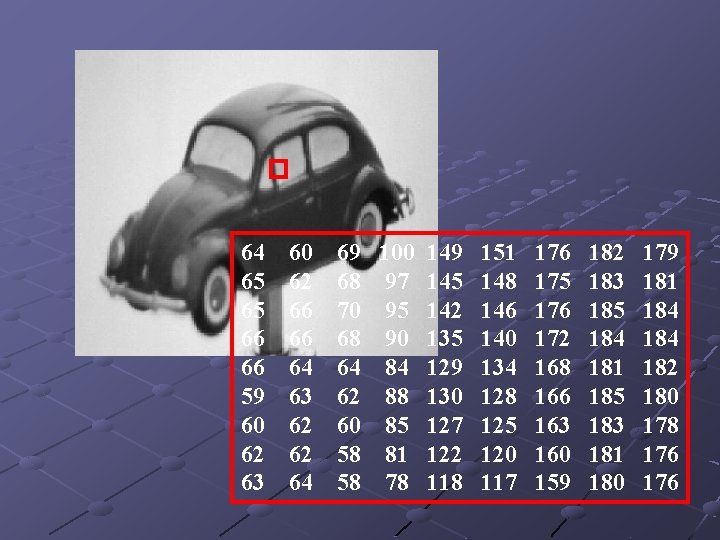

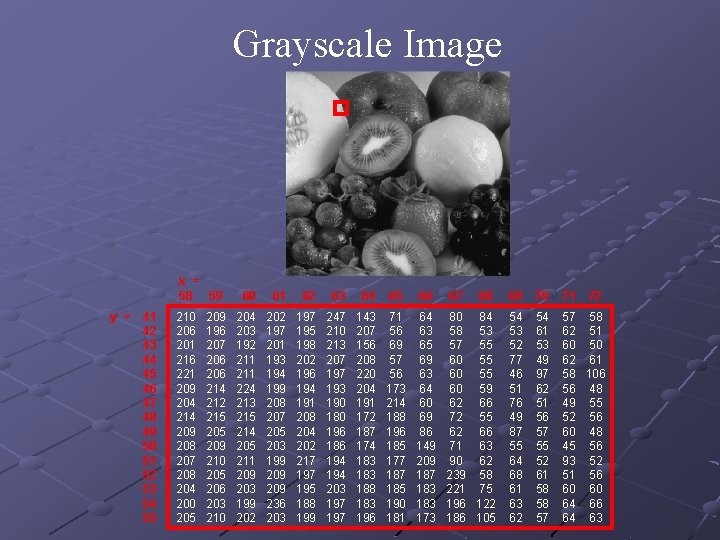

Grayscale Image

(Figure: the pixel intensity values of a region of a grayscale image, for x = 58 to 72 and y = 41 to 55.)

![Three types of images](http://slidetodoc.com/presentation_image_h2/262b403daa26d8c6f5c4ea04d7767e32/image-37.jpg)

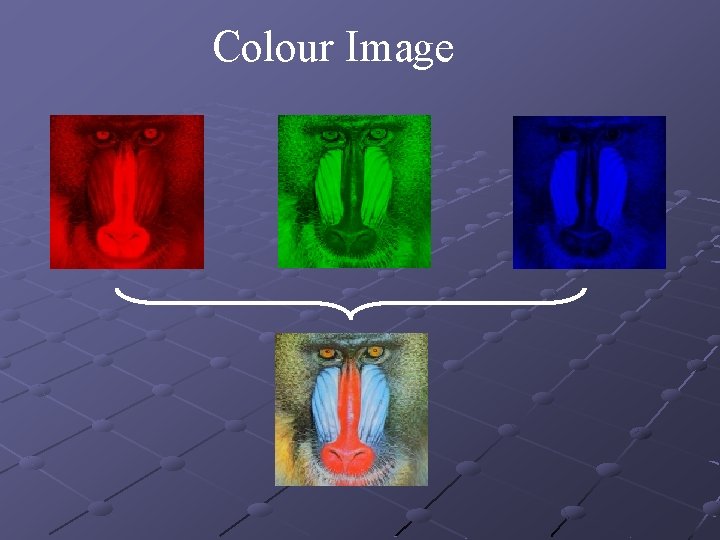

Three types of images:
- Gray-scale images: $I(x, y) \in [0, 255]$
- Binary images: $I(x, y) \in \{0, 1\}$
- Colour images: three channels $I_R(x, y)$, $I_G(x, y)$, $I_B(x, y)$
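A sketch of the three image types as nested Python lists, with a conversion from a colour image to gray scale and then to a binary image. The ITU-R BT.601 luma weights (0.299, 0.587, 0.114) and the threshold of 128 are choices made for this example; the slides do not say how one type is derived from another.

```python
# A tiny 2 x 2 colour image stored as three channels I_R, I_G, I_B (values 0..255).
I_R = [[255, 0], [128, 10]]
I_G = [[255, 0], [128, 200]]
I_B = [[255, 0], [128, 30]]

def to_gray(r, g, b):
    """Gray-scale image in [0, 255] using BT.601 luma weights (an assumed choice)."""
    return [[round(0.299 * r[y][x] + 0.587 * g[y][x] + 0.114 * b[y][x])
             for x in range(len(r[0]))]
            for y in range(len(r))]

def to_binary(gray, threshold=128):
    """Binary image in {0, 1}: 1 where the gray level is >= threshold."""
    return [[1 if v >= threshold else 0 for v in row] for row in gray]

gray = to_gray(I_R, I_G, I_B)
binary = to_binary(gray)
print(gray)    # [[255, 0], [128, 124]]
print(binary)  # [[1, 0], [1, 0]]
```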

Colour Image

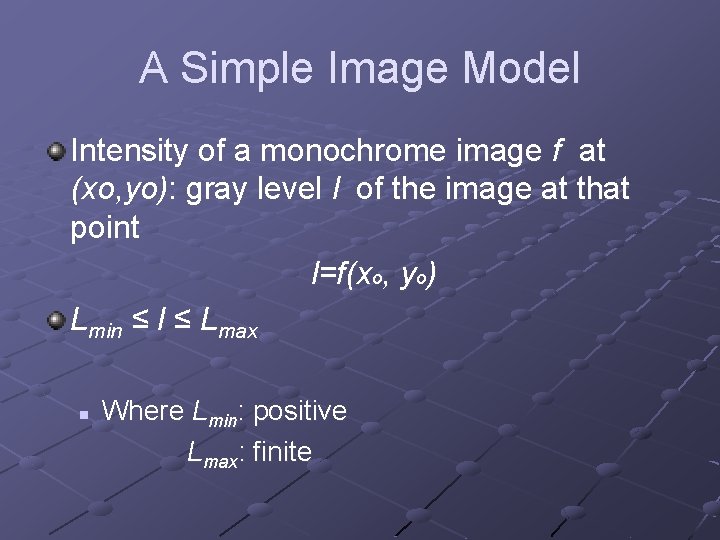

A Simple Image Model

The intensity of a monochrome image f at $(x_0, y_0)$ is the gray level $l$ of the image at that point:

$$l = f(x_0, y_0), \qquad L_{\min} \le l \le L_{\max}$$

where $L_{\min}$ is positive and $L_{\max}$ is finite.

In practice, $L_{\min} = i_{\min} r_{\min}$ and $L_{\max} = i_{\max} r_{\max}$. For example, for indoor image processing, $L_{\min} \approx 10$ and $L_{\max} \approx 1000$.

The interval $[L_{\min}, L_{\max}]$ is called the gray scale. It is often shifted to $[0, L-1]$, where $l = 0$ is black and $l = L-1$ is white.
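The text only says that the gray scale is "shifted" to $[0, L-1]$. Assuming a linear mapping (one common choice, not stated in the source), the indoor example could be handled like this; `to_gray_scale` is a hypothetical helper.

```python
def to_gray_scale(l, l_min, l_max, L=256):
    """Linearly map an intensity l in [l_min, l_max] to an integer level in [0, L-1]."""
    if not l_min <= l <= l_max:
        raise ValueError("intensity outside [l_min, l_max]")
    return round((l - l_min) / (l_max - l_min) * (L - 1))

# Indoor example from the text: L_min ~ 10, L_max ~ 1000.
print(to_gray_scale(10, 10, 1000))    # 0   (black)
print(to_gray_scale(1000, 10, 1000))  # 255 (white)
print(to_gray_scale(505, 10, 1000))   # a mid-gray level
```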

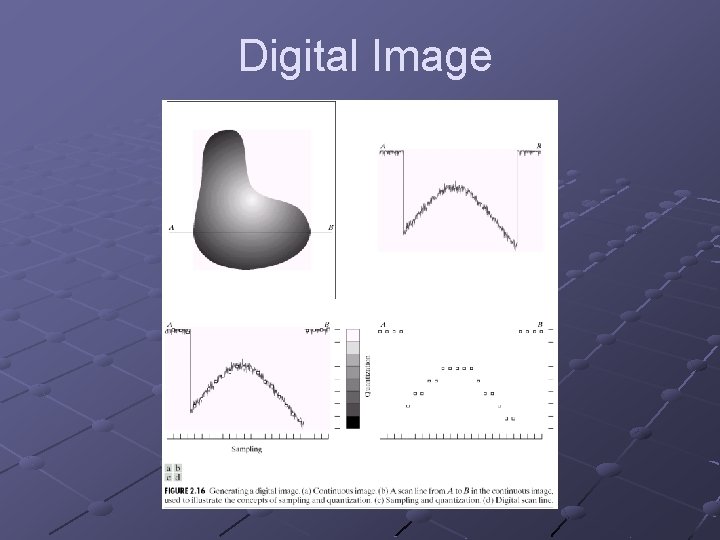

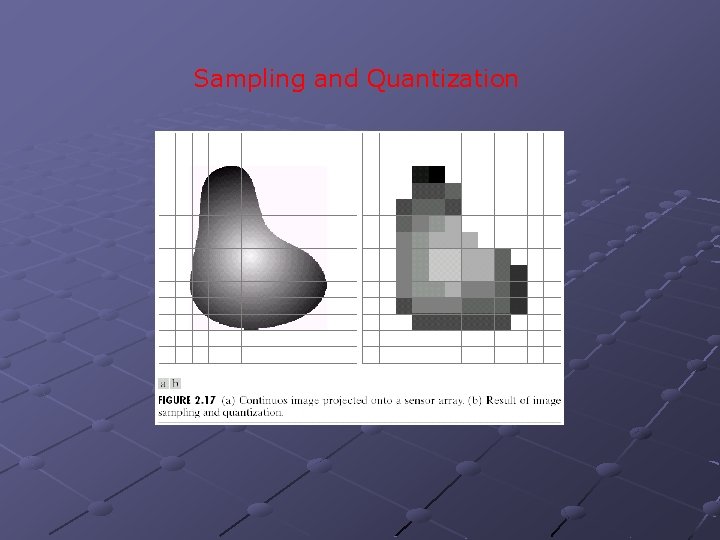

Sampling & Quantization

Digitizing f(x, y) involves two steps:
- image sampling: digitization of the spatial coordinates (x, y)
- gray-level quantization: digitization of the amplitude

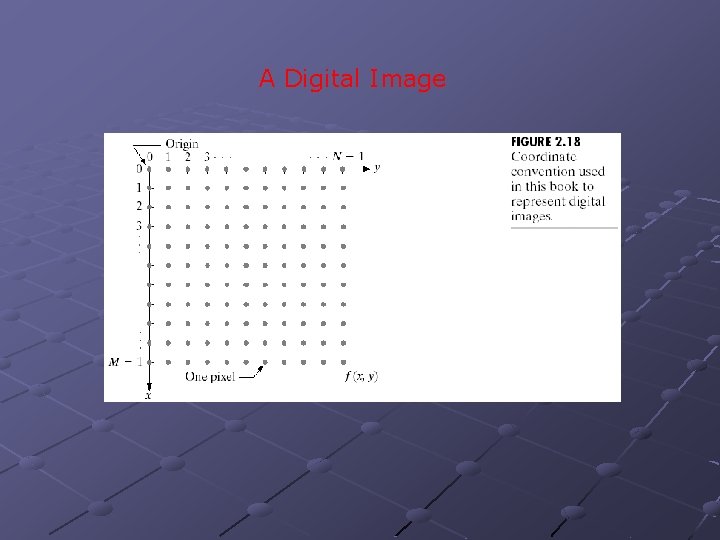

Digital Image

Sampling and Quantization

A Digital Image

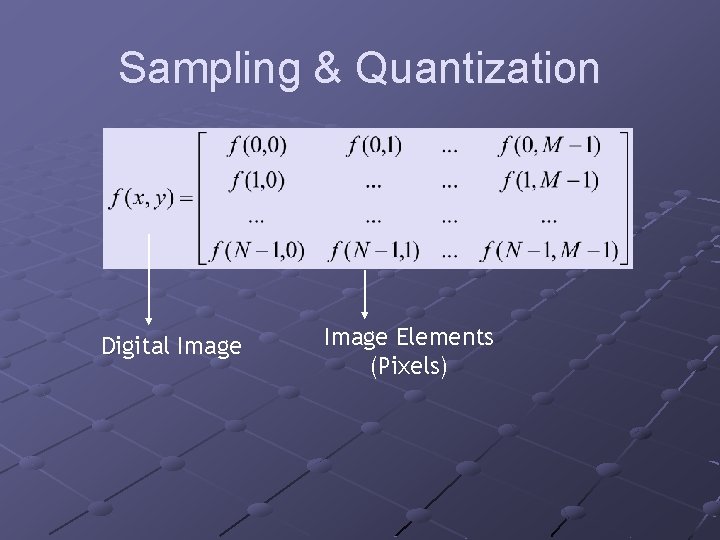

Sampling & Quantization Digital Image Elements (Pixels)

Important terms for later discussion:
- Z: the set of integers
- R: the set of real numbers

Sampling means partitioning the xy-plane into a grid. The coordinates of the center of each grid cell are a pair of elements from the Cartesian product $Z \times Z$ (written $Z^2$), which is the set of all ordered pairs of elements (a, b) with a and b integers from Z.

f(x, y) is a digital image if:
- (x, y) are integers from $Z^2$, and
- f is a function that assigns a gray-level value (from R) to each distinct pair of coordinates (x, y) [quantization].

Gray levels are usually integers; in that case Z replaces R.

The digitization process requires decisions about:
- the values of N and M (where N × M is the size of the image array), and
- the number of discrete gray levels allowed for each pixel.

Usually, in digital image processing (DIP), these quantities are integer powers of two:

$$N = 2^n, \qquad M = 2^m, \qquad L = 2^k$$

where L is the number of gray levels. Another assumption is that the discrete levels are equally spaced between 0 and $L-1$ in the gray scale.

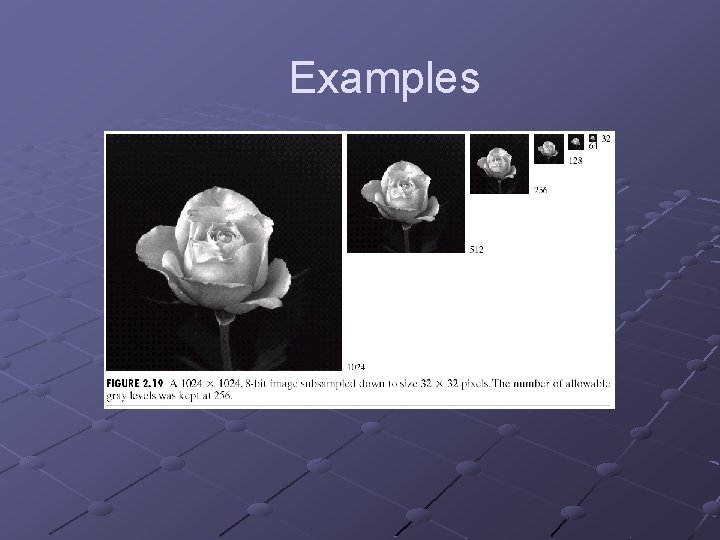

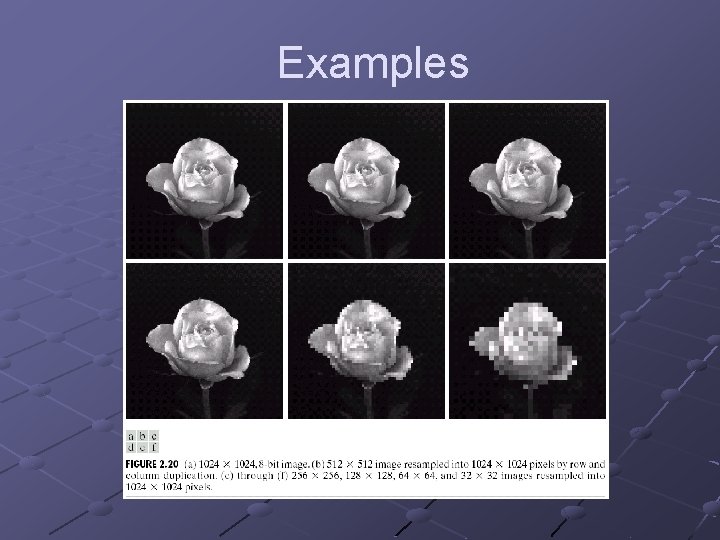

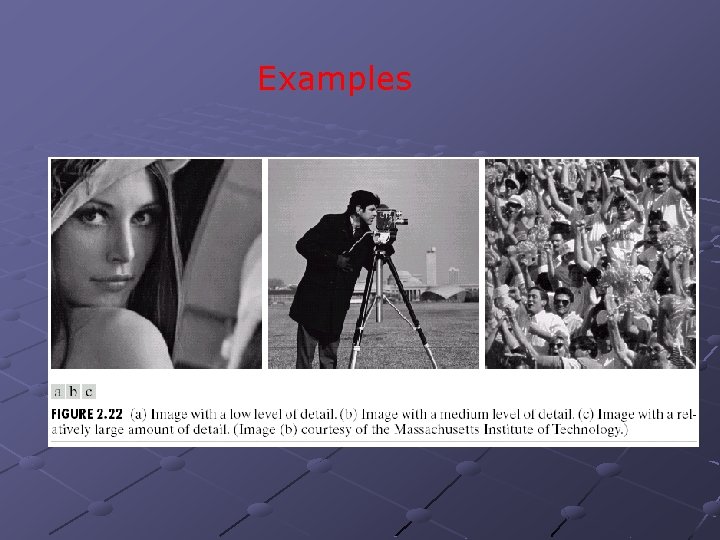

Examples

Examples

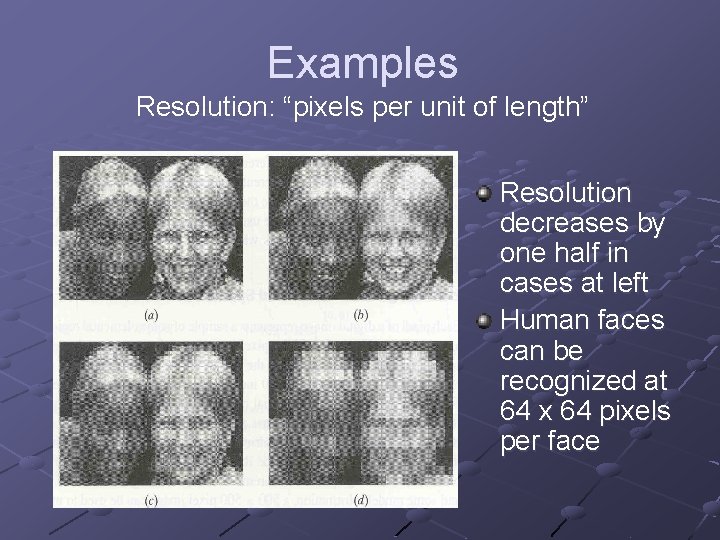

Resolution: “pixels per unit of length”.
- In the images shown, the resolution decreases by one half from each image to the next.
- Human faces can be recognized at 64 × 64 pixels per face.

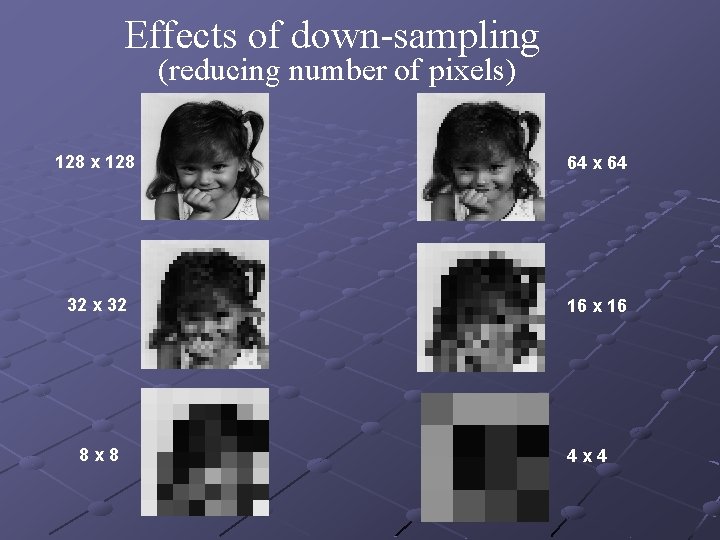

Effects of down-sampling (reducing the number of pixels): 128 × 128, 64 × 64, 32 × 32, 16 × 16, 8 × 8 and 4 × 4 images.
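One simple way to reduce the number of pixels is to keep every second row and column (decimation); to show the result at the original size, each remaining pixel can be replicated. The slides do not say which method produced the figure, so treat this as a sketch under that assumption.

```python
def downsample(image, factor=2):
    """Keep every `factor`-th pixel in each direction (plain decimation, no smoothing)."""
    return [row[::factor] for row in image[::factor]]

def replicate(image, factor=2):
    """Enlarge by pixel replication so a down-sampled image can be displayed at the original size."""
    out = []
    for row in image:
        wide = [v for v in row for _ in range(factor)]   # repeat each pixel horizontally
        out.extend(list(wide) for _ in range(factor))    # repeat each row vertically
    return out

img = [[r * 4 + c for c in range(4)] for r in range(4)]   # 4 x 4 test image
small = downsample(img)      # 2 x 2
print(small)                 # [[0, 2], [8, 10]]
print(replicate(small))      # [[0, 0, 2, 2], [0, 0, 2, 2], [8, 8, 10, 10], [8, 8, 10, 10]]
```

Repeating `downsample` on the result gives the sequence of ever smaller images; replication makes the loss of detail visible as blockiness.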

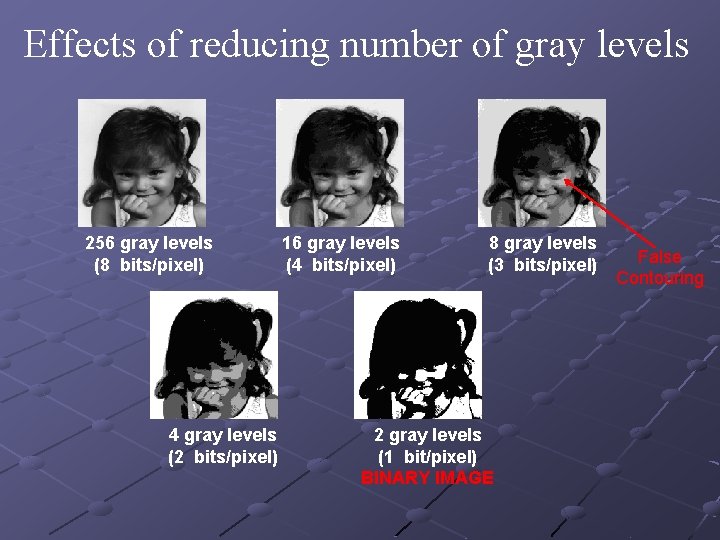

Effects of reducing the number of gray levels: 256 gray levels (8 bits/pixel), 16 gray levels (4 bits/pixel), 8 gray levels (3 bits/pixel), 4 gray levels (2 bits/pixel) and 2 gray levels (1 bit/pixel, a BINARY IMAGE). Coarse quantization produces false contouring.

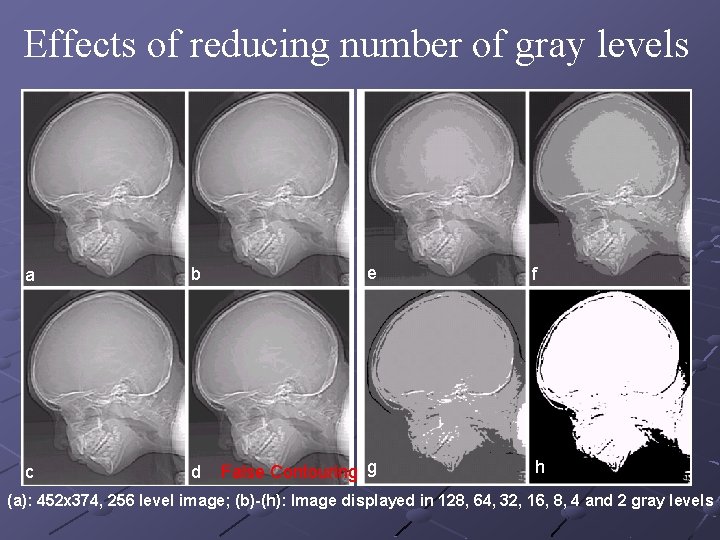

Effects of reducing the number of gray levels (figure): (a) a 452 × 374, 256-level image; (b) to (h) the same image displayed in 128, 64, 32, 16, 8, 4 and 2 gray levels. False contouring appears as the number of levels decreases.
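Reducing the number of gray levels can be sketched as uniform requantization of an 8-bit image to $2^k$ equally spaced levels. Mapping each pixel to the bottom of its bin is an assumption of this sketch; applied to a ramp, it produces the flat steps that show up as false contouring.

```python
def requantize(image, k):
    """Reduce an 8-bit image (levels 0..255) to 2**k equally spaced gray levels.

    Each pixel is mapped to the lowest value of its bin, so the output still
    lies in 0..255 and can be displayed directly.
    """
    if not 1 <= k <= 8:
        raise ValueError("k must be between 1 and 8")
    step = 256 // (2 ** k)                      # width of each quantization bin
    return [[(v // step) * step for v in row] for row in image]

ramp = [list(range(0, 256, 32))]    # a horizontal gray-level ramp: 0, 32, ..., 224
print(requantize(ramp, 2))          # [[0, 0, 64, 64, 128, 128, 192, 192]]
print(requantize(ramp, 1))          # [[0, 0, 0, 0, 128, 128, 128, 128]]
```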

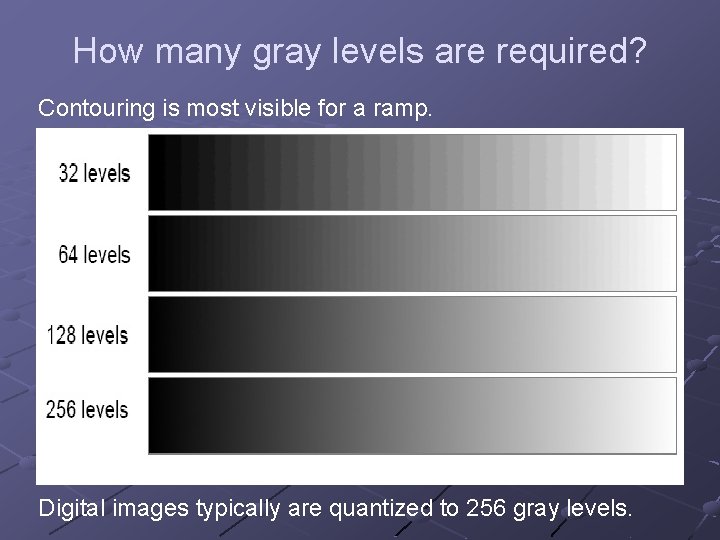

How many gray levels are required?

False contouring is most visible in a ramp (a region where the gray level varies smoothly). Digital images are typically quantized to 256 gray levels.

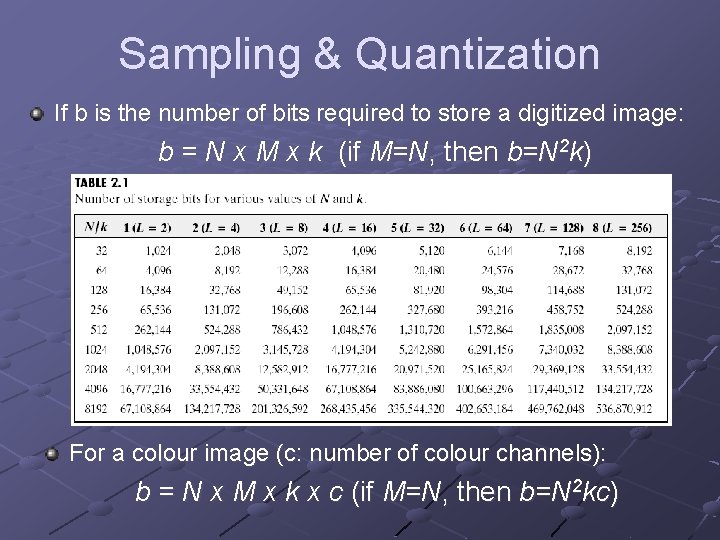

Sampling & Quantization

The number of bits b required to store a digitized image is

$$b = N \times M \times k \qquad (\text{if } M = N,\ b = N^2 k)$$

For a colour image with c colour channels:

$$b = N \times M \times k \times c \qquad (\text{if } M = N,\ b = N^2 k c)$$
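The storage formula in code, applied to a hypothetical 512 × 512 image with k = 8 bits per sample (the image size is chosen only for illustration):

```python
def storage_bits(N, M, k, c=1):
    """Bits needed for an N x M image with k bits per sample and c colour channels."""
    return N * M * k * c

b_gray = storage_bits(512, 512, 8)        # gray-scale image
b_rgb = storage_bits(512, 512, 8, c=3)    # three-channel colour image
print(b_gray, b_gray // 8)                # 2097152 262144  (bits, bytes)
print(b_rgb, b_rgb // 8)                  # 6291456 786432
```

Dividing by 8 converts bits to bytes; doubling N quadruples b, which is why storage grows so quickly with resolution.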

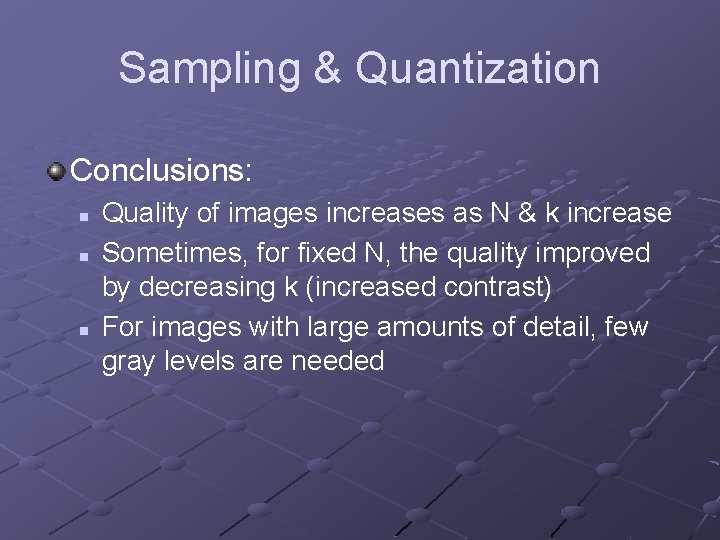

How many samples and gray levels are required for a good approximation?
- The resolution (the degree of discernible detail) of an image depends on both the number of samples and the number of gray levels.
- That is, the more these parameters are increased, the more closely the digitized array approximates the original image.

However, storage and processing requirements increase rapidly as a function of N, M and k.

Different versions (images) of the same object can be generated by:
- varying N and M,
- varying k (the number of bits), or
- varying both.

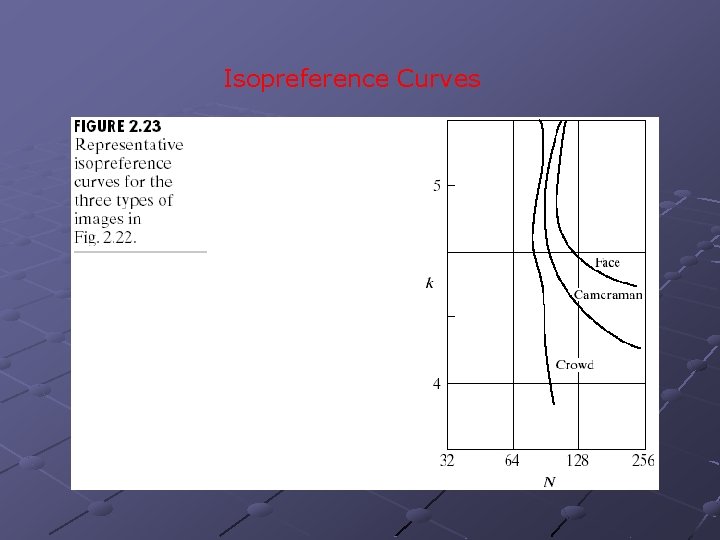

Isopreference curves (in the N-k plane):
- Each point in the plane represents an image whose values of N and k equal the coordinates of that point.
- Points lying on an isopreference curve correspond to images of equal subjective quality.

Examples

Isopreference Curves

Conclusions:
- The quality of images increases as N and k increase.
- Sometimes, for a fixed N, the quality improves when k is decreased (because of the increased apparent contrast).
- For images with large amounts of detail, only a few gray levels are needed.

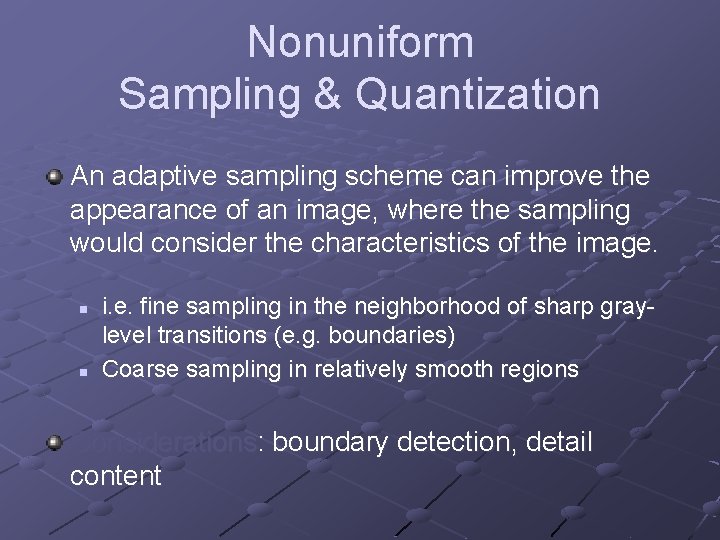

Nonuniform Sampling & Quantization

An adaptive sampling scheme, in which the sampling takes the characteristics of the image into account, can improve the appearance of an image:
- fine sampling in the neighborhood of sharp gray-level transitions (e.g., boundaries)
- coarse sampling in relatively smooth regions

Considerations: boundary detection and detail content.

Similarly, the quantization process can be nonuniform. In this case:
- few gray levels are used in the neighborhood of boundaries, and
- more gray levels are used in regions of smooth gray-level variation (thus reducing false contours).

Some Basic Relationships Between Pixels

Definitions:
- f(x, y): a digital image
- p, q: pixels
- S: a subset of pixels of f(x, y)

Neighbors of a Pixel

A pixel p at (x, y) has two horizontal and two vertical neighbors:

$$(x+1, y),\ (x-1, y),\ (x, y+1),\ (x, y-1)$$

This set of pixels is called the 4-neighbors of p, denoted $N_4(p)$.

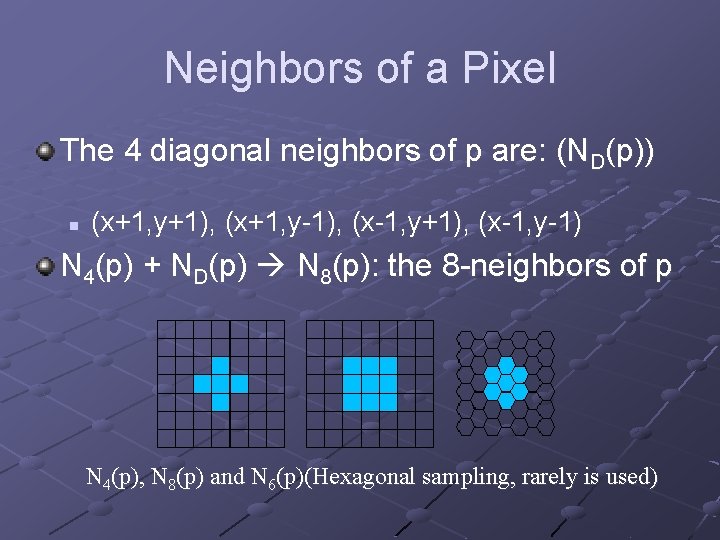

The four diagonal neighbors of p, denoted $N_D(p)$, are:

$$(x+1, y+1),\ (x+1, y-1),\ (x-1, y+1),\ (x-1, y-1)$$

Together, $N_4(p) \cup N_D(p) = N_8(p)$, the 8-neighbors of p. The neighborhoods used are $N_4(p)$, $N_8(p)$ and $N_6(p)$; the last arises with hexagonal sampling, which is rarely used.
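The neighborhoods $N_4(p)$, $N_D(p)$ and $N_8(p)$ translate directly into set operations. The bounds check `in_image` is an addition for this sketch: for a pixel on the image border, some of these neighbors fall outside the image.

```python
def n4(p):
    """4-neighbors of p = (x, y): two horizontal and two vertical neighbors."""
    x, y = p
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}

def nd(p):
    """The four diagonal neighbors of p."""
    x, y = p
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}

def n8(p):
    """8-neighbors: the union of N4(p) and ND(p)."""
    return n4(p) | nd(p)

def in_image(q, width, height):
    """True if pixel q = (x, y) lies inside a width x height image."""
    x, y = q
    return 0 <= x < width and 0 <= y < height

p = (0, 0)  # a corner pixel
print(sorted(n8(p)))
print(sorted(q for q in n8(p) if in_image(q, 5, 5)))  # [(0, 1), (1, 0), (1, 1)]
```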

Connectivity between pixels is important because it is used in establishing the boundaries of objects and the components of regions in an image.

Connectivity

Two pixels are connected if:
- they are neighbors, i.e., adjacent in some sense (e.g., $N_4(p)$, $N_8(p)$, …), and
- their gray levels satisfy a specified criterion of similarity (e.g., equality, …).

V is the set of gray-level values used to define adjacency (e.g., V = {1} for adjacency of pixels with value 1).

Adjacency

We consider three types of adjacency:
- 4-adjacency: two pixels p and q with values from V are 4-adjacent if q is in the set $N_4(p)$.
- 8-adjacency: two pixels p and q with values from V are 8-adjacent if q is in the set $N_8(p)$.

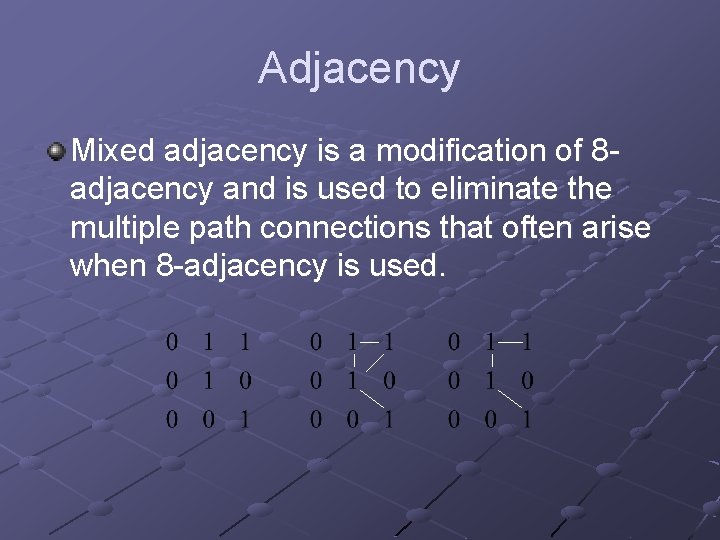

The third type of adjacency:
- m-adjacency (mixed adjacency): two pixels p and q with values from V are m-adjacent if
  - q is in $N_4(p)$, or
  - q is in $N_D(p)$ and the set $N_4(p) \cap N_4(q)$ has no pixels whose values are from V.

Mixed adjacency is a modification of 8-adjacency. It is used to eliminate the multiple path connections that often arise when 8-adjacency is used.
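The sketch below checks 8-adjacency and m-adjacency on a small binary image with V = {1}. The centre pixel and the top-right pixel are 8-adjacent, which creates a second, redundant path between them; m-adjacency drops the diagonal link because the two pixels already share a 4-neighbor with value 1. The image and the helper names are illustrative.

```python
V = {1}  # gray levels that define adjacency

def value(img, p):
    """Gray level at p = (x, y), or None if p lies outside the image."""
    x, y = p
    if 0 <= y < len(img) and 0 <= x < len(img[0]):
        return img[y][x]
    return None

def n4(p):
    x, y = p
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}

def nd(p):
    x, y = p
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}

def adjacent8(img, p, q):
    """8-adjacency: both values in V and q in N8(p)."""
    return value(img, p) in V and value(img, q) in V and q in (n4(p) | nd(p))

def adjacent_m(img, p, q):
    """m-adjacency: q in N4(p), or q in ND(p) and N4(p) & N4(q) has no pixel with value in V."""
    if value(img, p) not in V or value(img, q) not in V:
        return False
    if q in n4(p):
        return True
    return q in nd(p) and not any(value(img, r) in V for r in n4(p) & n4(q))

img = [[0, 1, 1],
       [0, 1, 0],
       [0, 0, 1]]
centre, top_right = (1, 1), (2, 0)
print(adjacent8(img, centre, top_right))   # True
print(adjacent_m(img, centre, top_right))  # False: linked only via (1, 0)
print(adjacent_m(img, centre, (2, 2)))     # True: no shared 4-neighbor in V
```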

Two image subsets $S_1$ and $S_2$ are adjacent if some pixel in $S_1$ is adjacent to some pixel in $S_2$.

Path

A path (curve) from pixel p with coordinates (x, y) to pixel q with coordinates (s, t) is a sequence of distinct pixels

$$(x_0, y_0), (x_1, y_1), \ldots, (x_n, y_n)$$

where $(x_0, y_0) = (x, y)$, $(x_n, y_n) = (s, t)$, and $(x_i, y_i)$ is adjacent to $(x_{i-1}, y_{i-1})$ for $1 \le i \le n$; n is the length of the path. If $(x_0, y_0) = (x_n, y_n)$, the path is a closed path.

4-, 8- and m-paths can be defined depending on the type of adjacency specified. If $p, q \in S$, then q is connected to p in S if there is a path from p to q consisting entirely of pixels in S.

Connected Component

For any pixel p in S, the set of pixels in S that are connected to p is a connected component of S. If S has only one connected component, then S is called a connected set.
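Connected components can be found with a breadth-first flood fill from each unlabeled pixel of S. This is one standard technique, sketched here under the assumption that S is the set of pixels whose values lie in V. The example shows that the same S can be a single connected set under 8-adjacency but two components under 4-adjacency.

```python
from collections import deque

def connected_components(img, V=frozenset({1}), connectivity=8):
    """Label the connected components of S = {pixels whose value is in V}.

    Returns a dict mapping each pixel (x, y) of S to a component label 1, 2, ...
    """
    if connectivity == 4:
        offsets = [(1, 0), (-1, 0), (0, 1), (0, -1)]
    else:
        offsets = [(dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1) if (dx, dy) != (0, 0)]
    height, width = len(img), len(img[0])
    labels = {}
    next_label = 0
    for y in range(height):
        for x in range(width):
            if img[y][x] not in V or (x, y) in labels:
                continue
            next_label += 1                      # a new component starts here
            labels[(x, y)] = next_label
            queue = deque([(x, y)])
            while queue:                         # breadth-first flood fill
                cx, cy = queue.popleft()
                for dx, dy in offsets:
                    nx, ny = cx + dx, cy + dy
                    if (0 <= nx < width and 0 <= ny < height
                            and img[ny][nx] in V and (nx, ny) not in labels):
                        labels[(nx, ny)] = next_label
                        queue.append((nx, ny))
    return labels

img = [[1, 1, 0, 0],
       [0, 1, 0, 0],
       [0, 0, 1, 1],
       [0, 0, 0, 1]]
print(len(set(connected_components(img, connectivity=4).values())))  # 2
print(len(set(connected_components(img, connectivity=8).values())))  # 1
```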

Region & Boundary

Let R be a subset of pixels. R is a region if R is a connected set. Its boundary (border, contour) is the set of pixels in R that have at least one neighbor not in R. Both inner and outer borders exist.

An edge is a local property of a pixel and its immediate neighbourhood; it is a vector given by a magnitude and a direction. The edge direction is perpendicular to the gradient direction, which points in the direction of image function growth.

Border vs. edge: the border is a global concept related to a region, while an edge expresses local properties of an image function.
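Following the definition above, the boundary of a region R is the set of its pixels with at least one neighbor outside R. The sketch takes R as a set of (x, y) coordinates; whether 4- or 8-neighbors are used is a choice the definition leaves open, so it is a parameter here.

```python
def boundary(region, connectivity=4):
    """Inner boundary of a region R (a set of (x, y) pixels):
    the pixels of R with at least one neighbor outside R."""
    if connectivity == 4:
        offsets = [(1, 0), (-1, 0), (0, 1), (0, -1)]
    else:
        offsets = [(dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1) if (dx, dy) != (0, 0)]
    return {(x, y) for (x, y) in region
            if any((x + dx, y + dy) not in region for dx, dy in offsets)}

square = {(x, y) for x in range(4) for y in range(4)}   # a 4 x 4 region
print(len(boundary(square)))              # 12 border pixels
print(sorted(square - boundary(square)))  # interior: [(1, 1), (1, 2), (2, 1), (2, 2)]
```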

Distance Measures

For pixels p, q and z with coordinates (x, y), (s, t) and (u, v), D is a distance function or metric if:
- $D(p, q) \ge 0$, with $D(p, q) = 0$ iff $p = q$,
- $D(p, q) = D(q, p)$, and
- $D(p, z) \le D(p, q) + D(q, z)$.

![Distance Measures: Euclidean distance](http://slidetodoc.com/presentation_image_h2/262b403daa26d8c6f5c4ea04d7767e32/image-82.jpg)

Euclidean distance:

$$D_e(p, q) = \left[(x-s)^2 + (y-t)^2\right]^{1/2}$$

The pixels having a distance less than or equal to r from (x, y) are contained in a disk of radius r centered at (x, y).

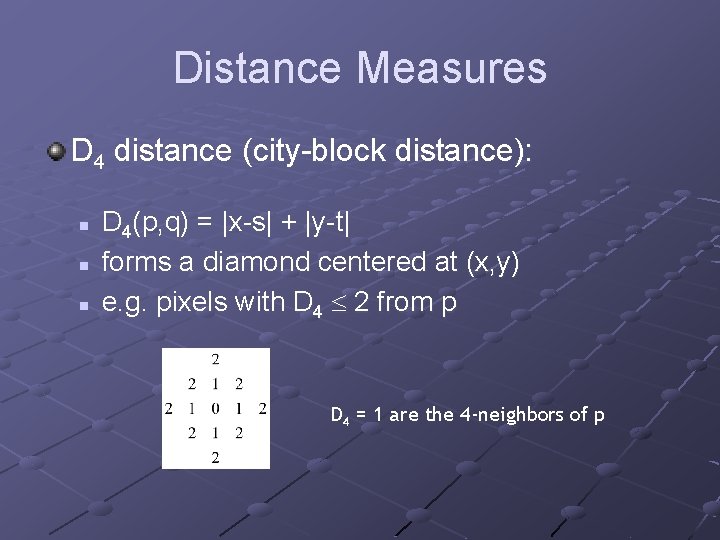

$D_4$ distance (city-block distance):

$$D_4(p, q) = |x-s| + |y-t|$$

- The pixels within a given $D_4$ distance of (x, y) form a diamond centered at (x, y); e.g., the pixels with $D_4 \le 2$ from p.
- The pixels with $D_4 = 1$ are the 4-neighbors of p.

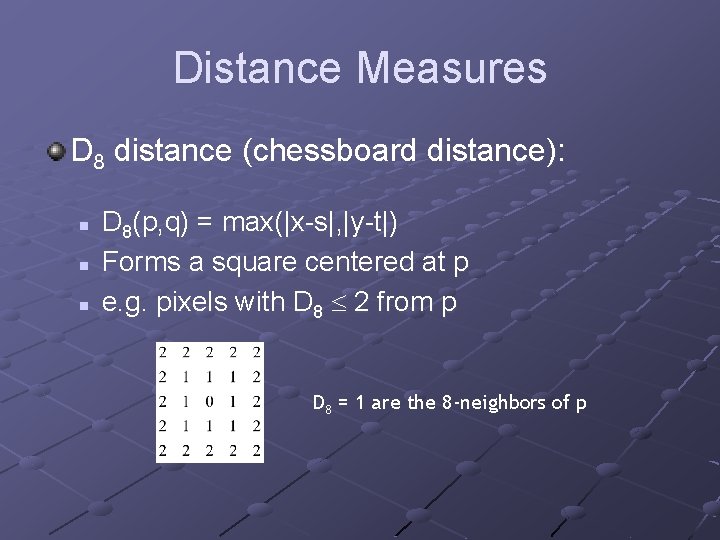

$D_8$ distance (chessboard distance):

$$D_8(p, q) = \max(|x-s|, |y-t|)$$

- The pixels within a given $D_8$ distance of p form a square centered at p; e.g., the pixels with $D_8 \le 2$ from p.
- The pixels with $D_8 = 1$ are the 8-neighbors of p.
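The three distance measures side by side, plus a helper that prints the pixels within distance 2 of a centre pixel, which reproduces the diamond ($D_4$) and square ($D_8$) shapes. The function names are illustrative.

```python
import math

def d_euclidean(p, q):
    (x, y), (s, t) = p, q
    return math.hypot(x - s, y - t)

def d4(p, q):
    """City-block distance."""
    (x, y), (s, t) = p, q
    return abs(x - s) + abs(y - t)

def d8(p, q):
    """Chessboard distance."""
    (x, y), (s, t) = p, q
    return max(abs(x - s), abs(y - t))

def contour_map(dist, radius=2):
    """Print the distance from the centre pixel for every pixel with dist <= radius ('.' otherwise)."""
    centre = (radius, radius)
    for y in range(2 * radius + 1):
        row = []
        for x in range(2 * radius + 1):
            d = dist(centre, (x, y))
            row.append(str(d) if d <= radius else ".")
        print(" ".join(row))

p, q = (0, 0), (3, 4)
print(d_euclidean(p, q), d4(p, q), d8(p, q))   # 5.0 7 4
contour_map(d4)   # diamond of 2s and 1s around the 0
contour_map(d8)   # square of 2s and 1s around the 0
```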

The $D_4$ and $D_8$ distances between p and q are independent of any paths that may exist between the points, because they involve only the coordinates of the points (regardless of whether a connected path exists between them).

However, for m-connectivity, the value of the distance (the length of the shortest path) between two pixels depends on the values of the pixels along the path and on those of their neighbours.

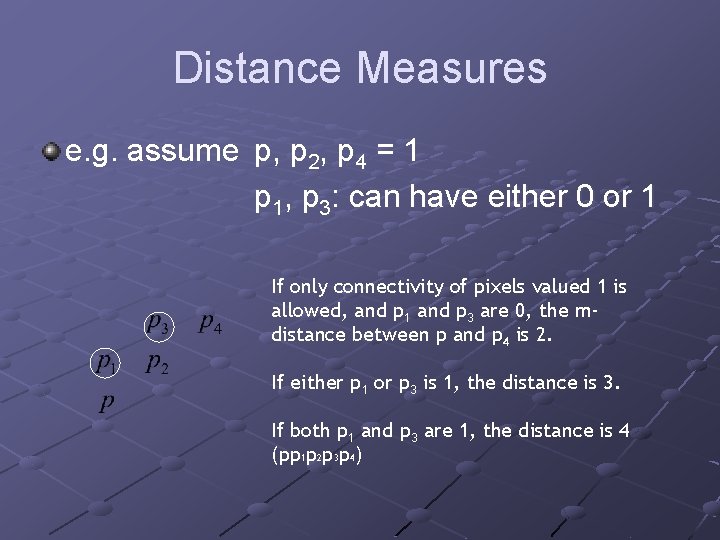

For example, assume that p, p2 and p4 have value 1, while p1 and p3 can have value 0 or 1. If only connectivity of pixels valued 1 is allowed (V = {1}):
- If p1 and p3 are 0, the m-distance between p and p4 is 2.
- If either p1 or p3 is 1, the distance is 3.
- If both p1 and p3 are 1, the distance is 4 (path p, p1, p2, p3, p4).
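The m-distance example can be checked with a breadth-first search over m-adjacent pixels. The pixel layout in `arrangement` is an assumption (the common textbook arrangement: p3 and p4 in the top row, p1 and p2 in the middle row, p at bottom left), since the figure is not reproduced here. With that layout the search returns 2, 3, 3 and 4, matching the four cases above.

```python
from collections import deque

V = {1}  # only pixels valued 1 may be connected

def n4(p):
    x, y = p
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}

def nd(p):
    x, y = p
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}

def m_adjacent(values, p, q):
    """m-adjacency on a dict {(x, y): gray level}; pixels not in the dict count as 0."""
    def in_v(r):
        return values.get(r, 0) in V
    if not (in_v(p) and in_v(q)):
        return False
    if q in n4(p):
        return True
    return q in nd(p) and not any(in_v(r) for r in n4(p) & n4(q))

def m_distance(values, start, goal):
    """Length of the shortest m-path from start to goal (breadth-first search), or None."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        p = queue.popleft()
        if p == goal:
            return dist[p]
        for q in n4(p) | nd(p):
            if q not in dist and m_adjacent(values, p, q):
                dist[q] = dist[p] + 1
                queue.append(q)
    return None

def arrangement(p1, p3):
    """Assumed layout, x to the right and y downward:
           .   p3  p4
           p1  p2  .
           p   .   .
    p, p2 and p4 are 1; p1 and p3 are the free values."""
    return {(0, 2): 1, (0, 1): p1, (1, 1): 1, (1, 0): p3, (2, 0): 1}

P, P4 = (0, 2), (2, 0)
for p1, p3 in [(0, 0), (1, 0), (0, 1), (1, 1)]:
    print(f"p1={p1}, p3={p3}: m-distance = {m_distance(arrangement(p1, p3), P, P4)}")
```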

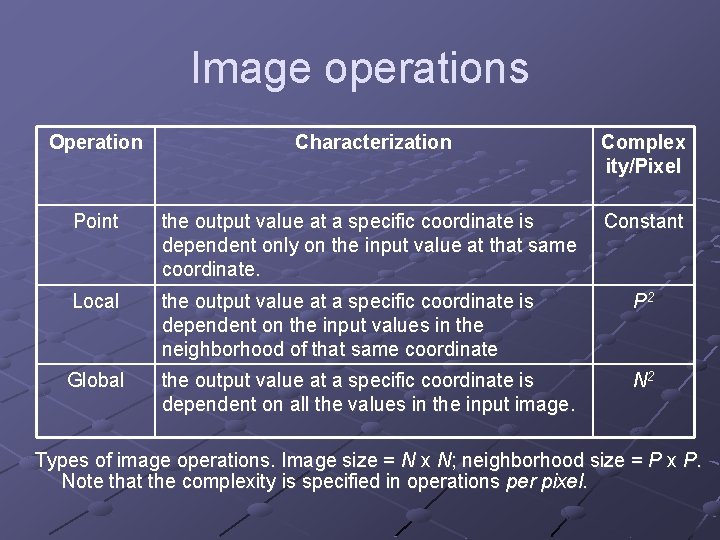

Image operations

| Operation | Characterization | Complexity/Pixel |
|---|---|---|
| Point | The output value at a specific coordinate depends only on the input value at that same coordinate. | constant |
| Local | The output value at a specific coordinate depends on the input values in the neighborhood of that same coordinate. | P² |
| Global | The output value at a specific coordinate depends on all the values in the input image. | N² |

Types of image operations. Image size = N × N; neighborhood size = P × P. Note that the complexity is specified in operations per pixel.
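One example of each operation type, as a sketch: a negative (point), a P × P mean filter (local) and a naive 2-D discrete Fourier transform (global). These particular operations are illustrative choices, not taken from the slides, and the border handling of the mean filter is also an assumption.

```python
import cmath

def point_negative(img, L=256):
    """Point operation: each output depends only on the input at the same pixel (constant cost)."""
    return [[L - 1 - v for v in row] for row in img]

def local_mean(img, P=3):
    """Local operation: mean over a P x P neighborhood (about P^2 operations per pixel).
    Near the border only the pixels inside the image are averaged (an assumption)."""
    h, w, r = len(img), len(img[0]), P // 2
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [img[j][i]
                      for j in range(max(0, y - r), min(h, y + r + 1))
                      for i in range(max(0, x - r), min(w, x + r + 1))]
            row.append(sum(window) / len(window))
        out.append(row)
    return out

def global_dft(img):
    """Global operation: naive 2-D DFT of a square N x N image; every output value
    sums over all N^2 input values (N^2 operations per pixel)."""
    N = len(img)
    return [[sum(img[x][y] * cmath.exp(-2j * cmath.pi * (u * x + v * y) / N)
                 for x in range(N) for y in range(N))
             for v in range(N)]
            for u in range(N)]

img = [[1, 2], [3, 4]]
print(point_negative(img))    # [[254, 253], [252, 251]]
print(local_mean(img))        # [[2.5, 2.5], [2.5, 2.5]]
print(global_dft(img)[0][0])  # DC term: the sum of all pixels, as a complex number
```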

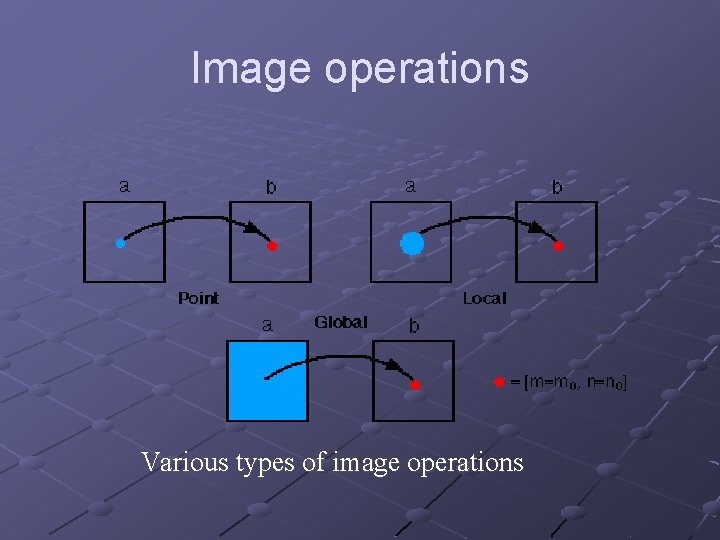

Image operations Various types of image operations
