Developmental Mechanisms for Life-Long Autonomous Learning in Robots

Pierre-Yves Oudeyer
Project-Team INRIA-ENSTA-ParisTech FLOWERS
http://www.pyoudeyer.com
http://flowers.inria.fr

Developmental robotics
Sensorimotor and social learning:
• Autonomous
• Open, « life-long learning »
• Real world, physical and social
Mechanisms studied:
• Intrinsic motivation
• Imitation, social guidance
• Maturation
Experimental validation
• Fundamental understanding of the mechanisms of development
• Application to assistive robotics

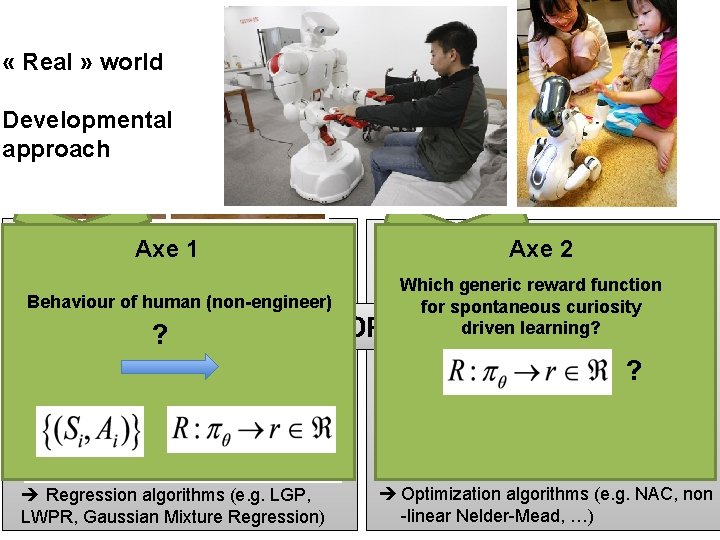

“Engineered” robot learning
• Engineer shows, with fixed interaction protocol in the lab:
  • Target: Action policy ? State/context
  • Regression algorithms (e.g. LGP, LWPR, Gaussian Mixture Regression)
• OR Engineer provides a reward/fitness function:
  • Target: ? Action
  • Optimization algorithms (e.g. NAC, non-linear Nelder-Mead, …)
« Real » world: developmental approach
• Axis 1: Behaviour of human (non-engineer)?
• Axis 2: Which generic reward function for spontaneous curiosity-driven learning?

Learning from interactions with non-engineers
Non-engineer human behaviour?
1. Intuitive multimodal interfaces
  • Synthesis and recognition of emotion in speech (IJHCS, 2001, 5 patents)
  • Clicker-training (RAS, 2002; 1 patent)
  • Physical human-robot interfaces (Humanoids 2011)
2. User studies (Humanoids 2009, HRI 2011)
3. Adaptation: learning flexible teaching interfaces (Conn. Sci., 2006, ICDL 2011, IROS 2010)

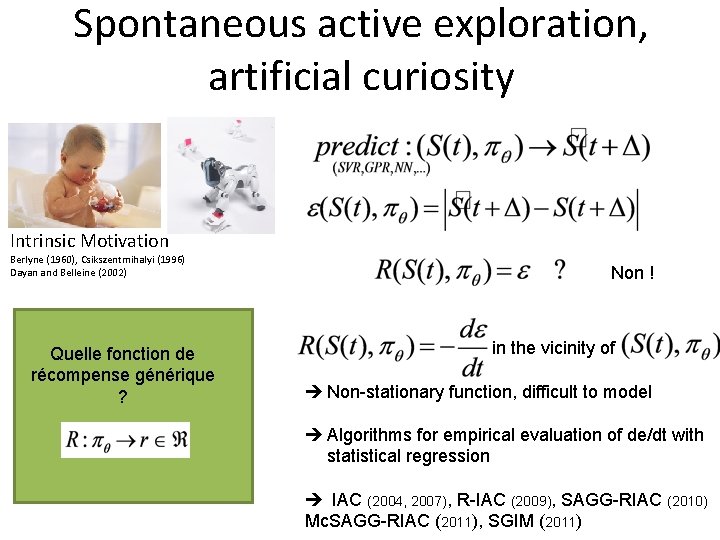

Spontaneous active exploration, artificial curiosity
Intrinsic motivation: Berlyne (1960), Csikszentmihalyi (1996), Dayan and Balleine (2002)
Which generic reward function?
No! in the vicinity of
Non-stationary function, difficult to model
Algorithms for empirical evaluation of de/dt with statistical regression:
IAC (2004, 2007), R-IAC (2009), SAGG-RIAC (2010), McSAGG-RIAC (2011), SGIM (2011)
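One way to read "empirical evaluation of de/dt with statistical regression" is to fit a least-squares line to the most recent prediction errors of a zone and take the negated slope as the learning progress. The sketch below does only that; the function name `learning_progress` and the `window` parameter are illustrative assumptions and are not taken from the IAC or R-IAC papers.

```python
def learning_progress(errors, window=10):
    """Negated least-squares slope of the last `window` prediction errors.

    Positive values mean the error is decreasing, i.e. the learner is
    currently making progress. (Illustrative estimator, an assumption.)
    """
    recent = errors[-window:]
    n = len(recent)
    if n < 2:
        return 0.0  # not enough samples to estimate a slope
    t_mean = (n - 1) / 2.0
    e_mean = sum(recent) / n
    # Covariance between time index and error, and variance of time index.
    cov = sum((t - t_mean) * (e - e_mean) for t, e in enumerate(recent))
    var = sum((t - t_mean) ** 2 for t in range(n))
    return -cov / var


# Errors falling by 0.2 per step give a learning progress of about 0.2.
steady_decrease = learning_progress([1.0, 0.8, 0.6, 0.4])
# A flat error curve gives no progress, whether the error is high or low.
plateau = learning_progress([0.3, 0.3, 0.3, 0.3, 0.3])
```

Because the reward is the slope of the error rather than the error itself, it changes as the learner improves, which is one reason the slide calls the function non-stationary.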

Exploring and learning generalized forward and inverse models Parameterized by

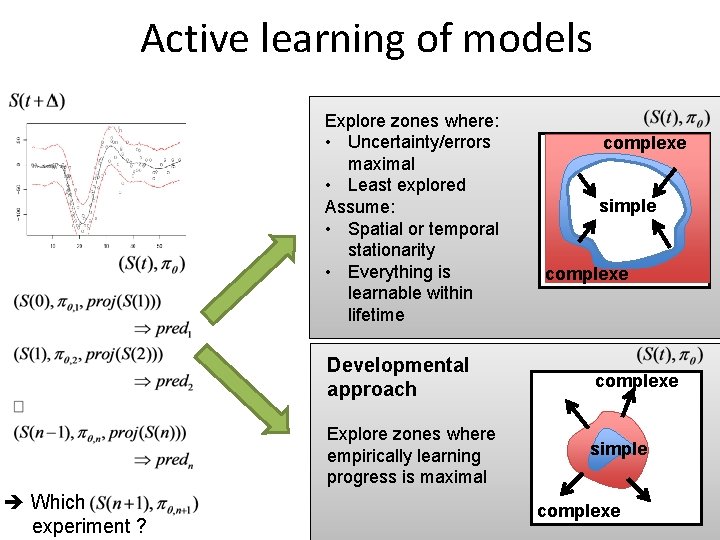

Active learning of models
Which experiment?
Explore zones where:
• Uncertainty/errors maximal
• Least explored
Assume:
• Spatial or temporal stationarity
• Everything is learnable within lifetime
Developmental approach
Explore zones where empirically learning progress is maximal
Figure labels: complex / simple / complex
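The difference between the two selection rules on this slide can be made concrete with a toy comparison. In the sketch below, the three zones and their error histories are invented for illustration, and learning progress is approximated as the drop in mean error between the older and newer half of each history (an assumed, simplified estimator).

```python
def mean(values):
    return sum(values) / len(values)


def progress(errors):
    """Drop in mean error from the older half to the newer half."""
    half = len(errors) // 2
    return mean(errors[:half]) - mean(errors[half:])


# Hypothetical prediction-error histories for three zones of the space.
zones = {
    "unlearnable": [0.9, 1.0, 0.9, 1.0, 0.9, 1.0],    # noise: error stays high
    "learnable": [0.8, 0.7, 0.6, 0.4, 0.3, 0.2],      # error is falling
    "mastered": [0.05, 0.05, 0.05, 0.05, 0.05, 0.05],  # nothing left to learn
}

# Classical criterion: go where the recent error is largest.
by_error = max(zones, key=lambda z: mean(zones[z][-3:]))
# Developmental criterion: go where the error is decreasing fastest.
by_progress = max(zones, key=lambda z: progress(zones[z]))

print(by_error)     # unlearnable
print(by_progress)  # learnable
```

The error-driven rule gets stuck on the zone that can never be learned, while the progress-driven rule ignores both the unlearnable and the already mastered zones.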

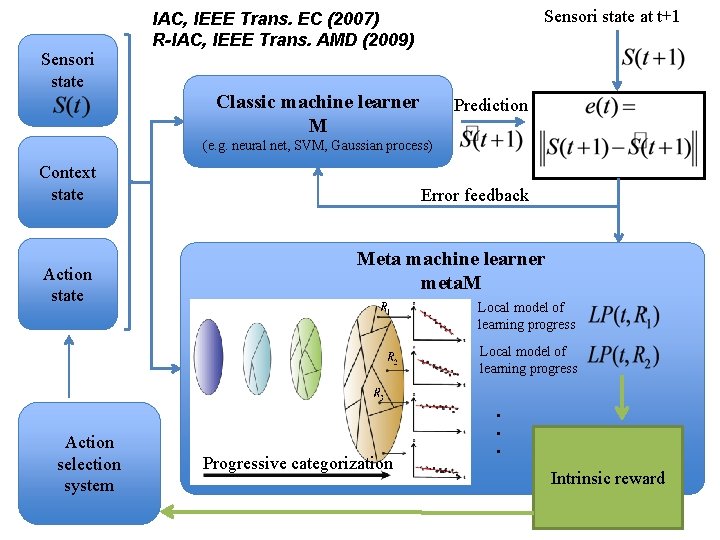

IAC, IEEE Trans. EC (2007); R-IAC, IEEE Trans. AMD (2009)
Architecture diagram labels:
• Inputs: context state, action state
• Classic machine learner M (e.g. neural net, SVM, Gaussian process): prediction of the sensory state at t+1
• Error feedback
• Meta machine learner metaM: local model of learning progress, progressive categorization
• Intrinsic reward
• Action selection system
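The loop in this diagram can be sketched in a few lines under strong simplifying assumptions: the action space is pre-divided into three fixed regions (IAC itself splits regions progressively), the learner M is a running average per region, and the toy `world` function is invented for illustration. All names here are hypothetical.

```python
import random


class Region:
    """One zone of the action space: a predictor plus its error history."""

    def __init__(self):
        self.prediction = 0.0  # stand-in for learner M (running average)
        self.errors = []       # error feedback, input of the meta-learner

    def learn(self, outcome, rate=0.3):
        self.errors.append(abs(outcome - self.prediction))
        self.prediction += rate * (outcome - self.prediction)

    def progress(self, window=8):
        """Meta-learner: drop in mean error across the recent window."""
        recent = self.errors[-window:]
        if len(recent) < window:
            return 0.0
        half = window // 2
        return (sum(recent[:half]) - sum(recent[half:])) / half


def world(name, rng):
    """Toy environment: one learnable, one noisy, one trivial region."""
    if name == "learnable":
        return 1.0
    if name == "noise":
        return rng.uniform(-1.0, 1.0)
    return 0.0


def run(steps=200, warmup=8, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    regions = {name: Region() for name in ("learnable", "noise", "trivial")}
    names = list(regions)
    for t in range(steps):
        if t < warmup * len(names):
            name = names[t % len(names)]   # visit every region a few times
        elif rng.random() < epsilon:
            name = rng.choice(names)       # occasional random exploration
        else:                              # intrinsic reward = learning progress
            name = max(names, key=lambda n: regions[n].progress())
        regions[name].learn(world(name, rng))
    return regions


regions = run()
visits = {name: len(region.errors) for name, region in regions.items()}
```

Inspecting `visits` shows how the samples were distributed across regions. With such a crude progress estimate, the noisy region can still attract samples once the learnable one is mastered, which is the kind of weakness that smoother estimators aim to reduce.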

The Playground Experiments (IEEE Trans. EC 2007; Connection Science 2006; AAAI Work. Dev. Learn. 2005)

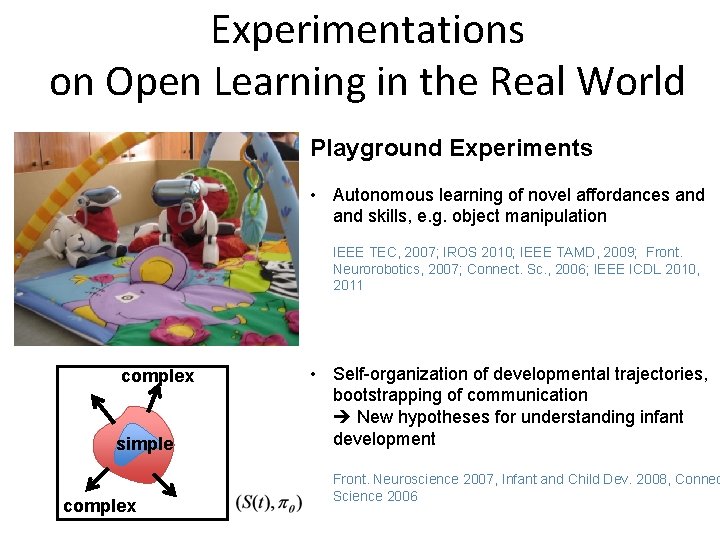

Experiments on Open Learning in the Real World
Playground Experiments
• Autonomous learning of novel affordances and skills, e.g. object manipulation
  IEEE TEC, 2007; IROS 2010; IEEE TAMD, 2009; Front. Neurorobotics, 2007; Connect. Sc., 2006; IEEE ICDL 2010, 2011
• Self-organization of developmental trajectories, bootstrapping of communication
  New hypotheses for understanding infant development
  Front. Neuroscience 2007, Infant and Child Dev. 2008, Connection Science 2006
Figure labels: complex / simple / complex

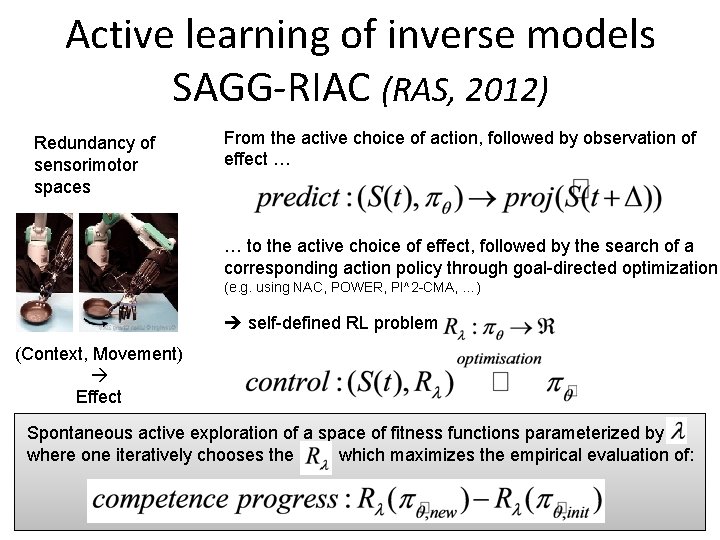

Active learning of inverse models
SAGG-RIAC (RAS, 2012)
Redundancy of sensorimotor spaces
• From the active choice of action, followed by observation of effect …
• … to the active choice of effect, followed by the search for a corresponding action policy through goal-directed optimization (e.g. using NAC, PoWER, PI^2-CMA, …)
Self-defined RL problem
(Context, Movement) → Effect
Spontaneous active exploration of a space of fitness functions parameterized by where one iteratively chooses the which maximizes the empirical evaluation of:
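The shift from choosing actions to choosing effects can be sketched as follows, under stated assumptions: a redundant 3-joint planar arm stands in for the robot, the goal space is pre-split into two fixed regions (SAGG-RIAC splits it adaptively), and plain stochastic hill climbing is swapped in for the optimizers named on the slide. Function names, region bounds, and parameters are all hypothetical.

```python
import math
import random

LINKS = 3  # redundant arm: 3 joint angles map to a 2D hand position


def forward(angles):
    """Forward model: (movement) -> effect, for a planar arm of length 1."""
    x = y = total = 0.0
    for a in angles:
        total += a
        x += math.cos(total) / LINKS
        y += math.sin(total) / LINKS
    return (x, y)


def reach(goal, start, rng, iters=30, sigma=0.3):
    """Goal-directed optimization by hill climbing (a stand-in optimizer)."""
    best, best_d = list(start), math.dist(forward(start), goal)
    for _ in range(iters):
        cand = [a + rng.gauss(0.0, sigma) for a in best]
        d = math.dist(forward(cand), goal)
        if d < best_d:  # only keep improvements
            best, best_d = cand, d
    return best, best_d


def interest(history, window=6):
    """Absolute change in mean competence across the recent window."""
    recent = history[-window:]
    if len(recent) < window:
        return float("inf")  # force initial sampling of every region
    half = window // 2
    return abs(sum(recent[half:]) - sum(recent[:half])) / half


def explore(steps=60, seed=0):
    rng = random.Random(seed)
    regions = {"near": (-1.0, 1.0), "far": (2.0, 4.0)}  # x-range of goals
    competence = {name: [] for name in regions}
    memory = [([0.0] * LINKS, forward([0.0] * LINKS))]  # (angles, effect)
    for _ in range(steps):
        # Active choice of effect: sample a goal in the most interesting region.
        name = max(regions, key=lambda r: interest(competence[r]))
        lo, hi = regions[name]
        goal = (rng.uniform(lo, hi), rng.uniform(-1.0, 1.0))
        # Start from the stored posture whose effect is closest to the goal.
        start = min(memory, key=lambda m: math.dist(m[1], goal))[0]
        angles, d = reach(goal, start, rng)
        memory.append((angles, forward(angles)))
        competence[name].append(-d)  # competence: negated final distance
    return competence, memory


competence, memory = explore()
```

Goals in the "far" region lie outside the arm's reach, so competence there cannot improve much, whereas the growing memory of (movement, effect) pairs can make "near" goals easier to reach over time.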

Experimental evaluation of active learning efficiency
Learning omnidirectional locomotion
Control Space:
Task Space:
Performance higher than more classical active learning algorithms in real sensorimotor spaces (non-stationary, non-homogeneous) (IEEE TAMD 2009; ICDL 2010, 2011; IROS 2010; RAS 2012)

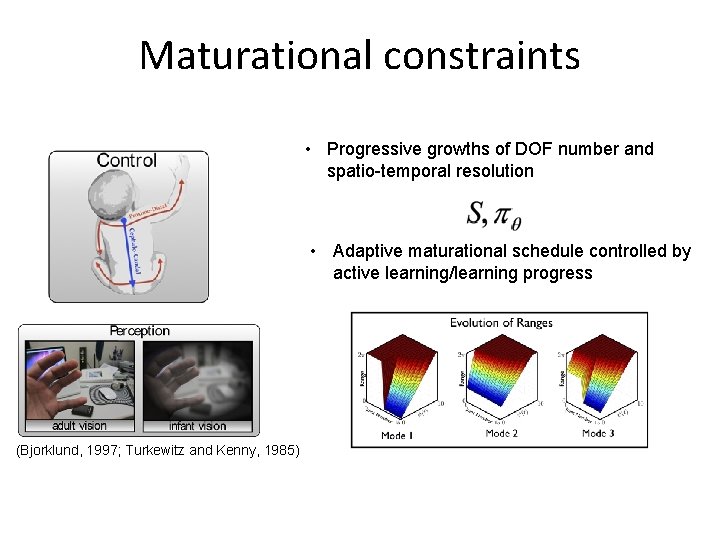

Maturational constraints
• Progressive growth of DOF number and spatio-temporal resolution
• Adaptive maturational schedule controlled by active learning/learning progress
(Bjorklund, 1997; Turkewitz and Kenny, 1985)
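A maturational schedule driven by learning progress could look like the sketch below. The release rule used here (free one more degree of freedom and halve the resolution step whenever progress falls under a threshold) is an assumption chosen for illustration, not the schedule from the cited work; the class name, fields, and default values are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Maturation:
    """Tracks which constraints are currently released (illustrative only)."""

    max_dof: int
    active_dof: int = 1             # degrees of freedom the learner may use
    resolution: float = 0.5         # smallest perceivable step, smaller is finer
    min_resolution: float = 0.01
    threshold: float = 0.01         # progress below this counts as a plateau

    def update(self, learning_progress: float) -> None:
        """Release constraints once progress at this stage has plateaued.

        Assumed rule: keep the current constraints while the learner is
        still progressing, relax them when it is not.
        """
        if abs(learning_progress) >= self.threshold:
            return
        if self.active_dof < self.max_dof:
            self.active_dof += 1
        self.resolution = max(self.min_resolution, self.resolution / 2)


schedule = Maturation(max_dof=3)
schedule.update(0.5)    # still progressing: nothing changes
schedule.update(0.001)  # plateau: a second DOF is freed, resolution halves
```

The point of tying the schedule to learning progress rather than to a fixed clock is that the robot is not handed a larger space before it has exploited the smaller one.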

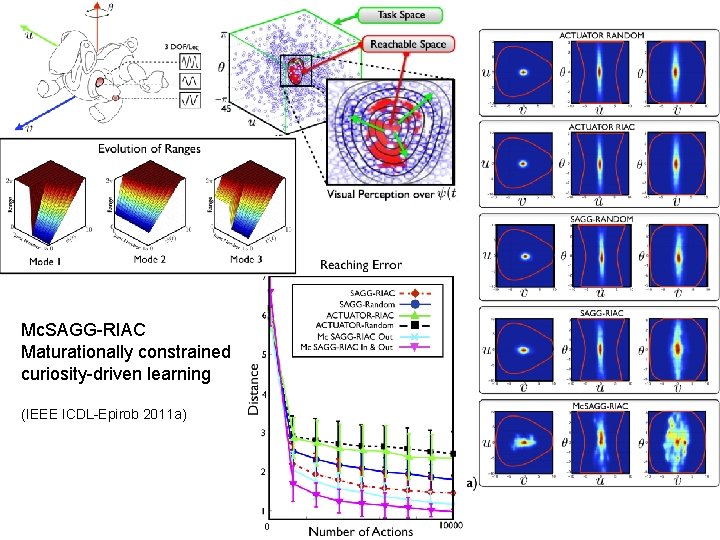

McSAGG-RIAC: Maturationally constrained curiosity-driven learning (IEEE ICDL-Epirob 2011a)

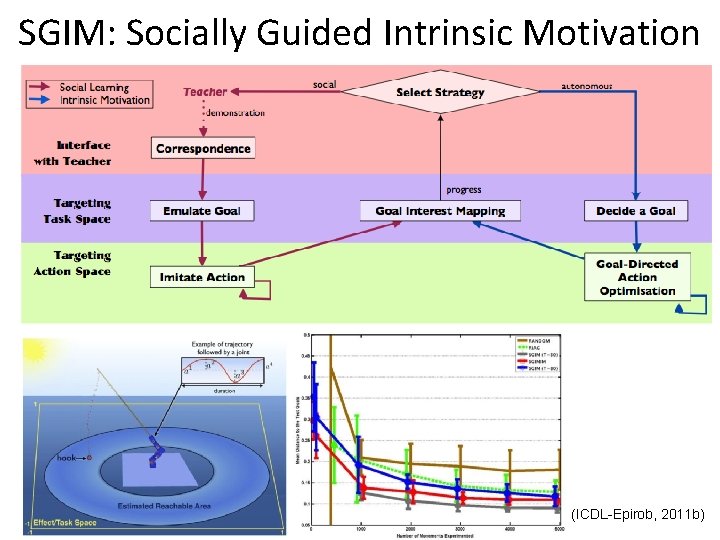

SGIM: Socially Guided Intrinsic Motivation (ICDL-Epirob, 2011b)

« Life-long » Experimentation
• Experimentation of algorithms for « life-long » learning in the real world
• Technological experimental platforms: robust, reconfigurable, precise, easily repaired, cheap
Acroban (Siggraph 2010, IROS 2011, World Expo, South Korea, 2012)

« Life-long » Experimentation
• Experimentation of algorithms for « life-long » learning in the real world
• Technological experimental platforms: robust, reconfigurable, precise, easily repaired, cheap
Ergo-Robots (Exhibition « Mathematics, a beautiful elsewhere », Fond. Cartier, 2011-2012)
Mid-term: open-source distribution of the platform to the scientific community

Baranes, A., Oudeyer, P-Y. (2012) Active Learning of Inverse Models with Intrinsically Motivated Goal Exploration in Robots, Robotics and Autonomous Systems. http://www.pyoudeyer.com/RAS-SAGG-RIAC-2012.pdf

Baranes, A., Oudeyer, P-Y. (2011a) The Interaction of Maturational Constraints and Intrinsic Motivation in Active Motor Development, in Proceedings of IEEE ICDL-Epirob 2011. http://flowers.inria.fr/BaranesOudeyerICDL11.pdf

Lopes, M., Melo, F., Montesano, L. (2009) Active Learning for Reward Estimation in Inverse Reinforcement Learning, European Conference on Machine Learning (ECML/PKDD), Bled, Slovenia. http://flowers.inria.fr/mlopes/myrefs/09-ecml-airl.pdf

Nguyen, M., Baranes, A., Oudeyer, P-Y. (2011b) Bootstrapping Intrinsically Motivated Learning with Human Demonstrations, in Proceedings of IEEE ICDL-Epirob 2011. http://flowers.inria.fr/NguyenBaranesOudeyerICDL11.pdf

Oudeyer, P-Y., Kaplan, F., Hafner, V. (2007) Intrinsic Motivation Systems for Autonomous Mental Development, IEEE Transactions on Evolutionary Computation, 11(2), pp. 265-286. http://www.pyoudeyer.com/ims.pdf

Baranes, A., Oudeyer, P-Y. (2009) R-IAC: Robust intrinsically motivated exploration and active learning, IEEE Transactions on Autonomous Mental Development, 1(3), pp. 155-169.

Ly, O., Lapeyre, M., Oudeyer, P-Y. (2011) Bio-inspired vertebral column, compliance and semi-passive dynamics in a lightweight robot, in Proceedings of IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2011), San Francisco, US.

Lopes, M., Lang, T., Toussaint, M., Oudeyer, P-Y. (2012) Exploration in Model-based Reinforcement Learning by Empirically Estimating Learning Progress, Neural Information Processing Systems (NIPS 2012), Tahoe, USA. http://flowers.inria.fr/mlopes/myrefs/12-nips-zeta.pdf

Lopes, M., Oudeyer, P-Y. (2012) The Strategic Student Approach for Life-Long Exploration and Learning, in Proceedings of IEEE ICDL-Epirob 2012. http://flowers.inria.fr/mlopes/myrefs/12-ssp.pdf
