
Developing Performance Measures Portland Oregon TGA

Objectives:

- Share Portland TGA performance measures
- Describe the process of developing one measure and refining it
- Share challenges and lessons learned

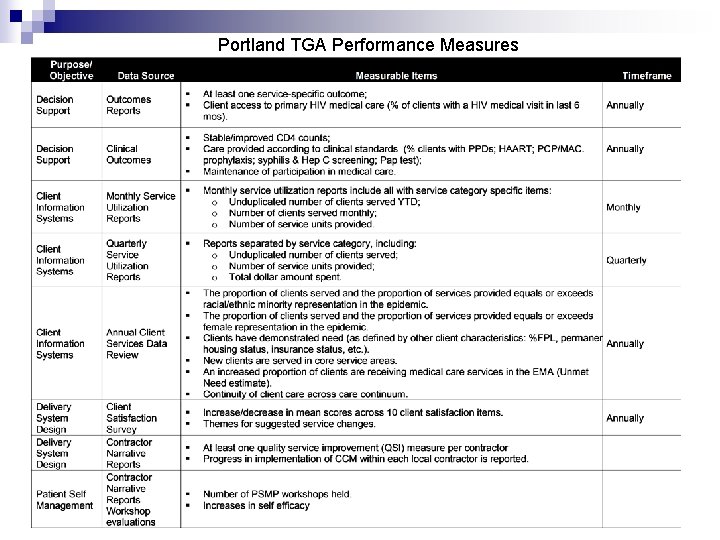

Portland TGA Performance Measures

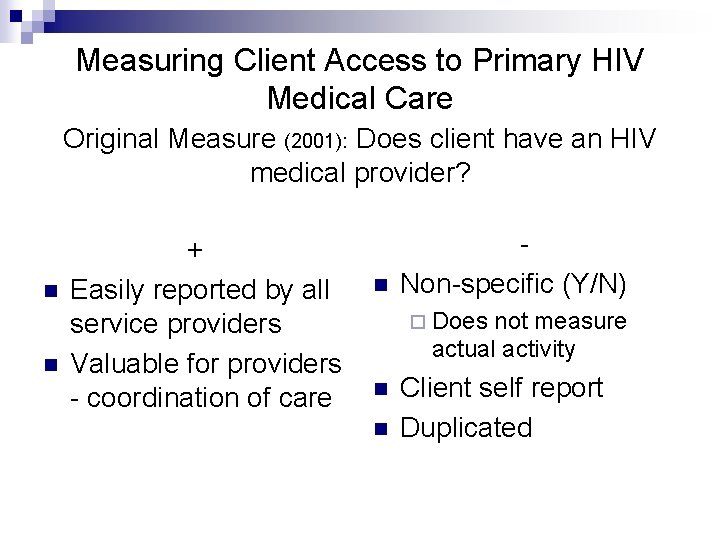

Measuring Client Access to Primary HIV Medical Care

Original Measure (2001): Does client have an HIV medical provider?

Strengths (+):

- Easily reported by all service providers
- Valuable for providers - coordination of care

Limitations:

- Non-specific (Y/N)
  - Does not measure actual activity
- Client self report
- Duplicated

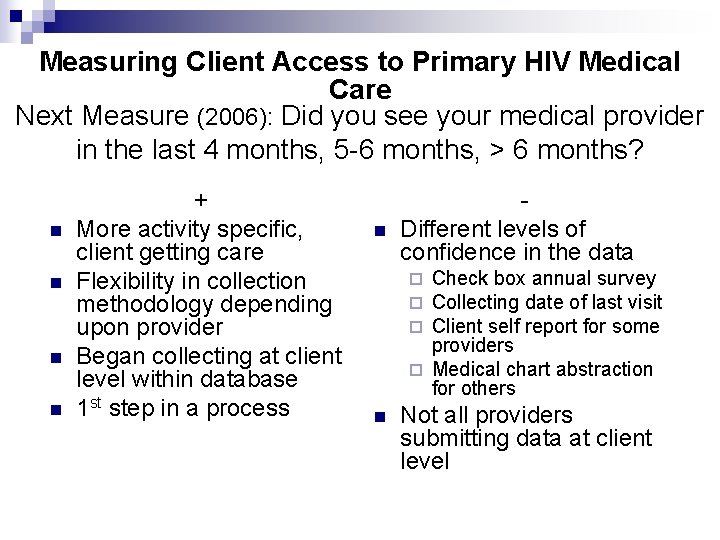

Measuring Client Access to Primary HIV Medical Care

Next Measure (2006): Did you see your medical provider in the last 4 months, 5-6 months, > 6 months?

Strengths (+):

- More activity specific, client getting care
- Flexibility in collection methodology depending upon provider
- Began collecting at client level within database
- 1st step in a process

Limitations:

- Different levels of confidence in the data
  - Check box annual survey
  - Collecting date of last visit
  - Client self report for some providers
  - Medical chart abstraction for others
- Not all providers submitting data at client level

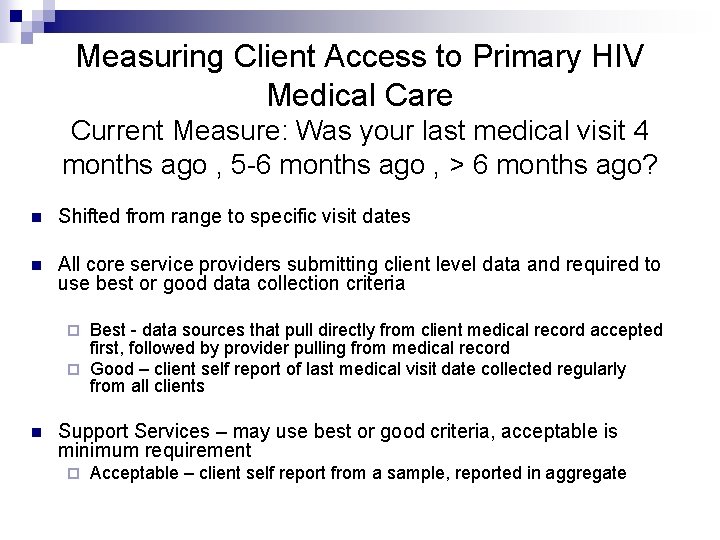

Measuring Client Access to Primary HIV Medical Care

Current Measure: Was your last medical visit 4 months ago, 5-6 months ago, > 6 months ago?

- Shifted from range to specific visit dates
- All core service providers submitting client level data and required to use best or good data collection criteria
  - Best - data sources that pull directly from client medical record accepted first, followed by provider pulling from medical record
  - Good - client self report of last medical visit date collected regularly from all clients
- Support Services - may use best or good criteria, acceptable is minimum requirement
  - Acceptable - client self report from a sample, reported in aggregate
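Once specific visit dates are collected, the earlier range categories can still be derived for reporting. The following is a minimal sketch of that derivation, not the Portland TGA's actual database logic. The function names, the category labels, and the rule for counting months (partial months round up, so 4 months and 1 day counts as 5 months) are all assumptions made for illustration.

```python
from datetime import date


def months_elapsed(last_visit: date, as_of: date) -> int:
    """Months from last_visit to as_of, rounding partial months up.

    Assumption for illustration: 4 months and 1 day counts as 5 months.
    """
    if last_visit > as_of:
        raise ValueError("last_visit is after the reporting date")
    months = (as_of.year - last_visit.year) * 12 + (as_of.month - last_visit.month)
    if as_of.day > last_visit.day:
        months += 1  # a partial month has started
    return months


def visit_category(last_visit: date, as_of: date) -> str:
    """Map a last-visit date onto the range categories (hypothetical labels)."""
    elapsed = months_elapsed(last_visit, as_of)
    if elapsed <= 4:
        return "4 months or less"
    if elapsed <= 6:
        return "5-6 months"
    return "> 6 months"


# Illustrative dates only, not program data.
print(visit_category(date(2008, 1, 15), date(2008, 5, 15)))
print(visit_category(date(2008, 1, 15), date(2008, 7, 16)))
```

Storing the date and deriving the category at report time means the category boundaries can be changed later without recollecting data, which a check-box range cannot offer.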

Challenges

- Maintaining reasonable expectation of service providers
- Using medical outcomes is reasonable as an outcome measure for the system. Is it equally applicable across providers?
- Data sharing - inability to share data increases data collection and reporting burden

Lessons Learned

Programmatic lessons

- Keep clients at the center - performance measures are aimed at improving the quality of care and evaluation programs, not at proving a thesis
- Don’t let measurability guide the program - the results should guide the program, not how easy something is to measure
- Work with providers to establish measures - provide technical assistance and make compromises

Technical lessons

- Be realistic about time involved in collecting data for certain measures
- Critical to have a client-level database
- Providers are experts at providing care - we need to provide expertise in analysis

Contact Information

- Marisa McLaughlin, Research Analyst, marisa.a.mclaughlin@co.multnomah.or.us, 503-988-3030 x25705
- Margy Robinson, HIV Care Services Mgr., margaret.l.robinson@co.multnomah.or.us, 503-988-3030 x25681
