
- Slides: 34

Designing New Programs: Design & Chronological Perspectives (Presentation of Berk & Rossi's Thinking About Program Evaluation, Sage Press, 1990)

Research Design & Program Evaluation

Decisions in Creating a Research Design for Program Evaluation

- Which observation units will be selected?
- How will measurement be made?
- How will treatments (interventions) be delivered?

Decision One: Which Observation Units Will be Selected?

- Random selection of a sample
- Non-random selection of a sample
- Selecting the entire population as one large sample

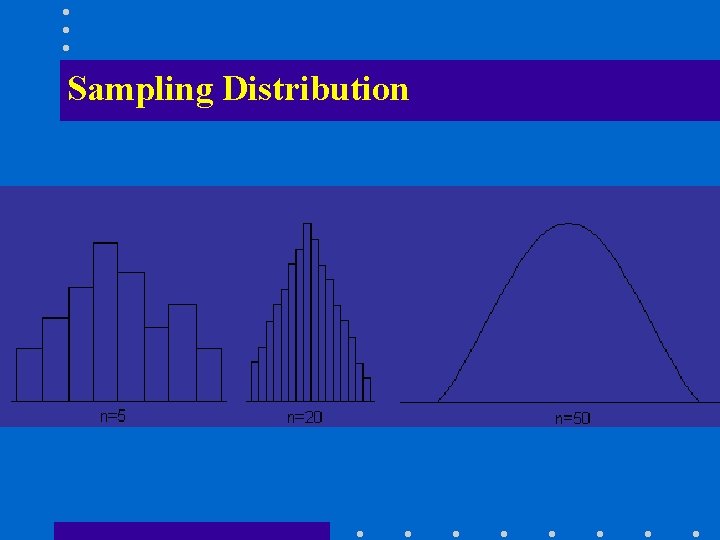
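A minimal Python sketch of the three options, using the standard `random` module. The sampling frame of case IDs and the `simple_random_sample` helper are hypothetical; taking the first 50 cases on the list stands in for one kind of non-random (convenience) selection.

```python
import random


def simple_random_sample(frame, n, seed=None):
    """Draw n units without replacement; every unit has the same chance of selection."""
    rng = random.Random(seed)
    return rng.sample(frame, n)


# Hypothetical sampling frame: 500 case IDs.
frame = [f"case-{i:03d}" for i in range(500)]

random_sample = simple_random_sample(frame, 50, seed=1)  # random selection
convenience_sample = frame[:50]  # non-random: simply the first 50 cases on the list
census = list(frame)  # the entire population treated as one large sample

print(len(random_sample), len(convenience_sample), len(census))  # 50 50 500
```

Fixing the seed makes the draw reproducible, which helps when the selection has to be documented or audited.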

Selecting Units: Repeatedly Drawing Samples from a Population

Suppose many different samples of the same size n are obtained by repeatedly sampling from a population:

- for each sample, a mean is calculated, and
- a histogram of these mean values is drawn.

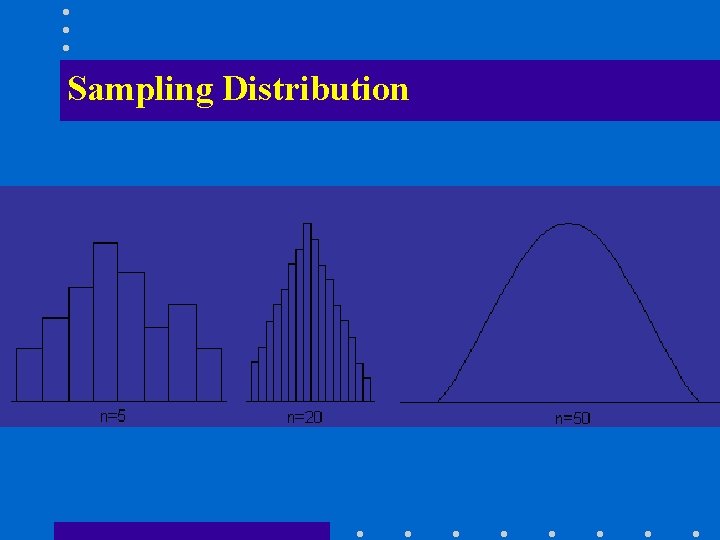

Selecting Units: Repeatedly Drawing Samples from a Population

The shape of the histogram depends on the sample size n, and the histogram approximates the sampling distribution of the mean.
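The repeated-sampling procedure can be simulated directly. This is a sketch under stated assumptions: the population is hypothetical (10,000 values spread evenly between 0 and 10), and `sample_means` and `text_histogram` are illustrative helpers, not part of the source. It draws 2,000 samples for each of two sample sizes and prints a crude text histogram of the means.

```python
import random
import statistics


def sample_means(population, n, draws, seed=0):
    """Draw `draws` samples of size n (without replacement) and return each sample's mean."""
    rng = random.Random(seed)
    return [statistics.fmean(rng.sample(population, n)) for _ in range(draws)]


def text_histogram(values, bins=10, lo=0.0, hi=10.0, width=40):
    """Print a crude text histogram of values over the fixed range [lo, hi]."""
    counts = [0] * bins
    for v in values:
        i = min(int((v - lo) / (hi - lo) * bins), bins - 1)  # clamp v == hi into the last bin
        counts[i] += 1
    top = max(counts)
    for i, c in enumerate(counts):
        left = lo + i * (hi - lo) / bins
        print(f"{left:5.1f} | {'#' * round(c / top * width)}")


# Hypothetical population: 10,000 values spread evenly between 0 and 10.
rng = random.Random(42)
population = [rng.uniform(0, 10) for _ in range(10_000)]

for n in (2, 30):
    means = sample_means(population, n, draws=2_000)
    print(f"n = {n}, SD of the sample means = {statistics.stdev(means):.2f}")
    text_histogram(means)
```

With n = 2 the histogram is broad; with n = 30 it is tall and narrow around the population mean of roughly 5.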

Sampling Distribution

Central Limit Theorem

Even if the distribution of the X values is not Normal, as n increases the distribution of the sample mean X̄ becomes more like the Normal distribution. This is the Central Limit Theorem.
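One way to see the theorem at work is to start from a clearly non-Normal population and watch the skewness of the sample means shrink. A sketch, assuming an exponential population with mean 1 (strongly right-skewed); the `skewness` and `draw_mean` helpers are illustrative. For this population the skewness of the mean is 2/√n, so the printed values should fall near 2.0, 0.9 and 0.4, give or take simulation noise.

```python
import random
import statistics


def skewness(xs):
    """Moment-based skewness: 0 for a symmetric distribution, positive for a long right tail."""
    m = statistics.fmean(xs)
    s = statistics.pstdev(xs)
    return statistics.fmean([(x - m) ** 3 for x in xs]) / s ** 3


rng = random.Random(7)


def draw_mean(n):
    """Mean of n draws from an exponential distribution with mean 1 (a non-Normal population)."""
    return statistics.fmean([rng.expovariate(1.0) for _ in range(n)])


skews = {}
for n in (1, 5, 30):
    means = [draw_mean(n) for _ in range(5_000)]
    skews[n] = skewness(means)
    print(f"n = {n:2d}   skewness of the sample means = {skews[n]:.2f}")
```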

Properties of the Sampling Distribution

As n increases, the distribution of the sample means becomes narrower; that is, the means cluster more tightly around µ. In fact, the variance of the sampling distribution is inversely proportional to n.

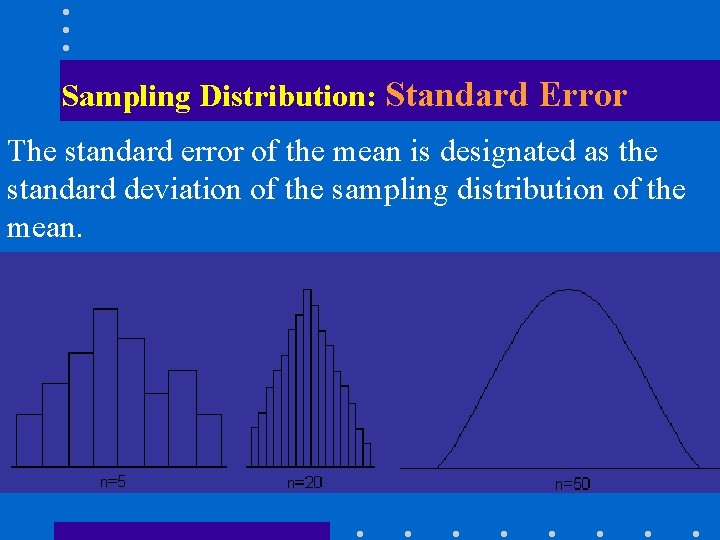

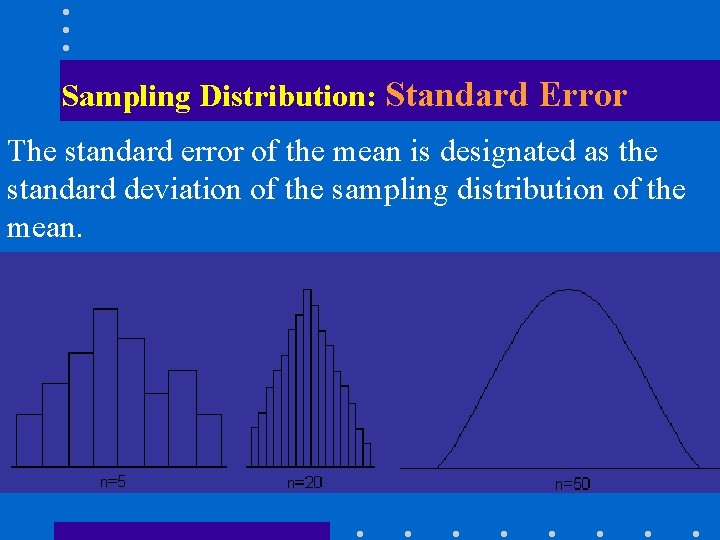
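The inverse proportionality follows from a short derivation, assuming the observations X₁, …, Xₙ are independent draws with common mean µ and variance σ² (for example, sampling with replacement, or from a population much larger than the sample):

$$
\operatorname{Var}(\bar{X})
= \operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n} X_i\right)
= \frac{1}{n^{2}}\sum_{i=1}^{n}\operatorname{Var}(X_i)
= \frac{n\sigma^{2}}{n^{2}}
= \frac{\sigma^{2}}{n}
$$

The second equality uses independence, so the variances simply add with no covariance terms. Worked example: with σ = 10, a sample of n = 25 gives Var(X̄) = 4 (standard deviation 2), while n = 100 gives Var(X̄) = 1 (standard deviation 1). Quadrupling the sample size halves the spread of the sample means.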

Sampling Distribution: Standard Error

The standard error of the mean is the standard deviation of the sampling distribution of the mean.
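In symbols, the standard error of the mean is σ/√n. Because σ is usually unknown, it is commonly estimated from a single sample as s/√n, where s is the sample standard deviation. A minimal Python sketch; the `standard_error` helper and the service-hours data are hypothetical.

```python
import math
import statistics


def standard_error(sample):
    """Estimated standard error of the mean: sample SD (n - 1 denominator) divided by sqrt(n)."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations to estimate the SD")
    return statistics.stdev(sample) / math.sqrt(n)


# Hypothetical data: hours of service received by 8 clients.
hours = [4, 6, 5, 7, 3, 5, 6, 4]
print(f"mean = {statistics.fmean(hours):.2f}, SE = {standard_error(hours):.3f}")
# prints: mean = 5.00, SE = 0.463
```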

Decision Two: How Will Measurement be Undertaken?

- Identify the unit of data: e.g. arrest report, meal served, transportation miles, hours of service, etc.
- Determine what type (level) of data you are dealing with: nominal, ordinal, interval, or ratio
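The level of measurement matters because it limits which summary statistics are meaningful. The sketch below encodes one common convention (each level permits the statistics of the levels below it plus its own); the `Level` enum, the `permissible_stats` helper, and the example variables in the comments are illustrative assumptions, not taken from the source.

```python
from enum import Enum


class Level(Enum):
    NOMINAL = 1   # unordered categories, e.g. type of offense on an arrest report
    ORDINAL = 2   # ordered categories, e.g. client satisfaction rated low / medium / high
    INTERVAL = 3  # equal intervals but no true zero, e.g. temperature in degrees Celsius
    RATIO = 4     # equal intervals and a true zero, e.g. hours of service, transportation miles


# Statistics that first become meaningful at each level.
STATS_ADDED_AT = {
    Level.NOMINAL: ["count", "mode"],
    Level.ORDINAL: ["median", "percentiles"],
    Level.INTERVAL: ["mean", "standard deviation"],
    Level.RATIO: ["ratios (e.g. 'twice as many')", "coefficient of variation"],
}


def permissible_stats(level):
    """Statistics allowed at `level`: everything added at this level or any lower one."""
    return [s for lvl in Level if lvl.value <= level.value for s in STATS_ADDED_AT[lvl]]


print(permissible_stats(Level.ORDINAL))  # ['count', 'mode', 'median', 'percentiles']
```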

Decision Three: How Will the Program Intervention be Delivered?

- Random assignment
- Non-random assignment
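Random assignment is distinct from random selection: it decides which of the units already in the study receive the intervention. A minimal sketch, assuming a simple two-group design with groups of (nearly) equal size; the `randomly_assign` helper and participant IDs are hypothetical.

```python
import random


def randomly_assign(units, seed=None):
    """Shuffle the units, then split them into treatment and control groups of (nearly) equal size."""
    rng = random.Random(seed)
    shuffled = list(units)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"treatment": shuffled[:half], "control": shuffled[half:]}


# Hypothetical participants already selected into the study.
participants = [f"p{i}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=3)
print(len(groups["treatment"]), len(groups["control"]))  # 10 10
```

Because chance alone decides who receives the program, the two groups should differ only randomly on characteristics that could otherwise be confused with the program's effect.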

Additional Considerations

- Relevance: Is the program intervention relevant to the needs of the stakeholders?
- Implementation: Can the program be effectively implemented?

Chronology of Program Development & Evaluation

Chronological Sequence of Program Evaluation

- Identification of Policy Issues (Problem)
- Formulation of Policy Responses
- Design of Programs
- Improvement of Programs
- Assessment of Program Impact
- Determination of Cost Effectiveness

Principles for Fitting the Evaluation Strategy to the Identified Problem

- There is no "one best plan", just the best among alternatives (incremental planning).
- Methods depend upon the nature of the problem.
- The problem should be broken down into component policy problems.
- Evaluation should be linked to each separate policy problem.

Principles for Fitting the Evaluation Strategy to the Identified Problem

- Evaluation methods are tempered by available resources (time, money, staff, priorities).
- Evaluation costs should not exceed the program's cost or value.
- The evaluation strategy must be congruent with the importance of the problem being addressed.

Contexts for Evaluation

Evaluation Context One: Policy & Program Evaluation

Questions are raised about:

- the nature and extent of an identified problem,
- whether appropriate policy actions are feasible, and
- whether the options proposed are both appropriate and effective.

RULE: Look to the future to inform the present.

Evaluation Context Two: Examining Existing Policies & Programs

- Do extant programs & policies accomplish what they were intended to accomplish?
- RULE: Review the past to inform the future.

Policies Change!

Policies Change Because...

- Times change and new policy issues emerge
- Resources change
- Programs don't work as expected
- Stakeholders criticize and complain
- Competition emerges
- Politics change

Six Steps in Problem Identification & Resolution

Step One: Defining the Problem

- Social Problem as Social Construction
- Need to Define the Problem from Several Perspectives
- Need to Evaluate Each Problem Definition and Consider Its Social and Methodological Implications

Step One: Defining the Problem (An Example)

Drug Abuse Problem Definitions:

- Problem of Supply
- Problem of Use
- Problem of Poverty
- Problem of Racism
- Problem of Lack of Morality
- Problem of Legal Status of Drug Use

Step Two: Determining the Extent of the Problem

- Using Available Data to Determine the Extent of the Problem
- Creating New Data (Preliminary Assessment) to Determine How Widespread the Problem Is

Step Two: Determining the Extent of the Problem

- Quality of Data: Some sources are more valid and reliable than others, and some studies are methodologically sounder than others.
- Conflicting Data: Data from various sources on the same subject often contradict one another (hint: look for points of agreement).
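One simple way to operationalize "look for points of agreement" when sources report ranges is to take the span that every source's range covers. This is an illustrative assumption, not a method from the source; the `overlap` helper and the three estimates are hypothetical.

```python
def overlap(ranges):
    """Return the (low, high) span every source agrees on, or None if the ranges do not all overlap."""
    ranges = list(ranges)
    low = max(lo for lo, hi in ranges)
    high = min(hi for lo, hi in ranges)
    return (low, high) if low <= high else None


# Hypothetical estimates of the number of residents affected, as (low, high), from three sources.
estimates = {
    "agency records": (1200, 2000),
    "household survey": (1500, 2600),
    "key informants": (1000, 1800),
}
print(overlap(estimates.values()))  # (1500, 1800)
```

If the function returns None, the sources share no common range, which is itself a signal to examine their data quality more closely.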

Step Two: Determining the Extent of the Problem

Creating New Data: Methods of Needs Assessment

- Expert Informants
- Surveys
- Qualitative Approaches (e.g. participant observation)
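When a survey is used to gauge how widespread a problem is, the result is usually reported as a prevalence estimate with a margin of error. A minimal sketch, assuming a simple random sample of respondents and using the normal-approximation (Wald) 95% interval; other intervals (e.g. Wilson) behave better for small samples or rare problems. The `prevalence_ci` helper and the survey counts are hypothetical.

```python
import math


def prevalence_ci(cases, n, z=1.96):
    """Estimated prevalence with a normal-approximation (Wald) confidence interval, clipped to [0, 1]."""
    p = cases / n
    half_width = z * math.sqrt(p * (1 - p) / n)
    return p, max(0.0, p - half_width), min(1.0, p + half_width)


# Hypothetical needs-assessment survey: 84 of 600 respondents report the problem.
p, lo, hi = prevalence_ci(84, 600)
print(f"prevalence = {p:.1%} (95% CI {lo:.1%} to {hi:.1%})")
# prints: prevalence = 14.0% (95% CI 11.2% to 16.8%)
```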

Step Three: Determining Whether the Problem Can be Ameliorated

- Defining Remedies
- Basing Remedies Upon a Clear Problem Definition
- Basing Remedies in Theory
- Basing Remedies Upon the Current Realities of the Organizational, Social & Political Environment

Step Four: Translating Promising Ideas Into Promising Programs

- Programs are Essentially Activities Undertaken by Individuals & Organizations
- Understanding Stakeholders, Including Their Interests, Actions & Activities, is Essential to Effective Programming
- Translating Information Regarding Interests & Activities into Programming is as Much Art as Science

Step Four: Translating Promising Ideas Into Promising Programs

- Use of Pilot Studies: Some Fall Short of Full Scientific Rigor; Alternately, Some Meet Full Scientific Rigor
- Pre-testing in Pilot Studies is Essential
- Generalization to Non-Test Subjects Can be Problematic

Step Five: Can YOAA Do It? (Implementation)

- YOAA = Your Ordinary American Agency
- Need Details on How Programs are Implemented in Agencies
- Again, Pilot Programs or Demonstration Projects Will Help Evaluators Understand How Agencies & Organizations Implement Your Particular Program

Step Six: Assuring Program Effectiveness

Program Effectiveness is Difficult to Determine:

- Typically It is Difficult to Impossible to Distinguish Outcomes Caused by the Program from Those Caused by Other Factors
- Also, It is Difficult to Distinguish Program-Related Outcomes from Chance
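A permutation test is one standard way to ask whether an observed difference between program participants and a comparison group could plausibly be due to chance. It reshuffles the group labels many times and counts how often a difference at least as large as the observed one appears. It addresses only the chance question; ruling out other causal factors still depends on the design, such as random assignment. A sketch, with a hypothetical `permutation_test` helper and made-up outcome scores.

```python
import random
import statistics


def permutation_test(treated, control, reps=10_000, seed=0):
    """Return the observed mean difference and the share of random relabelings at least as extreme."""
    observed = statistics.fmean(treated) - statistics.fmean(control)
    pooled = list(treated) + list(control)
    k = len(treated)
    rng = random.Random(seed)
    extreme = 0
    for _ in range(reps):
        rng.shuffle(pooled)  # pretend group membership was arbitrary
        diff = statistics.fmean(pooled[:k]) - statistics.fmean(pooled[k:])
        if abs(diff) >= abs(observed):  # two-sided comparison
            extreme += 1
    return observed, extreme / reps


# Hypothetical outcome scores (higher is better) for program participants and a comparison group.
program = [14, 17, 15, 19, 16, 18, 15, 17]
comparison = [13, 14, 12, 15, 14, 13, 16, 12]
diff, p = permutation_test(program, comparison)
print(f"difference in means = {diff:.2f}, two-sided p = {p:.4f}")
```

A small p-value says the difference would rarely arise from arbitrary labeling alone; it does not by itself show that the program caused it.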

Step Six: Assuring Program Effectiveness

Determining Program Effectiveness:

- Making Random Samples of Program Outcomes
- Looking at the Relative Effectiveness of Several Interventions
- Asking Whether Opportunity Costs/Benefits are Greater than Program Outcome Costs/Benefits
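Comparing several interventions and weighing opportunity costs can start from a simple cost-effectiveness ratio (cost per unit of outcome). The opportunity cost of a program is what the same resources could have achieved in their best alternative use. A sketch with hypothetical figures and a hypothetical `Option` class; a full analysis would also need to consider outcome quality, timing, and uncertainty.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    cost: float     # total cost in dollars
    outcome: float  # units of outcome achieved, e.g. clients placed in jobs

    def cost_per_outcome(self):
        return self.cost / self.outcome


# Hypothetical figures for the current program and two alternative uses of the funds.
options = [
    Option("current program", cost=250_000, outcome=50),
    Option("alternative A", cost=250_000, outcome=40),
    Option("alternative B", cost=200_000, outcome=50),
]

for o in sorted(options, key=Option.cost_per_outcome):
    print(f"{o.name:16s} ${o.cost_per_outcome():,.0f} per outcome")

program = options[0]
best_alt = min(options[1:], key=Option.cost_per_outcome)
if best_alt.cost_per_outcome() < program.cost_per_outcome():
    print(f"{best_alt.name} achieves outcomes at a lower cost per unit than the current program")
```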